2. Related Work

Recent research has extensively investigated task orchestration, offloading, and resource allocation in MEC. This study focuses on decision-making approaches that explicitly determine the execution location of tasks across available computing layers. In the literature, this execution-location decision is described using different terms. Some studies refer to it as task orchestration [15, 16, 17, 18], while others describe it as task offloading or resource allocation [19, 20]. Additionally, several works formulate it as a scheduling or task assignment problem [13, 21, 22]. These terminological differences are reflected in Table 1, which compares existing approaches based on their decision scope, execution location, and algorithmic methodology.

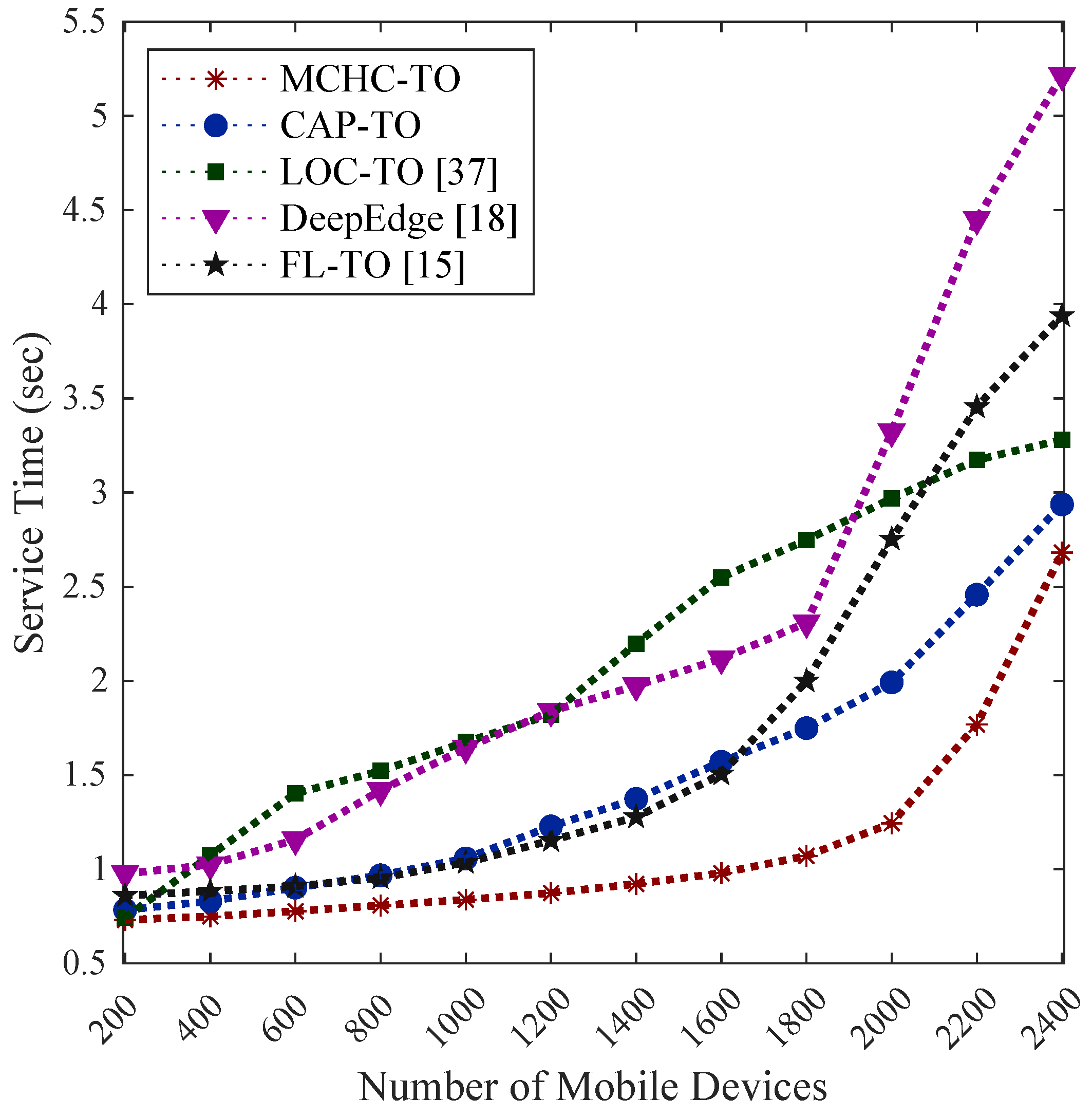

Early approaches to MEC orchestration relied on rule-based techniques. Sonmez et al. [15] proposed a two-stage fuzzy logic-based orchestrator that considered task requirements, edge server capabilities, and network conditions to define a set of fuzzy rules used to select the execution location. Similarly, Jamil et al. [16] introduced a two-stage Belief Rule-Based (BRB) orchestrator that distinguished between delay-sensitive and delay-tolerant applications using conjunctive and disjunctive belief rules. Their approach achieved higher success rates than the fuzzy-based method by better capturing application requirements and execution environments. While rule-based approaches can be effective, they require manual rule design and tuning, which becomes increasingly complex and less scalable as MEC systems grow and evolve [21].

To overcome these limitations, many recent studies have adopted machine learning (ML) and reinforcement learning (RL) techniques to automate orchestration decisions. For instance, the authors of [17] proposed a two-stage model combining multilayer perceptron (MLP)-based classification and regression to predict offloading success and service time in vehicular edge computing, achieving improved Quality of Experience (QoE). Yamansavascilar et al. [18] introduced DeepEdge, a Double Deep Q-Network (DDQN)-based orchestrator designed to select execution locations across edge and cloud servers in order to reduce task failure rates and improve edge resource utilization. In addition, Alfakih et al. [19] addressed the offloading problem using a state–action–reward–state–action (SARSA)-based RL algorithm to minimize energy consumption and processing delay, outperforming Q-learning in their experiments. Similarly, Zheng et al. [21] developed a Deep Q-Network (DQN)-based orchestration framework that selects execution locations among local edge servers, multiple nearby edge servers, and the cloud. Their approach outperformed Proximal Policy Optimization (PPO) and Deep Deterministic Policy Gradient (DDPG) models in terms of latency, resource utilization, and task success rate. More recently, Wu et al. [20] proposed a Deep Reinforcement Learning (DRL)-based online scheduler in an Internet of Things (IoT)–MEC–cloud framework that jointly considered performance and security trade-offs, achieving lower latency than offline baselines. Hsieh et al. [22] investigated task assignment in cooperative MEC systems using a Markov decision process (MDP) formulation and DRL models. Specifically, they developed and compared value-based DDQN, policy-based Policy Gradient (PG), and hybrid Actor–Critic (AC) approaches to optimize execution delay and energy consumption under dynamic conditions. Similarly, Liu et al. [23] proposed a multi-agent reinforcement learning (MARL) framework in which edge nodes collaboratively learn resource allocation and scheduling policies through decentralized DQN-based training.
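As background for the SARSA versus Q-learning comparison reported in [19], the two tabular methods differ only in how the value of the next state is bootstrapped. The update rules below use standard textbook notation, which is an assumption here rather than the notation of [19]: $Q$ is the action-value estimate, $\alpha$ the learning rate, $\gamma$ the discount factor, and $r_{t+1}$ the reward observed after taking action $a_t$ in state $s_t$.

$$
\begin{aligned}
\text{SARSA:} \quad & Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha \left[ r_{t+1} + \gamma\, Q(s_{t+1}, a_{t+1}) - Q(s_t, a_t) \right] \\
\text{Q-learning:} \quad & Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha \left[ r_{t+1} + \gamma \max_{a'} Q(s_{t+1}, a') - Q(s_t, a_t) \right]
\end{aligned}
$$

SARSA is on-policy: it bootstraps from the action $a_{t+1}$ that the current policy actually selects. Q-learning is off-policy: it bootstraps from the greedy action regardless of what is executed next. DQN-style methods replace the table $Q$ with a neural network but keep the Q-learning target.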

Although learning-driven approaches can adapt to changing network conditions and often achieve significant performance gains, they typically require extensive training, careful parameter tuning, and considerable computational overhead. These requirements can limit their practicality in large-scale or resource-constrained MEC environments. As a result, alternative solutions that reduce computational complexity while maintaining adaptability remain of interest.

Clustering-based methods offer a training-free and interpretable alternative by reducing the search space for orchestration decisions. Shooshtarian et al. [24] proposed a two-stage hierarchical clustering approach for resource allocation in fog computing, in which fog nodes were grouped based on resource characteristics and tasks were assigned to the nearest fog layer to minimize latency. However, the proximity-based layer selection strategy may have overlooked better resource options in other layers, limiting its adaptability. Hao et al. [13] introduced an energy-aware clustering approach in MEC, where edge nodes were clustered using attenuation-based distance metrics and a heuristic scheduler managed intra- and inter-cluster allocations. Since clustering was performed only once during system initialization, the approach was not well-suited to dynamic workloads. Moreover, classical distance-based clustering methods often suffer from scalability and dimensionality challenges as system complexity increases [25].

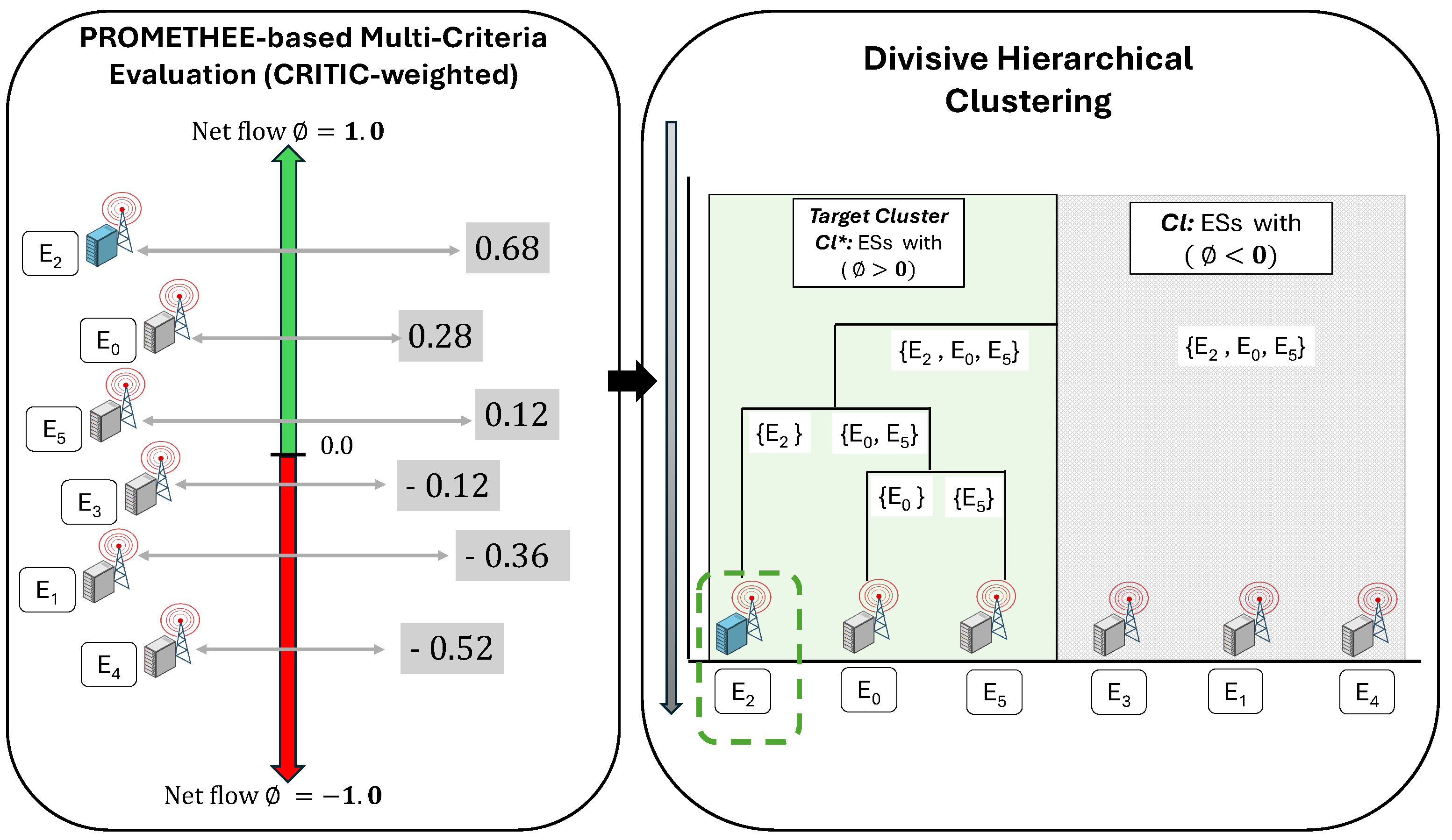

To address these limitations, some studies have integrated clustering with MCDM. De Smet et al. [26] extended K-means to a preference-based clustering approach, in which binary relations were used to define similarity instead of conventional distance measures. Subsequent works proposed divisive hierarchical clustering approaches based on the Preference Ranking Organization Method for Enrichment Evaluation (PROMETHEE), which provides preference-based rankings to guide cluster formation [27]. Later studies further extended this approach by incorporating uncertainty modeling techniques, such as Stochastic Multiobjective Acceptability Analysis (SMAA), to explicitly handle uncertainty in preference modeling [4]. While these methods offer flexible, preference-driven clustering, their applications have largely been limited to financial and decision analysis domains, rather than MEC or task orchestration.
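To make the notion of a preference-based ranking concrete, the following is a minimal sketch of PROMETHEE II net outranking flows. It is illustrative only and is not the implementation used in [27], [4], or this paper. It assumes the "usual" preference function (an alternative is preferred on a criterion whenever it is strictly better) and hand-picked weights. The function name `promethee_net_flows`, the three criteria, and the server values are hypothetical.

```python
def promethee_net_flows(scores, weights, maximize):
    """Return PROMETHEE II net flows for a list of alternatives.

    scores:   one row per alternative, one column per criterion
    weights:  non-negative criterion weights (normalized internally)
    maximize: per criterion, True if larger values are preferred
    """
    total = sum(weights)
    w = [x / total for x in weights]
    n = len(scores)

    def pi(a, b):
        # Aggregated preference of a over b: sum of the weights of the
        # criteria on which a is strictly better (usual preference function).
        agg = 0.0
        for j, wj in enumerate(w):
            d = scores[a][j] - scores[b][j]
            if not maximize[j]:
                d = -d  # cost criterion: smaller is better
            if d > 0:
                agg += wj
        return agg

    flows = []
    for a in range(n):
        others = [b for b in range(n) if b != a]
        phi_plus = sum(pi(a, b) for b in others) / (n - 1)   # how much a dominates
        phi_minus = sum(pi(b, a) for b in others) / (n - 1)  # how much a is dominated
        flows.append(phi_plus - phi_minus)
    return flows


# Hypothetical edge servers: [capacity (max), distance (min), load (min)]
servers = [[8, 10, 0.2], [4, 5, 0.5], [16, 30, 0.9]]
flows = promethee_net_flows(servers, [0.4, 0.3, 0.3], [True, False, False])
ranking = sorted(range(len(servers)), key=lambda i: flows[i], reverse=True)
print(flows, ranking)
```

With these made-up values, server 0 obtains the highest net flow because it is preferred on two of the three criteria against each of the other servers. Net flows always sum to zero, so they express relative standing rather than absolute quality; a preference-based clustering method can then split alternatives according to such flows instead of a geometric distance.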

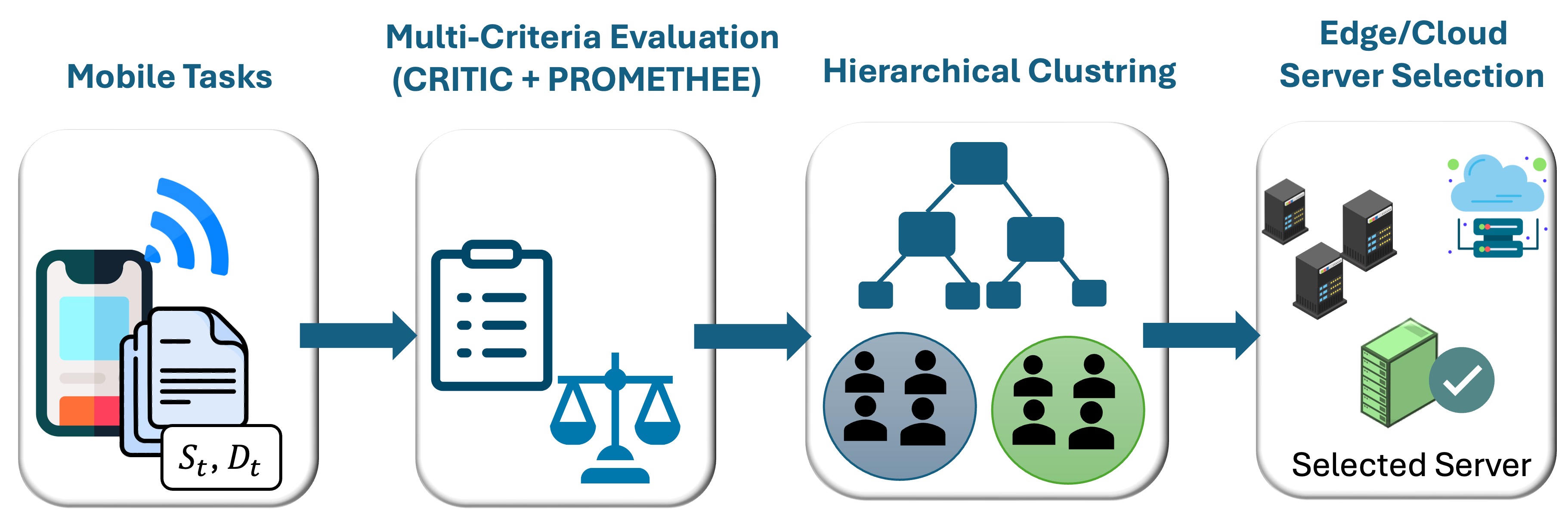

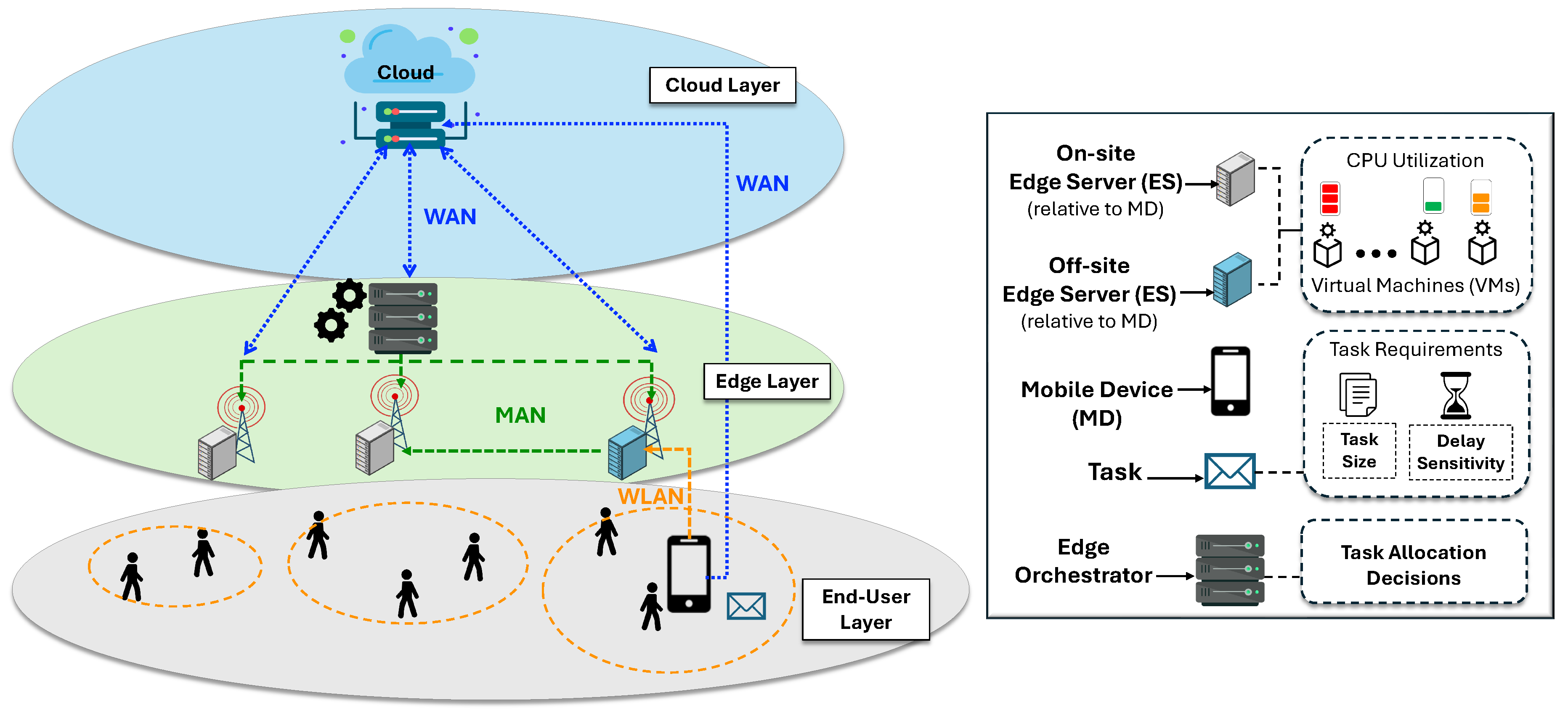

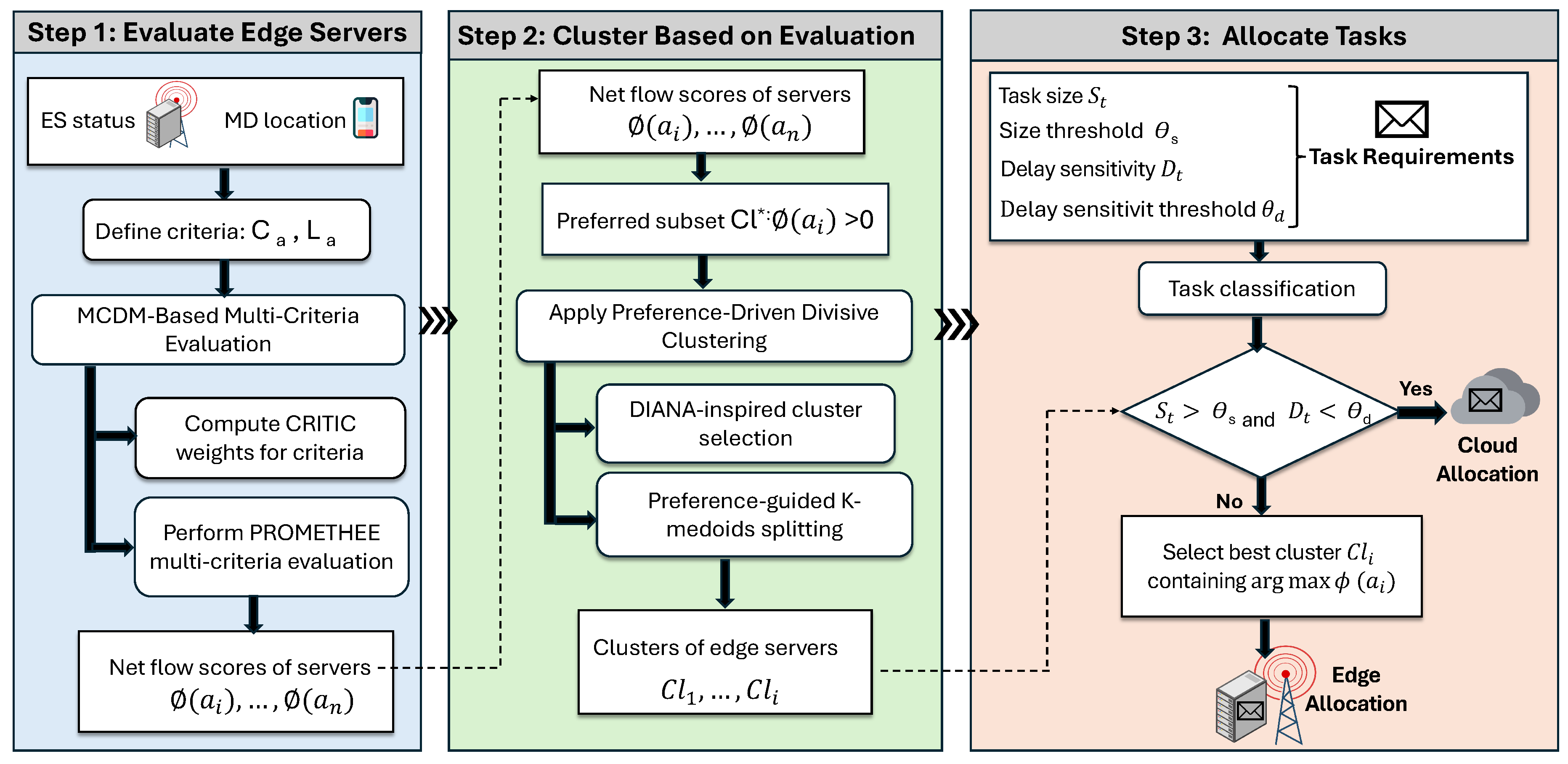

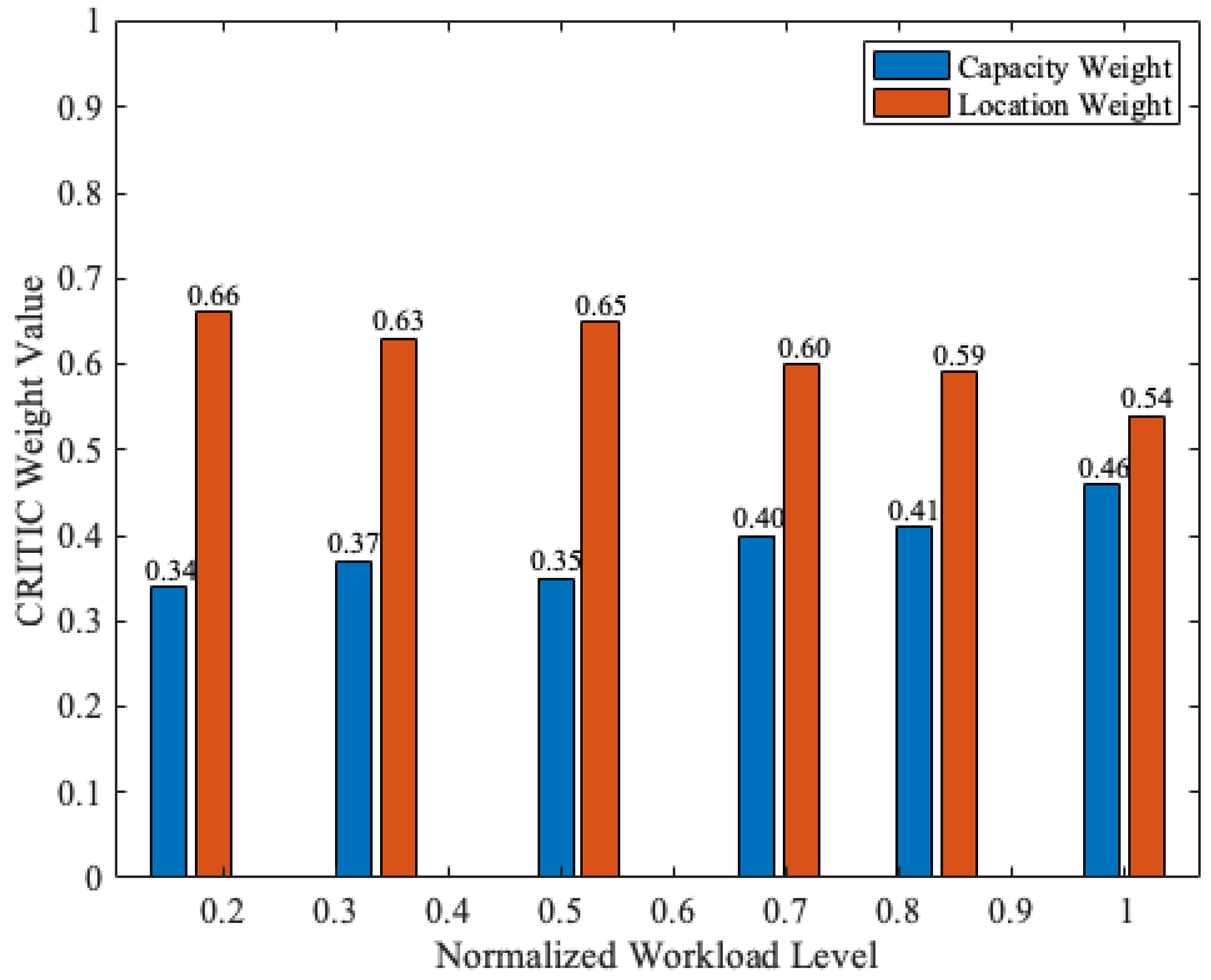

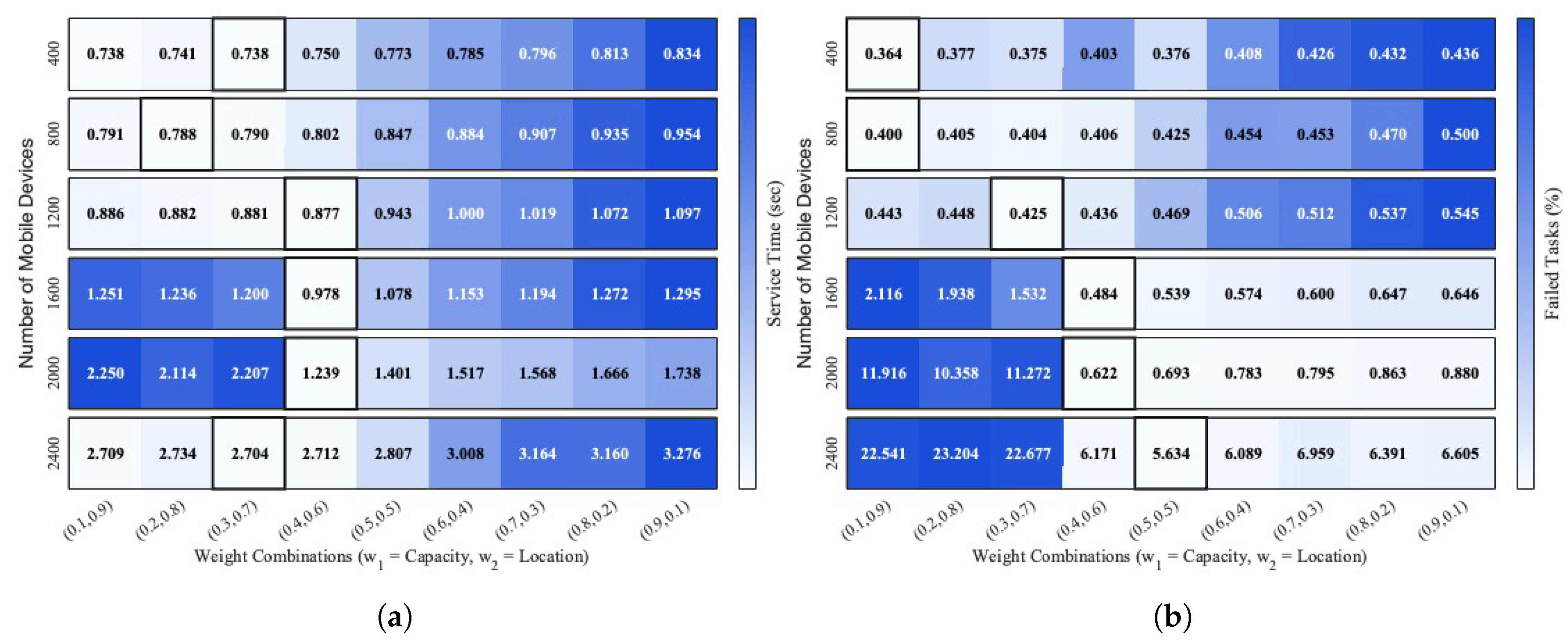

In the existing MEC orchestration literature, most approaches either rely on learning-based models that incur significant training overhead or apply clustering techniques based on static or single-metric criteria. In contrast, the MCHC-TO framework proposed in this work adopts a dynamic, preference-driven orchestration approach that integrates MCDM, specifically PROMETHEE, with hierarchical clustering to group edge servers based on multiple criteria, such as capacity, proximity, and load. This design enables adaptive task offloading while avoiding training overhead. To the best of our knowledge, this is the first study to combine MCDM-based preference evaluation and hierarchical clustering specifically for task orchestration in MEC. A comparative overview of existing approaches is provided in Table 1, highlighting the methodological gaps addressed by MCHC-TO.

Table 1. Comparison of this study’s work with existing related works.


| Ref | Decision Scope | Execution Location | Method | Algorithm |
|---|---|---|---|---|
| [15] | Orchestration | Edge/Cloud | Rule-based | Fuzzy logic |
| [16] | Orchestration | Edge/Cloud | Rule-based | BRB |
| [17] | Orchestration | Edge/Cloud | Supervised ML | MLP classification + regression |
| [18] | Orchestration | Edge/Cloud | DRL | DDQN |
| [19] | Offloading | MD/Edge/Cloud | RL | SARSA |
| [21] | Orchestration | Edge/Cloud | DRL | DQN |
| [20] | Scheduling | MD/Edge/Cloud | DRL | DQN |
| [22] | Offloading | Edge/Cloud | DRL | DDQN, PG, AC |
| [23] | Scheduling | Edge | MARL | DQN |
| [24] | Application Placement | Fog | Classical clustering | AHC |
| [13] | Scheduling | Edge | Classical clustering | Custom distance-based clustering |
| [26] | – | N/A | MCDM-based clustering | Extended K-means |
| [27] | – | N/A | MCDM-based clustering | DHC (PROMETHEE) |
| [4] | – | N/A | MCDM-based clustering | DHC (SMAA–PROMETHEE) |
| This paper | Orchestration | Edge/Cloud | MCDM-based clustering | DHC (CRITIC–PROMETHEE) |