Submitted:

22 February 2026

Posted:

25 February 2026


Abstract

Keywords:

1. Introduction

- We provide a structured survey of anomaly detection approaches that incorporate both agentic AI and multimodal data fusion.

- We introduce a novel taxonomy to classify existing methods based on agent architecture (single-agent vs. multi-agent), reasoning capability, tool integration, and modality scope.

- We review recent benchmark datasets and evaluation methods for multimodal anomaly detection.

- We present key challenges and summarize mitigation strategies and future directions in agentic and multimodal anomaly detection.

2. Agentic Anomaly Detection

2.1. Architectures

2.1.1. Single-Agent Systems

2.1.2. Multi-Agent Systems

- Collaborative Pipelines: In a collaborative multi-agent pipeline, each agent is assigned a specific subtask (data preprocessing, feature extraction, anomaly scoring, explanation, etc.), and the output of one agent feeds into the input of the next. If we label the agents $A_1, A_2, \ldots, A_N$ in the order they appear in the pipeline, the overall detection function can be viewed as a composite of their operations: $y = (A_N \circ A_{N-1} \circ \cdots \circ A_1)(x)$, where $x$ is the initial input and $y$ the final anomaly decision or score. Each agent focuses on a delimited aspect of the task, and their coordination can be managed via an LLM-based planner. For example, Gu et al. [34] propose ARGOS, a multi-agent time-series AD framework that autonomously generates, validates, and refines detection rules using collaborative agents. Similarly, Yang et al. [35] introduce AD-AGENT, which employs a team of LLM agents to interactively build a complete anomaly detection pipeline from a high-level user instruction. Not all pipelines are strictly sequential; some agents might work in parallel on different data streams or features. For instance, Qin et al. [44] propose MAS-LSTM, where multiple LSTM-based detector agents each monitor a different subset of IIoT sensor streams, and anomalies are decided by voting or averaging their scores.

- Oversight Agents: As agentic systems become more complex, ensuring reliability and consistency is essential. Oversight architectures introduce dedicated agents that monitor and verify the outputs of task-oriented agents, effectively performing anomaly detection on the multi-agent system itself. These agents catch logical inconsistencies, hallucinations, or coordination failures that could compromise anomaly decisions. Formally, let $\mathcal{O} = \{o_1, \ldots, o_M\}$ be a collection of observations or outputs from various agents during an investigation; the oversight agent computes a consistency score or logical coherence measure $C(\mathcal{O})$ over these. If $C$ falls below a threshold (indicating incoherence or inconsistency), the oversight agent flags a meta-anomaly and can intervene (e.g., by resetting certain agents or requesting additional information). This adds a layer of fault tolerance and accountability to the agentic AD pipeline. For example, He et al. [36] propose SentinelAgent, which deploys an LLM oversight agent to supervise a team of collaborating agents. Similarly, in the Audit-LLM framework for security logs [37], a critic agent reviews the decisions made by a Detector agent and either approves them or asks for refinement, ensuring that high-stakes anomaly alerts

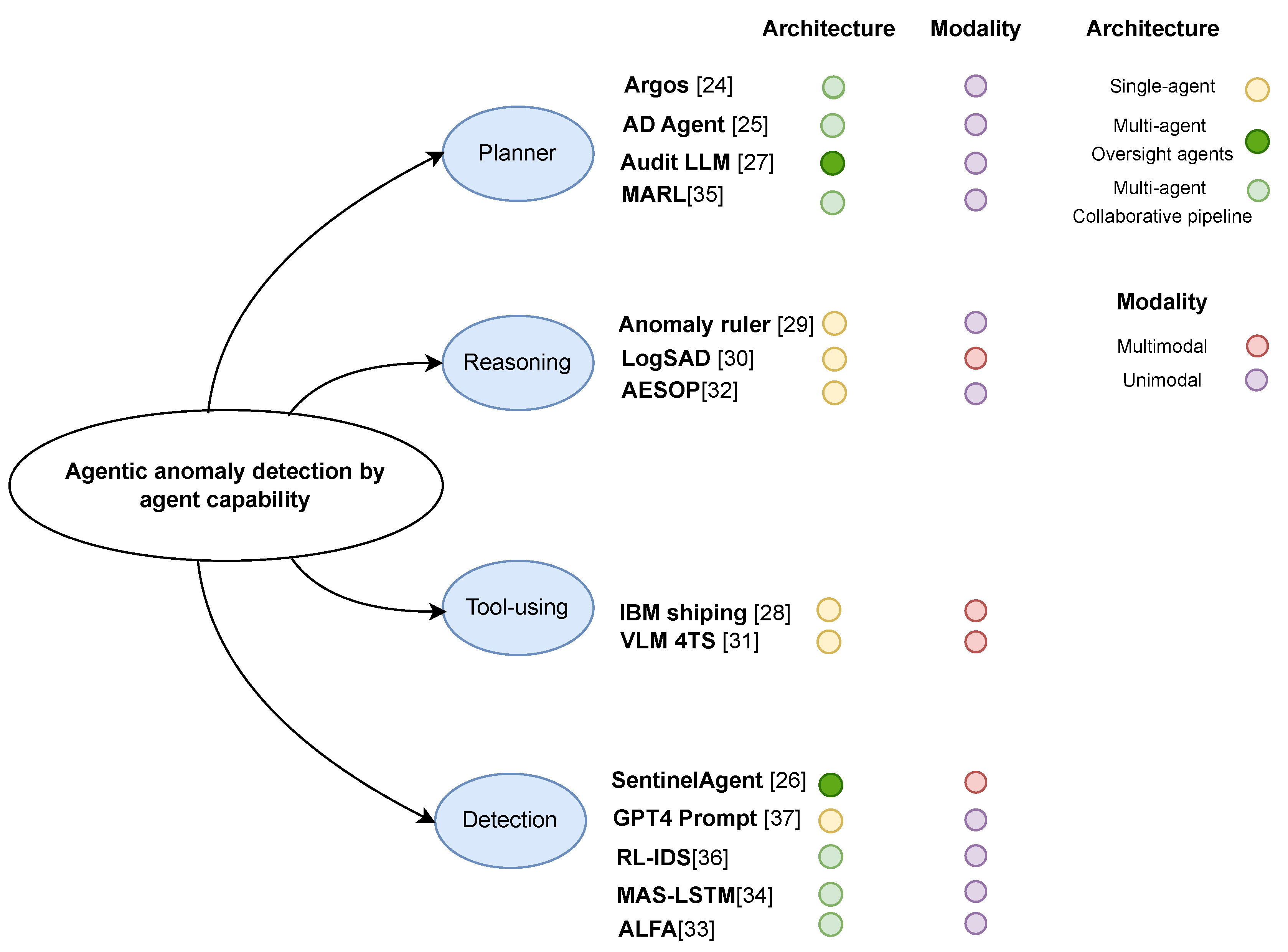
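The multi-agent patterns described in Section 2.1.2 (sequential composition, parallel detectors combined by averaging, and an oversight check) can be illustrated with a minimal Python sketch. Everything here is a hypothetical stand-in: the toy agents `preprocess`, `extract_features`, and `score`, the helper names, the agreement-based consistency measure, and the threshold parameter `tau` are assumptions for illustration and are not taken from ARGOS, AD-AGENT, MAS-LSTM, SentinelAgent, or Audit-LLM.

```python
from functools import reduce
from statistics import mean
from typing import Any, Callable, Sequence, Tuple

Agent = Callable[[Any], Any]


def compose(agents: Sequence[Agent]) -> Agent:
    """Chain agents so that the output of each one feeds the next."""
    def pipeline(x: Any) -> Any:
        return reduce(lambda value, agent: agent(value), agents, x)
    return pipeline


# Hypothetical toy agents standing in for LLM- or model-based components.
def preprocess(series: Sequence[float]) -> list:
    """Center the series around its mean."""
    m = mean(series)
    return [v - m for v in series]


def extract_features(centered: Sequence[float]) -> float:
    """Use the largest absolute deviation as a single feature."""
    return max(abs(v) for v in centered)


def score(feature: float) -> float:
    """Map the feature to an anomaly score in [0, 1] (assumed scale of 10)."""
    return min(1.0, feature / 10.0)


detect = compose([preprocess, extract_features, score])


def average_vote(scores: Sequence[float], threshold: float = 0.5) -> bool:
    """Parallel variant: detectors score separate streams, then average."""
    return mean(scores) >= threshold


def consistency_check(decisions: Sequence[bool], tau: float = 0.6) -> Tuple[float, bool]:
    """Toy oversight: C is the fraction of agents agreeing with the majority.

    Returns (C, flagged), where flagged is True when C falls below tau.
    """
    labels = [bool(d) for d in decisions]
    majority = sum(labels) * 2 >= len(labels)
    c = sum(label == majority for label in labels) / len(labels)
    return c, c < tau
```

In this sketch, `detect([0, 0, 0, 8])` runs the three agents in order, while `consistency_check` flags a meta-anomaly only when the agents disagree strongly. A real oversight agent would use a richer coherence measure than simple agreement.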

2.2. Agent Capability

2.2.1. Detection-Only Agents

2.2.2. Reasoning Agents

2.2.3. Tool-Using Agents

2.2.4. Planner Agents

2.3. Modality Integration

2.3.1. Unimodal Agentic Detectors

2.3.2. Multimodal Agentic Detectors

3. Multimodal Anomaly Detection

3.1. Foundation Models

3.2. Cross-Modal Fusion Models

3.3. Multimodal Augmentation

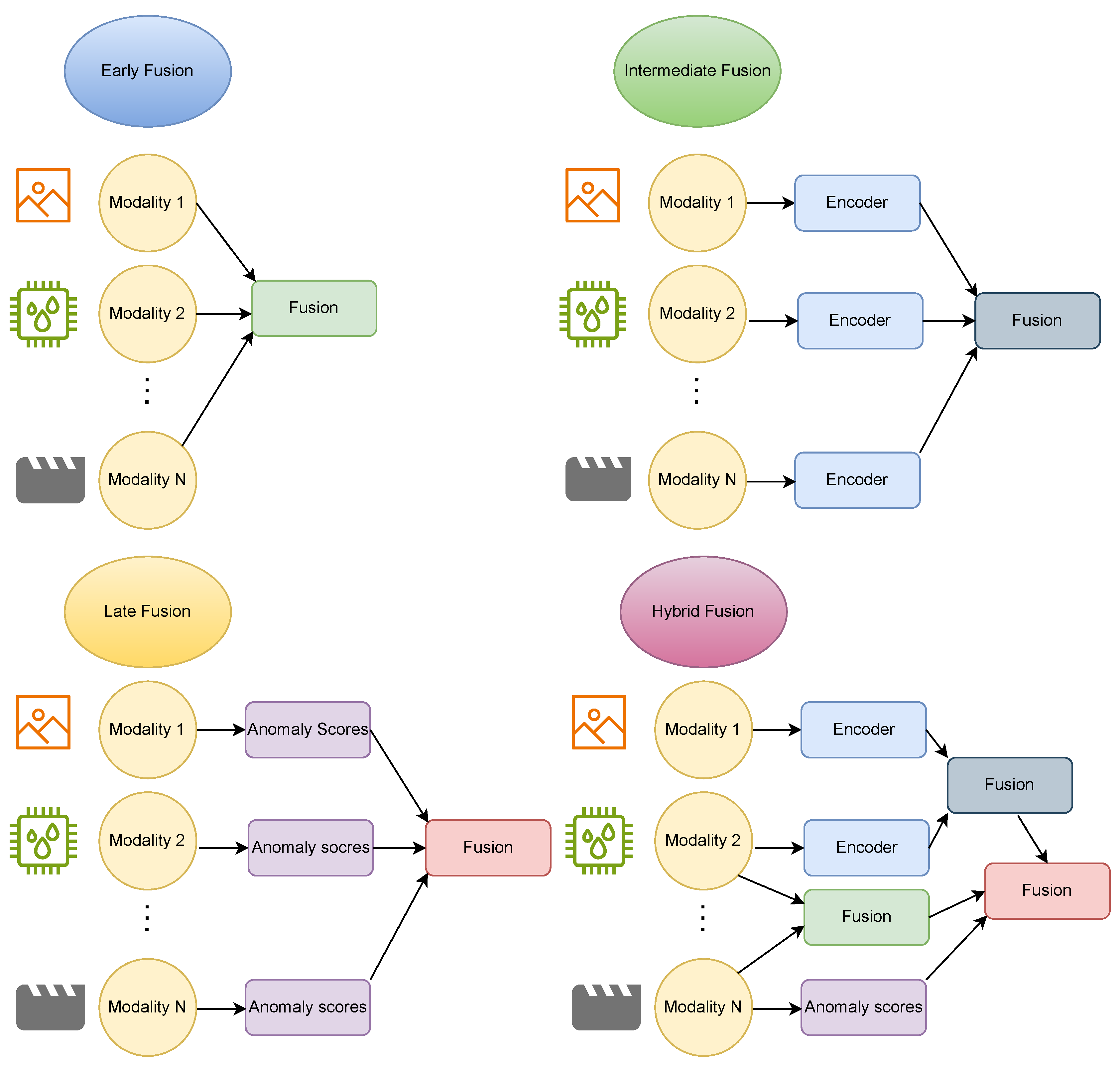

4. Multimodal Fusion Methods

4.1. Fusion Stages

4.1.1. Early Fusion (Data-Level)

4.1.2. Intermediate Fusion (Feature-Level)

4.1.3. Late Fusion (Decision-Level)

4.2. Fusion Operations/Architectures

4.2.1. Concatenation

4.2.2. Element-wise Addition or Weighted Sum

4.2.3. Multiplicative or Bilinear Fusion

4.2.4. Attention-Based Fusion

4.2.5. Feature Mapping

4.2.6. Modality and Temporal Alignment

4.2.7. Graph-Based Fusion

4.3. Fusion Design Principles and Trade-offs

- Fuse at Multiple Levels: Combining mid-level feature fusion with late-stage score fusion enhances robustness. Early fusion may overlook modality-specific noise, while multi-scale fusion captures both structural and semantic information [59].

- Selective Feature Fusion: Not all layers contribute equally to cross-modal alignment. Shallow or mid-level features often provide better spatial or temporal grounding across modalities than very early or late representations.

- Learnable and Adaptive Fusion: Models that use attention, gating, or mixture-of-experts mechanisms dynamically adjust modality contributions, outperforming static fusion strategies. This adaptiveness is especially valuable in heterogeneous or noisy environments [56].

- Efficiency: Fusing mid-level or compact embeddings is more computationally efficient than fusing raw inputs. Techniques such as bottleneck layers and dimensionality reduction preserve informative content while reducing overhead.

- Domain-Specific Design: Fusion should be tailored to the task and data characteristics. For instance, if one modality is unreliable or frequently missing, late fusion provides robustness. If anomalies depend on fine-grained correlations across streams (e.g., visual flashes coinciding with audio spikes), then mid-level feature fusion is essential. Domain knowledge can guide initial architecture choices, with further refinement via ablation or architecture search.
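Two of the principles above, adaptive weighting of modalities and robustness of late fusion to a missing modality, can be sketched together in a few lines of Python. This is a minimal illustration under stated assumptions: the function name `gated_late_fusion` is hypothetical, and the gate logits are passed in as fixed numbers, whereas in a learned system they would typically be produced by a trained gating network.

```python
import math
from typing import Mapping, Optional


def gated_late_fusion(
    scores: Mapping[str, Optional[float]],
    gate_logits: Mapping[str, float],
) -> float:
    """Fuse per-modality anomaly scores with softmax gates.

    A score of None marks a missing modality; the softmax is taken only
    over the modalities that are present, so the remaining ones still
    produce a valid fused score.
    """
    available = {m: s for m, s in scores.items() if s is not None}
    if not available:
        raise ValueError("no modality available")
    # Subtract the largest logit for numerical stability.
    top = max(gate_logits[m] for m in available)
    weights = {m: math.exp(gate_logits[m] - top) for m in available}
    total = sum(weights.values())
    return sum(weights[m] / total * s for m, s in available.items())
```

With equal gates, `gated_late_fusion({"video": 0.8, "audio": 0.2}, {"video": 0.0, "audio": 0.0})` reduces to a plain average; if the audio score is `None`, the fused score falls back to the video score alone.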

5. Multimodal Datasets and Benchmarks

6. Challenges, Mitigations and Future Works

6.1. Data Scarcity

6.2. Multimodal Representation and Alignment

6.3. Agentic Reasoning and LLM Limitations

6.4. Real-Time Inference and Scalability

6.5. Interpretability

6.6. Evaluation and Benchmarking

6.7. Theoretical Foundations

| Challenge | Current Mitigation | Future Directions |
|---|---|---|
| Data scarcity | Synthetic-anomaly generation via GANs / diffusion, data augmentation | Advanced conditional generation (text / prompts), hybrid GAN–diffusion approaches, few-shot simulation |
| Modality alignment | Cross-modal embeddings (CLIP, contrastive losses), feature-fusion layers | Unified multimodal representations (transformers), dynamic fusion strategies, multimodal foundation models |
| LLM domain mismatch | Prompt engineering, few-shot normal exemplars, rule-based prompting | Domain-adapted LLMs, hybrid neuro-symbolic systems, anomaly-aware fine-tuning |
| Real-time & scalability | Knowledge distillation (LLM to lightweight student), fast/slow pipelines | Model compression, efficient LLM architectures, adaptive inference scheduling |
| Benchmarking & evaluation | Emerging datasets (MMAD, AnoVox) | Comprehensive multimodal AD benchmarks & metrics, standardized anomaly taxonomies |
| Theoretical foundations | Ad-hoc frameworks (e.g., graph models for multi-agent systems) | Formal analysis of LLM-agent behavior, robustness theory, multi-agent anomaly theory |

7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Yin, S.; Ding, S.X.; Xie, X.; Luo, H. A review on basic data-driven approaches for industrial process monitoring. IEEE Transactions on Industrial Electronics 2014, 61, 6418–6428. [CrossRef]

- Nizam, H.; Zafar, S.; Lv, Z.; Wang, F.; Hu, X. Real-Time Deep Anomaly Detection Framework for Multivariate Time-Series Data in Industrial IoT. IEEE Sensors Journal 2022, 22, 22836–22849. [CrossRef]

- Park, T. Enhancing Anomaly Detection in Financial Markets with an LLM-based Multi-Agent Framework. arXiv preprint arXiv:2403.19735 2024.

- Ukil, A.; Bandyopadhyay, S.; Puri, C.; Pal, A. IoT healthcare analytics: The importance of anomaly detection. In Proceedings of the International Conference on Advanced Information Networking and Applications (AINA), 2016, pp. 994–997. [CrossRef]

- García-Teodoro, P.; Díaz-Verdejo, J.; Maciá-Fernández, G.; Vázquez, E. Anomaly-based network intrusion detection: Techniques, systems and challenges. Computers & Security 2009, 28, 18–28. [CrossRef]

- Bhuyan, M.H.; Bhattacharyya, D.K.; Kalita, J.K. Network anomaly detection: Methods, systems and tools. IEEE Communications Surveys & Tutorials 2014, 16, 303–336. [CrossRef]

- Belay, M.A.; Rasheed, A.; Salvo Rossi, P. Digital Twin-Based Federated Transfer Learning for Anomaly Detection in Industrial IoT. In Proceedings of the 2025 IEEE Symposium on Computational Intelligence on Engineering/Cyber Physical Systems (CIES), 2025. [CrossRef]

- Belay, M.A.; Rasheed, A.; Salvo Rossi, P. Digital Twin Knowledge Distillation for Federated Semi-Supervised Industrial IoT DDoS Detection. In Proceedings of the 2025 IEEE Symposium on Computational Intelligence in Security, Defence and Biometrics Companion (CISDB Companion), 2025, pp. 1–5. [CrossRef]

- Belay, M.A.; Rasheed, A.; Salvo Rossi, P. Digital Twin-Driven Communication-Efficient Federated Anomaly Detection for Industrial IoT. arXiv preprint arXiv:2601.01701 2026.

- Van Wyk, F.; Wang, Y.; Khojandi, A.; Masoud, N. Real-time sensor anomaly detection and identification in automated vehicles. IEEE Transactions on Intelligent Transportation Systems 2020, 21, 1264–1276. [CrossRef]

- Chandola, V.; Banerjee, A.; Kumar, V. Anomaly detection: A survey. ACM Computing Surveys 2009, 41. [CrossRef]

- Belay, M.A.; Blakseth, S.S.; Rasheed, A.; Salvo Rossi, P. Unsupervised Anomaly Detection for IoT-Based Multivariate Time Series: Existing Solutions, Performance Analysis and Future Directions. Sensors 2023, 23, 2844. [CrossRef]

- Belay, M.A.; Rasheed, A.; Salvo Rossi, P. Sparse Non-Linear Vector Autoregressive Networks for Multivariate Time Series Anomaly Detection. IEEE Signal Processing Letters 2025, 32, 331–335. [CrossRef]

- Belay, M.A.; Bernardino, L.F.; Rasheed, A.; Montañés, R.M.; Salvo Rossi, P. Unsupervised Leak Detection for Heat Recovery Steam Generators in Combined-Cycle Gas and Steam Turbine Power Plants. IEEE Sensors Journal 2025. Under review.

- Belay, M.A.; Rasheed, A.; Salvo Rossi, P. Autoregressive Density Estimation Transformers for Multivariate Time Series Anomaly Detection. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2025. [CrossRef]

- Pang, G.; Shen, C.; Cao, L.; van den Hengel, A. Deep Learning for Anomaly Detection: A Review. ACM Computing Surveys 2021, 54. [CrossRef]

- Liu, J.; Ma, Z.; Wang, Z.; Zou, C.; Ren, J.; Wang, Z.; Song, L.; Hu, B.; Liu, Y.; Leung, V.C.M. A Survey on Diffusion Models for Anomaly Detection. arXiv preprint arXiv:2501.11430 2025.

- Belay, M.A.; Rasheed, A.; Salvo Rossi, P. MTAD: Multiobjective Transformer Network for Unsupervised Multisensor Anomaly Detection. IEEE Sensors Journal 2024, 24, 20254–20265. [CrossRef]

- Belay, M.A.; Rasheed, A.; Salvo Rossi, P. Self-Supervised Modular Architecture for Multi-Sensor Anomaly Detection and Localization. In Proceedings of the 2024 IEEE Conference on Artificial Intelligence (CAI), 2024, pp. 1278–1283. [CrossRef]

- Haghipour, A.; Tabella, G.; Stang, J.; Salvo Rossi, P. Sensor Validation in Carbon Capture and Storage Infrastructures. IEEE Sensors Letters 2025.

- Belay, M.A.; Rasheed, A.; Salvo Rossi, P. Multivariate Time Series Anomaly Detection via Low-Rank and Sparse Decomposition. IEEE Sensors Journal 2024, 24, 34942–34952. [CrossRef]

- Lin, Y.; Chang, Y.; Tong, X.; Yu, J.; Liotta, A.; Huang, G.; Song, W.; Zeng, D.; Wu, Z.; Wang, Y.; et al. A Survey on RGB, 3D, and Multimodal Approaches for Unsupervised Industrial Image Anomaly Detection. arXiv preprint arXiv:2410.21982 2025.

- Li, W.; Zheng, B.; Xu, X.; Gan, J.; Lu, F.; Li, X.; Ni, N.; Tian, Z.; Huang, X.; Gao, S.; et al. Multi-Sensor Object Anomaly Detection: Unifying Appearance, Geometry, and Internal Properties. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025, pp. 9984–9993.

- Willibald, C.; Sliwowski, D.; Lee, D. Multimodal Anomaly Detection with a Mixture-of-Experts. arXiv preprint arXiv:2506.19077 2025.

- Xi, Z.; Chen, W.; Guo, X.; He, W.; Ding, Y.; Hong, B.; Zhang, M.; Wang, J.; Jin, S.; Zhou, E.; et al. The Rise and Potential of Large Language Model Based Agents: A Survey. arXiv preprint arXiv:2309.07864 2023.

- Acharya, D.B.; Kuppan, K.; Divya, B. Agentic AI: Autonomous Intelligence for Complex Goals - A Comprehensive Survey. IEEE Access 2025. [CrossRef]

- Plaat, A.; van Duijn, M.; van Stein, N.; Preuss, M.; van der Putten, P.; Batenburg, K.J. Agentic Large Language Models, a survey. arXiv preprint arXiv:2503.23037 2025.

- Russell-Gilbert, A.; Sommers, A.; Thompson, A.; Cummins, L.; Mittal, S.; Rahimi, S.; Seale, M.; Jaboure, J.; Arnold, T.; Church, J. AAD-LLM: Adaptive Anomaly Detection Using Large Language Models. In Proceedings of the 2024 IEEE International Conference on Big Data (BigData), 2024, pp. 4194–4203. [CrossRef]

- Chalapathy, R.; Chawla, S. Deep Learning for Anomaly Detection: A Survey. arXiv preprint arXiv:1901.03407 2019. [CrossRef]

- Cook, A.A.; Misirli, G.; Fan, Z. Anomaly Detection for IoT Time-Series Data: A Survey. IEEE Internet of Things Journal 2020, 7, 6481–6494. [CrossRef]

- Erhan, L.; Ndubuaku, M.; Di Mauro, M.; Song, W.; Chen, M.; Fortino, G.; Bagdasar, O.; Liotta, A. Smart anomaly detection in sensor systems: A multi-perspective review. Information Fusion 2021, 67, 64–79. [CrossRef]

- Choi, K.; Yi, J.; Park, C.; Yoon, S. Deep Learning for Anomaly Detection in Time-Series Data: Review, Analysis, and Guidelines. IEEE Access 2021, 9, 120043–120065. [CrossRef]

- Garg, A.; Zhang, W.; Samaran, J.; Savitha, R.; Foo, C.S. An Evaluation of Anomaly Detection and Diagnosis in Multivariate Time Series. IEEE Transactions on Neural Networks and Learning Systems 2022, 33, 2508–2517. [CrossRef]

- Gu, Y.; Xiong, Y.; Mace, J.; Jiang, Y.; Hu, Y.; Kasikci, B.; Cheng, P. Argos: Agentic Time-Series Anomaly Detection with Autonomous Rule Generation via Large Language Models. arXiv preprint arXiv:2501.14170 2025.

- Yang, T.; Liu, J.; Siu, W.; Wang, J.; Qian, Z.; Song, C.; Cheng, C.; Hu, X.; Zhao, Y. AD-AGENT: A Multi-agent Framework for End-to-end Anomaly Detection. arXiv preprint arXiv:2505.12594 2025.

- He, X.; Wu, D.; Zhai, Y.; Sun, K. SentinelAgent: Graph-based Anomaly Detection in Multi-Agent Systems. arXiv preprint arXiv:2505.24201 2025.

- Song, C.; Ma, L.; Zheng, J.; Liao, J.; Kuang, H.; Yang, L. Audit-LLM: Multi-Agent Collaboration for Log-based Insider Threat Detection. arXiv preprint arXiv:2408.08902 2024.

- Timms, A.; Langbridge, A.; O’Donncha, F. Agentic Anomaly Detection for Shipping. In Proceedings of the NeurIPS 2024 Workshop on Open-World Agents, 2024.

- Ren, J.; Tang, T.; Jia, H.; Xu, Z.; Fayek, H.; Li, X.; Ma, S.; Xu, X.; Xia, F. Foundation Models for Anomaly Detection: Vision and Challenges. arXiv preprint arXiv:2502.06911 2025.

- Zhang, J.; Wang, G.; Jin, Y.; Huang, D. Towards Training-free Anomaly Detection with Vision and Language Foundation Models. arXiv preprint arXiv:2503.18325 2025.

- He, Z.; Alnegheimish, S.; Reimherr, M. Harnessing Vision-Language Models for Time Series Anomaly Detection. arXiv preprint 2025.

- Sinha, R.; Elhafsi, A.; Agia, C.; Foutter, M.; Schmerling, E.; Pavone, M. Real-Time Anomaly Detection and Reactive Planning with Large Language Models. arXiv preprint 2024.

- Zhu, J.; Cai, S.; Deng, F.; Ooi, B.C.; Wu, J. Do LLMs Understand Visual Anomalies? Uncovering LLM’s Capabilities in Zero-shot Anomaly Detection. In Proceedings of the ACM Multimedia Conference, 2024, p. 10. [CrossRef]

- Qin, Z.; Luo, Q.; Nong, X.; Chen, X.; Zhang, H.; Wong, C.U.I. MAS-LSTM: A Multi-Agent LSTM-Based Approach for Scalable Anomaly Detection in IIoT Networks. Processes 2025, 13, 753. [CrossRef]

- Kazari, K.; Shereen, E.; Dán, G. Decentralized Anomaly Detection in Cooperative Multi-Agent Reinforcement Learning. In Proceedings of the International Joint Conference on Artificial Intelligence (IJCAI), 2023, Vol. 1, pp. 162–170. [CrossRef]

- Tellache, A.; Mokhtari, A.; Korba, A.A.; Ghamri-Doudane, Y. Multi-agent Reinforcement Learning-based Network Intrusion Detection System. arXiv preprint 2024.

- Dong, M.; Huang, H.; Cao, L. Can LLMs Serve As Time Series Anomaly Detectors? arXiv preprint 2024.

- Alnegheimish, S.; Nguyen, L.; Berti-Equille, L.; Veeramachaneni, K. Large language models can be zero-shot anomaly detectors for time series? arXiv preprint 2024.

- Yang, T.; Nian, Y.; Li, S.; Xu, R.; Li, Y.; Li, J.; Xiao, Z.; Hu, X.; Rossi, R.; Ding, K.; et al. AD-LLM: Benchmarking Large Language Models for Anomaly Detection. arXiv preprint 2025.

- Cao, Y.; Yang, S.; Li, C.; Xiang, H.; Qi, L.; Liu, B.; Li, R.; Liu, M. TAD-Bench: A Comprehensive Benchmark for Embedding-Based Text Anomaly Detection. arXiv preprint 2025.

- Derakhshan, M.; Ceravolo, P.; Mohammadi, F. Leveraging GPT-4o Efficiency for Detecting Rework Anomaly in Business Processes. arXiv preprint 2025.

- Ding, C.; Sun, S.; Zhao, J. MST-GAT: A multimodal spatial–temporal graph attention network for time series anomaly detection. Information Fusion 2023, 89, 527–536. [CrossRef]

- Han, X.; Chen, S.; Fu, Z.; Feng, Z.; Fan, L.; An, D.; Wang, C.; Guo, L.; Meng, W.; Zhang, X.; et al. Multimodal Fusion and Vision-Language Models: A Survey for Robot Vision. arXiv preprint 2025.

- Shangguan, W.; Wu, H.; Niu, Y.; Yin, H.; Yu, J.; Chen, B.; Huang, B. CPIR: Multimodal Industrial Anomaly Detection via Latent Bridged Cross-modal Prediction and Intra-modal Reconstruction. Advanced Engineering Informatics 2025, 65, 103240. [CrossRef]

- Costanzino, A.; Ramirez, P.Z.; Lisanti, G.; Di Stefano, L. Multimodal Industrial Anomaly Detection by Crossmodal Feature Mapping. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024. [CrossRef]

- Ghadiya, A.; Kar, P.; Chudasama, V.; Wasnik, P. Cross-Modal Fusion and Attention Mechanism for Weakly Supervised Video Anomaly Detection. arXiv preprint 2024.

- Horwitz, E.; Hoshen, Y. Back to the Feature: Classical 3D Features are (Almost) All You Need for 3D Anomaly Detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), 2023, pp. 2968–2977. [CrossRef]

- Wang, Y.; Peng, J.; Zhang, J.; Yi, R.; Wang, Y.; Wang, C. Multimodal Industrial Anomaly Detection via Hybrid Fusion. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023, pp. 8032–8041. [CrossRef]

- Long, K.; Xie, G.; Ma, L.; Liu, J.; Lu, Z. Revisiting Multimodal Fusion for 3D Anomaly Detection from an Architectural Perspective. arXiv preprint 2024.

- Gu, Z.; Zhu, B.; Zhu, G.; Chen, Y.; Tang, M.; Wang, J. AnomalyGPT: Detecting Industrial Anomalies Using Large Vision-Language Models. In Proceedings of the AAAI Conference on Artificial Intelligence, 2024, Vol. 38, pp. 1932–1940. [CrossRef]

- Li, W.; Chu, G.; Chen, J.; Xie, G.S.; Shan, C.; Zhao, F. LAD-Reasoner: Tiny Multimodal Models are Good Reasoners for Logical Anomaly Detection. arXiv preprint arXiv:2504.12749 2025.

- Xu, X.; Cao, Y.; Chen, Y.; Shen, W.; Huang, X. Customizing Visual-Language Foundation Models for Multi-modal Anomaly Detection and Reasoning. In Proceedings of the IEEE 28th International Conference on Computer Supported Cooperative Work in Design (CSCWD), 2025.

- Xu, J.; Lo, S.Y.; Safaei, B.; Patel, V.M.; Dwivedi, I. Towards Zero-Shot Anomaly Detection and Reasoning with Multimodal Large Language Models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025.

- Wu, P.; Su, W.; Pang, G.; Sun, Y.; Yan, Q.; Wang, P.; Zhang, Y. AVadCLIP: Audio-Visual Collaboration for Robust Video Anomaly Detection. arXiv preprint arXiv:2504.04495 2025.

- Barusco, M.; Borsatti, F.; Pezze, D.D.; Paissan, F.; Farella, E.; Susto, G.A. From Vision to Sound: Advancing Audio Anomaly Detection with Vision-Based Algorithms. arXiv preprint arXiv:2502.18328 2025.

- Lee, B.; Won, J.; Lee, S.; Shin, J. CLIP Meets Diffusion: A Synergistic Approach to Anomaly Detection. arXiv preprint arXiv:2506.11772 2025.

- Wang, Y.; Zhao, Y.; Huo, Y.; Lu, Y. Multimodal anomaly detection in complex environments using video and audio fusion. Scientific Reports 2025, 15, 1–22. [CrossRef]

- Qu, X.; Liu, Z.; Wu, C.Q.; Hou, A.; Yin, X.; Chen, Z. MFGAN: Multimodal Fusion for Industrial Anomaly Detection Using Attention-Based Autoencoder and Generative Adversarial Network. Sensors 2024, 24, 637. [CrossRef]

- Chen, D.; Hu, Z.; Fan, P.; Zhuang, Y.; Li, Y.; Liu, Q.; Jiang, X.; Xu, M. KKA: Improving Vision Anomaly Detection through Anomaly-related Knowledge from Large Language Models. arXiv preprint arXiv:2502.14880 2025.

- Zhang, H.; Zhu, Q.; Guan, J.; Liu, H.; Xiao, F.; Tian, J.; Mei, X.; Liu, X.; Wang, W. First-Shot Unsupervised Anomalous Sound Detection With Unknown Anomalies Estimated by Metadata-Assisted Audio Generation. IEEE/ACM Transactions on Audio, Speech, and Language Processing 2023, 32, 1188–1201. [CrossRef]

- Lin, S.; Wang, C.; Ding, X.; Wang, Y.; Du, B.; Song, L.; Wang, C.; Liu, H. A VLM-based Method for Visual Anomaly Detection in Robotic Scientific Laboratories. arXiv preprint arXiv:2506.05405 2025.

- Iqbal, H.; Khalid, U.; Chen, C.; Hua, J. Unsupervised Anomaly Detection in Medical Images Using Masked Diffusion Model. In Proceedings of the Machine Learning in Medical Imaging. MLMI 2023 (Lecture Notes in Computer Science). Springer, 2023, Vol. 14348, pp. 372–381. [CrossRef]

- Liang, M.; Yang, B.; Chen, Y.; Hu, R.; Urtasun, R. Multi-Task Multi-Sensor Fusion for 3D Object Detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2019, pp. 7337–7345. [CrossRef]

- Kaneko, Y.; Miah, A.S.M.; Hassan, N.; Lee, H.S.; Jang, S.W.; Shin, J. Multimodal Attention-Enhanced Feature Fusion-based Weekly Supervised Anomaly Violence Detection. IEEE Open Journal of the Computer Society 2024, 5, 1–12. [CrossRef]

- Zang, R.; Guo, H.; Yang, J.; Liu, J.; Li, Z.; Zheng, T.; Shi, X.; Zheng, L.; Zhang, B. MLAD: A Unified Model for Multi-system Log Anomaly Detection. arXiv preprint arXiv:2401.07655 2024.

- Nagrani, A.; Yang, S.; Arnab, A.; Jansen, A.; Schmid, C.; Sun, C. Attention Bottlenecks for Multimodal Fusion. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2021, Vol. 34, pp. 14200–14213.

- Gan, C.; Fu, X.; Feng, Q.; Zhu, Q.; Cao, Y.; Zhu, Y. A multimodal fusion network with attention mechanisms for visual–textual sentiment analysis. Expert Systems with Applications 2024, 242, 122731. [CrossRef]

- Arevalo, J.; Solorio, T.; Montes-y-Gómez, M.; González, F.A. Gated Multimodal Units for Information Fusion. In Proceedings of the 5th International Conference on Learning Representations (ICLR), Workshop Track Proceedings, 2017.

- Lin, T.Y.; RoyChowdhury, A.; Maji, S. Bilinear CNN Models for Fine-grained Visual Recognition. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), 2015, pp. 1449–1457.

- Fukui, A.; Park, D.H.; Yang, D.; Rohrbach, A.; Darrell, T.; Rohrbach, M. Multimodal Compact Bilinear Pooling for Visual Question Answering and Visual Grounding. In Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP), 2016, pp. 457–468. [CrossRef]

- Jeong, S.; Moloco, J.P.; Imani, M. Uncertainty-Weighted Image-Event Multimodal Fusion for Video Anomaly Detection. arXiv preprint 2025.

- Ektefaie, Y.; Dasoulas, G.; Noori, A.; Farhat, M.; Zitnik, M. Multimodal learning with graphs. Nature Machine Intelligence 2023, 5, 340–350. [CrossRef]

- Passos, L.A.; Papa, J.P.; Del Ser, J.; Hussain, A.; Adeel, A. Multimodal audio-visual information fusion using canonical-correlated Graph Neural Network for energy-efficient speech enhancement. Information Fusion 2023, 90, 1–11. [CrossRef]

- Xia, C.; Liu, C.; Zhou, Y.; Li, K.C. VLDFNet: Views-Graph and Latent Feature Disentangled Fusion Network for Multimodal Industrial Anomaly Detection. IEEE Transactions on Instrumentation and Measurement 2025. [CrossRef]

- Jiang, X.; Li, J.; Deng, H.; Liu, Y.; Gao, B.B.; Zhou, Y.; Li, J.; Wang, C.; Zheng, F. MMAD: A Comprehensive Benchmark for Multimodal Large Language Models in Industrial Anomaly Detection. arXiv preprint 2025.

- Bogdoll, D.; Hamdard, I.; Rößler, L.N.; Geisler, F.; Bayram, M.; Wang, F.; Imhof, J.; de Campos, M.; Tabarov, A.; Yang, Y.; et al. AnoVox: A Benchmark for Multimodal Anomaly Detection in Autonomous Driving. arXiv preprint arXiv:2402.13846 2024.

- Leporowski, B.; Bakhtiarnia, A.; Bonnici, N.; Muscat, A.; Zanella, L.; Wang, Y.; Iosifidis, A. MAVAD: Audio-Visual Dataset and Method for Anomaly Detection in Traffic Videos. In Proceedings of the IEEE International Conference on Image Processing (ICIP), 2024, pp. 1106–1112. [CrossRef]

- Bergmann, P.; Batzner, K.; Fauser, M.; Sattlegger, D.; Steger, C. Beyond Dents and Scratches: Logical Constraints in Unsupervised Anomaly Detection and Localization. International Journal of Computer Vision 2022, 130, 947–969. [CrossRef]

- Bergmann, P.; Jin, X.; Sattlegger, D.; Steger, C. The MVTec 3D-AD Dataset for Unsupervised 3D Anomaly Detection and Localization. In Proceedings of the International Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications (VISIGRAPP), 2021, Vol. 5, pp. 202–213. [CrossRef]

- Leporowski, B.; Tola, D.; Hansen, C.; Iosifidis, A. AURSAD: Universal Robot Screwdriving Anomaly Detection Dataset. arXiv preprint 2021.

- Yao, Y.; Wang, X.; Xu, M.; Pu, Z.; Wang, Y.; Atkins, E.; Crandall, D.J. DoTA: Unsupervised Detection of Traffic Anomaly in Driving Videos. IEEE Transactions on Pattern Analysis and Machine Intelligence 2023, 45, 444–459. [CrossRef]

- Zhao, T.; Zhang, L.; Ma, Y.; Cheng, L. A Survey on Safe Multi-Modal Learning System. arXiv preprint 2024.

- Li, Z.; Yan, Y.; Wang, X.; Ge, Y.; Meng, L. A survey of deep learning for industrial visual anomaly detection. Artificial Intelligence Review 2025, 58, 1–82. [CrossRef]

- Zhang, X.; Xu, M.; Zhou, X. RealNet: A Feature Selection Network with Realistic Synthetic Anomaly for Anomaly Detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024. [CrossRef]

- Bi, Y.; Huang, L.; Clarenbach, R.; Ghotbi, R.; Karlas, A.; Navab, N.; Jiang, Z. Synomaly Noise and Multi-Stage Diffusion: A Novel Approach for Unsupervised Anomaly Detection in Ultrasound Imaging. arXiv preprint 2024.

- Ma, X.; Chen, H.; Deng, Y. Improving Multimodal Learning Balance and Sufficiency through Data Remixing. arXiv preprint 2025.

- Xu, R.; Ding, K. Large Language Models for Anomaly and Out-of-Distribution Detection: A Survey. In Proceedings of the Findings of the Association for Computational Linguistics: NAACL, 2025, pp. 5992–6012. [CrossRef]

- Rakhmonov, A.A.U.; Subramanian, B.; Olimov, B.; Kim, J. Extensive Knowledge Distillation Model: An End-to-End Effective Anomaly Detection Model for Real-Time Industrial Applications. IEEE Access 2023, 11, 69750–69761. [CrossRef]

- Bogdoll, D.; Hamdard, I.; Rößler, L.N.; Geisler, F.; Bayram, M.; Wang, F.; Imhof, J.; de Campos, M.; Tabarov, A.; Yang, Y.; et al. AnoVox: A Benchmark for Multimodal Anomaly Detection in Autonomous Driving. arXiv preprint arXiv:2402.13846 2024.

| Framework | Agent Type | Capabilities | Modality / Scope | Evaluation / Dataset |
|---|---|---|---|---|
| ARGOS [34] | Planner (Multi-agent LLM) | Workflow planning, tool use, external retrieval | Multimodal (TS + logs + web-text + meta) | KPI, Yahoo, internal Microsoft data |
| AD-AGENT [35] | Multi-agent (LLM pipeline) | Instruction parsing, model selection, code generation | Tabular / Graph / Time-series | ADBench |
| SentinelAgent [36] | Oversight (LLM tool-user) | Graph modeling, oversight, cognitive inconsistency detection | Multimodal logs + plans + MAS interactions | Simulated email assistant; Magentic-One |
| Audit-LLM [37] | Planner (Multi-agent) | Task decomposition, feedback, log auditing | Logs + metadata | Cybersecurity benchmarks |
| IBM Shipping [38] | Single-agent (LLM+tools) | Reasoning on multimodal sensor/knowledge graph (KG) data | Sensor + KG (maritime) | Real shipping operation logs |
| AnomalyRuler [39] | Single-agent (Reasoning) | Rule induction, chain-of-thought reasoning | Video | Few-shot video AD benchmarks |
| LogSAD [40] | Single-agent (Reasoning) | Compositional vision–language reasoning (GPT-4V+CLIP) | Mixed (image + text) | Industrial image and text AD tasks |
| VLM4TS [41] | Tool-using agent | Vision–language transformation, retrieval-augmented AD | Multimodal (time-series + text) | Time-series AD benchmarks |
| AESOP [42] | Single-agent (LLM) | Fast anomaly classification with fallback planning | Visual (robotics) | Quadrotor & vehicle simulations |
| ALFA [43] | VLM (LLM+vision) | Zero-shot visual anomaly detection via prompts | Visual (images) | MVTec, VisA anomaly datasets |
| MAS-LSTM [44] | Multi-agent (LSTM) | Local LSTM voting-based fusion | Time-series (IIoT) | Industrial IoT traffic |
| MARL [45] | Multi-agent (RL) | Decentralized RNN predictors, normality scoring | Observations (MARL) | StarCraft (multi-agent env.) |
| RL-IDS [46] | Multi-agent (RL) | Parallel DQN agents, cost-sensitive learning | Network traffic | CIC-IDS-2017 network dataset |
| GPT-4 Prompt [47] | Detection-only agent | Zero-shot anomaly classification via prompting | Time-series, Text | Prompt-based scoring benchmarks |

| Agent Type | Key Capability | Examples | Strengths | Limitations |
|---|---|---|---|---|
| Detection-Only Agents | Direct labeling via prompt or model output | SigLLM [48]; GPT-4V zero-shot [47] | Simple deployment, fast inference | Prone to LLM errors (hallucinations), no deep reasoning |
| Reasoning Agents | Chain-of-thought, rule induction from few-shot normals | AnomalyRuler [39]; LogSAD [40] | Explainable decisions; uses in-context learning | Sensitive to prompt design; needs clean normal data |
| Tool-Using Agents | External API or tool integration | ARGOS [34]; VLM4TS [41]; SentinelAgent [36] | Context-aware and domain-grounded | Tool dependency; higher latency and complexity |
| Planner Agents | Workflow decomposition, memory usage, multi-step planning | Audit-LLM [37]; ARGOS pipeline; LLM-based FM [3] | Tackles complex multi-stage tasks; dynamic adaptation | Complex architecture; more costly to develop/maintain |

| Method | Modalities | Key Idea/Innovation |
|---|---|---|
| BTF (Back to the Feature) [57] | RGB + 3D (depth) | First RGB–3D industrial AD: add 3D point-cloud features to a pre-trained 2D CNN representation; uses a memory bank of normal feature patches for anomaly scoring. |
| M3DM (Multimodal 3D AD via Hybrid Fusion) [58] | RGB + 3D | Uses frozen transformers (ViT and Point-MAE) to extract rich features and a memory bank for multimodal features. Hybrid fusion strategy with point-level feature alignment improves on BTF. |
| CFM (Crossmodal Feature Mapping) [55] | RGB + 3D | Learns mapping functions to translate 2D features into 3D and vice versa using only normal data. Anomalies detected via disagreement beyond threshold; eliminates memory bank. |
| CPIR (Cross-modal Prediction & Intra-modal Reconstruction) [54] | RGB + 3D | Enhances cross-modal mapping by adding autoencoder reconstruction and a shared latent bridge (LB3M) to ensure anomalies in one modality are caught while maintaining consistency. |
| 3D-ADNAS (AD via neural architecture search) [59] | RGB + 3D | Uses Neural Architecture Search to find optimal multimodal fusion. Two-level search: intra-module (fusion ops and stage) and inter-module (connection of fusion modules). |
| WS-VAD (weakly supervised video anomaly detection) [56] | Video + Audio | Weakly supervised video anomaly detection using Cross-Modal Fusion Adapter (CFA) and Hyperbolic Graph Attention (HLGAtt). CFA gates noisy/dominant modalities; HLGAtt links segments via hyperbolic embeddings. |
| AnomalyGPT [60] | Image + Text | Uses large vision–language model (MiniGPT-4) for AD. Prompted fine-tuning maps simulated anomaly images to descriptive text. Image decoder adds fine-grained vision; learned prompts adapt LVLM. |
| LAD-Reasoner [61] | Image + Text | “Tiny” (3B) multimodal language model trained for logical anomaly detection with natural language explanations. Two-stage training: supervised fine-tuning (SFT) + GRPO reinforcement for reasoning. Based on Qwen-VL. |

| Fusion Methods | Description | Pros | Cons |
|---|---|---|---|
| Early Fusion | Merge raw inputs or low-level features, then a single model processes them. E.g., treat LiDAR depth as extra image channels. | Captures raw cross-modal correlations; simple implementation. | Modalities must be aligned; model may be overwhelmed by heterogeneous input. |
| Intermediate Fusion | Separate encoders, fuse at intermediate layer(s) via concat, add, attention, etc. | Balances modality specialization and interaction; learnable fusion can emphasize important features. | Need to choose when and how to fuse (hyperparameters); improper fusion point can hurt performance. |
| Late Fusion | Independent anomaly scores or decisions per modality, combined at end (e.g., weighted average or voting). | Each modality can be optimized/tuned separately; interpretable contributions; robust if one modality fails (others still contribute). | Loses benefit of joint feature learning; needs method to set weights or logic for combining decisions. |
| Hybrid / Multi-stage | Fuse at multiple points or use a mix of the above (including multi-modal transformers). | Very flexible, can capture both low-level and high-level interactions; often highest accuracy. | Increased complexity; requires sufficient data; harder to interpret and configure. |
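The difference between the early, intermediate, and late fusion rows of the table above comes down to where in the processing chain the modalities meet. The following Python sketch makes that concrete. It is only a toy under stated assumptions: the inputs are taken to be residual vectors (deviation from a normal reference), the L2 norm stands in for an anomaly scorer, and `summary_encoder` is a hypothetical hand-written encoder in place of a learned one.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]
Encoder = Callable[[Vector], Vector]
Scorer = Callable[[Sequence[float]], float]


def l2_norm(v: Sequence[float]) -> float:
    """Stand-in anomaly scorer: magnitude of a residual vector."""
    return math.sqrt(sum(x * x for x in v))


def summary_encoder(v: Vector) -> Vector:
    """Hypothetical encoder: mean and range of the input."""
    return [sum(v) / len(v), max(v) - min(v)]


def early_fusion(rgb: Vector, depth: Vector, scorer: Scorer) -> float:
    """Concatenate raw inputs, then score them with a single model."""
    return scorer(rgb + depth)


def intermediate_fusion(rgb: Vector, depth: Vector,
                        enc_rgb: Encoder, enc_depth: Encoder,
                        scorer: Scorer) -> float:
    """Encode each modality separately, fuse features, then score."""
    return scorer(enc_rgb(rgb) + enc_depth(depth))


def late_fusion(rgb: Vector, depth: Vector,
                score_rgb: Scorer, score_depth: Scorer,
                w_rgb: float = 0.5) -> float:
    """Score each modality independently, then combine the scores."""
    return w_rgb * score_rgb(rgb) + (1.0 - w_rgb) * score_depth(depth)
```

For example, with `rgb = [3.0, 0.0]` and `depth = [0.0, 4.0]`, `early_fusion(rgb, depth, l2_norm)` scores the concatenated four-dimensional vector, whereas `late_fusion(rgb, depth, l2_norm, l2_norm)` averages two independently computed scores. A hybrid scheme would combine several of these functions.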

| Dataset | Modalities | Size/Scale | Anomaly Types | Ground Truth |
|---|---|---|---|---|
| AnoVox [86] | RGB + LiDAR | City-scale driving (multisensor) | Spatial and temporal road anomalies | Voxel-level segmentation |
| MAVAD [87] | Video + Audio | 764 videos (11 classes) | Traffic anomalies (e.g., U-turns, obstructions) | Clip-level labels |
| MVTec LOCO [88] | RGB images | 3,644 images (5 categories) | Structural & logical anomalies | Pixel-level masks |
| MVTec 3D-AD [89] | RGB + Depth (3D) | 4,000+ high-resolution scans (10 categories) | Surface & depth irregularities | 2D masks + precise depth |
| MMAD [85] | RGB + Text prompts | 8,366 images with 39,672 QA pairs | Caption-based AD behaviors | QA accuracy & response correctness |
| AURSAD [90] | Multisensor time-series | 2,045 samples | Robot screwdriving anomalies | Sample-level labels |
| DoTA [91] | Video | 4,677 dash-cam clips | Traffic accidents/anomalies | Temporal, spatial, categorical |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).