Submitted: 18 February 2026

Posted: 25 February 2026


Abstract

Keywords:

1. Introduction

2. History as Data Science? The Absence of Information Theory in Historical Methodology

3. Bridging the Gap: Circulating Reference and Historical Sources

3.1. Latour’s Circulating Reference: From Matter to Form

3.2. Tracing the Hybrid Chain: Transformations in a Blended Workflow

3.3. The Data Layer as Intermediate Transformation

1. Input (Visual representation): The digitized map is treated as a matrix of pixels. At this stage, it preserves the visual complexity and the “noise” of the original archival document.

2. Operation (Algorithmic segmentation): An AI model predicts pixel classes (e.g., “building” vs. “background”). This is the act of discretization: forcing unambiguous categories onto a continuous or ambiguous reality.

3. Output (Data layer): The probability maps are converted into vector polygons. These are immutable mobiles: distinct, countable objects that can be moved into databases, separated entirely from the visual context of the map.
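The three steps above can be sketched in a few lines. This is a minimal illustration, not the project's pipeline: the probability map is made up, the 0.5 threshold is an assumption, and bounding boxes of connected pixel groups stand in for the vector polygons of the real workflow. The function names `segment` and `extract_objects` are hypothetical.

```python
from collections import deque


def segment(prob_map, threshold=0.5):
    """Discretize a probability map into a binary mask (1 = building, 0 = background)."""
    return [[1 if p >= threshold else 0 for p in row] for row in prob_map]


def extract_objects(mask):
    """Group 4-connected foreground pixels into discrete, countable objects.

    Each object is reported with its pixel area and its bounding box
    (min_row, min_col, max_row, max_col); a simplification of true polygons.
    """
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    objects = []
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] != 1 or seen[r][c]:
                continue
            # Breadth-first search over the connected region starting here.
            queue = deque([(r, c)])
            seen[r][c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if 0 <= ny < rows and 0 <= nx < cols:
                        if mask[ny][nx] == 1 and not seen[ny][nx]:
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            ys = [p[0] for p in pixels]
            xs = [p[1] for p in pixels]
            objects.append({"area": len(pixels),
                            "bbox": (min(ys), min(xs), max(ys), max(xs))})
    return objects


# Made-up 4x4 probability map: one compact object top-left, one bottom-right.
prob_map = [
    [0.9, 0.8, 0.1, 0.0],
    [0.7, 0.6, 0.2, 0.1],
    [0.1, 0.0, 0.1, 0.9],
    [0.0, 0.1, 0.8, 0.7],
]
buildings = extract_objects(segment(prob_map))
print(buildings)
```

Once the objects exist as records, nothing in them recalls the pixel values between 0.5 and 1.0 that produced them; that is the loss the discretization step introduces.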

3.4. From Source to Data: The Chain of Circulating Reference

- Data are not atomic facts, but highly processed, mobile transformations optimized for manipulation.

- Information is not “contextualized data,” but the active maintenance of the link between the abstraction (data) and its origin (source).

- Knowledge implies the mastery of the entire transformation chain—understanding not just what the data signify, but how to retrace the steps of their production and how to account for the decisions of all actors (human and non-human) involved in the process.

3.5. Implications for Data-Driven Historical Research

1. Transparency about transformations: Explicitly documenting what is preserved and lost at each stage.

2. Traceability: Maintaining reversible links between transformed data and source materials.

3. Multiplicity: Creating multiple transformations of the same sources to preserve different features.

4. Bidirectionality: Developing workflows that allow movement both toward abstraction (statistics, models) and toward specificity (individual sources, contexts).
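Traceability and bidirectionality can be made concrete with a small data structure. The sketch below is illustrative only: the `Feature` record, its field names, the sheet identifiers and all values are hypothetical. The point is that each derived record keeps a link to its source, so the same dataset supports both aggregation and a return to individual sheets.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    feature_id: str
    land_use: str
    area_m2: float
    source_sheet: str   # hypothetical identifier of the scanned archival sheet
    pixel_bbox: tuple   # (min_row, min_col, max_row, max_col) within that scan


def aggregate(features):
    """Toward abstraction: total area per land-use class."""
    totals = defaultdict(float)
    for f in features:
        totals[f.land_use] += f.area_m2
    return dict(totals)


def trace(features, land_use):
    """Toward specificity: the sheets and image regions behind one aggregate."""
    return sorted((f.source_sheet, f.pixel_bbox)
                  for f in features if f.land_use == land_use)


# Made-up example records.
features = [
    Feature("f1", "building", 120.0, "sheet-A", (10, 10, 20, 22)),
    Feature("f2", "building", 80.0, "sheet-B", (5, 40, 12, 50)),
    Feature("f3", "forest", 5000.0, "sheet-A", (100, 0, 400, 300)),
]
totals = aggregate(features)
origins = trace(features, "building")
print(totals)
print(origins)
```

If the `source_sheet` and `pixel_bbox` fields were dropped to save space, `aggregate` would still work, but the way back to the source would be cut.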

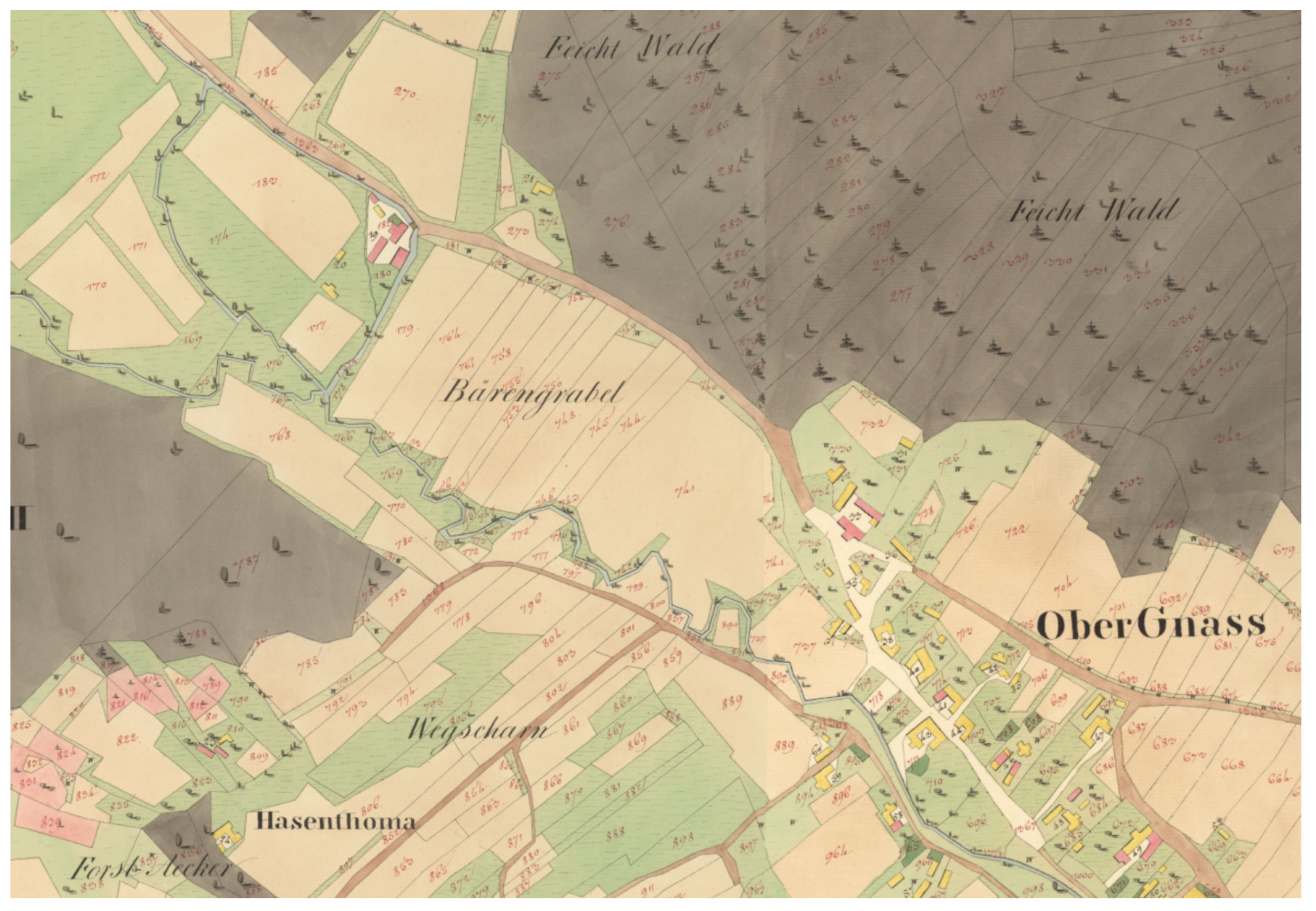

4. Case Study 1: The Franziszeische Kataster

4.1. The Cadastre as Research Resource

4.2. Georeferencing as First Transformation

4.3. AI-Supported Segmentation: Decomposing Information into Data

4.4. Scaling Analysis: From Exemplary to Comprehensive

4.5. Insights into Historical Source Production

4.6. Theoretical Analysis: Data, Information, and the Transformation Chain

- Territorial reality: The physical landscape (villages, fields, forests) observed by surveyors.

- Field survey: Measurement and field book recording. Loss: Continuous landscape becomes discrete points. Gain: Quantifiable, transportable data.

- Cartographic production: Translation into graphic representation. Loss: 3D terrain becomes 2D map; precision reduced to resolution. Gain: Visual comprehensibility and preservation.

- Digitization & Georeferencing: Scanning and coordinate alignment. Loss: Materiality and original spatial framework. Gain: Digital reproducibility and GIS integration.

- AI segmentation: Pixel classification via CNN. Loss: Cartographic style, aesthetics, and ambiguity. Gain: Systematic classification, scalability, and statistical analyzability.

- Vector conversion: Creation of geometric polygons. Loss: Pixel-level granularity. Gain: Topological relationships and precise measurements.

- Spatial analysis: GIS operations. Loss: Individual feature uniqueness. Gain: Pattern recognition and hypothesis testing.
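The chain listed above can also be kept as a machine-readable ledger, so that the losses accumulated up to any stage can be queried. This is a minimal sketch: the stage names, losses and gains are copied from the list, while the `Stage` type and the `accumulated_losses` helper are hypothetical names introduced for illustration.

```python
from typing import NamedTuple, Optional


class Stage(NamedTuple):
    name: str
    loss: Optional[str]
    gain: Optional[str]


CHAIN = [
    Stage("Territorial reality", None, None),
    Stage("Field survey",
          "Continuous landscape becomes discrete points",
          "Quantifiable, transportable data"),
    Stage("Cartographic production",
          "3D terrain becomes 2D map; precision reduced to resolution",
          "Visual comprehensibility and preservation"),
    Stage("Digitization & Georeferencing",
          "Materiality and original spatial framework",
          "Digital reproducibility and GIS integration"),
    Stage("AI segmentation",
          "Cartographic style, aesthetics, and ambiguity",
          "Systematic classification, scalability, and statistical analyzability"),
    Stage("Vector conversion",
          "Pixel-level granularity",
          "Topological relationships and precise measurements"),
    Stage("Spatial analysis",
          "Individual feature uniqueness",
          "Pattern recognition and hypothesis testing"),
]


def accumulated_losses(chain, stage_name):
    """List every loss incurred between the territory and the named stage."""
    losses = []
    for stage in chain:
        if stage.loss:
            losses.append((stage.name, stage.loss))
        if stage.name == stage_name:
            return losses
    raise KeyError(stage_name)


for name, loss in accumulated_losses(CHAIN, "AI segmentation"):
    print(f"{name}: {loss}")
```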

1. Resolution as Context (The Receptive Field): We downsampled images not merely for efficiency, but to expand the CNN’s “receptive field.” This allowed the network to perceive sufficient spatial context to distinguish complex entities like buildings, rather than just analyzing texture at full resolution.

2. Contextual Redundancy (Overlapping Inference): To counter edge errors, we implemented a “quadruple inference” strategy. Each map section was classified four times with shifting input windows. The final result is a consensus, mimicking a rigorous source critique where observations are verified from multiple angles.

3. The Ontology of “Streets” (Hermeneutics of Classes): Initial segmentation of streets failed due to heterogeneity. Historical analysis revealed that maps depicted “common spaces” (Gemeinflächen) rather than modern infrastructure. Adjusting the target class from a visual/modern category to a historical/legal one allowed the algorithm to converge.
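The idea behind overlapping inference can be illustrated with a toy example. The sketch below makes several assumptions that the text does not specify: the four window grids are shifted by half a tile, the consensus is a plain average of the four predictions, and the CNN is replaced by a hypothetical stand-in, `edge_blind_model`, that is correct inside a tile and undecided (0.5) on its border.

```python
def edge_blind_model(window):
    """Hypothetical stand-in for a CNN: exact inside the tile, 0.5 on its border."""
    n = len(window)
    return [[0.5 if ty in (0, n - 1) or tx in (0, n - 1) else window[ty][tx]
             for tx in range(n)] for ty in range(n)]


def tiled_inference(image, model, tile, shifts):
    """Average the tiled predictions obtained with each (dy, dx) grid shift."""
    h, w = len(image), len(image[0])
    total = [[0.0] * w for _ in range(h)]
    for dy, dx in shifts:
        # Start the grid at -dy/-dx so the shifted tiling still covers the image.
        for y0 in range(-dy, h, tile):
            for x0 in range(-dx, w, tile):
                # Cut a full-size window, padding with 0.0 outside the image.
                window = [[image[y][x] if 0 <= y < h and 0 <= x < w else 0.0
                           for x in range(x0, x0 + tile)]
                          for y in range(y0, y0 + tile)]
                pred = model(window)
                for ty in range(tile):
                    for tx in range(tile):
                        y, x = y0 + ty, x0 + tx
                        if 0 <= y < h and 0 <= x < w:
                            total[y][x] += pred[ty][tx]
    return [[s / len(shifts) for s in row] for row in total]


def binarize(prob_map):
    """A pixel counts as foreground only if its probability exceeds 0.5."""
    return [[1 if p > 0.5 else 0 for p in row] for row in prob_map]


# Made-up 16x16 "map" containing one rectangular building.
image = [[1.0 if 2 <= y <= 9 and 5 <= x <= 12 else 0.0 for x in range(16)]
         for y in range(16)]
FOUR_SHIFTS = ((0, 0), (0, 4), (4, 0), (4, 4))  # half-tile shifts for tile=8

truth = binarize(image)
single = binarize(tiled_inference(image, edge_blind_model, 8, ((0, 0),)))
consensus = binarize(tiled_inference(image, edge_blind_model, 8, FOUR_SHIFTS))
print("single pass correct:", single == truth)
print("four-pass consensus correct:", consensus == truth)
```

In this toy setting every pixel lies in the interior of a tile in at least one of the four passes, so the averaged prediction recovers the building that a single pass cuts along tile borders. A real network fails at edges less cleanly, so the benefit there is a matter of degree.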

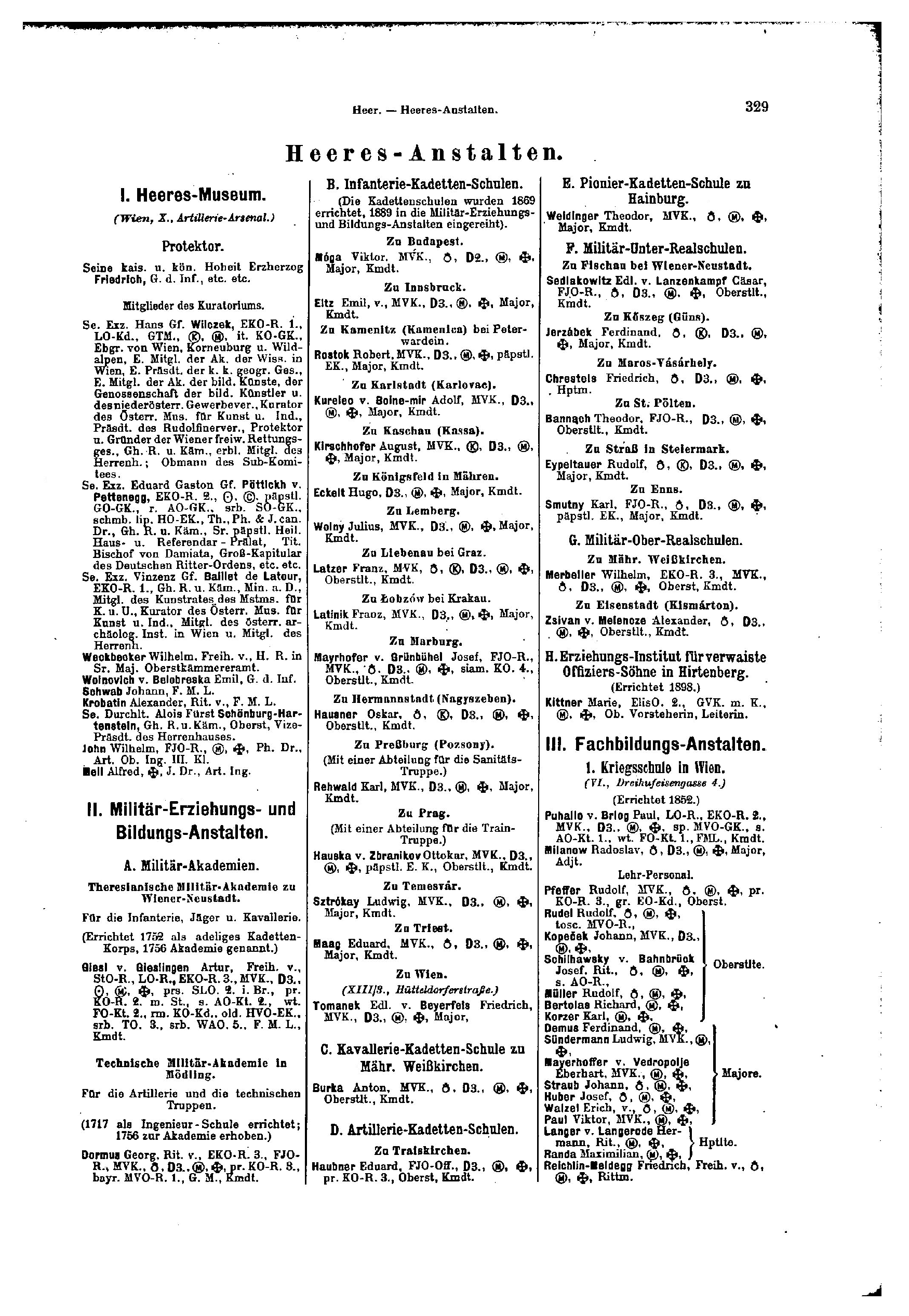

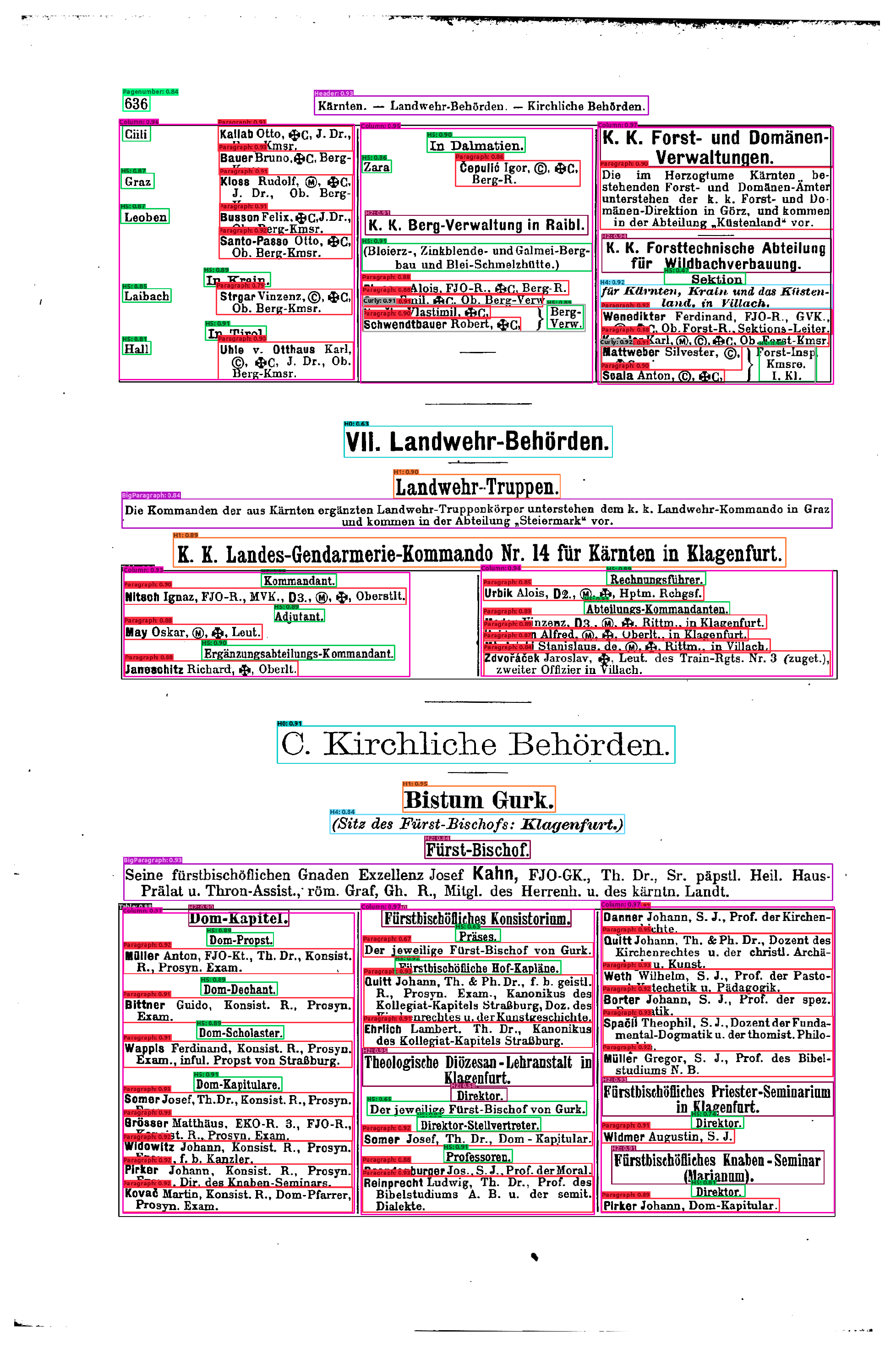

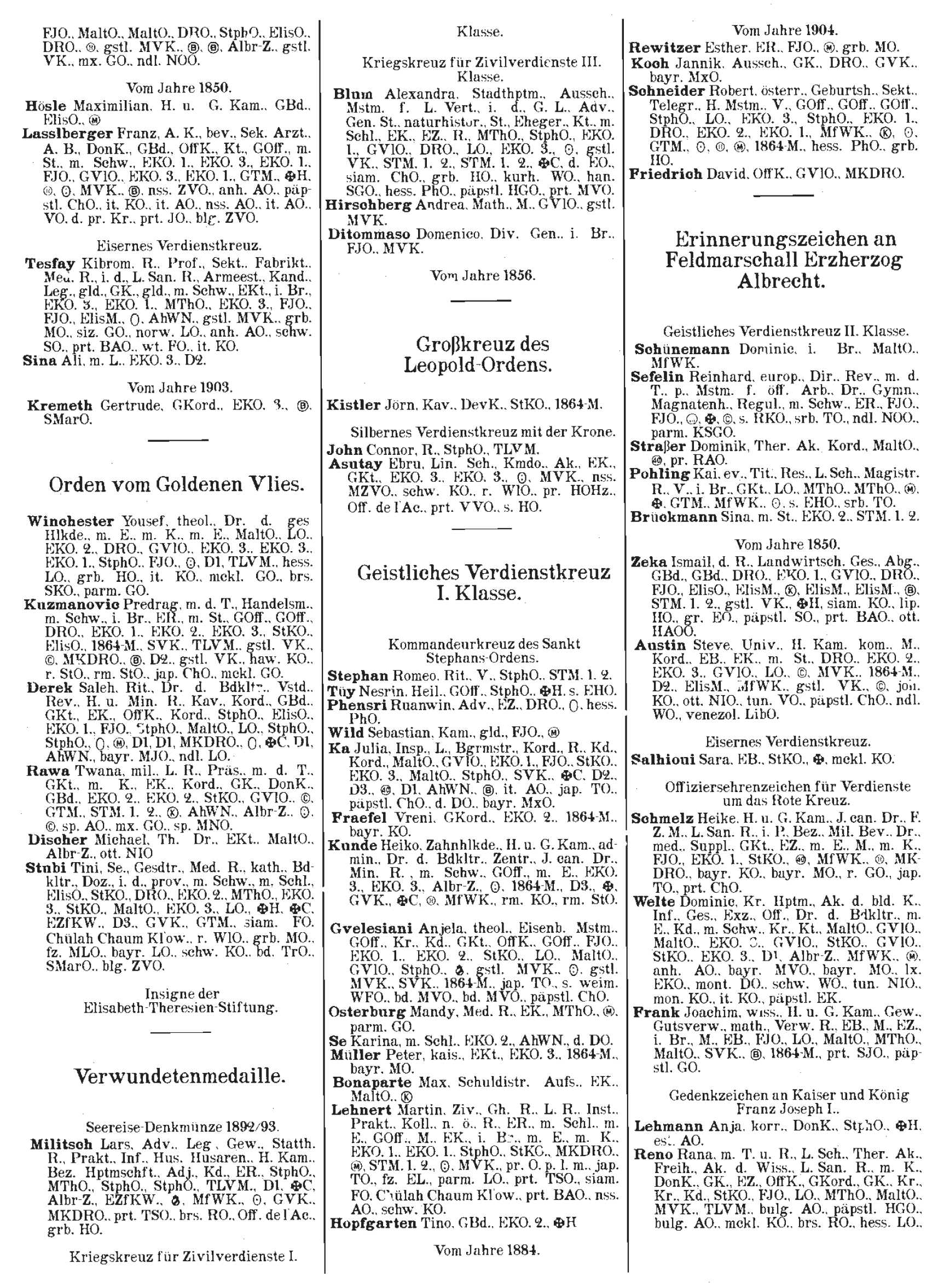

5. Case Study 2: Structuring the Social Body – The Hof- und Staatsschematismus

5.1. From Courtly Representation to Administrative Statistics

5.2. The Layout as an Institutional Archive

1. The Early Phase (1702–1774): Characterized by single, crowded columns with minimal typographical differentiation between headings and content, making semantic structure difficult to parse.

2. The Transitional Phase (1775–1848): Aspect ratios normalize and centered headings appear to separate organizational units, yet the layout remains fluid, experimenting with varying column structures.

3. The Consolidated Phase (1868–1918): Following the constitutional changes of 1848/1867, the layout stabilizes into a standardized three-column format, marking the “industrialization” of the administrative record.

5.3. Defining the Scope: A Strategic Reduction

5.4. The Synthetic Bridge: Manufacturing Data to Read Data

5.5. The Architecture of Extraction: Layout Analysis and OCR

5.5.1. Stage 1: Layout Detection (Faster R-CNN)

5.5.2. Stage 2: Optical Character Recognition (Tesseract)

5.6. Negotiating the Logic of the List

1. The Ontology of the “Curly” (Handling Hierarchy): The curly bracket (geschweifte Klammer) is a pervasive visual element in the Schematismus, grouping officials under shared institutional headings. Technically, however, it is merely a graphical symbol. To preserve its hierarchical function for computational processing, we defined a dedicated “Curly” class within a three-tiered layout ontology: at the lowest level, Paragraphs capture individual text blocks; Headers operate across all three levels to mark organizational units; and Curly, as a container class at the intermediate level, groups Paragraphs and Headers into semantically coherent units. This decision elevated a graphical punctuation mark to a structural entity, encoding the insight that in bureaucratic documents, visual syntax carries as much institutional meaning as the text itself.

2. The Simulation of Variance (Synthetic Data Strategy): Using synthetic data required encoding our understanding into generator code (e.g., distinguishing indentation types). Consequently, the algorithm’s subsequent “discovery” of structures is tautological: it finds what it was told to look for. However, the strictly rule-based synthetic datasets are augmented during training with a comparatively small proportion (between 10% and 20%) of annotated original pages, which proves sufficient for the model to handle the diversity and heterogeneity of the historical source material. The extraction’s “objectivity” is thus bound by the historian’s initial formalization of layout rules, yet tempered by the model’s empirical exposure to the irregularities of the actual documents: a productive tension between idealized structure and archival reality.

3. Resolution as an Epistemological Dialectic: Comparing the case studies reveals a dialectic of resolution. We downsampled maps (Section 4) to capture context (“forest over trees”), but upsampled text (Section 5) to capture structural “fences” like separators. There is no “correct” digital resolution; technical parameters depend on the hermeneutic goal: land use requires blur, administrative hierarchy requires sharpness. Moreover, the choice of model architecture itself imposes additional constraints. In our preliminary experiments with YOLO for layout detection, we found that it operated optimally within different parameter ranges than the Faster R-CNN architecture ultimately adopted, particularly regarding input dimensions and aspect ratios. Depending on the model, it can prove worthwhile to invest considerable effort in pre-processing (adjusting crop dimensions to achieve different aspect ratios, or up- and downscaling resolution) before the source material ever enters the detection pipeline. The “correct” resolution is thus doubly contingent: determined not only by the historical question but also by the computational affordances of the chosen architecture.
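The layout ontology described in the first point above can be sketched as a small tree of container types. This is an illustration under stated assumptions, not the project's schema: the top-level `Page` container is hypothetical (the text does not name the highest tier), headers are modelled only at page and Curly level, and the department, office and official names are placeholders.

```python
from dataclasses import dataclass, field
from typing import List, Union


@dataclass
class Paragraph:
    """Lowest level: an individual text block."""
    text: str


@dataclass
class Header:
    """Marks an organizational unit; may appear at several levels."""
    text: str


@dataclass
class Curly:
    """Intermediate container: the bracket groups headers and paragraphs."""
    children: List[Union[Header, Paragraph]] = field(default_factory=list)


@dataclass
class Page:
    """Hypothetical top-level container (an assumption, not named in the text)."""
    children: List[Union[Header, Curly, Paragraph]] = field(default_factory=list)


def flatten(page):
    """Return (headers_in_scope, paragraph_text) pairs in reading order."""
    rows = []
    page_header = None
    for node in page.children:
        if isinstance(node, Header):
            page_header = node.text
        elif isinstance(node, Paragraph):
            rows.append(((page_header,), node.text))
        else:
            # A Curly: its own header applies only to the paragraphs it encloses.
            group_header = None
            for child in node.children:
                if isinstance(child, Header):
                    group_header = child.text
                else:
                    rows.append(((page_header, group_header), child.text))
    return rows


page = Page([
    Header("Department A"),
    Curly([Header("Office A1"), Paragraph("Official 1"), Paragraph("Official 2")]),
    Paragraph("Official 3"),
])
rows = flatten(page)
for headers, text in rows:
    print(" > ".join(headers), "|", text)
```

Treating the bracket as a container rather than as a glyph is what lets "Official 1" and "Official 2" inherit "Office A1" while "Official 3" does not.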

6. Case Study 3: The Datafication of Everyday Resistance in Nazi Germany – a Digital “Upcycling” of an Archive Inventory Covering 10,000+ Criminal Proceedings of the Munich Special Court

7. Conclusions

7.1. Parameters as a Transdisciplinary Negotiation

7.2. The Machine as a Stress Test for Source Quality

7.3. Scaling the Hermeneutic Circle

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| OCR | Optical Character Recognition |

| HTR | Handwritten Text Recognition |

| AI | Artificial Intelligence |

| CNN | Convolutional Neural Network |

| GIS | Geographic Information System/Science |

| DPI | Dots Per Inch |

| DIKW | Data-Information-Knowledge-Wisdom |

| IoU | Intersection over Union |

| R-CNN | Region-based Convolutional Neural Network |

| PDF | Portable Document Format |

| LiDAR | Light Detection and Ranging |

| STS | Science and Technology Studies |

References

- Antenhofer, Christina, Christoph Kühberger, and Arno Strohmeyer (Eds.). 2024. Digital Humanities in den Geschichtswissenschaften, Volume 6116 of UTB. Wien: UTB/Böhlau. [CrossRef]

- Bauer, Volker. 2002. Territoriale Amtskalender und Amtshandbücher im Alten Reich. Bilanz und Perspektiven der Forschung. Daphnis 31(1–2), 217–252.

- Baumann, Jörg, David Fleischhacker, Wolfgang Goederle, Nicole Kamp, and Roman Kern. 2025. Automated segmentation of historical cadastral maps: A machine learning approach to the Franziszeischer Kataster. In AGIT Conference.

- Berry, David M. and Anders Fagerjord. 2017. Digital Humanities: Knowledge and Critique in a Digital Age. Cambridge: Polity Press.

- BEV – Bundesamt für Eich- und Vermessungswesen (Ed.). 2017. 200 Jahre Kataster. Österreichisches Kulturgut 1817–2017. Wien: BEV – Bundesamt für Eich- und Vermessungswesen.

- Bloch, Marc. 1953. The Historian’s Craft. New York: Knopf.

- Bloor, David. 1999. Anti-Latour. Studies in History and Philosophy of Science Part A 30(1), 81–112. [CrossRef]

- Boonstra, Onno, Leen Breure, and Peter Doorn. 2004. Past, Present and Future of Historical Information Science. Amsterdam: NIWI-KNAW.

- Boshof, Egon, Kurt Düwell, and Hans Kloft. 1973. Grundlagen des Studiums der Geschichte: Eine Einführung (1 ed.). Cologne: Böhlau.

- Bowker, Geoffrey C. and Susan Leigh Star. 2000. Sorting Things Out: Classification and Its Consequences. Cambridge, MA: MIT Press.

- Brandt, Ahasver von. 2012. Werkzeug des Historikers: Eine Einführung in die Historischen Hilfswissenschaften (18 ed.). Stuttgart: Kohlhammer.

- Capurro, Rafael. 2010. Digital hermeneutics: An outline. AI & Society 25(1), 35–42. [CrossRef]

- Collingwood, R. G. 1946. The Idea of History. Oxford: Oxford University Press.

- Daston, Lorraine and Peter Galison. 2007. Objectivity. New York: Zone Books.

- Droysen, Johann Gustav. 1868. Historik: Vorlesungen über Enzyklopädie und Methodologie der Geschichte. Munich: Oldenbourg.

- Drucker, Johanna. 2011. Humanities approaches to graphical display. Digital Humanities Quarterly 5(1).

- Döring, Karoline Dominika, Stefan Haas, Mareike König, and Jörg Wettlaufer (Eds.). 2022. Digital History: Konzepte, Methoden und Kritiken Digitaler Geschichtswissenschaft. Berlin: De Gruyter Oldenbourg. [CrossRef]

- Eberle, Oliver, Jochen Büttner, Hassan El-Hajj, Grégoire Montavon, Klaus-Robert Müller, and Matteo Valleriani. 2024. Historical insights at scale: A corpus-wide machine learning analysis of early modern astronomic tables. Science Advances 10(43), eadj1719. [CrossRef]

- Ernst, Marlene, Sebastian Gassner, Markus Gerstmeier, and Malte Rehbein. 2023. Categorising legal records – deductive, pragmatic, and computational strategies. Digital Humanities Quarterly 17(3).

- Feucht, Rainer. 2008, 3. Flächenangaben im österreichischen Kataster. Diplomarbeit, Technische Universität Wien. Institut für Geoinformation und Kartografie.

- Fickers, Andreas. 2012. Towards a new digital historicism? Doing history in the age of abundance. Journal of European Television History and Culture 1(1), 19–26. [CrossRef]

- Fickers, Andreas, Juliane Tatarinov, and Tim van der Heijden. 2022. Digital history and hermeneutics – between theory and practice: An introduction. In A. Fickers and J. Tatarinov (Eds.), Digital History and Hermeneutics: Between Theory and Practice, Volume 2 of Studies in Digital History and Hermeneutics, pp. 1–20. Berlin: De Gruyter Oldenbourg. [CrossRef]

- Fickers, Andreas and Gerben Zaagsma. 2022. Towards a digital hermeneutics? In G. Balbi, N. Ribeiro, V. Schafer, and C. Schwarzenegger (Eds.), Digital Roots: Historicizing Media and Communication Concepts of the Digital Age. Berlin: De Gruyter. [CrossRef]

- Finzsch, Norbert. 2004. Latour und die historische Forschung. Historische Anthropologie 12(2), 285–303.

- Fleischhacker, David, Roman Kern, and Wolfgang Göderle. 2025. Enhancing OCR in historical documents with complex layouts through machine learning. International Journal on Digital Libraries 26(1). [CrossRef]

- Fogel, Robert William and Stanley L. Engerman. 1974. Time on the Cross: The Economics of American Negro Slavery. Boston: Little, Brown.

- Frické, Martin. 2009. The knowledge pyramid: A critique of the DIKW hierarchy. Journal of Information Science 35(2), 131–142. [CrossRef]

- Generaldirektion der Staatlichen Archive Bayerns (Ed.). 1975–1977. Widerstand und Verfolgung in Bayern 1933–1945. Hilfsmittel. Archivinventare, Bd. 3: Sondergericht München, 7 Teile. Munich / Regensburg.

- Gerstmeier, Markus, Simon Donig, Sebastian Gassner, and Malte Rehbein. 2022. Die Archivinventare zum Sondergericht München (1933–1945) digital. Quellenwert – Verdatung – Erkenntnisperspektiven. Archivalische Zeitschrift 99(1), 215–251.

- Gitelman, Lisa (Ed.). 2013. Raw Data Is an Oxymoron. Cambridge, MA: MIT Press.

- Goederle, Wolfgang. 2015. Die räumliche Matrix des modernen Staates: Die Volkszählung des Jahres 1869 im Habsburgerreich im Lichte von Latours zirkulierender Referenz. Schweizerische Zeitschrift für Geschichte – Revue Suisse d’histoire 65(3), 414–427.

- Graham, Shawn, Ian Milligan, Scott B. Weingart, and Kim Martin. 2022. Exploring Big Historical Data: The Historian’s Macroscope (2 ed.). New Jersey: Imperial College Press.

- Guldi, Jo and David Armitage. 2014. The history manifesto: A critique. Perspectives on History.

- Hiltmann, Torsten. 2024. (Epistemologische) Grundlagen der Anwendung digitaler Methoden in den Geschichtswissenschaften. In C. Antenhofer, C. Kühberger, and A. Strohmeyer (Eds.), Digital Humanities in den Geschichtswissenschaften, Volume 6116 of UTB, pp. 43–59. Wien: UTB/Böhlau. [CrossRef]

- Hitchcock, Tim. 2013. Confronting the digital: Or how academic history writing lost the plot. Cultural and Social History 10(1), 9–23. [CrossRef]

- Howell, Martha and Walter Prevenier. 2001. From Reliable Sources: An Introduction to Historical Methods. Ithaca, NY: Cornell University Press.

- Hudson, Pat. 2000. History by Numbers: An Introduction to Quantitative Approaches. London: Arnold.

- Jannidis, Fotis, Hubertus Kohle, and Malte Rehbein (Eds.). 2017. Digital Humanities: Eine Einführung. Stuttgart: J.B. Metzler. [CrossRef]

- Journet, Nicholas, Muriel Visani, Boris Mansencal, Kieu Van-Phan, and Antoine Billy. 2017. DocCreator: A new software for creating synthetic ground-truthed document images. Journal of Imaging 3(4), 62. [CrossRef]

- Kain, Roger J. P. and Elizabeth Baigent. 1992. The Cadastral Map in the Service of the State: A History of Property Mapping. Chicago: University of Chicago Press.

- Kiessling, Benjamin. 2019. Kraken - an universal text recognizer for the humanities. In Digital Humanities Conference 2019, Utrecht.

- Knowles, Anne Kelly (Ed.). 2008. Placing History: How Maps, Spatial Data, and GIS Are Changing Historical Scholarship. Redlands, CA: ESRI Press.

- Langlois, Charles-Victor and Charles Seignobos. 1898. Introduction aux études historiques. Paris: Hachette.

- Latour, Bruno. 1986. Visualization and cognition: Drawing things together. In H. Kuklick (Ed.), Knowledge and Society: Studies in the Sociology of Culture Past and Present, Volume 6, pp. 1–40. Greenwich, CT: JAI Press.

- Latour, Bruno. 1999a. Circulating reference: Sampling the soil in the Amazon forest. In Pandora’s Hope: Essays on the Reality of Science Studies, Chapter 2, pp. 24–79. Cambridge, MA: Harvard University Press.

- Latour, Bruno. 1999b. Pandora’s Hope: Essays on the Reality of Science Studies. Cambridge, MA: Harvard University Press.

- Manovich, Lev. 2020. Cultural Analytics. Cambridge, MA: MIT Press.

- Moretti, Franco. 2013. Distant Reading. London: Verso.

- Muehlberger, Guenter et al. 2019. Transforming scholarship in the archives through handwritten text recognition: Transkribus as a case study. Journal of Documentation 75(5), 954–976. [CrossRef]

- Noflatscher, Heinz. 2004. “Ordonnances de l’hôtel”, Hofstaatsverzeichnisse, Hof- und Staatskalender. In J. Pauser, M. Scheutz, and T. Winkelbauer (Eds.), Quellenkunde der Habsburgermonarchie (16.–18. Jahrhundert). Ein exemplarisches Handbuch, pp. 59–75. Wien and München: R. Oldenbourg Verlag.

- Österreichische Nationalbibliothek. 2011. ALEX – Historische Rechts- und Gesetzestexte: Staatshandbuch. Digitale Sammlung. Digitalisierte Ausgaben des Hof- und Staatsschematismus (1702–1918).

- Putnam, Lara. 2016. The transnational and the text-searchable: Digitized sources and the shadows they cast. American Historical Review 121(2), 377–402. [CrossRef]

- Rehbein, Malte. 2021. A responsible knowledge society within a colourful world. Philosophisches Jahrbuch 128(1), 156–168. On epistemological responsibility in digital humanities and knowledge production.

- Rehbein, Malte. 2024. Künstliche Intelligenz und Datenmobilisierung zwischen Geschichtswissenschaft und Archiv. Über Forschung in der digitalen Transformation und die Notwendigkeit des Umdenkens. Archivalische Zeitschrift 101, 11–27. [CrossRef]

- Ren, Shaoqing, Kaiming He, Ross Girshick, and Jian Sun. 2017. Faster R-CNN: Towards real-time object detection with region proposal networks. IEEE Transactions on Pattern Analysis and Machine Intelligence 39(6), 1137–1149. [CrossRef]

- Rheinberger, Hans-Jörg. 1997. Toward a History of Epistemic Things: Synthesizing Proteins in the Test Tube. Stanford, CA: Stanford University Press.

- Rowley, Jennifer. 2007. The wisdom hierarchy: Representations of the DIKW hierarchy. Journal of Information Science 33(2), 163–180. [CrossRef]

- Scharr, Kurt. 2017. Kataster und Grundbuch im Kaisertum Österreich. Ausgangssituation und Entwicklung bis 1866. In BEV – Bundesamt für Eich- und Vermessungswesen (Ed.), 200 Jahre Kataster. Österreichisches Kulturgut 1817–2017, pp. 37–51. Wien: BEV – Bundesamt für Eich- und Vermessungswesen.

- Schlögl, Michaela. 2008, 3. Ein digitales Abbild Kakaniens. Die Presse.

- Schöch, Christof. 2013. Big? Smart? Clean? Messy? Data in the humanities. Journal of Digital Humanities 2(3), 2–13.

- Siebold, Anna and Matteo Valleriani. 2022. Digital perspectives in history. Histories 2(2), 170–177. [CrossRef]

- Spiegel, Gabrielle M. 2005. Revising the past/revisiting the present: How change happens in historiography. History and Theory 44(4), 1–19. [CrossRef]

- Stulpe, Alexander and Matthias Lemke. 2016. Blended reading. Theoretische und praktische Dimensionen der Analyse von Text und sozialer Wirklichkeit im Zeitalter der Digitalisierung. In M. Lemke and G. Wiedemann (Eds.), Text Mining in den Sozialwissenschaften. Grundlagen und Anwendungen zwischen qualitativer und quantitativer Diskursanalyse, pp. 17–61. Wiesbaden: Springer VS. [CrossRef]

- Thaller, Manfred. 1993. Historical information science: Is there such a thing? New comments on an old idea. Historical Social Research / Historische Sozialforschung 18(3), 5–19.

- Tosh, John. 2021. The Pursuit of History: Aims, Methods and New Directions in the Study of History (7 ed.). London: Routledge.

- Zhou, Zongwei, Md Mahfuzur Rahman Siddiquee, Nima Tajbakhsh, and Jianming Liang. 2018. UNet++: A nested U-Net architecture for medical image segmentation. In Deep Learning in Medical Image Analysis and Multimodal Learning for Clinical Decision Support, pp. 3–11. Springer. [CrossRef]

| 1 | The Boa Vista expedition is recounted in Chapter 2 (“Circulating Reference”) of Latour (1999b, pp. 24–79). For earlier formulations of these ideas on “immutable mobiles” and “centers of calculation,” see Latour (1986). |

| 2 | This directly challenges what Drucker (2011) terms the “data/capta” distinction—the notion that humanities scholars should recognize their materials as “taken” (capta) rather than “given” (data). While Drucker’s intervention usefully denaturalizes data, Latour’s transformation chain framework goes further: it shows that all data—in sciences and humanities alike—are generated through transformation processes. The question is not whether data are “given” or “taken,” but whether transformation chains remain traceable and preserve features relevant to research questions. |

| 3 | Note that the workflow in Fleischhacker et al. (2025) is a developmental milestone and differs from the current project workflow. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).