Submitted: 13 February 2026

Posted: 23 February 2026


Abstract

Keywords:

1. Introduction

2. Literature Review

2.1. Normative AI Ethics and the Presumption of Organisational Capability

2.2. Gaps in Accountability, Responsibility, and Governance

2.3. Organisational AI Governance and Role Emergence

2.4. Symbolic Governance and Institutional Adoption

2.5. Ethics of Absence and the Empirical Blind Spot

3. Research Questions and Objectives

- Record the existence or non-existence of formal AI governance roles inside organisations to provide an empirical baseline.

- Examine how AI governance responsibilities are structured within organisations and identify prevalent governance practices.

- RQ1: Prevalence:

- RQ2: Sectoral Variation:

- RQ3: Geographical Variation:

- RQ4: Structural Characteristics of Roles:

- RQ5: Governance Maturity Profiles:

4. Methodology

4.1. Research Design

4.2. Sampling Strategy and Scope

Sampling Approach

Identification of Organisations and Recruitment of Participants

- Exploring corporate websites with an emphasis on sections related to AI, Data, Innovation, Digital, Risk & Compliance, and Governance.

- Searching professional networking and job-search websites (such as LinkedIn, Indeed, Glassdoor, Google Careers, and company career pages) to identify AI-related roles or governance functions.

- Consulting publicly available business profile websites (e.g., Crunchbase, Bloomberg company profiles, and Reuters company profiles) to validate organisational context and AI-facing functions.

- Reviewing organisational newsrooms, press releases, annual reports, ESG/sustainability reports, and statements on corporate governance.

Sectoral Coverage

- Banking, financial services, and insurance

- Healthcare and pharmaceuticals

- Telecommunications and technology

- Retail and e-commerce

- Logistics and supply chain

- Manufacturing and mining

- Energy and utilities

- Consulting and professional services

- Public sector and government institutions

Geographical Coverage

- Europe

- North America

- Asia-Pacific

- Africa

- Latin America and the Caribbean

- Middle East

4.3. Respondents and Inclusion Criteria

- Hold a senior management or leadership position overseeing AI implementation, digital strategy, data governance, risk management, compliance, or innovation (e.g., CIO, CTO, CDO, Head of Data, Head of Risk/Compliance, Innovation Lead).

- Represent an organisation that either actively uses AI systems or is in the process of implementing AI/ML technology.

- Have thorough knowledge of the organisation's governance structures, reporting systems, and policy requirements relating to AI.

4.4. Data Collection and Survey Instruments

- Organisational profile (sector, size, geography)

- Status of AI adoption and functional applications

- Presence of formal AI governance positions

- Characteristics of the highest AI governance position

- Governance resources

- AI governance maturity and contextual application

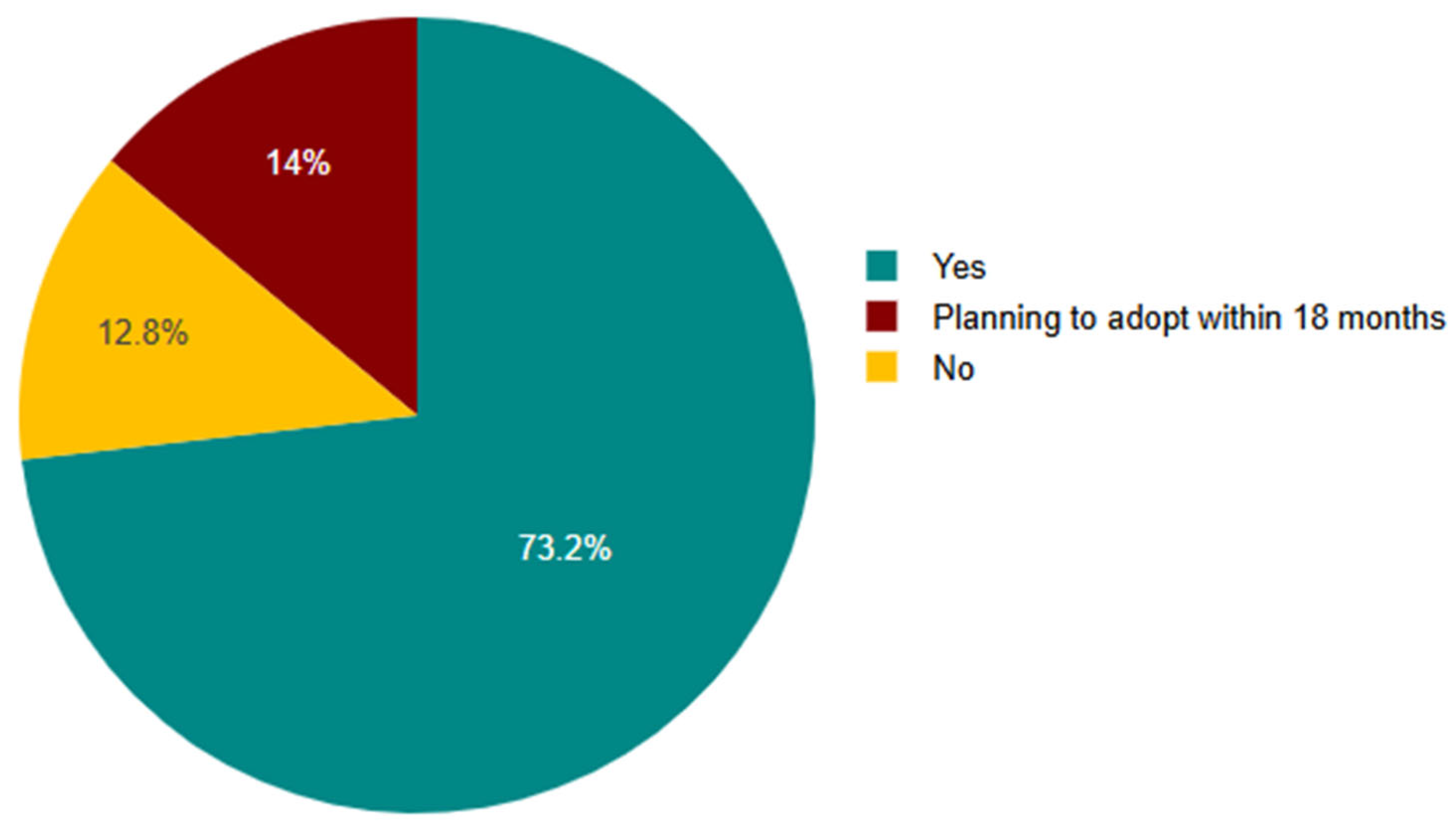

- Yes (AI/ML in use)

- No (no AI use)

- Plans to adopt within 18 months

Measurements

4.5. Data Preparation and Analytical Strategy

- RQ1 (prevalence) used descriptive statistics to establish baseline adoption rates for AI governance positions.

- RQ2 (sectoral variation) was examined through cross-tabulations and percentage comparisons across industries.

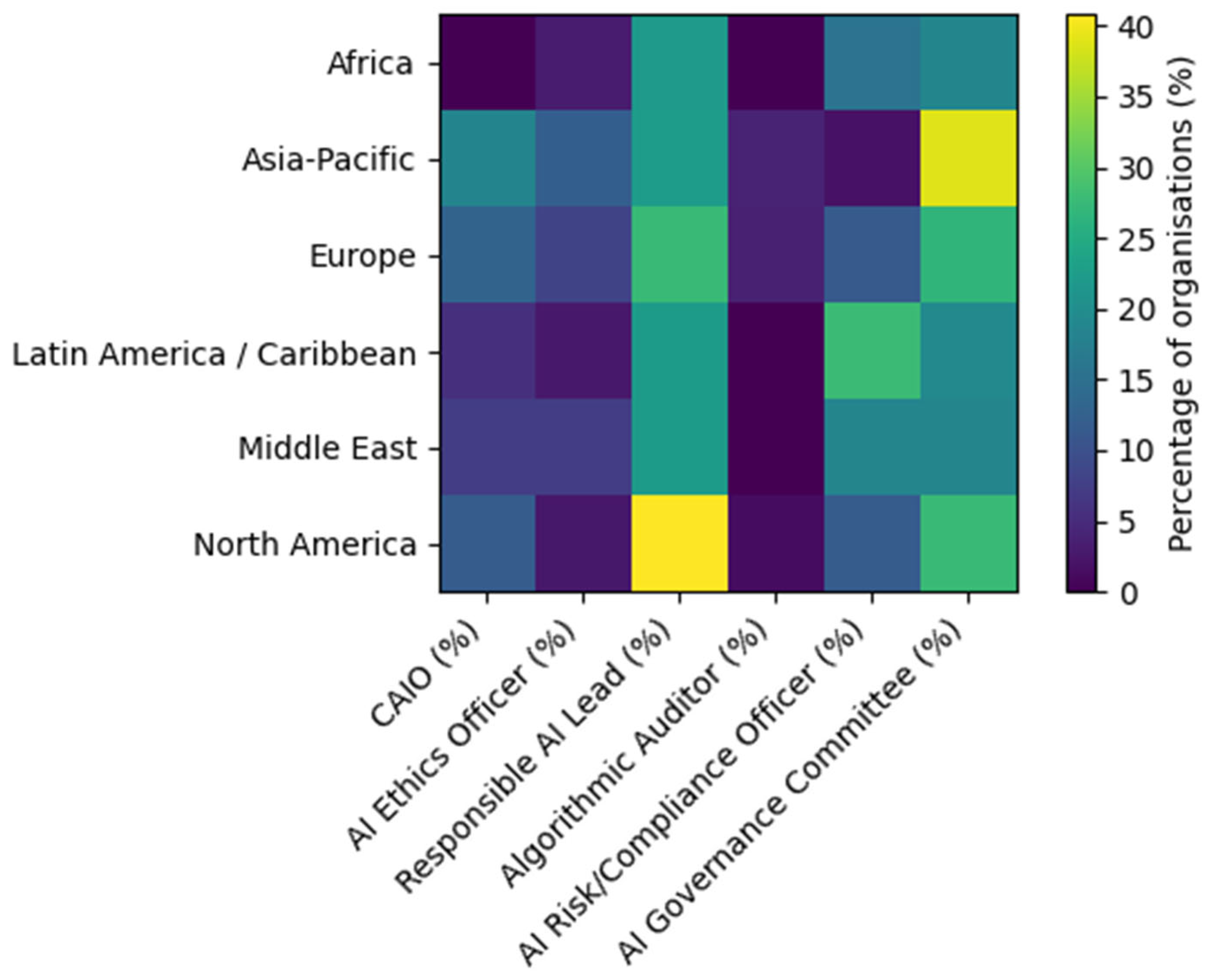

- RQ3 (geographical variation) was assessed using regional distributions and heatmap visualisations.

- RQ4 (structural characteristics) focused on the seniority, reporting lines, mandates, authority levels, and resources related to the top AI governance role in each organisation.

- RQ5 (governance maturity profiles) utilised exploratory cluster analysis to identify common governance configurations based on a standardised set of indicators.
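The descriptive steps for RQ1 to RQ3 can be illustrated with a short Python sketch. It assumes each response is reduced to a (sector, region, number of formal AI governance roles) record and treats "role present" as a binary indicator; the records, function names, and this coding are hypothetical and are not taken from the study's dataset or analysis scripts.

```python
from collections import Counter, defaultdict

# Hypothetical toy records: (sector, region, number of formal AI governance roles).
# Illustrative values only; not drawn from the study's dataset.
RESPONSES = [
    ("Banking / Financial / Insurance", "Europe", 2),
    ("Banking / Financial / Insurance", "North America", 0),
    ("Public Sector / Government", "Europe", 0),
    ("Public Sector / Government", "Africa", 1),
    ("Healthcare / Pharmaceuticals", "Asia-Pacific", 3),
]


def prevalence(records):
    """RQ1: percentage of organisations reporting at least one formal AI governance role."""
    with_role = sum(1 for _, _, n_roles in records if n_roles > 0)
    return 100.0 * with_role / len(records)


def row_percentages(records, key_index):
    """RQ2/RQ3: cross-tabulate sector (key_index=0) or region (key_index=1)
    against role presence; each cell is a percentage of its row total."""
    counts = defaultdict(Counter)
    for record in records:
        status = "role present" if record[2] > 0 else "no formal role"
        counts[record[key_index]][status] += 1
    return {
        group: {status: 100.0 * n / sum(cells.values()) for status, n in cells.items()}
        for group, cells in counts.items()
    }


if __name__ == "__main__":
    print(f"Prevalence: {prevalence(RESPONSES):.1f}%")
    for region, row in row_percentages(RESPONSES, key_index=1).items():
        print(region, row)
```

Row percentages keep groups of very different sizes comparable; a regional heatmap would simply plot the rows returned for `key_index=1`.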

4.6. Cluster Analysis and Typology Development
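The analytical strategy states that governance profiles were derived by exploratory cluster analysis on a standardised set of indicators, but the algorithm itself is not specified here. The sketch below assumes z-score standardisation followed by k-means (Lloyd's algorithm) with deterministic farthest-first seeding and k = 4, matching the four profiles reported in the results. The indicator columns (role formalisation, governance authority, resources, maturity), the toy values, and the function names are illustrative assumptions, not the authors' procedure.

```python
import math


def zscore_columns(rows):
    """Standardise each indicator column to mean 0 and population SD 1."""
    n, n_cols = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(n_cols)]
    sds = [
        math.sqrt(sum((r[j] - means[j]) ** 2 for r in rows) / n) or 1.0  # guard constant columns
        for j in range(n_cols)
    ]
    return [[(r[j] - means[j]) / sds[j] for j in range(n_cols)] for r in rows]


def sq_dist(a, b):
    """Squared Euclidean distance between two indicator vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def maximin_init(points, k):
    """Farthest-first seeding: start from the first point, then repeatedly add
    the point farthest from its nearest already-chosen centroid."""
    centroids = [list(points[0])]
    while len(centroids) < k:
        farthest = max(points, key=lambda p: min(sq_dist(p, c) for c in centroids))
        centroids.append(list(farthest))
    return centroids


def kmeans(points, k, max_iter=100):
    """Lloyd's algorithm; returns one cluster label per point."""
    centroids = maximin_init(points, k)
    labels = None
    for _ in range(max_iter):
        # Assignment step: each point joins its nearest centroid.
        new_labels = [min(range(k), key=lambda c: sq_dist(p, centroids[c])) for p in points]
        if new_labels == labels:
            break  # assignments stable: converged
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster empties
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


# Hypothetical indicator rows: [role formalisation, authority, resources, maturity].
TOY = [
    [2.0, 3.5, 3.0, 4.0], [2.0, 3.4, 3.1, 3.8],  # high on every indicator
    [0.0, 0.0, 0.0, 3.0], [0.0, 0.1, 0.0, 2.9],  # no formal roles
    [0.3, 1.5, 1.0, 2.0], [0.4, 1.4, 1.2, 1.9],  # weak authority and resources
    [1.2, 2.8, 2.2, 2.6], [1.3, 2.7, 2.3, 2.5],  # mid-range, operational
]
labels = kmeans(zscore_columns(TOY), k=4)
print(labels)
```

Standardising first matters because the indicators sit on different scales; without it, the widest-ranging indicator would dominate the Euclidean distances. In practice k would be chosen by comparing solutions (e.g., via fit indices and interpretability) rather than fixed in advance.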

4.7. Ethical Considerations

5. Results

5.1. Prevalence: Sample Eligibility and AI Adoption Status

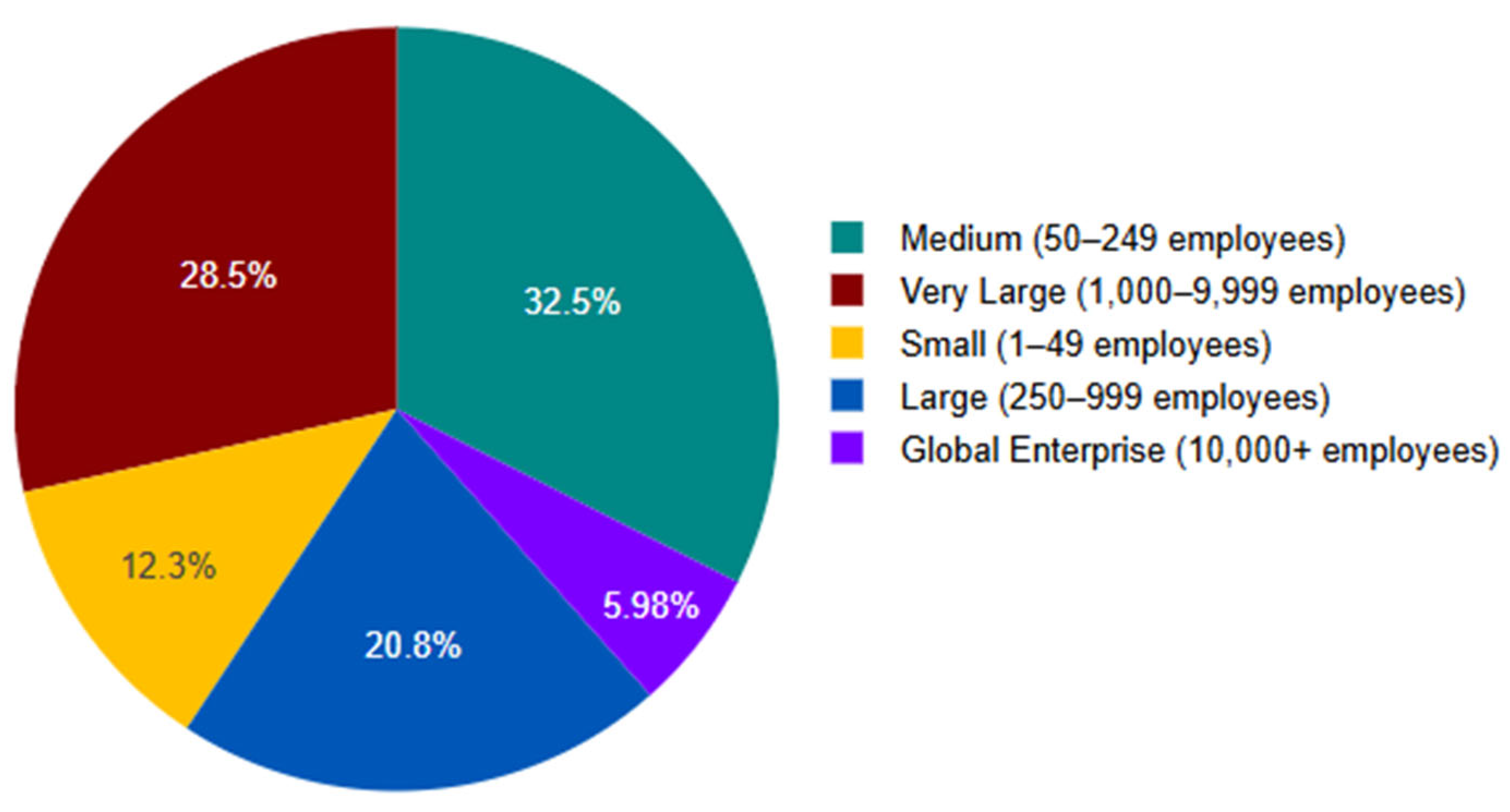

5.2. Composition by Organisation Size

5.3. Sectoral Variation

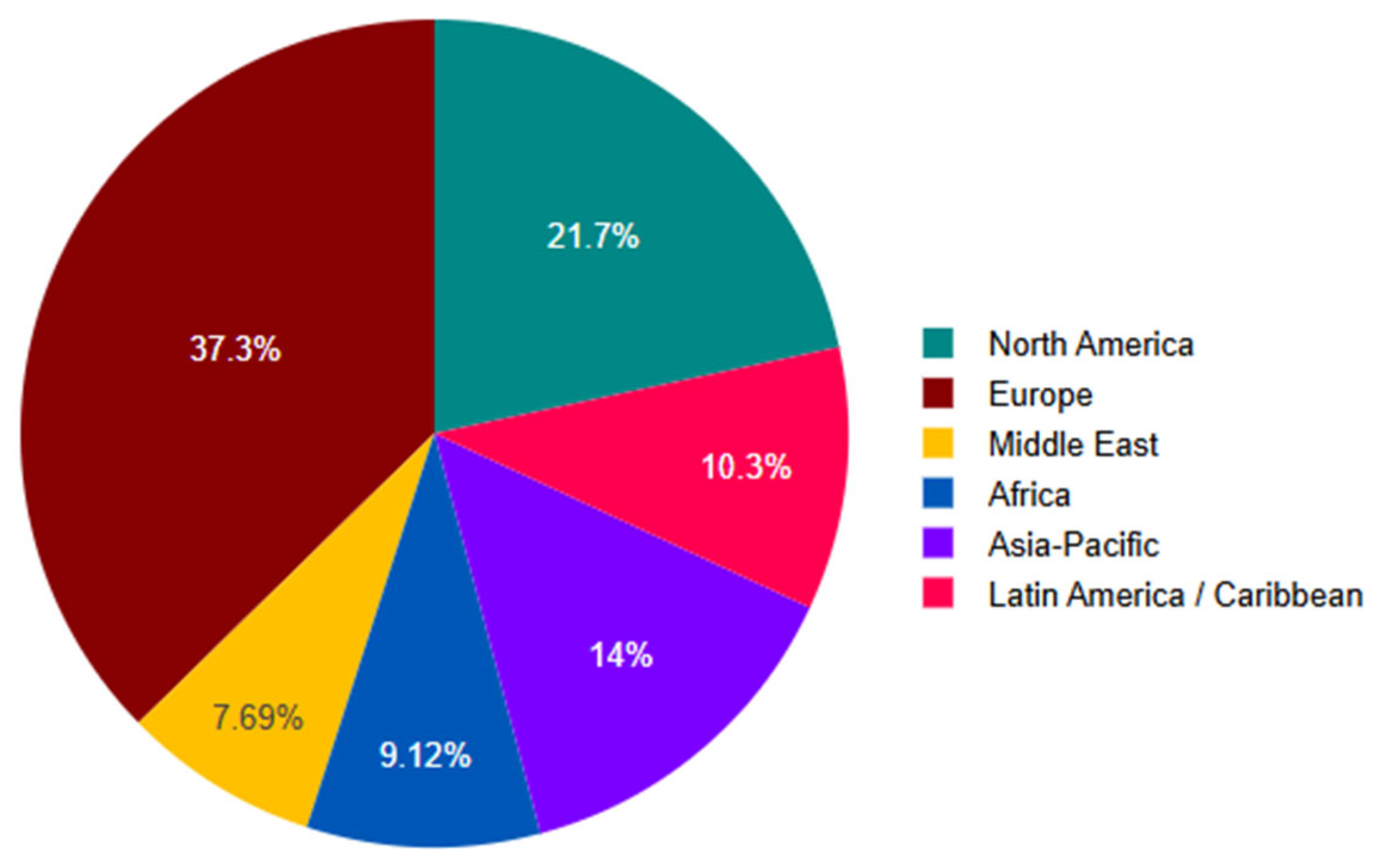

5.4. Geographical Variation in AI Governance Roles

5.5. Structural Characteristics of AI Governance Roles

| Panel A. Seniority of the highest AI governance role | Frequency | % |
|---|---|---|
| Vice President / Director | 95 | 27.07% |
| Senior Manager | 58 | 16.52% |
| Manager | 48 | 13.68% |
| Committee without formal leader | 43 | 12.25% |
| C-suite (CAIO, CIO/CTO with explicit AI mandate) | 22 | 6.27% |
| Not sure | 18 | 5.13% |
| Valid responses | 284 | 80.91% |
| Invalid | 67 | 19.09% |
| Total | 351 | 100% |

| Panel B. Reporting line (reports to) | Frequency | % |
|---|---|---|
| Not sure | 79 | 22.51% |
| Chief Risk Officer | 48 | 13.68% |
| CIO / CTO | 32 | 9.12% |
| HR Executive | 30 | 8.55% |
| Board of Directors | 29 | 8.26% |
| CEO | 27 | 7.69% |
| Chief Compliance Officer | 21 | 5.98% |
| Chief Data Officer | 16 | 4.56% |
| Valid responses | 282 | 80.34% |
| Invalid | 69 | 19.66% |
| Total | 351 | 100% |

| Panel C. Primary mandate | % |
|---|---|
| Responsible AI implementation (operational focus) | 12.1% |
| AI strategy (strategic focus) | 11.7% |
| Combined ethics and Responsible AI | 5.1% |
| AI risk management (including compliance) | 7.4% |
| Mixed or multi-functional mandate* | 63.7% |
| Total | 100% |

*Roles that combine multiple functions, including strategy, ethics, audit, risk, compliance, training, or data governance.

| Panel D. Resources allocated to AI governance | Frequency | % |
|---|---|---|
| Small team (2–4 people) | 69 | 19.66% |
| Dedicated team (5+ people) | 65 | 18.52% |
| Not sure | 57 | 16.24% |
| Shared responsibilities across teams | 56 | 15.95% |
| No dedicated resources | 24 | 6.84% |
| Single individual | 13 | 3.70% |
| Valid responses | 284 | 80.91% |
| Invalid | 67 | 19.09% |
| Total | 351 | 100% |

| Panel E. Decision-making authority (level of authority) | Frequency | % |
|---|---|---|
| Moderate: Advisory role but influential | 113 | 32.19% |
| High: Can veto or approve AI systems | 58 | 16.52% |
| Low: Limited to documentation/compliance | 56 | 15.95% |
| Very low: Symbolic role | 39 | 11.11% |
| Not sure | 19 | 5.41% |
| Valid responses | 285 | 81.20% |
| Invalid | 66 | 18.80% |
| Total | 351 | 100% |
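In Panels A, B, D, and E, each percentage is computed on all 351 respondents, including invalid responses, which is why the valid subtotal is below 100%. A minimal Python sketch that reproduces Panel A from its reported counts, alongside an alternative "valid per cent" column (excluding invalid responses) that the panels do not report; the function name is illustrative.

```python
# Panel A counts as reported: seniority of the highest AI governance role.
PANEL_A = {
    "Vice President / Director": 95,
    "Senior Manager": 58,
    "Manager": 48,
    "Committee without formal leader": 43,
    "C-suite (CAIO, CIO/CTO with explicit AI mandate)": 22,
    "Not sure": 18,
}
INVALID = 67


def frequency_table(counts, invalid):
    """Return valid n, total n, and (category, n, % of total, % of valid) rows."""
    valid_n = sum(counts.values())
    total_n = valid_n + invalid
    rows = [
        (category, n, round(100 * n / total_n, 2), round(100 * n / valid_n, 2))
        for category, n in counts.items()
    ]
    return valid_n, total_n, rows


valid_n, total_n, rows = frequency_table(PANEL_A, INVALID)
print(f"Valid: {valid_n} ({100 * valid_n / total_n:.2f}% of {total_n})")
for category, n, pct_total, pct_valid in rows:
    print(f"{category}: {n} | {pct_total}% of all | {pct_valid}% of valid")
```

The choice of base changes the reading: on the total base, 27.07% of respondents report a VP/Director as the highest role, whereas among valid responses that share is 33.45%.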

5.6. Governance Maturity Profiles

6. Discussion

6.1. The Presence of an AI Role Does Not Equate to Governance Capacity

6.2. A Substantial Share of Organisations Govern AI Through Structural Absence

6.3. Symbolic and Operational Governance Take Precedence over Institutionalised Models

6.4. Fully Institutionalised AI Governance Remains the Exception

6.5. Governance Maturity Reflects Structural Design, Not Role Titling

6.6. Implications for Theory, Management, and Policy

7. Limitations

8. Conclusions

Appendix A

Questionnaire

| Section | Question |
|---|---|
| 1. Organisation Profile | What sector does your organisation operate in? |
| | Size of your organisation |
| | Location of organisation |
| | Does your organisation currently use AI or machine-learning systems? |
| | In which areas are AI systems currently being used or to be utilised? |
| 2. Presence or Absence of AI Governance Roles | Does your organisation have any of the following formal AI governance roles? (CAIO); AI Manager; Specialist; Ethics Board |
| | Other AI governance role(s). Please state: ____ |
| | How many distinct AI governance roles exist in your organisation? |
| 3. Structural Characteristics of Roles | Seniority of the highest AI governance role |
| | Who does this role/committee report to? |
| | What is the mandate of this role? |
| | Resources allocated to AI governance |
| | How much decision-making authority does the role have? |
| 4. Governance Structures Supporting AI Roles | Which of the following AI governance mechanisms exist? |
| | How would you describe your organisation’s AI governance maturity? |
| 5. AI Use Intensity and Context | Approximate number of AI/ML systems deployed in your organisation |
| | Criticality of AI systems |
| | Would you like to receive the final report or results summary? |

References

- Bovens, M. Analysing and Assessing Accountability: A Conceptual Framework. European Law Journal 2007, 13(4), 447–468.

- Cummings, M. L. Rethinking the Maturity of Artificial Intelligence in Safety-Critical Settings. AI Magazine 2021, 42(1), 6–15.

- Deloitte. State of AI in the Enterprise. 2022. Available online: https://www.deloitte.com/au/en/services/consulting/research/state-of-ai-in-enterprise-2022.html.

- Dubes, R. C.; Jain, A. K. Clustering Methodologies in Exploratory Data Analysis. In Advances in Computers; Elsevier, 1980; Volume 19, pp. 113–228.

- European Commission. Ethics Guidelines for Trustworthy AI. 2019. Available online: https://digital-strategy.ec.europa.eu/en/library/ethics-guidelines-trustworthy-ai.

- Everitt, B. S.; Landau, S.; Leese, M.; Stahl, D. Cluster Analysis, 5th ed.; Wiley Series in Probability and Statistics; Wiley, 2011.

- Floridi, L.; Cowls, J. A Unified Framework of Five Principles for AI in Society. Harvard Data Science Review 2019, 1(1).

- Gopal, V.; Davenport, T. H.; Bean, R. Why Your Company Needs a Chief Data, Analytics, and AI Officer. Harvard Business Review, December 2025. Available online: https://hbr.org/2025/12/why-your-company-needs-a-chief-data-analytics-and-ai-officer.

- Hanna, M.; Pantanowitz, L.; Jackson, B.; Palmer, O.; Visweswaran, S.; Pantanowitz, J.; Deebajah, M.; Rashidi, H. Ethical and Bias Considerations in Artificial Intelligence/Machine Learning. Modern Pathology 2024, 38(3), 1–13.

- Meyer, J. W.; Rowan, B. Institutionalized Organizations: Formal Structure as Myth and Ceremony. American Journal of Sociology 1977, 83(2), 340–363.

- Martin, K. Ethical Implications and Accountability of Algorithms. Journal of Business Ethics 2019, 160, 835–850.

- Mittelstadt, B. Principles Alone Cannot Guarantee Ethical AI. Nature Machine Intelligence 2019, 1(11), 501–507.

- Morley, J.; Floridi, L.; Kinsey, L.; Elhalal, A. From What to How: An Initial Review of Publicly Available AI Ethics Tools, Methods and Research to Translate Principles into Practices. Science and Engineering Ethics 2020, 26(4).

- OECD. Artificial Intelligence in Society; OECD Publishing: Paris, 2019. Available online: https://www.oecd.org/en/publications/artificial-intelligence-in-society_eedfee77-en.html.

- Papagiannidis, E.; Mikalef, P.; Conboy, K. Responsible Artificial Intelligence Governance: A Review and Research Framework. The Journal of Strategic Information Systems 2025, 34(2).

- Rahwan, I. Society-in-the-Loop: Programming the Algorithmic Social Contract. Ethics and Information Technology 2018, 20(1), 5–14.

- Schmitt, M. Strategic Integration of Artificial Intelligence in the C-Suite: The Role of the Chief AI Officer. arXiv 2024.

- Shneiderman, B. Bridging the Gap between Ethics and Practice. ACM Transactions on Interactive Intelligent Systems 2020, 10(4), 1–31.

- Wirtz, B. W.; Weyerer, J. C.; Geyer, C. Artificial Intelligence and the Public Sector: Applications and Challenges. International Journal of Public Administration 2019, 42(7), 596–615.

- World Economic Forum. World Economic Forum Launches AI Governance Alliance Focused on Responsible Generative AI. 2023. Available online: https://www.weforum.org/press/2023/06/world-economic-forum-launches-ai-governance-alliance-focused-on-responsible-generative-ai/.

- Dillman, D. A.; Smyth, J. D.; Christian, L. M. Internet, Phone, Mail, and Mixed-Mode Surveys: The Tailored Design Method, 4th ed.; Wiley, 2014.

- Office for Human Research Protections. The Belmont Report; U.S. Department of Health and Human Services, 1979. Available online: https://www.hhs.gov/ohrp/regulations-and-policy/belmont-report/index.html.

| Organisation size | Frequency | % |
|---|---|---|
| Medium (50–249 employees) | 114 | 32.48% |
| Very Large (1,000–9,999 employees) | 100 | 28.49% |
| Large (250–999 employees) | 73 | 20.80% |
| Small (1–49 employees) | 43 | 12.25% |
| Global Enterprise (10,000+ employees) | 21 | 5.98% |
| Total | 351 | 100% |

| Sector of respondent's organisation | Frequency | % |
|---|---|---|
| Banking / Financial / Insurance | 68 | 19.37% |
| Public Sector / Government | 43 | 12.25% |
| Consulting / Professional Services | 36 | 10.26% |
| Retail / E-commerce | 34 | 9.69% |
| Mining / Manufacturing | 32 | 9.12% |
| Healthcare / Pharmaceuticals | 31 | 8.83% |
| Telecommunications / Technology | 26 | 7.41% |
| Sports / Entertainment | 24 | 6.84% |
| Logistics / Supply Chain | 22 | 6.27% |
| Education | 16 | 4.56% |
| Energy / Utilities | 9 | 2.56% |
| Agriculture / Farming | 6 | 1.71% |
| Hospitality | 2 | 0.57% |
| Transportation | 1 | 0.28% |
| Building and construction | 1 | 0.28% |
| Total | 351 | 100% |

| Region (% of organisations in region) | CAIO | AI Ethics Officer | Responsible AI Lead | Algorithmic Auditor | AI Risk / Compliance Officer | AI Governance Committee |
|---|---|---|---|---|---|---|
| Africa | 0.0 | 3.1 | 21.9 | 0.0 | 15.6 | 18.8 |
| Asia-Pacific | 18.4 | 12.2 | 22.4 | 4.1 | 2.0 | 38.8 |
| Europe | 13.0 | 8.4 | 27.5 | 3.8 | 11.5 | 26.7 |
| Latin America / Caribbean | 5.6 | 2.8 | 22.2 | 0.0 | 27.8 | 19.4 |
| Middle East | 7.4 | 7.4 | 22.2 | 0.0 | 18.5 | 18.5 |
| North America | 11.8 | 2.6 | 40.8 | 1.3 | 11.8 | 27.6 |

| Governance maturity profile | n (%) | Role formalisation | % with no formal roles | Governance authority (mean) | Resources (mean) | Maturity (mean) | Profile interpretation |
|---|---|---|---|---|---|---|---|
| Institutionalised Governance | 79 (22.5%) | 1.76 | 1.3% | 3.51 | 3.10 | 3.81 | Most developed governance: the highest authority and resources, senior engagement, and broader mandates. |
| Governance Absence | 72 (20.5%) | 0.00 | 100.0% | 0.06 | 0.00 | 2.94 | No formal AI governance roles; governance is largely informal and ill-defined. |
| Symbolic Governance | 87 (24.8%) | 0.33 | 66.7% | 1.46 | 1.11 | 1.92 | Limited formalisation of responsibilities and weak governance capability, with low authority and scant resources; governance that prioritises appearance over genuine compliance. |
| Operational Governance | 113 (32.2%) | 1.23 | 5.3% | 2.78 | 2.21 | 2.57 | Governance is embedded in operational functions with some authority and resources, but lacks strong institutional or executive backing. |
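The n (%) column of the maturity-profile table follows directly from the reported profile sizes, while the remaining columns are per-profile summaries of respondent-level indicators. The sketch below reproduces the shares and shows one way such summaries could be computed once each respondent carries a profile label. The record layout, the toy records, and treating role formalisation as a count of formal roles are hypothetical assumptions for illustration.

```python
from collections import defaultdict

# Profile sizes as reported in the governance maturity profile table (n = 351).
PROFILE_N = {
    "Institutionalised Governance": 79,
    "Governance Absence": 72,
    "Symbolic Governance": 87,
    "Operational Governance": 113,
}


def profile_shares(sizes):
    """Share of the sample in each profile, rounded to one decimal place."""
    total = sum(sizes.values())
    return {name: round(100 * n / total, 1) for name, n in sizes.items()}


def summarise_profiles(records):
    """Per-profile summaries from (profile, n_formal_roles, authority, resources) tuples."""
    groups = defaultdict(list)
    for profile, n_roles, authority, resources in records:
        groups[profile].append((n_roles, authority, resources))
    summary = {}
    for profile, rows in groups.items():
        n = len(rows)
        summary[profile] = {
            "mean_formal_roles": sum(r[0] for r in rows) / n,
            "pct_no_formal_roles": 100 * sum(1 for r in rows if r[0] == 0) / n,
            "mean_authority": sum(r[1] for r in rows) / n,
            "mean_resources": sum(r[2] for r in rows) / n,
        }
    return summary


# Hypothetical respondent-level records, for illustration only.
TOY_RECORDS = [
    ("Symbolic Governance", 0, 1, 1),
    ("Symbolic Governance", 1, 2, 1),
    ("Symbolic Governance", 0, 1, 2),
    ("Governance Absence", 0, 0, 0),
]

print(profile_shares(PROFILE_N))  # 22.5, 20.5, 24.8, 32.2, as in the n (%) column
print(summarise_profiles(TOY_RECORDS))
```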

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).