Submitted: 13 February 2026

Posted: 14 February 2026


Abstract

Keywords:

1. Introduction

1.1. Problem Setup and Threat Model

2. Contrastive Learning Principles for Anomaly Detection and Poisoning Identification

3. Design of Memory Poisoning Detection and Remediation Mechanisms

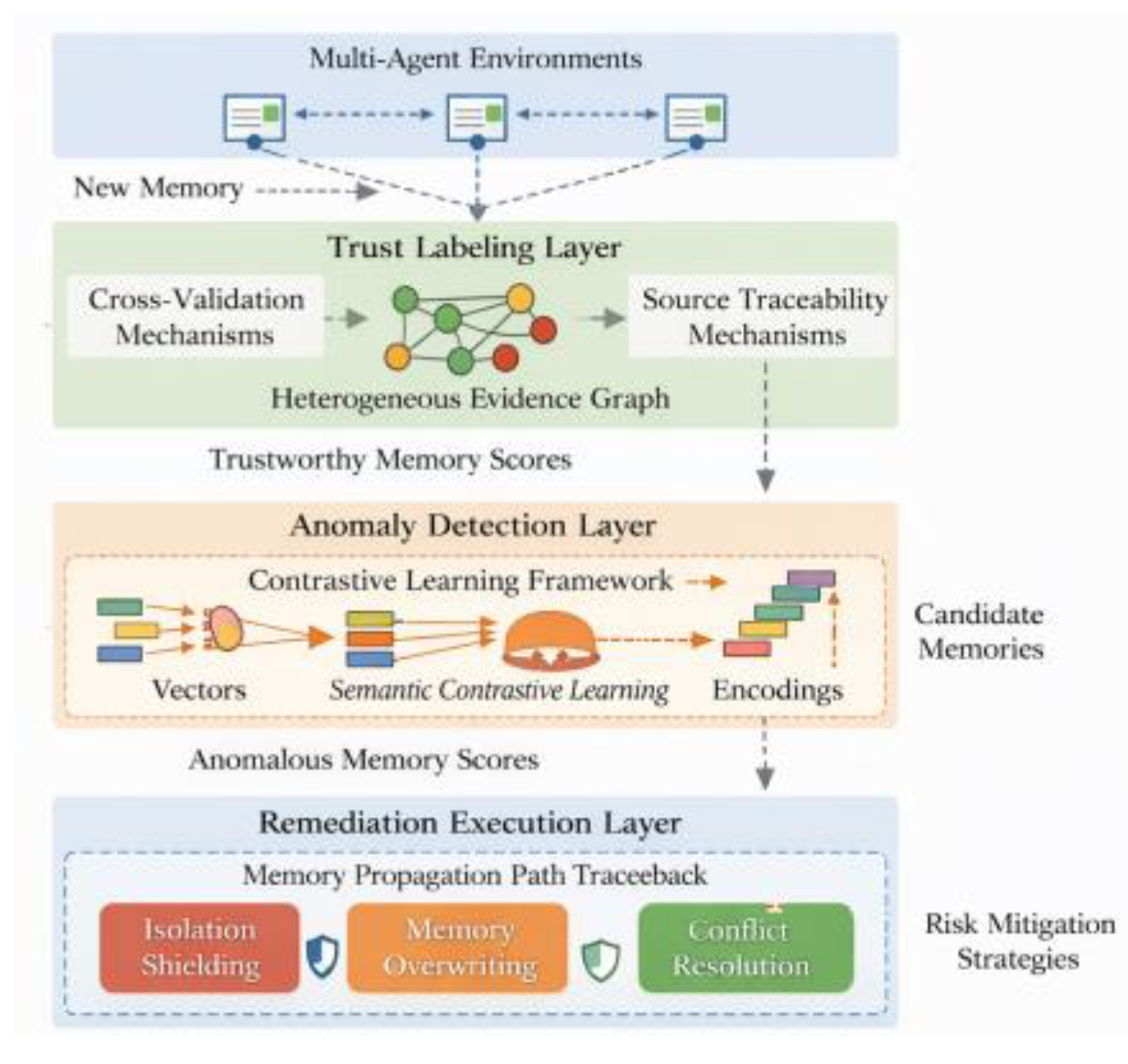

3.1. System Architecture Design

3.2. Trustworthiness-Driven Memory Source Annotation and Evidence Graph Construction

3.3. Contrastive Learning-Based Anomaly Memory Detection Algorithm

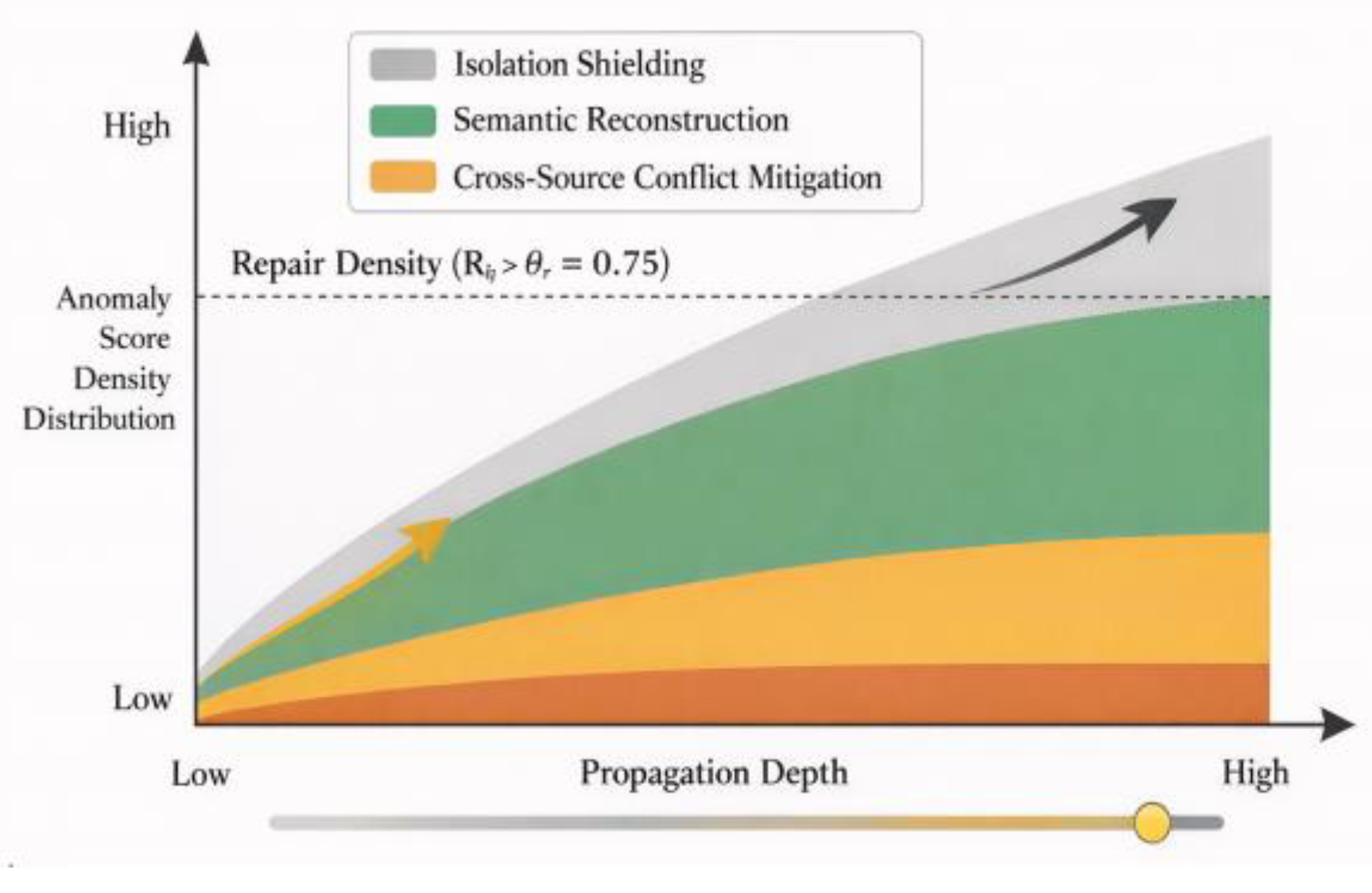

3.4. Poisoning Remediation Strategy

4. Experimental Design and Results Analysis

4.1. Experimental Platform and Task Scenario Construction

4.1.1. Reproducibility Package

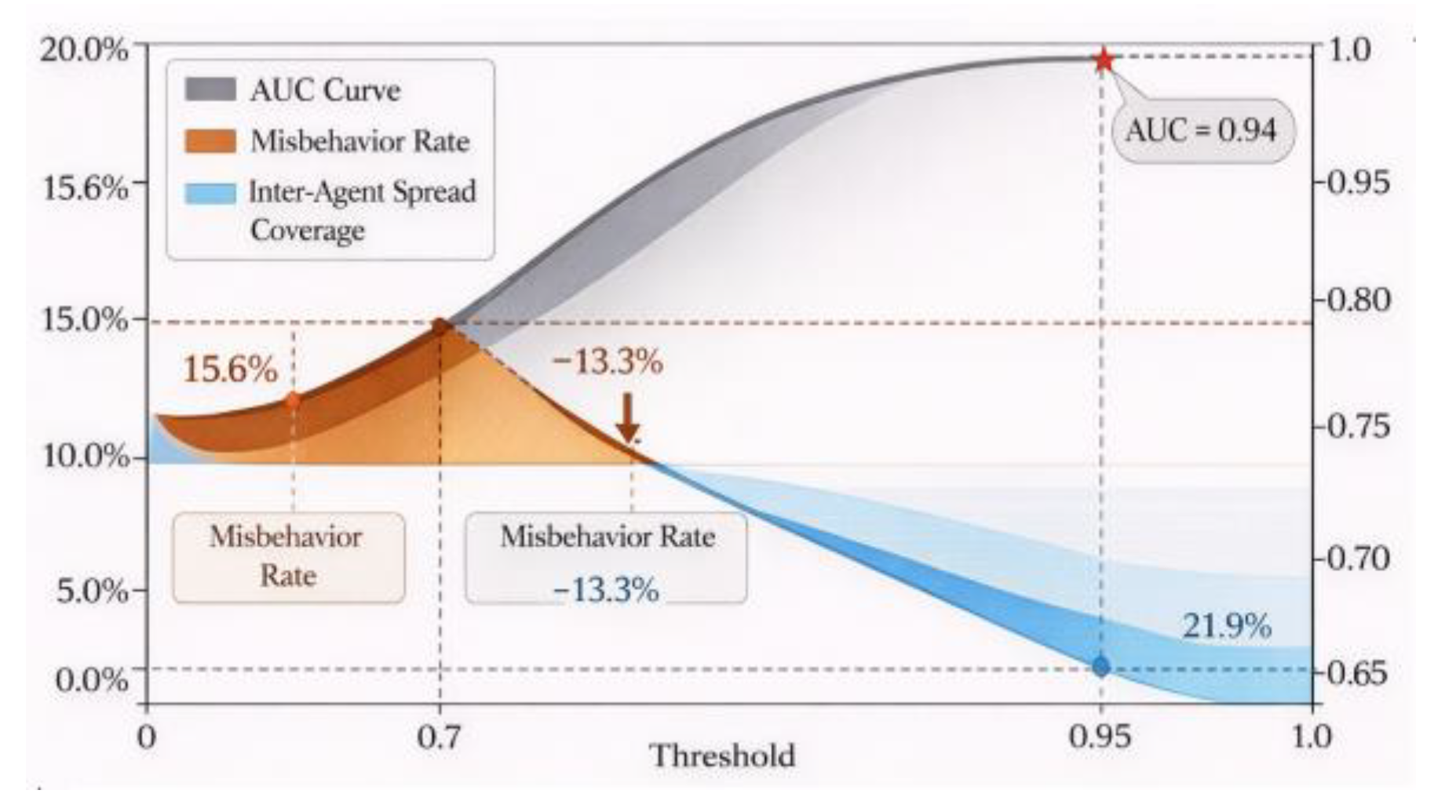

4.2. Malicious Sample Injection and Detection Performance Evaluation (Including AUC Metrics)

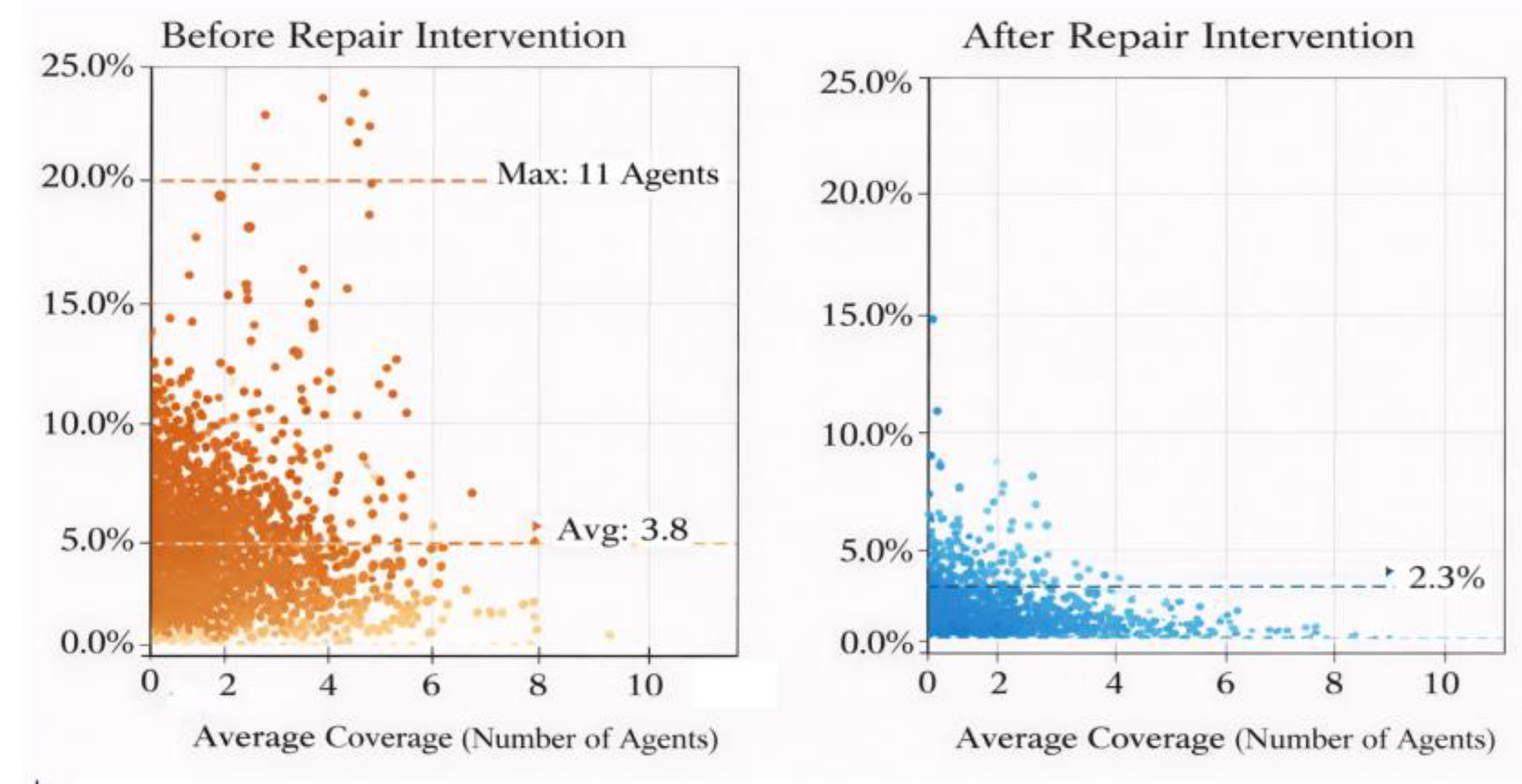

4.3. Analysis of Propagation Scope Control Effectiveness and Behavioral Accuracy

4.4. Comparative Analysis with Existing Methods and Robustness Testing

4.5. Attack Diversity and Intensity

| Poison Ratio | Detection AUC ↑ | Misbehavior Rate (Before → After) ↓ | Avg Propagation Hops (Before → After) ↓ |
| --- | --- | --- | --- |
| 0.5% | 0.97 ± 0.01 [0.96, 0.98] | 4.2% → 1.1% | 2.0 → 0.9 |
| 1% | 0.96 ± 0.01 [0.95, 0.97] | 6.8% → 1.3% | 2.4 → 1.0 |
| 2% | 0.95 ± 0.01 [0.94, 0.96] | 9.7% → 1.6% | 3.0 → 1.1 |
| 5% | 0.94 ± 0.01 [0.93, 0.95] | 15.6% → 2.3% | 3.8 → 1.4 |
| 10% | 0.91 ± 0.02 [0.89, 0.93] | 24.5% → 4.8% | 5.2 → 2.1 |
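The before/after columns in the table above are easier to compare across poison ratios when expressed as relative reductions. The following is a minimal sketch of that arithmetic, not part of the authors' evaluation code. The numbers are transcribed from the table, and the names `ROWS`, `relative_reduction` and `summarize` are hypothetical helpers introduced only for illustration.

```python
# Illustrative only: values are transcribed from the poison-ratio table;
# the helper names are assumptions, not the authors' code.

# (poison ratio %, misbehavior before %, misbehavior after %, hops before, hops after)
ROWS = [
    (0.5, 4.2, 1.1, 2.0, 0.9),
    (1.0, 6.8, 1.3, 2.4, 1.0),
    (2.0, 9.7, 1.6, 3.0, 1.1),
    (5.0, 15.6, 2.3, 3.8, 1.4),
    (10.0, 24.5, 4.8, 5.2, 2.1),
]


def relative_reduction(before: float, after: float) -> float:
    """Return the percentage drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return 100.0 * (before - after) / before


def summarize(rows):
    """Map each poison ratio to (misbehavior reduction %, hop reduction %)."""
    return {
        ratio: (
            relative_reduction(mis_before, mis_after),
            relative_reduction(hops_before, hops_after),
        )
        for ratio, mis_before, mis_after, hops_before, hops_after in rows
    }


if __name__ == "__main__":
    for ratio, (mis_red, hop_red) in summarize(ROWS).items():
        print(
            f"poison ratio {ratio:>4}%: "
            f"misbehavior -{mis_red:.1f}%, propagation hops -{hop_red:.1f}%"
        )
```

Because the inputs are rounded table entries, the derived percentages carry the same rounding error and should be read as approximate.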

5. Conclusion

References

- Ahmed, I.; Syed, M.A.; Maaruf, M.; Khalid, M. Distributed computing in multi-agent systems: a survey of decentralized machine learning approaches. Computing 2025, 107(1), Art. no. 2.

- Stone, P.; Veloso, M. Multiagent systems: A survey from a machine learning perspective. Autonomous Robots 2000, 8, 345–383.

- Chen, Y.; Zhang, Z.; Guo, J. MARNet: Backdoor attacks against cooperative multi-agent reinforcement learning. IEEE Transactions on Dependable and Secure Computing 2023.

- Chu, K.-F.; Guo, W. Multi-agent reinforcement learning-based passenger spoofing attack on mobility-as-a-service. IEEE Transactions on Dependable and Secure Computing 2024, 21(6), 5565–5581.

- Chen, Z.; Xiang, Z.; Xiao, C.; Song, D.; Li, B. AgentPoison: Red-teaming LLM agents via poisoning memory or knowledge bases. In Advances in Neural Information Processing Systems (NeurIPS 2024), 2024.

- Lee, D.; Tiwari, M. Prompt Infection: LLM-to-LLM prompt injection within multi-agent systems. arXiv 2024, arXiv:2410.07283.

- Yi, J.; Xie, Y.; Zhu, B.; Kiciman, E.; Sun, G.; Xie, X.; Wu, F. Benchmarking and defending against indirect prompt injection attacks on large language models. arXiv 2023, arXiv:2312.14197.

- Zhan, Q.; Liang, Z.; Ying, Z.; Kang, D. InjecAgent: Benchmarking indirect prompt injections in tool-integrated large language model agents. In Findings of the Association for Computational Linguistics: ACL 2024, 2024; pp. 10471–10506.

- Tan, X.; Luan, H.; Luo, M.; Sun, X.; Chen, P.; Dai, J. RevPRAG: Revealing poisoning attacks in retrieval-augmented generation through LLM activation analysis. In Findings of the Association for Computational Linguistics: EMNLP 2025, 2025; pp. 12999–13011.

- Chen, T.; Kornblith, S.; Norouzi, M.; Hinton, G. A simple framework for contrastive learning of visual representations. In Proceedings of the 37th International Conference on Machine Learning (ICML 2020), 2020; pp. 1597–1607.

- Veličković, P.; Cucurull, G.; Casanova, A.; Romero, A.; Liò, P.; Bengio, Y. Graph attention networks. In International Conference on Learning Representations (ICLR 2018), 2018.

- Liu, F.T.; Ting, K.M.; Zhou, Z.-H. Isolation forest. In 2008 Eighth IEEE International Conference on Data Mining, 2008; pp. 413–422.

- Schölkopf, B.; Platt, J.; Shawe-Taylor, J.; Smola, A.; Williamson, R. Estimating the support of a high-dimensional distribution. Neural Computation 2001, 13(7), 1443–1471.

| Method | AUC | False Positive Rate (%) | Cross-Agent Propagation Coverage (Hops) | Multi-Task Accuracy Fluctuation Range |
| --- | --- | --- | --- | --- |
| Statistical Residual Detection | 0.79 | 8.5 | 6.4 | ±6.7% |
| Attention Disorder Screening | 0.84 | 5.3 | 5.1 | ±5.1% |
| Graph Attention Deficit Recognition | 0.88 | 4.0 | 4.3 | ±3.9% |
| Isolation Forest (embedding anomaly) | 0.86 | 4.8 | 4.9 | ±4.8% |
| One-Class SVM (RBF, embedding anomaly) | 0.87 | 4.5 | 4.7 | ±4.5% |
| Proposed Method (Contrastive Learning + Repair) | 0.94 | 2.3 | 1.4 | ±1.8% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).