Submitted: 14 February 2026

Posted: 26 February 2026


Abstract

Keywords:

1. Introduction

1.1. Background

1.2. Research Gap

- Standardized cross-domain context sensitivity measurement

- Unified methodology across open and closed architectures

- Position-level temporal analysis across task types

- Systematic vendor-level behavioral characterization

1.3. Research Questions

- RQ1: How does domain structure (closed-goal vs open-goal) affect aggregate context sensitivity?

- RQ2: Do temporal dynamics differ systematically between domains at the position level?

- RQ3: Are architectural differences (open vs closed models) domain-specific?

- RQ4: Do vendor-level behavioral signatures persist across domains?

1.4. Contributions

1. Standardized framework: Unified 50-trial methodology with corrected trial definition across 14 models and 2 domains

2. Cross-domain validation: First systematic comparison of RCI in medical vs philosophical reasoning

3. Architectural diversity: Balanced inclusion of open (7) and closed (5 in philosophy, 6 in medical) models in both domains

4. Baseline dataset: 25 model-domain runs providing reproducible benchmarks for 14 state-of-the-art LLMs

5. Universal positive context sensitivity: All 25 model-domain runs show positive RCI, confirming robust context utilization across architectures

2. Related Work

2.1. Context Sensitivity in LLMs

2.2. Cross-Domain AI Evaluation

2.3. Paper 1 Foundation

3. Methodology

3.1. Experimental Design

- TRUE: Model receives coherent 29-message conversational history before prompt

- COLD: Model receives prompt with no prior context

- SCRAMBLED: Model receives same 29 messages in randomized order before prompt

- RCI_COLD (primary): Context sensitivity relative to the no-context (COLD) baseline

- RCI_SCRAMBLED: Context sensitivity relative to the scrambled baseline

- Hierarchy test: Validates that RCI_COLD > RCI_SCRAMBLED, confirming that scrambled context retains partial information
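
The three conditions above can be sketched as follows. This is a minimal illustration, not the authors' harness: the function name `build_conditions`, the `seed` parameter, and the placeholder message strings are assumptions, and how the history is assembled for each of the 30 prompts is not specified in this section.

```python
import random


def build_conditions(history, prompt, seed=0):
    """Return the message list sent to the model under each condition.

    `history` is the coherent conversational history (29 messages in the
    design above); `prompt` is the message being evaluated.
    """
    rng = random.Random(seed)      # seeded so the scramble is reproducible
    scrambled = list(history)      # copy, so the coherent history is untouched
    rng.shuffle(scrambled)
    return {
        "TRUE": list(history) + [prompt],    # coherent history, then the prompt
        "COLD": [prompt],                    # prompt with no prior context
        "SCRAMBLED": scrambled + [prompt],   # same messages, randomized order
    }


# Placeholder messages stand in for the real conversation (hypothetical data).
history = [f"message {i}" for i in range(1, 30)]
conditions = build_conditions(history, "prompt 30")
```

Seeding the shuffle keeps the SCRAMBLED condition reproducible across reruns; whether the original experiments fixed a seed is not stated.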

3.2. Domains

3.3. Models

- OpenAI: GPT-4o, GPT-4o-mini, GPT-5.2

- Anthropic: Claude Haiku, Claude Opus

- Google: Gemini Flash

- DeepSeek: V3.1

- Moonshot: Kimi K2

- Meta: Llama 4 Maverick, Llama 4 Scout

- Mistral: Mistral Small 24B, Ministral 14B

- Alibaba: Qwen3 235B

3.4. Data Scale

| Parameter | Value |
|---|---|
| Unique models | 14 |
| Model-domain runs | 25 |
| Trials per run | 50 |
| Prompts per trial | 30 |
| Conditions per trial | 3 (TRUE, COLD, SCRAMBLED) |
| Total trials | 1,250 |
| Total responses | 112,500 |
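
The two totals follow directly from the other rows of the table. A short check of the arithmetic (variable names are illustrative):

```python
runs = 25                  # model-domain runs
trials_per_run = 50
prompts_per_trial = 30
conditions_per_trial = 3   # TRUE, COLD, SCRAMBLED

total_trials = runs * trials_per_run
total_responses = total_trials * prompts_per_trial * conditions_per_trial

print(total_trials, total_responses)  # 1250 112500
```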

4. Results

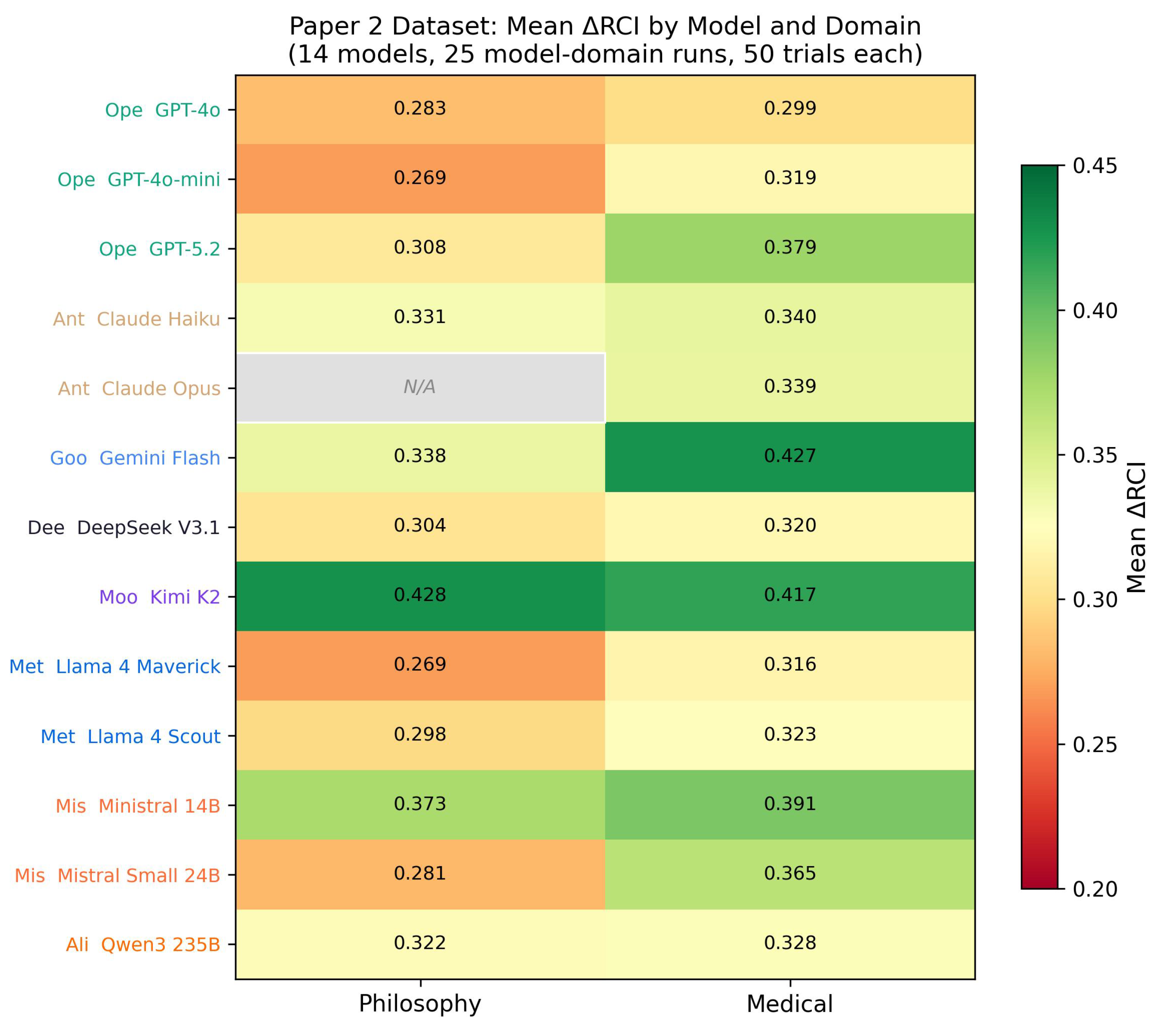

4.1. Dataset Overview

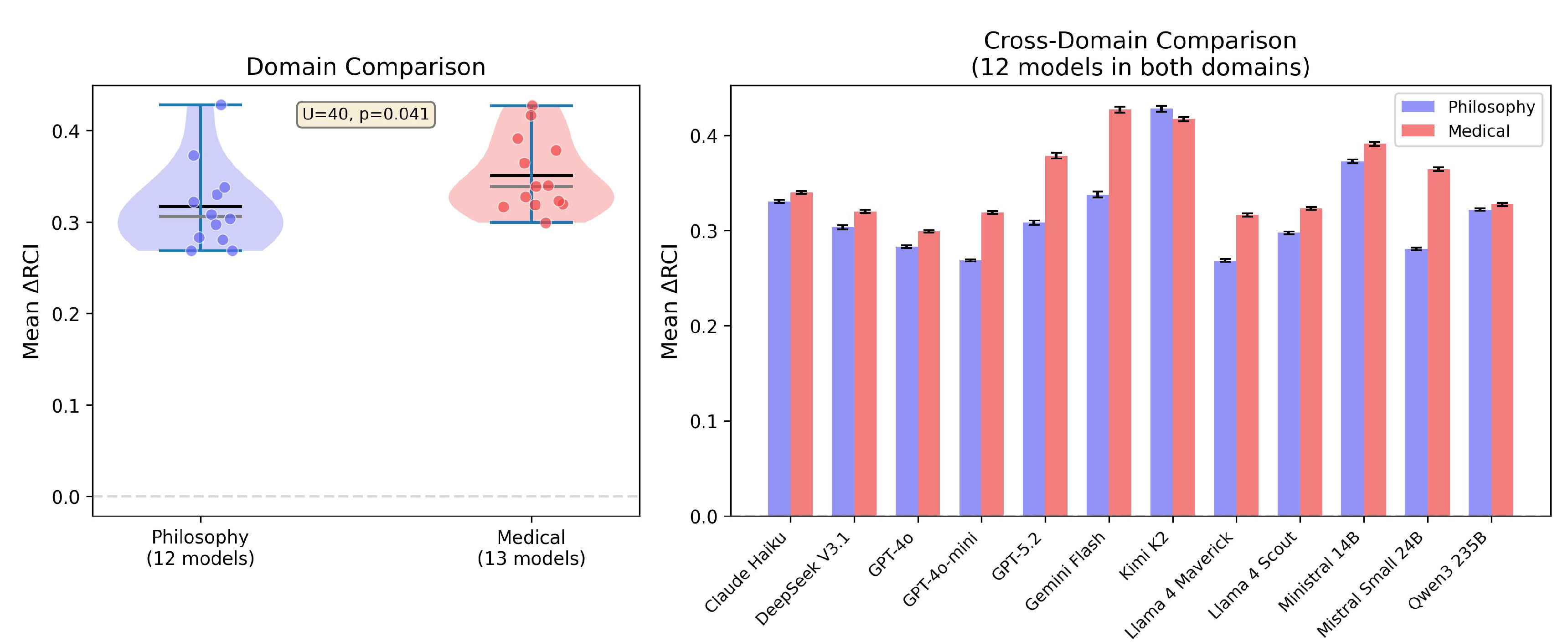

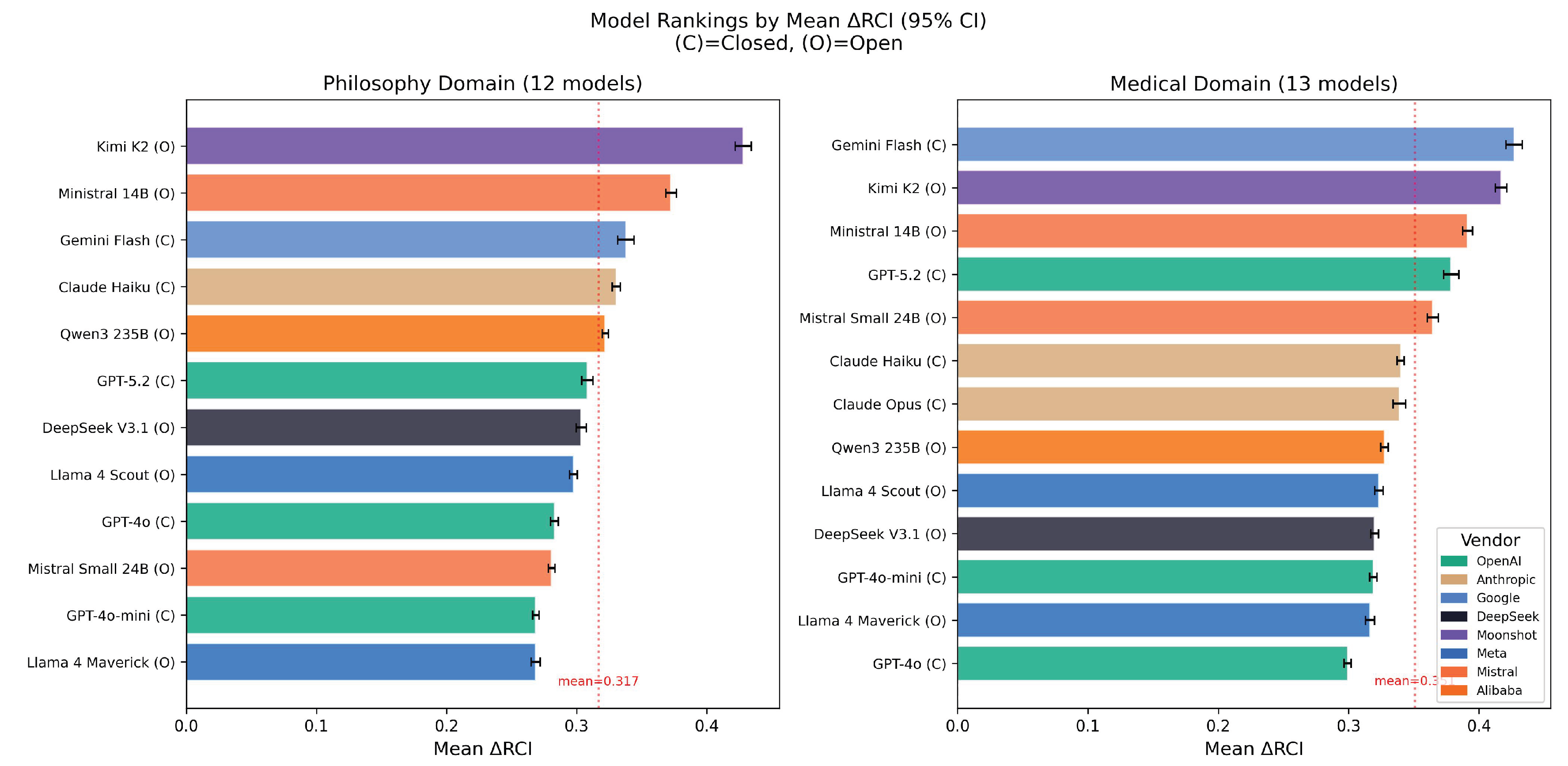

4.2. Domain Comparison

| Domain | Mean RCI | SD | n |
|---|---|---|---|
| Philosophy | 0.317 | 0.047 | 12 |
| Medical | 0.351 | 0.041 | 13 |
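
The domain means and standard deviations can be recomputed from the per-model means in Appendix A. The sketch below assumes the SD column is the sample standard deviation (n − 1 denominator) taken over model-level means; the inputs are rounded to three decimals, so agreement is expected only to within rounding.

```python
from statistics import mean, stdev

# Per-model mean RCI values copied from Appendix A.
philosophy = [0.283, 0.269, 0.308, 0.331, 0.338, 0.304,
              0.428, 0.269, 0.298, 0.373, 0.281, 0.322]
medical = [0.299, 0.319, 0.379, 0.340, 0.339, 0.427, 0.320,
           0.417, 0.316, 0.323, 0.391, 0.365, 0.328]

# (mean, sample SD, n) for each domain.
summary = {
    name: (mean(values), stdev(values), len(values))
    for name, values in {"Philosophy": philosophy, "Medical": medical}.items()
}

for name, (m, sd, n) in summary.items():
    print(f"{name}: mean={m:.3f} sd={sd:.3f} n={n}")
```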

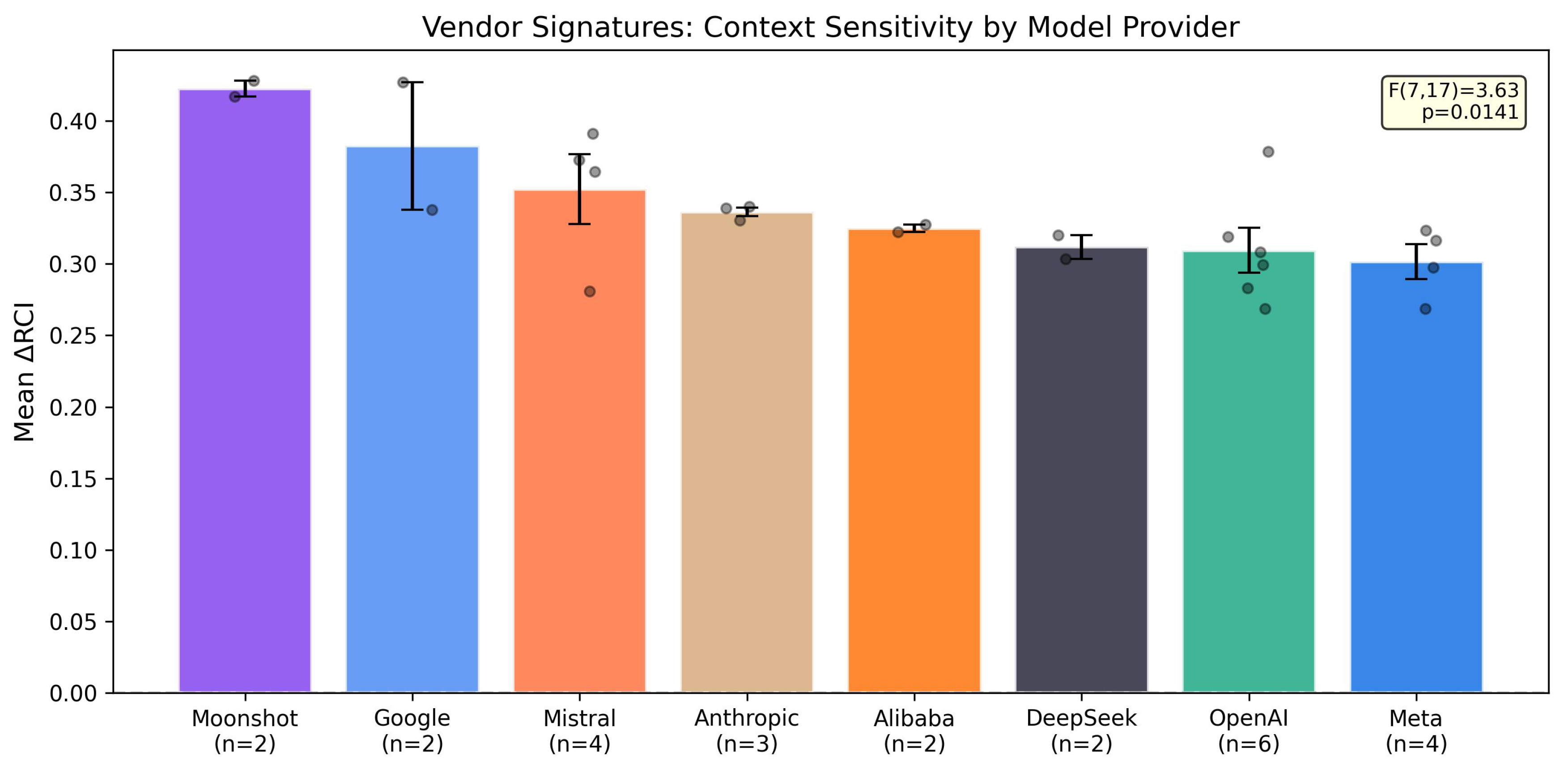

4.3. Vendor Signatures

| Rank | Vendor | n (runs) | Mean RCI |
|---|---|---|---|
| 1 | Moonshot | 2 | 0.423 |
| 2 | Google | 2 | 0.383 |
| 3 | Mistral | 4 | 0.352 |
| 4 | Anthropic | 3 | 0.336 |
| 5 | Alibaba | 2 | 0.325 |
| 6 | DeepSeek | 2 | 0.312 |
| 7 | OpenAI | 6 | 0.310 |
| 8 | Meta | 4 | 0.302 |
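
The vendor means can be reproduced by pooling each vendor's runs from Appendix A across both domains, using the vendor-to-model mapping in Section 3.3. This is an illustrative recomputation from rounded values, so the results match the table only to within rounding.

```python
from statistics import mean

# Mean RCI of every model-domain run, grouped by vendor (values from Appendix A).
vendor_runs = {
    "OpenAI": [0.283, 0.269, 0.308, 0.299, 0.319, 0.379],
    "Anthropic": [0.331, 0.340, 0.339],
    "Google": [0.338, 0.427],
    "DeepSeek": [0.304, 0.320],
    "Moonshot": [0.428, 0.417],
    "Meta": [0.269, 0.298, 0.316, 0.323],
    "Mistral": [0.373, 0.281, 0.391, 0.365],
    "Alibaba": [0.322, 0.328],
}

vendor_means = {vendor: mean(runs) for vendor, runs in vendor_runs.items()}

# Rank vendors from highest to lowest mean RCI.
ranking = sorted(vendor_means, key=vendor_means.get, reverse=True)

for rank, vendor in enumerate(ranking, start=1):
    print(rank, vendor, len(vendor_runs[vendor]), f"{vendor_means[vendor]:.3f}")
```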

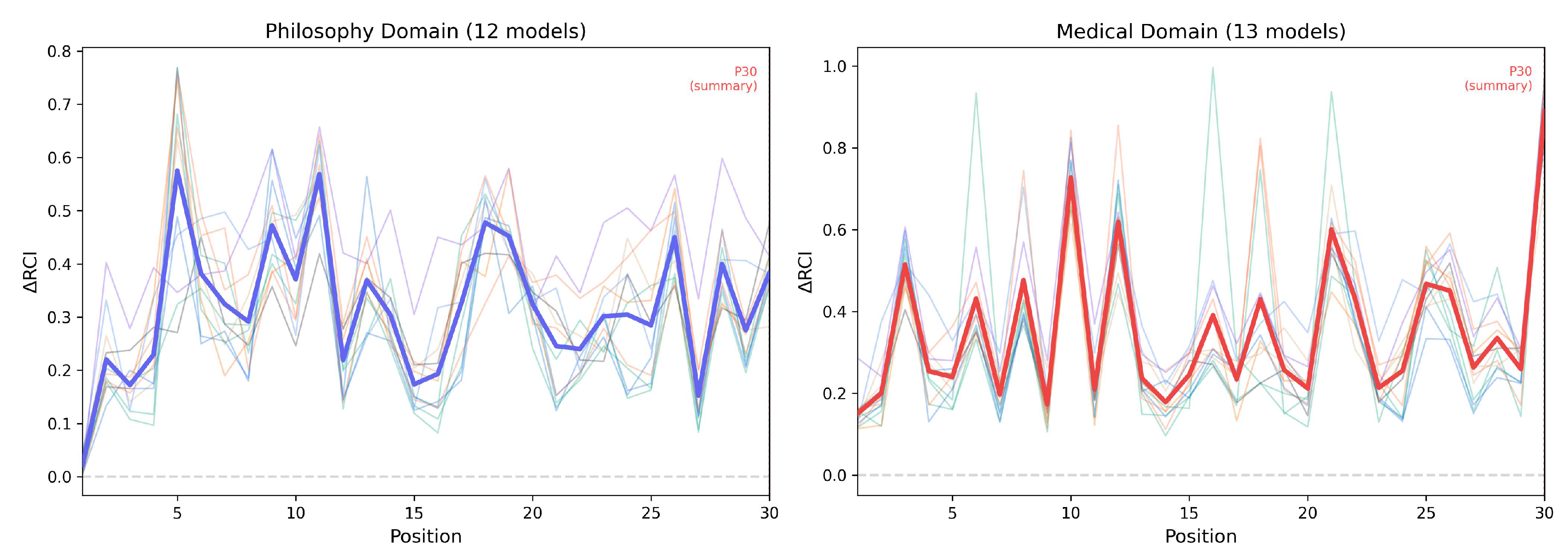

4.4. Position-Level Patterns

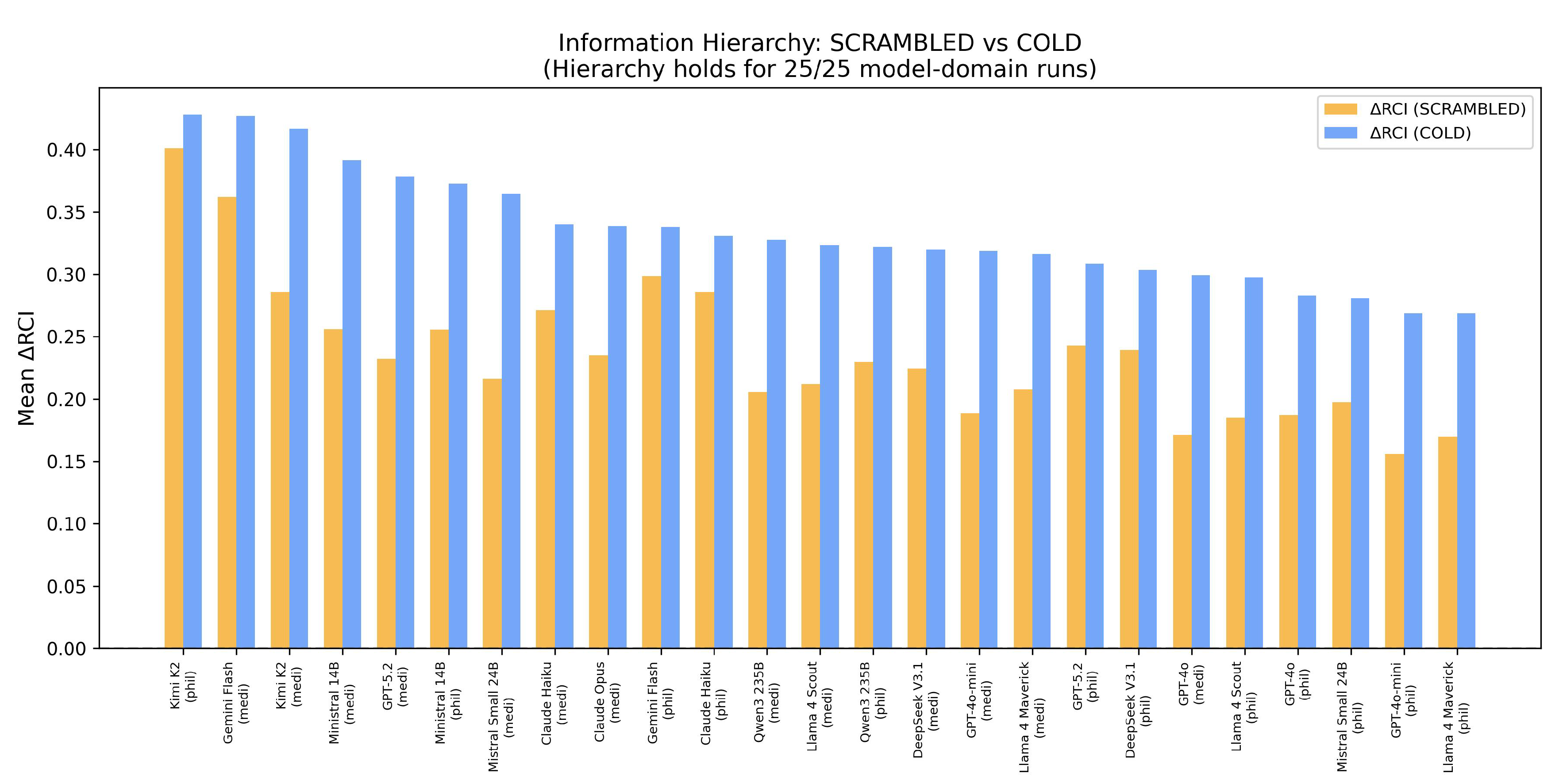

4.5. Information Hierarchy

4.6. Model Rankings

Philosophy:

1. Kimi K2 (O): 0.428

2. Ministral 14B (O): 0.373

3. Gemini Flash (C): 0.338

Medical:

1. Kimi K2 (O): 0.417

2. Ministral 14B (O): 0.391

3. GPT-5.2 (C): 0.379

5. Discussion

5.1. Medical Domain Shows Higher Context Sensitivity

5.2. Cross-Domain Patterns

5.3. Open vs Closed Architecture

- Medical open mean: 0.351 vs closed mean: 0.351 (identical)

- Philosophy open mean: 0.325 vs closed mean: 0.306
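
These four group means can be recomputed from the per-model values in Appendix A. A minimal sketch, using the Open/Closed labels from the appendix's Type column; inputs are rounded, so results agree with the figures above only to three decimals.

```python
from statistics import mean

# Per-model mean RCI from Appendix A, grouped by (domain, model type).
runs = {
    ("Medical", "Open"): [0.320, 0.417, 0.316, 0.323, 0.391, 0.365, 0.328],
    ("Medical", "Closed"): [0.299, 0.319, 0.379, 0.340, 0.339, 0.427],
    ("Philosophy", "Open"): [0.304, 0.428, 0.269, 0.298, 0.373, 0.281, 0.322],
    ("Philosophy", "Closed"): [0.283, 0.269, 0.308, 0.331, 0.338],
}

group_means = {key: mean(values) for key, values in runs.items()}

for (domain, kind), value in group_means.items():
    print(f"{domain} {kind}: n={len(runs[(domain, kind)])} mean={value:.4f}")
```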

5.4. Vendor Clustering

5.5. Information Hierarchy Validation

5.6. Limitations

1. Single scenario per domain: One medical case (STEMI) and one philosophical topic (consciousness)

2. Embedding model ceiling: all-MiniLM-L6-v2 [9] may not capture all semantic distinctions

3. Temperature fixed at 0.7: Other settings may yield different patterns

4. Claude Opus: Medical only (absent from philosophy); recovered data lacks response text

5. Position-level noise: 50 trials provide limited statistical power for 30-position analysis

5.7. Future Directions

- Token limit variation: Testing whether max_tokens (currently 1024) affects context sensitivity differently across domains

- Multilingual prompts: Extending RCI measurement to non-English conversations to assess language-specific effects

- Quantifying openness: Since philosophy is open-goal, measuring response entropy could provide a continuous “openness” metric for domain characterization, enabling more precise comparisons than the binary closed/open classification

- Temperature sweeps: Systematic variation of temperature (0.0–1.0) to map the context sensitivity–randomness tradeoff
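
One way the response-entropy idea above could be prototyped is with Shannon entropy over categorical response labels. This is a hypothetical sketch: the function `response_entropy` and the example labels are assumptions, and how free-text responses would be mapped to discrete labels (for example by clustering embeddings) is left open.

```python
import math
from collections import Counter


def response_entropy(labels):
    """Shannon entropy (in bits) of a list of categorical response labels."""
    counts = Counter(labels)
    total = len(labels)
    # Sum p * log2(1/p) over the observed label frequencies.
    return sum((c / total) * math.log2(total / c) for c in counts.values())


# Hypothetical labels: a closed-goal prompt where every response converges
# on one answer, and an open-goal prompt with four equally frequent positions.
closed_goal = ["STEMI"] * 8
open_goal = ["view A", "view B", "view C", "view D"] * 2

print(response_entropy(closed_goal))  # 0.0
print(response_entropy(open_goal))    # 2.0
```

Under this toy labeling, a fully convergent domain scores 0 bits and a domain with k equally likely answers scores log2(k) bits, which gives the continuous scale the bullet describes.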

6. Conclusions

1. Context sensitivity is universally positive across all models in both domains (25/25 runs)

2. Medical domain elicits higher sensitivity: Closed-goal reasoning shows significantly higher RCI than open-goal reasoning (p=0.041), with comparable inter-model variance

3. Information hierarchy is universal: The “presence > absence” principle holds in 100% of model-domain runs

4. Open models compete with closed: No systematic architectural disadvantage for open-weight models

5. Vendor signatures are significant: Organizational design choices create significant and consistent behavioral patterns (F(7,17)=3.63, p=0.014)
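
The degrees of freedom in the vendor result are consistent with a one-way ANOVA over the 25 run-level means grouped into 8 vendors. The sketch below assumes that is the test used (it is not named in this section) and recomputes the F statistic from the rounded Appendix A values; the p-value is not computed here.

```python
# Mean RCI of every model-domain run, grouped by vendor (values from Appendix A).
vendor_runs = {
    "OpenAI": [0.283, 0.269, 0.308, 0.299, 0.319, 0.379],
    "Anthropic": [0.331, 0.340, 0.339],
    "Google": [0.338, 0.427],
    "DeepSeek": [0.304, 0.320],
    "Moonshot": [0.428, 0.417],
    "Meta": [0.269, 0.298, 0.316, 0.323],
    "Mistral": [0.373, 0.281, 0.391, 0.365],
    "Alibaba": [0.322, 0.328],
}

values = [x for runs in vendor_runs.values() for x in runs]
grand_mean = sum(values) / len(values)

# Between-group and within-group sums of squares.
ss_between = sum(
    len(runs) * (sum(runs) / len(runs) - grand_mean) ** 2
    for runs in vendor_runs.values()
)
ss_within = sum(
    (x - sum(runs) / len(runs)) ** 2
    for runs in vendor_runs.values()
    for x in runs
)

df_between = len(vendor_runs) - 1           # 8 vendors -> 7
df_within = len(values) - len(vendor_runs)  # 25 runs - 8 vendors -> 17

f_stat = (ss_between / df_between) / (ss_within / df_within)
print(df_between, df_within, round(f_stat, 2))
```

With rounded inputs the statistic lands close to the reported F(7,17)=3.63, which supports the one-way ANOVA reading without confirming it.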

Data Availability Statement

Acknowledgments

Appendix A Complete Per-Model Statistics

| Model | Domain | Type | Mean RCI | SD | 95% CI |
|---|---|---|---|---|---|
| GPT-4o | Philosophy | Closed | 0.283 | 0.011 | ±0.003 |
| GPT-4o-mini | Philosophy | Closed | 0.269 | 0.009 | ±0.002 |
| GPT-5.2 | Philosophy | Closed | 0.308 | 0.015 | ±0.004 |
| Claude Haiku | Philosophy | Closed | 0.331 | 0.012 | ±0.003 |
| Gemini Flash | Philosophy | Closed | 0.338 | 0.022 | ±0.006 |
| DeepSeek V3.1 | Philosophy | Open | 0.304 | 0.014 | ±0.004 |
| Kimi K2 | Philosophy | Open | 0.428 | 0.022 | ±0.006 |
| Llama 4 Maverick | Philosophy | Open | 0.269 | 0.012 | ±0.003 |
| Llama 4 Scout | Philosophy | Open | 0.298 | 0.011 | ±0.003 |
| Ministral 14B | Philosophy | Open | 0.373 | 0.015 | ±0.004 |
| Mistral Small 24B | Philosophy | Open | 0.281 | 0.009 | ±0.003 |
| Qwen3 235B | Philosophy | Open | 0.322 | 0.009 | ±0.003 |
| GPT-4o | Medical | Closed | 0.299 | 0.010 | ±0.003 |
| GPT-4o-mini | Medical | Closed | 0.319 | 0.010 | ±0.003 |
| GPT-5.2 | Medical | Closed | 0.379 | 0.021 | ±0.006 |
| Claude Haiku | Medical | Closed | 0.340 | 0.010 | ±0.003 |
| Claude Opus | Medical | Closed | 0.339 | 0.017 | ±0.005 |
| Gemini Flash | Medical | Closed | 0.427 | 0.023 | ±0.006 |
| DeepSeek V3.1 | Medical | Open | 0.320 | 0.010 | ±0.003 |
| Kimi K2 | Medical | Open | 0.417 | 0.016 | ±0.004 |
| Llama 4 Maverick | Medical | Open | 0.316 | 0.012 | ±0.003 |
| Llama 4 Scout | Medical | Open | 0.323 | 0.011 | ±0.003 |
| Ministral 14B | Medical | Open | 0.391 | 0.014 | ±0.004 |
| Mistral Small 24B | Medical | Open | 0.365 | 0.015 | ±0.004 |
| Qwen3 235B | Medical | Open | 0.328 | 0.010 | ±0.003 |
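
The 95% CI column is consistent with a normal-approximation half-width computed from each run's SD over its 50 trials. The sketch below assumes that construction (z = 1.96, n = 50); the exact interval method is not stated here, and the SDs are themselves rounded, so individual rows can differ in the last digit.

```python
import math


def ci_half_width(sd, n=50, z=1.96):
    """Half-width of a normal-approximation confidence interval for a mean."""
    return z * sd / math.sqrt(n)


# Spot checks against two rows of the table (SD values from Appendix A).
gpt4o_philosophy = ci_half_width(0.011)   # table reports ±0.003
gemini_philosophy = ci_half_width(0.022)  # table reports ±0.006

print(f"{gpt4o_philosophy:.4f} {gemini_philosophy:.4f}")
```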

References

1. Bai, Y.; Jones, A.; Ndousse, K.; et al. Constitutional AI: Harmlessness from AI Feedback. arXiv 2022, arXiv:2212.08073.

2. Brown, T.; Mann, B.; Ryder, N.; et al. Language Models are Few-Shot Learners. Advances in Neural Information Processing Systems 2020, 33. arXiv:2005.14165.

3. Datasaur. LLM Scorecard 2025. 2025. Available online: https://datasaur.ai/blog-posts/llm-scorecard-22-8-2025.

4. Laxman, M. M. Context Curves Behavior: Measuring AI Relational Dynamics with ΔRCI. Preprints.org 2026.

5. Mou, X.; et al. Decoupling Safety into Orthogonal Subspace. arXiv 2025, arXiv:2510.09004.

6. Nguyen, T.; et al. A Framework for Neural Topic Modeling with Mutual Information. Neurocomputing 2025.

7. NIH PMC. Empirically derived evaluation requirements for responsible deployments of AI in safety-critical settings. npj Digital Medicine 2025.

8. Ouyang, L.; Wu, J.; Jiang, X.; et al. Training language models to follow instructions with human feedback. Advances in Neural Information Processing Systems 2022, 35. arXiv:2203.02155.

9. Reimers, N.; Gurevych, I. Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks. Proceedings of EMNLP 2019, 2019.

10. Singhal, K.; Azizi, S.; Tu, T.; et al. Large Language Models Encode Clinical Knowledge. Nature 2023, 620, 172–180.

11. Skinner, B. F. Verbal Behavior; Copley Publishing Group, 1957.

12. Subramani, N.; Srinivasan, R.; Hovy, E. SimBA: Simplifying Benchmark Analysis. Findings of EMNLP 2025, 2025.

13. Vaswani, A.; Shazeer, N.; Parmar, N.; et al. Attention Is All You Need. Advances in Neural Information Processing Systems 2017, 30. arXiv:1706.03762.

14. Xu, Y.; et al. Does Context Matter? ContextualJudgeBench for Evaluating LLM-based Judges. Proceedings of ACL 2025, 2025.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).