Submitted: 12 February 2026

Posted: 13 February 2026

Abstract

Keywords:

1. Introduction

2. Background

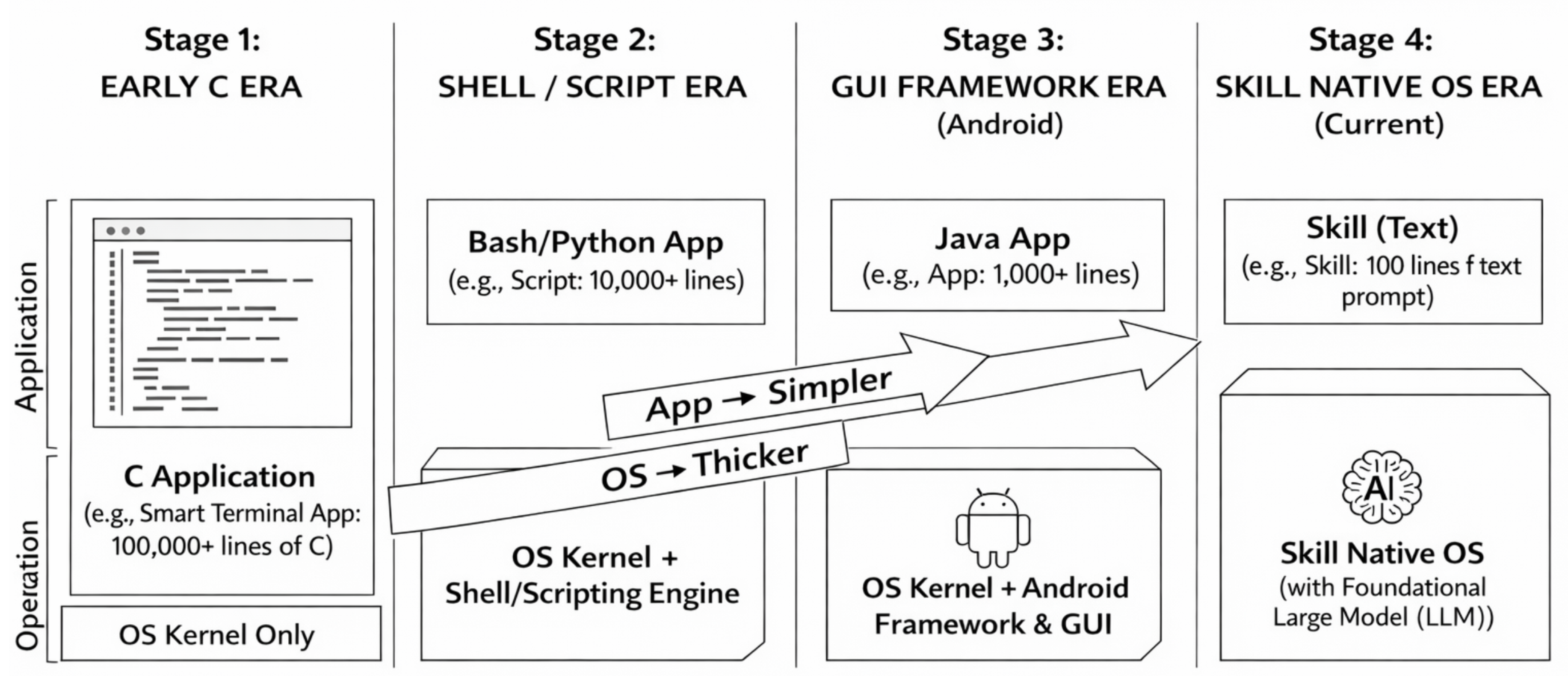

2.1. From Prompts to Skills

2.2. Skill Structure

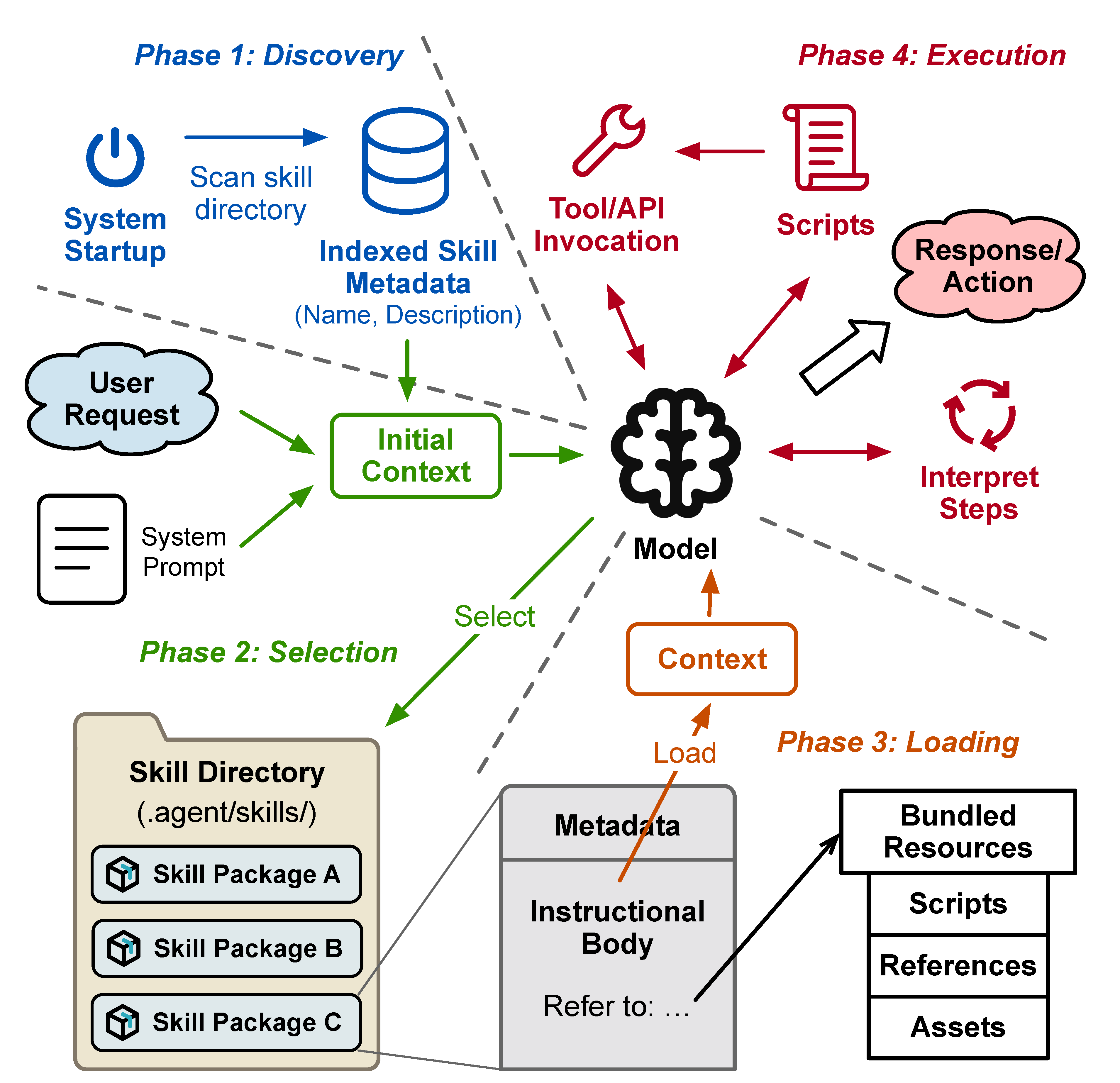

2.3. Skill Execution Model

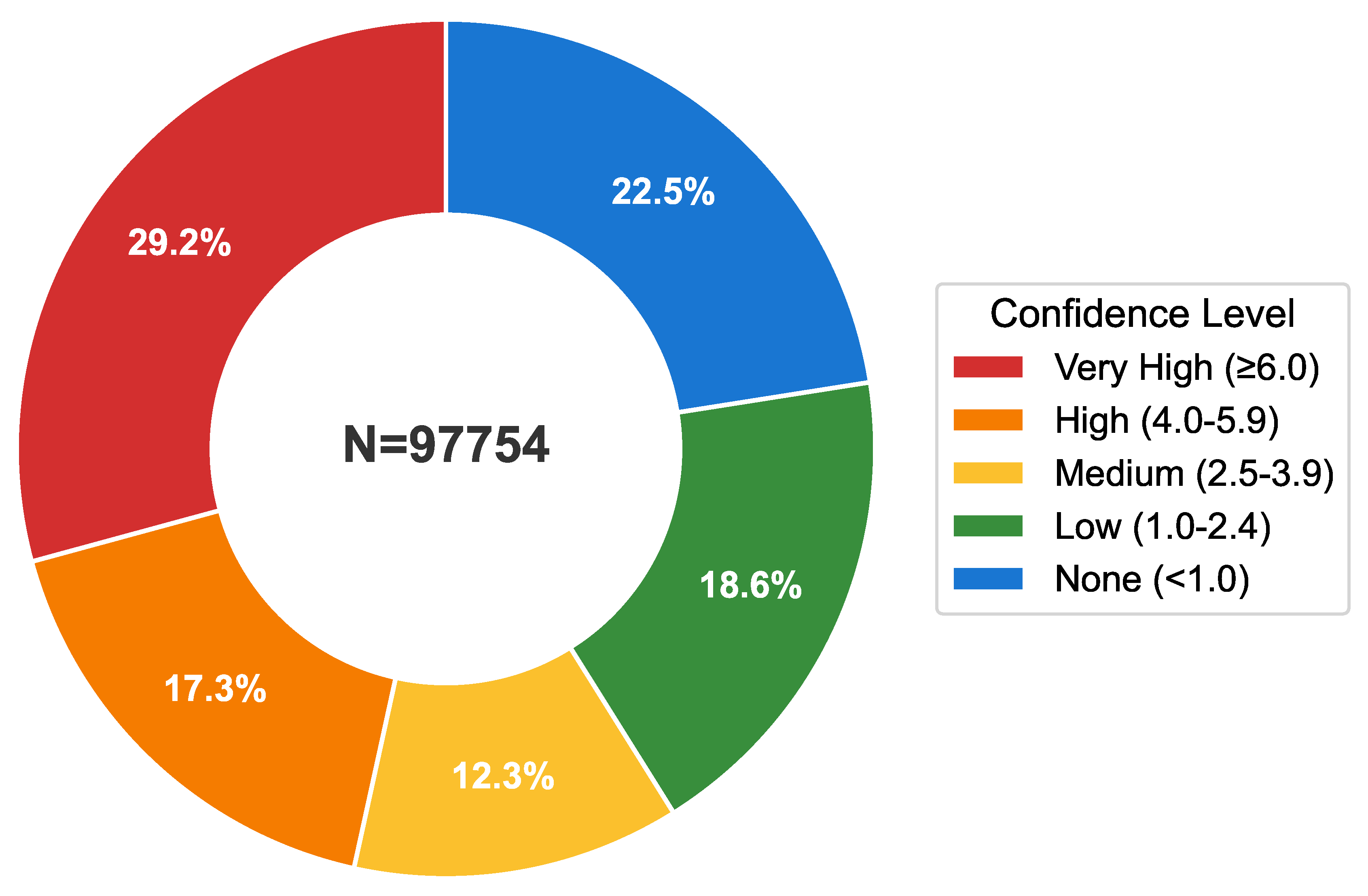

2.4. The Skill Ecosystem

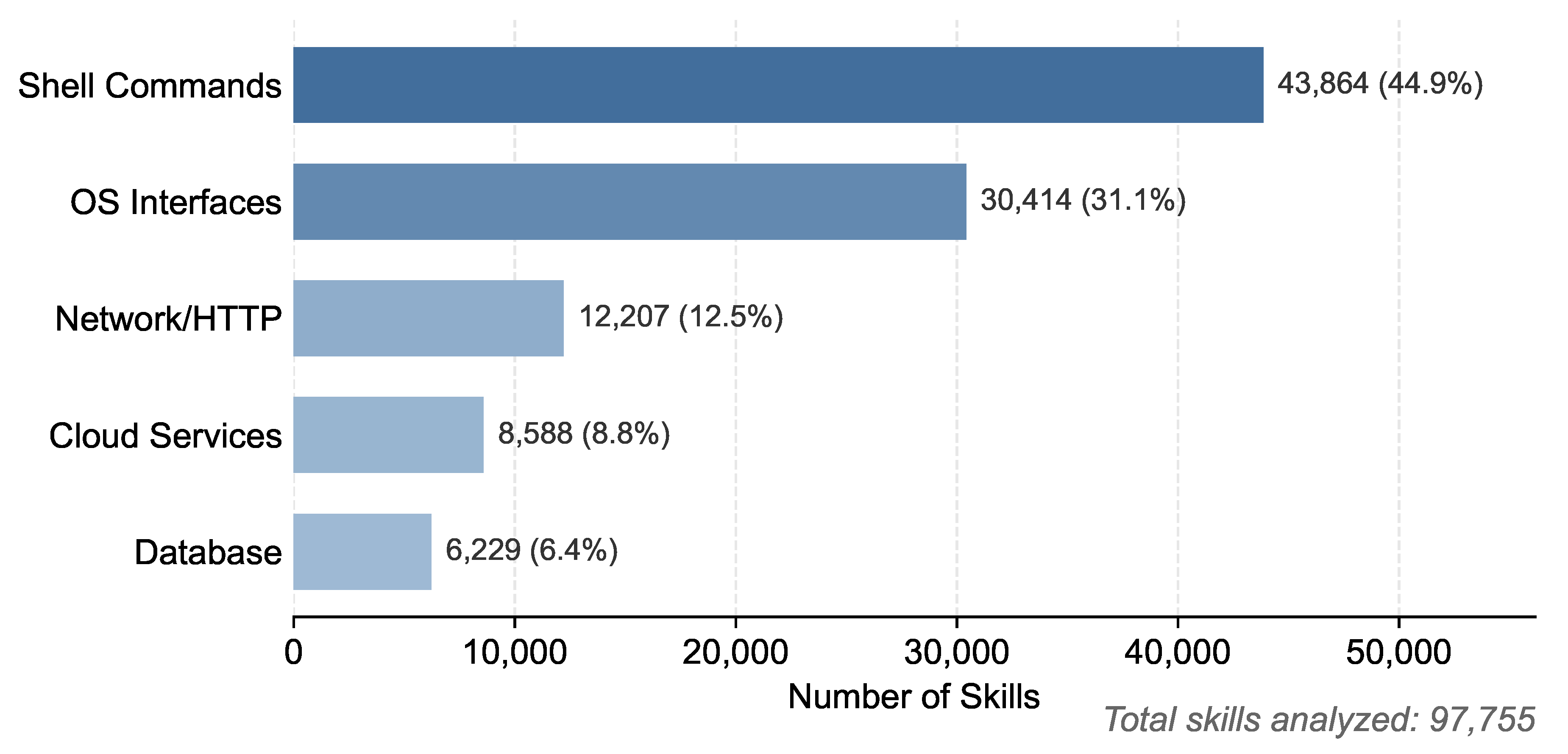

3. Skills in the Wild

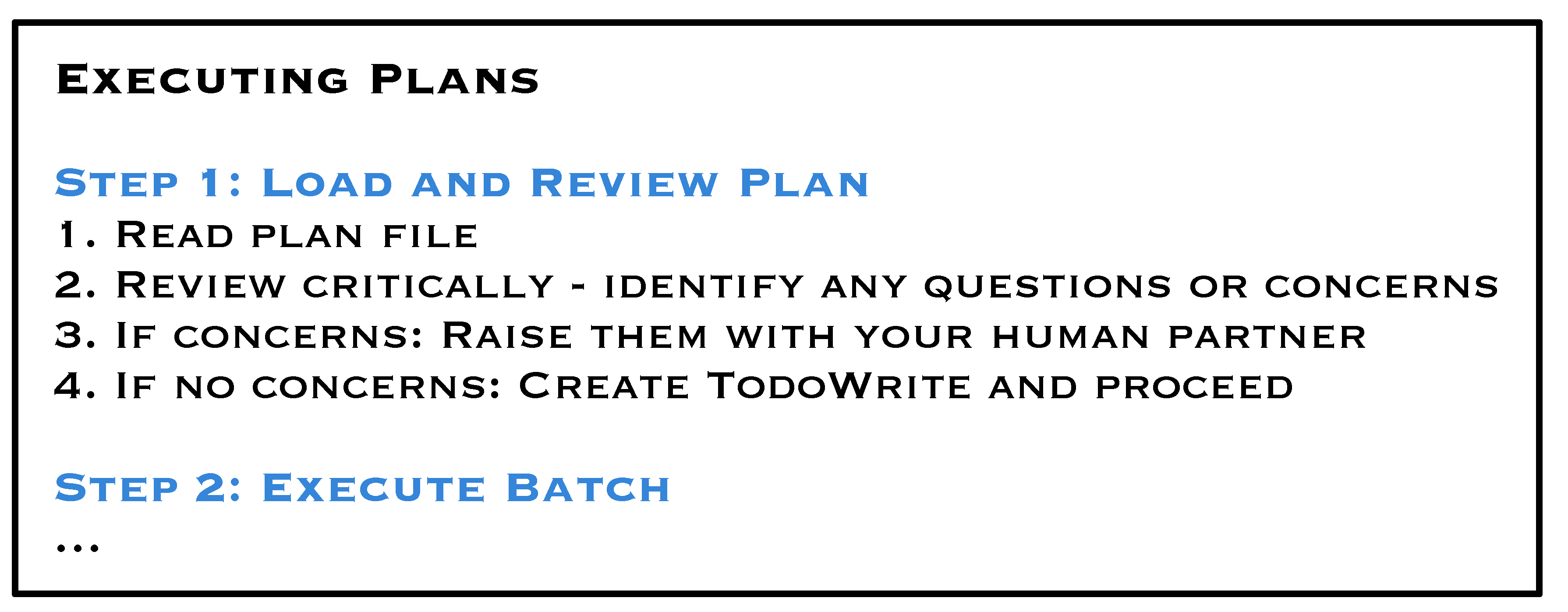

3.1. Skill as a Procedure

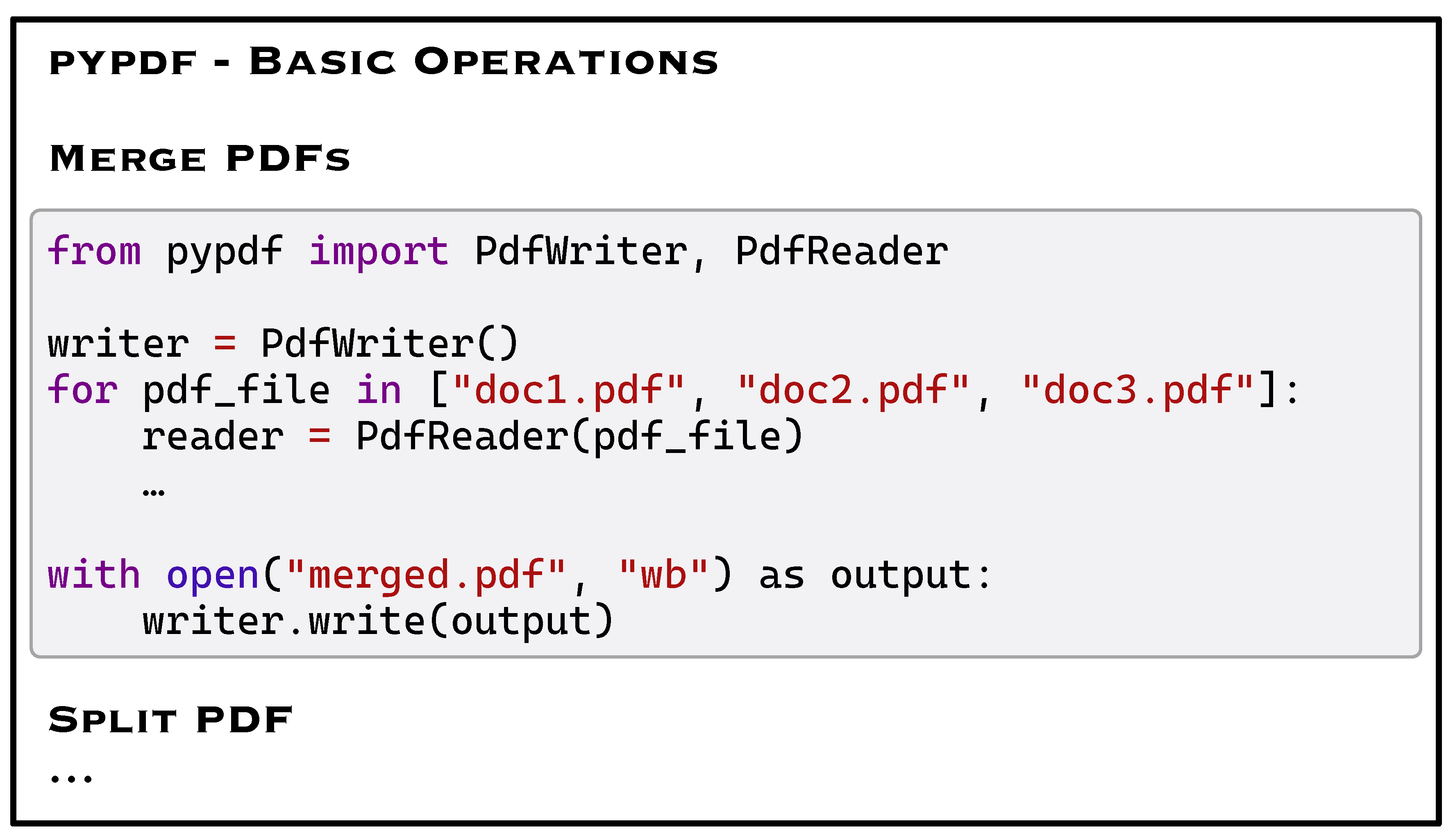

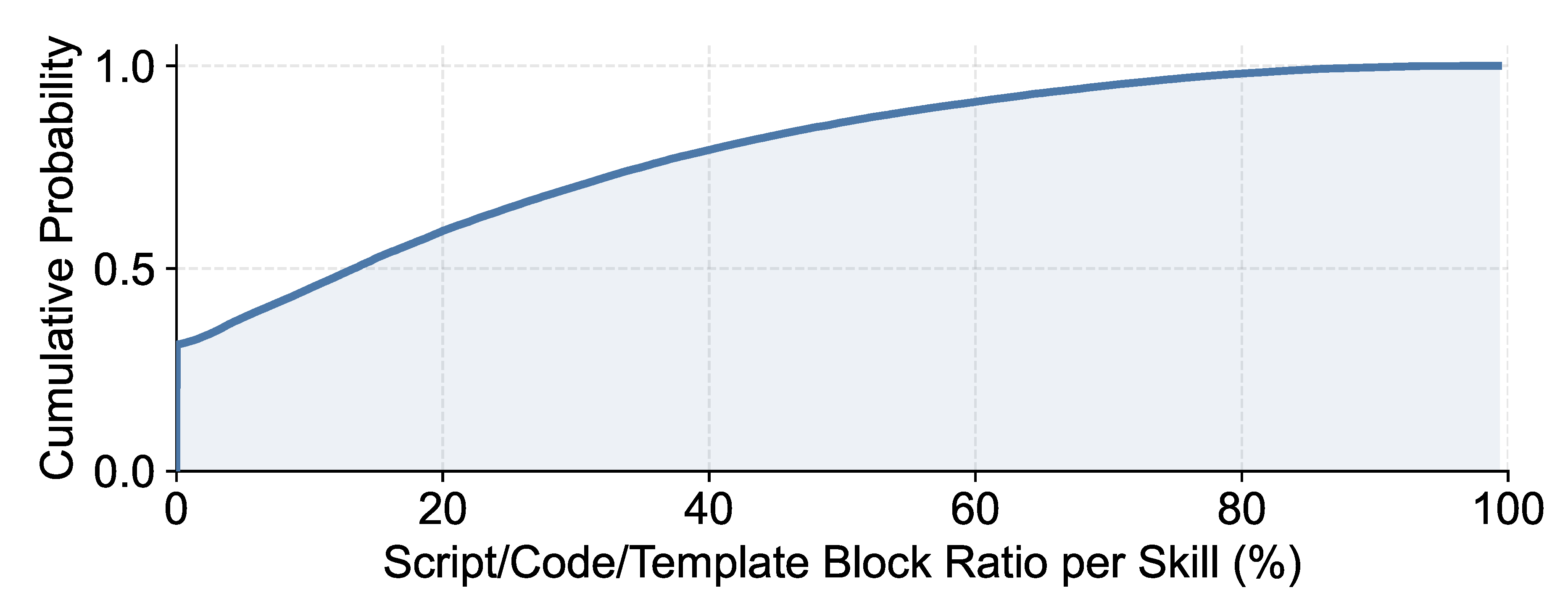

3.2. Semi-Deterministic Blocks in Skills

3.3. Execution Drift under Semantic Equivalence

3.4. Requirements without Strong Guarantees

3.5. Skill Dependence on Execution Environment

3.6. Shared Skills Between Sessions

4. Demands for Skill OS

4.1. Leveraging Skill Locality

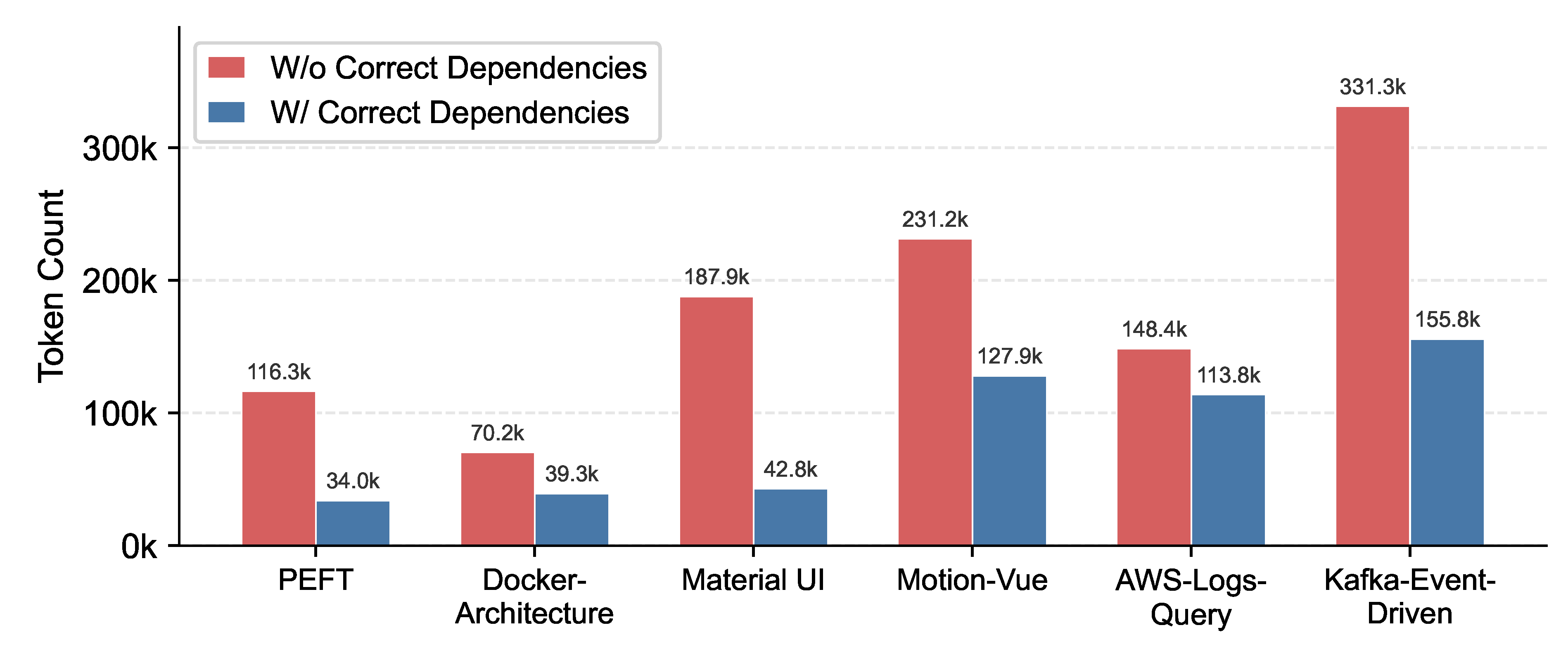

4.2. Dynamic Environment Construction

4.3. Global Management Across Sessions and Agents

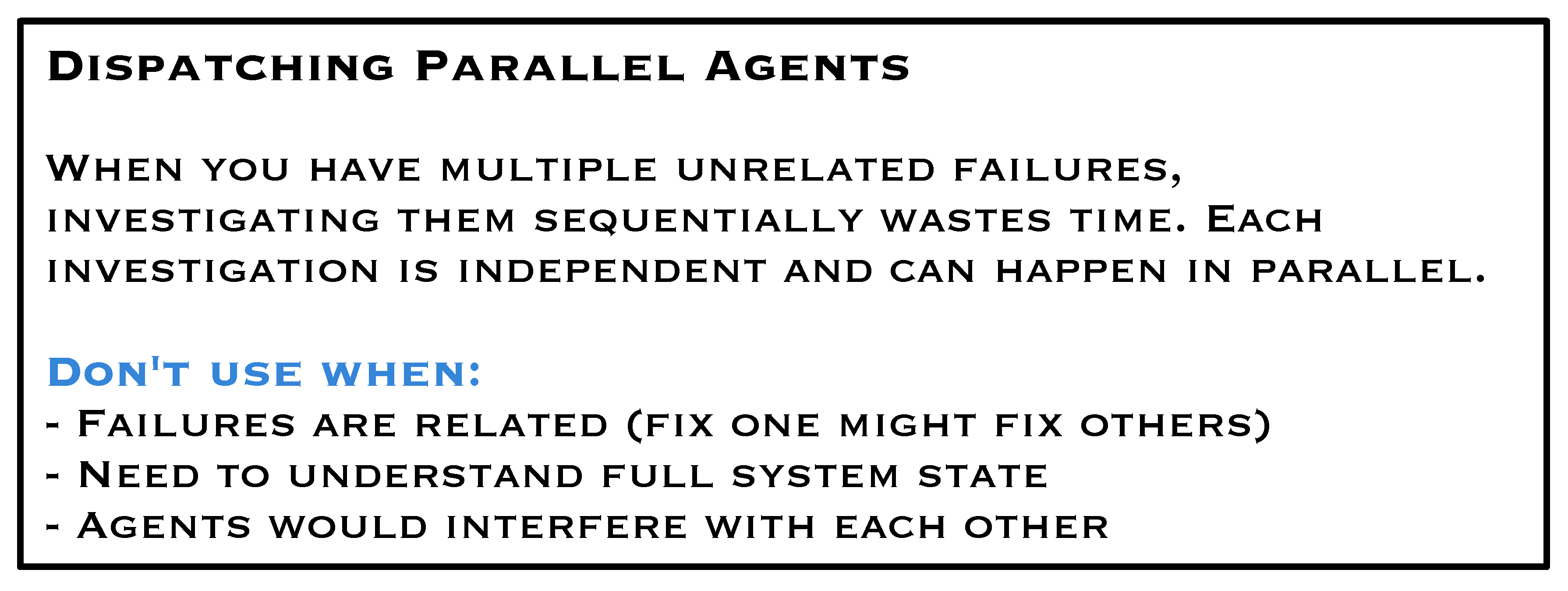

4.4. System-Level Fault Management

4.5. Security, Access Control, and Auditing

5. Discussion

6. Conclusion


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.