Submitted: 11 February 2026

Posted: 13 February 2026

Abstract

Keywords:

1. Introduction

2. Proposed Method

2.1. Deep Image Prior (DIP)

2.2. Backbone of Deep Image Prior (DIP)

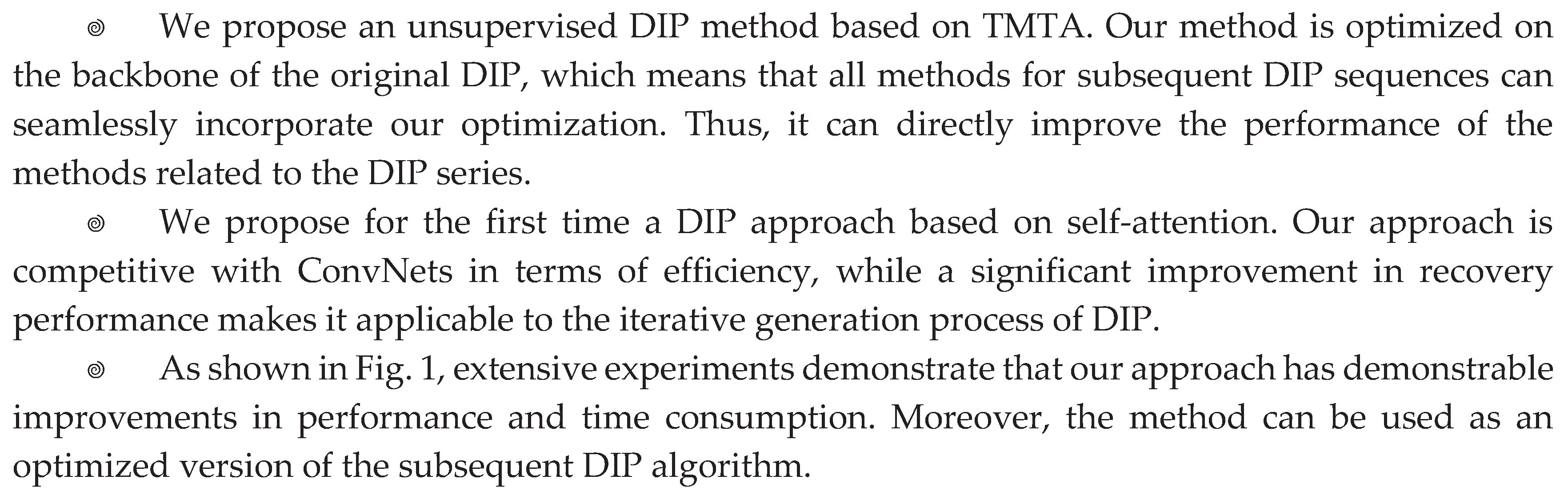

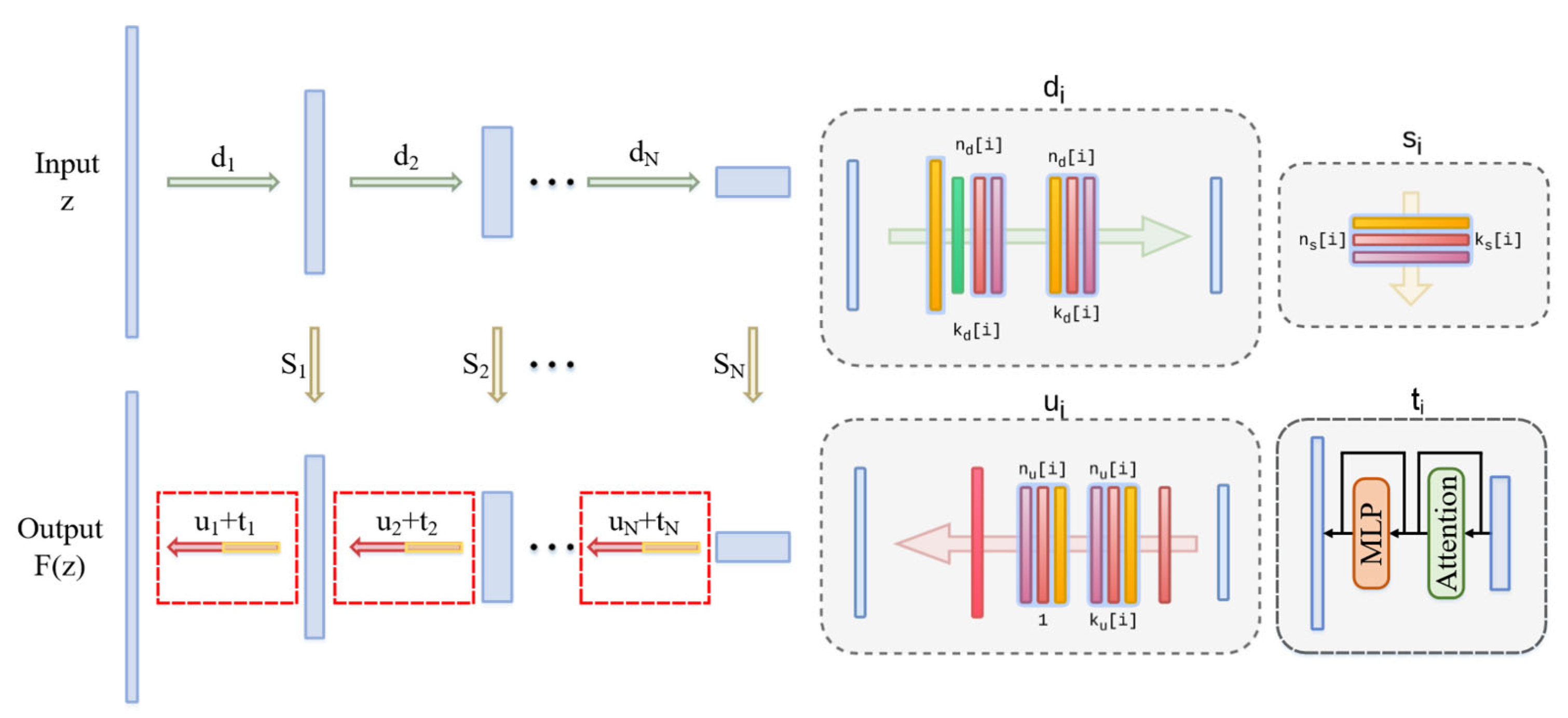

2.3. Overview of TM-DIP

2.4. Triple Multi-Head Transposed Attention

2.5. Efficiency of TMTA

3. Experiments

3.1. Experimental Setup

3.2. Comparison with DIP on Denoising and Generic Reconstruction

3.3. Comparison with DIP on Super-resolution

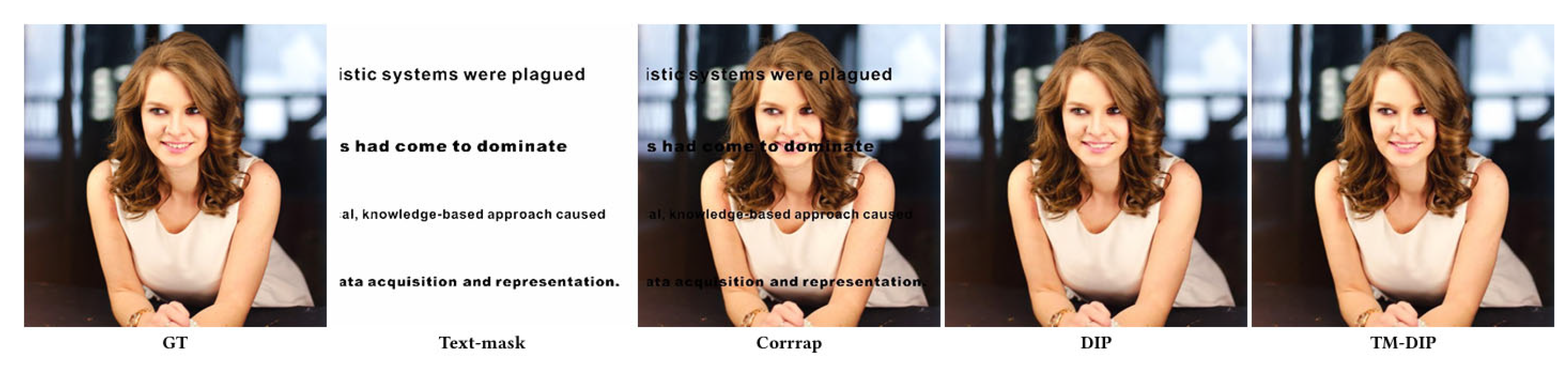

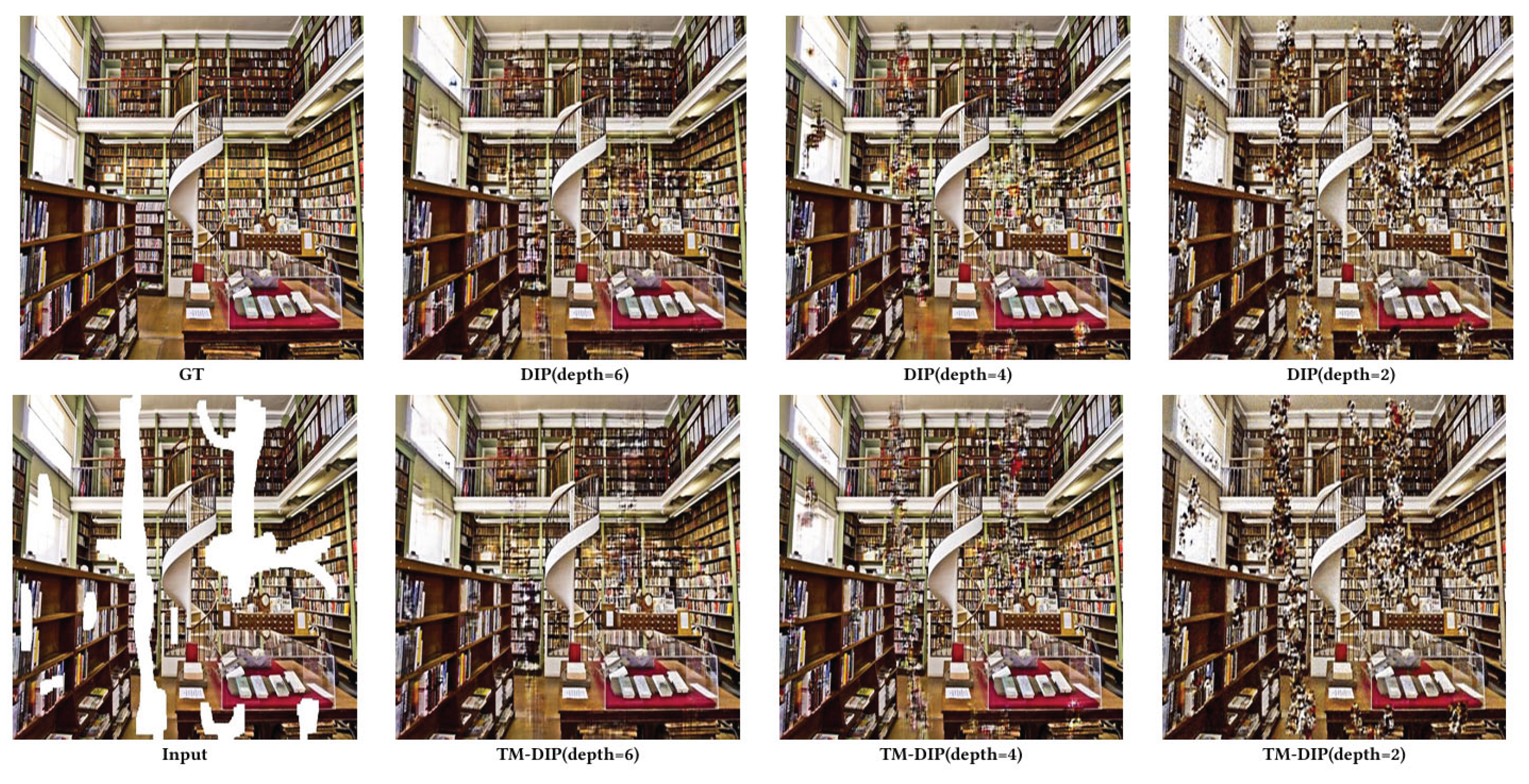

3.4. Comparison with DIP on Inpainting

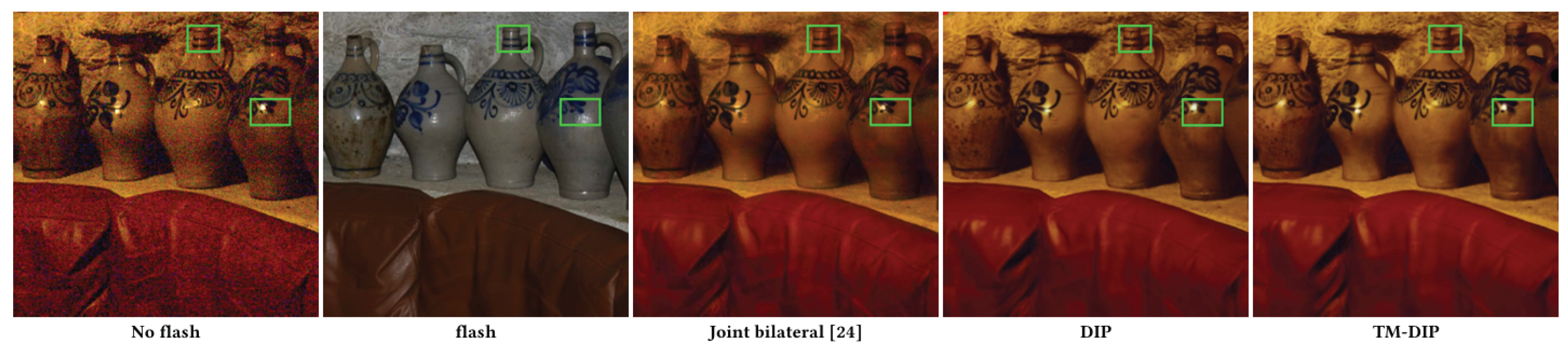

3.5. Comparison with DIP on Flash-no Flash Reconstruction

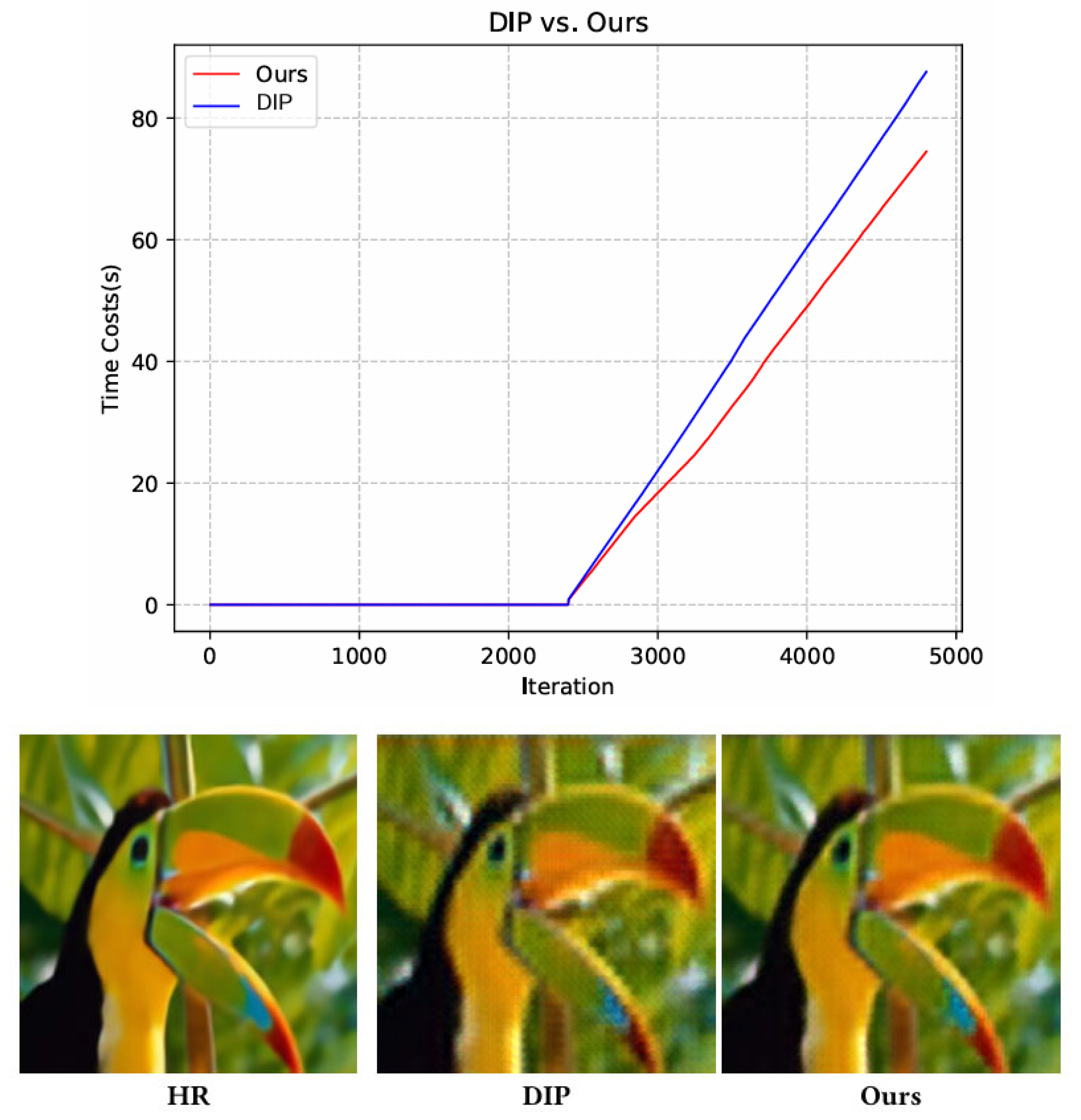

3.6. Comparison with DIP on Time cost

4. Conclusions

Funding

Conflicts of Interest

References

- Abdelrahman Abdelhamed, Stephen Lin, and Michael S Brown. 2018. A high-quality denoising dataset for smartphone cameras. In Proceedings of the IEEE conference on computer vision and pattern recognition. 1692–1700.

- Saeed Anwar, Salman Khan, and Nick Barnes. 2020. A deep journey into super-resolution: A survey. ACM Computing Surveys (CSUR) 53, 3 (2020), 1–34.

- Andrea Asperti and Valerio Tonelli. 2023. Comparing the latent space of generative models. Neural Computing and Applications 35, 4 (2023), 3155–3172.

- Harold C Burger, Christian J Schuler, and Stefan Harmeling. 2012. Image denoising: Can plain neural networks compete with BM3D? In 2012 IEEE conference on computer vision and pattern recognition. IEEE, 2392–2399.

- Yunjin Chen and Thomas Pock. 2016. Trainable nonlinear reaction diffusion: A flexible framework for fast and effective image restoration. IEEE transactions on pattern analysis and machine intelligence 39, 6 (2016), 1256–1272.

- Shen Cheng, Yuzhi Wang, Haibin Huang, Donghao Liu, Haoqiang Fan, and Shuaicheng Liu. 2021. Nbnet: Noise basis learning for image denoising with subspace projection. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 4896–4906.

- Xuan Ding, Hongchao Fan, and Jianya Gong. 2021. Towards generating network of bikeways from Mapillary data. Computers, Environment and Urban Systems 88 (2021), 101632.

- Xiaohan Ding, Xiangyu Zhang, Ningning Ma, Jungong Han, Guiguang Ding, and Jian Sun. 2021. Repvgg: Making vgg-style convnets great again. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 13733–13742.

- Chao Dong, Chen Change Loy, Kaiming He, and Xiaoou Tang. 2016. Image super-resolution using deep convolutional networks. IEEE transactions on pattern analysis and machine intelligence 38, 2 (2016), 295–307.

- Alexey Dosovitskiy, Lucas Beyer, Alexander Kolesnikov, Dirk Weissenborn, Xiaohua Zhai, Thomas Unterthiner, Mostafa Dehghani, Matthias Minderer, Georg Heigold, Sylvain Gelly, et al. 2020. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv preprint arXiv:2010.11929 (2020).

- Alexey Dosovitskiy, Thomas Brox, et al. 2015. Inverting convolutional networks with convolutional networks. arXiv preprint arXiv:1506.02753 (2015).

- Daniel Glasner, Shai Bagon, and Michal Irani. 2009. Super-resolution from a single image. In 2009 IEEE 12th international conference on computer vision. IEEE, 349–356.

- Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. 2020. Generative adversarial networks. Commun. ACM 63, 11 (2020), 139–144.

- Shi Guo, Zifei Yan, Kai Zhang, Wangmeng Zuo, and Lei Zhang. 2019. Toward convolutional blind denoising of real photographs. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 1712–1722.

- Xinhui Kang, Shin’ya Nagasawa, Yixiang Wu, and Xingfu Xiong. 2023. Emotional design of bamboo chair based on deep convolution neural network and deep convolution generative adversarial network. Journal of Intelligent & Fuzzy Systems Preprint (2023), 1–13.

- Farhan Khawar, Leonard Poon, and Nevin L Zhang. 2020. Learning the structure of auto-encoding recommenders. In Proceedings of The Web Conference 2020. 519–529.

- Alex Krizhevsky, Ilya Sutskever, and Geoffrey E Hinton. 2012. Imagenet classification with deep convolutional neural networks. Advances in neural information processing systems 25 (2012).

- Wei-Sheng Lai, Jia-Bin Huang, Narendra Ahuja, and Ming-Hsuan Yang. 2017. Deep laplacian pyramid networks for fast and accurate super-resolution. In Proceedings of the IEEE conference on computer vision and pattern recognition. 624–632.

- Christian Ledig, Lucas Theis, Ferenc Huszár, Jose Caballero, Andrew Cunningham, Alejandro Acosta, Andrew Aitken, Alykhan Tejani, Johannes Totz, Zehan Wang, et al. 2017. Photo-realistic single image super-resolution using a generative adversarial network. In CVPR. 4681–4690.

- Bee Lim, Sanghyun Son, Heewon Kim, Seungjun Nah, and Kyoung Mu Lee. 2017. Enhanced deep residual networks for single image super-resolution. In CVPRW. 136–144.

- Yang Liu, Zhenyue Qin, Saeed Anwar, Pan Ji, Dongwoo Kim, Sabrina Caldwell, and Tom Gedeon. 2021. Invertible denoising network: A light solution for real noise removal. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 13365–13374.

- Aravindh Mahendran and Andrea Vedaldi. 2015. Understanding deep image representations by inverting them. In Proceedings of the IEEE conference on computer vision and pattern recognition. 5188–5196.

- Xiaojiao Mao, Chunhua Shen, and Yu-Bin Yang. 2016. Image restoration using very deep convolutional encoder-decoder networks with symmetric skip connections. Advances in neural information processing systems 29 (2016).

- Georg Petschnigg, Richard Szeliski, Maneesh Agrawala, Michael Cohen, Hugues Hoppe, and Kentaro Toyama. 2004. Digital photography with flash and no-flash image pairs. ACM transactions on graphics (TOG) 23, 3 (2004), 664–672.

- Assaf Shocher, Nadav Cohen, and Michal Irani. 2018. “Zero-shot” super-resolution using deep internal learning. In Proceedings of the IEEE conference on computer vision and pattern recognition. 3118–3126.

- Ying Tai, Jian Yang, Xiaoming Liu, and Chunyan Xu. 2017. Memnet: A persistent memory network for image restoration. In Proceedings of the IEEE international conference on computer vision. 4539–4547.

- Dmitry Ulyanov, Andrea Vedaldi, and Victor Lempitsky. 2018. Deep image prior. In Proceedings of the IEEE conference on computer vision and pattern recognition. 9446–9454.

- Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. Advances in neural information processing systems 30 (2017).

- Shiping Wen, Weiwei Liu, Yin Yang, Tingwen Huang, and Zhigang Zeng. 2018. Generating realistic videos from keyframes with concatenated GANs. IEEE Transactions on Circuits and Systems for Video Technology 29, 8 (2018), 2337–2348.

- Bichen Wu, Xiaoliang Dai, Peizhao Zhang, Yanghan Wang, Fei Sun, Yiming Wu, Yuandong Tian, Peter Vajda, Yangqing Jia, and Kurt Keutzer. 2019. Fbnet: Hardware-aware efficient convnet design via differentiable neural architecture search. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 10734–10742.

- Bin Xia, Yucheng Hang, Yapeng Tian, Wenming Yang, Qingmin Liao, and Jie Zhou. 2022. Efficient Non-Local Contrastive Attention for Image Super-Resolution. AAAI (2022).

- Syed Waqas Zamir, Aditya Arora, Salman Khan, Munawar Hayat, Fahad Shahbaz Khan, and Ming-Hsuan Yang. 2022. Restormer: Efficient transformer for high-resolution image restoration. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 5728–5739.

- Syed Waqas Zamir, Aditya Arora, Salman Khan, Munawar Hayat, Fahad Shahbaz Khan, Ming-Hsuan Yang, and Ling Shao. 2020. Learning enriched features for real image restoration and enhancement. In Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part XXV 16. Springer, 492–511.

- Syed Waqas Zamir, Aditya Arora, Salman Khan, Munawar Hayat, Fahad Shahbaz Khan, Ming-Hsuan Yang, and Ling Shao. 2021. Multi-stage progressive image restoration. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 14821–14831.

- Kai Zhang, Wangmeng Zuo, Yunjin Chen, Deyu Meng, and Lei Zhang. 2017. Beyond a gaussian denoiser: Residual learning of deep cnn for image denoising. IEEE transactions on image processing 26, 7 (2017), 3142–3155.

- Yulun Zhang, Kunpeng Li, Kai Li, Lichen Wang, Bineng Zhong, and Yun Fu. 2018. Image super-resolution using very deep residual channel attention networks. In ECCV. 286–301.

- Yulun Zhang, Yapeng Tian, Yu Kong, Bineng Zhong, and Yun Fu. 2018. Residual dense network for image super-resolution. In CVPR. 2472–2481.

- Yulun Zhang, Yapeng Tian, Yu Kong, Bineng Zhong, and Yun Fu. 2020. Residual dense network for image restoration. IEEE Transactions on Pattern Analysis and Machine Intelligence 43, 7 (2020), 2480–2495.

- Yuqian Zhou, Jianbo Jiao, Haibin Huang, Yang Wang, Jue Wang, Honghui Shi, and Thomas Huang. 2020. When AWGN-based denoiser meets real noises. In Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 34. 13074–13081.

| Method | Baboon | Barbara | Bridge | Coastguard | Comic | Face | Flowers | Foreman | Lenna | Man | Monarch | Pepper | Ppt3 | Zebra | Avg |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| No prior | 22.24 | 24.89 | 23.94 | 24.62 | 21.06 | 29.99 | 23.75 | 29.01 | 28.23 | 24.84 | 25.76 | 28.71 | 20.26 | 21.69 | 24.93 |
| Bicubic | 22.44 | 24.15 | 24.47 | 25.53 | 21.59 | 31.34 | 25.33 | 29.45 | 29.84 | 25.70 | 27.45 | 30.63 | 21.78 | 24.01 | 26.05 |
| TV prior [22] | 22.34 | 24.78 | 24.46 | 25.78 | 21.95 | 31.34 | 25.91 | 30.63 | 29.76 | 25.94 | 28.46 | 31.32 | 22.75 | 24.52 | 26.42 |
| Glasner et al. [12] | 22.44 | 25.38 | 24.73 | 25.38 | 21.98 | 31.09 | 25.54 | 30.40 | 30.48 | 26.33 | 28.22 | 32.02 | 22.16 | 24.34 | 26.46 |
| DIP | 22.29 | 25.53 | 24.38 | 25.81 | 22.18 | 31.02 | 26.14 | 31.66 | 30.83 | 26.09 | 29.98 | 32.08 | 24.38 | 25.71 | 27.00 |
| Ours | 22.31 | 25.63 | 24.45 | 25.94 | 22.29 | 31.17 | 26.28 | 31.73 | 30.99 | 26.14 | 30.12 | 32.21 | 24.43 | 25.85 | 27.11 |
| SRResNet-MSE [19] | 23.00 | 26.08 | 25.52 | 26.31 | 23.44 | 32.71 | 28.13 | 33.80 | 32.42 | 27.43 | 32.82 | 34.28 | 26.56 | 26.95 | 28.53 |
| LapSRN [18] | 22.83 | 25.69 | 25.36 | 26.21 | 22.90 | 32.62 | 27.54 | 33.59 | 31.98 | 27.27 | 31.62 | 33.88 | 25.36 | 26.98 | 28.13 |

| Method | Baboon | Barbara | Bridge | Coastguard | Comic | Face | Flowers | Foreman | Lenna | Man | Monarch | Pepper | Ppt3 | Zebra | Avg |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| No prior | 21.09 | 23.04 | 21.78 | 23.63 | 18.65 | 27.84 | 21.05 | 25.62 | 25.42 | 22.54 | 22.91 | 25.34 | 18.15 | 18.85 | 22.56 |
| Bicubic | 21.28 | 23.44 | 22.24 | 23.65 | 19.25 | 28.79 | 22.06 | 25.37 | 26.27 | 23.06 | 23.18 | 26.55 | 18.62 | 19.59 | 23.09 |
| TV prior [22] | 21.30 | 23.72 | 22.30 | 23.82 | 19.50 | 28.84 | 22.50 | 26.07 | 26.74 | 23.53 | 23.71 | 27.56 | 19.34 | 19.89 | 23.48 |
| SelfExSR [25] | 21.37 | 23.90 | 22.28 | 24.17 | 19.79 | 29.48 | 22.93 | 27.01 | 27.72 | 23.83 | 24.02 | 28.63 | 20.09 | 20.25 | 23.96 |
| DIP | 21.38 | 23.94 | 22.20 | 24.21 | 19.86 | 29.52 | 22.86 | 27.87 | 27.93 | 23.57 | 24.86 | 29.18 | 20.12 | 20.62 | 24.15 |
| Ours | 21.49 | 24.07 | 22.31 | 24.42 | 19.97 | 29.71 | 22.95 | 27.94 | 28.06 | 23.75 | 24.98 | 29.31 | 20.15 | 20.71 | 24.37 |
| LapSRN [18] | 21.51 | 24.21 | 22.77 | 24.10 | 20.06 | 29.85 | 23.31 | 28.13 | 28.22 | 24.20 | 24.97 | 29.22 | 20.13 | 20.28 | 24.35 |

| Method | Baby | Bird | Butterfly | Head | Woman | Avg |
|---|---|---|---|---|---|---|
| No prior | 30.16 | 27.67 | 19.82 | 29.98 | 25.18 | 26.56 |
| Bicubic | 31.78 | 30.20 | 22.13 | 31.34 | 26.75 | 28.44 |
| TV prior [22] | 31.21 | 30.43 | 24.38 | 31.34 | 26.93 | 28.85 |
| SelfExSR [25] | 32.24 | 31.10 | 22.36 | 31.69 | 26.85 | 28.84 |
| DIP | 31.49 | 31.80 | 26.23 | 31.04 | 28.93 | 29.89 |
| Ours | 32.25 | 31.95 | 26.45 | 31.17 | 29.21 | 30.21 |
| LapSRN [18] | 33.55 | 33.76 | 27.28 | 32.62 | 30.72 | 31.58 |
| SRResNet-MSE [19] | 33.66 | 35.10 | 28.41 | 32.73 | 30.60 | 32.10 |
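
The Avg column can be read as a per-row summary of the five per-image scores. The following is a minimal sketch, assuming Avg is the plain arithmetic mean of a row rounded to two decimals; the source does not state this. The helper name `row_average` is hypothetical, and the input values are copied from the proposed method's row of the table directly above (Baby, Bird, Butterfly, Head, Woman).

```python
from statistics import mean
from typing import Iterable

# Per-image scores copied from the proposed method's row in the table above.
ours_scores = {
    "Baby": 32.25,
    "Bird": 31.95,
    "Butterfly": 26.45,
    "Head": 31.17,
    "Woman": 29.21,
}


def row_average(scores: Iterable[float]) -> float:
    """Arithmetic mean of one table row, rounded to two decimals.

    Assumption: the Avg column is an unweighted mean over images.
    """
    return round(mean(scores), 2)


print(row_average(ours_scores.values()))  # 30.21, the Avg entry for that row
```

The same helper can be applied to any other row as a quick consistency check on a reported average.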

| Method | Baby | Bird | Butterfly | Head | Woman | Avg |
|---|---|---|---|---|---|---|
| No prior | 26.28 | 24.03 | 17.64 | 27.94 | 21.37 | 23.45 |
| Bicubic | 27.28 | 25.28 | 17.74 | 28.82 | 22.74 | 24.37 |
| TV prior [22] | 27.93 | 25.82 | 18.40 | 28.87 | 23.36 | 24.87 |
| SelfExSR [25] | 28.45 | 26.48 | 18.80 | 29.36 | 24.05 | 25.42 |
| DIP | 28.28 | 27.09 | 20.02 | 29.55 | 24.50 | 25.88 |
| Ours | 28.41 | 27.22 | 20.13 | 29.71 | 24.67 | 26.05 |
| LapSRN [18] | 28.88 | 27.10 | 19.97 | 29.76 | 24.79 | 26.10 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).