1.10. Justification Against Alternatives

Logistic Regression: As a linear model, it cannot capture complex feature interactions without manual feature engineering.

Random Forest / XGBoost: Although effective for tabular data, they lack native support for continuous embedding fusion.

Graph Neural Networks (GNNs): While suitable for code graphs, they introduce high computational overhead and are less straightforward to integrate with dynamic and dependency features.

Transformer-based Models: Require large-scale pretraining and are computationally intensive for real-time scoring in DevSecOps loops.

Thus, the chosen MLP provides a balanced approach for learning from unified feature vectors while remaining deployable in resource-constrained environments.

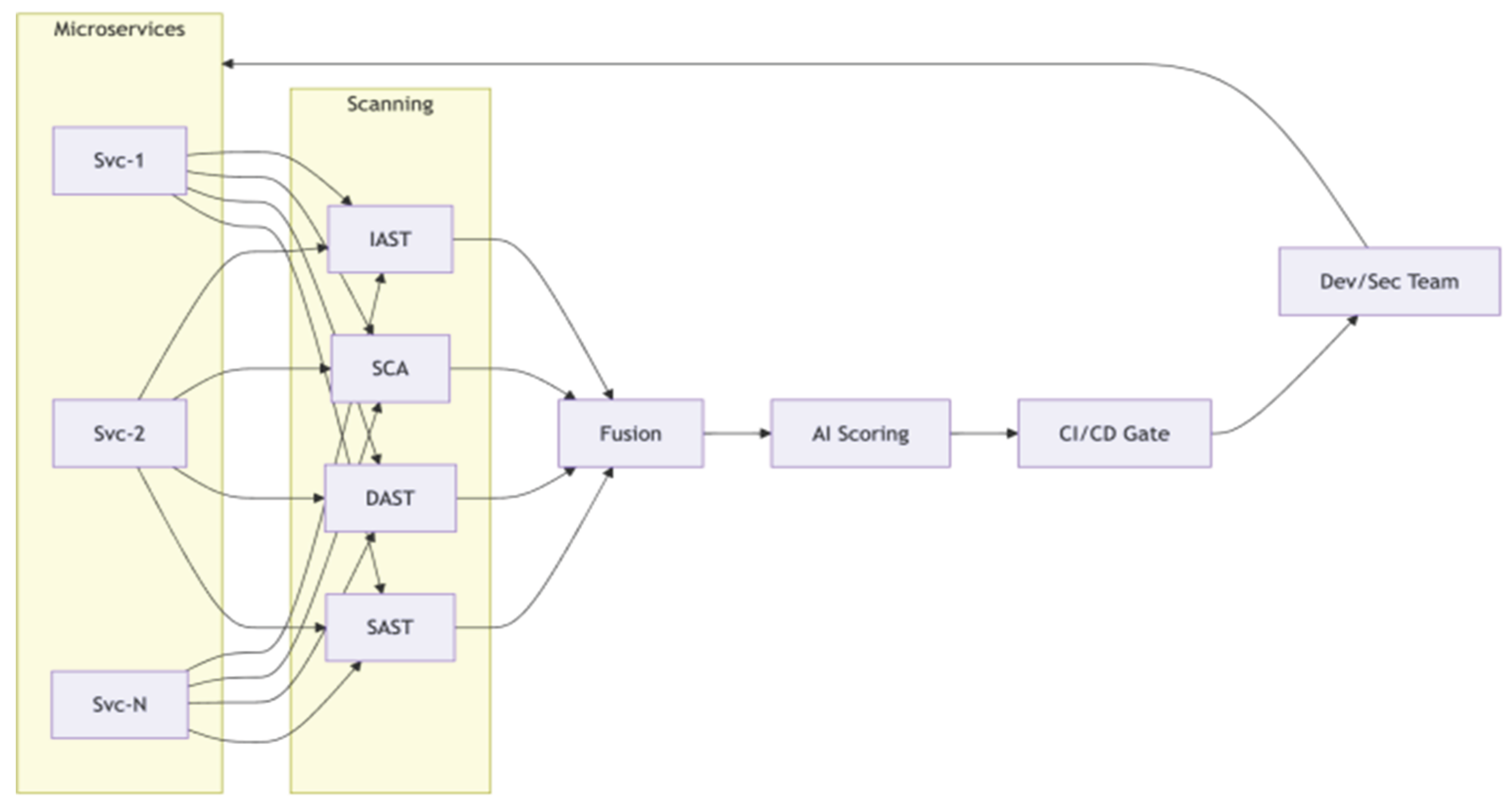

Microservice- and Path-Aware Risk Aggregation

In microservice environments, risk must be understood both at the individual vulnerability level and at coarser levels (service-level, communication path-level). This enables architectural decisions such as service isolation, rate limiting, or zero-trust enforcement.

Service-Level Risk

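For a given service $s_k$, its risk is aggregated from the scores of all vulnerabilities belonging to that service:

$$R(s_k) = \mathrm{Agg}\big(\{\, r_i : v_i \in s_k \,\}\big)$$

where $r_i$ is the risk score of vulnerability $v_i$ and $\mathrm{Agg}(\cdot)$ is an aggregation operator.

A simple weighted sum is:

$$R(s_k) = \sum_{v_i \in s_k} w_i \, r_i$$

where the weights $w_i$ may encode whether the vulnerability is externally exposed, publicly exploitable, or tied to safety-critical functionality.

Alternatively, the aggregation can focus on the top-$K$ risk items:

$$R(s_k) = \sum_{v_i \in \mathrm{Top}_K(s_k)} r_i$$

where $\mathrm{Top}_K(s_k)$ denotes the $K$ highest-scoring vulnerabilities in $s_k$.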
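The weighted-sum and top-k aggregations described above can be sketched in a few lines of Python. This is a minimal illustration, not the framework's implementation: the function names, the example scores, and the example weights are all hypothetical, and the top-k variant is assumed to sum (rather than average) the selected scores.

```python
from typing import Sequence


def weighted_sum_risk(scores: Sequence[float], weights: Sequence[float]) -> float:
    """Weighted sum of the vulnerability scores of one service."""
    if len(scores) != len(weights):
        raise ValueError("scores and weights must have the same length")
    return sum(w * r for w, r in zip(weights, scores))


def top_k_risk(scores: Sequence[float], k: int) -> float:
    """Sum of the k highest vulnerability scores of one service."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(sorted(scores, reverse=True)[:k])


# Hypothetical scores for three vulnerabilities in a single service.
scores = [0.9, 0.4, 0.7]
# Hypothetical weights: the first vulnerability is externally exposed,
# so it counts double (an assumption made only for this example).
weights = [2.0, 1.0, 1.0]

service_risk_weighted = weighted_sum_risk(scores, weights)
service_risk_top2 = top_k_risk(scores, k=2)
```

The weighted sum grows with the number of findings, whereas the top-k form is bounded by the k worst items; which behavior is preferable depends on whether many low-severity findings should be able to outweigh a single critical one.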

Path-Level Risk

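Many attacks in microservices follow cross-service communication paths. Consider a path:

$$P = (s_1 \rightarrow s_2 \rightarrow \dots \rightarrow s_m)$$

with inter-service edges representing calls or message flows. Let the edge risk between $s_j$ and $s_{j+1}$ be $e_{j,j+1}$. The total path risk can be approximated by:

$$R(P) = \sum_{j=1}^{m} R(s_j) + \sum_{j=1}^{m-1} e_{j,j+1}$$

where $R(s_j)$ is the service-level risk of $s_j$. Paths with $R(P)$ above a threshold can be flagged for segmentation, stricter authentication, or more intensive runtime monitoring.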
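As a rough sketch of path-level scoring and flagging, the snippet below assumes an additive form: the risk of a path is the sum of the service-level risks along it plus the risks of its consecutive edges. The service names, risk values, threshold, and function names are hypothetical and chosen only for illustration.

```python
from typing import Dict, List, Sequence, Tuple

Edge = Tuple[str, str]


def path_risk(
    path: Sequence[str],
    service_risk: Dict[str, float],
    edge_risk: Dict[Edge, float],
) -> float:
    """Additive path risk: service risks plus risks of consecutive edges."""
    node_total = sum(service_risk[s] for s in path)
    # Edges without a recorded risk contribute 0.0 (an assumption of this sketch).
    edge_total = sum(edge_risk.get((a, b), 0.0) for a, b in zip(path, path[1:]))
    return node_total + edge_total


def flag_paths(
    paths: Sequence[Sequence[str]],
    service_risk: Dict[str, float],
    edge_risk: Dict[Edge, float],
    threshold: float,
) -> List[Tuple[str, ...]]:
    """Return the paths whose risk exceeds the threshold."""
    return [
        tuple(p) for p in paths
        if path_risk(p, service_risk, edge_risk) > threshold
    ]


# Hypothetical service-level and edge-level risks.
service_risk = {"gateway": 1.5, "orders": 0.5, "payments": 2.0}
edge_risk = {("gateway", "orders"): 0.25, ("orders", "payments"): 0.75}

paths = [
    ["gateway", "orders", "payments"],
    ["gateway", "orders"],
]
flagged = flag_paths(paths, service_risk, edge_risk, threshold=3.0)
```

Under this additive assumption, longer paths accumulate more risk simply by containing more hops, so a deployment may prefer to normalize by path length or to use the maximum edge risk instead.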

CI/CD Integration and Privacy-Preserving IRX Analytics

From a DevSecOps perspective, the framework operates as a continuous risk-assessment component in CI/CD pipelines. Each pipeline run at time $t$ yields a set of vulnerabilities $V_t$ and their scores $\{\, r_i^{(t)} : v_i \in V_t \,\}$. We can track the average risk over time:

$$\bar{R}_t = \frac{1}{|V_t|} \sum_{v_i \in V_t} r_i^{(t)}$$

and the change in risk after a deployment:

$$\Delta R_t = \bar{R}_t - \bar{R}_{t-1}$$

Significant positive values of $\Delta R_t$ indicate that a new release has degraded the security posture, and can be used as automated gating criteria (e.g., fail the pipeline or require manual approval).
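The gating logic described above can be illustrated with a small Python sketch. The function names, the example scores, and the `max_increase` threshold are assumptions made for this example; a real pipeline would read the scores from its scanner output and choose the threshold empirically.

```python
from statistics import fmean
from typing import Sequence


def average_risk(scores: Sequence[float]) -> float:
    """Mean vulnerability score of one pipeline run (0.0 if there are no findings)."""
    return fmean(scores) if scores else 0.0


def risk_delta(previous: Sequence[float], current: Sequence[float]) -> float:
    """Change in average risk between two consecutive pipeline runs."""
    return average_risk(current) - average_risk(previous)


def gate(delta: float, max_increase: float = 0.1) -> str:
    """Return 'fail' when the average risk rose by more than max_increase."""
    return "fail" if delta > max_increase else "pass"


# Hypothetical scores from the previous and the current pipeline run.
previous_scores = [0.25, 0.5, 0.75]
current_scores = [0.5, 0.75, 1.0]

delta = risk_delta(previous_scores, current_scores)
decision = gate(delta)
```

Because the average can be diluted by many low-severity findings, a pipeline might combine this check with a cap on the single highest score.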

Privacy-Preserving Feature Transformation

When vulnerability analytics are outsourced to a cloud-based engine using IRX or similar packages, code confidentiality and data privacy become critical. Let $x$ be the original feature vector, potentially containing sensitive structural details. Before upload, a client-side anonymization / perturbation step produces:

$$\tilde{x} = T x + \eta$$

where:

$T$ is a transformation matrix that masks direct identifiers (e.g., file names, exact paths),

$\eta$ is random noise used to enforce differential privacy.

A standard Gaussian mechanism is:

$$\eta \sim \mathcal{N}(0, \sigma^2 I)$$

where $\sigma$ is calibrated to the sensitivity of the released features and the target privacy budget. This limits how accurately individual code artifacts can be reconstructed while preserving aggregate risk patterns.
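The client-side step (a masking transformation followed by additive Gaussian noise) can be sketched as follows. This is an illustration under stated assumptions: the function name, the example matrix, and the noise scale are hypothetical, the noise scale is not calibrated to any formal privacy budget, and Python's `random` module is not a cryptographically secure noise source.

```python
import random
from typing import List, Sequence


def perturb(
    x: Sequence[float],
    transform: Sequence[Sequence[float]],
    sigma: float,
    rng: random.Random,
) -> List[float]:
    """Apply the masking matrix to x, then add i.i.d. Gaussian noise (std sigma)."""
    if any(len(row) != len(x) for row in transform):
        raise ValueError("each row of transform must match the length of x")
    projected = [sum(t * v for t, v in zip(row, x)) for row in transform]
    return [p + rng.gauss(0.0, sigma) for p in projected]


# Hypothetical feature vector: the first entry stands in for a direct
# identifier (e.g., a hashed file path), the rest are risk features.
x = [7.0, 0.3, 0.8]

# Hypothetical masking matrix that drops the identifier and keeps the rest.
transform = [
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
]

rng = random.Random(42)  # seeded only to make the example repeatable
x_tilde = perturb(x, transform, sigma=0.05, rng=rng)

# With sigma = 0 the output is just the masked vector, which is a useful sanity check.
x_masked = perturb(x, transform, sigma=0.0, rng=rng)
```

A production deployment would derive the noise scale from the feature sensitivity and the chosen privacy parameters, and would draw noise from a vetted differential-privacy library rather than a general-purpose generator.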

Homomorphic Encryption for Cloud Scoring

For environments with strict confidentiality requirements, homomorphic encryption (HE) can be used so that the cloud processes encrypted feature vectors. Let $\mathrm{Enc}(\cdot)$ and $\mathrm{Dec}(\cdot)$ be encryption and decryption functions under an HE scheme. For a feature vector $x$, the cloud computes an approximate scoring function $\tilde{f}$ over ciphertexts:

$$c = \tilde{f}\big(\mathrm{Enc}(x)\big)$$

and the client recovers:

$$\hat{r} = \mathrm{Dec}(c) \approx f(x)$$

In practice, $\tilde{f}$ must be implemented using polynomial operations compatible with HE, which can be seen as a constrained approximation of the original neural network scoring function $f$. Although computational overhead increases, this approach offers strong confidentiality guarantees for organizations unwilling to expose raw vulnerability telemetry outside their perimeter.
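To make the polynomial constraint concrete, the sketch below replaces a sigmoid output activation with its third-order Taylor expansion around zero, $\sigma(z) \approx \tfrac{1}{2} + \tfrac{z}{4} - \tfrac{z^3}{48}$, which uses only additions and multiplications. It runs entirely in plaintext and performs no encryption; the single-layer scorer, its weights, and the function names are hypothetical stand-ins for the actual network.

```python
import math
from typing import Sequence


def sigmoid(z: float) -> float:
    """Exact logistic function (not directly computable under HE)."""
    return 1.0 / (1.0 + math.exp(-z))


def poly_sigmoid(z: float) -> float:
    """Third-order Taylor expansion of the sigmoid around z = 0."""
    return 0.5 + z / 4.0 - z ** 3 / 48.0


def linear(x: Sequence[float], w: Sequence[float], b: float) -> float:
    """Affine pre-activation: dot(w, x) + b."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def score_exact(x: Sequence[float], w: Sequence[float], b: float) -> float:
    return sigmoid(linear(x, w, b))


def score_poly(x: Sequence[float], w: Sequence[float], b: float) -> float:
    """Polynomial-only scorer: the kind of function an HE scheme could evaluate."""
    return poly_sigmoid(linear(x, w, b))


# Hypothetical feature vector and weights for a single output neuron.
x = [0.2, 0.6]
w = [0.5, -0.25]
b = 0.1

exact = score_exact(x, w, b)
approx = score_poly(x, w, b)
gap_small_input = abs(exact - approx)

# Far from zero the Taylor polynomial degrades sharply.
gap_large_input = abs(sigmoid(4.0) - poly_sigmoid(4.0))
```

The approximation is accurate only for small pre-activations, so inputs would need to be scaled into that range, or a polynomial fitted over a wider interval would have to be used instead.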