Submitted:

09 February 2026

Posted:

10 February 2026

Abstract

Keywords:

1. Introduction

2. Evolution of VLN

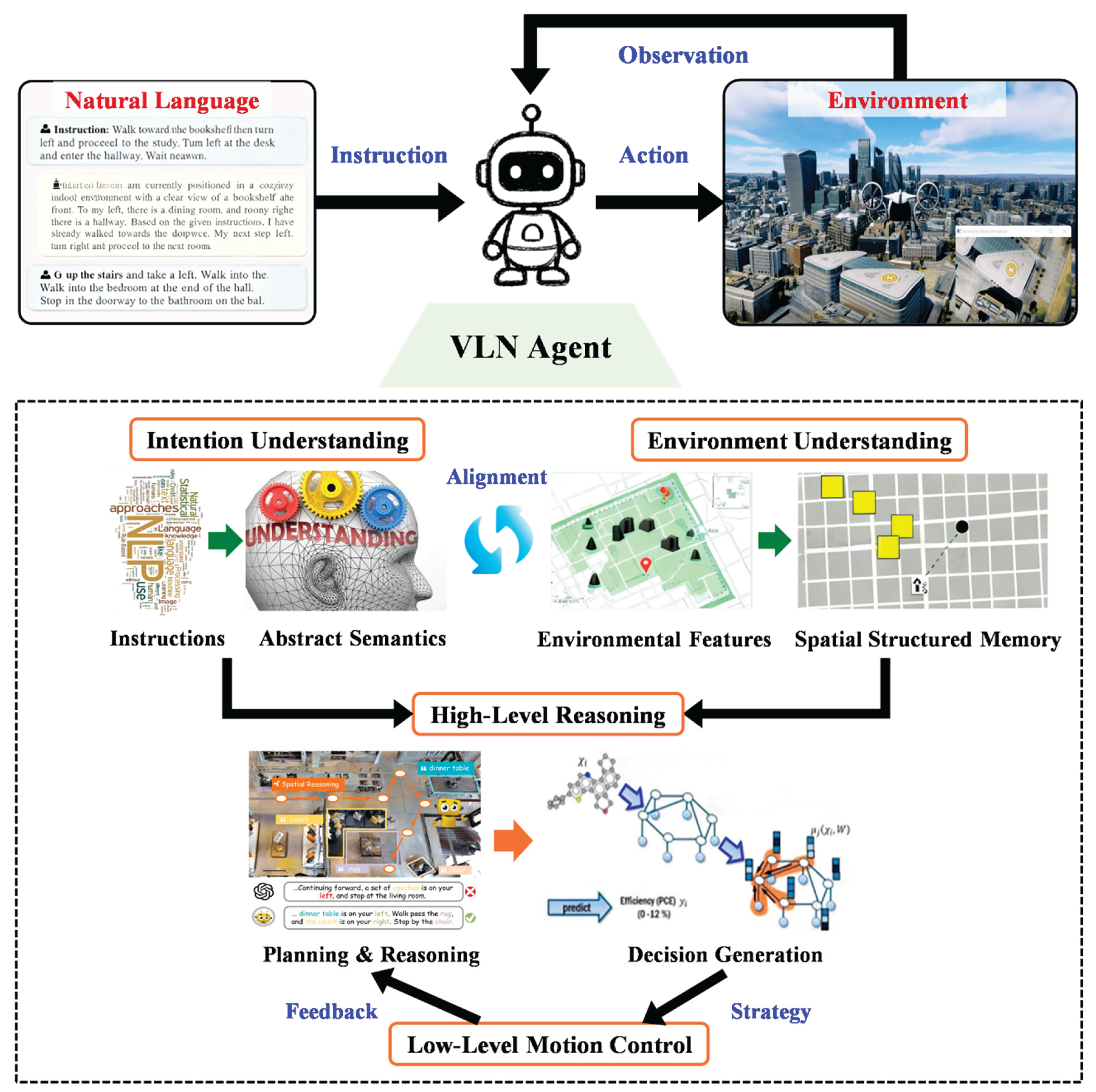

- Instruction understanding: parsing natural-language instructions into structured semantic representations to extract task goals, landmarks, and action sequences. Major challenges include linguistic variability, semantic ambiguity, and long-range dependency modeling;

- Environmental perception: recognizing objects, spatial layouts, and scene structures from visual inputs and aligning these with language semantics. Challenges include cross-scene generalization, robustness under dynamic conditions, and forming structured representations and long-term memory of the physical environment;

- Planning and decision-making: generating navigation paths by integrating linguistic intent with perceptual information. Key issues include efficient exploration, avoiding local optima, and improving decision stability;

- Motion control: executing high-level plans through low-level continuous control. Key challenges include maintaining accuracy, ensuring real-time responsiveness, and mitigating error accumulation during continuous execution.
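The four modules above can be read as stages of a single perception-to-action loop. The following is a minimal sketch of that data flow only, not an implementation from any surveyed system; every name in it (`VLNPipeline`, `ParsedInstruction`, `SceneState`, the stub callables) is hypothetical and introduced purely for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Waypoint = Tuple[float, float]       # hypothetical 2D waypoint (x, y)
MotorCommand = Tuple[float, float]   # hypothetical displacement command (dx, dy)


@dataclass
class ParsedInstruction:
    """Output of instruction understanding: goal plus ordered landmarks."""
    goal: str
    landmarks: List[str]


@dataclass
class SceneState:
    """Output of environmental perception: what is visible and where the agent is."""
    visible_objects: List[str]
    position: Waypoint


@dataclass
class VLNPipeline:
    """Chains the four modules; each stage is an injected callable (all hypothetical)."""
    understand: Callable[[str], ParsedInstruction]
    perceive: Callable[[dict], SceneState]
    plan: Callable[[ParsedInstruction, SceneState], List[Waypoint]]
    control: Callable[[Waypoint, SceneState], MotorCommand]

    def step(self, instruction: str, observation: dict) -> MotorCommand:
        parsed = self.understand(instruction)      # instruction understanding
        scene = self.perceive(observation)         # environmental perception
        waypoints = self.plan(parsed, scene)       # planning and decision-making
        # Fall back to the current position if the planner returns nothing.
        target = waypoints[0] if waypoints else scene.position
        return self.control(target, scene)         # motion control


# Usage with trivial stand-in stages, only to show how data moves between modules.
pipeline = VLNPipeline(
    understand=lambda text: ParsedInstruction(goal="kitchen", landmarks=["sofa"]),
    perceive=lambda obs: SceneState(obs["objects"], obs["position"]),
    plan=lambda instr, scene: [(1.0, 0.0)],
    control=lambda wp, scene: (wp[0] - scene.position[0], wp[1] - scene.position[1]),
)
command = pipeline.step(
    "Walk past the sofa to the kitchen.",
    {"objects": ["sofa"], "position": (0.0, 0.0)},
)
```

In a real system each callable would wrap a learned model or a classical controller; the sketch only fixes the interfaces between stages.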

- Enhanced language understanding and semantic generalization;

- Support for few-shot and zero-shot learning, significantly reducing annotation requirements;

- Cross-task reasoning capabilities, enabling unified handling of navigation, question answering, and object recognition;

- Improved adaptability and decision robustness in open environments.

3. Literature Review on LLM-Empowered VLN

3.1. Instruction Understanding

3.1.1. Semantic Encoding

3.1.2. Semantic Parsing

3.2. Environment Understanding

3.2.1. Feature Extraction

3.2.2. Structured Memory

3.2.3. Active Generative Prediction

3.3. High-Level Planning

3.3.1. Explicit Reasoning

3.3.2. Implicit Reasoning

3.3.3. Generative Planning

3.4. Low-Level Motion Control

3.4.1. Basic Control Strategies

3.4.2. Closed-Loop Control

3.4.3. Generative Control

3.5. Summary

4. Literature Review on Edge Deployment of LLM-based VLN Systems

4.1. Pre-Deployment Optimization

4.1.1. Quantization

4.1.2. Pruning

4.1.3. Knowledge Distillation

4.1.4. Low-Rank Decomposition

4.1.5. Other Methods

4.2. Runtime Optimization

4.2.1. Software-Level Optimization

4.2.2. Hardware-Level Optimization

4.2.3. Hardware-Software Co-Design

4.3. VLN Edge Deployment Cases

5. Implementation Requirements and Evaluation Protocols

5.1. Datasets

5.2. Simulation and Reconstructed Environments

5.3. Evaluation Metrics

5.3.1. Path-Level Metrics

- Success Rate (SR) [203]: the proportion of episodes in which the agent reaches the target within an acceptable error tolerance;

- Trajectory Length (TL) [204]: the average length of the agent’s executed trajectory, reflecting path efficiency;

- Success weighted by Path Length (SPL) [203]: a composite metric that accounts for both success and path optimality, and is widely considered the most representative performance indicator;

- Oracle Success Rate (OSR) [4]: an episode is counted as successful if the agent enters the goal region at any timestep, regardless of its final stopping action;

- Navigation Error (NE) [4]: the shortest-path distance between the agent’s final position and the goal location.
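The metrics listed above can be computed per episode and then averaged. The sketch below is illustrative rather than a reference implementation: it assumes 2D positions, uses straight-line (Euclidean) distance as a stand-in for the shortest-path distance a simulator would supply, and takes a 3 m success radius as an assumed default. The names `Episode`, `path_length`, and `evaluate` are hypothetical. SPL is computed as the mean over episodes of S_i · l_i / max(p_i, l_i), where S_i is the success indicator, l_i the shortest-path length, and p_i the executed path length.

```python
from dataclasses import dataclass
from math import dist
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float]


@dataclass
class Episode:
    path: List[Point]             # positions visited by the agent, in order
    goal: Point                   # goal location
    shortest_path_length: float   # start-to-goal shortest-path length (l_i)


def path_length(path: Sequence[Point]) -> float:
    """Sum of segment lengths along the executed trajectory."""
    return sum(dist(a, b) for a, b in zip(path, path[1:]))


def evaluate(episodes: Sequence[Episode], success_radius: float = 3.0) -> Dict[str, float]:
    """Average SR, OSR, SPL, NE, and TL over a non-empty set of episodes."""
    n = len(episodes)
    sr = osr = spl = ne = tl = 0.0
    for ep in episodes:
        executed = path_length(ep.path)
        # Assumption: Euclidean distance approximates shortest-path distance here.
        final_error = dist(ep.path[-1], ep.goal)
        success = final_error <= success_radius
        # Oracle success: the agent was within the radius at any timestep.
        oracle = any(dist(p, ep.goal) <= success_radius for p in ep.path)

        sr += float(success)
        osr += float(oracle)
        ne += final_error
        tl += executed
        if success:
            denom = max(executed, ep.shortest_path_length)
            spl += ep.shortest_path_length / denom if denom > 0 else 1.0
    return {"SR": sr / n, "OSR": osr / n, "SPL": spl / n, "NE": ne / n, "TL": tl / n}


# Usage: one direct success, and one episode that passes the goal and overshoots.
episodes = [
    Episode(path=[(0, 0), (3, 0), (6, 0)], goal=(6, 0), shortest_path_length=6.0),
    Episode(path=[(0, 0), (4, 0), (8, 0)], goal=(4, 0), shortest_path_length=4.0),
]
metrics = evaluate(episodes)
```

The second episode illustrates why OSR and SR diverge: the agent crosses the goal region but stops 4 m beyond it, so it counts toward OSR but not toward SR or SPL.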

5.3.2. Path-Level Metrics

6. Challenges and Future Trends

6.1. Major Challenges

6.1.1. Capability-Level Constraints

- Insufficient semantic reasoning and logical planning: although LLMs have achieved significant progress in language understanding and contextual modeling, their reasoning is still dominated by statistical correlations rather than structured logic or causal inference. As a result, they struggle with multi-step commands, nested conditions, and implicit constraints, limiting long-horizon planning and cross-scene generalization;

- Limited spatial understanding and lack of world models: existing VLMs mainly capture local spatial relations but fail to systematically model dynamic scenes, object interactions, and physical constraints. Without predictive world models, agents lack the anticipatory spatial cognition and physical reasoning that are essential for real-world navigation [206,207];

- Hallucination and robustness issues: LLMs and VLMs often produce hallucinations, such as incorrect semantic descriptions or object predictions, which can lead to invalid or unsafe navigation decisions. The lack of cross-modal consistency checking and external knowledge validation is a key cause of this problem [208];

- Real-time constraints and computational bottlenecks: high inference latency and energy consumption of LLMs create challenges for deployment on mobile robots and edge devices. Balancing accuracy, latency, and energy efficiency remains a major obstacle for embodied navigation systems.

6.1.2. Ecosystem-Level Constraints

- Insufficient dataset and task diversity: most existing VLN datasets (e.g., R2R, RxR, VLN-CE) are limited to static indoor scenes, and few provide multilingual, multimodal, or dynamic interaction data (the multilingual instructions of RxR being an exception), resulting in poor generalization to real-world environments;

- Incomplete evaluation systems and benchmark standards: current metrics such as SR, SPL, and nDTW focus primarily on geometric indicators and fail to capture semantic understanding, reasoning quality, and interaction efficiency, making it difficult to compare models across tasks and platforms;

- Challenges in Sim2Real transfer: simulation platforms (e.g., Habitat, AI2-THOR) simplify physical properties such as friction, inertia, and collisions, leading to significant performance degradation when models are deployed on real robots. The lack of standardized simulation–real-world integrated frameworks further limits engineering-level deployment [209].

6.2. Future Directions

6.2.1. Capability Enhancement

- Knowledge augmentation and semantic–logical reasoning integration: incorporating knowledge graphs, structured reasoning, and causal inference can enhance logical consistency and improve complex instruction understanding [206];

- Unified multimodal representation and world-model construction: integrating vision, language, touch, and other sensory modalities into a unified representation space, and combining it with predictive world models, can help agents transition from semantic alignment to physical understanding [207];

- Lightweight architectures and efficient inference optimization: techniques such as Mixture-of-Experts (MoE), LoRA, model quantization (GPTQ, QLoRA), and cloud–edge collaborative inference can reduce latency and energy consumption;

- Robustness and safety enhancement: multimodal consistency checking, external knowledge validation, and reinforcement learning from human feedback (RLHF) can mitigate hallucinations and improve system stability, safety, and interpretability [208].
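To make the efficiency techniques named above more concrete, the sketch below shows two pieces of arithmetic behind them. The first is plain symmetric round-to-nearest int8 quantization of a weight vector; this is a deliberately simple baseline and not the GPTQ or QLoRA procedure. The second counts the trainable parameters of a rank-r LoRA update for a d × k weight matrix, r(d + k), against the d · k parameters of full fine-tuning. The function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map [-max_abs, max_abs] onto [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from int8 codes and the shared scale."""
    return [q * scale for q in quantized]


def lora_param_count(d: int, k: int, r: int) -> int:
    """Trainable parameters of a rank-r update B (d x r) times A (r x k)."""
    return r * (d + k)


# Usage: quantize a small weight vector and compare LoRA with full fine-tuning.
weights = [0.5, -1.27, 0.0, 1.27]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

full_params = 4096 * 4096                       # one dense 4096 x 4096 matrix
lora_params = lora_param_count(4096, 4096, 8)   # rank-8 adapter for the same matrix
```

Each restored weight differs from the original by at most half a quantization step (`scale / 2`), which is the accuracy cost traded for a 4x reduction in storage relative to 32-bit floats.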

6.2.2. Ecosystem Development

- Construction of diverse and large-scale datasets: future datasets should include multilingual, multimodal, and interactive navigation tasks to better reflect real-world complexity;

- Development of unified and reproducible benchmarks: standardized evaluation frameworks can more systematically assess semantic understanding, reasoning depth, and execution quality;

- Integrated Sim2Real and Real2Sim development frameworks: realistic simulation of sensor noise, physical disturbances, and robot-specific constraints can enable closed-loop training–validation–deployment pipelines [209].

6.3. Comprehensive Outlook

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Nguyen, P.D.H.; Georgie, Y.K.; Kayhan, E.; Eppe, M.; Hafner, V.V.; Wermter, S. Sensorimotor Representation Learning for an “Active Self” in Robots: A Model Survey. KI - Künstl. Intell. 2021, 35, 9–35.

- Duan, J.; Yu, S.; Tan, H.L.; Zhu, H.; Tan, C. A Survey of Embodied AI: From Simulators to Research Tasks. IEEE Trans. Emerg. Top. Comput. Intell. 2022, 6, 230–244.

- Sun, F.; Chen, R.; Ji, T.; Luo, Y.; Zhou, H.; Liu, H. A Comprehensive Survey on Embodied Intelligence: Advancements, Challenges, and Future Perspectives. CAAI Artif. Intell. Res. 2024, 3, 9150042.

- Anderson, P.; Wu, Q.; Teney, D.; Bruce, J.; Johnson, M.; Sunderhauf, N.; Reid, I.; Gould, S.; Van Den Hengel, A. Vision-and-Language Navigation: Interpreting Visually-Grounded Navigation Instructions in Real Environments. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Salt Lake City, UT, USA, June 2018; pp. 3674–3683.

- Qi, Y.; Wu, Q.; Anderson, P.; Wang, X.; Wang, W.Y.; Shen, C.; Van Den Hengel, A. REVERIE: Remote Embodied Visual Referring Expression in Real Indoor Environments. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: Seattle, WA, USA, June 2020; pp. 9979–9988.

- Wang, H.; Chen, A.G.H.; Wu, M.; Dong, H. Find What You Want: Learning Demand-Conditioned Object Attribute Space for Demand-Driven Navigation. Adv. Neural Inf. Process. Syst. 2023, 36, 16353–16366.

- Zhao, W.X.; Zhou, K.; Li, J.; Tang, T.; Wang, X.; Hou, Y.; Min, Y.; Zhang, B.; Zhang, J.; Dong, Z.; et al. A Survey of Large Language Models. arXiv 2023.

- Wang, J.; Shi, E.; Yu, S.; Wu, Z.; Hu, H.; Ma, C.; Dai, H.; Yang, Q.; Kang, Y.; Wu, J.; et al. Prompt Engineering for Healthcare: Methodologies and Applications. Meta-Radiol. 2025, 100190.

- Radford, A.; Wu, J.; Child, R.; Luan, D.; Amodei, D.; Sutskever, I. Language Models Are Unsupervised Multitask Learners. Available online: https://cdn.openai.com/better-language-models/language_models_are_unsupervised_multitask_learners.pdf (accessed on 23 December 2025).

- Sanh, V.; Webson, A.; Raffel, C.; Bach, S.H.; Sutawika, L.; Alyafeai, Z.; Chaffin, A.; Stiegler, A.; Scao, T.L.; Raja, A.; et al. Multitask Prompted Training Enables Zero-Shot Task Generalization. In Proceedings of the International Conference on Learning Representations. OpenReview: Virtual Conference, 2022.

- Liu, S.; Zeng, Z.; Ren, T.; Li, F.; Zhang, H.; Yang, J.; Jiang, Q.; Li, C.; Yang, J.; Su, H.; et al. Grounding DINO: Marrying DINO with Grounded Pre-Training for Open-Set Object Detection. In Proceedings of the European Conference on Computer Vision; Springer Nature Switzerland: Cham, 2024; pp. 38–55.

- Kirillov, A.; Mintun, E.; Ravi, N.; Mao, H.; Rolland, C.; Gustafson, L.; Xiao, T.; Whitehead, S.; Berg, A.C.; Lo, W.-Y.; et al. Segment Anything. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE: Paris, France, October 1 2023; pp. 4015–4026.

- Kojima, T.; Gu, S.S.; Reid, M.; Matsuo, Y.; Iwasawa, Y. Large Language Models Are Zero-Shot Reasoners. Adv. Neural Inf. Process. Syst. 2022, 35, 22199–22213.

- Wang, J.; Liu, Z.; Zhao, L.; Wu, Z.; Ma, C.; Yu, S.; Dai, H.; Yang, Q.; Liu, Y.; Zhang, S.; et al. Review of Large Vision Models and Visual Prompt Engineering. Meta-Radiol. 2023, 1, 100047.

- Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Ichter, B.; Xia, F.; Chi, E.H.; Le, Q.V.; Zhou, D. Chain-of-Thought Prompting Elicits Reasoning in Large Language Models. Adv. Neural Inf. Process. Syst. 2022, 35, 24824–24837.

- Gao, J.; Li, Y.; Cao, Z.; Li, W. Interleaved-Modal Chain-of-Thought. In Proceedings of the Computer Vision and Pattern Recognition Conference; IEEE, 2025; pp. 19520–19529.

- Pan, H.; Huang, S.; Yang, J.; Mi, J.; Li, K.; You, X.; Tang, X.; Liang, P.; Yang, J.; Liu, Y.; et al. Recent Advances in Robot Navigation via Large Language Models: A Review. Available online: https://www.researchgate.net/publication/384537380_Recent_Advances_in_Robot_Navigation_via_Large_Language_Models_A_Review (accessed on 24 December 2025).

- Zhang, Y.; Ma, Z.; Li, J.; Qiao, Y.; Wang, Z.; Chai, J.; Wu, Q.; Bansal, M.; Kordjamshidi, P. Vision-and-Language Navigation Today and Tomorrow: A Survey in the Era of Foundation Models. arXiv 2024.

- Wu, W.; Chang, T.; Li, X. Vision-Language Navigation: A Survey and Taxonomy. Neural Comput. Appl. 2024, 36, 3291–3316.

- Moravec, H.; Elfes, A. High Resolution Maps from Wide Angle Sonar. In Proceedings of the IEEE International Conference on Robotics and Automation; IEEE: St. Louis, MO, USA, 1985; Vol. 2, pp. 116–121.

- Hart, P.; Nilsson, N.; Raphael, B. A Formal Basis for the Heuristic Determination of Minimum Cost Paths. IEEE Trans. Syst. Sci. Cybern. 1968, 4, 100–107.

- Durrant-Whyte, H.; Bailey, T. Simultaneous Localization and Mapping: Part I. IEEE Robot. Autom. Mag. 2006, 13, 99–110.

- Cadena, C.; Carlone, L.; Carrillo, H.; Latif, Y.; Scaramuzza, D.; Neira, J.; Reid, I.; Leonard, J.J. Past, Present, and Future of Simultaneous Localization And Mapping: Towards the Robust-Perception Age. IEEE Trans. Robot. 2016, 32, 1309–1332.

- Fried, D.; Hu, R.; Cirik, V.; Rohrbach, A.; Andreas, J.; Morency, L.-P.; Berg-Kirkpatrick, T.; Saenko, K.; Klein, D.; Darrell, T. Speaker-Follower Models for Vision-and-Language Navigation. Adv. Neural Inf. Process. Syst. 2018, 31.

- Wang, X.; Huang, Q.; Celikyilmaz, A.; Gao, J.; Shen, D.; Wang, Y.-F.; Wang, W.Y.; Zhang, L. Reinforced Cross-Modal Matching and Self-Supervised Imitation Learning for Vision-Language Navigation. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Long Beach, CA, USA, June 2019; pp. 6622–6631.

- Zhou, G.; Hong, Y.; Wu, Q. NavGPT: Explicit Reasoning in Vision-and-Language Navigation with Large Language Models. Proc. AAAI Conf. Artif. Intell. 2024, 38, 7641–7649.

- Chen, J.; Lin, B.; Xu, R.; Chai, Z.; Liang, X.; Wong, K.-Y. MapGPT: Map-Guided Prompting with Adaptive Path Planning for Vision-and-Language Navigation. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics; Association for Computational Linguistics: Bangkok, Thailand, 2024; pp. 9796–9810.

- Zheng, D.; Huang, S.; Zhao, L.; Zhong, Y.; Wang, L. Towards Learning a Generalist Model for Embodied Navigation. In Proceedings of the 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Seattle, WA, USA, 2024; pp. 13624–13634.

- Ma, Y.; Song, Z.; Zhuang, Y.; Hao, J.; King, I. A Survey on Vision-Language-Action Models for Embodied AI. arXiv 2024.

- Zheng, Y.; Chen, Y.; Qian, B.; Shi, X.; Shu, Y.; Chen, J. A Review on Edge Large Language Models: Design, Execution, and Applications. ACM Comput. Surv. 2025, 57, 1–35.

- Guan, T.; Yang, Y.; Cheng, H.; Lin, M.; Kim, R.; Madhivanan, R.; Sen, A.; Manocha, D. LOC-ZSON: Language-Driven Object-Centric Zero-Shot Object Retrieval and Navigation. arXiv 2024.

- Ye, J.; Lin, H.; Ou, L.; Chen, D.; Wang, Z.; Zhu, Q.; He, C.; Li, W. Where Am I? Cross-View Geo-Localization with Natural Language Descriptions. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE/CVF, 2025; pp. 5890–5900.

- Zhang, Y.; Yu, H.; Xiao, J.; Feroskhan, M. Grounded Vision-Language Navigation for UAVs with Open-Vocabulary Goal Understanding. arXiv 2025.

- Long, Y.; Cai, W.; Wang, H.; Zhan, G.; Dong, H. InstructNav: Zero-Shot System for Generic Instruction Navigation in Unexplored Environment. arXiv 2024.

- Long, Y.; Li, X.; Cai, W.; Dong, H. Discuss Before Moving: Visual Language Navigation via Multi-Expert Discussions. In Proceedings of the 2024 IEEE International Conference on Robotics and Automation; IEEE, 2024; pp. 17380–17387.

- Yin, H.; Wei, H.; Xu, X.; Guo, W.; Zhou, J.; Lu, J. GC-VLN: Instruction as Graph Constraints for Training-Free Vision-and-Language Navigation. arXiv 2025.

- Wang, Z.; Li, M.; Wu, M.; Moens, M.-F.; Tuytelaars, T. Instruction-Guided Path Planning with 3D Semantic Maps for Vision-Language Navigation. Neurocomputing 2025, 625, 129457.

- Pan, B.; Panda, R.; Jin, S.; Feris, R.; Oliva, A.; Isola, P.; Kim, Y. LangNav: Language as a Perceptual Representation for Navigation. In Proceedings of the Findings of the Association for Computational Linguistics: NAACL 2024; Association for Computational Linguistics: Mexico City, Mexico, 2024; pp. 950–974.

- Dorbala, V.S.; Mullen, J.F.; Manocha, D. Can an Embodied Agent Find Your “Cat-Shaped Mug”? LLM-Guided Exploration for Zero-Shot Object Navigation. IEEE Robot. Autom. Lett. 2024, 9, 4083–4090.

- Zhou, K.; Zheng, K.; Pryor, C.; Shen, Y.; Jin, H.; Getoor, L.; Wang, X.E. ESC: Exploration with Soft Commonsense Constraints for Zero-Shot Object Navigation. In Proceedings of the International Conference on Machine Learning; PMLR, 2023; pp. 42829–42842.

- Qiu, D.; Ma, W.; Pan, Z.; Xiong, H.; Liang, J. Open-Vocabulary Mobile Manipulation in Unseen Dynamic Environments with 3D Semantic Maps. arXiv 2024.

- Hu, Y.; Zhou, Y.; Zhu, Z.; Yang, X.; Zhang, H.; Bian, K.; Han, H. LLVM-Drone: A Synergistic Framework Integrating Large Language Models and Vision Models for Visual Tasks in Unmanned Aerial Vehicles. Knowl.-Based Syst. 2025, 327, 114190.

- Shah, D.; Osiński, B.; Levine, S. LM-Nav: Robotic Navigation with Large Pre-Trained Models of Language, Vision, and Action. In Proceedings of the Conference on Robot Learning; PMLR, 2023; pp. 492–504.

- Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning Transferable Visual Models From Natural Language Supervision. In Proceedings of the International Conference on Machine Learning; PMLR, 2021; pp. 8748–8763.

- Wu, R.; Zhang, Y.; Chen, J.; Huang, L.; Zhang, S.; Zhou, X.; Wang, L.; Liu, S. AeroDuo: Aerial Duo for UAV-Based Vision and Language Navigation. In Proceedings of the 33rd ACM International Conference on Multimedia; ACM, 2025; pp. 2576–2585.

- Zhang, J.; Wang, K.; Xu, R.; Zhou, G.; Hong, Y.; Fang, X.; Wu, Q.; Zhang, Z.; Wang, H. NaVid: Video-Based VLM Plans the Next Step for Vision-and-Language Navigation. arXiv 2024.

- Zhou, G.; Hong, Y.; Wang, Z.; Wang, X.E.; Wu, Q. NavGPT-2: Unleashing Navigational Reasoning Capability for Large Vision-Language Models. In Proceedings of the European Conference on Computer Vision; Springer Nature Switzerland, 2024; pp. 260–278.

- Liu, Y.; Yao, F.; Yue, Y.; Xu, G.; Sun, X.; Fu, K. NavAgent: Multi-Scale Urban Street View Fusion For UAV Embodied Vision-and-Language Navigation. arXiv 2024.

- Xu, Y.; Pan, Y.; Liu, Z.; Wang, H. FLAME: Learning to Navigate with Multimodal LLM in Urban Environments. In Proceedings of the AAAI Conference on Artificial Intelligence; 2025; Vol. 39, pp. 9005–9013.

- Zeng, T.; Peng, J.; Ye, H.; Chen, G.; Luo, S.; Zhang, H. EZREAL: Enhancing Zero-Shot Outdoor Robot Navigation toward Distant Targets under Varying Visibility. arXiv 2025.

- Li, J.; Bansal, M. Improving Vision-and-Language Navigation by Generating Future-View Image Semantics. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE/CVF, 2023; pp. 10803–10812.

- Zhao, G.; Li, G.; Chen, W.; Yu, Y. OVER-NAV: Elevating Iterative Vision-and-Language Navigation with Open-Vocabulary Detection and StructurEd Representation. In Proceedings of the 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Seattle, WA, USA, 2024; pp. 16296–16306.

- Zhang, W.; Gao, C.; Yu, S.; Peng, R.; Zhao, B.; Zhang, Q.; Cui, J.; Chen, X.; Li, Y. CityNavAgent: Aerial Vision-and-Language Navigation with Hierarchical Semantic Planning and Global Memory. arXiv 2025.

- Zeng, S.; Qi, D.; Chang, X.; Xiong, F.; Xie, S.; Wu, X.; Liang, S.; Xu, M.; Wei, X. JanusVLN: Decoupling Semantics and Spatiality with Dual Implicit Memory for Vision-Language Navigation. arXiv 2025.

- Zhang, S.; Qiao, Y.; Wang, Q.; Yan, Z.; Wu, Q.; Wei, Z.; Liu, J. COSMO: Combination of Selective Memorization for Low-Cost Vision-and-Language Navigation. arXiv 2025.

- Song, S.; Kodagoda, S.; Gunatilake, A.; Carmichael, M.G.; Thiyagarajan, K.; Martin, J. Guide-LLM: An Embodied LLM Agent and Text-Based Topological Map for Robotic Guidance of People with Visual Impairments. arXiv 2024.

- Wang, Z.; Lee, S.; Lee, G.H. Dynam3D: Dynamic Layered 3D Tokens Empower VLM for Vision-and-Language Navigation. arXiv 2025.

- Kong, P.; Liu, R.; Xie, Z.; Pang, Z. VLN-KHVR: Knowledge-And-History Aware Visual Representation for Continuous Vision-and-Language Navigation. In Proceedings of the 2025 IEEE International Conference on Robotics and Automation (ICRA); IEEE: Atlanta, GA, USA, 2025; pp. 5236–5243.

- Liu, S.; Zhang, H.; Qiao, Q.; Wu, Q.; Wang, P. VLN-ChEnv: Vision-Language Navigation in Changeable Environments. In Proceedings of the 33rd ACM International Conference on Multimedia; ACM: Dublin, Ireland, 2025; pp. 3798–3807.

- Wei, M.; Wan, C.; Yu, X.; Wang, T.; Yang, Y.; Mao, X.; Zhu, C.; Cai, W.; Wang, H.; Chen, Y.; et al. StreamVLN: Streaming Vision-and-Language Navigation via SlowFast Context Modeling. arXiv 2025.

- Yokoyama, N.; Ha, S.; Batra, D.; Wang, J.; Bucher, B. VLFM: Vision-Language Frontier Maps for Zero-Shot Semantic Navigation. In Proceedings of the 2024 IEEE International Conference on Robotics and Automation; IEEE, 2024; pp. 42–48.

- Huang, Y.; Wu, M.; Li, R.; Tu, Z. VISTA: Generative Visual Imagination for Vision-and-Language Navigation. arXiv 2025.

- Fan, S.; Liu, R.; Wang, W.; Yang, Y. Scene Map-Based Prompt Tuning for Navigation Instruction Generation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE/CVF, 2025; pp. 6898–6908.

- Zhao, X.; Cai, W.; Tang, L.; Wang, T. ImagineNav: Prompting Vision-Language Models as Embodied Navigator through Scene Imagination. arXiv 2024.

- Saanum, T.; Dayan, P.; Schulz, E. Predicting the Future with Simple World Models. arXiv 2024.

- Huang, S.; Shi, C.; Yang, J.; Dong, H.; Mi, J.; Li, K.; Zhang, J.; Ding, M.; Liang, P.; You, X.; et al. KiteRunner: Language-Driven Cooperative Local-Global Navigation Policy with UAV Mapping in Outdoor Environments. arXiv 2025.

- Lin, B.; Nie, Y.; Zai, K.L.; Wei, Z.; Han, M.; Xu, R.; Niu, M.; Han, J.; Zhang, H.; Lin, L.; et al. EvolveNav: Empowering LLM-Based Vision-Language Navigation via Self-Improving Embodied Reasoning. arXiv 2025.

- Liu, C.; Zhou, Z.; Zhang, J.; Zhang, M.; Huang, S.; Duan, H. MSNav: Zero-Shot Vision-and-Language Navigation with Dynamic Memory and LLM Spatial Reasoning. arXiv 2025.

- Lin, B.; Nie, Y.; Wei, Z.; Chen, J.; Ma, S.; Han, J.; Xu, H.; Chang, X.; Liang, X. NavCoT: Boosting LLM-Based Vision-and-Language Navigation via Learning Disentangled Reasoning. IEEE Trans. Pattern Anal. Mach. Intell. 2025.

- Ahn, M.; Brohan, A.; Brown, N.; Chebotar, Y.; Cortes, O.; David, B.; Finn, C.; Fu, C.; Gopalakrishnan, K.; Hausman, K.; et al. Do As I Can, Not As I Say: Grounding Language in Robotic Affordances. In Proceedings of the Conference on Robot Learning; PMLR, 2023; pp. 287–318.

- Khan, A.-M.; Rehman, I.U.; Saeed, N.; Sobnath, D.; Khan, F.; Khattak, M.A.K. Context-Aware Autonomous Drone Navigation Using Large Language Models (LLMs). In Proceedings of the AAAI Symposium Series; 2025; Vol. 6, pp. 102–107.

- Bhatt, N.P.; Yang, Y.; Siva, R.; Samineni, P.; Milan, D.; Wang, Z.; Topcu, U. VLN-Zero: Rapid Exploration and Cache-Enabled Neurosymbolic Vision-Language Planning for Zero-Shot Transfer in Robot Navigation. arXiv 2025.

- Zhou, X.; Xiao, T.; Liu, L.; Wang, Y.; Chen, M.; Meng, X.; Wang, X.; Feng, W.; Sui, W.; Su, Z. FSR-VLN: Fast and Slow Reasoning for Vision-Language Navigation with Hierarchical Multi-Modal Scene Graph. arXiv 2025.

- Qiao, Y.; Lyu, W.; Wang, H.; Wang, Z.; Li, Z.; Zhang, Y.; Tan, M.; Wu, Q. Open-Nav: Exploring Zero-Shot Vision-and-Language Navigation in Continuous Environment with Open-Source LLMs. In Proceedings of the 2025 IEEE International Conference on Robotics and Automation; IEEE, 2025; pp. 6710–6717.

- Kang, D.; Perincherry, A.; Coalson, Z.; Gabriel, A.; Lee, S.; Hong, S. Harnessing Input-Adaptive Inference for Efficient VLN. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE/CVF, 2025; pp. 8219–8229.

- Liu, R.; Wang, W.; Yang, Y. Vision-Language Navigation with Energy-Based Policy. Adv. Neural Inf. Process. Syst. 2024, 37, 108208–108230.

- Qi, Z.; Zhang, Z.; Yu, Y.; Wang, J.; Zhao, H. VLN-R1: Vision-Language Navigation via Reinforcement Fine-Tuning. arXiv 2025.

- Cai, W.; Huang, S.; Cheng, G.; Long, Y.; Gao, P.; Sun, C.; Dong, H. Bridging Zero-Shot Object Navigation and Foundation Models through Pixel-Guided Navigation Skill. In Proceedings of the 2024 IEEE International Conference on Robotics and Automation; IEEE, 2024; pp. 5228–5234.

- Liu, S.; Zhang, H.; Qi, Y.; Wang, P.; Zhang, Y.; Wu, Q. AerialVLN: Vision-and-Language Navigation for UAVs. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE/CVF, 2023; pp. 15384–15394.

- Li, Z.; Lu, Y.; Mu, Y.; Qiao, H. Cog-GA: A Large Language Models-Based Generative Agent for Vision-Language Navigation in Continuous Environments. arXiv 2024.

- Ha, D.; Schmidhuber, J. World Models. arXiv 2018.

- Wang, H.; Liang, W.; Gool, L.V.; Wang, W. DREAMWALKER: Mental Planning for Continuous Vision-Language Navigation. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE/CVF, 2023; pp. 10873–10883.

- Wang, Y.; Fang, Y.; Wang, T.; Feng, Y.; Tan, Y.; Zhang, S.; Liu, P.; Ji, Y.; Xu, R. DreamNav: A Trajectory-Based Imaginative Framework for Zero-Shot Vision-and-Language Navigation. arXiv 2025.

- Bar, A.; Zhou, G.; Tran, D.; Darrell, T.; LeCun, Y. Navigation World Models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE/CVF, 2025; pp. 15791–15801.

- Kåsene, V.H.; Lison, P. Following Route Instructions Using Large Vision-Language Models: A Comparison between Low-Level and Panoramic Action Spaces. In Proceedings of the 8th International Conference on Natural Language and Speech Processing; 2025; pp. 449–463.

- Rajvanshi, A.; Sikka, K.; Lin, X.; Lee, B.; Chiu, H.-P.; Velasquez, A. SayNav: Grounding Large Language Models for Dynamic Planning to Navigation in New Environments. In Proceedings of the International Conference on Automated Planning and Scheduling; 2024; Vol. 34, pp. 464–474.

- Chen, J.; Lin, B.; Liu, X.; Ma, L.; Liang, X.; Wong, K.-Y.K. Affordances-Oriented Planning Using Foundation Models for Continuous Vision-Language Navigation. In Proceedings of the AAAI Conference on Artificial Intelligence; 2025; Vol. 39, pp. 23568–23576.

- Xiao, J.; Sun, Y.; Shao, Y.; Gan, B.; Liu, R.; Wu, Y.; Guan, W.; Deng, X. UAV-ON: A Benchmark for Open-World Object Goal Navigation with Aerial Agents. In Proceedings of the 33rd ACM International Conference on Multimedia; ACM, 2025; pp. 13023–13029.

- Brohan, A.; Brown, N.; Carbajal, J.; Chebotar, Y.; Chen, X.; Choromanski, K.; Ding, T.; Driess, D.; Dubey, A.; Finn, C.; et al. RT-2: Vision-Language-Action Models Transfer Web Knowledge to Robotic Control. In Proceedings of the Conference on Robot Learning; PMLR, 2023; pp. 2165–2183.

- Kim, M.J.; Pertsch, K.; Karamcheti, S.; Xiao, T.; Balakrishna, A.; Nair, S.; Rafailov, R.; Foster, E.; Lam, G.; Sanketi, P.; et al. OpenVLA: An Open-Source Vision-Language-Action Model. arXiv 2024.

- Ding, H.; Xu, Z.; Fang, Y.; Wu, Y.; Chen, Z.; Shi, J.; Huo, J.; Zhang, Y.; Gao, Y. LaViRA: Language-Vision-Robot Actions Translation for Zero-Shot Vision Language Navigation in Continuous Environments. arXiv 2025.

- Lagemann, K.; Lagemann, C. Invariance-Based Learning of Latent Dynamics. In Proceedings of the International Conference on Learning Representations; 2023.

- Li, T.; Huai, T.; Li, Z.; Gao, Y.; Li, H.; Zheng, X. SkyVLN: Vision-and-Language Navigation and NMPC Control for UAVs in Urban Environments. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems; IEEE, 2025; pp. 17199–17206.

- Saxena, P.; Raghuvanshi, N.; Goveas, N. UAV-VLN: End-to-End Vision Language Guided Navigation for UAVs. In Proceedings of the 2025 European Conference on Mobile Robots; 2025; pp. 1–6.

- Payandeh, A.; Pokhrel, A.; Song, D.; Zampieri, M.; Xiao, X. Narrate2Nav: Real-Time Visual Navigation with Implicit Language Reasoning in Human-Centric Environments. arXiv 2025.

- Cai, Y.; He, X.; Wang, M.; Guo, H.; Yau, W.-Y.; Lv, C. CL-CoTNav: Closed-Loop Hierarchical Chain-of-Thought for Zero-Shot Object-Goal Navigation with Vision-Language Models. arXiv 2025.

- Zhang, X.; Tian, Y.; Lin, F.; Liu, Y.; Ma, J.; Szatmáry, K.S.; Wang, F.-Y. LogisticsVLN: Vision-Language Navigation For Low-Altitude Terminal Delivery Based on Agentic UAVs. arXiv 2025.

- Choutri, K.; Fadloun, S.; Khettabi, A.; Lagha, M.; Meshoul, S.; Fareh, R. Leveraging Large Language Models for Real-Time UAV Control. Electronics 2025, 14, 4312.

- Zhang, Z.; Chen, M.; Zhu, S.; Han, T.; Yu, Z. MMCNav: MLLM-Empowered Multi-Agent Collaboration for Outdoor Visual Language Navigation. In Proceedings of the 2025 International Conference on Multimedia Retrieval; ACM: Chicago, IL, USA, 2025; pp. 1767–1776.

- Shi, H.; Deng, X.; Li, Z.; Chen, G.; Wang, Y.; Nie, L. DAgger Diffusion Navigation: DAgger Boosted Diffusion Policy for Vision-Language Navigation. arXiv 2025.

- Cai, W.; Peng, J.; Yang, Y.; Zhang, Y.; Wei, M.; Wang, H.; Chen, Y.; Wang, T.; Pang, J. NavDP: Learning Sim-to-Real Navigation Diffusion Policy with Privileged Information Guidance. arXiv 2025.

- Hu, Z.; Tang, C.; Munje, M.J.; Zhu, Y.; Liu, A.; Liu, S.; Warnell, G.; Stone, P.; Biswas, J. ComposableNav: Instruction-Following Navigation in Dynamic Environments via Composable Diffusion. arXiv 2025.

- Nunes, D.; Amorim, R.; Ribeiro, P.; Coelho, A.; Campos, R. A Framework Leveraging Large Language Models for Autonomous UAV Control in Flying Networks. In Proceedings of the 2025 IEEE International Mediterranean Conference on Communications and Networking; IEEE: Nice, France, 2025; pp. 12–17.

- Liu, M.; Yurtsever, E.; Fossaert, J.; Zhou, X.; Zimmer, W.; Cui, Y.; Zagar, B.L.; Knoll, A.C. A Survey on Autonomous Driving Datasets: Statistics, Annotation Quality, and a Future Outlook. IEEE Trans. Intell. Veh. 2024.

- Zeng, T.; Tang, F.; Ji, D.; Si, B. NeuroBayesSLAM: Neurobiologically Inspired Bayesian Integration of Multisensory Information for Robot Navigation. Neural Netw. 2020, 126, 21–35.

- He, X.; Meng, X.; Yin, W.; Zhang, Y.; Mo, L.; An, X.; Yu, F.; Pan, S.; Liu, Y.; Liu, J.; et al. A Preliminary Exploration of the Differences and Conjunction of Traditional PNT and Brain-Inspired PNT. arXiv 2025.

- Stachenfeld, K.L.; Botvinick, M.M.; Gershman, S.J. The Hippocampus as a Predictive Map. Nat. Neurosci. 2017, 20, 1643–1653.

- Liu, Q.; Xin, H.; Liu, Z.; Wang, H. Integrating Neural Radiance Fields End-to-End for Cognitive Visuomotor Navigation. IEEE Trans. Pattern Anal. Mach. Intell. 2024, 46, 11200–11215.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention Is All You Need. Adv. Neural Inf. Process. Syst. 2017, 30.

- Devlin, J.; Chang, M.-W.; Lee, K.; Toutanova, K. BERT: Pre-Training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies; Association for Computational Linguistics: Minneapolis, MN, USA, 2019; pp. 4171–4186.

- Radford, A.; Narasimhan, K.; Salimans, T.; Sutskever, I. Improving Language Understanding by Generative Pre-Training. Available online: https://www.mikecaptain.com/resources/pdf/GPT-1.pdf (accessed on 24 December 2025).

- Brown, T.B.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language Models Are Few-Shot Learners. Adv. Neural Inf. Process. Syst. 2020, 33, 1877–1901. [Google Scholar]

- Ouyang, L.; Wu, J.; Jiang, X.; Almeida, D.; Wainwright, C.L.; Mishkin, P.; Zhang, C.; Agarwal, S.; Slama, K.; Ray, A.; et al. Training Language Models to Follow Instructions with Human Feedback. Adv. Neural Inf. Process. Syst. 2022, 35, 27730–27744. [Google Scholar]

- OpenAI; Achiam, J.; Adler, S.; Agarwal, S.; Ahmad, L.; Akkaya, I.; Aleman, F.L.; Almeida, D.; Altenschmidt, J.; Altman, S.; et al. GPT-4 Technical Report. Available online: https://arxiv.org/pdf/2303.08774 (accessed on 27 November 2025).

- Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.-A.; Lacroix, T.; Rozière, B.; Goyal, N.; Hambro, E.; Azhar, F.; et al. LLaMA: Open and Efficient Foundation Language Models. arXiv 2023. [Google Scholar] [CrossRef]

- Team, G.; Anil, R.; Borgeaud, S.; Alayrac, J.-B.; Yu, J.; Soricut, R.; Schalkwyk, J.; Dai, A.M.; Hauth, A.; Millican, K.; et al. Gemini: A Family of Highly Capable Multimodal Models. arXiv 2023. [Google Scholar] [CrossRef]

- Li, J.; Li, D.; Savarese, S.; Hoi, S. BLIP-2: Bootstrapping Language-Image Pre-Training with Frozen Image Encoders and Large Language Models. In Proceedings of the International Conference on Machine Learning; PMLR, 2023; pp. 19730–19742. [Google Scholar]

- Alayrac, J.-B.; Donahue, J.; Luc, P.; Miech, A.; Barr, I.; Hasson, Y.; Lenc, K.; Mensch, A.; Millican, K.; Reynolds, M.; et al. Flamingo: A Visual Language Model for Few-Shot Learning. Adv. Neural Inf. Process. Syst. 2022, 35, 23716–23736. [Google Scholar]

- Introducing GPT-5. Available online: https://openai.com/zh-Hans-CN/index/introducing-gpt-5/ (accessed on 28 November 2025).

- The Llama 4 Herd: The Beginning of a New Era of Natively Multimodal AI Innovation. Available online: https://ai.meta.com/blog/llama-4-multimodal-intelligence/?utm_source=llama-home-behemoth&utm_medium=llama-referral&utm_campaign=llama-utm&utm_offering=llama-behemoth-preview&utm_product=llama (accessed on 27 November 2025).

- Xu, J.; Guo, Z.; He, J.; Hu, H.; He, T.; Bai, S.; Chen, K.; Wang, J.; Fan, Y.; Dang, K.; et al. Qwen2.5-Omni Technical Report. arXiv 2025. [Google Scholar] [CrossRef]

- DeepSeek-AI; Liu, A.; Feng, B.; Xue, B.; Wang, B.; Wu, B.; Lu, C.; Zhao, C.; Deng, C.; Zhang, C.; et al. DeepSeek-V3 Technical Report. arXiv 2024. [Google Scholar] [CrossRef]

- DeepSeek-AI; Guo, D.; Yang, D.; Zhang, H.; Song, J.; Zhang, R.; Xu, R.; Zhu, Q.; Ma, S.; Wang, P.; et al. DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning. arXiv 2025. [Google Scholar] [CrossRef]

- Frantar, E.; Ashkboos, S.; Hoefler, T.; Alistarh, D. GPTQ: Accurate Post-Training Quantization for Generative Pre-Trained Transformers. arXiv 2022. [Google Scholar] [CrossRef]

- Lin, J.; Tang, J.; Tang, H.; Yang, S.; Chen, W.-M.; Wang, W.-C.; Xiao, G.; Dang, X.; Gan, C.; Han, S. AWQ: Activation-Aware Weight Quantization for LLM Compression and Acceleration. Mach. Learn. Syst. 2024, 6, 87–100. [Google Scholar] [CrossRef]

- Tan, F.; Lee, R.; Dudziak, Ł.; Hu, S.X.; Bhattacharya, S.; Hospedales, T.; Tzimiropoulos, G.; Martinez, B. MobileQuant: Mobile-Friendly Quantization for On-Device Language Models. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2024; Association for Computational Linguistics: Miami, FL, USA, 2024; pp. 9761–9771. [Google Scholar]

- Xiao, G.; Lin, J.; Seznec, M.; Wu, H.; Demouth, J.; Han, S. SmoothQuant: Accurate and Efficient Post-Training Quantization for Large Language Models. In Proceedings of the International Conference on Machine Learning; PMLR, 2023; pp. 38087–38099. [Google Scholar]

- Yao, Z.; Aminabadi, R.Y.; Zhang, M.; Wu, X.; Li, C.; He, Y. ZeroQuant: Efficient and Affordable Post-Training Quantization for Large-Scale Transformers. Adv. Neural Inf. Process. Syst. 2022, 35, 27168–27183. [Google Scholar]

- Xia, M.; Gao, T.; Zeng, Z.; Chen, D. Sheared LLaMA: Accelerating Language Model Pre-Training via Structured Pruning. In Proceedings of the International Conference on Learning Representations; Kim, B., Yue, Y., Chaudhuri, S., Fragkiadaki, K., Khan, M., Sun, Y., Eds.; 2024; Vol. 2024, pp. 5385–5409. [Google Scholar]

- Frantar, E.; Alistarh, D. SparseGPT: Massive Language Models Can Be Accurately Pruned in One-Shot. In Proceedings of the 40th International Conference on Machine Learning; PMLR, 2023; pp. 10323–10337. [Google Scholar]

- Sanh, V.; Wolf, T.; Rush, A. Movement Pruning: Adaptive Sparsity by Fine-Tuning. Adv. Neural Inf. Process. Syst. 2020, 33, 20378–20389. [Google Scholar]

- Gu, Y.; Dong, L.; Wei, F.; Huang, M. MiniLLM: Knowledge Distillation of Large Language Models. arXiv 2023. [Google Scholar] [CrossRef]

- Sun, Z.; Yu, H.; Song, X.; Liu, R.; Yang, Y.; Zhou, D. MobileBERT: A Compact Task-Agnostic BERT for Resource-Limited Devices. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics; Jurafsky, D., Chai, J., Schluter, N., Tetreault, J., Eds.; Association for Computational Linguistics: Online, 2020; pp. 2158–2170. [Google Scholar]

- Hsieh, C.-Y.; Li, C.-L.; Yeh, C.-K.; Nakhost, H.; Fujii, Y.; Ratner, A.; Krishna, R.; Lee, C.-Y.; Pfister, T. Distilling Step-by-Step! Outperforming Larger Language Models with Less Training Data and Smaller Model Sizes. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2023; Association for Computational Linguistics, 2023; pp. 8003–8017. [Google Scholar]

- Ho, N.; Schmid, L.; Yun, S.-Y. Large Language Models Are Reasoning Teachers. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics; Association for Computational Linguistics, 2023; pp. 14852–14882. [Google Scholar]

- Lan, Z.; Chen, M.; Goodman, S.; Gimpel, K.; Sharma, P.; Soricut, R. ALBERT: A Lite BERT for Self-Supervised Learning of Language Representations. arXiv 2019. [Google Scholar] [CrossRef]

- Hsu, Y.-C.; Hua, T.; Chang, S.; Lou, Q.; Shen, Y.; Jin, H. Language Model Compression with Weighted Low-Rank Factorization. arXiv 2022. [Google Scholar] [CrossRef]

- Gunasekar, S.; Zhang, Y.; Aneja, J.; Mendes, C.C.T.; Giorno, A.D.; Gopi, S.; Javaheripi, M.; Kauffmann, P.; de Rosa, G.; Saarikivi, O.; et al. Textbooks Are All You Need. arXiv 2023. [Google Scholar] [CrossRef]

- Su, J.; Lu, Y.; Pan, S.; Murtadha, A.; Wen, B.; Liu, Y. RoFormer: Enhanced Transformer with Rotary Position Embedding. Neurocomputing 2024, 568, 127063. [Google Scholar] [CrossRef]

- Ainslie, J.; Lee-Thorp, J.; de Jong, M.; Zemlyanskiy, Y.; Lebrón, F.; Sanghai, S. GQA: Training Generalized Multi-Query Transformer Models from Multi-Head Checkpoints. arXiv 2023. [Google Scholar] [CrossRef]

- Mehta, S.; Ghazvininejad, M.; Iyer, S.; Zettlemoyer, L.; Hajishirzi, H. DeLighT: Deep and Light-Weight Transformer. arXiv 2020. [Google Scholar] [CrossRef]

- Borzunov, A.; Ryabinin, M.; Chumachenko, A.; Baranchuk, D.; Dettmers, T.; Belkada, Y.; Samygin, P.; Raffel, C. Distributed Inference and Fine-Tuning of Large Language Models Over The Internet. Adv. Neural Inf. Process. Syst. 2023, 36, 12312–12331. [Google Scholar]

- Hu, C.; Li, B. When the Edge Meets Transformers: Distributed Inference with Transformer Models. In Proceedings of the 2024 IEEE 44th International Conference on Distributed Computing Systems (ICDCS); IEEE: Jersey City, NJ, USA, 2024; pp. 82–92. [Google Scholar]

- Sun, Z.; Suresh, A.T.; Ro, J.H.; Beirami, A.; Jain, H.; Yu, F. SpecTr: Fast Speculative Decoding via Optimal Transport. Adv. Neural Inf. Process. Syst. 2023, 36, 30222–30242. [Google Scholar]

- Wang, Y.; Chen, K.; Tan, H.; Guo, K. Tabi: An Efficient Multi-Level Inference System for Large Language Models. In Proceedings of the Eighteenth European Conference on Computer Systems; ACM: Rome, Italy, 2023; pp. 233–248. [Google Scholar]

- Goyal, S.; Choudhury, A.R.; Raje, S.M.; Chakaravarthy, V.T.; Sabharwal, Y.; Verma, A. PoWER-BERT: Accelerating BERT Inference via Progressive Word-Vector Elimination. In Proceedings of the International Conference on Machine Learning; PMLR, 2020; pp. 3690–3699. [Google Scholar]

- Jiang, H.; Wu, Q.; Lin, C.-Y.; Yang, Y.; Qiu, L. LLMLingua: Compressing Prompts for Accelerated Inference of Large Language Models. arXiv 2023. [Google Scholar] [CrossRef]

- Zhou, W.; Xu, C.; Ge, T.; McAuley, J.; Xu, K.; Wei, F. BERT Loses Patience: Fast and Robust Inference with Early Exit. Adv. Neural Inf. Process. Syst. 2020, 33, 18330–18341. [Google Scholar]

- Kong, J.; Wang, J.; Yu, L.-C.; Zhang, X. Accelerating Inference for Pretrained Language Models by Unified Multi-Perspective Early Exiting. In Proceedings of the International Conference on Computational Linguistics; International Committee on Computational Linguistics, 2022; pp. 4677–4686. [Google Scholar]

- Guo, L.; Choe, W.; Lin, F.X. STI: Turbocharge NLP Inference at the Edge via Elastic Pipelining. In Proceedings of the 28th ACM International Conference on Architectural Support for Programming Languages and Operating Systems, Volume 2; ACM: Vancouver, BC, Canada, 2023; pp. 791–803. [Google Scholar]

- Sheng, Y.; Zheng, L.; Yuan, B.; Li, Z.; Ryabinin, M.; Fu, D.Y.; Xie, Z.; Chen, B.; Barrett, C.; Gonzalez, J.E.; et al. FlexGen: High-Throughput Generative Inference of Large Language Models with a Single GPU. In Proceedings of the International Conference on Machine Learning; PMLR, 2023; pp. 31094–31116. [Google Scholar]

- Pytorch/Executorch. Available online: https://github.com/pytorch/executorch (accessed on 28 November 2025).

- Niu, W.; Guan, J.; Wang, Y.; Agrawal, G.; Ren, B. DNNFusion: Accelerating Deep Neural Networks Execution with Advanced Operator Fusion. ACM Trans. Archit. Code Optim. 2020, 17, 1–26. [Google Scholar] [CrossRef]

- Niu, W.; Sanim, M.M.R.; Shu, Z.; Guan, J.; Shen, X.; Yin, M.; Agrawal, G.; Ren, B. SmartMem: Layout Transformation Elimination and Adaptation for Efficient DNN Execution on Mobile. In Proceedings of the 29th ACM International Conference on Architectural Support for Programming Languages and Operating Systems; ACM, 2024; Vol. 3, pp. 916–931. [Google Scholar]

- Kwon, W.; Li, Z.; Zhuang, S.; Sheng, Y.; Zheng, L.; Yu, C.H.; Gonzalez, J.E.; Zhang, H.; Stoica, I. Efficient Memory Management for Large Language Model Serving with PagedAttention. In Proceedings of the Symposium on Operating Systems Principles; ACM, 2023; pp. 611–626. [Google Scholar]

- Shen, X.; Dong, P.; Lu, L.; Kong, Z.; Li, Z.; Lin, M.; Wu, C.; Wang, Y. Agile-Quant: Activation-Guided Quantization for Faster Inference of LLMs on the Edge. In Proceedings of the AAAI Conference on Artificial Intelligence; Association for the Advancement of Artificial Intelligence, 2024; Vol. 38, pp. 18944–18951. [Google Scholar]

- Intel Launches 13th Gen Intel Core Processor Family Alongside New Intel Unison Solution. Available online: https://www.intc.com/news-events/press-releases/detail/1578/intel-launches-13th-gen-intel-core-processor-family?utm_source=chatgpt.com (accessed on 28 November 2025).

- Apple Debuts iPhone 15 and iPhone 15 Plus. Available online: https://www.apple.com/newsroom/2023/09/apple-debuts-iphone-15-and-iphone-15-plus/ (accessed on 28 November 2025).

- How Google Tensor Helps Google Pixel Phones Do More. Available online: https://store.google.com/intl/en/ideas/articles/google-tensor-pixel-smartphone/ (accessed on 28 November 2025).

- Jetson Modules, Support, Ecosystem, and Lineup. Available online: https://developer.nvidia.com/embedded/jetson-modules (accessed on 28 November 2025).

- Yuan, J.; Yang, C.; Cai, D.; Wang, S.; Yuan, X.; Zhang, Z.; Li, X.; Zhang, D.; Mei, H.; Jia, X.; et al. Mobile Foundation Model as Firmware. In Proceedings of the 30th Annual International Conference on Mobile Computing and Networking; 2024; pp. 279–295. [Google Scholar]

- Zhang, X.; Nie, J.; Huang, Y.; Xie, G.; Xiong, Z.; Liu, J.; Niyato, D.; Shen, X. Beyond the Cloud: Edge Inference for Generative Large Language Models in Wireless Networks. IEEE Trans. Wirel. Commun. 2025, 24, 643–658. [Google Scholar] [CrossRef]

- Apple Introduces M2 Ultra. Available online: https://www.apple.com/newsroom/2023/06/apple-introduces-m2-ultra/ (accessed on 28 November 2025).

- Snapdragon 8 Gen 3 Mobile Platform. Available online: https://www.qualcomm.com/smartphones/products/8-series/snapdragon-8-gen-3-mobile-platform (accessed on 28 November 2025).

- Liu, Z.; Zhao, C.; Iandola, F.; Lai, C.; Tian, Y.; Fedorov, I.; Xiong, Y.; Chang, E.; Shi, Y.; Krishnamoorthi, R.; et al. MobileLLM: Optimizing Sub-Billion Parameter Language Models for On-Device Use Cases. In Proceedings of the International Conference on Machine Learning; PMLR, 2024. [Google Scholar]

- Wang, H.; Zhang, Z.; Han, S. SpAtten: Efficient Sparse Attention Architecture with Cascade Token and Head Pruning. In Proceedings of the 2021 IEEE International Symposium on High-Performance Computer Architecture; 2021; pp. 97–110. [Google Scholar]

- Lu, L.; Jin, Y.; Bi, H.; Luo, Z.; Li, P.; Wang, T.; Liang, Y. Sanger: A Co-Design Framework for Enabling Sparse Attention Using Reconfigurable Architecture. In Proceedings of the MICRO-54: 54th Annual IEEE/ACM International Symposium on Microarchitecture; ACM: Virtual Event, Greece, 18 October 2021; pp. 977–991. [Google Scholar]

- Zhou, M.; Xu, W.; Kang, J.; Rosing, T. TransPIM: A Memory-Based Acceleration via Software-Hardware Co-Design for Transformer. In Proceedings of the 2022 IEEE International Symposium on High-Performance Computer Architecture (HPCA); IEEE: Seoul, Republic of Korea, April 2022; pp. 1071–1085. [Google Scholar]

- Sridharan, S.; Stevens, J.R.; Roy, K.; Raghunathan, A. X-Former: In-Memory Acceleration of Transformers. IEEE Trans. Very Large Scale Integr. VLSI Syst. 2023, 31, 1223–1233. [Google Scholar] [CrossRef]

- Zadeh, A.H.; Edo, I.; Awad, O.M.; Moshovos, A. GOBO: Quantizing Attention-Based NLP Models for Low Latency and Energy Efficient Inference. In Proceedings of the 53rd Annual IEEE/ACM International Symposium on Microarchitecture; 2020; pp. 811–824. [Google Scholar]

- Zadeh, A.H.; Mahmoud, M.; Abdelhadi, A.; Moshovos, A. Mokey: Enabling Narrow Fixed-Point Inference for Out-of-the-Box Floating-Point Transformer Models. In Proceedings of the Annual International Symposium on Computer Architecture; ACM / IEEE, 2022; pp. 888–901. [Google Scholar]

- Tambe, T.; Yang, E.-Y.; Wan, Z.; Deng, Y.; Janapa Reddi, V.; Rush, A.; Brooks, D.; Wei, G.-Y. Algorithm-Hardware Co-Design of Adaptive Floating-Point Encodings for Resilient Deep Learning Inference. In Proceedings of the Design Automation Conference; IEEE: San Francisco, CA, USA, 2020; pp. 1–6. [Google Scholar]

- Guo, C.; Zhang, C.; Leng, J.; Liu, Z.; Yang, F.; Liu, Y.; Guo, M.; Zhu, Y. ANT: Exploiting Adaptive Numerical Data Type for Low-Bit Deep Neural Network Quantization. In Proceedings of the International Symposium on Microarchitecture; IEEE, 2022; pp. 1414–1433. [Google Scholar]

- Wen, J.; Zhu, Y.; Li, J.; Zhu, M.; Wu, K.; Xu, Z.; Liu, N.; Cheng, R.; Shen, C.; Peng, Y.; et al. TinyVLA: Towards Fast, Data-Efficient Vision-Language-Action Models for Robotic Manipulation. IEEE Robot. Autom. Lett. 2025. [Google Scholar] [CrossRef]

- Budzianowski, P.; Maa, W.; Freed, M.; Mo, J.; Xie, A.; Tipnis, V.; Bolte, B. EdgeVLA: Efficient Vision-Language-Action Models. arXiv 2025. [Google Scholar] [CrossRef]

- Williams, J.; Gupta, K.D.; George, R.; Sarkar, M. Lite VLA: Efficient Vision-Language-Action Control on CPU-Bound Edge Robots. arXiv 2025. [Google Scholar] [CrossRef]

- Gurunathan, T.S.; Raza, M.S.; Janakiraman, A.K.; Khan, A.; Pal, B.; Gangopadhyay, A. Edge LLMs for Real-Time Contextual Understanding with Ground Robots. In Proceedings of the AAAI Symposium Series; Association for the Advancement of Artificial Intelligence, 2025; Vol. 5, pp. 159–166. [Google Scholar]

- Chen, Q.; Gao, N.; Huang, S.; Low, J.; Chen, T.; Sun, J.; Schwager, M. GRaD-Nav++: Vision-Language Model Enabled Visual Drone Navigation with Gaussian Radiance Fields and Differentiable Dynamics. arXiv 2025. [Google Scholar] [CrossRef]

- Yang, Z.; Zheng, S.; Xie, T.; Xu, T.; Yu, B.; Wang, F.; Tang, J.; Liu, S.; Li, M. EfficientNav: Towards On-Device Object-Goal Navigation with Navigation Map Caching and Retrieval. arXiv 2025. [Google Scholar] [CrossRef]

- Adang, M.; Low, J.; Shorinwa, O.; Schwager, M. SINGER: An Onboard Generalist Vision-Language Navigation Policy for Drones. arXiv 2025. [Google Scholar] [CrossRef]

- Wang, S.; Zhou, D.; Xie, L.; Xu, C.; Yan, Y.; Yin, E. PanoGen++: Domain-Adapted Text-Guided Panoramic Environment Generation for Vision-and-Language Navigation. Neural Netw. 2025, 187, 107320. [Google Scholar] [CrossRef]

- Mohammadi, B.; Abbasnejad, E.; Qi, Y.; Wu, Q.; Hengel, A.V.D.; Shi, J.Q. Learning to Reason and Navigate: Parameter Efficient Action Planning with Large Language Models. arXiv 2025. [Google Scholar] [CrossRef]

- Qiao, Y.; Yu, Z.; Wu, Q. VLN-PETL: Parameter-Efficient Transfer Learning for Vision-and-Language Navigation. In Proceedings of the International Conference on Computer Vision; IEEE/CVF, 2023; pp. 15443–15452. [Google Scholar]

- Du, Y.; Fu, T.; Chen, Z.; Li, B.; Su, S.; Zhao, Z.; Wang, C. VL-Nav: Real-Time Vision-Language Navigation with Spatial Reasoning. arXiv 2025. [Google Scholar] [CrossRef]

- Zhang, Y.; Abdullah, A.; Koppal, S.J.; Islam, M.J. ClipRover: Zero-Shot Vision-Language Exploration and Target Discovery by Mobile Robots. arXiv 2025. [Google Scholar] [CrossRef]

- Wu, Y.; Wu, Y.; Gkioxari, G.; Tian, Y. Building Generalizable Agents with a Realistic and Rich 3D Environment. arXiv 2018. [Google Scholar] [CrossRef]

- Jain, V.; Magalhaes, G.; Ku, A.; Vaswani, A.; Ie, E.; Baldridge, J. Stay on the Path: Instruction Fidelity in Vision-and-Language Navigation. arXiv 2019. [Google Scholar] [CrossRef]

- Ku, A.; Anderson, P.; Patel, R.; Ie, E.; Baldridge, J. Room-Across-Room: Multilingual Vision-and-Language Navigation with Dense Spatiotemporal Grounding. arXiv 2020. [Google Scholar] [CrossRef]

- Thomason, J.; Murray, M.; Cakmak, M.; Zettlemoyer, L. Vision-and-Dialog Navigation. In Proceedings of the Conference on Robot Learning; PMLR, 2020; pp. 394–406. [Google Scholar]

- Krantz, J.; Wijmans, E.; Majumdar, A.; Batra, D.; Lee, S. Beyond the Nav-Graph: Vision-and-Language Navigation in Continuous Environments. In Proceedings of the European Conference on Computer Vision; Springer International Publishing, 2020; pp. 104–120. [Google Scholar]

- Shridhar, M.; Thomason, J.; Gordon, D.; Bisk, Y.; Han, W.; Mottaghi, R.; Zettlemoyer, L.; Fox, D. ALFRED: A Benchmark for Interpreting Grounded Instructions for Everyday Tasks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE / CVF, 2020; pp. 10740–10749. [Google Scholar]

- Zhu, F.; Liang, X.; Zhu, Y.; Chang, X.; Liang, X. SOON: Scenario Oriented Object Navigation with Graph-Based Exploration. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE / CVF, 2021; pp. 12689–12699. [Google Scholar]

- Ramakrishnan, S.K.; Gokaslan, A.; Wijmans, E.; Maksymets, O.; Clegg, A.; Turner, J.; Undersander, E.; Galuba, W.; Westbury, A.; Chang, A.X.; et al. Habitat-Matterport 3D Dataset (HM3D): 1000 Large-Scale 3D Environments for Embodied AI. arXiv 2021. [Google Scholar] [CrossRef]

- Yadav, K.; Ramrakhya, R.; Ramakrishnan, S.K.; Gervet, T.; Turner, J.; Gokaslan, A.; Maestre, N.; Chang, A.X.; Batra, D.; Savva, M.; et al. Habitat-Matterport 3D Semantics Dataset. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE / CVF, 2023; pp. 4927–4936. [Google Scholar]

- Vasudevan, A.B.; Dai, D.; Gool, L.V. Talk2Nav: Long-Range Vision-and-Language Navigation with Dual Attention and Spatial Memory. Int. J. Comput. Vis. 2021, 129, 246–266. [Google Scholar] [CrossRef]

- Chang, A.; Dai, A.; Funkhouser, T.; Halber, M.; Nießner, M.; Savva, M.; Song, S.; Zeng, A.; Zhang, Y. Matterport3D: Learning from RGB-D Data in Indoor Environments. arXiv 2017. [Google Scholar] [CrossRef]

- Xia, F.; Zamir, A.; He, Z.-Y.; Sax, A.; Malik, J.; Savarese, S. Gibson Env: Real-World Perception for Embodied Agents. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition; IEEE, 2018; pp. 9068–9079. [Google Scholar]

- Savva, M.; Kadian, A.; Maksymets, O.; Zhao, Y.; Wijmans, E.; Jain, B.; Straub, J.; Liu, J.; Koltun, V.; Malik, J.; et al. Habitat: A Platform for Embodied AI Research. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE / CVF, 2019; pp. 9339–9347. [Google Scholar]

- Kolve, E.; Mottaghi, R.; Han, W.; VanderBilt, E.; Weihs, L.; Herrasti, A.; Deitke, M.; Ehsani, K.; Gordon, D.; Zhu, Y.; et al. AI2-THOR: An Interactive 3D Environment for Visual AI. arXiv 2017. [Google Scholar] [CrossRef]

- Deitke, M.; Han, W.; Herrasti, A.; Kembhavi, A.; Kolve, E.; Mottaghi, R.; Salvador, J.; Schwenk, D.; VanderBilt, E.; Wallingford, M.; et al. RoboTHOR: An Open Simulation-to-Real Embodied AI Platform. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE / CVF, 2020; pp. 3164–3174. [Google Scholar]

- Jain, G.; Hindi, B.; Zhang, Z.; Srinivasula, K.; Xie, M.; Ghasemi, M.; Weiner, D.; Paris, S.A.; Xu, X.Y.T.; Malcolm, M.; et al. StreetNav: Leveraging Street Cameras to Support Precise Outdoor Navigation for Blind Pedestrians. In Proceedings of the 37th Annual ACM Symposium on User Interface Software and Technology; ACM, 2024; pp. 1–21. [Google Scholar]

- Shah, S.; Dey, D.; Lovett, C.; Kapoor, A. AirSim: High-Fidelity Visual and Physical Simulation for Autonomous Vehicles. In Proceedings of the Field and Service Robotics: Results of the 11th International Conference; Springer International Publishing, 2017; pp. 621–635. [Google Scholar]

- Anderson, P.; Chang, A.; Chaplot, D.S.; Dosovitskiy, A.; Gupta, S.; Koltun, V.; Kosecka, J.; Malik, J.; Mottaghi, R.; Savva, M.; et al. On Evaluation of Embodied Navigation Agents. arXiv 2018. [Google Scholar] [CrossRef]

- Edelkamp, S.; Schrödl, S. Heuristic Search: Theory and Applications; Morgan Kaufmann: Amsterdam; Boston, 2012; ISBN 978-0-12-372512-7. [Google Scholar]

- Ilharco, G.; Jain, V.; Ku, A.; Ie, E.; Baldridge, J. General Evaluation for Instruction Conditioned Navigation Using Dynamic Time Warping. In Proceedings of the NeurIPS Visually Grounded Interaction and Language Workshop; NeurIPS, 2019. [Google Scholar]

- Pearl, J. Probabilistic Reasoning in Intelligent Systems: Networks of Plausible Inference; 1st ed.; Elsevier Reference Monographs: s.l., 2014; ISBN 978-1-55860-479-7.

- Schrittwieser, J.; Antonoglou, I.; Hubert, T.; Simonyan, K.; Sifre, L.; Schmitt, S.; Guez, A.; Lockhart, E.; Hassabis, D.; Graepel, T.; et al. Mastering Atari, Go, Chess and Shogi by Planning with a Learned Model. Nature 2020, 588, 604–609. [Google Scholar] [CrossRef]

- Chen, X.; Ma, Z.; Zhang, X.; Xu, S.; Qian, S.; Yang, J.; Fouhey, D.F.; Chai, J. Multi-Object Hallucination in Vision Language Models. Adv. Neural Inf. Process. Syst. 2024, 37, 44393–44418. [Google Scholar]

- Wang, L.; Xia, X.; Zhao, H.; Wang, H.; Wang, T.; Chen, Y.; Liu, C.; Chen, Q.; Pang, J. Rethinking the Embodied Gap in Vision-and-Language Navigation: A Holistic Study of Physical and Visual Disparities. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE / CVF, 2025; pp. 9455–9465. [Google Scholar]

- Dong, X.; Zhao, H.; Gao, J.; Li, H.; Ma, X.; Zhou, Y.; Chen, F.; Liu, J. SE-VLN: A Self-Evolving Vision-Language Navigation Framework Based on Multimodal Large Language Models. arXiv 2025. [Google Scholar] [CrossRef]

- Hong, H.; Qiao, Y.; Wang, S.; Liu, J.; Wu, Q. General Scene Adaptation for Vision-and-Language Navigation. arXiv 2025. [Google Scholar] [CrossRef]

- Chen, S.; Wu, Z.; Zhang, K.; Li, C.; Zhang, B.; Ma, F.; Yu, F.R.; Li, Q. Exploring Embodied Multimodal Large Models: Development, Datasets, and Future Directions. Inf. Fusion 2025, 103198. [Google Scholar] [CrossRef]

| Category | Methods | Year | Contributions |
|---|---|---|---|
| Instruction Semantic Encoding | Speaker-Follower [24] | 2018 | Encodes instruction sequences with LSTM and aligns them with trajectories through data augmentation. |
| | RCM [25] | 2019 | Reinforces cross-modal semantic alignment using matching rewards to improve global language–vision consistency. |
| | DDN [6] | 2023 | Extracts attribute-level semantics via LLM and aligns them with CLIP embeddings to construct a demand-conditioned semantic space. |
| | LOC-ZSON [31] | 2024 | Builds object-centric semantic representations and uses LLM prompting to achieve zero-shot language–vision alignment in open-vocabulary settings. |
| | CrossText2Loc [32] | 2025 | Enhances cross-view geo-semantic alignment by extending CLIP with positional encoding, OCR grounding, and hallucination suppression mechanisms. |
| | VLFly [33] | 2025 | Integrates LLaMA-3 semantic encoding with CLIP-based visual grounding to support open-vocabulary goal understanding. |
| Instruction Semantic Parsing | InstructNav [34] | 2024 | Parses natural language instructions into Dynamic Chains of Navigation, providing a structured and executable semantic representation for navigation. |
| | Long et al. [35] | 2024 | Refines instruction semantics through multi-expert LLM discussions, producing more reliable and consistent semantic interpretations before navigation. |
| | NaviLLM [28] | 2024 | Unifies navigation, localization, summarization, and QA within a schema-based generative semantic parsing framework. |
| | GC-VLN [36] | 2025 | Parses natural language into entity–spatial graph constraints, enabling training-free semantic-to-action mapping through structured instruction graphs. |
| | Wang et al. [37] | 2025 | Extracts instruction-related semantic cues and aligns them with candidate paths on 3D semantic maps, enabling instruction-aware path planning. |

| Category | Methods | Year | Contributions |
|---|---|---|---|
| Feature Extraction | LangNav [38] | 2023 | Integrates VLM and detectors to form language-aligned semantic perception for complex environments. |
| | Dorbala et al. [39] | 2023 | Uses LLM commonsense priors to infer object locations and guide zero-shot exploration. |
| | ESC [40] | 2023 | Applies soft commonsense constraints to improve semantic-driven search in unseen scenes. |
| | MapGPT [27] | 2024 | Transforms visual observations into map-guided prompts to support LLM-based planning. |
| | Qiu et al. [41] | 2024 | Builds open-vocabulary 3D semantic maps unifying spatial and language-driven navigation cues. |
| | LLVM-Drone [42] | 2025 | Extracts semantic environmental features through LLM–vision collaboration with structured prompts and consistency checks. |
| | LM-Nav [43] | 2023 | Aligns CLIP visual features with textual landmark descriptions for accurate goal grounding. |
| | AeroDuo [45] | 2025 | Combines high-altitude semantic mapping with low-altitude obstacle-aware perception for dual-view UAV scene understanding. |
| | NaviD [46] | 2024 | Uses EVA-CLIP temporal encoding to produce continuous scene representations from video history. |
| | NavGPT-2 [47] | 2024 | Projects visual frames into lightweight fixed-length tokens for efficient reasoning. |
| | NavAgent [48] | 2024 | Fuses multi-scale urban street-view cues using GLIP-enhanced landmark extraction and cross-view matching to improve semantic grounding. |
| | FLAME [49] | 2025 | Learns multi-view semantic representations via Flamingo-based multimodal fusion, supporting route summarization and end-to-end action prediction. |
| | EZREAL [50] | 2025 | Improves far-distance target localization using multiscale visual cues, visibility-aware memory, and depth-free heading estimation. |
| | Li et al. [51] | 2023 | Predicts future-view semantic cues to enhance forward-looking visual understanding. |
| Structured Memory | OVER-NAV [52] | 2024 | Constructs an Omnigraph using LLM-parsed semantics and open-vocabulary detections as a persistent structured memory for IVLN. |
| | CityNavAgent [53] | 2025 | Uses hierarchical semantic planning with global memory to support long-range UAV navigation. |
| | JanusVLN [54] | 2025 | Separates semantic and spatial cues via dual implicit memories to improve generalization. |
| | COSMO [55] | 2025 | Uses selective sparse memory to reduce redundancy and enhance cross-modal efficiency. |
| | Guide-LLM [56] | 2024 | Represents spatial topology as text-based nodes/edges for LLM-accessible world memory. |
| | Dynam3D [57] | 2025 | Introduces dynamic layered 3D tokens enabling semantic–geometric coupling and long-term reusable 3D environment memory. |
| | VLN-KHVR [58] | 2025 | Integrates external knowledge and navigation history into a temporally coherent state memory. |
| | VLN-ChEnv [59] | 2025 | Models evolving environments via temporal multi-modal updates for dynamic scene understanding. |
| | StreamVLN [60] | 2025 | Uses SlowFast streaming memory to capture short-term changes while retaining long-term context. |
| Generative Prediction | VLFM [61] | 2024 | Builds vision–language frontier maps to predict semantic frontiers in unseen environments. |
| | VISTA [62] | 2025 | Uses diffusion-based imagination to generate future scene hypotheses for proactive navigation. |
| | Fan et al. [63] | 2025 | Uses scene-map prompts to generate task-aligned navigation instructions. |
| | ImagineNav [64] | 2024 | Prompts VLMs to imagine and align future states to guide anticipatory navigation decisions. |
| | Saanum et al. [65] | 2024 | Demonstrates simple models can reliably predict future states. |
| | KiteRunner [66] | 2025 | Combines language parsing, UAV mapping, and diffusion-based local trajectory generation for outdoor navigation. |

| Category | Methods | Year | Contributions |
|---|---|---|---|
| Explicit Reasoning | EvolveNav [67] | 2025 | Improves navigation via self-evolving LLM reasoning, repeatedly refining explicit reasoning chains to enhance embodied decision-making. |
| | MSNav [68] | 2025 | Performs explicit LLM-based spatial reasoning with dynamic memory to support zero-shot navigation in unseen environments. |
| | NavGPT [26] | 2024 | Performs explicit language-driven reasoning by integrating textualized observations with structured step-by-step decision chains. |
| | NavCoT [69] | 2025 | Introduces navigational CoT via imagined next-view prediction and disentangled reasoning for improved action selection. |
| | SayCan [70] | 2022 | Combines LLM-generated high-level subgoals with affordance-based feasibility grounding for reliable execution. |
| | Khan et al. [71] | 2025 | Uses context-aware LLM reasoning with weighted goal fusion and interpretable direction scoring for robust UAV navigation. |
| | VLN-Zero [72] | 2025 | Employs neuro-symbolic scene-graph planning with rapid exploration and cache-enabled reasoning for zero-shot transfer. |
| | FSR-VLN [73] | 2025 | Uses hierarchical multi-modal scene graphs with fast-to-slow reasoning for interpretable and efficient navigation planning. |
| | Open-Nav [74] | 2025 | Leverages open-source LLMs for waypoint prediction and spatiotemporal CoT reasoning in continuous VLN. |
| Implicit Reasoning | NavGPT-2 [47] | 2024 | Uses lightweight visual tokenization for efficient latent-space VLN reasoning. |
| | Kang et al. [75] | 2025 | Reduces inference redundancy through dynamic early-exit and adaptive reasoning depth without explicit planning chains. |
| | Liu et al. [76] | 2024 | Learns implicit state-action distributions via energy-based modeling for stable, distribution-aligned navigation policies. |
| | VLN-R1 [77] | 2025 | Applies reinforcement fine-tuning on LVLM agents to optimize long-horizon behavior through reward-driven policy refinement. |
| | PGNS [78] | 2024 | Learns pixel-guided navigation skills that implicitly couple visual cues with action generation for zero-shot object navigation. |
| | AerialVLN [79] | 2023 | Learns continuous UAV navigation policies through cross-modal fusion without explicit reasoning chains. |
| Generative Planning | Cog-GA [80] | 2024 | Builds a generative LLM-based agent with cognitive maps enabling semantic memory, reflective correction, and long-horizon planning. |
| | Ha et al. [81] | 2018 | Establishes latent-dynamics imagination frameworks inspiring generative planning for later VLN world-model approaches. |
| | Dreamwalker [82] | 2023 | Constructs a discrete abstract world model enabling mental planning and multi-step rollout in continuous VLN environments. |
| | DreamNav [83] | 2025 | Utilizes trajectory-based imagination with view correction and future-trajectory prediction for zero-shot long-range planning. |
| | Bar et al. [84] | 2025 | Uses controllable video-generation world models to simulate future observations for planning in familiar and novel environments. |
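
The SayCan entry above pairs LLM-proposed subgoals with affordance-based feasibility. A minimal sketch of that kind of scoring is given below, assuming each candidate skill has a language relevance score and a feasibility estimate that are multiplied together. The skill names and all numeric scores are invented for illustration, and `select_skill` is a hypothetical helper rather than an API from any cited system.

```python
from typing import Dict


def select_skill(llm_scores: Dict[str, float], affordances: Dict[str, float]) -> str:
    """Pick the skill with the highest (language relevance x feasibility) product.

    Skills missing from `affordances` are treated as infeasible (score 0).
    """
    combined = {
        skill: llm_scores[skill] * affordances.get(skill, 0.0)
        for skill in llm_scores
    }
    return max(combined, key=combined.get)


# Hypothetical scores: the LLM prefers "open fridge", but the robot is not
# yet near the fridge, so the feasibility estimate for that skill is low.
llm_scores = {"open fridge": 0.6, "go to kitchen": 0.3, "go to bedroom": 0.1}
affordances = {"open fridge": 0.05, "go to kitchen": 0.9, "go to bedroom": 0.8}

best = select_skill(llm_scores, affordances)
print(best)
```

In this toy case the language model's top choice is overridden because it is not currently executable, which is the grounding effect the table entry describes.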

| Category | Methods | Year | Contributions |
|---|---|---|---|
| Basic Control Strategies | Kåsene et al. [85] | 2025 | Compares egocentric low-level actions against panoramic actions, showing finer semantic-behavior alignment. |
| | NaviD [46] | 2024 | Uses a video-based VLM for end-to-end continuous control, unifying language, vision, and action in next-step planning. |
| | SayNav [86] | 2024 | Converts each LLM-generated planning step into a short-range point-goal task, enabling execution through basic low-level control commands. |
| | Chen et al. [87] | 2025 | Uses semantic segmentation and affordance grounding to map linguistic intent into precise continuous control actions. |
| | UAV-ON [88] | 2025 | Defines an open-world UAV object-goal benchmark that evaluates basic action-level control policies. |
| | RT-2 [89] | 2023 | Unifies vision–language representations to generate executable action tokens via large-scale robotic transformer training. |
| | OpenVLA [90] | 2024 | Provides an open-source vision-language-action framework that produces end-to-end action tokens through shared multimodal embeddings. |
| | LaViRA [91] | 2025 | Translates natural-language instructions to robot-level continuous actions via unified language–vision–action generation. |
| | Lagemann et al. [92] | 2023 | Learns invariant latent dynamics enabling multi-step internal state prediction for improved control consistency. |
| Closed-Loop Control | SkyVLN [93] | 2025 | Integrates VLN perception with NMPC to perform closed-loop continuous control for UAV navigation. |
| | UAV-VLN [94] | 2025 | Employs multimodal feedback loops enabling real-time trajectory correction for UAV-based VLN in complex outdoor scenes. |
| | Narrate2Nav [95] | 2025 | Embeds implicit language reasoning into visual encoders, enabling human-aware real-time control in dynamic environments. |
| | CL-CoTNav [96] | 2025 | Combines hierarchical chain-of-thought with confidence-triggered re-reasoning to achieve self-correcting closed-loop actions. |
| | LogisticsVLN [97] | 2025 | Uses a lightweight cascade of multimodal LLMs to correct window-level positioning errors on the fly in UAV terminal delivery. |
| | Choutri et al. [98] | 2025 | Uses an offline bilingual voice-feedback loop for zero-latency semantic-control self-correction in low-connectivity, dynamic outdoor settings. |
| | MMCNav [99] | 2025 | Uses a multi-agent, dual-loop reflection mechanism to cooperatively refine long-range outdoor trajectories under dense instructions in real time. |
| Generative Control | Shi et al. [100] | 2025 | Combines diffusion policies with DAgger to reduce compounding errors and stabilize long-horizon action generation. |
| | NavDP [101] | 2025 | Generates multiple candidate trajectories in latent space and refines them via privilege-informed critic-guided optimization. |
| | ComposableNav [102] | 2025 | Uses composable diffusion models to generate flexible, instruction-aligned continuous control sequences in dynamic settings. |
| | FLUC [103] | 2025 | Uses an LLM to generate flight-control code offline, turning natural language into executable flight logic so that UAV control requires no manual programming. |
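
Several closed-loop entries above rely on feedback to trigger correction, for example confidence-triggered re-reasoning. The sketch below illustrates that general pattern under simple assumptions: a fast policy returns an action with a confidence value, and a slower reasoner is consulted only when confidence falls below a threshold. The function names, the threshold of 0.5, and the toy policies are all hypothetical and do not reproduce any cited implementation.

```python
from typing import Callable, List, Tuple

Action = str


def run_closed_loop(
    fast_policy: Callable[[int], Tuple[Action, float]],
    slow_reasoner: Callable[[int], Action],
    steps: int,
    conf_threshold: float = 0.5,
) -> List[Tuple[Action, bool]]:
    """Run `steps` decisions, re-reasoning whenever confidence is low.

    Returns (action, re_reasoned) pairs so the trigger pattern can be inspected.
    """
    log: List[Tuple[Action, bool]] = []
    for t in range(steps):
        action, confidence = fast_policy(t)
        re_reasoned = confidence < conf_threshold
        if re_reasoned:
            # Low confidence: replace the fast proposal with the slow one.
            action = slow_reasoner(t)
        log.append((action, re_reasoned))
    return log


# Toy stand-ins: the fast policy is unsure only at step 2.
def toy_fast(t: int) -> Tuple[Action, float]:
    return ("left", 0.2) if t == 2 else ("forward", 0.9)


def toy_slow(t: int) -> Action:
    return "right"


log = run_closed_loop(toy_fast, toy_slow, steps=4)
print(log)
```

The design choice here is to pay for expensive reasoning only at uncertain steps; in a real system the confidence signal and the fallback would come from the perception and planning modules rather than fixed stubs.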

| Methods | Base Model | Deployment Platform | Inference Latency / Throughput | Task Performance |
|---|---|---|---|---|
| TinyVLA [174] | Pythia-0.4–1.3B | A6000 (training only) | 5 ms/step | +25.7% SR |
| EdgeVLA [175] | Qwen2-0.5B + SigLIP + DINO | A100 (training only) | 14 ms/action | ≈OpenVLA, 7× faster |
| Lite VLA [176] | SmolVLM-256M | Raspberry Pi 4 (4GB) | 0.09 Hz | Stable office navigation |
| Gurunathan et al. [177] | LLaVA-1.5-7B | Jetson Orin NX/Nano | 19.25 tok/s | >90% VQA accuracy |
| GRaD-Nav++ [178] | BLIP-2 6.7B + 3D-Gaussian + Diff-dynamics | Jetson Orin NX 16GB | 45 ms/step | +18.6% SR (Urban-VLN) |
| EfficientNav [179] | LLaMA-3.2-11B / LLaVA-34B | Jetson AGX Orin (32GB) | 0.35 s/step | +11.1% SR (Habitat) |
| SINGER [180] | CLIP-ViT + SV-Net | Jetson Orin Nano (8GB) | 12 Hz | +23.3% SR |
| PanoGen++ [181] | Stable Diffusion | Offline generation | × | +1.77% SR (R2R) |
| PEAP-LLM [182] | Llama-2-7B | RTX 3090 (training only) | 823 ms/step | +4.0% SPL (REVERIE) |
| VLN-PETL [183] | BERT+ViT | GPU training only | × | ≈Full fine-tune |
| VL-Nav [184] | CLIP-Res50 + Spatial-LLM-7B | Jetson Xavier NX | 55 ms/frame | +12.3% SR (Habitat-R2R) |
| ClipRover [185] | CLIP ViT-B/32 | Raspberry Pi 4 | 0.11 s/obs | 86% zero-shot target discovery |
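
Because the table above reports speed in mixed units (ms, s, and Hz), a rough side-by-side reading is easier after converting everything to milliseconds per decision. The sketch below does this conversion for the rows that report a time or a rate. It assumes that "step", "action", "frame", and "obs" can each be treated as one decision, which is a simplification, and it omits the token-rate row and the rows marked ×. Hardware and task differ between rows, so the result is not a benchmark.

```python
def to_ms_per_step(value: float, unit: str) -> float:
    """Convert a reported speed to milliseconds per decision."""
    if unit == "ms":
        return value
    if unit == "s":
        return value * 1000.0
    if unit == "Hz":
        # A rate of f decisions per second means 1000 / f ms per decision.
        return 1000.0 / value
    raise ValueError(f"unsupported unit: {unit}")


# (value, unit) pairs copied from the table above.
reported = {
    "TinyVLA": (5, "ms"),
    "EdgeVLA": (14, "ms"),
    "Lite VLA": (0.09, "Hz"),
    "GRaD-Nav++": (45, "ms"),
    "EfficientNav": (0.35, "s"),
    "SINGER": (12, "Hz"),
    "PEAP-LLM": (823, "ms"),
    "VL-Nav": (55, "ms"),
    "ClipRover": (0.11, "s"),
}

normalized = {name: to_ms_per_step(v, u) for name, (v, u) in reported.items()}
ranking = sorted(normalized, key=normalized.get)  # fastest first

for name in ranking:
    print(f"{name:13s} {normalized[name]:10.1f} ms")
```

Under these assumptions the reported figures span more than three orders of magnitude, from about 5 ms to about 11 s per decision.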

| Dataset | Snapshot Description |
|---|---|
| R2R 2018 [4] | Content: 90 building scenes from the Matterport3D dataset (homes, offices, churches, etc.) with 21K detailed natural-language navigation instructions. Highlights: The first dataset to connect natural-language instructions with large-scale, real 3D environments, driving a shift in VLN from grid worlds to real-world scenarios. Limitations: Paths are relatively simple, and the dataset lacks interactive tasks and dynamic environments. |
| RoomNav 2018 [186] | Content: 45K+ indoor 3D environments in House3D with room categories, agent viewpoints, and navigation trajectories paired with concise, room-type textual instructions. Highlights: Offers structured, goal-directed navigation tasks that tightly couple semantic room labels with embodied visual exploration. Limitations: Instructions and goals are simplistic and low-level, limiting linguistic richness and generalization to real-world navigation. |
| R4R 2019 [187] | Content: 200K instructions across 61 training scenes, plus 1,000+ in the val-seen split and 45,162 in the val-unseen split. Highlights: Concatenates adjacent R2R trajectories to form longer, twistier paths, reducing bias toward shortest-path behavior. Limitations: Because paths are combined algorithmically rather than annotated naturally, the resulting instructions may read unnaturally. |
| RxR 2020 [188] | Content: 120K+ multilingual (English, Hindi, Telugu) navigation instructions and 16,000 distinct paths in Matterport3D scenes. Highlights: Provides dense spatiotemporal grounding by aligning each word in the instruction with the speaker’s pose trajectory, and supports multilingual VLN. Limitations: High complexity, high resource demands, and occasional instruction–trajectory misalignment. |
| CVDN 2020 [189] | Content: 2K human-human dialogs spanning over 7,000 navigation trajectories across 83 Matterport houses. Highlights: Enables interactive navigation through dialog-based grounded guidance, in which a navigator asks questions and an oracle gives advice based on privileged knowledge of the next steps. Limitations: Dialog history can be noisy and sparse, making it hard for agents to infer correct actions purely from conversational context. |
| VLN-CE 2020 [190] | Content: 16K+ path-instruction pairs across 90 Matterport3D scenes. Highlights: Supports continuous-motion navigation in realistic 3D environments rather than discrete graph steps. Limitations: Requires fine-grained control and is more computationally demanding, making training and sim-to-real transfer harder. |
| REVERIE 2020 [5] | Content: 21,000 human-written high-level navigation instructions across 86 buildings, targeting 4K remote objects. Highlights: Combines navigation with object grounding, requiring an agent not only to navigate but also to identify a distant target object. Limitations: The concise, high-level instructions make precise step-by-step navigation hard, and locating the correct object in complex scenes is challenging. |
| ALFRED 2020 [191] | Content: 25K+ English directives paired with expert demonstrations across 120 indoor AI2-THOR scenes. Highlights: Supports long-horizon, compositional household tasks with both high-level goals and step-by-step instructions, combining navigation and object manipulation. Limitations: Tasks are long and complex, so models are hard to train and generalize poorly, and success rates remain low. |
| SOON 2021 [192] | Content: 3,000 natural-language instructions and 40K trajectories across 90 Matterport3D scenes. Highlights: Emphasizes starting-point independence and coarse-to-fine scene descriptions, so an agent can navigate from anywhere to a fully described target. Limitations: Dense, complex instructions and long trajectories make the task difficult. |
| HM3D 2021 [193] | Content: Over 1,000 high-quality, photorealistic indoor 3D reconstructed environments covering diverse residential and commercial buildings. Highlights: The largest and most realistic indoor 3D reconstruction dataset used in embodied AI; provides dense geometry, consistent semantics, and significantly richer diversity than previous scanned datasets. Limitations: Contains only environment scans, with no human-written navigation instructions; must be paired with VLN task datasets for instruction grounding. |
| HM3D-SEM 2023 [194] | Content: Semantic extension of HM3D, including instance-level annotations, room categories, object labels, and spatial relationships. Highlights: Supports semantic-driven VLN, object-centric navigation, and open-vocabulary reasoning by providing detailed scene semantics. Limitations: Like HM3D, it lacks natural-language instructions and requires external datasets for grounded VLN tasks. |
| DDN 2023 [6] | Content: 1,000+ demand instructions mapped to 600 AI2-THOR + ProcTHOR scenes. Highlights: Lets agents reason over user needs (e.g., “I’m thirsty”) instead of object names, finding any object whose attributes satisfy the demand. Limitations: Fixed mappings limit generalization to unseen environments. |
| Talk2Nav 2021 [195] | Content: 10K human-written navigation routes over 40K Google Street View nodes in a 10 km × 10 km area of New York City. Highlights: Captures long-range, real-world outdoor navigation grounded in verbal instructions referencing landmarks and directions. Limitations: Ambiguous scenes and instructions make reliable localization and instruction following difficult. |
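
The R4R entry above describes forming longer paths by concatenating adjacent R2R trajectories. A simplified sketch of that joining step follows. It works on 2D points with a Euclidean gap test, whereas the actual dataset is built on a navigation graph, and the `max_gap` value and the example paths are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]
Path = List[Point]


def join_adjacent(paths: List[Path], max_gap: float) -> List[Path]:
    """Join every ordered pair (a, b), a != b, where a ends near b's start."""
    joined: List[Path] = []
    for i, a in enumerate(paths):
        for j, b in enumerate(paths):
            if i != j and math.dist(a[-1], b[0]) <= max_gap:
                # Avoid repeating the shared point when the paths touch exactly.
                tail = b[1:] if a[-1] == b[0] else b
                joined.append(a + tail)
    return joined


# Toy paths: the first ends where the second starts; the third is far away.
paths = [
    [(0.0, 0.0), (2.0, 0.0)],
    [(2.0, 0.0), (2.0, 3.0)],
    [(10.0, 10.0), (12.0, 10.0)],
]

joined = join_adjacent(paths, max_gap=1.0)
print(joined)
```

The joined path turns a corner instead of heading straight to its endpoint, which illustrates why concatenated paths are less biased toward shortest-path behavior than the originals.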

| Simulators | Supported Scenes | Highlights |
|---|---|---|
| Matterport3D [196] | Realistic, photo-quality indoor environments like homes, offices, and public spaces | Interactive 3D navigation with accurate visual and spatial cues; supports advanced embodied AI research |

| House3D [186] |