Submitted: 15 April 2026

Posted: 23 April 2026


Abstract

Keywords:

1. Introduction

It is impossible that someone should set up a certain well-defined system of axioms and rules and consistently make the following assertion about it: All of these axioms and rules I perceive to be correct, and moreover I believe that they contain all of mathematics.

Kurt Gödel [1]

- Theory claims: Everything reduces to ZFC.

- Practice shows: ZFC is almost never invoked outside of logic and set theory itself.

- Theory claims: Incompleteness is a universal fog covering all mathematics.

- Practice shows: Most working domains (geometry, finite algebra, elementary analysis) behave as if they are complete and decidable.

2. Axiom 1: The Requirement of External Vantage Point

The exact definition of the concept of truth implies the necessity of a strict distinction between the language which is the object of our investigations and the language in which these investigations are carried out.

Alfred Tarski [3]

3. The Logic of Separation: The Decidability Threshold

In consequence of the philosophical significance of the mathematical integers ... one could perhaps expect that it would be possible to find a few evident axioms from which all properties of integers could be derived. This expectation ... is false.

Kurt Gödel [4]

3.1. The Trinity of Danger

1. Classical Negation: The ability to assert falsity and to rely on classical proof principles strong enough to express provability and negation in the form used by diagonalization arguments.

2. Representability (Encoding): The capacity to define a discrete infinite predicate and to represent all primitive recursive functions on that predicate.

3. Discrete Unboundedness: An infinite supply of distinct discrete states.
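The Representability ingredient is what allows a theory to talk about its own syntax. As a minimal sketch (our illustration, not the paper's construction), the prime-power coding used in Gödel-style arithmetization packs any finite string of symbol codes into a single natural number and recovers it again. The names `prime_stream`, `godel_encode` and `godel_decode`, and the three-symbol alphabet, are hypothetical. The codes grow without bound as formulas get longer, which is why the encoding also needs Discrete Unboundedness.

```python
from itertools import count
from typing import Dict, Iterator, List


def prime_stream() -> Iterator[int]:
    """Yield 2, 3, 5, 7, ... by trial division against the primes found so far."""
    found: List[int] = []
    for candidate in count(2):
        if all(candidate % p for p in found):
            found.append(candidate)
            yield candidate


def godel_encode(codes: List[int]) -> int:
    """Encode symbol codes c1..ck (each >= 1) as 2**c1 * 3**c2 * ... * pk**ck."""
    if any(c < 1 for c in codes):
        raise ValueError("symbol codes must be positive for decoding to be unambiguous")
    n = 1
    for p, c in zip(prime_stream(), codes):
        n *= p ** c
    return n


def godel_decode(n: int) -> List[int]:
    """Invert godel_encode by reading off the exponents of consecutive primes."""
    if n < 1:
        raise ValueError("Gödel numbers are positive integers")
    codes: List[int] = []
    for p in prime_stream():
        if n == 1:
            break
        exponent = 0
        while n % p == 0:
            n //= p
            exponent += 1
        if exponent == 0:
            raise ValueError("not the code of a sequence of positive symbol codes")
        codes.append(exponent)
    return codes


# Hypothetical alphabet for a tiny fragment of arithmetic.
SYMBOLS: Dict[str, int] = {"0": 1, "S": 2, "=": 3}
formula = "S0=S0"
n = godel_encode([SYMBOLS[s] for s in formula])
print(n)                # 808500
print(godel_decode(n))  # [2, 1, 3, 2, 1]
```

Requiring every symbol code to be at least 1 is a design choice that makes decoding unambiguous: the first prime with exponent zero marks the end of the string.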

3.2. Federalism as Quarantine

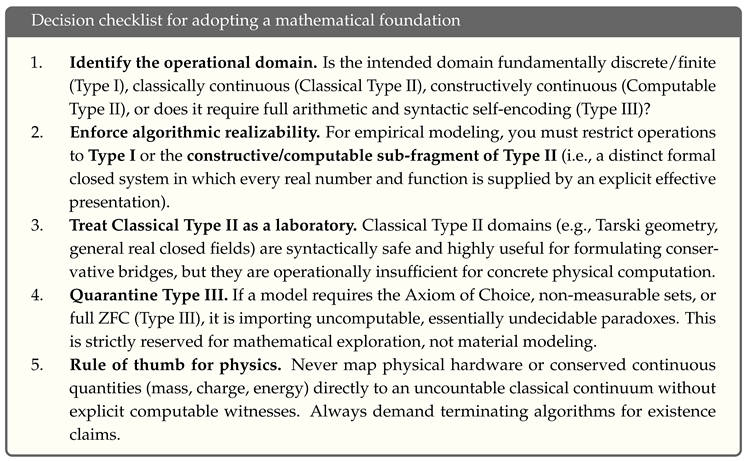

- Type I (Finite Domains): Boolean Algebra, Finite Groups. They possess Negation and Encoding, but drop Unboundedness. They are trivially decidable and inherently computable.

- Type II (Tame Continuous/Linear Domains): Euclidean Geometry, Presburger Arithmetic, Real Closed Fields. They possess Negation and Unboundedness, but they drop Representability. Core domains remain syntactically decidable via quantifier elimination. (Note: For the empirical scientist, syntactic decidability is insufficient; physical modeling requires restricting these to their Computable sub-fragments, as detailed in Section 5).

- Type III (Wild Domains): Peano Arithmetic, ZFC Set Theory. They possess all three ingredients. They are essentially undecidable.
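In a Type I domain every quantifier ranges over a finite carrier, so "trivially decidable" can be taken literally: a first-order sentence is settled by checking all assignments. The sketch below, assuming the cyclic groups (Z/nZ, +) as the finite structures (our choice of example), evaluates two sentences by nested enumeration. The cost grows like n^k for k nested quantifiers, so this demonstrates decidability rather than efficiency; the function names are hypothetical.

```python
from typing import Callable


def forall(n: int, body: Callable[[int], bool]) -> bool:
    """'For all x in Z/nZ, body(x)': check each of the n elements."""
    return all(body(x) for x in range(n))


def exists(n: int, body: Callable[[int], bool]) -> bool:
    """'There is an x in Z/nZ with body(x)': exhaustive search over n elements."""
    return any(body(x) for x in range(n))


def has_inverses(n: int) -> bool:
    # The sentence  forall x exists y: x + y = 0  in (Z/nZ, +)
    return forall(n, lambda x: exists(n, lambda y: (x + y) % n == 0))


def halving_possible(n: int) -> bool:
    # The sentence  forall x exists y: y + y = x  in (Z/nZ, +)
    return forall(n, lambda x: exists(n, lambda y: (y + y) % n == x))


print(all(has_inverses(n) for n in range(1, 10)))        # True
print([n for n in range(1, 10) if halving_possible(n)])  # [1, 3, 5, 7, 9]
```

The second sentence holds exactly when n is odd; the procedure discovers this per structure by computation, with no proof search involved.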

4. The Cost of Reduction: The Compiler Myth

It is a fact of great philosophical interest that our knowledge of the system of real numbers and its geometry is much more complete than our knowledge of the system of integers.

Alfred Tarski [2]

1. Loss of Structural Immunity: In suitably chosen tame geometric formalisms (such as Tarski’s first-order geometry [2]), the admissible first-order questions admit effective elimination procedures. The native language excludes the expressive resources required to generate the familiar set-theoretic independence phenomena. When we embed geometry into ZFC, we expose geometric objects to the full expressive power of set theory. We can now formulate questions about sets of geometric points, involving arbitrary set membership, cardinality, or choice functions, that ZFC cannot decide (the Continuum Hypothesis, for instance, can be stated as a question about subsets of the line). Reduction to ZFC is not neutral; it changes the logical ecology of the objects. We have moved the object from a Clean Room to a Wild environment.

2. The Proof-Theoretic Overhead: In the native domain, truth is often algorithmic or governed by domain-specific decision methods. In the reduced domain (ZFC), truth is fundamentally deductive. While ZFC can simulate the geometric algorithm, the default mode of set theory is general proof search. By compiling down, we bury the efficient, domain-specific insight under layers of generic set-theoretic machinery.

3. The Combinatorial Explosion of Strict Reduction: We do not need to look far to see what happens when the Compiler Myth is taken literally. The Bourbaki group famously attempted to formalize mathematics rigorously from the ground up on a strict set-theoretic foundation. As calculated by A.R.D. Mathias [5], the fully expanded formal definition of the number 1 in Bourbaki’s strict foundational system requires 4,523,659,424,929 symbols. A standard defense is that abstraction layers exist precisely so that no one has to write fully expanded proofs. But this misses the point: strict reduction is not epistemically faithful to the structures mathematicians actually exploit. A foundation that requires 4.5 trillion symbols to define a trivially decidable primitive concept is not providing ultimate semantic clarity; it is creating an epistemic collapse.
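Item 1 refers to effective elimination procedures for tame domains, and item 2 contrasts algorithmic truth with proof search. Tarski's own procedure [2] handles polynomial constraints and is too long to sketch here; as a swapped-in and much simpler instance of the same idea, the sketch below applies Fourier-Motzkin elimination to the linear fragment of real arithmetic (conjunctions of non-strict linear inequalities). Each step removes one existential quantifier, so deciding a sentence is a computation rather than a search for a proof. The constraint representation and the names `le`, `eliminate` and `satisfiable` are our own assumptions.

```python
from fractions import Fraction
from typing import List, Sequence, Tuple, Union

# A constraint (a, b) stands for a[0]*x0 + a[1]*x1 + ... <= b over the reals.
Constraint = Tuple[Tuple[Fraction, ...], Fraction]
Number = Union[int, Fraction]


def le(coeffs: Sequence[Number], bound: Number) -> Constraint:
    """Build the constraint sum(coeffs[i] * x_i) <= bound with exact rationals."""
    return tuple(Fraction(c) for c in coeffs), Fraction(bound)


def eliminate(constraints: List[Constraint], j: int) -> List[Constraint]:
    """One Fourier-Motzkin step: the result mentions no x_j and, over the reals,
    is equivalent to 'there exists x_j' applied to the input conjunction."""
    upper, lower, rest = [], [], []
    for a, b in constraints:
        if a[j] > 0:
            upper.append((a, b))  # gives an upper bound on x_j
        elif a[j] < 0:
            lower.append((a, b))  # gives a lower bound on x_j
        else:
            rest.append((a, b))
    result = list(rest)
    for au, bu in upper:
        for al, bl in lower:
            # Positive multipliers chosen so that the x_j terms cancel exactly.
            mu, ml = -al[j], au[j]
            coeffs = tuple(mu * p + ml * q for p, q in zip(au, al))
            result.append((coeffs, mu * bu + ml * bl))
    return result


def satisfiable(constraints: List[Constraint]) -> bool:
    """Decide 'exists x0 ... x_{n-1}' of the conjunction by eliminating every variable."""
    if not constraints:
        return True
    for j in range(len(constraints[0][0])):
        constraints = eliminate(constraints, j)
    # Only variable-free constraints of the form 0 <= b remain.
    return all(b >= 0 for _, b in constraints)


# exists x, y: x + y <= 1, x >= 0, y >= 2  (no real solution)
print(satisfiable([le((1, 1), 1), le((-1, 0), 0), le((0, -1), -2)]))  # False
# exists x, y: x + y <= 1, x >= 0, y >= 1/2  (e.g. x = 0, y = 1/2)
print(satisfiable([le((1, 1), 1), le((-1, 0), 0), le((0, -1), Fraction(-1, 2))]))  # True
```

Exact `Fraction` arithmetic keeps the procedure sound; floating point could misjudge a bound of exactly zero. The number of constraints can grow quickly from step to step, so this illustrates decidability, not an efficient solver.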

5. Implications for the Consumer: The Warning Label

The deductive method cannot, of course, provide any absolute guarantee of the material truth of the theorems established by its means. The truth of a theorem depends entirely on the truth of the axioms from which it has been deduced.

Alfred Tarski [6]

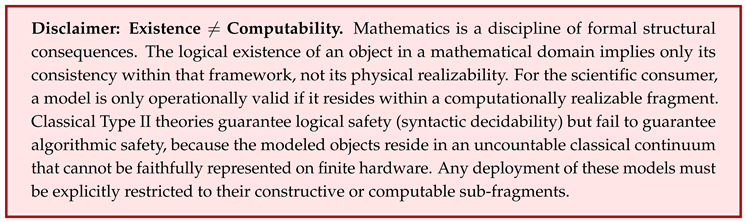

5.1. The Mirage of Non-Constructive Existence

5.2. The Risk of Using the Wrong Domain

6. Bridges Are Explicit, Not Automatic

- Analytic Geometry: A bridge from Euclidean Space to Field Arithmetic.

- Galois Theory: A bridge from Fields to Groups.

- Algebraic Topology: A bridge from Spaces to Algebraic Invariants.
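To make the first bridge concrete, here is a minimal sketch (our example, not from the paper): assigning coordinates translates the geometric predicate "three points are collinear" into the vanishing of a determinant in field arithmetic, after which checking it is plain exact computation. The translation lives entirely in the coordinate assignment, which is written out rather than implied. The example only checks that the medians of one specific triangle meet at the centroid; a proof of the general theorem would run the same determinant computation with symbolic coordinates and observe that it simplifies to zero identically. The helper names are hypothetical.

```python
from fractions import Fraction
from typing import Tuple

Point = Tuple[Fraction, Fraction]


def collinear(p: Point, q: Point, r: Point) -> bool:
    """Euclidean predicate 'p, q, r lie on one line', translated via coordinates
    into the vanishing of a 2x2 determinant over the rationals."""
    return (q[0] - p[0]) * (r[1] - p[1]) - (q[1] - p[1]) * (r[0] - p[0]) == 0


def midpoint(p: Point, q: Point) -> Point:
    return ((p[0] + q[0]) / 2, (p[1] + q[1]) / 2)


def point(x: int, y: int) -> Point:
    return (Fraction(x), Fraction(y))


A, B, C = point(0, 0), point(7, 1), point(2, 5)
G = ((A[0] + B[0] + C[0]) / 3, (A[1] + B[1] + C[1]) / 3)  # centroid via coordinates

# Each median (vertex to midpoint of the opposite side) passes through G.
print(collinear(A, midpoint(B, C), G))  # True
print(collinear(B, midpoint(A, C), G))  # True
print(collinear(C, midpoint(A, B), G))  # True
print(collinear(A, B, C))               # False: a genuine triangle
```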

6.1. Conservative vs. Non-Conservative Bridges

7. The Federated Organisation We Already Possess

8. Objections and Replies

9. Conclusions: Codifying Implicit Wisdom

Either mathematics is too big for the human mind, or the human mind is more than a machine.

Kurt Gödel [1]

1. Logical Safety: Domains separate to preserve the Decidability Threshold (avoiding the Trinity of Danger).

2. Epistemic Efficiency: Reduction to ZFC is avoided because it imposes a crippling Epistemic Overhead, destroying the native algorithmic methods of local domains.

3. Algorithmic Realizability: This separation protects the Consumer (the Scientist) from inheriting uncomputable logical paradoxes. It allows empirical science to cleanly identify and operate exclusively within structurally valid, computable frameworks (Type I and Computable Type II).

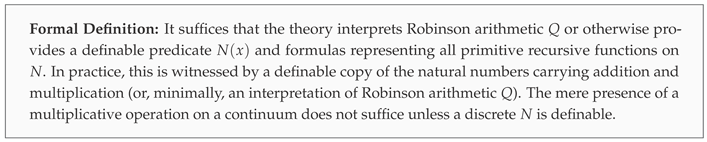

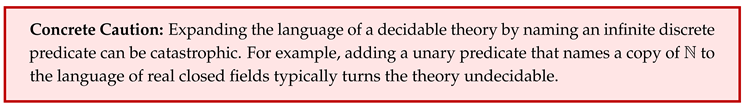

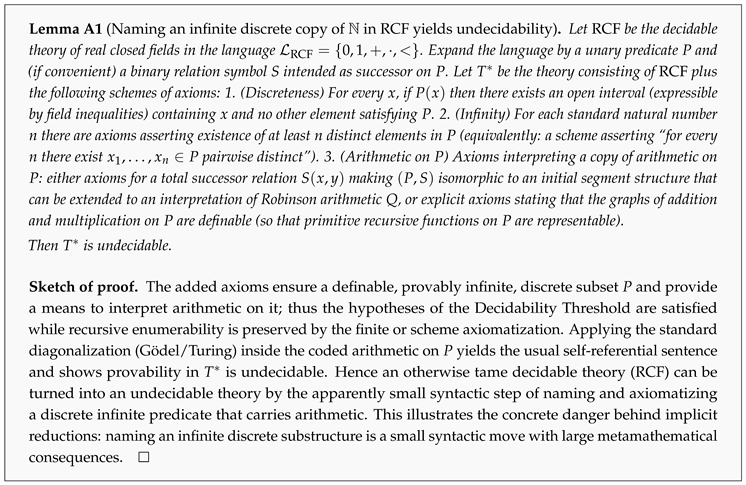

Appendix A. Formalizing the Decidability Threshold

Commentary: Mapping the Lemma to Modeling Mistakes

References

1. Gödel, K. Some basic theorems on the foundations of mathematics and their implications. In Collected Works, Volume III: Unpublished Essays and Lectures; Feferman, S., et al., Eds.; Oxford University Press, 1995. Delivered as the 25th Josiah Willard Gibbs Lecture (1951).

2. Tarski, A. A Decision Method for Elementary Algebra and Geometry; University of California Press, 1951.

3. Tarski, A. The Semantic Conception of Truth: and the Foundations of Semantics. Philosophy and Phenomenological Research 1944, 4, 341–376.

4. Gödel, K. Remarks before the Princeton bicentennial conference on problems in mathematics. In Collected Works, Volume II: Publications 1938–1974; Feferman, S., et al., Eds.; Oxford University Press, 1990. Originally presented in 1946.

5. Mathias, A.R.D. A Term of Length 4,523,659,424,929. Synthese 2002, 133, 75–86.

6. Tarski, A. Introduction to Logic and to the Methodology of Deductive Sciences; Oxford University Press: New York, 1941.

7. De Giorgi, E. Sulla differenziabilità e l’analiticità delle estremali degli integrali multipli regolari. Memorie della Accademia delle Scienze di Torino. Classe di Scienze Fisiche, Matematiche e Naturali 1957, 3, 25–43.

8. Nash, J. Continuity of solutions of parabolic and elliptic equations. American Journal of Mathematics 1958, 80, 931–954.

9. Bishop, E. Foundations of Constructive Analysis; McGraw-Hill: New York, 1967.

10. Pour-El, M.B.; Richards, J.I. Computability in Analysis and Physics; Perspectives in Mathematical Logic; Springer-Verlag: Berlin, Heidelberg, 1989.

11. Banach, S.; Tarski, A. Sur la décomposition des ensembles de points en parties respectivement congruentes. Fundamenta Mathematicae 1924, 6, 244–277.

12. Wagon, S. The Banach–Tarski Paradox; Cambridge University Press: Cambridge, 1985.

13. Montague, R. Deterministic Theories and Interpretations; 1965.

14. Feferman, S. What rests on what? The proof-theoretic analysis of mathematics. In Proceedings of the 15th International Congress of Logic, Methodology and Philosophy of Science, 1998.

15. Voevodsky, V. The Origins and Motivations of Univalent Foundations. The Institute Letter, 2014.

16. Mac Lane, S. Categories for the Working Mathematician; Springer, 1998.

17. Gödel, K. Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I. Monatshefte für Mathematik und Physik 1931, 38, 173–198.

18. Turing, A.M. On Computable Numbers, with an Application to the Entscheidungsproblem. Proceedings of the London Mathematical Society, Series 2 1936, 42, 230–265.

19. Tarski, A.; Mostowski, A.; Robinson, R.M. Undecidable Theories; North-Holland, 1953.


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).