Submitted:

30 January 2026

Posted:

05 February 2026


Abstract

Keywords:

1. Introduction

2. Systematic Review Methodology

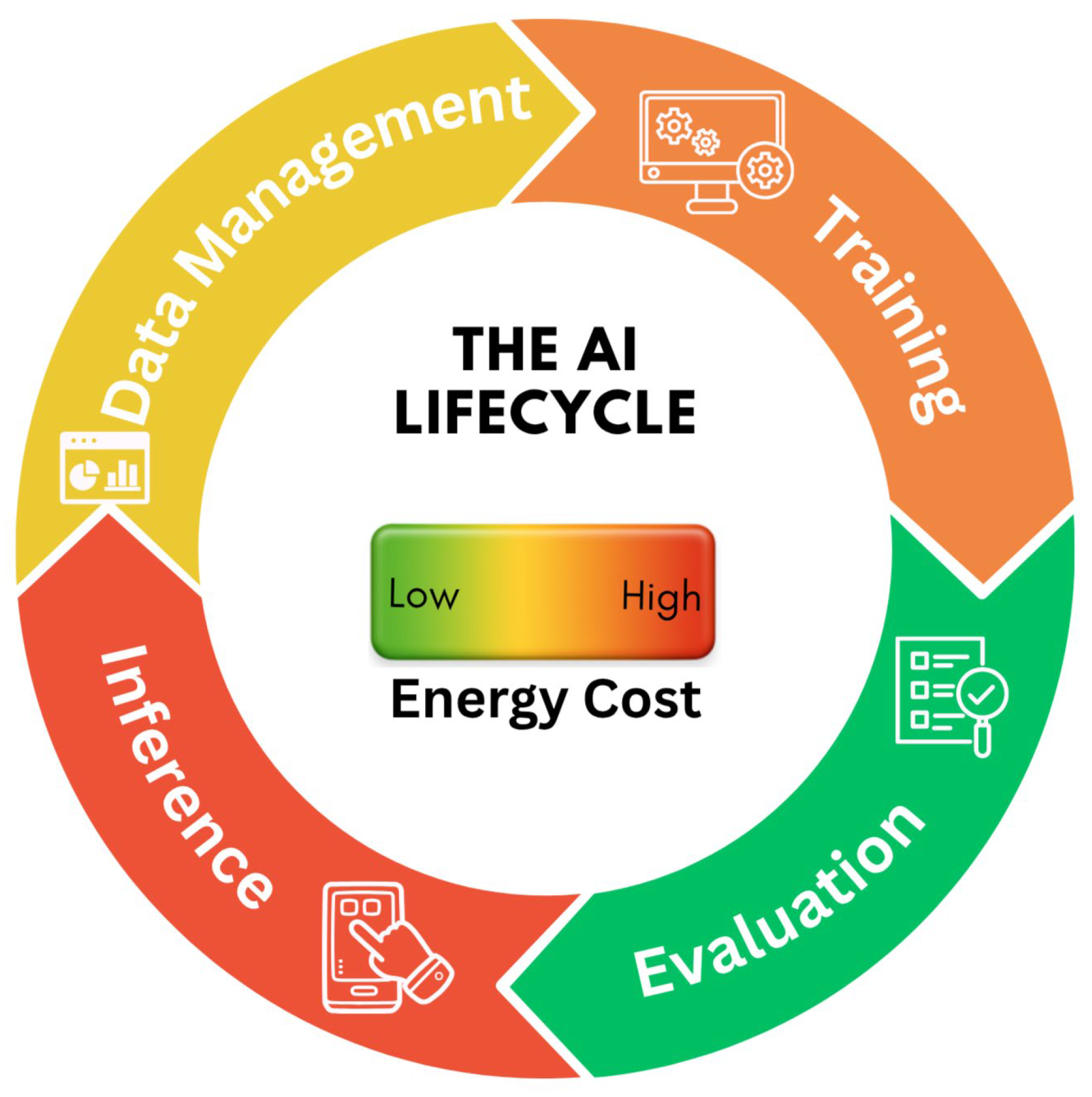

- RQ1: How can the environmental impact of AI be measured during its lifecycle?

- RQ2: What is the amount of energy consumed by various categories of AI methods?

- RQ3: What methods have been established to reduce AI carbon emissions without compromising model performance?

- s1.

- On AI: {(artificial intelligence) OR (AI) OR (machine learning) OR (ML) OR (deep learning) OR (DL)}

- s2.

- On large and trending AI: {(foundation) OR (generative) OR (genAI) OR (large language) OR (LLM) OR (federated) OR (self supervised) OR (self-supervised) OR (supervised) OR (pretrain) OR (agentic)}

- s3.

- On green: {(carbon emission) OR (energy consumption) OR (computation cost) OR (sustainable) OR (green)}
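The three term groups above can be assembled into a single boolean search string. The sketch below is only an illustration: it assumes the groups are combined with AND, which the search strings themselves do not state, and the helper names `or_group` and `build_query` are hypothetical. Real databases differ in query syntax, so the output would likely need adapting.

```python
# Term groups copied from the search strings s1-s3 above.
S1_AI = ["artificial intelligence", "AI", "machine learning", "ML",
         "deep learning", "DL"]
S2_TRENDING = ["foundation", "generative", "genAI", "large language", "LLM",
               "federated", "self supervised", "self-supervised", "supervised",
               "pretrain", "agentic"]
S3_GREEN = ["carbon emission", "energy consumption", "computation cost",
            "sustainable", "green"]


def or_group(terms):
    """Join terms into one parenthesised OR clause, quoting multi-word phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_query(*groups):
    """Combine OR clauses with AND (an assumption, not stated in the source)."""
    return " AND ".join(or_group(g) for g in groups)


query = build_query(S1_AI, S2_TRENDING, S3_GREEN)
print(query)
```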

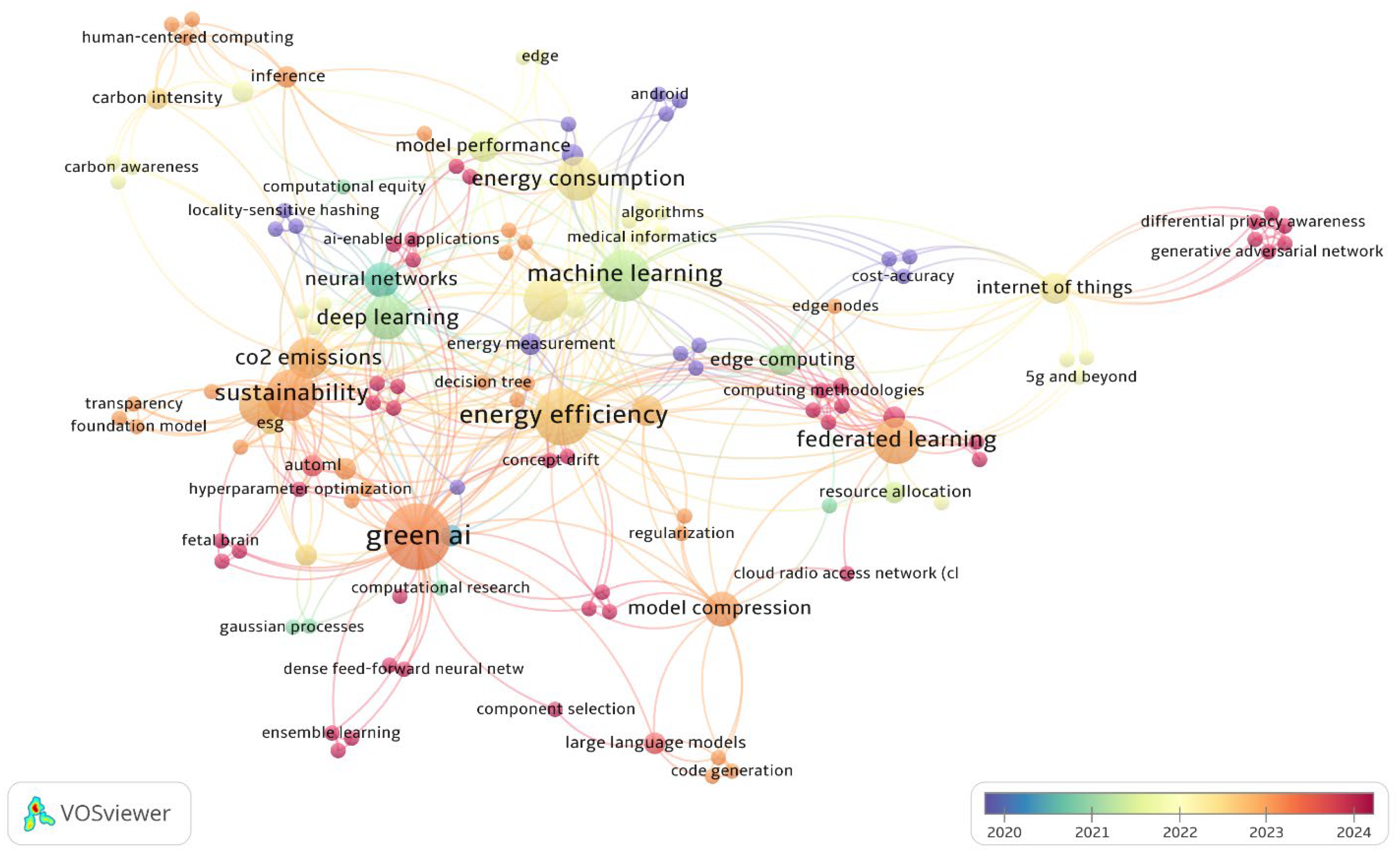

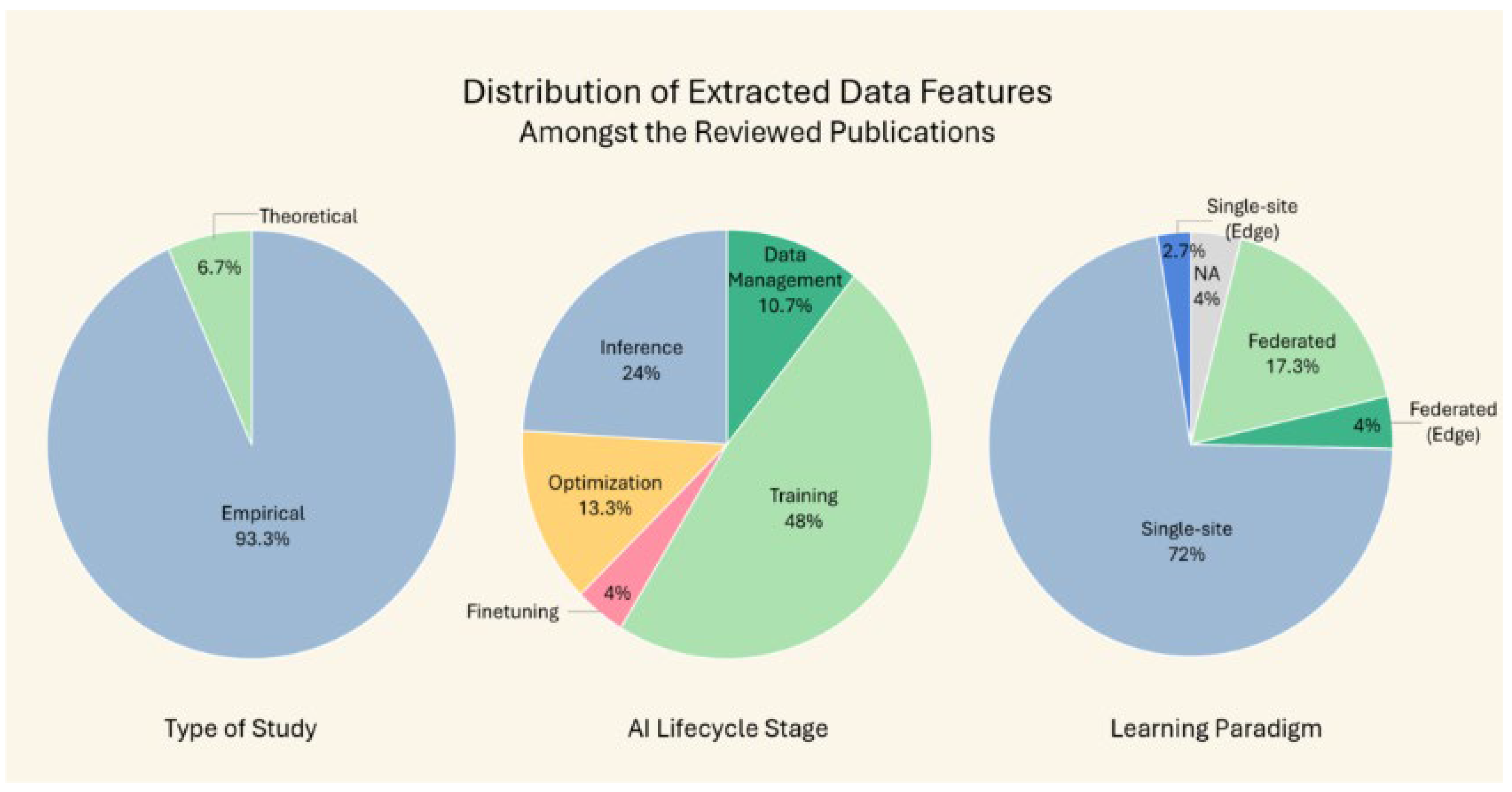

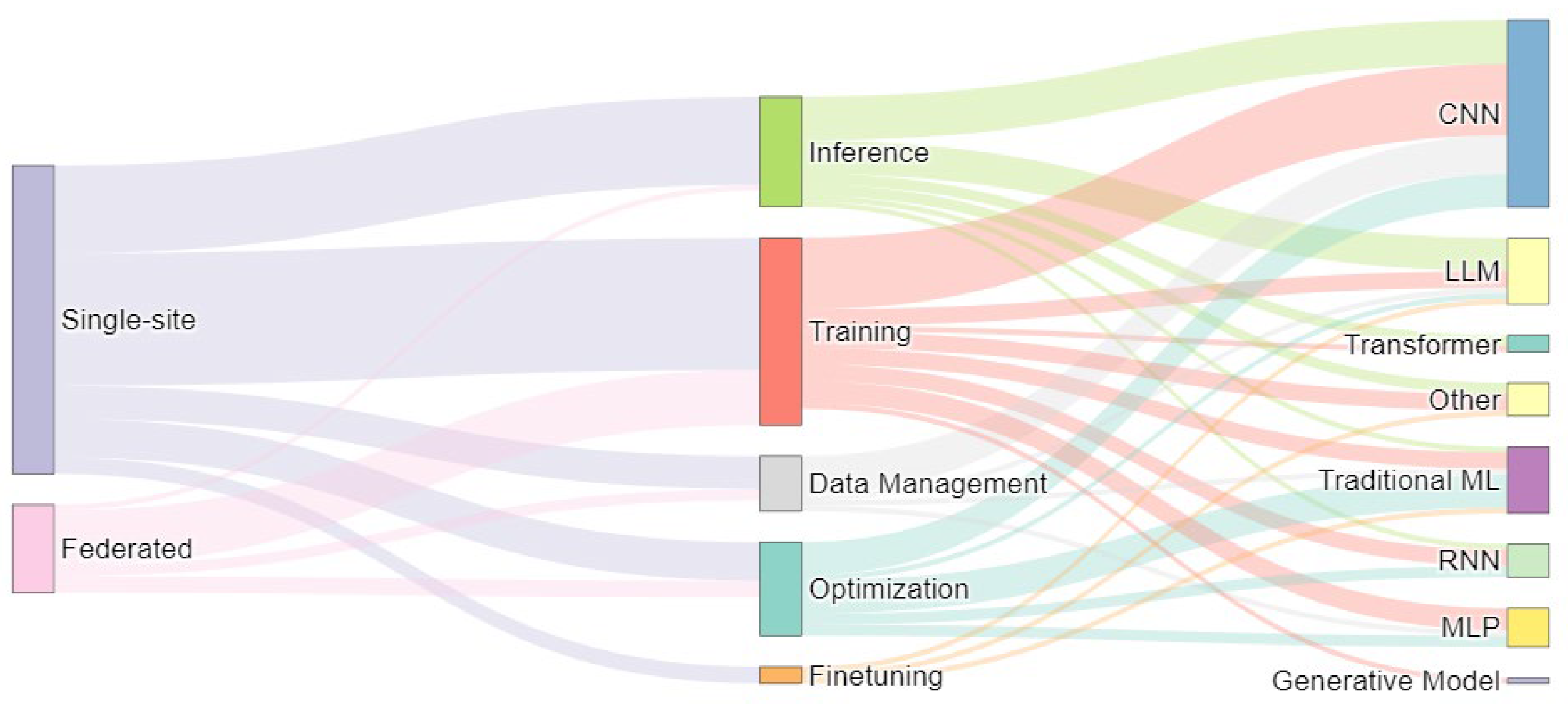

3. Empirical Findings

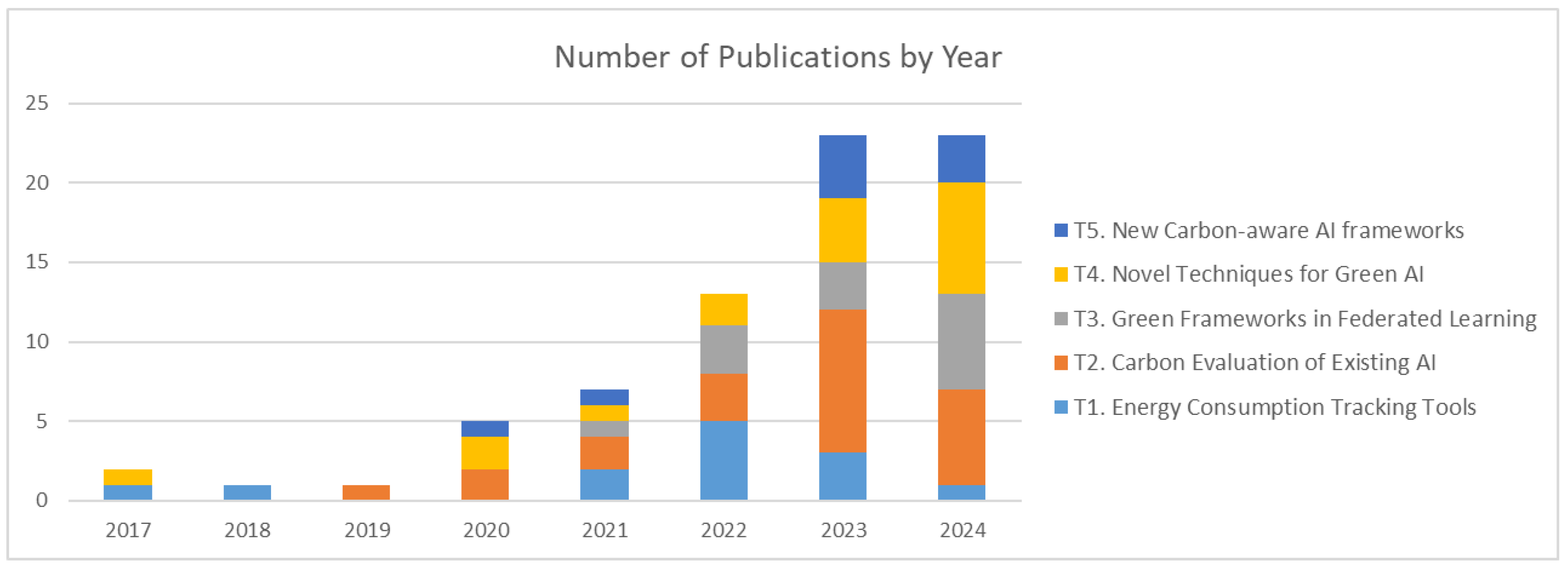

| Paper Count | Years | Frequently Occurring | | | | |
|---|---|---|---|---|---|---|
| | | Tools | AI Model | AI Lifecycle Stage | Computing Infrastructure | Performance Metric |
| Theme 1 – Energy Consumption Tracking Tools | | | | | | |
| 13 | 2017 – 2024 | Green Algorithms [34], CodeCarbon [40] | CNN, LLM | Training, Inference | Local, Cloud, HPC | Accuracy, RMSE |
| Theme 2 – Evaluates Environmental Impact of AI methods | | | | | | |
| 23 | 2019 – 2024 | CodeCarbon [40], NVIDIA-SMI (NVIDIA), perf tool (LINUX) | CNN, ML, LLM, MLP | Training, Inference, Optimization, Data-Management | Local | Accuracy, F1-score |
| Theme 3 – Green Frameworks in Federated Learning | | | | | | |
| 13 | 2021 – 2024 | Mathematical models | CNN | Training, Optimization, Data-Management | Distributed | Accuracy, SSIM |
| Theme 4 – Novel Techniques for Green AI | | | | | | |
| 17 | 2017 – 2024 | MLCO₂ Impact [42], Torch profiler (LINUX) | CNN, LLM | Training, Inference, Data-Management | Local | Accuracy, RMSE |
| Theme 5 – New Carbon-aware AI frameworks | | | | | | |
| 9 | 2020 – 2024 | CodeCarbon [40], Carbon Tracker [44], Intel Power Gadget (INTEL) | CNN, ML | Training, Inference, Optimization | Local, Cloud, HPC, Distributed | Accuracy, Latency, BLEU score |

T1. Energy Consumption Tracking Tools

T2. Carbon Evaluation of Existing AI

- Use simpler model architecture when possible [53].

- Employ CPUs for non-imaging inference tasks [54].

- Add power caps to GPUs (10–24% energy efficiency) [55].

- Pair lightweight models with local devices and heavier models with cloud GPUs [56].

- Perform hyperparameter tuning (50–160% energy efficiency) [57].

- Reduce data and choose appropriate hardware (80% computational efficiency) [58].

- Select optimization techniques tailored to the model, such as caching for data loading (400% faster training), choosing an adaptive optimizer such as NovoGrad, and using a lightweight architecture [59].

- Consider Racetrack Memory device instead of GPUs for energy-efficient inference [60].

- Consider ONNX Runtime for energy-efficient deployment of edge AI models [61].

- Use lower-precision computation, e.g., float16 instead of float64 (~24% energy efficiency) [62].

- Employ energy-aware hyperparameter optimization techniques, e.g., Bayesian optimization with HyperBand (BOHB), HyperBand, population-based training (PBT), and the asynchronous successive halving algorithm (ASHA) (~29% energy efficiency) [63].

- Reduce size of training data (50% energy efficiency) [64].

- Reduce network complexity by reducing the number of convolutional layers [65].
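Several of the hyperparameter optimization methods named in the list above (HyperBand, BOHB, ASHA) build on successive halving: many configurations are tried on a small budget, and only the best fraction is promoted to a larger one. The sketch below shows plain synchronous successive halving, not ASHA itself and not the procedure of [63]. The objective `toy_loss`, the candidate learning rates, and the budget units are hypothetical stand-ins, with total budget spent used as a rough proxy for energy.

```python
import math


def toy_loss(lr, budget):
    """Hypothetical stand-in for a validation loss after `budget` epochs."""
    return (math.log10(lr) + 2.0) ** 2 + 1.0 / budget


def successive_halving(configs, min_budget=1, eta=2):
    """Return (best_config, total_budget_spent).

    Each round evaluates all surviving configs at the current budget,
    keeps the best 1/eta of them, and multiplies the budget by eta.
    """
    survivors = list(configs)
    budget = min_budget
    spent = 0
    while len(survivors) > 1:
        ranked = sorted(survivors, key=lambda lr: toy_loss(lr, budget))
        spent += budget * len(survivors)
        keep = max(1, len(survivors) // eta)
        survivors = ranked[:keep]
        budget *= eta
    return survivors[0], spent


candidates = [10.0 ** e for e in (-5, -4, -3, -2, -1, 0, 1, 2)]
best, spent = successive_halving(candidates)
# Baseline: every candidate trained at the largest budget reached above (4).
full_cost = len(candidates) * 4
print(best, spent, full_cost)
```

In this toy setting the search spends fewer budget units than training every candidate at the largest budget, which is the intuition behind the energy savings such methods aim for; actual savings depend on the model and hardware.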

T3. Green Frameworks in Federated Learning

T4. Novel Techniques for Green AI

T5. New Carbon-Aware AI Frameworks

- CEMAI, a carbon-aware machine learning pipeline that measures and reduces emissions across the entire ML lifecycle using CodeCarbon and smart caching [92].

- Clover, a carbon-aware inference system that dynamically selects models, allocates GPU resources, and schedules inference based on grid carbon intensity (80% reduced carbon emissions) [93].

- FREEDOM, a privacy-aware, energy-efficient resource scheduling framework to optimize batch sizes and privacy budgets in federated learning environments using Deep Q-learning and GANs (10.9–11.5% energy efficiency) [94].
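The idea of scheduling work according to grid carbon intensity, as the frameworks above do in far more elaborate ways, can be illustrated with a small example. This is a minimal sketch under stated assumptions and not the algorithm of Clover or any other listed framework: it picks the start hour of a deferrable job so that the mean forecast carbon intensity over the job's duration is lowest. The function name `greenest_start` and the forecast values are hypothetical.

```python
def greenest_start(forecast, duration):
    """Return (start_index, mean_intensity) of the lowest-carbon window.

    forecast: hourly grid carbon intensity in gCO2e/kWh (hypothetical values).
    duration: job length in whole hours.
    """
    if duration < 1 or duration > len(forecast):
        raise ValueError("duration must be between 1 and len(forecast)")
    window = sum(forecast[:duration])
    best_start, best_sum = 0, window
    for start in range(1, len(forecast) - duration + 1):
        # Slide the window one hour: add the newest hour, drop the oldest.
        window += forecast[start + duration - 1] - forecast[start - 1]
        if window < best_sum:
            best_start, best_sum = start, window
    return best_start, best_sum / duration


hourly_ci = [420, 390, 310, 250, 240, 300, 380, 450]  # illustrative only
start, mean_ci = greenest_start(hourly_ci, duration=3)
print(start, mean_ci)
```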

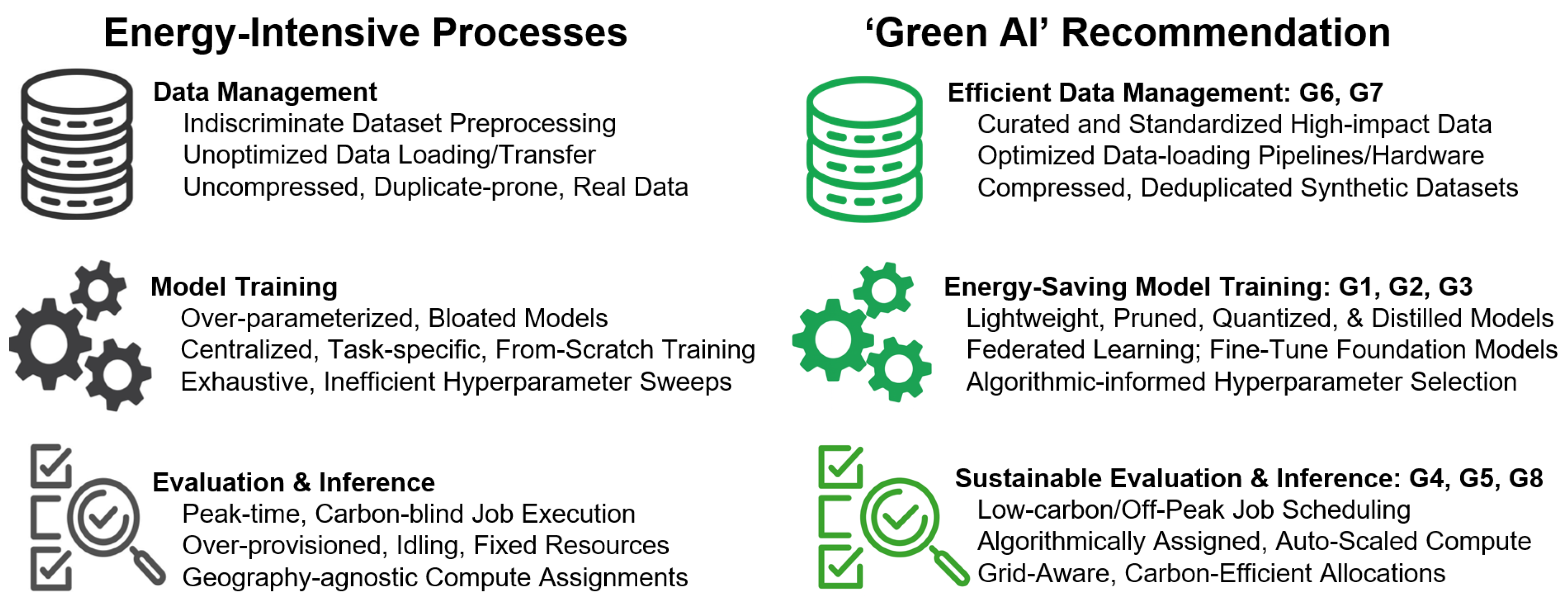

4. Guidelines for Green AI

G1. Adopt Emissions-Aware Scheduling and Resource Optimization

G2. Design Energy-Efficient Models and Datasets

G3. Leverage Pretrained Models and Efficient Learning Paradigms

G4. Track Carbon Emissions Throughout the AI Lifecycle

G5. Benchmark Environmental Metrics Alongside Performance

G6. Establish Benchmark Datasets for Energy Efficiency

G7. Encourage Policy and Institutional Reform

G8. Promote Explainable Sustainability in AI

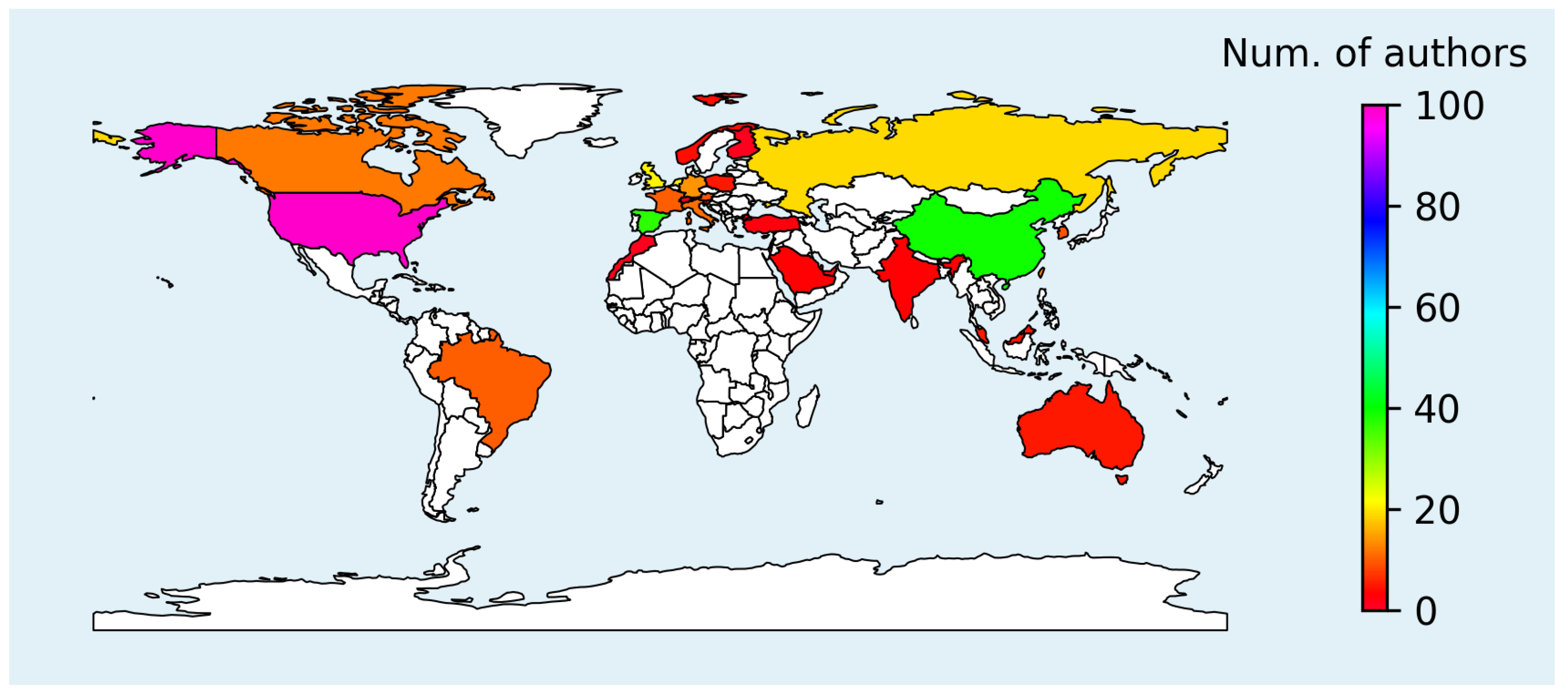

5. Discussion

Supplementary Materials

Acknowledgments

References

- Weizenbaum, Joseph. Computer power and human reason: from judgment to calculation. W. H. Freeman and Company. 1976. [Google Scholar]

- Sophia. Hanson Robotics. 2016. Available online: https://www.hansonrobotics.com/sophia/.

- Vaswani, A.; et al. Attention is all you need. Preprint 2017. [Google Scholar] [CrossRef]

- Devlin, J.; Chang, M.-W.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. Preprint 2019. [Google Scholar] [CrossRef]

- ChatGPT. OpenAI. 2023. Available online: https://chat.openai.com/chat.

- Claude 3 Opus, Anthropic. 2024. Available online: https://claude.ai/.

- Dhar, P. The carbon impact of artificial intelligence. Nature Machine Intelligence 2020, 2, 423–425. [Google Scholar] [CrossRef]

- Strubell, E.; Ganesh, A.; McCallum, A. Energy and Policy Considerations for Deep Learning in NLP. Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, 2019; pp. 3645–3650. [Google Scholar]

- Mei, L. Energy Considerations for Large Pre-trained Neural Networks; San José State University, 2025. [Google Scholar]

- EIA releases consumption and expenditures data from the Residential Energy Consumption Survey. U.S. Energy Information Administration (EIA). 2023. Available online: https://www.eia.gov/pressroom/releases/press530.php.

- Rahman, R.; Heindrich, L.; Owen, D.; Emberson, L. Over 30 AI models have been trained at the scale of GPT-4. Epoch AI. 2025. Available online: https://epoch.ai/data-insights/models-over-1e25-flop.

- Luccioni, A. S.; Viguier, S.; Ligozat, A.-L. Estimating the carbon footprint of BLOOM, a 176B parameter language model. The Journal of Machine Learning Research 2023, 24, 1–15. [Google Scholar]

- Touvron, H.; et al. Llama 2: Open Foundation and Fine-Tuned Chat Models. 2023. [Google Scholar] [CrossRef]

- Flight Carbon Footprint Calculator. Calculator. 2025. Available online: https://calculator.now/flight-carbon-footprint-calculator/.

- Schwartz, R.; Dodge, J.; Smith, N. A.; Etzioni, O. Green AI. Communications of the ACM 2020, 63, 54–63. [Google Scholar] [CrossRef]

- Debus, C.; Piraud, M.; Streit, A.; Theis, F.; Götz, M. Reporting electricity consumption is essential for sustainable AI. Nature Machine Intelligence 2023, 5, 1176–1178. [Google Scholar] [CrossRef]

- Verdecchia, R.; Sallou, J.; Cruz, L. A systematic review of Green AI. WIREs Data Mining and Knowledge Discovery 2023, 13. [Google Scholar] [CrossRef]

- Bolón-Canedo, V.; Morán-Fernández, L.; Cancela, B.; Alonso-Betanzos, A. A review of green artificial intelligence: Towards a more sustainable future. Neurocomputing 2024, 599, 128096. [Google Scholar] [CrossRef]

- Tabbakh, A.; et al. Towards sustainable AI: a comprehensive framework for Green AI. Discover Sustainability 2024, 5, 1–14. [Google Scholar] [CrossRef]

- EU Artificial Intelligence Act. European Union. 2024. Available online: https://artificialintelligenceact.eu/.

- Lekadir, K; Frangi, A F; Porras, A R; Glocker, B; Cintas, C; Langlotz, C P; et al. FUTURE-AI: international consensus guideline for trustworthy and deployable artificial intelligence in healthcare. BMJ 2025, 388. [Google Scholar] [CrossRef] [PubMed]

- Jobin, A.; Ienca, M.; Vayena, E. The global landscape of AI ethics guidelines. Nature Machine Intelligence 2019, 1, 389–399. [Google Scholar] [CrossRef]

- Gyevnár, B.; Kasirzadeh, A. AI safety for everyone. Nature Machine Intelligence 2025, 7, 531–542. [Google Scholar] [CrossRef]

- Hoffman, P. AI’s Power Demand: Calculating ChatGPT’s electricity consumption for handling over 365 billion user queries every year. BestBrokers. 2025. Available online: https://www.bestbrokers.com/forex-brokers/ais-power-demand-calculating-chatgpts-electricity-consumption-for-handling-over-78-billion-user-queries-every-year.

- International, Total Energy Production. U.S. Energy Information Administration (EIA). Available online: https://www.eia.gov/international/rankings/world.

- Kaack, L. H.; et al. Aligning artificial intelligence with climate change mitigation. Nature Climate Change 2022, 12, 518–527. [Google Scholar] [CrossRef]

- Kitchenham, B. Procedures for performing systematic reviews. Keele University 2004, 33, 1–26. [Google Scholar]

- Wohlin, C. Guidelines for snowballing in systematic literature studies and a replication in software engineering. Proceedings of the 18th International Conference on Evaluation and Assessment in Software Engineering (EASE '14) 2014, 38, 1–10. [Google Scholar]

- Minion, J.T.; et al. PICO Portal. The Journal of the Canadian Health Libraries Association 2021, 42, 181–183. [Google Scholar] [CrossRef]

- Bukar, U.A.; et al. A method for analyzing text using VOSviewer. MethodsX 2023, 11. [Google Scholar] [CrossRef]

- Wade, A. D. The Semantic Scholar Academic Graph (S2AG). In Companion Proceedings of the Web Conference 2022 739–739 (2022).

- Valenzuela-Escarcega, M. A.; Ha, V. A.; Etzioni, O. Identifying Meaningful Citations. AAAI Workshop: Scholarly Big Data (2015).

- Yang, Z.; Chen, M.; Saad, W.; Hong, C. S.; Shikh-Bahaei, M. Energy Efficient Federated Learning Over Wireless Communication Networks. IEEE Transactions on Wireless Communications 2021, 20, 1935–1949. [Google Scholar] [CrossRef]

- Lannelongue, L.; Grealey, J.; Inouye, M. Green Algorithms: Quantifying the Carbon Footprint of Computation. Advanced Science 2021, 8. [Google Scholar] [CrossRef]

- Strubell, E.; Ganesh, A.; McCallum, A. Energy and Policy Considerations for Modern Deep Learning Research. Proceedings of the AAAI Conference on Artificial Intelligence 2020, 34, 13693–13696. [Google Scholar] [CrossRef]

- Justus, D.; Brennan, J.; Bonner, S.; McGough, A. S. Predicting the Computational Cost of Deep Learning Models. In IEEE International Conference on Big Data 3873–3882 (2018).

- Yang, T.-J.; Chen, Y.-H.; Emer, J.; Sze, V. A method to estimate the energy consumption of deep neural networks. In 51st Asilomar Conference on Signals, Systems, and Computers 1916–1920 (2017).

- Dodge, J.; et al. Measuring the Carbon Intensity of AI in Cloud Instances. In ACM Conference on Fairness Accountability and Transparency 1877–1894 (2022).

- Taylor, P. Data center average annual PUE worldwide 2024. Statista. 2025. Available online: https://www.statista.com/statistics/1229367/data-center-average-annual-pue-worldwide/.

- Courty, B.; et al. mlCO₂/codecarbon: v2.4.1. Zenodo 2024. [Google Scholar] [CrossRef]

- Henderson, P.; et al. Towards the systematic reporting of the energy and carbon footprints of machine learning. arXiv 2020, arXiv:2002.05651. [Google Scholar]

- Bannour, N.; Ghannay, S.; Névéol, A.; Ligozat, A.-L. Evaluating the carbon footprint of NLP methods: a survey and analysis of existing tools. In Proceedings of the Second Workshop on Simple and Efficient Natural Language Processing 11–21 (2021).

- Lacoste, A.; Luccioni, A.; Schmidt, V.; Dandres, T. Quantifying the carbon emissions of machine learning. arXiv 2019, arXiv:1910.09700. [Google Scholar] [CrossRef]

- Shaikh, O.; et al. EnergyVis: Interactively Tracking and Exploring Energy Consumption for ML Models. In Extended Abstracts of the 2021 CHI Conference on Human Factors in Computing Systems, ACM 1–7 (2021).

- Tiutiulnikov, M.; et al. eco4cast: Bridging Predictive Scheduling and Cloud Computing for Reduction of Carbon Emissions for ML Models Training. Doklady Mathematics 2023, 108, S443–S455. [Google Scholar] [CrossRef]

- Yoo, T.; Lee, H.; Oh, S.; Kwon, H.; Jung, H. Visualizing the Carbon Intensity of Machine Learning Inference for Image Analysis on TensorFlow Hub. In Computer Supported Cooperative Work and Social Computing, ACM 206–211 (2023).

- Kannan, J.; Barnett, S.; Simmons, A.; Selvi, T.; Cruz, L. Green Runner: A Tool for Efficient Deep Learning Component Selection. In Proceedings of the IEEE/ACM 3rd International Conference on AI Engineering - Software Engineering for AI 112–117 (2024).

- Li, C.; Tsourdos, A.; Guo, W. A Transistor Operations Model for Deep Learning Energy Consumption Scaling Law. IEEE Transactions on Artificial Intelligence 2024, 5, 192–204. [Google Scholar] [CrossRef]

- Budennyy, S. A.; et al. eCO₂AI: Carbon Emissions Tracking of Machine Learning Models as the First Step Towards Sustainable AI. Doklady Mathematics 2022, 106, S118–S128. [Google Scholar] [CrossRef]

- Ma, H.; Ding, A. Method for evaluation on energy consumption of cloud computing data center based on deep reinforcement learning. Electric Power Systems Research 2022, 208, 107899. [Google Scholar] [CrossRef]

- Carastan-Santos, D.; Pham, T. H. T. Understanding the Energy Consumption of HPC Scale Artificial Intelligence. In CARLA 2022- Latin America High Performance Computing Conference 1660 131–144 (2022).

- Jääskeläinen, P. Explainable Sustainability for AI in the Arts. In The 1st International Workshop on Explainable AI for the Arts, ACM Creativity and Cognition Conference (2023).

- Castanyer, R. C.; Martínez-Fernández, S.; Franch, X. Which design decisions in AI-enabled mobile applications contribute to greener AI? Empirical Software Engineering 2024, 29, 2. [Google Scholar] [CrossRef]

- Caspart, R.; et al. Precise Energy Consumption Measurements of Heterogeneous Artificial Intelligence Workloads. In International Conference on High Performance Computing 108–121 (2022).

- Zhao, D.; et al. Sustainable Supercomputing for AI. In Proceedings of the 2023 ACM Symposium on Cloud Computing 588–596 (2023).

- del Rey, S.; Martínez-Fernández, S.; Cruz, L.; Franch, X. Do DL models and training environments have an impact on energy consumption? 49th Euromicro Conference on Software Engineering and Advanced Applications (SEAA), IEEE, 2023; pp. 150–158. [Google Scholar]

- Ferro, M.; Silva, G. D.; de Paula, F. B.; Vieira, V.; Schulze, B. Towards a sustainable artificial intelligence: A case study of energy efficiency in decision tree algorithms. Concurrency and Computation: Practice and Experience 2023, 35. [Google Scholar] [CrossRef]

- Gómez-Carmona, O.; Casado-Mansilla, D.; Kraemer, F. A.; López-de-Ipiña, D.; García-Zubia, J. Exploring the computational cost of machine learning at the edge for human-centric Internet of Things. Future Generation Computer Systems 2020, 112, 670–683. [Google Scholar] [CrossRef]

- Mazurek, S.; Pytlarz, M.; Malec, S.; Crimi, A. Investigation of Energy-Efficient AI Model Architectures and Compression Techniques for “Green” Fetal Brain Segmentation. In Computational Science – ICCS 2024. Lecture Notes in Computer Science 14835 61–74 (2024).

- Ollivier, S.; et al. Sustainable AI Processing at the Edge. IEEE Micro 2023, 43, 19–28. [Google Scholar] [CrossRef]

- Tomlinson, B.; Black, R. W.; Patterson, D. J.; Torrance, A. W. The carbon emissions of writing and illustrating are lower for AI than for humans. Scientific Reports 2024, 14, 3732. [Google Scholar] [CrossRef] [PubMed]

- Yokoyama, A. M.; Ferro, M.; de Paula, F. B.; Vieira, V. G.; Schulze, B. Investigating hardware and software aspects in the energy consumption of machine learning: A green AI-centric analysis. Concurrency and Computation: Practice and Experience 2023, 35. [Google Scholar] [CrossRef]

- Castellanos-Nieves, D.; García-Forte, L. Strategies of Automated Machine Learning for Energy Sustainability in Green Artificial Intelligence. Applied Sciences 2024, 14, 6196. [Google Scholar] [CrossRef]

- Perera-Lago, J.; et al. An in-depth analysis of data reduction methods for sustainable deep learning. Open Research Europe 2024, 4, 101. [Google Scholar] [CrossRef]

- Yarally, T.; Cruz, L.; Feitosa, D.; Sallou, J.; van Deursen, A. Uncovering Energy-Efficient Practices in Deep Learning Training: Preliminary Steps Towards Green AI. IEEE/ACM 2nd International Conference on AI Engineering – Software Engineering for AI (CAIN), 2023; pp. 25–36. [Google Scholar]

- Kim, M.; Saad, W.; Mozaffari, M.; Debbah, M. Green, Quantized Federated Learning Over Wireless Networks: An Energy-Efficient Design. IEEE Transactions on Wireless Communications 2024, 23, 1386–1402. [Google Scholar] [CrossRef]

- Hsu, Y.-L.; Liu, C.-F.; Wei, H.-Y.; Bennis, M. Optimized Data Sampling and Energy Consumption in IIoT: A Federated Learning Approach. IEEE Transactions on Communications 2022, 70, 7915–7931. [Google Scholar] [CrossRef]

- Li, P.; Huang, X.; Pan, M.; Yu, R. FedGreen: Federated Learning with Fine-Grained Gradient Compression for Green Mobile Edge Computing. IEEE Global Communications Conference (GLOBECOM), 2021; pp. 1–6. [Google Scholar]

- Wang, J.; Mao, Y.; Wang, T.; Shi, Y. Green Federated Learning Over Cloud-RAN With Limited Fronthaul Capacity and Quantized Neural Networks. IEEE Transactions on Wireless Communications 2024, 23, 4300–4314. [Google Scholar] [CrossRef]

- Driss, M. B.; Sabir, E.; Elbiaze, H.; Diallo, A.; Sadik, M. A Green Multi-Attribute Client Selection for Over-The-Air Federated Learning: A Grey-Wolf-Optimizer Approach. ACM Transactions on Modeling and Performance Evaluation of Computing Systems 2025, 10, 1–24. [Google Scholar] [CrossRef]

- Albaseer, A.; Seid, A. M.; Abdallah, M.; Al-Fuqaha, A.; Erbad, A. Novel Approach for Curbing Unfair Energy Consumption and Biased Model in Federated Edge Learning. IEEE Transactions on Green Communications and Networking 2024, 8, 865–877. [Google Scholar] [CrossRef]

- Kuswiradyo, P.; Kar, B.; Shen, S.-H. Optimizing the energy consumption in three-tier cloud–edge–fog federated systems with omnidirectional offloading. Computer Networks 2024, 250, 110578. [Google Scholar] [CrossRef]

- Fontenla-Romero, O.; Guijarro-Berdiñas, B.; Hernandez-Pereira, E.; Perez-Sanchez, B. An effective and efficient green federated learning method for one-layer neural networks. In Proceedings of the 39th ACM/SIGAPP Symposium on Applied Computing, 2024; pp. 1050–1052. [Google Scholar]

- Qi, Y.; Hossain, M. S. Harnessing federated generative learning for green and sustainable Internet of Things. Journal of Network and Computer Applications 2024, 222, 103812. [Google Scholar] [CrossRef]

- Gille, C.; Guyard, F.; Antonini, M.; Barlaud, M. Learning Sparse auto-Encoders for Green AI image coding. 2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2023; pp. 1–5. [Google Scholar]

- Lazzaro, D.; et al. Minimizing Energy Consumption of Deep Learning Models by Energy-Aware Training. Image Analysis and Processing – ICIAP 2023. Lecture Notes in Computer Science 2023, 14234, 515–526. [Google Scholar]

- Acmali, S. S.; Ortakci, Y.; Seker, H. Green AI-Driven Concept for the Development of Cost-Effective and Energy-Efficient Deep Learning Method: Application in the Detection of Eimeria Parasites as a Case Study. Advanced Intelligent Systems 2024, 6. [Google Scholar] [CrossRef]

- Candelieri, A.; Perego, R.; Archetti, F. Green machine learning via augmented Gaussian processes and multi-information source optimization. Soft Computing 2021, 25, 12591–12603. [Google Scholar] [CrossRef]

- Huang, K.; Yin, H.; Huang, H.; Gao, W. Towards Green AI in Fine-tuning Large Language Models via Adaptive Backpropagation. The 12th International Conference on Learning Representations, 2024. [Google Scholar]

- Balderas, L.; Lastra, M.; Benítez, J. M. An Efficient Green AI Approach to Time Series Forecasting Based on Deep Learning. Big Data and Cognitive Computing 2024, 8, 120. [Google Scholar] [CrossRef]

- Nijkamp, N.; Sallou, J.; van der Heijden, N.; Cruz, L. Green AI in Action: Strategic Model Selection for Ensembles in Production. In Proceedings of the 1st ACM International Conference on AI-Powered Software, 2024; pp. 50–58. [Google Scholar]

- Spring, R.; Shrivastava, A. Scalable and Sustainable Deep Learning via Randomized Hashing. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining Part F129685, 2017; pp. 445–454. [Google Scholar]

- Yin, Z.; Pu, J.; Wan, R.; Xue, X. Embrace sustainable AI: Dynamic data subset selection for image classification. Pattern Recognition 2024, 151, 110392. [Google Scholar] [CrossRef]

- Shi, J.; et al. Greening Large Language Models of Code. In Proceedings of the 46th International Conference on Software Engineering: Software Engineering in Society, ACM, 2024; pp. 142–153. [Google Scholar]

- Pirnat, A.; et al. Towards Sustainable Deep Learning for Wireless Fingerprinting Localization. IEEE International Conference on Communications (ICC), 2022; pp. 3208–3213. [Google Scholar]

- Liu, Q.; Zhu, J.; Dai, Q.; Wu, X.-M. Benchmarking News Recommendation in the Era of Green AI. In Companion Proceedings of the ACM Web Conference, 2024; pp. 971–974. [Google Scholar]

- Yang, X.; et al. Sparse Optimization for Green Edge AI Inference. Journal of Communications and Information Networks 2020, 5, 1–15. [Google Scholar] [CrossRef]

- Reguero, Á. D.; Martínez-Fernández, S.; Verdecchia, R. Energy-efficient neural network training through runtime layer freezing, model quantization, and early stopping. Computer Standards & Interfaces 2025, 92, 103906. [Google Scholar]

- Wei, X.; et al. Towards Greener Yet Powerful Code Generation via Quantization: An Empirical Study. In Proceedings of the 31st ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering, 2023; pp. 224–236. [Google Scholar]

- Yang, Y.; Kang, H.; Mirzasoleiman, B. Towards Sustainable Learning: Coresets for Data-efficient Deep Learning. In Proceedings of the 40th International Conference on Machine Learning (PMLR 202), 2023; pp. 39314–39330. [Google Scholar]

- Bird, T.; Kingma, F. H.; Barber, D. Reducing the Computational Cost of Deep Generative Models with Binary Neural Networks. International Conference on Learning Representations (ICLR), 2021. [Google Scholar]

- Husom, E. J.; Sen, S.; Goknil, A. Engineering Carbon Emission-aware Machine Learning Pipelines. In Proceedings of the IEEE/ACM 3rd International Conference on AI Engineering - Software Engineering for AI, 2024; pp. 118–128. [Google Scholar]

- Li, B.; Samsi, S.; Gadepally, V.; Tiwari, D. Clover: Toward Sustainable AI with Carbon-Aware Machine Learning Inference Service. In Proceedings of the International Conference for High Performance Computing, Networking, Storage and Analysis, ACM, 2023; pp. 1–15. [Google Scholar]

- Zhang, S.; et al. Differential Privacy-Aware Generative Adversarial Network-Assisted Resource Scheduling for Green Multi-Mode Power IoT. IEEE Transactions on Green Communications and Networking 2024, 8, 956–967. [Google Scholar] [CrossRef]

- Pagliari, R.; et al. A Comprehensive Sustainable Framework for Machine Learning and Artificial Intelligence. 27th European Conference on Artificial Intelligence, 2024; 392, pp. 834–841. [Google Scholar]

- McIntosh, A.; Hassan, S.; Hindle, A. What can Android mobile app developers do about the energy consumption of machine learning? Empirical Software Engineering 2019, 24, 562–601. [Google Scholar] [CrossRef]

- Hu, Y.; Huang, H.; Yu, N. Resource Optimization and Device Scheduling for Flexible Federated Edge Learning with Tradeoff Between Energy Consumption and Model Performance. Mobile Networks and Applications 2022, 27, 2118–2137. [Google Scholar] [CrossRef]

- Salh, A.; et al. Energy-Efficient Federated Learning With Resource Allocation for Green IoT Edge Intelligence in B5G. IEEE Access 2023, 11, 16353–16367. [Google Scholar] [CrossRef]

- Jean-Quartier, C.; et al. The Cost of Understanding—XAI Algorithms towards Sustainable ML in the View of Computational Cost. Computation 2023, 11, 92. [Google Scholar] [CrossRef]

- Shi, M.; et al. Thinking Geographically about AI Sustainability. AGILE: GIScience Series 2023, 4, 1–7. [Google Scholar] [CrossRef]

- Henderson, P.; et al. Towards the Systematic Reporting of the Energy and Carbon Footprints of Machine Learning. The Journal of Machine Learning Research 2020, 21, 10039–10081. [Google Scholar]

- Patterson, D.; et al. The Carbon Footprint of Machine Learning Training Will Plateau, Then Shrink. Computer 2022, 55, 18–28. [Google Scholar] [CrossRef]

- Artificial Intelligence’s Energy Paradox: Balancing Challenges and Opportunities. World Economic Forum – AI Governance Alliance. 2025. Available online: https://reports.weforum.org/docs/WEF_Artificial_Intelligences_Energy_Paradox_2025.pdf.

- The Sustainable Development Goals Report 2025. United Nations. 2025. Available online: https://unstats.un.org/sdgs/report/2025/The-Sustainable-Development-Goals-Report-2025.pdf.

| Paper | Citations (Overall / Influential) |

Brief Summary |

|---|---|---|

| Yang et al. 2021 [33] | 696 / 76 | Energy-efficient transmission and computation resource allocation for federated learning via an iterative optimization algorithm. |

| Lannelongue et al. 2021 [34] | 331 / 30 | Generalizable framework and tool for estimating the carbon footprint of any computation task and practical solutions for greener computation. |

| Strubell et al. 2020 [35] | 521 / 20 | Energy cost analysis of popular natural language processing models and energy saving recommendations. |

| Justus et al. 2018 [36] | 231 / 16 | Execution time prediction for different components of a neural network to facilitate optimal hardware selection and efficient model design. |

| Yang et al. 2017 [37] | 163 / 13 | Energy estimation technique for deep neural networks to guide energy-efficient design strategies before training begins. |

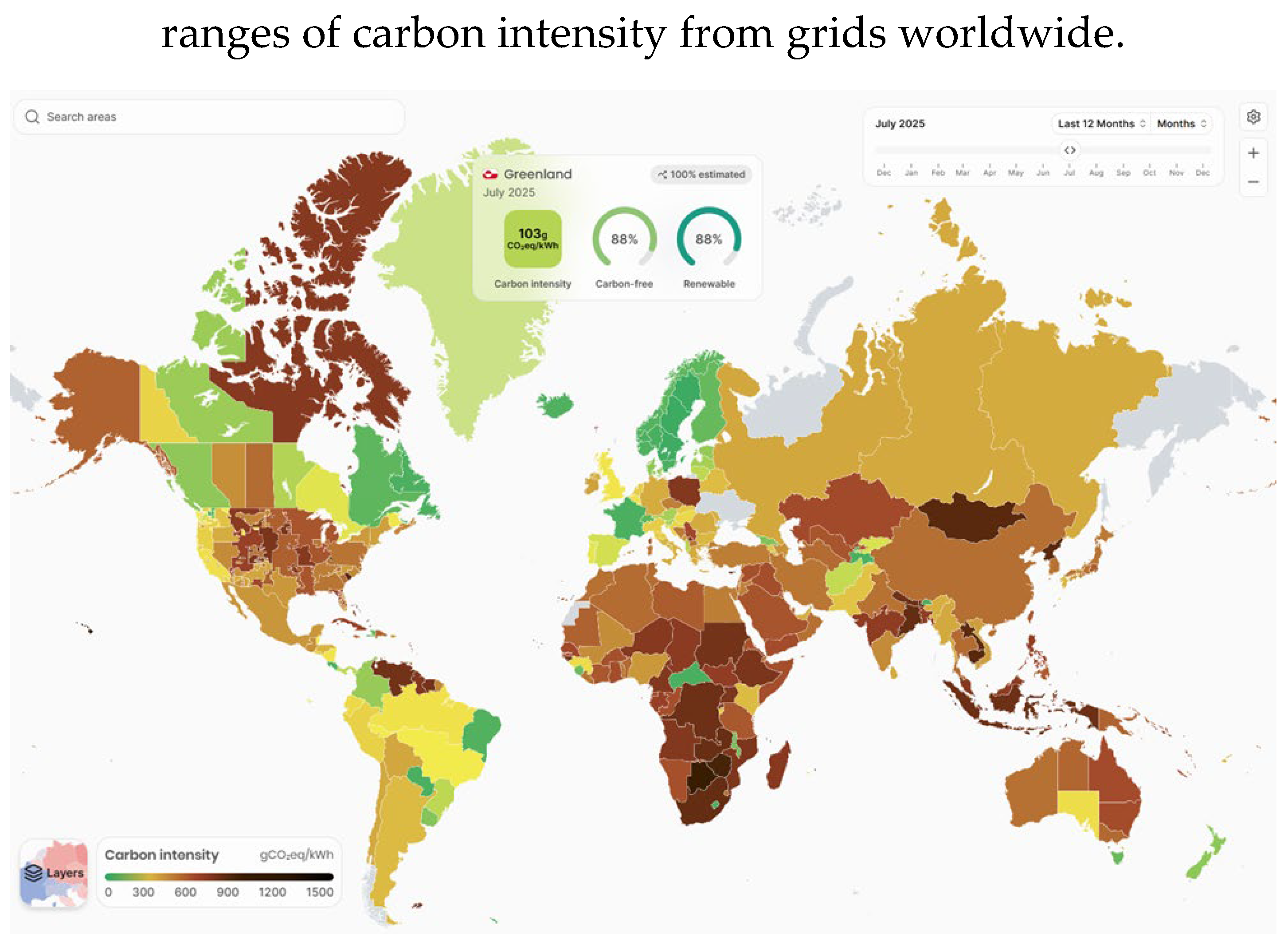

| Dodge et al. 2022 [38] | 200 / 11 | Framework for measuring the carbon impact of a system, especially considering the geographic location and time of the day. |

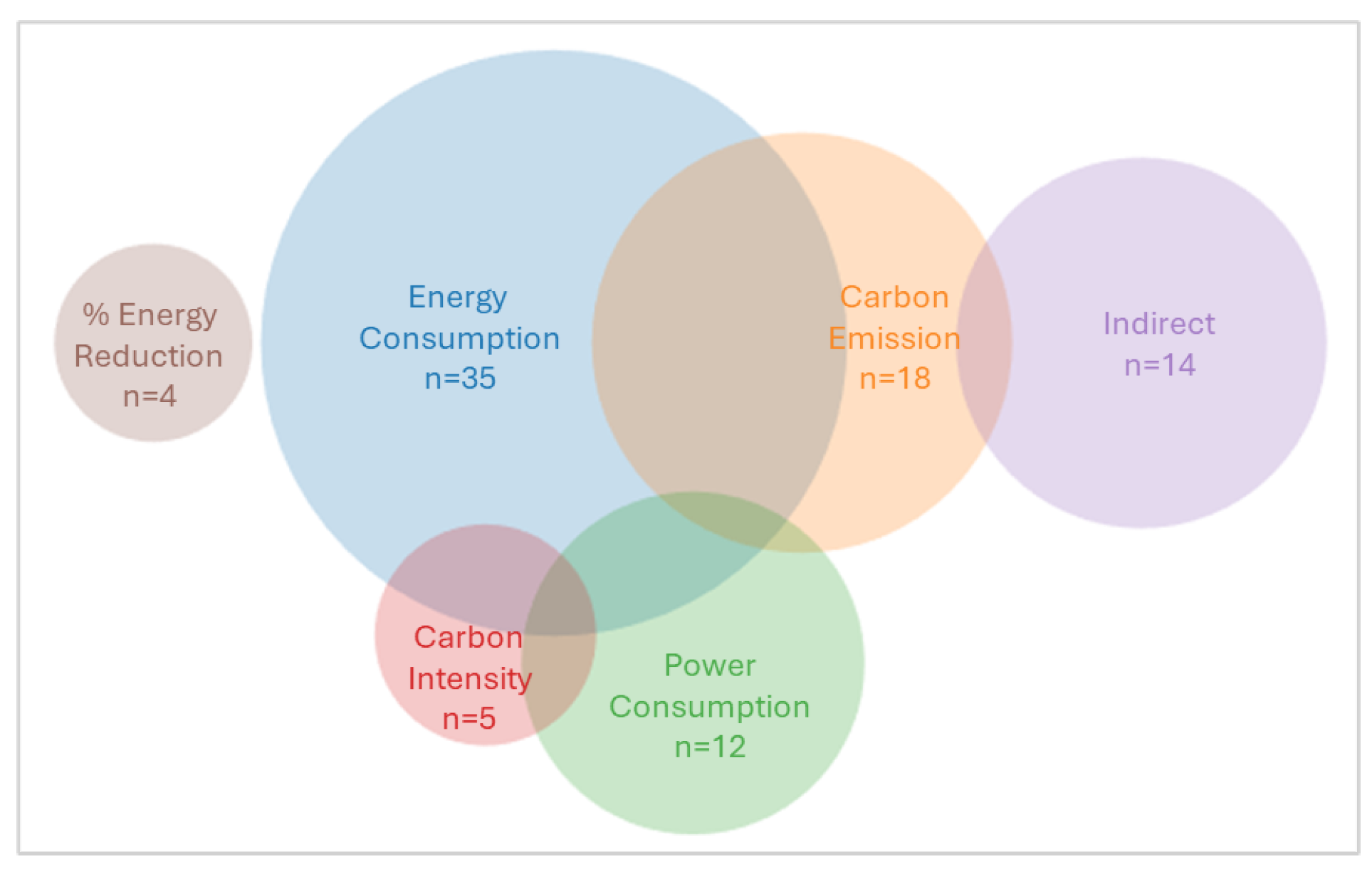

- Energy Consumption (EC): Total amount of energy utilized by a system or process over time. Unit: Joules (J) or kilowatt-hours (kWh).

- Power Consumption (PC): The rate at which energy is consumed at a given instant. Unit: Watt (W).

- Carbon Emissions (CE): The amount of CO₂ (or equivalent of other greenhouse gas) released into air. Unit: grams of CO₂ equivalent (gCO₂e).

- Carbon Footprint (CF): The overall carbon emissions produced by a product or company. It is often used interchangeably with CE. Unit: gCO₂e.

- Carbon Intensity (CI): The amount of CO₂ or equivalent gases emitted per unit of activity, like electricity. Example unit: gCO₂e/kWh.
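The definitions above are linked by simple arithmetic: energy is power integrated over time, and emissions are energy multiplied by carbon intensity. The sketch below works one example under simplifying assumptions that are not taken from the source: power draw is treated as constant, data center overheads are ignored, and the 300 W, 10 h, and 400 gCO₂e/kWh figures are hypothetical.

```python
JOULES_PER_KWH = 3.6e6  # 1 kWh = 3.6 million joules


def energy_kwh(power_watts, hours):
    """EC in kWh for a device drawing a constant power (PC) for `hours`."""
    return power_watts * hours / 1000.0


def emissions_gco2e(energy_consumed_kwh, carbon_intensity):
    """CE in gCO2e: energy (kWh) times grid carbon intensity (gCO2e/kWh)."""
    return energy_consumed_kwh * carbon_intensity


ec = energy_kwh(power_watts=300, hours=10)      # hypothetical GPU job
ce = emissions_gco2e(ec, carbon_intensity=400)  # hypothetical grid mix
print(f"EC = {ec} kWh = {ec * JOULES_PER_KWH:.3g} J, CE = {ce} gCO2e")
```

With these illustrative numbers the job uses 3 kWh and emits 1200 gCO₂e; the same job on a grid with half the carbon intensity would emit half as much, which is why location and time of day matter for carbon accounting.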

| Tool | Feature | Tool Description |
|---|---|---|
| Pre-training estimation tools | | |
| Execution-Time Predictor [36] | DL-based tool | Prediction of execution time for neural network training and inference. |
| GreenRunner [47] | DL-based tool, Open source | Preselection of energy-efficient ML configurations in application-specific contexts. |
| TO model [48] | | Estimation of carbon footprint based on model configurations via nonlinear computational modeling. |
| Green Algorithms Calculator [34] | | Estimation of carbon emissions based on data center efficiency and geographic location. |
| eco4Cast [45] | Open source | Prediction of daily carbon intensity in geographical regions and energy-efficient scheduling of ML tasks. |
| Runtime monitoring tools | | |
| eCO₂AI [49] | Open source | Estimation of carbon emissions during model training. |
| Carbon Framework [38] | | Measurement of carbon footprint, considering location and time of the day. |
| Compressed Framework [50] | DL-based tool | Evaluation of energy consumption, especially in cloud data centers. |
| Benchmark-Tracker [44] | | Benchmarking and evaluation of AI system's speed, performance, energy use, and carbon footprint. |
| EnergyVis [46] | Open source | Tracking, visualization, and comparison of carbon emissions based on hardware or geographical location. |
| MIEV [37] | | Tracking, visualization, and comparison of energy-performance tradeoffs in ML models. |
| Online Resource Estimator [37] | Open source | Estimation of energy consumption of deep neural networks considering both computation energy and data movement energy. |
| Governance | | |
| Explainable Sustainability [52] | | Embedding sustainability feedback within AI systems. |

| Paper | Maximum reported improvement | Method in Federated Learning |
|---|---|---|
| Using direct measures (energy consumption, power consumption) | | |
| Kim et al. 2022 [66] | 70% | Quantized neural networks to optimize convergence rate and energy cost. |
| Hsu et al. 2022 [67] | 69% | Two-stage iterative optimization for optimal data sampling and communication energy resources. |
| Li et al. 2021 [68] | 57% | Fine-grained gradient compression technique that adapts sparsity/precision per network layer. |
| Wang et al. 2023 [69] | 50% | Quantized models combined with optimized joint fronthaul-rate and power allocation. |
| Driss et al. 2024 [70] | 43% | Grey-Wolf-Optimizer–based multi-attribute client selection. |
| Albaseer et al. 2024 [71] | 28% | Fairness-aware client scheduling that weights devices by historical participation. |
| Kuswiradyo et al. 2024 [72] | 27% | Energy-aware omnidirectional workload offloading across fog, edge, and cloud computing. |
| Using indirect measures (time, communication overhead) | | |
| Fontenla-Romero et al. 2023 [73] | 87% | One-shot federation of a one-layer neural network for efficient single-round communication. |
| Qi et al. 2024 [74] | 80% | One-shot federated learning with generative learning to achieve outcomes in a single communication round. |

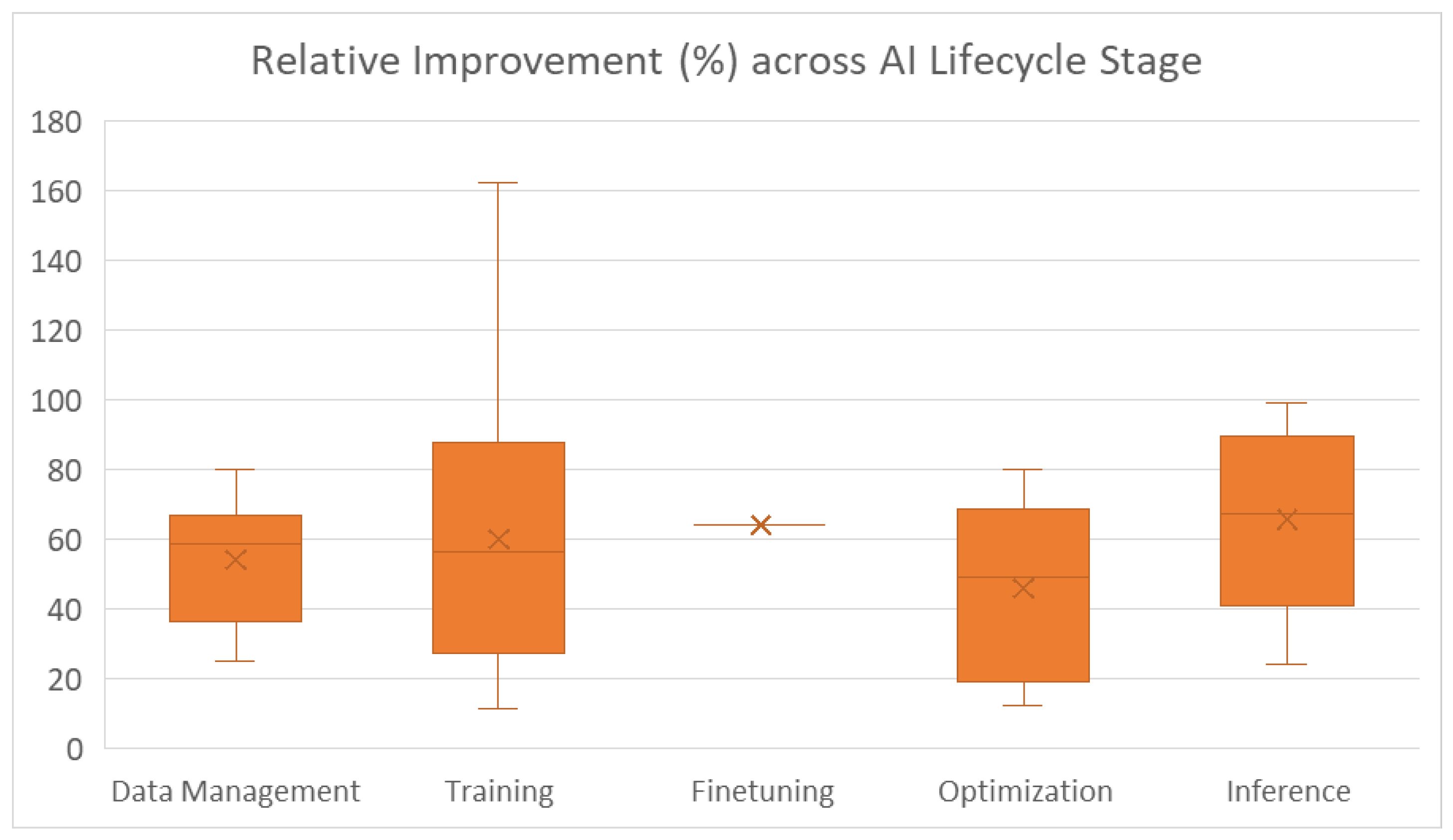

| Paper | Maximum reported improvement | Method |
|---|---|---|
| Using direct measures (energy consumption, carbon emissions) | | |
| Shi et al. 2024 [84] | 99% | Compression and energy-focused usage of LLMs. |
| Pirnat et al. 2022 [85] | 94% | Compact feature and model design for low-power applications. |
| Liu et al. 2024 [86] | 87.6% | Green-aware benchmarking framework using only-encode-once (OLEO) training. |
| Yang et al. 2020 [87] | 67% | Optimized task selection and structured sparsity for efficient edge inference. |
| Yin et al. 2024 [83] | 62% | Dynamic coreset selection to train on a compact subset of data. |
| Nijkamp et al. 2024 [81] | 57% | Optimal ensemble selection to minimize computing costs. |
| Reguero et al. 2024 [88] | 56% | Reduced training compute using progressive layer freezing, model quantization, and early stopping. |
| Wei et al. 2023 [89] | 55% | Post-training quantization method for LLMs. |
| Lazzaro et al. 2023 [76] | 31% | Novel training loss function guided by measured energy to optimize consumption. |
| Gille et al. 2022 [75] | 25% | Structured sparsity-regularized convolutional autoencoders for low-energy image compression. |
| Using indirect measures (compute cost, FLOPs) | | |
| Huang et al. 2023 [79] | 64% | Adaptive backpropagation in LLM finetuning to reduce computing costs. |
| Spring et al. 2017 [82] | 95% | Randomized hashing technique to reduce memory and computing during training. |
| Using indirect measures (time, model size) | | |
| Balderas et al. 2024 [80] | 20% | Lightweight deep learning architecture with efficient training for time-series prediction. |
| Acmali et al. 2024 [77] | 50% | Model pruning technique for reducing the number of parameters. |
| Yang et al. 2023 [90] | 60% | Dynamic data subset selection during training to reduce redundant samples. |
| Candelieri et al. 2021 [78] | 66% | Augmented Gaussian processes for efficient hyperparameter optimization. |
| Bird et al. 2020 [91] | 94% | Binary-activated neural networks for generative models to limit computation. |
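Quantization appears in several rows of the table above. As a rough illustration of the underlying idea only, the sketch below applies generic symmetric per-tensor 8-bit quantization to a short list of weights and measures the round-trip error. It is not the method of any cited paper, and the weight values and function names are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization to signed 8-bit integer codes."""
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude to 127; fall back to 1.0 for all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from integer codes."""
    return [c * scale for c in codes]


weights = [0.42, -1.27, 0.05, 0.9, -0.33]  # illustrative values only
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, scale, max_error)
```

Each weight is stored in one byte instead of four or eight, and the reconstruction error stays within half a quantization step; whether that loss is acceptable depends on the model and task.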

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).