Submitted: 05 February 2026

Posted: 06 February 2026

Abstract

Keywords:

1. Introduction

2. Related Works

3. Research Gaps and Contributions

3.1. Research Gaps

3.2. Research Contributions

-

Development of a Novel Hybrid BCI System

- -

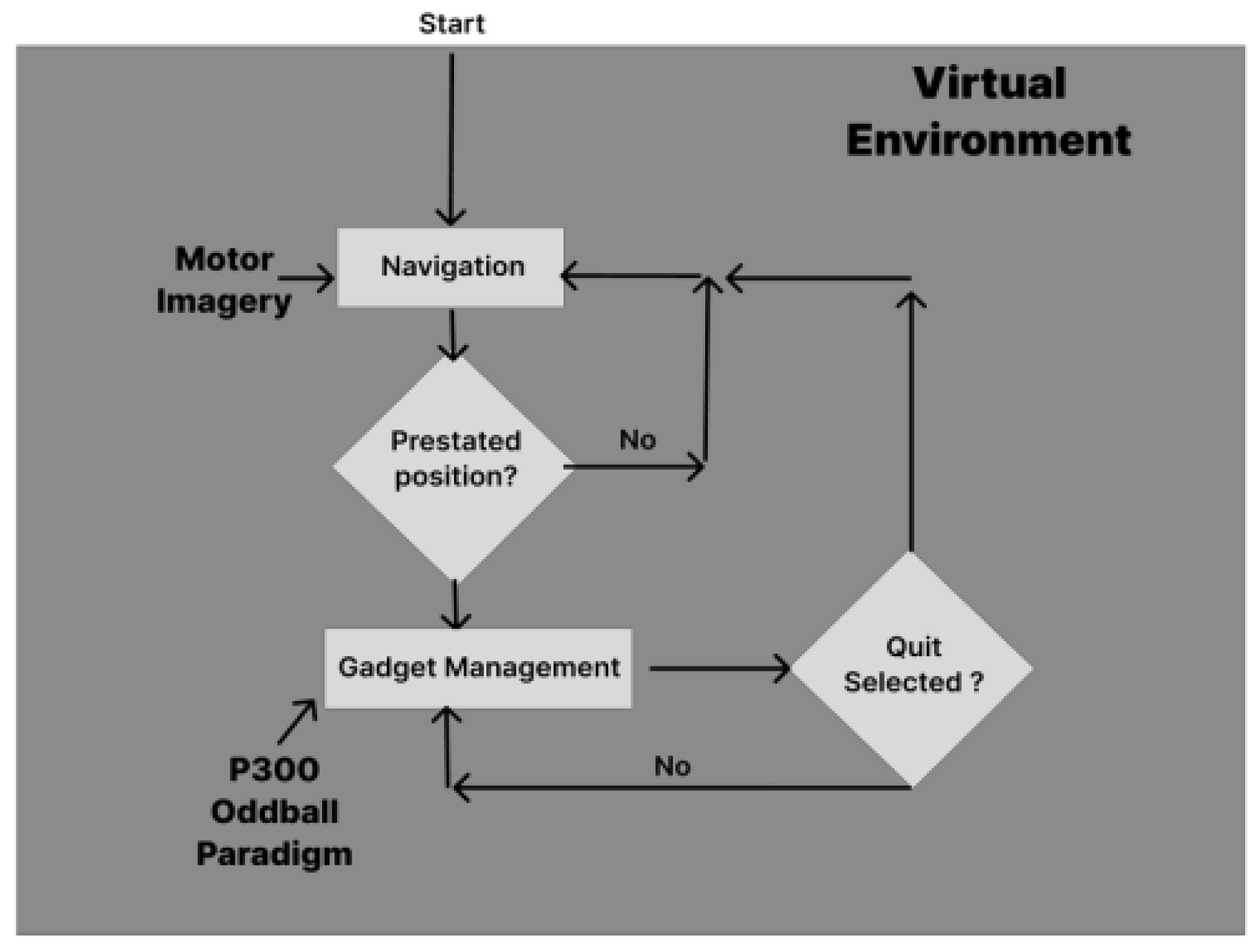

- Merges Motor Imagery (MI)-based continuous navigation and P300-based discrete control.

- -

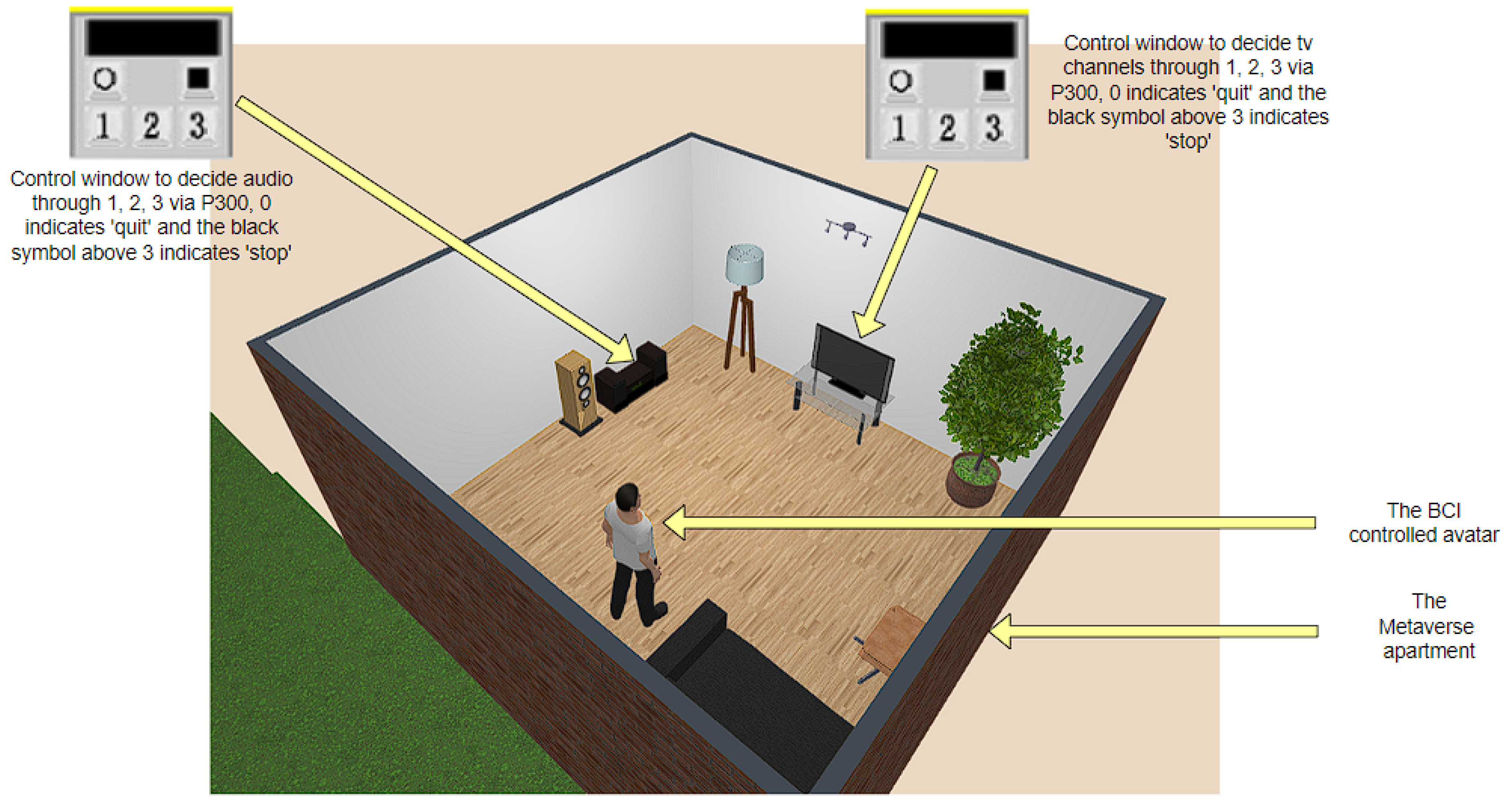

- Enables both navigate-through and navigate-through-and-interact-with-devices interactions within a Metaverse environment using EEG signals exclusively.

-

Context-Aware Adaptive Switching Mechanism

- -

- Switches dynamically between MI and P300 control modes depending on interaction context reducing cognitive complexities.

- -

- Improves usability by reducing redundant mode switching.

-

Signal Processing Advancements

- -

- Deploys Transformer + SVM hybrid architecture for P300 classification with high accuracy.

- -

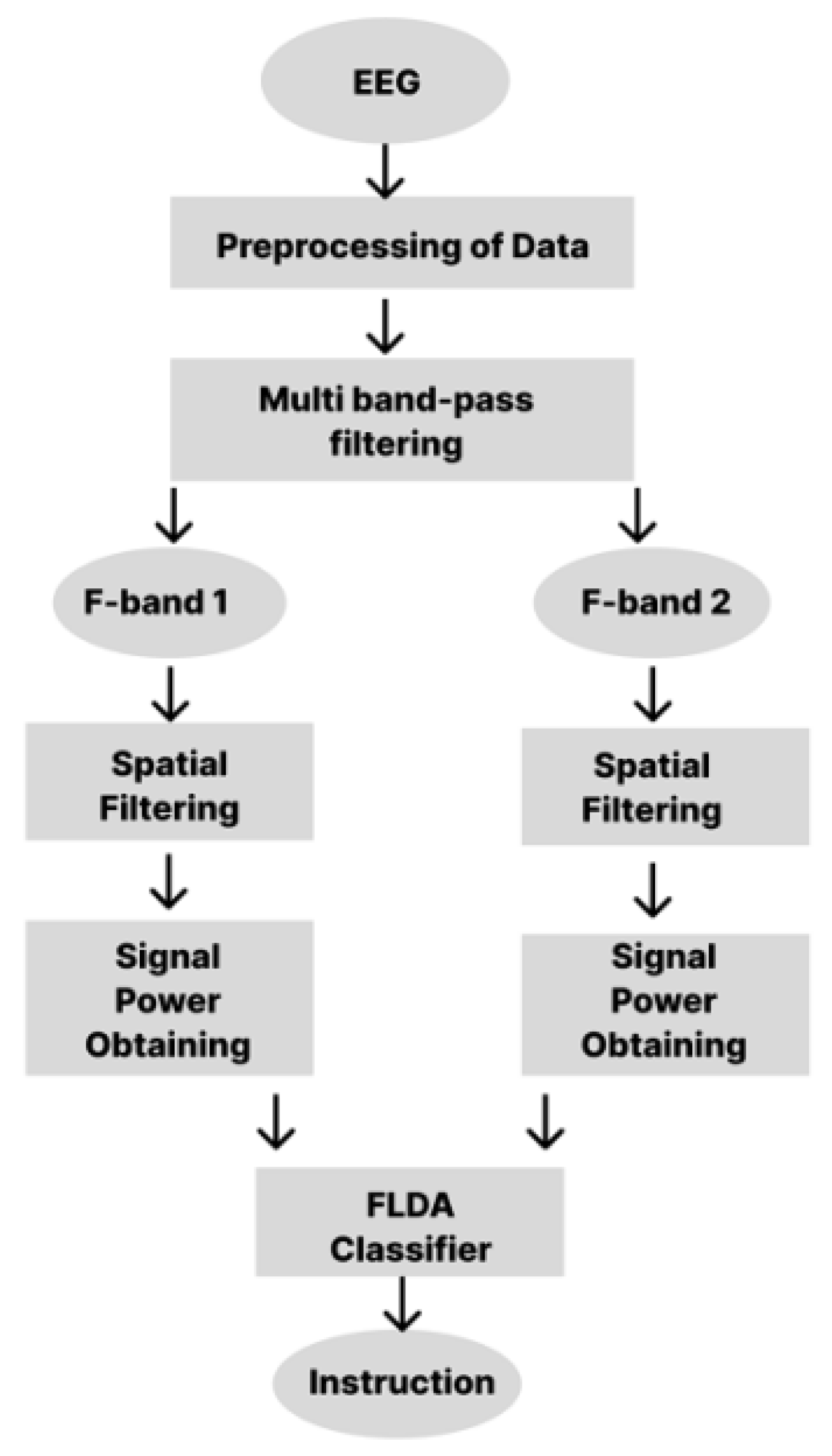

- Uses Fisher’s Linear Discriminant Analysis (FLDA) and Common Spatial Patterns (CSP) for the detection of MI.

-

Experimental Metaverse Implementation

- -

- Implemented in an apartment virtual setting with OpenSceneGraph and C Sharp and control of real-time EEG signal.

- -

- Tasks are navigation and device usage (e.g., TV/music control).

-

Robust Experimental Validation

- -

- Performed with 8 simulated participants with different amounts of BCI experience.

-

Data Augmentation for Robustness

- -

- Presented EEGGAN-Net for synthesizing artificial EEG data, promoting classifier generalization and reducing data imbalance.

-

Real-Time Training Personalization

- -

- Frequency bands and CSP weights are adapted using real-time data to improve MI classification.

4. Equipment and Methodology

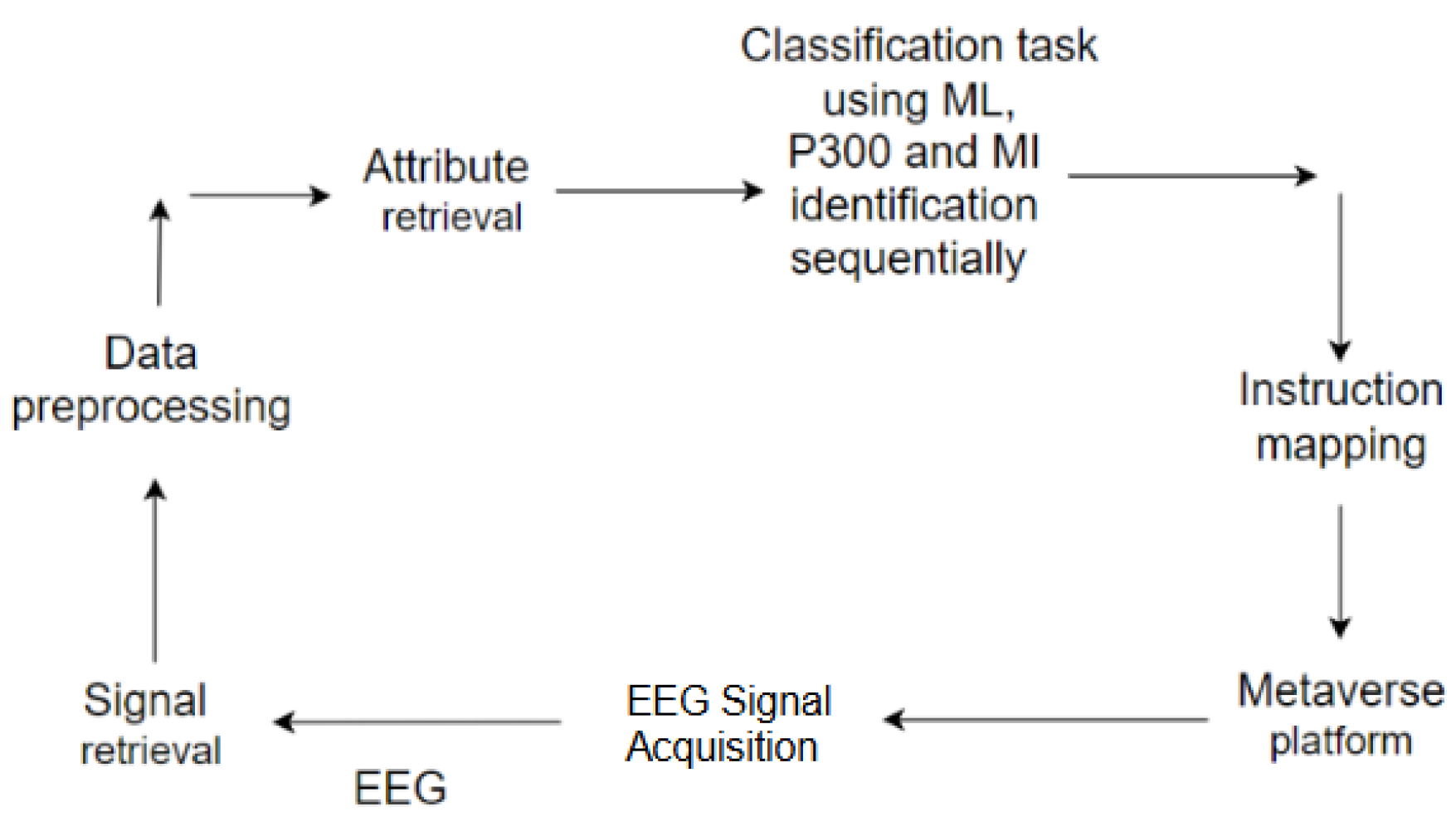

4.1. Hybrid BCI Handling Procedure

4.2. Signal Refining

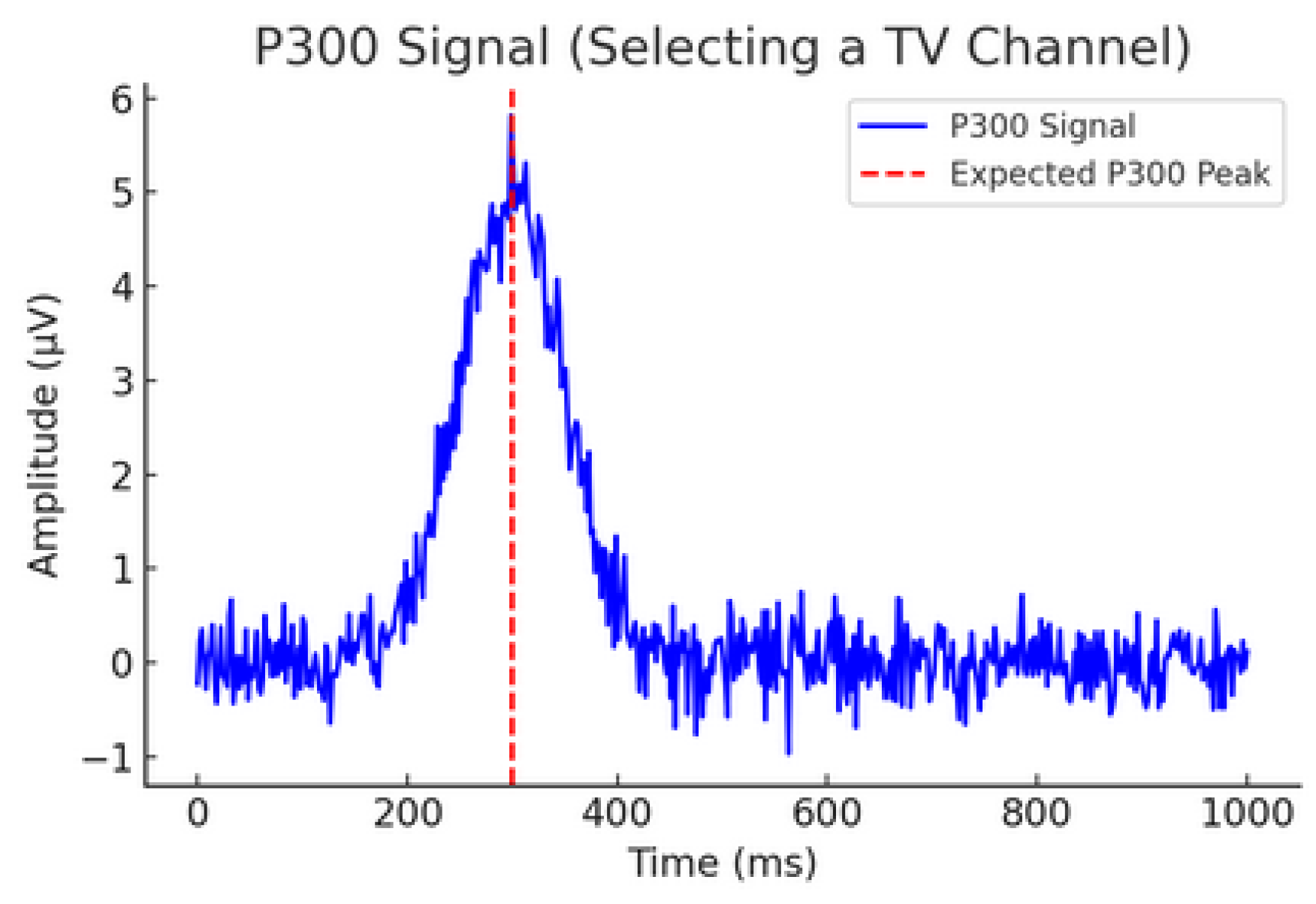

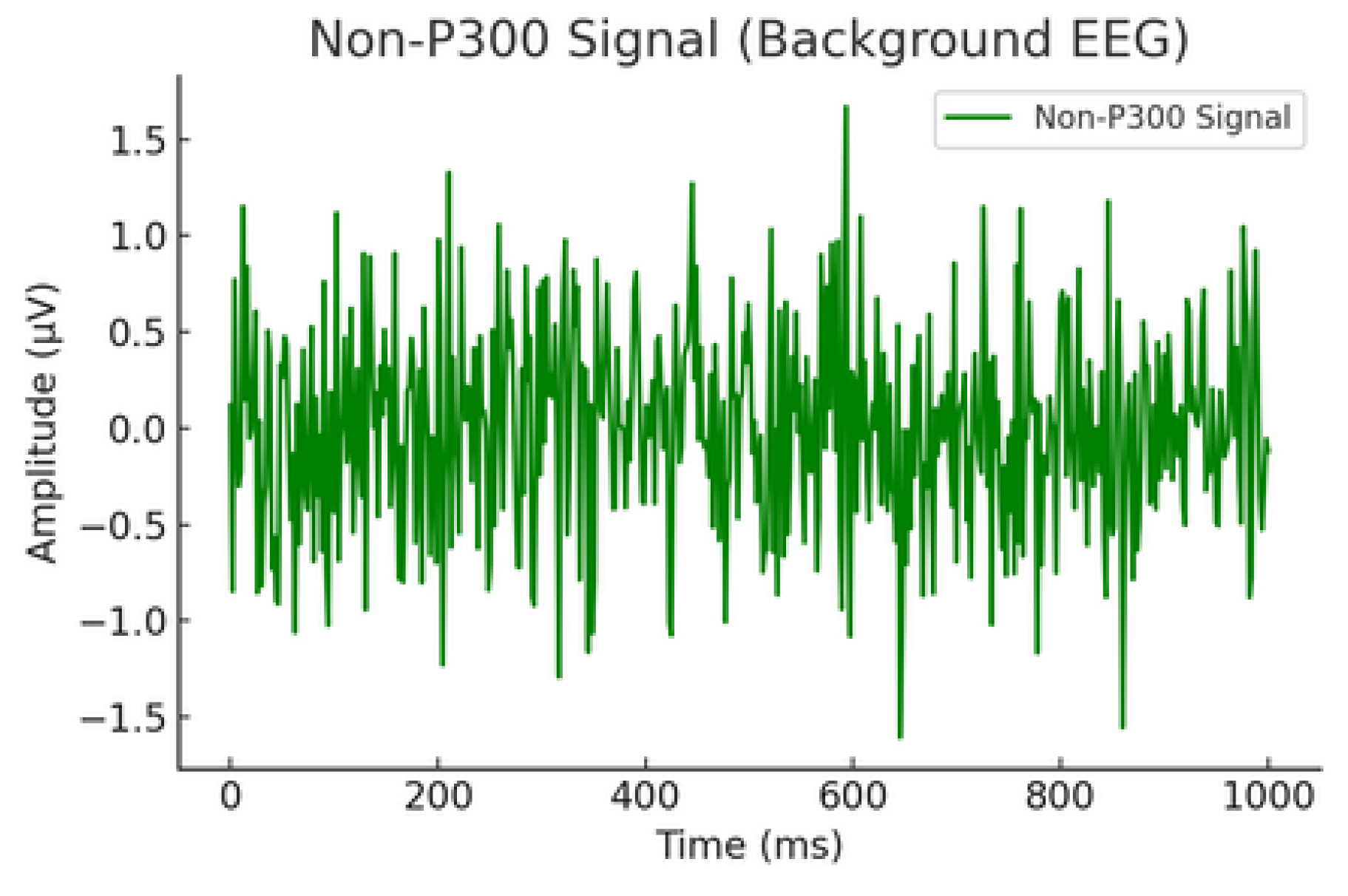

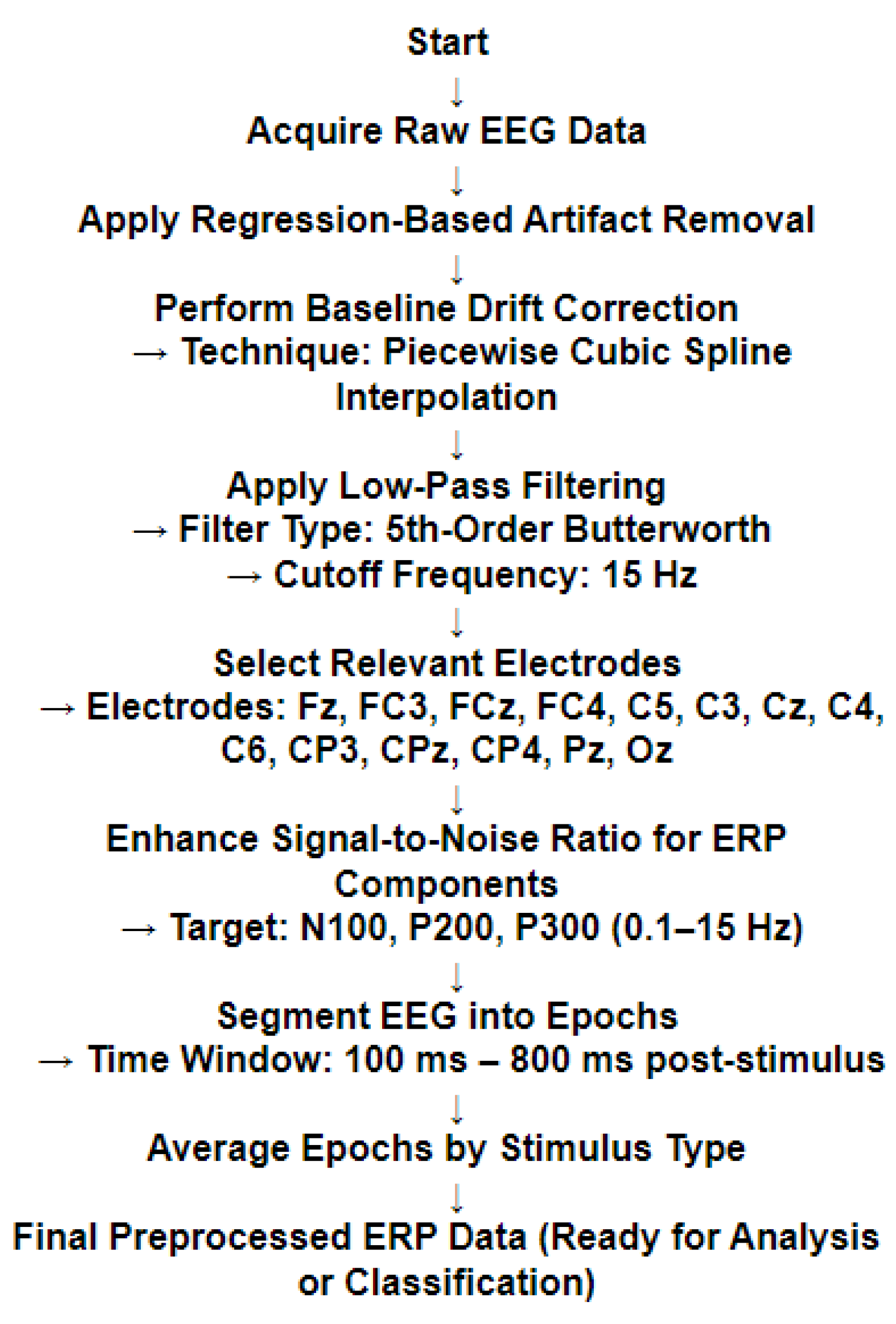

4.2.1. P300 Potential Identification

Algorithm 1: P300 Classification using Transformer, SVM Hybrid Model for each

Require: EEG trials, Labels

Ensure: Predicted Labels

- Strong Feature Learning – Unlike CNN-based models, which rely mainly on spatial features, the Transformer learns the temporal representations that are critical for ERP classification.

- Generalization using SVM – An RBF-kernel SVM makes the final decision, which helps limit overfitting and improves classification accuracy on unseen examples.

- Scalability and Transferability – The modularity of this approach allows for easy extension to other EEG-based BCI applications aside from P300 classification.
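For reference, the decision stage described in the list above can be written in the standard form of an RBF-kernel SVM. This is a sketch under assumptions rather than the exact formulation used in this study: $z$ is assumed to be the feature vector that the Transformer produces for one EEG trial, and the symbols $z_i$, $\alpha_i$, $b$, and $\gamma$ are illustrative notation that does not appear in the original text.

$$
\hat{y}(z) = \operatorname{sign}\left( \sum_{i=1}^{N_{sv}} \alpha_i \, y_i \, \exp\left(-\gamma \, \lVert z - z_i \rVert^{2}\right) + b \right)
$$

Here $z_i$ are the support vectors selected during training, $y_i \in \{-1, +1\}$ are their labels (non-target or target), $\alpha_i \ge 0$ and $b$ are the learned coefficients and bias, and $\gamma > 0$ sets the kernel width. A smaller $\gamma$ gives a smoother decision boundary, which is one way an RBF SVM can trade training fit against generalization.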

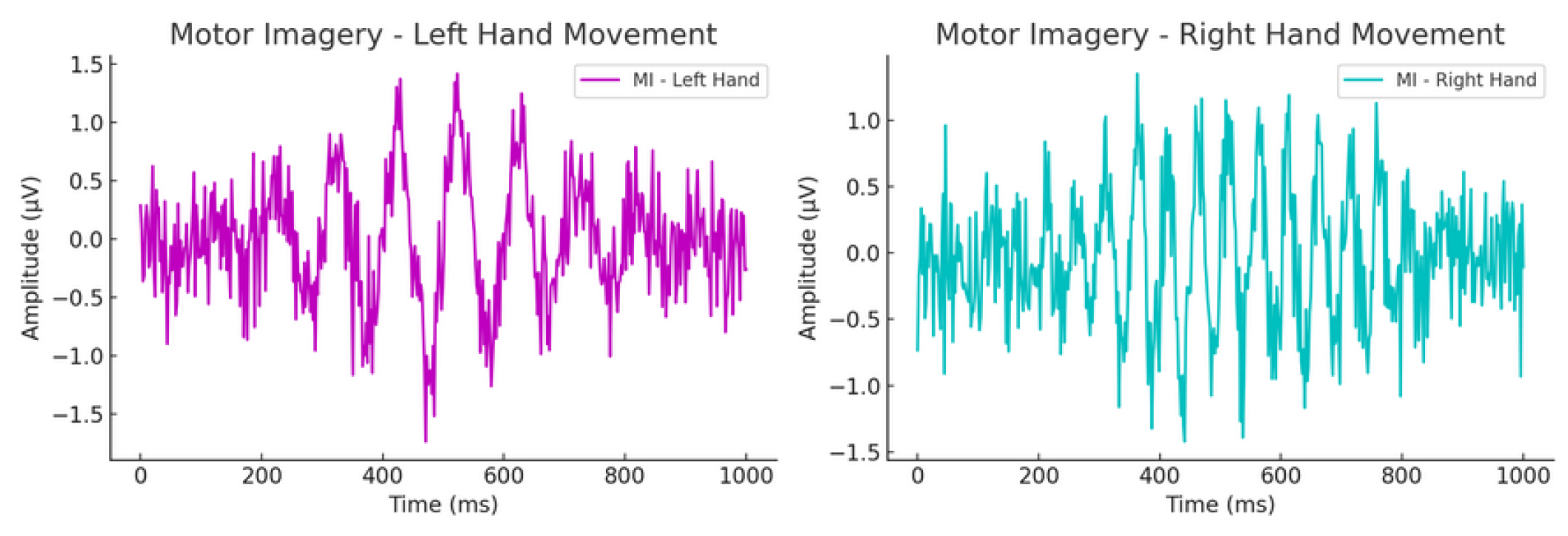

4.2.2. Motor Imagery Identification

5. Experimental Procedure

5.1. Data Collection and Model Training Procedure

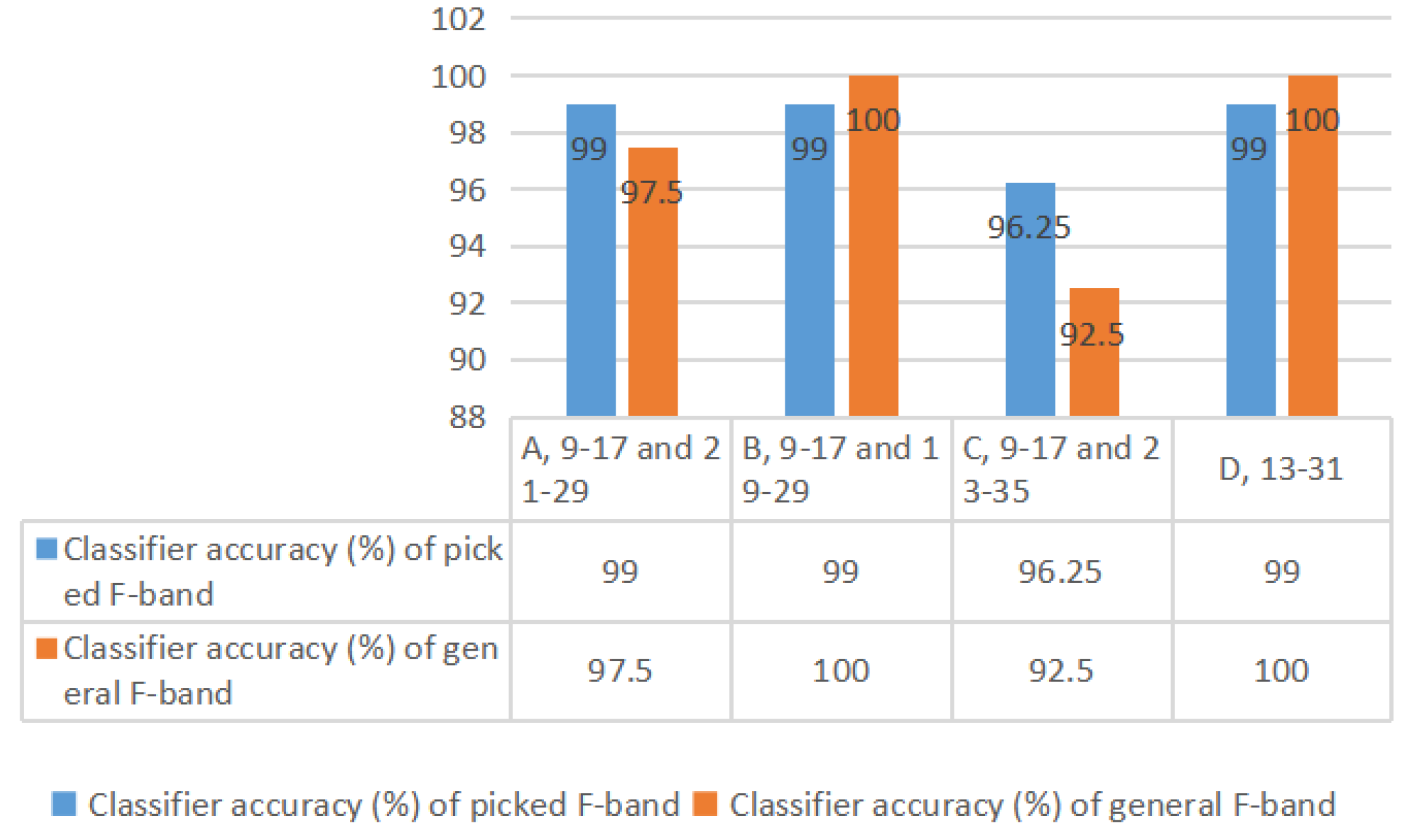

5.1.1. MI Parameter Training Phase

- Weight Matrix
  - The CSP algorithm computes a transformation matrix that linearly maps the initial EEG signal S to a new matrix X.
  - The transformation maximizes the difference in power characteristics between left-hand and right-hand motor imagery signals.
  - These spatial filters support discriminative feature extraction for classification.
- Projection Vector w
  - The FLDA classifier computes an optimal projection vector w that projects EEG features onto a one-dimensional space with maximum class separability.
  - It is learned from training samples of left- and right-hand motor imagery signals.
- Class Means and Variances
  - The means are the average feature values for the left- and right-hand imagery classes.
  - The variances measure intra-class variability.
  - Both are used to compute the FDC, which quantifies the separability of the classes.
- FDC Values for Frequency Bands (F-Bands)
  - The FDC is computed for every F-band to quantify its contribution to classification.
  - The top-ranked bands that together account for at least 70–75% of the total FDC are selected.
- Selected Frequency Bands for Each User
  - Based on the FDC values, an optimal set of F-bands is chosen individually for each participant.
  - Overlapping bands are summed if necessary.

- Filter Order: 4.

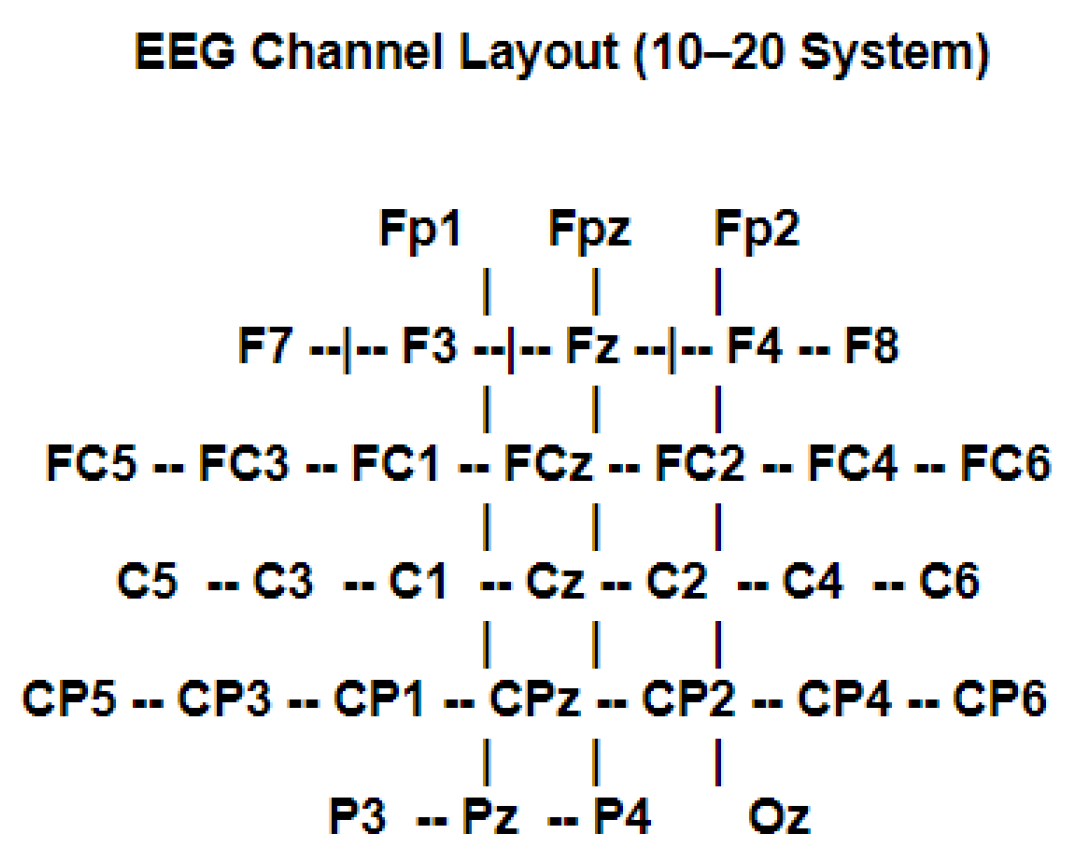

- Filter Cutoff Frequencies: Define the 17 overlapping frequency bands (e.g., 1–5 Hz, 3–7 Hz, …, 33–37 Hz).

- The variance of each of the chosen F-bands is calculated and transformed into log-band power features to be classified.
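The band definition, log-band power feature, and FDC-based band selection described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the study's implementation: FDC is assumed to be the Fisher criterion (squared difference of class means divided by the sum of class variances), population variance is used, the threshold is set to the lower end of the 70–75% range, and the band-pass filtering step itself is omitted. All function names are hypothetical.

```python
import math
from statistics import mean, pvariance


def make_bands(first_low=1, width=4, step=2, count=17):
    """Return overlapping (low, high) bands in Hz: (1, 5), (3, 7), ..., (33, 37)."""
    return [(first_low + i * step, first_low + i * step + width) for i in range(count)]


def log_band_power(samples):
    """Log of the variance of one band-pass-filtered signal segment."""
    return math.log(pvariance(samples))


def fdc(left, right):
    """Assumed Fisher criterion for one band (requires non-zero class variances)."""
    return (mean(left) - mean(right)) ** 2 / (pvariance(left) + pvariance(right))


def select_bands(scores, share=0.70):
    """Pick the highest-scoring band indices until they cover `share` of the total."""
    total = sum(scores)
    chosen, covered = [], 0.0
    for idx in sorted(range(len(scores)), key=lambda i: scores[i], reverse=True):
        chosen.append(idx)
        covered += scores[idx]
        if covered >= share * total:
            break
    return sorted(chosen)


if __name__ == "__main__":
    bands = make_bands()
    # Made-up log-band-power features for the first three bands, one list per class.
    left_features = [[0.10, 0.20, 0.15], [1.0, 1.2, 1.1], [0.5, 0.4, 0.6]]
    right_features = [[0.12, 0.18, 0.16], [2.0, 2.1, 1.9], [0.9, 1.0, 0.8]]
    scores = [fdc(l, r) for l, r in zip(left_features, right_features)]
    for idx in select_bands(scores):
        print(f"selected band {bands[idx][0]}-{bands[idx][1]} Hz, FDC={scores[idx]:.2f}")
```

Because the selection is run on each participant's own training features, the chosen band set can differ from user to user, which matches the per-user selection described in the list.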

5.1.2. P300 Data Collection and Training Phase

- Combining Trials Across Virtual Subjects
  - Trials from all subjects were combined for each stimulus, yielding a larger set of P300 responses per stimulus.
  - This accounts for inter-subject variability and enables more effective augmentation and training.
- Data Augmentation with EEGGAN-Net
  - EEGGAN-Net was used to create synthetic EEG trials that retain the spectral, temporal, and spatial characteristics of P300 signals.
  - Every synthetic trial was given the same label as the real trial from which it was derived.
  - This ensured that P300 target trials remained labeled as targets and non-target trials as non-targets.
- Adaptive Oversampling for Class Balancing
  - Because the initial dataset had an 80/20 non-target/target ratio, oversampling was applied solely to the minority class (P300 target trials).
  - High-quality, clean target trials were selectively replicated to raise the proportion of target samples while maintaining inter-trial variability.
  - Oversampled target trials kept the labels of their source trials to avoid label inconsistency.
- Final Verification and Dataset Structure
  - Following augmentation and oversampling, the final dataset had a 60/40 non-target/target split and a substantially larger total number of trials.
  - Trials were cross-checked by comparing their spectral and temporal characteristics to ensure that synthetic and oversampled data remained consistent with actual EEG responses.
- Merging Trials Across the Simulated Subjects
  - Boosts the statistical power for every stimulus, providing strong class-wise augmentation.
  - Reduces inter-subject variability by synchronizing features across participants.
  - Provides consistent labeling for GAN-based augmentation and oversampling.
- Data Augmentation Using EEGGAN-Net
  - Produces realistic P300 signals for every stimulus while accounting for inter-subject variability.
  - Temporal modeling gives the generated EEG signals realistic brain-activity patterns.
  - Reduces overfitting by generating diverse synthetic samples, enhancing generalization.
- Class Balancing Using Adaptive Oversampling
  - Selects only high-quality real P300 samples for oversampling rather than replicating samples indiscriminately.
  - Provides a more accurate representation of the minority class, improving classifier training.
  - Avoids overfitting to replicated samples, in contrast to naive oversampling methods.
- Better Non-Target vs. Target Ratio
  - The 60/40 ratio provides real-world relevance, making the model more suitable for deployment.
  - Reduces classifier bias, supporting genuine discrimination between P300 target and non-target trials.
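The class ratios quoted above can be reproduced from the trial counts reported in the dataset composition table (original, augmented, and oversampled trials per class). The snippet below is only an arithmetic check; the variable and function names are hypothetical, and the counts are copied from that table.

```python
# Trial counts per class as (original, augmented, oversampled),
# copied from the dataset composition table in this document.
counts = {
    "P300 Target": (500, 2000, 500),
    "Non-Target": (2000, 2000, 500),
}


def class_shares(per_class):
    """Return each class's fraction of the total number of trials."""
    total = sum(per_class.values())
    return {name: n / total for name, n in per_class.items()}


original = {name: parts[0] for name, parts in counts.items()}
final = {name: sum(parts) for name, parts in counts.items()}

original_shares = class_shares(original)
final_shares = class_shares(final)

if __name__ == "__main__":
    for label, shares in (("original", original_shares), ("final", final_shares)):
        summary = ", ".join(f"{name}: {100 * s:.0f}%" for name, s in shares.items())
        print(f"{label} dataset -> {summary}")
```

With these counts, the target class grows from 500 of 2,500 trials to 3,000 of 7,500 trials, which corresponds to the shift from an 80/20 to a 60/40 non-target/target split.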

5.2. Model Testing Procedure

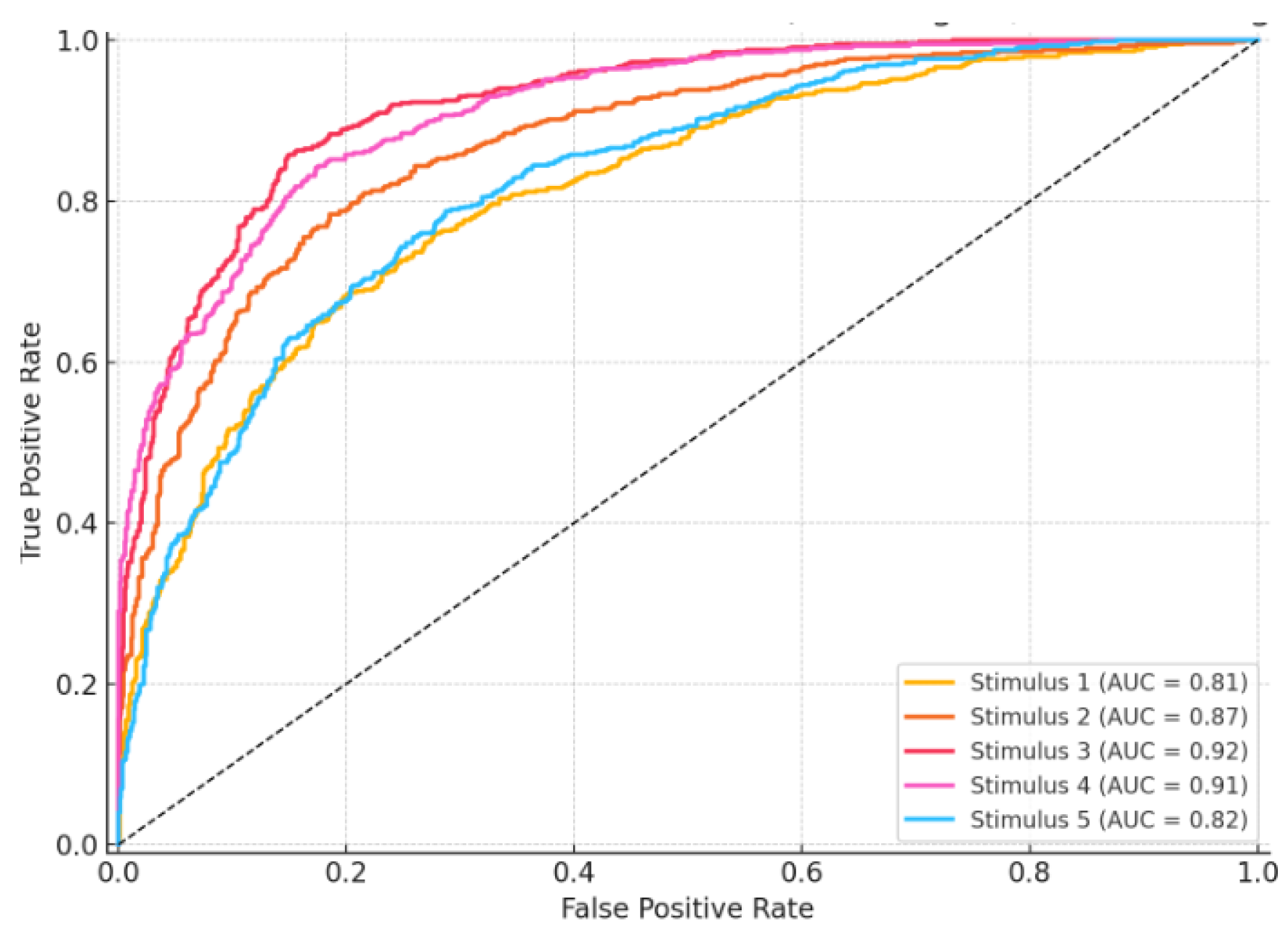

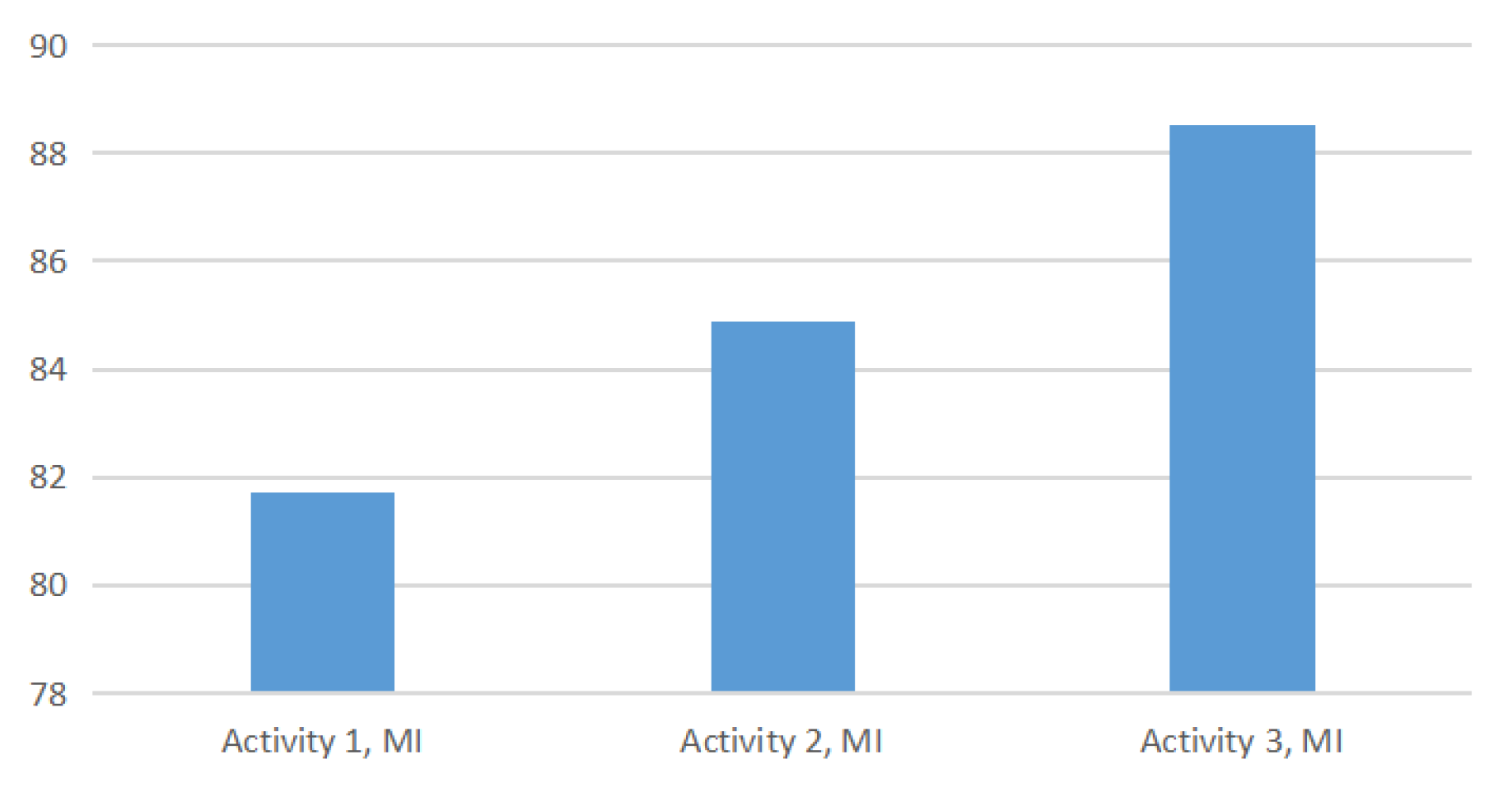

6. Experimental Results

7. Post Experiment Discussions

8. Real-Time Latency Evaluation

- EEG Acquisition Window: 800 ms (standard ERP post-stimulus window)

- Preprocessing (Artifact Rejection + Filtering): 70 ms

- Signal Classification (Transformer+SVM or FLDA): 55 ms

- Command Interpretation + Metaverse API Execution (via Socket IO): 35 ms

- Total End-to-End Latency: 960 ± 35 ms
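The latency budget above can be checked with a short calculation. The stage values are taken from the list; the dictionary keys and variable names are illustrative only.

```python
# Per-stage latencies in milliseconds, taken from the list above.
stages_ms = {
    "EEG acquisition window": 800,
    "Preprocessing (artifact rejection + filtering)": 70,
    "Signal classification": 55,
    "Command interpretation + Metaverse API execution": 35,
}

total_ms = sum(stages_ms.values())
# Everything after the fixed post-stimulus acquisition window.
processing_ms = total_ms - stages_ms["EEG acquisition window"]
acquisition_share = stages_ms["EEG acquisition window"] / total_ms

if __name__ == "__main__":
    print(f"total: {total_ms} ms, processing after acquisition: {processing_ms} ms")
    print(f"acquisition window share of total: {100 * acquisition_share:.1f}%")
```

The stages sum to the reported 960 ms. The acquisition window accounts for most of that total, so the computational stages contribute 160 ms.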

9. User Learning Curve and Fatigue Impact Analysis for Real-Time User Implementation

9.1. Learning Curve Assessment

- P300 accuracy is expected to increase from session 1 to session 5 by a fair margin.

- MI accuracy is expected to increase from session 1 to session 5 by a fair margin.

- Perceived mental effort is expected to decrease by session 5.

9.2. Fatigue Impact Analysis

- P300 accuracy is expected to fall off slightly (by a small margin) after 20–25 minutes of real-time use for some users.

- MI-based control is expected to maintain stable accuracy over time, with elevated false positives near the end of the session.

- Growing eye strain and a decreased attention span are expected after 25 minutes, particularly in tasks with a high visual load (P300 trials).

10. Discussion on Participant Size and Statistical Significance Analysis

11. Advantages of the Proposed Research Study

- Enhanced Control Flexibility: Integrates Motor Imagery (MI) for continuous navigation and P300 signals for discrete gadget interaction within the Metaverse.

- Context-Aware Adaptive Switching: Dynamically switches between MI and P300 modes based on the interaction context (e.g., navigation vs. control).

- Sophisticated Signal Processing: Combines a Transformer + SVM model for P300 classification with a CSP + FLDA pipeline for MI signal decoding.

- Real-Time Implementation: Proven successful in a 3D virtual apartment setting, confirming real-world feasibility of the hybrid BCI system.

- EEG Data Augmentation: Leverages EEGGAN-Net to synthetically create realistic EEG data, overcoming class imbalance and enhancing training efficacy.
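As an illustration of context-aware switching, the sketch below selects the control mode from the user's position in the virtual scene, in line with the location-based switching this document describes. It is a hypothetical sketch, not the system's actual logic: the `DeviceZone` type, the interaction radius, and the `margin` used to avoid repeated toggling at a zone boundary are all assumptions introduced for this example.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceZone:
    """Hypothetical circular region around a controllable device."""
    name: str
    x: float
    y: float
    radius: float  # assumed interaction radius in scene units


def select_mode(position, zones, current="MI", margin=0.5):
    """Return "P300" when the user is near a device, otherwise "MI".

    While already in P300 mode, each zone is widened by `margin`, so small
    movements at the boundary do not flip the mode back and forth.
    """
    px, py = position
    for zone in zones:
        limit = zone.radius + (margin if current == "P300" else 0.0)
        if math.hypot(px - zone.x, py - zone.y) <= limit:
            return "P300"
    return "MI"


if __name__ == "__main__":
    zones = [DeviceZone("TV", 0.0, 0.0, 1.0), DeviceZone("Music player", 5.0, 2.0, 1.0)]
    mode = "MI"
    for pos in [(3.0, 0.0), (0.8, 0.0), (1.2, 0.0), (2.5, 0.0)]:
        mode = select_mode(pos, zones, current=mode)
        print(pos, "->", mode)
```

The widened exit boundary is one simple way to cut down on redundant mode switches; other policies, such as a minimum dwell time, would serve the same purpose.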

12. Constraints of the Proposed Research Study

- Limited Participant Pool: The experimental study involved only four simulated participants, limiting generalizability to wider populations. Furthermore, the EEG signals of each participant were simulated to mimic those of real participants.

- High Cognitive Load: Forces users to switch between visual and motor attention tasks, which may lead to mental fatigue in the long term.

- Static Mode Switching Logic: Switches between MI and P300 based on virtual location and not on user intention or control.

- Inter-Subject Variability: MI performance varies significantly among users, especially for those without prior BCI training.

- System Complexity: Relies on multiple components (Matlab, MFC, OpenSceneGraph, EEG amplifier), making real-world deployment more complex.

- Limited Navigation Dimensions: MI-based navigation currently supports only left/right rotation, lacking support for forward/backward or vertical movement.

- Feedback Latency: Real-time system feedback introduces minor latencies in signal classification and mode switching.

- Training Overhead: Needs extensive offline calibration and parameter adjustment per user, restricting its out-of-the-box functionality.

13. Comparative Analysis with Single-Modality BCIs

13.1. Quantitative Improvements

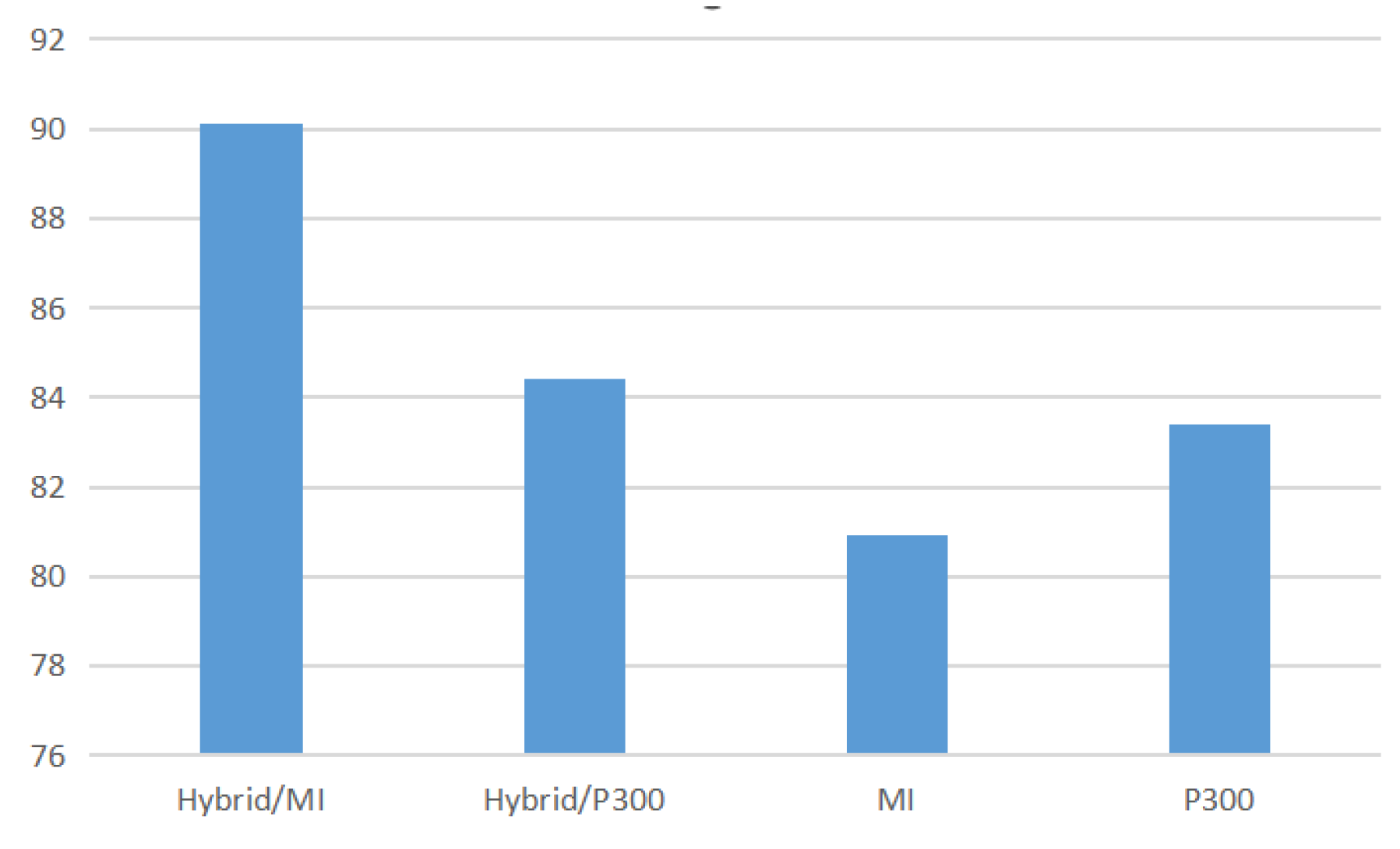

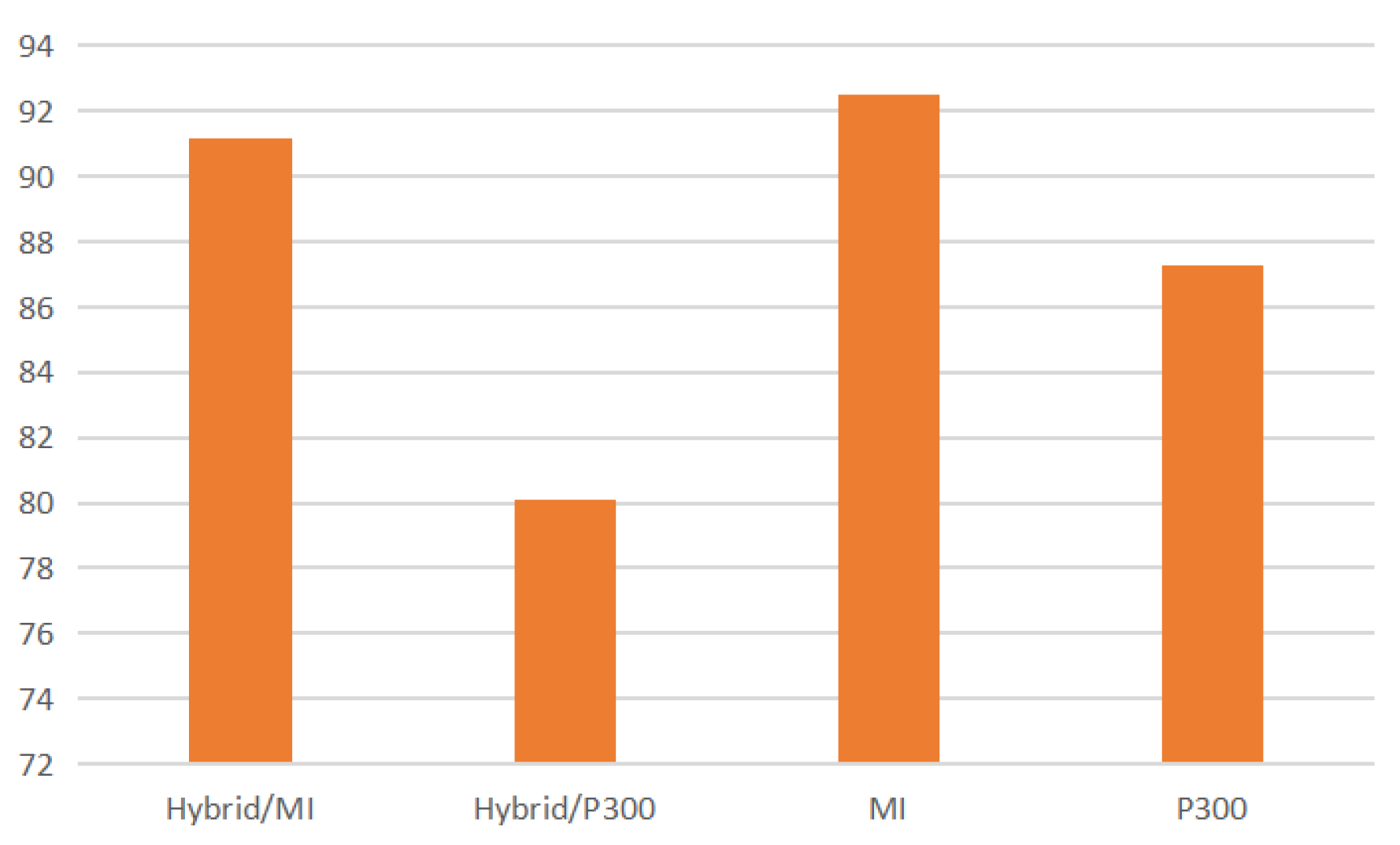

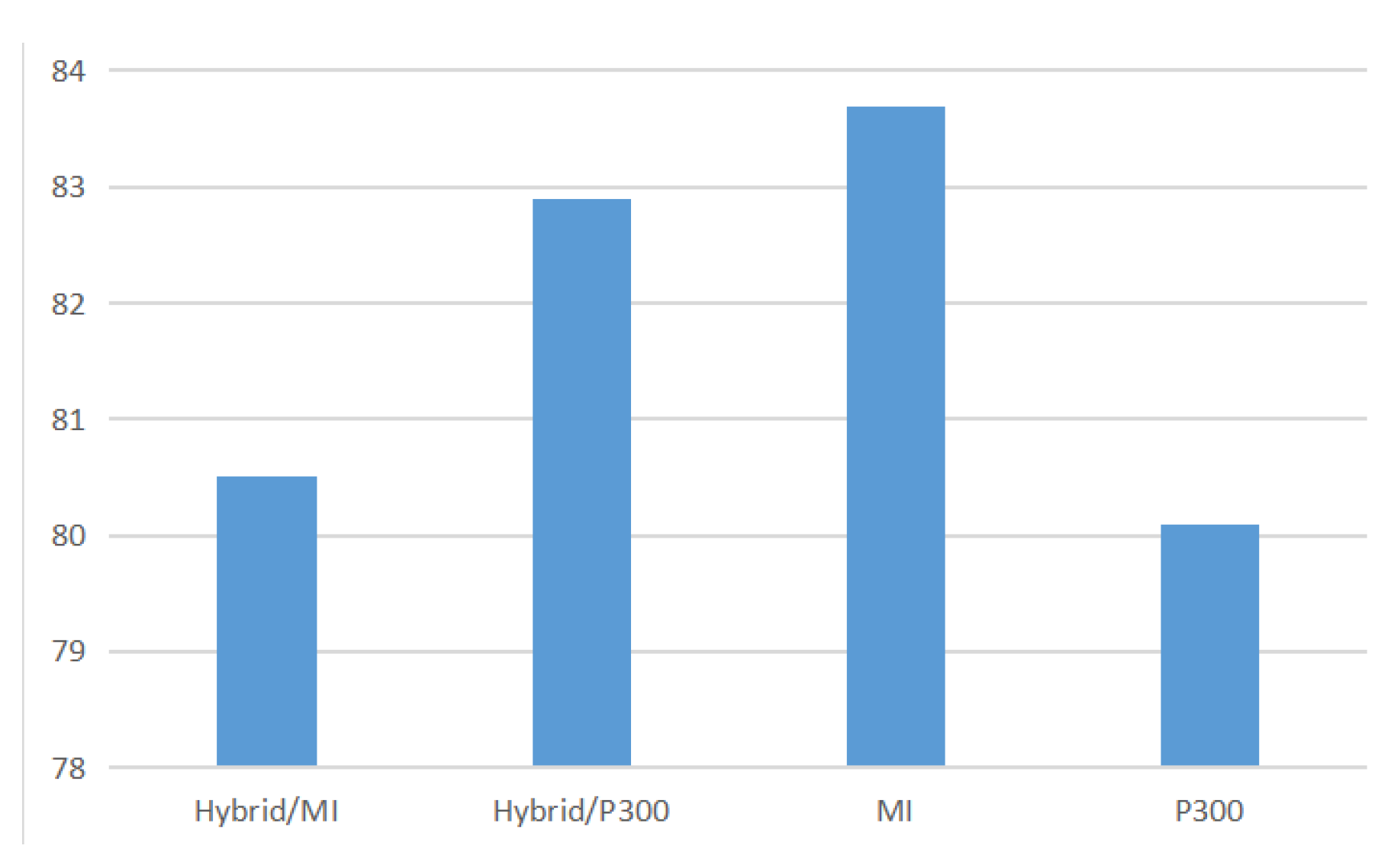

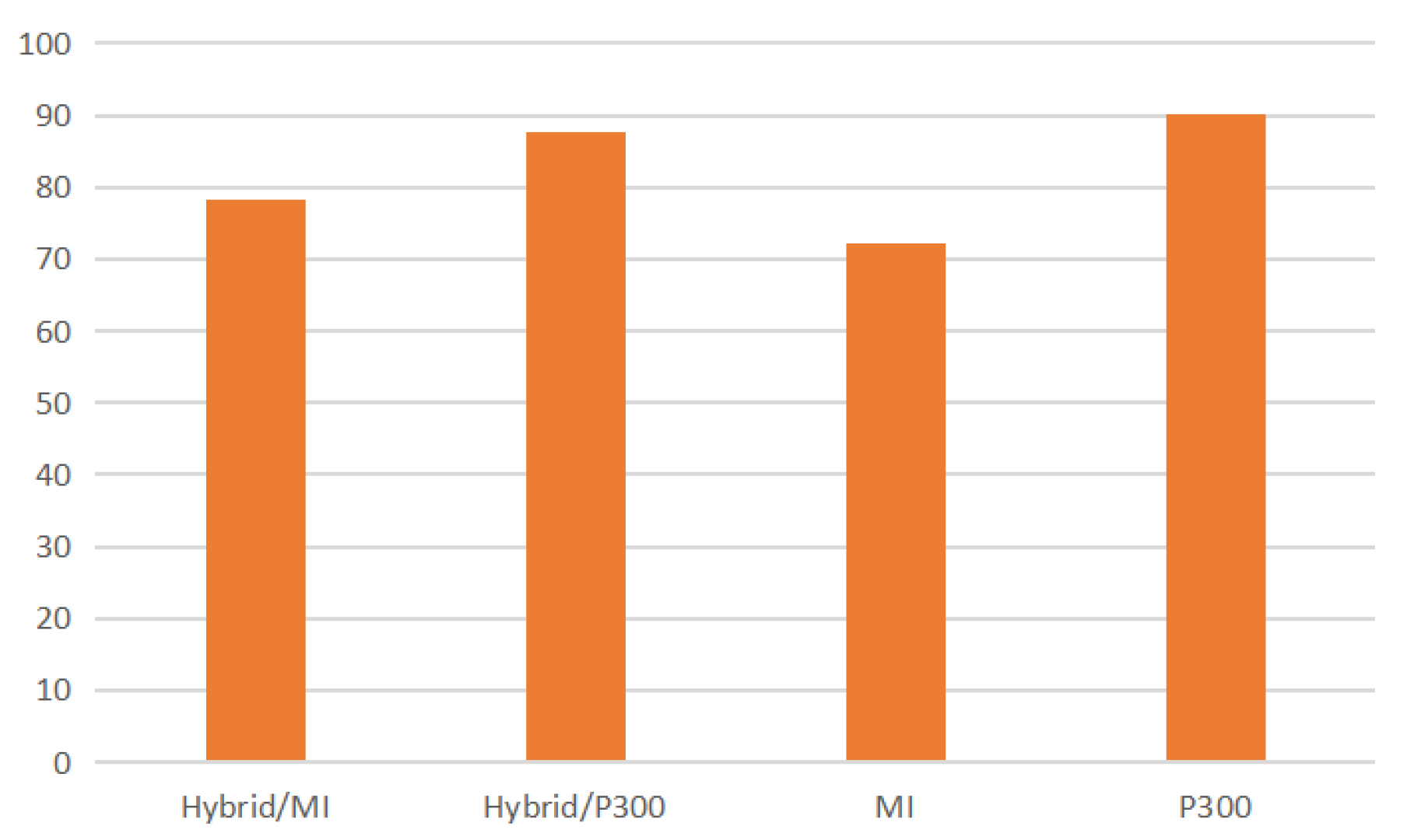

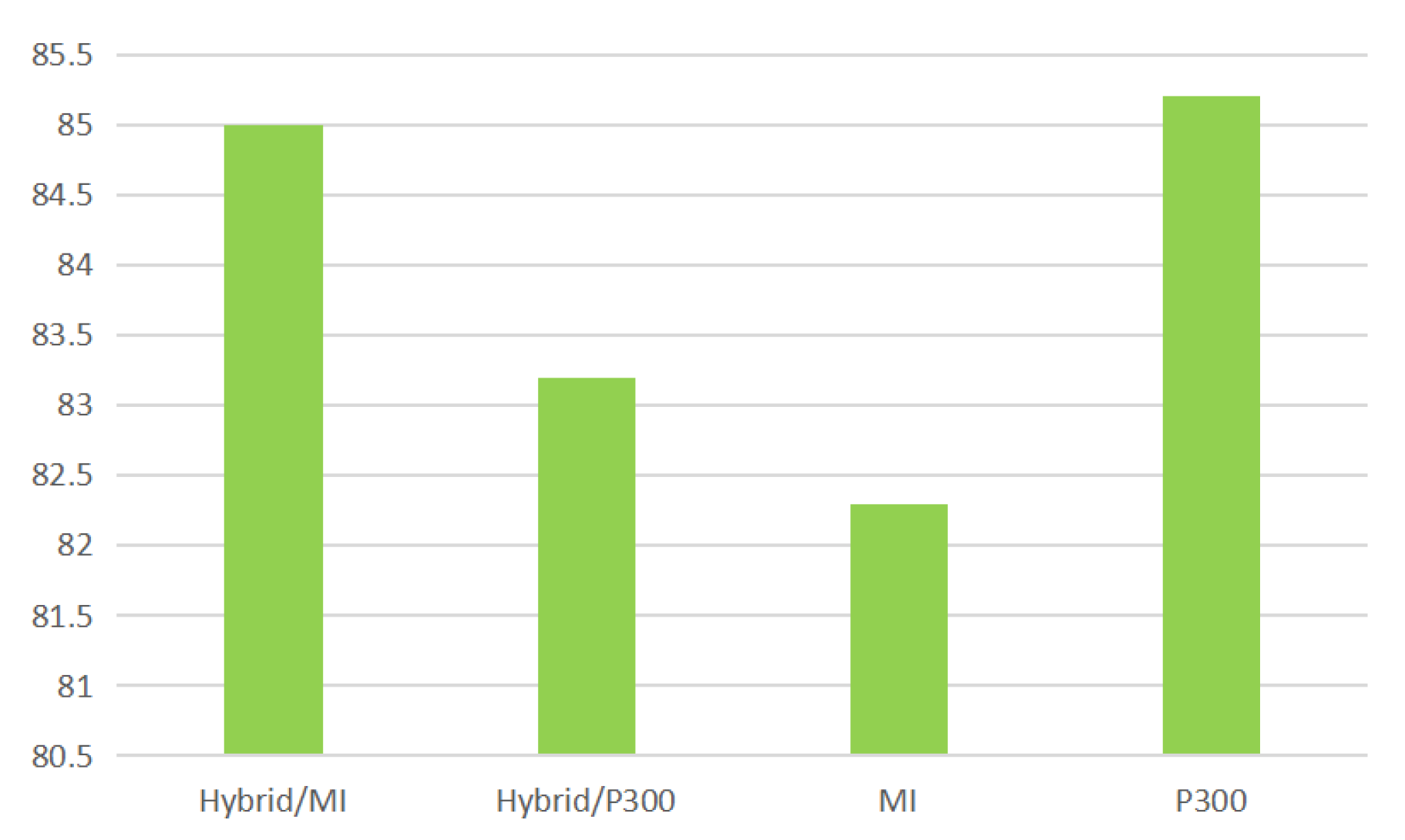

- For navigation-only tasks, the independent MI system averaged approximately 80.9%, while the MI component of the hybrid system attained 85.0%.

- For device interaction tasks, the independent P300 method averaged 83.0%, whereas the hybrid configuration reached 87.0–90.0%, depending on task and participant order.

13.2. Functional Superiority

- The hybrid system supports continuous navigation and multi-command interaction within the same control session, which single-modality systems cannot offer.

- In contrast to pure MI systems, which can only support low-degree-of-freedom motion, the hybrid model allows users to move and select, thereby mimicking natural task workflows in the Metaverse.

- P300-only systems would need an inordinate amount of visual stimuli to mimic spatial movement, which is cognitively inefficient and slow.

14. Metaverse-Specific Adaptations and Challenges

14.1. Multidimensional Navigation Complexity

14.2. Asynchronous Control State Switching

14.3. Cognitive Load and User Fatigue

14.4. Signal Latency and Feedback Responsiveness

14.5. Inclusivity and Assistive Design

15. Future Research Avenues

16. Conclusions

Conflicts of Interest

References

1. Turjya, S.M.; Singh, R.; Sarkar, P.; Swain, S.; Bandyopadhyay, A. Smart Education Resource Management System: A Federated Game Theoretic Approach on Metaverse with Strict Preferences. In Proceedings of the 2024 15th International Conference on Computing Communication and Networking Technologies (ICCCNT), 2024; IEEE; pp. 1–6.
2. Wolpaw, J.R.; Birbaumer, N.; Heetderks, W.J.; McFarland, D.J.; Peckham, P.H.; Schalk, G.; Donchin, E.; Quatrano, L.A.; Robinson, C.J.; Vaughan, T.M.; et al. Brain-computer interface technology: a review of the first international meeting. IEEE transactions on rehabilitation engineering 2000, 8, 164–173.
3. Tang, X.; Shen, H.; Zhao, S.; Li, N.; Liu, J. Flexible brain–computer interfaces. Nature Electronics 2023, 6, 109–118.
4. Islam, M.K.; Rastegarnia, A. Recent advances in EEG (non-invasive) based BCI applications. Frontiers in Computational Neuroscience 2023, 17, 1151852.
5. Wolpaw, J.R.; Birbaumer, N.; McFarland, D.J.; Pfurtscheller, G.; Vaughan, T.M. Brain–computer interfaces for communication and control. Clinical neurophysiology 2002, 113, 767–791.
6. Pfurtscheller, G.; Neuper, C. Motor imagery and direct brain-computer communication. Proceedings of the IEEE 2001, 89, 1123–1134.
7. Lun, X.; Zhang, Y.; Zhu, M.; Lian, Y.; Hou, Y. A Combined Virtual Electrode-Based ESA and CNN Method for MI-EEG Signal Feature Extraction and Classification. Sensors 2023, 23, 8893.
8. Farwell, L.A.; Donchin, E. Talking off the top of your head: toward a mental prosthesis utilizing event-related brain potentials. Electroencephalography and clinical Neurophysiology 1988, 70, 510–523.
9. Donchin, E.; Spencer, K.M.; Wijesinghe, R. The mental prosthesis: assessing the speed of a P300-based brain-computer interface. IEEE transactions on rehabilitation engineering 2000, 8, 174–179.
10. Sarraf, J.; Pattnaik, P.; et al. A study of classification techniques on P300 speller dataset. Materials Today: Proceedings 2023, 80, 2047–2050.
11. Bayliss, J.D. Use of the evoked potential P3 component for control in a virtual apartment. IEEE transactions on neural systems and rehabilitation engineering 2003, 11, 113–116.
12. Velasco-Álvarez, F.; Ron-Angevin, R. Asynchronous brain-computer interface to navigate in virtual environments using one motor imagery. In Proceedings of the Bio-Inspired Systems: Computational and Ambient Intelligence: 10th International Work-Conference on Artificial Neural Networks, IWANN 2009 Proceedings, Part I 10, Salamanca, Spain, June 10-12, 2009; Springer, 2009; pp. 698–705.
13. Burback, L.; Brult-Phillips, S.; Nijdam, M.J.; McFarlane, A.; Vermetten, E. Treatment of posttraumatic stress disorder: A state-of-the-art review. Current neuropharmacology 2024, 22, 557–635.
14. Shershneva, M.; Kim, J.H.; Kear, C.; Heyden, R.; Heyden, N.; Lee, J.; Mitchell, S. Motivational interviewing workshop in a virtual world: learning as avatars. Family medicine 2014, 46, 251.
15. Leeb, R.; Pfurtscheller, G. Walking through a virtual city by thought. In Proceedings of the 26th Annual International Conference of the IEEE Engineering in Medicine and Biology Society. IEEE; 2004; Vol. 2, pp. 4503–4506.
16. Leeb, R.; Lee, F.; Keinrath, C.; Scherer, R.; Bischof, H.; Pfurtscheller, G. Brain–computer communication: motivation, aim, and impact of exploring a virtual apartment. IEEE Transactions on Neural Systems and Rehabilitation Engineering 2007, 15, 473–482.
17. Zhao, Q.; Zhang, L.; Cichocki, A. EEG-based asynchronous BCI control of a car in 3D virtual reality environments. Chinese Science Bulletin 2009, 54, 78–87.
18. Zhou, Y.; Yu, T.; Gao, W.; Huang, W.; Lu, Z.; Huang, Q.; Li, Y. Shared three-dimensional robotic arm control based on asynchronous BCI and computer vision. IEEE Transactions on Neural Systems and Rehabilitation Engineering, 2023.
19. Kronegg, J.; Chanel, G.; Voloshynovskiy, S.; Pun, T. EEG-based synchronized brain-computer interfaces: A model for optimizing the number of mental tasks. IEEE Transactions on Neural Systems and Rehabilitation Engineering 2007, 15, 50–58.
20. Zhu, S.; Hosni, S.I.; Huang, X.; Wan, M.; Borgheai, S.B.; McLinden, J.; Shahriari, Y.; Ostadabbas, S. A dynamical graph-based feature extraction approach to enhance mental task classification in brain–computer interfaces. Computers in Biology and Medicine 2023, 153, 106498.
21. Ahn, M.; Ahn, S.; Hong, J.H.; Cho, H.; Kim, K.; Kim, B.S.; Chang, J.W.; Jun, S.C. Gamma band activity associated with BCI performance: simultaneous MEG/EEG study. Frontiers in human neuroscience 2013, 7, 848.
22. Baniqued, P.D.E.; Stanyer, E.C.; Awais, M.; Alazmani, A.; Jackson, A.E.; Mon-Williams, M.A.; Mushtaq, F.; Holt, R.J. Brain–computer interface robotics for hand rehabilitation after stroke: a systematic review. Journal of neuroengineering and rehabilitation 2021, 18, 1–25.
23. Piccione, F.; Giorgi, F.; Tonin, P.; Priftis, K.; Giove, S.; Silvoni, S.; Palmas, G.; Beverina, F. P300-based brain computer interface: Reliability and performance in healthy and paralysed participants. Clinical Neurophysiology 2006, 117, 531–537.
24. Pitt, K.M.; Spoor, A.; Zosky, J. Considering preferences, speed and the animation of multiple symbols in developing P300 brain-computer interface for children. Disability and Rehabilitation: Assistive Technology 2025, 20, 171–183.
25. McFarland, D.J.; Sarnacki, W.A.; Townsend, G.; Vaughan, T.; Wolpaw, J.R. The P300-based brain–computer interface (BCI): effects of stimulus rate. Clinical neurophysiology 2011, 122, 731–737.
26. Leoni, J.; Strada, S.C.; Tanelli, M.; Brusa, A.; Proverbio, A.M. Single-trial stimuli classification from detected P300 for augmented Brain–Computer Interface: A deep learning approach. Machine Learning with Applications 2022, 9, 100393.
27. Edlinger, G.; Krausz, G.; Groenegress, C.; Holzner, C.; Guger, C.; Slater, M. Brain-computer interfaces for virtual environment control. In Proceedings of the 13th International Conference on Biomedical Engineering: ICBME 2008 3–6 December 2008 Singapore, 2009; Springer; pp. 366–369.
28. Rashid, M.; Sulaiman, N.; PP Abdul Majeed, A.; Musa, R.M.; Ab Nasir, A.F.; Bari, B.S.; Khatun, S. Current status, challenges, and possible solutions of EEG-based brain-computer interface: a comprehensive review. Frontiers in neurorobotics 2020, 25.
29. Shah, V.N.; Singh, R.; Turjya, S.M.; Ahuja, P.; Bandyopadhyay, A.; Swain, S. Unmanned Aerial Vehicles by Implementing Mobile Edge Computing for Resource Allocation. In Proceedings of the 2024 IEEE International Conference on Information Technology, Electronics and Intelligent Communication Systems (ICITEICS); IEEE, 2024; pp. 1–5.
30. Chen, W.d.; Zhang, J.h.; Zhang, J.c.; Li, Y.; Qi, Y.; Su, Y.; Wu, B.; Zhang, S.m.; Dai, J.h.; Zheng, X.x.; et al. A P300 based online brain-computer interface system for virtual hand control. Journal of Zhejiang University SCIENCE C 2010, 11, 587–597.
31. Pfurtscheller, G.; Solis-Escalante, T.; Ortner, R.; Linortner, P.; Muller-Putz, G.R. Self-paced operation of an SSVEP-Based orthosis with and without an imagery-based “brain switch:” a feasibility study towards a hybrid BCI. IEEE transactions on neural systems and rehabilitation engineering 2010, 18, 409–414.
32. Luo, W.; Yin, W.; Liu, Q.; Qu, Y. A hybrid brain-computer interface using motor imagery and SSVEP Based on convolutional neural network. Brain-Apparatus Communication: A Journal of Bacomics 2023, 2, 2258938.
33. Allison, B.Z.; Brunner, C.; Kaiser, V.; Müller-Putz, G.R.; Neuper, C.; Pfurtscheller, G. Toward a hybrid brain–computer interface based on imagined movement and visual attention. Journal of neural engineering 2010, 7, 026007.
34. Pan, K.; Li, L.; Zhang, L.; Li, S.; Yang, Z.; Guo, Y. A Noninvasive BCI System for 2D Cursor Control Using a Spectral-Temporal Long Short-Term Memory Network. Frontiers in Computational Neuroscience 2022, 16, 799019.
35. Pfurtscheller, G.; Allison, B.; Brunner, C.; Bauernfeind, G.; Solis-Escalante, T.; Scherer, R.; Zander, T.; Mueller-Putz, G.; Neuper, C.; Birbaumer, N. The hybrid BCI. Front. Neurosci 2010, 4, 10–3389.
36. Donnerer, M.; Steed, A. Using a P300 brain–computer interface in an immersive virtual environment. Presence: Teleoperators and Virtual Environments 2010, 19, 12–24.
37. Singh, A.; Hussain, A.A.; Lal, S.; Guesgen, H.W. A comprehensive review on critical issues and possible solutions of motor imagery based electroencephalography brain-computer interface. Sensors 2021, 21, 2173.
38. Zhu, H.Y.; Hieu, N.Q.; Hoang, D.T.; Nguyen, D.N.; Lin, C.T. A human-centric metaverse enabled by brain-computer interface: A survey. IEEE Communications Surveys & Tutorials, 2024.
39. Kohli, V.; Tripathi, U.; Chamola, V.; Rout, B.K.; Kanhere, S.S. A review on Virtual Reality and Augmented Reality use-cases of Brain Computer Interface based applications for smart cities. Microprocessors and Microsystems 2022, 88, 104392.
40. Gu, X.; Cao, Z.; Jolfaei, A.; Xu, P.; Wu, D.; Jung, T.P.; Lin, C.T. EEG-based brain-computer interfaces (BCIs): A survey of recent studies on signal sensing technologies and computational intelligence approaches and their applications. IEEE/ACM transactions on computational biology and bioinformatics 2021, 18, 1645–1666.
41. Vega, C.F.; Quevedo, J.; Escandón, E.; Kiani, M.; Ding, W.; Andreu-Perez, J. Fuzzy temporal convolutional neural networks in P300-based Brain–computer interface for smart home interaction. Applied Soft Computing 2022, 117, 108359.
42. Garakani, G.; Ghane, H.; Menhaj, M.B. Control of a 2-DOF robotic arm using a P300-based brain-computer interface. arXiv 2019, arXiv:1901.01422.
43. Medhi, K.; Hoque, N.; Dutta, S.K.; Hussain, M.I. An efficient EEG signal classification technique for Brain–Computer Interface using hybrid Deep Learning. Biomedical Signal Processing and Control 2022, 78, 104005.
44. Mughal, N.E.; Khan, M.J.; Khalil, K.; Javed, K.; Sajid, H.; Naseer, N.; Ghafoor, U.; Hong, K.S. EEG-fNIRS-based hybrid image construction and classification using CNN-LSTM. Frontiers in Neurorobotics 2022, 16, 873239.
45. Chakravarthi, B.; Ng, S.C.; Ezilarasan, M.; Leung, M.F. EEG-based emotion recognition using hybrid CNN and LSTM classification. Frontiers in computational neuroscience 2022, 16, 1019776.
46. Blankertz, B.; Tomioka, R.; Lemm, S.; Kawanabe, M.; Muller, K.R. Optimizing spatial filters for robust EEG single-trial analysis. IEEE Signal processing magazine 2007, 25, 41–56.
47. Bishop, C. Pattern recognition and machine learning. Springer 2006, 2, 5–43.
48. Qi, F.; Wu, W.; Liu, K.; Yu, T.; Cao, Y. A Logistic Regression Based Framework for Spatio-Temporal Feature Representation and Classification of Single-Trial EEG. In Proceedings of the International Conference on Cognitive Systems and Signal Processing, 2020; Springer; pp. 387–394.
49. Hartmann, K.G.; Schirrmeister, R.T.; Ball, T. EEG-GAN: Generative adversarial networks for electroencephalograhic (EEG) brain signals. arXiv 2018, arXiv:1806.01875.
50. Li, Y.; Long, J.; Yu, T.; Yu, Z.; Wang, C.; Zhang, H.; Guan, C. An EEG-based BCI system for 2-D cursor control by combining Mu/Beta rhythm and P300 potential. IEEE Transactions on Biomedical Engineering 2010, 57, 2495–2505.
51. Bernal, S.L.; Beltrán, E.T.M.; Pérez, M.Q.; Romero, R.O.; Celdrán, A.H.; Pérez, G.M. Study of P300 Detection Performance by Different P300 Speller Approaches Using Electroencephalography. In Proceedings of the 2022 IEEE 16th International Symposium on Medical Information and Communication Technology (ISMICT), 2022; IEEE; pp. 1–6.
52. Millán, J.d.R.; Rupp, R.; Müller-Putz, G.R.; Murray-Smith, R.; Giugliemma, C.; Tangermann, M.; Vidaurre, C.; Cincotti, F.; Kübler, A.; Leeb, R.; et al. Combining brain–computer interfaces and assistive technologies: state-of-the-art and challenges. Frontiers in neuroscience 2010, 4, 161.
53. Lécuyer, A.; Lotte, F.; Reilly, R.B.; Leeb, R.; Hirose, M.; Slater, M. Brain-computer interfaces, virtual reality, and videogames. Computer 2008, 41, 66–72.

| Component | Architecture Details |
|---|---|
| Generator (G) | Input: noise vector + stimulus label; 2D transposed convolution layers with BatchNorm + ReLU; LSTM-based temporal feature modeling to capture EEG dependencies; spectral constraints to match EEG frequency characteristics; output: synthetic EEG trials for each stimulus |
| Discriminator (D) | Input: real/synthetic EEG trials; 1D convolutional layers with Leaky ReLU; bidirectional GRU for EEG sequence modeling; output: real/fake classification |
| Classifier (C) | Input: EEG data (real + synthetic); CNN-LSTM hybrid model for feature extraction; output: P300 vs. non-P300 classification to ensure augmentation quality |

| Class | Trials per Stimulus |

|---|---|

| P300 Target | 600 |

| Non-Target | 900 |

| Class | Original Trials | Augmented Trials | Oversampled Trials | Total Trials |

|---|---|---|---|---|

| P300 Target | 500 | 2,000 | 500 | 3,000 |

| Non-Target | 2,000 | 2,000 | 500 | 4,500 |

| Total | 2,500 | 4,000 | 1,000 | 7,500 |

| Simulated User | Portion (rounds 1, 2, 3) | Activity 1 Online Accuracy, % (3 rounds) | Activity 2 Online Accuracy, % (3 rounds) | Activity 3 Online Accuracy, % (2 rounds) | Average, % |
|---|---|---|---|---|---|
| A | 1, Hybrid/MI | 84.5 | 92.6 | 93.2 | 90.1 |
| A | 1, Hybrid/P300 | 91.0 | 84.1 | 78.0 | 84.4 |
| A | 2, MI | 68.9 | 79.4 | 94.5 | 80.9 |
| A | 3, P300 | 86.7 | 80.0 | - | 83.4 |
| B | 1, Hybrid/MI | 91.0 | 87.8 | 94.7 | 91.2 |
| B | 1, Hybrid/P300 | 80.9 | 77.8 | 81.7 | 80.1 |
| B | 2, MI | 90.9 | 87.5 | 99.0 | 92.5 |
| B | 3, P300 | 87.8 | 86.7 | - | 87.3 |
| C | 1, Hybrid/MI | 76.7 | 79.4 | 85.4 | 80.5 |
| C | 1, Hybrid/P300 | 88.9 | 72.2 | 90.0 | 82.9 |
| C | 2, MI | 81.6 | 86.8 | 80.4 | 83.7 |
| C | 3, P300 | 80.0 | 80.1 | - | 80.1 |
| D | 1, Hybrid/MI | 74.4 | 79.6 | 80.8 | 78.3 |
| D | 1, Hybrid/P300 | 93.3 | 82.2 | 87.5 | 87.7 |
| D | 2, MI | 68.4 | 69.5 | 78.6 | 72.2 |
| D | 3, P300 | 86.7 | 93.5 | - | 90.1 |

| Study | BCI Type | Modalities | Avg Accuracy |

|---|---|---|---|

| Li et al. [50] | Hybrid BCI | MI + P300 | 85% (MI & P300) |

| Singh et al. [37] | Single-mode BCI | MI only | 75–80% |

| Sergio et al. [51] | P300-based BCI | P300 | F1-score: 75% |

| Flores Vega et al. [41] | Deep Learning BCI | P300 | 98.6% (SD), 74.3% (SI) |

| Luo et al. [32] | Hybrid BCI | MI + SSVEP | 95.6% |

| Garakani et al. [42] | P300-based BCI | P300 | ∼97% |

| Proposed Work | Hybrid BCI | P300 + MI | 88.5% (P300), 88.5% (MI) |

| Study | Features / Strengths | Limitations |

|---|---|---|

| Li et al. [50] | 2D cursor control with concurrent signal decoding | No adaptive switching; limited task flexibility |

| Singh et al. [37] | General-purpose motor imagery classification in EEG | No hybrid interaction; not integrated with immersive systems |

| Sergio et al. [51] | Character selection using blink-triggered P300 paradigm | Limited to speller task; no spatial interaction |

| Flores Vega et al. [41] | EEG-TCFNet model with LSTM and fuzzy logic | Poor generalization across users; no real-time use |

| Luo et al. [32] | CNN-based decoding with hybrid commands | Static switching; dependent on exogenous visual stimuli |

| Garakani et al. [42] | ERP-based control of 2-DoF robotic arm | Low-dimensional discrete control; no immersive interface |

| Proposed Work | Real-time 3D Metaverse integration; adaptive switching; GAN-based augmentation; CSP+FLDA pipeline | – |

| Session # | Duration | Activities |

|---|---|---|

| Session 1 | 20–30 min | Initial use of MI and P300 control modules |

| Session 2 | 20–30 min | Guided MI and P300 tasks |

| Session 3 | 20–30 min | Repeated command execution (navigation + gadget control) |

| Session 4 | 20–30 min | Mixed-task session under light cognitive load |

| Session 5 | 30 min | Real-time task block in virtual apartment Metaverse |

| Session # | Purpose |

|---|---|

| Session 1 | Baseline performance measurement and familiarization with the hybrid BCI interface. |

| Session 2 | Observe short-term learning progression and initial adaptation. |

| Session 3 | Continued user-specific tuning and calibration of the classifiers, especially for MI. |

| Session 4 | Evaluate medium-term stability and improved efficiency under natural use. |

| Session 5 | Final test of accuracy, response time, usability, and fatigue resilience in an immersive scenario. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).