Submitted: 28 January 2026

Posted: 29 January 2026

Abstract

Keywords:

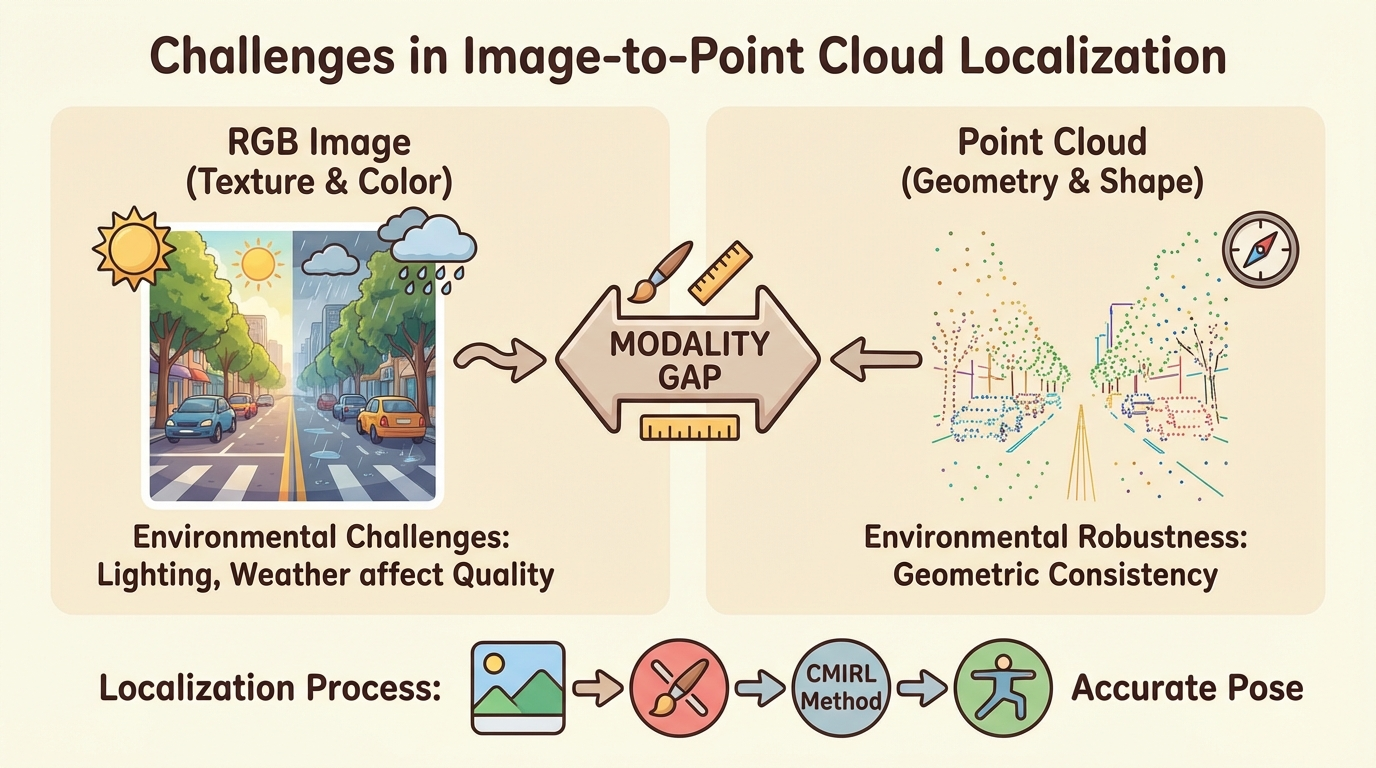

1. Introduction

- We propose a novel framework, CMIRL, for Image-to-PointCloud place recognition that effectively learns cross-modal invariant representations robust to severe environmental changes.

- We introduce an Adaptive Cross-Modal Alignment (ACMA) module and a Cross-Modal Attention Fusion (CMAF) module within a dual-stream encoding architecture to dynamically align modalities and explicitly fuse invariant features.

- We demonstrate that CMIRL significantly outperforms existing state-of-the-art methods on the challenging KITTI dataset in terms of Recall@1 and Recall@1%, showcasing enhanced robustness and accuracy in long-term localization scenarios.
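Since the contributions are stated in terms of Recall@1 and Recall@1%, a minimal sketch of how such retrieval metrics are commonly computed may be useful. It assumes global descriptors compared by Euclidean distance and a precomputed set of true-match database indices per query. The function names, the toy data, and the rounding used for the 1% cutoff are illustrative assumptions, not the paper's evaluation code.

```python
import numpy as np

def recall_at_n(query_desc, db_desc, positives, n):
    """Fraction of queries with at least one true match among the n nearest database entries.

    query_desc: (Q, D) array of query descriptors (e.g., from images).
    db_desc:    (M, D) array of database descriptors (e.g., from point clouds).
    positives:  list of sets; positives[i] holds the database indices that
                count as correct matches for query i (assumed given).
    """
    # Pairwise squared Euclidean distances, shape (Q, M).
    d2 = ((query_desc[:, None, :] - db_desc[None, :, :]) ** 2).sum(axis=-1)
    # Indices of the n closest database entries for every query.
    top_n = np.argsort(d2, axis=1)[:, :n]
    hits = [bool(set(top_n[i].tolist()) & positives[i]) for i in range(len(query_desc))]
    return float(np.mean(hits))

def recall_at_one_percent(query_desc, db_desc, positives):
    """Recall@N with N set to 1% of the database size (at least 1 candidate)."""
    n = max(1, int(round(0.01 * len(db_desc))))
    return recall_at_n(query_desc, db_desc, positives, n)

# Toy example: 4 database descriptors, 2 queries.
db = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [5.0, 5.0]])
queries = np.array([[0.1, 0.0], [4.0, 4.0]])
true_matches = [{1}, {3}]

print(recall_at_n(queries, db, true_matches, 1))  # 0.5: only the second query hits at rank 1
print(recall_at_n(queries, db, true_matches, 2))  # 1.0: the first query's match is at rank 2
```

With a database this small the 1% cutoff collapses to a single candidate, so `recall_at_one_percent` equals Recall@1 here; the two metrics only diverge on databases with more than about 150 entries under this rounding.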

2. Related Work

2.1. Cross-Modal Place Recognition

2.2. Invariant Representation Learning and Feature Fusion

3. Method

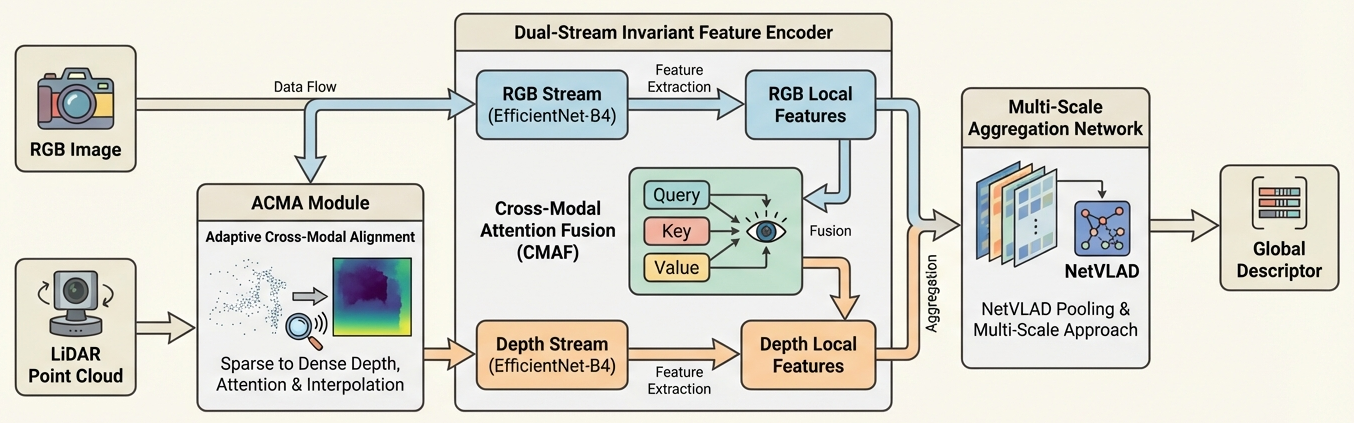

3.1. Overall Architecture

3.2. Adaptive Cross-Modal Alignment (ACMA) Module

3.3. Dual-Stream Invariant Feature Encoder

3.3.1. Local Feature Extraction

3.3.2. Cross-Modal Attention Fusion (CMAF) Module

3.4. Multi-Scale Aggregation Network

4. Experiments

4.1. Experimental Setup

4.1.1. Datasets

4.1.2. Training Details

4.2. Baseline Methods

4.3. Quantitative Results

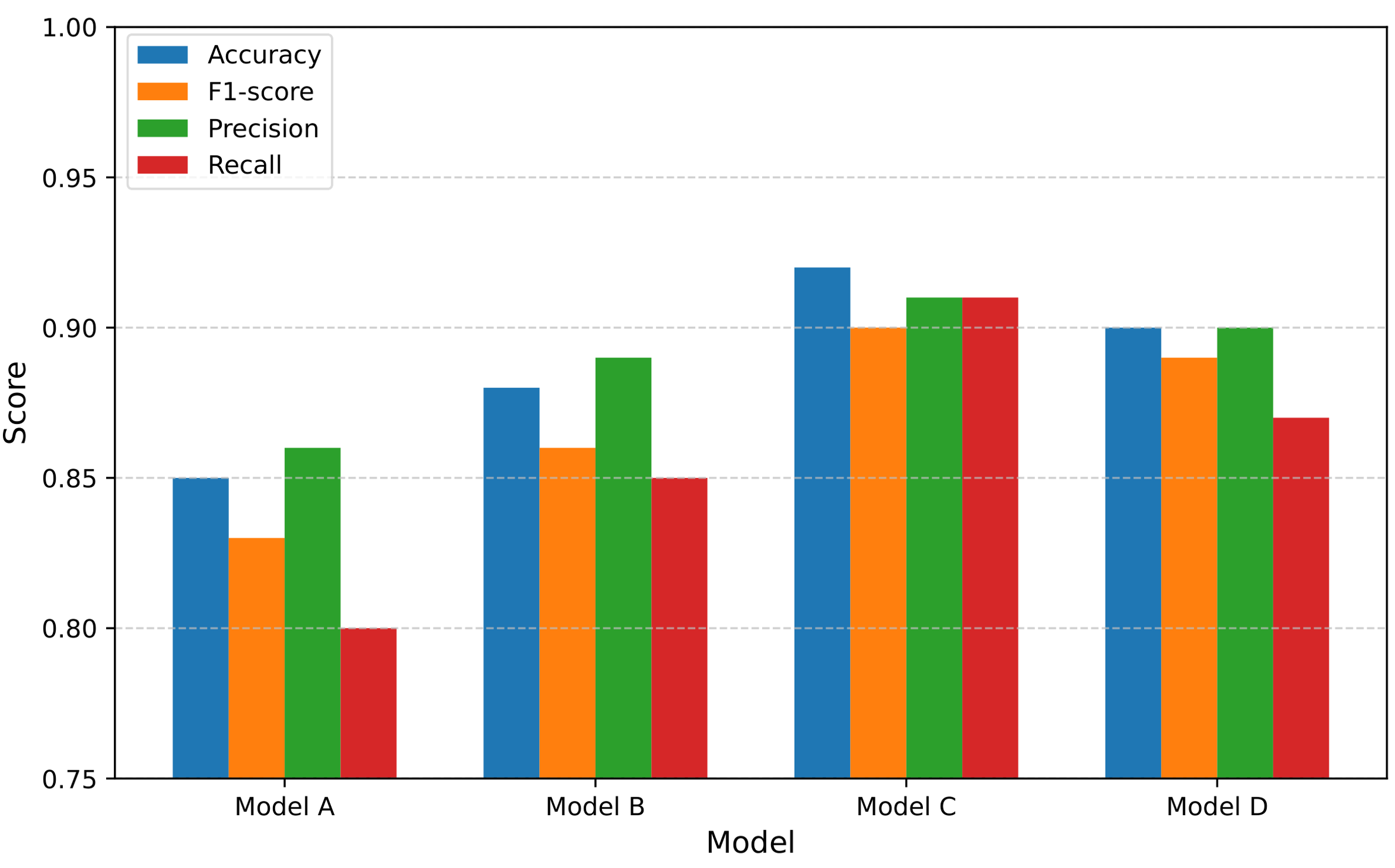

4.4. Ablation Study

- CMIRL w/o ACMA (Rigid FoV Cropping): In this variant, the Adaptive Cross-Modal Alignment (ACMA) module is replaced by the conventional approach of rigidly cropping the LiDAR point cloud to the camera's field of view (FoV), followed by standard depth completion. R@1 falls by 5.4 percentage points on seq 00 (93.5 to 88.1) and by 13.1 points on seq 02 (78.5 to 65.4), the largest drop of the three ablations. This underscores the role of ACMA in dynamically aligning the modalities and generating semantically rich, view-optimized dense depth maps, especially under varying viewpoints.

- CMIRL w/o CMAF (Simple Concatenation): Here, the Cross-Modal Attention Fusion (CMAF) module is replaced with direct concatenation of RGB and depth features followed by a linear projection. The model still performs well, but R@1 decreases by 3.2 percentage points on seq 00 (93.5 to 90.3) and by 6.7 points on seq 02 (78.5 to 71.8). This suggests that the explicit cross-modal attention in the Transformer-based CMAF is important for emphasizing invariant features and for filtering noise and modality-specific artifacts, which simple concatenation does less effectively.

- CMIRL w/o Multi-Scale Aggregation (Single-Scale NetVLAD): To assess the impact of multi-scale pooling, we replace our enhanced NetVLAD with a single-scale NetVLAD module operating on the fused features. The result is a small but consistent drop in R@1 of 1.1 percentage points on seq 00 (93.5 to 92.4) and 1.6 points on seq 02 (78.5 to 76.9). Aggregating features from multiple spatial resolutions therefore gives a modest gain in the discriminative power and robustness of the final global descriptor by capturing information at several contextual levels.
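To make the contrast in the CMAF ablation concrete, the following sketch places the concatenation baseline next to a single-head cross-attention step. It is only an illustration under stated assumptions: the token count, feature width, random weights, and the residual connection are hypothetical, and the actual CMAF module may differ in depth, number of heads, and normalization.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def concat_fusion(rgb, depth, w_proj):
    """Ablation-style baseline: concatenate per-token features, then project linearly."""
    return np.concatenate([rgb, depth], axis=-1) @ w_proj

def cross_attention(query_tokens, context_tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product attention where one modality queries the other."""
    q = query_tokens @ w_q
    k = context_tokens @ w_k
    v = context_tokens @ w_v
    # Each row holds one query token's attention distribution over context tokens.
    weights = softmax(q @ k.T / np.sqrt(q.shape[-1]), axis=-1)
    return weights @ v, weights

rng = np.random.default_rng(0)
num_tokens, dim = 6, 8                     # hypothetical sizes
rgb = rng.normal(size=(num_tokens, dim))   # stand-in for RGB local features
depth = rng.normal(size=(num_tokens, dim)) # stand-in for depth local features

w_proj = rng.normal(size=(2 * dim, dim))
w_q, w_k, w_v = (rng.normal(size=(dim, dim)) for _ in range(3))

fused_concat = concat_fusion(rgb, depth, w_proj)
attended, weights = cross_attention(rgb, depth, w_q, w_k, w_v)
fused_attn = rgb + attended                # residual connection (an assumption)

print(fused_concat.shape, fused_attn.shape)  # (6, 8) (6, 8)
```

The structural difference is that concatenation pairs token i of one modality only with token i of the other, whereas the attention weights let every RGB token draw on any depth token, which is the property the ablation attributes the performance gap to.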

| Method Variant | seq 00 R@1 | seq 00 R@1% | seq 02 R@1 | seq 02 R@1% |
|---|---|---|---|---|
| CMIRL (Full Model) | 93.5 | 99.8 | 78.5 | 98.7 |
| w/o ACMA (Rigid FoV Cropping) | 88.1 | 97.2 | 65.4 | 90.1 |
| w/o CMAF (Simple Concatenation) | 90.3 | 98.5 | 71.8 | 94.6 |
| w/o Multi-Scale Aggregation (Single-Scale NetVLAD) | 92.4 | 99.3 | 76.9 | 98.0 |
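The R@1 reductions quoted in the ablation discussion are absolute differences between rows of the table above. The short check below recomputes them from the tabulated values; the dictionary layout and variable names are illustrative only.

```python
# R@1 values copied from the ablation table.
full_model = {"seq 00": 93.5, "seq 02": 78.5}
variants = {
    "w/o ACMA": {"seq 00": 88.1, "seq 02": 65.4},
    "w/o CMAF": {"seq 00": 90.3, "seq 02": 71.8},
    "w/o Multi-Scale Aggregation": {"seq 00": 92.4, "seq 02": 76.9},
}

# Absolute drop in percentage points relative to the full model.
drops = {
    name: {seq: round(full_model[seq] - r_at_1[seq], 1) for seq in full_model}
    for name, r_at_1 in variants.items()
}

for name, per_seq in drops.items():
    print(name, per_seq)
# w/o ACMA {'seq 00': 5.4, 'seq 02': 13.1}
# w/o CMAF {'seq 00': 3.2, 'seq 02': 6.7}
# w/o Multi-Scale Aggregation {'seq 00': 1.1, 'seq 02': 1.6}
```

These are percentage-point differences, not relative changes. In every variant the drop on seq 02 is larger than on seq 00.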

4.5. Qualitative Analysis and Robustness

4.6. Generalization to Unseen Environments

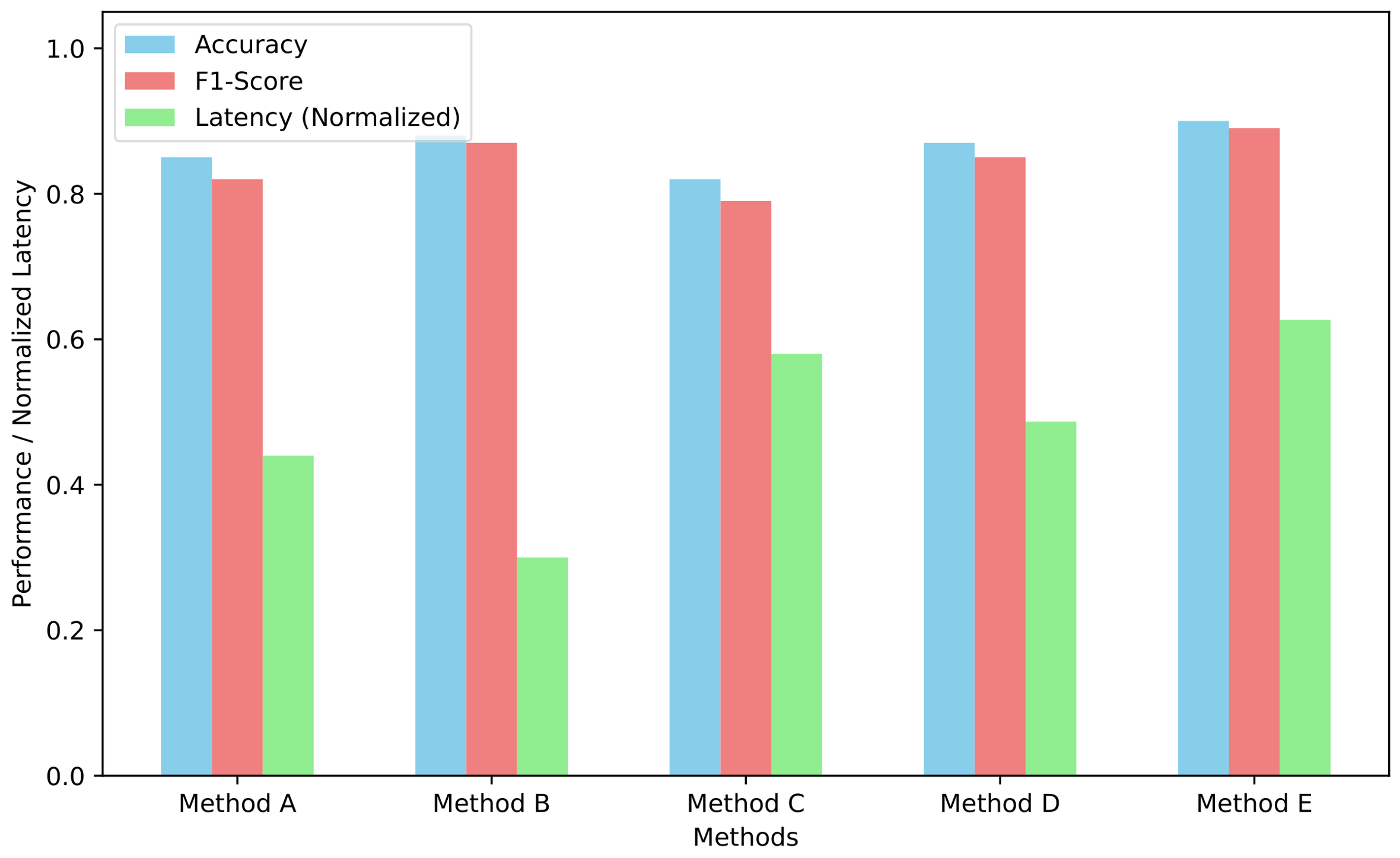

4.7. Computational Performance

5. Conclusion

| Challenging Condition | LEA-I2P-Rec* (R@1%) | CMIRL (R@1%) |
|---|---|---|
| Daylight (Good Conditions) | 99.7 | 99.8 |
| Low Light / Twilight | 96.2 | 98.1 |
| Heavy Shadows | 95.5 | 97.9 |
| Simulated Rain/Fog | 88.0 | 91.5 |
| Significant Viewpoint Change | 94.1 | 96.8 |

| Method | Recall@1 | Recall@1% |
|---|---|---|
| MIM-I2P-Rec | 68.1 | 85.0 |
| PSM-I2P-Rec* | 72.5 | 89.2 |
| LEA-I2P-Rec* | 79.8 | 93.5 |
| Ours (CMIRL) | 83.1 | 95.7 |

| Method | AIT (ms) | TP (M) |
|---|---|---|
| MIM-I2P-Rec | 95.2 | 68.5 |
| PSM-I2P-Rec* | 110.5 | 75.1 |
| LEA-I2P-Rec* | 102.8 | 72.3 |
| Ours (CMIRL) | 98.1 | 69.2 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).