Submitted: 22 January 2026

Posted: 27 January 2026


Abstract

Keywords:

1. Introduction

- Can question-based knowledge encoding improve retrieval performance in RAG systems compared to traditional chunking methods?

- What is the impact of syntactic reranking and “paper-card” summaries on the accuracy and efficiency of information retrieval in scientific texts?

- Can question generation serve as an effective form of knowledge compression for scalable, fine-tuning-free RAG architectures?

2. Literature Review

2.1. Fixed-Size Chunking

2.2. Structure-Based Chunking

2.3. Advances in Multihop Reasoning in RAG Systems

2.4. Semantic Chunking Strategies and Syntactic Limitations in RAG Systems

3. Research Objectives

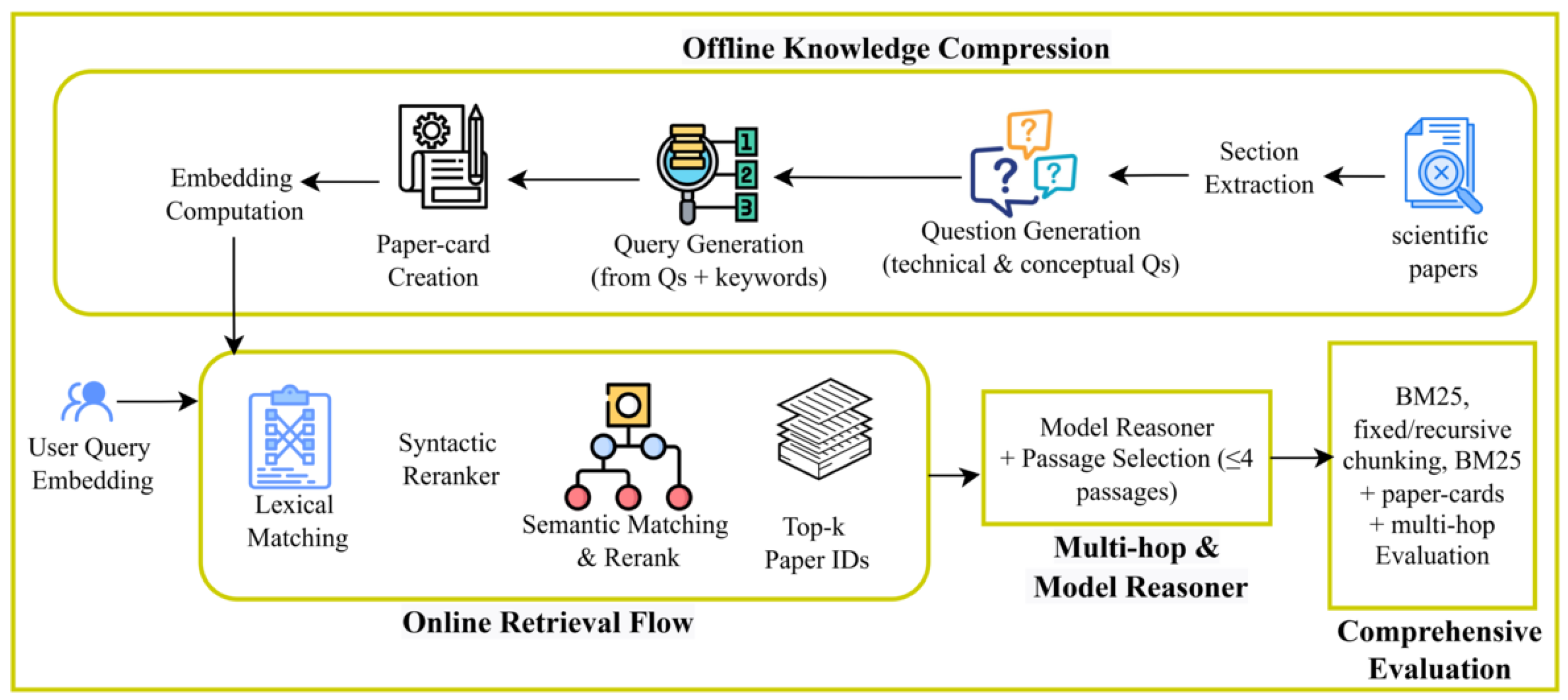

4. Material and Research Methodology

4.1. Single-Hop Document Retrieval

4.2. Multihop Document Retrieval

4.3. Proposed Approach

4.3.1. Knowledge and Context Compression Pipeline

4.3.2. Retrieval Process

4.3.3. Syntactic Re-Ranking

1. Recursive Syntactic Splitting: The query is split recursively at syntactic boundaries defined by part-of-speech tags (ADP, CCONJ) and specific punctuation marks (colons). Splitting continues until no further split points are found, decomposing the query into minimal semantic units while preserving content-bearing segments. Formally, split trigger sets are defined for the part-of-speech tags and for the punctuation marks; for a text segment s, the split points are defined in Equation (3), and the recursive splitting process produces the query segments given in Equation (4).

2. Document Frequency Calculation: For each word w in each query segment, calculate its document frequency (the number of passages containing the word), as shown in Equation (5).

3. Word Frequency Counting: For each word w in the original query, count its raw frequency in every passage, as shown in Equation (6).

4.

5. Phrase-Level Aggregation: For each query segment (phrase) s, collect all passage indices that appear in the top-L for any word in that phrase, as shown in Equation (8).

6. Reverse-Order Union: Process the query segments in reverse order and take the union of all valid indices, as shown in Equation (9).

7. Order Preservation: Rather than being ranked by frequency score, the selected passages are returned in their original document order, as shown in Equation (10). This maintains the semantic and logical flow of the document collection, which is crucial for coherent information retrieval.
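
The steps above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the small word lists `ADP_WORDS` and `CCONJ_WORDS` stand in for a real part-of-speech tagger, the function names and the `top_l` parameter are hypothetical, and because step 4 is not described in this section, the sketch assumes it selects, for each word, the top-L passages by raw frequency. Document frequency (step 2) is used here only to skip words that occur in no passage, which is also an assumption.

```python
import re
from collections import Counter

# Assumption: tiny closed-class word lists stand in for a POS tagger
# that would label tokens as ADP (adpositions) or CCONJ (conjunctions).
ADP_WORDS = {"of", "in", "on", "for", "with", "by", "at", "from", "to"}
CCONJ_WORDS = {"and", "or", "but"}
SPLIT_PUNCT = {":"}


def tokenize(text):
    """Lowercase the text and split it into word and punctuation tokens."""
    return re.findall(r"\w+|[^\w\s]", text.lower())


def split_segment(tokens):
    """Step 1: recursively split a token list at the first trigger token."""
    for i, tok in enumerate(tokens):
        if tok in ADP_WORDS or tok in CCONJ_WORDS or tok in SPLIT_PUNCT:
            left, right = tokens[:i], tokens[i + 1:]
            return ([left] if left else []) + split_segment(right)
    return [tokens] if tokens else []


def rerank(query, passages, top_l=2):
    """Return indices of selected passages in original document order."""
    counts = [Counter(tokenize(p)) for p in passages]
    segments = split_segment(tokenize(query))

    selected = set()
    for segment in reversed(segments):            # step 6: reverse order
        phrase_hits = set()                       # step 5: per-phrase indices
        for word in segment:
            if not word[0].isalnum():             # ignore leftover punctuation
                continue
            # Step 2: document frequency of the word.
            df = sum(1 for c in counts if word in c)
            if df == 0:                           # assumption: skip unseen words
                continue
            # Step 3: raw frequency of the word in every passage.
            freqs = [(c[word], idx) for idx, c in enumerate(counts) if c[word] > 0]
            # Step 4 (assumed): keep the top-L passages by raw frequency.
            freqs.sort(key=lambda pair: (-pair[0], pair[1]))
            phrase_hits.update(idx for _, idx in freqs[:top_l])
        selected |= phrase_hits                   # step 6: union
    return sorted(selected)                       # step 7: document order


passages = [
    "Question generation compresses knowledge.",
    "Chunking splits documents into segments.",
    "Reranking uses syntactic boundaries of the query.",
    "Knowledge compression helps retrieval.",
]
print(rerank("impact of syntactic reranking on retrieval", passages))  # [2, 3]
```

Returning `sorted(selected)` is what implements order preservation: frequency scores decide which passages are kept, but not the order in which they are returned.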

4.4. Dataset

5. Experimental Setup

5.1. Evaluation Methodology

5.1.1. Metric Rationale and Evaluation Scope

5.2. Models

5.3. Evaluation Tasks

6. Results

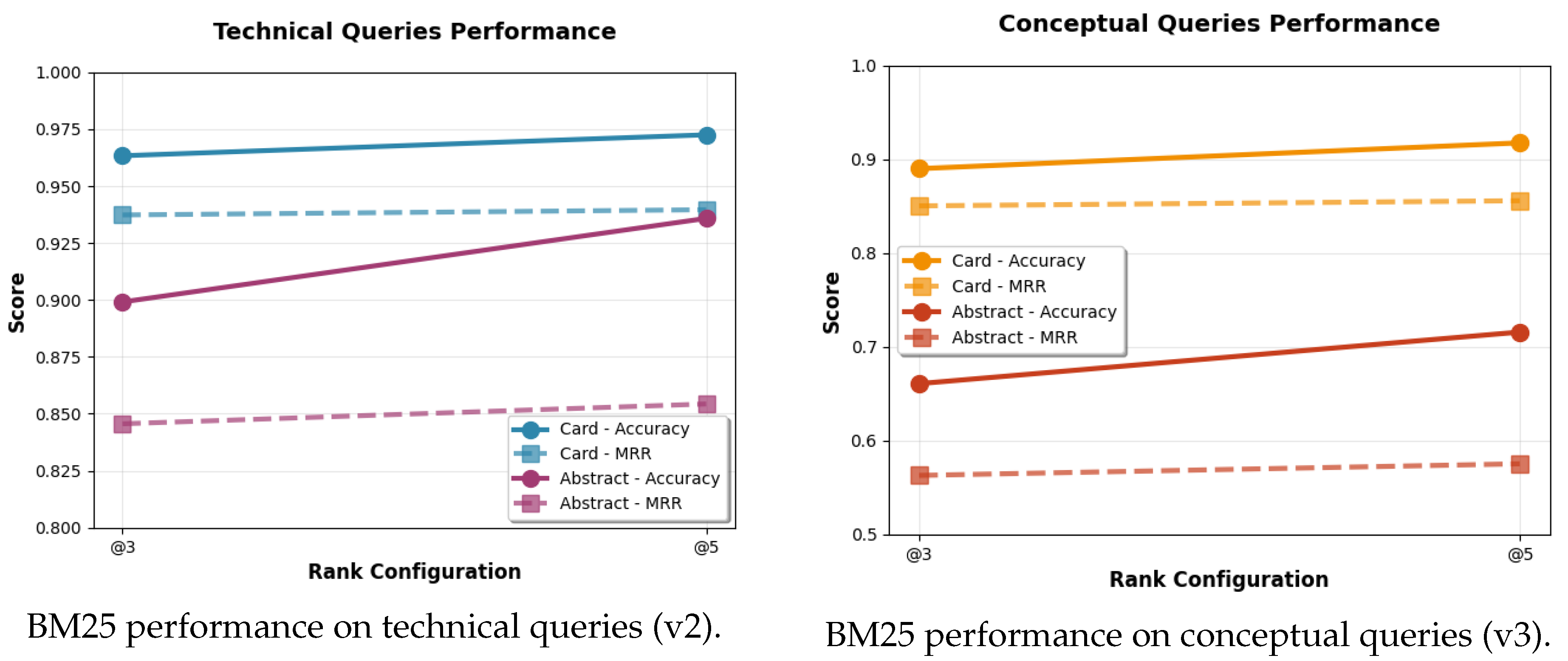

6.1. Task 1: Single-Hop Retrieval

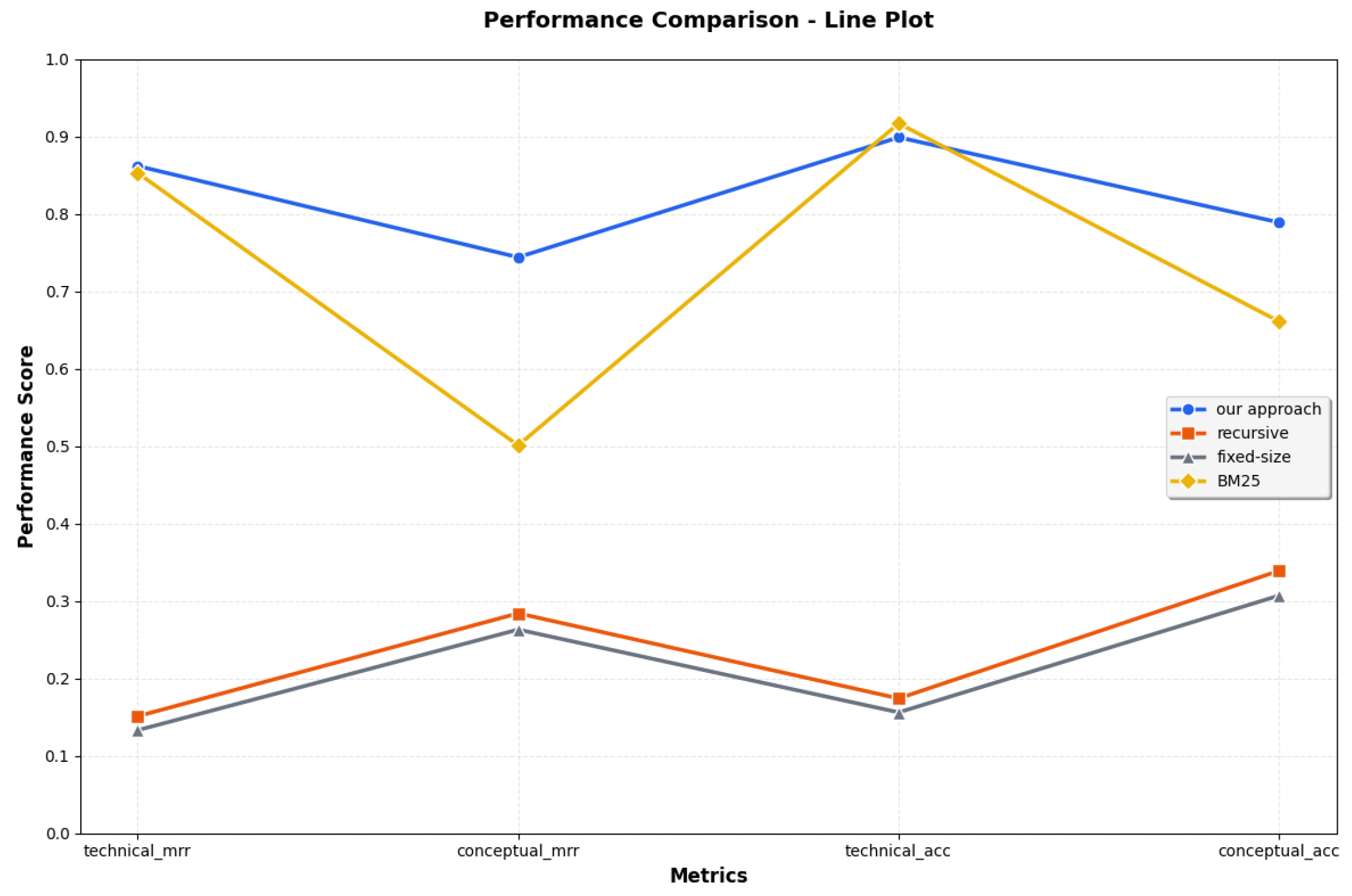

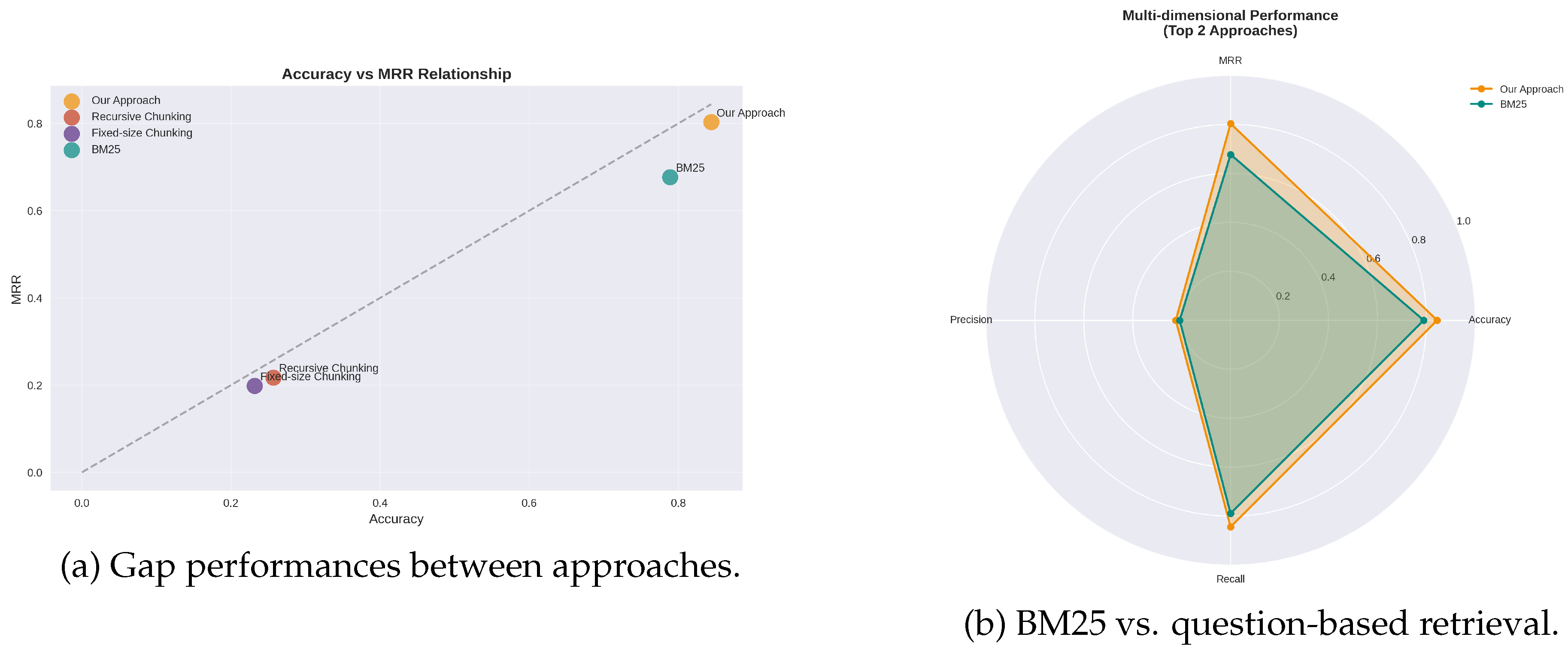

6.1.1. Global Performances

6.2. Task 2: Multi-Hop Retrieval

7. Discussions

7.1. Task 1: Single-Hop Retrieval

7.2. Task 2: Multi-Hop Retrieval

7.3. Limitations

8. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Arslan, M.; Ghanem, H.; Munawar, S.; Cruz, C. A Survey on RAG with LLMs. Procedia Computer Science 2024, 246, 3781–3790.

- Arslan, M.; Munawar, S.; Cruz, C. Business insights using RAG–LLMs: a review and case study. Journal of Decision Systems 2024, 1–30.

- Zhu, Z.; Sun, Z.; Yang, Y. HaluEval-Wild: Evaluating Hallucinations of Language Models in the Wild. arXiv 2024, arXiv:2403.04307.

- Guțu, B. M.; Popescu, N. Exploring data analysis methods in generative models: from Fine-Tuning to RAG implementation. Computers 2024, 13(12), 327.

- Xu, S.; Pang, L.; Shen, H.; Cheng, X. BERM: Training the Balanced and Extractable Representation for Matching to Improve Generalization Ability of Dense Retrieval. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Long Papers), 2023; pp. 6620–6635.

- Jimeno-Yepes, A.; You, Y.; Milczek, J.; Laverde, S.; Li, R. Financial Report Chunking for Effective Retrieval Augmented Generation. arXiv 2024, arXiv:2402.05131.

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Kuttler, H.; Lewis, M.; Yih, W.; Rocktäschel, T.; Riedel, S.; Kiela, D. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. arXiv 2020, arXiv:2005.11401.

- Qu, R.; Tu, R.; Bao, F. S. Is Semantic Chunking Worth the Computational Cost? Findings of the ACL: NAACL 2025; 2025; pp. 2155–2177.

- Merola, C.; Singh, J. Reconstructing Context: Evaluating Advanced Chunking Strategies for Retrieval-Augmented Generation. Proceedings of ACL 2025, 2025.

- Zhao, J.; Ji, Z.; Fan, J. Z.; Wang, H.; Niu, S.; Tang, B.; Xiong, F.; Li, Z. MoC: Mixtures of Text Chunking Learners for Retrieval-Augmented Generation System. arXiv 2025, arXiv:2503.09600.

- Duarte, A. V.; Marques, J.; Graça, M.; Freire, M. F.; Li, L.; Oliveira, A. L. LumberChunker: Long-Form Narrative Document Segmentation. arXiv 2024, arXiv:2406.17526.

- Qian, H.; Liu, Z.; Mao, K.; Zhou, Y.; Dou, Z. Grounding Language Model with Chunking-Free In-Context Retrieval. In Proceedings of the 62nd Annual Meeting of the ACL (Long Papers), 2024; pp. 1298–1311.

- Devlin, J. BERT: Pre-training of deep bidirectional transformers for language understanding. arXiv 2018, arXiv:1810.04805.

- Goldberg, Y. Assessing BERT’s syntactic abilities. arXiv 2019, arXiv:1901.05287.

- Liu, N. F.; Gardner, M.; Belinkov, Y.; Peters, M. E.; Smith, N. A. Linguistic knowledge and transferability of contextual representations. arXiv 2019, arXiv:1903.08855.

- Iwamoto, R.; Yoshida, I.; Kanayama, H.; Ohko, T.; Muraoka, M. Incorporating syntactic knowledge into pre-trained language model using optimization for overcoming catastrophic forgetting. Findings of EMNLP 2023, 2023; pp. 10981–10993.

- Wang, X.; Gao, T.; Zhu, Z.; Zhang, Z.; Liu, Z.; Li, J.; Tang, J. KEPLER: A Unified Model for Knowledge Embedding and Pre-trained Language Representation. TACL 2021, 9, 176–194.

- Bai, J.; Wang, Y.; Chen, Y. Syntax-BERT: Improving Pre-trained Transformers with Syntax Trees. Proceedings of EACL; 2021; pp. 3011–3020.

- Chun, Y.; Kim, M.; Kim, D.; Park, C.; Lim, H. Enhancing Automatic Term Extraction with Large Language Models via Syntactic Retrieval. In Findings of ACL; 2025; pp. 9916–9926.

- Ahmad, W.; Li, H.; Chang, K.-W.; Mehdad, Y. Syntax-augmented Multilingual BERT for Cross-lingual Transfer. Proceedings of ACL and IJCNLP, 2021; pp. 4538–4554.

- Arif, R.; Bashir, M. Question Answer Re-Ranking using Syntactic Relationship. Proceedings of ICOSST, 2021; pp. 1–6.

- Bai, Y.; Lv, X.; Zhang, J.; Lyu, H.; Tang, J.; Huang, Z.; Du, Z.; Liu, X.; Zeng, A.; Hou, L.; Dong, Y.; Tang, J.; Li, J. LongBench: A Bilingual, Multitask Benchmark for Long Context Understanding. arXiv 2023, arXiv:2308.14508.

- Bai, Y.; Lv, X.; Zhang, J.; Lyu, H.; Tang, J.; Huang, Z.; Du, Z.; Liu, X.; Zeng, A.; Hou, L.; Dong, Y.; Tang, J.; Li, J. LongBench: A Bilingual, Multitask Benchmark for Long Context Understanding. In Proceedings of the 62nd Annual Meeting of ACL, 2024; pp. 3119–3137.

- Dubey, A.; Others. The Llama 3 Herd of Models. arXiv 2024, arXiv:2407.21783.

- Touvron, H.; Others. Llama 2: Open Foundation and Fine-Tuned Chat Models. arXiv 2023, arXiv:2307.09288.

- Zheng, L.; Others. Judging LLM-as-a-judge with MT-Bench and Chatbot Arena. arXiv 2023, arXiv:2306.05685.

- Meta Team. Llama 3.2: Revolutionizing edge AI and vision with open, customizable models. Available online: https://tinyurl.com/p9y46bv6 (accessed on 4 November 2025).

- Boteva, V.; Gholipour, D.; Sokolov, A.; Riezler, S. A Full-Text Learning to Rank Dataset for Medical Information Retrieval. Proceedings of ECIR; 2016.

- Wang, M.; Others. Leave No Document Behind: Benchmarking Long-Context LLMs with Extended Multi-Doc QA. Proceedings of EMNLP, 2024; pp. 5627–5646.

- Islam, P.; Others. FinanceBench: A New Benchmark for Financial Question Answering. arXiv 2023, arXiv:2311.11944.

- Dasigi, P.; Others. A Dataset of Information-Seeking Questions and Answers Anchored in Research Papers. arXiv 2021, arXiv:2105.03011.

- Bai, Y.; Others. LongBench v2: Towards Deeper Understanding and Reasoning on Realistic Long-context Multitasks. arXiv 2024, arXiv:2412.15204.

- Rackauckas, Z. Rag-Fusion: A New Take on Retrieval-Augmented Generation. arXiv 2024, arXiv:2402.03367.

- Xiao, J.; Wu, W.; Zhao, J.; Fang, M.; Wang, J. Enhancing long-form question answering via reflection with question decomposition. Information Processing & Management 2025, 62, 104274. Available online: https://www.sciencedirect.com/science/article/pii/S0306457325002158.

- Sun, S.; Zhang, K.; Li, J.; Yu, M.; Hou, K.; Wang, Y.; Cheng, X. Retriever-generator-verification: A novel approach to enhancing factual coherence in open-domain question answering. Information Processing & Management 2025, 62, 104147. Available online: https://www.sciencedirect.com/science/article/pii/S0306457325000883.

- Rezaei, M.; Dieng, A. Vendi-RAG: Adaptively Trading-Off Diversity And Quality Significantly Improves Retrieval Augmented Generation With LLMs. 2025. Available online: https://arxiv.org/abs/2502.11228.

- Gao, Z.; Zhang, Y.; Zhang, S.; Wang, L.; Li, D. Dr3: A Multi-Stage Discriminate–Recompose–Resolve Framework for Reducing Off-Topic Responses. arXiv 2024, arXiv:2403.12393.

- Ji, Y.; Huang, Z.; Cen, R.; Zhang, Q. DEC: A Resource-Friendly Dynamic Enhancement Chain for Multi-Hop Question Answering. arXiv 2025, arXiv:2506.17692.

- Press, O.; Cheng, L.; Liu, M.; Aharoni, N. Retrieve–Summarize–Plan: An Iterative Framework for Multi-Hop Reasoning. arXiv 2024, arXiv:2407.13101.

- Cao, Y.; Zhou, R.; Chen, J.; Zhao, S. Data Augmentation for Real-World Multi-Hop Reasoning via Synthetic Knowledge Graph Expansion. arXiv 2025, arXiv:2504.20752.

- Zhang, X.; Garg, S.; Lin, K.; Jurafsky, D. GenDec: Robust Generative Multi-Hop Question Decomposition. arXiv 2024, arXiv:2402.11166.

- Zhu, R.; Liu, X.; Sun, Z.; Wang, Y.; Hu, W. Mitigating Lost-in-Retrieval Problems in Retrieval Augmented Multi-Hop Question Answering. 2025. Available online: https://arxiv.org/abs/2502.14245.

| 1 | The indices help in tracking the correct passages for good ordering. |

| 2 | For the current study, the first 200 documents were selected for the 2WikiMultihopQA_e |

| 3 | A fine-tuned model developed by Tethys Research for educational purposes; excluded from the second evaluation for transparency. |

| Technique | Number of Papers |
|---|---|
| Fixed-size Chunking | 109 |
| Recursive Chunking | 109 |
| BM25 | 109 |

| Approach | Acc. | MRR |
|---|---|---|
| Our Approach | 0.844 | 0.803 |
| Recursive Chunking | 0.256 | 0.217 |
| Fixed-size Chunking | 0.231 | 0.198 |
| BM25 | 0.789 | 0.677 |

| Method | Top-3 Accuracy | Top-3 MRR | Top-5 Accuracy | Top-5 MRR |
|---|---|---|---|---|
| Approach | 0.880 | 0.857 | 0.917 | 0.866 |
| Recursive | 0.165 | 0.148 | 0.183 | 0.152 |
| Chunk | 0.146 | 0.131 | 0.165 | 0.135 |
| BM25 | 0.899 | 0.848 | 0.935 | 0.856 |

| Conceptual | Acc. at 3 | MRR at 3 | Acc. at 5 | MRR at 5 |
|---|---|---|---|---|
| Approach | 0.770 | 0.740 | 0.807 | 0.748 |
| Recursive | 0.321 | 0.279 | 0.357 | 0.288 |
| Chunk | 0.293 | 0.259 | 0.321 | 0.266 |
| BM25 | 0.605 | 0.489 | 0.715 | 0.513 |

| Technical | Acc. at 3 | MRR at 3 | Acc. at 5 | MRR at 5 |
|---|---|---|---|---|
| BM25 (Card) | 0.963 | 0.937 | 0.972 | 0.939 |
| BM25 (Abs) | 0.899 | 0.845 | 0.935 | 0.854 |

| Conceptual | Acc. at 3 | MRR at 3 | Acc. at 5 | MRR at 5 |
|---|---|---|---|---|
| BM25 (Card) | 0.889 | 0.850 | 0.917 | 0.855 |
| BM25 (Abs) | 0.660 | 0.562 | 0.715 | 0.575 |
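
The tables above report accuracy and MRR at cut-offs 3 and 5. The exact definitions are not given in this section, so the following sketch uses the standard ones as an assumption: accuracy at k is the fraction of queries whose target document appears in the top k results, and MRR at k averages the reciprocal rank of the target (counted as 0 when it is not in the top k). The function names and the single-target-per-query setup are hypothetical.

```python
def accuracy_at_k(rankings, targets, k):
    """Fraction of queries whose target document is in the top k results."""
    hits = sum(1 for ranked, target in zip(rankings, targets) if target in ranked[:k])
    return hits / len(targets)


def mrr_at_k(rankings, targets, k):
    """Mean reciprocal rank of the target, counting 0 if it is outside the top k."""
    total = 0.0
    for ranked, target in zip(rankings, targets):
        top = ranked[:k]
        if target in top:
            total += 1.0 / (top.index(target) + 1)
    return total / len(targets)


# Three toy queries: target ranked first, ranked second, and not retrieved.
rankings = [["p1", "p2", "p3"], ["p4", "p1", "p2"], ["p5", "p6", "p7"]]
targets = ["p1", "p1", "p9"]
print(accuracy_at_k(rankings, targets, k=3))  # 2 of 3 queries hit
print(mrr_at_k(rankings, targets, k=3))       # (1 + 1/2 + 0) / 3 = 0.5
```

Under these definitions MRR can never exceed accuracy at the same cut-off, which matches the pattern in every row of the tables.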

| Models | Samples of both datasets |
|---|---|
| Llama2-7B-chat-4k | 200–200 |
| Vicuna-7B | 200–200 |
| Llama3-8B-8k | 200–200 |

| Method | Records |
|---|---|
| Recursive Chunking | 38,509 |
| Fixed-size Chunking (350 words) | 44,809 |
| Paper-cards (ours) | 109 |
| Main research queries (ours) | 95 |
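
To make the fixed-size baseline in the table concrete, the sketch below splits a text into 350-word chunks. It is an illustration only: whitespace tokenisation, the function name `fixed_size_chunks`, and the optional `overlap` parameter (0 by default) are assumptions, since this section states only the 350-word chunk size.

```python
def fixed_size_chunks(text, chunk_words=350, overlap=0):
    """Split text into chunks of at most chunk_words whitespace-separated words."""
    if chunk_words <= 0 or not 0 <= overlap < chunk_words:
        raise ValueError("need chunk_words > 0 and 0 <= overlap < chunk_words")
    words = text.split()
    step = chunk_words - overlap
    chunks = []
    start = 0
    while start < len(words):
        chunks.append(" ".join(words[start:start + chunk_words]))
        if start + chunk_words >= len(words):
            break  # the last chunk already reaches the end of the text
        start += step
    return chunks


# An 800-word toy document yields chunks of 350, 350 and 100 words.
document = " ".join(f"w{i}" for i in range(800))
print([len(chunk.split()) for chunk in fixed_size_chunks(document)])  # [350, 350, 100]
```

Every chunk becomes one indexed record, which is why chunk-based methods produce tens of thousands of records for the same corpus that yields 109 paper-cards.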

| Models | F1 |
|---|---|
| Llama2-7B-chat-4k (ours) | 0.520 |
| CFIC-7B | 0.412 |
| Llama2-7B-chat-4k | 0.328 |
| Vicuna-7B (ours) | 0.340 |
| Vicuna-7B (CFIC) | 0.233 |
| Llama3 (ours) | 0.550 |
| GPT-3.5-Turbo-16k | 0.377 |
| Llama_index | 0.117 |
| PPL chunking | 0.141 |
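
The F1 scores above are reported without a definition in this section. A common choice for question-answering benchmarks is token-level F1 between the predicted and the reference answer, and the sketch below shows that variant as an assumption; the function name `token_f1` and the simple lowercase, whitespace-based normalisation are hypothetical and may differ from the scoring actually used.

```python
from collections import Counter


def token_f1(prediction, reference):
    """Token-level F1 between a predicted answer and a reference answer."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    if not pred_tokens or not ref_tokens:
        # Both empty counts as a match; exactly one empty counts as a miss.
        return float(pred_tokens == ref_tokens)
    # Multiset intersection counts each shared token at most as often as it
    # occurs in both answers.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


# One of four predicted tokens matches the single reference token:
# precision 0.25, recall 1.0, F1 0.4.
print(token_f1("the city of Paris", "Paris"))
```

A verbose answer that contains the correct entity is penalised through precision, so concise generations score higher under this metric.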

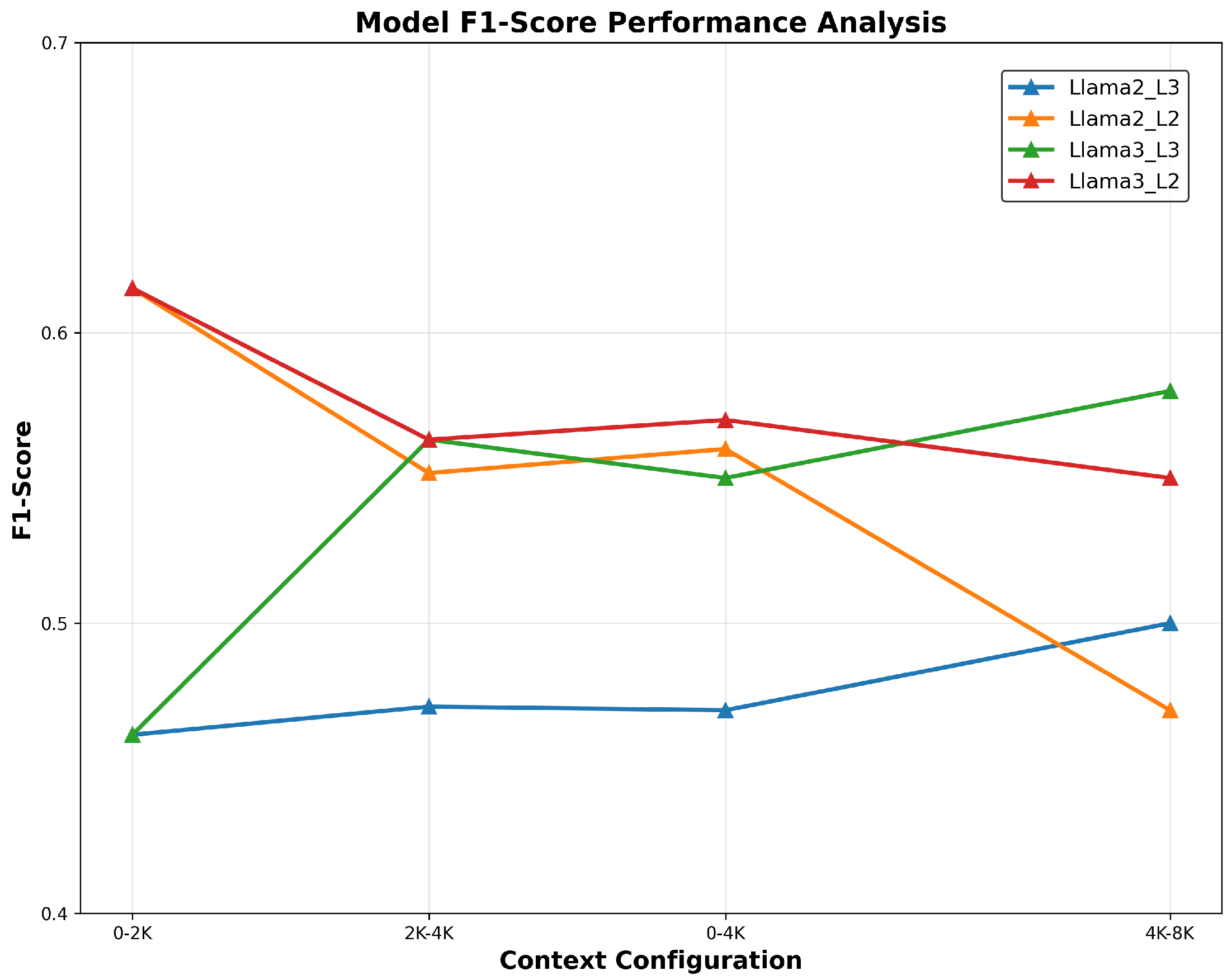

| Models | 0-4K | 4K-8K |
|---|---|---|
| Llama2-7B-chat-4k_L3 (ours) | 0.470 | 0.500 |
| Llama2-7B-chat-4k_L2 (ours) | 0.560 | 0.470 |
| Llama3_L3 (ours) | 0.550 | 0.580 |
| Llama3_L2 (ours) | 0.550 | 0.580 |
| GPT-3.5-Turbo-16k | 0.498 | 0.451 |
| Llama2-7B-chat-4k | 0.333 | 0.225 |

| Method / Paper Group | Core Idea | Strengths | Limitations | Example Works |
|---|---|---|---|---|
| Fixed-size Chunking | Split documents into equal-length segments (often with overlap). | Simple, robust, low computational cost; competitive performance when tuned. | Ignores document structure; can break semantic units; limited adaptability. | [7]: 100-word passages for early RAG; [8,9]: 200-word chunks performing on par with semantic chunking. |
| Structure-based Chunking | Split documents using natural hierarchy (HTML, headings, recursion, RL-based segmentation). | More coherent chunks; improved QA scores (e.g., 84% on FinanceBench; F1 81.8/62.0 on CoQA/QuAC). | Fragile with messy real-world documents, inconsistent formatting, noisy HTML; limited web-scale reliability. | [5,14]: RL recurrent chunker; [16]: Meta-chunking with Qwen2/Baichuan2. |
| Semantic Chunking | Group text using embedding-based semantic similarity. | Conceptually adaptive; captures meaning across spans. | High computational cost; often minimal retrieval gains; embedding drift. | [10]. |
| Improved Semantic / Chunk-Free Approaches | Use LLMs or full-text encoding to find natural transitions or remove chunking entirely. | Better retrieval accuracy; coherent boundaries; avoids chunk fragmentation. | Much higher computational cost; not always scalable. | LumberChunker [11]; Chunking-Free Retrieval [12]; Mixture of Chunkers (MoC) [10]. |
| RGV Framework | Retrieve, generate and verify answers to reduce hallucinations. | Improves factual coherence and QA reliability. | Complex multi-step pipeline reduces scalability; does not address chunking or representation. | [35]. |
| Syntactic Competence Limitations in LLMs | Analysis of LLM syntactic knowledge and probing studies. | Reveals partial syntactic awareness that inspires new chunking methods. | Transformers capture syntax inconsistently; cross-layer misalignment; catastrophic forgetting. | Probing studies: [16,17,18]. |
| Syntax-aware Methods | Inject or leverage syntactic structure (UD trees, tree kernels, syntax-enhanced encoders). | Improves re-ranking, NLU tasks, cross-lingual transfer, few-shot selection. | Requires tree extraction; sensitive to parser errors; high preprocessing cost. | Syntax-BERT [19]; UD-based transfer [21]; syntactic re-ranking [22]; ATE selection [20]. |
| Decomposition-Reflection (DR) | Break complex questions into sub-questions and refine via reflection. | Stronger long-form reasoning; improved multi-paragraph answer quality. | Closed-book; no retrieval, no chunking, no semantic compression; not useful for RAG. | [34]. |
| Our Proposed Method | Compress documents into query-aligned representations (paper-cards) for precise retrieval and minimal storage. | Reduces chunking errors; boosts retrieval (MRR drop only 12% vs 50% for BM25); highly storage-efficient; scalable. | Requires controlled generation pipeline; depends on compression quality. | Current work. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).