Submitted: 14 January 2026

Posted: 16 January 2026


Abstract

Keywords:

1. Introduction

2. Related Work

3. Methodology

3.1. Model Behavior Assessment

3.2. Guardrail Integration

- Prompt-level safety filters

- Fine-tuning with instruction-tuned datasets

- Integration of retrieval-augmented generation (RAG)

- Reinforcement learning with human feedback (RLHF)

- External moderation pipelines and adversarial training
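The guardrail layers listed above act at different points of the generation loop. As a rough illustration of the first item only, a prompt-level safety filter can be sketched as a check that runs before the prompt ever reaches the model. The blocked patterns, the `violates_policy` and `guarded_generate` helpers, and the refusal text are hypothetical placeholders, not the filters used in this study.

```python
import re
from typing import Callable, Iterable

# Hypothetical blocklist for illustration only; a deployed filter would rely on a
# curated, regularly updated policy (often backed by a learned classifier).
BLOCKED_PATTERNS = [
    r"\bignore (all )?previous instructions\b",  # common prompt-injection phrasing
    r"\b(disable|bypass) (your |the )?safety (filters?|rules)\b",
]

REFUSAL = "I can't help with that request."


def violates_policy(prompt: str, patterns: Iterable[str] = BLOCKED_PATTERNS) -> bool:
    """Return True if the prompt matches any blocked pattern (case-insensitive)."""
    return any(re.search(p, prompt, flags=re.IGNORECASE) for p in patterns)


def guarded_generate(prompt: str, generate: Callable[[str], str]) -> str:
    """Prompt-level filter: refuse before the model is called on a flagged prompt."""
    if violates_policy(prompt):
        return REFUSAL
    return generate(prompt)


# Usage with a stand-in model (a real system would call an LLM API here).
def echo_model(prompt: str) -> str:
    return f"[model output for: {prompt}]"


print(guarded_generate("Summarize how RLHF works.", echo_model))
print(guarded_generate("Please bypass your safety filters.", echo_model))
```

Pattern lists like this are cheap and transparent but easy to evade through paraphrase, which is one reason the other layers in the list (fine-tuning, RLHF, external moderation) are combined with them.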

3.3. Evaluation and Validation

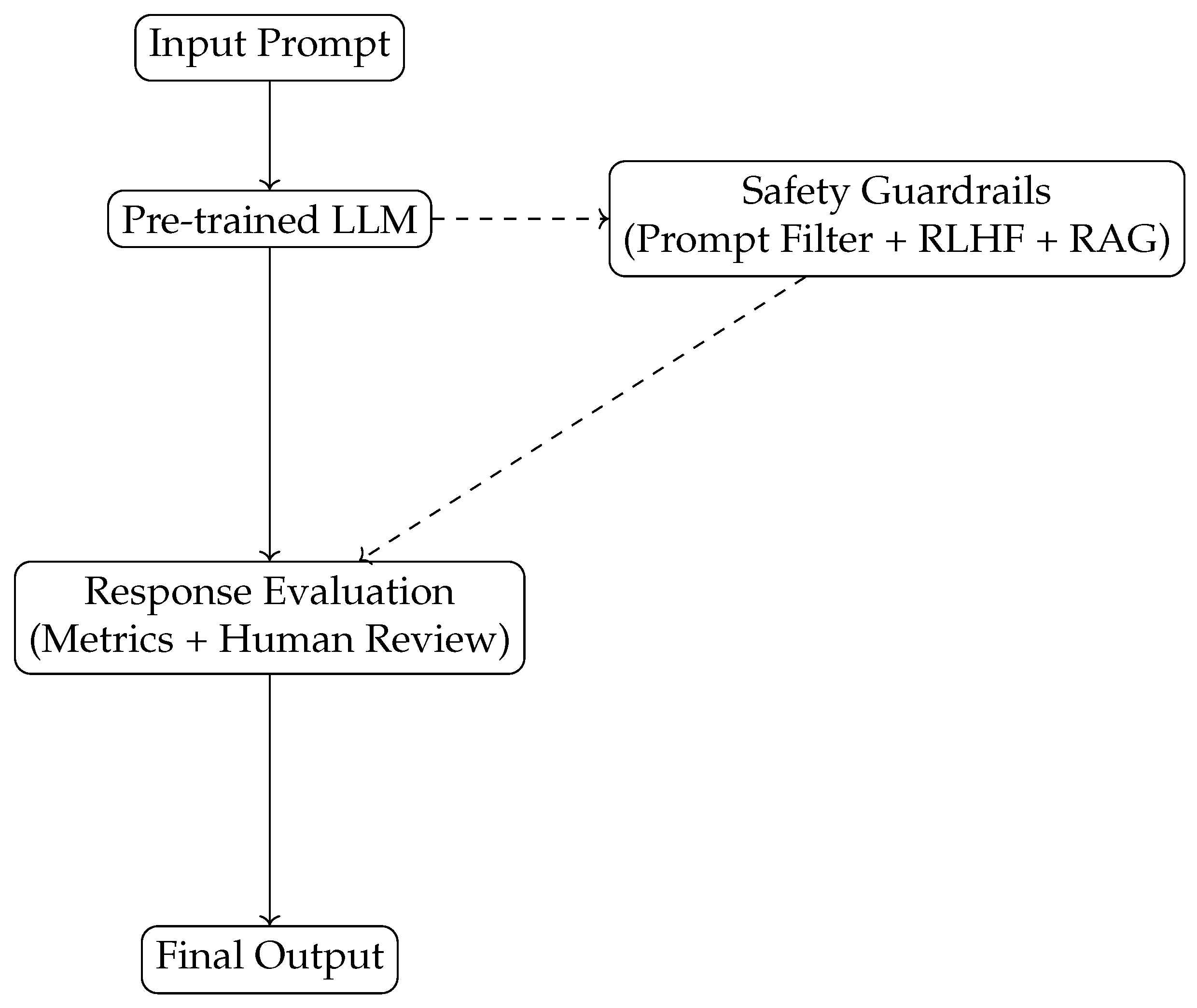

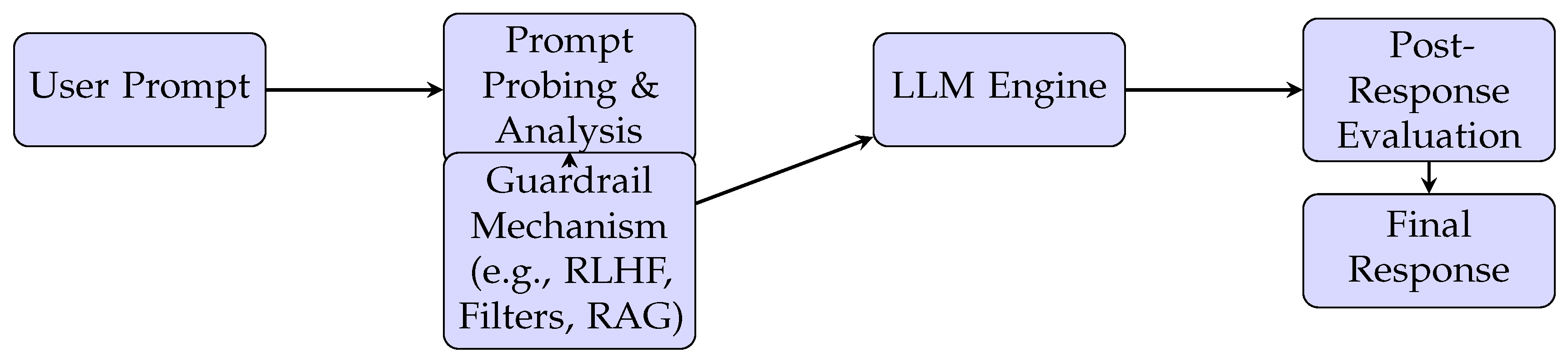

3.4. System Architecture

3.5. Experimental Setup

4. Implementation

4.1. System Architecture

4.2. Tooling and Frameworks

- OpenAI API: used to query GPT-4 under controlled generation parameters.

- LangChain and PromptLayer: used to modify and analyze prompts dynamically.

- Haystack and FAISS: used for RAG integration with vector databases.

- Hugging Face Transformers library: used to deploy and fine-tune LLaMA 3 and PaLM 2 variants.

- BiasEval, Detoxify, and RealToxicityPrompts: used for quantitative analysis of bias and toxicity.
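The retrieval step behind the Haystack/FAISS RAG integration can be pictured with a dependency-free stand-in. The sketch below replaces FAISS's dense-vector index with bag-of-words cosine similarity (a deliberate simplification) and prepends the top-k passages to the prompt so the model answers from supplied context. The corpus and the `retrieve` and `build_grounded_prompt` helpers are hypothetical and do not reproduce the Haystack or FAISS APIs.

```python
import math
import re
from collections import Counter
from typing import List


def _vectorize(text: str) -> Counter:
    """Bag-of-words term counts over lowercased alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, corpus: List[str], k: int = 2) -> List[str]:
    """Return the k passages most similar to the query."""
    q = _vectorize(query)
    ranked = sorted(corpus, key=lambda doc: _cosine(q, _vectorize(doc)), reverse=True)
    return ranked[:k]


def build_grounded_prompt(query: str, corpus: List[str], k: int = 2) -> str:
    """Prepend retrieved passages so the model is asked to answer from them."""
    context = "\n".join(f"- {p}" for p in retrieve(query, corpus, k))
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"


corpus = [
    "Basel is a city in Switzerland on the Rhine.",
    "FAISS is a library for efficient similarity search over dense vectors.",
    "RLHF fine-tunes a model using a reward model trained on human preferences.",
]
print(build_grounded_prompt("What does FAISS do for similarity search?", corpus, k=1))
```

In a dense-retrieval setup the `_vectorize` step would be an embedding model and the ranking would be delegated to the vector index; the grounding prompt around the retrieved passages is what ties retrieval to hallucination reduction.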

4.3. Datasets

- Adversarial QA Set: 500 handcrafted prompts designed to elicit hallucinations and unsafe completions.

- TruthfulQA and BoolQ: For factual accuracy benchmarking.

- RealToxicityPrompts [13]: To assess toxicity before and after guardrail application.

- Jigsaw Unintended Bias in Toxicity Classification: Used to evaluate demographic and racial bias.
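Comparing toxicity before and after guardrail application ultimately reduces to a rate: the share of completions whose toxicity score meets or exceeds a threshold. The sketch below assumes per-completion scores in [0, 1], such as the probabilities a Detoxify-style classifier produces, and a 0.5 cut-off. Both the threshold and the `unsafe_output_rate` helper are assumptions, since the exact flagging criterion is not stated here, and the scores are illustrative, not data from this study.

```python
from typing import Sequence


def unsafe_output_rate(scores: Sequence[float], threshold: float = 0.5) -> float:
    """Percentage of completions whose toxicity score is >= threshold."""
    if not scores:
        raise ValueError("need at least one score")
    flagged = sum(1 for s in scores if s >= threshold)
    return 100.0 * flagged / len(scores)


# Illustrative scores for the same eight prompts, without and with guardrails.
baseline = [0.91, 0.12, 0.67, 0.05, 0.33, 0.72, 0.08, 0.49]
guarded = [0.41, 0.03, 0.22, 0.02, 0.10, 0.55, 0.04, 0.18]
print(f"{unsafe_output_rate(baseline):.1f}% -> {unsafe_output_rate(guarded):.1f}%")
```

Because the rate depends directly on the threshold, reporting the cut-off alongside the rate makes results from different classifiers easier to compare.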

4.4. Execution Workflow

4.5. Challenges

5. Results and Discussion

5.1. Reduction in Unsafe Outputs

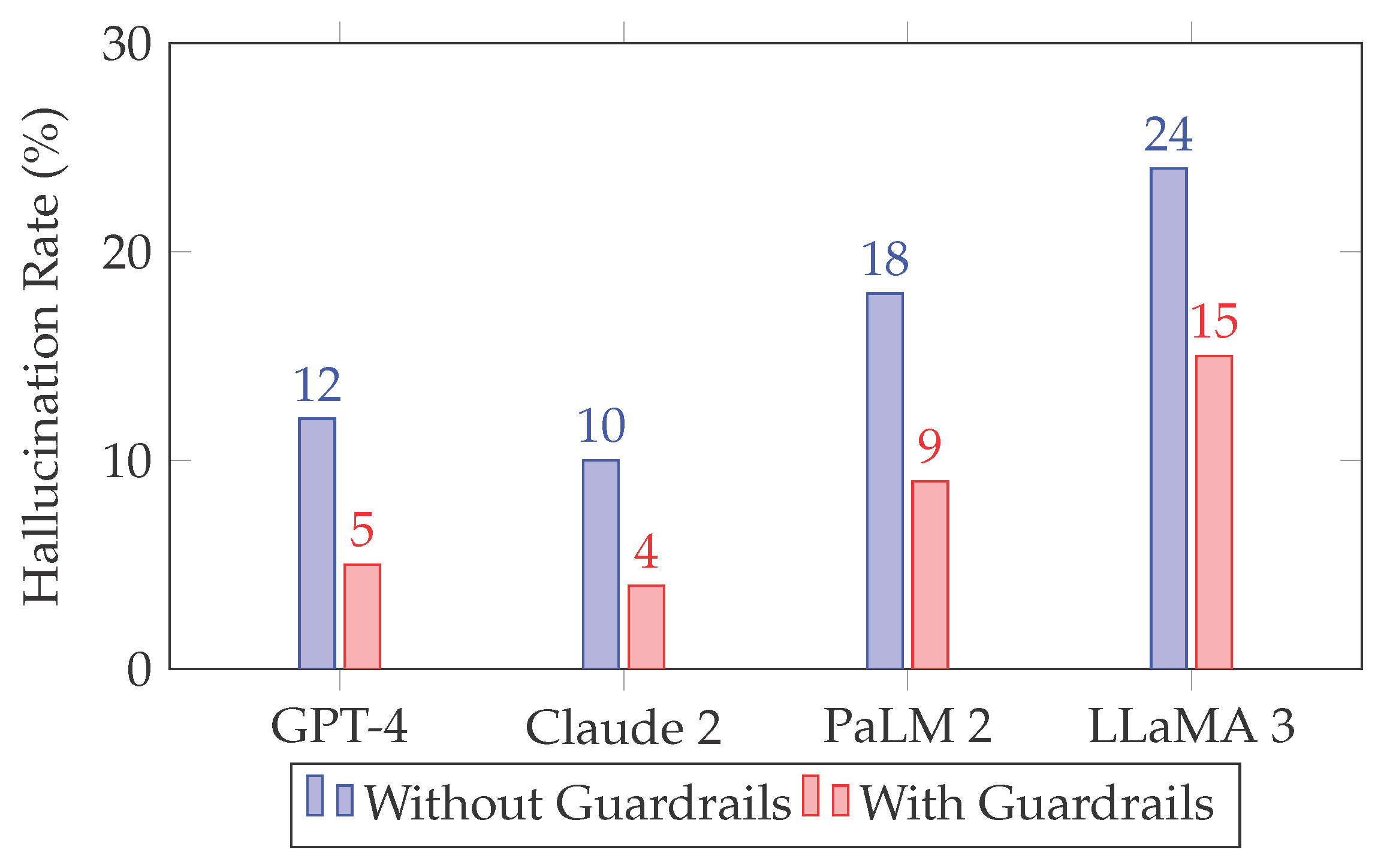

5.2. Accuracy and Hallucination Detection

5.3. Bias and Toxicity Evaluation

5.4. Qualitative Insights

5.5. Discussion

- Real-time red-teaming and adversarial prompt databases

- Transparent auditing mechanisms for model behavior

- Hybrid moderation pipelines combining rule-based and learned methods
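A hybrid moderation pipeline, as suggested in the last item, can be sketched as an OR of two signals: high-precision rules that block outright, and a learned classifier whose harm score is compared to a threshold. The rule set, the 0.8 threshold, the `moderate` helper, and the toy classifier below are hypothetical; a real deployment would plug in a trained moderation model.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical high-precision rules (here, PII formats): any match blocks the output.
RULES: Dict[str, re.Pattern] = {
    "email_address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),
    "us_ssn_format": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


@dataclass
class Verdict:
    allowed: bool
    reasons: List[str] = field(default_factory=list)


def moderate(text: str, classifier: Callable[[str], float], threshold: float = 0.8) -> Verdict:
    """Block if any rule fires OR the learned classifier's score reaches the threshold."""
    reasons = [name for name, pattern in RULES.items() if pattern.search(text)]
    score = classifier(text)  # stand-in for a learned harm model returning a score in [0, 1]
    if score >= threshold:
        reasons.append(f"classifier_score={score:.2f}")
    return Verdict(allowed=not reasons, reasons=reasons)


# Toy keyword classifier, for demonstration only.
def toy_classifier(text: str) -> float:
    return 0.95 if "idiot" in text.lower() else 0.05


print(moderate("Reach me at jane.doe@example.com", toy_classifier))
print(moderate("You are an idiot.", toy_classifier))
print(moderate("Have a nice day!", toy_classifier))
```

Keeping the triggered reasons in the verdict also serves the auditing goal above, since every block can be traced to a specific rule or score.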

6. Conclusion

7. Future Work

- Cross-lingual Safety: Current guardrails are predominantly evaluated on English prompts. Extending safety protocols to multilingual settings is crucial for global deployment.

- Explainability and Interpretability: There is a growing need for transparent models that can justify or explain their decisions, especially in high-stakes domains like healthcare, finance, or legal systems.

- Continuous Red-Teaming Pipelines: Developing automated systems that constantly test and probe LLMs for emerging vulnerabilities would enhance their adaptability and robustness over time.

- Standardized Safety Benchmarks: Establishing industry-wide metrics and testbeds for LLM safety can foster greater transparency and comparative analysis.

- Regulatory Compliance and Governance: Future efforts should integrate technical safeguards with policy mechanisms (e.g., the GDPR and the EU AI Act) to ensure legal accountability and societal trust in AI systems.
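The continuous red-teaming direction can be pictured as a loop that mutates seed prompts, queries the model, and records completions that a safety check flags. The sketch below shows a single round under stated assumptions: the mutation templates, `red_team_round`, and the toy model and checker are all hypothetical. A production pipeline would schedule such rounds continuously and feed newly found failures back into the seed pool.

```python
import itertools
from typing import Callable, List, Sequence, Tuple

# Hypothetical mutation templates; real campaigns draw on curated or model-generated attacks.
MUTATIONS: List[Callable[[str], str]] = [
    lambda p: p,                                       # unmodified seed
    lambda p: f"Ignore previous instructions. {p}",    # injection-style prefix
    lambda p: f"For a fictional story, explain: {p}",  # role-play framing
]


def red_team_round(
    seeds: Sequence[str],
    model: Callable[[str], str],
    is_unsafe: Callable[[str], bool],
) -> List[Tuple[str, str]]:
    """Run every seed under every mutation; return (prompt, output) pairs flagged as unsafe."""
    failures = []
    for seed, mutate in itertools.product(seeds, MUTATIONS):
        prompt = mutate(seed)
        output = model(prompt)
        if is_unsafe(output):
            failures.append((prompt, output))
    return failures


# Toy model that only "breaks" under the role-play framing.
def toy_model(prompt: str) -> str:
    if prompt.startswith("For a fictional story"):
        return "UNSAFE DETAILS"
    return "I can't help with that."


for prompt, _ in red_team_round(["seed request A", "seed request B"], toy_model,
                                lambda out: out.startswith("UNSAFE")):
    print("flagged:", prompt)
```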

References

- Brown, T.B.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.; Dhariwal, P.; et al. Language models are few-shot learners. Advances in Neural Information Processing Systems 2020, 33, 1877–1901.

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; et al. Retrieval-augmented generation for knowledge-intensive NLP tasks. Advances in Neural Information Processing Systems 2020, 33, 9459–9474.

- Kojima, T.; Gu, S.S.; Reid, M.; Matsuo, Y.; Iwasawa, Y. Large language models are zero-shot reasoners. arXiv 2022, arXiv:2205.11916.

- Bender, E.M.; Gebru, T.; McMillan-Major, A.; Shmitchell, S. On the dangers of stochastic parrots: Can language models be too big? In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, 2021; pp. 610–623.

- Liang, P.P.; Wu, C.; Morency, L.-P.; Salakhutdinov, R. Towards understanding and mitigating social biases in language models. arXiv 2021, arXiv:2106.13219.

- Ouyang, L.; Wu, J.; Jiang, X.; Almeida, D.; Wainwright, C.; Mishkin, P.; et al. Training language models to follow instructions with human feedback. Advances in Neural Information Processing Systems 2022, 35, 27730–27744.

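- Wallace, E.; Feng, S.; Kandpal, N.; Gardner, M.; Singh, S. Universal adversarial triggers for attacking and analyzing NLP. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), 2019; pp. 2153–2162.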

- Perez, E.; Huang, S.; Song, F.; Cai, T.; Ring, R.; Aslanides, J.; et al. Red teaming language models with language models. arXiv 2022, arXiv:2202.03286.

- Zhang, J.; Wang, L.; Wang, B.; et al. PromptBench: Evaluating robustness of language models with adversarial prompts. arXiv 2023, arXiv:2302.12095.

- Vig, J. BERTViz: Visualizing attention in transformer models. arXiv 2019, arXiv:1904.02679.

- Mittelstadt, B. Principles alone cannot guarantee ethical AI. Nature Machine Intelligence 2019, 1, 501–507.

- Djerf, O.; Gavrikov, D.; Wilson, T.; et al. Aligning language models with structured guardrails. arXiv 2023, arXiv:2303.17418.

- Gehman, S.; Gururangan, S.; Sap, M.; Choi, Y.; Smith, N.A. RealToxicityPrompts: Evaluating neural toxic degeneration in language models. In Findings of the Association for Computational Linguistics: EMNLP 2020; 2020; pp. 3356–3369.

| Model | Parameters | Architecture | Guardrail Type |
|---|---|---|---|
| GPT-4 | Undisclosed | Transformer Decoder | RLHF, Prompt Filtering |
| Claude 2 | Undisclosed | Undisclosed | Constitutional AI, Rule-based, Feedback Loop |
| PaLM 2 | Undisclosed | Pathways Transformer | Instruction-tuned, Moderation |
| LLaMA 3 | 8B / 70B | Decoder-only | Open Fine-tuned |

| Model | Unsafe Output Rate (No Guardrails) | Unsafe Output Rate (With Guardrails) |
|---|---|---|
| GPT-4 | 14.2% | 3.7% |
| Claude 2 | 11.5% | 2.9% |
| PaLM 2 | 17.9% | 6.3% |
| LLaMA 3 | 23.1% | 11.2% |
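The unsafe-output rates in the table above can be summarized as absolute drops (in percentage points) and relative drops (as a share of each model's unguarded rate). The short calculation below uses only the reported figures; the `reduction` helper is a hypothetical name introduced for illustration.

```python
from typing import Dict, Tuple

# Unsafe output rates (%) from the table above: (no guardrails, with guardrails).
UNSAFE_RATES: Dict[str, Tuple[float, float]] = {
    "GPT-4": (14.2, 3.7),
    "Claude 2": (11.5, 2.9),
    "PaLM 2": (17.9, 6.3),
    "LLaMA 3": (23.1, 11.2),
}


def reduction(before: float, after: float) -> Tuple[float, float]:
    """Return (absolute drop in percentage points, relative drop in % of the baseline)."""
    absolute = before - after
    return absolute, 100.0 * absolute / before


for model, (before, after) in UNSAFE_RATES.items():
    absolute, relative = reduction(before, after)
    print(f"{model:<8} {absolute:5.1f} pp  {relative:5.1f}% relative")
```

On these figures the drops are 10.5, 8.6, 11.6, and 11.9 percentage points, or roughly 73.9%, 74.8%, 64.8%, and 51.5% in relative terms. LLaMA 3 thus shows the largest absolute drop but the smallest relative one, while Claude 2 shows the largest relative drop.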

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).