Submitted: 14 January 2026

Posted: 15 January 2026

Abstract

Keywords:

1. Introduction

- Dynamic Adaptability: The ability to adjust resource allocation in real time as workloads and system conditions change.

- QoS Optimization: Simultaneous consideration of multiple performance indicators such as latency, throughput, and resource utilization.

- Scalability: Effective handling of high-dimensional state spaces and heterogeneous resource pools.

- We introduce a DRL-based allocation strategy that integrates proximity-aware resource placement with adaptive load distribution mechanisms.

- We incorporate user-centric constraints into the decision-making process to ensure both reliability and performance.

- We conduct extensive simulations using CloudSim 3.0.2 to compare the proposed approach with traditional algorithms such as Ant Colony Optimization (ACO) and Simulated Annealing (SA), demonstrating notable improvements in execution time, cost efficiency, and system stability.

2. Related Work

2.1. Resource Scheduling by Deep Reinforcement Learning

2.2. Resource Scheduling Considering Time-Varying Characteristics

2.3. Task Scheduling/Offloading with Priority Constraints

2.4. Summary

3. Methodology

3.1. Experimental Environment

- A MyAllocationTest class was implemented to initialize data centers, virtual machines, and workloads.

- VM attributes included MIPS ratings, memory capacity, storage size, and network bandwidth.

- Workloads (cloudlets) were generated with varying computational and I/O requirements to simulate realistic heterogeneous demands.

3.2. Parameter Settings

3.3. Performance Metrics

- Average Execution Time: $T_{avg} = \frac{1}{N}\sum_{i=1}^{N} T_i$, where $T_i$ is the completion time for task $i$ and $N$ is the total number of tasks.

- Average Cost: $C_{avg} = \frac{1}{N}\sum_{i=1}^{N} C_i$, where $C_i$ is the total cost incurred by task $i$ in terms of bandwidth and CPU usage.

- Quality of Service (QoS): Aggregates execution time, cost, and reliability into a composite performance score.

- System Load: $L_{sys} = \frac{1}{M}\sum_{j=1}^{M} U_j$, where $U_j$ represents the utilization of VM $j$ and $M$ is the number of VMs.
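
As an illustration, the metrics above can be computed as in the following minimal Python sketch. The names `TaskResult`, `average_execution_time`, `average_cost`, `system_load`, and `qos_score` are hypothetical. The composite QoS formula is not given in this section, so the weighted sum, the reference values `t_ref` and `c_ref`, and the default weights are assumptions chosen only to show how the three components could be combined.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass(frozen=True)
class TaskResult:
    completion_time: float  # completion time of task i, in seconds
    cost: float             # bandwidth + CPU cost of task i
    succeeded: bool = True  # used only by the assumed reliability term


def average_execution_time(results: Sequence[TaskResult]) -> float:
    """Mean completion time over all N tasks."""
    return sum(r.completion_time for r in results) / len(results)


def average_cost(results: Sequence[TaskResult]) -> float:
    """Mean per-task cost over all N tasks."""
    return sum(r.cost for r in results) / len(results)


def system_load(vm_utilizations: Sequence[float]) -> float:
    """Mean utilization over all M VMs (each value in [0, 1])."""
    return sum(vm_utilizations) / len(vm_utilizations)


def qos_score(results: Sequence[TaskResult], t_ref: float, c_ref: float,
              weights: Tuple[float, float, float] = (0.4, 0.3, 0.3)) -> float:
    """ASSUMED composite: weighted sum of time, cost and reliability terms.

    Each term is scaled to [0, 1]; t_ref and c_ref are reference values
    (for example, a target time and budget) and are not from the paper.
    """
    w_time, w_cost, w_rel = weights
    time_term = min(1.0, t_ref / average_execution_time(results))
    cost_term = min(1.0, c_ref / average_cost(results))
    reliability = sum(r.succeeded for r in results) / len(results)
    return w_time * time_term + w_cost * cost_term + w_rel * reliability
```

With this form, a run that meets both reference values and completes every task scores close to 1, and slower, costlier, or failed tasks lower the score.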

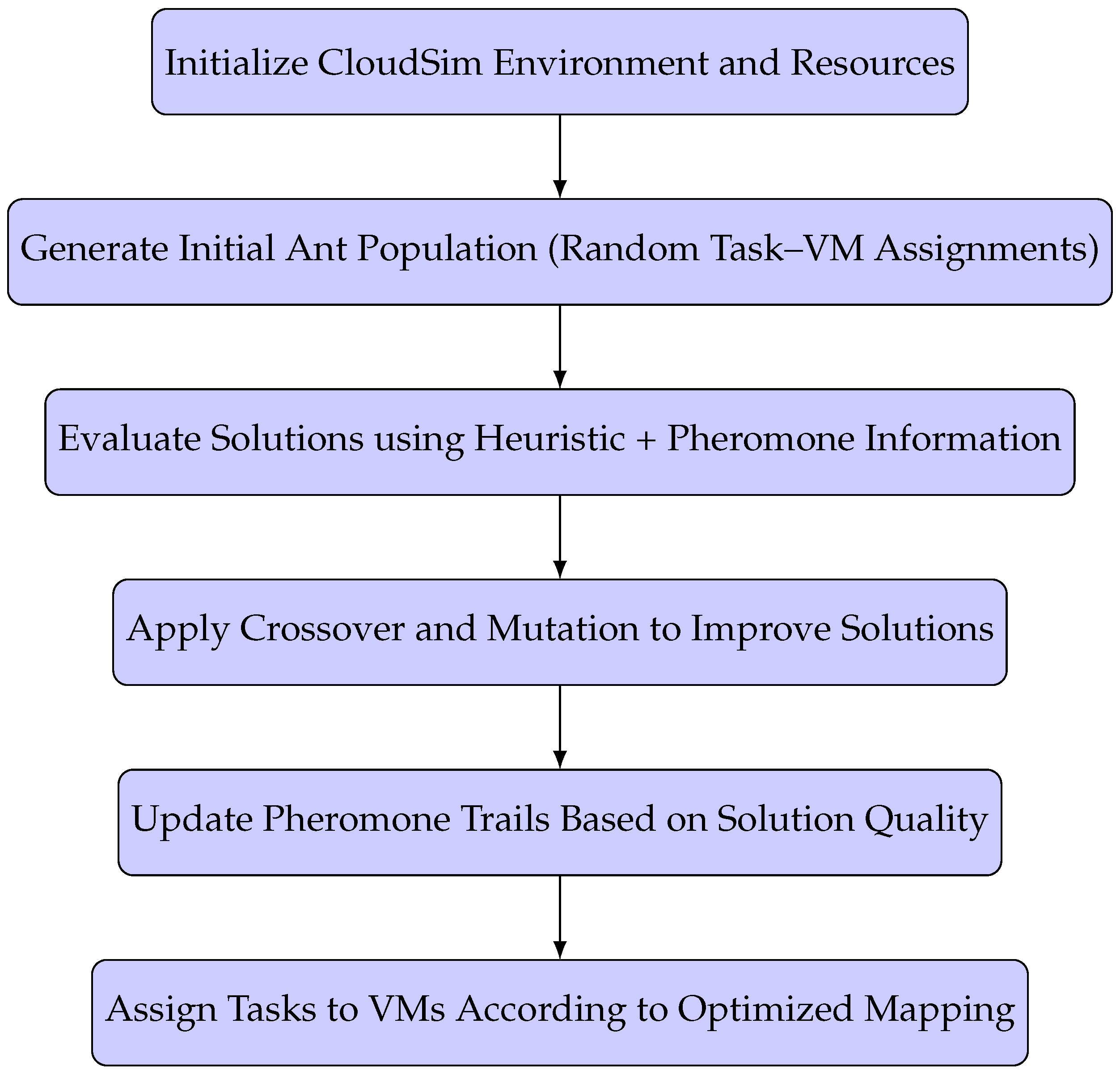

3.4. Proposed Workflow

3.5. Advantages of the Proposed Approach

- Enhanced exploration of the solution space, reducing the risk of premature convergence.

- Adaptation to dynamic workloads and resource fluctuations in real time.

- Balanced optimization of execution time, cost, and system reliability.

3.6. Summary

4. Implementation

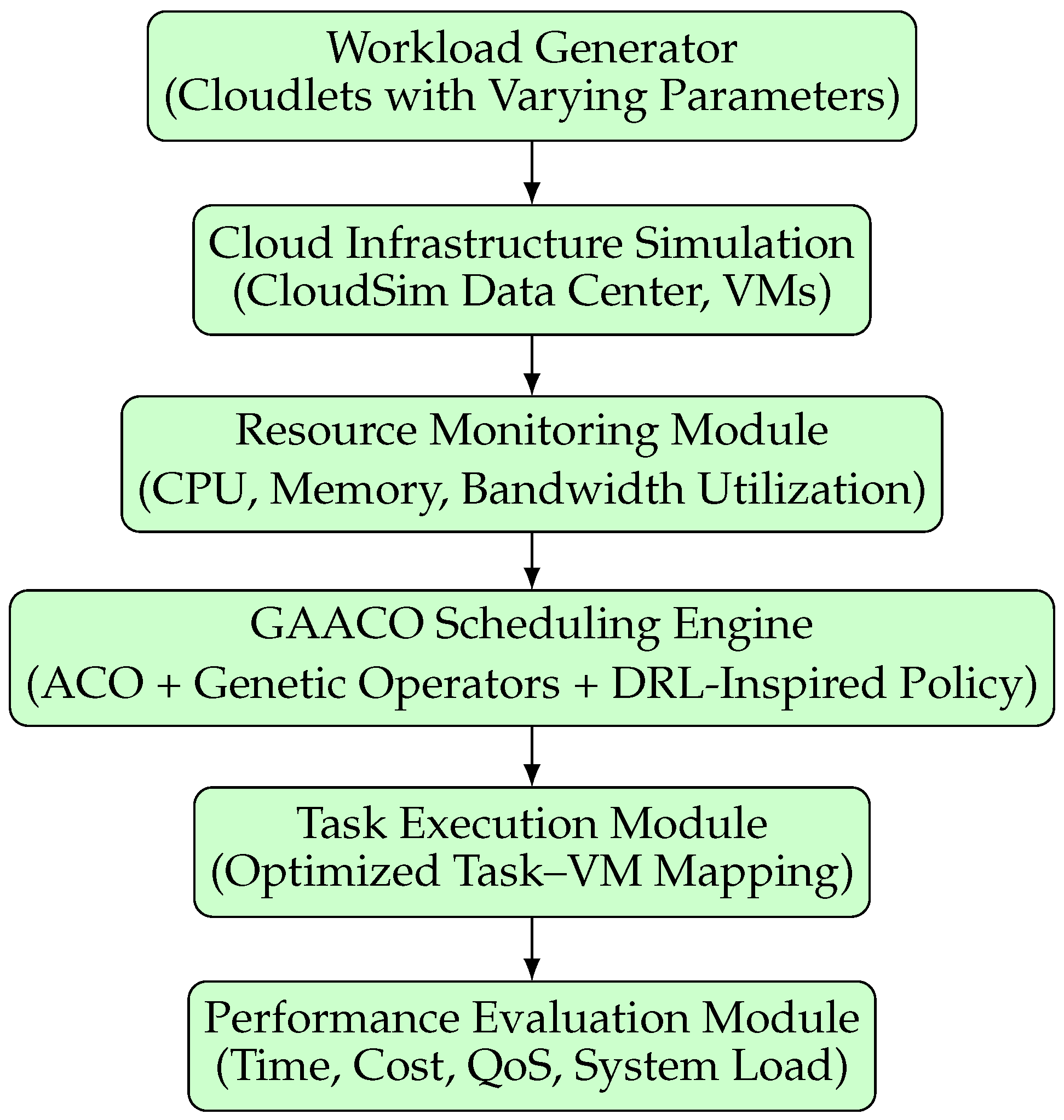

4.1. System Architecture

4.2. Module Descriptions

4.2.1. Workload Generator

- Instruction length (MI): Defines the computational demand of each task.

- Input/Output data size (MB): Simulates network traffic requirements.

- Deadline constraints: Establishes task urgency for QoS evaluation.
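
A workload generator with these three attributes might look like the sketch below. It is written in plain Python rather than against the CloudSim API, and the `Cloudlet` fields, the function name `generate_workload`, and all numeric ranges are illustrative assumptions, not the values used in the experiments.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Cloudlet:
    task_id: int
    length_mi: int     # instruction length in million instructions (MI)
    input_mb: float    # input data size in MB
    output_mb: float   # output data size in MB
    deadline_s: float  # deadline in seconds, used for QoS evaluation


def generate_workload(n_tasks: int, seed: int = 0,
                      length_range: Tuple[int, int] = (1_000, 20_000),
                      data_range: Tuple[float, float] = (1.0, 300.0),
                      deadline_range: Tuple[float, float] = (5.0, 60.0),
                      ) -> List[Cloudlet]:
    """Draw n_tasks heterogeneous cloudlets; all ranges are assumed."""
    rng = random.Random(seed)  # seeded so that runs are repeatable
    return [
        Cloudlet(
            task_id=i,
            length_mi=rng.randint(*length_range),
            input_mb=rng.uniform(*data_range),
            output_mb=rng.uniform(*data_range),
            deadline_s=rng.uniform(*deadline_range),
        )
        for i in range(n_tasks)
    ]
```

Fixing the seed keeps the workload identical across algorithms, so differences in the measured metrics come from the scheduler rather than from the input.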

4.2.2. Cloud Infrastructure Simulation

- Hosts: Physical servers with defined CPU cores, RAM, storage, and bandwidth.

- VMs: Configured with specific MIPS, memory, and network bandwidth to emulate heterogeneous cloud resources.
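
The host and VM model can be pictured with the following simplified Python sketch. It does not use CloudSim classes; `Host`, `Vm`, `can_host`, `place`, and `estimated_runtime_s` are hypothetical names, and the capacity check is an assumed first-fit style rule rather than the provisioning policy used in the simulation.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Vm:
    vm_id: int
    mips: float     # processing capacity in MIPS
    ram_mb: int     # memory in MB
    bw_mbps: float  # network bandwidth (unit assumed)
    size_mb: int    # storage image size in MB


@dataclass
class Host:
    host_id: int
    cores: int
    mips_per_core: float
    ram_mb: int
    storage_mb: int
    bw_mbps: float
    vms: List[Vm] = field(default_factory=list)

    def can_host(self, vm: Vm) -> bool:
        """True if the VM fits in the remaining CPU, RAM, storage and bandwidth."""
        return (sum(v.mips for v in self.vms) + vm.mips
                <= self.cores * self.mips_per_core
                and sum(v.ram_mb for v in self.vms) + vm.ram_mb <= self.ram_mb
                and sum(v.size_mb for v in self.vms) + vm.size_mb <= self.storage_mb
                and sum(v.bw_mbps for v in self.vms) + vm.bw_mbps <= self.bw_mbps)

    def place(self, vm: Vm) -> bool:
        """Attach the VM if it fits; report whether placement succeeded."""
        if self.can_host(vm):
            self.vms.append(vm)
            return True
        return False


def estimated_runtime_s(length_mi: float, vm: Vm) -> float:
    """Compute-only runtime estimate: task length (MI) divided by VM MIPS."""
    return length_mi / vm.mips
```

Giving the VMs different `mips`, `ram_mb`, and `bw_mbps` values is what makes the resource pool heterogeneous from the scheduler's point of view.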

4.2.3. Resource Monitoring

4.2.4. GAACO Scheduling Engine

- Ant Colony Optimization (ACO) for pheromone-based exploration.

- Genetic operators (crossover, mutation) to diversify the search space.

- DRL-inspired policy updates for adapting to system state changes.
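
To make the combination of the first two components concrete, the sketch below runs a small ACO loop in which each generation of ant-built schedules is passed through one-point crossover and mutation before pheromone is updated. It is an illustration under stated assumptions, not the GAACO implementation: the function names are hypothetical, the fitness is plain makespan, the DRL-inspired update is omitted, and the mapping of the defaults to the parameter table (`alpha` = 1.00, `beta` = 2.00, `rho` = 0.10, `q` = 50.00, 31 ants, 100 generations, crossover 0.35, mutation 0.08) is assumed.

```python
import random
from typing import List, Sequence, Tuple


def makespan(assign: Sequence[int], lengths: Sequence[float],
             mips: Sequence[float]) -> float:
    """Finish time of the busiest VM when its tasks run back to back."""
    busy = [0.0] * len(mips)
    for task, vm in enumerate(assign):
        busy[vm] += lengths[task] / mips[vm]
    return max(busy)


def gaaco_schedule(lengths: Sequence[float], mips: Sequence[float],
                   ants: int = 31, generations: int = 100,
                   alpha: float = 1.0, beta: float = 2.0, rho: float = 0.10,
                   q: float = 50.0, p_cross: float = 0.35,
                   p_mut: float = 0.08, seed: int = 0
                   ) -> Tuple[List[int], float]:
    rng = random.Random(seed)
    n, m = len(lengths), len(mips)
    tau = [[1.0] * m for _ in range(n)]  # pheromone per (task, VM) pair
    # Heuristic desirability: inverse of the task's runtime on each VM.
    eta = [[mips[j] / lengths[i] for j in range(m)] for i in range(n)]
    best: List[int] = []
    best_cost = float("inf")

    for _ in range(generations):
        # ACO step: every ant samples one VM per task.
        colony = []
        for _ in range(ants):
            sol = []
            for i in range(n):
                w = [tau[i][j] ** alpha * eta[i][j] ** beta for j in range(m)]
                sol.append(rng.choices(range(m), weights=w)[0])
            colony.append(sol)

        # GA step: one-point crossover between neighbouring ant solutions.
        for k in range(0, ants - 1, 2):
            if n > 1 and rng.random() < p_cross:
                cut = rng.randrange(1, n)
                a, b = colony[k], colony[k + 1]
                colony[k], colony[k + 1] = a[:cut] + b[cut:], b[:cut] + a[cut:]
        # GA step: mutation reassigns a task to a uniformly random VM.
        for sol in colony:
            for i in range(n):
                if rng.random() < p_mut:
                    sol[i] = rng.randrange(m)

        costs = [makespan(s, lengths, mips) for s in colony]
        for s, c in zip(colony, costs):
            if c < best_cost:
                best, best_cost = list(s), c

        # Pheromone update: evaporate, then deposit q / cost along each solution.
        for i in range(n):
            for j in range(m):
                tau[i][j] *= 1.0 - rho
        for s, c in zip(colony, costs):
            for i, j in enumerate(s):
                tau[i][j] += q / c

    return best, best_cost
```

Applying the genetic operators before the pheromone deposit means that recombined and mutated schedules also reinforce the trails, which is one plausible way for the hybrid to keep the colony from collapsing onto a single assignment too early.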

4.2.5. Task Execution

4.2.6. Performance Evaluation

- Average execution time ($T_{avg}$)

- Average cost ($C_{avg}$)

- QoS score

- System load ($L_{sys}$)

4.3. Integration Flow

1. Workload generation.

2. Resource monitoring.

3. Dynamic scheduling via GAACO.

4. Task execution and performance feedback.
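
The four stages can be wired together as in the sketch below. The function names and the callable-based interface are hypothetical, and `greedy_schedule` is only a stand-in scheduler (earliest estimated finish time) used to keep the example self-contained; it is not GAACO.

```python
from typing import Callable, Dict, List, Sequence


def greedy_schedule(lengths: Sequence[float], backlog: Sequence[float],
                    vm_mips: Sequence[float]) -> List[int]:
    """Stand-in scheduler: send each task to the VM that finishes it first."""
    finish = list(backlog)
    assignment = []
    for length in lengths:
        vm = min(range(len(vm_mips)),
                 key=lambda j: finish[j] + length / vm_mips[j])
        finish[vm] += length / vm_mips[vm]
        assignment.append(vm)
    return assignment


def run_pipeline(generate: Callable[[], Sequence[float]],
                 monitor: Callable[[], Sequence[float]],
                 schedule: Callable[[Sequence[float], Sequence[float]], List[int]],
                 vm_mips: Sequence[float]) -> Dict[str, float]:
    lengths = generate()                     # 1. workload generation
    backlog = monitor()                      # 2. resource monitoring (busy seconds per VM)
    assignment = schedule(lengths, backlog)  # 3. dynamic scheduling
    # 4. task execution: tasks on the same VM run one after another.
    finish = list(backlog)
    completion = []
    for task, vm in enumerate(assignment):
        finish[vm] += lengths[task] / vm_mips[vm]
        completion.append(finish[vm])
    # Performance feedback: metrics a caller could feed into the next round.
    return {
        "avg_execution_time": sum(completion) / len(completion),
        "makespan": max(finish),
    }
```

A call might look like `run_pipeline(lambda: [2000, 2000, 4000], lambda: [0.0, 0.0], lambda l, b: greedy_schedule(l, b, [1000, 2000]), [1000, 2000])`. Because the scheduler is passed in as a callable, the same loop can drive GAACO, ACO, or SA without changes to the other stages.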

4.4. Summary

5. Results

5.1. Evaluation Metrics

- Average Execution Time ($T_{avg}$): measures scheduling efficiency.

- Average Cost ($C_{avg}$): represents monetary efficiency in terms of resource consumption.

- Service Quality (QoS): a composite index incorporating time, cost, and reliability.

- System Load ($L_{sys}$): average utilization of computational resources.

5.2. Numerical Comparison

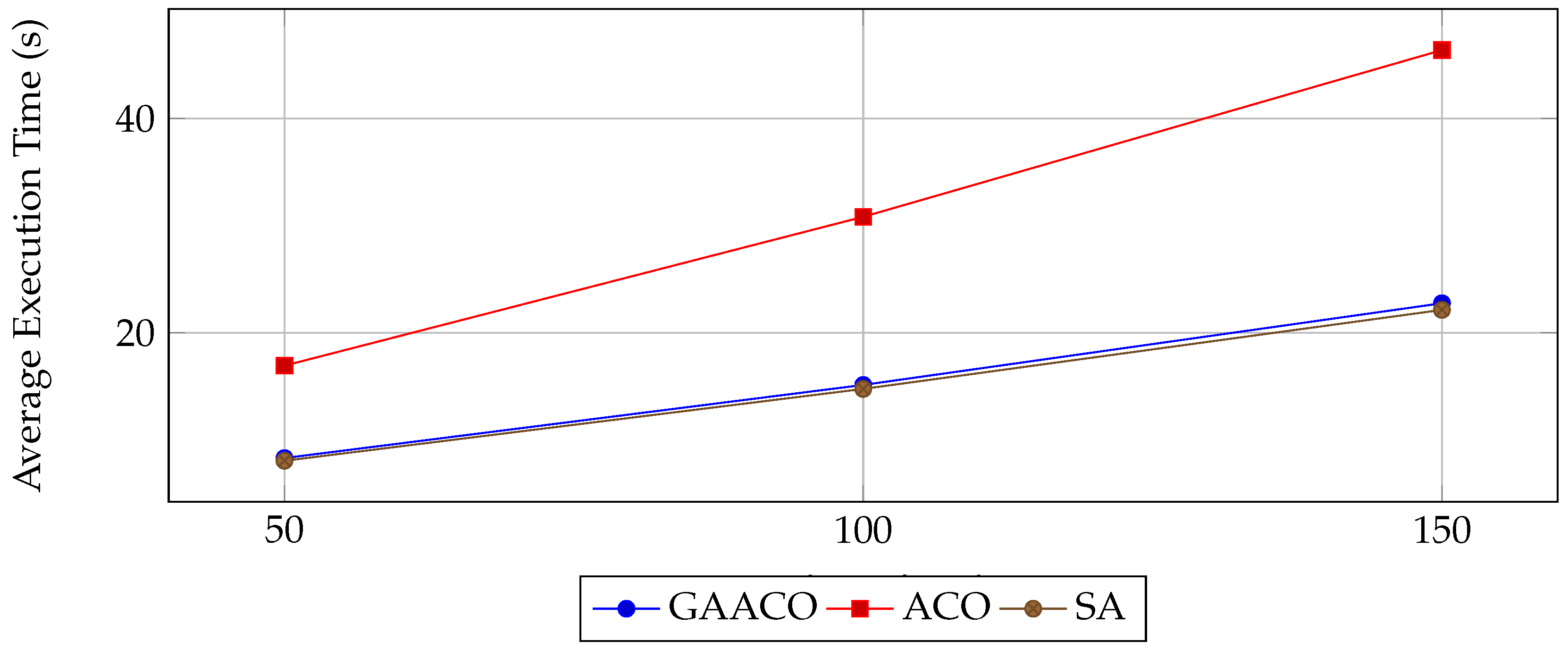

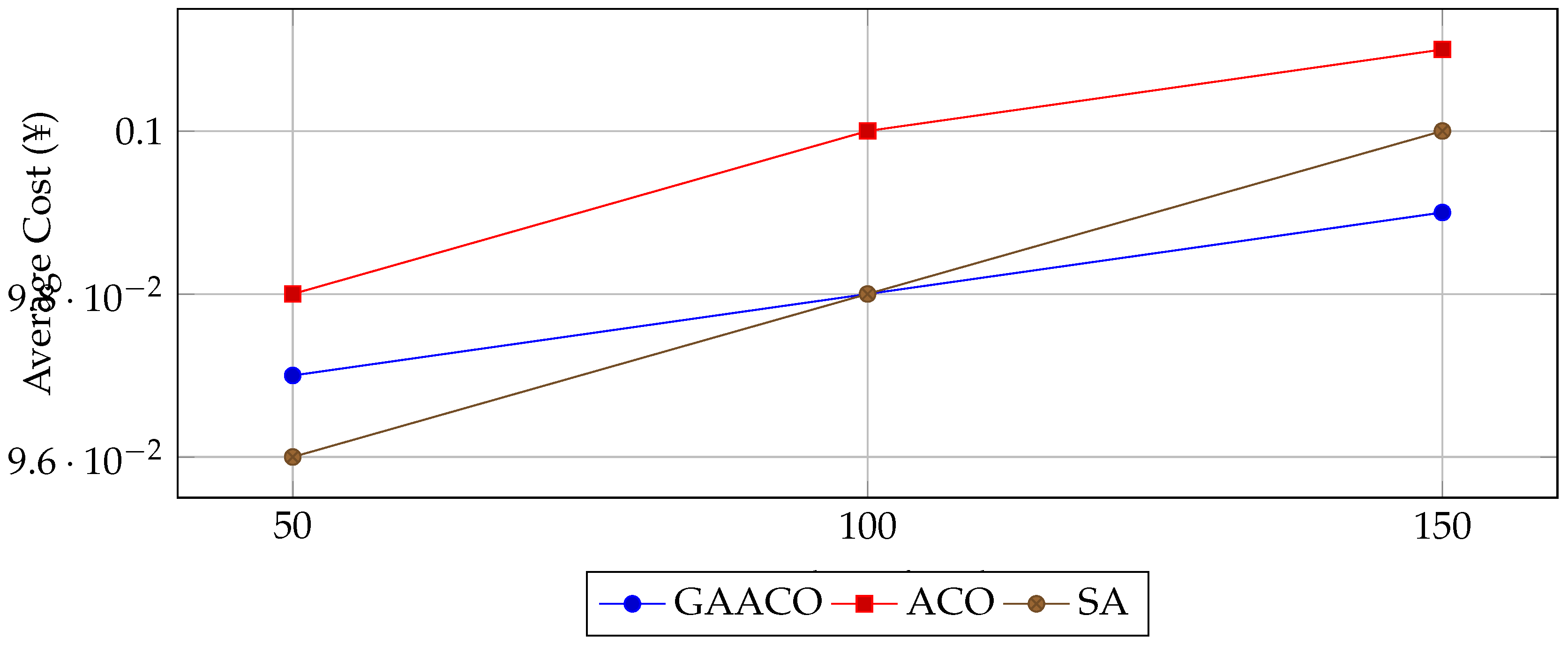

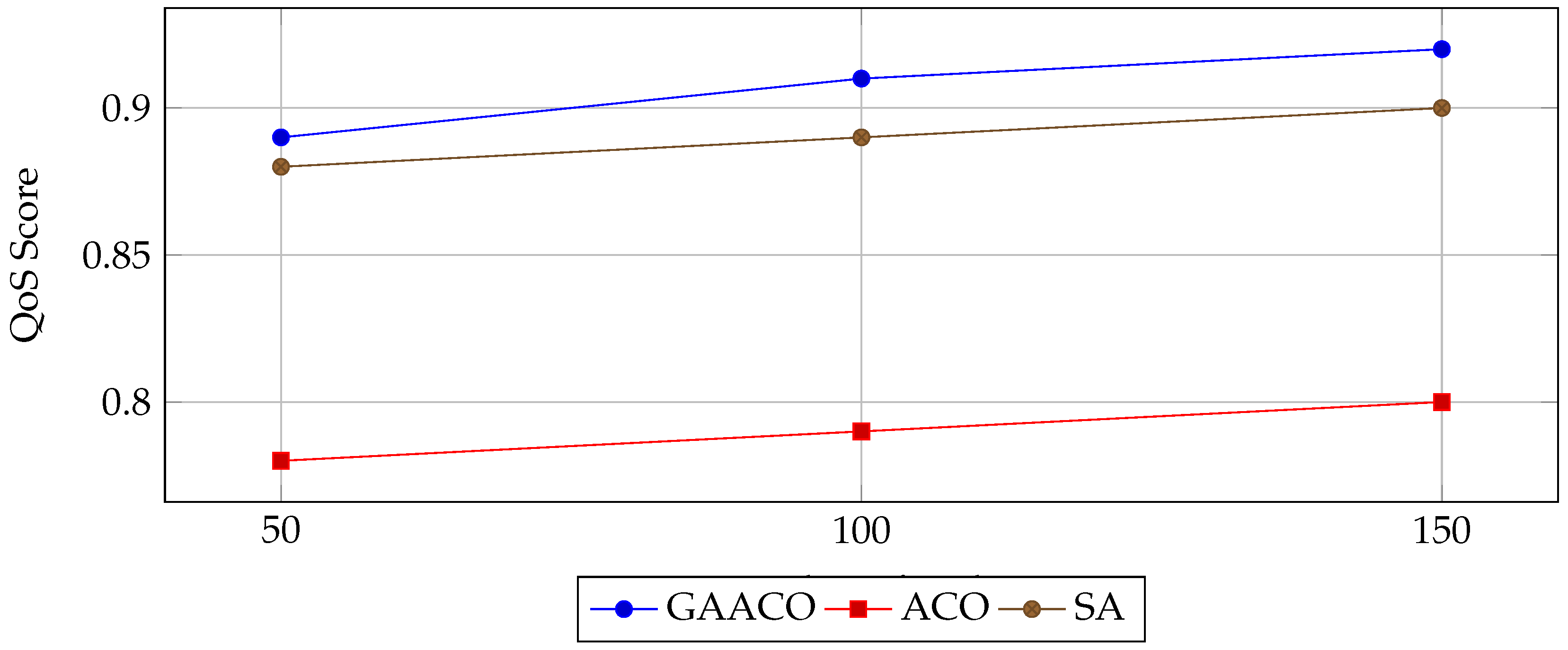

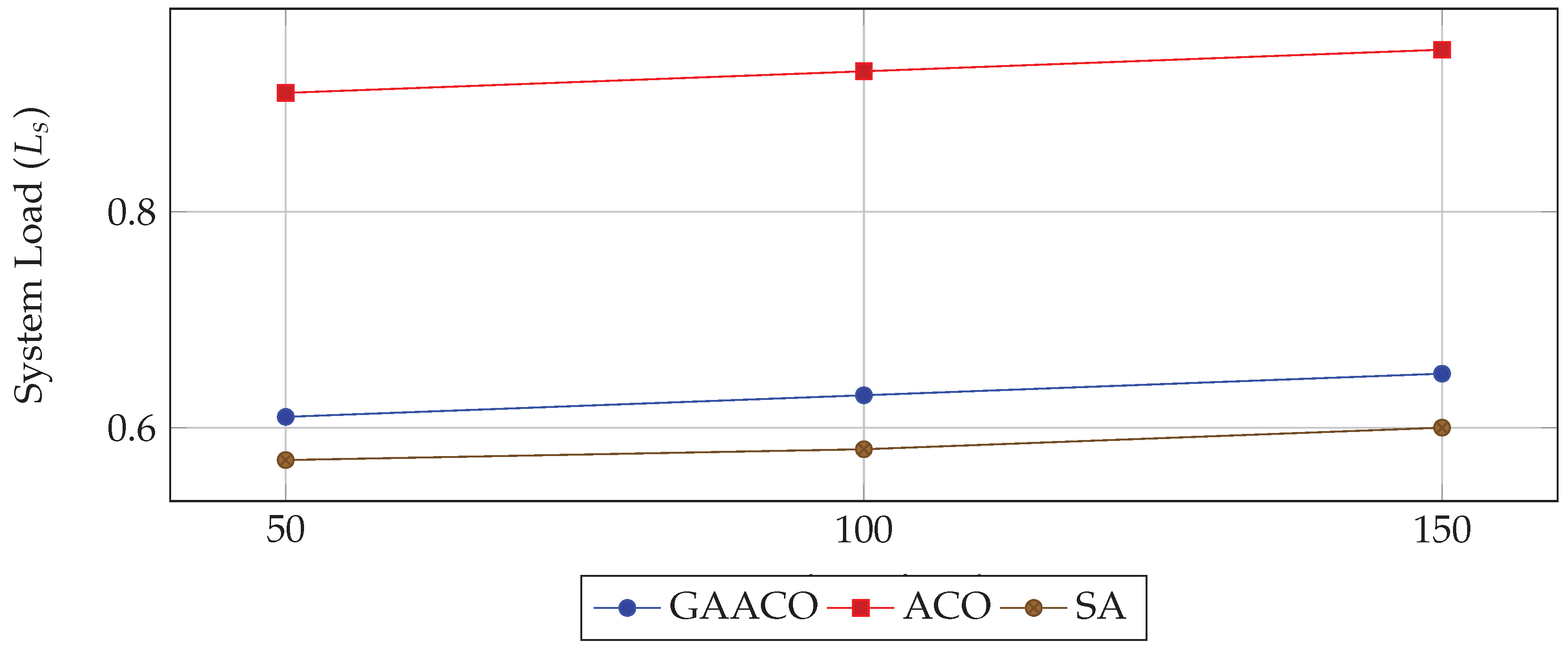

5.3. Graphical Analysis

5.4. Observations and Analysis

- Execution Time: GAACO achieves a substantial reduction in execution time compared to ACO (up to 50.9% improvement), while remaining comparable to SA (roughly 2.5% to 3.1% slower in the tabulated results).

- Cost Efficiency: The average cost differences among algorithms are marginal (at most ¥0.002 at each tabulated workload size), with GAACO slightly outperforming ACO in most scenarios.

- Service Quality: GAACO maintains higher QoS scores, attributed to its ability to balance load while minimizing delays.

- System Load: GAACO reduces system load by over 50% relative to ACO, indicating more balanced task assignments.
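
The execution-time percentages can be re-derived from the tabulated results with a few lines of Python. The execution times below are copied from the results table; the dictionary layout and the helper name `reduction_pct` are illustrative.

```python
# Average execution time in seconds, by number of tasks and algorithm.
EXEC_TIME_S = {
    50: {"GAACO": 8.32, "ACO": 16.94, "SA": 8.07},
    100: {"GAACO": 15.14, "ACO": 30.82, "SA": 14.77},
    150: {"GAACO": 22.76, "ACO": 46.37, "SA": 22.14},
}


def reduction_pct(baseline: float, candidate: float) -> float:
    """Relative reduction of candidate versus baseline, in percent.

    Positive means the candidate is faster; negative means it is slower.
    """
    return 100.0 * (baseline - candidate) / baseline


for tasks, t in EXEC_TIME_S.items():
    vs_aco = reduction_pct(t["ACO"], t["GAACO"])
    vs_sa = reduction_pct(t["SA"], t["GAACO"])
    print(f"{tasks:>3} tasks: {vs_aco:.1f}% vs ACO, {vs_sa:+.1f}% vs SA")
```

For all three workload sizes the reduction relative to ACO rounds to 50.9%, while the figure relative to SA is slightly negative (between about -2.5% and -3.1%), which is consistent with describing GAACO as comparable to SA rather than faster.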

5.5. Summary

6. Discussion

6.1. Interpretation of Results

- Hybrid Optimization: The integration of genetic operators (crossover and mutation) into the pheromone-based search process of ACO enhances solution diversity and mitigates the risk of premature convergence. This leads to more robust exploration of the solution space, especially under dynamic workload conditions.

- Adaptive Decision-Making: The DRL-inspired component enables the algorithm to adjust task–VM mappings based on the current system state rather than relying solely on static heuristic values. This adaptability is particularly beneficial when workloads vary significantly over time.

- Load Balancing: GAACO’s fitness function incorporates a system load penalty, directly encouraging task distributions that avoid over-utilization of specific VMs. This prevents bottlenecks and contributes to lower execution times and higher QoS scores.
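
One way a load penalty can enter a fitness function is sketched below. The exact GAACO fitness is not given in this section, so the multiplicative form, the imbalance measure (peak minus mean relative utilization), and the weight `load_weight` are assumptions, and the function names are hypothetical.

```python
from typing import List, Sequence


def vm_busy_times(assign: Sequence[int], lengths: Sequence[float],
                  mips: Sequence[float]) -> List[float]:
    """Total compute time queued on each VM under the given assignment."""
    busy = [0.0] * len(mips)
    for task, vm in enumerate(assign):
        busy[vm] += lengths[task] / mips[vm]
    return busy


def penalized_fitness(assign: Sequence[int], lengths: Sequence[float],
                      mips: Sequence[float], load_weight: float = 0.5) -> float:
    """Lower is better: makespan inflated by an ASSUMED load-imbalance penalty."""
    busy = vm_busy_times(assign, lengths, mips)
    span = max(busy)
    if span == 0.0:
        return 0.0
    util = [b / span for b in busy]  # utilization relative to the busiest VM
    imbalance = max(util) - sum(util) / len(util)  # 0 when perfectly balanced
    return span * (1.0 + load_weight * imbalance)
```

With four equal tasks of 1000 MI on two 1000-MIPS VMs, the balanced assignment `[0, 1, 0, 1]` scores 2.0, whereas placing all four tasks on one VM scores 5.0, so the search is steered away from schedules that overload a single VM.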

6.2. Comparison with Baseline Methods

6.2.1. GAACO vs ACO

6.2.2. GAACO vs SA

6.3. Alignment with Theoretical Principles

- Hybridization of population-based (ACO) and evolutionary (GA) methods often yields improved exploration-exploitation balance [19].

- Incorporating real-time state feedback, as in DRL frameworks, increases the adaptability of resource allocation strategies in dynamic environments [6].

- Penalizing high system load in the objective function inherently promotes better resource utilization, aligning with load balancing principles in distributed systems [25].

6.4. Practical Implications

- Data Center Efficiency: Lower system load reduces the likelihood of hardware failures and extends infrastructure lifespan.

- Cost-Performance Trade-Off: Comparable cost efficiency with better QoS makes GAACO attractive for providers aiming to differentiate on service quality.

- Scalability: The modular architecture is designed so that GAACO can be adapted to large-scale, heterogeneous environments with minimal modifications, although this has not yet been evaluated at scale.

6.5. Limitations

- Computational Overhead: The hybrid search process is computationally more intensive than standalone ACO or SA, particularly in large-scale scenarios.

- Parameter Sensitivity: Performance is sensitive to parameter tuning (e.g., crossover rate, pheromone evaporation), requiring empirical calibration.

- Lack of Energy-Awareness: The present formulation focuses on time, cost, and QoS but does not explicitly incorporate energy consumption as an optimization objective.

6.6. Future Enhancements

- Integrating energy-efficient scheduling objectives to minimize data center power usage.

- Employing self-adaptive parameter tuning techniques, reducing reliance on manual calibration.

- Extending the algorithm to multi-cloud and edge computing environments, where network latency and geographic distribution play critical roles.

6.7. Summary

7. Conclusions

- Execution Time: Achieving up to 50.9% lower execution time than ACO while remaining comparable to SA.

- Quality of Service: Delivering higher QoS scores by balancing execution time, cost, and reliability.

- Load Balancing: Reducing average system load by over 50% relative to ACO, improving overall infrastructure stability.

- Cost Efficiency: Maintaining competitive operational costs across all tested workloads.

8. Future Work

- Energy-Aware Scheduling: Incorporating energy consumption as an optimization criterion to reduce data center power usage and environmental impact.

- Multi-Cloud and Edge Integration: Extending the algorithm to hybrid cloud–edge infrastructures where geographic distribution, network latency, and bandwidth constraints play critical roles.

- Self-Adaptive Parameter Tuning: Implementing automated adjustment of algorithm parameters (e.g., crossover rate, pheromone evaporation coefficient) to improve robustness across varying workloads without manual calibration.

- Scalability Testing: Evaluating GAACO on large-scale cloud deployments with thousands of VMs and multi-tenant workloads to assess its performance under extreme load conditions.

- Incorporating Machine Learning Forecasting: Leveraging predictive analytics (e.g., LSTM, ARIMA) to anticipate workload patterns and preemptively optimize resource allocations.

- Security-Aware Scheduling: Introducing mechanisms to account for security constraints, such as isolating sensitive workloads or adapting to intrusion detection alerts.

Final Remark

References

1. Atzori, L.; Iera, A.; Morabito, G. The Internet of Things: A Survey. Computer Networks 2010, 54, 2787–2805.

2. Brogi, A.; Forti, S.; Guerrero, C.; et al. How to Place Your Apps in the Fog: State of the Art and Open Challenges. Software: Practice and Experience 2019, 49, 489–509.

3. Mao, H.; Schwarzkopf, M.; Kaul, S.; et al. Resource Management with Deep Reinforcement Learning. In Proceedings of the 15th ACM Workshop on Hot Topics in Networks, 2016; pp. 50–56.

4. Mnih, V.; Kavukcuoglu, K.; Silver, D.; et al. Human-level Control Through Deep Reinforcement Learning. Nature 2015, 518, 529–533.

5. Xu, X.; Liu, B.; Zhang, L.; et al. Deep Reinforcement Learning for Virtual Machine Placement in Cloud Data Centers. Future Generation Computer Systems 2022, 128, 1–12.

6. Li, Y. Deep Reinforcement Learning: An Overview. arXiv preprint arXiv:1701.07274 2017.

7. Wang, Z.; Wei, X.; et al. A Survey of Deep Reinforcement Learning Applications in Resource Management. IEEE Access 2020, 8, 74676–74696.

8. Lin, Y.; Li, Y.; et al. Efficient Resource Scheduling with Deep Reinforcement Learning. In Proceedings of the IEEE International Conference on Cloud Computing, 2018; pp. 17–24.

9. Zhang, K.; et al. Deep Reinforcement Learning for Resource Scheduling: Challenges and Opportunities. IEEE Network 2019, 33, 138–145.

10. Calheiros, R.N.; Ranjan, R.; Buyya, R. Workload Prediction Using ARIMA Model and Its Impact on Cloud Applications’ QoS. IEEE Transactions on Cloud Computing 2015, 3, 449–458.

11. Mondal, S.; Ghosh, S.; Ghose, D. Time-varying Resource Scheduling in Cloud Computing Using Unsupervised Learning. IEEE Transactions on Cloud Computing 2021, PP, 1–12.

12. Zhu, X.; et al. LSTM-based Workload Prediction for Cloud Resource Scheduling. IEEE Access 2020, 8, 178173–178186.

13. Zhou, Y.; et al. Hybrid Prediction Model for Cloud Workloads. Future Generation Computer Systems 2019, 95, 1–10.

14. Bittencourt, L.F.; et al. Mobility-aware Application Scheduling in Fog Computing. IEEE Cloud Computing 2018, 5, 26–35.

15. Topcuoglu, H.; Hariri, S.; Wu, M.Y. Performance-effective and Low-complexity Task Scheduling for Heterogeneous Computing. IEEE Transactions on Parallel and Distributed Systems 2002, 13, 260–274.

16. Kwok, Y.K.; Ahmad, I. Benchmarking and Comparison of the Task Graph Scheduling Algorithms. Journal of Parallel and Distributed Computing 1999, 59, 381–422.

17. Li, K.; et al. Heuristics for Scheduling DAGs in Grid Computing. In Proceedings of the IEEE International Conference on High Performance Computing, 2004; pp. 1–8.

18. Casanova, H.; et al. Heuristics for Scheduling DAG Applications on Heterogeneous Systems. Parallel and Distributed Computing 2000, 61, 1337–1361.

19. Goldberg, D.E. Genetic Algorithms in Search, Optimization, and Machine Learning; Addison-Wesley, 1989.

20. Kennedy, J.; Eberhart, R. Particle Swarm Optimization. Proceedings of IEEE International Conference on Neural Networks 1995, 4, 1942–1948.

21. Lozano, J.A.; et al. Towards a New Evolutionary Computation: Advances in Estimation of Distribution Algorithms; Springer Science & Business Media, 2006.

22. Zhang, Y.; et al. Energy-efficient Task Scheduling in Cloud–Edge Computing. IEEE Access 2020, 8, 190734–190747.

23. Satyanarayanan, M. The Emergence of Edge Computing. Computer 2017, 50, 30–39.

24. Bellendorf, J.; Mann, Z.A. Classification of Optimization Problems in Fog Computing. Future Generation Computer Systems 2020, 107, 158–176.

| Parameter | Symbol | Value |
|---|---|---|
| Number of generations | | 100 |
| Population size | | 10 |
| Number of ants | m | 31 |
| Crossover probability | | 0.35 |
| Mutation probability | | 0.08 |
| Max pheromone factor | | 1.00 |
| Max expected pheromone factor | | 2.00 |
| Pheromone evaporation coefficient | | 0.10 |
| Pheromone intensity | Q | 50.00 |

| Tasks | Algorithm | Average execution time (s) | Average cost (¥) | QoS Score |
|---|---|---|---|---|
| 50 | GAACO | 8.32 | 0.097 | 0.89 |
| 50 | ACO | 16.94 | 0.098 | 0.78 |
| 50 | SA | 8.07 | 0.096 | 0.88 |
| 100 | GAACO | 15.14 | 0.098 | 0.91 |
| 100 | ACO | 30.82 | 0.100 | 0.79 |
| 100 | SA | 14.77 | 0.098 | 0.89 |
| 150 | GAACO | 22.76 | 0.099 | 0.92 |
| 150 | ACO | 46.37 | 0.101 | 0.80 |
| 150 | SA | 22.14 | 0.100 | 0.90 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).