Submitted: 04 January 2026

Posted: 06 January 2026

Abstract

Keywords:

1. Introduction

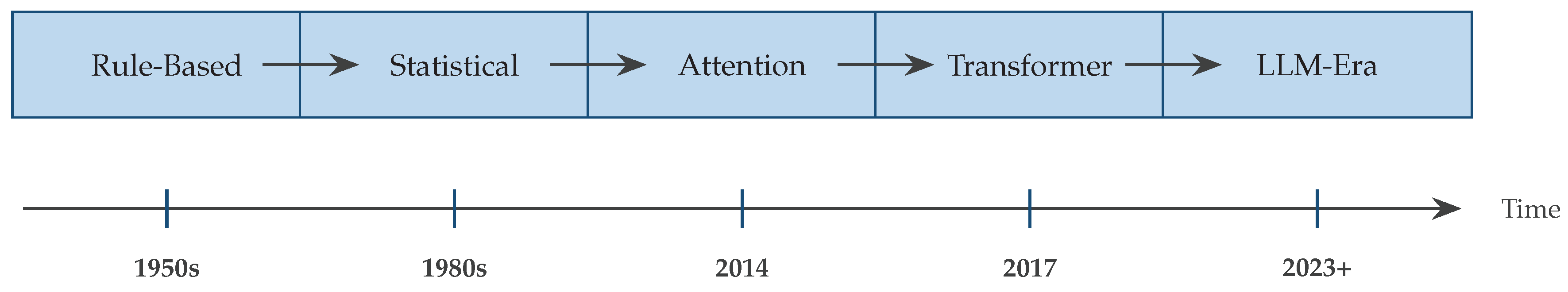

2. Historical Evolution and Background

2.1. From Rule-Based to Statistical Methods

2.2. The Neural Revolution

2.3. The Attention Revolution

3. Fundamental Architectures in Neural Machine Translation

3.1. Encoder-Decoder Framework

3.2. Transformer Architecture

3.3. Variants and Improvements

| Architecture | Key Innovation | Complexity | Efficiency | Long Context |
|---|---|---|---|---|
| Vanilla Transformer | Multi-head attention | | Baseline | Limited |
| Transformer-XL | Segment recurrence | | Slightly lower | Better |
| Longformer | Sparse attention | | Higher | Excellent |
| Reformer | LSH attention | | High | Excellent |
| Linformer | Linear projection | | Very high | Good |
| Performer | Kernel methods | | Very high | Good |
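
To make the contrast between dense and sparse attention in the table more concrete, the following minimal sketch counts how many query-key pairs are scored by full self-attention versus a purely local sliding-window pattern, the kind of pattern that sparse-attention models such as Longformer use as one component. The function names and the `window` radius are illustrative assumptions, not values taken from any of the listed architectures.

```python
def full_attention_pairs(n: int) -> int:
    """Dense self-attention: every token scores every token, so n * n pairs."""
    return n * n


def sliding_window_pairs(n: int, window: int) -> int:
    """Local attention: each token scores keys at most `window` positions away.

    `window` is a one-sided radius (an assumption of this sketch), so an
    interior token sees 2 * window + 1 keys and the total grows linearly in n.
    """
    total = 0
    for i in range(n):
        lo = max(0, i - window)        # first key position visible to token i
        hi = min(n - 1, i + window)    # last key position visible to token i
        total += hi - lo + 1
    return total


if __name__ == "__main__":
    # Hypothetical sequence lengths, chosen only to show the growth rates.
    for n in (512, 4096, 32768):
        print(n, full_attention_pairs(n), sliding_window_pairs(n, window=256))
```

For a fixed window the local count grows roughly in proportion to the sequence length, while the dense count grows with its square; when the window covers the whole sequence the two counts coincide.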

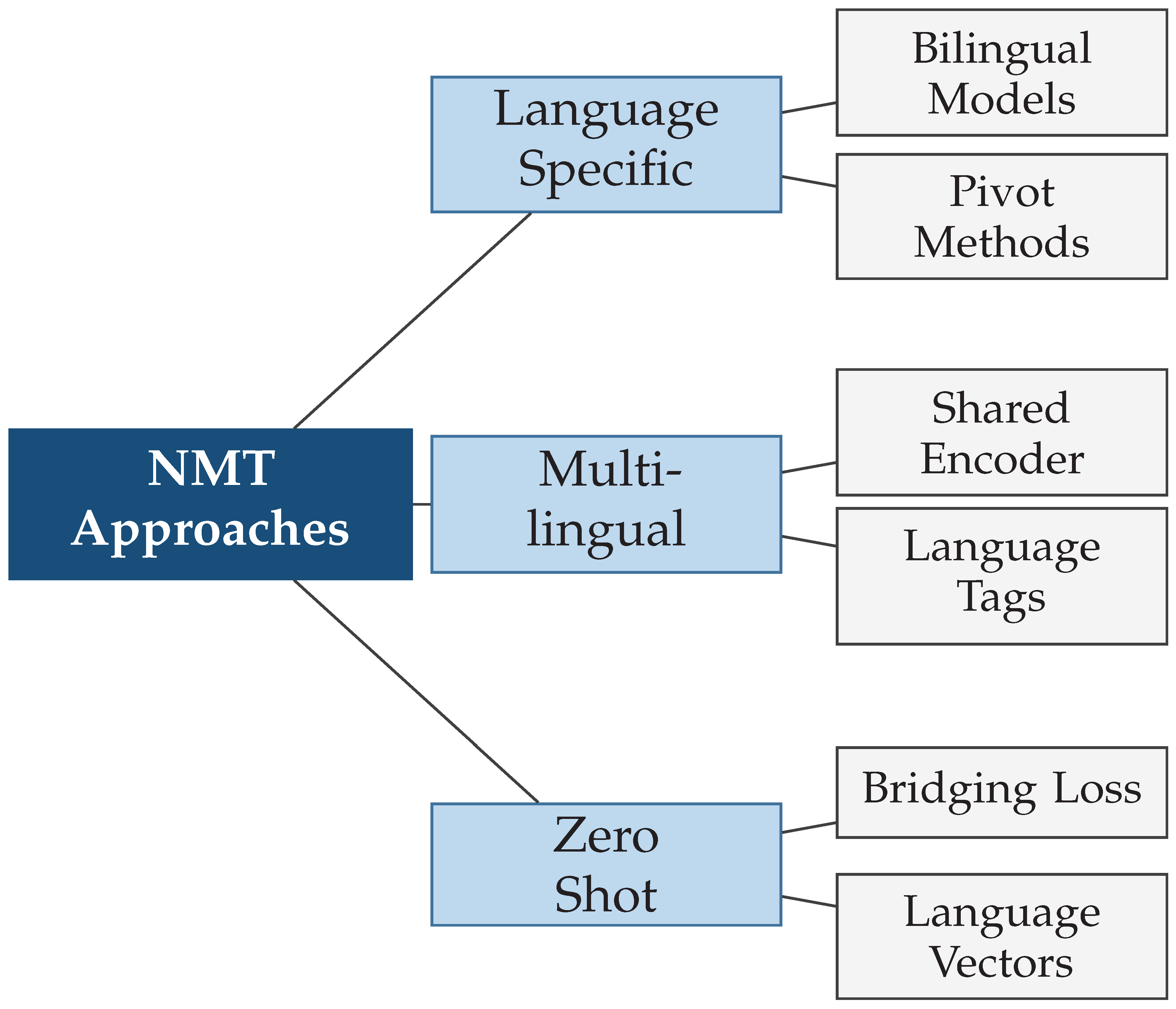

4. Multilingual Neural Machine Translation

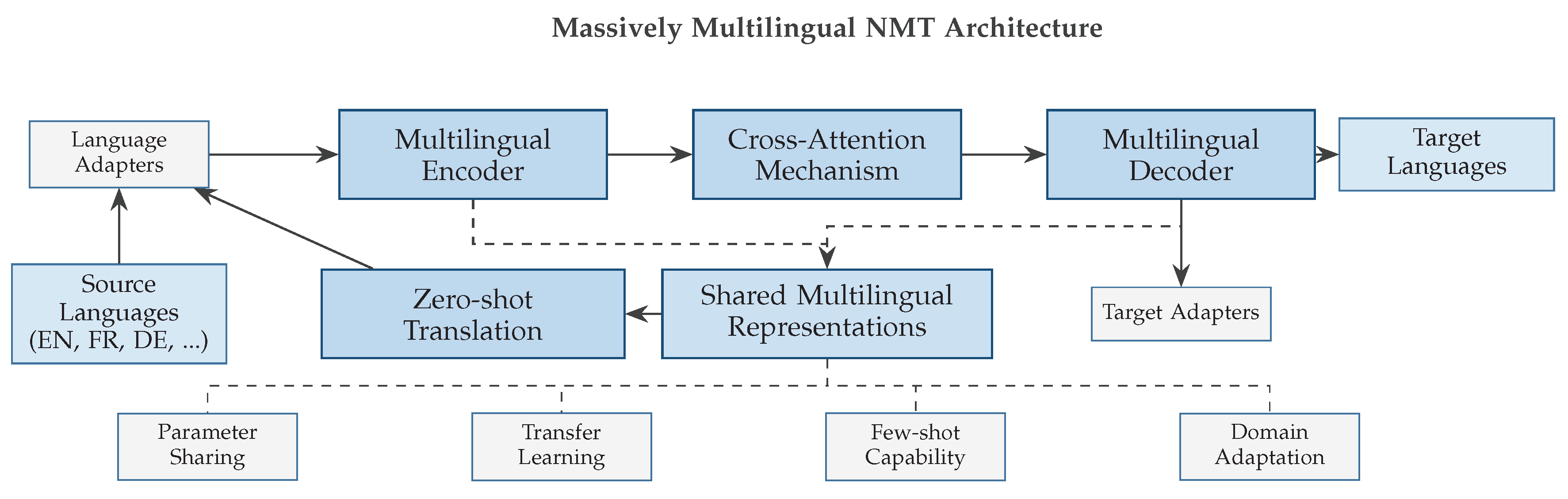

4.1. Massively Multilingual Models

4.2. Zero-Shot and Few-Shot Translation

4.3. Cross-Lingual Transfer Learning

5. Advanced Techniques and Methods

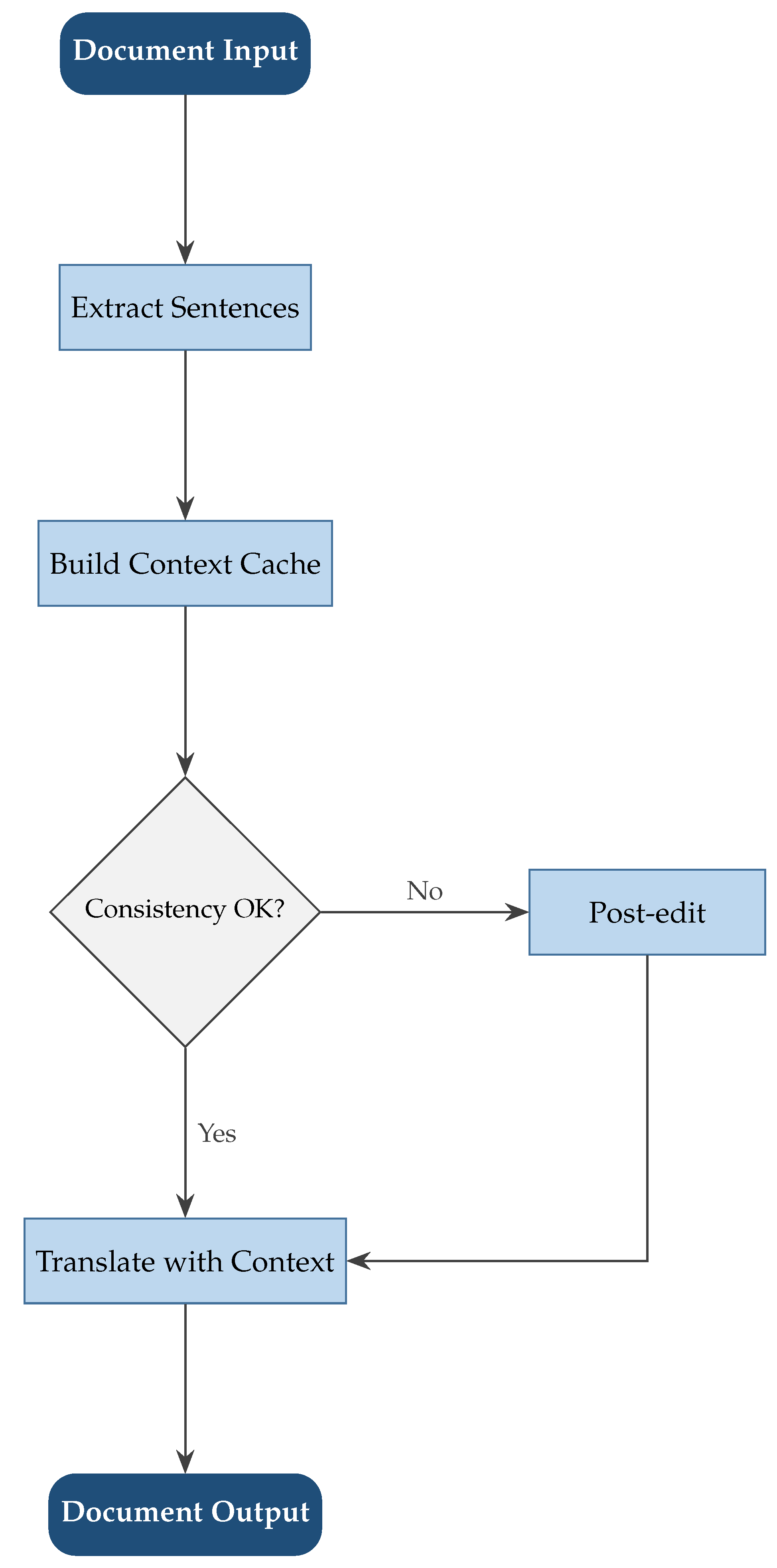

5.1. Document-Level Translation

5.2. Multimodal Translation

5.3. Domain Adaptation and Specialized Translation

6. Large Language Models in Translation

6.1. Emergence of LLM-based Translation

6.2. Comparison with Dedicated NMT Systems

| Criterion | Dedicated NMT | LLM-Based |
|---|---|---|
| Benchmark Performance | Excellent | Very Good |
| Parameter Efficiency | Very High | Low |
| Inference Speed | Fast | Slow |
| Context Window | Limited | Large |
| Knowledge Integration | Difficult | Natural |
| Domain Adaptation | Manual fine-tuning | Prompt engineering |
| Controllability | High | Moderate |
| Deployment Cost | Low | High |

6.3. Challenges and Opportunities

7. Evaluation Metrics and Quality Assessment

7.1. Automatic Evaluation Metrics

| Metric | Type | Correlation | Coverage | Interpretation |
|---|---|---|---|---|
| BLEU | Surface-level | Moderate | N-grams | Word overlap |
| METEOR | Surface+Semantic | Good | Synonyms | Fluency aware |
| chrF | Character-level | Good | Characters | Script-robust |
| BERTScore | Neural embedding | Very Good | Contextual | Semantic similarity |
| COMET | Learned metric | Excellent | Trained | Human judgment |
| BLEURT | Fine-tuned BERT | Excellent | Quality dims | Nuanced quality |
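
As a rough illustration of what "word overlap" and "character-level" mean for the two surface metrics in the table, the sketch below computes a BLEU-style clipped n-gram precision and a chrF-style character n-gram F-score for a single n-gram order. It is deliberately simplified: the full metrics combine several n-gram orders, and BLEU additionally applies a brevity penalty. The function names and default parameters here are assumptions made for the example.

```python
from collections import Counter


def ngrams(items, n):
    """Count all contiguous n-grams in a sequence (list of words or a string)."""
    return Counter(tuple(items[i:i + n]) for i in range(len(items) - n + 1))


def clipped_precision(hypothesis, reference, n=1):
    """BLEU-style modified precision for word n-grams of one order.

    Each hypothesis n-gram is credited at most as often as it occurs
    in the reference, which penalises repeated words.
    """
    hyp = ngrams(hypothesis.split(), n)
    ref = ngrams(reference.split(), n)
    total = sum(hyp.values())
    if total == 0:
        return 0.0
    matched = sum(min(count, ref[gram]) for gram, count in hyp.items())
    return matched / total


def char_fscore(hypothesis, reference, n=3, beta=2.0):
    """chrF-style F-beta over character n-grams of one order.

    beta > 1 weights recall more heavily than precision.
    """
    hyp = ngrams(hypothesis, n)
    ref = ngrams(reference, n)
    matched = sum((hyp & ref).values())  # multiset intersection of n-grams
    if matched == 0:
        return 0.0
    precision = matched / sum(hyp.values())
    recall = matched / sum(ref.values())
    return (1 + beta**2) * precision * recall / (beta**2 * precision + recall)


if __name__ == "__main__":
    print(clipped_precision("the the the the", "the cat"))
    print(char_fscore("the cat sat", "the cat sits"))
```

Because the character-level score works on substrings rather than whole tokens, a hypothesis that differs from the reference only in an inflectional ending still receives partial credit, which is one reason such metrics are considered more robust across scripts and morphologically rich languages.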

7.2. Human Evaluation

7.3. Quality Estimation

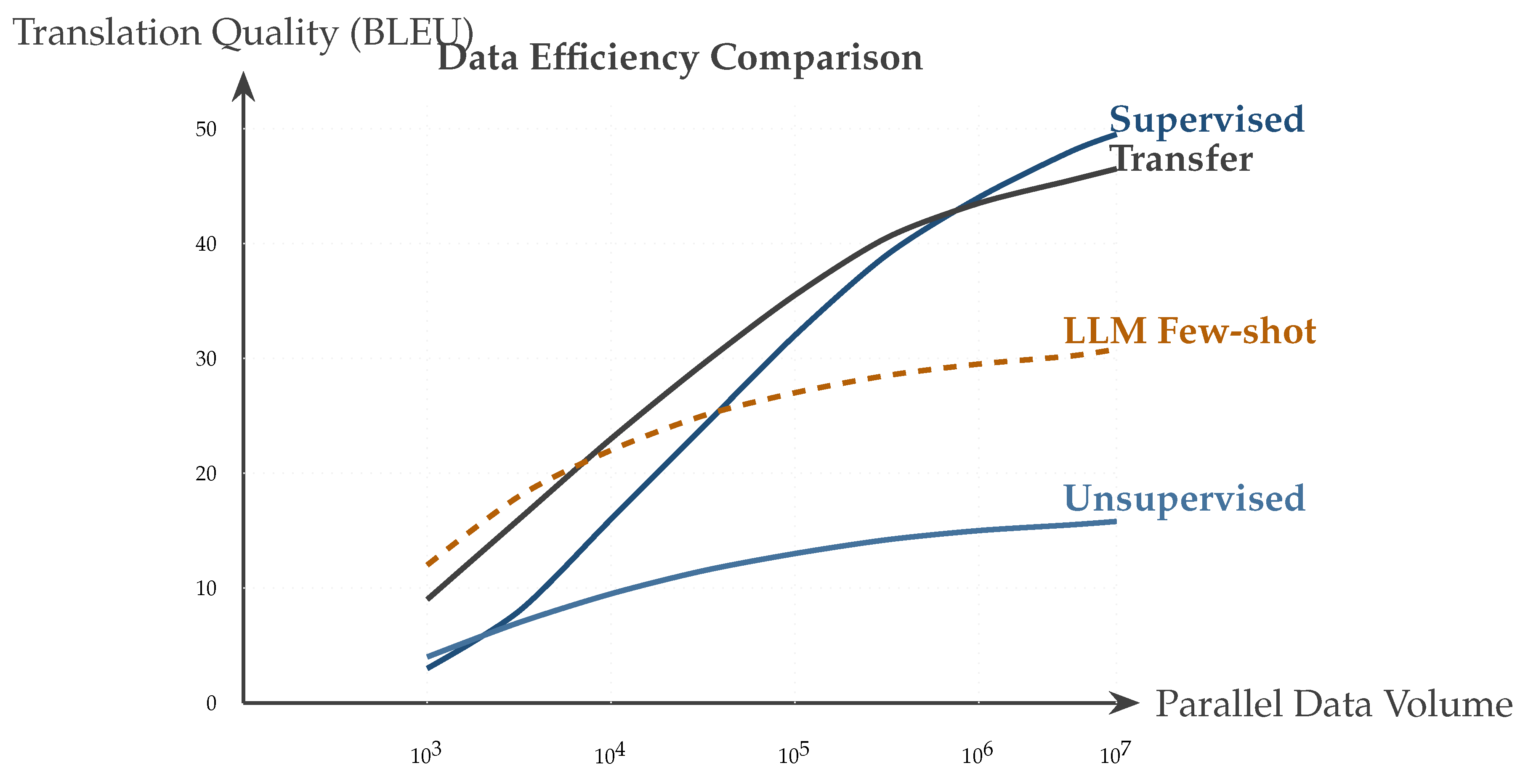

8. Low-Resource and Unsupervised Translation

8.1. Challenges in Low-Resource Settings

8.2. Data Augmentation Techniques

8.3. Unsupervised and Semi-Supervised Approaches

9. Practical Implementation and Deployment

9.1. Training Strategies and Optimization

9.2. Inference Optimization

9.3. Production Systems and Infrastructure

10. Applications and Use Cases

10.1. Commercial Applications

10.2. Academic and Research Applications

10.3. Social Impact and Accessibility

11. Future Directions and Emerging Trends

11.1. Towards Universal Translation

11.2. Integration with Large Language Models

11.3. Ethical Considerations and Challenges

12. Conclusions

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).