Submitted: 15 April 2026

Posted: 16 April 2026


Abstract

Keywords:

MSC: Primary: 91A60; Secondary: 60G40; 68T20

1. Introduction

2. Basic Rules and Game Structure of Skyjo

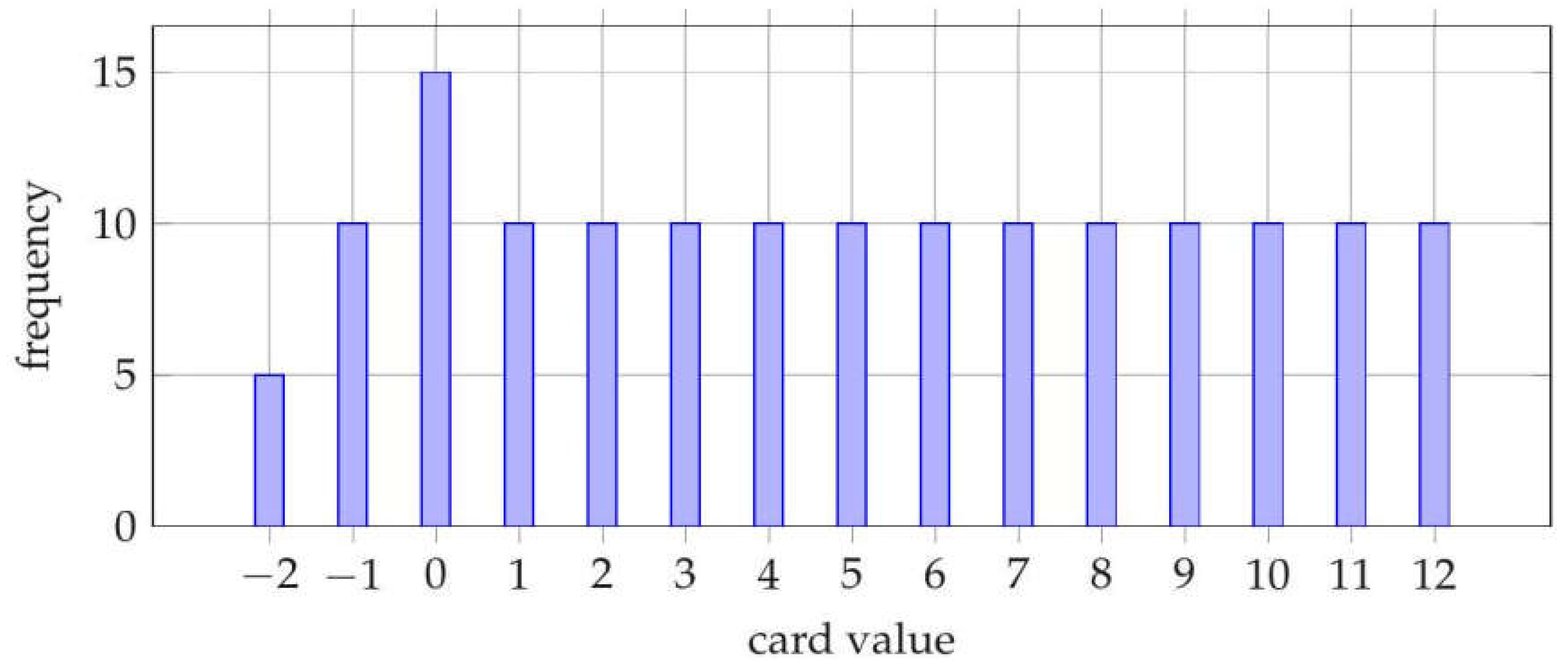

2.1. Card Set and Distribution

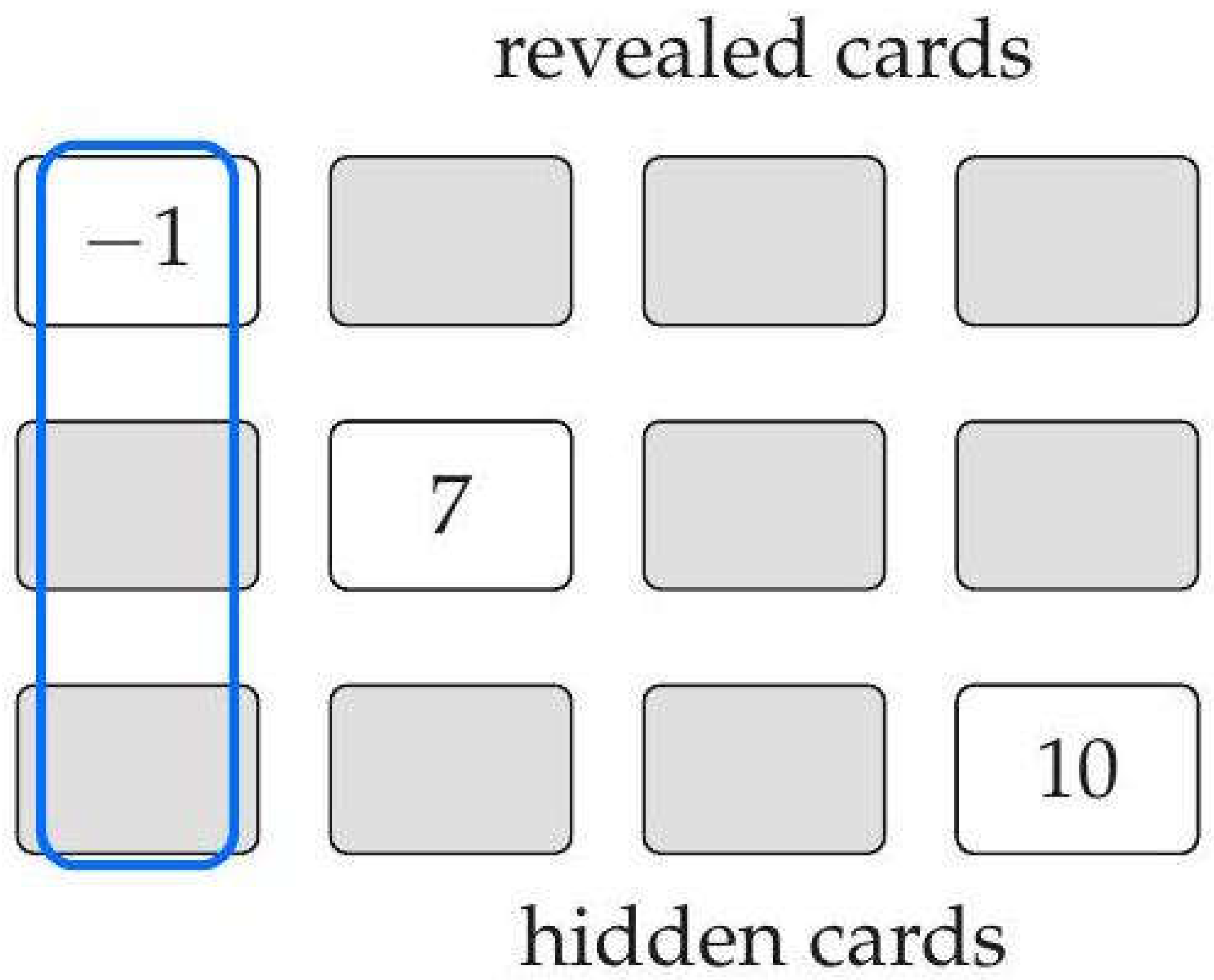

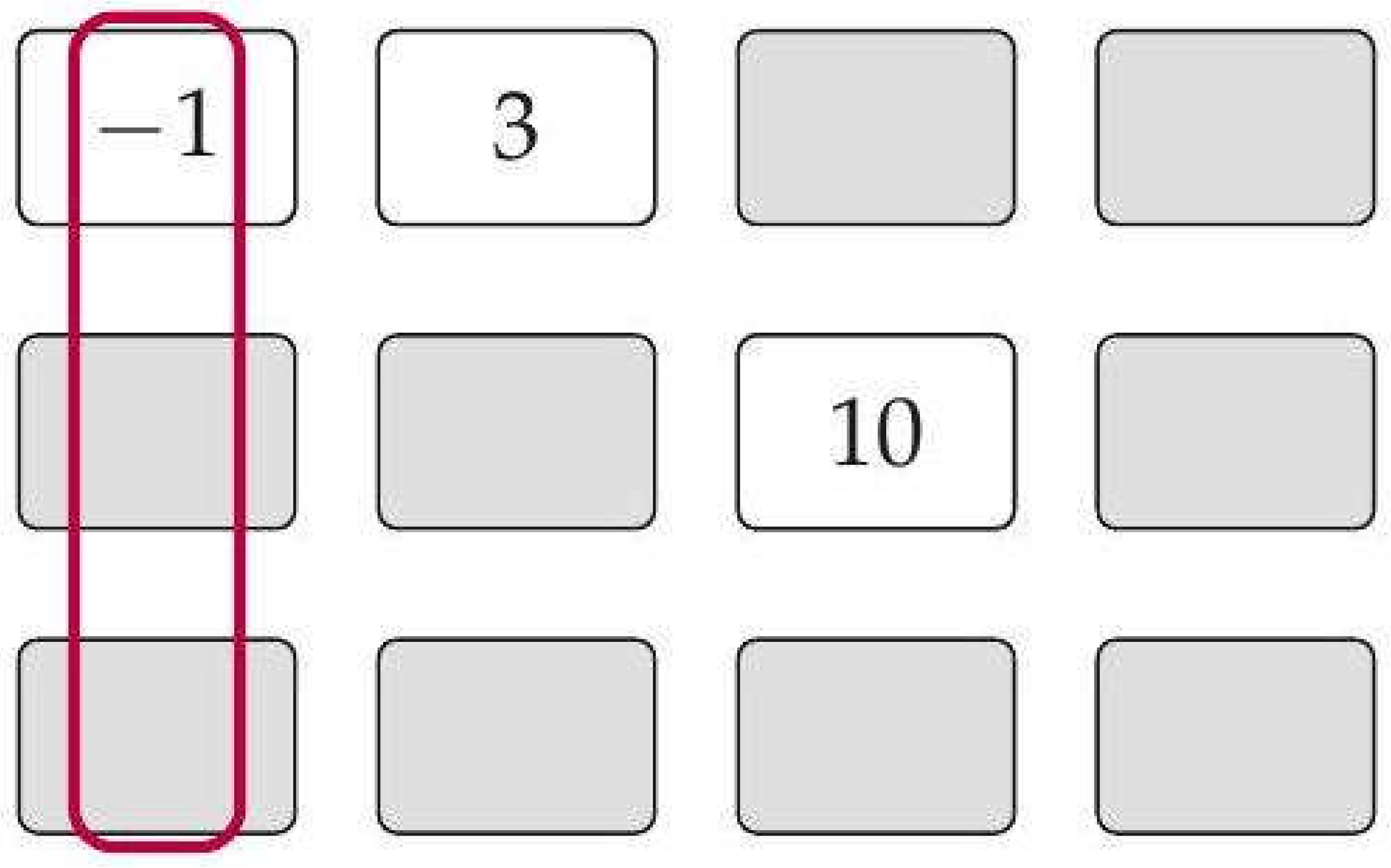

2.2. Player Tableau

- Hidden: card value unknown to the player,

- Revealed: card value known and fixed,

- Removed: position cleared due to column completion.
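The three position states can be encoded directly. Below is a minimal Python sketch that assumes the standard 3 × 4 layout. The class names `CellState`, `Cell` and `Tableau` and the row-major storage are illustrative choices. They are not taken from the simulation code in Appendix A.

```python
from dataclasses import dataclass, field
from enum import Enum


class CellState(Enum):
    HIDDEN = "hidden"      # card value unknown to the player
    REVEALED = "revealed"  # card value known and fixed
    REMOVED = "removed"    # position cleared due to column completion


@dataclass
class Cell:
    value: int                          # true value; the simulator always knows it
    state: CellState = CellState.HIDDEN


@dataclass
class Tableau:
    rows: int = 3                                   # assumed standard grid height
    cols: int = 4                                   # assumed standard grid width
    cells: list = field(default_factory=list)       # row-major list of Cell objects

    @classmethod
    def deal(cls, values, rows=3, cols=4):
        """Lay out rows * cols face-down cards."""
        if len(values) != rows * cols:
            raise ValueError("need exactly rows * cols card values")
        return cls(rows, cols, [Cell(v) for v in values])

    def cell(self, r, c):
        return self.cells[r * self.cols + c]

    def reveal(self, r, c):
        """Hidden -> Revealed; revealed or removed cells are left unchanged."""
        cell = self.cell(r, c)
        if cell.state is CellState.HIDDEN:
            cell.state = CellState.REVEALED
        return cell.value

    def remove_column(self, c):
        """Mark every cell in column c as Removed."""
        for r in range(self.rows):
            self.cell(r, c).state = CellState.REMOVED

    def revealed_sum(self):
        """Sum of revealed values; hidden and removed cells are not counted."""
        return sum(x.value for x in self.cells if x.state is CellState.REVEALED)
```

Keeping the true value on every cell lets a simulator score the final grid. A player-facing policy should read `value` only for cells whose state is `REVEALED`.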

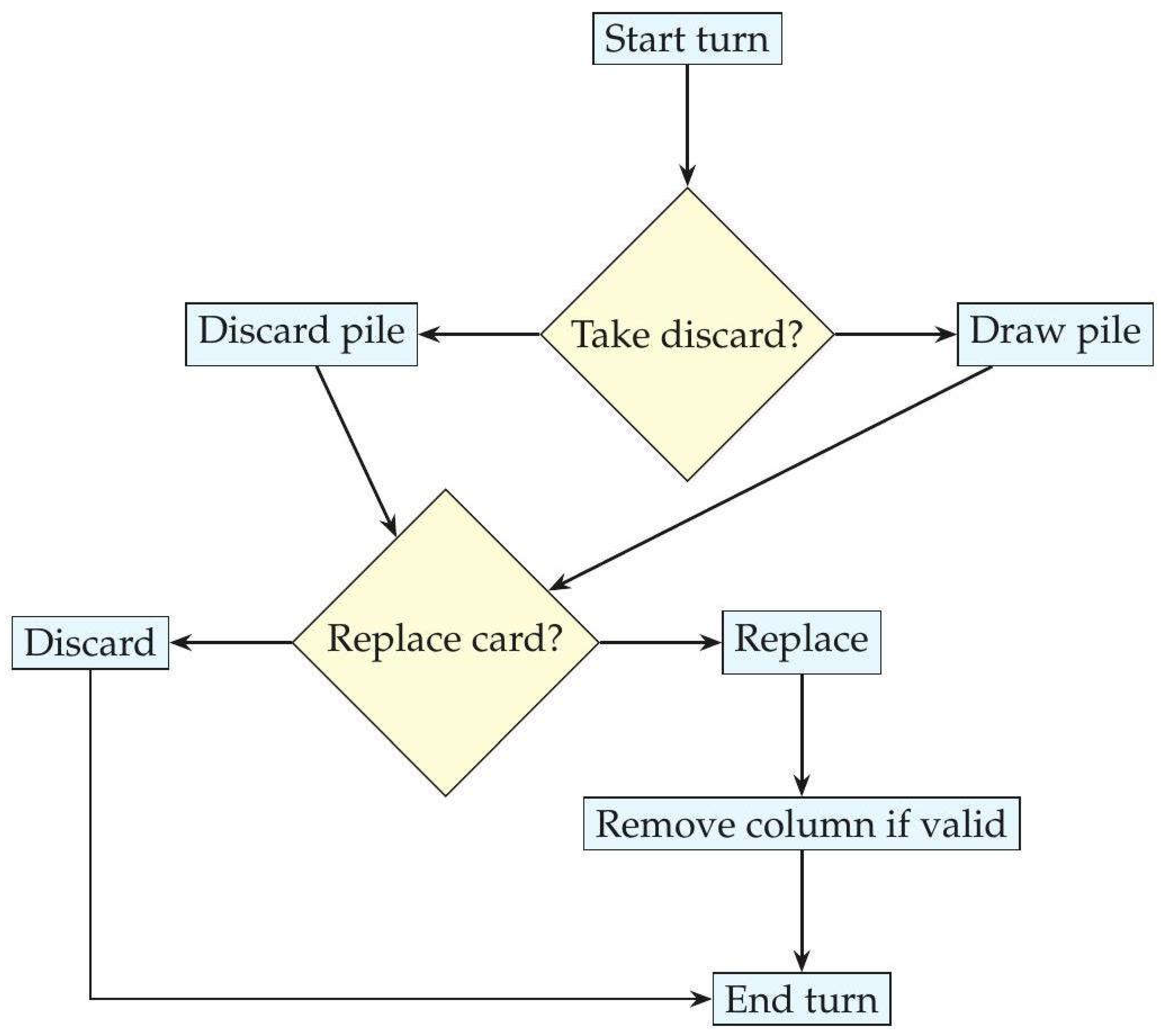

2.3. Player’s Turn Structure

2.4. Round Termination and Scoring

2.5. Strategic Implications

- replacement of large revealed values,

- risk-reward trade-offs for hidden cards,

- strong nonlinear payoffs from column completion.

3. Game-Theoretic Model

3.1. State Space

3.2. Action Space

- draw pile, discard pile ,

- discard .

3.3. Transition Kernel

- uniform draws from ,

- deterministic tableau updates,

- deterministic column-removal rules.
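For the draw-and-replace action, the three ingredients of the kernel can be sketched as follows. This sketch assumes a 3 × 4 grid stored as a list of rows whose entries are `(value, revealed)` pairs, with `None` marking a removed position. It also assumes that the cards of a cleared column go onto the discard pile. `remove_completed_columns` borrows its name from the rule-to-code table in Section 4.2, but its body here is a reconstruction. All other helper names are hypothetical.

```python
import random

ROWS, COLS = 3, 4  # standard Skyjo grid size (assumed)


def draw_uniform(draw_pile, rng):
    """Stochastic part of the kernel: remove and return a uniformly random card."""
    i = rng.randrange(len(draw_pile))
    draw_pile[i], draw_pile[-1] = draw_pile[-1], draw_pile[i]
    return draw_pile.pop()


def place(grid, r, c, card):
    """Deterministic tableau update: put `card` face up at (r, c), return the old value."""
    if grid[r][c] is None:
        raise ValueError("position was removed by column completion")
    old_value, _ = grid[r][c]
    grid[r][c] = (card, True)
    return old_value


def remove_completed_columns(grid):
    """Deterministic removal rule: clear every column of three equal revealed cards."""
    removed = []
    for c in range(COLS):
        column = [grid[r][c] for r in range(ROWS)]
        if any(cell is None or not cell[1] for cell in column):
            continue  # column already removed or still holds a hidden card
        if len({value for value, _ in column}) == 1:
            removed.extend(value for value, _ in column)
            for r in range(ROWS):
                grid[r][c] = None
    return removed


def step(grid, draw_pile, discard_pile, r, c, rng):
    """One draw-and-replace transition: draw, place, discard, then clear columns."""
    card = draw_uniform(draw_pile, rng)
    discard_pile.append(place(grid, r, c, card))
    discard_pile.extend(remove_completed_columns(grid))  # assumed destination of cleared cards
    return card
```

Only `draw_uniform` consumes randomness. Given the drawn card, the rest of the transition is a deterministic function of the state and the chosen action, which matches the structure listed above.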

3.4. Payoff Function

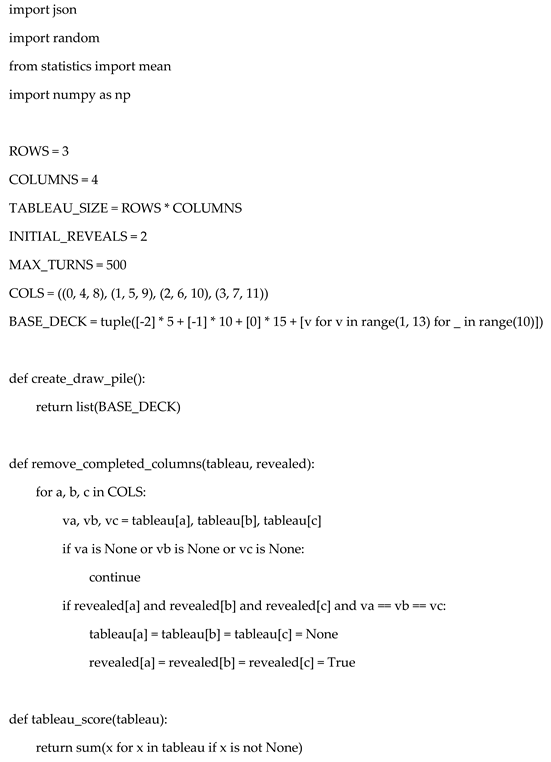

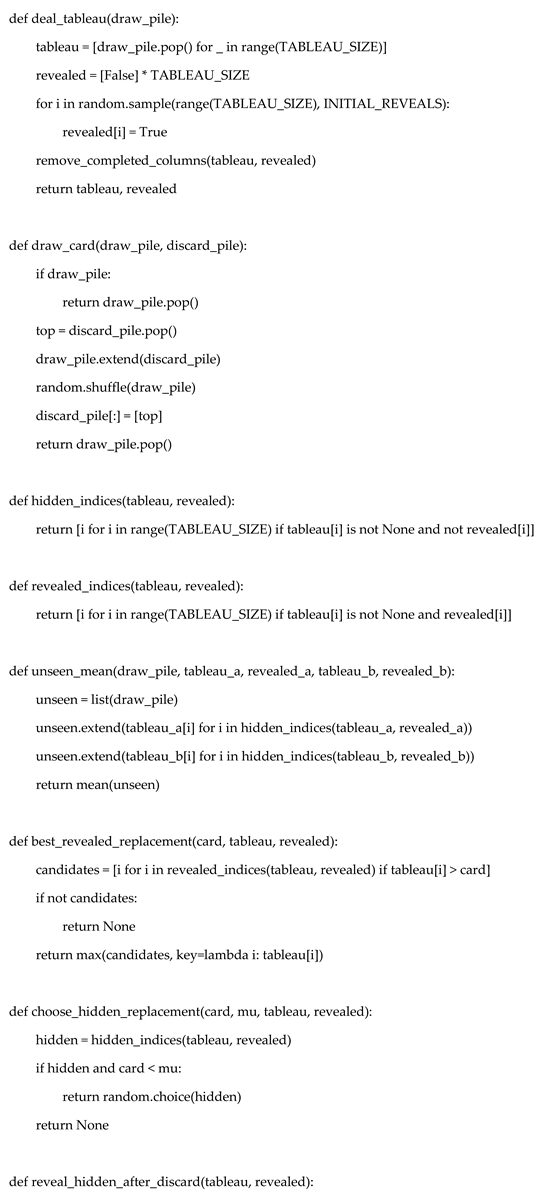

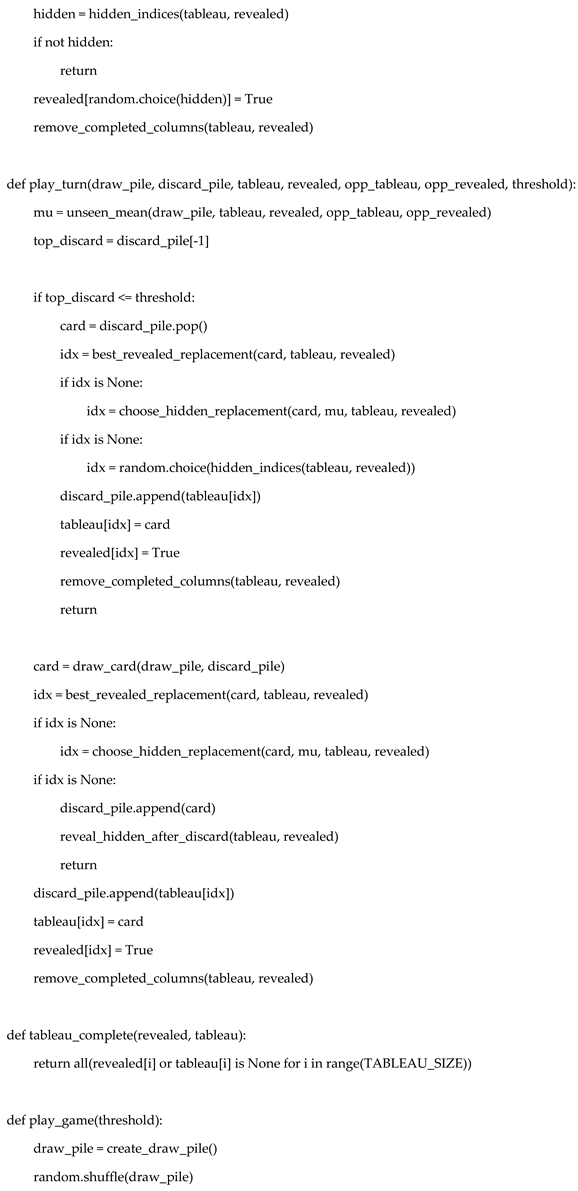

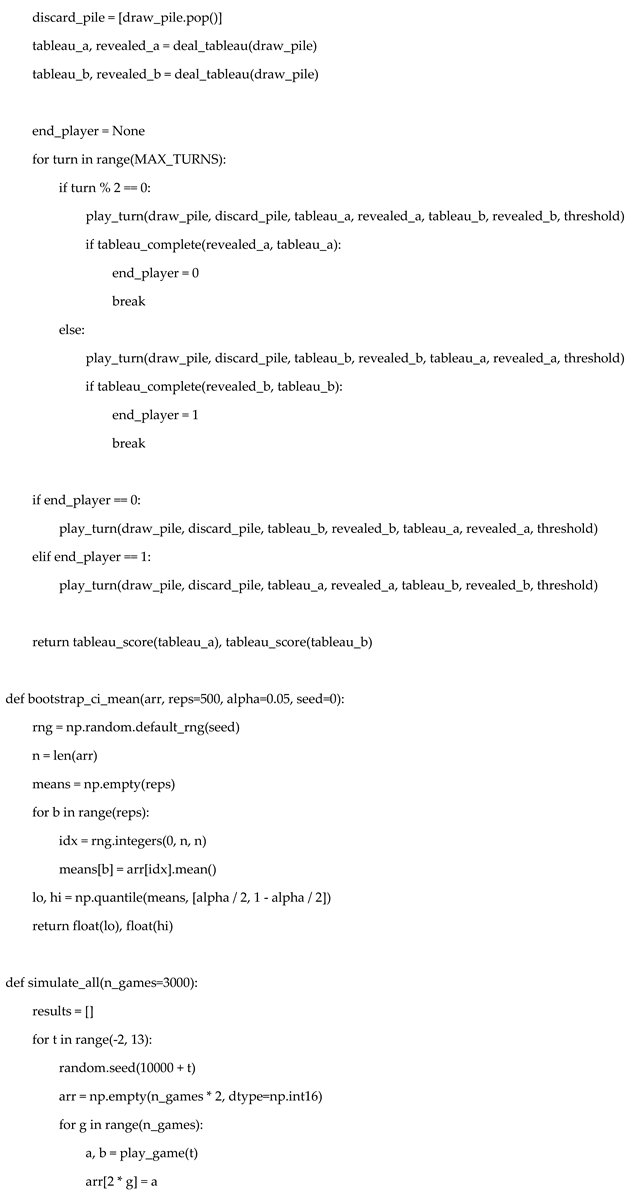

4. Mapping Rules to Simulation Code

4.1. Deck and Belief Model

4.2. Turn Logic

| Rule element | Code component |
|---|---|
| Discard threshold policy | `if top_discard <= threshold` |
| Replace revealed card | `worst_revealed = max(...)` |
| Replace hidden card | `random.choice(hidden)` |
| Column completion | `remove_completed_columns()` |
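The table names the code components but not the logic around them. The sketch below shows one hypothetical way to assemble them into a single turn. It is not the Appendix A implementation. It makes these assumptions:

- the grid is a pair of dicts, `values` and `revealed`, keyed by `(row, col)`;
- a card taken from the discard pile must be placed;
- a drawn card is kept if it beats the worst revealed card or is at most `threshold`;
- a rejected draw forces one hidden card to be revealed (cf. Appendix B.5).

```python
import random


def remove_completed_columns(values, revealed, rows=3):
    """Clear every column whose `rows` cells are all revealed and equal."""
    removed = []
    for col in sorted({c for _, c in values}):
        cells = [(r, col) for r in range(rows) if (r, col) in values]
        if (len(cells) == rows and all(revealed[p] for p in cells)
                and len({values[p] for p in cells}) == 1):
            removed += [values.pop(p) for p in cells]
            for p in cells:
                del revealed[p]
    return removed


def take_turn(values, revealed, discard_pile, draw_pile, threshold, rng):
    """One turn of a hypothetical threshold policy; mutates all containers."""
    hidden = [p for p in values if not revealed[p]]
    shown = [p for p in values if revealed[p]]
    worst_revealed = max(shown, key=values.get, default=None)
    top_discard = discard_pile[-1] if discard_pile else None

    # Discard threshold policy: a discard that is taken must be placed on the grid.
    if top_discard is not None and top_discard <= threshold:
        card, must_place = discard_pile.pop(), True
    else:
        card, must_place = draw_pile.pop(), False

    if worst_revealed is not None and values[worst_revealed] > card:
        target = worst_revealed              # replace revealed card
    elif hidden and (must_place or card <= threshold):
        target = rng.choice(hidden)          # replace hidden card
    elif must_place:
        target = worst_revealed              # nothing hidden left: overwrite the worst card
    else:
        target = None                        # reject the drawn card

    if target is None:
        discard_pile.append(card)
        if hidden:                           # mandatory reveal after rejecting a draw
            revealed[rng.choice(hidden)] = True
    else:
        discard_pile.append(values[target])
        values[target], revealed[target] = card, True

    discard_pile.extend(remove_completed_columns(values, revealed))
```

Dicts keyed by position make column removal a matter of deleting keys. The set of remaining positions then doubles as the set of non-removed cells.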

4.3. Round Termination

5. Skyjo Rule Variants and Extensions

5.1. Skyjo Action Variant

- additional draws,

- card swaps,

- forced reveals.

5.2. Alternative Column Rules

6. Strategy Taxonomy

6.1. Naive Strategies

- Always draw from the draw pile.

- Replace a random hidden card.
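The naive strategy reduces to a few lines. This sketch assumes dicts `values` and `revealed` keyed by grid position. The bullets do not say what happens once no hidden card remains, so the fallback to an arbitrary position is an assumption. The function name is hypothetical.

```python
import random


def naive_turn(values, revealed, draw_pile, discard_pile, rng):
    """Naive policy: always draw, then overwrite a random hidden position."""
    card = draw_pile.pop()                       # always draw from the draw pile
    hidden = [p for p in values if not revealed[p]]
    # Fallback (assumption): with no hidden cards left, overwrite any position.
    target = rng.choice(hidden or list(values))
    discard_pile.append(values[target])          # the replaced card is discarded
    values[target], revealed[target] = card, True
    return target
```

Because the policy never inspects card values, it is a natural baseline against which threshold and column-seeking policies can be compared.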

6.2. Column-Seeking Strategies

- priority for completing 2-of-a-kind columns,

- preference ordering by value v.
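The column-seeking rule can be made concrete under two assumptions:

- a candidate column holds exactly two revealed cards equal to the card in hand;
- the "preference ordering by value v" means ranking candidates by the points a completion would clear, namely (rows - 1)·v plus the value being replaced.

A hidden cell is valued at an assumed `hidden_estimate`. The function name and parameters are hypothetical.

```python
def column_seeking_target(values, revealed, card, rows=3, hidden_estimate=5.0):
    """Position where placing `card` completes a 2-of-a-kind column, or None.

    values/revealed: dicts keyed by (row, col) for cells still on the grid.
    hidden_estimate: assumed expected value of a hidden card.
    """
    best, best_gain = None, 0.0
    for col in sorted({c for _, c in values}):
        cells = [(r, col) for r in range(rows) if (r, col) in values]
        if len(cells) != rows:
            continue                                   # column already cleared
        matches = [p for p in cells if revealed[p] and values[p] == card]
        if len(matches) != rows - 1:
            continue                                   # not a pair of `card`
        (third,) = [p for p in cells if p not in matches]
        old = values[third] if revealed[third] else hidden_estimate
        # Before: the pair plus `old`. After completion the column is gone,
        # so the score drops by (rows - 1) * card + old.
        gain = (rows - 1) * card + old
        if gain > best_gain:                           # rank by points cleared
            best, best_gain = third, gain
    return best
```

Under this reading, a pair of -2s is never worth completing over a revealed 0. Clearing that column would raise the score by 4 points, so the function returns `None` for it.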

6.3. Dominance Relations

7. Model Scope and Generalization

- larger tableaux,

- asymmetric deck compositions,

- replacement games with pattern-based removal.

8. Deck Belief State

9. Expected Value of Hidden Cards
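From the player's point of view, every unseen card is exchangeable with every other. A hidden card's expected value therefore equals the mean of the multiset of cards not yet observed. The sketch below assumes the standard 150-card Skyjo deck: five -2s, ten -1s, fifteen 0s and ten each of 1 through 12. This composition should be checked against Section 2.1. The function names are hypothetical.

```python
from collections import Counter
from fractions import Fraction

# Standard Skyjo deck composition (assumed; verify against Section 2.1).
FULL_DECK = Counter({-2: 5, -1: 10, 0: 15, **{v: 10 for v in range(1, 13)}})


def unseen_counts(observed):
    """Belief state: multiset of card values the player has not yet observed."""
    remaining = FULL_DECK.copy()
    remaining.subtract(Counter(observed))
    if any(n < 0 for n in remaining.values()):
        raise ValueError("observed more copies of a value than the deck contains")
    return remaining


def hidden_card_expectation(observed):
    """E[value of a hidden card] = mean of the unseen multiset (exchangeability)."""
    remaining = unseen_counts(observed)
    total = sum(remaining.values())
    return Fraction(sum(v * n for v, n in remaining.items()), total)
```

With no cards observed, the expectation is 760/150 = 76/15 ≈ 5.07. Each observed high card lowers the estimate slightly, and each observed negative card raises it.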

10. Replacement Decisions

11. Incentives of Column Removal

12. Player’s Grid

13. Turn Decision Structure

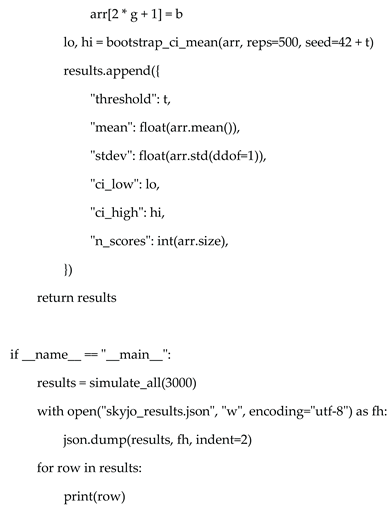

14. Simulation Design

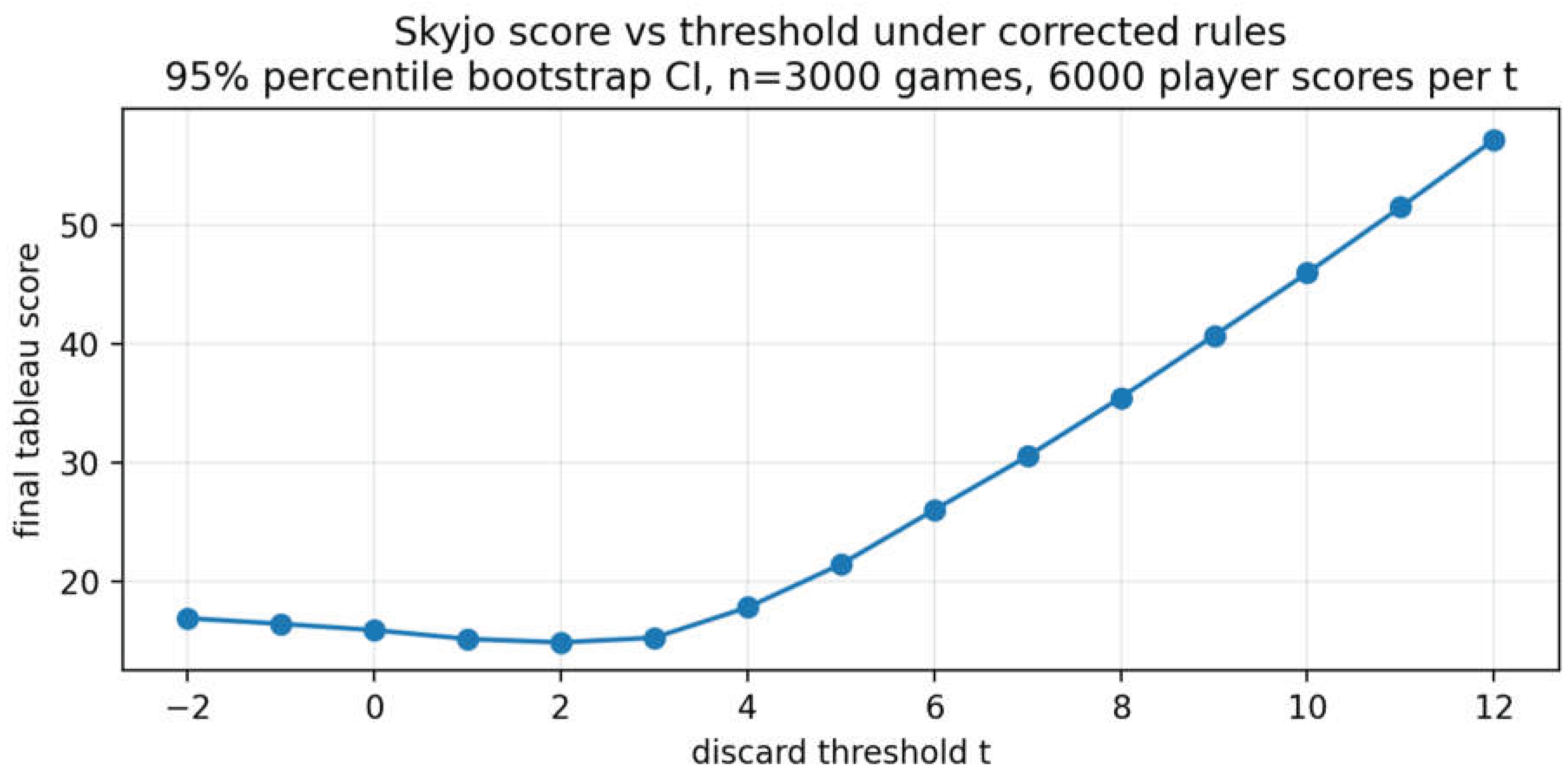

15. Simulation Results

16. Discussion

17. Limitations

18. Concluding Remarks

Funding

Institutional Review Board Statement

Data Availability Statement

Conflicts of Interest

Appendix A. Python Simulation Code

Appendix B. Technical Proofs for the Model

Appendix B.1. Exchangeability and Linearity

Appendix B.2. Hidden-Card Expectation

Appendix B.3. Hidden Replacement

Appendix B.4. Revealed Replacement

Appendix B.5. Draw-Pile Discard with Mandatory Reveal

Appendix B.6. Column Completion

Appendix B.7. Absence of Threshold Monotonicity on the Domain

Appendix B.8. Simulation Fidelity

Appendix B.9. Scope

Appendix B.10. Scope of Validity

References

- Magilano GmbH. Skyjo Official Rules. Magilano, Germany. 2015. Available online: https://www.magilano.com.

- Magilano GmbH. Skyjo Action Rulebook. Magilano, Germany. 2023.

- Ross, S.M. Introduction to Probability Models, 11th ed.; Academic Press: Amsterdam, The Netherlands, 2014.

- Puterman, M.L. Markov Decision Processes: Discrete Stochastic Dynamic Programming; Wiley: Hoboken, NJ, USA, 1994.

- Fishman, G.S. Monte Carlo: Concepts, Algorithms, and Applications; Springer: New York, NY, USA, 1996.

- Osborne, M.J.; Rubinstein, A. A Course in Game Theory; MIT Press: Cambridge, MA, USA, 1994.

- Ferguson, T.S. Optimal stopping and applications. Electronic Journal of Probability 2008.

| Threshold t | Mean score | Std. dev. | 95% bootstrap CI | n |
|---|---|---|---|---|
| -2 | 16.95 | 10.01 | [16.70, 17.20] | 6000 |
| -1 | 16.48 | 9.93 | [16.26, 16.75] | 6000 |
| 0 | 15.95 | 9.94 | [15.70, 16.22] | 6000 |
| 1 | 15.21 | 9.77 | [14.95, 15.44] | 6000 |
| 2 | 14.92 | 9.54 | [14.68, 15.16] | 6000 |
| 3 | 15.31 | 9.05 | [15.09, 15.55] | 6000 |
| 4 | 17.86 | 8.83 | [17.65, 18.10] | 6000 |
| 5 | 21.48 | 8.85 | [21.25, 21.70] | 6000 |
| 6 | 26.05 | 9.29 | [25.84, 26.29] | 6000 |
| 7 | 30.56 | 10.08 | [30.29, 30.81] | 6000 |
| 8 | 35.50 | 11.08 | [35.21, 35.78] | 6000 |
| 9 | 40.71 | 12.20 | [40.40, 41.00] | 6000 |
| 10 | 45.98 | 13.36 | [45.65, 46.29] | 6000 |
| 11 | 51.51 | 15.05 | [51.14, 51.89] | 6000 |
| 12 | 57.18 | 16.08 | [56.75, 57.61] | 6000 |
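The table reports 95% bootstrap confidence intervals without specifying the bootstrap variant. A percentile bootstrap for the mean is sketched below as one plausible choice; this is an assumption. The function `bootstrap_mean_ci` and its defaults are hypothetical.

```python
import random
import statistics


def bootstrap_mean_ci(scores, n_boot=2000, level=0.95, seed=0):
    """Percentile bootstrap confidence interval for the mean of `scores`."""
    rng = random.Random(seed)
    n = len(scores)
    # Resample with replacement and record the mean of each resample.
    means = sorted(
        statistics.fmean(rng.choices(scores, k=n)) for _ in range(n_boot)
    )
    alpha = (1.0 - level) / 2.0
    lo = means[int(alpha * n_boot)]
    hi = means[min(n_boot - 1, int((1.0 - alpha) * n_boot))]
    return statistics.fmean(scores), lo, hi
```

A normal approximation gives a rough cross-check. With n = 6000 and a standard deviation near 10, the half-width is about 1.96 × 10/√6000 ≈ 0.25, which agrees with the interval widths in the table.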

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).