1.1. Global Burden of Breast Cancer: Current Status and Future Projections

Breast cancer poses a major threat to women’s health worldwide, and its burden varies considerably across regions and socioeconomic conditions. This section provides an overview of current global breast cancer incidence and mortality based on GLOBOCAN 2022 data [1], discusses regional disparities and their underlying factors, and summarizes future projections.

According to GLOBOCAN 2022 data [1], approximately 2.3 million new breast cancer cases occurred globally in 2022, with approximately 670,000 deaths. The age-standardized rate (ASR) of incidence was 46.8 per 100,000, and the ASR of mortality was 12.7 per 100,000. These figures show that breast cancer remains a major public health issue.

Breast cancer incidence and mortality rates differ considerably among regions and depend strongly on the Human Development Index (HDI). High-HDI regions (e.g., Europe, North America, Oceania) tend to have higher incidence rates, whereas low-HDI regions (e.g., Africa) tend to have higher mortality rates. These disparities likely stem from differences in screening availability, stage at diagnosis, treatment infrastructure, and socioeconomic factors.

A study by Liao et al. [2] indicated that the breast cancer burden is likely to increase in the future. In Africa specifically, new cases among young women (0–39 years) are projected to increase by approximately 96%, from 43,168 cases in 2022 to 84,683 cases in 2050. Asia has the greatest number of cases; its total is projected to remain high, with variations depending on HDI level.
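The quoted relative increase can be checked directly from the two case counts. The following minimal sketch only reproduces that arithmetic; the function name `percent_increase` is chosen here for illustration and does not come from the cited study.

```python
def percent_increase(baseline: float, projected: float) -> float:
    """Return the relative increase from baseline to projected, in percent."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (projected - baseline) / baseline * 100.0


# Case counts quoted in the text for women aged 0-39 years in Africa.
cases_2022 = 43_168
cases_2050 = 84_683

increase = percent_increase(cases_2022, cases_2050)
print(f"Projected increase: {increase:.1f}%")  # prints "Projected increase: 96.2%"
```

The result rounds to the "approximately 96%" stated above.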

The economic burden of breast cancer also varies considerably among nations and regions. A study addressing India [2] projected the number of breast cancer patients and the associated economic burden for 2021–2030. It found that the total economic burden would increase from approximately US$8 billion in 2021 to approximately US$13.95 billion in 2030. Additionally, a study of chemotherapy patients in an Ethiopian hospital [3] showed that the average total direct cost for outpatients was approximately US$1,188.26, suggesting a heavy burden on patients in low-income and middle-income countries.

Breast cancer presents a severe global health challenge with large regional disparities. Reducing its future burden requires improved screening coverage, earlier diagnosis, and better access to appropriate treatment. High mortality in low-HDI regions is a particularly urgent issue that calls for international cooperation and support.

1.2. Utility of Deep Learning-Based Mammography Imaging Diagnosis in Breast Cancer Treatment Planning

Deep learning has emerged as a transformative approach to medical imaging diagnosis, particularly for cancer detection. Jiménez-Gaona et al. [4] presented a comprehensive review of deep learning applications using ultrasound and mammography for breast cancer diagnosis, analyzing 59 studies selected from 250 research articles published during 2010–2020. Their results show that deep learning-based computer-aided diagnosis systems effectively reduce the need for manual feature extraction and improve diagnostic accuracy. Munir et al. provided a bibliographic review covering various deep learning techniques, including Convolutional Neural Networks (CNNs), generative adversarial networks (GANs), and recurrent neural networks (RNNs), for cancer diagnosis across breast, lung, brain, and skin cancers, highlighting the superiority of AI methods over traditional diagnostic approaches [5]. By contrast, Nagendran et al. identified important methodological concerns in deep learning studies, finding that only 9 of 81 non-randomized trials were prospective, with a high risk of bias in 58 studies and limited data availability [6]. Kassem et al. reviewed 102 studies of skin lesion diagnosis, comparing traditional machine learning with deep learning methods and identifying challenges including small datasets and evaluation biases [7].

1.3. Deep Learning-Based Methods for Mammographic Image Diagnosis

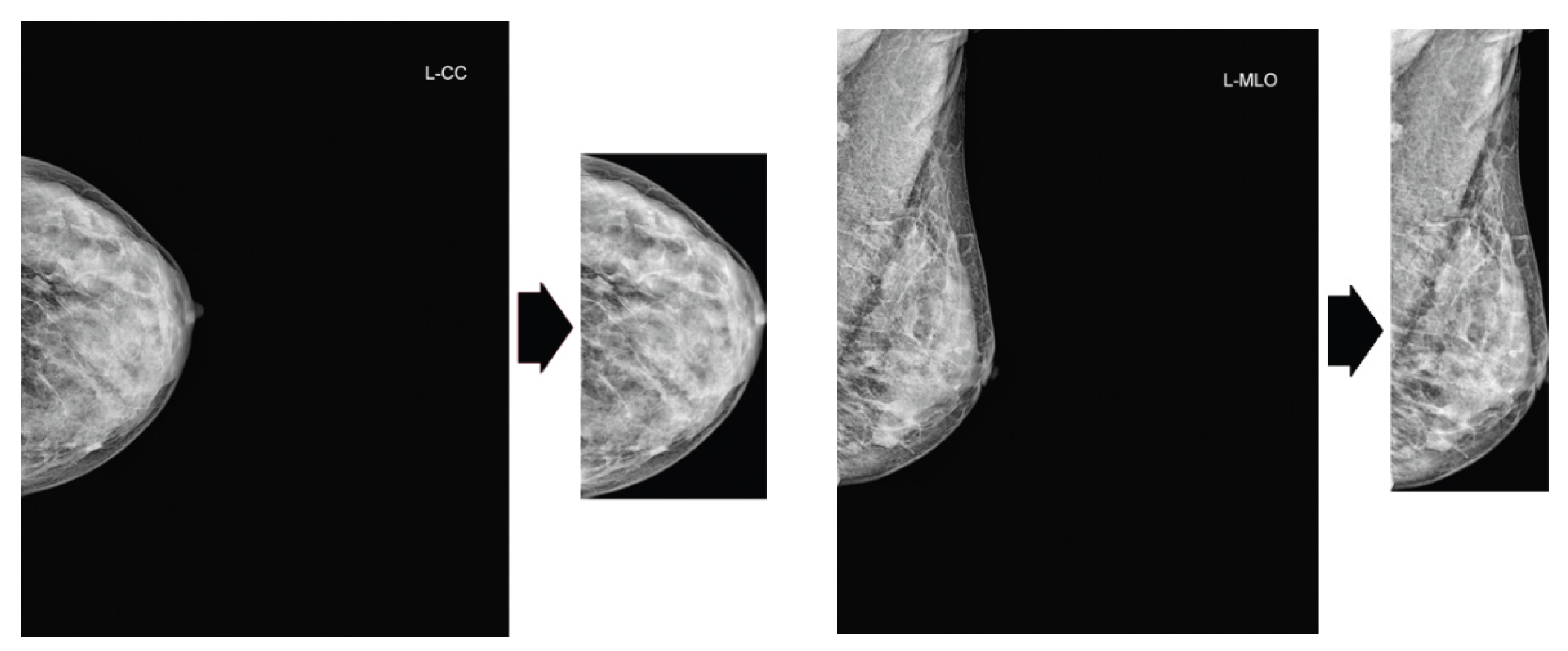

Since the advent of deep learning technologies, particularly Convolutional Neural Networks (CNNs), research in breast cancer detection and diagnosis from mammograms has advanced dramatically. The approaches adopted for these studies fall into two main streams based on the granularity of the annotation information they use and the format of the model’s input. These are region of interest (ROI)-based methods, which specifically examine candidate lesion areas, and whole-image-based methods, which analyze the entire image directly. This report outlines these two primary methodology categories and presents key publications in chronological order to demonstrate the development and diversity within each stream.

1.3.1. Region of Interest (ROI)-Based Methods

ROI-based methods use a two-stage approach. The first stage detects a candidate lesion region (ROI) within the image. The second stage feeds a cropped patch of that region into a classification model to perform a diagnostic assessment (e.g., benign or malignant). This method is well suited to learning fine-grained morphological features because the model can concentrate on high-resolution information from the lesion. Nevertheless, the approach has two important limitations: its final diagnostic performance depends strongly on the accuracy of the ROI detection stage, and its training requires precise local annotations that indicate the lesion location.
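The two-stage structure described above can be sketched as follows. This is a toy illustration under stated assumptions, not any cited system: `toy_detector` and `toy_classifier` are hypothetical stand-ins (a brightness threshold and a mean intensity) for a trained detection network and a trained CNN classifier, and the image is a plain nested list.

```python
from typing import Callable, List, Tuple

Image = List[List[float]]          # 2-D grid of pixel intensities
Box = Tuple[int, int, int, int]    # (top, left, height, width)


def crop(image: Image, box: Box) -> Image:
    """Cut the patch described by box out of the image."""
    top, left, height, width = box
    return [row[left:left + width] for row in image[top:top + height]]


def diagnose(
    image: Image,
    detect_rois: Callable[[Image], List[Box]],
    classify_patch: Callable[[Image], float],
) -> List[Tuple[Box, float]]:
    """Stage 1 proposes ROIs; stage 2 scores each cropped patch."""
    return [(box, classify_patch(crop(image, box))) for box in detect_rois(image)]


def toy_detector(image: Image, threshold: float = 0.5) -> List[Box]:
    """Hypothetical stage 1: one box around all pixels brighter than threshold."""
    hits = [(r, c) for r, row in enumerate(image)
            for c, value in enumerate(row) if value > threshold]
    if not hits:
        return []
    rows = [r for r, _ in hits]
    cols = [c for _, c in hits]
    top, left = min(rows), min(cols)
    return [(top, left, max(rows) - top + 1, max(cols) - left + 1)]


def toy_classifier(patch: Image) -> float:
    """Hypothetical stage 2: mean intensity standing in for a malignancy score."""
    values = [value for row in patch for value in row]
    return sum(values) / len(values)


# A 6x6 toy image with one bright 2x2 "lesion".
image = [[0.0] * 6 for _ in range(6)]
for r in (2, 3):
    for c in (3, 4):
        image[r][c] = 0.9

results = diagnose(image, toy_detector, toy_classifier)
blank_results = diagnose([[0.0] * 6 for _ in range(6)], toy_detector, toy_classifier)
```

When the detector proposes no region, as for the blank image, the classifier is never called. This is the dependence on the detection stage noted above.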

The following list presents chronologically ordered descriptions of major academic efforts in this method category.

Levy et al. presented a methodology that employs CNNs to classify pre-segmented breast masses on mammograms directly as benign or malignant. To overcome the challenge of limited training data, the approach combined transfer learning, careful pre-processing, and data augmentation. The methodology achieved high classification accuracy on the DDSM dataset, surpassing human performance, and also demonstrated model interpretability [8].

Kooi et al. directly compared the performance of a conventional Computer-Aided Diagnosis (CAD) system based on hand-crafted features with that of a CNN, using approximately 45,000 mammograms. The CNN outperformed the conventional CAD system in the low-sensitivity regime and performed comparably in the high-sensitivity regime. Furthermore, a patch-level reader study found no significant difference between the CNN performance and that of certified screening radiologists [9].

Shen et al. developed an end-to-end approach for deep learning-based mammography diagnosis. The method reduces reliance on expensive local annotations by using lesion-level annotations only during the initial training stage and subsequently training on image-level labels. The approach demonstrated high performance on the CBIS-DDSM (area under the curve, AUC 0.91) and INbreast (AUC 0.98) datasets [10].

Al-antari et al. proposed a deep learning-based CAD system that integrates breast mass detection (YOLO), segmentation (using the proposed FrCN method), and benign–malignant classification (CNN) into a single framework. In a four-fold cross-validation on the public INbreast dataset, the system achieved 98.96% detection accuracy, 92.97% segmentation accuracy, and 95.64% classification accuracy (AUC 94.78%), demonstrating its effectiveness over conventional methods [11].

Chougrad et al. developed a CAD system that classifies mammography ROIs as benign or malignant using CNNs and transfer learning. The research explored optimal fine-tuning strategies and used a merged dataset from multiple public sources for training. Upon validation on the independent MIAS dataset, the system achieved 98.23% accuracy and 0.99 AUC, demonstrating high generalization performance [12].

Sun et al. proposed a multi-view CNN to leverage complementary information from craniocaudal (CC) and mediolateral oblique (MLO) mammographic views. The methodology featured a penalty term to enforce inter-view consistency and learned invariant representations via Supervised Contrastive Learning (SCL). Through experiments conducted on the DDSM dataset, the study demonstrated superior classification performance and diagnostic speed compared to conventional methods [13].

Garrucho et al. proposed a single-source training pipeline that requires no images from unseen target domains. In an evaluation across five unseen domains, the proposed methodology outperformed conventional transfer learning approaches in four of the five domains and reduced the domain shift attributable to different acquisition systems. The study also analyzed the effects of covariates such as patient age and breast density [14].

Celat et al. evaluated the performance of a deep learning model (ResNet152V2) for classifying normal–abnormal mammography ROIs using the large-scale EMBED dataset, achieving an AUC of 0.975. Subgroup analysis revealed significant associations between false-negative predictions and white patients or architectural distortions, as well as significant associations between false-positive predictions and high breast density. This finding underscores the importance of subgroup analysis for improving model fairness and interpretability [15].

Camurdan et al. combined a patch-based deep learning approach with curriculum learning to produce a system for mammographic breast cancer detection. Even when using only limited strong annotations (20%) together with weak annotations (image labels), the method improved both the F1 score and Grad-CAM-based explainability (ground-truth overlap ratio) compared to a baseline trained with no strong annotation. This performance trend also held on an external dataset, demonstrating the effectiveness of this annotation-efficient model [16].

1.3.2. Whole-Image-Based Methods

Whole-image-based methods perform end-to-end diagnosis by feeding the entire mammogram directly into a model, thereby bypassing a separate ROI detection step. This approach has the advantage of requiring only image-level diagnostic labels for training, which reduces annotation costs significantly. However, lesion areas are extremely small relative to the entire image, which makes learning from image-level labels difficult; recent frameworks in weakly supervised learning and Multiple Instance Learning (MIL) are important for overcoming this challenge.
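The small-lesion problem and the role of MIL aggregation can be illustrated with a toy example. The sketch below treats an image as a bag of patch scores and compares two common pooling rules. The patch scores are invented for illustration, and the cited systems use learned aggregation (for example, attention-based pooling) rather than these fixed rules.

```python
from typing import List


def mean_pool(instance_scores: List[float]) -> float:
    """Average the patch scores; a few suspicious patches are easily diluted."""
    if not instance_scores:
        raise ValueError("bag must contain at least one instance")
    return sum(instance_scores) / len(instance_scores)


def max_pool(instance_scores: List[float]) -> float:
    """Standard MIL assumption: a bag is positive if any instance is positive."""
    if not instance_scores:
        raise ValueError("bag must contain at least one instance")
    return max(instance_scores)


# Hypothetical bag: 99 background patches and 1 suspicious patch.
patch_scores = [0.02] * 99 + [0.95]

image_score_mean = mean_pool(patch_scores)  # about 0.029, lesion signal diluted
image_score_max = max_pool(patch_scores)    # 0.95, lesion signal preserved
```

Only the image-level score is compared with the image-level label during training, so no patch needs its own annotation.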

The following list presents major academic papers in this method category in chronological order.

Campanella et al. proposed an MIL system that trains using only slide-level diagnoses, obviating the need for costly pixel-level annotations. In a large-scale evaluation using 44,732 whole slide images, the system achieved an AUC greater than 0.98 for all three cancer types. For clinical application, this system could potentially exclude 65–75% of slides while maintaining 100% sensitivity [17].
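One way to read the "exclude a fraction of slides at 100% sensitivity" claim is as a threshold choice: place the cut-off at the lowest score assigned to any positive case, then count how many cases fall below it. The sketch below illustrates that calculation on invented scores and labels; it is an assumption about how such a figure can be derived, not the evaluation procedure of the cited study.

```python
from typing import List, Tuple


def triage_at_full_sensitivity(
    scores: List[float], labels: List[int]
) -> Tuple[float, float]:
    """Return (threshold, fraction of cases excluded) with no positive case missed.

    The threshold is the lowest score among positive cases; every case
    scoring strictly below it is negative and can be excluded from review.
    """
    positive_scores = [s for s, y in zip(scores, labels) if y == 1]
    if not positive_scores:
        raise ValueError("at least one positive case is required")
    threshold = min(positive_scores)
    excluded = sum(1 for s in scores if s < threshold)
    return threshold, excluded / len(scores)


# Invented model scores and ground-truth labels (1 = cancer present).
scores = [0.05, 0.10, 0.20, 0.40, 0.45, 0.60, 0.70, 0.90]
labels = [0, 0, 0, 1, 0, 0, 1, 1]

threshold, fraction_excluded = triage_at_full_sensitivity(scores, labels)
print(threshold, fraction_excluded)  # prints "0.4 0.375"
```

In practice the threshold would be fixed on a validation set and then checked on held-out data, because a threshold tuned on the test cases themselves overstates the achievable exclusion rate.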

McKinney et al. developed an AI system for breast cancer screening. The system was evaluated on datasets from the UK and the US, where it significantly reduced the rates of false positives and false negatives. In an independent study comparing the AI against six radiologists, the system outperformed all human readers, exceeding the average radiologist’s AUC by an absolute margin of 11.5%. Furthermore, in a double-reading simulation, the AI system maintained non-inferior performance while reducing the workload of the second reader by 88% [18].

Lotter et al. used a whole-image-based approach (including MIL) to demonstrate that a high-accuracy diagnostic model can be built even from limited annotations, thereby showing the effectiveness of annotation-efficient learning [19].

Zhu et al. proposed an end-to-end deep MIL network for whole mammogram classification, thereby eliminating the need for costly ROI annotations. The authors explored three MIL schemes. Through experimentation on the INbreast dataset, they demonstrated the robustness of their approach compared to conventional methods that require annotation [20].

El Mikaty et al. proposed FPN-MIL, a model integrating a Feature Pyramid Network (FPN), to address the challenge of multi-scale lesions that conventional MIL for mammography overlooks. The proposed method achieves multi-scale analysis from single-scale patch inputs and employs an attention-based aggregation mechanism to enhance robustness against scale variations. Experimental results demonstrated that the proposed method surpassed conventional approaches; FPN-SetTrans achieved the best performance for calcification classification, whereas FPN-AbMIL performed best for mass classification [21].

Deep learning for mammography diagnosis shows a clear shift of research focus from ROI-based methods, which precisely analyze local features, to whole-image-based methods, which reduce annotation costs and can capture global context. In particular, the adoption of weakly supervised learning and MIL offers the potential to combine the advantages of both approaches and represents a primary direction for future research and development. Furthermore, the scope of applications is expanding from simple benign–malignant classification to risk and treatment response prediction, progressively increasing the clinical value of these systems.

1.4. Positioning and Objective of Our Study

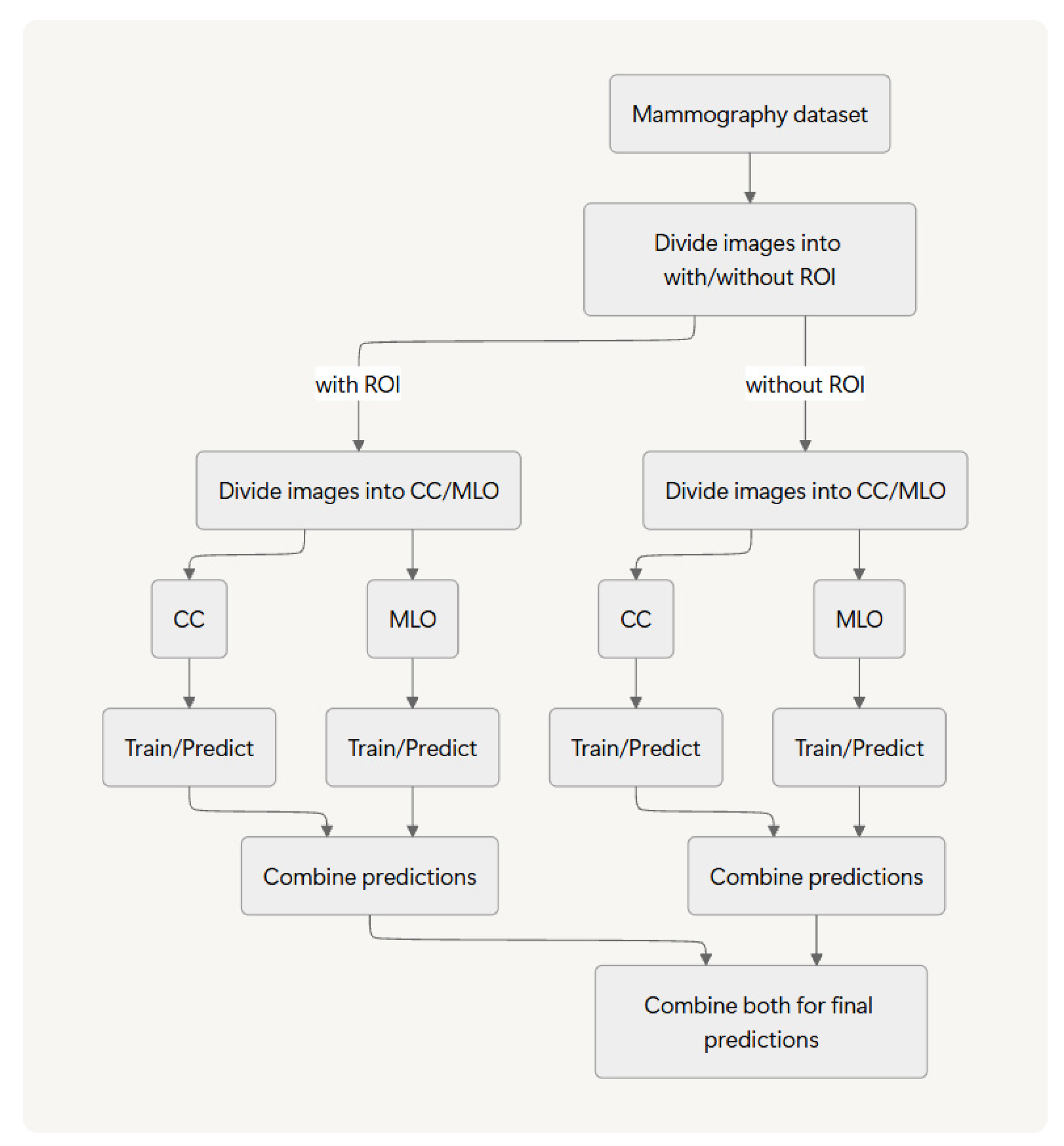

The methodology proposed herein falls under the category of image classification. In earlier work [22], we introduced and evaluated an ROI-stratified approach in which images are separated according to the presence or absence of an annotated ROI, processed by distinct training and inference pipelines, and then integrated at the result level. Using two public datasets and two deep learning models, we demonstrated that this method has the potential to improve benign–malignant classification accuracy.
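The routing logic of an ROI-stratified pipeline can be sketched as follows. This is a minimal illustration under stated assumptions: the record layout, the function names `split_by_roi` and `stratified_predict`, and the constant-valued stand-in models are all hypothetical and are not taken from the implementation in [22].

```python
from typing import Any, Callable, Dict, List, Tuple

Record = Dict[str, Any]  # hypothetical layout: {"image_id": str, "roi": box or None}


def has_roi(record: Record) -> bool:
    """True when the image carries an annotated ROI."""
    return record.get("roi") is not None


def split_by_roi(records: List[Record]) -> Tuple[List[Record], List[Record]]:
    """Partition records into (with ROI, without ROI) for separate training."""
    with_roi = [r for r in records if has_roi(r)]
    without_roi = [r for r in records if not has_roi(r)]
    return with_roi, without_roi


def stratified_predict(
    records: List[Record],
    roi_model: Callable[[Record], float],
    no_roi_model: Callable[[Record], float],
) -> List[float]:
    """Route each record to its stratum's model and merge in the original order."""
    return [(roi_model if has_roi(r) else no_roi_model)(r) for r in records]


records = [
    {"image_id": "a", "roi": (10, 20, 64, 64)},
    {"image_id": "b", "roi": None},
    {"image_id": "c", "roi": (5, 5, 32, 32)},
]

with_roi, without_roi = split_by_roi(records)

# Constant-valued stand-ins for the two separately trained classifiers.
predictions = stratified_predict(records, lambda r: 0.8, lambda r: 0.3)
```

Keeping the merged predictions in the original record order lets a single metric be computed over both strata together.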

In this work, we assess the generalizability of our ROI-stratified approach by applying it to two distinct classification tasks (normal versus abnormal and benign versus malignant) across three public datasets and five representative deep learning models.