Submitted: 29 December 2025

Posted: 30 December 2025


Abstract

Keywords:

1. Introduction

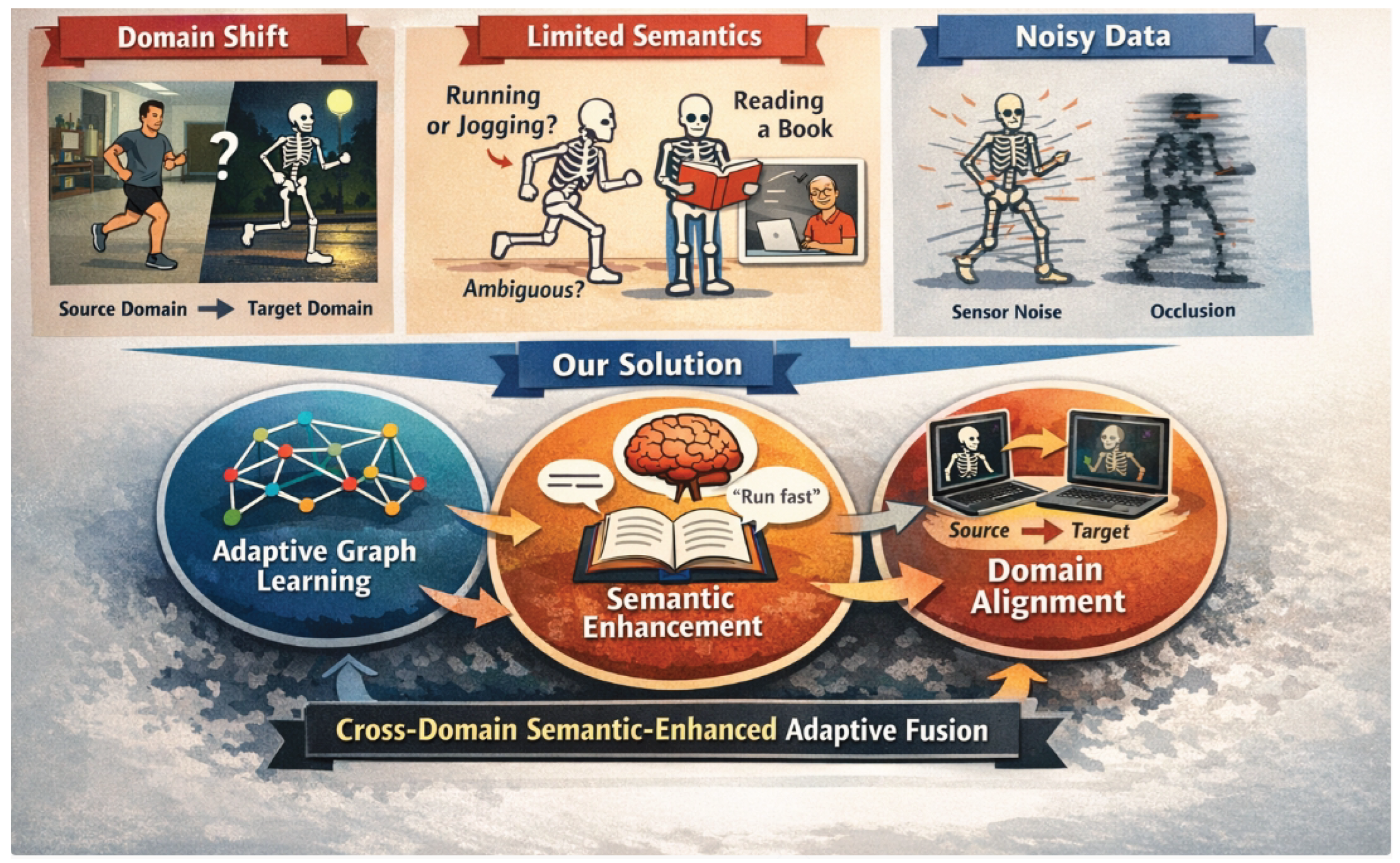

- We propose CD-SEAFNet, a framework that integrates adaptive spatio-temporal graph learning with semantic contextual information to improve the robustness of skeleton-based action recognition.

- We develop a semantic context encoder and a cross-modal adaptive fusion module that use natural language descriptions to inject high-level semantic cues, improving the recognition of complex and ambiguous actions.

- We introduce a domain alignment mechanism that combines adversarial training and contrastive learning to learn domain-invariant features, improving cross-domain generalization in diverse real-world settings.

2. Related Work

2.1. Skeleton-Based Action Recognition and Graph Neural Networks

2.2. Multi-Modal Semantic Fusion and Domain Adaptation

2.3. Decision-Making and Interactive Systems

3. Method

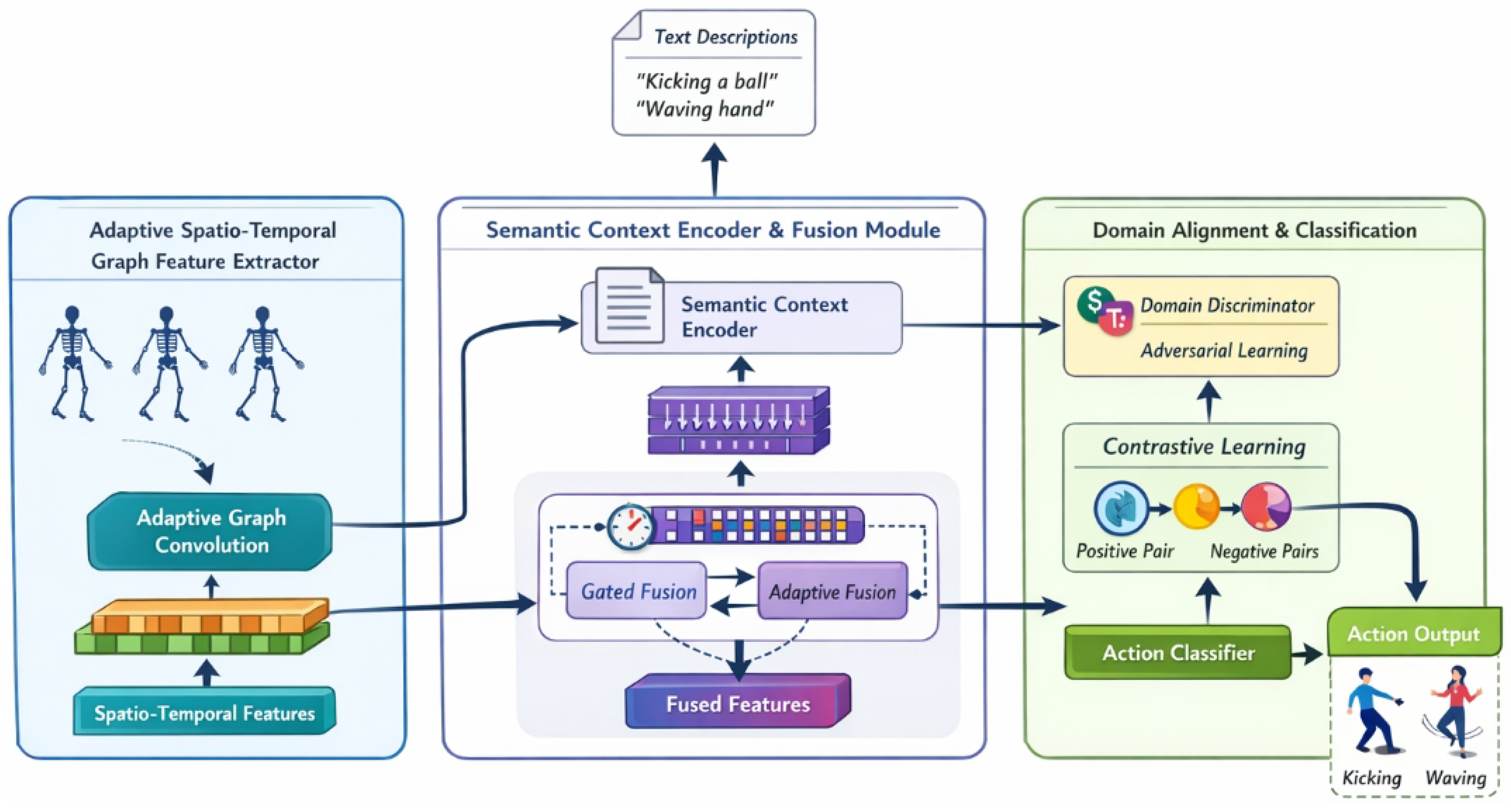

3.1. Adaptive Spatio-Temporal Graph Feature Extractor

3.2. Semantic Context Encoder and Fusion Module

3.2.1. Semantic Context Encoder

3.2.2. Cross-Modal Adaptive Fusion Module

3.3. Domain Alignment and Classification Module

3.3.1. Domain Alignment

3.3.1.1. Adversarial Training.

3.3.1.2. Contrastive Learning.

3.3.2. Action Classification

4. Experiments

4.1. Experimental Setup

4.1.1. Datasets and Evaluation Protocols

- NTU RGB+D (NTU-60) comprises 56,880 action clips performed by 40 distinct subjects across 60 action classes. It offers two standard evaluation protocols:

  - Cross-Subject (X-Sub): The training and testing sets include different subjects, assessing the model’s generalization to unseen individuals.

  - Cross-View (X-View): The training and testing sets are captured from different camera viewpoints, evaluating robustness to viewpoint variations.

- NTU RGB+D 120 is an extended version of NTU-60, featuring 114,480 action clips performed by 106 subjects across 120 action classes. It is evaluated under two protocols:

  - Cross-Subject (X-Sub): Similar to NTU-60, but with a larger subject pool.

  - Cross-Set (X-Set): Different setup IDs are used for training and testing, evaluating robustness to diverse experimental environments.
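The protocols above differ only in which metadata field separates training clips from test clips. The following is a minimal sketch of such a split, assuming clip names follow the `SsssCcccPpppRrrrAaaa` pattern used in the public NTU RGB+D release. The helper names (`parse_clip_name`, `is_training_clip`) are hypothetical, and the ID sets are parameters because the official training lists are not reproduced in this section and should be taken from the dataset release.

```python
import re
from typing import NamedTuple

# Assumption: clip names look like "S001C002P003R002A013"
# (setup, camera, performer/subject, replication, action).
_CLIP_NAME = re.compile(r"S(\d{3})C(\d{3})P(\d{3})R(\d{3})A(\d{3})")


class ClipMeta(NamedTuple):
    setup: int
    camera: int
    subject: int
    replication: int
    action: int


def parse_clip_name(name: str) -> ClipMeta:
    """Extract the five integer IDs encoded in a clip file name."""
    match = _CLIP_NAME.search(name)
    if match is None:
        raise ValueError(f"unrecognized clip name: {name!r}")
    return ClipMeta(*(int(group) for group in match.groups()))


def is_training_clip(
    meta: ClipMeta,
    protocol: str,
    train_subjects=frozenset(),
    train_cameras=frozenset(),
    train_setups=frozenset(),
) -> bool:
    """Return True if the clip belongs to the training split of `protocol`.

    The ID sets are supplied by the caller; this sketch does not hard-code
    the official lists.
    """
    if protocol == "x-sub":
        return meta.subject in train_subjects
    if protocol == "x-view":
        return meta.camera in train_cameras
    if protocol == "x-set":
        return meta.setup in train_setups
    raise ValueError(f"unknown protocol: {protocol!r}")


# Usage with illustrative (not official) ID sets.
meta = parse_clip_name("S001C002P003R002A013.skeleton")
in_train = is_training_clip(meta, "x-view", train_cameras={2, 3})
```

Keeping the ID sets as arguments lets the same function serve NTU-60 and NTU RGB+D 120 without duplicating the split logic.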

4.1.2. Implementation Details

4.1.3. Data Preprocessing

- Skeleton Data Preprocessing: Raw skeleton sequences undergo standardization, where the body’s center is translated to the origin to mitigate positional variances. To enhance the model’s generalization capabilities and robustness against noise, various data augmentation techniques are applied, including random cropping of frames, perturbation of joint coordinates, and temporal interpolation.

- Semantic Data Preparation: For each action category, concise natural language descriptions are either manually authored or extracted from rich external datasets (e.g., Kinetics captions). These descriptions are then tokenized and fed into a pre-trained, lightweight Transformer-based text encoder (as part of the Semantic Context Encoder) to generate high-dimensional semantic feature vectors.

- Feature Extraction and Fusion: The preprocessed skeleton sequences are processed by the Adaptive Spatio-Temporal Graph Feature Extractor to derive spatio-temporal features. Concurrently, the semantic descriptions yield corresponding semantic vectors. These two modalities are then deeply integrated within the Cross-Modal Adaptive Fusion Module, producing a semantically enriched and unified action representation.

- Domain Alignment and Classification: Training is supervised by the Domain Alignment and Classification Module, which employs adversarial and contrastive learning to produce domain-invariant features. The aligned and fused features are then passed to a classifier that predicts the final action category.
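The skeleton preprocessing steps listed above (centering, random frame cropping, joint perturbation, and temporal interpolation) can be sketched with NumPy as follows. This is an illustration under stated assumptions, not the authors' implementation: the choice of center joint (index 1), the use of the first frame as the reference, the crop length, the noise scale, and the target length of 64 frames are all hypothetical values chosen for the example.

```python
import numpy as np


def center_skeleton(seq: np.ndarray, center_joint: int = 1) -> np.ndarray:
    """Translate a (T, V, 3) sequence so the chosen joint of frame 0 is at the origin.

    Assumption: which joint and which frame define the body center is not
    specified in the text; index 1 and the first frame are placeholders.
    """
    return seq - seq[0, center_joint]


def random_crop(seq: np.ndarray, crop_len: int, rng: np.random.Generator) -> np.ndarray:
    """Keep a random contiguous window of `crop_len` frames."""
    num_frames = seq.shape[0]
    if crop_len > num_frames:
        raise ValueError("crop_len exceeds sequence length")
    start = int(rng.integers(0, num_frames - crop_len + 1))
    return seq[start:start + crop_len]


def jitter_joints(seq: np.ndarray, sigma: float, rng: np.random.Generator) -> np.ndarray:
    """Add zero-mean Gaussian noise to every joint coordinate."""
    return seq + rng.normal(0.0, sigma, size=seq.shape)


def resample_frames(seq: np.ndarray, target_len: int) -> np.ndarray:
    """Linearly interpolate along time so the sequence has `target_len` frames."""
    num_frames = seq.shape[0]
    src = np.arange(num_frames)
    dst = np.linspace(0, num_frames - 1, target_len)
    flat = seq.reshape(num_frames, -1)
    cols = [np.interp(dst, src, flat[:, k]) for k in range(flat.shape[1])]
    return np.stack(cols, axis=1).reshape(target_len, *seq.shape[1:])


def augment(seq, rng, crop_len=40, sigma=0.01, target_len=64):
    """Apply the four steps in order; all default values are illustrative."""
    out = center_skeleton(seq)
    out = random_crop(out, crop_len, rng)
    out = jitter_joints(out, sigma, rng)
    return resample_frames(out, target_len)


# Usage on a synthetic 50-frame clip with 25 joints (the NTU joint count).
rng = np.random.default_rng(0)
clip = rng.normal(size=(50, 25, 3))
augmented = augment(clip, rng)
```

Resampling last gives every clip a fixed temporal length regardless of the crop size, which simplifies batching.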

4.2. Comparison with State-of-the-Art Methods

4.3. Ablation Study

- Base Model (M1): A standard GCN-based architecture with fixed graph structures serves as our baseline. It reaches 86.5% on NTU RGB+D X-Sub and 81.2% on NTU RGB+D 120 X-Sub, leaving room for improvement, particularly on the more complex NTU RGB+D 120 benchmark.

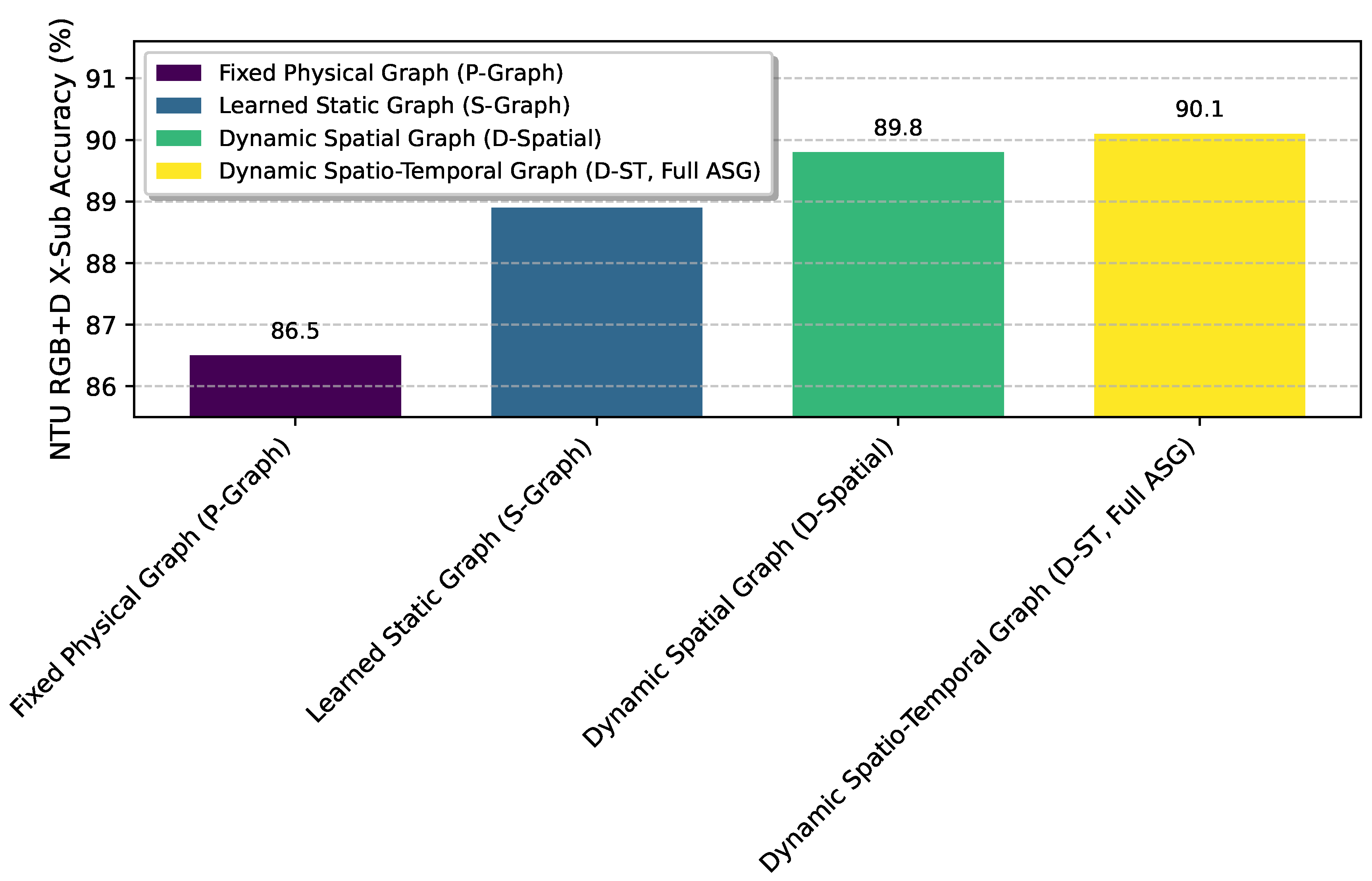

- Adaptive Spatio-Temporal Graph (ASG) (M2): Integrating the Adaptive Spatio-Temporal Graph Feature Extractor significantly boosts performance (from 86.5% to 90.1% on NTU RGB+D X-Sub). This demonstrates the critical role of dynamically learning graph structures to better capture the nuanced and context-dependent relationships between body joints in different actions.

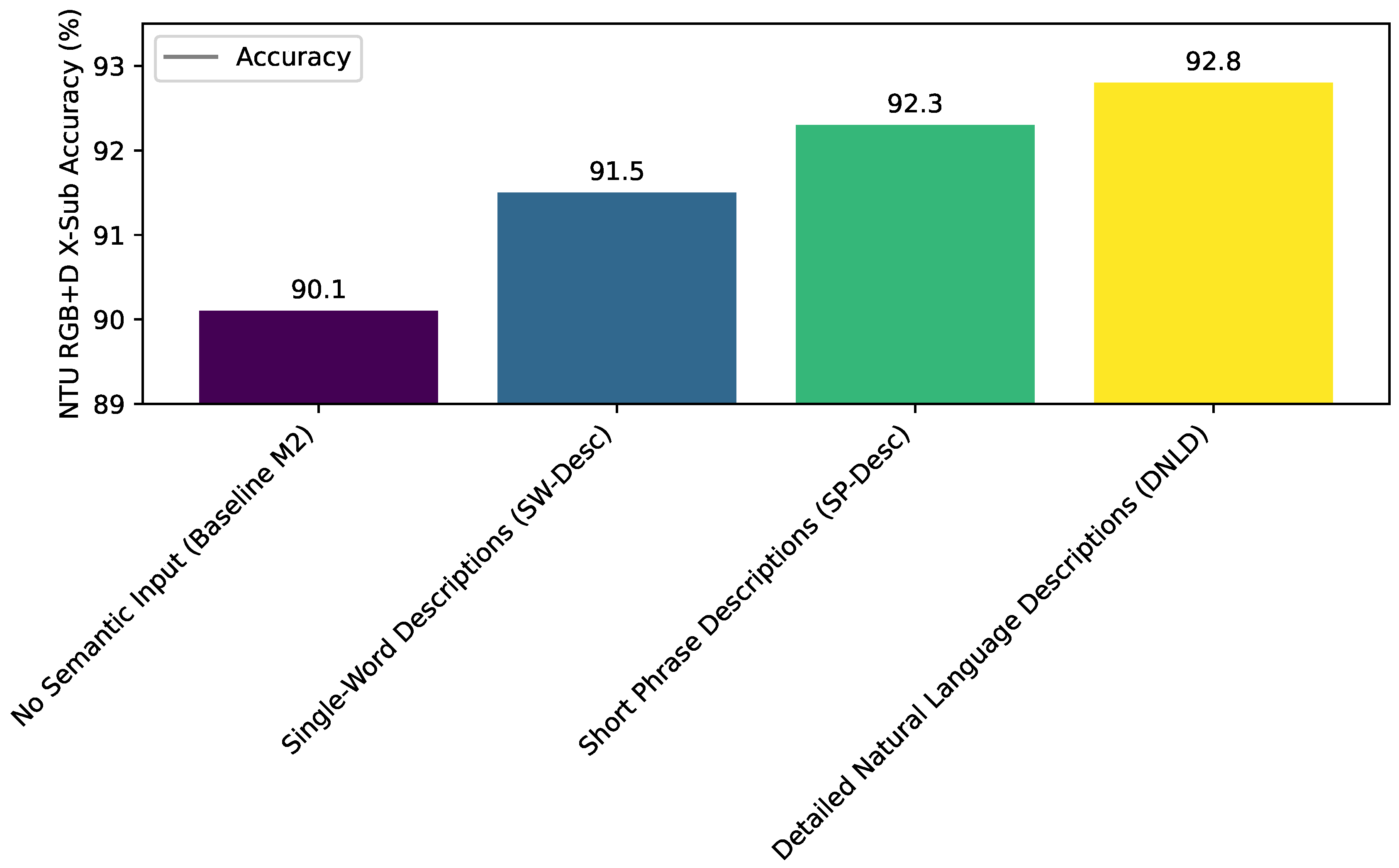

- Semantic Context Encoder and Fusion (SEM) (M3): Adding the Semantic Context Encoder and Fusion Module to M2 further improves accuracy (from 90.1% to 92.8% on NTU RGB+D X-Sub). This improvement underscores the value of incorporating high-level semantic information from natural language descriptions, which helps resolve ambiguities between visually similar or complex movements.

- Domain Alignment (DA) (M4): Adding the Domain Alignment module to M2 instead also yields a notable increase (from 90.1% to 91.5% on NTU RGB+D X-Sub), supporting its efficacy in mitigating domain shift by learning robust, domain-invariant feature representations.

- Full CD-SEAFNet (M5): The complete CD-SEAFNet framework, combining ASG, SEM, and DA, achieves the best performance: 94.2% on NTU RGB+D X-Sub and 86.8% on NTU RGB+D 120 X-Sub. On NTU RGB+D X-Sub, this equals M2 plus the individual gains of SEM (+2.7) and DA (+1.4); on NTU RGB+D 120 X-Sub, it exceeds the corresponding sum (86.3%) by 0.5 points. The modules are therefore complementary, and on the harder benchmark they appear to interact beyond a purely additive effect.
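The per-module gains discussed above can be checked with simple arithmetic on the reported ablation accuracies. The sketch below compares the full model (M5) against a purely additive estimate, M2 plus the separate SEM and DA gains. The function name `decompose` and the dictionary layout are illustrative choices; the accuracy values are the ones reported for M1 to M5.

```python
# Reported ablation accuracies (%), keyed by benchmark and configuration.
ABLATION = {
    "NTU RGB+D X-Sub": {"M1": 86.5, "M2": 90.1, "M3": 92.8, "M4": 91.5, "M5": 94.2},
    "NTU RGB+D 120 X-Sub": {"M1": 81.2, "M2": 84.0, "M3": 85.5, "M4": 84.8, "M5": 86.8},
}


def decompose(acc: dict) -> dict:
    """Split the M1 -> M5 improvement into per-module gains and a residual."""
    gain_asg = acc["M2"] - acc["M1"]            # effect of the adaptive graph
    gain_sem = acc["M3"] - acc["M2"]            # effect of SEM on top of M2
    gain_da = acc["M4"] - acc["M2"]             # effect of DA on top of M2
    additive = acc["M2"] + gain_sem + gain_da   # estimate if SEM and DA simply add
    interaction = acc["M5"] - additive          # what the additive estimate misses
    raw = {
        "gain_asg": gain_asg,
        "gain_sem": gain_sem,
        "gain_da": gain_da,
        "additive_estimate": additive,
        "interaction": interaction,
    }
    # Round to one decimal (the table's precision); "+ 0.0" normalizes -0.0.
    return {key: round(value, 1) + 0.0 for key, value in raw.items()}


for benchmark, accuracies in ABLATION.items():
    print(benchmark, decompose(accuracies))
```

A residual near zero means the SEM and DA gains simply add; a positive residual indicates that the combined model does better than the sum of the two separate improvements.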

4.4. Analysis of Adaptive Graph Dynamics

4.5. Impact of Semantic Description Quality

4.6. Hyperparameter Sensitivity Analysis

4.7. Human Evaluation of Semantic Understanding

4.7.1. Methodology

- Base ST-GCN: A representative baseline model without any explicit semantic enhancement or adaptive graph mechanisms.

- CD-SEAFNet (Full): Our complete proposed framework.

4.7.2. Results

5. Conclusion


| Method | NTU RGB+D X-Sub (%) | NTU RGB+D X-View (%) | NTU RGB+D 120 X-Sub (%) | NTU RGB+D 120 X-Set (%) |
|---|---|---|---|---|
| ST-GCN | 85.7 | 92.4 | 82.1 | 84.5 |
| Shift-GCN | 87.8 | 95.1 | 80.9 | 83.2 |
| InfoGCN | 89.8 | 95.2 | 85.1 | 86.3 |
| PoseC3D | 93.7 | 96.5 | 85.9 | 89.7 |
| FR-Head | 90.3 | 95.3 | 85.5 | 87.3 |
| Koopman | 90.2 | 95.2 | 85.7 | 87.4 |
| GAP | 90.2 | 95.6 | 85.5 | 87.0 |
| HD-GCN | 90.6 | 95.7 | 85.7 | 87.3 |
| STC-Net | 91.0 | 96.2 | 86.2 | 88.0 |
| Ours (CD-SEAFNet) | 94.2 | 97.1 | 86.8 | 90.3 |

| ID | Configuration | NTU RGB+D X-Sub (%) | NTU RGB+D 120 X-Sub (%) |
|---|---|---|---|
| M1 | Base Model (Static GCN) | 86.5 | 81.2 |
| M2 | M1 + Adaptive Spatio-Temporal Graph (ASG) | 90.1 | 84.0 |
| M3 | M2 + Semantic Context Encoder and Fusion (SEM) | 92.8 | 85.5 |
| M4 | M2 + Domain Alignment (DA) | 91.5 | 84.8 |
| M5 | Full CD-SEAFNet (M2 + SEM + DA) | 94.2 | 86.8 |

| Adv. Loss Weight | Cont. Loss Weight | NTU RGB+D X-Sub Accuracy (%) |
|---|---|---|
| 0.0 | 0.0 | 92.8 |
| 0.1 | 0.1 | 93.3 |
| 0.1 | 0.2 | 93.5 |
| 0.2 | 0.1 | 93.6 |
| 0.2 | 0.2 | 94.2 |
| 0.2 | 0.3 | 94.0 |
| 0.3 | 0.2 | 93.9 |
| 0.3 | 0.3 | 93.7 |
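The two weights in the table above scale the adversarial and contrastive terms relative to the classification loss. The exact objective is not given in this section, so the sketch below assumes a plain weighted sum and uses an InfoNCE-style loss as a stand-in for the contrastive term. The adversarial term is treated as a precomputed scalar, and the names `info_nce`, `total_loss`, `lam_adv`, and `lam_con` are hypothetical.

```python
import numpy as np


def info_nce(anchors: np.ndarray, positives: np.ndarray, temperature: float = 0.1) -> float:
    """InfoNCE-style loss: row i of `positives` is the positive for row i of
    `anchors`; all other rows in the batch act as negatives.

    Assumption: a stand-in for the paper's contrastive term, whose exact
    form is not specified in this section.
    """
    a = anchors / np.linalg.norm(anchors, axis=1, keepdims=True)
    p = positives / np.linalg.norm(positives, axis=1, keepdims=True)
    logits = a @ p.T / temperature                       # cosine similarities, scaled
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_prob)))


def total_loss(cls_loss: float, adv_loss: float, con_loss: float,
               lam_adv: float = 0.2, lam_con: float = 0.2) -> float:
    """Assumed form: classification loss plus weighted alignment terms.

    The defaults (0.2, 0.2) are the best-performing weights in the table.
    """
    return cls_loss + lam_adv * adv_loss + lam_con * con_loss


# Usage on synthetic features: matched pairs give a lower contrastive loss
# than mismatched pairs.
features = np.eye(4)
aligned = info_nce(features, features)
shuffled = info_nce(features, np.roll(features, 1, axis=0))
combined = total_loss(cls_loss=1.0, adv_loss=0.5, con_loss=aligned)
```

Under this assumed form, setting both weights to 0.0 removes the alignment terms entirely, which corresponds to the first row of the table.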

| Method | Accuracy on Ambiguous Pairs (%) |
|---|---|
| Human Evaluators | 96.1 |
| Base ST-GCN | 78.5 |
| Ours (CD-SEAFNet) | 91.3 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.