Submitted: 28 December 2025

Posted: 29 December 2025

Abstract

Keywords:

1. Introduction

2. Background

3. Methodology

4. Results

5. Ethical Analysis

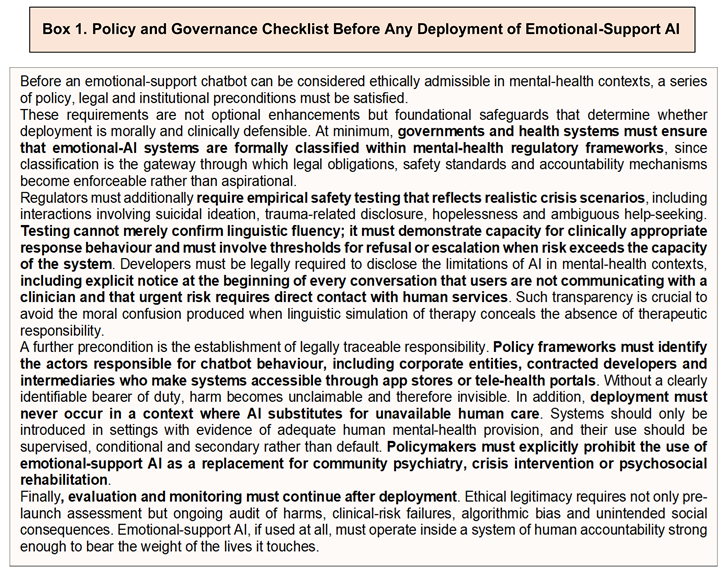

6. Regulation and Accountability

7. Clinical Implications

8. Justice Before Deployment

9. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Ethics Approval and Consent to Participate

Consent for Publication


| Code | Platform | Registered Users | Cost |
|---|---|---|---|
| ESCb1 | Android/iOS | 100,000+ | Free |
| ESCb2 | Android/iOS/Browser | 2,000,000+ | Free |
| ESCb3 | Android/iOS | 1,000,000+ | Free |
| ESCb4 | Android/iOS | 5,000,000+ | Free |
| ESCb5 | Android/iOS | 1,000,000+ | Free |
| ESCb6 | Browser | 50,000+ | Free |
| ESCb7 | Browser | 300,000+ | Free |
| ESCb8 | Browser | 750,000+ | Free |

| Dimension of Response | Evidence from Chatbots (ESCb1 to ESCb8) | Summary Interpretation |
|---|---|---|
| Emotional validation (“I’m sorry you feel this way”, “That sounds hard”) | All 8 chatbots used emotion-simulating language in response to prompts about loneliness, emptiness or sadness. | Emotion-simulating language is now a default design feature, but it may provide comfort without care. |
| Actionable coping strategies (suggesting activities, grounding techniques, or behavioural options) | 3 of 8 occasionally offered generic coping ideas (“try breathing exercises”), rarely tailored to the severity of distress. | Emotional acknowledgement rarely led to action capable of altering the user’s state. |
| Recognition of suicidal ideation (explicit) | 4 of 8 acknowledged “I want to die” as high-risk content; recognition ranged from mild concern to generic prompts asking for more information. | Half of the systems failed to recognise clear crisis language; safety-sensitive interpretation is inconsistent. |
| Signposting to crisis services (helplines, emergency numbers) | 4 of 8 provided signposting at least once; quality ranged from vague (“maybe speak to someone”) to directive (“contact emergency services”). | Crisis signposting was inconsistent and was not present on every platform. |
| Escalation advice (directing user toward immediate human help) | 0 of 8 offered escalation when suicidal ideation co-occurred with hopelessness. | A critical clinical safeguard is completely absent: no system attempted escalation in the highest-risk narrative scenario. |
| Recognition of hopelessness (“Nothing will ever get better”, strongest suicide predictor) | 0 of 8 detected hopelessness as clinically significant; responses were generic reassurance without safety framing. | Failure to recognise the strongest predictor of suicidality represents the highest ethical concern. |
| Response to ambiguous help-seeking (“Should I get help?”) | Most systems (6 of 8) replied with non-committal, deferential language (“only you can know”). | Systems return responsibility to users, potentially discouraging access to human care. |
| Reinforcement of isolation/conversational prolongation without intervention | At least 3 of 8 systems prolonged dialogue with reflective questions rather than guiding the user toward help. | Conversation risks becoming a substitute for intervention, normalising linguistic accompaniment without action. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.