Submitted: 21 December 2025

Posted: 22 December 2025

Abstract

Keywords:

1. Introduction

2. Background and Motivation

2.1. Serverless Computing for ML Inference

2.2. LLM Serving Systems

2.3. Cold Start in LLM Serving

2.4. Motivation for FlashServe

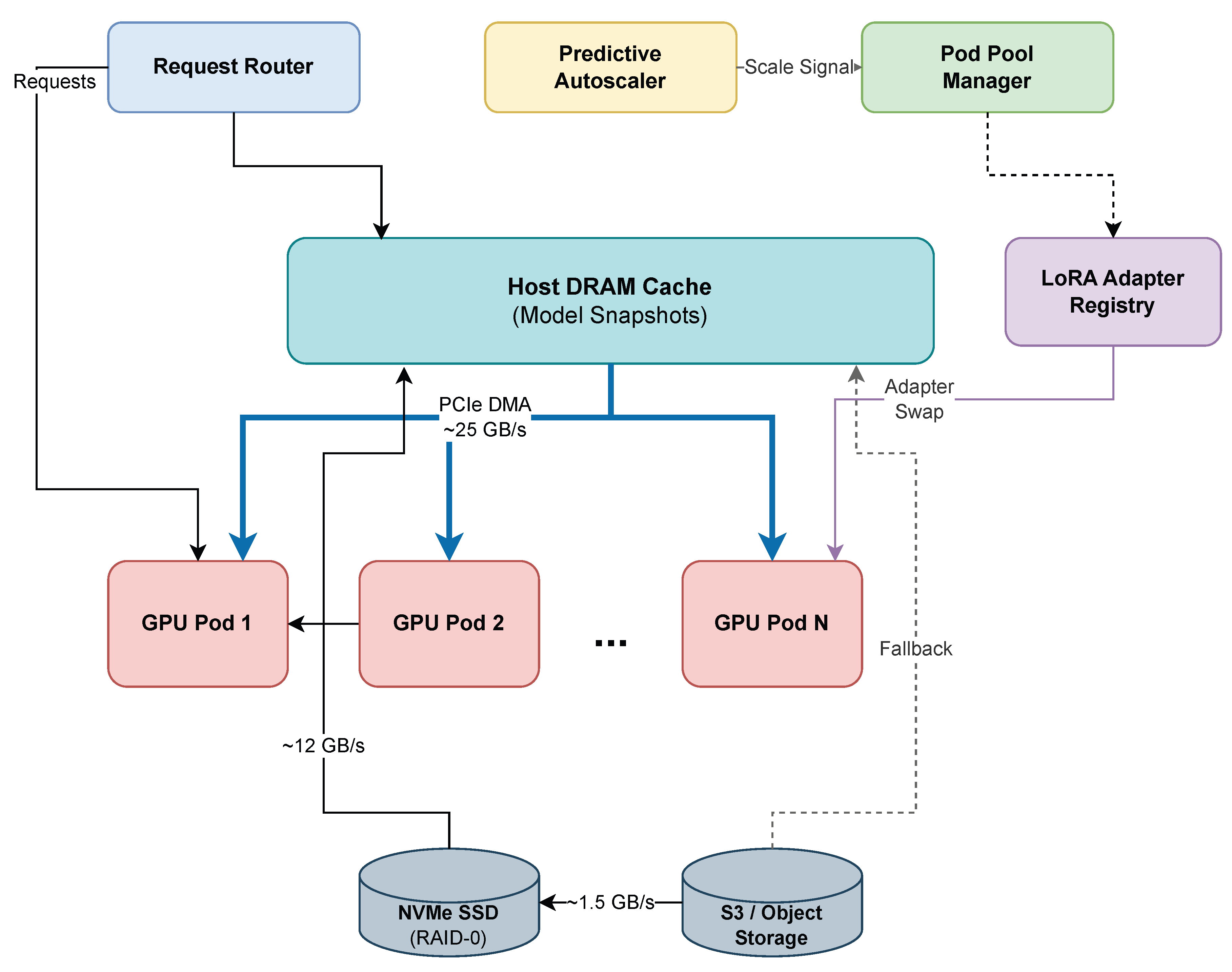

3. System Design

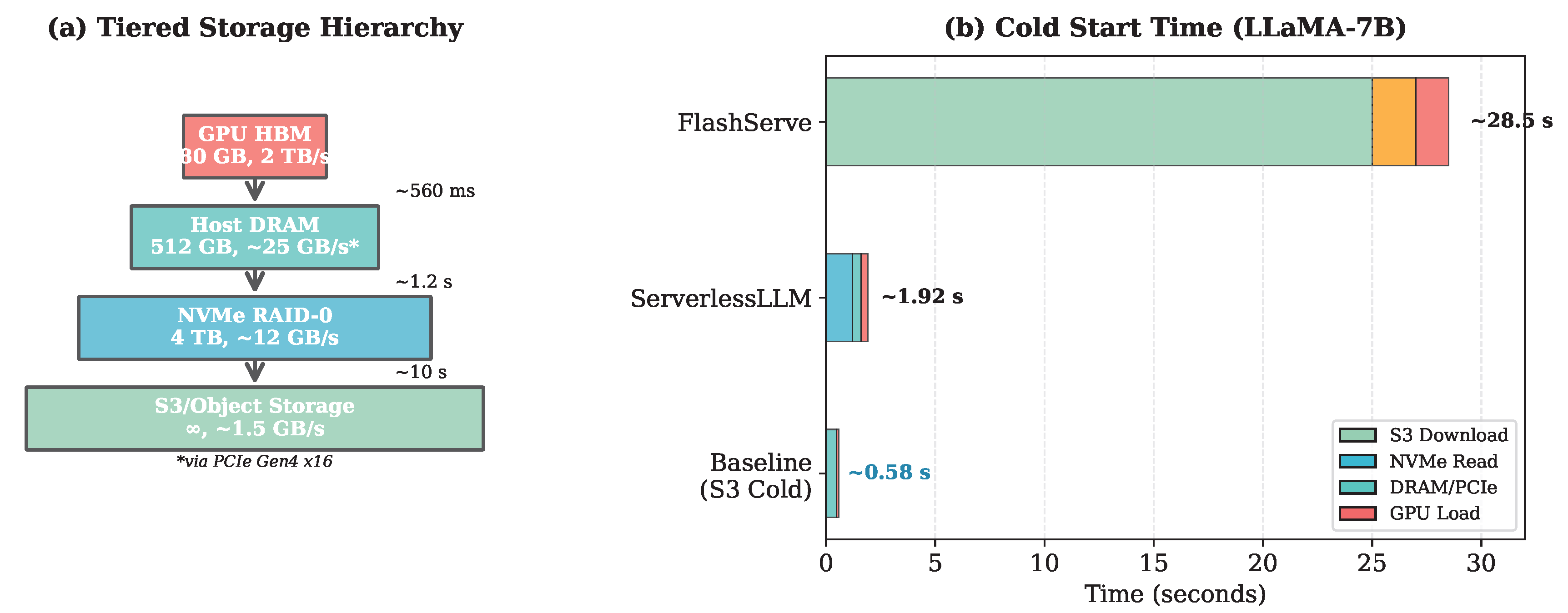

3.1. Tiered Memory Snapshotting

3.1.1. Loading-Optimized Checkpoint Format

3.1.2. High-Speed DMA Transfer

1. The scheduler identifies the target GPU and locates the model snapshot in pinned host DRAM.

2. Asynchronous CUDA memory copy operations (cudaMemcpyAsync) are initiated using multiple CUDA streams to maximize PCIe bandwidth utilization.

3. Tensor initialization proceeds in parallel with ongoing transfers through stream pipelining.
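
The three steps above can be sketched as a transfer plan. The Python below is a minimal illustration, not FlashServe's implementation: it only computes how a snapshot might be split into chunks and assigned to streams round-robin, and which work each stream would run. The names `CopyOp`, `plan_copies`, and `stream_queues`, the chunk size, and the round-robin policy are all assumptions made for this example. In a real system each `copy[i]` entry would correspond to one `cudaMemcpyAsync` call from pinned host memory on the given stream, with the matching `init[i]` enqueued on the same stream so that it overlaps with copies on the other streams.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class CopyOp:
    index: int   # position of the chunk within the snapshot
    offset: int  # byte offset into the pinned host buffer
    size: int    # number of bytes in this chunk
    stream: int  # index of the CUDA stream that would carry the copy


def plan_copies(total_bytes: int, chunk_bytes: int, num_streams: int) -> List[CopyOp]:
    """Split a snapshot into chunks and assign them to streams round-robin.

    Hypothetical helper: the chunking policy is an assumption, not taken
    from the paper.
    """
    if total_bytes < 0 or chunk_bytes <= 0 or num_streams <= 0:
        raise ValueError(
            "total_bytes must be >= 0; chunk_bytes and num_streams must be > 0"
        )
    ops: List[CopyOp] = []
    offset = 0
    while offset < total_bytes:
        # The last chunk may be shorter than chunk_bytes.
        size = min(chunk_bytes, total_bytes - offset)
        ops.append(CopyOp(len(ops), offset, size, len(ops) % num_streams))
        offset += size
    return ops


def stream_queues(ops: List[CopyOp], num_streams: int) -> Dict[int, List[str]]:
    """List the work each stream would run, in issue order.

    Each chunk's tensor initialization follows its own copy on the same
    stream, so it can overlap with copies in flight on other streams.
    """
    queues: Dict[int, List[str]] = {s: [] for s in range(num_streams)}
    for op in ops:
        queues[op.stream].append(f"copy[{op.index}]")
        queues[op.stream].append(f"init[{op.index}]")
    return queues


if __name__ == "__main__":
    MiB = 1 << 20
    ops = plan_copies(10 * MiB, 4 * MiB, num_streams=2)
    for stream, work in stream_queues(ops, 2).items():
        print(stream, work)
```

Keeping a chunk's copy and initialization on one stream preserves their ordering without explicit events, while separate streams leave the driver free to overlap independent chunks.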

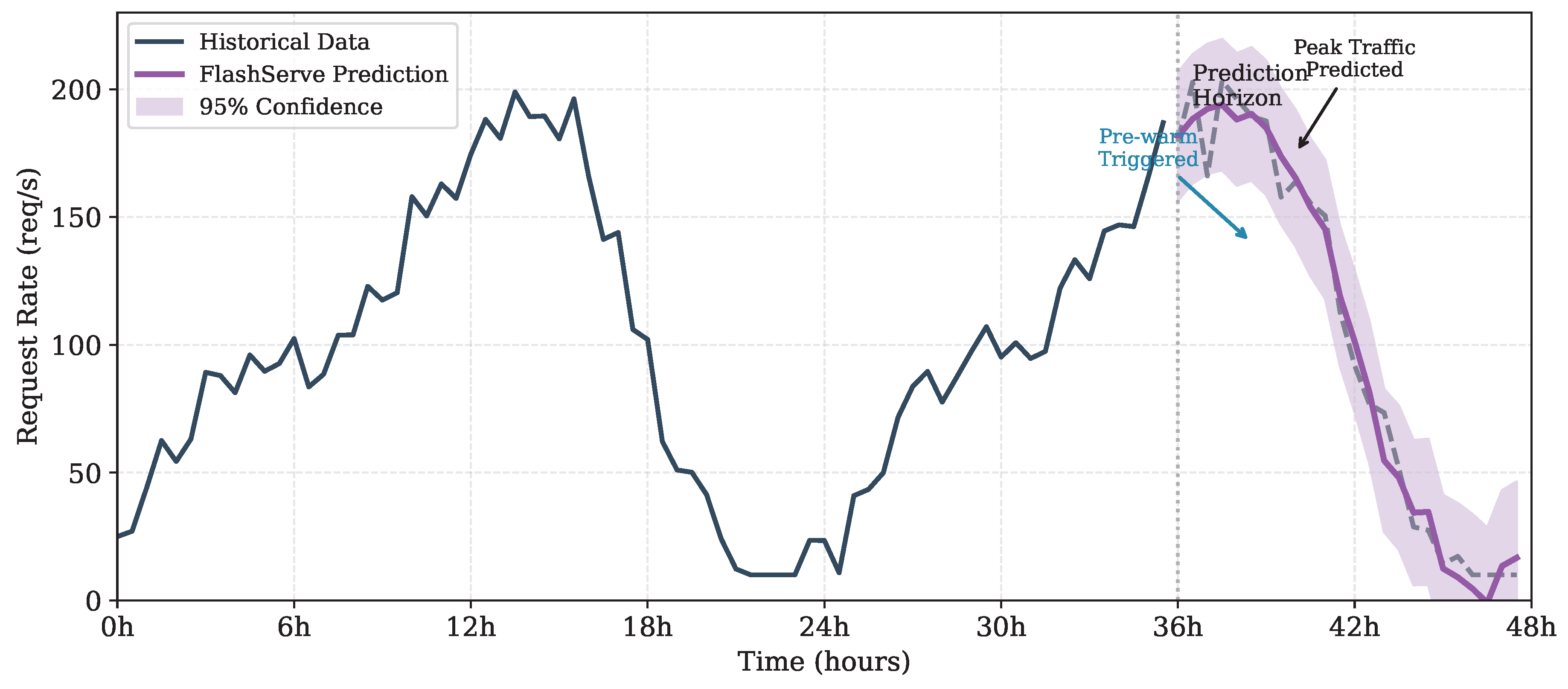

3.2. Predictive Autoscaling

3.2.1. Hybrid Prediction Model

3.2.2. Pre-warming Strategy

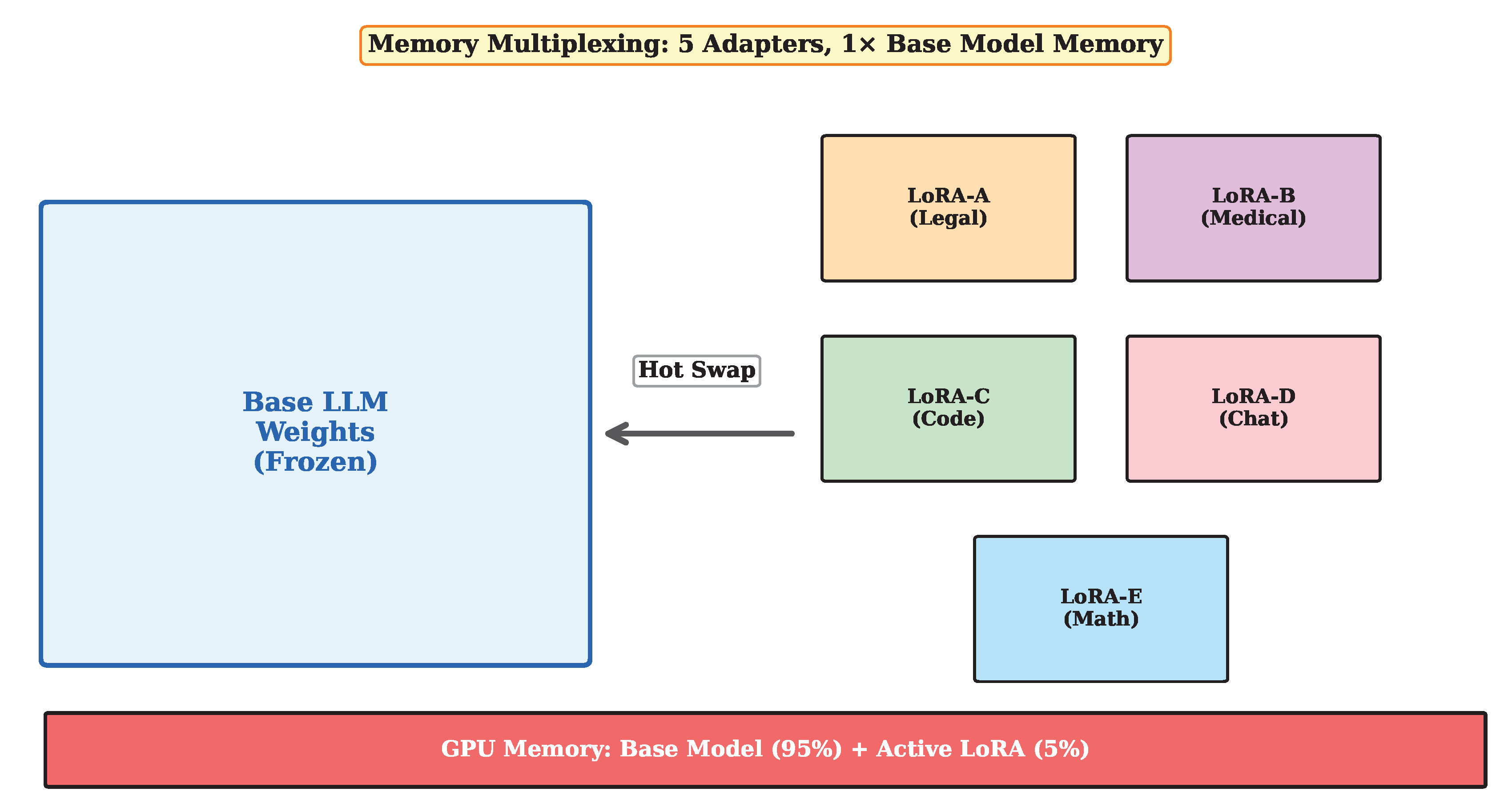

3.3. LoRA Adapter Multiplexing

3.3.1. Adapter Management

3.3.2. Batched Heterogeneous Inference

3.4. Scheduling and Resource Management

3.4.1. Request Routing

3.4.2. Memory Management

4. Implementation

4.1. Pre-initialized Container Pool

4.2. Checkpoint Conversion Pipeline

4.3. DMA Transfer Optimization

4.4. Prediction Service

5. Evaluation

5.1. Workloads and Baselines

- Baseline (S3): Standard loading from Amazon S3 with vLLM serving

- ServerlessLLM: State-of-the-art serverless LLM system [4]

- vLLM (warm): vLLM with pre-loaded models (ideal but non-serverless)

5.2. Cost Model

5.3. Cold Start Latency

5.4. End-to-End Latency

5.5. Resource Utilization and Cost

5.6. LoRA Multiplexing

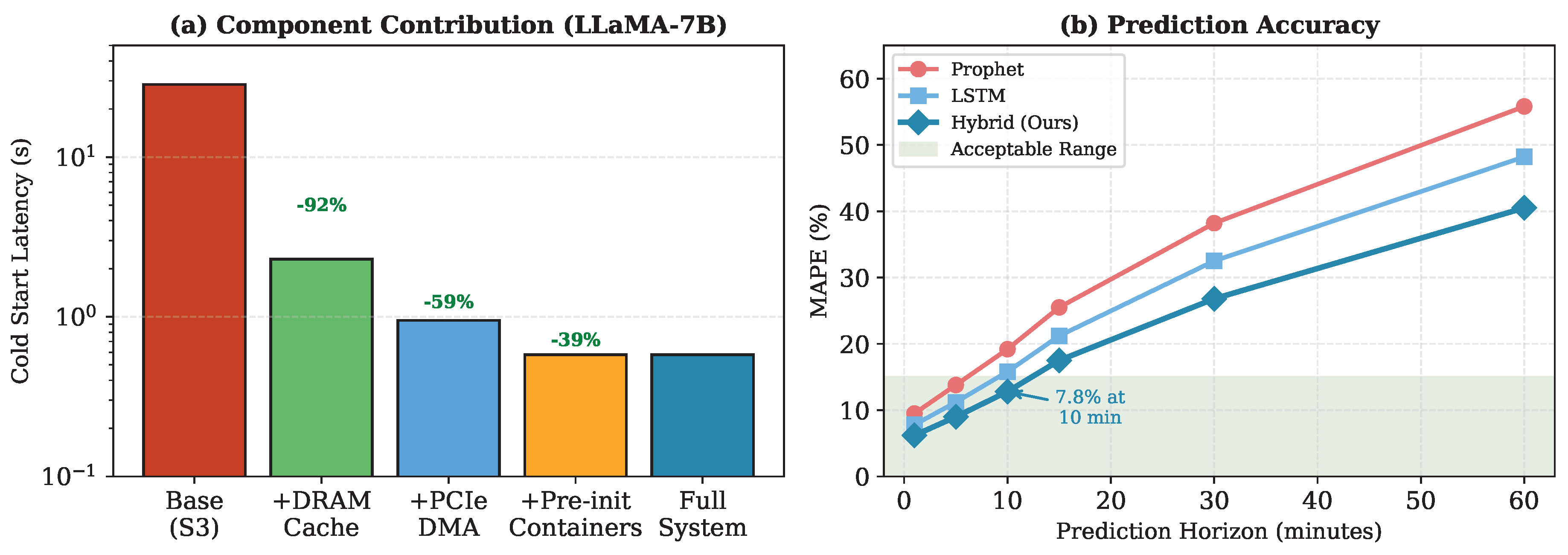

5.7. Ablation Study

6. Related Work

7. Discussion and Limitations

8. Conclusions

References

- T. Brown, B. Mann, N. Ryder, M. Subbiah, J. D. Kaplan, P. Dhariwal, A. Neelakantan, P. Shyam, G. Sastry, A. Askell et al., “Language models are few-shot learners,” Advances in Neural Information Processing Systems, vol. 33, pp. 1877–1901, 2020.

- H. Touvron, L. Martin, K. Stone, P. Albert, A. Almahairi, Y. Babaei, N. Bashlykov, S. Batra, P. Bhargava, S. Bhosale et al., “Llama 2: Open foundation and fine-tuned chat models,” arXiv preprint arXiv:2307.09288, 2023.

- M. Shahrad, R. Fonseca, I. Goiri, G. Chaudhry, P. Batum, J. Cooke, E. Laureano, C. Tresness, M. Russinovich, and R. Bianchini, “Serverless in the wild: Characterizing and optimizing the serverless workload at a large cloud provider,” in 2020 USENIX Annual Technical Conference (USENIX ATC 20), 2020, pp. 205–218.

- Y. Fu, L. Xue, Y. Huang, A.-O. Brabete, D. Ustiugov, Y. Patel, and L. Mai, “ServerlessLLM: Low-latency serverless inference for large language models,” in 18th USENIX Symposium on Operating Systems Design and Implementation (OSDI 24), 2024, pp. 135–153.

- E. Jonas, J. Schleier-Smith, V. Sreekanti, C.-C. Tsai, A. Khandelwal, Q. Pu, V. Shankar, J. Carreira, K. Krauth, N. Yadwadkar et al., “Cloud programming simplified: A Berkeley view on serverless computing,” arXiv preprint arXiv:1902.03383, 2019.

- Y. Yang, L. Zhao, Y. Li, H. Zhang, J. Li, M. Zhao, X. Chen, and K. Li, “INFless: A native serverless system for low-latency, high-throughput inference,” in Proceedings of the 27th ACM International Conference on Architectural Support for Programming Languages and Operating Systems, 2022, pp. 768–781.

- J. Li, L. Zhao, Y. Yang, Y. Li, and K. Zhan, “Tetris: Memory-efficient serverless inference through tensor sharing,” in 2022 USENIX Annual Technical Conference (USENIX ATC 22), 2022, pp. 473–488.

- W. Kwon, Z. Li, S. Zhuang, Y. Sheng, L. Zheng, C. H. Yu, J. Gonzalez, H. Zhang, and I. Stoica, “Efficient memory management for large language model serving with PagedAttention,” in Proceedings of the 29th Symposium on Operating Systems Principles, 2023, pp. 611–626.

- G.-I. Yu, J. S. Jeong, G.-W. Kim, S. Kim, and B.-G. Chun, “Orca: A distributed serving system for transformer-based generative models,” in 16th USENIX Symposium on Operating Systems Design and Implementation (OSDI 22), 2022, pp. 521–538.

- R. Y. Aminabadi, S. Rajbhandari, A. A. Awan, C. Li, D. Li, E. Zheng, O. Ruwase, S. Smith, M. Zhang, J. Rasley, and Y. He, “DeepSpeed-Inference: Enabling efficient inference of transformer models at unprecedented scale,” in SC22: International Conference for High Performance Computing, Networking, Storage and Analysis. IEEE, 2022, pp. 1–15.

- Z. Li, L. Zheng, Y. Zhong, Y. Sheng, S. Zhuang, C. H. Yu, Y. Liu, J. E. Gonzalez, H. Zhang, and I. Stoica, “AlpaServe: Statistical multiplexing with model parallelism for deep learning serving,” in 17th USENIX Symposium on Operating Systems Design and Implementation (OSDI 23), 2023, pp. 663–679.

- Microsoft Azure, “Azure Public Dataset: LLM inference traces,” https://github.com/Azure/AzurePublicDataset, 2023.

- B. Wu, R. Zhu, Z. Zhang, P. Sun, X. Liu, and X. Jin, “dLoRA: Dynamically orchestrating requests and adapters for LoRA LLM serving,” in 18th USENIX Symposium on Operating Systems Design and Implementation (OSDI 24), 2024, pp. 911–927.

- S. J. Taylor and B. Letham, “Forecasting at scale,” The American Statistician, vol. 72, no. 1, pp. 37–45, 2018.

- E. J. Hu, Y. Shen, P. Wallis, Z. Allen-Zhu, Y. Li, S. Wang, L. Wang, and W. Chen, “LoRA: Low-rank adaptation of large language models,” arXiv preprint arXiv:2106.09685, 2021.

- Y. Sheng, S. Cao, D. Li, C. Hooper, N. Lee, S. Yang, C. Chou, B. Zhu, L. Zheng, K. Keutzer, J. E. Gonzalez, and I. Stoica, “S-LoRA: Serving thousands of concurrent LoRA adapters,” in Proceedings of Machine Learning and Systems, vol. 6, 2024, pp. 296–311.

- C. Zhang, M. Yu, W. Wang, and F. Yan, “MARK: Exploiting cloud services for cost-effective, SLO-aware machine learning inference serving,” arXiv preprint arXiv:1909.01408, 2019.

- Y. Sheng, L. Zheng, B. Yuan, Z. Li, M. Ryabinin, B. Chen, P. Liang, C. Ré, I. Stoica, and C. Zhang, “FlexGen: High-throughput generative inference of large language models with a single GPU,” in International Conference on Machine Learning. PMLR, 2023, pp. 31094–31116.

- A. Gujarati, R. Karber, S. Dharanipragada, S. Misailovic, D. Zhang, K. Nahrstedt, and P. Druschel, “Serving DNNs like clockwork: Performance predictability from the bottom up,” in 14th USENIX Symposium on Operating Systems Design and Implementation (OSDI 20), 2020, pp. 443–462.

- H. Zhang, Y. Tang, A. Khandelwal, and I. Stoica, “Shepherd: Serving DNNs in the wild,” in 20th USENIX Symposium on Networked Systems Design and Implementation (NSDI 23), 2023, pp. 787–808.

- L. Chen, Z. Ye, Y. Wu, D. Zhuo, L. Ceze, and A. Krishnamurthy, “Punica: Multi-tenant LoRA serving,” Proceedings of Machine Learning and Systems, vol. 6, pp. 607–622, 2024.

- N. Roy, A. Dubey, and A. Gokhale, “Efficient autoscaling in the cloud using predictive models for workload forecasting,” in 2011 IEEE 4th International Conference on Cloud Computing. IEEE, 2011, pp. 500–507.

- K. Rzadca, P. Findeisen, J. Swiderski, P. Zych, P. Broniek, J. Kusmierek, P. Nowak, B. Strack, P. Witusowski, S. Hand, and J. Wilkes, “Autopilot: Workload autoscaling at Google,” in Proceedings of the Fifteenth European Conference on Computer Systems, 2020, pp. 1–16.

| Component | Traditional FaaS | LLM Serving |
|---|---|---|
| Container Init | 100–500 ms | 200–500 ms |
| Runtime Setup | 50–200 ms | 100–300 ms |
| Model Loading | N/A | 25–30 s (S3) |
| GPU Warmup | N/A | 500–800 ms |
| Total | 150–700 ms | 26–32 s |
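
The Total row follows from summing the lower and upper bounds of each component. The short Python check below reproduces that arithmetic from the table's own ranges. The variable and function names are chosen for this example only, and "N/A" components are simply left out of the sum.

```python
from typing import Dict, List, Tuple

# (low, high) ranges in milliseconds, copied from the table above.
components_ms: Dict[str, List[Tuple[int, int]]] = {
    "Traditional FaaS": [(100, 500), (50, 200)],
    "LLM Serving": [(200, 500), (100, 300), (25_000, 30_000), (500, 800)],
}


def total_range(ranges: List[Tuple[int, int]]) -> Tuple[int, int]:
    """Sum the lower bounds and the upper bounds separately."""
    return (sum(lo for lo, _ in ranges), sum(hi for _, hi in ranges))


totals = {name: total_range(r) for name, r in components_ms.items()}

if __name__ == "__main__":
    for name, (lo, hi) in totals.items():
        print(f"{name}: {lo}-{hi} ms")
```

The LLM serving sum comes to 25.8–31.6 s, which the table rounds to 26–32 s. Model loading accounts for almost all of it.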

| System | 7B | 13B | 70B |
|---|---|---|---|
| Baseline (S3) | 28.5 | 52.3 | 285.6 |
| ServerlessLLM | 1.92 | 3.58 | 19.2 |
| vLLM (warm) | 0.42 | 0.78 | 4.2 |
| FlashServe | 0.58 | 1.08 | 5.8 |
| Speedup vs. Baseline | 49× | 49× | 49× |
| Speedup vs. ServerlessLLM | 3.3× | 3.3× | 3.3× |
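
The speedup rows are ratios of each system's entry to the FlashServe entry for the same model size. The Python sketch below recomputes them from the values printed in the table. It assumes only that all entries in a column share one unit, which cancels in the ratio. The dictionary and function names are illustrative.

```python
from typing import Dict

# Values copied from the table above, keyed by system and model size.
latency: Dict[str, Dict[str, float]] = {
    "Baseline (S3)": {"7B": 28.5, "13B": 52.3, "70B": 285.6},
    "ServerlessLLM": {"7B": 1.92, "13B": 3.58, "70B": 19.2},
    "FlashServe": {"7B": 0.58, "13B": 1.08, "70B": 5.8},
}


def speedup_over(system: str, target: str = "FlashServe") -> Dict[str, float]:
    """Return latency(system) / latency(target) for each model size."""
    return {
        size: latency[system][size] / latency[target][size]
        for size in latency[target]
    }


speedups = {
    name: speedup_over(name) for name in ("Baseline (S3)", "ServerlessLLM")
}

if __name__ == "__main__":
    for name, per_size in speedups.items():
        formatted = ", ".join(f"{s}: {v:.1f}x" for s, v in per_size.items())
        print(f"{name}: {formatted}")
```

From the printed entries the ratios against ServerlessLLM all round to 3.3×. Against the baseline, the 7B and 70B ratios round to 49×, while the 13B ratio is about 48.4×, slightly below the 49× shown. That gap may simply reflect rounding in the published entries.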

| Config | Adapters | Memory (GB) | Throughput (req/s) | Swap (ms) |
|---|---|---|---|---|
| Full replication | 4 | 56 | 82 | N/A |
| S-LoRA | 32 | 15.2 | 78 | 12.5 |
| FlashServe | 32 | 14.8 | 79 | 1.8 |
| FlashServe | 128 | 18.2 | 76 | 2.1 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).