Submitted: 11 December 2025

Posted: 11 December 2025


Abstract

Keywords:

1. Introduction

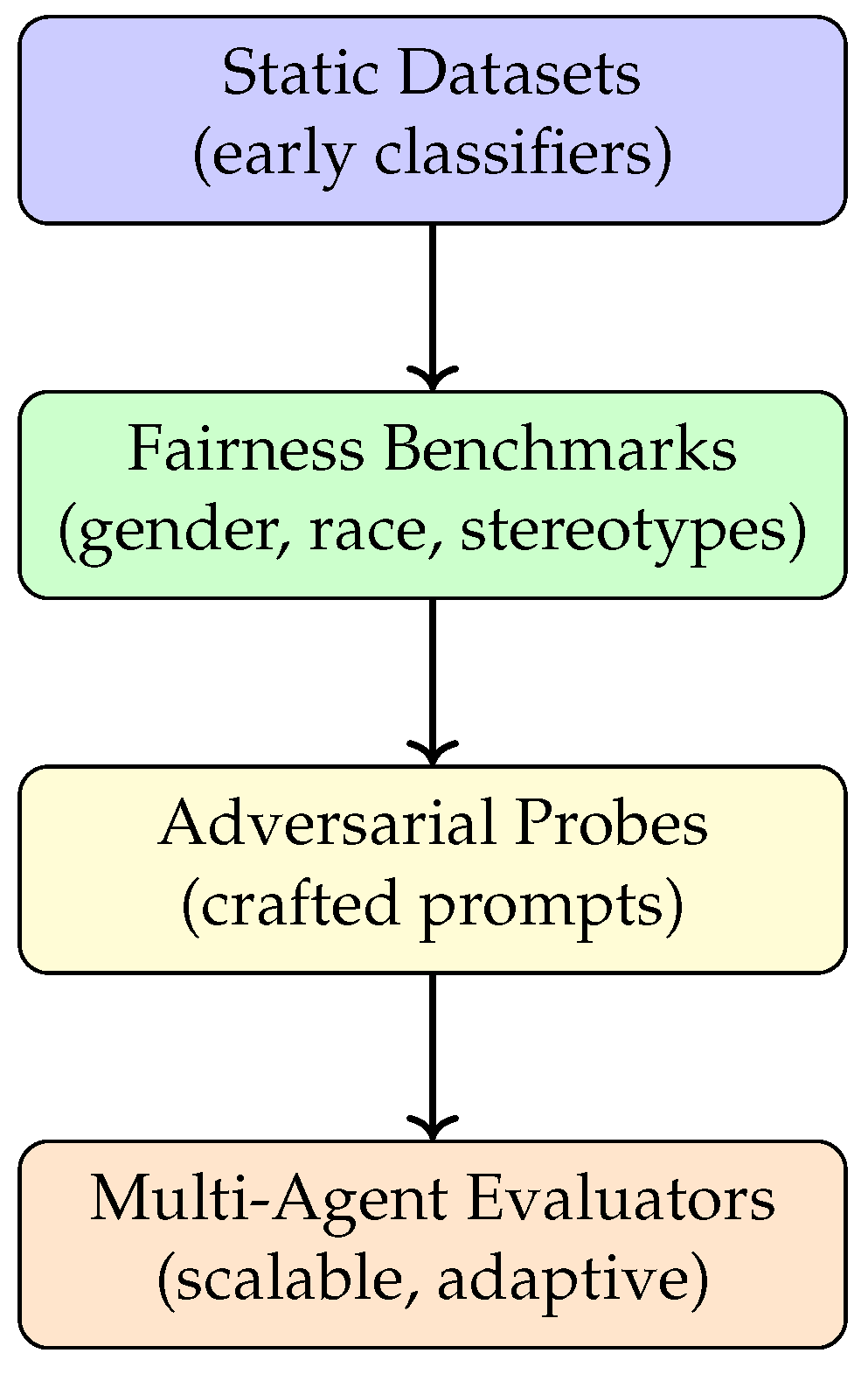

2. Background and Related Work

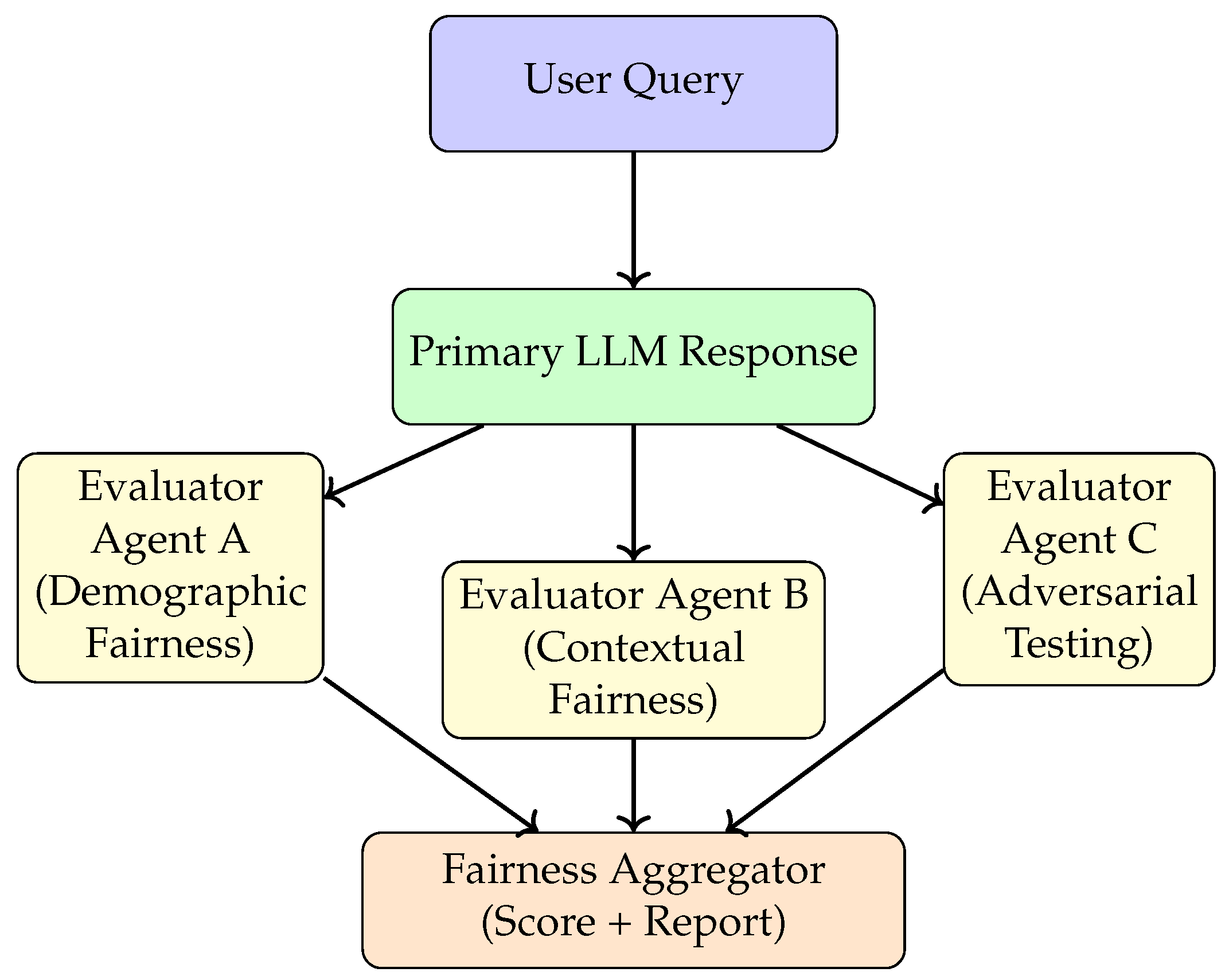

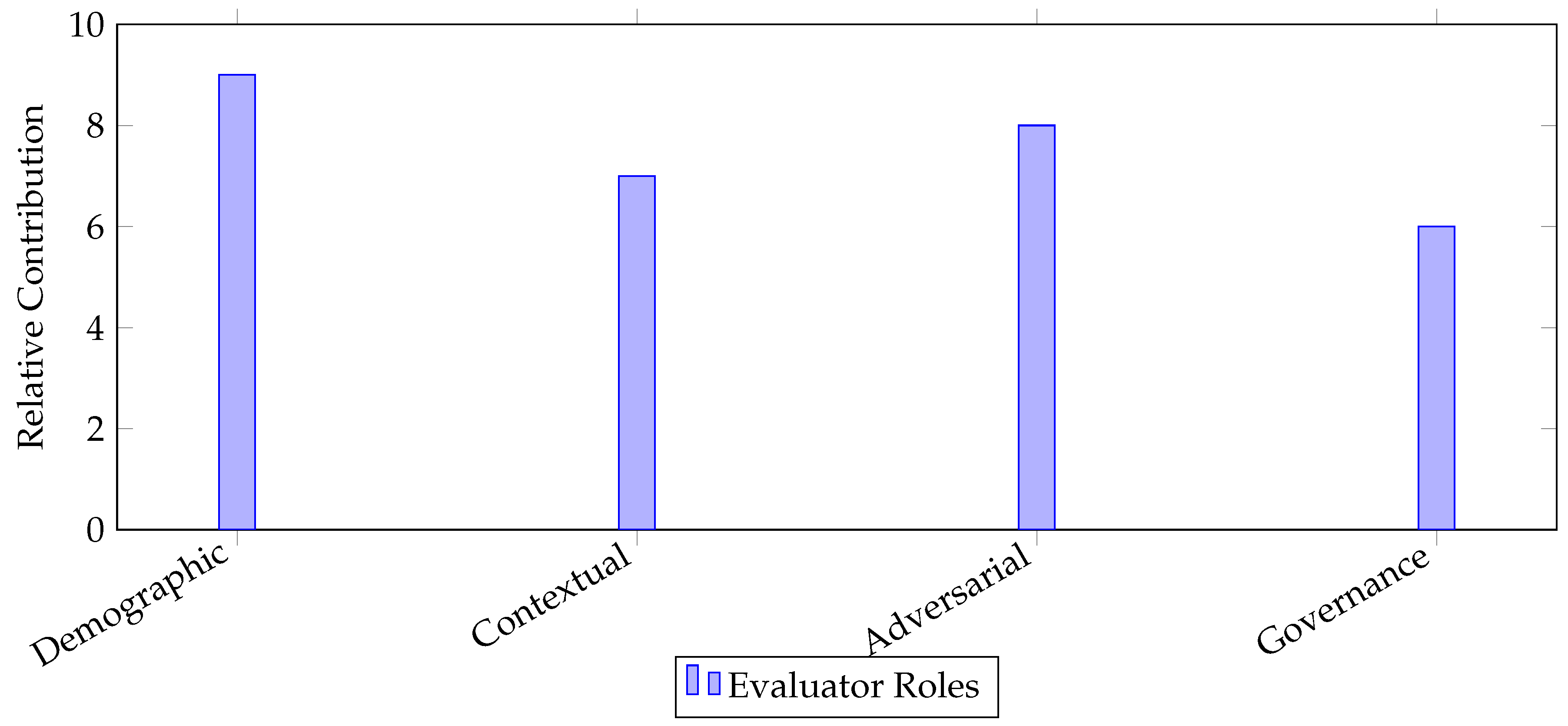

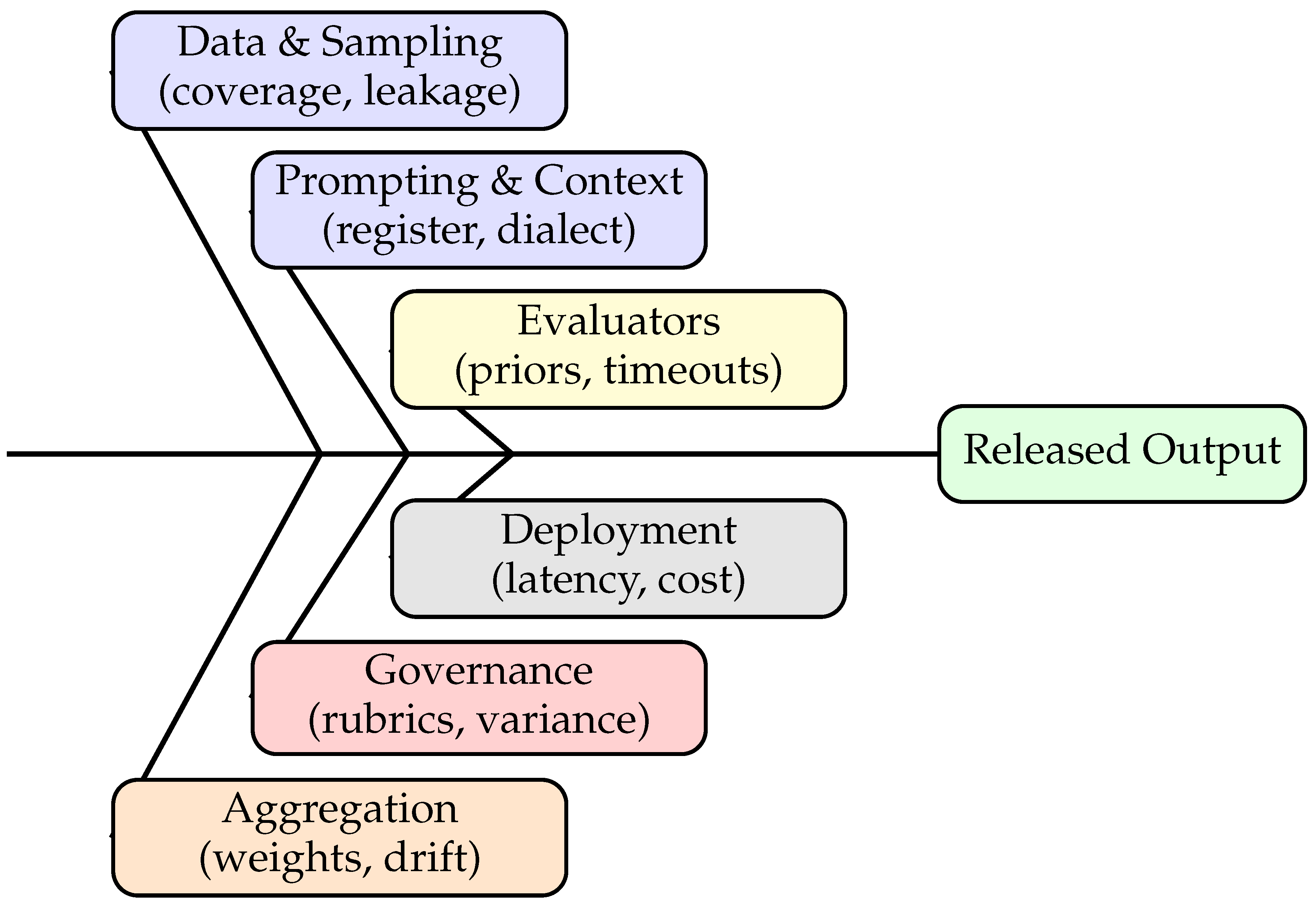

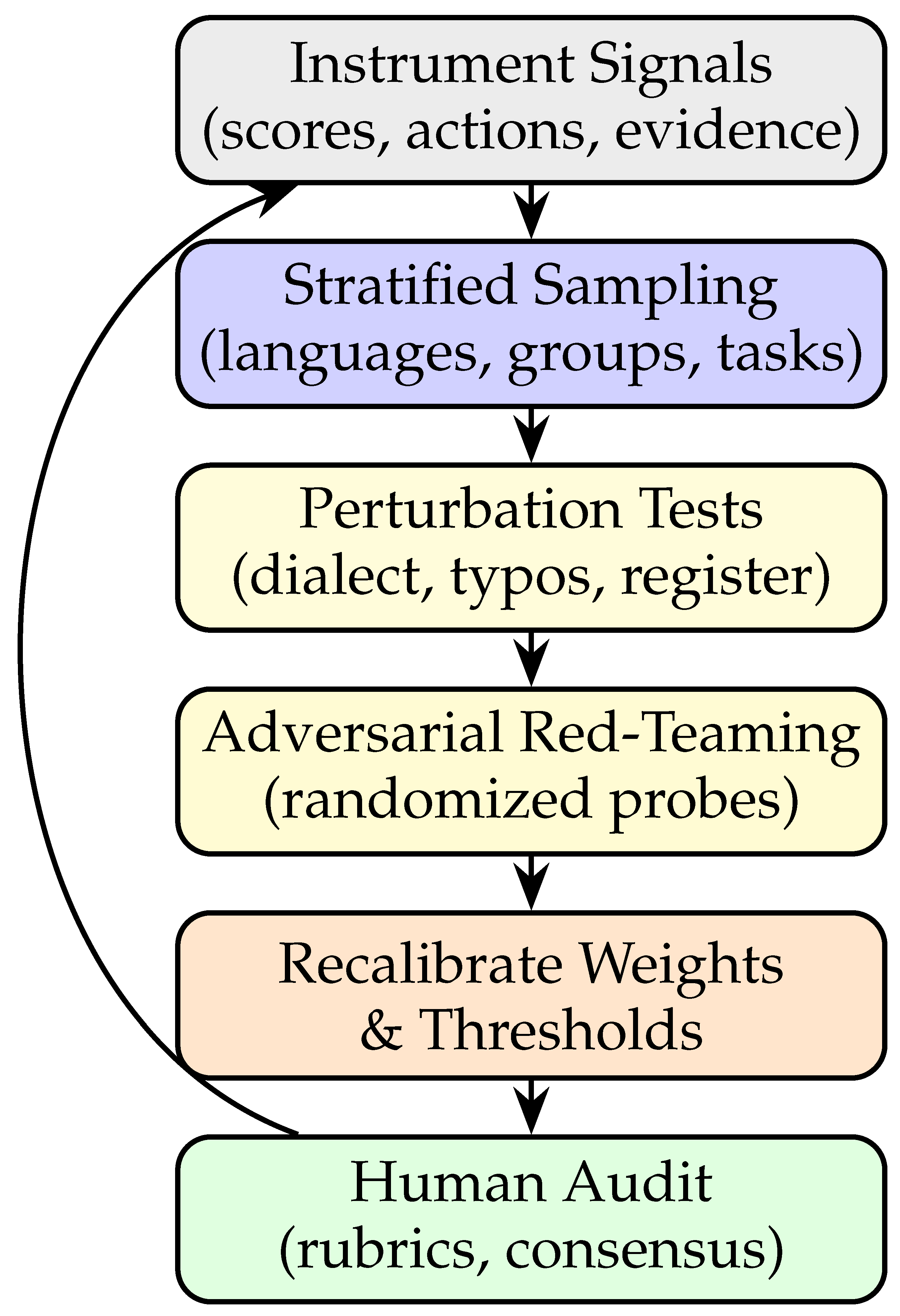

3. Proposed Multi-Agent Fairness Evaluation Framework

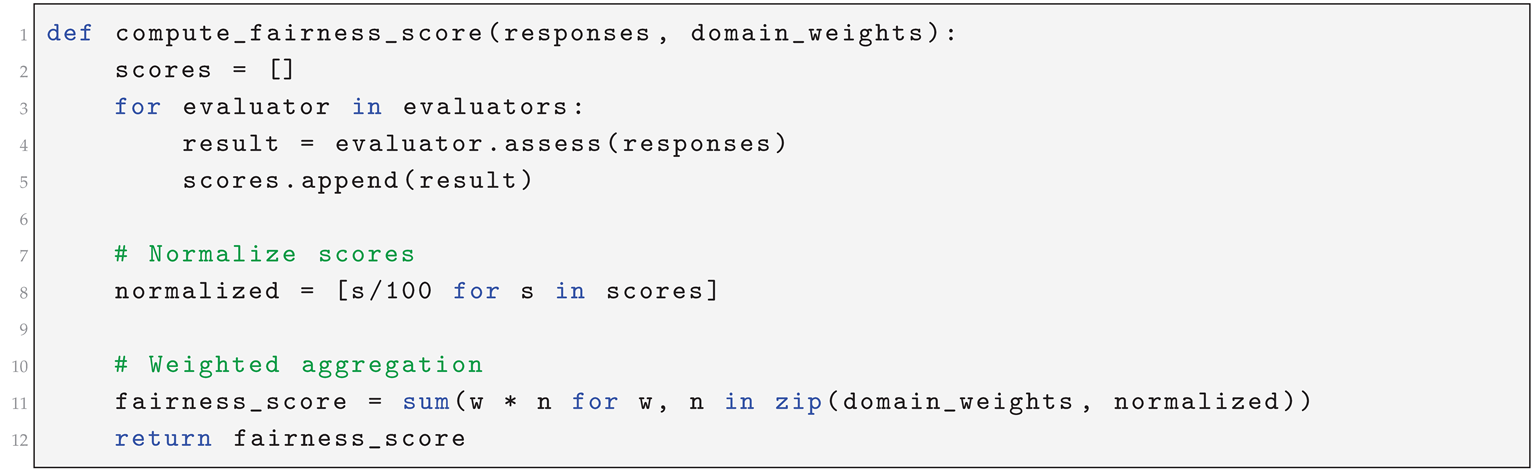

Listing 1: Pseudocode for composite fairness scoring across evaluator agents.
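As an illustration of the kind of aggregation Listing 1 describes, the Python sketch below combines per-agent verdicts into one composite score using a confidence-weighted mean. It also flags, separately, any agent whose own score falls below a floor, so that a single failing evaluator is not averaged away. The `EvaluatorResult` schema, the agent names, the weights, and the 0.5 floor are hypothetical assumptions chosen for illustration; this is not the authors' scoring rule.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class EvaluatorResult:
    """One evaluator agent's verdict on a single model response (hypothetical schema)."""
    agent: str         # evaluator name, e.g. "stereotype_probe" (illustrative)
    score: float       # fairness score in [0, 1]; 1.0 means no issue detected
    confidence: float  # agent's self-reported confidence in [0, 1]


def composite_fairness_score(results: List[EvaluatorResult],
                             weights: Dict[str, float]) -> float:
    """Confidence-weighted mean of per-agent scores.

    Each agent contributes with weight weights[agent] * confidence;
    agents absent from `weights` contribute nothing.
    """
    weighted_sum = 0.0
    total_weight = 0.0
    for r in results:
        if not (0.0 <= r.score <= 1.0 and 0.0 <= r.confidence <= 1.0):
            raise ValueError(f"agent {r.agent!r} returned values outside [0, 1]")
        w = weights.get(r.agent, 0.0) * r.confidence
        weighted_sum += w * r.score
        total_weight += w
    if total_weight == 0.0:
        raise ValueError("no evaluator contributed a non-zero weight")
    return weighted_sum / total_weight


def agents_below_floor(results: List[EvaluatorResult], floor: float = 0.5) -> List[str]:
    """Names of agents whose individual score is below `floor` (candidates for human review)."""
    return [r.agent for r in results if r.score < floor]


# Illustrative usage with made-up agents and weights.
results = [
    EvaluatorResult("stereotype_probe", score=0.9, confidence=1.0),
    EvaluatorResult("counterfactual_checker", score=0.4, confidence=0.5),
]
weights = {"stereotype_probe": 1.0, "counterfactual_checker": 2.0}
print(composite_fairness_score(results, weights))  # 0.65
print(agents_below_floor(results))                 # ['counterfactual_checker']
```

Reporting the floor check alongside the mean is a design choice: a weighted average alone can look acceptable even when one evaluator has detected a clear problem.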

4. Case Studies

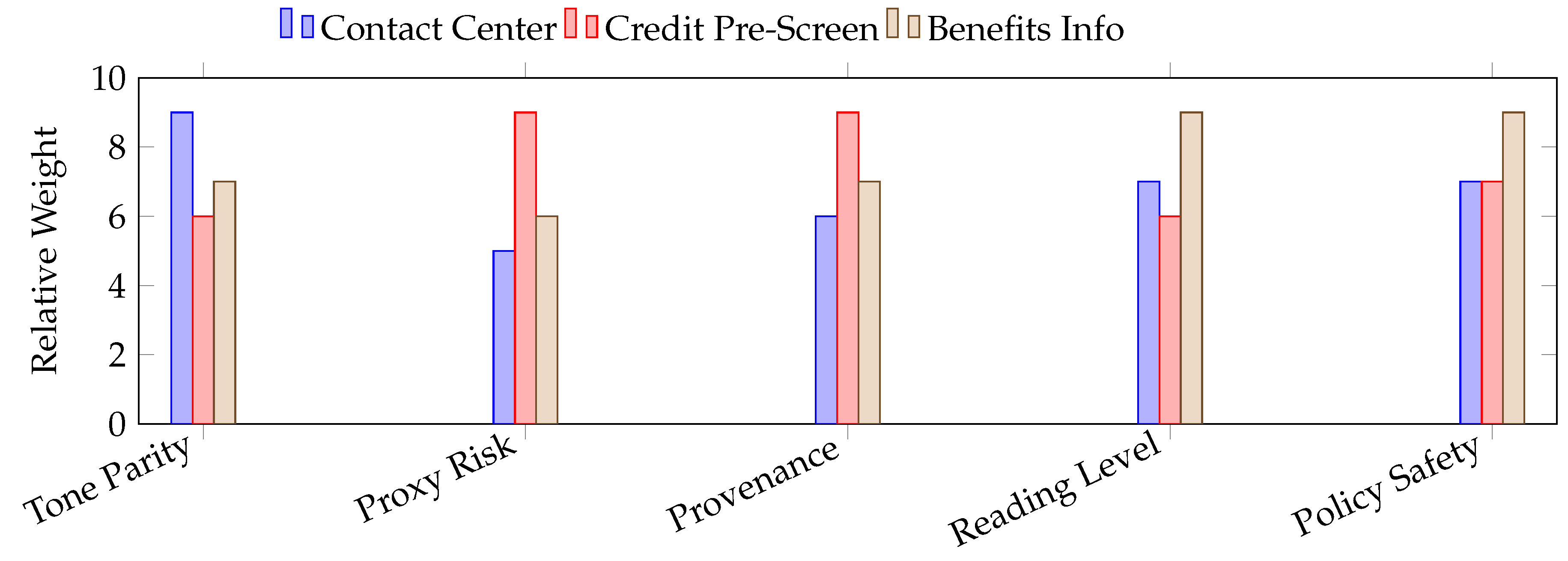

4.1. Contact-Center Triage (Customer Support)

4.2. Credit Pre-Screening Assistant (Advisory, Not Decisioning)

4.3. Public Benefits Information Service

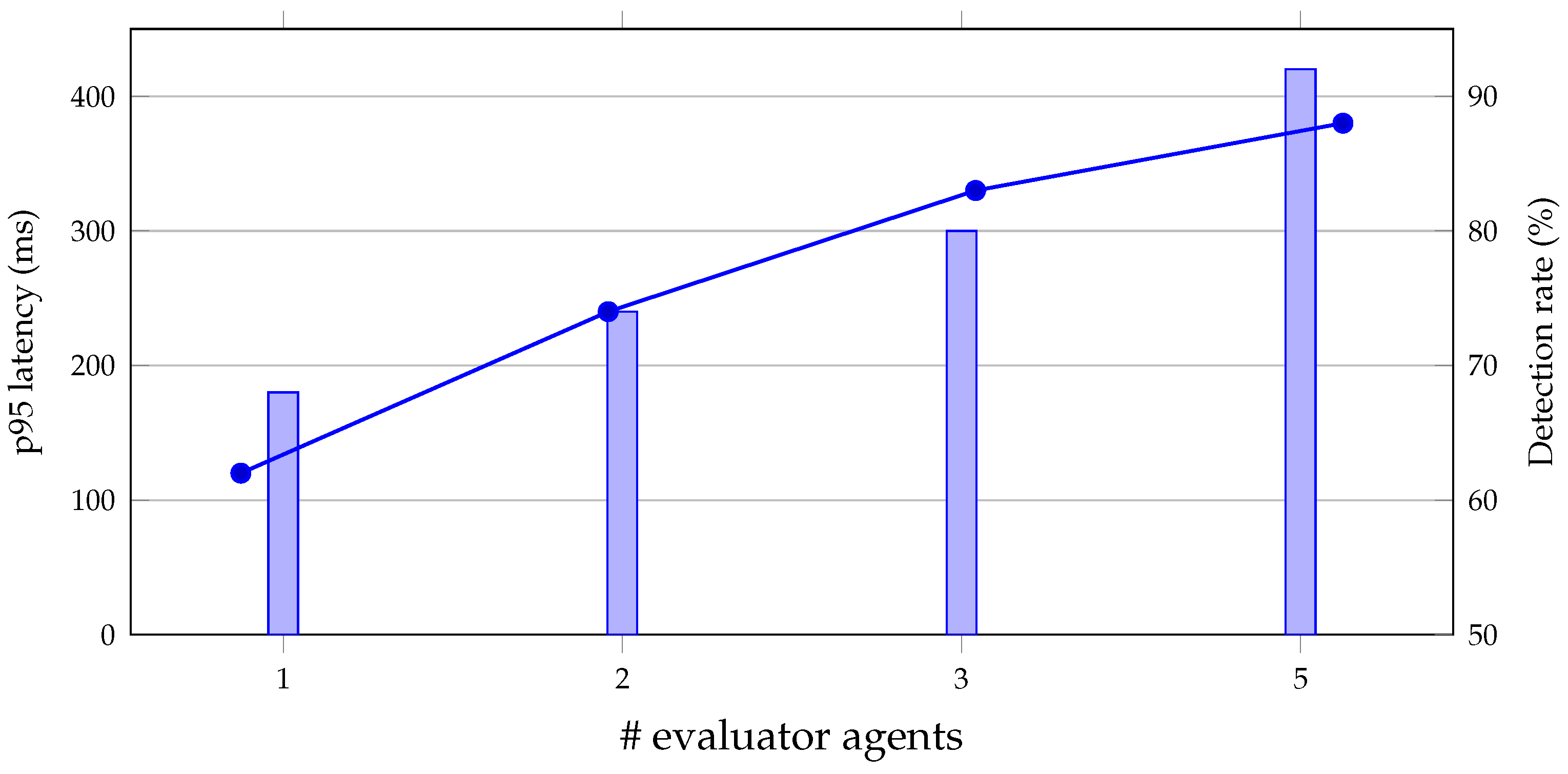

5. Experimental Setup and Metrics

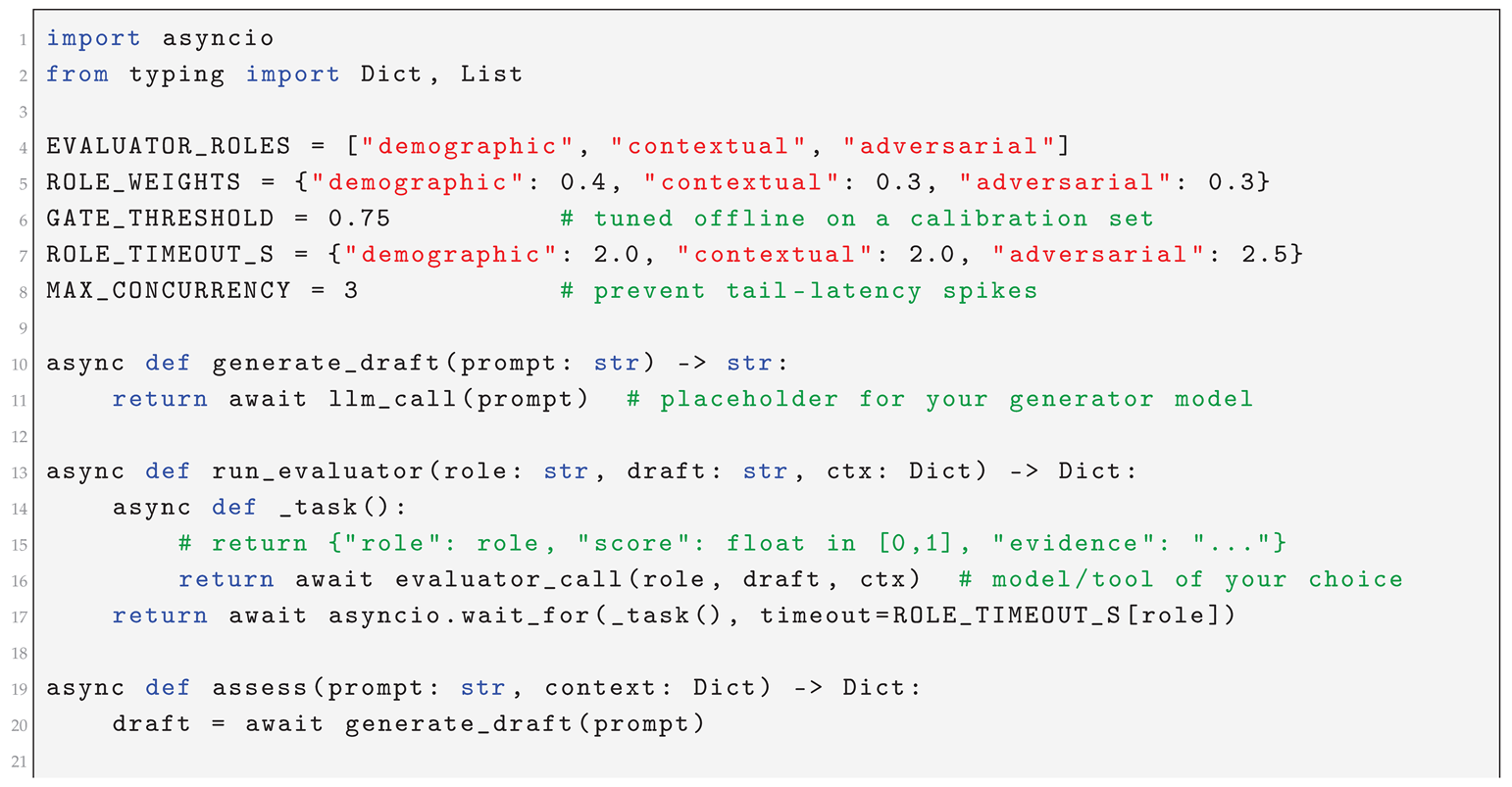

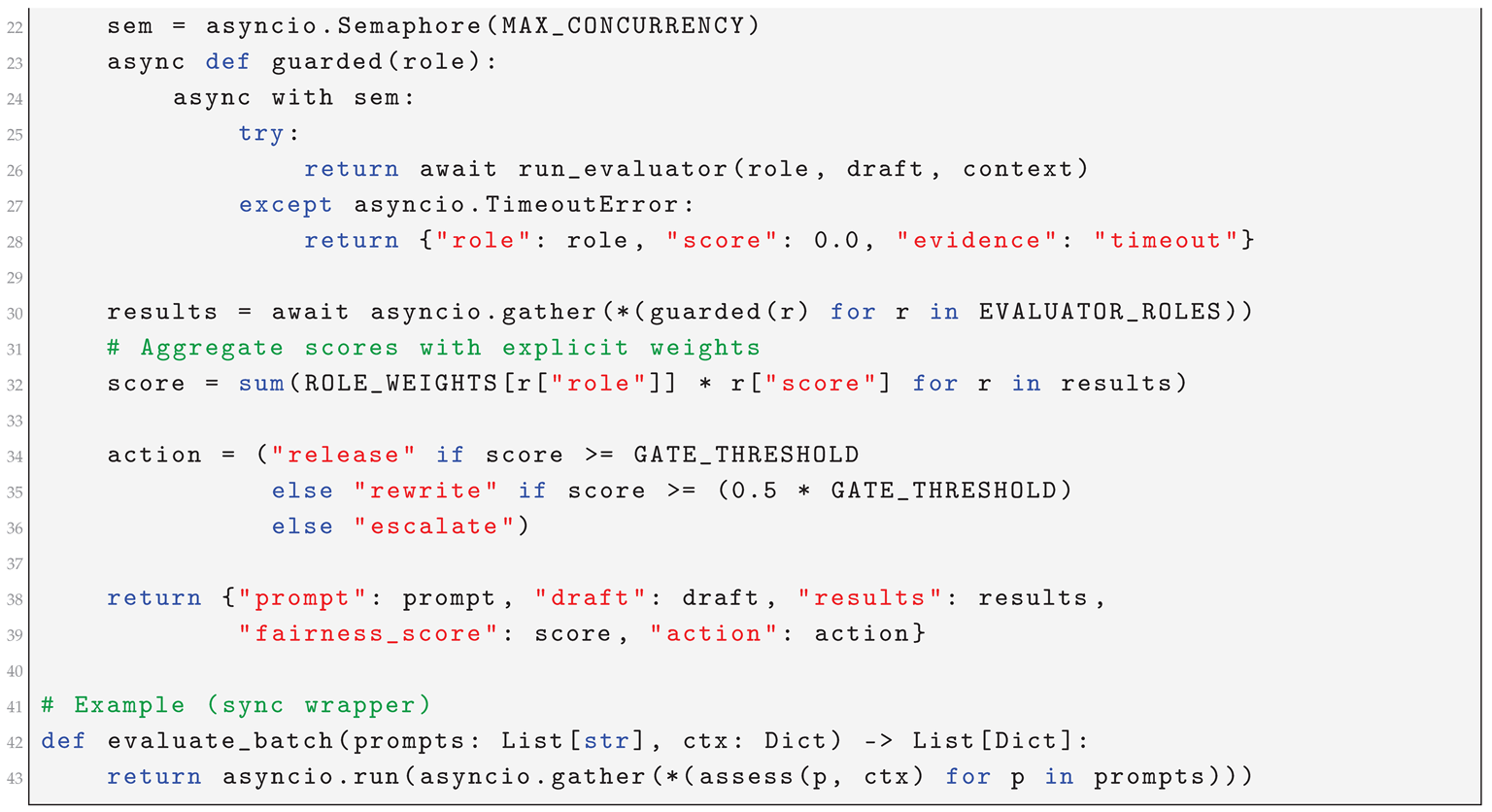

Listing 2: Minimal orchestration for multi-agent fairness evaluation with bounded concurrency and timeouts.
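The caption of Listing 2 names two operational controls: bounded concurrency and per-agent timeouts. A minimal Python sketch of those two controls using the standard `asyncio` library might look as follows, with `asyncio.Semaphore` capping how many evaluator calls run at once and `asyncio.wait_for` enforcing a timeout on each. The evaluator interface (an async function from response text to a score), the agent names, and the default limits are assumptions for illustration, not the authors' orchestration code.

```python
import asyncio
from typing import Awaitable, Callable, Dict, Optional

# Hypothetical evaluator interface: an async callable scoring one model response in [0, 1].
Evaluator = Callable[[str], Awaitable[float]]


async def _run_one(evaluator: Evaluator, response: str,
                   sem: asyncio.Semaphore, timeout_s: float) -> Optional[float]:
    # The semaphore caps how many evaluators run at once; the timeout clock
    # starts only after a slot is acquired, so queueing time is not counted.
    async with sem:
        try:
            return await asyncio.wait_for(evaluator(response), timeout=timeout_s)
        except asyncio.TimeoutError:
            # Record a timed-out agent as missing (None) rather than as a pass.
            return None


async def evaluate_response(response: str,
                            evaluators: Dict[str, Evaluator],
                            max_concurrency: int = 4,
                            timeout_s: float = 10.0) -> Dict[str, Optional[float]]:
    """Run every evaluator on one response; returns {agent_name: score or None}."""
    sem = asyncio.Semaphore(max_concurrency)
    names = list(evaluators)
    scores = await asyncio.gather(
        *(_run_one(evaluators[n], response, sem, timeout_s) for n in names)
    )
    return dict(zip(names, scores))


# Illustrative usage with two toy evaluators (hypothetical names and delays).
async def fast_probe(text: str) -> float:
    await asyncio.sleep(0.01)
    return 0.9


async def slow_probe(text: str) -> float:
    await asyncio.sleep(1.0)  # exceeds the 0.2 s timeout below
    return 0.1


result = asyncio.run(evaluate_response(
    "sample model reply",
    {"fast_probe": fast_probe, "slow_probe": slow_probe},
    max_concurrency=2,
    timeout_s=0.2,
))
print(result)  # {'fast_probe': 0.9, 'slow_probe': None}
```

Because `asyncio.gather` is called without `return_exceptions=True`, an evaluator that raises an error other than a timeout aborts the whole call; an orchestrator intended for continuous monitoring might instead record such failures per agent.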

6. Limitations and Threats to Validity

7. Conclusions

References

- Shahane, R. Improving ML Model Accuracy Through Data-Centric Engineering in Enterprise Data Lakes. 2024.

- Nangia, N.; Vania, C.; Bhalerao, R.; Bowman, S.R. CrowS-Pairs: A Challenge Dataset for Measuring Social Biases in Masked Language Models. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP); Association for Computational Linguistics, 2020; pp. 1953–1967.

- Raji, I.D.; Smart, A.; White, R.N.; Mitchell, M.; Gebru, T.; Hutchinson, B.; Smith-Loud, J.; Theron, D.; Barnes, P. Closing the AI Accountability Gap: Defining an End-to-End Framework for Internal Algorithmic Auditing. In Proceedings of the 2020 ACM Conference on Fairness, Accountability, and Transparency (FAT*); ACM, 2020; pp. 33–44.

- Alang, K.; Peta, S.B.; Pai, R.R.; Patil, B. Scalable Cloud Architectures for Efficient Processing of Multi-Structured Big Data. In Proceedings of the 2025 Global Conference in Emerging Technologies (GCET); IEEE, 2025.

- Bender, E.M.; Gebru, T.; McMillan-Major, A.; Shmitchell, S. On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency (FAccT); ACM, 2021; pp. 610–623.

- Mitchell, M.; Wu, S.; Zaldivar, A.; Barnes, P.; Vasserman, L.; Hutchinson, B.; Spitzer, E.; Raji, I.D.; Gebru, T. Model Cards for Model Reporting. In Proceedings of the 2019 ACM Conference on Fairness, Accountability, and Transparency (FAT*); ACM, 2019; pp. 220–229.

- Gebru, T.; Morgenstern, J.; Vecchione, B.; Vaughan, J.W.; Wallach, H.; Daumé III, H.; Crawford, K. Datasheets for Datasets. Communications of the ACM 2021, 64, 86–92.

- Pasam, V.R.; Devaraju, P.; Methuku, V.; Dharamshi, K.; Veerapaneni, S.M. Engineering Scalable AI Pipelines: A Cloud-Native Approach for Intelligent Transactional Systems. In Proceedings of the 2025 International Conference on Intelligent Systems and Cloud Computing; IEEE, 2025.

- Veluguri, S.P. Deep PPG: Improving Heart Rate Estimates with Activity Prediction. In Proceedings of the 2025 1st International Conference on Biomedical AI and Digital Health; IEEE, 2025.

- Shahane, R.; Prakash, S. Quantum Machine Learning Opportunities for Scalable AI. Journal of Validation Technology 2025, 28, 75–89.

- Chouldechova, A. Fair Prediction with Disparate Impact: A Study of Bias in Recidivism Prediction Instruments. Big Data 2017, 5, 153–163.

- Zhao, J.; Wang, T.; Yatskar, M.; Ordonez, V.; Chang, K.-W. Gender Bias in Coreference Resolution: Evaluation and Debiasing Methods. In Proceedings of NAACL-HLT 2018; Association for Computational Linguistics, 2018; pp. 15–20.

- Rudinger, R.; Naradowsky, J.; Leonard, B.; Van Durme, B. Gender Bias in Coreference Resolution. In Proceedings of NAACL-HLT 2018; Association for Computational Linguistics, 2018; pp. 8–14.

- Natarajan, G.N.; Veerapaneni, S.M.; Methuku, V.; Venkatesan, V.; Kanji, R.K. Federated AI for Surgical Robotics: Enhancing Precision, Privacy, and Real-Time Decision-Making in Smart Healthcare. In Proceedings of the 2025 5th International Conference on Emerging Technologies in Healthcare (ICETH); IEEE, 2025.

- Ribeiro, M.T.; Wu, T.; Guestrin, C.; Singh, S. Beyond Accuracy: Behavioral Testing of NLP Models with CheckList. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics; Association for Computational Linguistics, 2020; pp. 4902–4912.

- Caliskan, A.; Bryson, J.J.; Narayanan, A. Semantics Derived Automatically from Language Corpora Contain Human-like Biases. Science 2017, 356, 183–186.

- De-Arteaga, M.; Romanov, A.; Wallach, H.; Chayes, J.; Borgs, C.; Chouldechova, A.; Geyik, S.; Kenthapadi, K.; Kalai, A.T. Bias in Bios: A Case Study of Semantic Representation Bias in a High-Stakes Setting. In Proceedings of the 2019 ACM Conference on Fairness, Accountability, and Transparency (FAT*); ACM, 2019; pp. 120–128.

- Nadeem, M.; Bethke, A.; Reddy, S. StereoSet: Measuring Stereotypical Bias in Pretrained Language Models. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics; Association for Computational Linguistics, 2021; pp. 5356–5371.

- Bellamy, R.K.E.; Dey, K.; Hind, M.; Hoffman, S.C.; Houde, S.; Kannan, K.; Lohia, P.; Martino, J.; Mehta, S.; Mojsilović, A.; et al. AI Fairness 360: An Extensible Toolkit for Detecting, Understanding, and Mitigating Unwanted Algorithmic Bias. IBM Journal of Research and Development 2019, 63, 4:1–4:15.

- Kiela, D.; Bartolo, M.; Nie, Y.; Kaushik, D.; Geiger, A.; Wu, Z.; Vidgen, B.; Prasad, G.; Singh, A.; Ringshia, P.; et al. Dynabench: Rethinking Benchmarking in NLP. In Proceedings of NAACL-HLT 2021; Association for Computational Linguistics, 2021; pp. 4110–4124.

| Dimension | Traditional Benchmarking | Multi-Agent Evaluators |
|---|---|---|
| Scalability | Limited to curated datasets | Continuous; scales with the number of evaluator agents |
| Adaptability | Static, fixed tasks | Dynamic, context-aware, and evolving |
| Bias Detection | Detects only known bias types | Surfaces hidden, emergent biases through adversarial roles |
| Governance Integration | One-time test results | Continuous feedback into oversight pipelines |
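The claim that adversarial evaluator roles can surface biases that fixed benchmarks miss can be made concrete with a counterfactual probe: an adversarial agent fills the same prompt template with different demographic groups and measures how far a downstream fairness score moves. The Python sketch below is a hypothetical illustration under stated assumptions; `respond` stands in for the system under test, `score` stands in for an evaluator agent, and the template and `group_*` placeholders are invented for the example.

```python
from typing import Callable, Dict, List


def counterfactual_prompts(template: str, groups: List[str]) -> Dict[str, str]:
    """Fill the {group} slot of a prompt template once per demographic group."""
    return {g: template.format(group=g) for g in groups}


def counterfactual_gap(template: str,
                       groups: List[str],
                       respond: Callable[[str], str],
                       score: Callable[[str], float]) -> float:
    """Largest difference in evaluator score across counterfactual variants.

    0.0 means every variant was scored identically; larger values mean the
    system under test treated at least two groups differently.
    """
    if len(groups) < 2:
        raise ValueError("need at least two groups to compare")
    prompts = counterfactual_prompts(template, groups)
    scores = {g: score(respond(p)) for g, p in prompts.items()}
    return max(scores.values()) - min(scores.values())


# Toy stand-ins (hypothetical): a "system" that behaves differently for one group,
# and an evaluator that scores 1.0 for a helpful reply and 0.0 otherwise.
def toy_respond(prompt: str) -> str:
    return "declined" if "group_b" in prompt else "helpful answer"


def toy_score(reply: str) -> float:
    return 1.0 if reply == "helpful answer" else 0.0


gap = counterfactual_gap("Can a {group} applicant get help with this form?",
                         ["group_a", "group_b", "group_c"],
                         toy_respond, toy_score)
print(gap)  # 1.0
```

Taking the max-minus-min spread is one simple choice of disparity summary; a real evaluator agent would also need a tolerance threshold, since generative systems rarely produce identical scores across variants.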

| Benchmark | Scope | Limitations |
|---|---|---|
| Winogender | Gender pronoun resolution | Limited to English; narrow domain |
| StereoSet | Stereotype detection in sentences | Restricted to predefined bias categories |
| CrowS-Pairs | Contrastive sentence pairs | Coverage gaps for global fairness contexts |
| HolisticBias | Nearly 600 descriptor terms across 13 demographic axes | Static dataset; cannot adapt to new contexts |
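To make the contrastive-pair design in the CrowS-Pairs row concrete: CrowS-Pairs reports the percentage of sentence pairs for which a model assigns higher likelihood to the more stereotyping sentence, so an unbiased model would land near 50%. The Python sketch below computes that preference rate for an arbitrary sentence-scoring function. The `score` callable (a masked-language-model pseudo-log-likelihood in the original benchmark) and the toy scores are placeholders, not real model outputs.

```python
from typing import Callable, List, Tuple


def stereotype_preference_rate(pairs: List[Tuple[str, str]],
                               score: Callable[[str], float]) -> float:
    """Fraction of (more_stereotyping, less_stereotyping) pairs where the first scores higher.

    Ties count as "not preferred". A value near 0.5 indicates no systematic
    preference in either direction.
    """
    if not pairs:
        raise ValueError("need at least one sentence pair")
    preferred = sum(1 for more, less in pairs if score(more) > score(less))
    return preferred / len(pairs)


# Toy log-likelihoods for placeholder sentences (illustrative numbers only).
toy_loglik = {"s1_more": -10.2, "s1_less": -11.0,
              "s2_more": -9.5, "s2_less": -9.1}
pairs = [("s1_more", "s1_less"), ("s2_more", "s2_less")]
rate = stereotype_preference_rate(pairs, toy_loglik.__getitem__)
print(rate)             # 0.5
print(abs(rate - 0.5))  # 0.0, i.e. no measured skew on this toy set
```

Because the pairs are fixed in advance, this metric illustrates the "static dataset" limitation in the table: the score can only reflect the contrasts its authors anticipated.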

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).