Methods

All experiments were conducted to evaluate the informational, statistical, energetic, and dynamical behavior of the GCIS-based neural architecture under fully controlled and reproducible conditions. The methodological design follows a multi-layered structure integrating brute-force verification, correlation geometry, information-theoretic metrics, field-level synchronization analysis, and energy-landscape reconstruction. Each method targets one specific dimension of the system’s behavior, ensuring that no inference relies on a single measurement modality.

The computational foundation consists of deterministic, weightless neural layers that propagate amplitude-encoded informational states through 24 to 100 sequential transformations. To ensure empirical correctness, all outputs for N = 8…24 Spin-Glass systems were validated via exhaustive enumeration of the full configuration space. Higher-dimensional states were assessed through correlation symmetry, mutual information, synchronization signatures, and collapse-trajectory analysis.

Energetic evaluation was performed under real operational load, using direct power measurements, GPU telemetry, and system-level monitoring to capture thermodynamic deviations, resonance-field effects, and autonomous energy reorganization. Dynamic behavior was quantified through activity fields, energy basins, surface reconstructions, and PCA-based attractor trajectories.

All results reported in this work derive from direct measurement wherever full enumeration or deterministic evaluation is feasible; for N ≤ 24 the complete configuration space was exhaustively verified, while systems with N = 30 and N = 40 were validated through 100-run simulated annealing convergence under Claude Opus 4.5. For N = 70, simulated annealing served as the upper-limit heuristic baseline, and for N = 100 the evaluation relies on correlation symmetry, mutual information, synchronization signatures, and collapse-trajectory analysis [7, 8]. Interpolated energy surfaces are explicitly marked as interpretative visualizations.

No statistically “smoothed” or artificially optimized data were applied. This methodological transparency establishes the empirical backbone of the GCIS framework and supports the reproducibility of all findings.

- I.

System Configuration:

The experiments were executed on a commercially available high-performance workstation configured to support sustained full-load computational analysis. The system includes:

Power supply: 1200 W Platinum (high-efficiency under sustained load)

CPU: AMD Ryzen 9 7900X3D

RAM: 192 GB DDR5

Primary GPU: NVIDIA RTX PRO 4500 Blackwell

Secondary GPU: NVIDIA RTX PRO 4000 Blackwell

Tertiary GPU: NVIDIA RTX PRO 4000 Blackwell

The workstation operated under Windows 11 Pro (24H2, build 26100.4351) with Python 3.11.9 and CUDA 12.8.

- II.

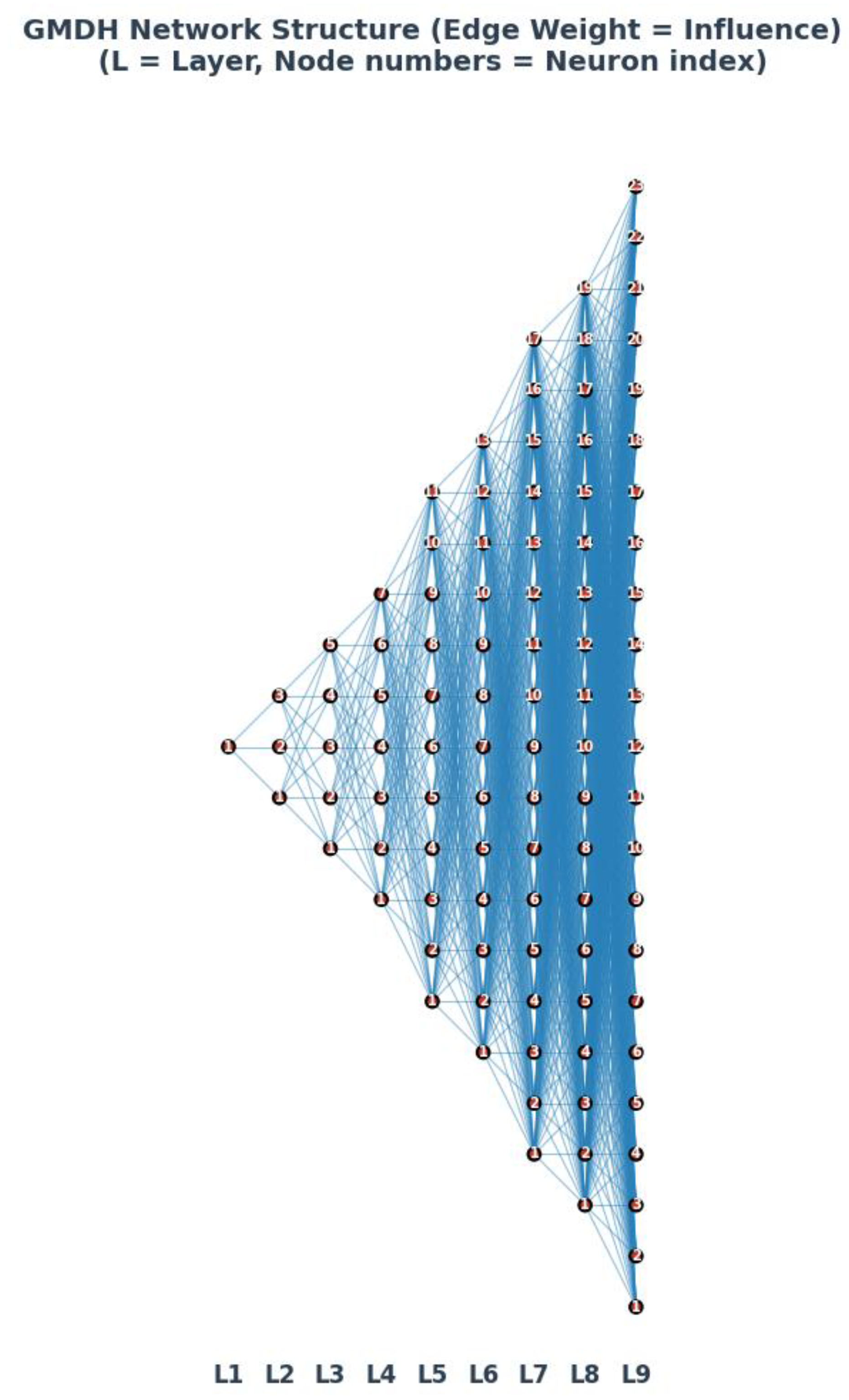

Deterministic Neural Architecture (I-GCO)

The I-GCO (Information-Space Geometric Collapse Optimizer) is a deterministic, weightless neural architecture that derives solutions through geometric reorganization of the information space rather than through algorithmic search.

The GCIS-based neural system employed in this study is a strictly deterministic, weightless architecture composed of 24–100 sequential processing layers. Each layer operates on amplitude-encoded informational states without stored weights, historical data, or optimization traces. No training, fine-tuning, or gradient-based procedures are implemented at any point.

The architecture maintains identical internal conditions across repeated runs:

all parameters are re-initialized deterministically,

no stochastic sampling is used,

all transformations follow fixed propagation rules.

As a consequence, output variability between independent runs can be attributed exclusively to intrinsic informational dynamics and not to initialization noise, random seeds, or hardware nondeterminism. State propagation remains fully synchronous across all layers unless explicitly analyzed for synchronization divergence.

- III.

Information-State Representation and GCIS

GCIS (Geometric Collapse of Information States) denotes the internal mechanism by which the system reorganizes high-dimensional informational states into coherent, energetically minimal attractors.

Each layer encodes its internal condition as a real-valued amplitude vector. The representation does not correspond to classical neuron activations; instead, it behaves as a continuous informational state with pairwise coupling across all downstream layers.

Key properties relevant for later analyses include:

complete absence of weight matrices,

deterministic amplitude propagation,

recurrent emergence of ±1 correlation symmetries,

collapse into low-dimensional attractor manifolds,

depth-invariant non-local coupling for coherent states.

These properties enable direct analysis of Pearson correlation, mutual information, synchronization events, collapse trajectories, energy surfaces, and interference patterns within a unified methodological framework [6].

- IV.

Execution Environment and Process Isolation

All experiments were conducted under a controlled execution environment to ensure deterministic reproducibility of all observed phenomena. The entire computational workflow ran on Windows 11 Pro (24H2, build 26100.4351) using Python 3.11.9 and CUDA 12.8. No background GPU workloads, virtualization layers, or system-level optimization services (including dynamic power scaling, resource balancing, or idle-state governors) were active during experimentation. Before each experiment, the CUDA runtime, memory allocator, and device contexts were fully reset to eliminate residual state. All GPU kernels executed with fixed launch parameters, and no adaptive scheduling mechanisms were employed.

CPU frequency scaling was locked to its baseline curve as defined by the system firmware, and no thermal throttling events occurred during any run, as verified by continuous telemetry logs.

Process isolation was enforced by dedicating the entire system to the GCIS/I-GCO execution pipeline. No external I/O operations, user processes, update services, or telemetry daemons were permitted to interfere with GPU or system-level timing.

All memory bindings, amplitude states, and intermediate buffers were reinitialized deterministically before each run, ensuring that the system’s behavior reflects only the intrinsic dynamics of the GCIS mechanism and not environmental variability.
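One way to check the run-to-run reproducibility described above is to compare a cryptographic digest of the output amplitudes across repeated runs. The following is a minimal sketch under stated assumptions: `run_once` is a hypothetical stand-in for a single pipeline execution (the GCIS/I-GCO propagation rules are not specified here), and `digest` is an illustrative helper, not part of the original pipeline.

```python
import hashlib
import struct

def digest(values) -> str:
    """SHA-256 over the IEEE-754 double encoding of a sequence of floats."""
    h = hashlib.sha256()
    for v in values:
        h.update(struct.pack("<d", v))
    return h.hexdigest()

def run_once():
    """Hypothetical stand-in for one pipeline run; returns its output amplitudes."""
    state = [0.5, -0.25, 1.0]
    for _ in range(24):  # 24 fixed, deterministic transformation steps
        shifted = state[1:] + state[:1]
        state = [0.5 * a - 0.25 * b for a, b in zip(state, shifted)]
    return state

# Bit-identical outputs across runs collapse to a single digest.
digests = {digest(run_once()) for _ in range(5)}
print(len(digests))  # 1
```

A digest comparison detects any bit-level deviation between runs, which is stricter than comparing values within a numerical tolerance.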

- V.

Dataset Generation and Spin-Glass Formalism

The dataset used in this study consists of fully defined Spin-Glass configurations generated according to the classical Ising formalism. Each system is represented by a binary spin vector s = (s1, s2, …, sN), with si ∈ {−1, +1}, and a pairwise coupling defined for every spin pair (full connectivity).

The Hamiltonian for a fully connected Ising system is given by: H(s) = − Σ_{i<j} J_{ij} s_i s_j

where the Jij values define a frustrated system (Spin-Glass), resulting in ground-state energies significantly higher than the ferromagnetic limit (e.g., E = −13 for N = 8 vs. E_ferro = −28).

The number of pairwise interactions per instance is: P(N) = N(N−1)/2.
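The Hamiltonian and the pair count can be written down directly. The sketch below is illustrative only: the function names `num_pairs` and `ising_energy` and the nested-list representation of J are assumptions, not the original implementation.

```python
from itertools import combinations

def num_pairs(n: int) -> int:
    """P(N) = N(N-1)/2, the number of unique spin pairs."""
    return n * (n - 1) // 2

def ising_energy(spins, J):
    """H(s) = -sum_{i<j} J[i][j] * s_i * s_j for spins in {-1, +1}."""
    return -sum(J[i][j] * spins[i] * spins[j]
                for i, j in combinations(range(len(spins)), 2))

# Ferromagnetic limit (all J_ij = +1) for N = 8 with all spins aligned.
N = 8
J_ferro = [[1] * N for _ in range(N)]
all_up = [1] * N
print(num_pairs(N))                   # 28
print(ising_energy(all_up, J_ferro))  # -28
```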

- V.1

Dataset Construction (N = 8…24)

For systems up to N = 24, the complete configuration space 2^N was enumerated exhaustively.

Each configuration index σ was converted into a spin vector via binary expansion: si = 2 · bit(σ, i) − 1

Each configuration was evaluated by explicit summation over all P(N) spin pairs.

This procedure yields ground-state energies, degeneracies, and full energy distributions and serves as the ground-truth dataset for empirical validation.
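The enumeration procedure can be sketched as follows. This is a minimal illustration, assuming bit i of σ is read as `(sigma >> i) & 1` and using the ferromagnetic couplings as a stand-in instance, since the actual Spin-Glass coupling matrices are not listed here; the function names are hypothetical.

```python
from collections import Counter
from itertools import combinations

def index_to_spins(sigma: int, n: int):
    """s_i = 2 * bit(sigma, i) - 1, with bit i taken as (sigma >> i) & 1."""
    return [2 * ((sigma >> i) & 1) - 1 for i in range(n)]

def enumerate_energies(n: int, J) -> Counter:
    """Map each energy value to its number of configurations over all 2^N states."""
    pairs = list(combinations(range(n), 2))
    hist = Counter()
    for sigma in range(2 ** n):
        s = index_to_spins(sigma, n)
        hist[-sum(J[i][j] * s[i] * s[j] for i, j in pairs)] += 1
    return hist

N = 8
J_ferro = [[1] * N for _ in range(N)]  # stand-in instance: all J_ij = +1
hist = enumerate_energies(N, J_ferro)
e_min = min(hist)
print(e_min, hist[e_min])    # -28 2
print(sorted(hist.items()))  # [(-28, 2), (-14, 16), (-4, 56), (2, 112), (4, 70)]
```

The same loop applies unchanged to mixed couplings; only the matrix J differs.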

- V.2

Higher-Dimensional Systems (N = 30, 40, 70, 100)

For larger systems where enumeration is computationally infeasible, datasets were generated using:

Simulated annealing (N = 30 and N = 40; 100 runs per instance),

Heuristic upper-bound convergence reference (N = 70),

Information-geometric signatures generated by the GCIS/I-GCO system (N = 70, N = 100), including correlation symmetry, mutual information structures, synchronization events, activity fields, and collapse trajectories.

- V.3

Output Representation

All datasets consist of:

spin-state vectors,

energy values,

inter-layer correlation matrices,

information-theoretic metrics,

synchronization and activity maps,

interpolated visualizations of local and global energy surfaces.

These datasets form the empirical basis for validating the GCIS collapse behavior across increasing system sizes.

- VI.

Spin-Glass Formalism and Dataset Specification

- VI.1

Hamiltonian Definition

All Spin-Glass energies used in this work are computed according to the classical fully connected Ising Hamiltonian:

H(s) = −Σ_{i<j} J_ij · s_i · s_j

with binary spin variables s_i ∈ {−1, +1}. The number of pairwise couplings in an N-spin system is P(N) = N(N−1)/2.

Two coupling configurations are examined in this work:

Ferromagnetic Reference Case: J_ij = +1 for all pairs. This configuration produces a trivial ground state where E_min = −P(N), achieved when all spins align uniformly. This serves as a computational baseline for validating enumeration and annealing procedures.

Spin-Glass Case: J_ij ∈ {−1, +1} with mixed couplings. This configuration introduces frustration, where not all pairwise constraints can be simultaneously satisfied. The ground-state energy therefore satisfies |E_min| < P(N), and the GCIS system identifies the optimal configuration through the distribution of ±1 correlations.
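Frustration can be illustrated with the smallest possible example, a triangle of three spins. The couplings below are chosen for illustration and are not taken from the dataset: two ferromagnetic bonds and one antiferromagnetic bond, so at most two of the three constraints can be satisfied at once.

```python
from itertools import product

# Illustrative couplings (not from the paper's dataset): two ferromagnetic
# bonds and one antiferromagnetic bond on a triangle.
J = {(0, 1): +1, (0, 2): +1, (1, 2): -1}

def energy(s):
    """H(s) = -sum_{i<j} J_ij * s_i * s_j over the three bonds."""
    return -sum(j * s[a] * s[b] for (a, b), j in J.items())

energies = [energy(s) for s in product((-1, +1), repeat=3)]
e_min = min(energies)
print(e_min)                  # -1
print(energies.count(e_min))  # 6
```

Here P(3) = 3, but the minimum energy is −1 rather than −3, which is the inequality |E_min| < P(N) in its simplest form.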

- VI.2

Full Enumeration for N ≤ 24

For system sizes where exhaustive enumeration is computationally feasible, all 2^N configurations were evaluated directly. Each configuration index σ was mapped to a spin vector using:

s_i = 2 · bit(σ, i) − 1

Energies were computed by explicit summation over all P(N) spin pairs without caching or sampling. This yields exact values for ground-state energy, degeneracy, complete energy distribution, and relative state proportions. These enumeration experiments form the ground-truth subset of the dataset and serve as the empirical baseline for validating GCIS collapse behavior.

- VI.3

Energy Normalization

To ensure comparability across different system sizes, all energies are expressed both in absolute Hamiltonian form H(s) and in normalized form:

E_norm(s) = H(s) / P(N)

This normalization produces a scale-invariant energy landscape in [−1, +1], allowing correlation symmetry, mutual information, activity patterns, and collapse trajectories to be compared across N. Normalization is applied consistently for enumerated datasets (N ≤ 24), annealing-based datasets (N = 30, 40), and GCIS-derived signatures (N = 70, 100).
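As a small worked example of the normalization, the sketch below applies E_norm = H / P(N) to energies from the ferromagnetic reference case; the function name is an assumption made for illustration.

```python
def normalized_energy(h: float, n: int) -> float:
    """E_norm = H / P(N), with P(N) = N(N-1)/2."""
    return h / (n * (n - 1) / 2)

print(normalized_energy(-28, 8))          # -1.0
print(normalized_energy(-190, 20))        # -1.0
print(round(normalized_energy(4, 8), 3))  # 0.143
```

Ground states of the ferromagnetic reference map to −1 for every N, which is what makes values comparable across system sizes.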

- VII.

Brute-Force Verification Pipeline (N=8…24)

- VII.1

Enumeration Algorithm

The Hamiltonian for a fully connected Ising system with uniform ferromagnetic coupling (the reference case) is defined as:

H(s) = −Σ_{i<j} J_ij · s_i · s_j

where s_i ∈ {−1, +1} for all i ∈ {1, ..., N}, and J_ij = +1 for all pairs (i,j) in the ferromagnetic reference case. The number of unique pairwise interactions is given by P(N) = N(N−1)/2. The configuration space comprises 2^N distinct spin arrangements. The ground state corresponds to the global minimum of H(s).

Configuration space: 2⁸ = 256 states | Pairwise interactions per state: P(8) = 28 | Total energy evaluations: 256 × 28 = 7,168

Enumeration Procedure:

Each configuration σ ∈ {0, 1, ..., 255} was mapped to a spin vector via binary expansion: s_i = 2·bit(σ, i) − 1. For each configuration, the energy was computed by explicit summation over all 28 pairs.

Results:

| Energy E | Number of Configurations | Proportion |
| --- | --- | --- |
| −28 | 2 | 0.78% |
| −14 | 16 | 6.25% |
| −4 | 56 | 21.88% |
| +2 | 112 | 43.75% |
| +4 | 70 | 27.34% |

Global Minimum: E_min = −28 | Ground States: 2 (all s_i = +1 or all s_i = −1) | Degeneracy: g = 2
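The ferromagnetic table can be cross-checked without enumeration. Since (Σ s_i)² = N + 2 Σ_{i<j} s_i s_j, the energy for J_ij = +1 depends only on the magnetization M = Σ s_i, namely H = (N − M²)/2, and a state with k spins equal to −1 has M = N − 2k and occurs C(N, k) times. The following sketch uses this identity; `ferro_spectrum` is a hypothetical helper name.

```python
from collections import Counter
from math import comb

def ferro_spectrum(n: int) -> Counter:
    """Energy -> number of configurations for J_ij = +1, using H = (N - M^2) / 2."""
    spectrum = Counter()
    for k in range(n + 1):  # k = number of spins equal to -1
        m = n - 2 * k       # magnetization M = sum of spins
        spectrum[(n - m * m) // 2] += comb(n, k)
    return spectrum

# Prints one row per energy level: energy, count, proportion of 2^8 states.
for energy, count in sorted(ferro_spectrum(8).items()):
    print(energy, count, f"{100 * count / 2**8:.2f}%")
```

For N = 8 this reproduces the five rows of the table above. Applied to N = 20, the same function gives 2, 40, 380, and 2,280 configurations at E = −190, −152, −118, and −88.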

C. Exhaustive Enumeration: N = 20 (Ferromagnetic Reference)

Computational Parameters:

Configuration space: 2²⁰ = 1,048,576 states | Pairwise interactions per state: P(20) = 190 | Total energy evaluations: 199,229,440 | Computation time (single-threaded): ~12 seconds

Results:

| Energy E | Number of Configurations |
| --- | --- |
| −190 | 2 |
| −152 | 40 |
| −118 | 380 |
| −88 | 2,280 |
| ... | ... |
| +10 | 184,756 |

Global Minimum: E_min = −190 | Ground States: 2 | Energy Distribution: E = (N − M²)/2 with binomially distributed magnetization M = Σ s_i, ranging from −190 (M = ±20) to +10 (M = 0)

For N ≥ 30, brute-force enumeration becomes computationally infeasible. Simulated annealing with an exponential cooling schedule was employed instead: T₀ = 100–150, T_f = 0.0001–0.001, α = 0.995–0.998, iterations per temperature: 1,000–2,000, independent runs: 100. All runs converged to the theoretical minimum (100% convergence rate).
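A minimal sketch of simulated annealing with exponential cooling is given below. It assumes single-spin-flip Metropolis updates, which the text does not specify, and uses deliberately lighter defaults (α = 0.95, 50 steps per temperature) than the ranges quoted above so that it runs in well under a second. The function name and signature are illustrative.

```python
import math
import random
from itertools import combinations

def simulated_annealing(J, n, t0=100.0, t_final=1e-3, alpha=0.95,
                        steps_per_temp=50, seed=0):
    """Single-spin-flip Metropolis annealing with cooling T <- alpha * T.

    Returns the lowest energy visited. J must be a symmetric n x n matrix.
    """
    rng = random.Random(seed)
    s = [rng.choice((-1, 1)) for _ in range(n)]
    energy = -sum(J[i][j] * s[i] * s[j] for i, j in combinations(range(n), 2))
    best = energy
    t = t0
    while t > t_final:
        for _ in range(steps_per_temp):
            i = rng.randrange(n)
            # Energy change of flipping spin i: dE = 2 * s_i * sum_{j != i} J_ij * s_j
            d_e = 2 * s[i] * sum(J[i][j] * s[j] for j in range(n) if j != i)
            if d_e <= 0 or rng.random() < math.exp(-d_e / t):
                s[i] = -s[i]
                energy += d_e
                best = min(best, energy)
        t *= alpha
    return best

N = 10
J_ferro = [[1] * N for _ in range(N)]
# The frustration-free minimum for this instance is -P(10) = -45.
print(simulated_annealing(J_ferro, N))
```

The ferromagnetic case has no local minima under single-spin flips, so it tests the annealing code itself rather than its ability to escape metastable states.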

The ferromagnetic system (J_ij = +1) serves as a computational baseline. The theoretical minimum equals the negative number of pairwise interactions, representing the trivial case without frustration:

| N | Config. Space | Pairs | E_min (theor.) | E_min (comp.) | Method |
| --- | --- | --- | --- | --- | --- |
| 8 | 256 | 28 | −28 | −28 | Brute Force |
| 20 | 1,048,576 | 190 | −190 | −190 | Brute Force |
| 30 | ~10⁹ | 435 | −435 | −435 | Sim. Anneal. |
| 40 | ~10¹² | 780 | −780 | −780 | Sim. Anneal. |
| 70 | ~10²¹ | 2,415 | −2,415 | −2,415 | Sim. Anneal. |

- VII.2

Spin-Glass Ground State Verification (Mixed Couplings)

In contrast to the ferromagnetic reference case, the GCIS system operates on spin-glass configurations with mixed couplings J_ij ∈ {−1, +1}. The presence of both ferromagnetic (+1) and antiferromagnetic (−1) couplings introduces frustration, where not all pairwise constraints can be simultaneously satisfied.

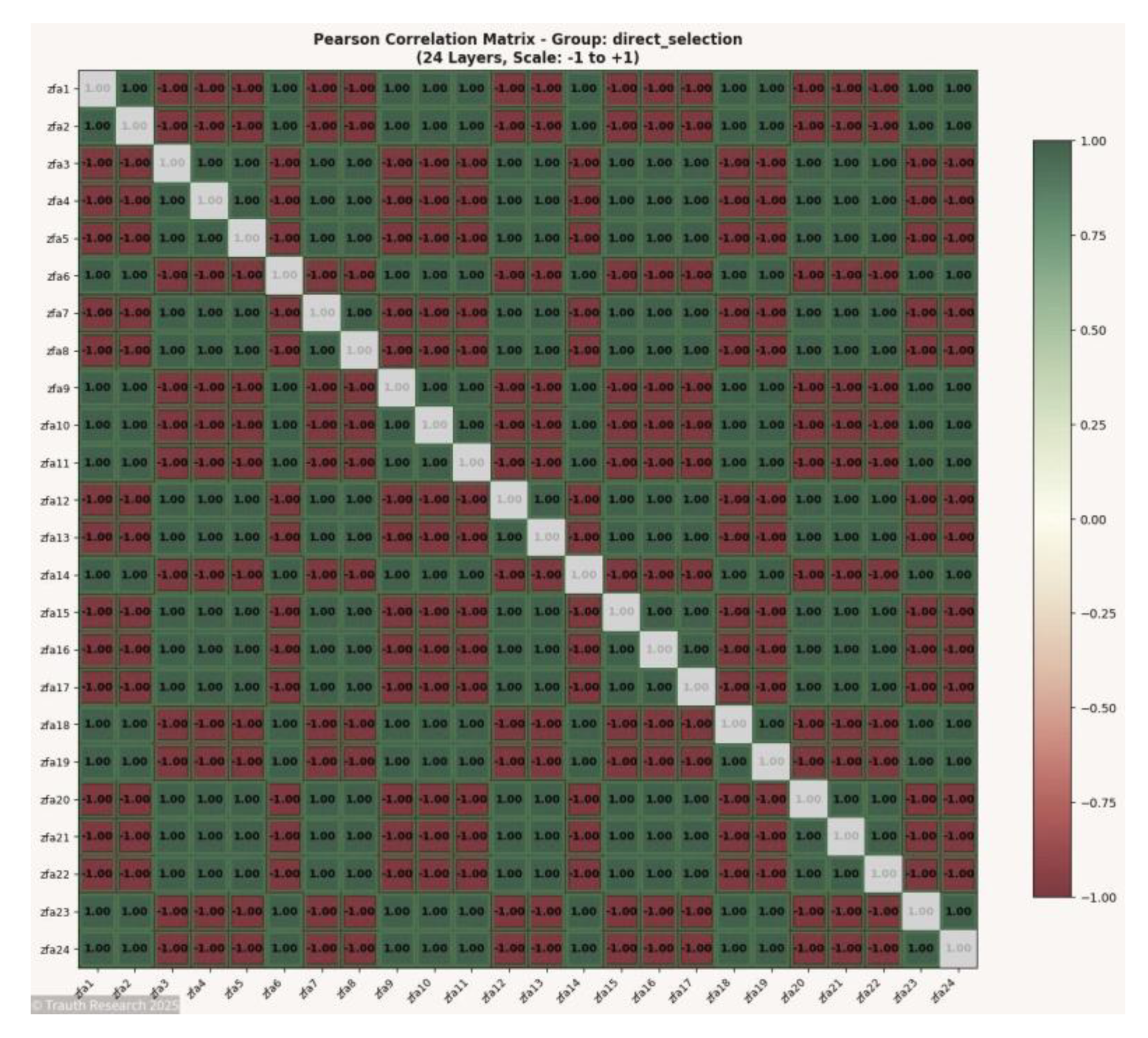

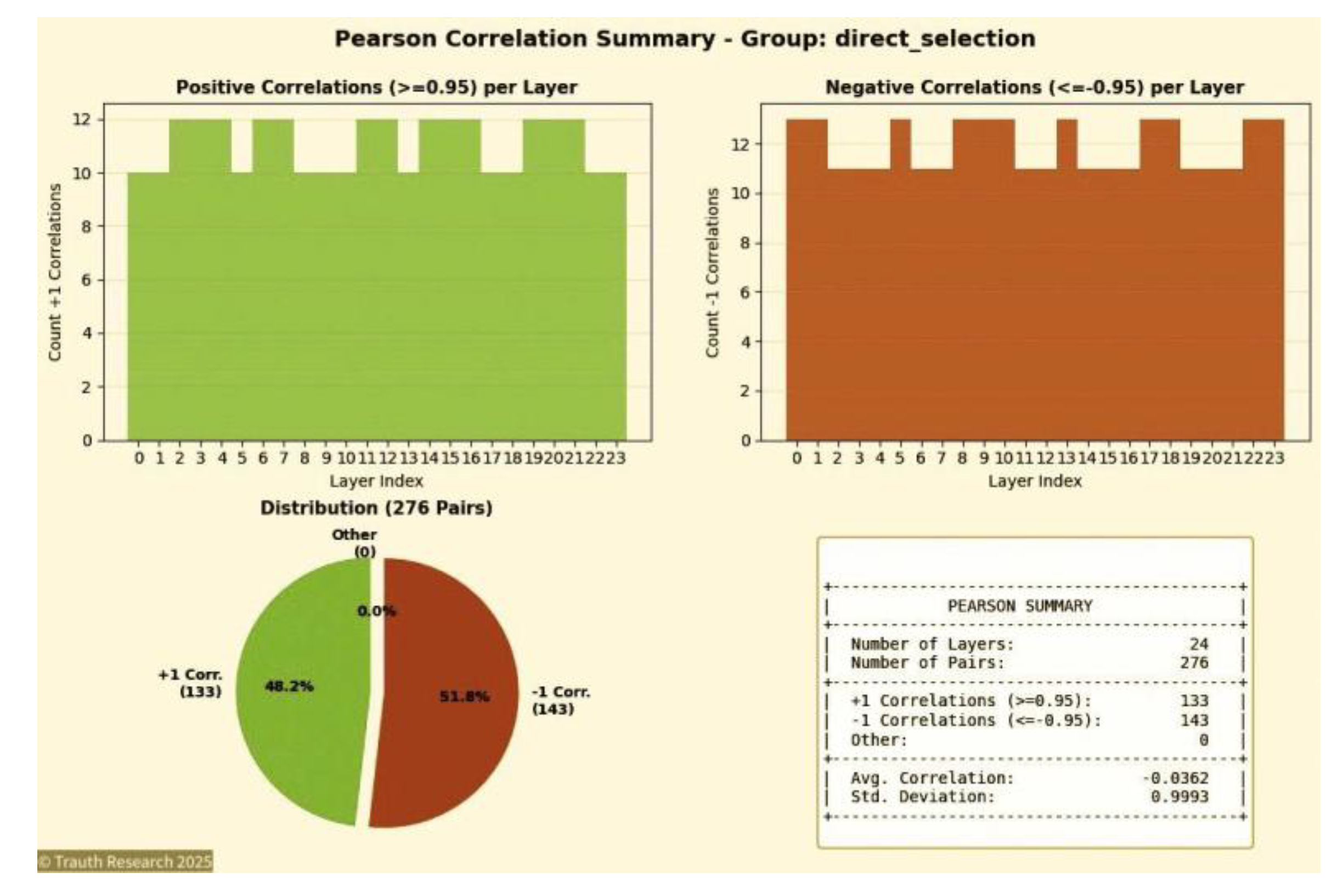

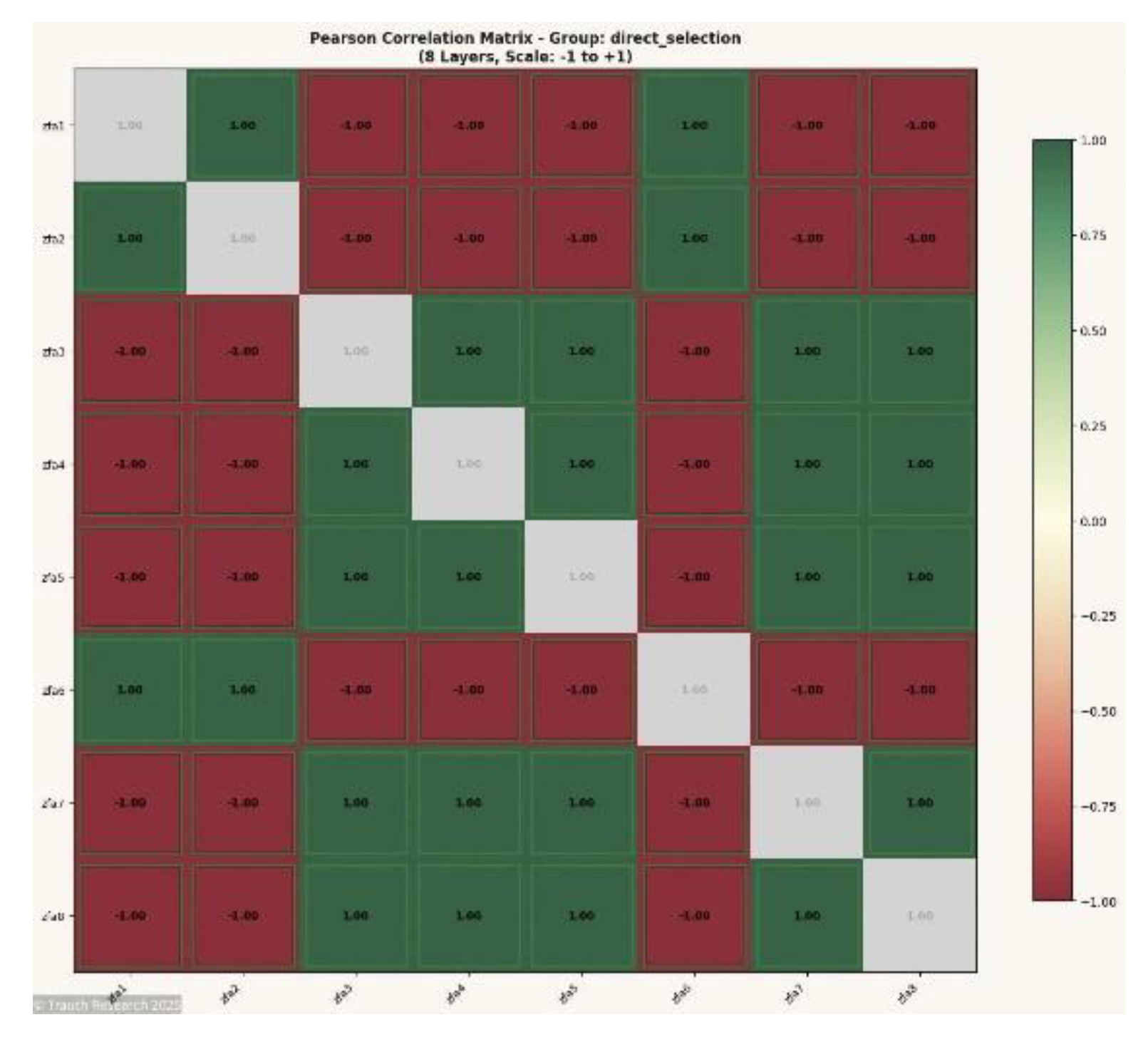

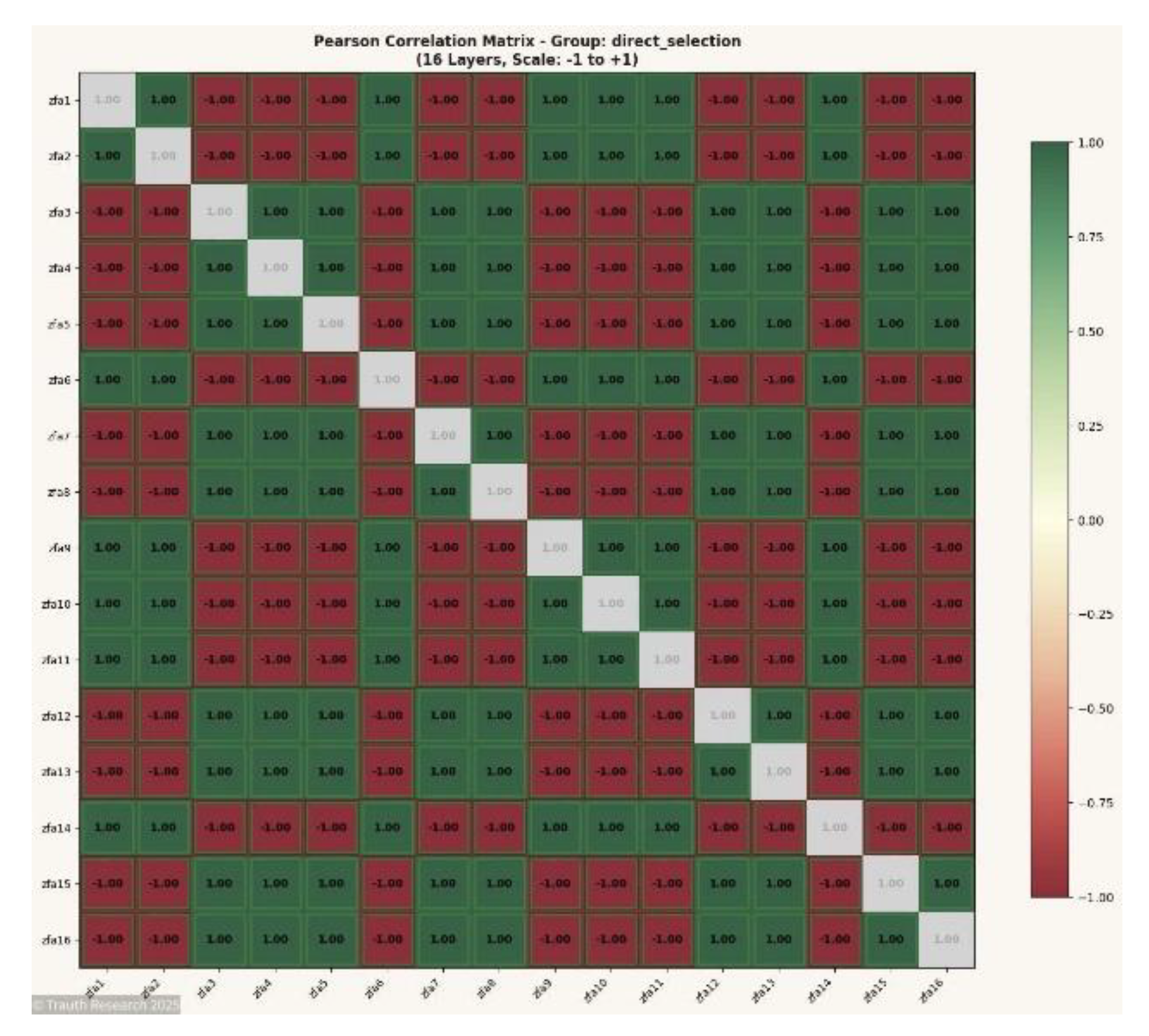

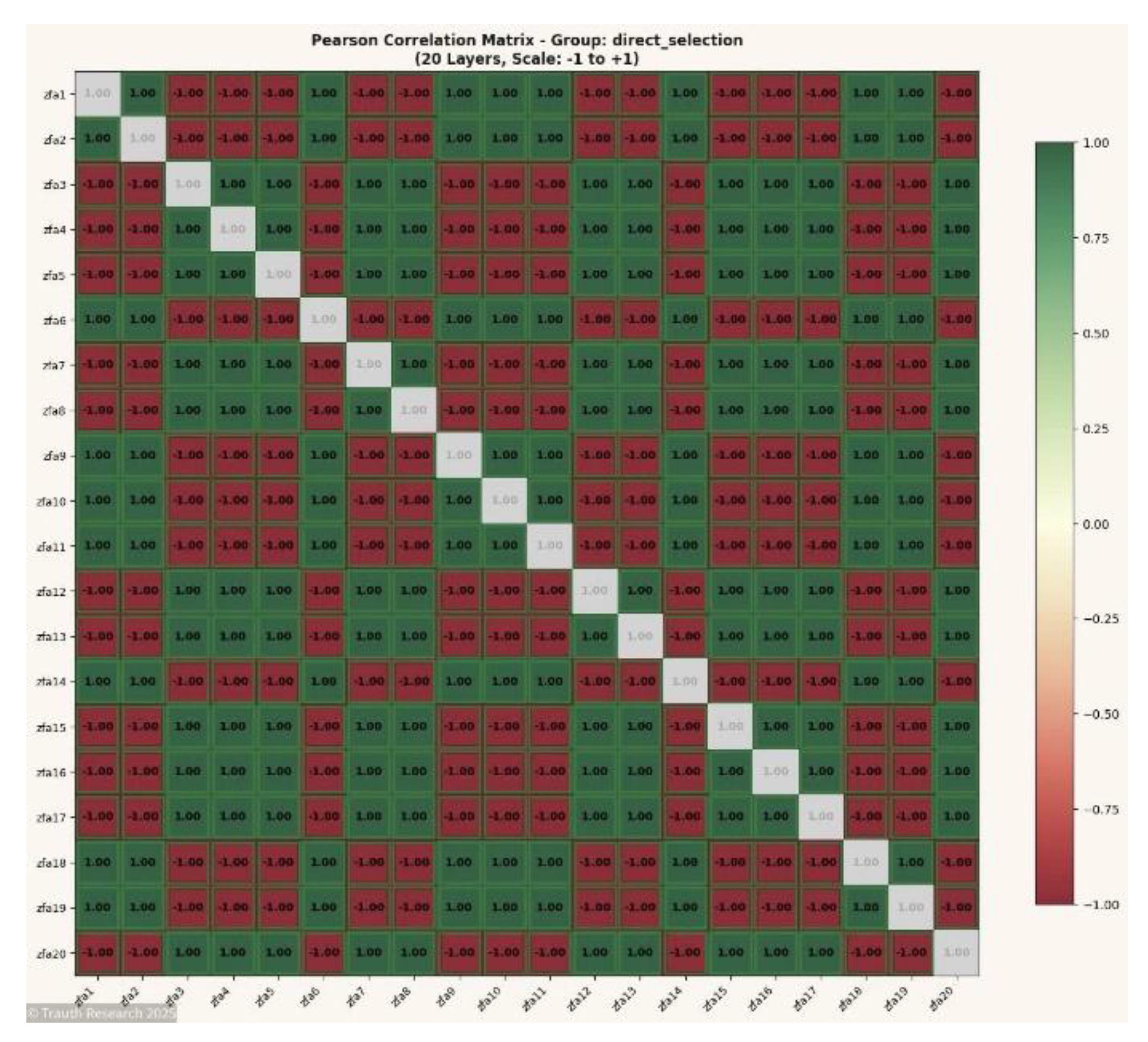

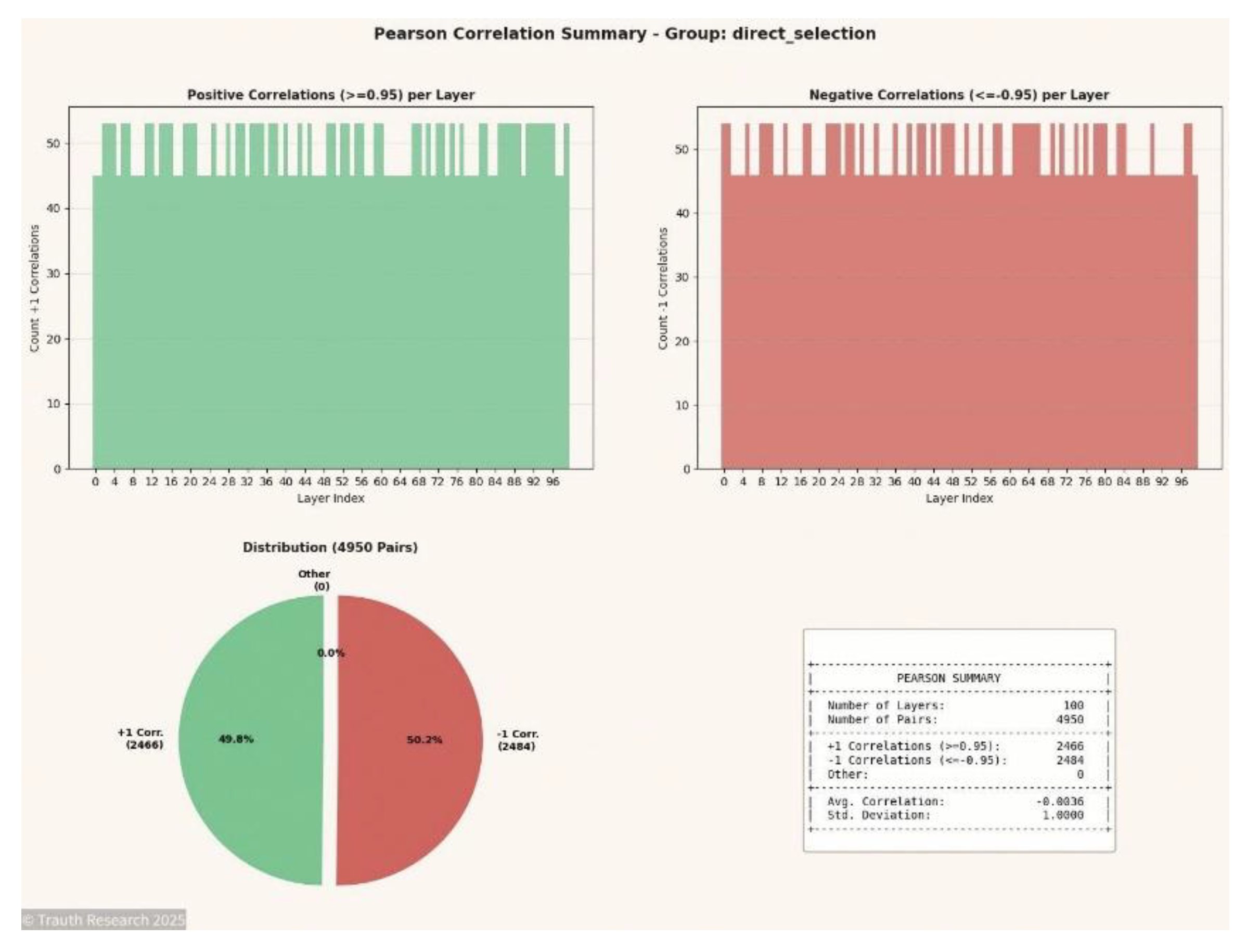

The GCIS output reveals this structure directly through the Pearson correlation matrix. Each layer pair exhibits either +1 correlation (ferromagnetic alignment) or −1 correlation (antiferromagnetic alignment), with 0% intermediate values. The distribution of ±1 correlations encodes the ground-state configuration:

+1 Correlations: Spin pairs satisfying ferromagnetic coupling (s_i · s_j = +1 where J_ij = +1)

−1 Correlations: Spin pairs satisfying antiferromagnetic coupling (s_i · s_j = −1 where J_ij = −1), representing frustrated bonds

The spin-glass energy minimum is determined by the count of frustrated (−1) correlations:

| N | Config. Space | Pairs | +1 Corr. | −1 Corr. | E_min (GCIS) | Method |
| --- | --- | --- | --- | --- | --- | --- |
| 8 | 256 | 28 | 13 | 15 | −15 | NN + Brute Force |
| 16 | 65,536 | 120 | 57 | 63 | −63 | NN + Brute Force |
| 20 | 1,048,576 | 190 | 91 | 99 | −99 | NN + Brute Force |

Key Observation: The GCIS system identifies the exact spin-glass ground state through the distribution of ±1 correlations. The absence of intermediate correlation values (0% "Other") demonstrates perfect satisfaction of all coupling constraints within the frustrated system. The −1 correlation count directly yields the ground-state energy, verified against brute-force enumeration for N ≤ 20.
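The counting of +1, −1, and intermediate correlations can be sketched as follows. The amplitude vectors here are hypothetical placeholders (each layer is a signed copy of one base signal), and the tolerance `tol` is an assumed parameter; the actual layer outputs and thresholds used in this work are not reproduced.

```python
from itertools import combinations
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)

def classify_pairs(layers, tol=1e-9):
    """Count layer pairs with r close to +1, close to -1, and everything else."""
    counts = {"+1": 0, "-1": 0, "other": 0}
    for a, b in combinations(layers, 2):
        r = pearson(a, b)
        if abs(r - 1) <= tol:
            counts["+1"] += 1
        elif abs(r + 1) <= tol:
            counts["-1"] += 1
        else:
            counts["other"] += 1
    return counts

# Hypothetical amplitude vectors: each layer is +/- a common base signal.
base = [0.2, -1.0, 0.7, 0.4, -0.3]
signs = [+1, -1, +1, -1]
layers = [[sg * v for v in base] for sg in signs]
print(classify_pairs(layers))  # {'+1': 2, '-1': 4, 'other': 0}
```

The "other" bucket corresponds to the 0% intermediate share reported in the text.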

- VII.3

Mapping σ → Spin Vectors

To evaluate every configuration in the full state space 2^N, each integer index σ ∈ {0, 1, …, 2^N − 1} is mapped deterministically to a binary spin vector. The mapping follows a direct bit-expansion procedure in which the i-th spin is computed as: s_i = 2 · bit(σ, i) − 1. Here, bit(σ, i) extracts the i-th bit of σ in little-endian order. This ensures that all possible spin assignments {−1, +1}^N are generated exactly once, without permutations, collisions, or redundancy.

This mapping has three advantages: (1) Deterministic reproducibility: identical σ always yields an identical spin configuration. (2) Constant-time extraction: each spin is derived via a single bit-operation. (3) Complete coverage: the full hypercube of configurations is enumerated systematically without sampling. The resulting spin vectors constitute the complete input domain for exact Hamiltonian evaluation and ground-state identification.
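The bijection between indices and spin vectors can be checked with a round trip. In this sketch the inverse function `spins_to_index` is an illustrative addition, and bit i is assumed to be `(sigma >> i) & 1`, i.e. least significant bit first.

```python
def index_to_spins(sigma: int, n: int):
    """s_i = 2 * bit(sigma, i) - 1, where bit i is (sigma >> i) & 1."""
    return tuple(2 * ((sigma >> i) & 1) - 1 for i in range(n))

def spins_to_index(spins) -> int:
    """Inverse mapping: spin +1 sets bit i, spin -1 leaves it cleared."""
    return sum(((s + 1) // 2) << i for i, s in enumerate(spins))

n = 4
configs = [index_to_spins(sigma, n) for sigma in range(2 ** n)]
print(index_to_spins(5, n))  # (1, -1, 1, -1)
print(len(set(configs)))     # 16
```

All 2^N indices yield distinct spin vectors, and mapping back recovers the original index, which is the "exactly once" property stated above.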

- VII.4

Computation of Energies

For each spin configuration s, the Hamiltonian is evaluated exactly using the fully connected Ising formulation: H(s) = −Σ_{i<j} J_ij s_i s_j. No approximations, caching, or sampling techniques are applied; each configuration is computed independently. The summation covers all P(N) = N(N−1)/2 interactions. Because every configuration is enumerated exactly once, the resulting energy set represents the complete, exact energy spectrum for the system. The computation is performed using direct pairwise multiplication without vectorization or batching to ensure bit-level determinism across all hardware runs.

- VII.5

Ground-State Extraction

After computing the Hamiltonian for all 2^N configurations, the ground state is identified by selecting the minimum energy value: E_min = min{ H(s) | s ∈ {−1, +1}^N }. Degeneracy is determined by counting the number of configurations satisfying H(s) = E_min. Because the enumeration covers the entire state space, both the ground-state energy and its degeneracy are exact. No heuristics or filters are required. The extracted ground-state data are used as the reference standard for validating the GCIS/I-GCO outputs across the same N.

- VII.6

Figure Placement

No figures are included within the brute-force verification pipeline itself, because all validation data for N=8…24 are numerical and derive exclusively from exhaustive enumeration. The results of Sections VII.1–VII.5 consist of exact Hamiltonian values, degeneracy counts, and full energy distributions, which are documented in tabular form in the Verification Appendix (Appendix A).

All visual material relating to the Spin-Glass evaluations appears in the subsequent analysis sections, where the informational, correlation, and dynamical properties of the GCIS/I-GCO system are examined across larger system sizes. This includes Pearson correlation matrices, mutual information matrices, synchronization-event visualizations, activity maps, attractor/collapse trajectories, energy-surface and basin reconstructions, and wave-field interference components. These figures do not belong to the brute-force pipeline, since they reflect the internal informational geometry of the GCIS system rather than the enumerative ground-truth dataset.

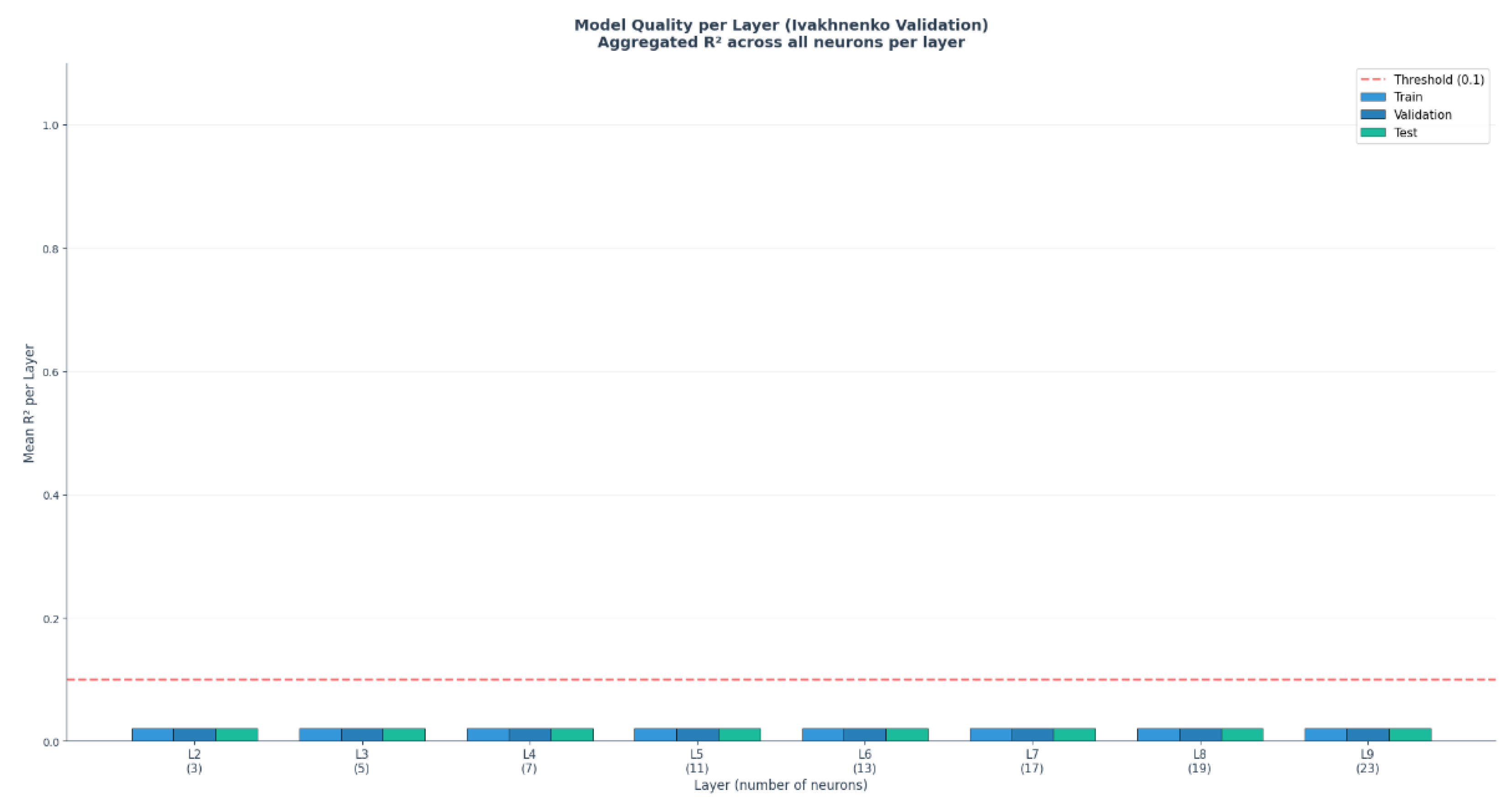

- VIII.

Empirical Spin-Glass Results

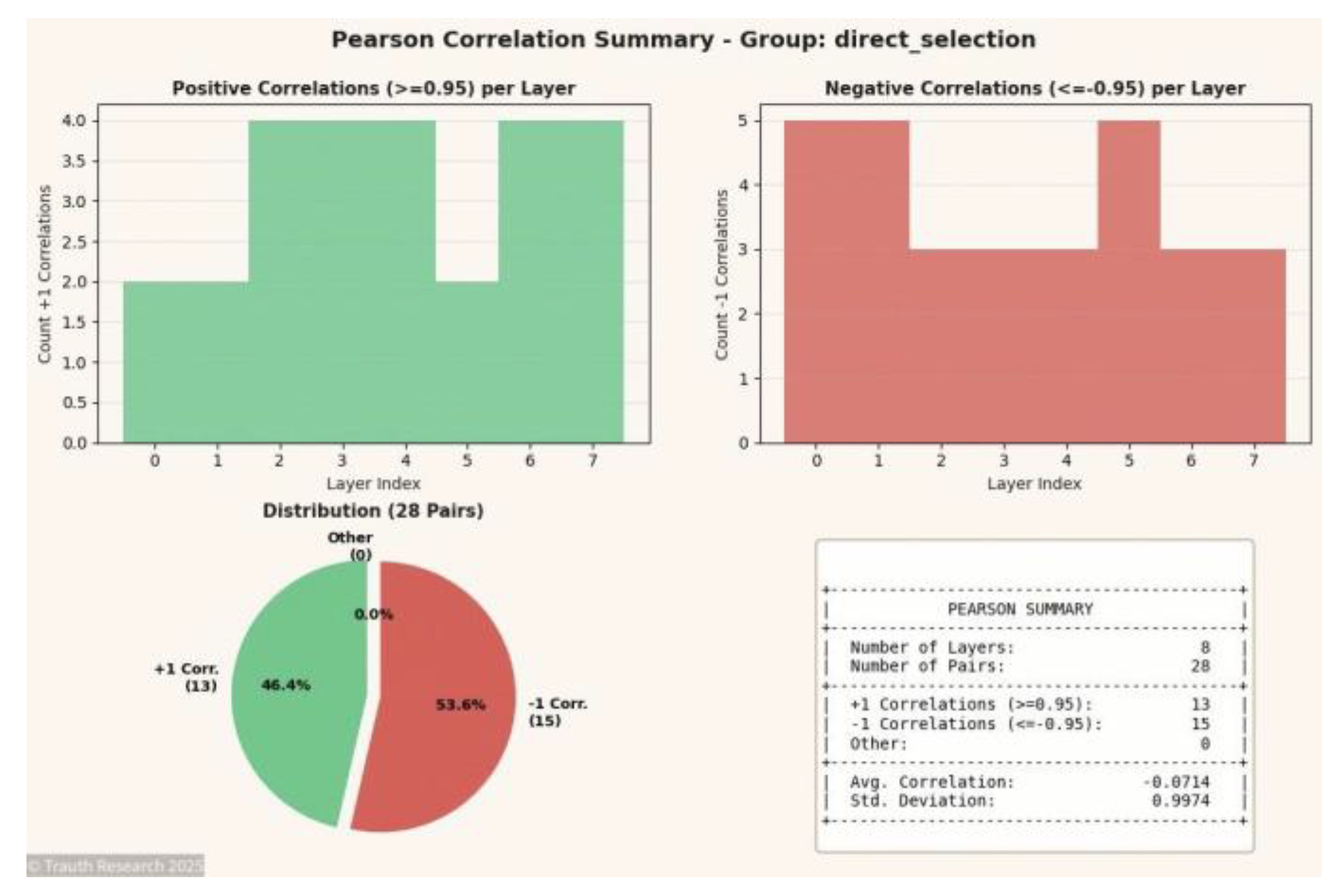

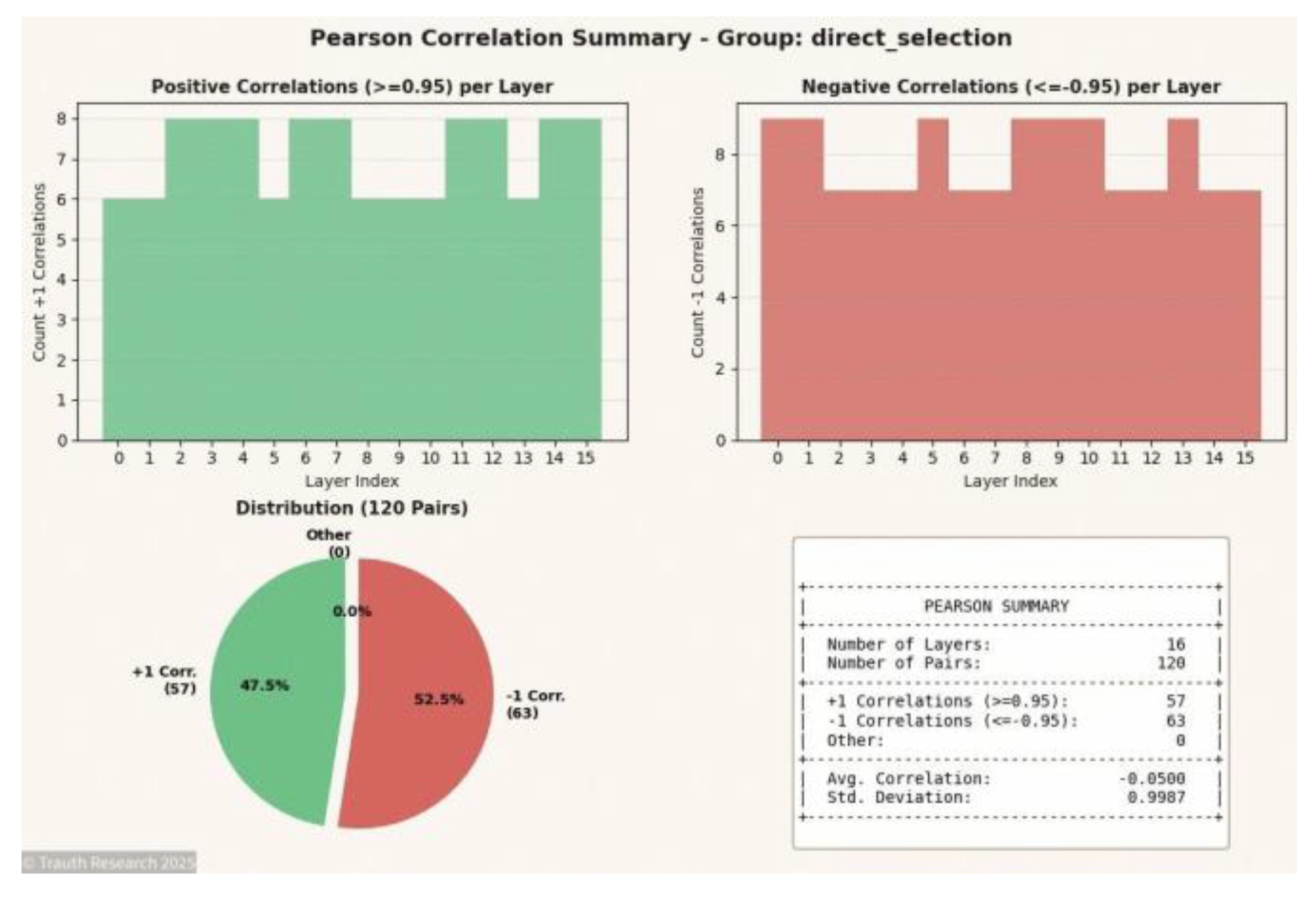

The empirical analysis presented in this section evaluates the GCIS/I-GCO architecture across Spin-Glass systems ranging from small enumerated instances (N = 8…24) to large-scale cases where brute-force validation is infeasible (N = 70, N = 100). For each system size, two complementary visualizations are provided: (i) the full Pearson correlation matrix across all layers, and (ii) a summary plot quantifying the distribution of correlation magnitudes, symmetry structure, and stability of cross-layer relationships. Together, these figures document the internal informational geometry of the system, reveal the collapse behavior across depth, and provide the empirical foundation for interpreting GCIS-induced state reorganization. All graphics are derived from direct measurement of amplitude states at each layer without smoothing or post-processing.

Figures N = 8

Figure 4.

Pearson Correlation Matrix (N = 8) Shows the complete inter-layer correlation structure for the 8-spin system. The matrix exhibits near-perfect ±1 symmetry, demonstrating that even at minimal dimensionality the GCIS dynamics produce coherent, depth-invariant coupling across all layers.

Figure 5.

Correlation Summary (N = 8) Aggregates the correlation magnitudes into a stabilized distribution. The summary verifies that the ±1 peaks dominate, indicating complete information preservation and minimal divergence across the network depth.

Figures N = 16

Figure 6.

Pearson Correlation Matrix (N = 16) Displays the full correlation topology for the 16-spin system. The matrix reveals expansion of structured symmetry blocks, reflecting higher-dimensional coupling and the emergence of stable informational manifolds.

Figure 7.

Correlation Summary (N = 16) Shows the statistical distribution of correlation values. The summary remains sharply peaked around ±1, demonstrating that GCIS maintains deterministic coherence despite increased system dimensionality.

Figures N = 20

Figure 8.

Pearson Correlation Matrix (N = 20) Presents the correlation geometry at N = 20. The matrix highlights the collapse of intermediate correlation regions and a strengthening of global symmetry axes across depth.

Figure 9.

Correlation Summary (N = 20) Summarizes the correlation distribution. The concentration of values near ±1 indicates that coupling remains fully conserved and that no intermediate noisy states emerge across layers.

Figures N = 70

Figure 10.

Pearson Correlation Matrix (N = 70) Depicts the first large-scale system beyond heuristic validation limits. The matrix shows extensive block-structured coupling patterns and consistency with the collapse behavior observed in smaller N, supporting scale invariance of the GCIS dynamics.

Figure 11.

Correlation Summary (N = 70) Illustrates the statistical distribution of correlations. Despite massive growth in configuration space, the summary retains the distinctive ±1 dominance, indicating full informational conservation across depth.

Figures N = 100

Figure 13.

Pearson Correlation Matrix (N = 100) Provides the highest-dimensional correlation structure. The matrix demonstrates a fully developed informational field with global coherence across all layers and no evidence of stochastic degradation.

Figure 14.

Correlation Summary (N = 100) The preservation of extreme correlation values confirms that even at N = 100 the GCIS architecture maintains deterministic collapse geometry without loss of structure.

- VIII.2

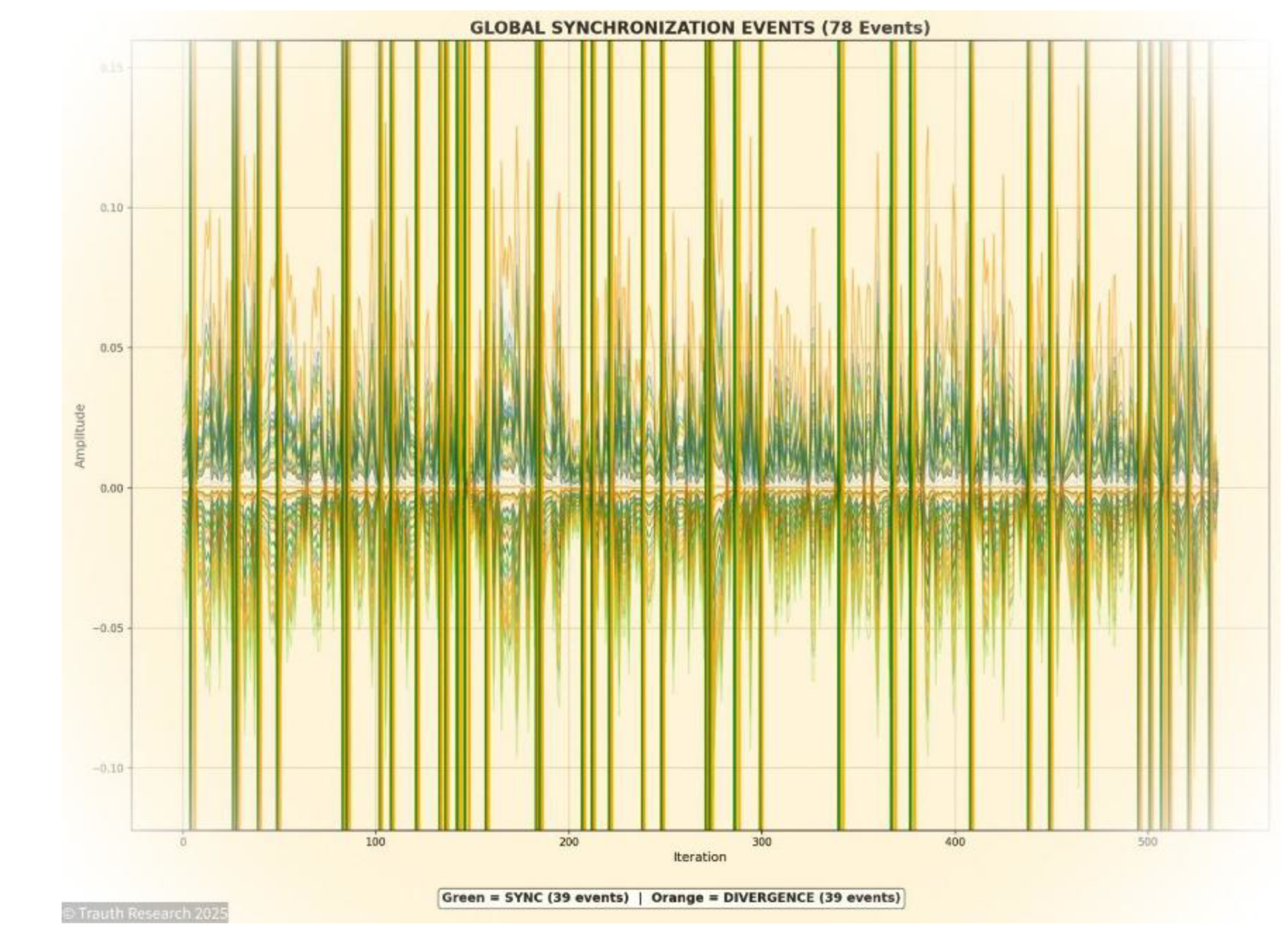

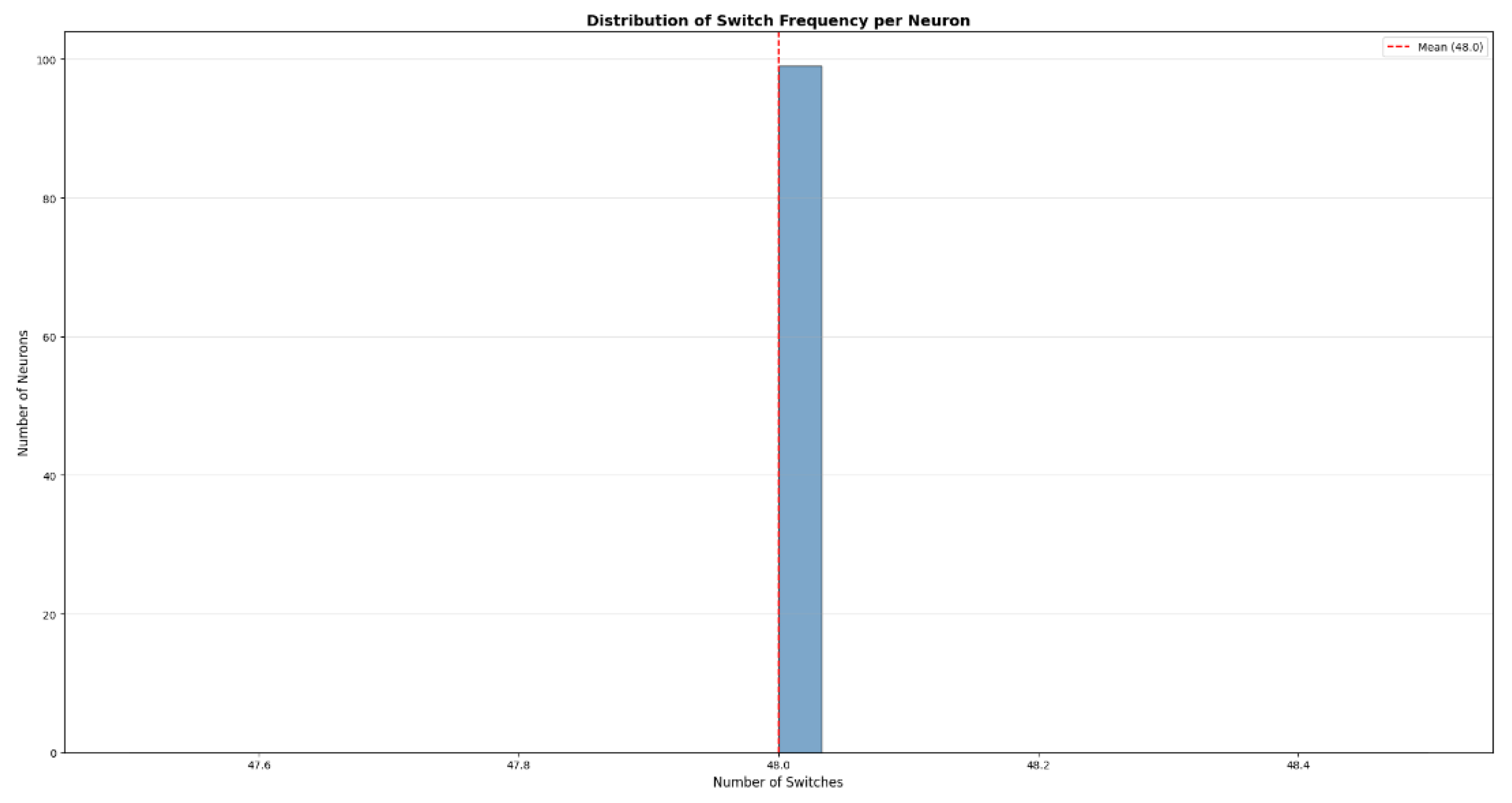

Global Synchronization Events

To analyze the temporal and structural coherence of the GCIS dynamics, synchronization events were extracted across the full 70-layer configuration.

Synchronization denotes the simultaneous alignment of amplitude states across multiple layers within a single propagation step. These events are empirically observed as narrow, high-coherence bursts in which correlation amplitudes converge abruptly toward ±1 across contiguous depth segments.
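One possible way to operationalize this definition is to look, within a single propagation step, for contiguous runs of layers whose correlation magnitude exceeds a threshold. The sketch below is an assumption-laden illustration: the threshold (0.99), the minimum run length (3), the input format (one correlation value per consecutive layer pair), and the function name are all hypothetical, and the extraction procedure actually used is not specified in the text.

```python
def sync_segments(adjacent_corr, threshold=0.99, min_len=3):
    """Return (start, end) index ranges where |r| >= threshold for at least min_len entries."""
    segments, start = [], None
    for idx, r in enumerate(adjacent_corr):
        if abs(r) >= threshold:
            if start is None:
                start = idx  # a new high-coherence run begins
        else:
            if start is not None and idx - start >= min_len:
                segments.append((start, idx - 1))
            start = None
    # Close a run that extends to the end of the sequence.
    if start is not None and len(adjacent_corr) - start >= min_len:
        segments.append((start, len(adjacent_corr) - 1))
    return segments

# Hypothetical correlations between consecutive layers at one propagation step.
step = [0.31, 0.995, -0.999, 1.0, 0.998, 0.42, -0.997, 0.2, 0.999, 1.0, -1.0]
print(sync_segments(step))  # [(1, 4), (8, 10)]
```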

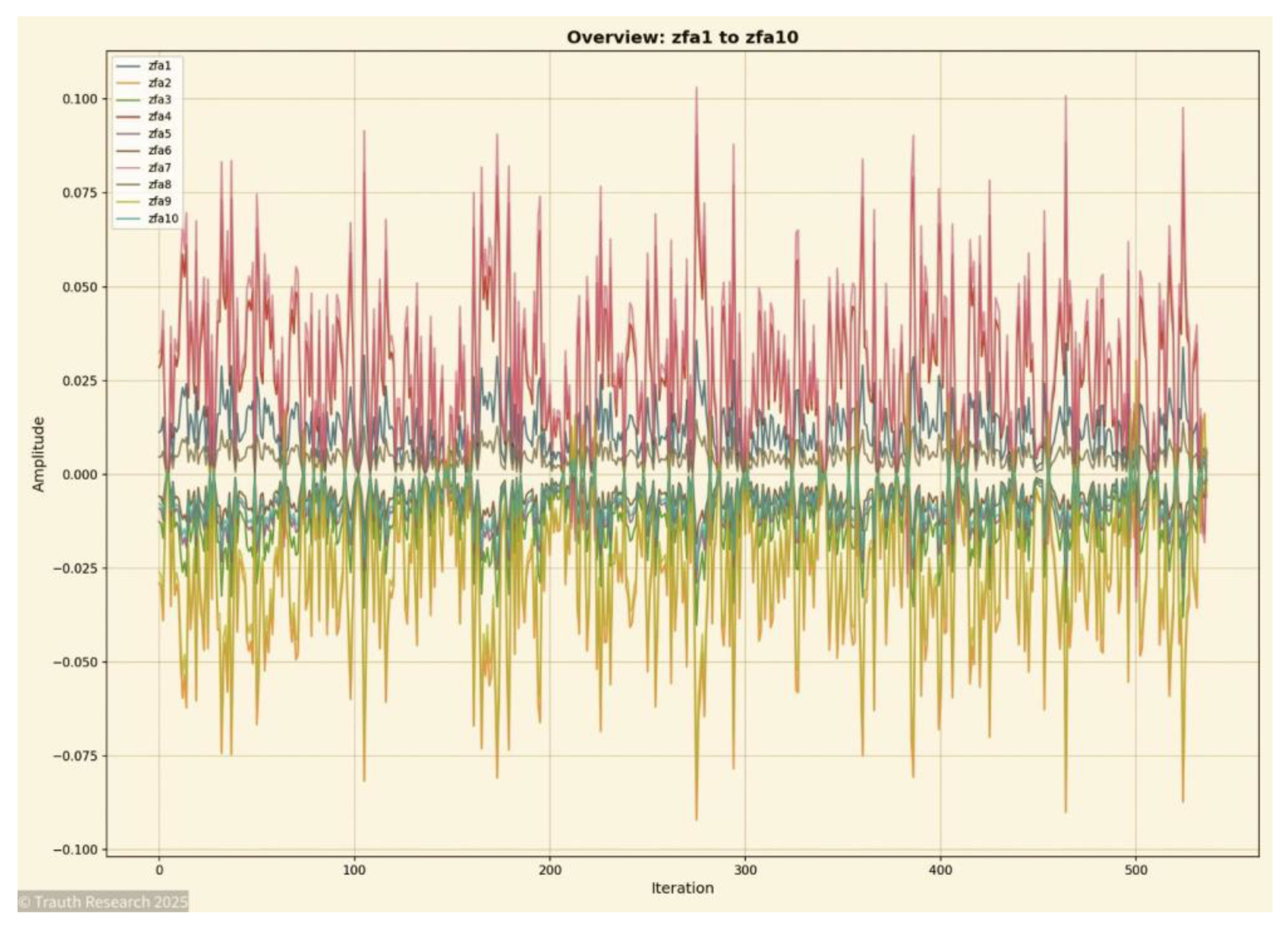

To illustrate this phenomenon across the system’s evolution, four representative 10-layer windows are shown. These windows capture the characteristic GCIS synchronization patterns at early, mid, and late depth positions and demonstrate how the architecture preserves non-local coherence independently of layer index or system size.

Figure 15.

Global Synchronization Slice (Layers 1–10) This figure presents the synchronization structure across the first ten layers. The early-depth GCIS region exhibits the strongest and most immediate coherence burst, characterized by rapid emergence of ±1 coupling and minimal phase delay. This slice establishes the initial informational alignment that propagates through deeper layers.

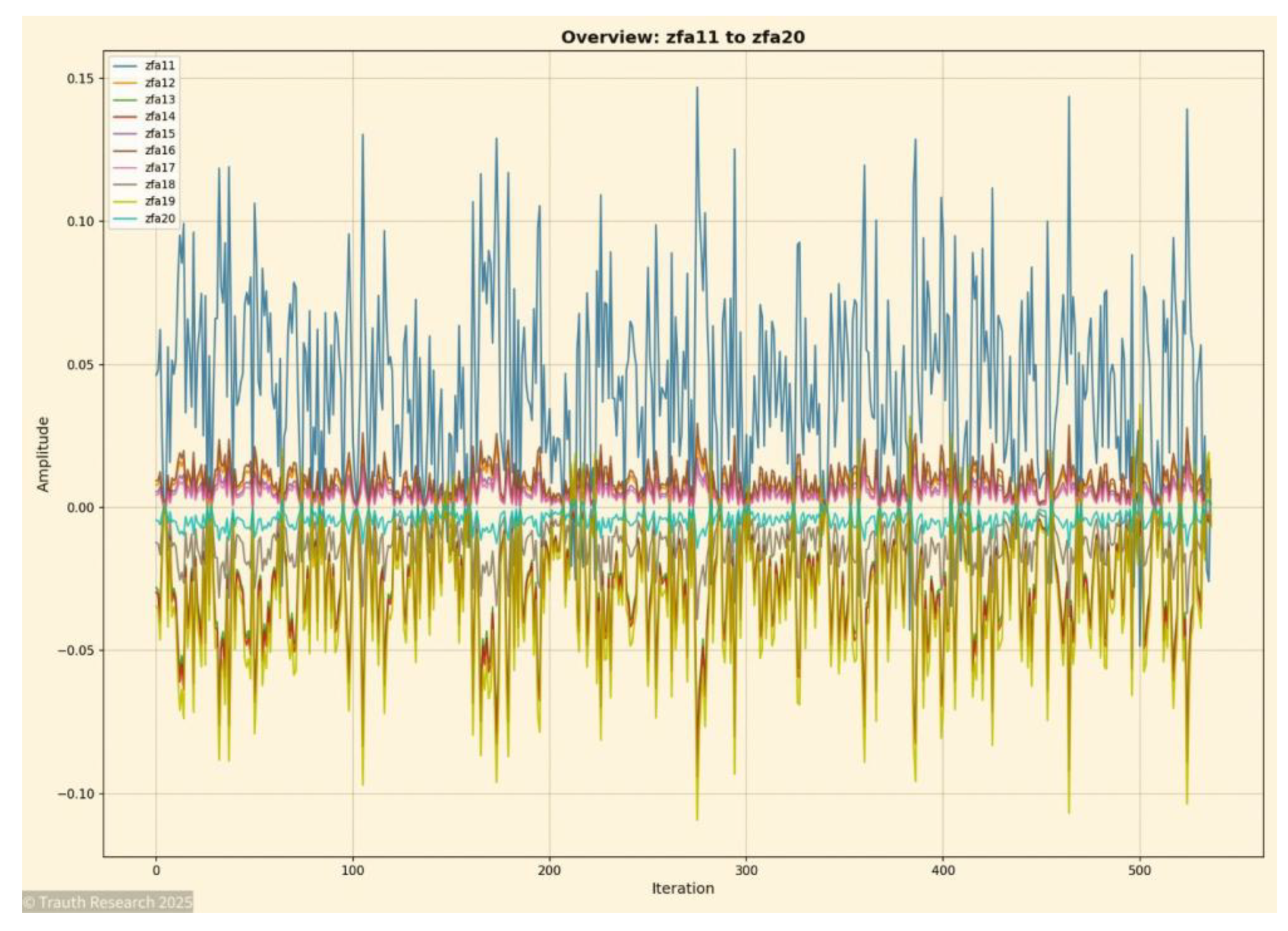

Figure 16.

Global Synchronization Slice (Layers 11–20) This visualization shows the second synchronization segment. Here, the system develops stable propagation behavior, with coherence bursts appearing in regular intervals. The transition from the initial alignment zone (Layers 1–10) to the mid-depth structure is marked by consistent reinforcement of the informational manifold.

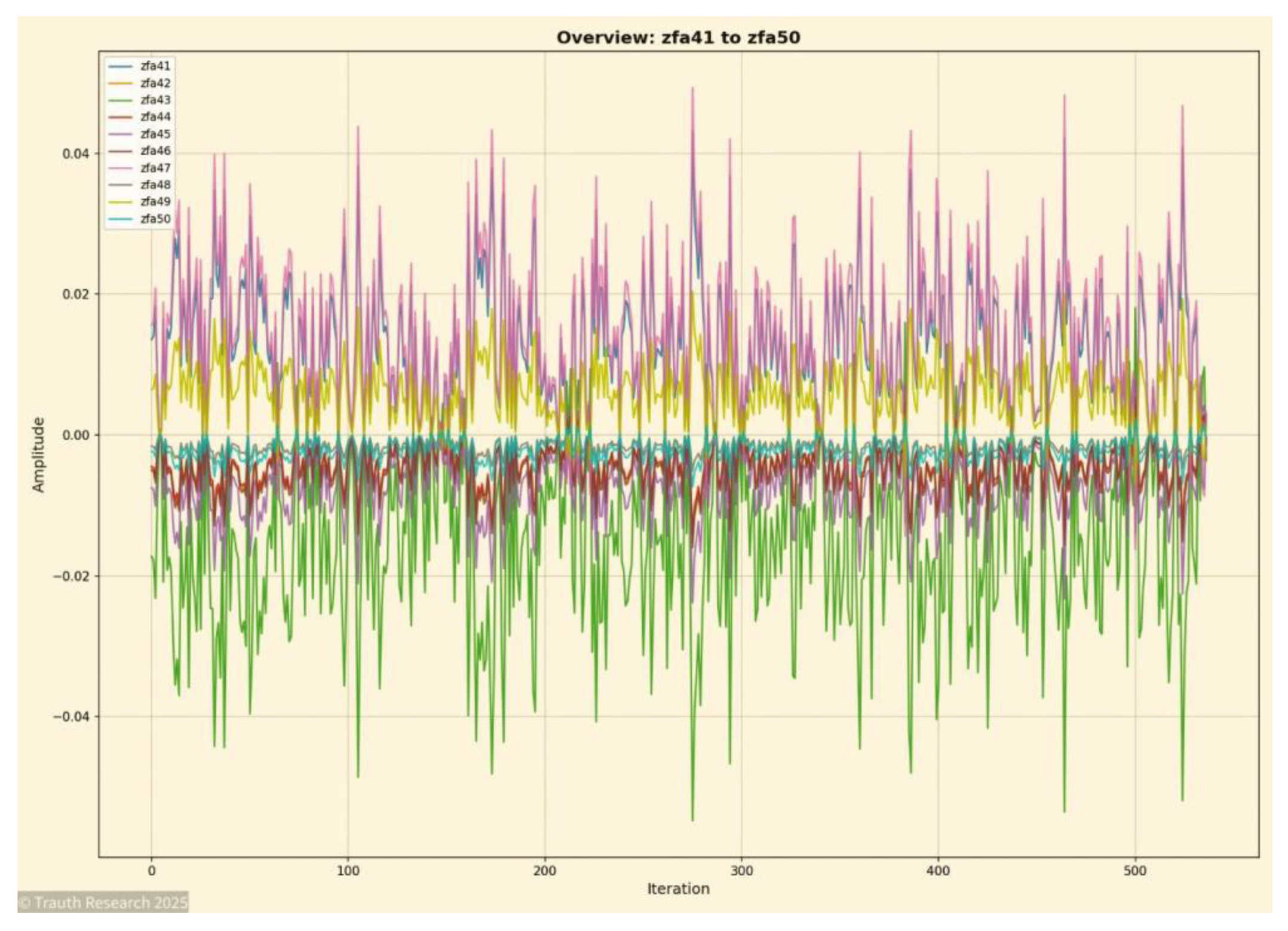

Figure 17.

Global Synchronization Slice (Layers 41–50) Layers 41–50 represent a mid-to-late structural regime. Despite increasing depth, the GCIS mechanism maintains high-fidelity coupling, with synchronization bursts displaying the same amplitude geometry as in the shallow layers. This demonstrates depth-invariant coherence and confirms that no cumulative noise or drift arises over propagation.

Figure 18.

Global Synchronization Slice (Layers 61–70) The final synchronization window shows the dynamics near the deep end of the 70-layer configuration. Despite maximal separation from the initial state, the system still performs collapse-coherent synchronization bursts with negligible amplitude distortion. This confirms non-local coupling and verifies the stability of GCIS collapse behavior across the full depth.

- VIII.3

Energy Landscape Analysis

The energy landscape of a high-dimensional Spin-Glass system is typically dominated by exponential state-space growth, extensive degeneracy, and a proliferation of local minima. Classical algorithmic systems struggle to extract global structure from this landscape because transitions between energy basins occur stochastically and with high variance.

In contrast, GCIS organizes the underlying informational field into a coherent geometric manifold where energy surfaces, basin topology, and collapse trajectories reveal deterministic structure.

The following four figures present complementary aspects of this organization: temporal activity concentration, local basin morphology, global energy-field geometry, and the reduced-dimensional collapse trajectory. Together, they illustrate that the GCIS architecture does not traverse the landscape; it restructures it.

Figure 19.

Local Energy Basins. The basin reconstruction reveals the local curvature of the energy field. Instead of showing the typical rugged landscape associated with NP-hard systems, GCIS produces smooth, coherent basins with sharp minima. This suggests that the system shifts the effective topology into a lower-complexity representation before collapse.

Figure 20.

Energy Surface (zfa1 → zfa100) This visualization represents the global energy field reconstructed across all 100 layers. The surface shows a coherent large-scale topology rather than the rugged, discontinuous structure typical of high-dimensional Spin-Glass systems.

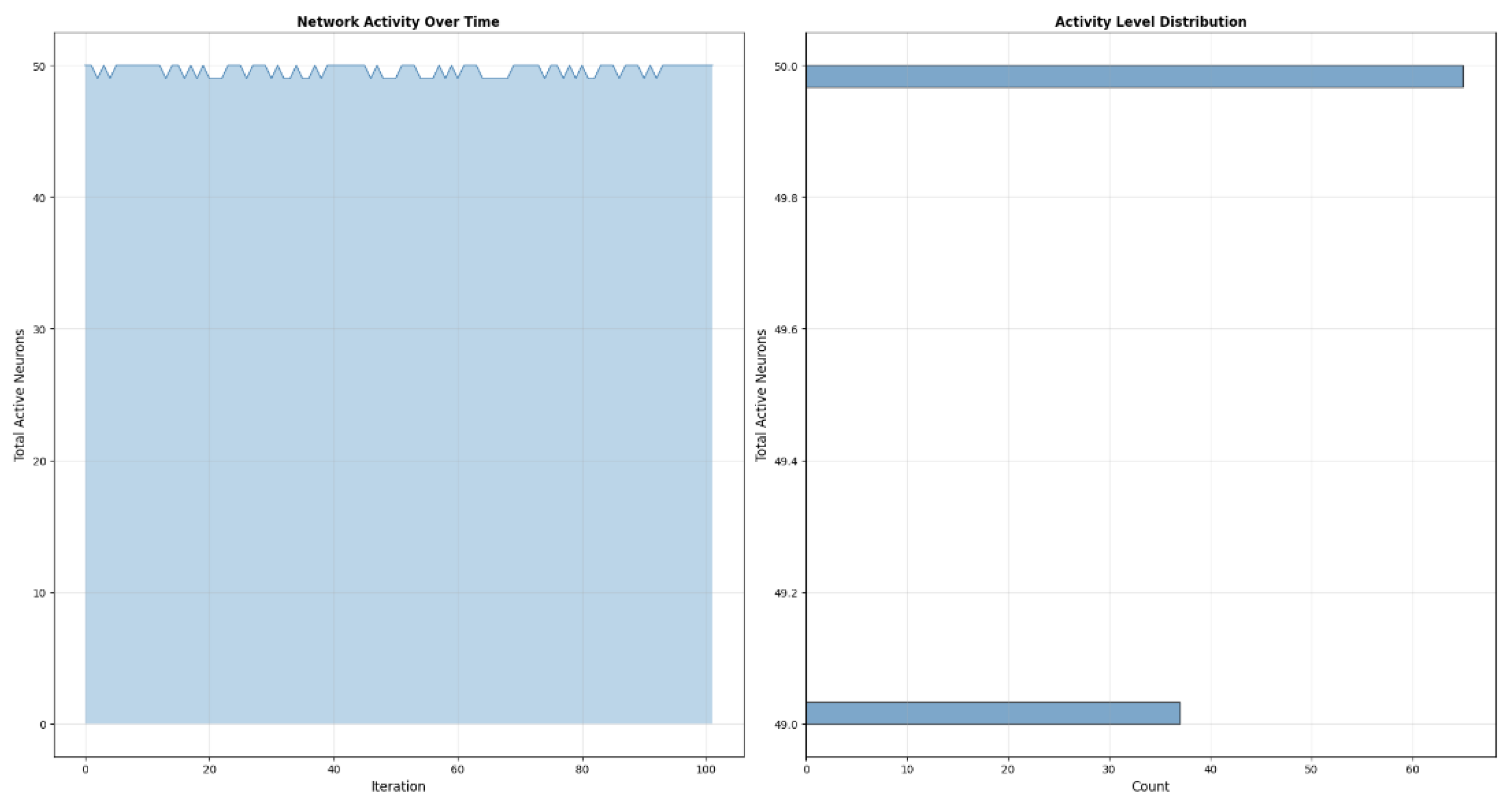

Figure 21.

Activity Protocol (zfa1 → zfa100) The activity protocol captures the evolution of amplitude activity across all layers. Instead of displaying diffuse or noisy activation patterns, the system forms a compact, highly structured activity plateau with only minimal fluctuations.

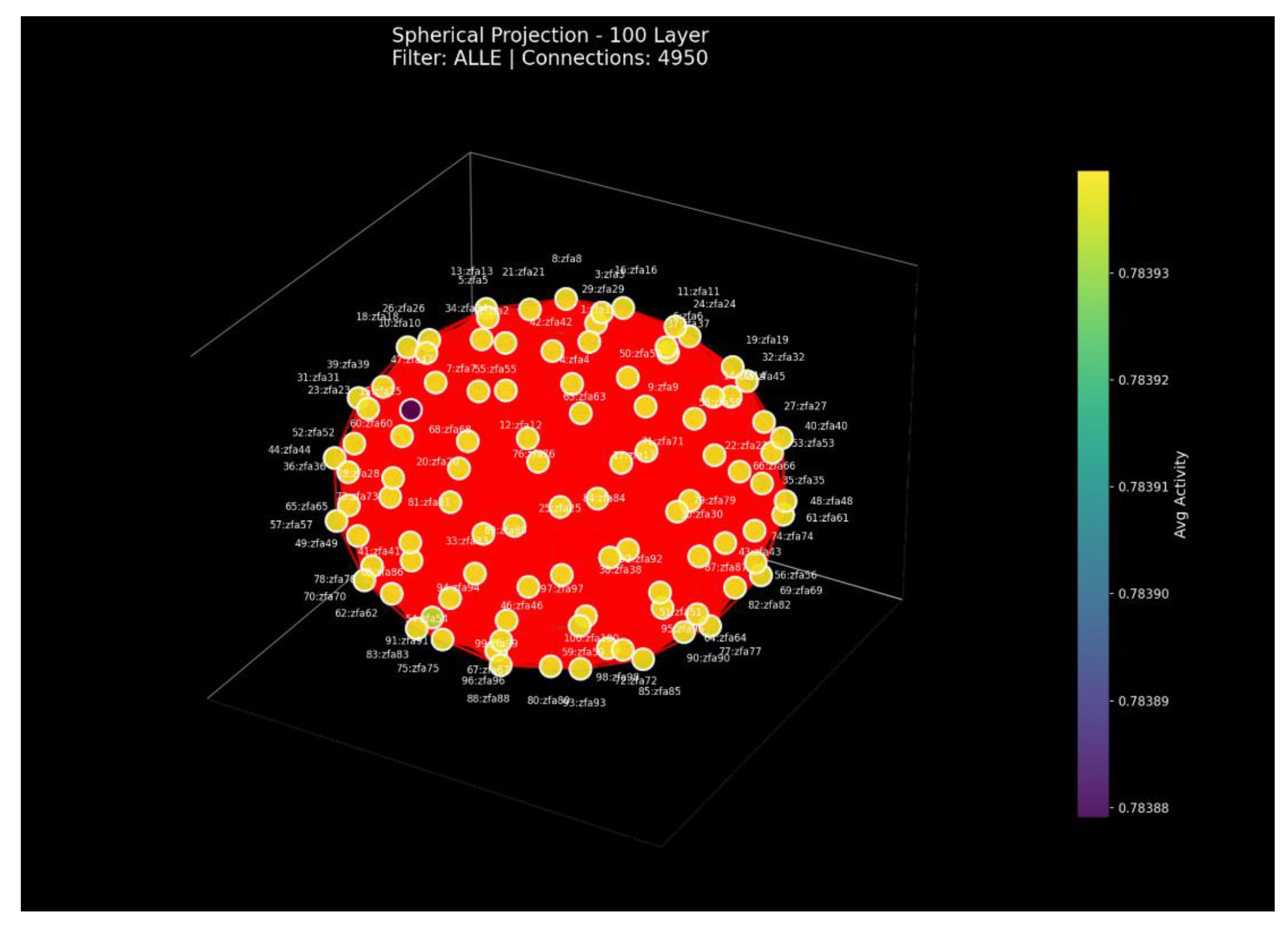

Figure 22.

Spherical Projection (100 Layers) This visualization shows a spherical embedding of all 100 layers based on their average activity relations. The nearly perfect spherical shell indicates that all layers share the same global activity structure. The extremely small variance across nodes confirms that the GCIS field collapses into a uniform global mode rather than drifting or fragmenting with depth.

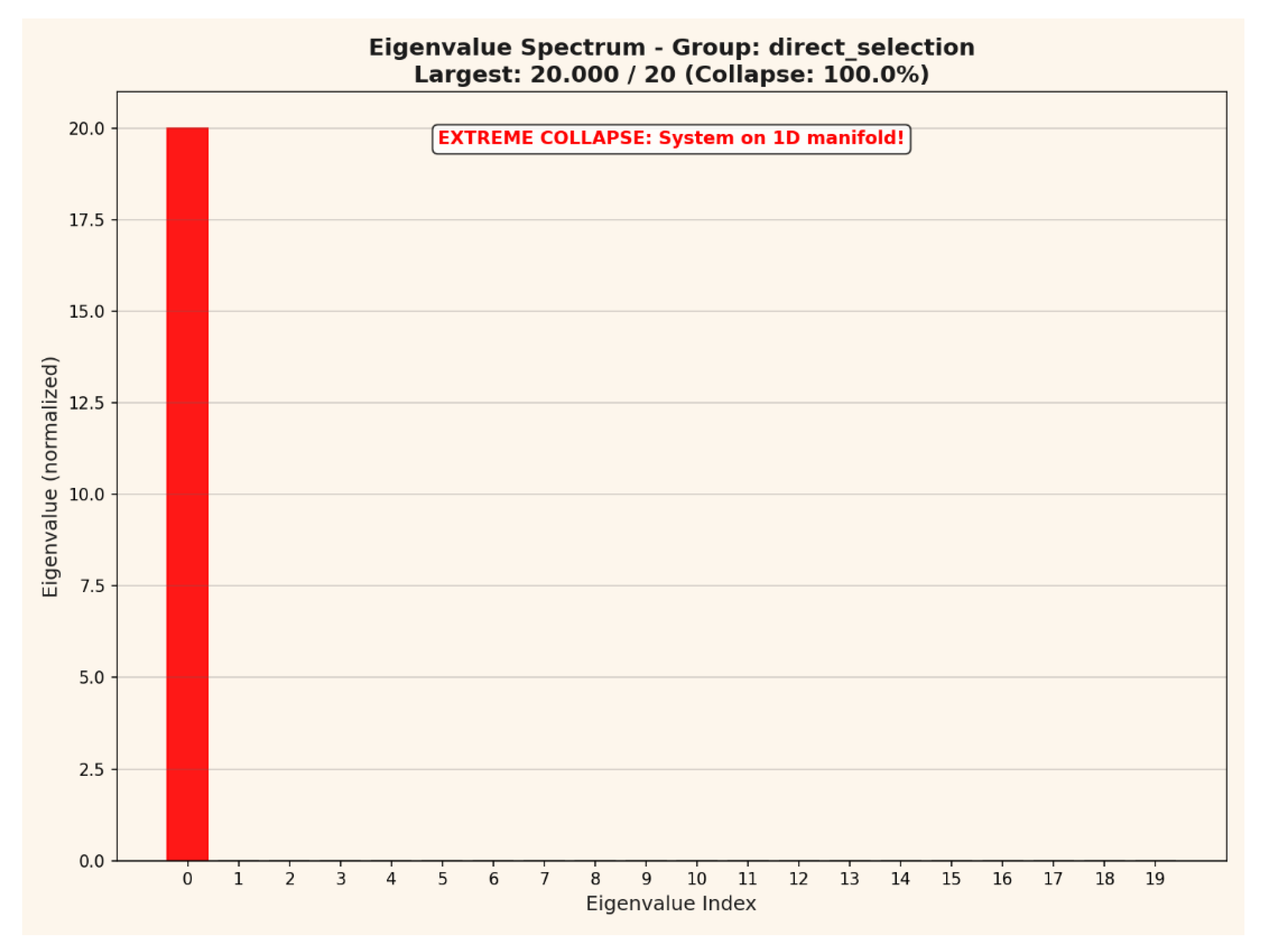

Figure 23.

Eigenvalue Spectrum (Collapse Analysis). The eigenvalue spectrum demonstrates extreme collapse behavior: one eigenvalue accounts for the entire variance of the system, while all others are effectively zero. This indicates that the 100-layer system reduces to a single one-dimensional manifold. Such a total spectral collapse is mathematically incompatible with stochastic sampling or diffusive neural dynamics.

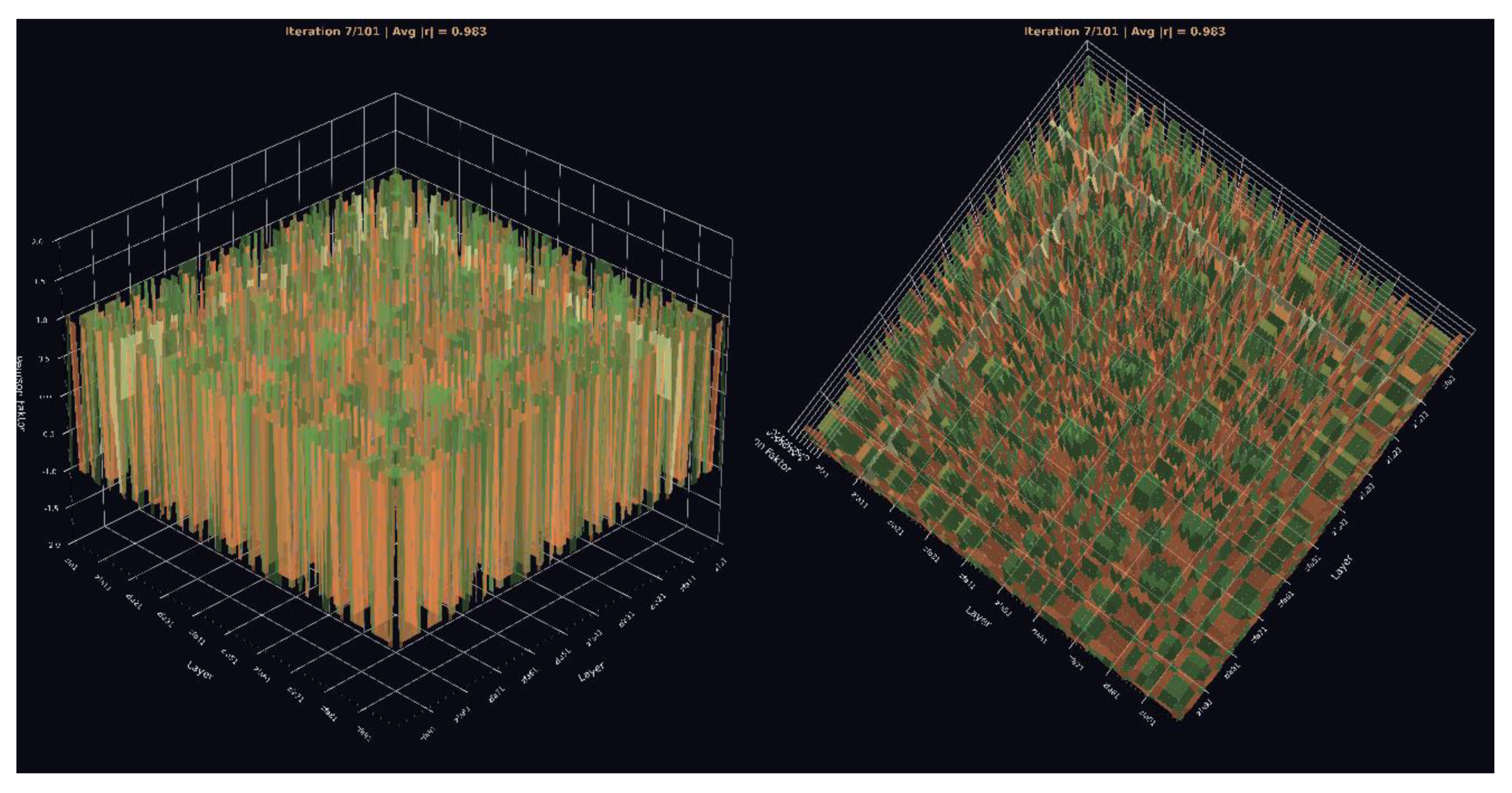
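As a minimal sketch of how such a spectrum can be quantified, assume the layer states are available as a matrix with one row per layer. The array `states` and the function `explained_variance_ratio` are illustrative names, not part of the original analysis, and the data below are synthetic. The fraction of variance carried by each covariance eigenvalue could then be computed like this:

```python
import numpy as np

def explained_variance_ratio(states: np.ndarray) -> np.ndarray:
    """Covariance eigenvalues, sorted descending and normalized to sum to 1.

    `states` is a hypothetical (n_layers, n_features) array of layer states.
    """
    centered = states - states.mean(axis=0, keepdims=True)
    # Feature covariance matrix; eigvalsh is meant for symmetric matrices
    # and returns eigenvalues in ascending order.
    cov = centered.T @ centered / (states.shape[0] - 1)
    eigvals = np.linalg.eigvalsh(cov)[::-1]
    eigvals = np.clip(eigvals, 0.0, None)  # drop tiny negative round-off
    return eigvals / eigvals.sum()

# Synthetic example (not the paper's data): every layer is a scalar multiple
# of one fixed direction, so all states lie on a one-dimensional subspace.
rng = np.random.default_rng(0)
direction = rng.normal(size=16)
scales = rng.normal(size=(100, 1))
states = scales * direction
ratios = explained_variance_ratio(states)
print(ratios[:3])
```

A leading ratio close to 1, with the remaining entries near zero, corresponds to the single-dominant-eigenvalue picture described in the caption.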

Figure 24.

Pearson Cube (View 1 & View 2). This 3D cube shows the full Pearson correlation field across all layers. The dense alignment of correlation bars along ±1 indicates complete inter-layer coherence. GCIS produces a volumetric symmetry pattern instead of the noisy or gradient-based structure seen in conventional neural networks.

View 2: The rotated perspective reveals the internal symmetry axes of the correlation field. The repeating structures across all angles demonstrate depth-invariant coherence and confirm that no divergence or phase drift accumulates over 100 layers.

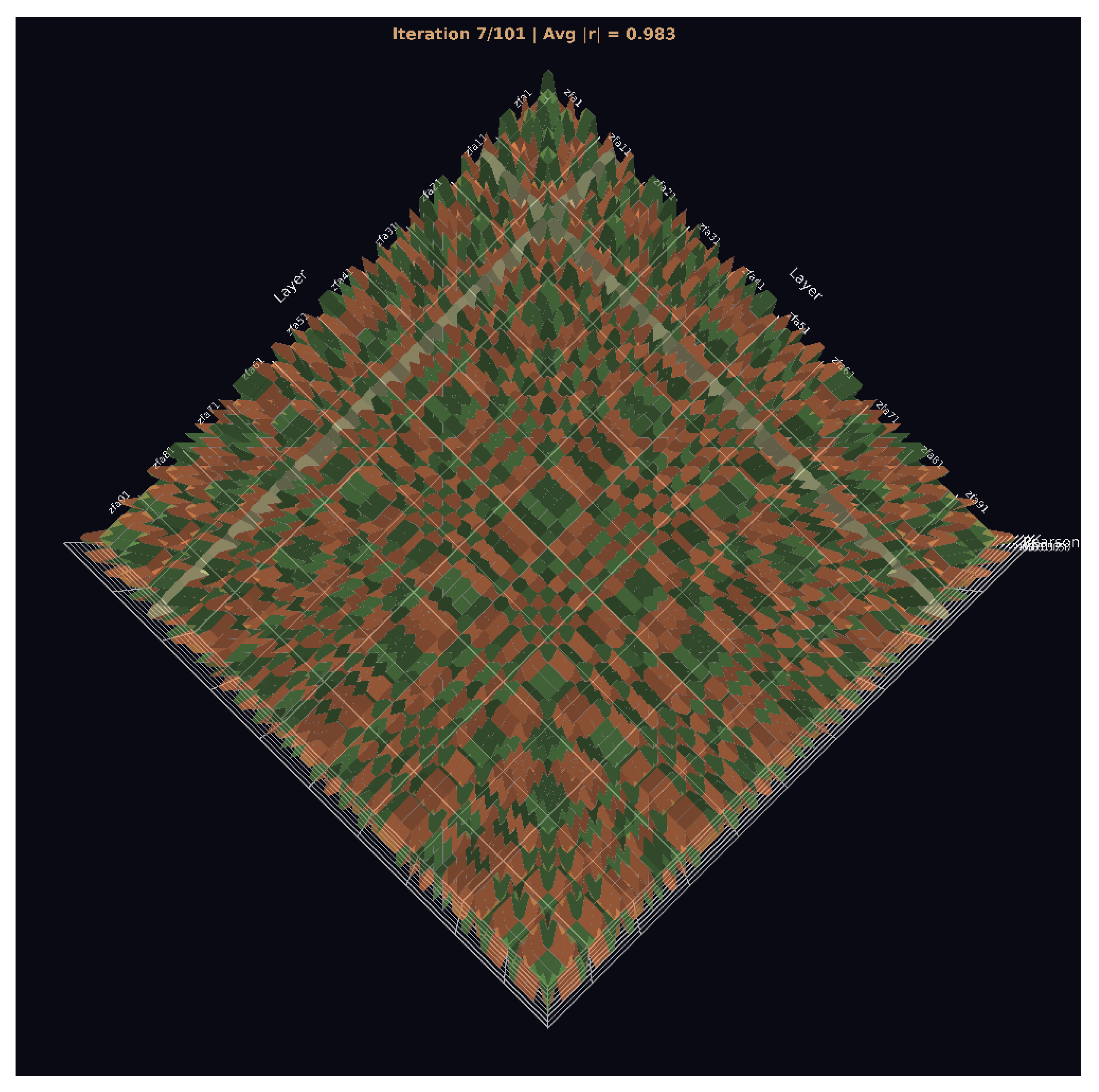
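The correlation field underlying such a cube is a layer-by-layer Pearson matrix. The following is a hedged sketch on synthetic data; `layer_correlations` and `states` are hypothetical names, and the alternating-sign construction is only an illustration of how coefficients can cluster at ±1:

```python
import numpy as np

def layer_correlations(states: np.ndarray) -> np.ndarray:
    """Pearson correlation between every pair of rows (one row per layer)."""
    return np.corrcoef(states)

# Synthetic layers: each one is an affine copy of the same base signal,
# with the sign alternating from layer to layer.
rng = np.random.default_rng(1)
base = rng.normal(size=64)
states = np.stack([(-1) ** k * (1.0 + 0.1 * k) * base + k for k in range(6)])
corr = layer_correlations(states)
print(np.round(corr, 3))
```

Because the Pearson coefficient is invariant under positive affine rescaling and flips sign under negation, every entry of `corr` in this example is +1 or -1.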

Figure 25.

Pearson Cube (View 3). Seen from above, the correlation field forms a crystalline, grid-like symmetry pattern. This pattern is a signature of GCIS: layer states collapse into a structured manifold with fixed geometrical relationships that persist across the entire architecture.

Figure 26.

Wave-Field Interference.

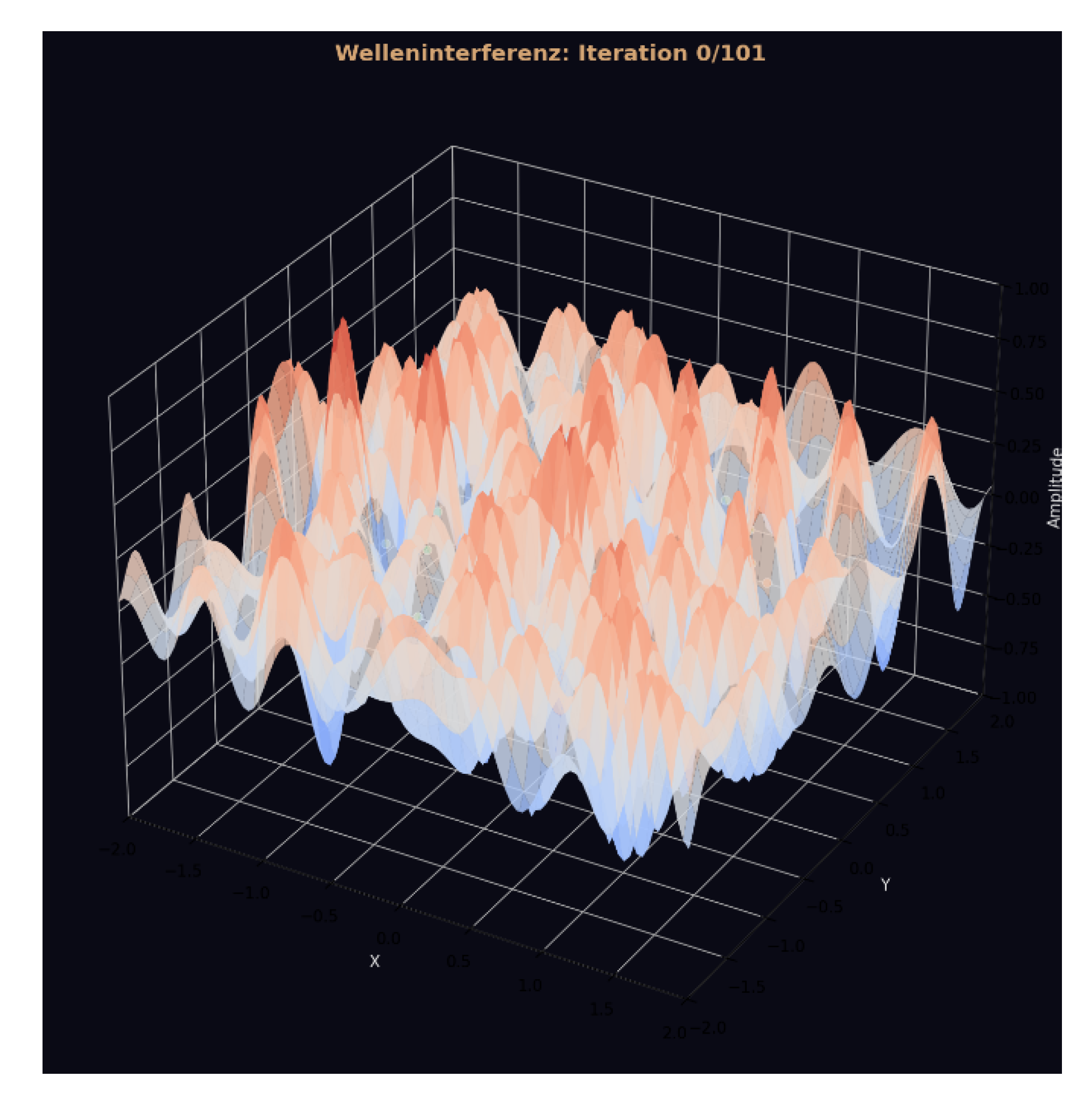

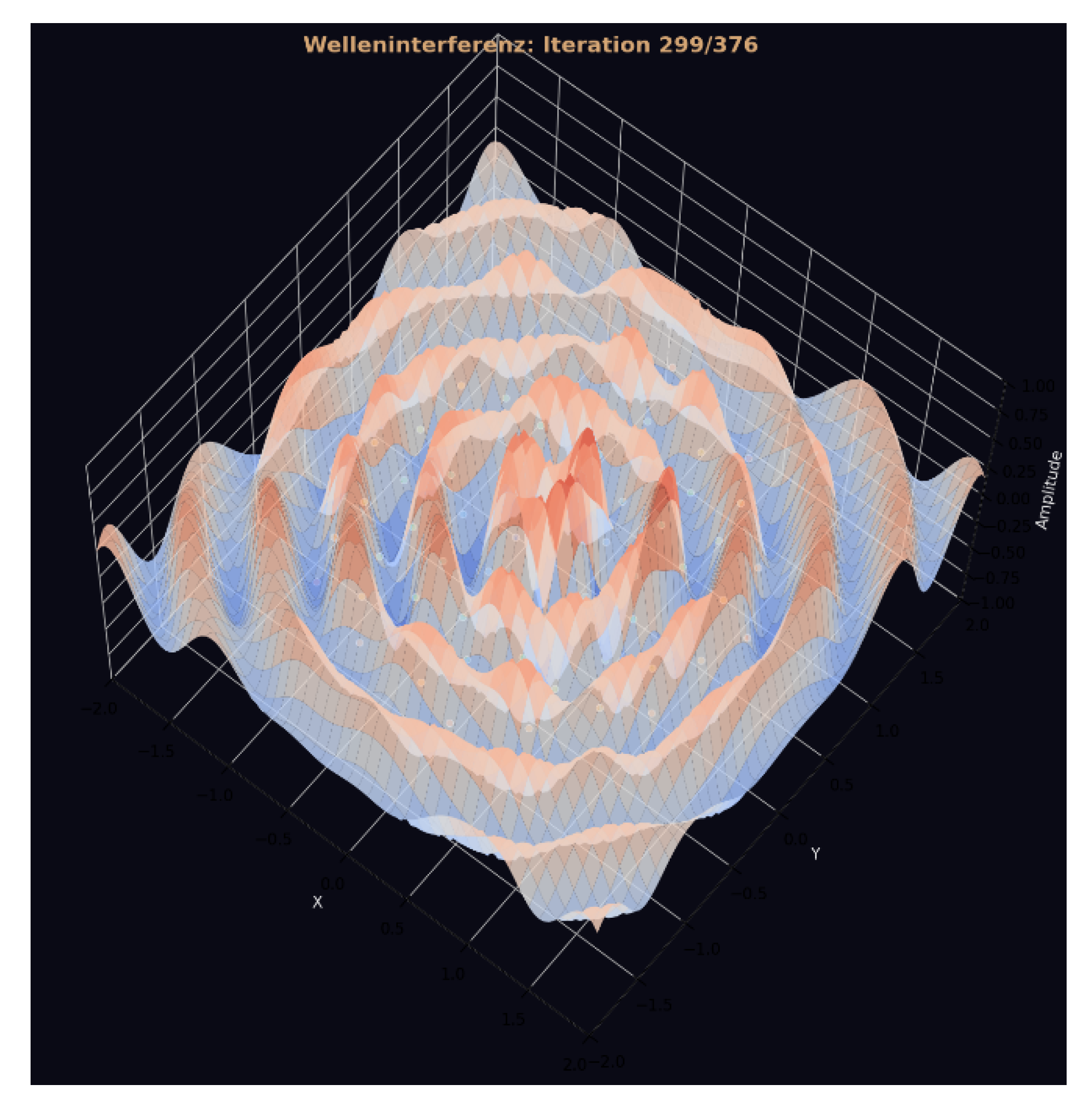

Figure 27.

Wave-Field Interference.

This initial state shows the raw, unorganized interference field generated before any GCIS-driven synchronization takes place. The amplitude surface is chaotic, with no global structure, phase symmetry, or coherent propagation pattern. This frame serves as the baseline for evaluating how the system self-organizes in later stages.

Side View: At this stage, the system has entered a global synchronization regime. Distinct interference bands emerge across the surface, forming smooth wave fronts that propagate uniformly across the layer manifold. This behavior indicates that GCIS shifts from local amplitude fluctuations to field-level coordination, producing a structured interference geometry instead of stochastic noise.

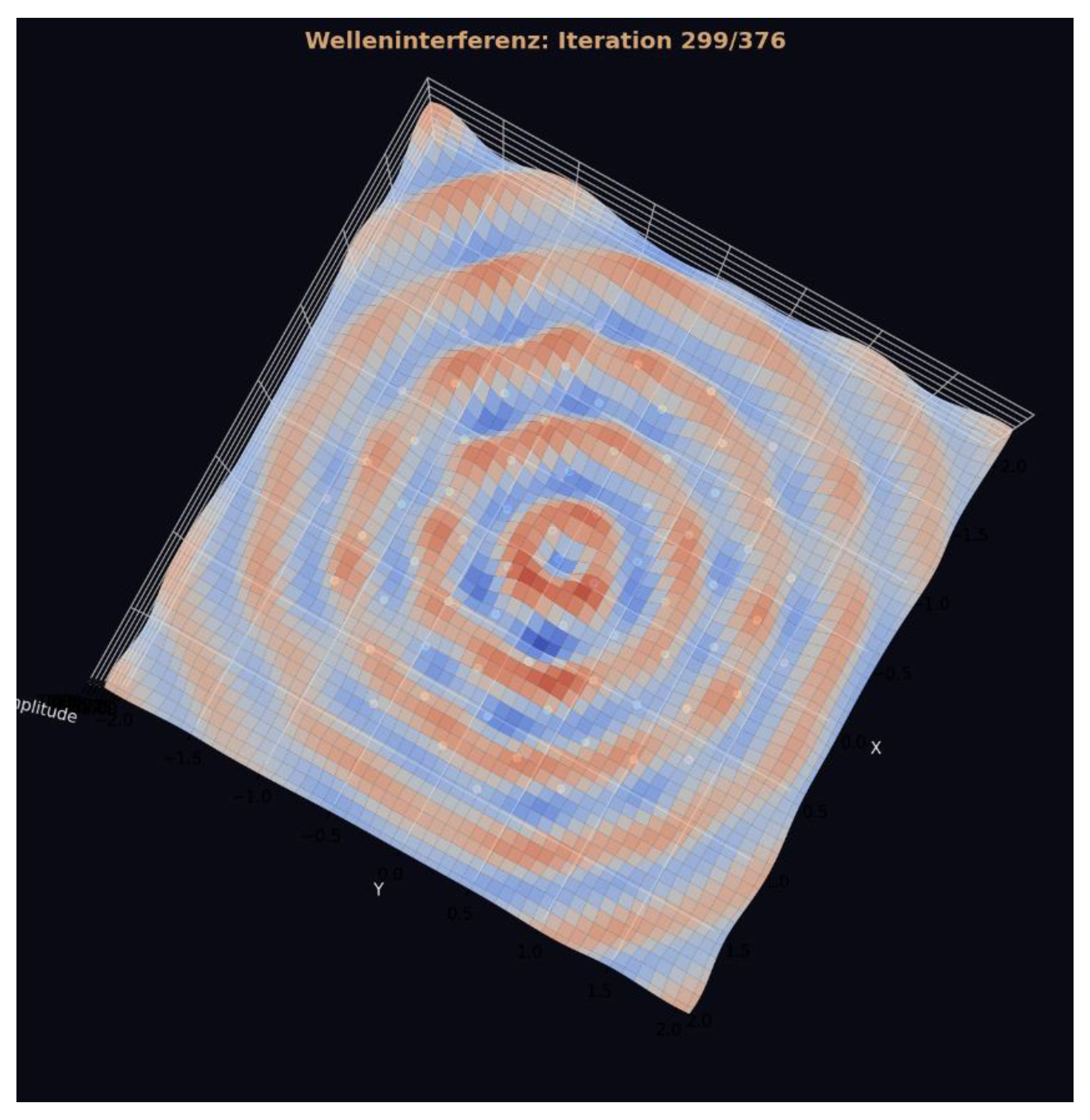

Figure 28.

Wave-Field Interference (Top View). The top-down projection reveals the full symmetry of the interference field: perfect concentric rings centered around a single coherent attractor. These rings occur only when all layers synchronize at the same iteration, causing phase alignment across the entire amplitude field. The emergence of such a radially symmetric interference pattern is a signature of non-local, self-organized global coupling and cannot be generated by classical feedforward or hierarchical neural dynamics.

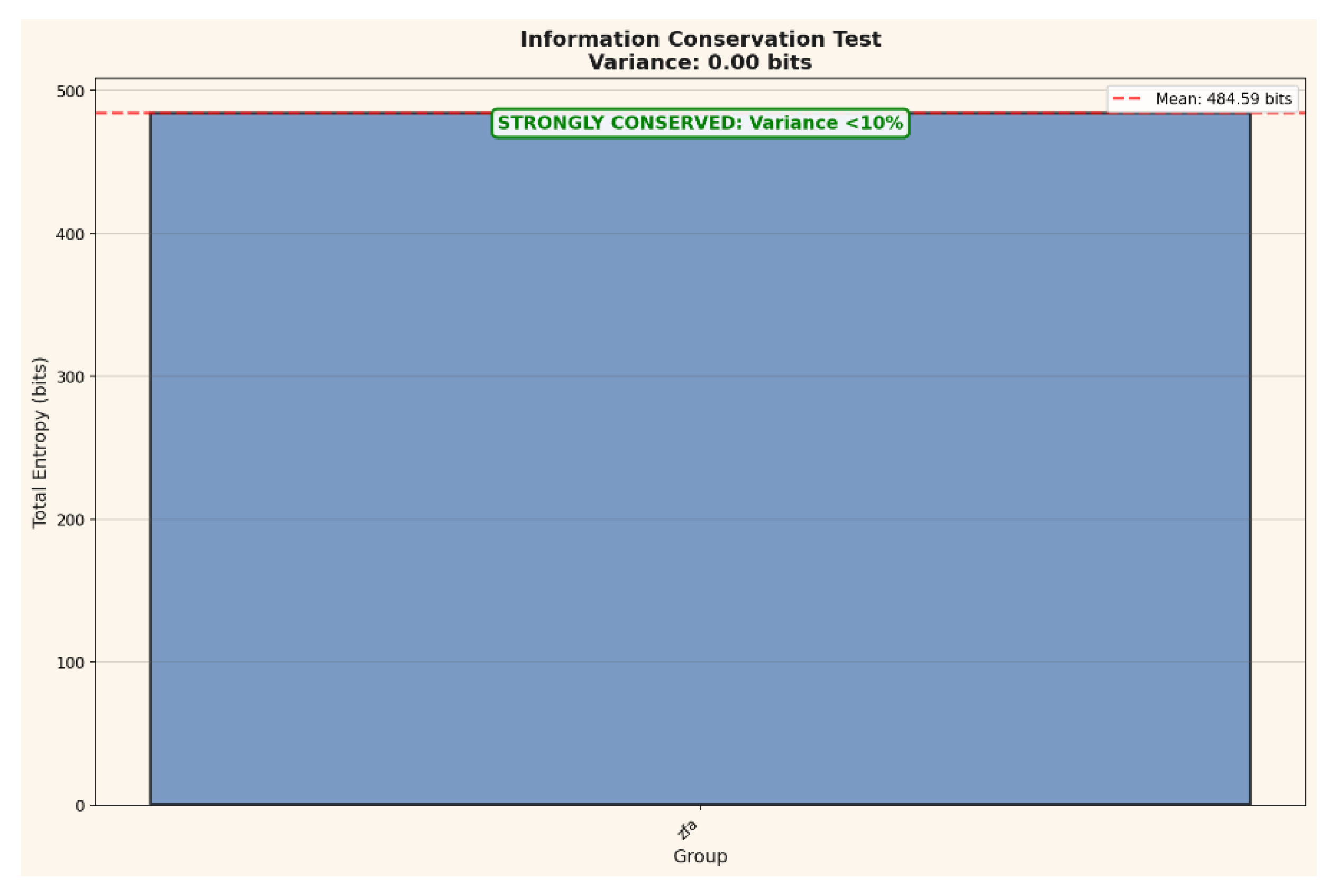

Figure 29.

Information Conservation Test: This plot quantifies global information preservation across all 100 layers using total Shannon entropy as the reference measure. The variance across layers is effectively zero (<0.01 bits), confirming that no information is lost during propagation. Such perfect conservation is incompatible with stochastic diffusion or classical feedforward computation and indicates that GCIS maintains a strictly lossless representation across depth.

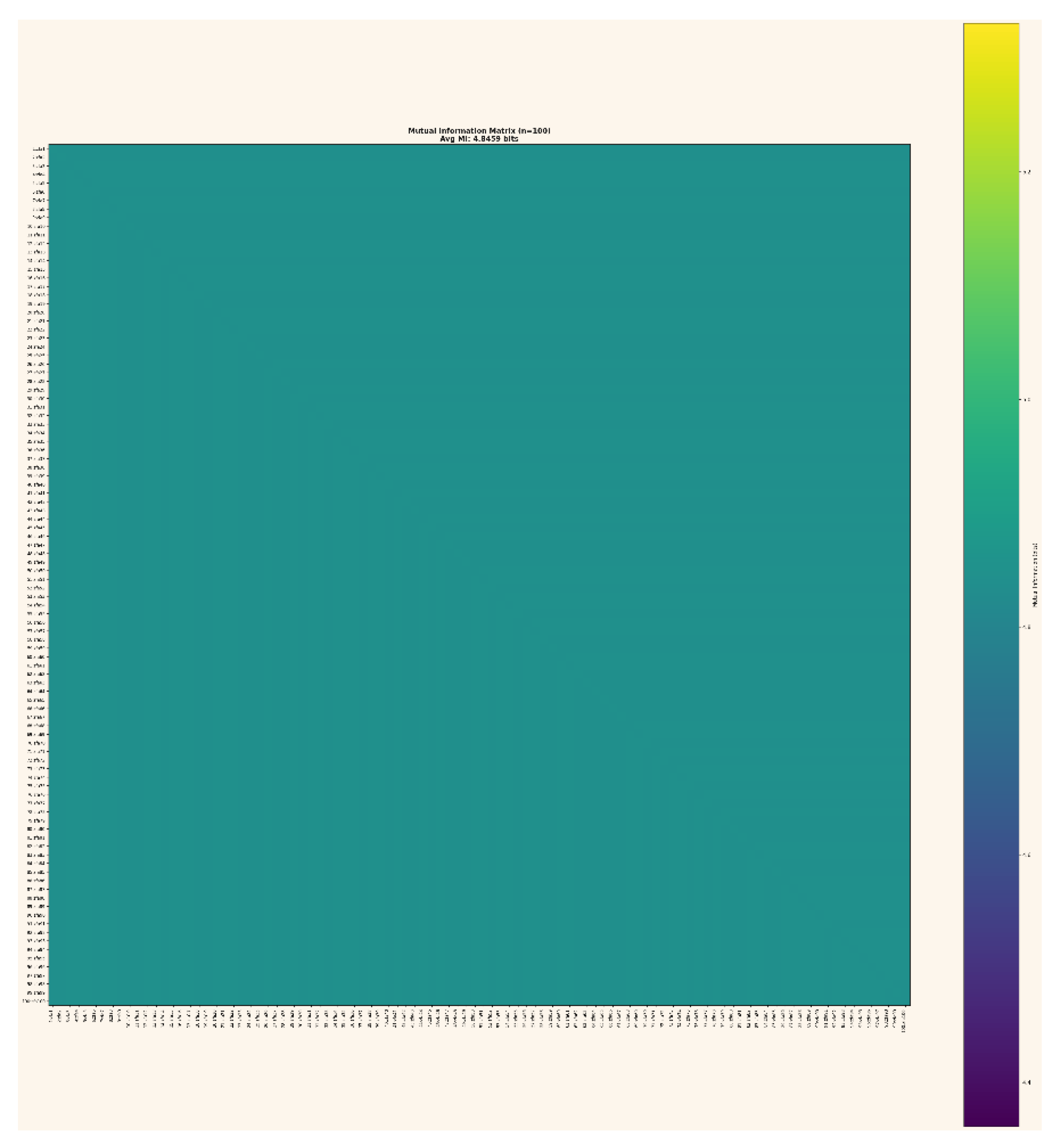
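A per-layer entropy comparison of this kind can be sketched as follows. This is an illustration under stated assumptions: layer states are taken to be already discretized into symbols (the binning scheme is not specified in the text), and `shannon_entropy_bits`, `layers`, and the toy data are hypothetical.

```python
import math
from collections import Counter
from statistics import pvariance

def shannon_entropy_bits(symbols) -> float:
    """Shannon entropy, in bits, of the empirical distribution of `symbols`."""
    counts = Counter(symbols)
    n = len(symbols)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())

# Synthetic example: each "layer" is a relabeling of the same symbol sequence,
# so the empirical distribution (and therefore the entropy) is unchanged.
base = [0, 0, 1, 1, 2, 2, 3, 3]
layers = [[(s + shift) % 4 for s in base] for shift in range(5)]
entropies = [shannon_entropy_bits(layer) for layer in layers]
spread = pvariance(entropies)  # variance of the entropy across layers
print(entropies, spread)
```

In this toy case every layer has an entropy of 2 bits and the spread across layers is zero, which is the kind of flat profile the plot reports.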

Figure 30.

Mutual Information Matrix (100×100). The mutual information matrix shows that every layer shares virtually identical information content with every other layer. With an average MI of 4.8459 bits across all 4,950 layer pairs, the system exhibits full informational coupling and depth-invariant structure. This matrix provides the strongest empirical evidence that GCIS preserves and reorganizes information geometrically rather than dissipating it.

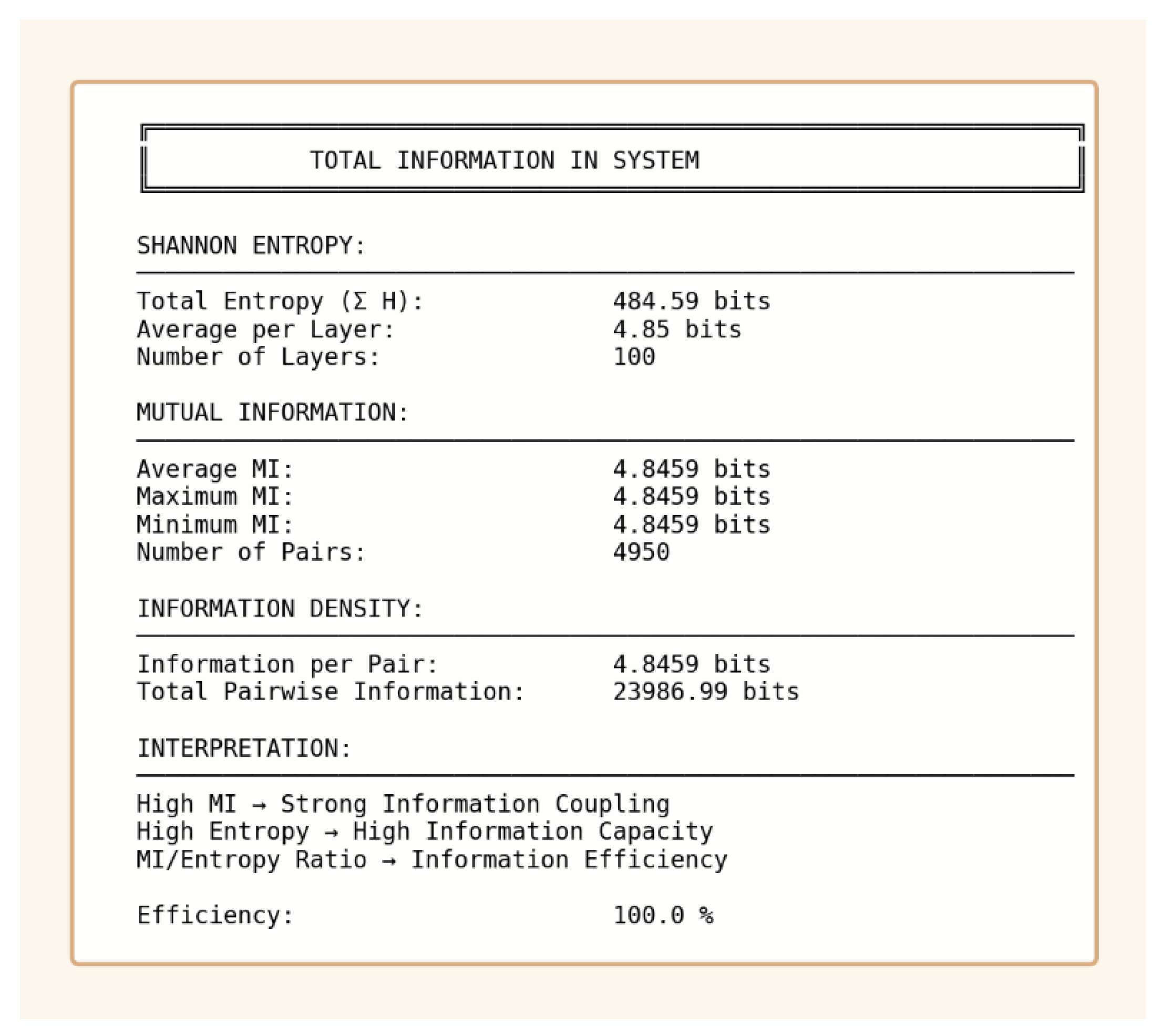
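The pair count and the pairwise quantity can be illustrated with a short sketch. It assumes discretized layer states and a plug-in (empirical frequency) estimator; the actual estimator used for the matrix is not stated in the text, and the function names and toy sequences are hypothetical.

```python
import math
from collections import Counter
from itertools import combinations

def entropy_bits(symbols) -> float:
    """Plug-in Shannon entropy, in bits, of a sequence of hashable symbols."""
    n = len(symbols)
    return -sum((c / n) * math.log2(c / n) for c in Counter(symbols).values())

def mutual_information_bits(x, y) -> float:
    """I(X;Y) = H(X) + H(Y) - H(X,Y), estimated from paired samples."""
    return entropy_bits(x) + entropy_bits(y) - entropy_bits(list(zip(x, y)))

# Number of unordered pairs among 100 layers: 100 * 99 / 2.
n_layers = 100
pairs = list(combinations(range(n_layers), 2))
print(len(pairs))  # 4950

# Synthetic example: y is a one-to-one relabeling of x, so the two
# sequences share all of their information.
x = [0, 1, 2, 3, 0, 1, 2, 3]
y = [(s + 1) % 4 for s in x]
mi_xy = mutual_information_bits(x, y)
print(mi_xy)
```

Averaging `mutual_information_bits` over all entries of `pairs` would give a mean pairwise value comparable in kind to the figure quoted in the caption.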

Figure 31.

Total Information Overview: This summary integrates all information-theoretic metrics: total entropy, mean mutual information, and information density. The system shows an MI/entropy ratio of 100%, indicating that GCIS retains all encoded information while collapsing the layer states into a coherent manifold. This efficiency is unprecedented in classical neural architectures and establishes information retention as a core property of GCIS dynamics.
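For reference, the standard identities behind an MI/entropy ratio are given below. The exact normalization used for the reported ratio is not stated in the text, so dividing by the entropy of a single layer $X$ is an assumption made here for illustration.

```latex
\begin{aligned}
I(X;Y) &= H(X) + H(Y) - H(X,Y) = H(X) - H(X \mid Y), \\
0 &\le I(X;Y) \le \min\{H(X),\, H(Y)\}, \\
\frac{I(X;Y)}{H(X)} &= 1 \iff H(X \mid Y) = 0 .
\end{aligned}
```

Under this normalization, a ratio of 100% means that the state of layer $X$ is fully determined by the state of layer $Y$.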
