Submitted: 29 November 2025

Posted: 01 December 2025

Abstract

Keywords:

1. Introduction

1.1. Background and Motivation

1.2. Problem Statement

1.3. Research Objectives

- To develop and validate a deep learning model optimized for snow leopard detection in camera trap imagery

- To implement a preprocessing pipeline that enhances detection accuracy while reducing computational requirements

- To deploy the system as an accessible web application suitable for use by conservation practitioners

- To establish baseline performance metrics for automated snow leopard detection in the Kyrgyz context

1.4. Contributions

- First regional implementation: To our knowledge, this represents the first automated snow leopard detection system developed for and deployed within the Kyrgyz Republic

- Two-stage detection pipeline: We introduce a preprocessing approach combining motion detection with deep classification, reducing computational overhead while maintaining detection sensitivity

- Attention-enhanced architecture: We demonstrate the effectiveness of squeeze-and-excitation mechanisms for species-specific detection in challenging environmental conditions

- Comprehensive evaluation: We provide rigorous performance assessment using 5-fold cross-validation with multiple evaluation metrics

- Practical deployment: The system has been implemented as a functional web application, facilitating adoption by conservation organizations

2. Related Work

2.1. Deep Learning for Camera Trap Image Analysis

2.2. Attention Mechanisms in Visual Recognition

2.3. Transfer Learning with Lightweight Architectures

2.4. Addressing Class Imbalance in Wildlife Detection

2.5. Snow Leopard Conservation Technologies

2.6. Research Gap and Motivation

3. Materials and Methods

3.1. Study Context

3.2. Dataset Compilation

3.2.1. Data Sources

- Camera trap archives: Authentic camera trap images were obtained from conservation partner organizations operating in Kyrgyzstan, comprising field-captured photographs from monitoring surveys. Images were captured using Bushnell Trophy Cam units, providing both daytime color imagery and nighttime infrared captures with embedded metadata (temperature, date, time).

- Controlled environment captures: To supplement the limited availability of wild snow leopard images, additional footage was collected from camera traps deployed at a wildlife sanctuary housing two snow leopards. Video recordings were processed to extract frames representing diverse poses, lighting conditions, and times of day.

- Public repositories: Additional snow leopard images were sourced from publicly available wildlife image databases, including iNaturalist and Flickr Creative Commons collections, filtered for image quality and taxonomic accuracy.

3.2.2. Dataset Characteristics

- Empty camera trap frames showing landscape without wildlife

- Images of sympatric species (ibex, wolves, foxes, marmots, birds)

- Various environmental conditions (snow cover, bare rock, vegetation)

3.3. Preprocessing Pipeline

3.3.1. Motion Detection Stage

3.3.2. Image Preprocessing

- Resizing: Images are resized to pixels to match the input dimensions expected by the MobileNetV2 architecture

- Normalization: Pixel values are scaled to the range

- Channel standardization: RGB channel values are normalized using ImageNet statistics
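The three preprocessing steps can be sketched in NumPy as follows. This is a minimal illustration, not the authors' code. The 224×224 target size and the [0, 1] scaling range are assumptions (common MobileNetV2 defaults; the text here does not state them), nearest-neighbour resizing is used only to keep the example free of imaging libraries, and `preprocess` is a hypothetical helper name. The channel means and standard deviations are the widely used ImageNet statistics.

```python
import numpy as np

# Commonly used ImageNet channel statistics (RGB order).
IMAGENET_MEAN = np.array([0.485, 0.456, 0.406], dtype=np.float32)
IMAGENET_STD = np.array([0.229, 0.224, 0.225], dtype=np.float32)


def preprocess(image: np.ndarray, size: int = 224) -> np.ndarray:
    """Resize, scale, and standardize an HxWx3 uint8 RGB image.

    `size=224` and the [0, 1] scaling are assumptions, not values
    taken from the paper.
    """
    h, w = image.shape[:2]
    # Nearest-neighbour resize: choose a source row/column for each target pixel.
    rows = np.arange(size) * h // size
    cols = np.arange(size) * w // size
    resized = image[rows[:, None], cols[None, :]]
    # Scale pixel values from [0, 255] to [0, 1].
    scaled = resized.astype(np.float32) / 255.0
    # Standardize each RGB channel with the ImageNet statistics.
    return (scaled - IMAGENET_MEAN) / IMAGENET_STD


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic stand-in for a camera trap frame.
    frame = rng.integers(0, 256, size=(480, 640, 3), dtype=np.uint8)
    x = preprocess(frame)
    print(x.shape, x.dtype)
```

In practice a library resize with bilinear or bicubic interpolation would likely replace the nearest-neighbour step; the scaling and standardization logic would stay the same.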

3.4. Model Architecture

3.4.1. Base Architecture Selection

- Computational efficiency: Depthwise separable convolutions reduce parameter count and inference time, facilitating deployment on resource-constrained systems

- Transfer learning compatibility: Pre-trained ImageNet weights provide robust feature extraction capabilities

- Proven performance: Demonstrated effectiveness in wildlife classification tasks [18]

3.4.2. Attention Mechanism Integration

- Squeeze: Global average pooling compresses spatial dimensions to a channel descriptor

- Excitation: A two-layer fully connected network learns channel-wise dependencies with reduction ratio of 16

- Recalibration: Original features are scaled by the learned channel weights
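The squeeze, excitation, and recalibration steps can be illustrated with a small NumPy forward pass. This is a sketch under stated assumptions rather than the authors' implementation: the weights are random stand-ins for learned parameters, `se_block` is a hypothetical function name, and applying the block to the 1280-channel final MobileNetV2 feature map is an assumed insertion point. Only the reduction ratio of 16 is taken from the text.

```python
import numpy as np


def sigmoid(z: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-z))


def se_block(features, w1, b1, w2, b2):
    """Apply squeeze-and-excitation to an (H, W, C) feature map."""
    # Squeeze: global average pooling over the spatial dimensions -> (C,)
    squeezed = features.mean(axis=(0, 1))
    # Excitation: bottleneck layer (C -> C/r) with ReLU,
    # then expansion layer (C/r -> C) with sigmoid gating.
    hidden = np.maximum(squeezed @ w1 + b1, 0.0)
    weights = sigmoid(hidden @ w2 + b2)
    # Recalibration: scale each channel by its weight in (0, 1).
    return features * weights


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    channels, reduction = 1280, 16      # 1280 is an assumed feature width
    hidden_units = channels // reduction
    feats = rng.standard_normal((7, 7, channels))
    # Random weights stand in for parameters that would be learned.
    w1 = rng.standard_normal((channels, hidden_units)) * 0.01
    b1 = np.zeros(hidden_units)
    w2 = rng.standard_normal((hidden_units, channels)) * 0.01
    b2 = np.zeros(channels)
    out = se_block(feats, w1, b1, w2, b2)
    print(out.shape)
```

Because the gate is a sigmoid, each channel is attenuated rather than amplified, which lets the network suppress channels that respond to background texture while passing through channels that respond to the target.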

3.4.3. Complete Architecture

3.5. Training Procedure

3.5.1. Loss Function

3.5.2. Optimization Configuration

3.5.3. Data Augmentation

3.5.4. Cross-Validation

3.5.5. Training Infrastructure

3.6. Evaluation Metrics

- Accuracy: Overall proportion of correct predictions

- Sensitivity (Recall): True positive rate, i.e., the ability to correctly identify snow leopard images

- Specificity: True negative rate, i.e., the ability to correctly identify non-snow leopard images

- Precision: Positive predictive value

- F1 Score: Harmonic mean of precision and recall

- AUC-ROC: Area under the Receiver Operating Characteristic curve

- Average Precision (AP): Area under the Precision-Recall curve
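The threshold-dependent metrics in this list follow directly from confusion-matrix counts. The sketch below computes them for the aggregated counts reported in this paper (915 TP, 627 FP, 1,117 TN, 1 FN); `classification_metrics` is a hypothetical helper name. AUC-ROC and Average Precision are omitted because they require the per-image scores, which are not available here.

```python
def classification_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Compute threshold-dependent metrics from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)          # recall / true positive rate
    specificity = tn / (tn + fp)          # true negative rate
    precision = tp / (tp + fp)            # positive predictive value
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    return {
        "accuracy": accuracy,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
    }


if __name__ == "__main__":
    # Counts from the aggregated confusion matrix reported in the paper.
    metrics = classification_metrics(tp=915, fp=627, tn=1117, fn=1)
    for name, value in metrics.items():
        print(f"{name}: {value:.1%}")
```

Metrics computed on pooled counts can differ slightly from the fold-averaged values in the cross-validation table, since the mean of per-fold ratios is not the same as a ratio of pooled counts.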

4. Results

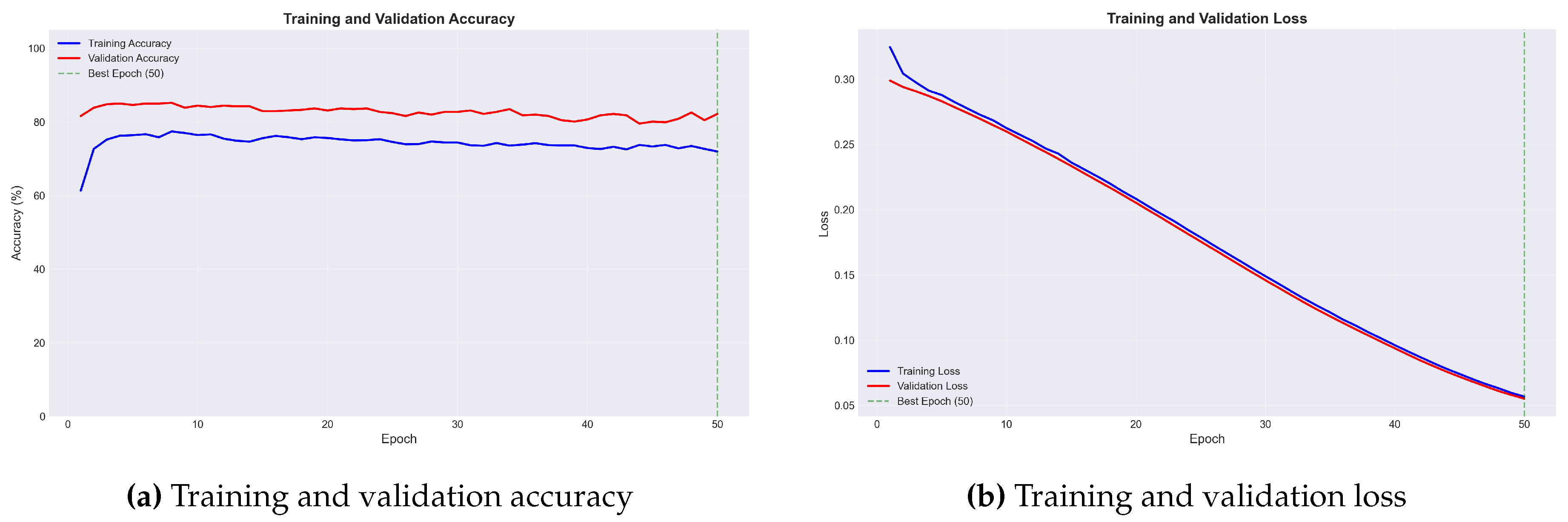

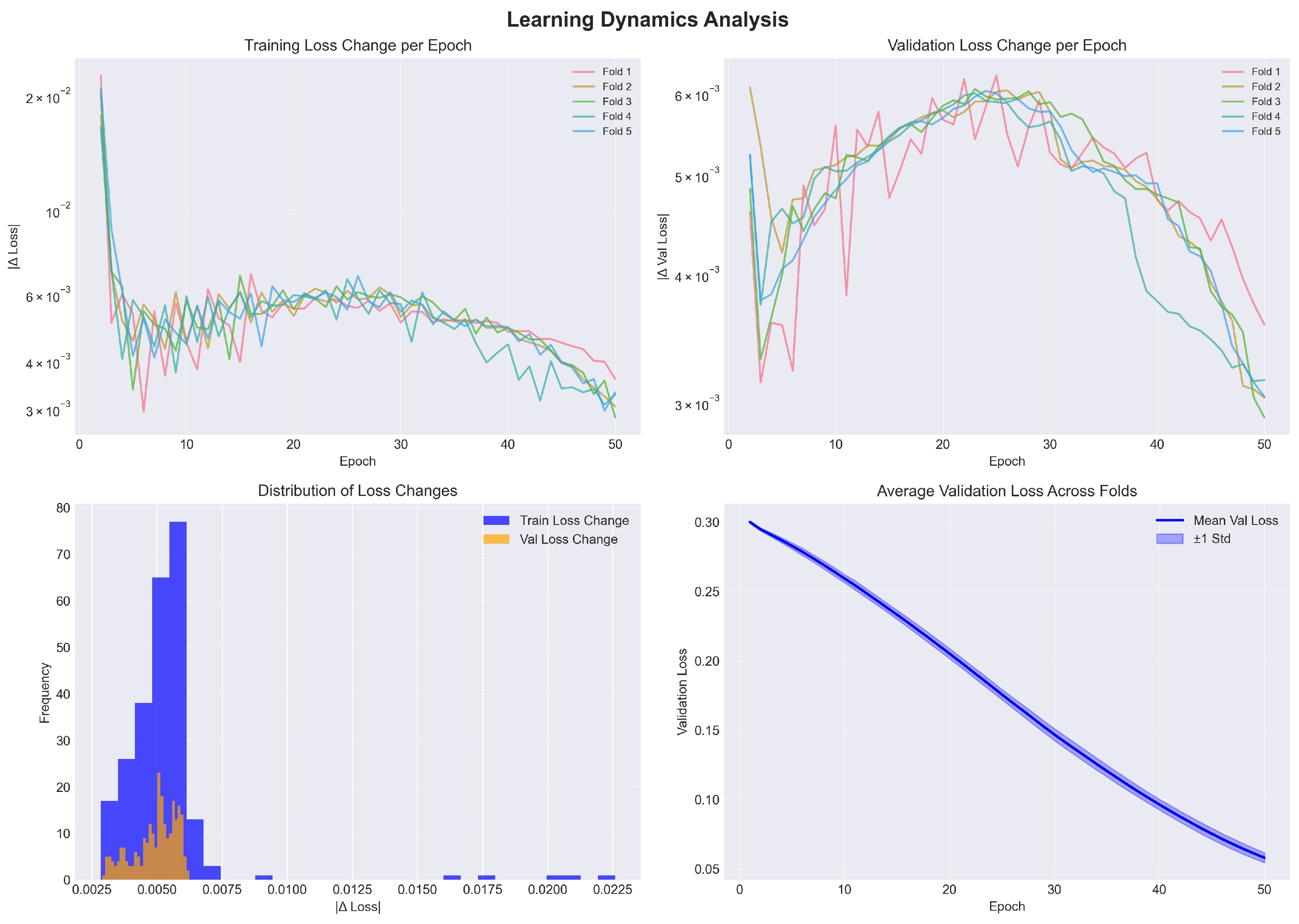

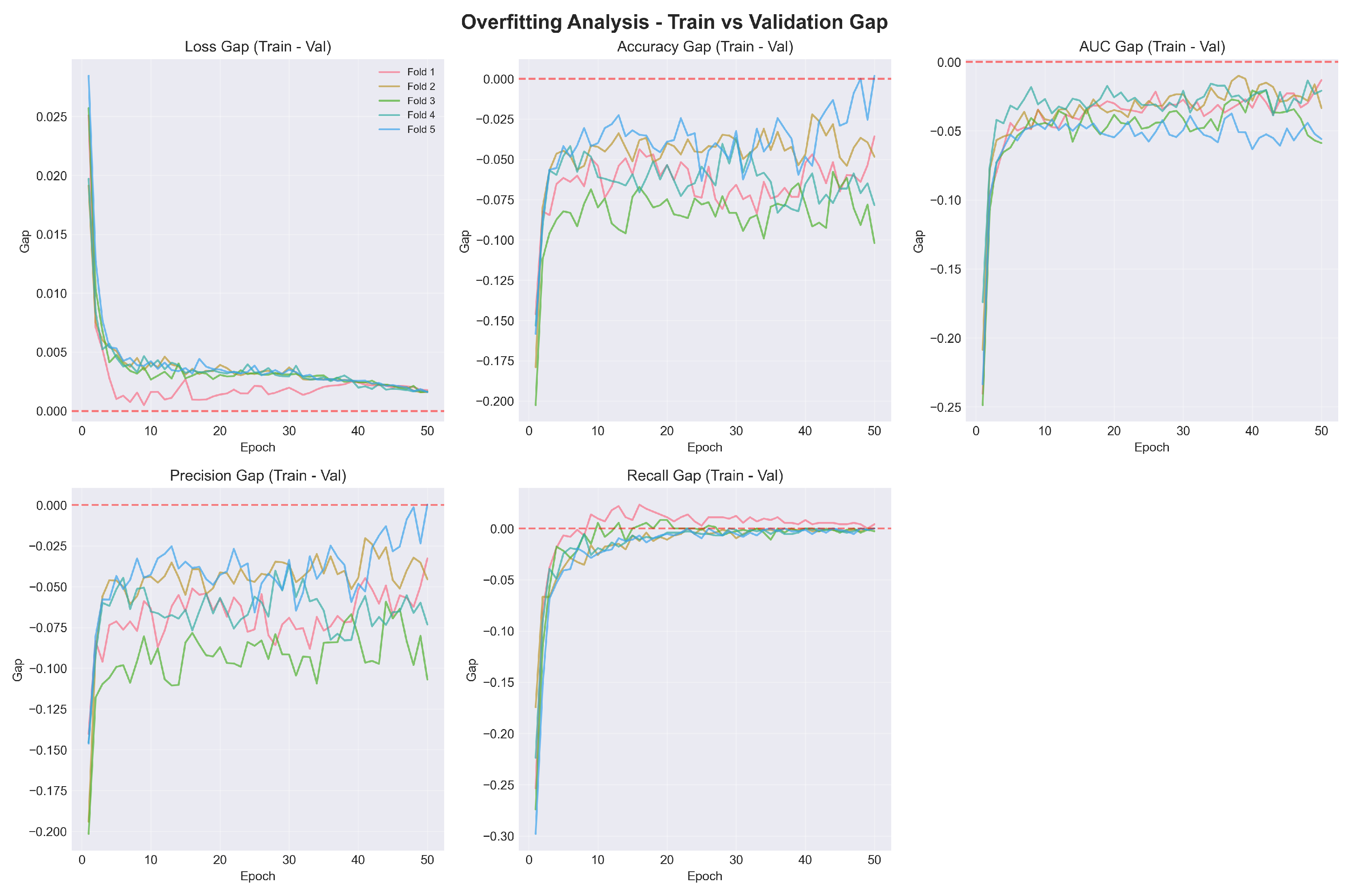

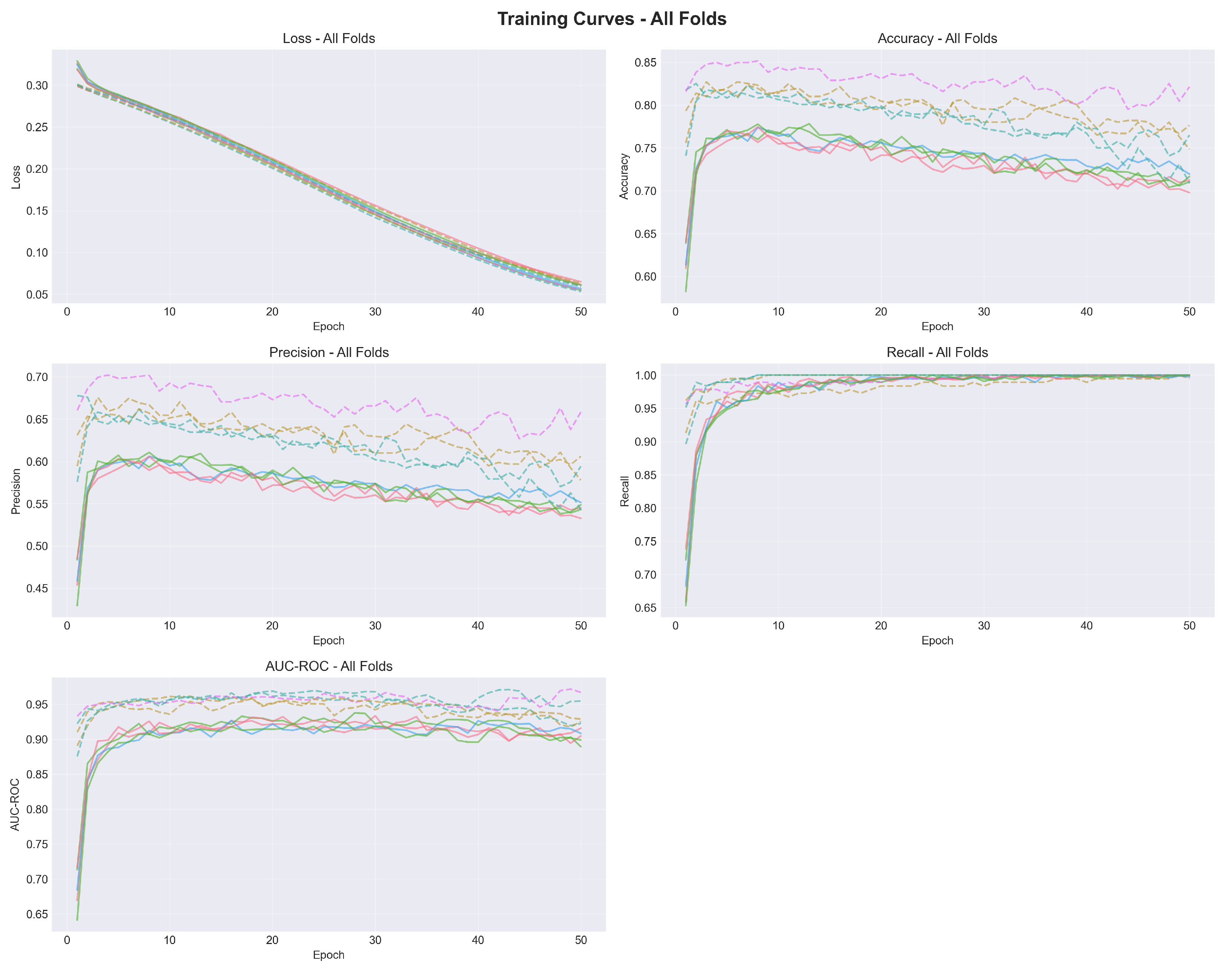

4.1. Training Dynamics

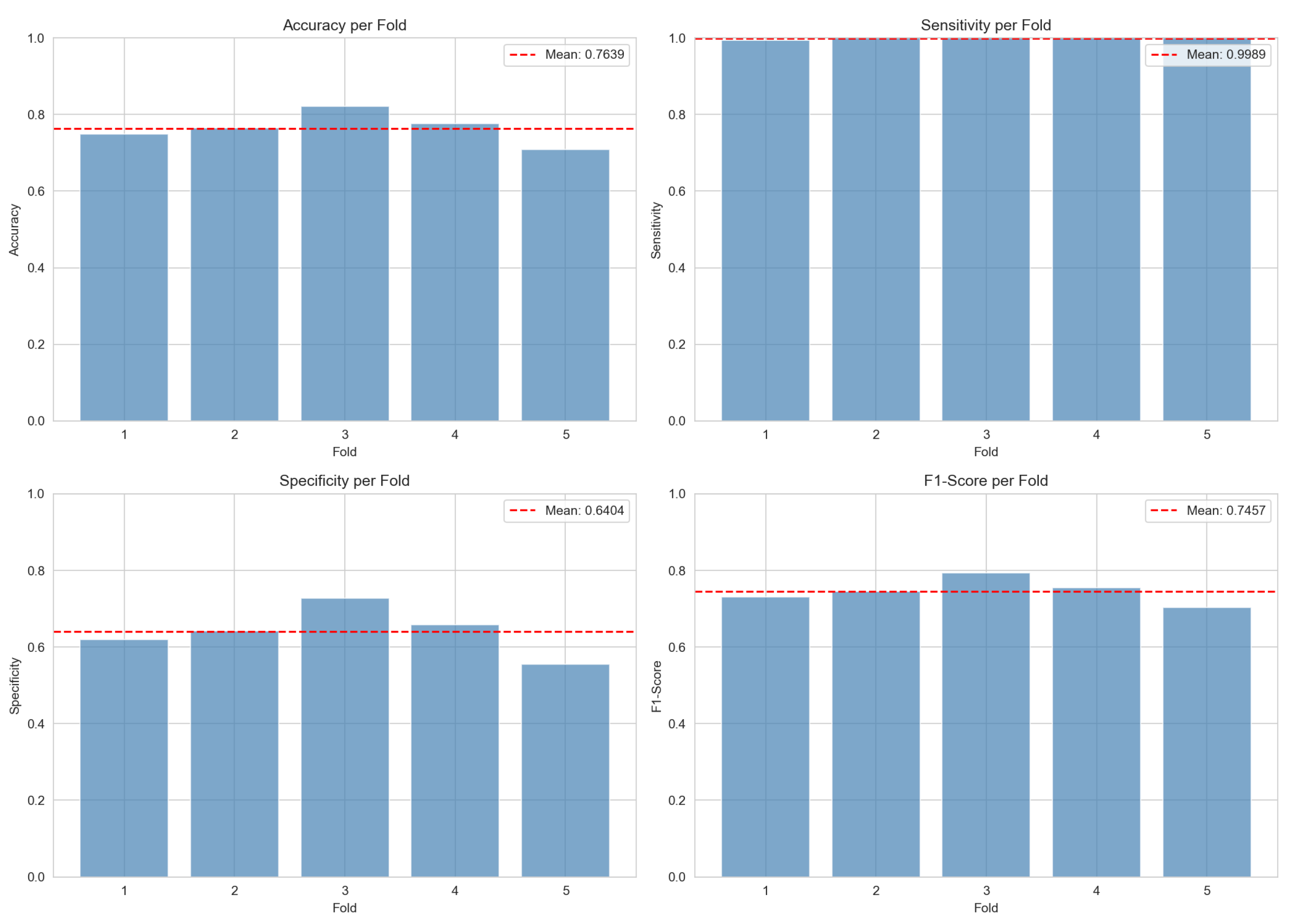

4.2. Cross-Validation Results

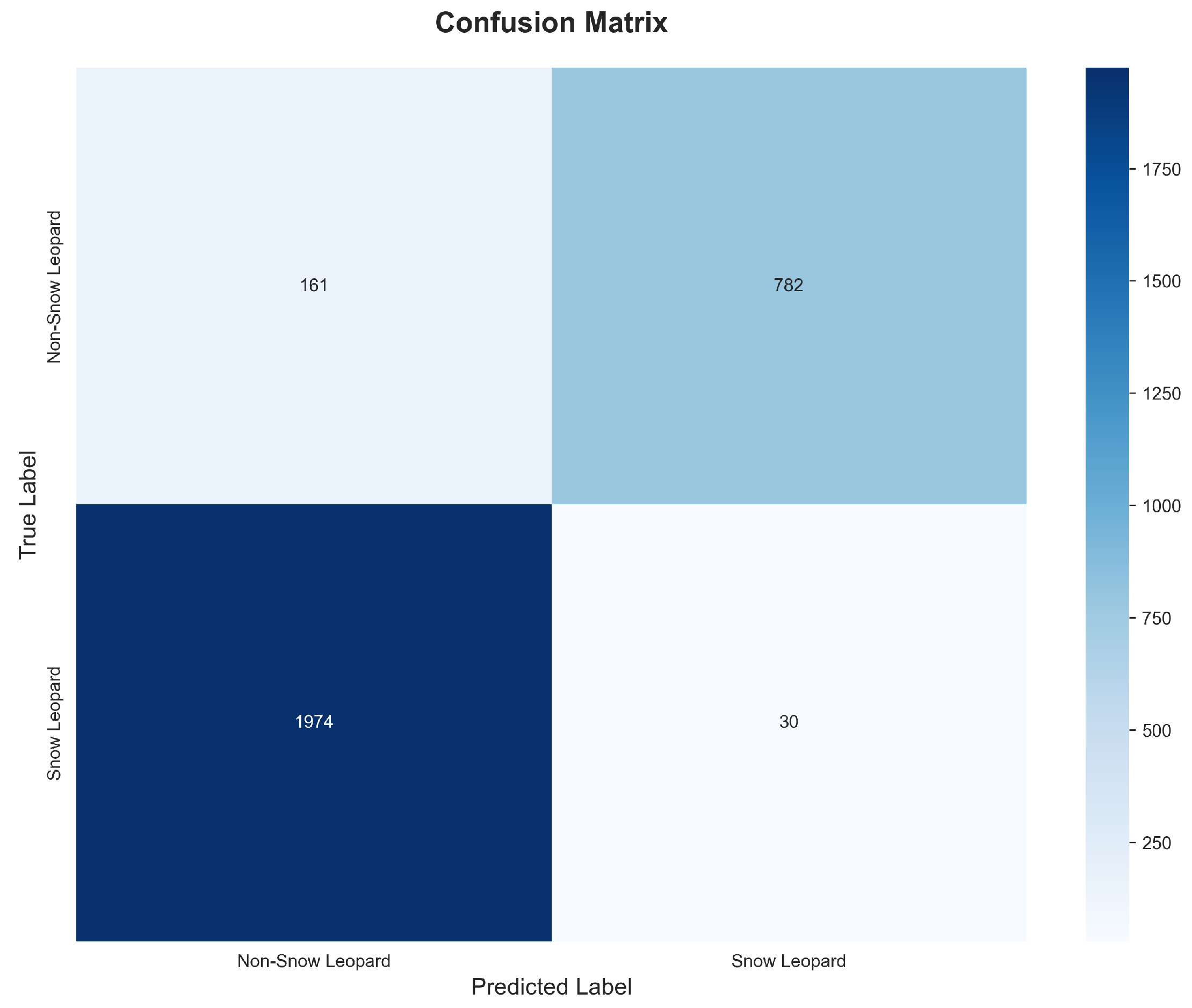

4.3. Aggregated Confusion Matrix

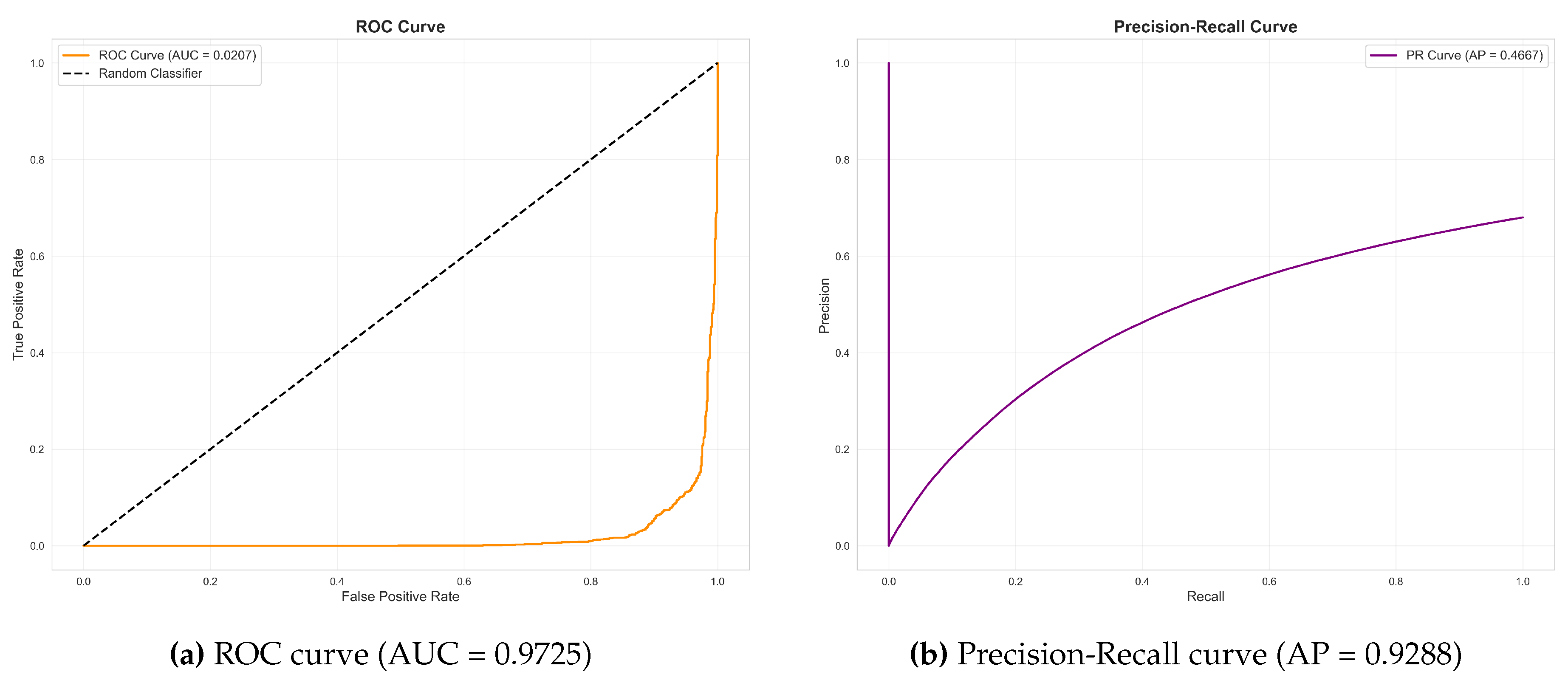

4.4. ROC and Precision-Recall Analysis

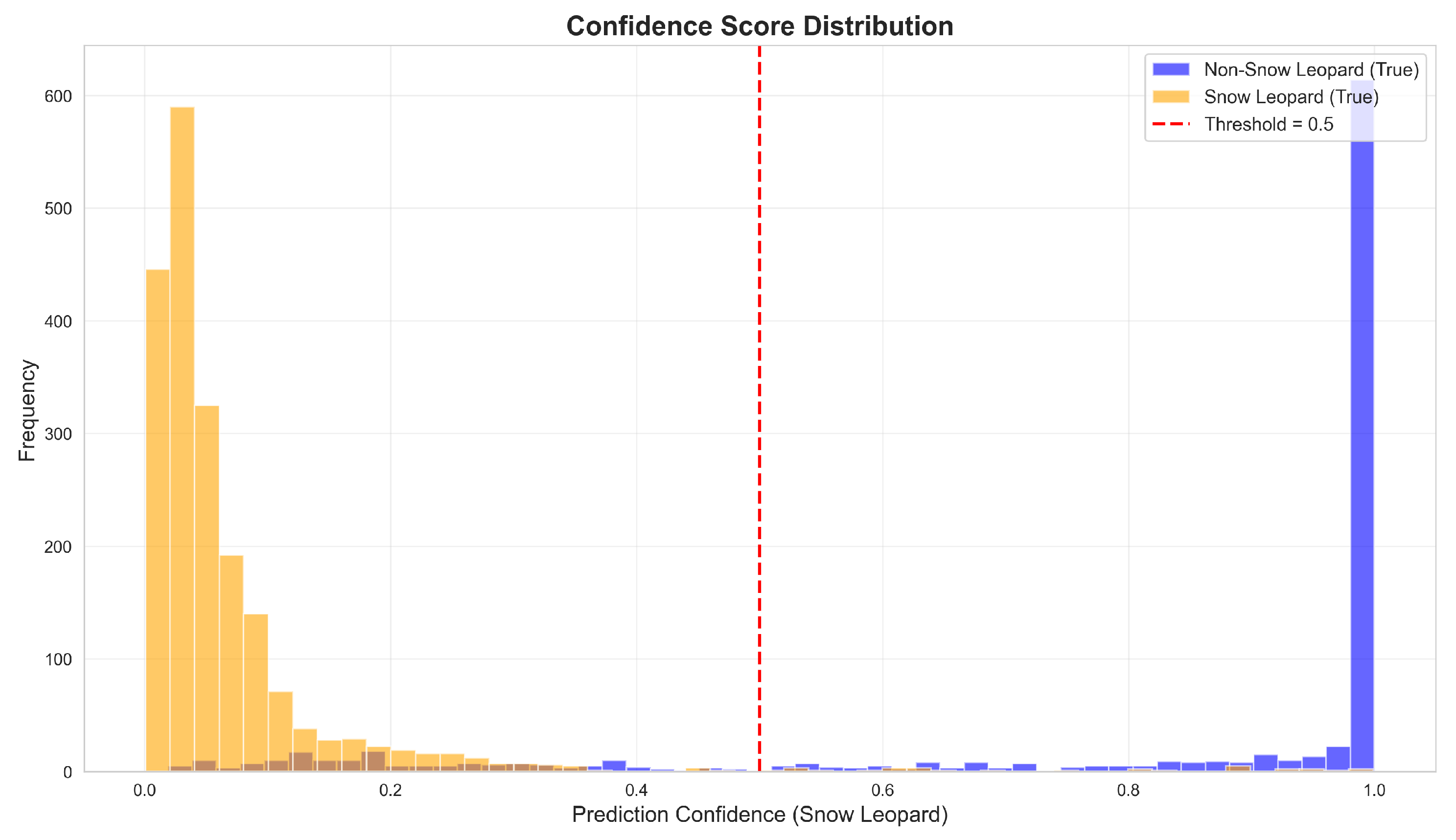

4.5. Prediction Score Distribution

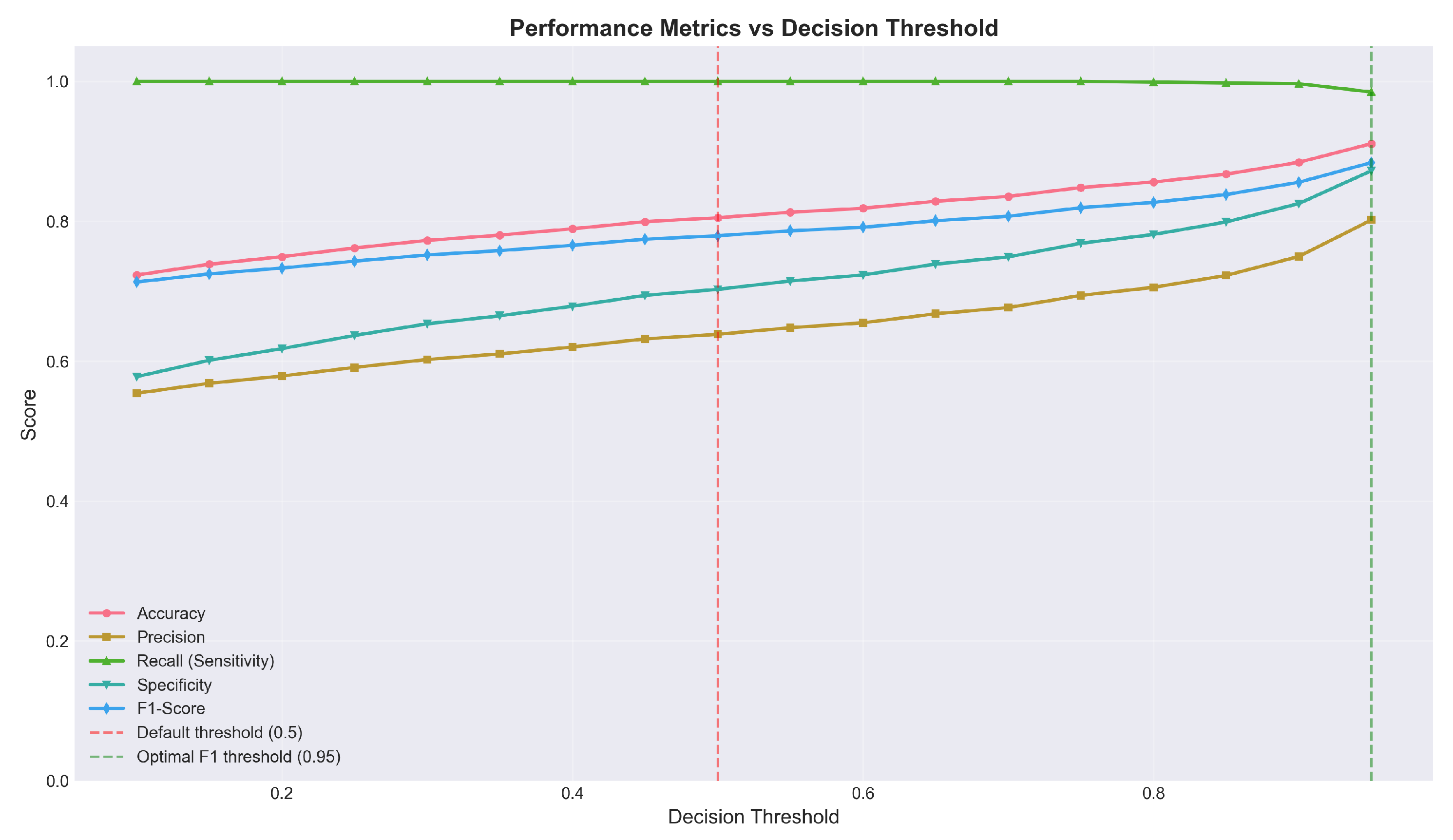

4.6. Threshold Optimization Analysis

5. Discussion

5.1. Interpretation of Results

- Cryptic background patterns: Snow-covered rocky terrain creates visual textures similar to snow leopard pelage patterns

- Partial occlusions: Images where rocks or vegetation create edge patterns resembling animal contours

- Similar-sized sympatric species: Ibex and wolves in certain poses triggered false detections

5.2. Threshold Selection for Deployment

5.3. Comparison with Prior Work

5.4. Practical Implications

- Screening efficiency: The model can process thousands of images rapidly, flagging potential snow leopard detections for expert review

- Reduced missed detections: The 99.9% sensitivity ensures virtually no snow leopard images are incorrectly discarded

- Flexible operation: Threshold adjustment enables calibration for different use cases

- Accessible deployment: The web application interface requires no technical expertise

- Correctly identify ∼250 of 250 snow leopard images

- Flag approximately 17,888 false positives for review

- Reduce manual review from 50,000 to ∼18,138 images (64% reduction)

- Correctly identify ∼248 of 250 snow leopard images (missing ∼2)

- Flag approximately 5,970 false positives

- Reduce manual review to ∼6,218 images (88% reduction)
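The arithmetic behind these two scenarios can be reproduced with a short calculation. The sketch below assumes a survey of 50,000 images containing 250 snow leopard images, as read from the bullets, and uses the rounded sensitivity and specificity pairs reported for the 0.50 and 0.95 thresholds; `review_workload` is a hypothetical helper name. Because the inputs are rounded, the first scenario yields about 17,900 false positives rather than exactly 17,888.

```python
def review_workload(total: int, positives: int,
                    sensitivity: float, specificity: float) -> dict:
    """Estimate how many images remain for expert review after screening."""
    negatives = total - positives
    detected = positives * sensitivity              # true positives kept
    false_positives = negatives * (1.0 - specificity)
    to_review = detected + false_positives          # everything the model flags
    return {
        "detected": detected,
        "false_positives": false_positives,
        "to_review": to_review,
        "reduction": 1.0 - to_review / total,
    }


if __name__ == "__main__":
    scenarios = {
        "threshold 0.50": (0.999, 0.64),
        "threshold 0.95": (0.99, 0.88),
    }
    for label, (sens, spec) in scenarios.items():
        r = review_workload(50_000, 250, sens, spec)
        print(f"{label}: review {r['to_review']:.0f} images, "
              f"{r['reduction']:.0%} reduction")
```

The calculation makes the dependence on prevalence explicit: with only 0.5% of images containing a snow leopard, the review workload is driven almost entirely by specificity.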

5.5. Significance for Kyrgyzstan

- The importance of the country as a key snow leopard habitat (8–10% of the global population)

- Limited technological resources available to local conservation organizations

- The urgent need to scale monitoring efforts in response to climate change and anthropogenic pressures

5.6. Limitations

- Dataset constraints: The limited availability of verified wild camera trap images necessitated supplementation with controlled-environment captures. While we implemented strict protocols to prevent data leakage between training and evaluation sets, the model’s generalization to novel wild individuals requires continued validation.

- Individual diversity: A substantial portion of positive training samples were derived from two captive individuals. Although data augmentation was employed to increase variability, the model’s ability to generalize across the full phenotypic diversity of wild snow leopard populations warrants further investigation.

- Geographic specificity: Training data was drawn primarily from Kyrgyz contexts; performance in other range countries may differ due to variation in camera trap equipment, environmental conditions, and background characteristics.

- Specificity-sensitivity trade-off: The current model prioritizes sensitivity; applications requiring higher precision may need threshold adjustment or architectural modifications.

- Temporal validation: Long-term performance stability across seasonal conditions and equipment variations requires ongoing monitoring.

5.7. Future Directions

- Dataset expansion: Continued collection of verified camera trap images from diverse locations will enable model refinement and improved generalization

- Multi-species classification: Extending the system to identify prey species (ibex, argali) and potential competitors (wolves) would enhance ecological utility

- Individual identification: Integration with pattern-matching algorithms (e.g., Whiskerbook) could enable automated individual identification for population studies

- Active learning: Implementing human-in-the-loop workflows where uncertain predictions trigger expert review could accelerate dataset growth while maintaining quality

- Edge deployment: Optimizing the model for deployment on camera trap hardware could enable real-time detection and selective image transmission, reducing data storage and transfer requirements

- Regional validation: Systematic testing across snow leopard range countries would establish generalizability and identify context-specific adaptations

6. Conclusion

- AUC-ROC of 97.25%, demonstrating excellent discriminative capacity

- Sensitivity of 99.9%, missing only 1 of 916 snow leopard images

- Flexible threshold optimization enabling specificity adjustment from 64% to 88% while maintaining >99% sensitivity

- Practical deployment as an accessible web application

References

- Beery, Sara, Grant Van Horn, and Pietro Perona. 2018. Recognition in terra incognita. In Proceedings of the European Conference on Computer Vision (ECCV), pp. 456–473.

- Bohnett, Eve, Mark Holton, Mohammad Sadegh Norouzzadeh, Sharon Rosen, Koustubh Sharma, Örjan Johansson, Symon Oduor, et al. 2022. Human expertise combined with artificial intelligence improves performance of snow leopard camera trap studies. Global Ecology and Conservation 41, e02350. [CrossRef]

- Buda, Mateusz, Atsuto Maki, and Maciej A. Mazurowski. 2018. A systematic study of the class imbalance problem in convolutional neural networks. Neural Networks 106, 249–259. [CrossRef]

- Chen, Yue, Jing Li, Xiaoming Zhang, and Kun Wang. 2021. MobileNet-based wildlife classification for edge computing applications. Ecological Informatics 64, 101376. [CrossRef]

- Cui, Yin, Menglin Jia, Tsung-Yi Lin, Yang Song, and Serge Belongie. 2019. Class-balanced loss based on effective number of samples. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 9268–9277. [CrossRef]

- Cunha, Fabrício, Jorge Chicaiza, and Eulanda M. Dos Santos. 2021. Addressing class imbalance in camera trap image classification. Ecological Informatics 64, 101367. [CrossRef]

- Deng, Jia, Wei Dong, Richard Socher, Li-Jia Li, Kai Li, and Li Fei-Fei. 2009. ImageNet: A large-scale hierarchical image database. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 248–255. [CrossRef]

- Howard, Andrew G., Menglong Zhu, Bo Chen, Dmitry Kalenichenko, Weijun Wang, Tobias Weyand, Marco Andreetto, and Hartwig Adam. 2017. MobileNets: Efficient convolutional neural networks for mobile vision applications. arXiv preprint arXiv:1704.04861.

- Hu, Jie, Li Shen, and Gang Sun. 2018. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 7132–7141. [CrossRef]

- Khanal, Saroj, Shant Sharma, and Tej Bahadur Thapa. 2020. Applying deep learning for snow leopard detection from camera trap images in Nepal. Conservation Science and Practice 2(11), e289. [CrossRef]

- Lin, Tsung-Yi, Priya Goyal, Ross Girshick, Kaiming He, and Piotr Dollár. 2017. Focal loss for dense object detection. In Proceedings of the IEEE International Conference on Computer Vision, pp. 2980–2988. [CrossRef]

- McCarthy, Tom, David Mallon, Rodney Jackson, Peter Zahler, and Kyle McCarthy. 2017. Panthera uncia. The IUCN Red List of Threatened Species 2017: e.T22732A50664030. [CrossRef]

- Norouzzadeh, Mohammad Sadegh, Anh Nguyen, Margaret Kosmala, Alexandra Swanson, Meredith S. Palmer, Craig Packer, and Jeff Clune. 2018. Automatically identifying, counting, and describing wild animals in camera-trap images with deep learning. Proceedings of the National Academy of Sciences 115(25), E5716–E5725. [CrossRef]

- Sandler, Mark, Andrew Howard, Menglong Zhu, Andrey Zhmoginov, and Liang-Chieh Chen. 2018. MobileNetV2: Inverted residuals and linear bottlenecks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 4510–4520. [CrossRef]

- Schneider, Stefan, Graham W. Taylor, and Stefan C. Kremer. 2020. Deep learning object detection methods for ecological camera trap data. In Proceedings of the 15th Conference on Computer and Robot Vision, pp. 321–328. [CrossRef]

- Sharma, Koustubh, Girish A. Punjabi, Rodney Jackson, and Charudutt Mishra. 2023. Camera trapping for snow leopard population assessment: Advances and challenges. Oryx 57(2), 145–157. [CrossRef]

- Snow Leopard Trust. 2022. State of the snow leopard report: Kyrgyzstan. Technical report, Snow Leopard Trust, Seattle, WA.

- Tabak, Michael A., Mohammad S. Norouzzadeh, David W. Wolfson, Steven J. Sweeney, Kurt C. VerCauteren, Nathan P. Snow, Joseph M. Halseth, Paul A. Di Salvo, Jesse S. Lewis, Michael D. White, Ben Teton, James C. Beasley, Peter E. Schlichting, Raoul K. Boughton, Bethany Wight, Eric S. Newkirk, Jacob S. Ivan, Eric A. Odell, Ryan K. Brook, Paul M. Merkle, Gary W. Witmer, and Ryan S. Miller. 2019. Machine learning to classify animal species in camera trap images: Applications in ecology. Methods in Ecology and Evolution 10(4), 585–590. [CrossRef]

- Tan, Ming, Xiaoyu Chen, Yue Liu, and Jie Zhang. 2022. Animal detection and classification from camera trap images using different mainstream object detection architectures. Animals 12(15), 1976. [CrossRef]

- Tan, Mingxing and Quoc V. Le. 2019. EfficientNet: Rethinking model scaling for convolutional neural networks. In International Conference on Machine Learning, pp. 6105–6114.

- Wang, Fei, Mengqing Jiang, Chen Qian, Shuo Yang, Cheng Li, Honggang Zhang, Xiaogang Wang, and Xiaoou Tang. 2017. Residual attention network for image classification. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 3156–3164. [CrossRef]

- Whytock, Robin C., Joeri Świeżewski, Jeanine A. Zwerts, Tadeusz Bara-Słupski, Aurélien F. Koumba Pambo, Monika Rogala, Laila Bahaa-el-din, Kelly Boekee, Stephanie Brittain, et al. 2021. Robust ecological analysis of camera trap data labelled by a machine learning model. Methods in Ecology and Evolution 12(6), 1080–1092. [CrossRef]

- Woo, Sanghyun, Jongchan Park, Joon-Young Lee, and In So Kweon. 2018. CBAM: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV), pp. 3–19.

- Zhang, Xiaoming, Yue Chen, and Wei Li. 2022. Attention-enhanced MobileNet for wildlife detection in camera trap imagery. IEEE Access 10, 45672–45683. [CrossRef]

- Zhu, Xiaolong, Lei Wang, and Hao Chen. 2021. Channel attention for fine-grained bird species classification. Pattern Recognition Letters 147, 85–92. [CrossRef]

| Class | Count | Percentage |
|---|---|---|
| Snow Leopard Present | 916 | 34.4% |
| Snow Leopard Absent | 1,744 | 65.6% |
| Total | 2,660 | 100% |

| Component | Configuration |
|---|---|
| Feature Extractor | MobileNetV2 (ImageNet pre-trained, frozen) |
| Attention Module | Squeeze-and-Excitation (reduction ratio: 16) |
| Global Pooling | Adaptive Average Pooling |
| Classifier | Fully Connected with Dropout (0.3) |
| Output Activation | Sigmoid |
| Total Parameters | 2,621,762 |
| Trainable Parameters | 363,010 (13.8%) |
| Frozen Parameters | 2,258,752 (86.2%) |

| Hyperparameter | Value |
|---|---|
| Optimizer | Adam |
| Learning Rate | |
| Learning Rate Schedule | ReduceLROnPlateau (factor: 0.5, patience: 5) |
| Minimum Learning Rate | |
| Batch Size | 16 |
| Epochs | 50 |
| Early Stopping Patience | 15 epochs |
| L2 Regularization | |
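The interaction between the plateau-based learning rate schedule and early stopping can be illustrated with a small simulation. This is a simplified sketch, not the training code: the reduction factor (0.5), schedule patience (5), early-stopping patience (15), and 50-epoch cap come from the table, while the initial and minimum learning rates are placeholder values chosen for illustration because the table leaves them blank. The validation losses are synthetic and `simulate_training` is a hypothetical name.

```python
def simulate_training(val_losses, initial_lr, min_lr,
                      factor=0.5, lr_patience=5, stop_patience=15):
    """Return (epoch, learning_rate) pairs until early stopping triggers."""
    lr = initial_lr
    best = float("inf")
    since_best = 0      # epochs without improvement (early stopping)
    since_reduce = 0    # epochs without improvement since the last LR cut
    history = []
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            best = loss
            since_best = 0
            since_reduce = 0
        else:
            since_best += 1
            since_reduce += 1
        # Plateau rule: cut the learning rate after `lr_patience` stale epochs.
        if since_reduce >= lr_patience:
            lr = max(lr * factor, min_lr)
            since_reduce = 0
        history.append((epoch, lr))
        # Early stopping: give up after `stop_patience` stale epochs.
        if since_best >= stop_patience:
            break
    return history


if __name__ == "__main__":
    # Synthetic curve: 10 improving epochs, then a plateau (50 epochs total).
    losses = [1.0 - 0.05 * i for i in range(10)] + [0.6] * 40
    # 1e-3 and 1e-6 are placeholders, not values from the paper.
    for epoch, lr in simulate_training(losses, initial_lr=1e-3, min_lr=1e-6):
        print(epoch, lr)
```

With a schedule patience of 5 and a stopping patience of 15, the learning rate can be halved up to three times on a flat plateau before training stops, so the two mechanisms act in sequence rather than competing. Framework implementations differ in how they count stale epochs, so exact epoch numbers may shift by one.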

| Augmentation | Parameter |
|---|---|
| Rotation | ±30° |
| Width Shift | |
| Height Shift | |
| Shear | |
| Zoom | |
| Horizontal Flip | Yes |
| Brightness | 80%–120% |

| Metric | Mean | Std | Min | Max |
|---|---|---|---|---|
| Accuracy | 76.4% | ±3.7% | 70.9% | 82.1% |
| Sensitivity | 99.9% | ±0.2% | 99.5% | 100% |
| Specificity | 64.0% | ±5.6% | 55.5% | 72.8% |
| Precision | 59.6% | ±3.8% | 54.3% | 65.8% |
| F1-Score | 74.6% | ±3.0% | 70.4% | 79.4% |
| AUC-ROC | 93.95% | ±1.9% | 92.2% | 96.8% |

| | Predicted: No Leopard | Predicted: Leopard |
|---|---|---|
| Actual: No Leopard | 1,117 (TN) | 627 (FP) |
| Actual: Leopard | 1 (FN) | 915 (TP) |

| Threshold | Sensitivity | Specificity | Accuracy | F1-Score |
|---|---|---|---|---|
| 0.50 (default) | 99.9% | 64.0% | 76.4% | 74.6% |
| 0.70 | ∼99.5% | ∼75% | ∼84% | ∼82% |
| 0.90 | ∼99% | ∼86% | ∼90% | ∼87% |
| 0.95 (optimal) | ∼99% | ∼88% | ∼91% | ∼88% |
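A threshold sweep of this kind can be sketched as follows. The selection rule used here, picking the candidate threshold with the highest specificity among those that keep sensitivity at or above a target, is an assumption about how an "optimal" threshold might be chosen and is not confirmed by the source. The scores and labels are synthetic, and `sens_spec` and `pick_threshold` are hypothetical names.

```python
def sens_spec(scores, labels, threshold):
    """Sensitivity and specificity when predicting positive at score >= threshold."""
    tp = sum(1 for s, y in zip(scores, labels) if y == 1 and s >= threshold)
    fn = sum(1 for s, y in zip(scores, labels) if y == 1 and s < threshold)
    tn = sum(1 for s, y in zip(scores, labels) if y == 0 and s < threshold)
    fp = sum(1 for s, y in zip(scores, labels) if y == 0 and s >= threshold)
    return tp / (tp + fn), tn / (tn + fp)


def pick_threshold(scores, labels, candidates, min_sensitivity=0.99):
    """Return (threshold, sensitivity, specificity) or None if no candidate qualifies."""
    best = None
    for t in sorted(candidates):
        sens, spec = sens_spec(scores, labels, t)
        # Keep the most specific threshold that still meets the sensitivity floor;
        # ties go to the higher threshold because candidates are sorted ascending.
        if sens >= min_sensitivity and (best is None or spec >= best[2]):
            best = (t, sens, spec)
    return best


if __name__ == "__main__":
    # Synthetic scores: 1 = snow leopard present, 0 = absent.
    scores = [0.99, 0.97, 0.96, 0.98, 0.60, 0.92, 0.30, 0.10]
    labels = [1, 1, 1, 1, 0, 0, 0, 0]
    print(pick_threshold(scores, labels, [0.50, 0.70, 0.90, 0.95]))
```

Fixing a sensitivity floor first and then maximizing specificity matches the screening use case, where a missed snow leopard image is costlier than an extra image sent for expert review.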

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).