Submitted: 28 November 2025

Posted: 01 December 2025


Abstract

Keywords:

1. Introduction

1.1. Motivation and Context

1.2. Problem Statement

1.3. Research Objectives and Contributions

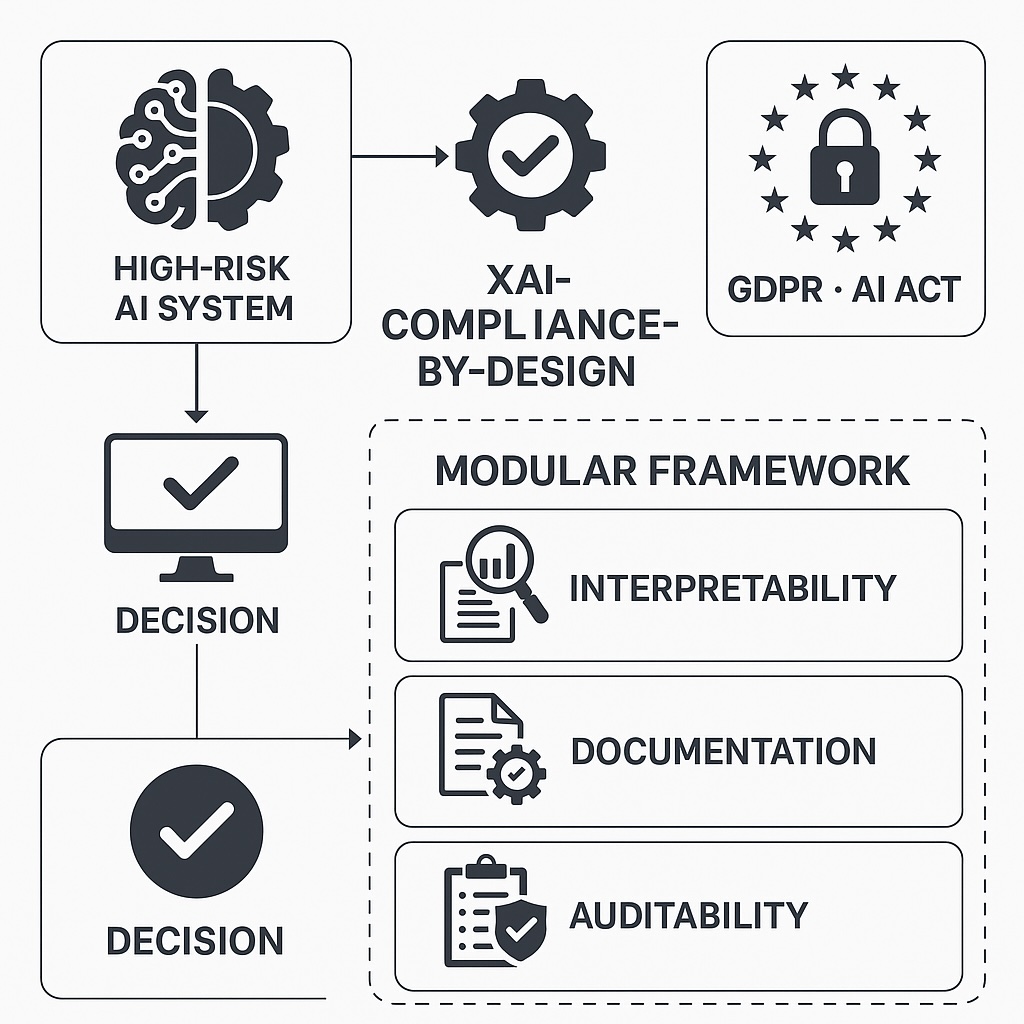

- RQ1: How can XAI techniques, compliance-by-design principles and trustworthy MLOps practices be integrated into a single modular framework for high-risk AI systems?

- RQ2: To what extent can such a framework produce concrete, verifiable artefacts—such as models, explanations, logs and evidence bundles—that support GDPR- and AI Act-aligned auditability and accountability?

- RQ3: How does the proposed framework behave when instantiated in an anomaly detection scenario using a synthetic, security-relevant tabular dataset, in terms of predictive performance, explainability and drift monitoring?

1. A conceptual XAI-Compliance-by-Design framework linking the lifecycle of high-risk AI systems to regulatory requirements from the GDPR, the AI Act and ISO/IEC 42001, emphasising traceability, oversight and risk management.

2. A modular, MLOps-oriented architecture integrating data preprocessing, model training, explainability, drift monitoring and compliance logging, designed to be implementable with widely used open-source tools.

3. A technical–regulatory correspondence matrix mapping specific metrics and artefacts (such as SHAP reports, drift statistics, model lineage logs and evidence bundles) to relevant legal and standardisation provisions.

4. An end-to-end proof-of-concept implementation in Python, instantiated on a synthetic, IDS-inspired anomaly detection dataset using a Random Forest classifier combined with SHAP and LIME explanations, producing versioned models, explanation artefacts, drift indicators and tamper-evident evidence bundles. This implementation serves as an illustrative toy example to demonstrate implementability rather than to optimise intrusion detection performance.

5. An empirical assessment of model performance, global and local explainability and stability under dataset shift and distributional drift, discussing the implications for trustworthy MLOps, regulatory governance and European digital sovereignty.

1.4. Structure of the Paper

2. Related Work and State of the Art

2.1. Compliance-by-Design and AI Accountability

2.2. Comparative Analysis of Existing Frameworks

2.3. Regulatory and Ethical Foundations: GDPR, AI Act, and Digital Sovereignty

2.4. Research Gap and Conceptual Positioning

3. Methodology

3.1. Framework-Oriented Methodology and MLOps Pipeline

- Environment configuration and context registration: initialisation of the execution environment, creation of working directories, configuration of random seeds and registration of key parameters (e.g., data sources, model family, hyperparameter ranges) in a compliance log. Each execution is assigned a unique identifier (RUN_ID) that links all subsequent artefacts, including software versions and configuration files.

- Data handling and preprocessing: ingestion or generation of data, separation into features and target variables, definition of numerical and categorical attributes and configuration of preprocessing steps (e.g., ColumnTransformer, scaling, encoding). The resulting schema and data statistics are recorded to support documentation obligations under the AI Act and ISO/IEC 42001 regarding data quality, representativeness and preprocessing.

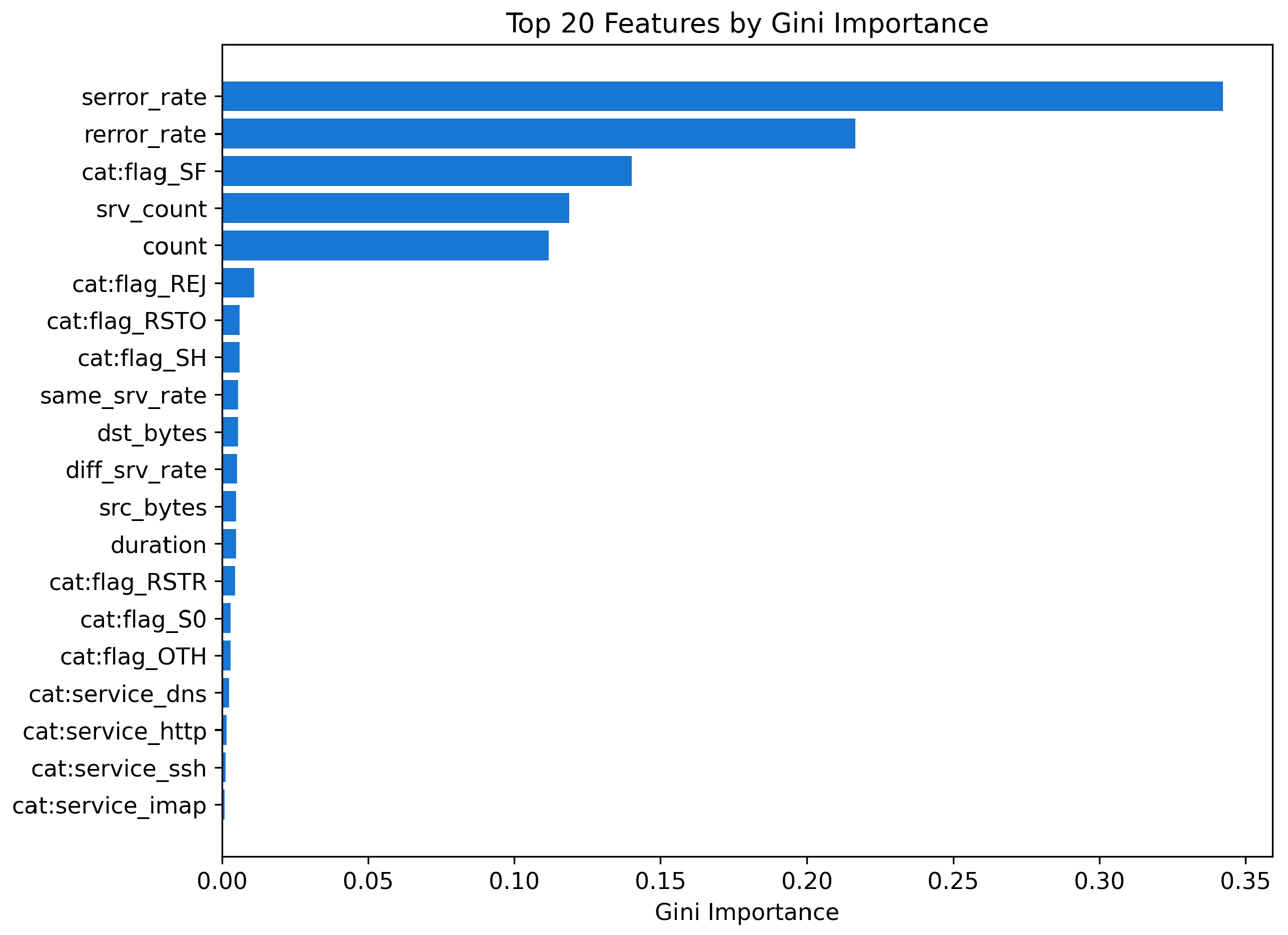

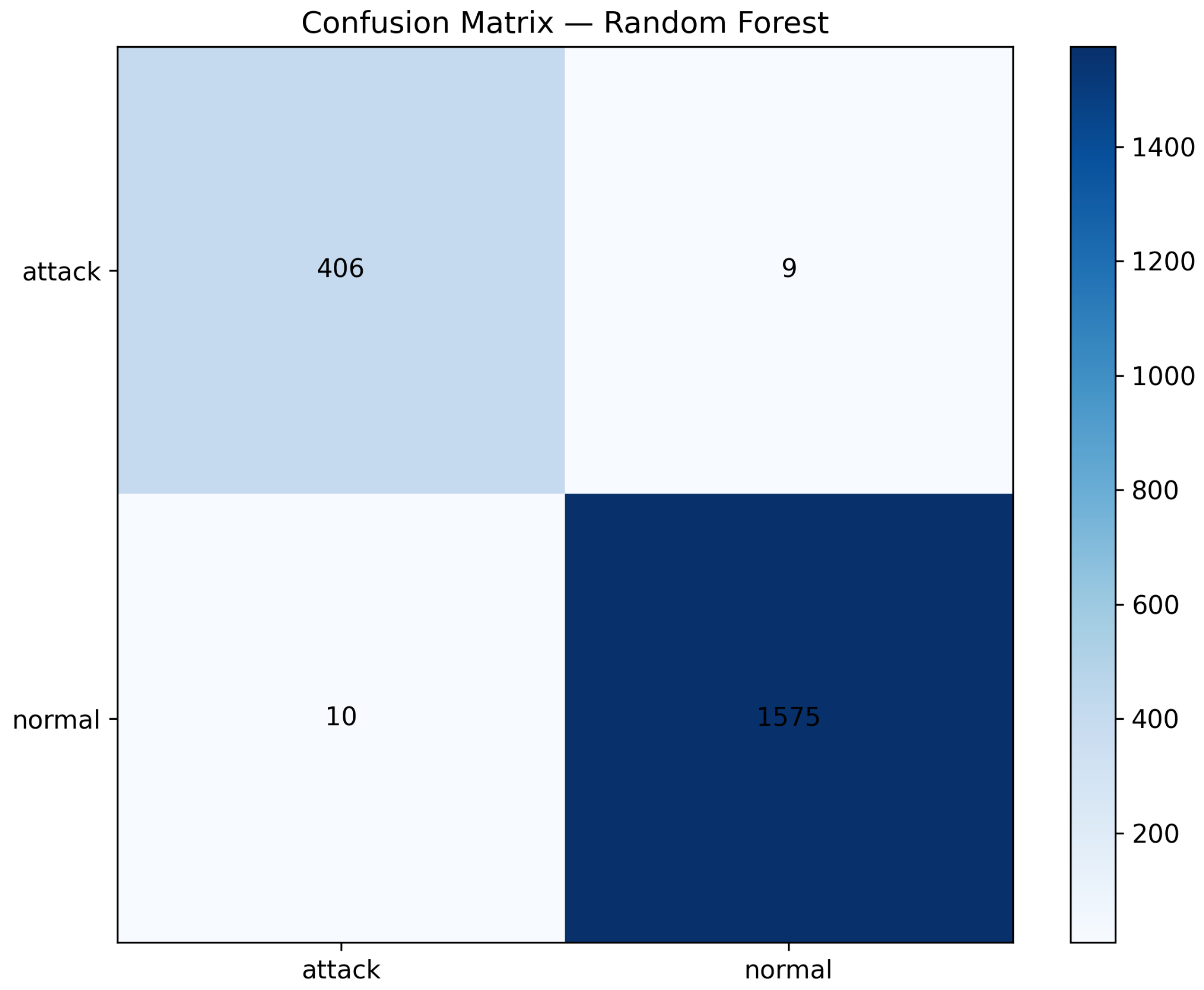

- Model training and validation: training of a classification model encapsulated in a scikit-learn-based pipeline, with standard train–test splitting and computation of performance metrics (accuracy, precision, recall, F1-score and AUC-ROC). The choice of model family (here, a Random Forest) is illustrative and not essential to the framework, which can be instantiated with alternative classifiers that fit the same pipeline structure.

- Explainability and drift monitoring: generation of global and local explanations (SHAP, LIME) and computation of basic drift indicators on held-out or temporally segmented data, in order to demonstrate how explainability and monitoring artefacts are integrated into the compliance workflow. These artefacts are later used as inputs for audit-oriented documentation, model risk analysis and human oversight.

- Evidence bundle construction: aggregation of models, metrics, explanation outputs, drift statistics and compliance logs into structured evidence bundles (e.g., JSON manifests and directory structures) that can be inspected by auditors or regulators. These bundles are designed to be directly reusable as building blocks for technical documentation dossiers and conformity assessment under the AI Act.
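The first step above (context registration under a shared RUN_ID) can be sketched with the standard library alone. This is a minimal illustration, not the authors' implementation: the function names `new_run_context` and `log_event`, the field names and the example parameter values are all assumptions introduced here.

```python
import json
import platform
import sys
import uuid
from datetime import datetime, timezone


def new_run_context(params: dict, seed: int = 42) -> dict:
    """Create the context record that opens a pipeline run (hypothetical layout)."""
    return {
        "run_id": uuid.uuid4().hex,  # unique identifier shared by all artefacts
        "started_at": datetime.now(timezone.utc).isoformat(),
        "seed": seed,
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "params": params,
    }


def log_event(log: list, run_id: str, stage: str, payload: dict) -> dict:
    """Append one compliance event; every event carries the RUN_ID."""
    event = {
        "run_id": run_id,
        "seq": len(log),
        "stage": stage,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "payload": payload,
    }
    log.append(event)
    return event


compliance_log: list = []
# Parameter values below are placeholders chosen for illustration.
ctx = new_run_context({"model_family": "RandomForest", "data_source": "synthetic"})
log_event(compliance_log, ctx["run_id"], "environment", ctx)
log_event(compliance_log, ctx["run_id"], "preprocessing", {"schema_recorded": True})
log_event(compliance_log, ctx["run_id"], "training", {"model_path": "models/pipeline.bin"})

# One JSON object per line keeps the log easy to append to and to diff.
serialised = "\n".join(json.dumps(e, sort_keys=True) for e in compliance_log)
```

Because every event repeats the same `run_id`, filtering the log by that value is enough to recover all artefacts of one execution in order.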

3.2. Illustrative Case Study: Synthetic IDS-like Scenario

- Numerical and categorical features: continuous predictors (e.g., connection duration, packet and byte counts) and categorical variables (e.g., protocol type, service, flags), processed via a ColumnTransformer with OneHotEncoder for categorical attributes and passthrough for numerical attributes.

- Binary target variable: a label column (labels) representing normal and attack traffic, used solely to demonstrate how the framework manages supervised classification tasks in an IDS-like context.

- Class imbalance: a minority proportion of attack events (around 20%), echoing the typical imbalance found in many operational network settings, without claiming to reproduce any specific real-world environment.
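The preprocessing described above (one-hot encoding for categorical attributes, passthrough for numerical ones, wrapped in a single scikit-learn pipeline with a Random Forest) might look roughly as follows. The column names, the six toy rows and the hyperparameters are assumptions made for this sketch; only the use of `ColumnTransformer`, `OneHotEncoder` and passthrough comes from the text.

```python
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.ensemble import RandomForestClassifier
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder

# Hypothetical column names, chosen only for illustration.
numeric_cols = ["duration", "src_bytes", "dst_bytes"]
categorical_cols = ["protocol_type", "service", "flag"]

# Six hand-written rows standing in for the synthetic dataset.
toy = pd.DataFrame({
    "duration": [0.1, 2.3, 0.4, 5.0, 0.2, 7.5],
    "src_bytes": [120, 4000, 98, 15000, 110, 22000],
    "dst_bytes": [300, 0, 250, 0, 310, 0],
    "protocol_type": ["tcp", "tcp", "udp", "tcp", "udp", "tcp"],
    "service": ["http", "ftp", "dns", "ssh", "dns", "ftp"],
    "flag": ["SF", "REJ", "SF", "S0", "SF", "REJ"],
    "labels": [0, 1, 0, 1, 0, 1],
})

# Categorical columns are one-hot encoded; numerical columns pass through unchanged.
preprocess = ColumnTransformer([
    ("cat", OneHotEncoder(handle_unknown="ignore"), categorical_cols),
    ("num", "passthrough", numeric_cols),
])

# Preprocessing and classifier live in one object, so they are versioned together.
pipeline = Pipeline([
    ("preprocess", preprocess),
    ("clf", RandomForestClassifier(n_estimators=25, random_state=0)),
])

X, y = toy.drop(columns="labels"), toy["labels"]
pipeline.fit(X, y)

# A small schema record of the kind that could be written to the compliance log.
schema = {
    "numeric": numeric_cols,
    "categorical": categorical_cols,
    "n_transformed_features": int(
        pipeline.named_steps["preprocess"].transform(X).shape[1]
    ),
}
```

Keeping the encoder inside the pipeline means the serialised model carries its own preprocessing, which simplifies lineage tracking.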

3.3. Explainability Layer: SHAP and LIME

- SHAP: Shapley values are computed via a TreeExplainer on a representative subset of the transformed dataset. The outputs include global summary plots and feature importance statistics, which demonstrate how the framework can generate explanation artefacts suitable for incorporation into audit-ready evidence bundles and for assessing properties such as fidelity and stability.

- LIME: Instance-level explanations are generated using a LimeTabularExplainer, focusing on selected cases (e.g., false positives and false negatives). The goal is to show how local explanations can be captured, stored and linked to compliance events, supporting human oversight and documentation of decision rationales rather than analysing any specific operational scenario.
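To make concrete what a Shapley-value attribution is, the sketch below computes exact Shapley values by brute-force enumeration of feature coalitions. It is not the TreeExplainer algorithm used in the paper and does not call the `shap` library; the toy scoring function, the feature names and the all-zero baseline are assumptions chosen so the result can be checked by hand.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values by enumerating all feature coalitions.

    Features outside a coalition are replaced by their baseline value.
    Cost grows as 2**n, so this is only usable for a handful of features.
    """
    n = len(x)
    phi = [0.0] * n

    def value(coalition):
        z = [x[j] if j in coalition else baseline[j] for j in range(n)]
        return predict(z)

    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Toy scoring function with one interaction term (purely illustrative).
def toy_model(z):
    duration, src_bytes, error_rate = z
    return 0.2 * duration + 0.5 * error_rate + 0.1 * duration * src_bytes


x = [2.0, 3.0, 1.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)

# Efficiency property: attributions sum to f(x) - f(baseline).
gap = toy_model(x) - toy_model(baseline)
```

The interaction term is split equally between the two features involved, and the attributions add up to the difference between the prediction and the baseline prediction; a property like this is what makes such outputs checkable when they are stored as evidence.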

3.4. Evaluation Criteria and Assessment Model

1. Model performance (illustrative): standard metrics such as accuracy, precision, recall, F1-score and AUC-ROC are computed to confirm that the toy model behaves in a plausible manner. These metrics are reported to contextualise the explanation and compliance artefacts and to provide a basic characterisation of predictive behaviour, not as the primary contribution of the work.

2. Explanation properties: fidelity, stability and comprehensibility are assessed qualitatively and, where applicable, via metrics inspired by recent unified evaluation frameworks for XAI [7,13]. The objective is to verify that the framework is capable of producing explanations that can be systematically documented, revisited and compared across runs, rather than to exhaustively benchmark XAI techniques.

3. Compliance and governance indicators: coverage of regulatory obligations (percentage of GDPR, AI Act and ISO/IEC 42001 requirements mapped to technical controls in the technical–regulatory correspondence matrix), completeness of evidence bundles and the ability to reconstruct, from the compliance log, the full data–model–explanation–decision lineage for a given RUN_ID. These indicators capture the extent to which the pipeline supports continuous, audit-ready governance of high-risk AI systems.
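The compliance indicators in the third criterion reduce to simple computations once the correspondence matrix and the compliance log are machine-readable. The sketch below assumes a dictionary layout for the matrix and a list-of-events layout for the log; the control names, stage names and the example entries are hypothetical.

```python
# Hypothetical excerpt of a correspondence matrix: obligation -> mapped controls.
correspondence = {
    "GDPR Art. 25": ["policy_as_code", "compliance_gate"],
    "AI Act Art. 12": ["decision_log"],
    "AI Act Art. 13": ["shap_report", "model_card"],
    "AI Act Art. 15": [],  # not yet mapped to any control
}


def compliance_coverage(matrix: dict) -> float:
    """Percentage of obligations that have at least one mapped control."""
    if not matrix:
        return 0.0
    mapped = sum(1 for controls in matrix.values() if controls)
    return 100.0 * mapped / len(matrix)


def lineage_for(log: list, run_id: str) -> list:
    """Ordered stages recorded for one RUN_ID."""
    events = [e for e in log if e["run_id"] == run_id]
    return [e["stage"] for e in sorted(events, key=lambda e: e["seq"])]


def lineage_complete(log, run_id,
                     required=("data", "model", "explanation", "decision")) -> bool:
    """True when every required stage appears in the run's lineage."""
    return set(required) <= set(lineage_for(log, run_id))


# Toy log with two interleaved runs (illustrative values only).
log = [
    {"run_id": "run-a", "seq": 0, "stage": "data"},
    {"run_id": "run-a", "seq": 1, "stage": "model"},
    {"run_id": "run-b", "seq": 0, "stage": "data"},
    {"run_id": "run-a", "seq": 2, "stage": "explanation"},
    {"run_id": "run-a", "seq": 3, "stage": "decision"},
]

coverage = compliance_coverage(correspondence)
```

In this toy case three of four obligations have a control, and only `run-a` has a complete data, model, explanation and decision lineage.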

3.5. Limitations, Ethical Considerations, and Reproducibility

4. Proposed Framework: XAI-Compliance-by-Design

4.1. Conceptual Overview and Architectural Logic

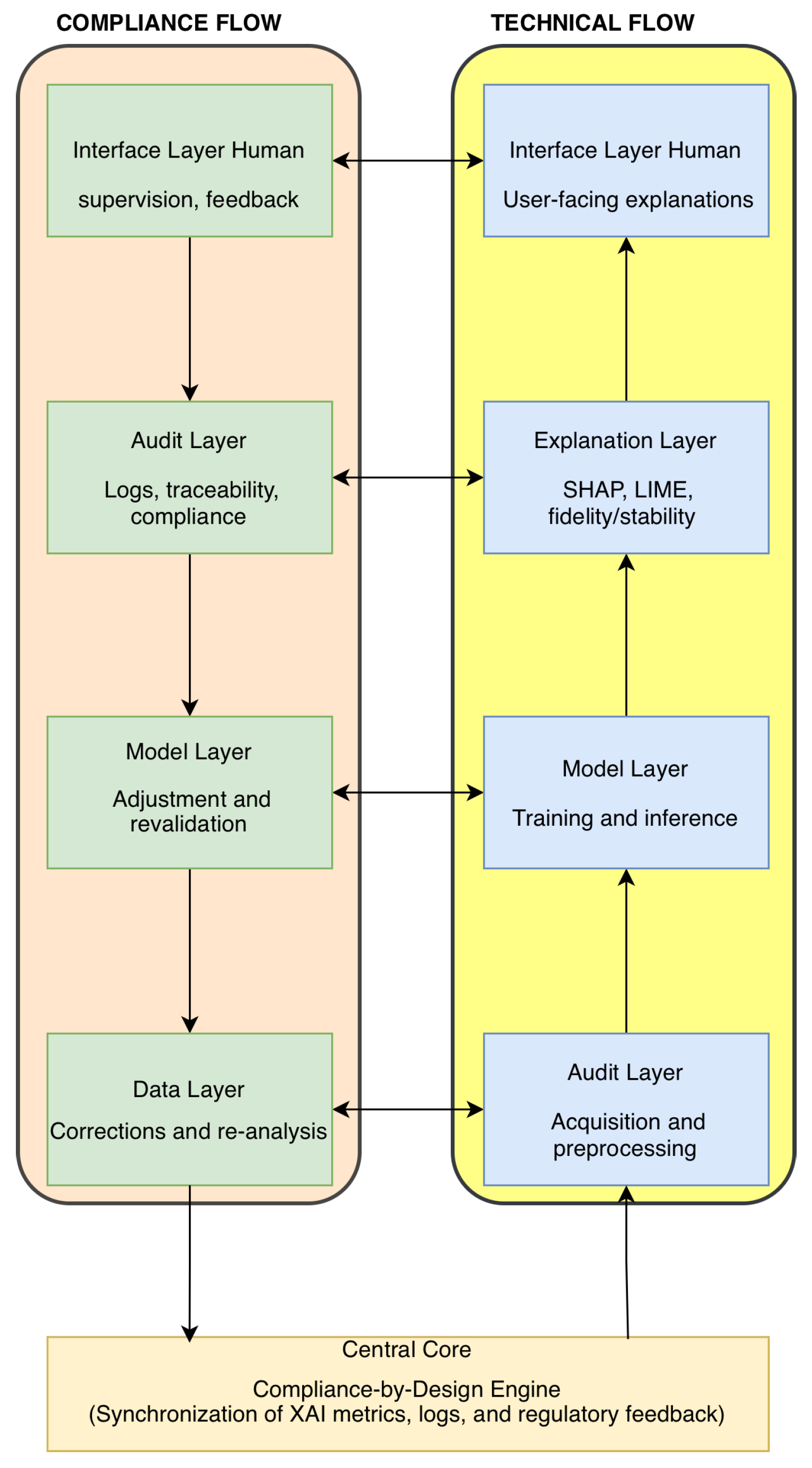

4.2. Functional Layers

- Data Layer: responsible for data acquisition, preprocessing and data quality auditing, ensuring integrity, provenance and traceability. This layer underpins compliance with GDPR Articles 5 and 25 and supports robust governance practices through data documentation, quality indicators and impact assessment inputs [24,25].

- Model Layer: covers model development, validation, deployment and monitoring, with emphasis on traceability, versioning and documentation for accountability and transparency in line with the AI Act and GDPR [1,2]. This layer supports formal revalidation processes whenever technical audits detect performance degradation, emerging risks or material changes in data or use context.

- Explanation Layer: implements explanation techniques and metrics—such as SHAP, LIME, fidelity and stability—to provide human-understandable justifications for automated decisions and to generate technical evidence for legal justification duties [26,27,28,29,30]. It directly supports transparency provisions under the GDPR and AI Act and feeds explanation artefacts into the audit and governance processes.

- Audit Layer: coordinates continuous evaluation and monitoring of compliance, automating reporting and log management to meet obligations such as responding to access or contestation requests (e.g., GDPR Article 22) and post-market monitoring under the AI Act [22,31,32]. This layer maintains integrity checks and preserves audit trails across the lifecycle, linking technical events to governance decisions.

- Interface Layer: provides interactive mechanisms for visualising explanations and supporting human oversight, thereby operationalising meaningful human intervention and accountability [17,24]. It enables human-in-the-loop review, feedback and override capabilities, which are critical in high-risk AI settings and for demonstrating effective human oversight in conformity assessments.
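One common way to obtain the integrity checks mentioned for the Audit Layer is a hash-chained log, in which each entry commits to its predecessor. The sketch below is an assumption about how this could be done with SHA-256 and is not taken from the paper; the event names and identifiers are placeholders.

```python
import hashlib
import json

GENESIS = "0" * 64  # conventional starting value for the chain


def _digest(prev_hash: str, record: dict) -> str:
    """Hash of the previous hash concatenated with a canonical JSON body."""
    body = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(chain: list, record: dict) -> dict:
    """Append a record, linking it to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, record),
    }
    chain.append(entry)
    return entry


def verify_chain(chain: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


trail: list = []
append_entry(trail, {"event": "model_promoted", "model_id": "rf-0001"})
append_entry(trail, {"event": "human_override", "decision_id": "d-42"})
append_entry(trail, {"event": "revalidation", "reason": "drift_alert"})

intact = verify_chain(trail)
trail[1]["record"]["decision_id"] = "d-43"  # simulate tampering
tampered_detected = not verify_chain(trail)
```

A chain like this detects modification but does not by itself prevent wholesale replacement of the log; anchoring the latest hash in an external system would address that.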

4.3. Technical and Regulatory Correspondence Matrix

4.4. Integration Within MLOps Pipelines

- Policy-aware CI/CD stages: CI pipelines are extended with policy linting, unit tests for explainability components and compliance gates. For instance, a build may be blocked if explanation reports are missing, if drift metrics exceed configured thresholds or if mandatory documentation artefacts (e.g., model cards, data schemas) are absent [28,41].

- Evidence-aware training stages: during training, the pipeline requires data snapshots, lineage metadata and explanation outputs to be stored in structured locations and referenced in the compliance log. This enforces the generation of audit-ready artefacts as a condition for promoting models to later stages.

- Governance-aware deployment stages: deployment pipelines enforce policies that prevent promotion of models lacking human override mechanisms, decision provenance logging or post-deployment monitoring hooks. Candidate releases are evaluated against the correspondence matrix and CDE indicators before approval [33,42].

- Post-deployment observability and revalidation: in production, telemetry collectors feed drift detectors, explainers and governance dashboards. Periodic revalidation routines reassess model performance and explanation properties; where appropriate, audit metadata can be anchored on immutable ledgers (e.g., blockchain-based records) to reinforce integrity and non-repudiation [43].
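The gating logic described in the stages above (block a build when explanation reports are missing, drift exceeds a threshold, or no human override exists) can be expressed as a small policy check. The class names, artefact names and the 0.2 drift threshold are assumptions for this sketch.

```python
from dataclasses import dataclass, field


@dataclass
class ReleaseCandidate:
    artefacts: set            # artefact names present in the evidence bundle
    drift_scores: dict        # feature name -> drift statistic
    has_human_override: bool = False


@dataclass
class GatePolicy:
    # Required artefact names are hypothetical labels, not a fixed standard.
    required_artefacts: set = field(
        default_factory=lambda: {"explanation_report", "model_card", "data_schema"}
    )
    max_drift: float = 0.2    # assumed threshold


def evaluate_gate(candidate: ReleaseCandidate, policy: GatePolicy) -> list:
    """Return the list of violations; an empty list means the gate passes."""
    violations = []
    for name in sorted(policy.required_artefacts - candidate.artefacts):
        violations.append(f"missing artefact: {name}")
    for feature, score in sorted(candidate.drift_scores.items()):
        if score > policy.max_drift:
            violations.append(f"drift above threshold: {feature}={score:.2f}")
    if not candidate.has_human_override:
        violations.append("no human override mechanism")
    return violations


policy = GatePolicy()
good = ReleaseCandidate(
    artefacts={"explanation_report", "model_card", "data_schema"},
    drift_scores={"duration": 0.05},
    has_human_override=True,
)
bad = ReleaseCandidate(
    artefacts={"model_card"},
    drift_scores={"duration": 0.05, "src_bytes": 0.31},
    has_human_override=False,
)

good_result = evaluate_gate(good, policy)
bad_result = evaluate_gate(bad, policy)
```

Returning the full list of violations, rather than failing on the first one, gives the CI job a complete report to attach to the build record.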

5. Implementation and Experimental Validation

5.1. Implementation Overview

- data_lake/: frozen datasets and transformed feature matrices;

- models/: serialised pipelines that encapsulate both preprocessing and classifier;

- evidence_bundles/: explanation plots, drift reports, compliance logs and JSON manifests that aggregate technical evidence;

- decision_dossiers/: machine-readable deployment decisions and associated justifications.
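A JSON manifest over this directory layout can make an evidence bundle tamper-evident by recording a SHA-256 digest per file. The sketch below builds the four directories in a temporary folder; the file names and contents are placeholders, and the manifest fields are an assumed layout rather than the paper's format.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def sha256_file(path: Path) -> str:
    """Stream a file through SHA-256 and return the hex digest."""
    h = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def build_manifest(root: Path, run_id: str) -> dict:
    """List every file under root with its digest (the manifest itself excluded)."""
    files = sorted(
        p for p in root.rglob("*") if p.is_file() and p.name != "manifest.json"
    )
    entries = [
        {
            "path": p.relative_to(root).as_posix(),
            "sha256": sha256_file(p),
            "bytes": p.stat().st_size,
        }
        for p in files
    ]
    return {"run_id": run_id, "files": entries}


def verify_manifest(root: Path, manifest: dict) -> list:
    """Paths that are missing or whose current hash differs from the manifest."""
    bad = []
    for entry in manifest["files"]:
        p = root / entry["path"]
        if not p.is_file() or sha256_file(p) != entry["sha256"]:
            bad.append(entry["path"])
    return bad


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    for folder in ("data_lake", "models", "evidence_bundles", "decision_dossiers"):
        (root / folder).mkdir()
    # Placeholder artefacts standing in for real pipeline outputs.
    (root / "models" / "pipeline.bin").write_bytes(b"placeholder model bytes")
    (root / "evidence_bundles" / "drift_report.json").write_text('{"psi": 0.03}')
    (root / "decision_dossiers" / "decision.json").write_text('{"approved": true}')

    manifest = build_manifest(root, run_id="demo-run")
    (root / "evidence_bundles" / "manifest.json").write_text(
        json.dumps(manifest, indent=2)
    )
    clean = verify_manifest(root, manifest)

    # Editing an artefact after the manifest was written is detected.
    (root / "evidence_bundles" / "drift_report.json").write_text('{"psi": 0.30}')
    changed = verify_manifest(root, manifest)
```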

5.2. Framework Instantiation in a Synthetic IDS-like Scenario

- numerical attributes representing connection duration, byte volumes, local counts of recent connections and rate-based indicators (e.g., error and service ratios);

- categorical attributes modelling protocol type, service and connection flag, with basic consistency constraints (e.g., http, ftp and ssh mapped to tcp, dns mostly mapped to udp);

- a binary target label (labels) distinguishing normal and attack traffic, with an intentionally imbalanced class distribution of approximately 80% normal and 20% attack.
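A generator with these properties can be sketched with Python's `random` module. Only the attribute categories, the service-to-protocol constraints and the roughly 80/20 class split come from the description above; the column names, the sampling distributions, their parameters and the 90% share of dns over udp are assumptions made for illustration.

```python
import random

# Consistency constraint from the text: these services always run over tcp.
SERVICE_PROTOCOL = {"http": "tcp", "ftp": "tcp", "ssh": "tcp"}


def make_record(rng: random.Random, attack_rate: float = 0.2) -> dict:
    """Draw one synthetic connection record (distributions are assumed)."""
    is_attack = rng.random() < attack_rate
    service = rng.choice(["http", "ftp", "ssh", "dns"])
    if service == "dns":
        # dns is mostly, not always, carried over udp (0.9 is an assumption)
        protocol = "udp" if rng.random() < 0.9 else "tcp"
    else:
        protocol = SERVICE_PROTOCOL[service]
    return {
        "duration": rng.expovariate(1.0 if is_attack else 4.0),
        "src_bytes": int(rng.lognormvariate(8.0 if is_attack else 6.0, 1.0)),
        "count": rng.randint(20, 200) if is_attack else rng.randint(1, 40),
        "serror_rate": rng.betavariate(5, 2) if is_attack else rng.betavariate(1, 8),
        "protocol_type": protocol,
        "service": service,
        "flag": rng.choice(["S0", "REJ", "SF"]) if is_attack else "SF",
        "labels": int(is_attack),
    }


def make_dataset(n: int, seed: int = 42) -> list:
    """Generate n records from a seeded generator so runs are repeatable."""
    rng = random.Random(seed)
    return [make_record(rng) for _ in range(n)]


rows = make_dataset(5000)
attack_share = sum(r["labels"] for r in rows) / len(rows)
```

Seeding the generator makes the dataset reproducible, so the seed can be stored in the compliance log instead of the data itself when the dataset is purely synthetic.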

6. Discussion

6.1. Alignment with the Research Objectives

6.2. From Model-Centric to Evidence-Centric Governance

6.3. Implications for High-Risk AI Practice

6.4. Practical Transfer to Real-World High-Risk AI Systems

6.5. Positioning Relative to Existing Frameworks

6.6. Generalisability, Limitations and Future Directions

7. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. European Union. Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data (General Data Protection Regulation). Off. J. Eur. Union 2016, L 119, 1–88. Available online: https://eur-lex.europa.eu/eli/reg/2016/679/oj (accessed on 24 November 2025).

2. European Parliament and Council of the European Union. Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence and amending certain Union legislative acts (Artificial Intelligence Act). Off. J. Eur. Union 2024. Available online: https://eur-lex.europa.eu/eli/reg/2024/1689/oj (accessed on 24 November 2025).

3. Barredo Arrieta, A.; Díaz-Rodríguez, N.; Del Ser, J.; Bennetot, A.; Tabik, S.; Barbado, A.; Herrera, F. Explainable Artificial Intelligence (XAI): Concepts, Taxonomies, Opportunities and Challenges Toward Responsible AI. Information Fusion 2020, 58, 82–115.

4. Doshi-Velez, F.; Kim, B. Towards a Rigorous Science of Interpretable Machine Learning. arXiv 2017, arXiv:1702.08608.

5. Ahangar, M.N.; Jalali, S.; Dastjerdi, A. AI Trustworthiness in Manufacturing. Sensors 2025, 25, 4357.

6. Guidotti, R.; Monreale, A.; Ruggieri, S.; Turini, F.; Giannotti, F.; Pedreschi, D. A Survey of Methods for Explaining Black Box Models. ACM Computing Surveys 2019, 51, 93.

7. Islam, M.A.; Mridha, M.F.; Jahin, M.A.; Dey, N. A Unified Framework for Evaluating the Effectiveness and Enhancing the Transparency of Explainable AI Methods in Real-World Applications. arXiv 2024, arXiv:2412.03884.

8. Chhetri, T.R.; Kurteva, A.; et al. Data Protection by Design Tool for Automated GDPR Verification. Sensors 2022, 22, 2763.

9. Liao, Q.V.; Gruen, D.; Miller, S. Questioning the AI: Informing Design Practices for Explainable AI User Experiences. In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems. ACM, 2020, pp. 1–15.

10. Kabir, M.; Gandomi, A.; Wiese, A. A Review of Explainable Artificial Intelligence from the Perspectives of Challenges and Opportunities. Algorithms 2025, 18, 556.

11. Kostopoulos, G.; Davrazos, G.; Kotsiantis, S. Explainable Artificial Intelligence-Based Decision Support Systems. Electronics 2024, 13, 2842.

12. Longo, L.; Brcic, M.; Cabitza, F.; et al. Explainable Artificial Intelligence (XAI) 2.0. Information Fusion 2024, 106, 102301.

13. Pinto, J.D.; Paquette, L. Towards a Unified Framework for Evaluating Explanations. arXiv 2024, arXiv:2405.14016.

14. Pavlidis, G. Unlocking the Black Box: Analysing the EU AI Act Framework. Law, Innovation and Technology 2024, 16, 293–308.

15. International Organization for Standardization. ISO/IEC 42001:2023, Artificial Intelligence Management System. International Standard, 2023. Available online: https://www.iso.org/standard/81230.html (accessed on 24 November 2025).

16. Lozano-Murcia, J.; Gómez, R.; Blasco, L. Protocol for Evaluating Explainability in Actuarial Models. Electronics 2025, 14, 1561.

17. Amershi, S.; Weld, D.; Vorvoreanu, M.; Fourney, A.; Nushi, B.; Collisson, P.; Suh, J.; Iqbal, S.; Bennett, P.; Inkpen, K.; et al. Guidelines for Human–AI Interaction. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems, New York, NY, USA, 2019; pp. 1–13.

18. Sculley, D.; Holt, G.; Golovin, D.; Davydov, E.; Phillips, T.; Ebner, D.; Chaudhary, V.; Young, M.; Crespo, J.F.; Dennison, D. Hidden Technical Debt in Machine Learning Systems. In Advances in Neural Information Processing Systems, 2015, pp. 2503–2511.

19. Kieburtz, R.; Danks, D.; Horowitz, E. Multi-layered Governance for AI Systems. AI and Society 2020, 35, 753–763.

20. Bosshart, P.; Salathé, M.; Tramèr, F.; Magazzeni, D.; Pfrommer, J.; Schleiss, P.; Narayanan, A.; Kratzwald, B. Building Modular and Trustworthy AI Systems. IEEE Intelligent Systems 2021, 36, 88–93.

21. Lwakatare, L.E.; Crnkovic, I.; Holmström Olsson, H.; MacGregor, S.A.; Šmite, D.; Bosch, J. A Taxonomy of MLOps. IEEE Software 2020, 37, 66–73.

22. Arya XAI. The Growing Importance of Explainable AI (XAI) in AI Governance. 2025. Available online: https://aryaxai.com/blog/the-growing-importance-of-explainable-ai (accessed on 24 November 2025).

23. Alhena AI. GDPR Compliance Through Multi-Region Architecture. 2025. Available online: https://alhena.ai/reports/gdpr-compliance-multi-region-architecture (accessed on 24 November 2025).

24. WilmerHale. AI and GDPR: A Road Map to Compliance by Design. 2025. Available online: https://www.wilmerhale.com/en/insights/client-alerts/2025-07-28-ai-and-gdpr-a-roadmap-to-compliance-by-design (accessed on 24 November 2025).

25. Exabeam. The Intersection of GDPR and AI and 6 Compliance Best Practices. 2025. Available online: https://www.exabeam.com/blog/intersection-of-gdpr-and-ai-6-compliance-best-practices/ (accessed on 24 November 2025).

26. Lundberg, S.M.; Lee, S. A Unified Approach to Interpreting Model Predictions. In Advances in Neural Information Processing Systems, 2017, pp. 4765–4774.

27. Ribeiro, M.T.; Singh, S.; Guestrin, C. "Why Should I Trust You?": Explaining the Predictions of Any Classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 2016, pp. 1135–1144.

28. Zhao, X.; Manzano, A.; Forbes, C.; et al. An End-to-End Data and Machine Learning Pipeline for Energy Forecasting: A Systematic Approach Integrating MLOps and Domain Expertise. Information 2025, 16, 805.

29. Carvalho, D.V.; Pereira, E.M.; Cardoso, J.S. Machine Learning Interpretability: A Survey on Methods and Metrics. Electronics 2019, 8, 832.

30. Tjoa, E.; Guan, C. A Survey on Explainable Artificial Intelligence (XAI): Toward Medical XAI. IEEE Transactions on Neural Networks and Learning Systems 2021, 32, 4793–4813.

31. Centre for Data Ethics and Innovation. The Roadmap to an Effective AI Assurance Ecosystem. UK Government Independent Report, 2021. Available online: https://www.gov.uk/government/publications/the-roadmap-to-an-effective-ai-assurance-ecosystem (accessed on 24 November 2025).

32. Tabassi, E. Artificial Intelligence Risk Management Framework (AI RMF 1.0). Technical Report NIST AI 100-1, National Institute of Standards and Technology, 2023.

33. Hartmann, D.; de Pereira, J.R.L.; Streitbörger, C.; Berendt, B. Addressing the regulatory gap: moving towards an EU AI audit ecosystem beyond the AI Act by including civil society. AI and Ethics 2025, 5, 3617–3638.

34. Bass, L.; Clements, P.; Kazman, R. Software Architecture in Practice, 3rd ed.; Addison-Wesley, 2012.

35. Garlan, D.; Shaw, M. Software Architecture: Perspectives on an Emerging Discipline; Prentice Hall, 1996.

36. Taylor, R.N.; Medvidović, N.; Dashofy, E.M. Software Architecture: Foundations, Theory, and Practice; Wiley, 2009.

37. van Zyl, C.; Knorr, K. Separation of Concerns: A New Model for Software Engineering. ACM SIGSOFT Software Engineering Notes 2002, 27, 1–5.

38. Papagiannidis, E.; Mikalef, P.; Conboy, K. Responsible Artificial Intelligence Governance: A Review and Research Framework. Journal of Strategic Information Systems 2025, 34, 101885.

39. Morley, J.; Floridi, L.; Kinsey, L.A.; Elhalal, A. From What to How: Guidelines for Responsible AI Governance through a Bidirectional and Iterative Oversight Model. AI & Society 2021, 36, 715–729.

40. Phillips, P.; Hahn, C.; Fontana, P.; Broniatowski, D.A.; Przybocki, M.A. Four Principles of Explainable Artificial Intelligence (NISTIR 8312, Draft). Technical Report NISTIR 8312, National Institute of Standards and Technology, 2020. Available online: https://doi.org/10.6028/NIST.IR.8312-draft (accessed on 24 November 2025).

41. Tran, T.A.; Ruppert, T.; Abonyi, J. The Use of eXplainable Artificial Intelligence and Machine Learning Operation Principles to Support the Continuous Development of Machine Learning-Based Solutions in Fault Detection and Identification. Computers 2024, 13, 252.

42. Umer, M.A.; Belay, E.G.; Gouveia, L.B. Leveraging Artificial Intelligence and Provenance Blockchain Framework to Mitigate Risks in Cloud Manufacturing in Industry 4.0. Electronics 2024, 13, 660.

43. Kulothungan, V. Using Blockchain Ledgers to Record AI Decisions in IoT. IoT 2025, 6, 37.

| Framework | Key characteristics | Regulatory alignment |
|---|---|---|
| Arrieta et al. (2020) [3] | Taxonomy of XAI techniques; fidelity and stability analysis. | Supports transparency; lacks explicit regulatory mapping. |
| Chhetri et al. (2022) [8] | Semantic modelling for automated GDPR conformance. | Implements Data Protection by Design. |
| Liao et al. (2020) [9] | Algorithmic auditing and explainability controls. | Covers decision provenance and human oversight. |
| Kabir et al. (2025) [10] | Organisational trust and governance perspective. | Identifies measurable accountability metrics. |
| Kostopoulos et al. (2024) [11] | Operational transparency in decision-support systems. | Partially aligned with AI Act transparency obligations. |
| Islam et al. (2024) [7] | Unified evaluation framework for explanations. | Offers audit-relevant metrics; lacks full legal integration. |
| Longo et al. (2024) [12] | XAI 2.0 research manifesto; interdisciplinary challenges. | Addresses comprehensibility and transparency. |
| Pinto and Paquette (2024) [13] | Standardised empirical evaluation of explanations. | Facilitates audit standardisation. |
| Pavlidis (2024) [14] | Application of XAI within the AI Act framework. | Explicitly AI Act–oriented. |

| Principle | Reference | Description | Application in the framework |
|---|---|---|---|
| Modularity | [34,35] | Independent, evolvable components. | Separated functional layers and replaceable modules across the technical and governance flows. |
| Separation of concerns | [36,37] | Each module has a single, well-defined responsibility. | Explicit distinction between technical and regulatory flows, and between data, model, explanation and audit layers. |
| Governance and accountability | [2,15,38] | Explicit responsibility and continuous traceability. | CDE, audit-ready logs and model lineage enabling oversight and responsibility allocation. |
| Bidirectionality and feedback | [19,39] | Dynamic two-way flows with iterative oversight. | Continuous synchronisation and feedback loops between technical and regulatory layers. |
| Transparency by design | [3,6,40] | Decisions are explainable and justifiable. | SHAP/LIME explanations, model cards and structured documentation integrated into evidence bundles. |
| Compliance-by-design and by-default | [1,2,8,24] | Legal requirements embedded from conception onward. | Policy-as-code, compliance gates in Continuous Integration/Continuous Deployment (CI/CD) and automatic generation of compliance evidence. |

| Metric / artefact | Regulatory objective | Legal basis / standard | Compliance evidence (examples) |
|---|---|---|---|
| Explanation fidelity | Transparency and justification of decisions | GDPR Arts. 5, 13–15; AI Act Art. 13 | SHAP/LIME reports with fidelity curves, minimum thresholds and documented limitations. |
| Explanation stability and robustness | Risk management and robustness of high-risk systems | AI Act Arts. 9, 15; ISO/IEC 42001 | Versioned stability and sensitivity tests, with documented variance under controlled input perturbations. |
| Comprehensibility (audience-specific) | Transparency and intelligibility for different stakeholders | GDPR Art. 12; AI Act Art. 13 | Model cards and layered summaries tailored to technical users, managers and auditors. |
| Decision provenance (decision trail) | Accountability and auditability of automated decisions | AI Act Art. 12; GDPR Art. 5(2) | Signed logs including model ID, canonical inputs, confidence scores and decision rationale references. |
| Model lineage | Governance, documentation and change management | AI Act Arts. 11–12; ISO/IEC 42001 | Versioned training records, datasets, hyperparameter configurations and validation documentation. |
| Data and concept drift detection | Post-deployment monitoring and lifecycle management | AI Act Chapter IX (Art. 72); ISO/IEC 42001 | Alerts, challenge sets and documented roll-back or retraining procedures triggered by drift thresholds. |
| Compliance coverage (%) | Compliance-by-design and continuous governance | GDPR Art. 25; AI Act Art. 17 | Aggregated indicators from the CDE per build/release, reporting the proportion of mapped obligations with associated controls and evidence. |
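For the drift row of the matrix, one widely used statistic is the Population Stability Index (PSI). The paper does not state which drift indicator it uses, so PSI is an assumption here, as are the equal-width binning, the smoothing constant and the rule-of-thumb thresholds of 0.1 and 0.25 mentioned in the comments.

```python
import math
import random


def psi(reference, current, bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between two numeric samples.

    Bin edges are equal-width over the reference range; values outside
    that range fall into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference

    def shares(sample):
        counts = [0] * bins
        for v in sample:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps avoids log(0) and division by zero for empty bins
        return [max(c / len(sample), eps) for c in counts]

    ref, cur = shares(reference), shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


rng = random.Random(0)
reference = [rng.gauss(0.0, 1.0) for _ in range(5000)]
same_dist = [rng.gauss(0.0, 1.0) for _ in range(5000)]
shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]

psi_stable = psi(reference, same_dist)
psi_shifted = psi(reference, shifted)

# Common rule of thumb (an assumption, not a regulatory threshold):
# below 0.1 is treated as stable, above 0.25 as a significant shift.
alert = psi_shifted > 0.25
```

The resulting value per feature could be written to the drift report and compared against the threshold configured in the deployment policy.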

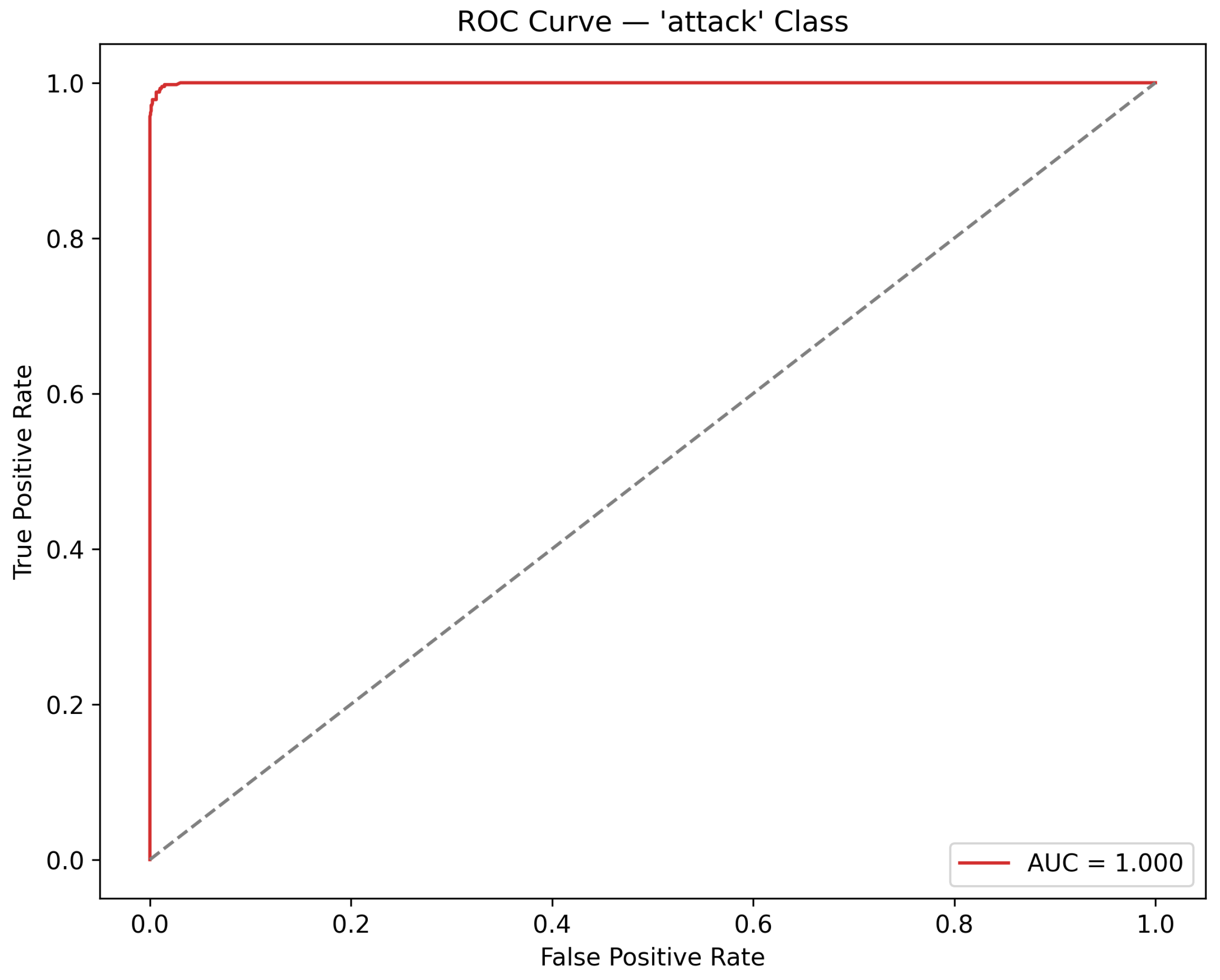

| Metric | Definition | Value |
|---|---|---|
| Accuracy | Overall proportion of correctly classified instances | 0.9905 |
| Precision (attack) | Proportion of predicted attack instances that are actually attack | 0.9760 |
| Recall (attack) | Proportion of true attack instances correctly identified as attack | 0.9783 |
| F1-score (attack) | Harmonic mean of precision and recall for the attack class | 0.9771 |
| ROC AUC (attack) | Area under the ROC curve for the attack class | 0.9997 |
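As a quick consistency check on the table, the F1-score can be recomputed from the reported precision and recall. The snippet uses only the values printed above; the tolerance of 5e-4 is an assumption that allows for the inputs themselves being rounded to four decimals.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values copied from the metrics table.
reported = {"precision": 0.9760, "recall": 0.9783, "f1": 0.9771}

recomputed = f1_score(reported["precision"], reported["recall"])
consistent = abs(recomputed - reported["f1"]) < 5e-4
```

A check of this kind is cheap to run as a unit test whenever metrics are copied from a run into a report or evidence bundle.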

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).