Submitted: 25 November 2025

Posted: 27 November 2025


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Contrastive Learning

2.2. Masked Image Modeling

2.3. Discrete Representation Learning

3. Methodology

3.1. A Principled Objective: The Minimum Description Length

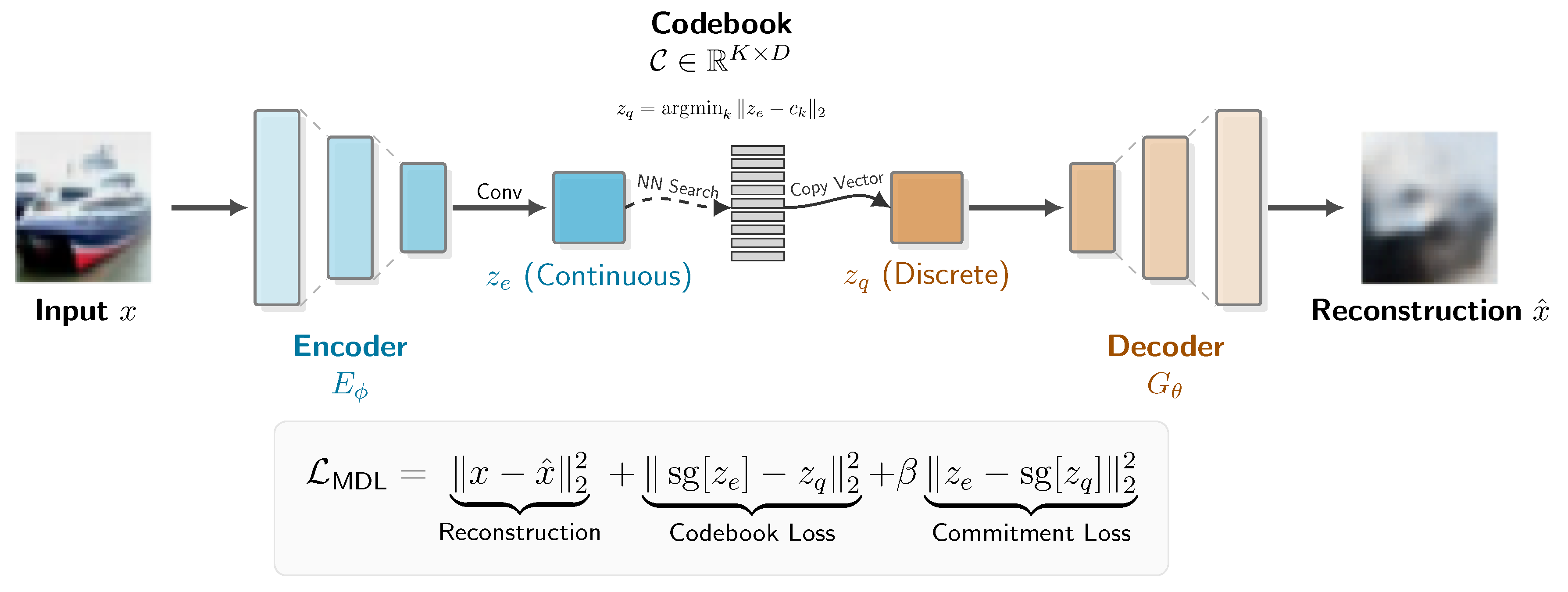

3.2. The MDL-Autoencoder (MDL-AE) Architecture

- The Encoder ($f_\theta$): A neural network, parameterized by $\theta$, that maps an input image $x$ to a continuous intermediate representation $z_e(x)$. This representation is a spatial feature map. We experimented with two encoder architectures to study the effect of model capacity:

- A Simple CNN, consisting of a stack of three strided convolutional layers.

- A modified ResNet-18, where the initial convolution is adapted for 32x32 inputs and the final fully-connected layer is replaced with a 1x1 convolutional projection to produce an output with D channels.

- The Codebook ($C$): A learnable embedding layer that serves as our discrete vocabulary of "visual words." The codebook is a matrix $C \in \mathbb{R}^{K \times D}$, where $K$ is the number of codebook vectors (a hyperparameter, `NUM_EMBEDDINGS`) and $D$ is the dimensionality of each vector (`EMBEDDING_DIM`).

- The Decoder ($g_\phi$): A neural network, parameterized by $\phi$, that is symmetric to the encoder. It takes a quantized representation $z_q(x)$ and reconstructs the original image as $\hat{x}$.
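The way the encoder output and the codebook interact can be sketched as a nearest-neighbour lookup. The snippet below is a minimal pure-Python illustration, assuming Euclidean nearest-neighbour assignment as in standard VQ-VAE; the function name `quantize` and the toy sizes are hypothetical and are not taken from the authors' code.

```python
from typing import List, Tuple

Vector = List[float]


def squared_distance(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(z_e: List[Vector], codebook: List[Vector]) -> Tuple[List[int], List[Vector]]:
    """Map each encoder vector to its nearest codebook entry.

    z_e:      one D-dimensional vector per spatial position (H*W vectors).
    codebook: K vectors of dimension D (the "visual words").
    Returns the chosen indices (token IDs) and the quantized vectors.
    """
    indices = []
    for z in z_e:
        distances = [squared_distance(z, e) for e in codebook]
        indices.append(distances.index(min(distances)))
    z_q = [list(codebook[k]) for k in indices]
    return indices, z_q


# Toy example: K = 3 codebook vectors, D = 2, and 4 spatial positions.
codebook = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
z_e = [[0.1, -0.1], [0.9, 0.2], [0.2, 0.8], [0.6, 0.1]]
token_ids, z_q = quantize(z_e, codebook)
print(token_ids)  # [0, 1, 2, 1]
```

The list of indices is what the later evaluation protocols treat as a token sequence, while the quantized vectors are what the decoder receives.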

3.3. The Training Objective: Operationalizing MDL

- Reconstruction Loss ($\mathcal{L}_{\text{recon}}$): This term corresponds to $L(\text{data} \mid \text{model})$, the cost of encoding the data given the model. It is the squared L2-norm between the original input image $x$ and its reconstruction $\hat{x}$ from the quantized representation, $\mathcal{L}_{\text{recon}} = \lVert x - \hat{x} \rVert_2^2$. Minimizing this term ensures that our discrete codebook is expressive enough to capture the essential information in the image.

- Codebook Loss ($\mathcal{L}_{\text{codebook}}$): This term, along with the commitment loss, corresponds to $L(\text{model})$, the cost of describing the model itself. It aims to make the "model" (our codebook) as efficient as possible by moving each codebook vector closer to the encoder outputs that are mapped to it. A stop-gradient operator $\mathrm{sg}[\cdot]$ ensures that gradients update only the codebook embeddings: $\mathcal{L}_{\text{codebook}} = \lVert \mathrm{sg}[z_e(x)] - e \rVert_2^2$. Here, the gradient from the encoder output $z_e(x)$ is blocked, so the loss only serves to pull the chosen codebook vector $e$ towards $z_e(x)$.

- Commitment Loss ($\mathcal{L}_{\text{commit}}$): This is the complementary term that regularizes the encoder. It forces the encoder's output $z_e(x)$ to "commit" to its chosen codebook vector $e$, preventing it from growing arbitrarily large. The hyperparameter $\beta$ controls the strength of this regularization. Again, a stop-gradient is used, but this time so that gradients update only the encoder parameters $\theta$: $\mathcal{L}_{\text{commit}} = \beta \lVert z_e(x) - \mathrm{sg}[e] \rVert_2^2$. In this term, the gradient from $e$ is blocked. The loss penalizes the encoder if its output is far from the codebook vector it was mapped to, encouraging the latent space to align with the learned discrete vocabulary.
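The division of labour between the two stop-gradient terms can be made concrete by writing their gradients out by hand. The sketch below assumes the squared-L2 form of the VQ-VAE losses and a commitment cost of 0.25 (the value listed in the hyperparameter table); in an autodiff framework the stop-gradient would be a detach-style operation, whereas here the blocked gradients are simply never computed. The function names are hypothetical.

```python
from typing import List, Tuple

Vector = List[float]


def sq_norm_diff(a: Vector, b: Vector) -> float:
    """Squared L2-norm of (a - b)."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def vq_losses_and_grads(
    z_e: Vector, e: Vector, beta: float = 0.25
) -> Tuple[float, float, Vector, Vector]:
    """Codebook and commitment losses for one encoder vector and its chosen code.

    Codebook loss   = ||sg[z_e] - e||^2        -> gradient reaches only e.
    Commitment loss = beta * ||z_e - sg[e]||^2 -> gradient reaches only z_e.
    sg[.] is the stop-gradient: identity in the forward pass, zero gradient.
    """
    codebook_loss = sq_norm_diff(z_e, e)
    commitment_loss = beta * sq_norm_diff(z_e, e)
    # d(codebook_loss)/d(e): a descent step moves the code towards z_e.
    grad_e = [2.0 * (ei - zi) for zi, ei in zip(z_e, e)]
    # d(commitment_loss)/d(z_e): a descent step moves z_e towards the code.
    grad_z_e = [2.0 * beta * (zi - ei) for zi, ei in zip(z_e, e)]
    return codebook_loss, commitment_loss, grad_e, grad_z_e


z_e = [1.0, 2.0]
e = [0.0, 0.0]
codebook_loss, commitment_loss, grad_e, grad_z_e = vq_losses_and_grads(z_e, e)
print(codebook_loss, commitment_loss)  # 5.0 1.25
print(grad_e)                          # [-2.0, -4.0]
print(grad_z_e)                        # [0.5, 1.0]
```

In the forward pass the two terms differ only by the factor $\beta$; what distinguishes them is which side receives the gradient.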

3.4. The Straight-Through Estimator

3.5. Downstream Evaluation Protocols

- Linear Probe on Flattened Features: The encoder produces a spatial feature map $z \in \mathbb{R}^{D \times H \times W}$. We flatten this map into a single vector of dimension $D \cdot H \cdot W$ for each image. A single linear layer is then trained on top of these frozen, flattened features to perform 10-way classification.

- Linear Probe on Globally Pooled Features: To test for spatial invariance, we apply an `AdaptiveAvgPool2d` layer to the encoder's feature map $z \in \mathbb{R}^{D \times H \times W}$, reducing it to a representation in $\mathbb{R}^{D \times 1 \times 1}$. This is flattened to a vector of dimension $D$. A linear layer is then trained on these global features.

- Vision Transformer (ViT) Head: To evaluate the features as a sequence of tokens, we designed a more sophisticated head. The frozen encoder and VQ layer act as a "tokenizer," producing a sequence of 16 discrete "visual word" vectors for each image. This sequence is prepended with a learnable [CLS] token, augmented with positional embeddings, and fed into a small Transformer encoder. A final linear layer is trained on the output [CLS] token’s representation. In this protocol, only the parameters of the Transformer and the final linear layer are updated.

- MAE-Aligned Vision Transformer Head: To test our hypothesis that the architectural mismatch can be resolved, we introduce a two-stage protocol. First, the ViT head is pre-trained on a self-supervised Masked Autoencoding (MAE) task. Given the sequence of 16 discrete tokens from the frozen tokenizer, we mask 75% of them (12 of 16) and train the ViT head to predict the original token IDs at the masked positions. After this alignment phase, the masking is removed, and a final linear layer is trained on the output [CLS] token for the downstream classification task, following the same protocol as the ViT Head above.
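The inputs that these protocols hand to their heads can be sketched in a few lines. The snippet below is an illustration only: it assumes $D = 64$ channels on a $4 \times 4$ grid (consistent with the 16 tokens and the 1024-dimensional flattened input mentioned in the text), and it assumes masked positions are replaced by a dedicated mask ID, which the source does not specify. `MASK_ID` and all function names are hypothetical.

```python
import random
from typing import List, Tuple

D, H, W = 64, 4, 4  # assumed: 64 channels on a 4x4 grid, i.e. 16 tokens

FeatureMap = List[List[List[float]]]  # indexed as [channel][row][column]


def flatten(feature_map: FeatureMap) -> List[float]:
    """Flatten a D x H x W map into one vector of length D*H*W."""
    return [v for channel in feature_map for row in channel for v in row]


def global_avg_pool(feature_map: FeatureMap) -> List[float]:
    """Average each channel over its H x W positions, giving a length-D vector."""
    pooled = []
    for channel in feature_map:
        values = [v for row in channel for v in row]
        pooled.append(sum(values) / len(values))
    return pooled


def mask_tokens(token_ids: List[int], ratio: float, mask_id: int,
                rng: random.Random) -> Tuple[List[int], List[int]]:
    """Replace a random `ratio` of the tokens with `mask_id`.

    Returns the masked sequence and the sorted masked positions; the
    original IDs at those positions are the prediction targets.
    """
    n_mask = int(round(ratio * len(token_ids)))
    positions = sorted(rng.sample(range(len(token_ids)), n_mask))
    masked = list(token_ids)
    for p in positions:
        masked[p] = mask_id
    return masked, positions


# Channel c is filled with the constant value c, so pooling returns c.
feature_map = [[[float(c)] * W for _ in range(H)] for c in range(D)]
flat = flatten(feature_map)
pooled = global_avg_pool(feature_map)
print(len(flat), len(pooled))  # 1024 64

MASK_ID = 512  # hypothetical: one index past a 512-entry codebook
tokens = list(range(16))
masked, positions = mask_tokens(tokens, 0.75, MASK_ID, random.Random(0))
print(len(positions))  # 12
```

Pooling shrinks the probe input from 1024 to 64 dimensions and discards where each feature occurred, which is the property the global-pooling protocol is designed to test.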

3.6. Implementation Details

4. Results and Discussion

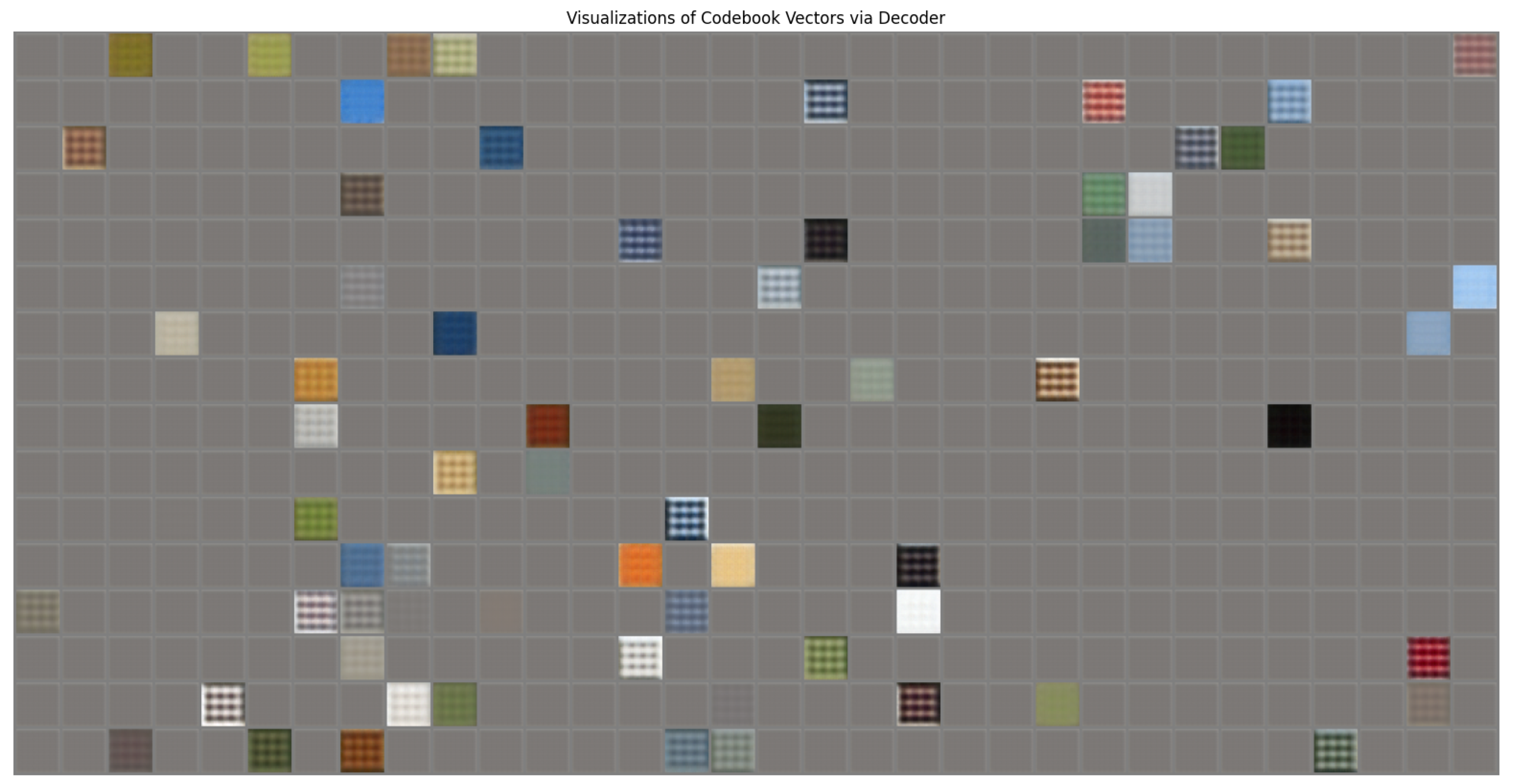

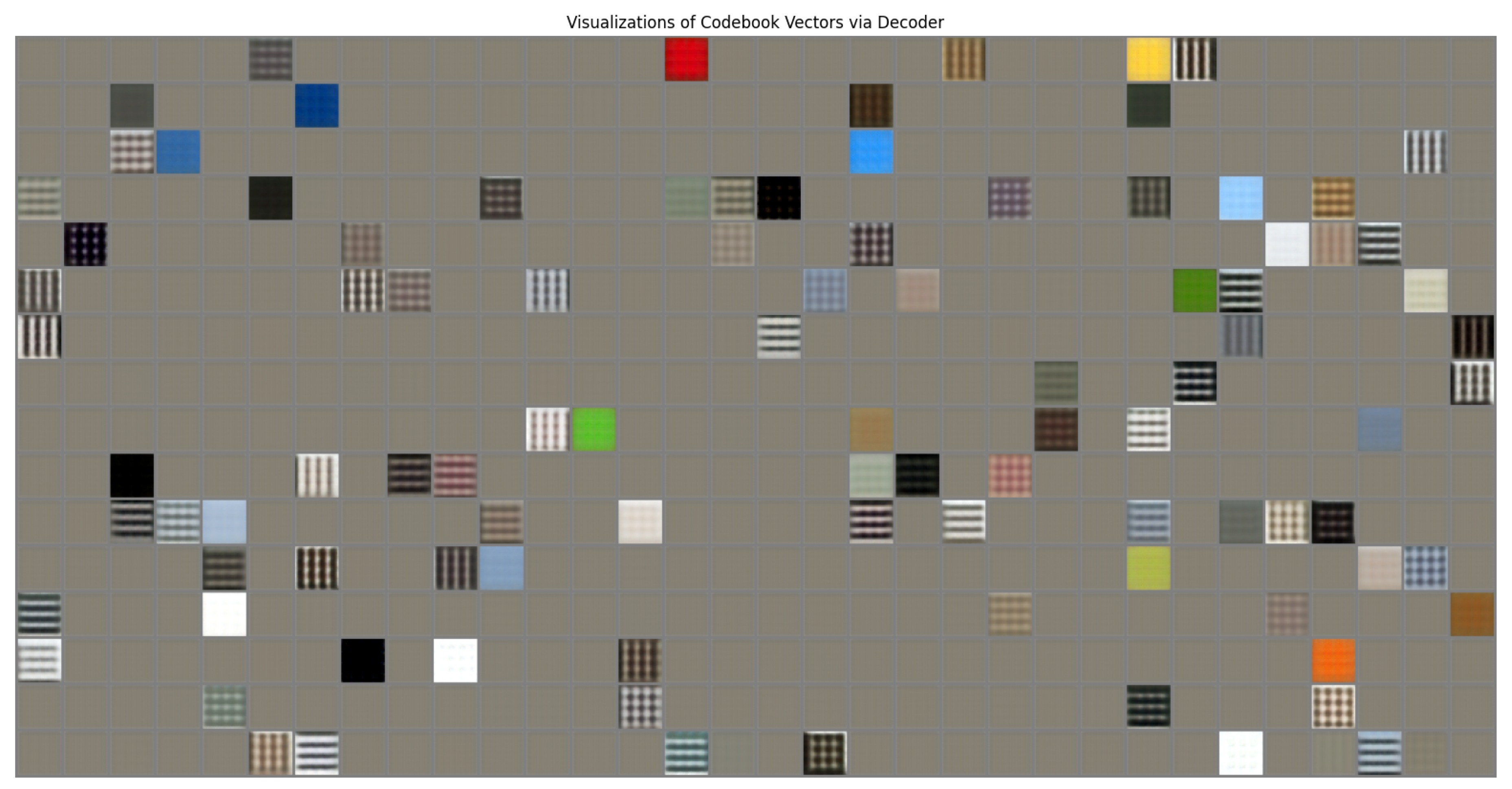

4.1. The MDL-AE as a High-Fidelity Tokenizer

Quantitative Evidence

Qualitative Evidence and Codebook Sparsity

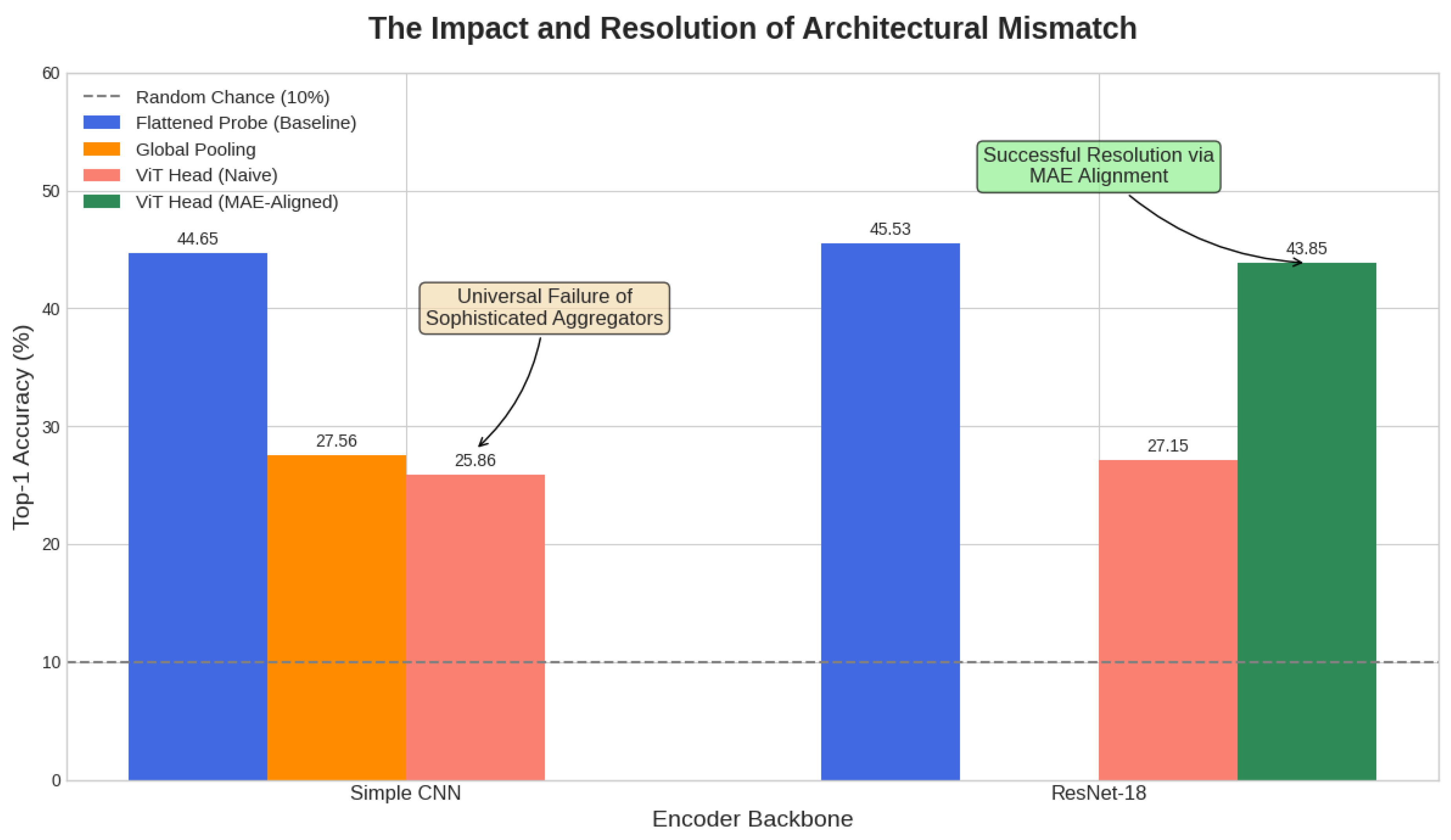

4.2. Deconstructing Performance: The Architectural Mismatch

The Paradox of Capacity

The Failure of Aggregation

4.3. Comparison with Discriminative Baselines

- Discriminative models (SimCLR) learn to discard information (e.g., exact orientation, background color) to achieve high classification accuracy.

- Generative/Compression models (MDL-AE) must preserve this information to satisfy the reconstruction bottleneck.

4.4. Resolving the Mismatch: Self-Supervised Alignment of the ViT Head

4.5. Synthesis: The Holistic Tokenizer and the Tyranny of the Tool

- Why the Flattened Probe is the "Least Bad" Option: This protocol functions as a simple "bag-of-object-parts" detector. A linear layer can learn the simple correlation: "if the highly informative `horse_coat_token` is present anywhere in the flattened 1024-dimensional input, the image is likely a horse." It relies on token presence, not relationships.

- Why the Transformer Fails: A Transformer is a powerful grammatical tool designed to find complex relationships between generic tokens to build meaning. Our holistic tokens, however, already contain the meaning. Asking a Transformer to find the "grammar" between `a_car_wheel_token` and `a_car_window_token` is a fundamentally mismatched task. Our results are consistent with this: without the alignment stage of Section 4.4, the ViT head reaches only 25.86% on the simpler CNN tokens and 27.15% on the richer, higher-fidelity ResNet-18 tokens, compared with 44.65% and 45.53% for the flattened probe on the same backbones. Improving token quality therefore does not help the unaligned Transformer. Its complexity becomes a liability, as it is the wrong tool for the job of simple "token detection."
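The "token presence" reading of the flattened probe can be illustrated with a toy linear scorer. This is a sketch under strong simplifying assumptions, not the authors' probe: codebook vectors are replaced by one-hot embeddings over a 4-word vocabulary, and the weight vector is set by hand to repeat the target embedding at each of the 16 positions. `HORSE_COAT` is a hypothetical token ID standing in for `horse_coat_token`.

```python
from typing import List

NUM_POSITIONS = 16  # tokens per image, as in the text
VOCAB = 4           # toy vocabulary size (the paper uses K = 512)


def one_hot(token_id: int, size: int = VOCAB) -> List[float]:
    """Toy stand-in for a codebook vector: a one-hot embedding."""
    return [1.0 if i == token_id else 0.0 for i in range(size)]


def flatten_tokens(token_ids: List[int]) -> List[float]:
    """Concatenate the per-position embeddings into one flat feature vector."""
    return [v for t in token_ids for v in one_hot(t)]


def presence_score(token_ids: List[int], target: int) -> float:
    """Linear score whose weights repeat the target embedding at every position.

    With one-hot embeddings the score counts how often `target` occurs,
    regardless of where it occurs or which tokens surround it.
    """
    weights = [v for _ in range(NUM_POSITIONS) for v in one_hot(target)]
    features = flatten_tokens(token_ids)
    return sum(w * f for w, f in zip(weights, features))


HORSE_COAT = 2  # hypothetical token ID
image_a = [0] * 15 + [HORSE_COAT]  # token in the last position
image_b = [HORSE_COAT] + [1] * 15  # token in the first position
image_c = [0, 1, 3, 0] * 4         # token absent
print(presence_score(image_a, HORSE_COAT))  # 1.0
print(presence_score(image_b, HORSE_COAT))  # 1.0
print(presence_score(image_c, HORSE_COAT))  # 0.0
```

A single linear layer can express this kind of detector directly, with no need to model relationships between tokens.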

5. Conclusion and Future Work

Conclusion:

Future Work:

References

- Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Fei-Fei, L. ImageNet: A large-scale hierarchical image database. In Proceedings of the 2009 IEEE Conference on Computer Vision and Pattern Recognition. IEEE, 2009, pp. 248–255.

- Chen, T.; Kornblith, S.; Norouzi, M.; Hinton, G. A simple framework for contrastive learning of visual representations. In Proceedings of the International Conference on Machine Learning. PMLR, 2020, pp. 1597–1607.

- He, K.; Fan, H.; Wu, Y.; Xie, S.; Girshick, R. Momentum contrast for unsupervised visual representation learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020, pp. 9729–9738.

- He, K.; Chen, X.; Xie, S.; Li, Y.; Dollár, P.; Girshick, R. Masked autoencoders are scalable vision learners. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022, pp. 16000–16009.

- Bao, H.; Dong, L.; Piao, S.; Wei, F. BEiT: BERT pre-training of image transformers. In Proceedings of the International Conference on Learning Representations, 2021.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An image is worth 16x16 words: Transformers for image recognition at scale. In Proceedings of the International Conference on Learning Representations, 2021.

- Rissanen, J. Modeling by shortest data description. Automatica 1978, 14, 465–471.

- Van Den Oord, A.; Vinyals, O.; et al. Neural discrete representation learning. In Proceedings of the Advances in Neural Information Processing Systems, 2017, Vol. 30.

- Wu, Z.; Xiong, Y.; Yu, S.X.; Lin, D. Unsupervised feature learning via non-parametric instance discrimination. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 3733–3742.

- Grill, J.B.; Strub, F.; Altché, F.; Tallec, C.; Richemond, P.H.; Buchatskaya, E.; Doersch, C.; Pires, B.A.; Guo, Z.D.; Azar, M.G.; et al. Bootstrap your own latent: A new approach to self-supervised learning. In Proceedings of the Advances in Neural Information Processing Systems, 2020, Vol. 33, pp. 21271–21284.

- Chen, X.; He, K. Exploring simple siamese representation learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021, pp. 15750–15758.

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), 2019, pp. 4171–4186.

- Ramesh, A.; Pavlov, M.; Goh, G.; Gray, S.; Voss, C.; Radford, A.; Chen, M.; Sutskever, I. Zero-shot text-to-image generation. In Proceedings of the International Conference on Machine Learning. PMLR, 2021, pp. 8821–8831.

- Razavi, A.; van den Oord, A.; Vinyals, O. Generating diverse high-fidelity images with VQ-VAE-2. In Proceedings of the Advances in Neural Information Processing Systems, 2019, Vol. 32.

- Esser, P.; Rombach, R.; Ommer, B. Taming transformers for high-resolution image synthesis. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021, pp. 12873–12883.

| Category | Hyperparameter | Value |
|---|---|---|
| MDL-AE Architecture | Codebook Size ($K$) | 512 |
| | Embedding Dimension ($D$) | 64 |
| | Commitment Cost ($\beta$) | 0.25 |
| Self-Supervised Pre-training | Optimizer | Adam |
| | Learning Rate | $2 \times 10^{-4}$ |
| | Batch Size | 128 |
| | Training Epochs | 15 / 50 |
| Downstream Linear Probe | Optimizer | Adam |
| | Learning Rate | $1 \times 10^{-3}$ |
| | Batch Size | 256 |
| | Training Epochs | 10 |
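For reference, the hyperparameters in the table above can be collected into a single configuration object. The sketch below is a hypothetical transcription, not the authors' code: the class and field names are invented, and the garbled probe learning rate is read as $1 \times 10^{-3}$. The helper additionally computes the length of one image's token indices under a uniform code over the codebook, which is an assumption about how the description length might be counted rather than the paper's stated accounting.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class MDLAEConfig:
    """Hyperparameters transcribed from the table above (names are hypothetical)."""
    num_embeddings: int = 512          # codebook size K (NUM_EMBEDDINGS)
    embedding_dim: int = 64            # embedding dimension D (EMBEDDING_DIM)
    commitment_cost: float = 0.25
    pretrain_optimizer: str = "Adam"
    pretrain_lr: float = 2e-4
    pretrain_batch_size: int = 128
    pretrain_epochs: tuple = (15, 50)  # both schedules appear in the experiments
    probe_optimizer: str = "Adam"
    probe_lr: float = 1e-3
    probe_batch_size: int = 256
    probe_epochs: int = 10


def code_length_bits(cfg: MDLAEConfig, tokens_per_image: int) -> float:
    """Bits needed to index the tokens under a uniform code over K entries."""
    return tokens_per_image * math.log2(cfg.num_embeddings)


cfg = MDLAEConfig()
print(code_length_bits(cfg, tokens_per_image=16))  # 144.0
```

With 512 codebook entries each token index costs 9 bits, so 16 tokens amount to 144 bits per image under this uniform-code assumption.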

| Experiment ID | Encoder Backbone | Training Epochs | Evaluation Protocol | Final Recon Loss | Final VQ Loss | Final Accuracy (%) |
|---|---|---|---|---|---|---|
| 1a | Simple CNN | 15 | Flattened Probe | 0.0561 | 0.0767 | 40.44 |
| 1b | Simple CNN | 50 | Flattened Probe | 0.0484 | 0.0960 | 44.65 |
| 2 | ResNet-18 | 50 | Flattened Probe | 0.0473 | 0.1251 | 45.53 |
| 3a | Simple CNN | 50 | Global Pooling | 0.0495 | 0.0828 | 27.56 |
| 3b | Simple CNN | 50 | ViT Head | 0.0495 | 0.0828 | 25.86 |
| 3c | ResNet-18 | 50 | ViT Head | 0.0473 | 0.1251 | 27.15 |
| 3d | ResNet-18 | 50 | ViT Head (MAE-Aligned) | 0.0473 | 0.1251 | 43.85 |

| Method | Objective | Backbone | Accuracy (%) |
|---|---|---|---|
| Supervised | Cross-Entropy | ResNet-18 | 93.50 |
| SimCLR | Contrastive Learning | ResNet-18 | 88.20 |
| BYOL | Self-Distillation | ResNet-18 | 90.50 |
| MAE (ViT-T) | Reconstruction | ViT-Tiny | 55.40 |
| MDL-AE (Ours) | Compression | ResNet-18 | 45.53 |
| MDL-AE (+Align) | Compression | ResNet-18 | 43.85 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).