Submitted: 25 November 2025

Posted: 26 November 2025

Abstract

Keywords:

1. Introduction

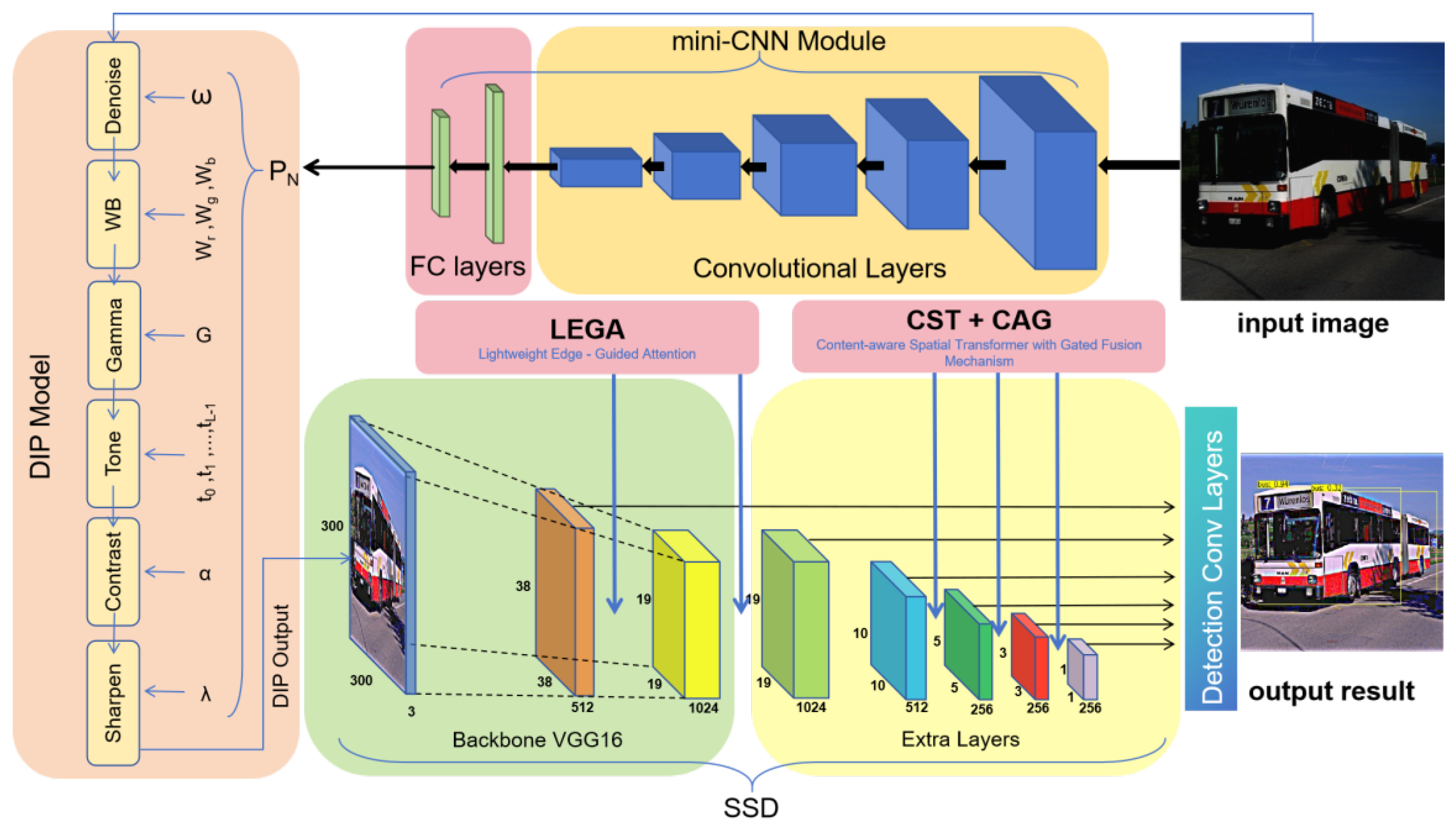

- A lightweight, multi-filter task-driven differentiable image processing (DIP) module is introduced to mitigate the mismatch between image restoration and object detection, enabling adaptive enhancement tailored to diverse degradation patterns.

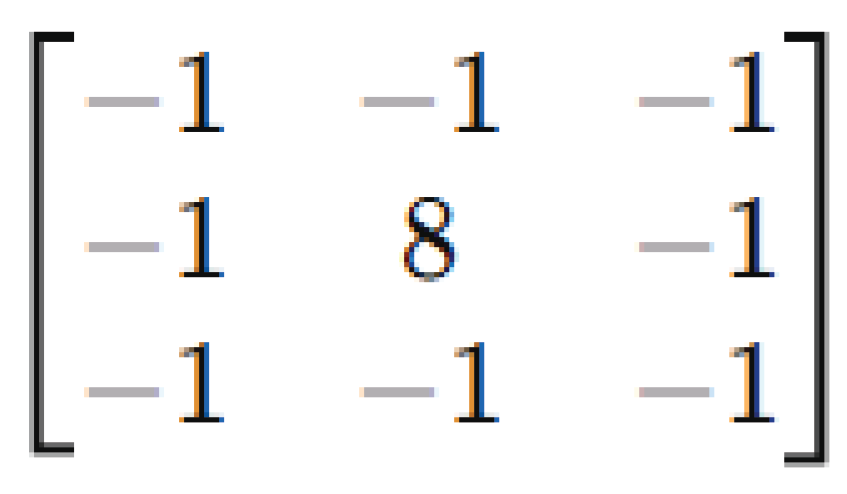

- A Lightweight Edge-Guided Attention (LEGA) mechanism is designed to reinforce structural cues in shallow high-resolution feature maps using a fixed Laplacian prior. This module improves boundary representation without introducing additional learnable parameters.

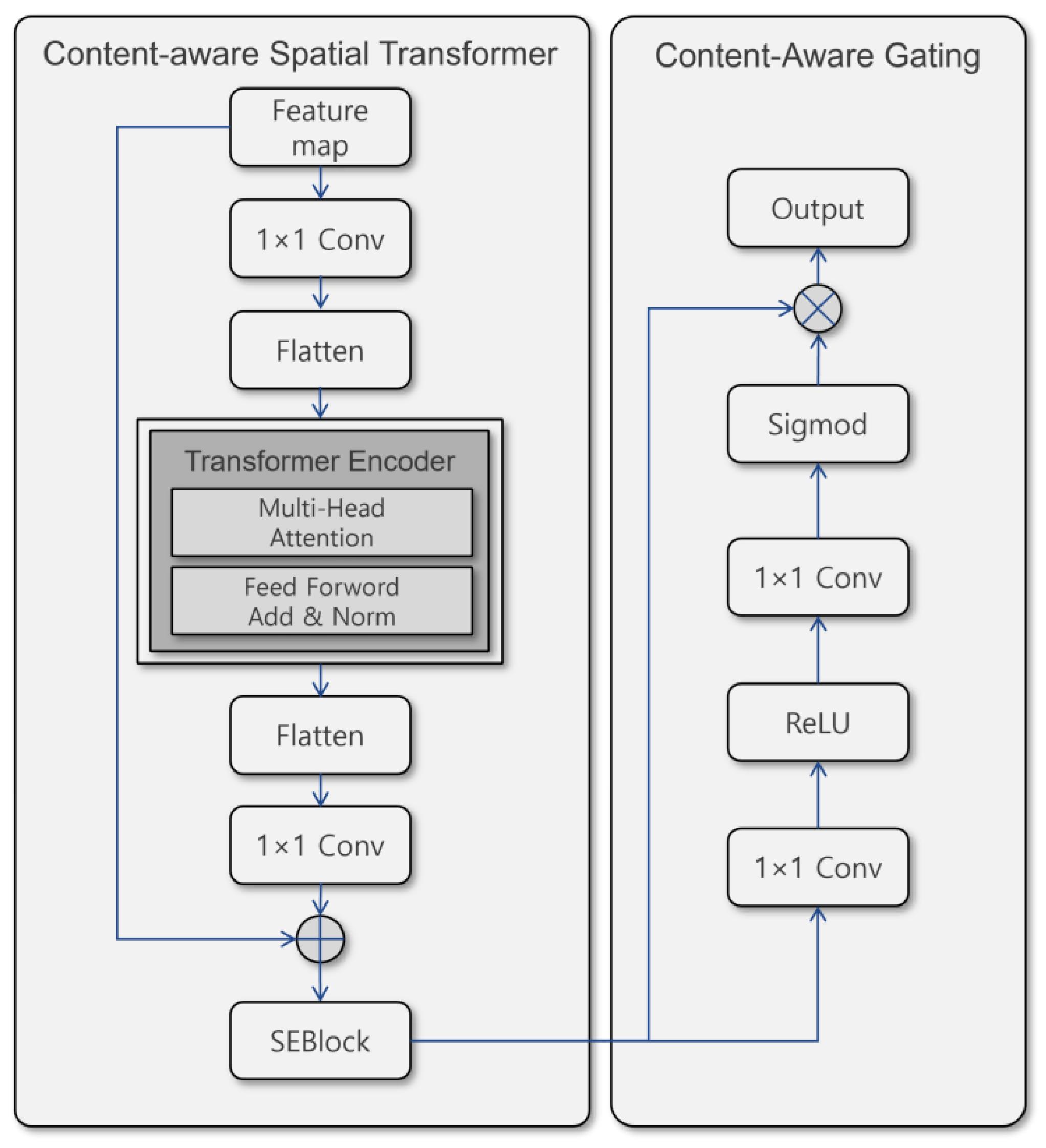

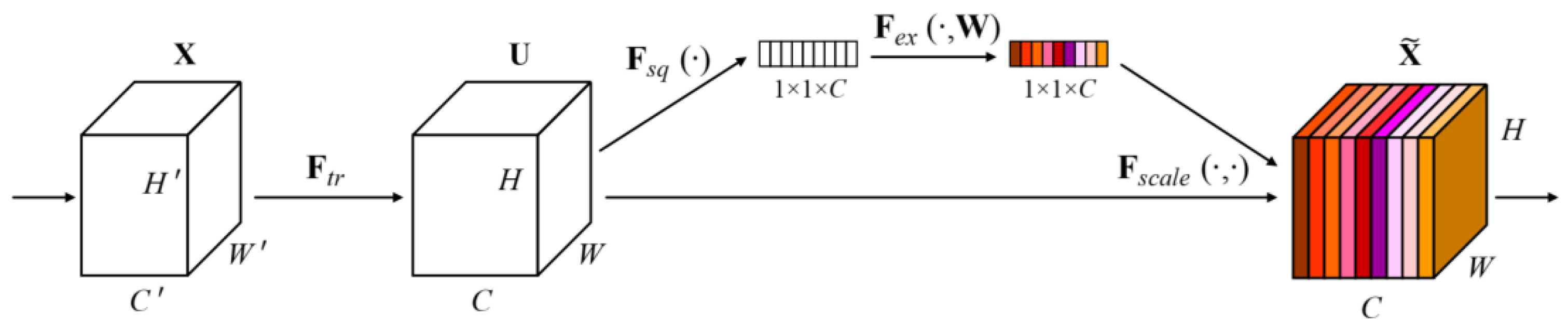

- A Content-aware Spatial Transformer with Gating (CSTG) is proposed to jointly strengthen global contextual reasoning and local semantic selectivity through a compact architecture. Integrated seamlessly with the multi-scale feature hierarchy of SSD, CSTG enhances semantic separation for small or blurred objects.

- A unified hierarchical degradation-aware pipeline is constructed by integrating DIP, LEGA, and CSTG, achieving robust performance across rain, fog, and low-light conditions while maintaining near real-time efficiency.
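The LEGA contribution above describes attention guided by a fixed Laplacian prior with no learnable parameters. As a rough illustration only, the following NumPy sketch shows one way such a parameter-free edge gate could be built. The function names, the 4-neighbour kernel, the max-normalised gate, and the residual reweighting are all assumptions made for this example, not the design reported in the paper.

```python
import numpy as np

# Fixed 3x3 Laplacian kernel (4-neighbour form). It is a constant, not a learned weight.
# Assumption: the paper does not state which Laplacian variant it uses.
LAPLACIAN = np.array([[0.0, 1.0, 0.0],
                      [1.0, -4.0, 1.0],
                      [0.0, 1.0, 0.0]])


def laplacian_response(feat):
    """Apply the fixed Laplacian to every channel of a (C, H, W) feature map."""
    # Edge-replicate padding keeps the output the same size as the input.
    padded = np.pad(feat, ((0, 0), (1, 1), (1, 1)), mode="edge")
    out = np.zeros_like(feat, dtype=np.float64)
    h, w = feat.shape[1:]
    for dy in range(3):
        for dx in range(3):
            out += LAPLACIAN[dy, dx] * padded[:, dy:dy + h, dx:dx + w]
    return out


def edge_guided_attention(feat):
    """Hypothetical LEGA-style reweighting: amplify features where edges are strong."""
    # Average the absolute edge response over channels to get one spatial map.
    edges = np.abs(laplacian_response(feat)).mean(axis=0, keepdims=True)  # (1, H, W)
    # Normalise by the maximum so the gate lies in [0, 1].
    gate = edges / (edges.max() + 1e-8)
    # Residual form: flat regions pass through unchanged, edge regions are boosted.
    return feat * (1.0 + gate), gate


# Toy input: two channels with a vertical step edge between columns 2 and 3.
feat = np.zeros((2, 5, 6))
feat[:, :, 3:] = 1.0
out, gate = edge_guided_attention(feat)
# The gate is close to 1 on the two columns adjacent to the step and 0 elsewhere.
```

Because the kernel is a constant, the gate adds no trainable weights, which is the property the contribution emphasises. In a detector this operation would typically be applied as a fixed depthwise convolution on the shallow feature maps.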

2. Related Work

2.1. Adverse Weather Object Detection

2.2. Differentiable Image Processing and Task-Driven Enhancement

2.3. Edge-Aware and Laplacian-Based Structural Refinement

2.4. Transformer and Gated Attention for Weather-Adaptation

2.5. Summary of Research Gap

3. Proposed Method

3.1. Overall Pipeline Architecture

3.2. Hierarchical Image-Structure-Semantic Refinement

3.2.1. Differentiable Image Processing

Denoise Filter.

Pixel-wise Filters.

Sharpen Filter.

3.2.2. Lightweight Edge-Guided Attention

3.2.3. Content-aware Spatial Transformer with Gating

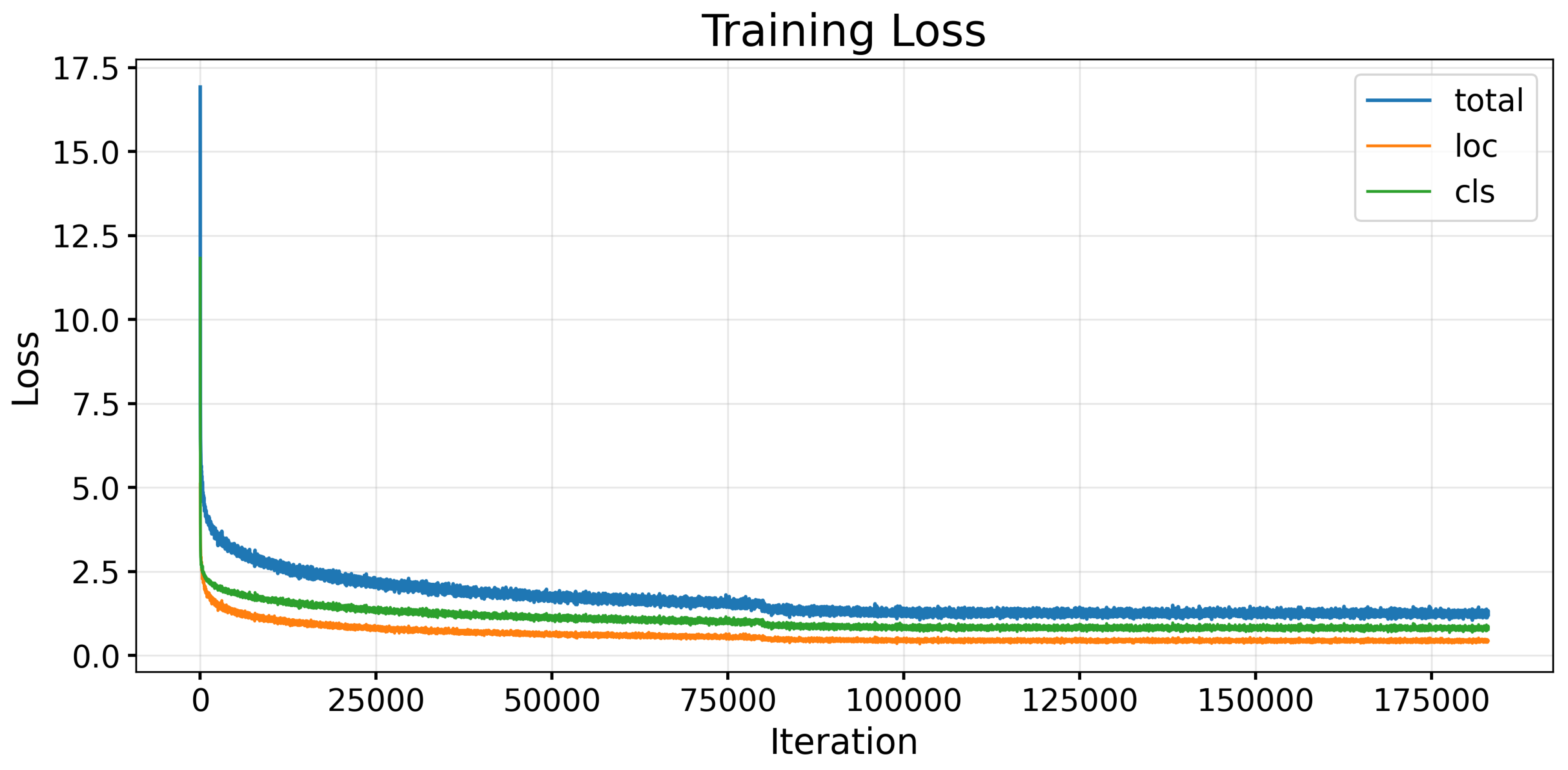

3.3. Joint Optimization and Training Objective

4. Experiments

4.1. Experimental Setup

4.1.1. Hardware and Software Environment

4.1.2. Dataset Preparation

4.1.3. Training Strategy

4.1.4. Evaluation Metrics

4.2. Experimental Results and Analysis

4.2.1. Quantitative Comparison with Baselines

4.2.2. Qualitative Results

4.2.3. Ablation Study

4.2.4. Efficiency Analysis

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Seo, A.; Woo, S.; Son, Y. Enhanced Vision-Based Taillight Signal Recognition for Analyzing Forward Vehicle Behavior. Sensors 2024, 24.

- McCartney, E.J.; Hall, F.F., Jr. Optics of the Atmosphere: Scattering by Molecules and Particles. Physics Today 1977, 30, 76–77. https://pubs.aip.org/physicstoday/article-pdf/30/5/76/8283978/76_1_online.pdf

- He, K.; Sun, J.; Tang, X. Single image haze removal using dark channel prior. In Proceedings of the 2009 IEEE Conference on Computer Vision and Pattern Recognition, 2009, pp. 1956–1963.

- Dong, H.; Pan, J.; Xiang, L.; Hu, Z.; Zhang, X.; Wang, F.; Yang, M.H. Multi-Scale Boosted Dehazing Network With Dense Feature Fusion. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020, pp. 2154–2164.

- Liu, X.; Ma, Y.; Shi, Z.; Chen, J. GridDehazeNet: Attention-Based Multi-Scale Network for Image Dehazing. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), 2019, pp. 7313–7322.

- Guo, C.; Li, C.; Guo, J.; Loy, C.C.; Hou, J.; Kwong, S.; Cong, R. Zero-Reference Deep Curve Estimation for Low-Light Image Enhancement. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020, pp. 1777–1786.

- Kim, Y.T.; Bak, S.H.; Han, S.S.; Son, Y.; Park, J. Non-contrast CT-based pulmonary embolism detection using GAN-generated synthetic contrast enhancement: Development and validation of an AI framework. Computers in Biology and Medicine 2025, 198, 111109.

- Kim, H.; Son, Y. Generating Multi-View Action Data from a Monocular Camera Video by Fusing Human Mesh Recovery and 3D Scene Reconstruction. Applied Sciences 2025, 15.

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2015, pp. 1–9.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2016, pp. 770–778.

- Huang, S.C.; Le, T.H.; Jaw, D.W. DSNet: Joint Semantic Learning for Object Detection in Inclement Weather Conditions. IEEE Transactions on Pattern Analysis and Machine Intelligence 2021, 43, 2623–2633.

- Chu, Z. D-YOLO a robust framework for object detection in adverse weather conditions. arXiv preprint arXiv:2403.09233, 2024.

- Le, T.H.; Huang, S.C.; Hoang, Q.V.; Lokaj, Z.; Lu, Z. Amalgamating Knowledge for Object Detection in Rainy Weather Conditions. ACM Trans. Intell. Syst. Technol. 2025, 16.

- Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.Y.; Berg, A.C. SSD: Single Shot MultiBox Detector. In Proceedings of the Computer Vision – ECCV 2016; Leibe, B.; Matas, J.; Sebe, N.; Welling, M., Eds., Cham, 2016; pp. 21–37.

- Zhao, Z.Q.; Zheng, P.; Xu, S.T.; Wu, X. Object Detection With Deep Learning: A Review. IEEE Transactions on Neural Networks and Learning Systems 2019, 30, 3212–3232.

- Liu, B.; Jin, J.; Zhang, Y.; Sun, C. WRRT-DETR: Weather-Robust RT-DETR for Drone-View Object Detection in Adverse Weather. Drones 2025, 9.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is All you Need. In Proceedings of the Advances in Neural Information Processing Systems; Guyon, I.; Luxburg, U.V.; Bengio, S.; Wallach, H.; Fergus, R.; Vishwanathan, S.; Garnett, R., Eds. Curran Associates, Inc., 2017, Vol. 30.

- Wang, Y.; Zhang, J.; Zhou, J.; Han, M.; Li, S.; Miao, H. ClearSight: Deep Learning-Based Image Dehazing for Enhanced UAV Road Patrol. In Proceedings of the 2024 5th International Conference on Computer Vision, Image and Deep Learning (CVIDL), 2024, pp. 68–74.

- Liu, W.; Ren, G.; Yu, R.; Guo, S.; Zhu, J.; Zhang, L. Image-Adaptive YOLO for Object Detection in Adverse Weather Conditions, 2022. [arXiv:cs.CV/2112.08088].

- Kalwar, S.; Patel, D.; Aanegola, A.; Konda, K.R.; Garg, S.; Krishna, K.M. GDIP: Gated Differentiable Image Processing for Object-Detection in Adverse Conditions, 2022. [arXiv:cs.CV/2209.14922].

- Ogino, Y.; Shoji, Y.; Toizumi, T.; Ito, A. ERUP-YOLO: Enhancing Object Detection Robustness for Adverse Weather Condition by Unified Image-Adaptive Processing, 2024. [arXiv:cs.CV/2411.02799].

- Gupta, H.; Kotlyar, O.; Andreasson, H.; Lilienthal, A.J. Robust Object Detection in Challenging Weather Conditions. In Proceedings of the 2024 IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), 2024, pp. 7508–7517.

- Ghiasi, G.; Fowlkes, C.C. Laplacian Pyramid Reconstruction and Refinement for Semantic Segmentation, 2016. [arXiv:cs.CV/1605.02264].

- Cai, J.; Sun, H.; Liu, N. B2Net: Camouflaged Object Detection via Boundary Aware and Boundary Fusion, 2024. [arXiv:cs.CV/2501.00426].

- Bui, N.T.; Hoang, D.H.; Nguyen, Q.T.; Tran, M.T.; Le, N. MEGANet: Multi-Scale Edge-Guided Attention Network for Weak Boundary Polyp Segmentation, 2023. [arXiv:cs.CV/2309.03329].

- Qiu, H.; Ma, Y.; Li, Z.; Liu, S.; Sun, J. BorderDet: Border Feature for Dense Object Detection, 2021. [arXiv:cs.CV/2007.11056].

- Gharatappeh, S.; Sekeh, S.; Dhiman, V. Weather-Aware Object Detection Transformer for Domain Adaptation, 2025. [arXiv:cs.CV/2504.10877].

- Jiang, L.; Ma, G.; Guo, W.; Sun, Y. YOLO-DH: Robust Object Detection for Autonomous Vehicles in Adverse Weather. Electronics 2025, 14.

- Simonyan, K.; Zisserman, A. Very Deep Convolutional Networks for Large-Scale Image Recognition, 2015. [arXiv:cs.CV/1409.1556].

- Hu, J.; Shen, L.; Sun, G. Squeeze-and-Excitation Networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), June 2018.

- Caesar, H.; Bankiti, V.; Lang, A.H.; Vora, S.; Liong, V.E.; Xu, Q.; Krishnan, A.; Pan, Y.; Baldan, G.; Beijbom, O. nuScenes: A Multimodal Dataset for Autonomous Driving. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2020.

| Study | Key Idea | Limitations |
|---|---|---|
| D-YOLO [12] | Hazy/clear feature fusion via attention | Heavy fusion; auxiliary subnetworks; no image-level enhancement |
| DSNet [11] | Joint visibility enhancement + detection | Difficult loss balancing; fog-specific; high complexity |
| AK-Net [13] | Weather degradation separation and feature fusion | Multiple subnetworks; limited generalization across conditions |
| WRRT-DETR [16] | Global self-attention for long-range context | High computational cost; unsuitable for real-time |
| ClearSight [18] | Deep dehazing before detection | Not jointly optimized; expensive preprocessing |

| Study | Key Idea | Limitations |
|---|---|---|
| ZeroDCE [6] | Zero-reference curve estimation; lightweight pixel-wise correction | Not task-driven; limited generalization to rain/fog; no multi-scale feature modeling |
| IA-YOLO [19] | Adaptive enhancement via integrated module | Add-on enhancement increases overhead; limited filter diversity |
| GDIP [20] | Parameterized image filters embedded into detection pipeline | Heavy operations on full-resolution images; limited structural modeling |
| ERUP-YOLO [21] | Unified image-adaptive filtering with Bezier-based pixel and kernel operations | Limited robustness to over-enhancement; no feature-level weather modeling |
| Augment + YOLOv5 [22] | Training with real all-weather data and physics/GAN-based augmentation | Synthetic noise often unstable; no joint enhancement-detection optimization |

| Study | Key Idea | Limitations |
|---|---|---|
| Laplacian Pyramid Reconstruction [23] | Refines semantic features using Laplacian pyramid–based boundary reconstruction | Requires multi-level decoding; high computational overhead |
| B2Net [24] | Explicit boundary extraction and fusion for camouflaged objects | Learnable boundary extractor increases parameters; less suitable for real-time |
| MEGANet [25] | Multi-scale edge features guide attention for weak-boundary segmentation | Multi-branch edge pathways raise complexity and memory usage |
| BorderDet [26] | Enhances dense detectors by modeling explicit border cues | Additional border heads and regression terms increase inference cost |

| Study | Key Idea | Limitations |
|---|---|---|
| Weather-aware RT-DETR [27] | Fog-adaptive dual-stream attention for RT-DETR | Inconsistent gains; limited generalization beyond fog |
| YOLO-DH [28] | Wavelet-guided dehazing and adaptive attention fusion | Added complexity; enhancement not jointly optimized with detection |

| Category | Specification |
|---|---|
| Operating System | Ubuntu 20.04.6 LTS (Focal Fossa) |
| Processor (CPU) | AMD EPYC 7742 64-Core Processor × 2 |
| Memory (RAM) | 1.0 TiB DDR4 ECC |
| GPU Model | NVIDIA Tesla V100-PCIE-32GB × 8 |
| CUDA Version | 12.2 |
| PyTorch Version | 2.2.1 (GPU-accelerated) |
| Language | Python 3.12 |

| Dataset | Split | Number of Images | Conditions | Classes |
|---|---|---|---|---|
| Filter-nuS | Train | 9,950 | Rain, Fog, Low Light | Pedestrian, Vehicle |
| Filter-nuS | Test | 900 | Rain, Fog, Low Light | Pedestrian, Vehicle |

| Group | Method | Mean-mAP | P-mAP | V-mAP | Loss | Params |
|---|---|---|---|---|---|---|
| Baseline | D-YOLO | 62.91% | 54.9% | 70.8% | 0.51 | 46M |
| Baseline | ClearSight-OD | 61.81% | 49.2% | 71.5% | 0.54 | 60M |
| Baseline | Aug-YOLOv5 | 62.53% | 50.6% | 74.4% | 0.53 | 21M |
| Baseline | AK-Net | 63.70% | 52.1% | 73.0% | 0.50 | 75M |
| Baseline | ZeroDCE | 59.67% | 48.1% | 70.5% | 0.51 | 25M |
| Baseline | DSNet | 62.10% | 48.2% | 75.8% | 0.52 | 40M |
| Ours | CSTG-SSD | 62.54% | 50.4% | 74.6% | 0.49 | 28M |
| Ours | DLC-SSD | 64.29% | 53.5% | 75.0% | 0.44 | 29M |

| Enhancement | Detector | Performance | ||||
|---|---|---|---|---|---|---|
| DIP | SSD | LEGA | ST | CAG | mAP | Times |
| ✓ | 61.01% | 12ms | ||||
| ✓ | ✓ | ✓ | 61.95% | 14ms | ||
| ✓ | ✓ | ✓ | ✓ | 62.54% | 15ms | |
| ✓ | ✓ | 63.65% | 15ms | |||
| ✓ | ✓ | ✓ | ✓ | ✓ | 64.29% | 19ms |
| Ours vs. SSD | 3.28%↑ | - | ||||

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).