Submitted: 24 November 2025

Posted: 25 November 2025


Abstract

Keywords:

1. Introduction

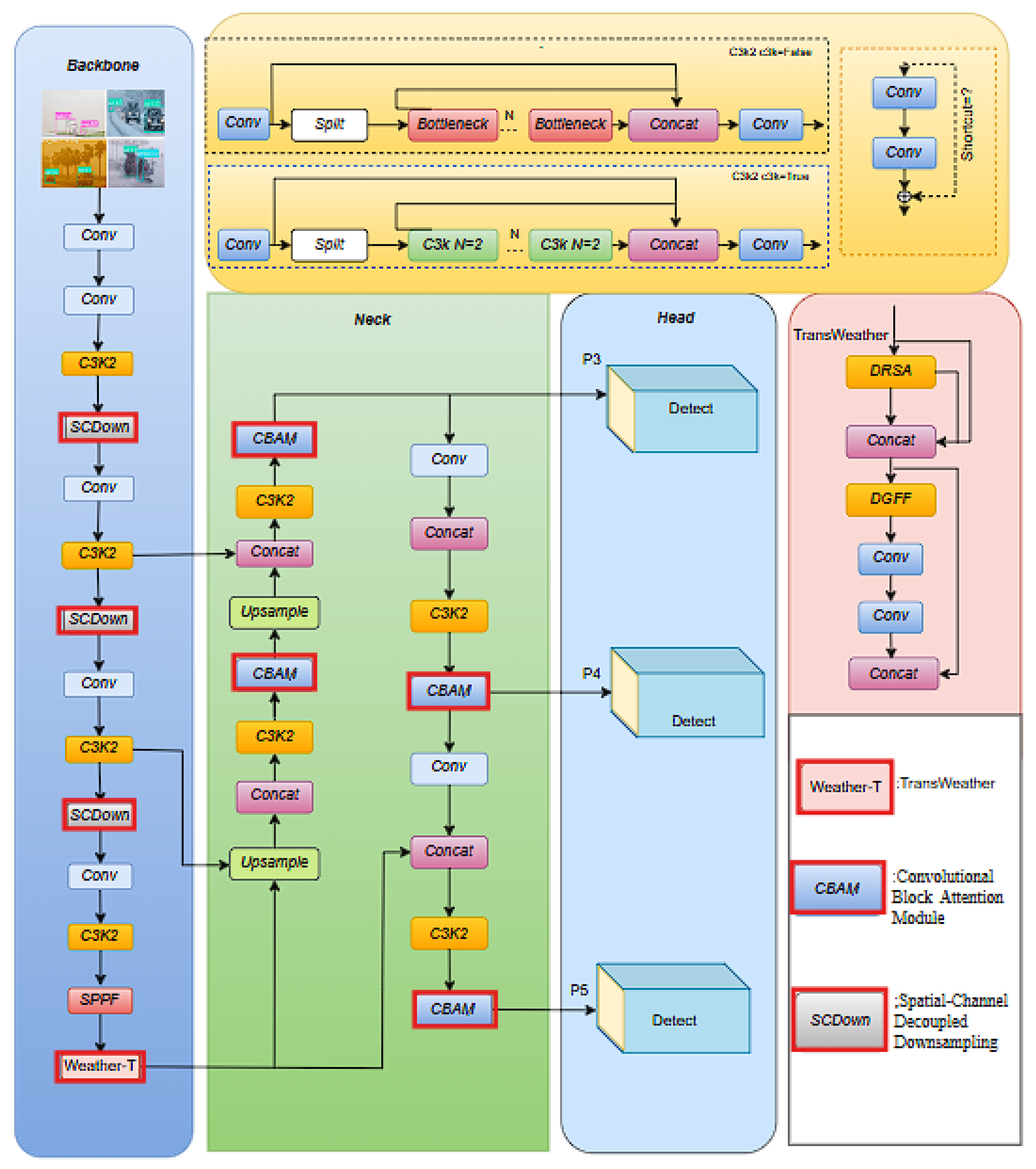

- To improve feature extraction from objects that are blurred or occluded by rain, snow, fog, or sandstorms, we integrated the TransWeather Block [13,14] into the PSA block of C2PSA in the final layer of the backbone network in YOLOv11, enabling the model to capture both local and global features effectively.

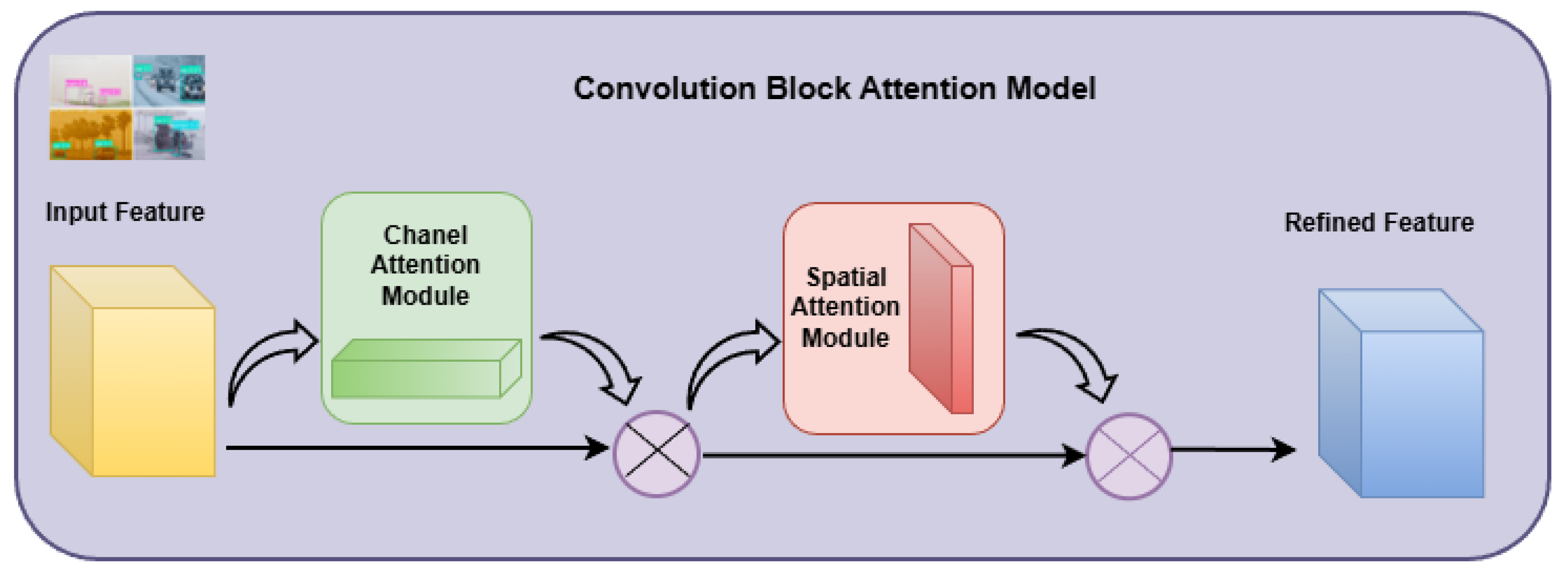

- Within the Neck network, we incorporated the Convolutional Block Attention Module (CBAM) [15] following each C3k2 layer to highlight salient features in the feature maps while diminishing less relevant information via channel-wise and spatial attention mechanisms.

- To offset the computational cost introduced by TransWeather and CBAM without sacrificing detection accuracy, we replaced selected convolutional layers in the backbone network with Spatial-Channel Decoupled Downsampling (SCDown) modules [16,17]. These modules handle the change in channel dimension and the reduction in spatial resolution in separate, lightweight steps, which lowers the parameter count and computation of downsampling while maintaining or improving detection performance.

- The proposed YOLOv11-TWCS model was evaluated on the DAWN dataset using mAP@0.5, FPS, GFLOPs, and parameter count. In the ablation study, adding all three modules to the baseline raises mAP@0.5 from 57.9 to 59.1 while reducing the parameter count from 23.83 M to 19.32 M and increasing speed from 93 to 105 FPS. The model's generalization capability is further supported by results on the Udacity and KITTI datasets under both clear and low-light environments.

2. Related Work

3. Methodology

3.1. Basic YOLOv11 Model

3.2. Overview of YOLOv11-TWCS Model

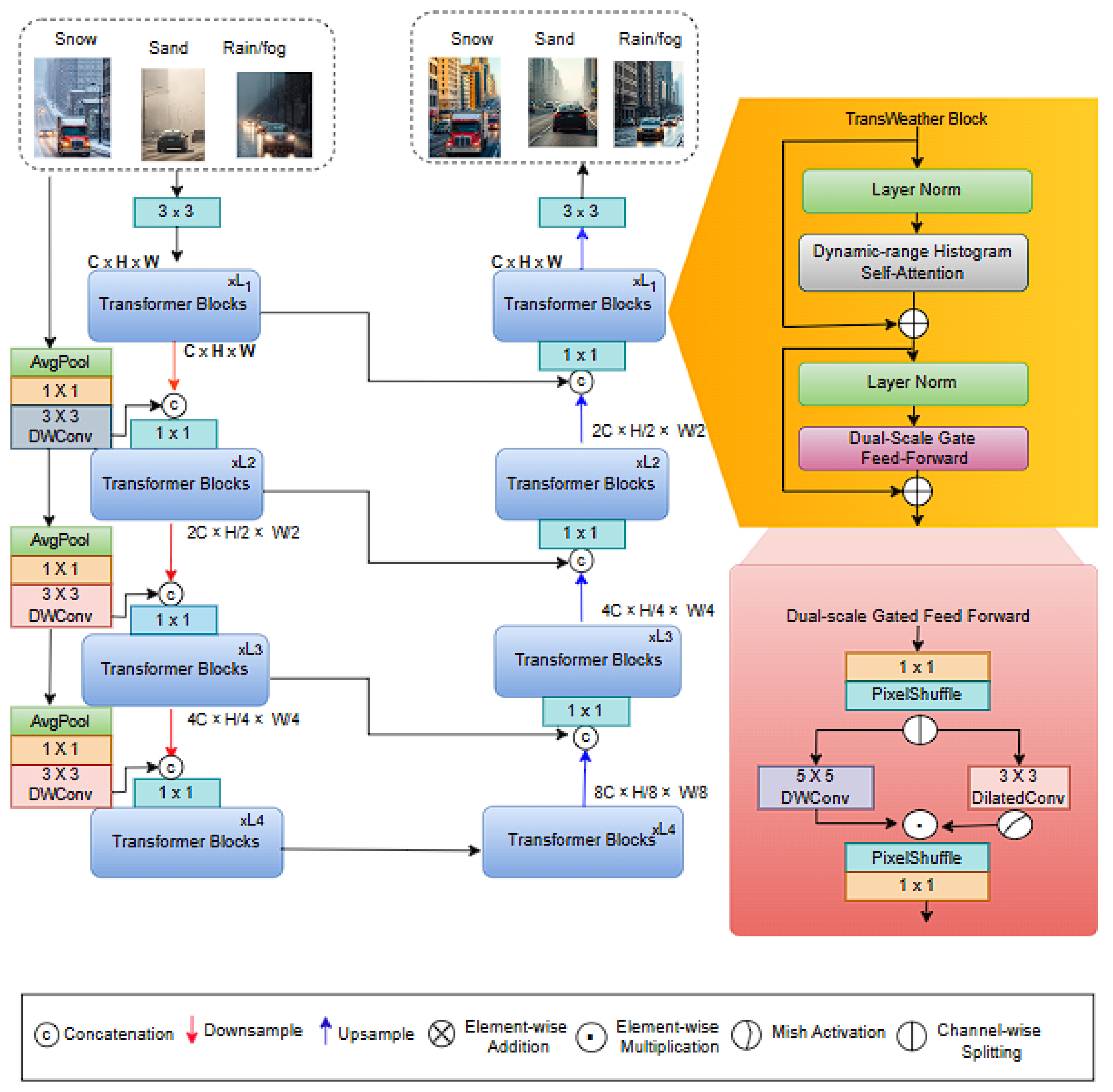

3.3. TransWeather Block

3.4. CBAM
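
The contributions describe CBAM [15] as being placed after each C3k2 layer in the Neck, applying channel-wise attention followed by spatial attention. A minimal PyTorch sketch of such a module is shown here, assuming the standard formulation of [15]. The class names, the reduction ratio of 16, and the 7 × 7 spatial kernel are illustrative assumptions, not settings confirmed for YOLOv11-TWCS.

```python
import torch
import torch.nn as nn


class ChannelAttention(nn.Module):
    """Channel attention: a shared MLP over avg- and max-pooled descriptors."""

    def __init__(self, channels: int, reduction: int = 16):  # reduction is assumed
        super().__init__()
        hidden = max(channels // reduction, 1)
        # 1x1 convolutions act as a shared MLP on the pooled (C, 1, 1) vectors.
        self.mlp = nn.Sequential(
            nn.Conv2d(channels, hidden, kernel_size=1, bias=False),
            nn.ReLU(),
            nn.Conv2d(hidden, channels, kernel_size=1, bias=False),
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        avg = self.mlp(torch.mean(x, dim=(2, 3), keepdim=True))
        mx = self.mlp(torch.amax(x, dim=(2, 3), keepdim=True))
        return x * torch.sigmoid(avg + mx)


class SpatialAttention(nn.Module):
    """Spatial attention: a conv over channel-wise mean and max maps."""

    def __init__(self, kernel_size: int = 7):  # kernel size is assumed
        super().__init__()
        self.conv = nn.Conv2d(2, 1, kernel_size, padding=kernel_size // 2, bias=False)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        avg = torch.mean(x, dim=1, keepdim=True)
        mx = torch.amax(x, dim=1, keepdim=True)
        weights = torch.sigmoid(self.conv(torch.cat([avg, mx], dim=1)))
        return x * weights


class CBAM(nn.Module):
    """Channel attention followed by spatial attention; output shape equals input shape."""

    def __init__(self, channels: int, reduction: int = 16, kernel_size: int = 7):
        super().__init__()
        self.channel = ChannelAttention(channels, reduction)
        self.spatial = SpatialAttention(kernel_size)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.spatial(self.channel(x))
```

Because the module preserves the feature-map shape, it can in principle follow any layer without changing the layers downstream, which is consistent with inserting it after each C3k2 layer.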

3.5. SCDown Module
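
The contributions state that selected backbone convolutions are replaced with SCDown modules [16,17] to reduce cost. The sketch below assumes the layout used in YOLOv10 [17]: a 1 × 1 pointwise convolution changes the channel count, then a stride-2 depthwise convolution reduces the spatial resolution. The helper names `conv_bn` and `count_params`, and the channel sizes in the usage note, are hypothetical and chosen only for illustration.

```python
import torch
import torch.nn as nn


def conv_bn(c_in: int, c_out: int, k: int, s: int, groups: int = 1, act: bool = True) -> nn.Sequential:
    """Conv2d + BatchNorm (+ SiLU), the usual YOLO-style convolution unit."""
    layers = [
        nn.Conv2d(c_in, c_out, k, s, padding=k // 2, groups=groups, bias=False),
        nn.BatchNorm2d(c_out),
    ]
    if act:
        layers.append(nn.SiLU())
    return nn.Sequential(*layers)


class SCDown(nn.Module):
    """Spatial-channel decoupled downsampling (sketch, assumed YOLOv10-style layout)."""

    def __init__(self, c_in: int, c_out: int, k: int = 3, s: int = 2):
        super().__init__()
        # Step 1: change the number of channels at full resolution (cheap 1x1 conv).
        self.pointwise = conv_bn(c_in, c_out, k=1, s=1)
        # Step 2: downsample each channel independently (depthwise, no activation).
        self.depthwise = conv_bn(c_out, c_out, k=k, s=s, groups=c_out, act=False)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.depthwise(self.pointwise(x))


def count_params(module: nn.Module) -> int:
    """Total number of learnable parameters in a module."""
    return sum(p.numel() for p in module.parameters())
```

For a hypothetical 256 → 512 channel stage, `count_params(SCDown(256, 512))` is roughly 0.14 M, compared with roughly 1.18 M for the standard stride-2 unit `conv_bn(256, 512, 3, 2)`, which illustrates where the parameter savings come from.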

4. Experiments and Results

4.1. Dataset and Experimental Setup

4.2. Experimental Evaluation Criteria

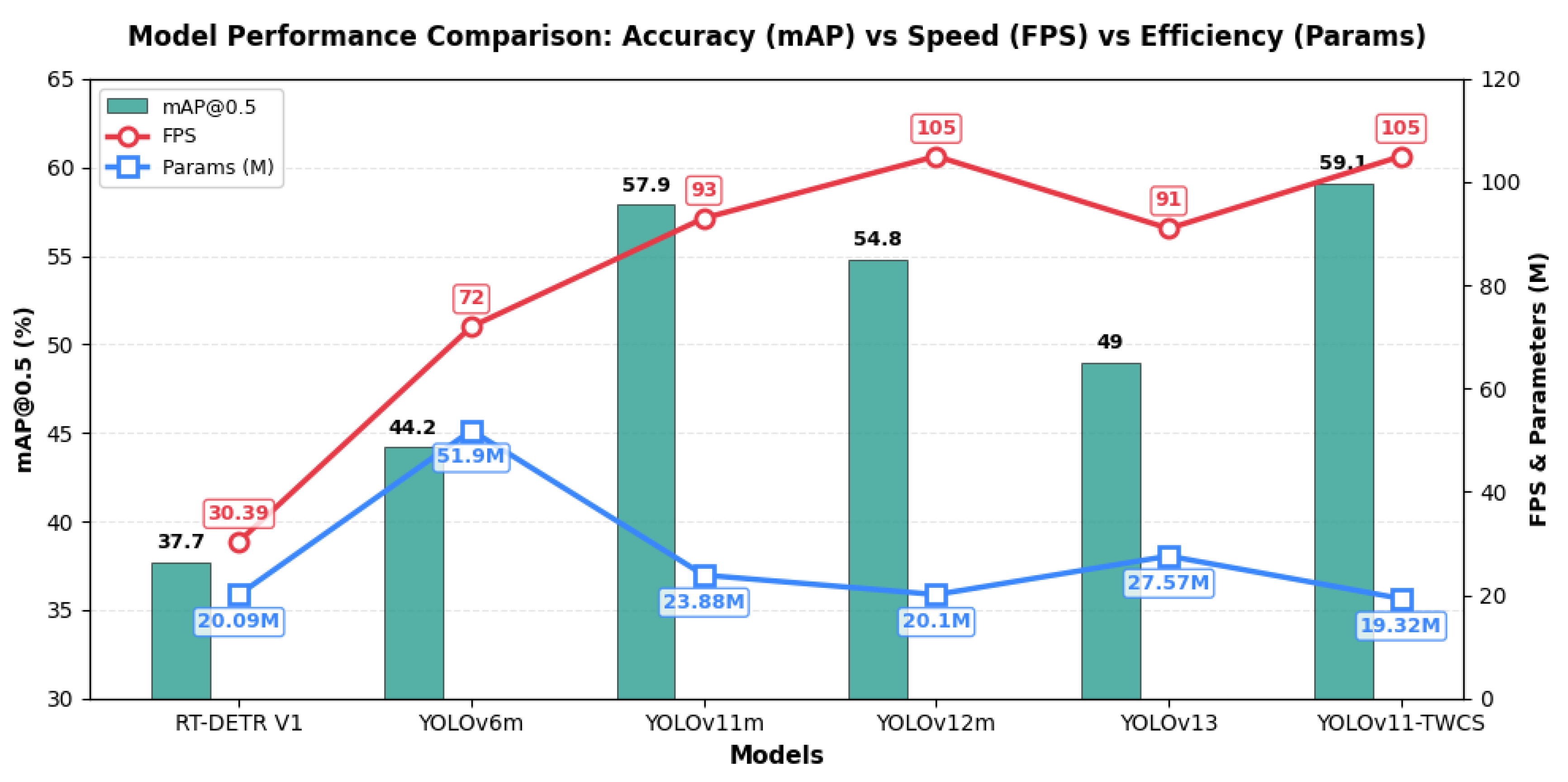
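
To make the mAP@0.5 metric concrete, the following sketch computes average precision for a single class at an IoU threshold of 0.5. It assumes boxes in `(x1, y1, x2, y2)` format, greedy matching in descending score order, and all-point interpolation of the precision-recall curve. Evaluation toolkits may use a different interpolation scheme, so their values can differ slightly. The function names are illustrative.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(preds, gts, iou_thr=0.5):
    """AP for one class. preds: list of (score, box); gts: list of boxes."""
    if not gts:
        return 0.0
    preds = sorted(preds, key=lambda p: p[0], reverse=True)
    matched, hits = set(), []
    for _, box in preds:
        # Match each prediction to the best still-unmatched ground-truth box.
        best_iou, best_j = 0.0, -1
        for j, gt in enumerate(gts):
            if j in matched:
                continue
            value = iou(box, gt)
            if value > best_iou:
                best_iou, best_j = value, j
        if best_iou >= iou_thr:
            matched.add(best_j)
            hits.append(1)  # true positive
        else:
            hits.append(0)  # false positive
    precisions, recalls, tp = [], [], 0
    for rank, hit in enumerate(hits, start=1):
        tp += hit
        precisions.append(tp / rank)
        recalls.append(tp / len(gts))
    # Make precision non-increasing from right to left (interpolation envelope).
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Area under the interpolated precision-recall curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(per_class, iou_thr=0.5):
    """mAP: mean of per-class AP. per_class: list of (preds, gts) pairs."""
    aps = [average_precision(p, g, iou_thr) for p, g in per_class]
    return sum(aps) / len(aps) if aps else 0.0
```

mAP@0.5:0.95 follows the same procedure, averaged over IoU thresholds from 0.5 to 0.95 in steps of 0.05.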

4.3. Performance Analysis on DAWN Dataset

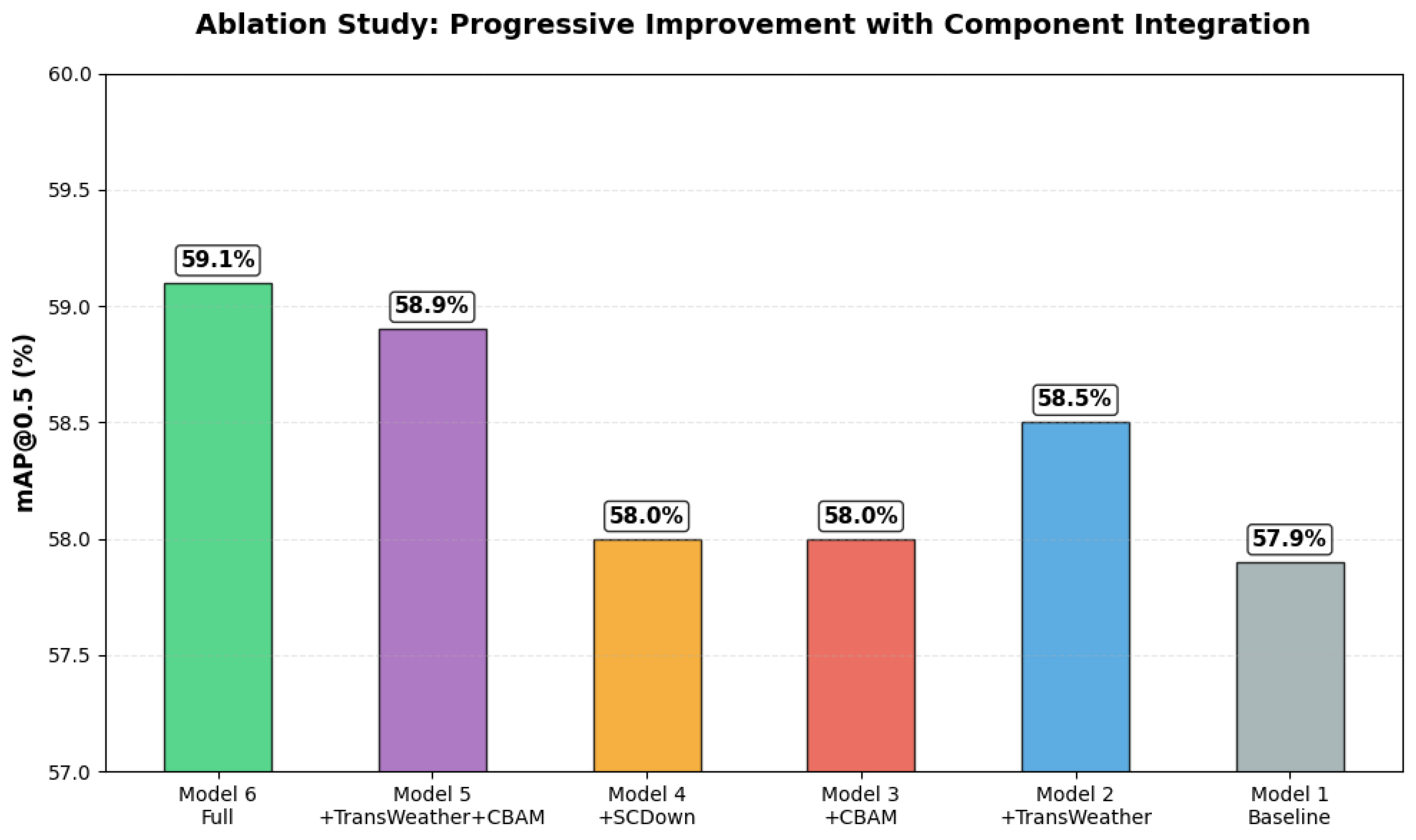

4.4. Ablation Study

4.5. Generalization Performance on Udacity and KITTI Datasets

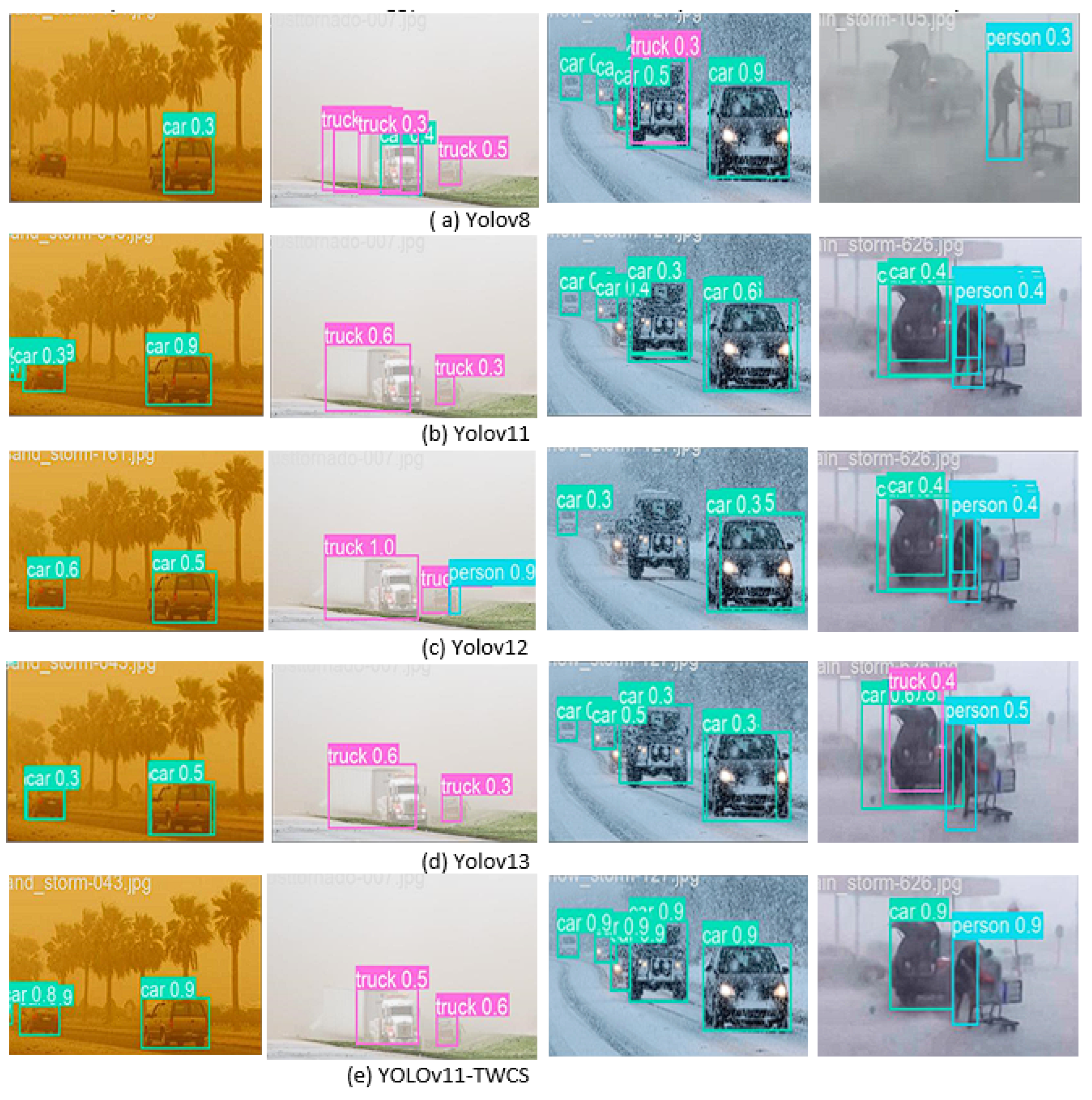

4.6. Visualization Results on DAWN Dataset

4.6.1. Sandstorm Condition Analysis

4.6.2. Foggy Weather Evaluation

4.6.3. Snowy Weather Detection Challenges

4.6.4. Rainy Condition Performance

5. Discussion

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- R. Zhao, S. H. Tang, J. Shen, E. E. B. Supeni, and S. A. Rahim, “Enhancing autonomous driving safety: A robust traffic sign detection and recognition model TSD-YOLO,” Signal Process., vol. 225, Dec. 2024, Art. no. 109619.

- D. Dai, J. Wang, Z. Chen, and H. Zhao, “Image guidance based 3D vehicle detection in traffic scene,” Neurocomputing, vol. 428, pp. 1–11, Mar. 2021.

- R. Girshick, J. Donahue, T. Darrell, and J. Malik, “Rich feature hierarchies for accurate object detection and semantic segmentation,” in Proc. IEEE Conf. Comput. Vis. Pattern Recognit., Jun. 2014, pp. 580–587.

- S. Ren, K. He, R. Girshick, and J. Sun, “Faster R-CNN: Towards real-time object detection with region proposal networks,” IEEE TPAMI, vol. 39, no. 6, pp. 1137–1149, Jun. 2017.

- K. He, G. Gkioxari, P. Dollár, and R. Girshick, “Mask R-CNN,” IEEE TPAMI, vol. 42, no. 2, pp. 386–397, Feb. 2020.

- W. Liu et al., “SSD: Single shot multibox detector,” in Proc. ECCV, 2016, pp. 21–37.

- L. Zheng, C. Fu, and Y. Zhao, “Extend the shallow part of single shot multibox detector via convolutional neural network,” Proc. SPIE, vol. 10806, Aug. 2018.

- J. Redmon, S. Divvala, R. Girshick, and A. Farhadi, “You only look once: Unified, real-time object detection,” in Proc. CVPR, Jun. 2016, pp. 779–788.

- J. Redmon and A. Farhadi, “YOLO9000: Better, faster, stronger,” in Proc. CVPR, Jul. 2017, pp. 6517–6525.

- K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition,” in Proc. CVPR, Jun. 2016, pp. 770–778.

- W. Chu et al., “Multi-task vehicle detection with region-of-interest voting,” IEEE Trans. Image Process., 2017.

- R. Khanam and M. Hussain, “YOLOv11: An Overview of the Key Architectural Enhancements,” arXiv preprint, arXiv:2410.17725, 2024.

- S. Sun, W. Ren, X. Gao, R. Wang, and X. Cao, “Restoring Images in Adverse Weather Conditions via Histogram Transformer,” arXiv preprint, arXiv:2407.10172, Jul. 2024.

- J. M. J. Valanarasu, R. Yasarla, and V. M. Patel, “TransWeather: Transformer-based restoration of images degraded by adverse weather conditions,” 2024, arXiv:2409.06334.

- S. Woo, J. Park, J.-Y. Lee, and I. S. Kweon, “CBAM: Convolutional block attention module,” in Proc. European Conference on Computer Vision (ECCV), Munich, 2018, pp. 3–19.

- D. Chen and L. Zhang, “SL-YOLO: A stronger and lighter drone target detection model,” 2024, arXiv:2411.11477.

- A. Wang, H. Chen, L. Liu, K. Chen, Z. Lin, J. Han, and G. Ding, “YOLOv10: Real-time end-to-end object detection,” 2024, arXiv:2405.14458.

- A. Bochkovskiy, C.-Y. Wang, and H.-Y. M. Liao, “YOLOv4: Optimal speed and accuracy of object detection,” 2020, arXiv:2004.10934.

- S. Liu, D. Huang, and Y. Wang, “Receptive Field Block Net for Accurate and Fast Object Detection,” arXiv preprint arXiv:1711.07767, Jul. 2018.

- X. Zhu, S. Lyu, X. Wang, and Q. Zhao, “TPH-YOLOv5: Improved YOLOv5 based on transformer prediction head for object detection on drone-captured scenarios,” in Proc. IEEE/CVF Int. Conf. Comput. Vis. Workshops (ICCVW), Oct. 2021, pp. 2778–2788.

- Z. Liu, Y. Lin, Y. Cao, H. Hu, Y. Wei, Z. Zhang, S. Lin, and B. Guo, “Swin Transformer: Hierarchical Vision Transformer Using Shifted Windows,” Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), pp. 10012–10022, 2021.

- Y. Zhao, W. Lv, S. Xu, J. Wei, G. Wang, Q. Dang, Y. Liu, and J. Chen, “DETRs beat YOLOs on real-time object detection,” in Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. (CVPR), Jun. 2024, pp. 16965–16974.

- W. Lv, Y. Zhao, Q. Chang, K. Huang, G. Wang, and Y. Liu, “RT-DETRv2: Improved baseline with bag-of-freebies for real-time detection transformer,” 2024, arXiv:2407.17140.

- C. Lyu, W. Zhang, H. Huang, Y. Zhou, Y. Wang, Y. Liu, S. Zhang, and K. Chen, “RTMDet: An Empirical Study of Designing Real-Time Object Detectors,” arXiv preprint arXiv:2212.07784, Dec. 16, 2022.

- H. Liu, Y. Ma, H. Jiang, and T. Hong, “SPR-YOLO: A Traffic Flow Detection Algorithm for Fuzzy Scenarios,” Arabian Journal for Science and Engineering, King Fahd University of Petroleum and Minerals, accepted Jan. 2025.

- N. U. A. Tahir, Z. Zhang, M. Asim, S. Iftikhar, and A. A. Abd El-Latif, “PVDM-YOLOv8l: A solution for reliable pedestrian and vehicle detection in autonomous vehicles under adverse weather conditions,” Multimedia Tools and Applications, Springer Nature, accepted Sept. 2024.

- Z. Su, J. Yu, H. Tan, X. Wan, and K. Qi, “MSA-YOLO: A remote sensing object detection model based on multi-scale strip attention,” Sensors, vol. 23, no. 15, p. 6811, Jul. 2023.

- M. A. Kenk and M. Hassaballah, “DAWN: Vehicle detection in adverse weather nature dataset,” 2020, arXiv:2008.05402.

- A. Geiger, P. Lenz, and R. Urtasun, “Are we ready for autonomous driving? the KITTI vision benchmark suite,” in Proc. IEEE Conference on Computer Vision and Pattern Recognition, 2012, pp. 3354–3361.

| Parameter | Value |
|---|---|
| Epochs | 300 |
| Batch Size | 8 |
| Initial Learning Rate | 0.01 |
| Momentum | 0.937 |
| Weight Decay | 0.0005 |
| Input Size (DAWN) | 416 × 416 |
| Input Size (Udacity) | 512 × 512 |
| Input Size (KITTI) | 640 × 640 |
| Data Augmentation | Mosaic augmentation |
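
The training settings in the table above can be gathered into a single configuration, sketched below. The keyword names (`epochs`, `batch`, `lr0`, `momentum`, `weight_decay`, `imgsz`, `mosaic`) are assumed to follow the Ultralytics training arguments; the source does not state which training framework was used. The mosaic probability of 1.0 and the dataset file name in the comment are likewise assumptions.

```python
# Values taken from the hyperparameter table; key names are an assumption
# (Ultralytics-style training arguments).
BASE_HYPERPARAMS = {
    "epochs": 300,
    "batch": 8,
    "lr0": 0.01,            # initial learning rate
    "momentum": 0.937,
    "weight_decay": 0.0005,
    "mosaic": 1.0,          # assumed probability; the table only says "Mosaic augmentation"
}

# Square input resolution per dataset, from the same table.
INPUT_SIZE = {"DAWN": 416, "Udacity": 512, "KITTI": 640}


def train_kwargs(dataset: str, data_yaml: str) -> dict:
    """Build the keyword arguments for one training run on the given dataset."""
    if dataset not in INPUT_SIZE:
        raise ValueError(f"unknown dataset: {dataset!r}")
    return {**BASE_HYPERPARAMS, "imgsz": INPUT_SIZE[dataset], "data": data_yaml}


# Hypothetical usage (model and data file names are placeholders):
#   from ultralytics import YOLO
#   YOLO("yolo11m.yaml").train(**train_kwargs("DAWN", "dawn.yaml"))
```

Keeping the per-dataset input size separate from the shared settings makes it explicit that only the resolution differs between the DAWN, Udacity, and KITTI runs.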

| Models | Params (M) | GFLOPs | Precision | Recall | mAP@0.5 | mAP@0.5:0.95 | FPS |
|---|---|---|---|---|---|---|---|
| SwinTransformer [21] | 51.3 | 455 | 56.4 | 50.8 | 46.2 | 27.73 | 27.73 |
| YOLOv9m | 20.0 | 76.9 | 49.7 | 50.8 | 48.5 | 22.8 | 98 |
| RT-DETR V1 [22] | 20.09 | 61.17 | - | - | 37.70 | 21.80 | 30.39 |
| YOLOv6m | 51.9 | 161 | 47.9 | 46.9 | 44.2 | 28.5 | 72 |
| SPR-YOLO [25] | 3.9 | 14.6 | 63.5 | 33.5 | 50.8 | 30.48 | 214.68 |
| Faster R-CNN [4] | 138 | 231.7 | 53.04 | 56.5 | 26.4 | 14.01 | 29 |
| PVDM-YOLOv8l [26] | 104.7 | 458 | 75.8 | 50.4 | 55.2 | 30.2 | 70.0 |
| RT-DETR V2 [23] | 20.09 | 61.17 | - | - | 36.46 | 21.98 | 30.67 |
| YOLOv8_MobileNetV3 [25] | 2.3 | 5.7 | 51.2 | 45.6 | 40.4 | 24.6 | 162.48 |
| YOLOv8m | 25.8 | 79 | 49.3 | 50.7 | 47.7 | 22.0 | 97 |
| YOLOv13 | 27.57 | 89.0 | 45.1 | 43.0 | 49.0 | 21.0 | 91 |
| YOLOv11m | 23.88 | 85.5 | 56.0 | 44.2 | 57.9 | 35.7 | 93 |
| YOLOv12m | 20.1 | 67.1 | 54.4 | 45.6 | 54.8 | 33.2 | 105 |
| YOLOv11-TWCS | 19.32 | 67.5 | 57.2 | 64.1 | 59.1 | 36.1 | 105 |
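
The efficiency gap between YOLOv11m and YOLOv11-TWCS in the comparison above can be expressed as relative changes. The short calculation below uses only the values from those two rows; the function and variable names are illustrative.

```python
def relative_change(new: float, old: float) -> float:
    """Percentage change from old to new (negative means a reduction)."""
    return 100.0 * (new - old) / old


# Rows copied from the DAWN comparison table.
yolo11m = {"params_m": 23.88, "gflops": 85.5, "map50": 57.9, "fps": 93}
twcs = {"params_m": 19.32, "gflops": 67.5, "map50": 59.1, "fps": 105}

summary = {
    "params_pct": relative_change(twcs["params_m"], yolo11m["params_m"]),
    "gflops_pct": relative_change(twcs["gflops"], yolo11m["gflops"]),
    "fps_pct": relative_change(twcs["fps"], yolo11m["fps"]),
    # mAP is already a percentage, so report the absolute difference in points.
    "map50_points": twcs["map50"] - yolo11m["map50"],
}

for name, value in summary.items():
    print(f"{name}: {value:+.2f}")
```

On these figures, YOLOv11-TWCS uses about 19% fewer parameters and about 21% fewer GFLOPs than YOLOv11m, runs about 13% faster, and gains 1.2 points of mAP@0.5.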

| Models | Components (Baseline, TransWeather, CBAM, SCDown) | mAP@0.5 | mAP@0.5:0.95 | Params (M) | FPS |
|---|---|---|---|---|---|
| Model 1 | ✔ | 57.9 | 35.7 | 23.83 | 93 |
| Model 2 | ✔ ✔ | 58.5 | 35.9 | 21.80 | 100 |
| Model 3 | ✔ ✔ | 58.0 | 36.0 | 24.10 | 91 |
| Model 4 | ✔ ✔ | 58.0 | 35.8 | 22.00 | 97 |
| Model 5 | ✔ ✔ ✔ | 58.9 | 36.0 | 20.51 | 103 |
| Model 6 | ✔ ✔ ✔ ✔ | 59.1 | 36.1 | 19.32 | 105 |

| Model | KITTI mAP50 | KITTI mAP50-95 | KITTI FPS | Udacity mAP50 | Udacity mAP50-95 | Udacity FPS | Params (M) | GFLOPs |
|---|---|---|---|---|---|---|---|---|
| YOLOv4 [18] | 77.6 | 46.1 | 48 | 83.4 | 50.2 | 50.2 | 27.6 | 26 |
| TPH-YOLOv5 [20] | 79.5 | 51.2 | 35.5 | 86.5 | 56.5 | 36.3 | 95 | 237 |
| RFBNet [19] | 73.44 | 39.20 | 32.5 | 77.9 | 41.2 | 32 | 34.5 | 95.0 |
| SSD [6,7] | 50 | 18 | 28 | 52.1 | 20.3 | 21.8 | 24 | 31.4 |
| Faster R-CNN [4] | 72.5 | 45.5 | 11 | 78.6 | 49.4 | 10.7 | 138 | 183 |
| RTMDet [24] | 80.0 | 48.0 | 180 | 84.8 | 50.4 | 178 | 16 | 27.7 |
| YOLOv11 | 80 | 55 | 227.3 | 84.7 | 56 | 135 | 23.88 | 85.5 |
| YOLOv12 | 79.1 | 54.1 | 220 | 83.1 | 55 | 130 | 20.1 | 67.1 |
| YOLOv13 | 78.5 | 52 | 215 | 82 | 54 | 125 | 27.57 | 89.0 |
| YOLOv11-TWCS | 81.9 | 56.9 | 245.1 | 88.5 | 58.1 | 146 | 19.32 | 67.5 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).