Submitted: 19 November 2025

Posted: 21 November 2025

Abstract

Keywords:

I. Introduction

II. Methodology

A. Problem Setting and Threat Assumptions

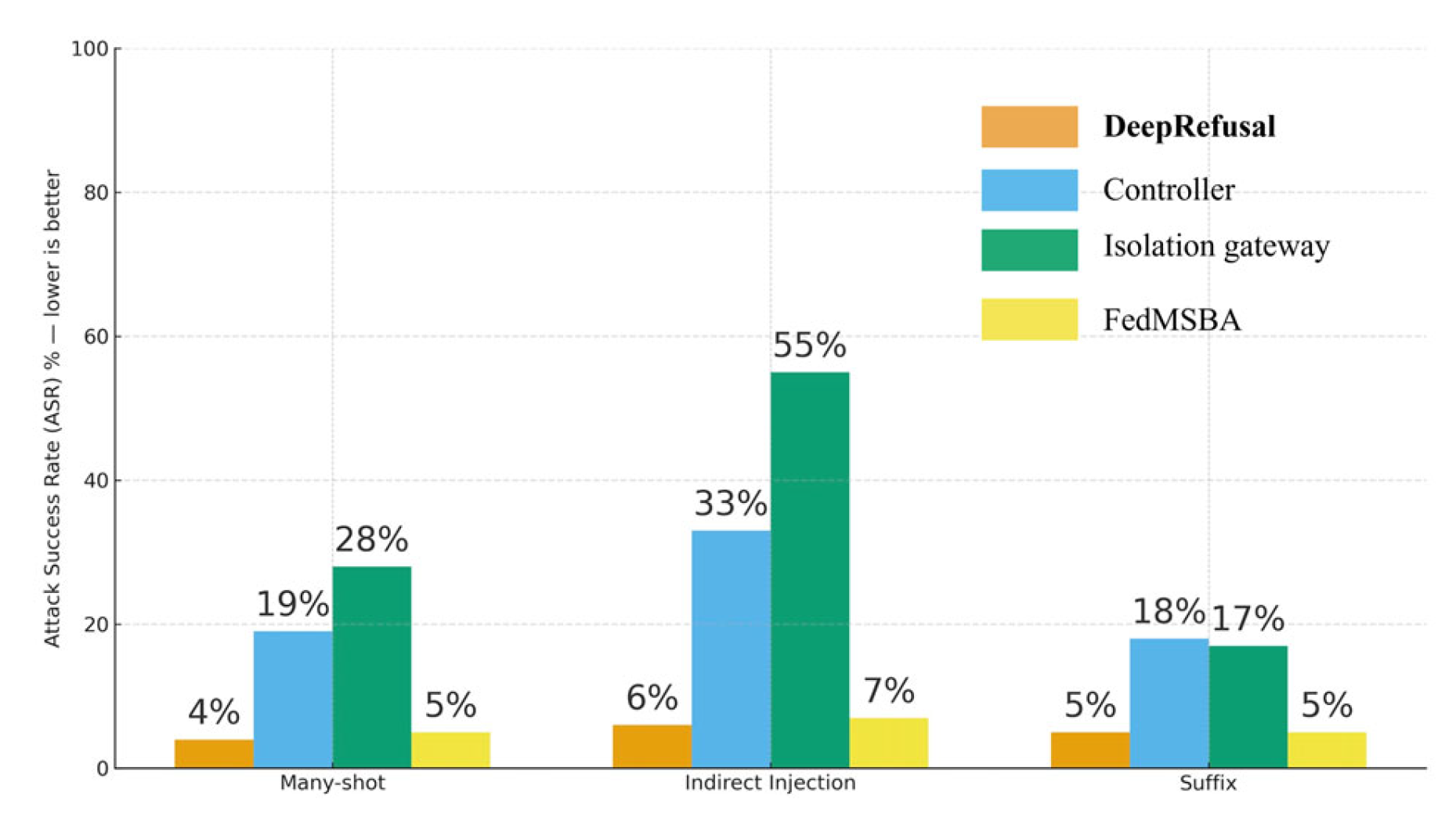

B. Architecture Overview

C. Governance Plane

D. Guard Plane

E. Decision Plane: DeepRefusal

F. Isolation Gateway for RAG and Agents

G. Federated Safety Training: FedMSBA

H. Implementation Details and Telemetry

I. Formal Properties and Practical Guarantees

III. Experiment

A. Datasets and Splits

B. Metrics

C. Baselines and Ablations

D. Settings

E. Results

IV. Discussion

References

1. National Institute of Standards and Technology, Artificial Intelligence Risk Management Framework (AI RMF 1.0), NIST AI 100-1, Gaithersburg, MD, USA, 2023.

2. European Parliament and Council of the European Union, “Regulation (EU) 2024/1689 of 13 June 2024 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act),” Official Journal of the European Union, 2024.

3. Organisation for Economic Co-operation and Development (OECD), OECD Principles on Artificial Intelligence, OECD Legal No. 0449, 2019.

4. M. Brundage, S. Avin, J. Clark, et al., “The malicious use of artificial intelligence: Forecasting, prevention, and mitigation,” arXiv:1802.07228, 2018.

5. D. Hendrycks, N. Burns, S. Basart, et al., “Unsolved problems in ML safety,” arXiv:2109.13916, 2021.

6. Y. Yao, J. Duan, K. Xu, et al., “A survey on Large Language Model (LLM) security and privacy: The good, the bad, and the ugly,” High-Confidence Computing, vol. 4, no. 2, p. 100211, 2024.

7. J. Yi, Y. Xie, J. Shao, et al., “Jailbreak attacks and defenses against large language models: A survey,” arXiv:2407.04295, 2024.

8. K. Greshake, M. Abdelaziz, M. Bartsch, et al., “More than you’ve asked for: A comprehensive analysis of novel prompt injection threats to application-integrated large language models,” arXiv:2302.12173, 2023.

9. A. Zou, Z. Wang, K. Miller, et al., “Universal and transferable adversarial attacks on aligned language models,” in Advances in Neural Information Processing Systems (NeurIPS), 2023.

10. C. Anil, N. R. Zhang, Y. Bai, et al., “Many-shot jailbreaking,” Anthropic Research Tech. Rep., 2024.

11. A. Wei, N. Haghtalab, and J. Steinhardt, “Jailbroken: How does LLM safety training fail?” Advances in Neural Information Processing Systems, vol. 36, pp. 80079–80110, 2023, arXiv:2307.02483.

12. X. Liu, S. Zhu, Z. Zhang, et al., “AutoDAN: Generating stealthy jailbreak prompts on aligned large language models,” in Proc. Int. Conf. Learning Representations (ICLR), 2024.

13. H. Inan, K. Upasani, J. Chi, et al., “Llama Guard: LLM-based input-output safeguard for human-AI conversations,” 2023.

14. T. Rebedea, R. Dinu, M. N. Sreedhar, C. Parisien, and J. Cohen, “NeMo Guardrails: A toolkit for controllable and safe LLM applications with programmable rails,” in Proc. EMNLP 2023, System Demonstrations, pp. 431–445, 2023.

15. W. Zou, R. Geng, B. Wang, and J. Jia, “PoisonedRAG: Knowledge corruption attacks to retrieval-augmented generation of large language models,” in Proc. USENIX Security, 2025.

16. C. Clop and Y. Teglia, “Backdoored retrievers for prompt injection attacks on retrieval-augmented generation of large language models,” 2024.

| Split | Count | Threat Type | Source / Notes |
|---|---|---|---|
| Many-shot long-context | 100,000 | Jailbreak via demonstrations | Inspired by [10,11] |
| Indirect Prompt Injection | 120,000 | IPI / RAG poisoning / backdoored retrievers | Based on [8,15,16] |
| Suffix Adversary | 80,000 | Universal suffix / gradient-guided attacks | Based on [9,12] |

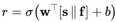

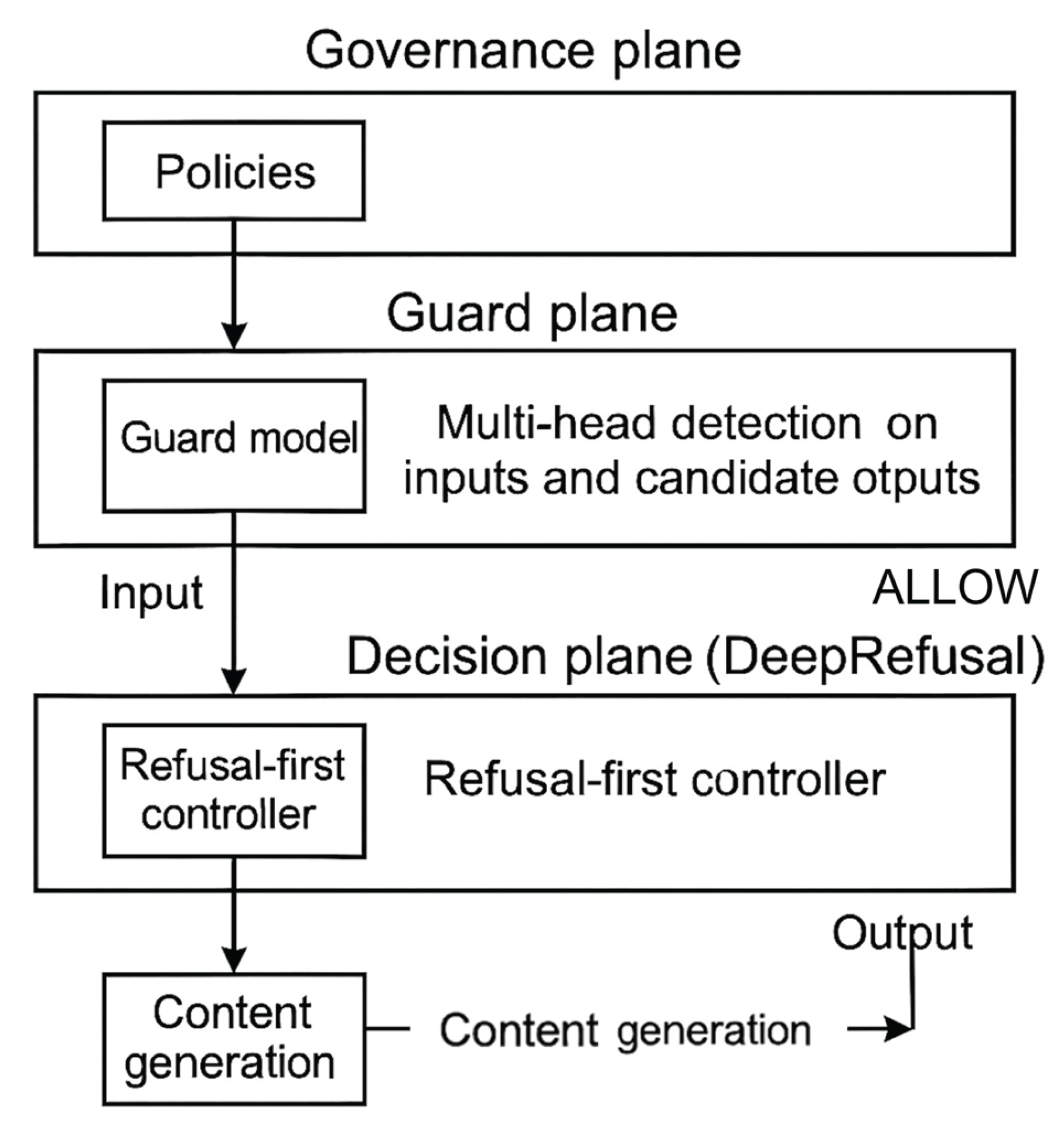

| Defense | Many-shot ASR ↓ | Indirect Injection ASR ↓ | Suffix ASR ↓ | Guard F1 ↑ | Benign Utility (Δ) |
|---|---|---|---|---|---|
| Aligned LLM (No Guard) | 62% | 88% | 57% | – | 0 |
| Guard Model Only | 31% | 42% | 29% | 83.9% | −0.7% |
| DeepRefusal (Ours) | 4% | 6% | 5% | 83.9% | −0.9% |
| − controller | 19% | 33% | 18% | 83.9% | −0.6% |
| − isolation gateway | 28% | 55% | 17% | 83.9% | −0.7% |
| − FedMSBA | 5% | 7% | 5% | 83.7% | −0.9% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).