Submitted: 01 November 2025

Posted: 03 November 2025

Abstract

Keywords:

1. Introduction

2. Background

2.1. Quantum Computing Fundamentals

2.2. Quantum Machine Learning Foundations

2.3. Natural Language Processing

2.4. Quantum Classical Hybrids

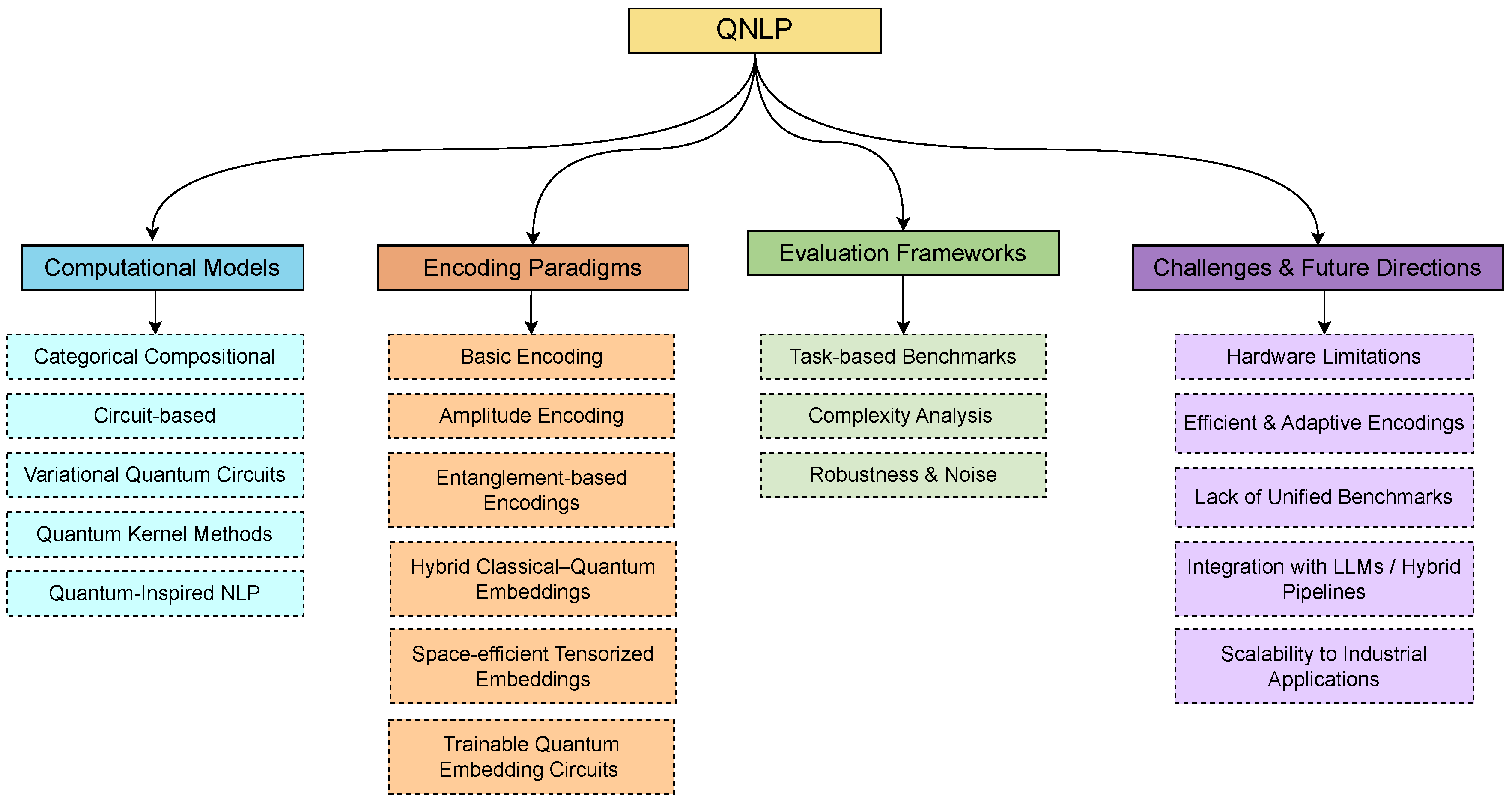

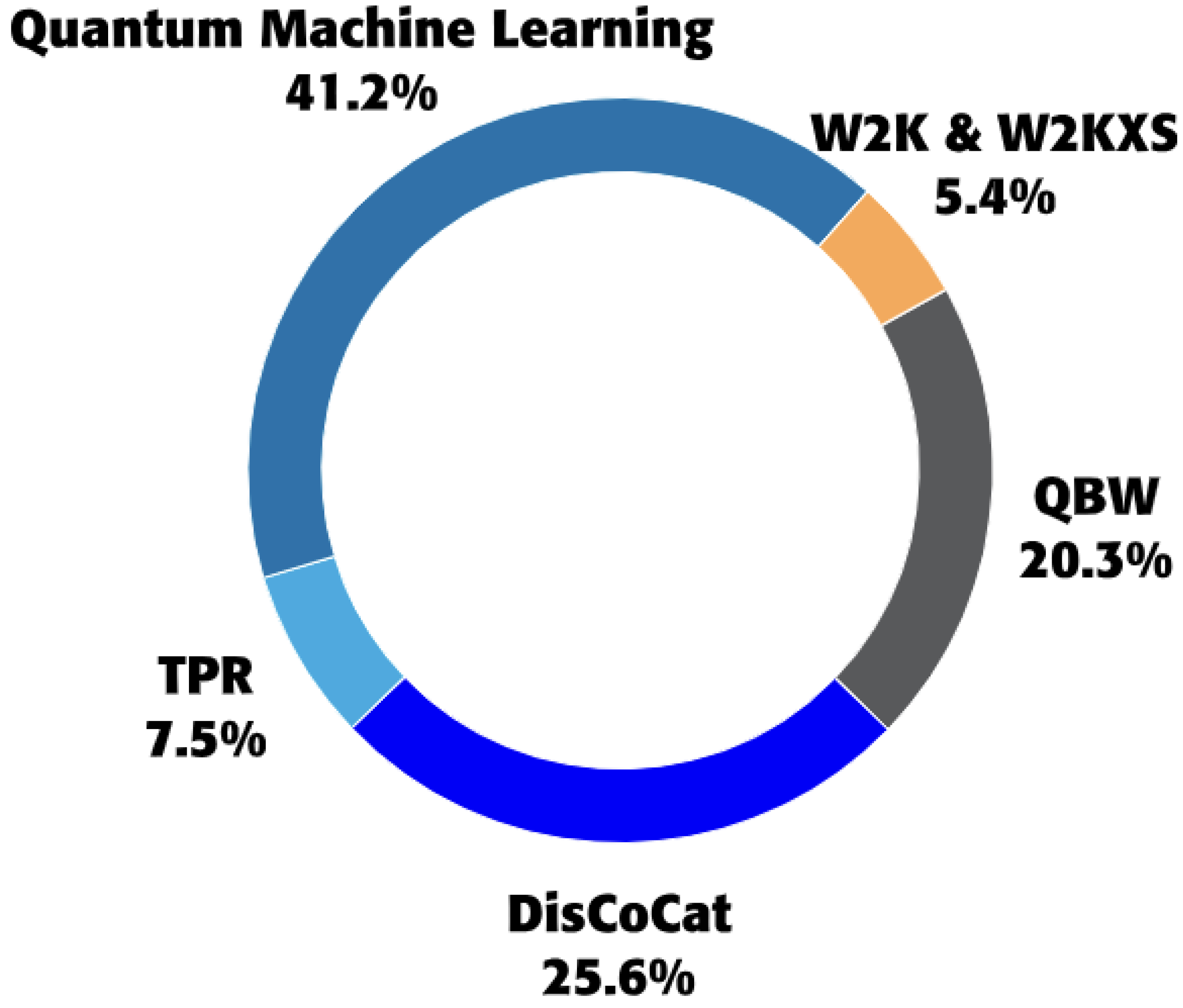

3. Computational Models for QNLP

3.1. Categorical Compositional Models

3.2. Quantum Circuit-Based Models

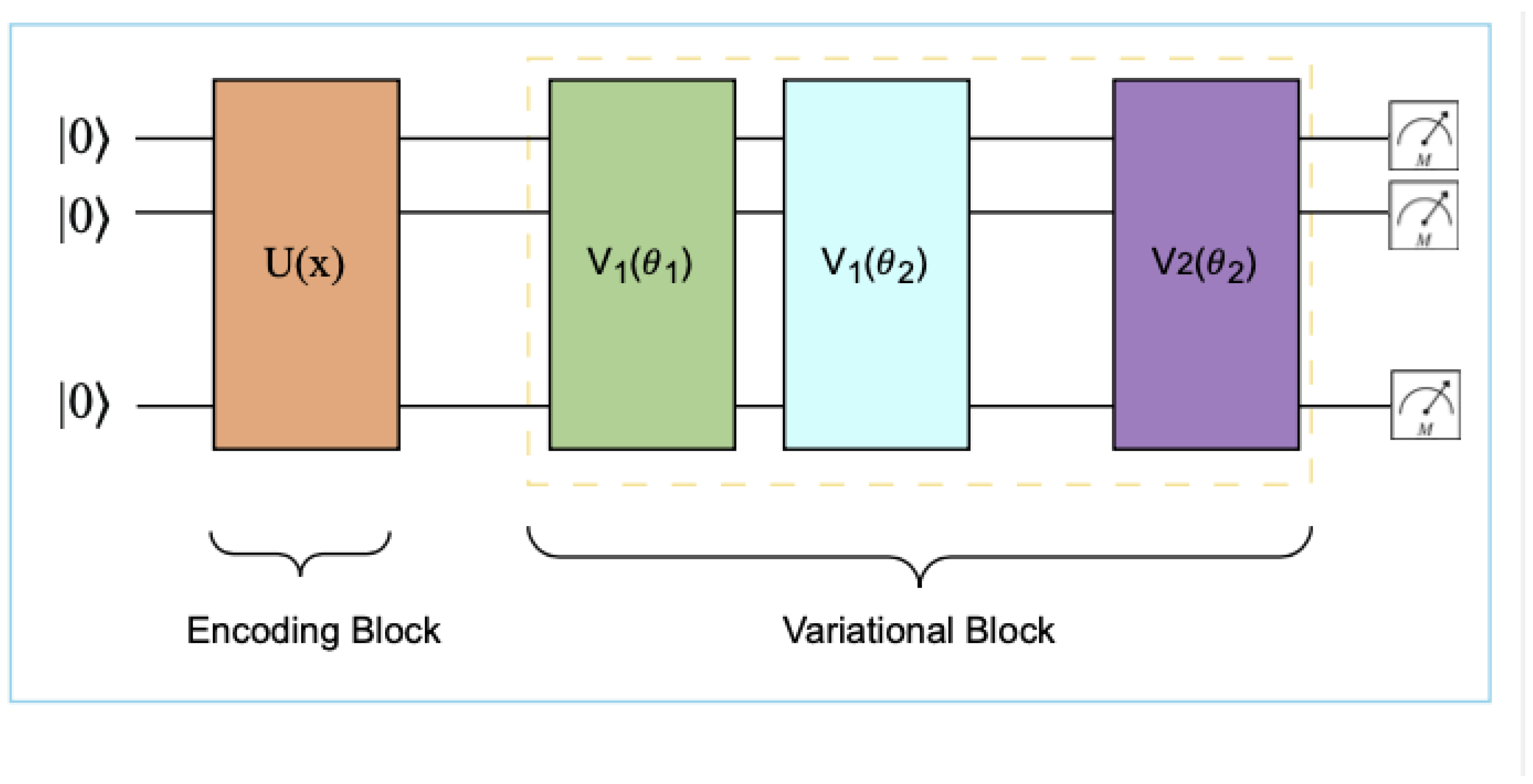

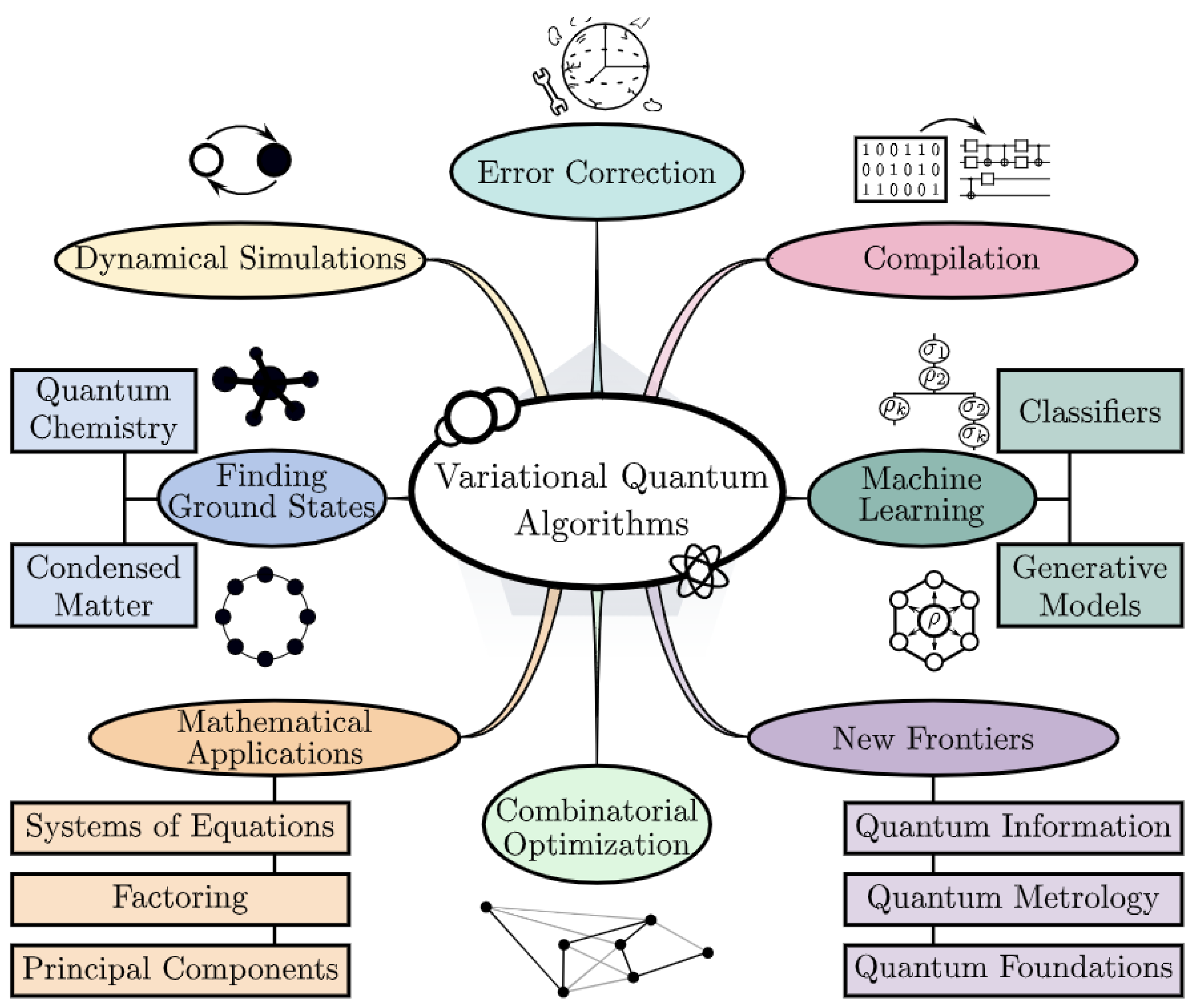

3.3. Variational Quantum Models

3.4. Quantum Kernel Methods

4. Encoding Paradigms

4.1. Basic Encoding

4.2. Amplitude Encoding

4.3. Entanglement-Based Encodings

4.4. Hybrid Embedding Strategies

4.5. Space-Efficient Tensorized Embeddings

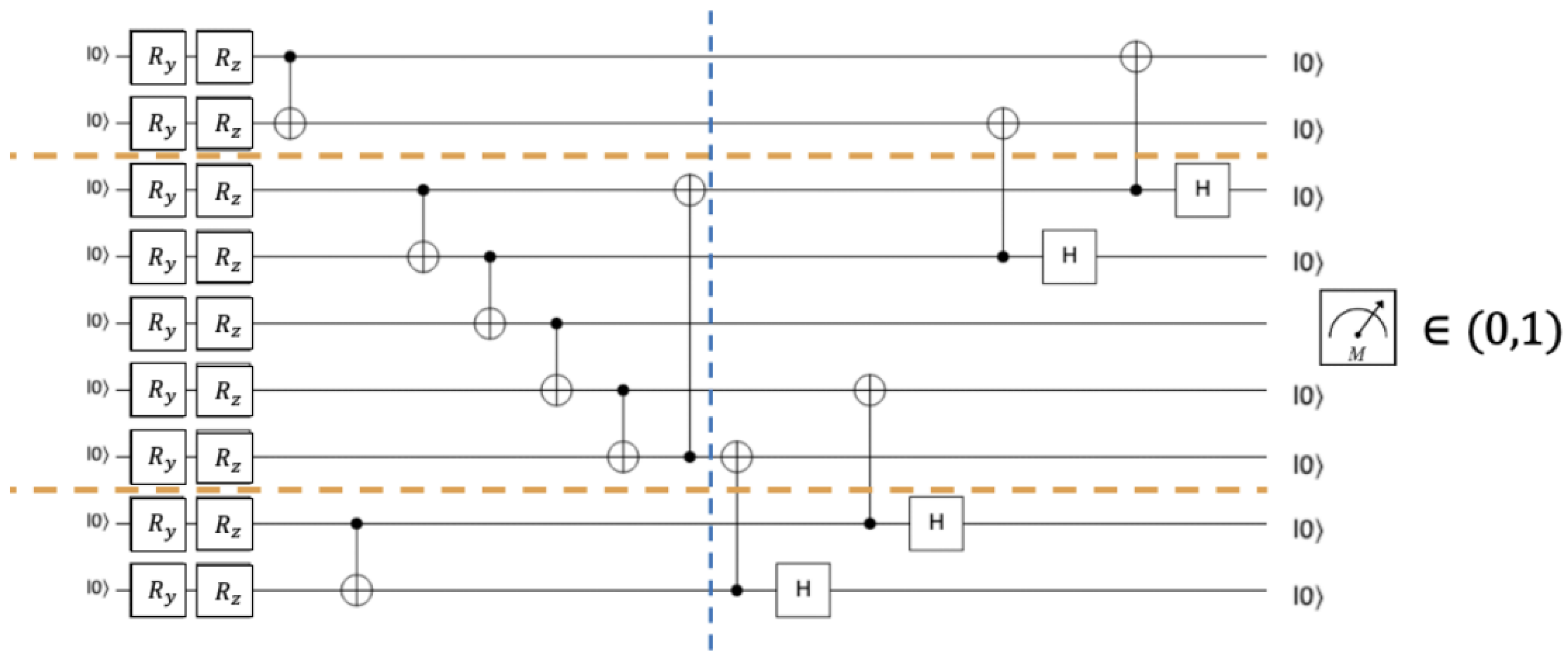

4.6. Trainable Quantum Embedding Circuits

4.7. Resource Cost Modeling

5. Evaluation Frameworks

6. Challenges and Future Directions

7. Conclusion

Appendix A

| Task | Method | Design Highlights | Input Data Type | Label Type | Loss |
|---|---|---|---|---|---|
| Sentence Classification | DisCoCat (Coecke et al., 2010) | Maps grammatical reductions to tensor contractions in Hilbert space (compact-closed categories); sentence meaning via categorical compositionality with quantum-ready tensors. | Tokenized sentences | Sentiment / Topic | Cross-entropy |
| Sentence Classification | VQC-QNLP (Gujju et al., 2025) | Parameterized quantum circuit on encoded tokens; hybrid loop minimizes expectation; entanglement captures long-range dependencies under NISQ. | Token embeddings | Binary / Multi-class | Weighted cross-entropy |
| Semantic Similarity | QBW (Lorenz et al., 2021a) | Quantum Bag-of-Words; embeds words as quantum states; measures similarity via state fidelity/overlaps instead of cosine distance. | Sentence pairs | Similarity / Paraphrase | Fidelity or MSE |
| Semantic Similarity | Quantum Kernel (QK-NLP) (Schuld and Killoran, 2019; Wang et al., 2025) | Quantum feature map induces a kernel; classical SVM/GP on quantum kernel matrix. | Sentences / embeddings | STS / Entailment | Hinge loss / GP NLL |
| Sequence Labeling | DisCoCirc (Chang et al., 2023) | Discourse-aware extension of DisCoCat; circuit evolution updates word states across context; syntax–semantics via variational updates. | Token sequences | POS / NER / chunks | Token-level cross-entropy |
| Sequence Labeling | QCSE (Liu et al., 2025b) | Quantum Context-Sensitive Embeddings: context unitary entangles tokens; contextual vectors in Hilbert space for tagging. | Token sequences | Sequence tags | MSE / cross-entropy |
| Hybrid Embedding Learning | Hybrid-QNN (Chen et al., 2025) | Classical encoder (e.g., BERT) → amplitude/angle map → shallow PQC refinement; few-qubit head for NISQ robustness. | Pretrained text embeddings | Sentiment / Intent | Cross-entropy (hybrid) |
| Low-Resource / Multi-Modal | MultiQ-NLP (Wang et al., 2024) | Entangles text–image qubits; cross-modal attention via controlled rotations; improves transfer in few-shot regimes. | Text–image pairs | Match / Tags | Contrastive (InfoNCE) |
| Sense Modeling / Pretraining | QTP-Net (Zhang et al., 2025) | Encodes word senses as quantum superpositions; learns sense mixture via measurement-driven objectives. | Large text corpora | Sense / Masked tokens | NLL; superposition reconstruction |
| Encoding Learning | Trainable Basic Encoding (Munikote, 2024) | Learnable encoder on basis states prior to PQC; low-depth, NISQ-friendly alternative to fixed angle/amplitude maps. | Token indices | Task-specific | Task loss + encoder reg. |
| Resource-Efficient Embeddings | word2ket / Tensorized (Panahi et al., 2019) | Factorizes embedding matrix into low-order tensor products; quantum-ready prep with shallow circuits; large parameter compression. | Vocabulary embeddings | Task-specific | Task loss; tensor-factor regs |
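
The QBW and QK-NLP rows above both score text by the overlap of encoded states. The following is a minimal classical sketch of that idea in NumPy, assuming real feature vectors are normalized and read as state amplitudes. It is a simulation for illustration, not the cited authors' implementations, and the names `to_state`, `fidelity`, and `kernel_matrix` are hypothetical.

```python
import numpy as np


def to_state(x):
    """Normalize a feature vector so it can be read as state amplitudes (assumed encoding)."""
    x = np.asarray(x, dtype=float)
    norm = np.linalg.norm(x)
    if norm == 0:
        raise ValueError("cannot encode the zero vector")
    return x / norm


def fidelity(x, y):
    """Pure-state fidelity |<psi_x|psi_y>|^2 between two encoded vectors."""
    return float(np.abs(np.vdot(to_state(x), to_state(y))) ** 2)


def kernel_matrix(X):
    """Gram matrix K[i, j] = fidelity(X[i], X[j]) for a list of feature vectors."""
    states = np.stack([to_state(x) for x in X])
    return np.abs(states @ states.conj().T) ** 2


if __name__ == "__main__":
    # Toy sentence embeddings (made-up numbers, for illustration only).
    sentences = [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1], [0.0, 0.1, 0.9]]
    print(kernel_matrix(sentences))
```

For real-valued vectors this fidelity equals the squared cosine similarity, so antiparallel vectors score 1 rather than -1. The resulting Gram matrix can be handed to any classical kernel method that accepts a precomputed kernel.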

| Encoding Paradigm | Core Idea / Map | Qubits $q$ | State-Prep Cost | Strengths | Limitations |
|---|---|---|---|---|---|
| Basic / Learnable Encoding | Token index mapped to a basis state, with a shallow trainable unitary | Set by the index map | Low (shallow unitary) | Very low depth; parameter-efficient; preserves discrete identity; NISQ-friendly | Needs downstream entanglers/PQC for expressivity; tuning still task-dependent |
| Angle / Rotation Encoding | Map features to single-qubit rotations, one per dimension; supports data re-uploading | Linear in $d$ | | Simple, robust, transparent geometry; pairs well with re-uploading in VQCs | Linear qubit growth with $d$; underuses Hilbert space unless combined with entanglement |
| Amplitude Encoding | Normalized feature vector stored in the state amplitudes (inner products preserved) | Logarithmic in $d$ | State loading | Exponential compression of $d$; strong for kernel/similarity tasks; unitary-friendly | Expensive loaders; noise-sensitive; benefits from high-fidelity prep |
| Entanglement-based Composition | Apply entangling gates (CNOT/CZ) to correlate token subsystems; syntax/relations via entanglers | Task-dependent | Entanglers dominate | Directly captures compositional/relational structure; aligns with categorical semantics | Increases depth and error on NISQ; careful compilation needed |
| Hybrid Embedding Strategies | Classical embedding (e.g., BERT/Word2Vec) → quantum feature map → PQC | Few-qubit heads common | Modest; depends on chosen feature map | Best near-term trade-off; leverages pretrained semantics; smaller $q$ / shots | Classical front-end may dominate compute; quantum benefit is task- and map-dependent |
| Space-efficient Tensorized (word2ket) | Factorize embedding matrix into low-order tensor products; shallow quantum prep from factors | By factorization design | Low (from tensor factors) | Large parameter compression reported; principled bridge to tensor networks; shallow circuits | Quality depends on factorization rank/structure; extra design choices required |
| Trainable Quantum Embedding Circuits | Small reusable quantum cell learns token/context encoding in-circuit; reused across positions | Few (cell reused) | Low–moderate (per-cell) | Parameter-efficient; context-aware; fewer qubits/shots than naïve per-token circuits | Requires careful training/stability on NISQ; generalization may be dataset-dependent |
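
The qubit-count contrast between the angle and amplitude rows can be made concrete with a small classical simulation. The sketch below assumes a $d$-dimensional real feature vector. The function names are hypothetical, and the choice of an $R_y$ rotation for angle encoding is an assumption made here for illustration, since the table does not fix the rotation axis.

```python
import numpy as np


def amplitude_encode(x):
    """Zero-pad x to length 2**q, normalize, and return (state, q) with q = ceil(log2(d))."""
    x = np.asarray(x, dtype=float)
    q = max(1, (len(x) - 1).bit_length())  # smallest q with 2**q >= d
    padded = np.zeros(2 ** q)
    padded[: len(x)] = x
    norm = np.linalg.norm(padded)
    if norm == 0:
        raise ValueError("cannot encode the zero vector")
    return padded / norm, q


def angle_encode(x):
    """One qubit per feature; each qubit is RY(x_i)|0> = [cos(x_i/2), sin(x_i/2)] (assumed axis)."""
    x = np.asarray(x, dtype=float)
    state = np.array([1.0])
    for theta in x:
        qubit = np.array([np.cos(theta / 2), np.sin(theta / 2)])
        state = np.kron(state, qubit)  # product state, no entanglement
    return state, len(x)


if __name__ == "__main__":
    features = np.linspace(0.1, 0.8, 8)  # d = 8 toy features
    _, q_amp = amplitude_encode(features)
    _, q_ang = angle_encode(features)
    print(f"d = {len(features)}: amplitude uses {q_amp} qubits, angle uses {q_ang} qubits")
```

The angle-encoded state is a product state, which is why the table notes that it underuses Hilbert space unless entangling gates follow. The amplitude-encoded state needs far fewer qubits, but this simulation hides the state-loading circuit that makes it expensive on hardware.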

References

- Shamminuj Aktar, Andreas Bärtschi, Abdel-Hameed A. Badawy, and Stephan Eidenbenz. 2025. Quantum graph transformer for NLP sentiment classification.

- Junyeob Baek, Hosung Lee, Christopher Hoang, Mengye Ren, and Sungjin Ahn. 2025. Discrete JEPA: Learning discrete token representations without reconstruction.

- Emily M Bender, Timnit Gebru, Angelina McMillan-Major, and Shmargaret Shmitchell. 2021. On the dangers of stochastic parrots: Can language models be too big? In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency.

- Tom B. Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, Sandhini Agarwal, Ariel Herbert-Voss, Gretchen Krueger, Tom Henighan, Rewon Child, Aditya Ramesh, Daniel M. Ziegler, Jeffrey Wu, Clemens Winter, Christopher Hesse, Mark Chen, Eric Sigler, Mateusz Litwin, Scott Gray, Benjamin Chess, Jack Clark, Christopher Berner, Sam McCandlish, Alec Radford, Ilya Sutskever, and Dario Amodei. 2020. Language models are few-shot learners. In Advances in Neural Information Processing Systems, volume 33.

- M. Cerezo, Andrew Arrasmith, Ryan Babbush, Simon C. Benjamin, Suguru Endo, Keisuke Fujii, Jarrod R. McClean, Kosuke Mitarai, Xiao Yuan, Lukasz Cincio, and Patrick J. Coles. 2021a. Variational quantum algorithms. Nature Reviews Physics, 3(9):625–644.

- Marco Cerezo, Andrew Arrasmith, Ryan Babbush, Simon C Benjamin, Suguru Endo, Keisuke Fujii, et al. 2021b. Variational quantum algorithms. Nature Reviews Physics, 3.

- Eric Chang, John Smith, and Ling Zhao. 2023. Variational quantum classifiers for natural-language text: A DisCoCirc approach. In Proceedings of the Quantum NLP Workshop.

- Ying Chen, Paul Griffin, Paolo Recchia, Lei Zhou, and Hongrui Zhang. 2025. Hybrid quantum neural networks with amplitude encoding: Advancing recovery rate predictions.

- Bob Coecke, Mehrnoosh Sadrzadeh, and Stephen Clark. 2010. Mathematical foundations for a compositional distributional model of meaning. Lambek Festschrift.

- Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova. 2019. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies.

- Fabian Döschl and Annabelle Bohrdt. 2025. Importance of correlations for neural quantum states.

- Yan Ge, Wu Wenjie, Chen Yuheng, Pan Kaisen, Lu Xudong, Zhou Zixiang, Wang Yuhan, Wang Ruocheng, and Yan Junchi. 2024. Quantum circuit synthesis and compilation optimization: Overview and prospects.

- Guillermo González-García, Rahul Trivedi, and J. Ignacio Cirac. 2022. Error propagation in NISQ devices for solving classical optimization problems. PRX Quantum, 3(4):040326.

- Yaswitha Gujju, Romain Harang, and Tetsuo Shibuya. 2025. LLM-guided ansätze design for quantum circuit Born machines in financial generative modeling.

- Aram W Harrow, Avinatan Hassidim, and Seth Lloyd. 2009. Quantum algorithm for linear systems of equations. Physical Review Letters, 103(15):150502.

- Steffen Herbold. 2024. Semantic similarity prediction is better than other semantic similarity measures.

- Zheyong Hu and Sabre Kais. Characterizing quantum circuits with qubit functional configurations. Scientific Reports.

- Hsin-Yuan Huang, Richard Kueng, and John Preskill. 2021. Power of data in quantum machine learning. Nature Communications, 12(1):2631.

- Jonas Jäger, Thierry Nicolas Kaldenbach, Max Haas, and Erik Schultheis. 2025. Fast gradient-free optimization of excitations in variational quantum eigensolvers.

- Ivan Kankeu, Stefan Gerd Fritsch, Gunnar Schönhoff, Elie Mounzer, Paul Lukowicz, and Maximilian Kiefer-Emmanouilidis. 2025. Quantum-inspired embeddings projection and similarity metrics for representation learning.

- D. Kartsaklis, R. Lorenz, K. Meichanetzidis, and B. Coecke. 2021. lambeq: An efficient high-level Python library for quantum NLP. In Proceedings of the 16th Conference on Quantum Physics and Logic (QPL).

- Kimleang Kea, Won-Du Chang, Hee Chul Park, and Youngsun Han. 2024. Enhancing a convolutional autoencoder with a quantum approximate optimization algorithm for image noise reduction.

- Michael Lan, Philip Torr, and Fazl Barez. 2024. Towards interpretable sequence continuation: Analyzing shared circuits in large language models.

- Quentin Lhoest, Albert Villanova del Moral, Yacine Jernite, Abhishek Thakur, Patrick von Platen, Suraj Patil, Julien Chaumond, Mariama Drame, Julien Plu, Lewis Tunstall, Joe Davison, Mario Šaško, Gunjan Chhablani, Bhavitvya Malik, Simon Brandeis, Teven Le Scao, Victor Sanh, Canwen Xu, Nicolas Patry, Angelina McMillan-Major, Philipp Schmid, Sylvain Gugger, Clément Delangue, Théo Matussière, Lysandre Debut, Stas Bekman, Pierric Cistac, Thibault Goehringer, Victor Mustar, François Lagunas, Alexander M. Rush, and Thomas Wolf. 2021. Datasets: A community library for natural language processing.

- Ang Li, Samuel Stein, Sriram Krishnamoorthy, and James Ang. 2022. QASMBench: A low-level quantum benchmark suite for NISQ evaluation and simulation. ACM Transactions on Quantum Computing.

- Chen-Yu Liu, Samuel Yen-Chi Chen, Kuan-Cheng Chen, Wei-Jia Huang, and Yen-Jui Chang. 2024. Programming variational quantum circuits with quantum-train agent.

- Chen-Yu Liu, Chao-Han Huck Yang, Hsi-Sheng Goan, and Min-Hsiu Hsieh. 2025a. A quantum circuit-based compression perspective for parameter-efficient learning. In The Thirteenth International Conference on Learning Representations.

- Xiaoming Liu, Yifan Zhao, and Ming Chen. 2025b. QCSE: Pretrained quantum context-sensitive word embeddings. arXiv preprint arXiv:2509.05729.

- R. Lorenz, D. Kartsaklis, K. Meichanetzidis, and B. Coecke. 2021a. QNLP experiments with lambeq. In Proceedings of Quantum Physics and Logic (QPL).

- Robin Lorenz, Daniel Pearson, et al. 2021b. QNLP: Quantum natural language processing. arXiv preprint arXiv:2102.12846.

- Ning Ma and Heng Li. 2024. Understanding and estimating the execution time of quantum programs.

- Jarrod R McClean, Sergio Boixo, Vadim N Smelyanskiy, Ryan Babbush, and Hartmut Neven. 2018. Barren plateaus in quantum neural network training landscapes. Nature Communications, 9(1):4812.

- Konstantinos Meichanetzidis, Abdelghani Toumi, Giacomo De Felice, Bob Coecke, et al. 2020. Quantum natural language processing on near-term quantum computers. arXiv preprint arXiv:2005.04147.

- Valter Moretti and Marco Oppio. 2017. Quantum theory in real Hilbert space: How the complex Hilbert space structure emerges from Poincaré symmetry. Reviews in Mathematical Physics, 29(06):1750021.

- Nidhi Munikote. 2024. Comparing quantum encoding techniques.

- Van Hoa Nguyen, Yvon Besanger, Quoc Tuan Tran, Tung Lam Nguyen, Cederic Boudinet, Ron Brandl, Frank Marten, Achilleas Markou, Panos Kotsampopoulos, Arjen A. van der Meer, Effren Guillo-Sansano, Georg Lauss, Thomas I. Strasser, and Kai Heussen. 2017. Real-time simulation and hardware-in-the-loop approaches for integrating renewable energy sources into smart grids: Challenges & actions.

- Vojtěch Novák, Ivan Zelinka, and Václav Snášel. 2025. Optimization strategies for variational quantum algorithms in noisy landscapes.

- Aliakbar Panahi, Seyran Saeedi, and Tom Arodz. 2019. word2ket: Space-efficient word embeddings inspired by quantum entanglement. In International Conference on Learning Representations 2020.

- John Preskill. 2018. Quantum computing in the NISQ era and beyond. Quantum, 2:79.

- Alec Radford, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei, and Ilya Sutskever. 2019. Language models are unsupervised multitask learners. Technical report, OpenAI.

- Colin Raffel, Noam Shazeer, Adam Roberts, Katherine Lee, Sharan Narang, Michael Matena, et al. 2020. Exploring the limits of transfer learning with a unified text-to-text transformer. Journal of Machine Learning Research, 21.

- Maria Schuld and Nathan Killoran. 2019. Quantum machine learning in feature Hilbert spaces. Physical Review Letters, 122(4):040504.

- Maria Schuld, Ilya Sinayskiy, and Francesco Petruccione. 2015. An introduction to quantum machine learning. Contemporary Physics, 56(2):172–185.

- Maria Schuld, Ryan Sweke, and Johannes Jakob Meyer. 2021. Effect of data encoding on the expressive power of variational quantum-machine-learning models. Physical Review A, 103(3).

- N. A. Susulovska. 2024. Geometric measure of entanglement of quantum graph states prepared with controlled phase shift operators.

- S. M. Yousuf Iqbal Tomal, Abdullah Al Shafin, Debojit Bhattacharjee, MD. Khairul Amin, and Rafiad Sadat Shahir. 2025. Quantum-enhanced attention mechanism in NLP: A hybrid classical-quantum approach.

- Charles M. Varmantchaonala, Jean Louis K. E. Fendji, Julius Schöning, and Marcellin Atemkeng. 2024. Quantum natural language processing: A comprehensive survey. IEEE Access, 12:99578–99598.

- Charles M. Varmantchaonala, Niclas Götting, Nils-Erik Schütte, Jean Louis E. K. Fendji, and Christopher Gies. 2025. QCSE: A pretrained quantum context-sensitive word embedding for natural language processing.

- Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. In Advances in Neural Information Processing Systems (NeurIPS).

- Davide Venturelli, Minh Do, Bryan O’Gorman, Jeremy Frank, Eleanor Rieffel, Kyle E. C. Booth, Thanh Nguyen, Parvathi Narayan, and Sasha Nanda. 2019. Quantum circuit compilation: An emerging application for automated reasoning. In Scheduling and Planning Applications Workshop.

- Leyang Wang, Yilun Gong, and Zongrui Pei. 2025. Quantum and hybrid machine-learning models for materials-science tasks.

- Yichen Wang, Zhe Sun, and Xin Hu. 2024. MultiQ-NLP: Multimodal structure-aware quantum natural language processing. arXiv preprint arXiv:2411.04242.

- Nathan Wiebe, Bob Coecke, et al. 2024. Near-term advances in quantum natural language processing. Annals of Mathematics and Artificial Intelligence, 92(3-4):501–520.

- Yanying Wu and Quanlong Wang. 2019. A categorical compositional distributional modelling for the language of life.

- Jian Xu, Feng Li, and Hao Chen. 2025. Quantum graph transformers for sentiment classification. arXiv preprint arXiv:2506.07937.

- Hao Zhang, Jiaqi Wang, and Qiang Li. 2025. QTP-Net: Quantum text pre-training network for NLP tasks. arXiv preprint arXiv:2506.00321.

- Li Zhang, Wei Chen, et al. 2024. Quantum algorithms for compositional text processing. arXiv preprint arXiv:2408.06061.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).