Submitted: 19 January 2026

Posted: 21 January 2026


Abstract

Keywords:

1. Introduction

- Proposal of an architectural paradigm that shifts TCP socket establishment to the service module side and eliminates connect() from NGINX

- Conceptual definition and structural guarantee of deterministic QPS based on the number of sessions registered by service modules

- Design-level elimination of indeterminacy factors inherent in conventional methods (connect() timing, TIME_WAIT accumulation, kernel FD distribution)

- Scale-up optimization guidelines that can be combined with scale-out in cloud environments

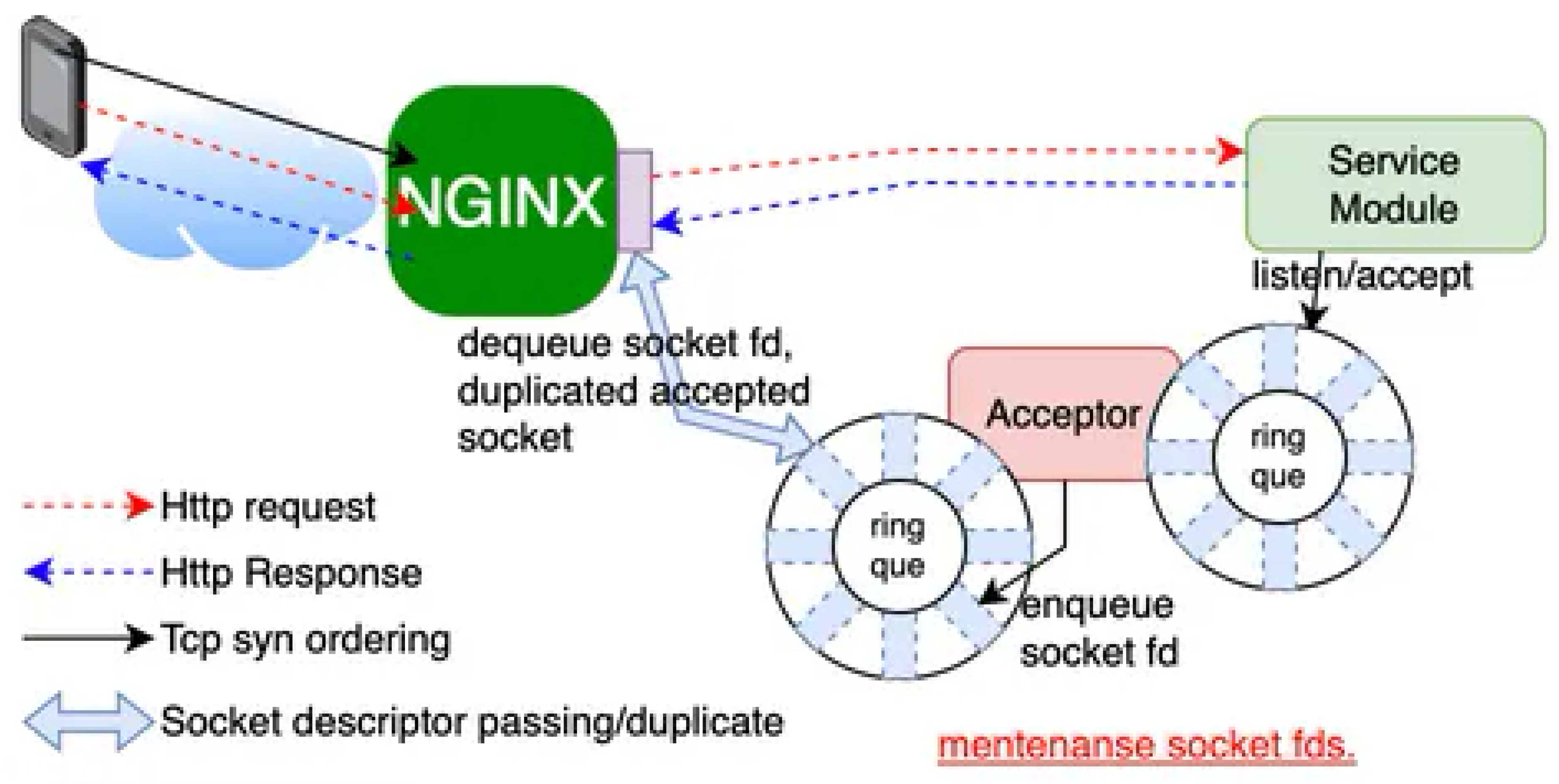

2. System Architecture

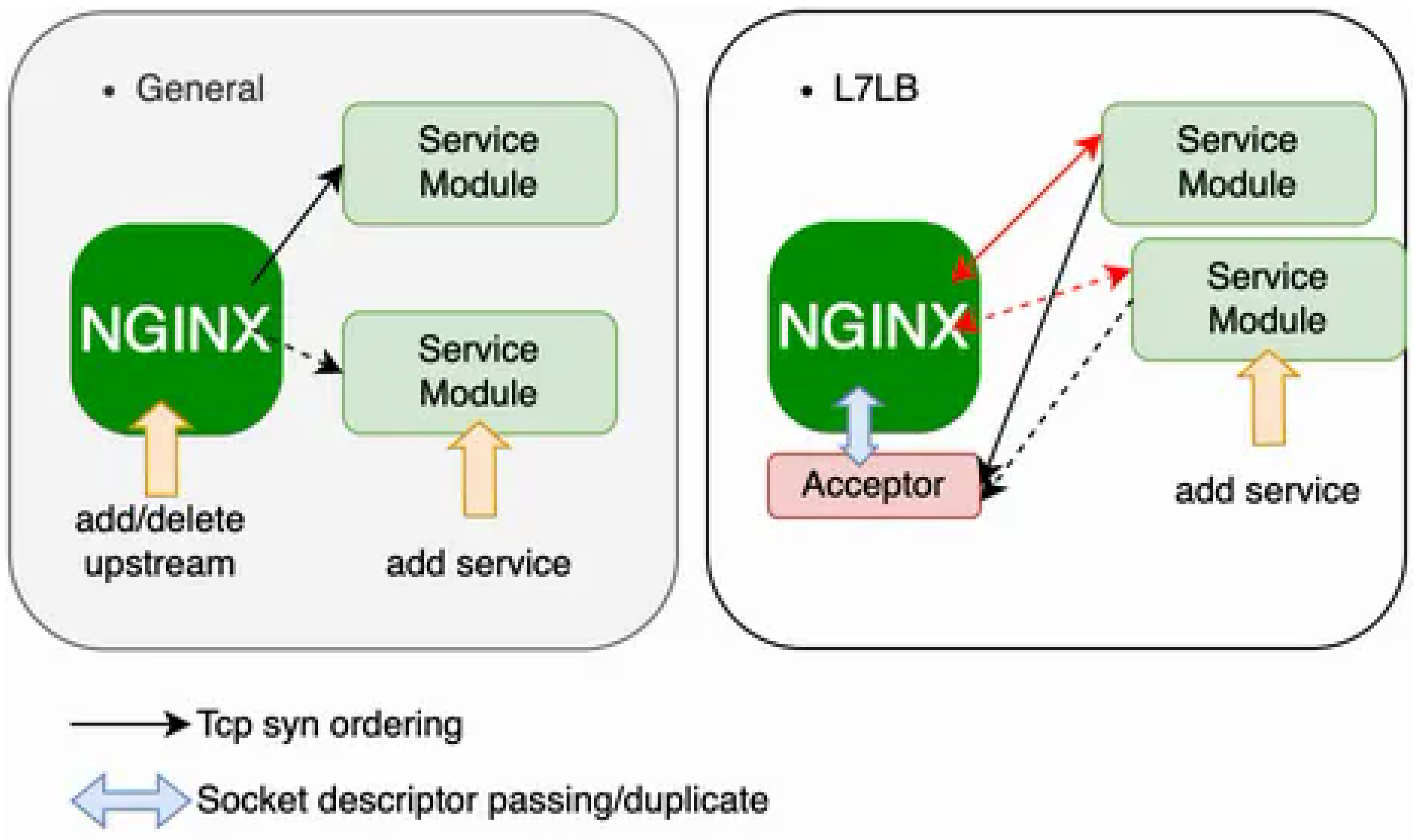

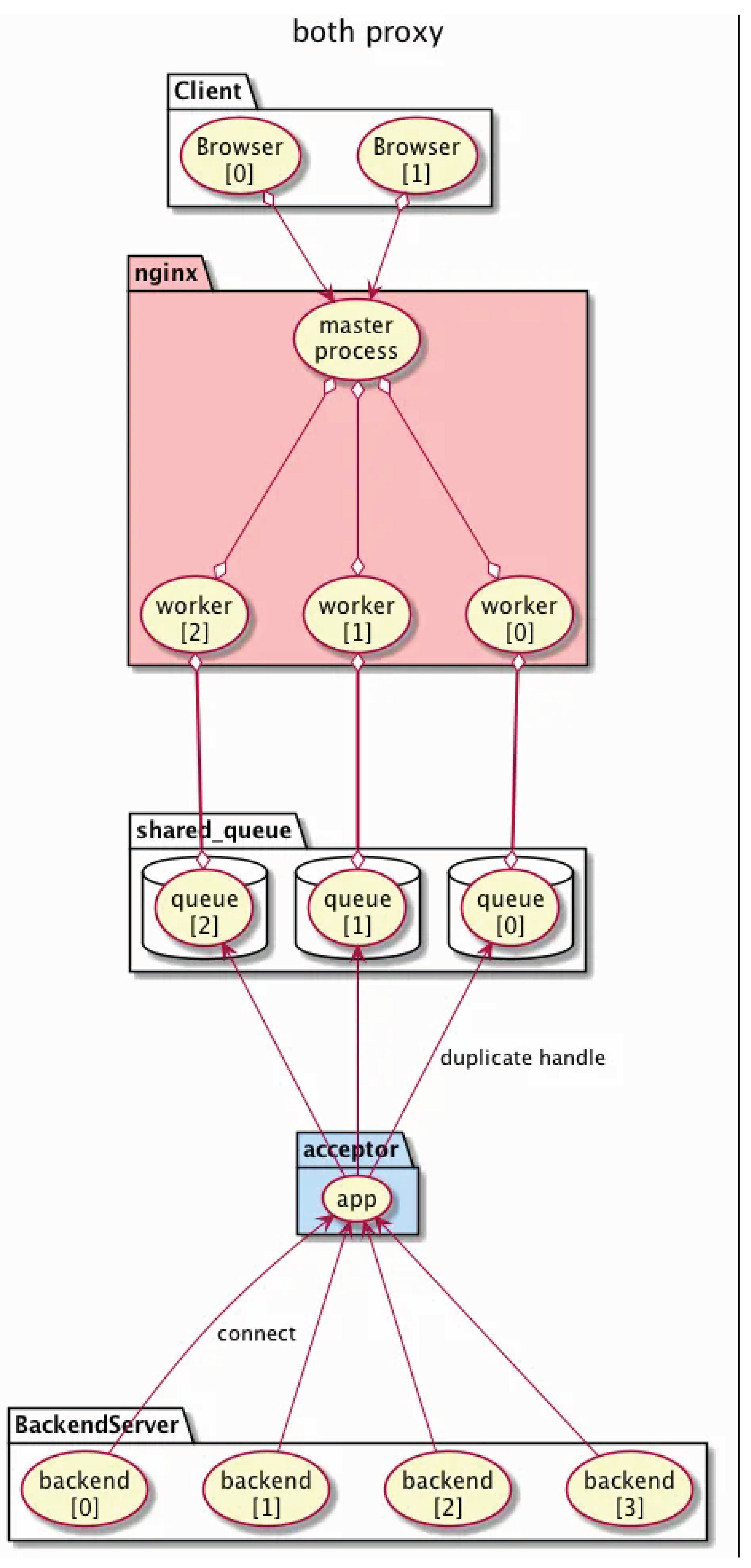

2.1. Overview

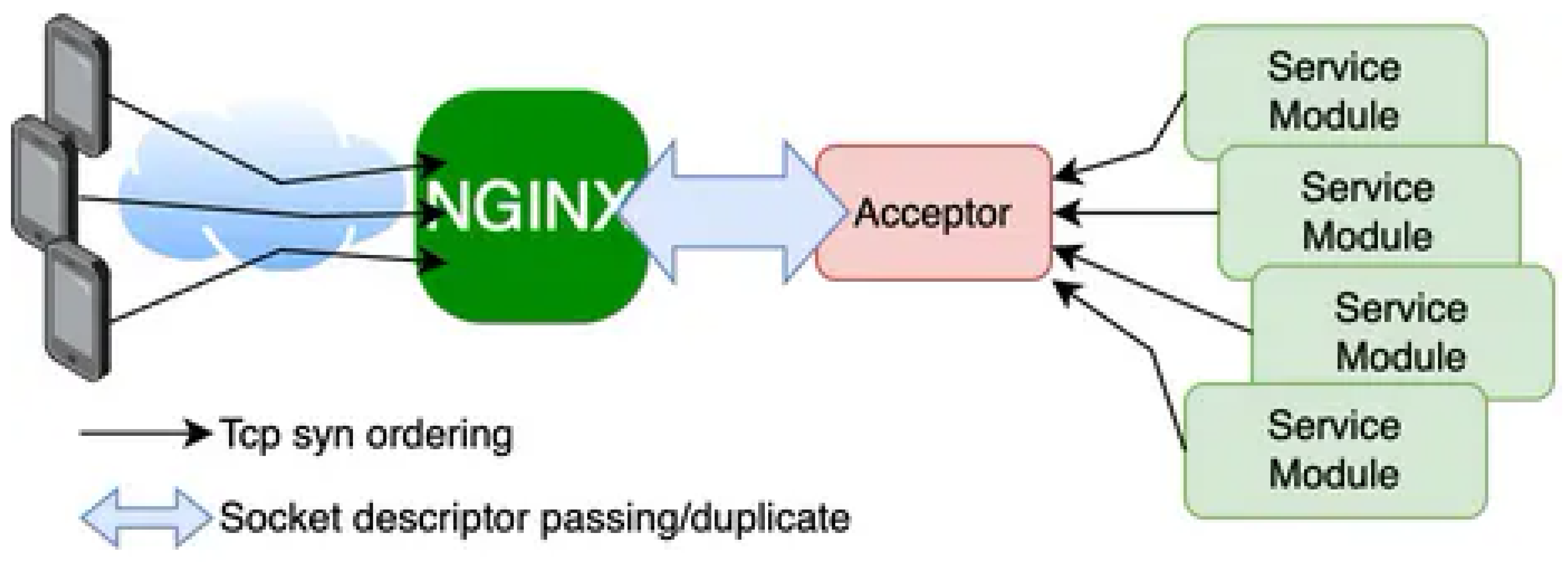

- A patched NGINX module capable of receiving duplicated socket descriptors

- An Acceptor module that transfers active connections via UNIX domain sockets

- Service modules that handle requests as independent processes
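The hand-off from the Acceptor to NGINX rests on descriptor passing over a UNIX domain socket (`SCM_RIGHTS`), in which the kernel installs a duplicate of the sender's descriptor in the receiving process. The following is a minimal sketch of that mechanism only, written with Python's `socket.send_fds()`/`socket.recv_fds()` (Python 3.9+, Unix only) as a stand-in for the C `sendmsg()`/`recvmsg()` path a patched NGINX would use; the helper names `hand_off` and `take_over`, and the process roles in the demo, are hypothetical and do not come from the repository.

```python
import socket


def hand_off(channel: socket.socket, conn: socket.socket) -> None:
    """Acceptor side: pass an established TCP socket over a UNIX socket.

    The kernel duplicates the descriptor into the receiving process
    (SCM_RIGHTS), so the sender can close its own copy afterwards without
    tearing down the TCP connection.
    """
    socket.send_fds(channel, [b"fd"], [conn.fileno()])


def take_over(channel: socket.socket) -> socket.socket:
    """Proxy side: receive exactly one descriptor and wrap it as a socket."""
    msg, fds, _flags, _addr = socket.recv_fds(channel, 16, 1)
    if msg != b"fd" or len(fds) != 1:
        raise RuntimeError("expected one descriptor with an 'fd' marker")
    # Family and type are auto-detected from the received descriptor.
    return socket.socket(fileno=fds[0])


if __name__ == "__main__":
    # Control channel standing in for the Acceptor <-> NGINX UNIX socket.
    acceptor_end, proxy_end = socket.socketpair(socket.AF_UNIX, socket.SOCK_STREAM)

    # A service module (peer) connects to the Acceptor's listener, so the
    # TCP session is established without any connect() on the proxy side.
    listener = socket.create_server(("127.0.0.1", 0))
    peer = socket.create_connection(listener.getsockname())
    conn, _ = listener.accept()

    hand_off(acceptor_end, conn)
    conn.close()                   # the duplicated descriptor keeps it alive
    adopted = take_over(proxy_end)
    adopted.sendall(b"hello")
    print(peer.recv(5))
```

Only the descriptor crosses the UNIX socket; the TCP connection itself is never re-established, which is the property the architecture relies on to remove connect() from NGINX.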

2.2. Advantages over Conventional L7LB

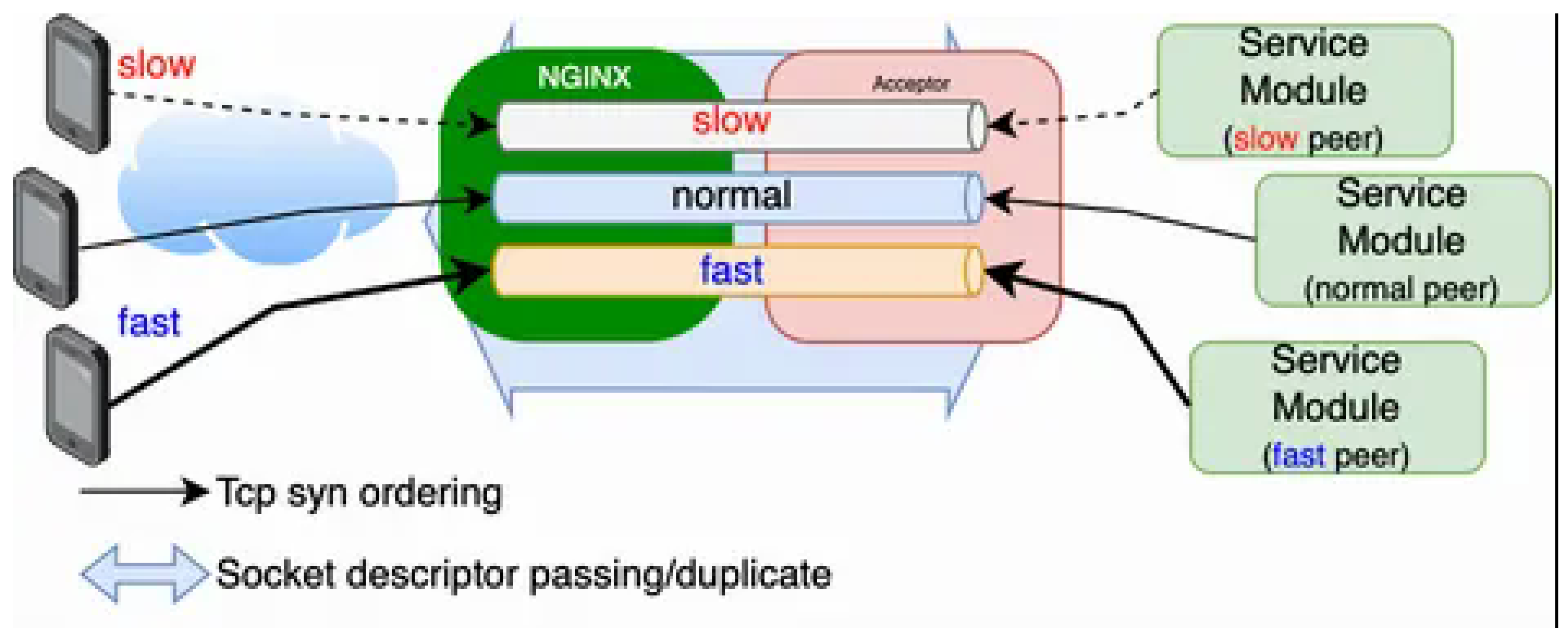

- Mitigation of port exhaustion: session processing is logically separated (sliced) according to terminal characteristics, which keeps slow terminals from occupying local ports

- Dynamic, configurable L7 load balancing without restarts

- No health-check overhead

- Graceful restart support via socket FD passing

- Fewer application service processes required
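The claims that load balancing can be reconfigured without restarts and without health checks follow from the service modules owning their sessions: a module's capacity exists only as long as its sessions are open. A minimal sketch of that bookkeeping, assuming the Acceptor is notified whenever a session opens or closes; the `SessionRegistry` class and its methods are hypothetical and not taken from the repository.

```python
class SessionRegistry:
    """Hypothetical Acceptor-side record of sessions offered by service modules.

    A module joins the pool when its first session arrives and leaves it when
    its last session closes (for example because the process exited), so the
    pool changes at run time without restarts and without probe traffic.
    """

    def __init__(self) -> None:
        self._sessions: dict[str, set[int]] = {}

    def opened(self, module: str, session_id: int) -> None:
        self._sessions.setdefault(module, set()).add(session_id)

    def closed(self, module: str, session_id: int) -> None:
        ids = self._sessions.get(module)
        if ids is None:
            return  # unknown module: nothing to remove
        ids.discard(session_id)
        if not ids:
            del self._sessions[module]  # no live sessions: module is gone

    def capacity(self) -> dict[str, int]:
        """Number of registered sessions per live module."""
        return {module: len(ids) for module, ids in self._sessions.items()}


if __name__ == "__main__":
    reg = SessionRegistry()
    for sid in (1, 2):
        reg.opened("svc-a", sid)
        reg.opened("svc-b", sid)
    # svc-b crashes: the kernel closes its sockets and both sessions report EOF.
    reg.closed("svc-b", 1)
    reg.closed("svc-b", 2)
    print(reg.capacity())
```

In this model, failure detection is a side effect of socket closure rather than a periodic probe, which is one way to read the "no health-check overhead" advantage.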

3. Implementation

3.1. NGINX Modification

3.2. Acceptor Module

3.3. Example Service Module

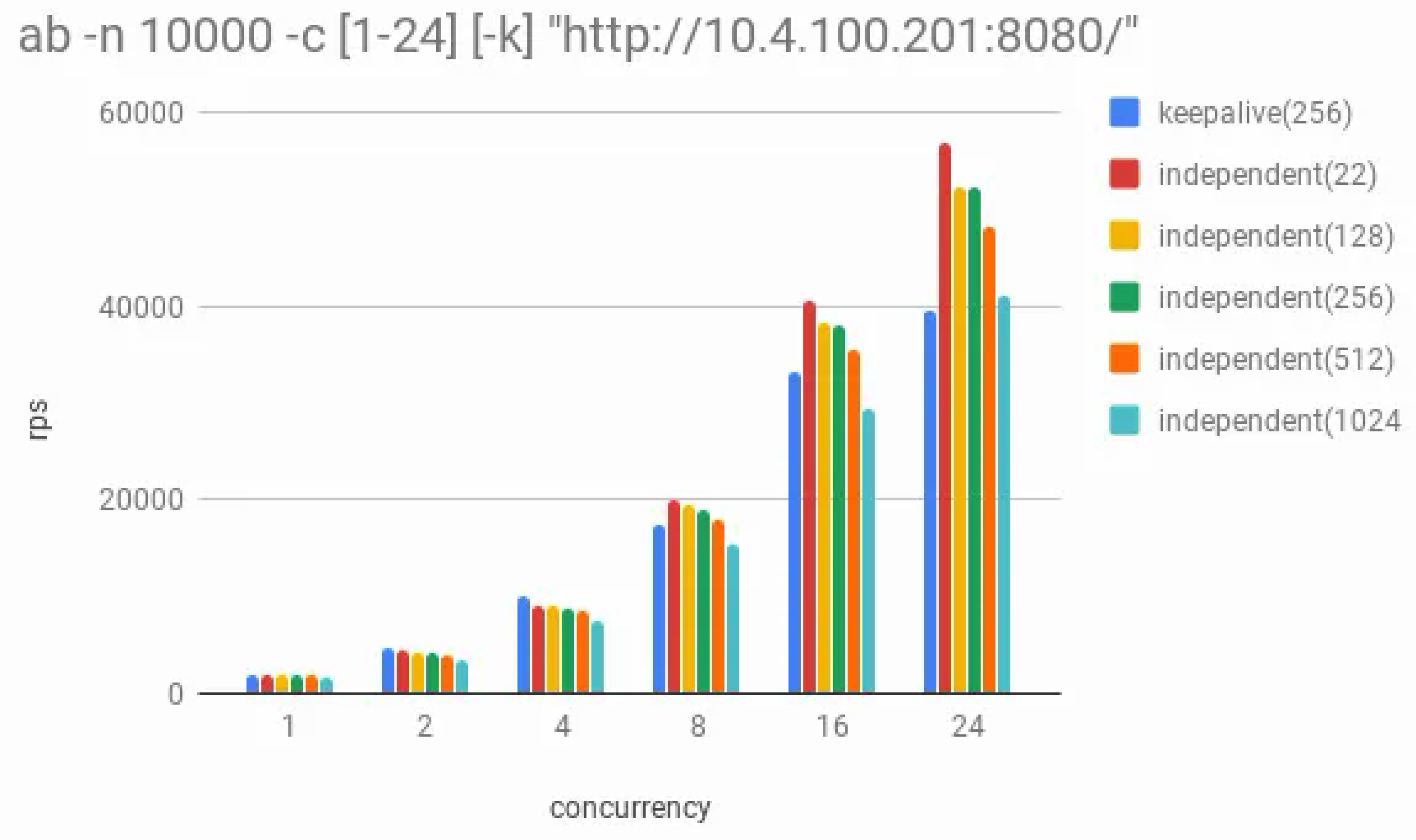

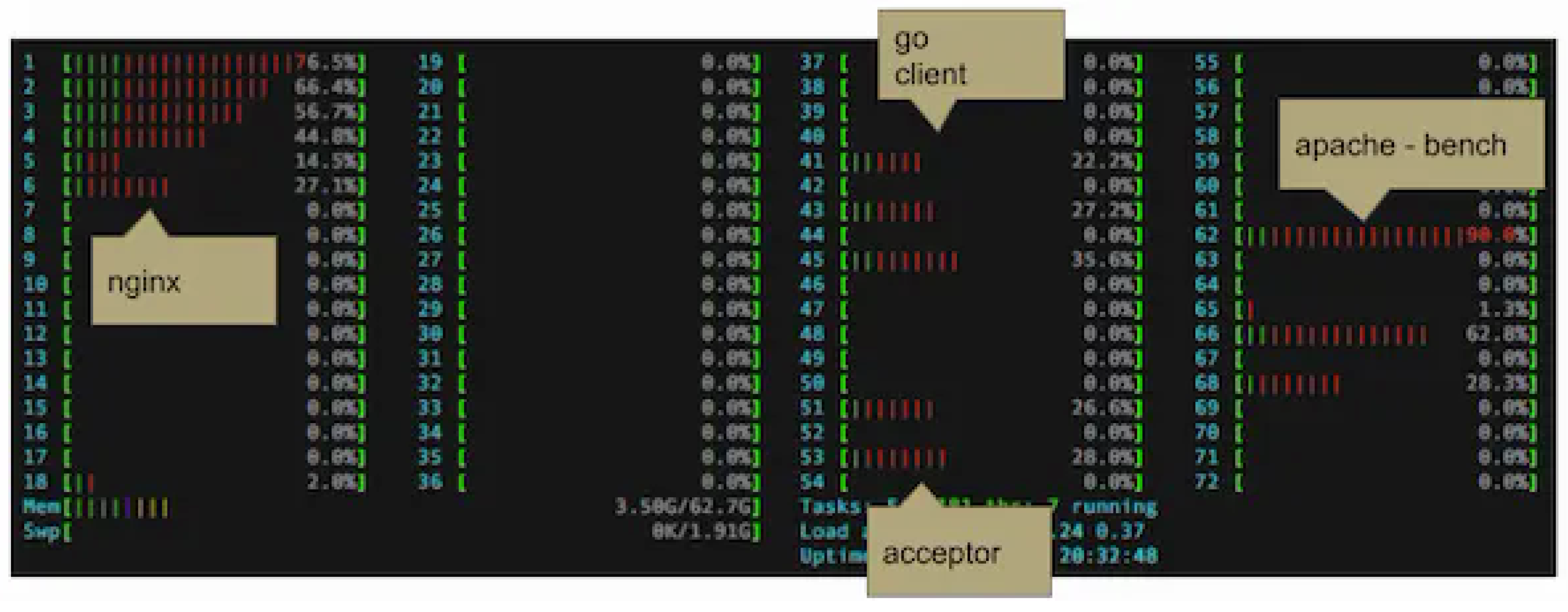

4. Performance Evaluation

| Item | Specification |
|---|---|
| Machine | MacBook Pro (Mac15,8) |
| Chip | Apple M3 Max |
| Core Count | 16 (P: 12, E: 4) |
| Memory | 64 GB |
| NGINX | 1.16.0 |
| worker_processes | 1 |
| worker_connections | 1024 |
| Benchmark Tool | ApacheBench (ab) |
| CS Measurement Command | `top -stats csw -pid <PID>` |
| CS Measurement Target | NGINX worker process (fixed PID) |
| CS Type | Total (voluntary + involuntary) |
| Sample Interval | 1 second |
| NGINX Build Options | `./configure --with-debug --prefix=../nginx --with-cc-opt="-I/opt/homebrew/opt/pcre/include" --with-ld-opt="-L/opt/homebrew/opt/pcre/lib"` |
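Because context switches are sampled once per second while ab reports throughput in requests per second, comparing the two methods requires normalizing CS by the request rate. A minimal sketch of that normalization, assuming the sampled value is a cumulative per-process counter (if the tool already reports per-interval values, the differencing step should be dropped); the function names and the numbers in the usage line are illustrative, not measured data.

```python
def interval_deltas(cumulative: list[int]) -> list[int]:
    """Convert successive cumulative counter samples into per-interval counts."""
    return [later - earlier for earlier, later in zip(cumulative, cumulative[1:])]


def cs_per_request(cumulative_csw: list[int], rps: float, interval_s: float = 1.0) -> float:
    """Mean context switches per request over the sampled window.

    cumulative_csw: counter values taken every `interval_s` seconds (assumed
    cumulative); rps: requests per second reported by the benchmark tool.
    """
    deltas = interval_deltas(cumulative_csw)
    if not deltas or rps <= 0:
        raise ValueError("need at least two samples and a positive RPS")
    cs_per_second = sum(deltas) / (len(deltas) * interval_s)
    return cs_per_second / rps


if __name__ == "__main__":
    # Illustrative samples only: 2,000 CS/s on average at 10,000 requests/s.
    print(cs_per_request([1000, 3000, 5100, 7000], rps=10_000.0))
```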

4.1. Comparison with Conventional Method

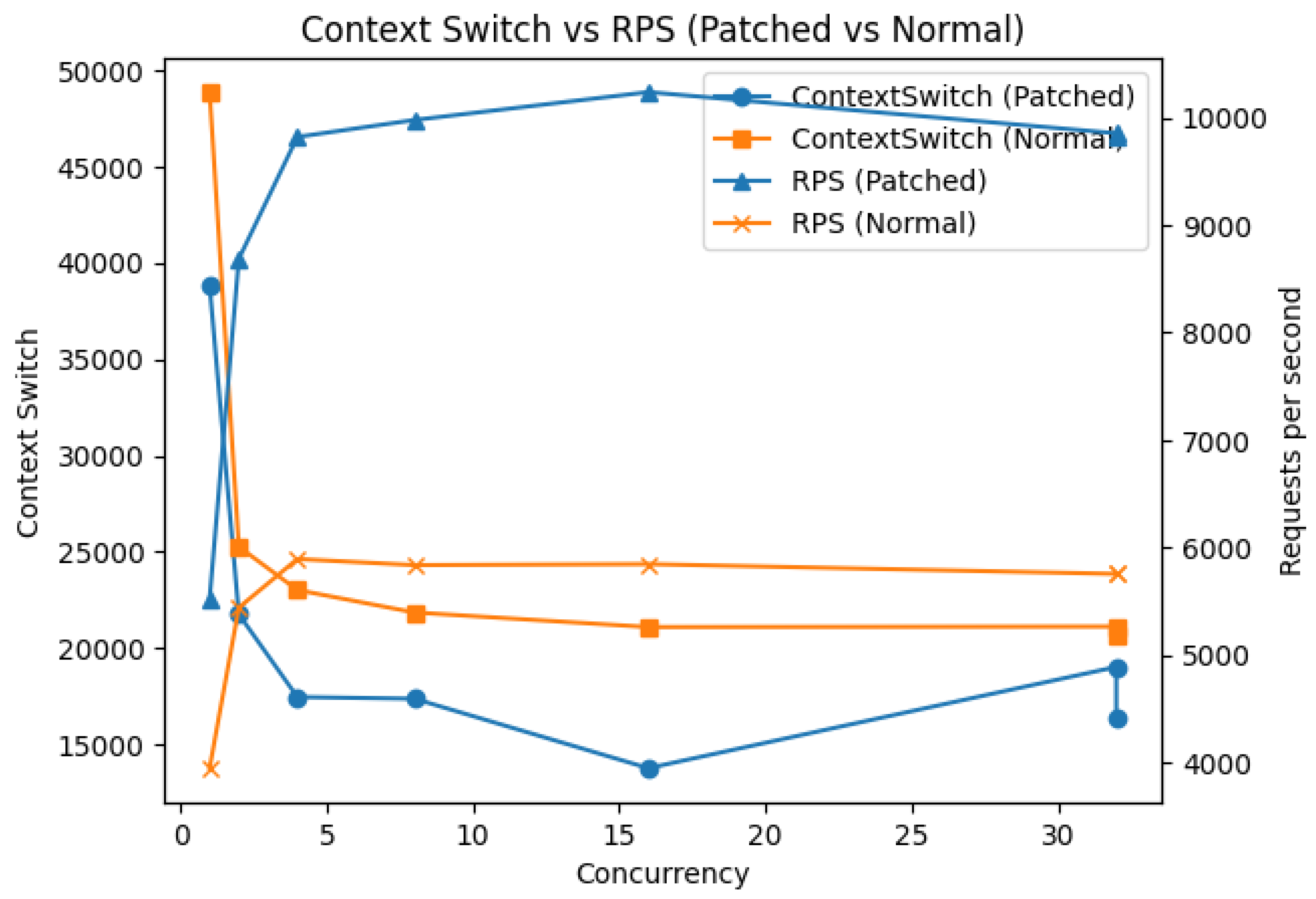

4.1.1. Context Switches and RPS

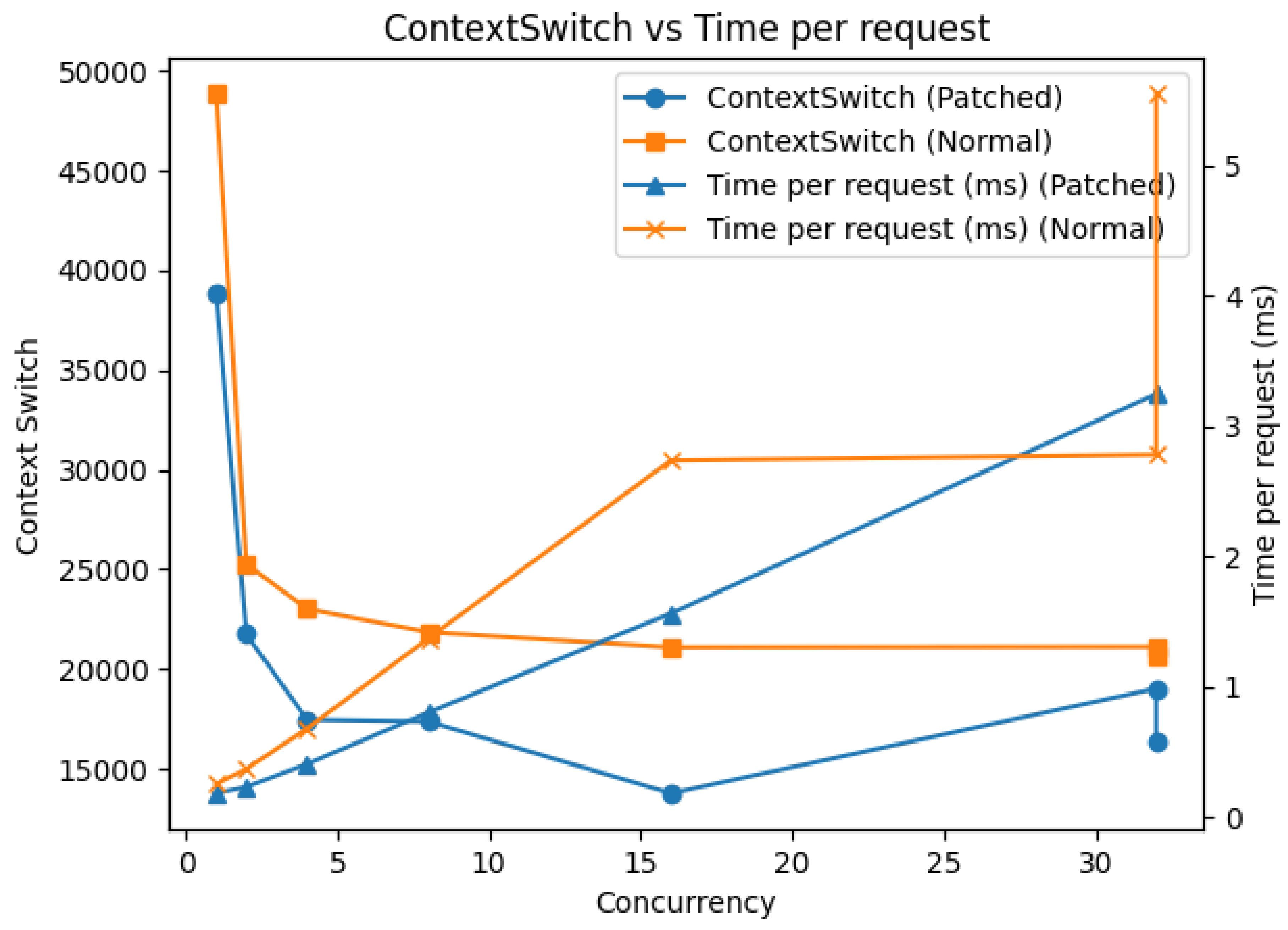

4.1.2. Context Switches and Request Time

4.2. Structural Advantages

- During traffic spikes: In the conventional method, NGINX issues connect() to the backend for each request, so every request incurs a TCP handshake; during spikes, connect() waits accumulate and throughput degrades non-linearly. In this proposal, NGINX receives handshake-completed sockets via UNIX domain sockets, so connect() is unnecessary, and the Acceptor queue acts as a buffer that structurally prevents requests beyond the service module’s processing capacity from reaching it.

- When slow clients are present: In the conventional method, slow clients occupy NGINX’s local ports for extended periods, and the resulting port exhaustion can reduce connection acceptance capacity for all clients. In this proposal, session ownership lies with the service module, so NGINX’s port consumption is bounded by the service modules’ concurrent processing capacity.

- TIME_WAIT accumulation during long-term operation: In the conventional method, NGINX repeatedly calls connect()/close(), so TIME_WAIT sockets accumulate and can eventually exhaust ephemeral ports. Because this proposal eliminates connect() calls from the NGINX process, the problem is avoided by design.
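The buffering behavior described in the first bullet can be illustrated with a deliberately simple tick-based model in which each registered session completes one request per tick and then takes the next request from the Acceptor queue. The model assumes a fixed queue limit beyond which new requests are refused (the overflow policy is an assumption, not something stated here), and `simulate_acceptor` is a hypothetical name. It shows only that the work handed to service modules per tick never exceeds the number of sessions, however large the spike.

```python
from collections import deque


def simulate_acceptor(arrivals: list[int], sessions: int, queue_limit: int):
    """Tick-based sketch of the Acceptor queue acting as a buffer.

    arrivals[t] requests arrive in tick t; at most `queue_limit` may wait at
    once and the rest are refused (an assumption). Each tick, up to `sessions`
    queued requests are dispatched, one per service-module session.
    Returns (dispatched_per_tick, refused_total, max_depth_after_dispatch).
    """
    queue: deque[int] = deque()
    dispatched, refused, max_depth = [], 0, 0
    for tick, count in enumerate(arrivals):
        for _ in range(count):
            if len(queue) < queue_limit:
                queue.append(tick)  # remember the arrival tick
            else:
                refused += 1
        batch = min(sessions, len(queue))
        for _ in range(batch):
            queue.popleft()
        dispatched.append(batch)
        max_depth = max(max_depth, len(queue))
    return dispatched, refused, max_depth


if __name__ == "__main__":
    # A burst of 20 requests in tick 1 against 8 sessions and a 10-slot queue.
    print(simulate_acceptor([4, 20, 4, 0, 0], sessions=8, queue_limit=10))
    # ([4, 8, 6, 0, 0], 10, 2)
```

Under the conventional path described above, the same burst would translate into concurrent connect() attempts whose waits accumulate; in the model, the excess is instead held or refused at the Acceptor.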

5. Discussion

5.1. io_uring

5.2. Deterministic QPS and Spike Resistance

5.3. Definition and Analysis of Deterministic QPS

5.3.1. Mechanism

- The application never receives more simultaneous requests than it can process.

- The proxy never builds up an excessive backlog in its accept/listen queues, even under burst traffic.

- connect() from NGINX becomes unnecessary, eliminating the round-trip wait time of TCP handshakes (SYN→SYN-ACK→ACK).

- QPS becomes deterministic and directly measurable as a function of the application’s concurrency configuration.
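One way to make the last bullet concrete is Little's law, L = λW: if the number of requests in service is pinned at the total number of sessions N registered by the service modules, and W is the mean per-request service time, then sustained throughput is λ = N / W. The sketch below assumes saturation (every session is always busy) and ignores Acceptor and NGINX overhead, so it gives a ceiling rather than a prediction; the function name and the example configuration are hypothetical.

```python
def deterministic_qps(sessions_per_module: list[int], service_time_s: float) -> float:
    """Throughput ceiling lambda = N / W from Little's law (L = lambda * W).

    N is the total number of sessions registered by all service modules and
    W the mean per-request service time in seconds. Assumes every session is
    kept busy and that proxy-side overhead is negligible.
    """
    if service_time_s <= 0:
        raise ValueError("service time must be positive")
    total_sessions = sum(sessions_per_module)
    return total_sessions / service_time_s


if __name__ == "__main__":
    # Illustrative configuration: three modules, 128 sessions, 5 ms per request.
    print(deterministic_qps([32, 32, 64], service_time_s=0.005))
```

Registering more or fewer sessions changes the ceiling proportionally, which is the sense in which QPS follows directly from the concurrency configuration.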

5.3.2. Analysis

5.3.3. Difference from Conventional Concurrency Limits

5.4. Port Exhaustion Mitigation

5.4.1. Problem Context

- Slow or idle clients occupy proxy ports for extended periods.

- Application-side and client-side latency indirectly causes TIME_WAIT and CLOSE_WAIT accumulation in the proxy.

- Eventually, ephemeral-port exhaustion limits connection acceptance capacity and induces cascading failures.
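The exhaustion mechanism in the last bullet can be quantified. For connections from one proxy address to one backend IP:port, each connection the proxy closes first (active close) holds its local port in TIME_WAIT for a fixed period, so the sustainable rate of new connect() calls is bounded by the size of the ephemeral port range divided by the TIME_WAIT duration. The sketch below uses the Linux defaults (`net.ipv4.ip_local_port_range` = `32768 60999`, TIME_WAIT of 60 s) purely as example inputs and assumes TIME_WAIT reuse options such as `tcp_tw_reuse` are not in effect; other systems use different values, and the function name is hypothetical.

```python
def connect_rate_ceiling(port_low: int, port_high: int, time_wait_s: float) -> float:
    """Max sustained new connections/s toward a single backend IP:port.

    Every actively closed connection holds its ephemeral port in TIME_WAIT for
    `time_wait_s` seconds, so at most (port range size / time_wait_s) new
    connect() calls per second can find a free port (no TIME_WAIT reuse).
    """
    ports = port_high - port_low + 1
    return ports / time_wait_s


if __name__ == "__main__":
    # Linux defaults: ip_local_port_range "32768 60999", TIME_WAIT 60 s.
    print(round(connect_rate_ceiling(32768, 60999, 60.0), 1))  # 470.5
```

Above roughly 470 new upstream connections per second to one backend under these settings, connect() begins to fail for lack of a free local port (on Linux, typically with `EADDRNOTAVAIL`).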

5.4.2. Proposed Mechanism

5.4.3. Analysis

- Stable port consumption: proportional to declared backend concurrency rather than client volume.

- Reduced TIME_WAIT/CLOSE_WAIT pressure: socket-table inflation is prevented during long uptimes.

- Improved multi-service coexistence: isolation between independent service modules avoids cross-service port contention.
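The first bullet contrasts two scaling laws. Applying Little's law to each socket state, a conventional proxy's upstream port occupancy grows with its connection rate λ as λ·(T_conn + T_tw), where T_conn is the mean connection lifetime and T_tw the TIME_WAIT duration; in this proposal it is fixed at the sum of the sessions declared by the service modules. The sketch below only evaluates these two expressions; the function names and workload numbers are hypothetical.

```python
def conventional_port_usage(connect_rate: float, conn_lifetime_s: float, time_wait_s: float) -> float:
    """Ports held by a conventional proxy: connections in use plus TIME_WAIT.

    Little's law per state: occupancy = arrival rate x time spent in that state.
    """
    return connect_rate * (conn_lifetime_s + time_wait_s)


def proposed_port_usage(declared_sessions: list[int]) -> int:
    """Ports held in the proposal: bounded by declared backend concurrency."""
    return sum(declared_sessions)


if __name__ == "__main__":
    # Hypothetical load: 400 new upstream connections/s, 50 ms lifetime, 60 s TIME_WAIT.
    print(conventional_port_usage(400.0, 0.05, 60.0))
    # Hypothetical deployment: three service modules with 64 sessions each.
    print(proposed_port_usage([64, 64, 64]))  # 192
```

Doubling the client-driven connection rate doubles the first figure, while the second changes only when a module declares more or fewer sessions.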

5.4.4. Considerations

5.5. Session Fairness and Multi-Core Affinity

6. Comparison to Conventional Architectures

6.1. Comparison with Upstream Keepalive

6.2. Comparison with Katran

6.3. Relation to L4 Load Balancers (e.g., Maglev)

7. Conclusion

7.1. Future Work

- Comparison of connect() wait accumulation vs Acceptor queue absorption under spike load

- Comparison of port occupation and slow/fast client interference when slow clients are present

- Time-series comparison of TIME_WAIT/CLOSE_WAIT transitions during long-term operation

Author Contributions

Funding

Conflicts of Interest

Use of Artificial Intelligence

Appendix A. Implementation Code

- Repository: https://github.com/xander-jp/mxl7lb2

Appendix A.1. nginx.patch

Appendix A.2. acceptor/

Appendix A.3. acceptor_cli/

Appendix A.4. natlibs/

Appendix A.5. client/client.go

Appendix A.6. client_large_resp.go

Appendix A.7. client_large_resp_server.go

References

- Neal, Cardwell; et al. Fast ZC Rx Data Plane using io_uring. Netdev 0x17 (2023). Available online: https://netdevconf.info/0x17/docs/netdev-0x17-paper24-talk-paper.pdf.

- Agarwal, A.; Nikolaev, A.; Rybkin, D.; et al. Katran: A Scalable Layer-4 Load Balancer using XDP and eBPF. Meta (Facebook) Engineering Blog, May 2018. Open-source implementation: https://github.com/facebookincubator/katran. Available online: https://engineering.fb.com/2018/05/22/open-source/katran-a-scalable-network-load-balancer/.

- Eisenbud, D. E.; Yi, C.; Contavalli, C.; Smith, C.; Kononov, R.; Mann-Hielscher, E.; Cilingiroglu, A.; Cheyney, B.; Shang, W.; Hosein, J. D. Maglev: A Fast and Reliable Software Network Load Balancer. USENIX NSDI 2016.

| Feature | Traditional L7LB | Proposed L7 Proxy |
|---|---|---|
| TCP Direction | Client → Server | Server ↔ Acceptor |
| Upstream Port Exhaustion | Frequent | Suppressed |
| Health Checks | Required | Not required |
| Session Fairness | Limited | Deterministic |
| Restart Overhead | High | Graceful |
| QPS Determinism | Low | High |

| Indeterminacy Factor | Occurrence Mechanism in Conventional Method | Elimination Method in This Proposal |
|---|---|---|
| connect() completion timing | Depends on backend load; wait time accumulates during spikes | Eliminates the connect() call itself from NGINX |
| TIME_WAIT accumulation | Socket state accumulates as NGINX repeatedly calls connect()/close() | Avoids TIME_WAIT generation by eliminating connect() from NGINX |
| Kernel FD distribution | OS-dependent scheduling such as SO_REUSEPORT | Deterministic control in user space by the Acceptor |
| Client-induced port occupation | Long-lived connections from slow terminals occupy NGINX’s local ports | Transfers session ownership to the service module side |
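The "Kernel FD distribution" row contrasts kernel-side placement (for example, SO_REUSEPORT distributing connections among listening sockets) with assignment decided in user space by the Acceptor. A minimal sketch of one deterministic policy, assuming the Acceptor knows each module's idle session count: it picks the module with the most idle sessions and breaks ties by module name, so the same sequence of events always yields the same assignment. The policy and the `DeterministicDispatcher` name are illustrative assumptions, not the repository's implementation.

```python
from typing import Dict, Optional


class DeterministicDispatcher:
    """Hypothetical user-space dispatch over idle service-module sessions."""

    def __init__(self, idle: Dict[str, int]) -> None:
        self.idle = dict(idle)

    def acquire(self) -> Optional[str]:
        """Reserve one idle session; None means every session is busy."""
        candidates = [m for m, n in self.idle.items() if n > 0]
        if not candidates:
            return None  # caller keeps the request queued at the Acceptor
        # Most idle sessions first; module name breaks ties deterministically.
        chosen = min(candidates, key=lambda m: (-self.idle[m], m))
        self.idle[chosen] -= 1
        return chosen

    def release(self, module: str) -> None:
        """Return a session to the pool once its request has completed."""
        self.idle[module] += 1


if __name__ == "__main__":
    d = DeterministicDispatcher({"svc-b": 1, "svc-a": 2})
    print([d.acquire() for _ in range(4)])  # ['svc-a', 'svc-a', 'svc-b', None]
```

Because the decision depends only on the Acceptor's own counters, it can be logged and reproduced, whereas kernel-side distribution depends on hashing and scheduling outside the application's control.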

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).