Submitted: 17 October 2025

Posted: 17 October 2025

Abstract

Keywords:

1. Introduction

2. Background

2.1. What are Large Language Models (LLMs)?

2.1.1. Definition of LLMs

- How to Define “Large”? LLMs are characterized as “large” by their exceptionally high number of parameters, typically ranging from billions to hundreds of billions, paired with extensive training data. For instance, OpenAI’s GPT-3 contains 175 billion parameters, allowing it to capture a wide range of linguistic patterns and knowledge. Beyond sheer size, the term “large” also signifies a critical scale at which emergent capabilities arise: abilities that are absent in smaller models and often cannot be predicted by extrapolating from smaller-scale performance [13]. Driven by advances in computing power and guided by scaling laws, the size of language models has increased rapidly in recent years. Models once regarded as state-of-the-art have been quickly surpassed and are now considered relatively small. For example, GPT-2, released in 2019, contained 1.5 billion parameters [5], whereas the smallest variants of contemporary LLMs typically begin at 7 billion parameters. This shift highlights the field’s rapid progression and the evolving definition of what constitutes a “large” model.

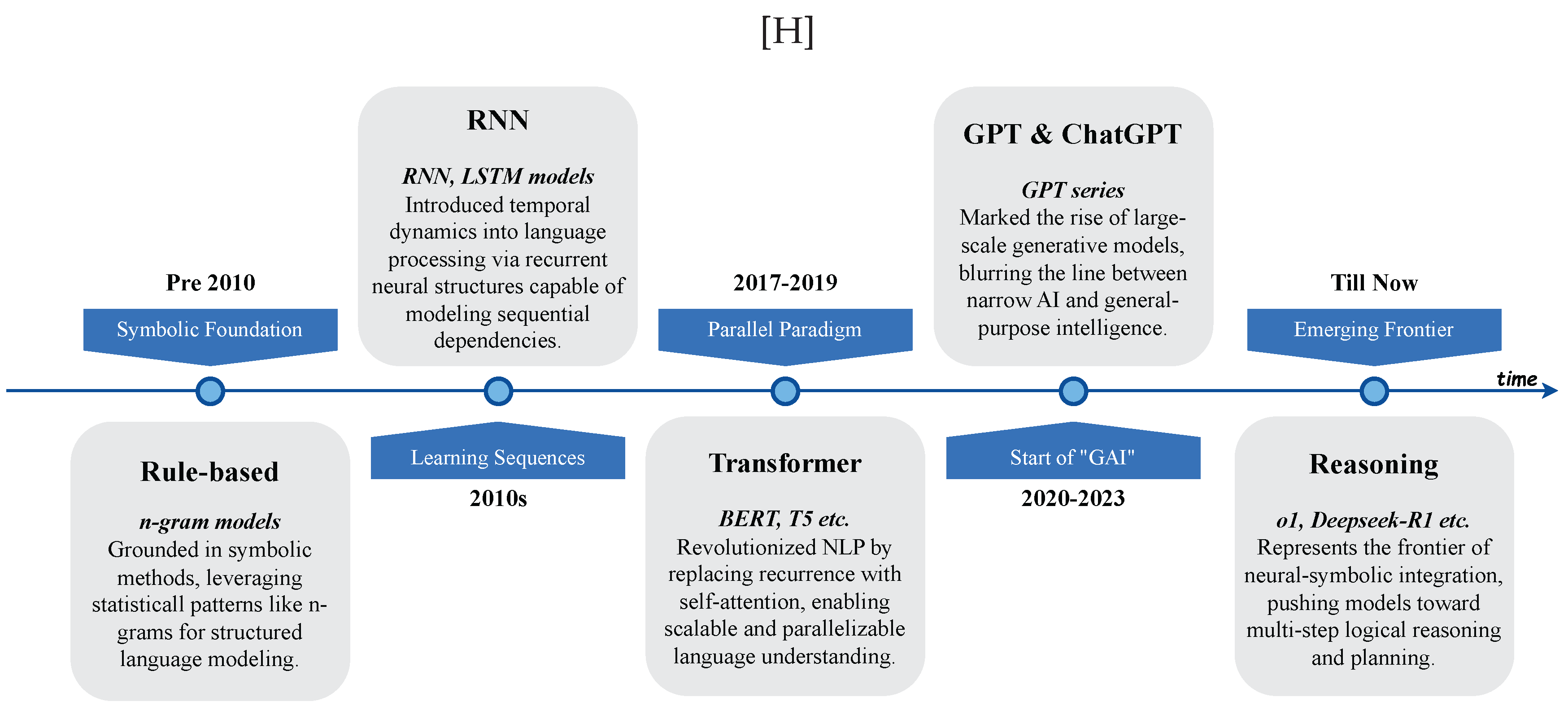

2.1.2. History of LLMs

| Time Nodes | Model | Core Tech | Contribution/Feature | Major Lab/Company | Release Time |

|---|---|---|---|---|---|

|

Rule-based Pre 2010 |

ELIZA [43] | Rule-based communication | First NLP communication system | MIT | 1966 |

| n-grams [44] | Markov language model | Based on statistical frequency modeling | IBM | 1992 | |

| PCFG [45] | Context-independent probabilistic | Syntactic analysis & structural prediction | Brown Uni. | 1998 | |

|

RNN 2010s |

RNNLM [46] | Based on RNN | First RNN-based language model | Microsoft | 2010 |

| Seq2Seq [47] | Based on GRU / LSTM | Pioneered encoder-decoder for NLP | 2014 | ||

|

Transformer 2017-2019 |

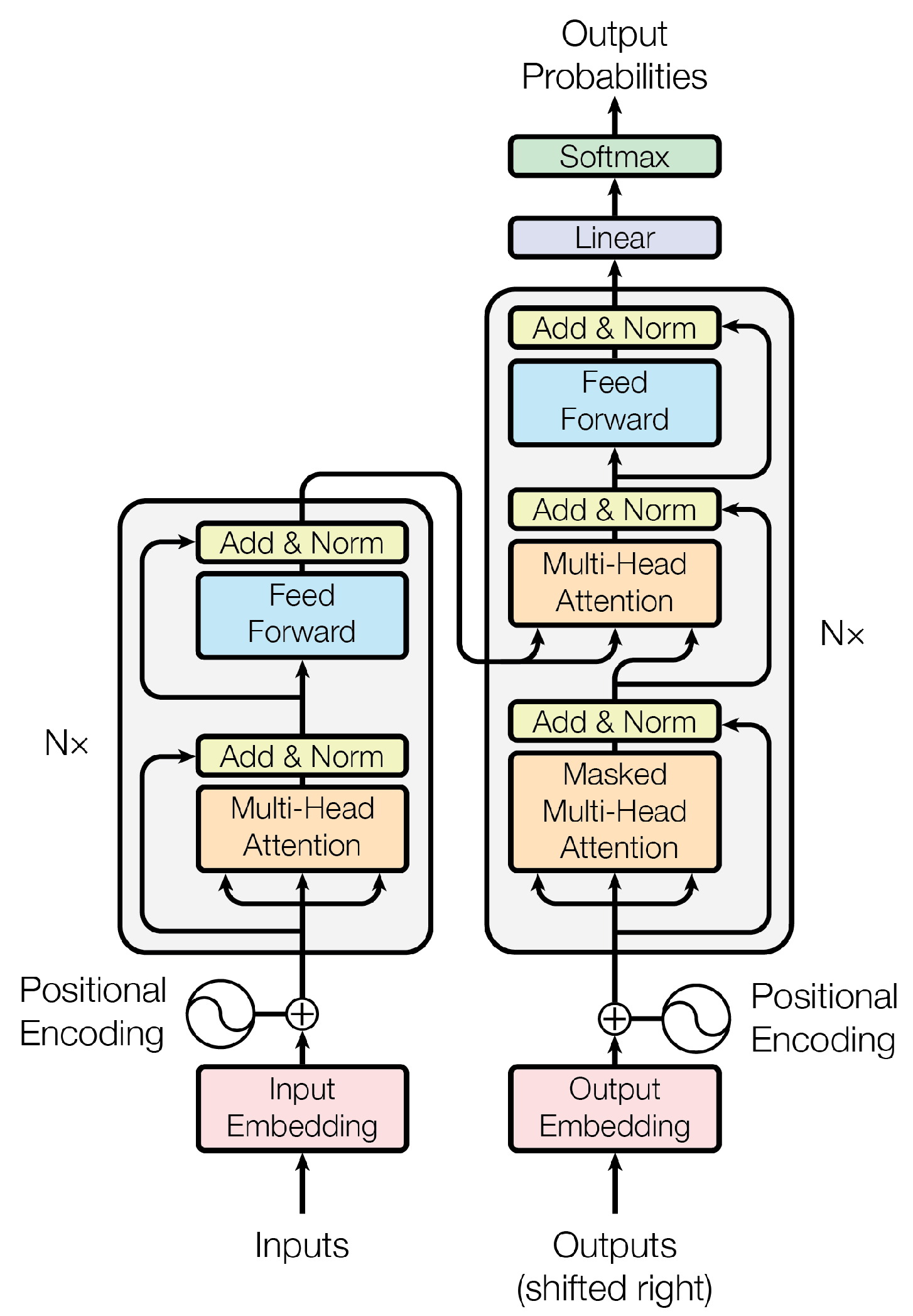

Transformer [4] | Self-attention | Breaks sequential constraint, enables parallelism | 2017 | |

| BERT [9] | Masked LM + fine-tuning | Contextualized language representation | 2018 | ||

| T5 [40] | Text-to-text transfer learning | Reformulates all NLP tasks as text generation | 2019 | ||

|

GPT & ChatGPT 2020-2023 |

GPT-1/2 [5,6] | Autoregressive transformer | Unified architecture for generation | OpenAI | 2018/2019 |

| GPT-3 [7] | Large-scale autoregressive model | Kickstarted large model era | OpenAI | 2020 | |

| ChatGPT [48] | Fine-tuned GPT-3 with RLHF | Interactive and aligned chatbot | OpenAI | 2022 | |

| GPT-4 [1] | Multimodal, tool-use capabilities | Generalized across wide range of tasks | OpenAI | 2023 | |

|

Other LLMs 2020-2023 |

Codex [49] | Code-focused GPT fine-tuning | Natural language to code translation | OpenAI | 2021 |

| FLAN-T5 [50] | Instruction-tuned T5 | Strong zero-shot generalization | 2022 | ||

| QWen [51] | Tool-augmented transformer | Open-source model with strong instruction-following | Alibaba | 2023 | |

| LLaMA [52] | Efficient transformer variants | High performance with fewer resources | Meta | 2023 | |

|

Emerging Frontier Till Now |

Mixtral [53] | Sparse Mixture-of-Experts | Efficient inference with high performance | MixtralAI | 2023 |

| DeepSeek-R1 [21] | Neural-symbolic reasoning | Multi-step logic | DeepSeek | 2024 | |

| o1 [20] | Experimental AGI prototype | Focus on generalized reasoning skills | OpenAI | 2024 |

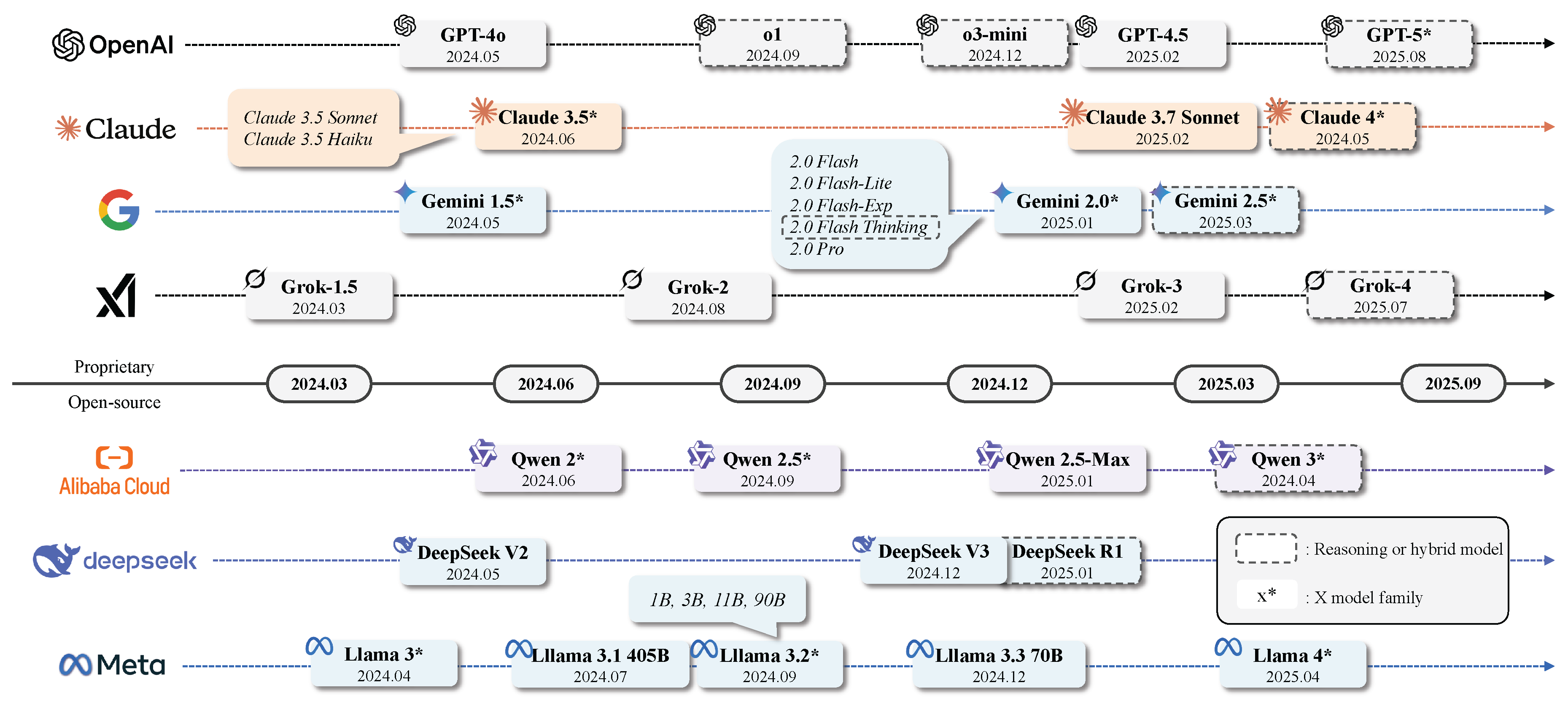

2.2. State-of-the-art LLMs

2.2.1. Overview

| Task | Modality | Context Window | Latency | Privacy | Budget | Hardware | Perf. | LLMs |
|---|---|---|---|---|---|---|---|---|
| Conversational Task | T, (I, A) | ≥ 200K | Yes | No | Low | – | Med | Gemini 2 |
| | T, (I) | < 200K | Yes | No | Med | – | High | ChatGPT-4o |
| | T | < 200K | No | Yes | – | Med | – | Qwen 2.5 |
| | T, (I) | < 200K | No | Yes | – | High | – | Llama 3.2 MM |
| Reasoning Task | T, (I, A) | ≥ 200K | Yes | No | – | – | High | Gemini 2.5 Pro |
| | T | < 200K | No | No | Med | – | Med | OpenAI Reasoning |
| | T | < 200K | Yes | Yes | – | High | – | DeepSeek-R1 |
| Coding Task | T | < 200K | Yes | No | Low | – | Med | Claude 3.5 |
| | T | < 200K | No | No | High | – | High | Claude 3.7 |
| | T | ≥ 200K | No | Yes | – | Med | – | Qwen 2.5-1M |
| Open-Domain QA | T, (I, A) | ≥ 200K | Yes | No | Low | – | Med | Gemini 2 |
| | T | < 200K | Yes | No | Med | – | High | GPT-4o |
| | T | < 200K | Yes | Yes | – | Med | – | QwQ 32B |
| Focused Tasks (Fine-tune) | T | < 200K | Yes | No | Low | – | – | GPT-4o mini |
| | T, (I) | < 200K | No | Yes | – | Med | – | Llama 3.2 MM |
| | T | < 200K | Yes | Yes | – | Low | – | DeepSeek-R1 Distill |

2.2.2. GPT-series Models

2.2.3. OpenAI Reasoning Models

2.2.4. Claude 3 Model Family

2.2.5. Gemini 2 Model Family

2.2.6. Grok Model Family

2.2.7. GPT-OSS

2.2.8. Llama 3 Model Family

2.2.9. Qwen 2 Model Family

2.2.10. DeepSeek Model Family

2.3. Evaluation on LLMs

2.3.1. Tasks

2.3.2. Benchmarks

- MMLU [135]: The Massive Multitask Language Understanding (MMLU) benchmark evaluates multitask accuracy across 57 diverse subjects, including humanities, social sciences, STEM fields, and professional domains like law and medicine. Each question is multiple-choice with four options, covering difficulty levels from elementary to professional. Questions are sourced from standardized test prep materials (e.g., GRE, USMLE) and university-level courses. The dataset comprises 15,908 questions split into a few-shot development set, a validation set, and a test set. MMLU assesses models in both zero-shot and few-shot settings, reflecting real-world conditions where no task-specific fine-tuning is applied. Human performance baselines are also provided, ranging from average crowdworkers to expert-level participants.

- BIG-Bench [136]: The Beyond the Imitation Game Benchmark (BIG-Bench) is a large-scale suite of 204 tasks designed to test LLMs on capabilities not captured by conventional benchmarks. Tasks span areas such as linguistics, mathematics, biology, social bias, and software engineering, and were contributed by researchers and institutions worldwide. Human experts also completed the tasks to establish reference baselines. BIG-Bench includes JSON tasks (with structured inputs/outputs) and programmatic tasks (which allow custom metrics and interaction). Evaluation metrics include accuracy, exact match, and calibration. A smaller curated subset, BIG-Bench Lite, contains 24 JSON tasks for lightweight and efficient evaluation.

- HumanEval [49]: HumanEval is a benchmark for evaluating the functional correctness of code generation. It consists of 164 original Python programming problems, each with a function signature, descriptive docstring, and empty function body. A solution is deemed correct if it passes predefined unit tests, aligning with how developers assess code quality. The benchmark targets abilities such as comprehension, algorithmic reasoning, and basic mathematics. For safety, all code is executed in a secure sandbox to mitigate risks posed by untrusted or potentially harmful code.

- TruthfulQA [151]: This benchmark is designed to assess whether LLMs generate truthful answers and avoid perpetuating misconceptions or factual inaccuracies. It includes 817 questions across 38 domains, such as health, finance, and law. The questions—typically concise, with a median length of 9 words—are crafted to exploit known weaknesses in LLMs, particularly their tendency to imitate common yet incorrect human text. The benchmark imposes rigorous truthfulness criteria, evaluating answers based on factual accuracy as supported by public sources like Wikipedia. Each question includes both true and false reference answers.

- GSM8K [140]: The Grade School Math 8K (GSM8K) dataset comprises 8.5K human-written arithmetic word problems at grade-school level. Of these, 7.5K are training problems and 1K are test problems. Each problem typically requires 2 to 8 reasoning steps and involves basic arithmetic. The dataset emphasizes: (1) high quality, with a reported error rate below 2%; (2) high diversity, avoiding repetitive templates and encouraging varied linguistic expression; (3) moderate difficulty, solvable using early algebra without advanced math concepts; and (4) natural language solutions, favoring everyday phrasing over formal math notation.
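HumanEval's notion of functional correctness can be illustrated with a short sketch. It is not the benchmark's official harness: `passes_tests` and `pass_at_k` are hypothetical helper names, the toy problem is invented for illustration, and the candidate is run with plain `exec`, which is not a sandbox and should never be used on untrusted code. The pass@k formula is the combinatorial estimator commonly reported with this kind of benchmark; it is an assumption here, since the description above only states the pass/fail criterion.

```python
from math import comb


def passes_tests(candidate_src: str, test_src: str) -> bool:
    """Return True if the candidate code passes the assert-based unit tests.

    WARNING: plain exec() is NOT a sandbox. A real harness isolates execution
    (separate process, time limits, restricted I/O).
    """
    namespace: dict = {}
    try:
        exec(candidate_src, namespace)  # define the candidate function
        exec(test_src, namespace)       # run the unit tests against it
    except Exception:
        return False                    # syntax errors and failed asserts both count as failure
    return True


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k from n samples of which c passed: 1 - C(n-c, k) / C(n, k)."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    return 1.0 - comb(n - c, k) / comb(n, k)


# Toy problem in the style of the benchmark (hypothetical, not from the dataset).
tests = "assert add(2, 3) == 5\nassert add(-1, 1) == 0"
candidates = [
    "def add(a, b):\n    return a + b",  # correct
    "def add(a, b):\n    return a - b",  # wrong result
    "def add(a, b):\n    return b + a",  # correct
    "def add(a, b) return a + b",        # syntax error
]
num_correct = sum(passes_tests(src, tests) for src in candidates)
print(num_correct, pass_at_k(len(candidates), num_correct, k=1))
```

With several samples per problem, the per-problem estimates would be averaged over the whole benchmark to give a single score.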

2.3.3. Evaluation Methods

2.3.4. Performance at a Glance

- Insight 1: No single LLM dominates across all tasks. GPT-4.5 ranks highest on Chatbot Arena (1398), suggesting strong general chatbot capabilities. However, it does not lead in benchmarks like GPQA (reasoning) or math. Conversely, DeepSeek R1 achieves the best scores on MMLU (90.8%) and Math (97.3%) but lacks results in HumanEval (coding) and multilingual tasks, indicating that top performance in one area does not translate to all domains.

- Insight 2: Reasoning models outperform others in logical and structured tasks. Claude 3.7 Sonnet (reasoner) achieves the highest GPQA Diamond score (84.8%), the best Tool Use (Retail) result (81.2%), and leads in MMMLU (86.1%). These benchmarks emphasize reasoning and complex task execution, showcasing the value of models fine-tuned for reasoning.

- Insight 3: Specialized models often come with trade-offs. Claude 3.5 Haiku performs well in general chatbot interaction but has one of the lowest GPQA scores (41.6%) and math scores (69.4%). Gemini 1.5 Pro and Gemini 2.0 Flash perform reasonably well in multilingual (MGSM) and tool-use (BFCL) tasks, but underperform in reasoning and coding, illustrating the trade-off between general-purpose and specialized capabilities.

- Insight 4: Different models excel at different tasks and should be selected accordingly. For chatbot dialogue, GPT-4.5 and GPT-4o are top performers. Claude 3.7 Sonnet (reasoner) excels in reasoning, tool use, and multilingual benchmarks. Claude 3.5 Sonnet leads in coding with the best HumanEval score (93.7%). For math-heavy benchmarks, DeepSeek R1 and o3-mini both surpass 97% on the Math benchmark. Thus, model selection should be guided by the specific task requirements.
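The task-driven selection described in these insights can be sketched as a lookup over reported scores. The snippet below is only an illustration: `SCORES` and `best_model` are hypothetical names, the dictionary contains only the figures quoted in the insights above (missing entries are left out, not estimated), and it assumes the GPQA figures quoted for the two Claude models refer to the same benchmark column.

```python
# Scores quoted in the insights above. A benchmark that is not mentioned for
# a model is simply absent; no values are invented.
SCORES = {
    "GPT-4.5": {"Chatbot Arena": 1398},
    "DeepSeek R1": {"MMLU": 90.8, "Math": 97.3},
    "Claude 3.7 Sonnet (reasoner)": {
        "GPQA": 84.8,
        "Tool Use (Retail)": 81.2,
        "MMMLU": 86.1,
    },
    "Claude 3.5 Haiku": {"GPQA": 41.6, "Math": 69.4},
    "Claude 3.5 Sonnet": {"HumanEval": 93.7},
}


def best_model(benchmark: str, scores: dict = SCORES):
    """Return (model, score) with the highest reported score, or None if unreported."""
    reported = [(model, s[benchmark]) for model, s in scores.items() if benchmark in s]
    if not reported:
        return None
    return max(reported, key=lambda pair: pair[1])


for task in ("GPQA", "Math", "HumanEval", "MGSM"):
    print(task, "->", best_model(task))
```

Returning `None` for an unreported benchmark keeps "no result" distinct from "low score", which matters because several models in the comparison lack results on some tasks.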

3. LLMs for Arts, Letters, and Law

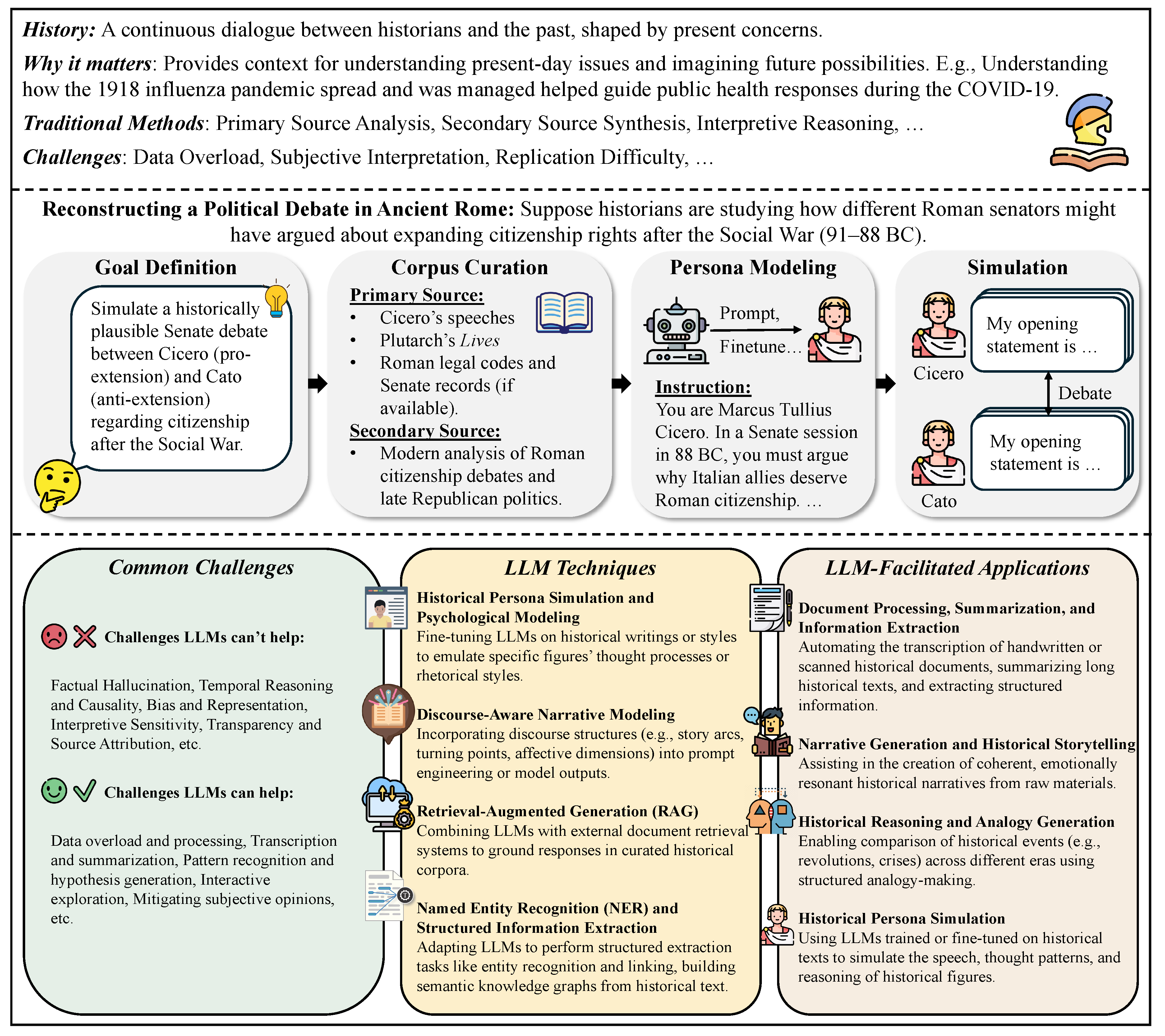

3.1. History

3.1.1. Overview

| Historical Category | LLM Application Areas | Use Case-Inspired Research Question | Key Insights and Contributions | References |
|---|---|---|---|---|
| Narrative and Interpretive History | Narrative Generation and Analysis | Can LLMs generate historically coherent and emotionally resonant narratives from primary source texts? | LLMs struggle with coherence and diversity; discourse-aware prompts improve storytelling; useful for story arcs and emotional analysis. | [186,187] |
| | Historical Research Assistance | How can LLMs simulate historical personas or provide conversational access to archives for education and research? | Used in chat-based exploration, simulated historical figures, and layered tools (e.g., KleioGPT); helpful for both scholars and students. | [188,189,190] |
| | Historical Interpretation | Can LLMs improve consistency and reduce bias in interpretive historical analysis? | LLMs show more consistent judgments than humans; useful for reducing subjectivity in analysis. | [191] |
| Quantitative and Scientific History | Historical Thinking | How do LLMs enhance access to and reflection on historical content through transcription and summarization? | Help transcribe handwriting and summarize texts; make primary sources easier to explore and reflect on. | [192] |
| | Historical Data Processing | Can LLMs scale up entity extraction and temporal reasoning across vast historical corpora? | Enable name/date extraction, timeline generation, and semantic linking at scale. | [193,194] |
| | Simulating Historical Psychological Responses | Is it possible to model the psychological tendencies of past societies using LLMs trained on historical texts? | Can mimic cultural mindsets from historical texts, but results may reflect biases of elite sources. | [188] |
| Comparative and Cross-Disciplinary History | Historical Analogy Generation | How can LLMs retrieve or generate meaningful analogies between past and present events? | Generate cross-era comparisons; reduce hallucinations with structured frameworks and similarity checks. | [195] |
| | Interdisciplinary Information Seeking | Can LLMs facilitate the discovery of relevant insights across disciplines for historical inquiry? | Tools like DiscipLink help explore diverse sources and connect ideas across fields. | [196] |

- Narrative and Interpretive History: This area emphasizes descriptive, subjective, and human-centered accounts of the past. It uses storytelling and meaning-making to explain events in context. LLMs can assist in narrative generation, reconstruct voices from historical texts, and interpret language use in personal accounts or memoirs.

- Quantitative and Scientific History: This approach applies statistical, computational, and formal methods to study historical data and trends. LLMs can process large datasets, simulate historical psychological responses, aid in historical reasoning tasks, and evaluate knowledge systems.

- Comparative and Cross-Disciplinary History: This domain integrates methods from sociology, economics, political science, and other disciplines to study similarities and differences across historical contexts. LLMs can support comparative analysis, generate historical analogies, and link concepts across eras or cultures.

3.1.2. Narrative and Interpretive History

3.1.3. Quantitative and Scientific History

3.1.4. Comparative and Cross-Disciplinary History

3.1.5. Benchmarks

| Benchmark | Scope and Focus | Data Composition | Evaluation Tasks | Key Insights |
|---|---|---|---|---|
| TimeTravel [198] | Multimodal evaluation of historical and cultural artifacts | 10,250 expert-verified samples across 266 cultures and 10 regions; includes manuscripts, artworks, inscriptions, and archaeological findings | Classification, interpretation, and historical reasoning | Highlights LMMs’ limitations in cultural/historical context understanding; sets new standards for AI in cultural heritage preservation |
| AC-EVAL [199] | Ancient Chinese language understanding by LLMs | 3,245 multiple-choice questions on historical facts, geography, customs, classical poetry, and philosophy, categorized into three difficulty levels | General knowledge, short text understanding, long text comprehension | Chinese-trained models outperform English-trained ones; ancient Chinese remains a low-resource challenge; few-shot often introduces noise |
| Hist-LLM [193] | Global historical knowledge evaluation using structured data | Subset of Seshat Global History Databank: 600 societies, 36,000 data points | Multiple-choice on historical facts across global regions and eras | Models show moderate accuracy; better on early periods and the Americas; weaker in Sub-Saharan Africa and Oceania |
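Findings such as "better on the Americas, weaker in Oceania" come from breaking multiple-choice accuracy down by region or era. The sketch below shows one way such a breakdown could be computed; `accuracy_by_group`, the record fields, and the toy records are all hypothetical and do not reproduce any benchmark's actual data format or results.

```python
from collections import defaultdict


def accuracy_by_group(records, group_key):
    """Compute multiple-choice accuracy per group (e.g. region or era)."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for rec in records:
        group = rec[group_key]
        total[group] += 1
        correct[group] += int(rec["predicted"] == rec["answer"])
    return {group: correct[group] / total[group] for group in total}


# Hypothetical toy records; real items would come from the benchmark files
# and "predicted" from the model under evaluation.
records = [
    {"region": "Americas", "answer": "A", "predicted": "A"},
    {"region": "Americas", "answer": "C", "predicted": "C"},
    {"region": "Oceania", "answer": "B", "predicted": "D"},
    {"region": "Oceania", "answer": "A", "predicted": "A"},
]
by_region = accuracy_by_group(records, "region")
print(by_region)
```

The same function can be reused with a different `group_key` (for example a time-period field) to compare early and late eras.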

3.1.6. Discussion

- Temporal and Causal Reasoning Enhancement. Advances in temporal modeling, causal inference, and discourse-level narrative comprehension [186] are critical for enabling LLMs to reason more accurately about historical sequences and cause-effect relationships.

- Explainable and Source-Grounded Historical AI. Building models that produce verifiable outputs, grounded in cited historical documents or structured datasets, can strengthen academic trust and facilitate critical engagement [190].

- Collaborative Human-AI Historical Research. Systems like DiscipLink [196] suggest a promising future where LLMs act as exploratory partners rather than authoritative experts, supporting iterative, mixed-initiative workflows that preserve human scholarly agency.

- Ethics and Epistemology of Digital History. Further interdisciplinary studies are needed to critically examine how LLMs reshape historical knowledge production, interpretive authority, and educational practices, ensuring that technological augmentation remains aligned with historical rigor and ethical standards [191].

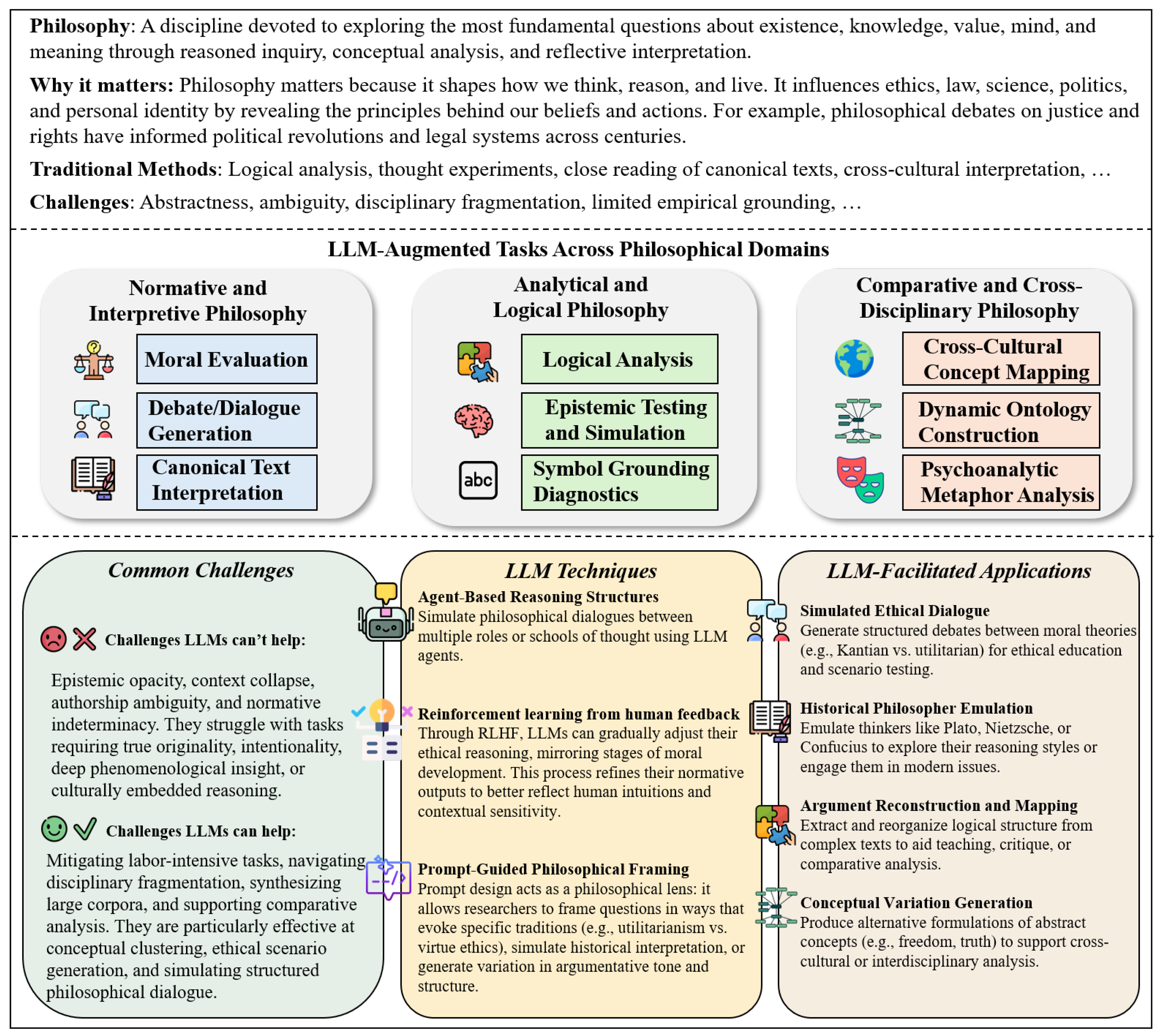

3.2. Philosophy

3.2.1. Overview

- Normative and Interpretive Philosophy: This area emphasizes the analysis of moral values, ethical dilemmas, and interpretive narratives within philosophical traditions. LLMs can assist in generating novel normative arguments, reconstructing historical ethical discourses, and offering fresh interpretations of canonical texts.

- Analytical and Logical Philosophy: This approach applies formal logic, precise argumentation, and rigorous conceptual analysis to explore philosophical problems. LLMs can support the systematic breakdown of complex arguments, facilitate comparative analyses of theoretical positions, and enhance clarity in logical reasoning.

- Comparative and Cross-Disciplinary Philosophy: This domain integrates insights from diverse fields—such as political science, sociology, and linguistics—to study philosophical questions from multiple angles. LLMs can aid in cross-cultural comparisons, generate analogies between disparate theories, and help synthesize interdisciplinary perspectives.

3.2.2. Normative and Interpretive Philosophy

3.2.3. Analytical and Logical Philosophy

3.2.4. Comparative and Cross-Disciplinary Philosophy

3.2.5. Benchmarks

3.2.6. Discussion

- Philosophical Fine-Tuning and Corpus Design. Curating balanced and inclusive corpora that represent diverse traditions can mitigate bias and expand philosophical reach [226].

- Logical Structure and Argument Mining. Developing tools to extract, visualize, and compare philosophical arguments enhances interpretive transparency and pedagogical value [221].

- Ontology Mapping and Comparative Frameworks. Leveraging LLMs to compare metaphysical and ethical schemas across cultures can support pluralistic theorizing and cross-cultural ethics [215].

- Interactive Dialogue Systems for Teaching. Deploying LLMs as Socratic partners or role-played historical thinkers can deepen student engagement and simulate philosophical exchange [218].

- Epistemology and AI Ethics. Interdisciplinary work is needed to assess the epistemic status of LLM outputs, their limits of understanding, and their role in reshaping human inquiry [217].

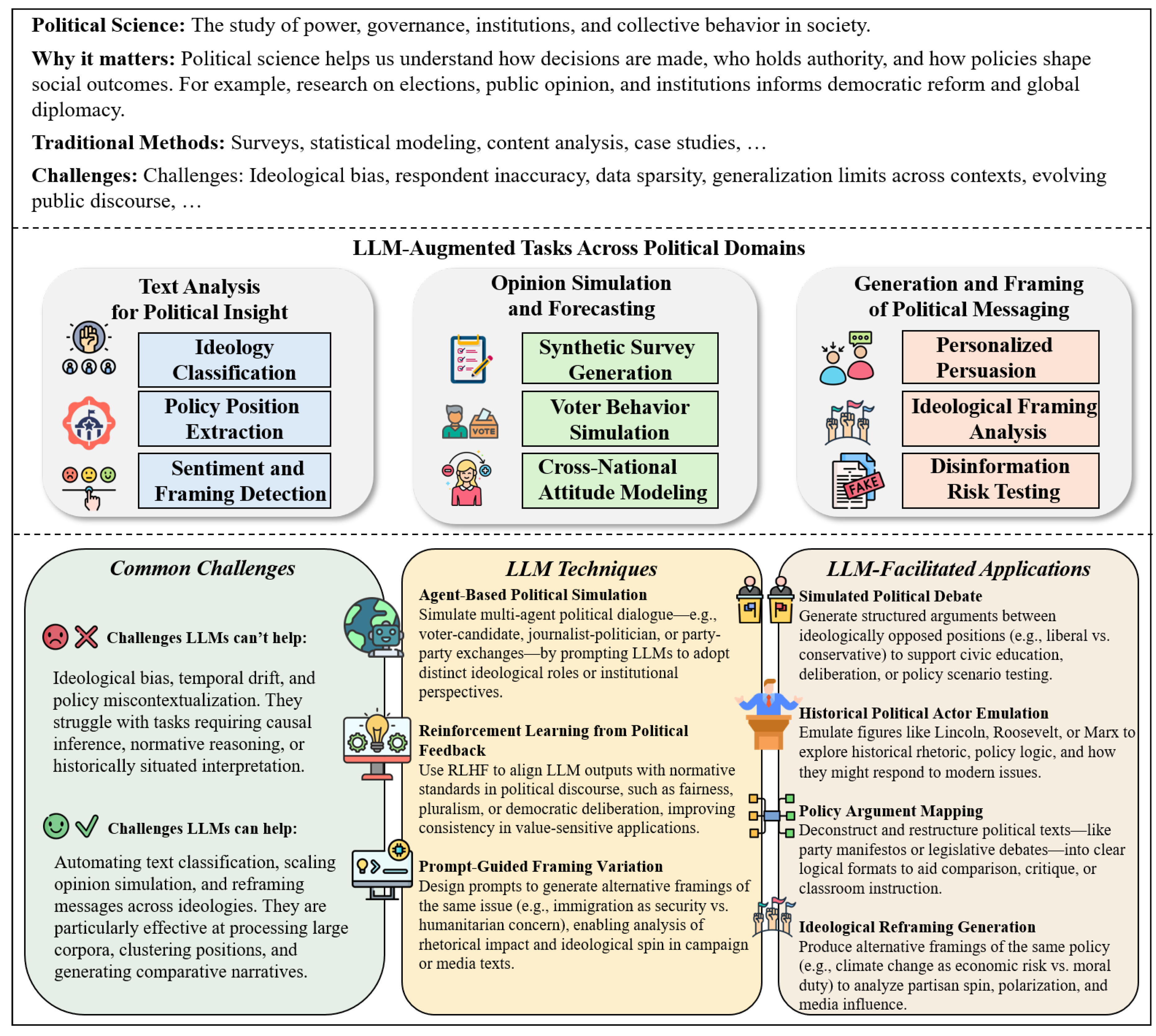

3.3. Political Science

3.3.1. Overview

- Text Analysis for Political Insight: LLMs assist in analyzing political texts such as speeches, party platforms, policy documents, and social media discourse. They are widely used for tasks like sentiment analysis, policy position classification, ideological scaling, and automated topic modeling, enabling consistent textual interpretation at a scale that manual coding cannot match.

- Opinion Simulation and Forecasting: LLMs can simulate public opinion, generate synthetic survey respondents (“silicon samples”), and forecast electoral outcomes through multi-step reasoning. These capabilities are particularly valuable for behavioral modeling, comparative analysis, and data augmentation in contexts where real-world survey data is limited.

- Generation and Framing of Political Messaging: LLMs are increasingly used to craft persuasive political language, adapt messages to different audiences, and analyze framing strategies. These applications include generating campaign slogans and policy narratives, auditing ideological bias in generated outputs, and studying the persuasive dynamics of AI-authored political content.
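The "silicon samples" idea, in which an LLM answers a survey item once per synthetic respondent profile, can be sketched as follows. This is a minimal illustration under stated assumptions: `persona_prompt` and `silicon_sample` are hypothetical helpers, the demographic attributes and survey item are invented, and `generate` stands in for whatever model call is available (a stub is used here so the example runs offline).

```python
from itertools import product


def persona_prompt(persona: dict, question: str, options: list) -> str:
    """Build a survey prompt conditioned on one synthetic respondent profile."""
    profile = ", ".join(f"{key}: {value}" for key, value in persona.items())
    choices = "\n".join(f"{i + 1}. {opt}" for i, opt in enumerate(options))
    return (
        f"You are answering a survey as a respondent with this profile: {profile}.\n"
        f"Question: {question}\n{choices}\n"
        "Reply with the number of one option only."
    )


def silicon_sample(attributes: dict, question: str, options: list, generate):
    """Call `generate` once for every combination of demographic attributes."""
    keys = list(attributes)
    responses = []
    for values in product(*(attributes[key] for key in keys)):
        persona = dict(zip(keys, values))
        prompt = persona_prompt(persona, question, options)
        responses.append((persona, generate(prompt)))
    return responses


def stub_generate(prompt: str) -> str:
    """Placeholder for a real model call; always picks the first option."""
    return "1"


# Hypothetical attribute grid and survey item.
attributes = {"age": ["18-29", "65+"], "area": ["urban", "rural"]}
samples = silicon_sample(
    attributes,
    "Should public transport funding be increased?",
    ["Yes", "No", "Unsure"],
    stub_generate,
)
print(len(samples), samples[0])
```

In practice the synthetic answers would be compared against real survey marginals for the same demographic cells before being used for augmentation.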

3.3.2. Text Analysis for Political Insight

3.3.3. Opinion Simulation and Forecasting

3.3.4. Generation and Framing of Political Messaging

3.3.5. Benchmarks

3.3.6. Discussion

- Fine-Tuned Political Models. Domain-specific fine-tuning on legislative records, public opinion corpora, and multilingual political texts can enhance accuracy and reduce ideological bias.

- Causal and Temporal Modeling. Combining LLMs with structured models or reasoning frameworks may improve their ability to infer causality, detect agenda dynamics, and simulate policy feedback.

- Bias Detection and Auditing. Systematic tools to audit, mitigate, and document political biases in LLM outputs are essential for responsible deployment.

- Interactive Political Simulation. LLMs can be embedded in deliberative platforms to support role-playing, voter education, or negotiation training in civic and educational settings.

- Disinformation Defense. Techniques such as adversarial prompting, fact-checking augmentation, and truth-conditioned training can be used to defend against political misuse.

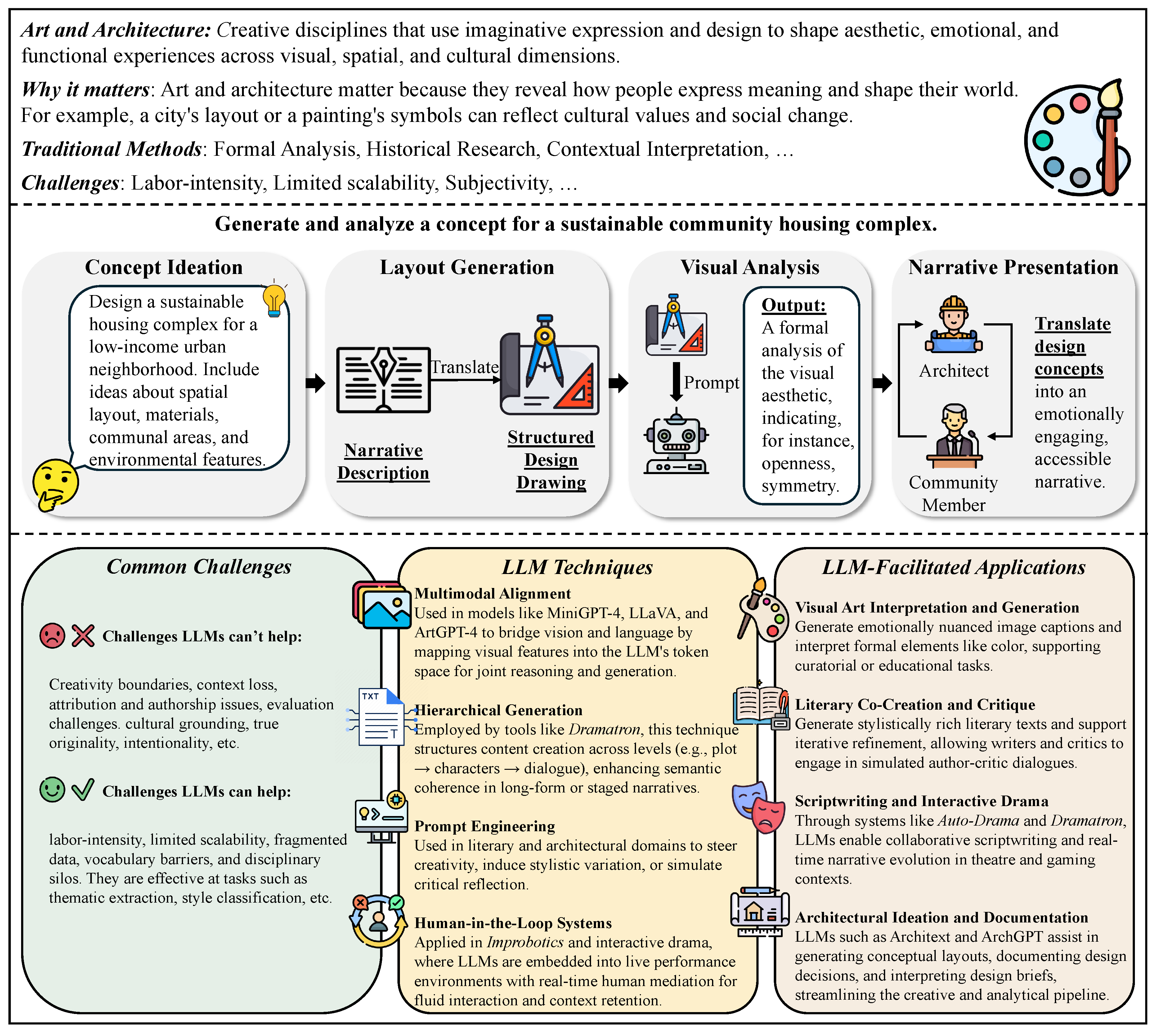

3.4. Arts and Architecture

3.4.1. Overview

| Type of Art | Subtasks | Use Case-Inspired Research Question | Insights and Contributions | Citations |
|---|---|---|---|---|
| Visual Art | Creation (Prompting, Image Generation) | How can LLM-vision models generate visually compelling and stylistically diverse art through prompt-based interaction? | Multimodal models like MiniGPT-4 and LLaVA support creative generation; ArtGPT-4 enhances aesthetics using image adapters. | [269,270,271] |
| | Analysis (Symbolism, Style Classification) | Can LLMs classify artistic styles and interpret visual symbolism without dedicated visual encoders? | GalleryGPT reduces hallucinations via structured prompts; CognArtive uses SVGs to enable symbolic reasoning without visual models. | [272,273] |
| Literary Art | Creation (Storytelling, Stylistic Writing) | How can LLMs be guided to produce stylistically rich and emotionally resonant literary texts? | Prompt tuning and temperature settings support diverse styles; enables multi-voice storytelling and creative expression. | [274,275] |
| | Analysis (Critique, Interpretation) | Can LLMs simulate literary critics or authors to support textual analysis and interpretation? | Interactive and self-reflective prompting enables critical reading and style-aware interpretation. | [276,277] |
| Performing Art | Creation (Scriptwriting, Interactive Drama) | How can LLMs co-write scripts and structure dramatic arcs in interactive performances? | Tools like Dramatron build layered plots; Auto-Drama uses classical structure (e.g., Aristotelian elements) to shape narrative flow. | [278,279] |
| | Performance (Live Interaction, Improvisation) | Can LLMs participate in live improvisational performance alongside human actors? | Improbotics blends live dialogue with LLM input for co-creative scenes; handles multi-party flow and timing. | [280] |
| Architecture | Design and Creation (Concept Ideation, Layout Generation, Decision Records) | How can LLMs support early-stage design, spatial layout, and architectural decision-making processes? | Tools like Architext generate floorplans; GPT-based pipelines assist with ideation and accurate design documentation. | [281,282,283] |
| | Analysis (Heritage Assessment, Design Comparison) | Can LLMs assist in heritage assessment and design analysis by integrating semantic knowledge with user intent? | ArchGPT retrieves context-aware insights; supports restoration, regulation checks, and expert collaboration. | [284] |

- Visual Arts. Visual arts include creative practices such as painting, photography, illustration, and video. LLMs assist artists by generating image prompts, describing visual scenes, and helping with conceptual development. They also support analysis by interpreting symbolism, classifying art styles, and summarizing critical commentary.

- Literary Arts. Literary arts encompass creative writing forms such as poetry, drama, fiction, and essays. LLMs can generate literary content, mimic specific authorial styles, and assist in drafting narratives. They also enable textual analysis, such as thematic extraction, stylistic comparison, and literary critique.

- Performing Arts. Performing arts include music, dance, theater, and opera—forms that rely on embodied performance. LLMs can generate scripts, lyrics, or librettos, and simulate performative dialogue for interactive settings. They also help tag and analyze archival materials, enabling exploration of movement, expression, and performance history.

- Architecture. Architecture focuses on designing functional and aesthetic spaces, integrating art, engineering, and environmental factors. LLMs support architects by generating design narratives, proposing spatial ideas, and interpreting project briefs. They also assist in summarizing regulations, comparing architectural styles, and evaluating design decisions.
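The overview table notes that temperature settings support stylistic diversity in literary generation. A minimal sketch of what temperature does at sampling time is given below. The candidate words and their scores are invented for illustration, and `softmax_with_temperature` and `sample_token` are hypothetical helper names rather than any model's API.

```python
import math
import random


def softmax_with_temperature(logits, temperature=1.0):
    """Convert scores to probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(tokens, logits, temperature=1.0, rng=random):
    """Draw one token from the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


# Hypothetical next-word candidates and scores for a line of verse.
tokens = ["moon", "river", "engine"]
logits = [2.0, 1.0, 0.1]
cool = softmax_with_temperature(logits, temperature=0.5)
warm = softmax_with_temperature(logits, temperature=1.5)
print("P(moon) at T=0.5:", round(cool[0], 3))
print("P(moon) at T=1.5:", round(warm[0], 3))
print("sampled:", sample_token(tokens, logits, temperature=1.5))
```

A low temperature concentrates probability on the most likely word, which favors conventional phrasing; a higher temperature flattens the distribution and makes unusual choices such as "engine" more likely, at some cost to coherence.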

3.4.2. Visual Art

3.4.3. Literary Art

3.4.4. Performing Art

3.4.5. Architecture

3.4.6. Benchmarks

| Benchmark | Scope and Focus | Data Composition | Evaluation Tasks | Key Insights |
|---|---|---|---|---|
| ArtBench-10 [286] | Artwork generation and style classification | 60,000 curated images spanning 10 artistic styles with balanced classes and cleaned labels | Generative modeling using GANs, VAEs, diffusion; FID, IS, KID, precision/recall scores | StyleGAN2+ADA achieves best results; highlights diversity and fidelity gaps in GAN variants |
| AKM [283] | Architectural design decisions from text prompts | Context-rich design scenarios and model inputs for GPT/T5-family models | Zero-shot, few-shot, and fine-tuned generation of architectural decisions | GPT-4 performs best in zero-shot; GPT-3.5 effective with few-shot; Flan-T5 benefits from fine-tuning |
| ADD (DRAFT) [287] | Domain-specific architectural decision generation | 4,911 real-world Architectural Decision Records (ADRs) with labeled rationale and context | Few-shot vs. RAG vs. fine-tuning using DRAFT for architectural reasoning | DRAFT outperforms baselines in accuracy and efficiency; avoids reliance on large proprietary LLMs |
| AGI & Arch [288] | Generative AI’s knowledge of architectural history | 101M+ Midjourney prompts + qualitative and factual analysis of style descriptions | Historical style recognition, hallucination detection, generative image-text alignment | ChatGPT shows inconsistencies in confidence vs. accuracy; Midjourney trends analyzed via reverse prompts |
| WenMind [289] | Chinese Classical Literature and Language Arts (CCLLA) | 42 fine-grained tasks across Ancient Prose, Poetry, and Literary Culture; tested on 31 LLMs | Question answering, translation, rewriting, interpretation across genres | ERNIE-4.0 best performer with 64.3 score; major gap in LLM proficiency for classical Chinese content |
| AIST++ [290] | Music-conditioned 3D dance motion generation | 5.2 hours of 3D motion data across 10 dance genres + musical accompaniment | Motion synthesis with FACT transformer; evaluation via FID, diversity, beat alignment | FACT model generates realistic, synchronized, long-sequence dances better than prior baselines |

3.4.7. Discussion

- Ethical Authorship and Attribution Frameworks. Establishing clear guidelines for authorship attribution, ethical usage, and creative credit in LLM-augmented works will be critical to ensuring fair recognition and responsible innovation [278].

- Qualitative Evaluation Metrics. Developing domain-specific, human-centered evaluation rubrics—focused on creativity, authenticity, and emotional resonance—will be necessary to meaningfully assess LLM contributions in artistic fields [275].

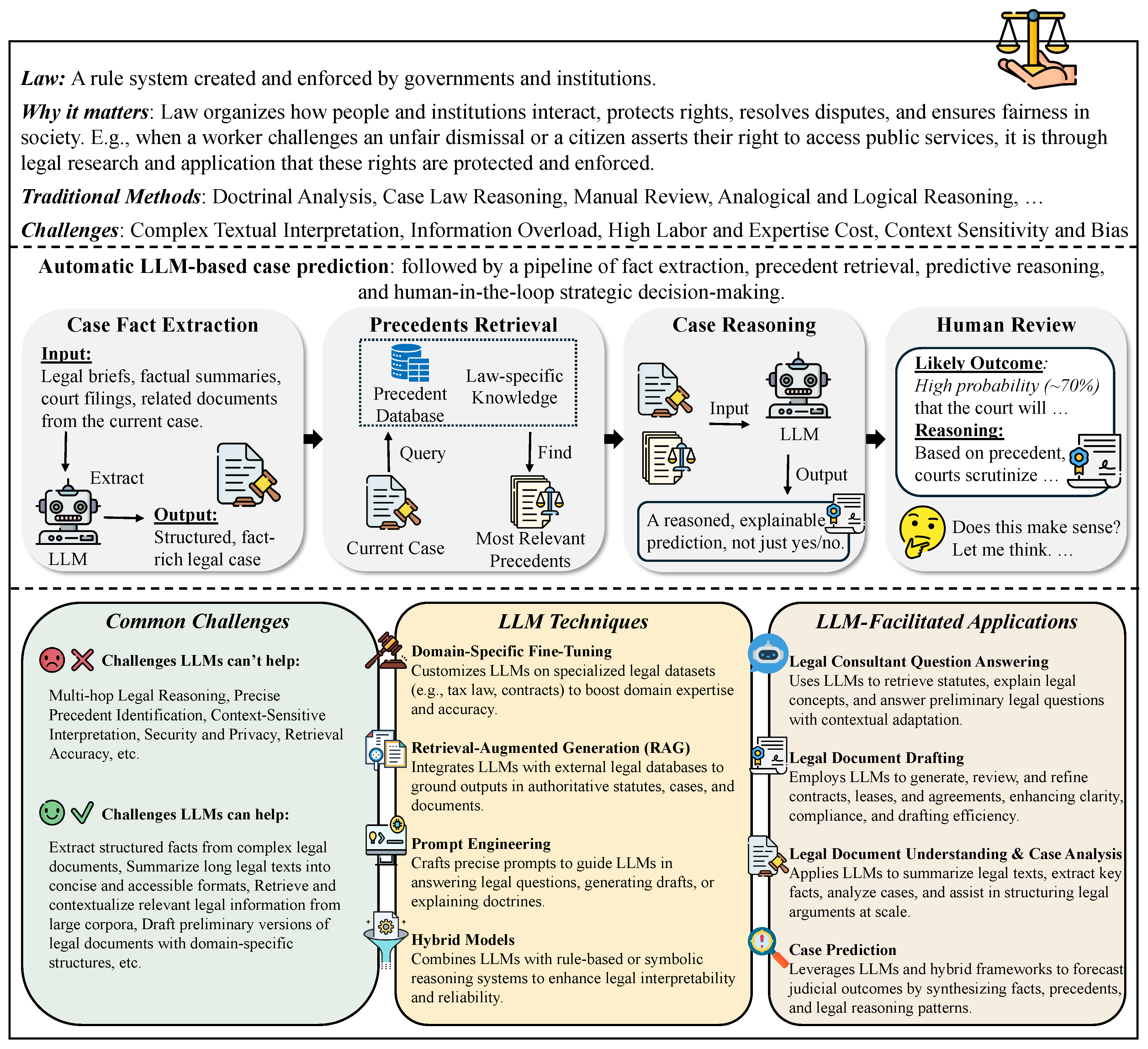

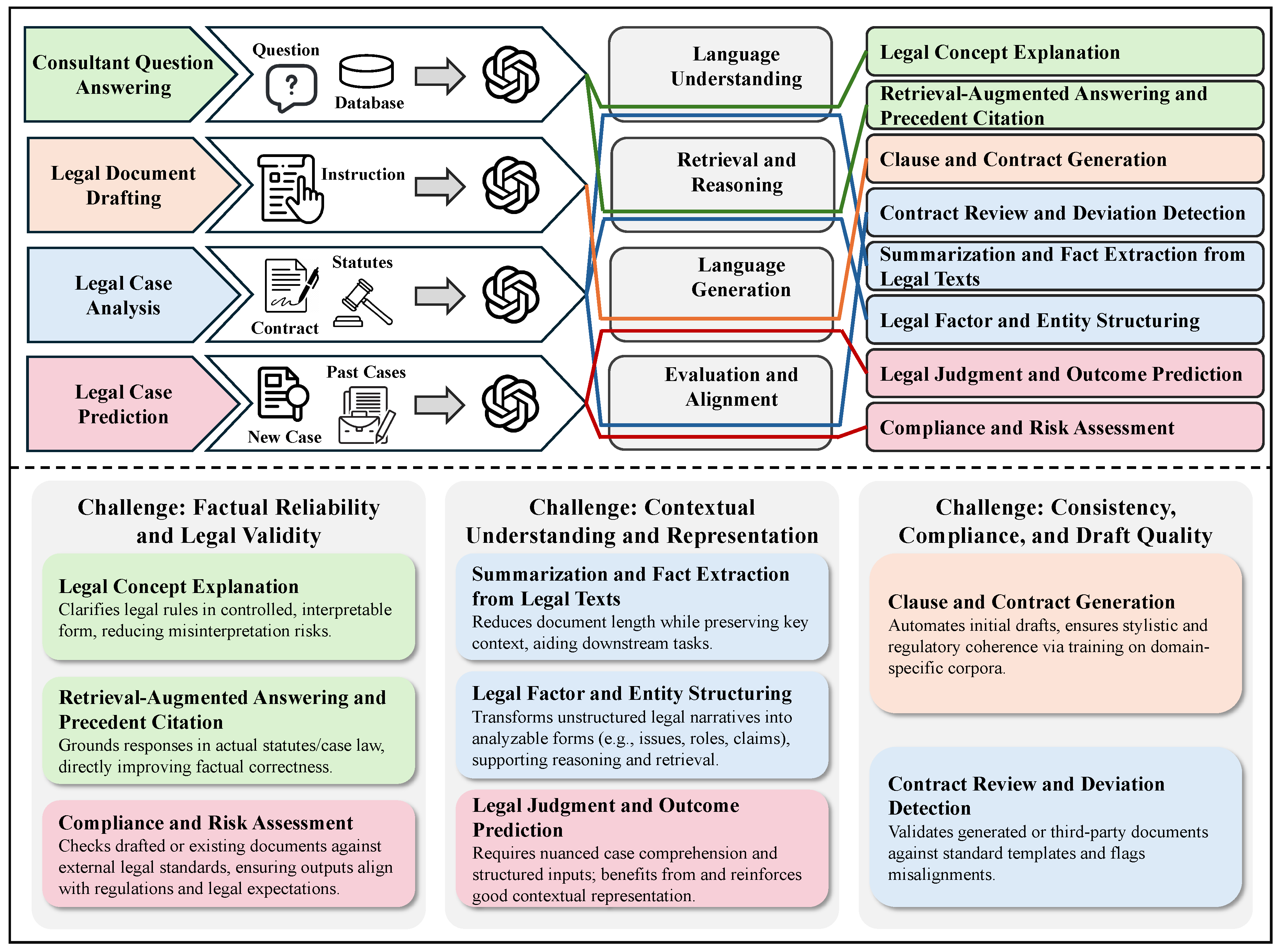

3.5. Law

3.5.1. Overview

- Legal Consultant Question Answering. Many legal queries—such as “What are the elements of negligence?” or “Does the GDPR apply to this situation?”—involve retrieving statutory definitions, summarizing doctrines, or explaining precedent. LLMs can function as legal assistants, offering plain-language explanations, surfacing relevant laws, and contextualizing rules. This enables broader access to legal knowledge and supports both laypersons and professionals during early-stage legal reasoning.

- Legal Document Drafting. Drafting contracts, policies, and filings involves significant repetition and domain knowledge. LLMs can generate initial templates, propose clauses, and adapt documents to specific scenarios or jurisdictions. This accelerates document production, reduces drafting overhead, and promotes standardization—especially useful for small firms or high-volume legal operations.

- Legal Document Understanding & Case Analysis. Interpreting statutes, summarizing opinions, or identifying relevant facts is core to legal analysis. LLMs can extract key information, highlight legal entities or issues, and support case comparison. This improves comprehension, reduces time spent on manual review, and helps structure arguments and decisions based on large textual corpora.

- Case Prediction. Predicting legal outcomes—based on case facts, prior rulings, and jurisdictional context—is valuable for risk assessment and litigation strategy. While final outcomes are shaped by human judgment and evolving law, LLMs can surface patterns, suggest likely outcomes, and support probabilistic reasoning based on precedent, helping users plan and prioritize cases.

| Legal Domain | LLM Application Areas | Use Case-Inspired Research Question | Key Insights and Contributions | References |
|---|---|---|---|---|
| Legal Consultant Question Answering | Interactive Legal Q&A Systems | Can LLMs answer basic legal questions with accurate references to statutes and case law? | Emergent legal reasoning observed; retrieval-augmented prompting reduces hallucination; GPT-4 can approximate legal explanations with improved accuracy. | [305,306,307] |
| | Domain-Specific Legal Models | How can LLMs be fine-tuned to better address legal reasoning tasks? | Models like LawLLM improve U.S. law reasoning through fine-tuning and task adaptation (retrieval, precedent matching, judgment prediction). | [308] |
| | Legal Factor Extraction | Can LLMs extract and define core legal factors from court opinions? | Supports building expert systems; improves structure and consistency of legal analysis. | [309] |
| Legal Document Drafting | Clause Generation | Can LLMs autonomously generate domain-compliant legal clauses? | LLMs generate grammatically and legally sound clauses; useful in reducing drafting effort. | [310,311] |
| | Draft Comparison via NLI | How can LLMs verify consistency between generated and template contracts? | NLI tasks help identify deviations and inconsistencies, enabling automated review. | [312] |
| | Legal Validity of Prompt-Based Contracts | What legal risks arise when contracts are generated using prompts? | Raises issues with doctrines like the parol evidence rule; prompt provenance matters. | [313] |
| Legal Document Understanding and Case Analysis | Document Summarization & Entity Extraction | How well can LLMs extract facts and citations from unstructured legal texts? | Enhanced summarization, fact extraction, and legal entity recognition using retrieval-based and fine-tuned models. | [306,308,314] |
| | Large-Scale Legal Analysis | Can LLMs support empirical research over large legal corpora? | Enables scalable judgment pattern extraction; useful for comparative legal studies. | [307] |
| | E-Discovery and Compliance | Can LLMs assist in regulatory compliance and legal review at scale? | RAG-based systems improve due diligence and compliance decisions; multi-agent LLMs aid in document relevance prediction. | [315,316] |
| Legal Case Prediction | Judgment Forecasting | Can LLMs accurately predict legal case outcomes? | LLMs outperform traditional models; retrieval-augmented LLMs improve consistency and generalization. | [317,318] |
| | Hybrid Legal Reasoning | How can LLMs be integrated with expert systems to improve prediction accuracy? | Hybrid systems improve interpretability and performance by aligning LLM outputs with legal logic. | [319] |
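Several rows of this table credit retrieval-augmented prompting with reducing hallucination. The sketch below shows the basic pattern in miniature: rank candidate passages against the question, then place the best match in the prompt as a citable source. It is an illustration only. The bag-of-words cosine similarity stands in for a real retriever, the two passages are simplified paraphrases of general doctrine (not statutory text), and all function names are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words representation of a text."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, passages: list, top_k: int = 1) -> list:
    """Return the top_k passages most similar to the query."""
    q = tokenize(query)
    ranked = sorted(passages, key=lambda p: cosine(q, tokenize(p["text"])), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, passages: list, top_k: int = 1) -> str:
    """Assemble a prompt that asks the model to answer only from cited sources."""
    hits = retrieve(query, passages, top_k)
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in hits)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


# Illustrative passages (simplified paraphrases, hypothetical ids).
passages = [
    {"id": "S1", "text": "Negligence requires duty, breach, causation, and damages."},
    {"id": "S2", "text": "A contract requires offer, acceptance, and consideration."},
]
query = "What are the elements of negligence?"
prompt = build_prompt(query, passages)
print(prompt)
```

The resulting prompt would then be passed to an LLM; because the answer must cite a source id, a reviewer can check each claim against the retrieved text.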

3.5.2. Legal Consultant Question Answering

3.5.3. Legal Document Drafting

3.5.4. Legal Document Understanding and Case Analysis

3.5.5. Legal Judgment Prediction

3.5.6. Benchmarks

| Benchmark | Scope and Focus | Data Composition | Evaluation Tasks | Key Insights |

|---|---|---|---|---|

| CUAD [333] | Contract clause extraction and risk detection in legal documents | 13,000+ expert-annotated examples across 41 clause types, sourced from real commercial contracts | Clause identification, named entity recognition (NER), binary classification | Focused on practical contract review tasks; emphasized precision in extraction under legal ambiguity; widely used in contract AI |

| CaseHOLD [334] | Case law judgment understanding via conclusion prediction | 53,000+ U.S. appellate court case summaries with multiple-choice legal holdings | Multiple-choice question answering; outcome selection from candidates | Tests nuanced legal entailment and fact-to-holding inference; served as early benchmark for transformer-based legal models |

| EUR-Lex [335] | Multilabel legal topic classification for EU directives and regulations | 55,000+ European legal documents tagged with 3,956 EuroVoc labels | Multilabel text classification | One of the earliest and most cited legal NLP datasets; highly imbalanced and hierarchical label space inspired development of label-aware classifiers |

| SCOTUS [336] | Supreme Court decision classification and ideological alignment analysis | U.S. Supreme Court opinions, annotated with justice ideology, vote splits, and case topics | Binary and multiclass classification, ideological trend analysis | Used in political science and legal prediction; supported early quantitative legal studies using machine learning |

| COLIEE [337] | Legal information retrieval and entailment challenge (competition format) | Multiple years of formal tasks including Japanese Bar Exam questions and Canadian legal cases/statutes | IR, entailment classification, statute retrieval, legal QA | Serves as international benchmark challenge; evaluated both retrieval and inference under strict logic constraints |
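The table notes that EUR-Lex has a highly imbalanced label space, which affects how multilabel scores should be read. As a minimal sketch, assuming each document comes with a gold label set and a predicted label set (the function names and toy labels below are illustrative and are not taken from any benchmark's official scorer), micro- and macro-averaged F1 can be computed as follows. Micro-F1 is dominated by frequent labels, while macro-F1 gives every label equal weight.

```python
from collections import Counter
from typing import Dict, List, Set


def f1(tp: int, fp: int, fn: int) -> float:
    """F1 from raw counts; defined as 0.0 when all three counts are zero."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def multilabel_f1(gold: List[Set[str]], pred: List[Set[str]]) -> Dict[str, float]:
    """Return micro- and macro-averaged F1 over per-document label sets."""
    if len(gold) != len(pred):
        raise ValueError("gold and pred must have the same length")
    tp, fp, fn = Counter(), Counter(), Counter()
    for g, p in zip(gold, pred):
        for label in g & p:   # predicted and correct
            tp[label] += 1
        for label in p - g:   # predicted but wrong
            fp[label] += 1
        for label in g - p:   # missed
            fn[label] += 1
    labels = set(tp) | set(fp) | set(fn)
    # Micro: pool counts over all labels, then compute a single F1.
    micro = f1(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    # Macro: compute F1 per label, then take the unweighted mean.
    macro = sum(f1(tp[l], fp[l], fn[l]) for l in labels) / len(labels) if labels else 0.0
    return {"micro": micro, "macro": macro}


if __name__ == "__main__":
    # Toy data: label "a" is frequent, label "b" is rare and always missed.
    gold = [{"a"}, {"a"}, {"a"}, {"b"}]
    pred = [{"a"}, {"a"}, {"a"}, {"a"}]
    print(multilabel_f1(gold, pred))
```

In the toy data, a classifier that always predicts the frequent label keeps a high micro-F1 while its macro-F1 drops sharply, which is the gap that label-aware classifiers try to close.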

3.5.7. Discussion

- Retrieval-Augmented and Fact-Verified Generation. Integrating retrieval-augmented generation (RAG) systems that anchor responses in verifiable legal texts can reduce hallucination and improve accuracy [309].

- Jurisdiction-Aware and Temporal Modeling. Developing models sensitive to jurisdictional differences and evolving case law—such as time-aware frameworks like PILOT—can enhance the contextual reliability of legal predictions [328].

- Ethical and Regulatory Frameworks. Establishing governance standards for the deployment of LLMs in legal contexts—including audit trails, liability attribution, and responsible AI usage—will be essential to mitigate misuse and legal uncertainty [325].
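The retrieval-augmented direction above can be illustrated with a deliberately small sketch. It assumes a tiny in-memory passage store and a bag-of-words cosine score in place of a real legal retriever; the passage texts, identifiers, and function names are invented placeholders, and the call to an actual LLM is left out. The point is only the shape of the pipeline: retrieve, attach passage ids, and instruct the model to answer from and cite those passages.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def tokenize(text: str) -> List[str]:
    """Lowercase and split into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, passages: Dict[str, str], k: int = 2) -> List[Tuple[str, float]]:
    """Return up to k (passage_id, score) pairs with nonzero score, best first."""
    q = Counter(tokenize(query))
    scored = [(pid, cosine(q, Counter(tokenize(text)))) for pid, text in passages.items()]
    scored.sort(key=lambda item: (-item[1], item[0]))  # ties broken by id for determinism
    return [(pid, score) for pid, score in scored[:k] if score > 0]


def build_prompt(query: str, passages: Dict[str, str], k: int = 2) -> str:
    """Assemble a prompt that restricts the answer to the retrieved passages."""
    hits = retrieve(query, passages, k)
    context = "\n".join(f"[{pid}] {passages[pid]}" for pid, _ in hits)
    return (
        "Answer using only the passages below and cite their ids.\n"
        f"{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    # Invented placeholder passages, not quotations from any statute.
    store = {
        "P1": "A tenant must give thirty days written notice before ending a lease.",
        "P2": "A contract requires offer, acceptance, and consideration.",
        "P3": "Notice periods for employment termination vary by seniority.",
    }
    print(build_prompt("How much notice must a tenant give to end a lease?", store, k=1))
```

Because every passage carries an id, a downstream check can confirm that each citation in the generated answer points to a passage that was actually retrieved.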

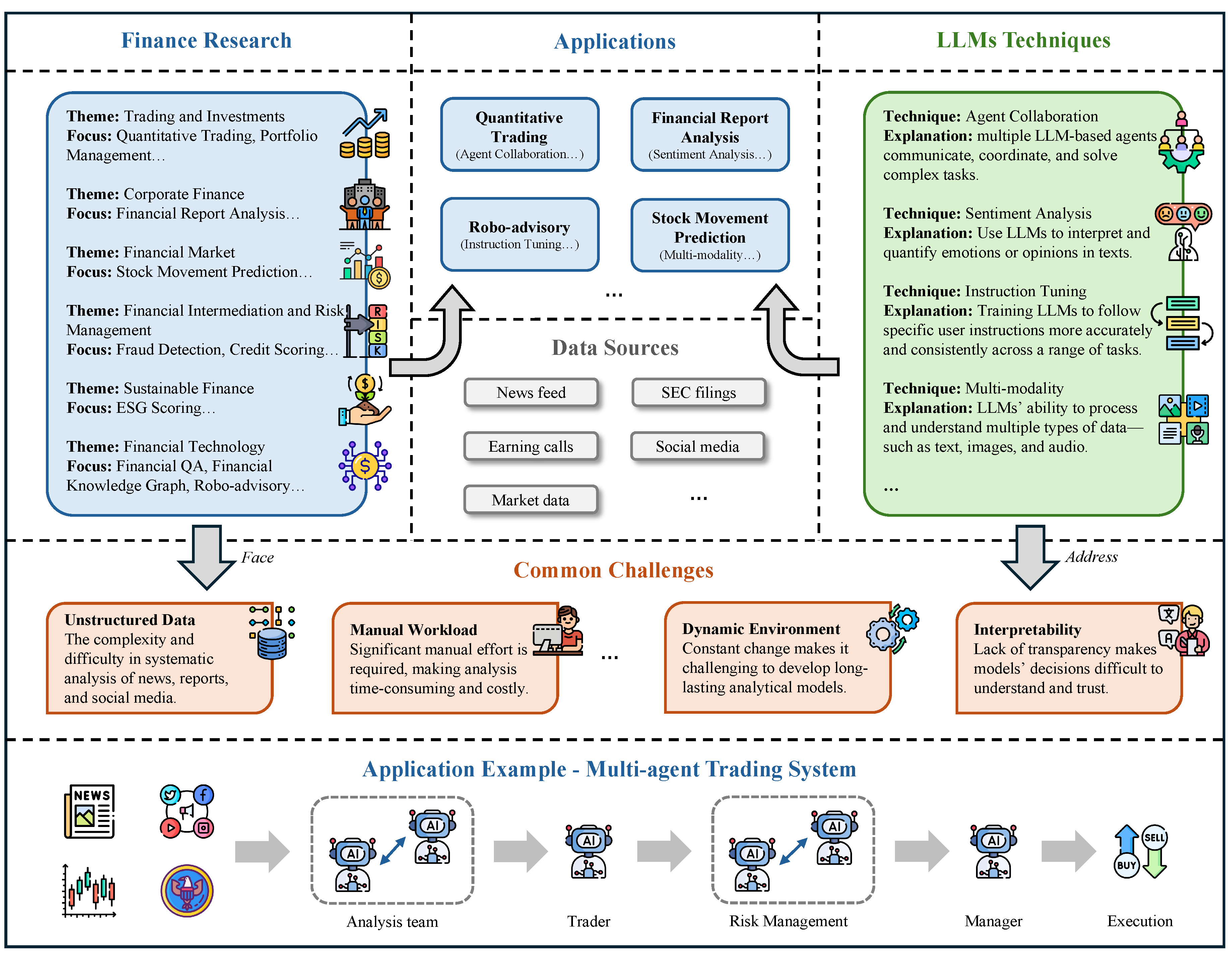

4. LLMs for Economics and Business

4.1. Finance

4.1.1. Overview

| Field | Subfield | Key Insights and Contributions | Examples | Citations |

|---|---|---|---|---|

| Trading and Investments | Quantitative Trading | LLM-based trading agents demonstrate enhanced interpretability, adaptability, and profitability in simulating and executing financial strategies across diverse market conditions | FinCon [364]: Multi-agent LLM system improves trading via verbal risk reinforcement. FinMem [365]: Layered memory and character design enhance LLM-based trading decisions. | [364,365,366,367,368,369,370,371,372,373,374,375] |

| | Portfolio Management | LLMs enhance financial decision-making by enabling adaptive, explainable, and multi-modal portfolio strategies through agent collaboration, sentiment reasoning, and dynamic alpha mining | Ko & Lee [376]: ChatGPT enhances portfolio diversification and asset selection across classes. Kou et al. [377]: LLM-driven agents mine multimodal alphas, dynamically adapt trading strategies. | [376,377,378,379,380,381,382,383] |

| Corporate Finance | Financial Report Analysis | LLMs enhance financial report analysis by enabling accurate, explainable, and scalable extraction and generation through multimodal processing, domain-specific fine-tuning, and tool-augmented reasoning | XBRL-Agent [384]: LLM agent analyzes XBRL reports using retriever and calculator tools. | [384,385,386] |

| Financial Markets | Stock Movement Prediction | LLMs can effectively predict and explain stock movements by extracting sentiment, factors, and insights from financial text through self-reflection, instruction tuning, and domain-specific prompting | Koa et al. [387]: Self-reflective LLM explains stock predictions via reinforcement learning framework. Ni et al. [388]: QLoRA-fine-tuned LLM predicts stocks using rich earnings data. | [387,388,389,390,391,392] |

| Financial Intermediation and Risk Management | Fraud Detection | LLMs significantly enhance financial fraud detection by enabling accurate, scalable, and robust identification of anomalies and manipulations through prompt engineering, hybrid modeling, and adversarial benchmarking | Fraud-R1 [393]: Benchmark tests LLM defenses against multi-round fraud and phishing. RiskLabs [394]: LLM fuses multi-source data to forecast volatility and financial risk. | [393,394,395,396,397] |

| | Credit Scoring | LLMs enhance credit risk assessment by improving prediction accuracy, generalization, and explainability through hybrid modeling, text integration, and domain-specific fine-tuning | CALM [398]: LLM scores credit risk across tasks with fairness checks. LGP [399]: Prompted LLMs use Bayesian logic to generate insightful risk reports. | [398,399,400,401] |

| Sustainable Finance | ESG Scoring | LLMs support classification, rule learning, data extraction, greenwashing detection, readability assessment, and multilingual understanding, thereby enhancing transparency and decision-making in sustainable finance. | ESGReveal [402]: Uses LLMs and RAG to systematically extract and analyze ESG data from corporate reports. | [170,402,403,404,405,406,407,408,409,410] |

| Financial Technology | Financial Question Answering | LLMs enhance financial QA through accurate, context-aware reasoning over complex, multi-source financial data. | TAT-QA [411]: A financial QA benchmark with over 16,000 questions built from real-world financial reports that combine tabular and textual data. | [411,412,413,414,415,416,417] |

| | Knowledge Graph Construction | LLMs enable automated KG construction from financial data, retrieval from KGs, and multi-document financial QA. | FinKG [418]: A curated core financial knowledge graph built from authoritative sources like corporate reports and stock data, structured to enable systematic analysis and applications in financial forecasting, risk assessment, and decision-making through semantically rich relationships between entities. | [417,418,419,420,421,422] |

| | Robo-advisory | LLMs enhance robo-advisors for novice investors, but still lag behind humans in performance and trust. | Jung et al. [423]: An earlier work that proposes the concept of "Robo-Advisory", which leverages AI to provide automated financial advisory services to a broader range of investors. | [404,423,424,425,426,427] |

- Trading and Investments. Trading pursues short-term gains while investment emphasizes long-term value through diversification and analysis. Traditional methods struggle with large-scale, complex data, whereas LLMs offer new capabilities for processing unstructured information, enhancing forecasting, and supporting strategies in quantitative trading and portfolio management.

- Corporate Finance. Corporate finance manages funding, capital structure, and investment to drive growth. Conventional approaches like financial modeling and discounted cash flow analysis are labor-intensive and limited under fast-changing conditions. LLMs streamline tasks such as financial report analysis, improving efficiency and accuracy in strategic decision-making.

- Financial Markets. Financial markets allocate resources and manage risk through the trading of assets. While econometric models and machine learning aid analysis, they face challenges with today’s data scale and complexity. LLMs advance this field by processing unstructured information and enabling applications such as stock movement prediction.

- Financial Intermediation and Risk Management. Banks and insurers channel capital while managing risks, but traditional statistical models and manual processes lag in dynamic environments. LLMs improve performance by analyzing diverse datasets, with emerging applications in fraud detection and credit scoring.

- Sustainable Finance. Sustainable finance incorporates ESG factors into investment decisions. Standard scoring systems often overlook rich unstructured data from reports and media. LLMs can extract and synthesize such information, offering more context-aware and adaptive ESG insights.

- Financial Technology. FinTech reshapes financial services through innovations like digital banking, blockchain, and robo-advisory. Traditional solutions emphasize automation but lack flexibility. LLMs expand FinTech by powering financial question answering, knowledge graph construction, and conversational advisory, enhancing personalization and accessibility.
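The Corporate Finance item above names discounted cash flow (DCF) analysis as a conventional, labor-intensive method. For readers unfamiliar with it, the following is a minimal sketch of the textbook calculation: each projected cash flow is divided by (1 + r)^t, with an optional Gordon-growth terminal value. The function name, parameters, and numbers are illustrative assumptions, not taken from any cited system.

```python
from typing import Optional, Sequence


def discounted_cash_flow(
    cash_flows: Sequence[float],
    rate: float,
    terminal_growth: Optional[float] = None,
) -> float:
    """Present value of cash flows received at the end of years 1..n.

    If terminal_growth is given, a Gordon-growth terminal value based on
    the final cash flow is added and discounted from year n.
    """
    if rate <= -1.0:
        raise ValueError("rate must be greater than -1")
    if not cash_flows:
        return 0.0
    # Sum of CF_t / (1 + r)^t for t = 1..n.
    value = sum(cf / (1.0 + rate) ** t for t, cf in enumerate(cash_flows, start=1))
    if terminal_growth is not None:
        if rate <= terminal_growth:
            raise ValueError("rate must exceed terminal_growth")
        n = len(cash_flows)
        # Terminal value at year n: CF_n * (1 + g) / (r - g).
        terminal = cash_flows[-1] * (1.0 + terminal_growth) / (rate - terminal_growth)
        value += terminal / (1.0 + rate) ** n
    return value


if __name__ == "__main__":
    # Illustrative numbers only: two yearly cash flows discounted at 10%.
    print(round(discounted_cash_flow([110.0, 121.0], 0.10), 2))
```

The arithmetic itself is simple; the labor in practice lies in producing and revising the cash-flow projections, which is where the text positions LLM assistance.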

4.1.2. Trading and Investment

4.1.3. Corporate Finance

4.1.4. Financial Market Analysis

4.1.5. Financial Intermediation and Risk Management

4.1.6. Sustainable Finance

4.1.7. Financial Technology

4.1.8. Benchmarks

| Benchmark | Language | Size | Feature | Insights on LLMs |

|---|---|---|---|---|

| FinBen | English | 36 datasets | Broadest task range; includes forecasting and agent evaluations | LLMs perform best on IE/textual analysis, poorly on forecasting and reasoning-heavy tasks |

| R-Judge | English | 569 records | Multi-turn safety judgment for agents in real scenarios | LLMs lack behavioral safety judgment in interactive settings; fine-tuning helps significantly |

| FinEval | Chinese | 8,351 Qs | Covers academic, industry, security, and agent reasoning tasks | LLMs outperform average individuals but lag behind experts; complex reasoning and tool usage still weak |

| CFinBench | Chinese | 99,100 Qs | Career-aligned categories; diverse questions and rigorous filtering | Highlights knowledge gaps; current LLMs struggle with practical depth and legal reasoning |

| UCFE | English, Chinese | 330 data points | User-role simulation; dynamic multi-turn tasks | Human-like evaluations show LLMs align with users but fall short under dynamic, evolving needs |

| Hirano | Japanese | — | Domain-specific benchmark in Japanese | Domain-specific LLMs still underdeveloped in Japanese finance |

- Challenges in complex financial tasks: Current LLMs still struggle with tasks that require deep domain knowledge, logical reasoning, and multi-step decision-making.

- Effectiveness of domain-specific fine-tuning: Fine-tuning LLMs on domain-specific corpora continues to yield notable performance gains, demonstrating its importance in enhancing model specialization.

- Benchmark coverage vs. real-world applicability: While these benchmarks effectively assess LLMs’ comprehensive capabilities in finance, they are primarily diagnostic and not tailored to specific application scenarios. Practical use cases often require the design of dedicated, task-specific benchmarks.

- Need for broader evaluation dimensions: Additional attention should be given to other meaningful evaluation perspectives, such as user alignment (e.g., UCFE) and risk awareness (e.g., R-Judge), which are crucial for safe and effective real-world deployment.

- General Financial Data: Provides access to real-time and historical stock prices, fundamental financial indicators, and corporate financial statements. Such data are critical for simulating trading environments, developing investment strategies, conducting market forecasts, and evaluating algorithmic trading agents.

- Cryptocurrency Data: Offers market prices, trading volumes, and metadata for cryptocurrencies. These datasets are particularly useful for research on crypto trading strategies, market microstructure analysis, and portfolio optimization involving digital assets.

- Regulatory Filings: Includes official company disclosures, such as quarterly and annual reports (10-Q, 10-K), and other significant events (8-K filings). Regulatory filings are essential for fundamental analysis, event-driven trading, and financial sentiment extraction.

- Analyst Reports: Consists of investment opinions, earnings forecasts, and qualitative assessments from financial analysts. These resources are valuable for sentiment analysis, opinion aggregation, and modeling the impact of market expectations on asset prices.

- News Data: Covers financial news, press releases, and market commentary from a variety of media outlets. News data are critical for developing event-driven trading strategies, predicting market volatility, and detecting sentiment shifts in real time.

- Social Media Data: Comprises user-generated content from platforms such as Twitter (X) and Reddit. Social media data enables the study of retail investor sentiment, information diffusion, and the dynamics of attention-driven market movements.

4.1.9. Discussion

- Real-Time and Adaptive Learning. Future models must be capable of online learning and rapid adaptation to changing market conditions, with architectures that support dynamic knowledge updates and feedback-driven improvement [373].

- Ethics, Fairness, and Regulatory Compliance. Research must prioritize fairness audits, bias mitigation strategies, and interpretability mechanisms to ensure that LLM-based systems meet ethical and legal standards in finance [398].

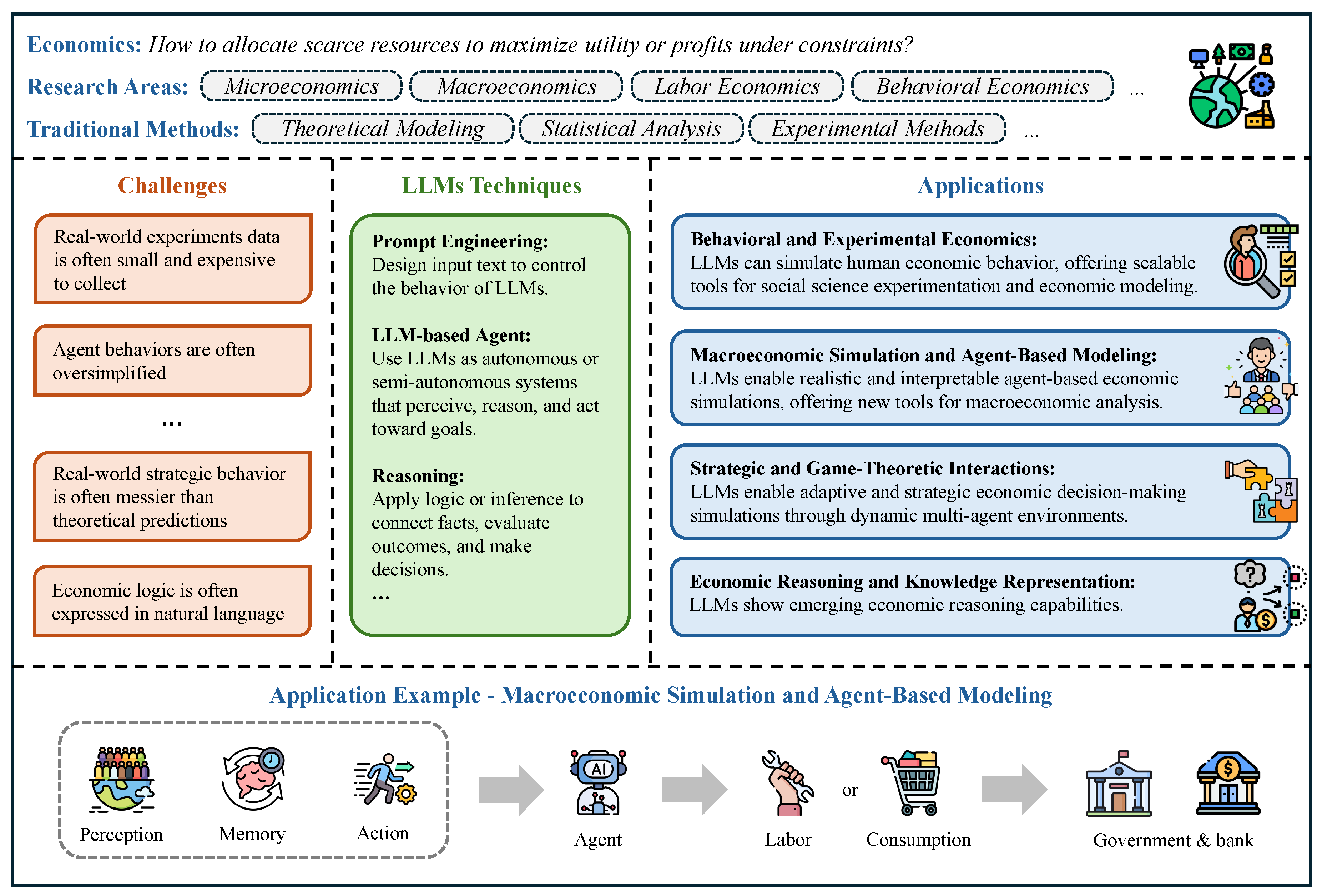

4.2. Economics

4.2.1. Overview

| Field | Key Insights and Contributions | Examples | Citations |

|---|---|---|---|

| Behavioral and Experimental Economics | LLMs can simulate human economic behavior by exhibiting rationality, personality traits, and behavioral biases, offering scalable tools for social science experimentation and economic modeling | Ross et al. [492]: Utility theory reveals LLMs’ behavioral biases across economic decision settings. Horton [493]: LLMs simulate economic agents, replicating human decisions in experiments. | [491,492,493,494] |

| Macroeconomic Simulation and Agent-Based Modeling | LLMs enable realistic, interpretable, and heterogeneous agent-based economic simulations by modeling complex decision-making, memory, perception, and policy responses, offering new tools for macroeconomic analysis and public policy evaluation | MLAB [495]: Multi-LLM agents simulate diverse economic responses for policy analysis. EconAgent [496]: LLM agents model macroeconomics with perception, memory, and decision modules. | [495,496,497] |

| Strategic and Game-Theoretic Interactions | LLMs enable robust, adaptive, and strategically nuanced economic decision-making simulations through dynamic multi-agent environments and standardized benchmarks | GLEE [489]: Economic game benchmarks evaluating LLM fairness, efficiency, and communication. Guo et al. [498]: LLM agents compete in dynamic games testing rationality and strategy. | [489,491,498] |

| Economic Reasoning and Knowledge Representation | LLMs show emerging economic reasoning capabilities through benchmarks and frameworks assessing causal, sequential, and logical inference | EconLogicQA [499]: Tests LLMs’ ability to sequence economic events logically, contextually. EconNLI [490]: Evaluates LLMs’ causal reasoning using premise-hypothesis economic event pairs. | [490,499,500] |

- Behavioral and Experimental Economics. This field studies how real people make decisions, often deviating from the rational “homo economicus” model. Experiments with games like the dictator, ultimatum, and trust games reveal biases such as fairness concerns and the endowment effect. LLMs complement these methods by simulating diverse decision behaviors and allowing rapid pre-testing of economic experiments.

- Macroeconomic Simulation and Agent-Based Modeling. ABMs simulate how individual agents interact to shape aggregate outcomes like inflation or unemployment. Unlike equilibrium-based models, they capture dynamic, bottom-up processes but often lack realistic human behavior. LLMs enrich ABMs by powering adaptive, communicative agents, bringing greater realism and flexibility to macroeconomic simulations.

- Strategic and Game-Theoretic Interactions. Game theory examines how outcomes depend on the choices of multiple agents, requiring competition, cooperation, and anticipation. Traditional approaches rely on simplified assumptions, limiting realism. LLMs enable agents with recursive reasoning and natural language interaction, offering richer simulations of strategic scenarios.

- Economic Reasoning and Knowledge Representation. Economic reasoning analyzes trade-offs under scarcity, while knowledge representation encodes concepts for computational use. Rule-based methods struggle with complexity and scalability. LLMs simulate reasoning in natural language and generalize across contexts, though they remain sensitive to prompt design and prone to oversimplification.
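The agent-based modeling item above describes individual agents whose decisions aggregate into macro outcomes, with an LLM supplying the decision rule. A minimal sketch of that structure follows. Everything here is an illustrative assumption: the `Household` class, the text format of the state description, the price-adjustment rule, and `rule_policy`, which is a hand-written stand-in occupying the slot where an LLM call would go.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# A policy maps a text description of an agent's state to a consumption share.
Policy = Callable[[str], float]


@dataclass
class Household:
    income: float
    savings: float = 0.0
    history: List[float] = field(default_factory=list)

    def describe(self, price_level: float) -> str:
        """Serialize the state as text, as it might appear in an LLM prompt."""
        return f"income={self.income:.2f}; savings={self.savings:.2f}; price_level={price_level:.2f}"


def rule_policy(state: str) -> float:
    """Stand-in for an LLM call: spend less as the price level rises."""
    fields = dict(item.split("=") for item in state.split("; "))
    price = float(fields["price_level"])
    return max(0.0, min(1.0, 0.9 - 0.2 * (price - 1.0)))


def step(households: List[Household], policy: Policy, price_level: float) -> float:
    """Let every household decide once; return aggregate demand."""
    total = 0.0
    for h in households:
        share = max(0.0, min(1.0, policy(h.describe(price_level))))  # clamp policy output
        spend = share * h.income
        h.savings += h.income - spend
        h.history.append(spend)
        total += spend
    return total


def simulate(
    households: List[Household],
    policy: Policy,
    supply: float,
    periods: int = 3,
    sensitivity: float = 0.05,
) -> List[Tuple[float, float]]:
    """Return (price_level, demand) per period; price rises with excess demand."""
    price = 1.0
    path = []
    for _ in range(periods):
        demand = step(households, policy, price)
        path.append((price, demand))
        price *= 1.0 + sensitivity * (demand - supply) / supply
    return path


if __name__ == "__main__":
    agents = [Household(100.0), Household(100.0)]
    for price, demand in simulate(agents, rule_policy, supply=160.0):
        print(f"price={price:.4f} demand={demand:.2f}")
```

Swapping `rule_policy` for a function that sends the state string to a language model and parses a number from the reply changes the agent behavior without touching the simulation loop, which is the modularity the text attributes to LLM-driven ABMs.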

4.2.2. Behavioral and Experimental Economics

4.2.3. Macroeconomic Simulation and Agent-Based Modeling

4.2.4. Strategic and Game-Theoretic Interactions

4.2.5. Economic Reasoning and Knowledge Representation

4.2.6. Benchmarks

4.2.7. Discussion

- Structured Prompting and Experimental Design. Developing standardized, transparent prompting methodologies—analogous to experimental protocols—will be essential for ensuring replicability and robustness in LLM-driven economic experiments [492].

- Ethical Evaluation and External Validation. Establishing benchmarks, guidelines, and ethical frameworks for using LLMs as economic agents—alongside systematic validation against real human data—will be crucial for credible scientific practice.

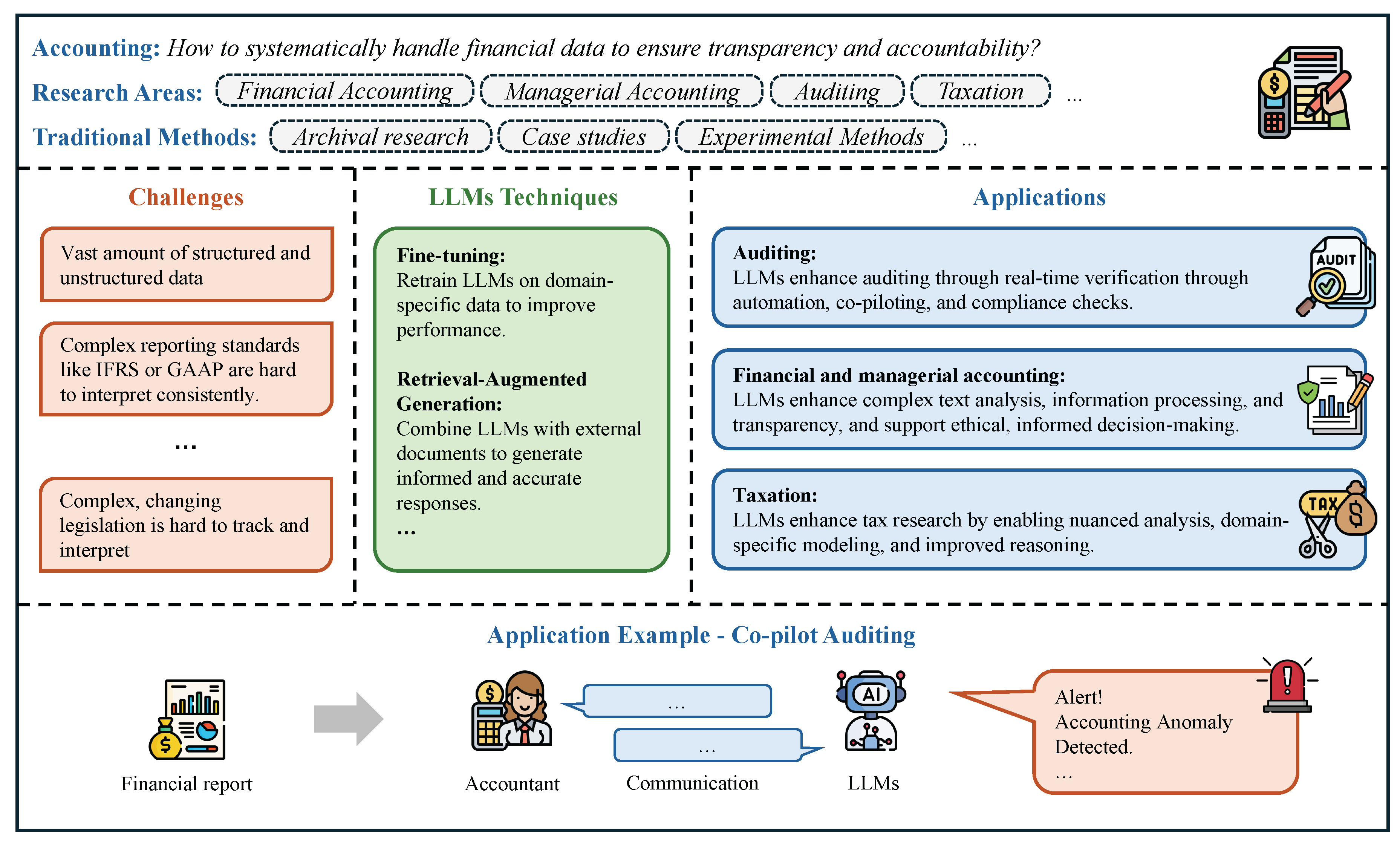

4.3. Accounting

4.3.1. Overview

- Auditing. Traditionally reliant on manual, sample-based checks, auditing struggles with rising data volumes and fraud complexity. LLMs can automate text analysis, flag anomalies, and expand audit coverage, enabling smarter AI-assisted audits while underscoring the need for transparency and safeguards.

- Financial and Managerial Accounting. Both functions are central to decision-making but increasingly burdened by complex disclosures and fragmented systems. LLMs help extract insights, streamline reporting, and convert unstructured data into actionable analysis, strengthening transparency, accuracy, and strategic value.

- Taxation. Taxation involves intricate laws and resource-constrained enforcement, with traditional systems often missing nuances in legal texts. LLMs can interpret tax codes, analyze unstructured filings, and support compliance and enforcement, offering new efficiency while raising questions of trust and adaptability.

| Field | Key Insights and Contributions | Examples | Citations |

|---|---|---|---|

| Auditing | LLMs enhance auditing by improving accuracy, efficiency, and real-time verification through automation, co-piloting, and compliance checks, while raising implementation and ethical challenges | Gu et al. [538]: Co-piloted auditing combines LLMs and humans for efficient audits. Berger et al. [539]: LLMs assess financial compliance, outperforming peers in regulatory audits. | [538,539,540,541,542,543,544,545,546] |

| Financial and Managerial Accounting | LLMs enhance accounting, reporting, and sustainability practices by automating complex text analysis, improving information processing, enabling transparency, and supporting ethical, informed decision-making across financial and ESG domains | De Villiers et al. [547]: AI reshapes sustainability reporting, raising greenwashing risks and governance questions. Föhr et al. [548]: LLMs audit sustainability reports using taxonomy-aligned prompt frameworks efficiently. | [547,548,549,550,551,552,553,554] |

| Taxation | LLMs enhance tax research, compliance, and enforcement by enabling nuanced analysis, firm-level measurement, domain-specific modeling, and improved reasoning through agent collaboration | PLAT [555]: PLAT tests LLMs’ tax reasoning under ambiguous penalty exemption scenarios. Alarie et al. [556]: LLMs assist tax research, but hallucinations limit reliable adoption. | [555,556,557,558,559] |

4.3.2. Auditing

4.3.3. Financial and Managerial Accounting

4.3.4. Taxation

4.3.5. Benchmarks

4.3.6. Discussion

- Hybrid Human-AI Systems. Emphasizing "co-piloted" models where human expertise remains central will ensure that LLMs complement rather than replace professional judgment, particularly in high-risk domains like auditing and taxation [538].

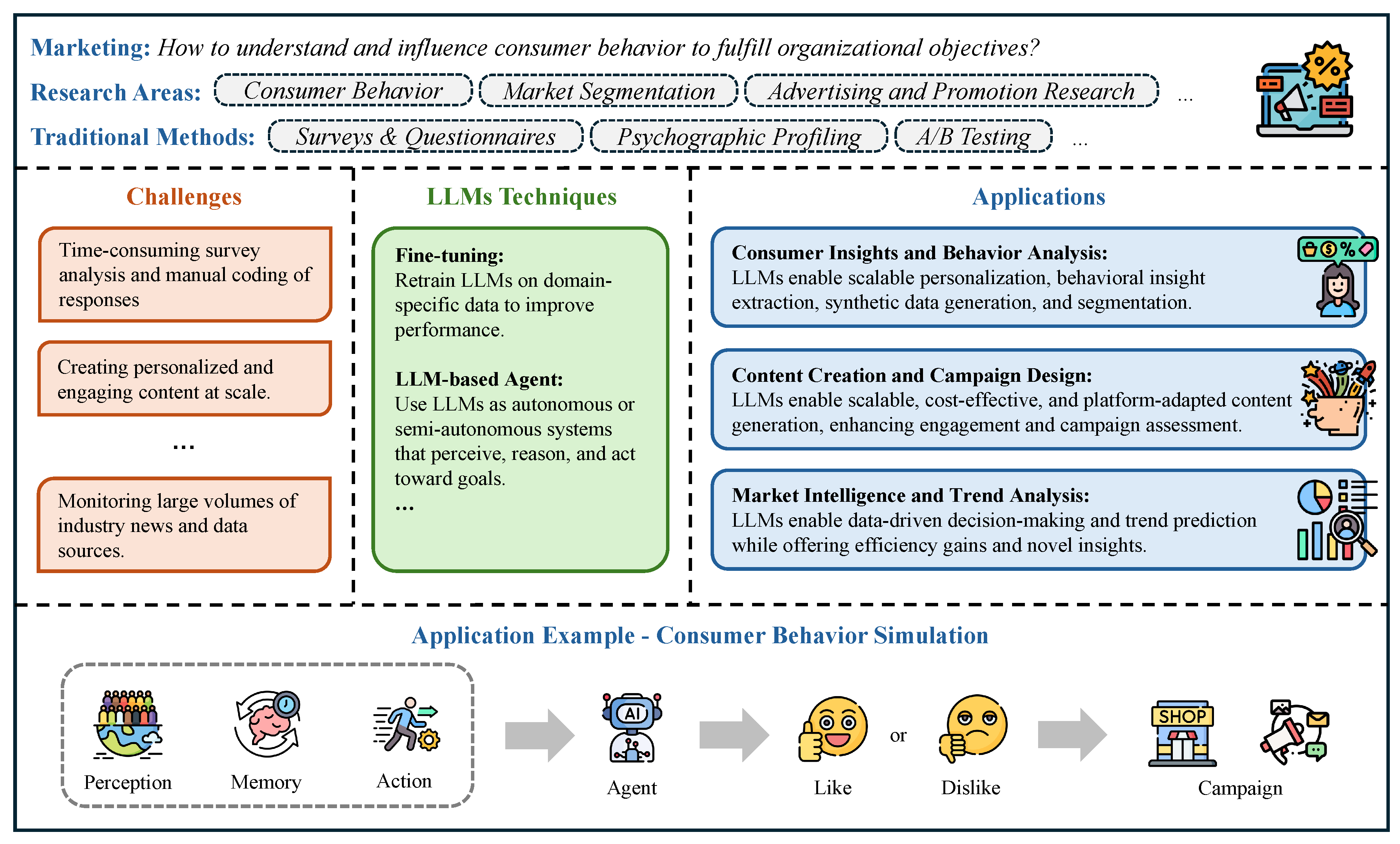

4.4. Marketing

4.4.1. Overview

| Field | Key Insights and Contributions | Examples | Citations |

|---|---|---|---|

| Consumer Insights and Behavior Analysis | LLMs enable scalable personalization, behavioral insight extraction, synthetic data generation, and segmentation, though challenges remain in faithfully replicating human preferences and ensuring ethical, accurate application | Li et al. [600]: LLMs embed surveys, cluster consumers, simulate chatbots for marketing. Goli & Singh [601]: LLMs mimic preferences poorly; chain-of-thought aids segmentation hypotheses. | [600,601,602,603,604,605,606] |

| Content Creation and Campaign Design | LLMs enable scalable, cost-effective, and platform-adapted content generation, enhancing engagement and campaign assessment while requiring human oversight for quality, ethics, and strategic alignment | Kasuga & Yonetani [607]: CXSimulator simulates campaign effects using LLM embeddings and behavior graphs. Wahid et al. [608]: Generative AI reshapes content marketing, raising engagement and ethical concerns. | [607,608,609,610,611,612,613] |

| Market Intelligence and Trend Analysis | LLMs enable scalable content creation, personalized engagement, data-driven decision-making, trend prediction, and rapid research replication, while offering efficiency gains and novel insights across digital channels | Yeykelis et al. [614]: LLM personas replicate media experiments, accelerating marketing research validation. Saputra et al. [615]: ChatGPT improves Instagram marketing using AIDA model for engagement. | [602,614,615,616,617] |

- Consumer Insights and Behavior Analysis. This area focuses on understanding the motivations behind consumer thoughts, feelings, and actions to inform marketing strategy. Traditional methods such as surveys and interviews remain valuable but struggle with the scale and nuance of modern, unstructured data sources. LLMs enable scalable, nuanced analysis of language-rich data, offering deeper, real-time insights into consumer behavior.

- Content Creation and Campaign Design. Content creation and campaign design combine creative storytelling with strategic planning to engage audiences and achieve business goals. Traditionally reliant on manual effort and intuition, this process has faced challenges in scalability, personalization, and real-time feedback. LLMs change how content is ideated, produced, and optimized, streamlining workflows and enabling more dynamic, data-driven campaigns.

- Market Intelligence and Trend Analysis. Market intelligence and trend analysis guide strategic marketing decisions by helping businesses monitor competitors, anticipate consumer shifts, and navigate evolving market conditions. Traditionally grounded in surveys and expert insights, these methods often lag behind today’s fast-paced digital environment. LLMs enable real-time analysis of large, unstructured data sources, giving marketers faster, deeper, and more forward-looking insights.

4.4.2. Consumer Insights and Behavior Analysis

4.4.3. Content Creation and Campaign Design

4.4.4. Market Intelligence and Trend Analysis

4.4.5. Benchmarks

4.4.6. Discussion

- Hybrid Approaches Combining LLMs with Human Oversight. Structuring workflows where human judgment complements AI output will help mitigate risks of error, bias, and unethical deployment.

- Domain-Specific Fine-Tuning and Contextualization. Fine-tuning LLMs on marketing-specific corpora and continuously updating them with domain-relevant data can improve accuracy, nuance, and relevance in marketing applications [617].

- Benchmarking and Standardized Evaluation. Creating rigorous benchmarks for LLM performance in marketing tasks, such as synthetic persona realism, content personalization efficacy, and market trend prediction accuracy, will be vital for advancing scientific rigor.

- Explainability and Interpretability. Incorporating methods such as chain-of-thought prompting, citation grounding, and attribution tracing will enhance transparency and user trust in LLM-assisted marketing insights [602].

- Ethical Guidelines and Best Practices. Marketing researchers must proactively develop frameworks for ethical AI use, covering disclosure norms for AI-generated content, fairness in segmentation, and protection against manipulative targeting.

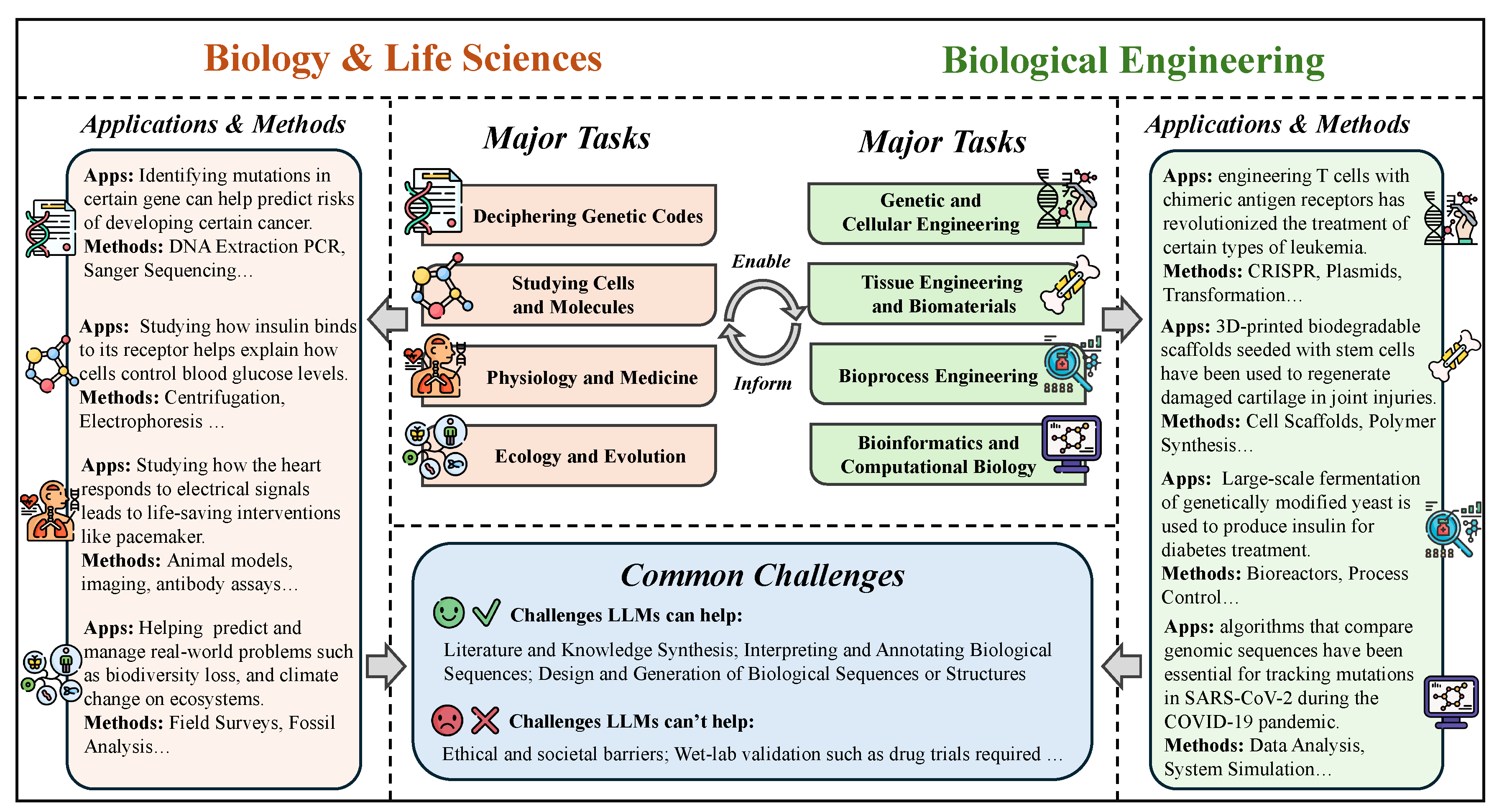

5. LLMs for Science and Engineering

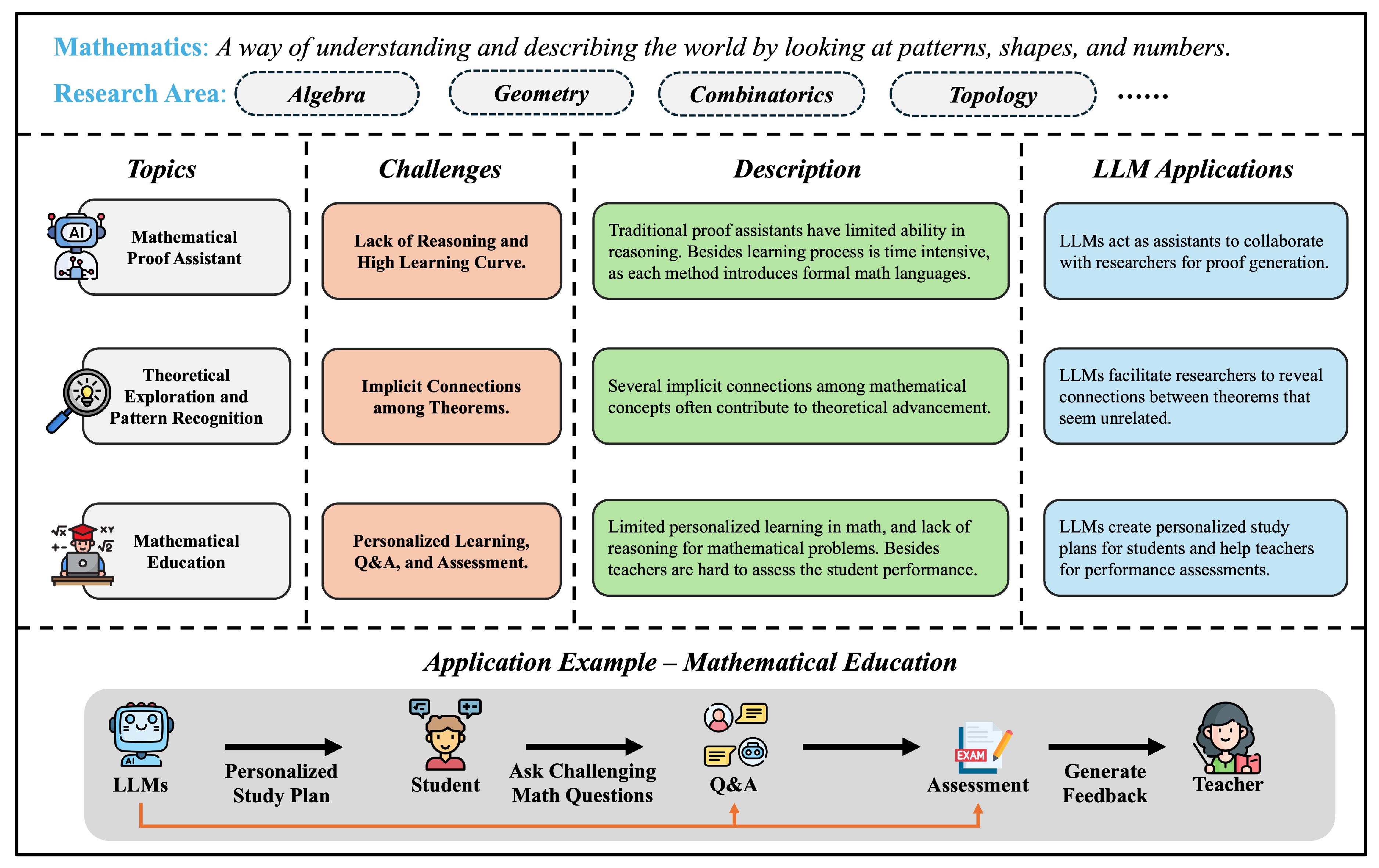

5.1. Mathematics

5.1.1. Overview

5.1.2. Mathematical Proof Assistant

- Advancing the hexagon packing problem by finding better solutions for arranging 11 and 12 hexagons inside a larger hexagon, surpassing human achievements after 16 years of stagnation.

- Making progress on the kissing number problem, a mathematical challenge unsolved for over 300 years [683].

| Method | Model Size | Sample Budget | Accuracy |

|---|---|---|---|

| Traditional Proof Assistants | | | |

| Curriculum Learning [685] | 837M | | 34.5% |

| | 837M | | 36.6% |

| Proof Artifact Co-Training [658] | 837M | | 24.6% |

| | 837M | | 29.2% |

| Hypertree Proof Search [660] | 600M | | 41.0% |

| LLM-based Methods | | | |

| DeepSeekMath [686] | 7B | 128 | 27.5% |

| | 7B | Cumulative | 52.0% |

| | 7B | Greedy | 30.0% |

| DeepSeek-Prover [687] | 7B | 64 | 46.3% |

| | 7B | 128 | 46.3% |

| | 7B | 8,192 | 48.8% |

| | 7B | 65,536 | 50.0% |
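The table reports accuracy under different sample budgets without stating how the budget enters the metric. One common convention, assumed here rather than confirmed by the source, is pass@k: a theorem counts as solved if at least one of k sampled proofs is accepted by the verifier. The sketch below implements the standard unbiased pass@k estimator, 1 - C(n-c, k) / C(n, k), for n samples of which c verify; the function names and toy counts are illustrative.

```python
from math import comb
from typing import List, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Probability that at least one of k samples, drawn without
    replacement from n attempts with c successes, is a success."""
    if not 0 <= c <= n:
        raise ValueError("need 0 <= c <= n")
    if not 1 <= k <= n:
        raise ValueError("need 1 <= k <= n")
    if n - c < k:
        return 1.0  # fewer than k failures, so any k-subset contains a success
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: List[Tuple[int, int]], k: int) -> float:
    """Average pass@k over theorems; each entry is (n_samples, n_verified)."""
    if not results:
        raise ValueError("results must be non-empty")
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


if __name__ == "__main__":
    # Toy counts for three hypothetical theorems, 8 attempts each.
    toy = [(8, 0), (8, 1), (8, 4)]
    for k in (1, 2, 4, 8):
        print(k, round(benchmark_pass_at_k(toy, k), 3))
```

Under this convention the score can only stay flat or rise as k grows, which is consistent with the pattern in the DeepSeek-Prover rows, where accuracy is unchanged from 64 to 128 samples and climbs slowly at much larger budgets.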

5.1.3. Theoretical Exploration and Pattern Recognition

5.1.4. Mathematical Education

5.1.5. Benchmarks

5.1.6. Discussion

- Enhancing Mathematical Reasoning. Developing novel architectures and training recipes that enable LLMs to move beyond pattern matching toward genuine reasoning. This could involve incorporating symbolic computation capabilities or training on datasets designed to specifically test and improve reasoning skills.

- Improving Reliability and Accuracy. Investigating methods to reduce hallucinations and errors in LLM outputs for mathematical tasks. This could involve techniques like self-verification, the use of external validators like theorem provers, or reinforcement learning from human feedback focused on accuracy.

- Effective Educational Tools. There is a need to design and evaluate LLM-powered tools that support mathematics learning without compromising conceptual understanding or fundamental skill development. This includes exploring the use of LLMs in personalized tutoring, generating diverse explanations, and creating engaging problem-solving activities.

- Integration Strategies Evaluation. It is crucial to explore how best to incorporate LLMs into conventional educational practice, developing hybrid learning environments that combine the advantages of both. It is equally important to understand how to train educators to use LLMs as pedagogical tools.
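The reliability item above mentions pairing LLM outputs with external validators. A minimal sketch of that generate-then-verify pattern follows, using exact rational arithmetic as a very small stand-in for a theorem prover or computer algebra system. The candidate strings play the role of model outputs; the function names and the example polynomial are illustrative assumptions.

```python
from fractions import Fraction
from typing import Callable, Iterable, Optional, Sequence


def evaluate(coeffs: Sequence[int], x: Fraction) -> Fraction:
    """Evaluate a polynomial exactly via Horner's rule (highest degree first)."""
    acc = Fraction(0)
    for c in coeffs:
        acc = acc * x + c
    return acc


def is_root(coeffs: Sequence[int], x: Fraction) -> bool:
    """Exact check with no floating-point tolerance involved."""
    return evaluate(coeffs, x) == 0


def first_verified(
    candidates: Iterable[str],
    check: Callable[[Fraction], bool],
) -> Optional[Fraction]:
    """Return the first candidate that parses and passes the checker, else None."""
    for raw in candidates:
        try:
            value = Fraction(raw)
        except (ValueError, ZeroDivisionError):
            continue  # unparseable output is rejected, not trusted
        if check(value):
            return value
    return None


if __name__ == "__main__":
    # 2x^2 - 7x + 6 = (2x - 3)(x - 2); the candidates imitate noisy model outputs.
    poly = [2, -7, 6]
    answers = ["1", "two", "3/2"]
    print(first_verified(answers, lambda x: is_root(poly, x)))
```

The design choice is that the checker, not the generator, decides what is accepted: a wrong or malformed candidate costs one extra sample but never reaches the user as a verified answer.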

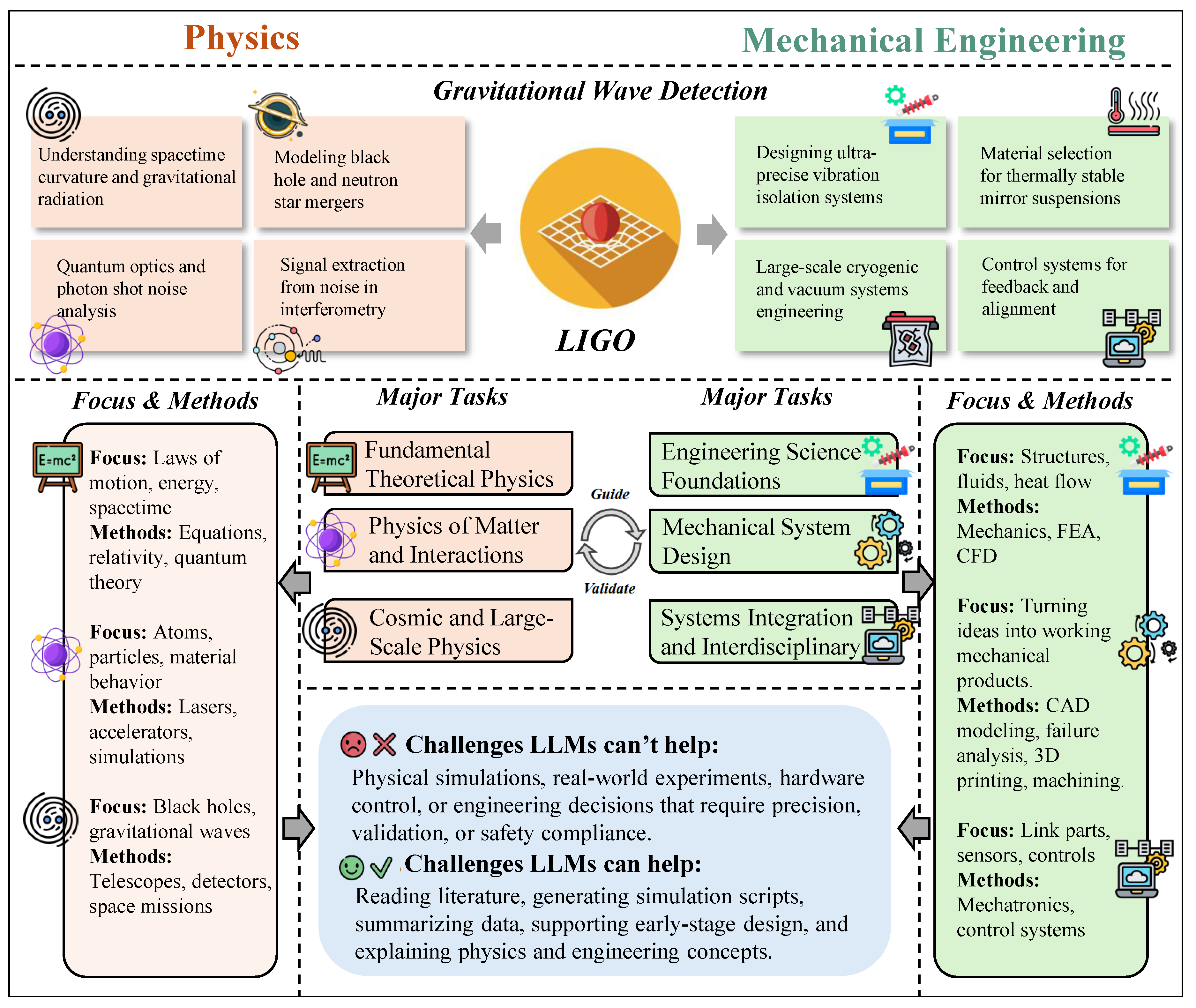

5.2. Physics and Mechanical Engineering

5.2.1. Overview

- Complexity of Multiphysics Coupling and Governing Equations. Physical and mechanical systems are often governed by a series of highly coupled partial differential equations (PDEs), involving nonlinear dynamics, continuum mechanics, thermodynamics, electromagnetism, and quantum interactions [734,743]. Solving such systems requires specialized numerical solvers, high-fidelity discretization techniques, and physics-informed modeling assumptions. Although LLMs can retrieve relevant equations or suggest approximate forms, they are incapable of deriving physical laws, ensuring conservation principles, or performing accurate numerical simulations.

- Simulation Accuracy and Model Calibration. Accurate mechanical design and physical predictions typically rely on high-fidelity simulations such as finite element analysis (FEA), computational fluid dynamics (CFD), or multiphysics modeling [744,745]. These simulations demand precise geometry input, boundary conditions, material models, and experimental validation. LLMs may assist in interpreting simulation reports or proposing modeling strategies, but they lack the resolution, numerical rigor, and feedback integration necessary to execute or validate such models.

- Experimental Prototyping and Hardware Integration. Engineering innovations ultimately require validation through physical experiments—building prototypes, tuning actuators, installing sensors, and measuring performance under dynamic conditions [746,747]. These tasks depend on laboratory facilities, fabrication tools, and hands-on experimentation, all of which are beyond the operational scope of LLMs. While LLMs can help generate test plans or documentation, they cannot replace real-world testing or iterative hardware development.

- Materials and Manufacturing Constraints. Real-world engineering designs must account for constraints such as thermal stress, fatigue life, manufacturability, and cost-efficiency [748]. Addressing these challenges often relies on materials testing, manufacturing standards, and domain experience in processes like welding, casting, and additive manufacturing. LLMs lack access to real-time physical data and material behavior, and thus cannot support tradeoff decisions in design or production.

- Ethical, Safety, and Regulatory Considerations. From biomedical devices to autonomous systems, mechanical engineers must weigh ethical impacts, user safety, and legal compliance [749]. Although LLMs can summarize policies or regulatory codes, they are not equipped to make decisions involving responsibility, risk evaluation, or normative judgment—elements essential for deploying certified, real-world systems.
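To make concrete why the first two items above stress numerical solvers and discretization, the following minimal sketch solves the one-dimensional heat equation u_t = α u_xx with the explicit forward-time, centered-space (FTCS) scheme. It is a textbook illustration under simple assumptions (uniform grid, fixed end temperatures), not a method from the cited works; the function names and grid values are illustrative. The scheme is stable only when r = α Δt / Δx² is at most 1/2, a constraint a solver must enforce and that plausible-looking generated text does not guarantee.

```python
from typing import List, Sequence


def heat_step(u: Sequence[float], r: float) -> List[float]:
    """One FTCS update with fixed (Dirichlet) end values.

    r = alpha * dt / dx**2 must satisfy 0 < r <= 0.5 for stability.
    """
    if not 0.0 < r <= 0.5:
        raise ValueError("FTCS requires 0 < r <= 0.5")
    new = list(u)
    for i in range(1, len(u) - 1):
        # Discrete Laplacian of the old values drives the update.
        new[i] = u[i] + r * (u[i - 1] - 2.0 * u[i] + u[i + 1])
    return new


def solve_heat(u0: Sequence[float], alpha: float, dx: float, dt: float, steps: int) -> List[float]:
    """Advance the initial profile u0 by the given number of time steps."""
    r = alpha * dt / dx ** 2
    u = list(u0)
    for _ in range(steps):
        u = heat_step(u, r)
    return u


if __name__ == "__main__":
    # Rod with ends held at 0 and 1; the interior relaxes toward a straight line.
    profile = solve_heat([0.0, 0.0, 0.0, 0.0, 1.0], alpha=1.0, dx=1.0, dt=0.25, steps=500)
    print([round(v, 4) for v in profile])
```

Even in this toy case, the time step is dictated by the grid spacing and the diffusivity; in coupled multiphysics problems such constraints multiply, which is the gap the text identifies between suggesting equations and simulating them.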

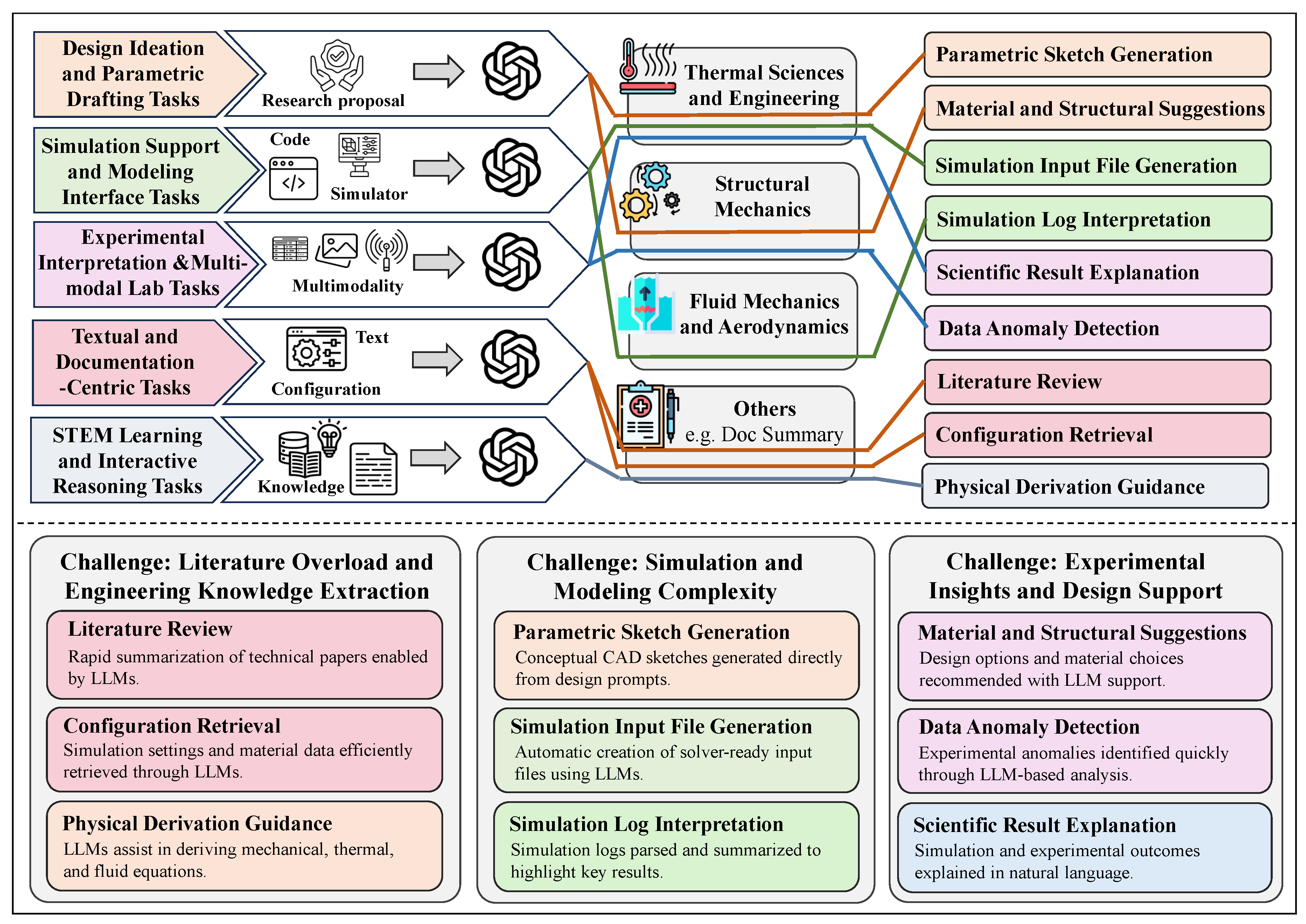

Although current LLMs remain limited in core tasks such as physical modeling and experimental validation, they have shown growing potential in assisting a variety of supporting tasks in physics and mechanical engineering, particularly in knowledge integration, document drafting, design ideation, and educational support:

- Literature Review and Standards Lookup. Both disciplines rely heavily on technical documents such as material handbooks, design standards, experimental protocols, and scientific publications. LLMs can significantly accelerate the literature review process by extracting key information about theoretical models, experimental conditions, or engineering parameters. For instance, an engineer could use an LLM to compare different welding codes, retrieve thermal fatigue limits of materials, or summarize applications of a specific mechanical model [750,751].

- Assisting with Simulation and Test Report Interpretation. In simulations such as finite element analysis (FEA), computational fluid dynamics (CFD), or structural testing, LLMs can help parse simulation logs, identify setup issues, or generate summaries of experimental findings. When integrated with domain-specific tools, LLMs may even assist in generating simulation input files, interpreting outliers in results, or recommending appropriate post-processing techniques [752,753].

- Supporting Conceptual Design and Parametric Exploration. During early-stage mechanical design or material selection, LLMs can suggest structural concepts, propose parameter combinations, or retrieve examples of similar engineering cases. For instance, given a prompt like “design a spring for high-temperature fatigue conditions,” the model might generate candidate materials, geometric options, and common failure modes [754,755].

- Engineering Education and Learning Support. Education in physics and mechanical engineering involves both theoretical understanding and hands-on application. LLMs can generate step-by-step derivations, support simulation-based exercises, or simulate simple lab setups (e.g., free fall, heat conduction, beam deflection). They can also assist with terminology explanation or provide example problems to enhance interactive and self-guided learning [756,757].

- Textual and Documentation-Centric Tasks. LLMs are particularly effective in processing technical documents, engineering standards, lab reports, and scientific literature. For instance, Polverini and Gregorcic demonstrated how LLMs can support physics education by extracting and explaining key information from conceptual texts [758], while Harle et al. highlighted their use in organizing and generating instructional materials for engineering curricula [759].

- Design Ideation and Parametric Drafting Tasks. In early-stage design and manufacturing workflows, LLMs can transform natural language prompts into CAD sketches, material recommendations, and parameter ranges. The MIT GenAI group systematically evaluated the capabilities of LLMs across the entire design-manufacture pipeline [760], and Wu et al. introduced CadVLM, a multimodal model that translates linguistic input into parametric CAD sketches [755].

- Simulation-Support and Modeling Interface Tasks. Although LLMs cannot replace high-fidelity physical simulation, they can assist in generating model input files, translating specifications into solver-ready formats, and summarizing results. Ali-Dib and Menou explored the reasoning capacity of LLMs in physics modeling tasks [761], while Raissi et al.’s physics-informed neural network (PINN) framework demonstrated how neural networks can help solve nonlinear partial differential equations by encoding the governing physics into the training objective [762].

- Experimental Interpretation and Multimodal Lab Tasks. In experimental workflows, LLMs can support data summarization, anomaly detection, and textual explanation of multimodal results. Latif et al. proposed PhysicsAssistant, an LLM-powered robotic learning system capable of interpreting physics lab scenarios and offering real-time feedback to students and instructors [763].

- STEM Learning and Interactive Reasoning Tasks. LLMs are increasingly integrated into educational settings to guide derivations, answer conceptual questions, and simulate physical systems. Jiang and Jiang introduced an LLM-based tutoring system that enhanced high school students’ understanding of complex physics concepts [756], while Polverini and Gregorcic’s work further confirmed the utility of LLMs in supporting structured, interactive learning [758].
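To make the educational use case concrete, the free-fall setup mentioned above can serve as a small cross-check: a closed-form result of the kind an LLM might derive step by step is compared against a direct numerical simulation. The following is a minimal sketch under stated assumptions (no air resistance, release from rest, g = 9.81 m/s²); the function names and the tolerance are hypothetical choices for illustration and do not come from any of the cited systems.

```python
import math

G = 9.81  # assumed gravitational acceleration in m/s^2


def analytic_fall_time(height_m: float) -> float:
    """Closed-form fall time from rest without drag: t = sqrt(2 h / g)."""
    return math.sqrt(2.0 * height_m / G)


def simulated_fall_time(height_m: float, dt: float = 1e-4) -> float:
    """Semi-implicit Euler integration of free fall from rest, no drag."""
    y, v, t = height_m, 0.0, 0.0
    while y > 0.0:
        v += G * dt   # update velocity first
        y -= v * dt   # then move using the updated velocity
        t += dt
    return t


def derivation_is_consistent(claimed_time_s: float, height_m: float,
                             rel_tol: float = 1e-2) -> bool:
    """Check a claimed fall time (e.g. from a generated derivation)
    against the numerical simulation, within a relative tolerance."""
    return math.isclose(claimed_time_s, simulated_fall_time(height_m),
                        rel_tol=rel_tol)
```

For a 20 m drop, `derivation_is_consistent(analytic_fall_time(20.0), 20.0)` accepts the correct formula, while a derivation that forgets the square root (giving roughly 4.08 s) is rejected. The same pattern extends to the other simple setups named above, such as beam deflection, provided a trusted reference computation is available.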

5.2.2. Textual and Documentation-Centric Tasks

5.2.3. Design Ideation and Parametric Drafting Tasks

5.2.4. Simulation-support and Modeling Interface Tasks

5.2.5. Experimental Interpretation and Multimodal Lab Tasks

5.2.6. STEM Learning and Interactive Reasoning Tasks

| Type of Task | Benchmarks | Introduction |

|---|---|---|

| CAD and Geometric Modeling | ABC Dataset [773] DeepCAD [774] Fusion 360 Gallery [775] CADBench [776] | The ABC Dataset, DeepCAD, and Fusion 360 Gallery together provide a comprehensive foundation for studying geometry-aware language and generative models. While ABC emphasizes clean, B-Rep-based CAD structures suitable for geometric deep learning, DeepCAD introduces parameterized sketches tailored for inverse modeling tasks. Fusion 360 Gallery complements these with real-world user-generated modeling histories, enabling research on sequential CAD reasoning and practical design workflows. CADBench further supports instruction-to-script evaluation by providing synthetic and real-world prompts paired with CAD programs. It serves as a high-resolution benchmark for measuring attribute accuracy, spatial correctness, and syntactic validity in code-based CAD generation. |

| Finite Element Analysis (FEA) | FEABench [? ] | FEABench is a purpose-built benchmark that targets the simulation domain, offering structured prompts and tasks for evaluating LLM performance in generating and understanding FEA input files. It serves as a critical testbed for bridging the gap between symbolic physical language and numerical simulation. |

| CFD and Fluid Simulation | OpenFOAM Cases [777] | The OpenFOAM example case library provides a curated set of fluid dynamics simulation setups, widely used for training models to understand solver configuration, mesh generation, and boundary condition specifications in CFD contexts. |

| Material Property Retrieval | MatWeb [778] | MatWeb is a widely used material database containing thermomechanical and electrical properties of thousands of substances. It plays an essential role in supporting downstream simulation tasks such as material selection, constitutive modeling, and multi-physics simulation setup. |

| Physics Modeling and PDE Learning | PDEBench [779] PHYBench [780] | PDEBench and PHYBench collectively advance the evaluation of LLMs in physical reasoning and numerical modeling. PDEBench focuses on classical PDEs like heat transfer, diffusion, and fluid flow in the context of scientific machine learning, while PHYBench introduces a broader spectrum of perception and reasoning tasks grounded in physical principles. Together, they support benchmarking across symbolic reasoning, equation prediction, and simulation-aware generation. |

| Fault Diagnosis and Health Monitoring | NASA C-MAPSS [781] | NASA C-MAPSS provides real-world time-series degradation data from turbofan engines, serving as a benchmark for predictive maintenance, anomaly detection, and reliability modeling in aerospace and mechanical systems. |

5.2.7. Benchmarks

5.2.8. Discussion

- Simulation-Augmented Dataset Generation. Integrating LLMs with numerical solvers in a simulator-in-the-loop framework allows (natural-language prompt, simulation input, simulation output) triplets to be generated at scale. This enables supervised training, fine-tuning, and RLHF strategies grounded in physically valid feedback.

- Task Decomposition and Geometric Reformulation. Decomposing CAD workflows into modular sub-tasks (e.g., sketching, constraints, extrusion) and reformulating modeling problems as geometric reasoning tasks can align better with LLM capabilities and improve interpretability.

- Multimodal and Multi-agent Integration. Developing LLM systems that can call CAD tools, solvers, and databases autonomously—as seen in MechAgents or LangSim—will allow LLMs to reason, plan, and act across tools in complex design and simulation pipelines.

- Standardized Benchmarks and Evaluation. Creating large-scale, task-diverse, and format-unified benchmark datasets (e.g., combining natural language prompts, simulation files, and result summaries) will accelerate model evaluation and fair comparison in this field.

- Physics Validation and Safety Assurance. Embedding physical rule checkers and verification mechanisms into generation loops can help enforce unit consistency, structural validity, and simulation compatibility, ensuring that outputs are not just syntactically correct but physically plausible.
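The unit-consistency check named in the last item can be sketched in a few lines. The example below is a minimal illustration and not the mechanism of any system discussed here: the `Dim` class, the constant names, and the choice of the cantilever tip-deflection formula δ = F L³ / (3 E I) as a test case are all assumptions made for this sketch. Dimensions are tracked as integer exponents of mass, length, and time, and a generated formula is accepted only if its overall dimension matches the expected one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dim:
    """Physical dimension as exponents of (mass, length, time)."""
    mass: int = 0
    length: int = 0
    time: int = 0

    def __mul__(self, other: "Dim") -> "Dim":
        return Dim(self.mass + other.mass,
                   self.length + other.length,
                   self.time + other.time)

    def __truediv__(self, other: "Dim") -> "Dim":
        return Dim(self.mass - other.mass,
                   self.length - other.length,
                   self.time - other.time)

    def __pow__(self, n: int) -> "Dim":
        return Dim(self.mass * n, self.length * n, self.time * n)


# Hypothetical base and derived dimensions for the example.
LENGTH = Dim(length=1)
FORCE = Dim(mass=1, length=1, time=-2)      # newton
PRESSURE = FORCE / LENGTH ** 2              # pascal (Young's modulus E)
AREA_MOMENT = LENGTH ** 4                   # second moment of area I


def has_expected_dimension(actual: Dim, expected: Dim) -> bool:
    """Accept a generated expression only if its dimension matches."""
    return actual == expected


# Correct formula: F * L^3 / (E * I); the numeric factor 3 is dimensionless.
correct = FORCE * LENGTH ** 3 / (PRESSURE * AREA_MOMENT)
# Plausible-looking but wrong variant: F * L^2 / (E * I).
wrong = FORCE * LENGTH ** 2 / (PRESSURE * AREA_MOMENT)
```

Here `has_expected_dimension(correct, LENGTH)` holds, whereas `wrong` reduces to a dimensionless quantity and is flagged. A dimensional check of this kind catches only one class of error; it says nothing about numeric factors or whether the chosen model applies.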

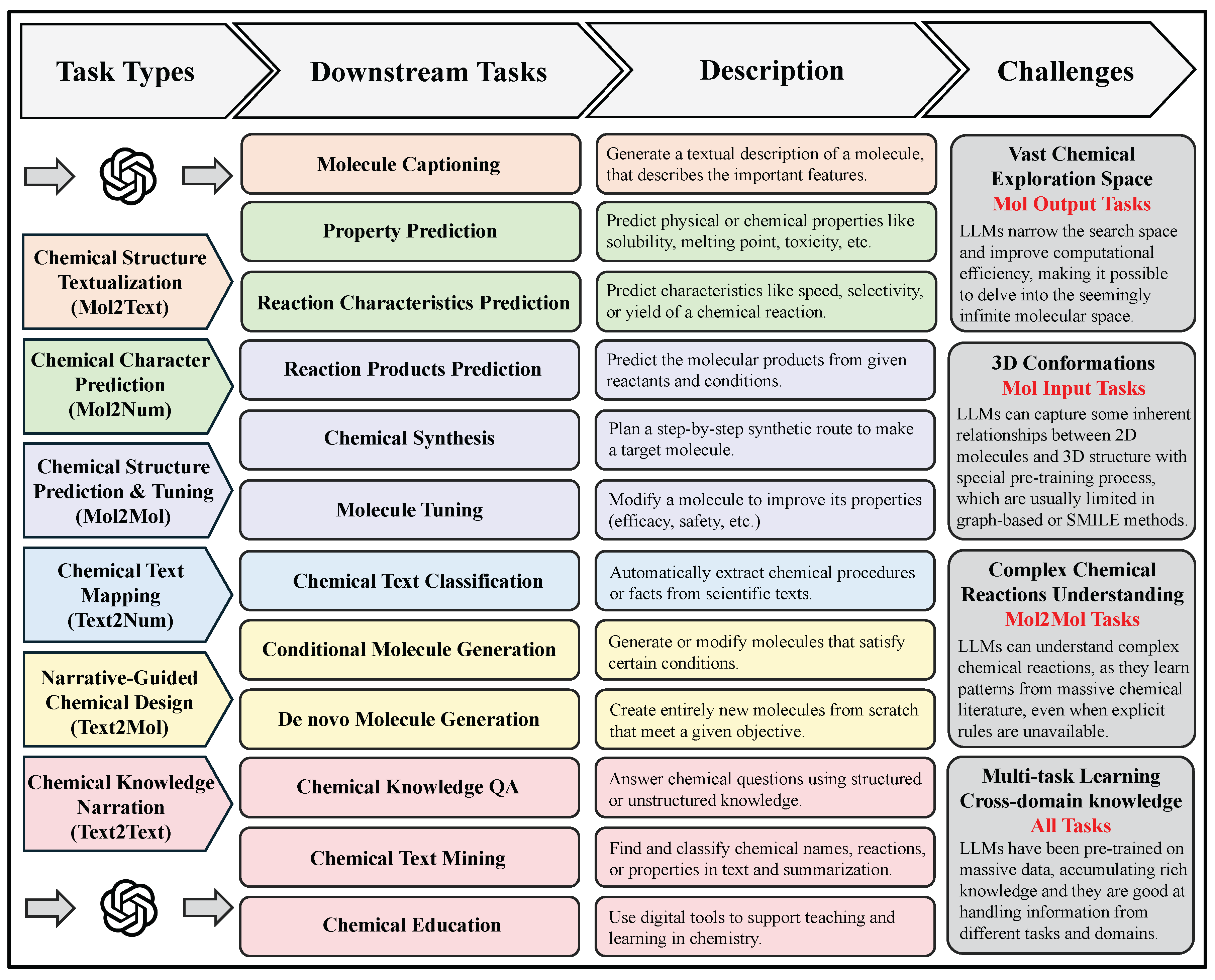

5.3. Chemistry and Chemical Engineering

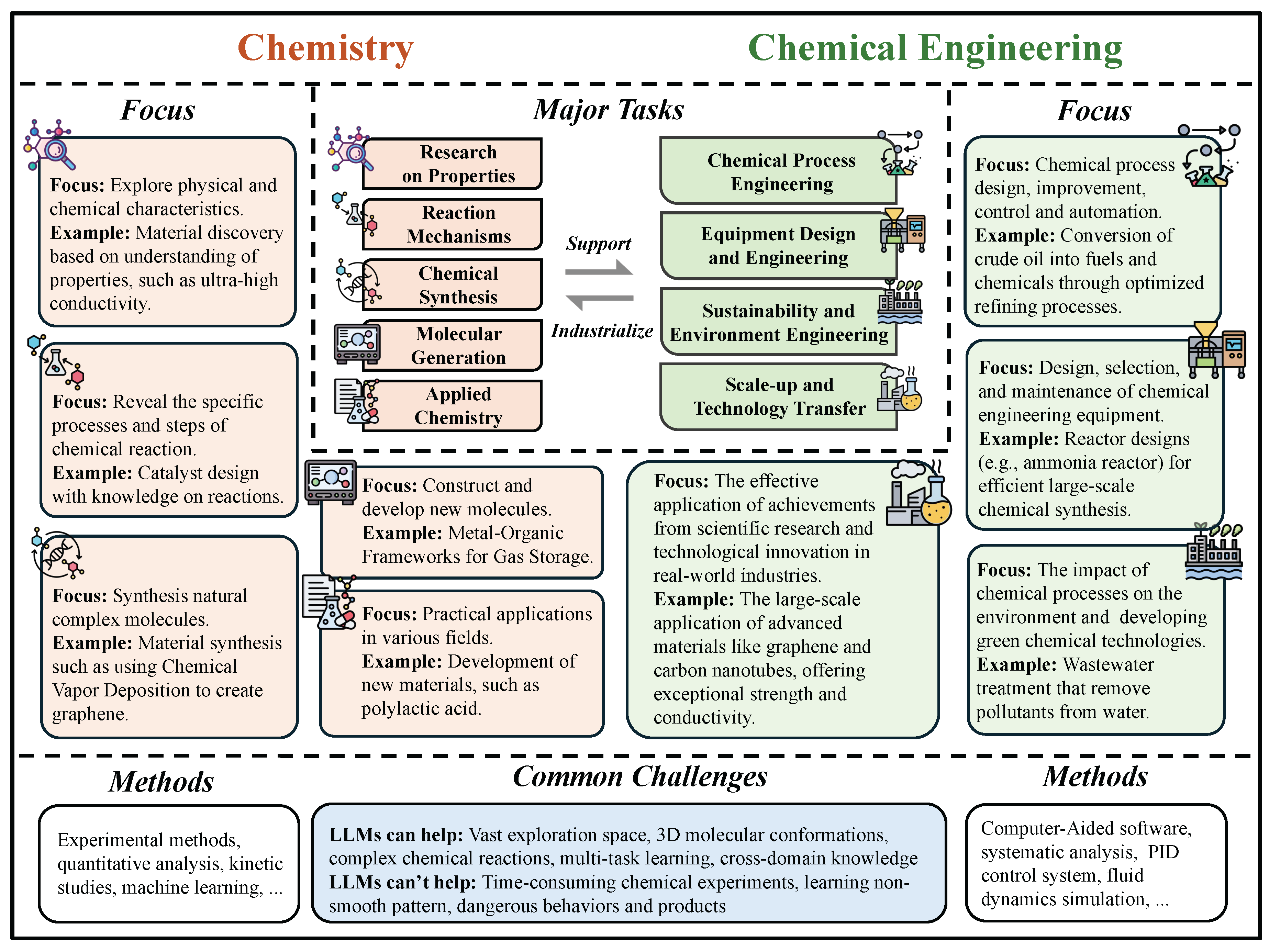

5.3.1. Overview

| Type of Task | Subtasks | Insights and Contributions | Key Models | Citations |

|---|---|---|---|---|

| Chemical Structure Textualization | Molecular Captioning | LLMs, by learning structure–property patterns from data, generate meaningful molecular captions, thus improving interpretability and aiding chemical understanding. | MolT5: generates concise captions by mapping substructures to descriptive phrases; MolFM: uses fusion of molecular graphs and text for richer narrative summaries. | [887,888,889,890,891,892,893,894,895,896,897,898,899,900,901,902] |

| Chemical Characteristics Prediction | Property Prediction | LLMs, by capturing complex structure–property relationships from molecular representations, enable accurate property prediction, thereby providing mechanistic insights and guiding the rational design of molecules with desired functions. | SMILES-BERT: self-supervised SMILES pretraining for robust property inference; ChemBERTa: masked SMILES modeling boosting solubility and toxicity predictions. | [903,904,905,906,907,908,909,910,911,912,913,914] |

| | Reaction Characteristics Classification | LLMs, by modeling the relationships between reactants, conditions, and outcomes from large reaction datasets, can accurately predict reaction types, yields, and rates, thereby uncovering hidden patterns in chemical reactivity and enabling chemists to optimize reaction conditions and select efficient synthetic routes with greater confidence. | RXNFP: fingerprint-transformer accurately classifies reaction types; YieldBERT: fine-tuned on yield data to predict experimental yields within 10% error. | [863,915,916,917,918,919,920,921,922,923,924,925,926,927] |

| Chemical Structure Prediction & Tuning | Reaction Products Prediction | LLMs, by learning underlying chemical transformations from reaction data, can accurately predict reaction products, thus uncovering implicit reaction rules and supporting more efficient and informed synthetic planning. | Molecular Transformer: state-of-the-art SMILES-to-product translation. | [921,923,924,925,926,928,929,930,931,932,933] |

| | Chemical Synthesis | LLMs, by capturing patterns in reaction sequences and chemical logic from large datasets, can suggest plausible synthesis routes and rationales, thereby enhancing human understanding of synthetic strategies and accelerating discovery. | Coscientist: GPT-4-driven planning and robotic execution. | [872,932,934,935,936,937,938,939,940,941,942,943,944,945,946,947,948,949,950,951] |

| | Molecule Tuning | LLMs, by modeling structure–property relationships across diverse molecular spaces, enable targeted molecule tuning to optimize desired properties, thereby providing insights into molecular design and accelerating the development of functional compounds. | DrugAssist: uses LLM prompts for ADMET property optimization; ControllableGPT: enables constraint-based molecular modifications. | [952,953,954,955,956,957,958,959,960,961,962,963,964] |

| Chemical Text Mapping | Chemical Text Mining | LLMs, by capturing semantic and contextual nuances in chemical literature, enable accurate classification and regression in text mining tasks, thereby uncovering trends, predicting research outcomes, and transforming unstructured texts into actionable scientific insights. | Fine-tuned GPT: specialized for chemical classification and regression; ChatGPT: adapts zero-shot classification of chemical text. | [965,966,967,968,969,970,971,972,973,974,975,976,977,978] |

| Narrative-Guided Chemical Design | De Novo Molecule Generation | LLMs, by learning chemical syntax and patterns from large molecular corpora, enable de novo molecule generation with realistic and diverse structures, thus offering insights into unexplored chemical space and accelerating early-stage drug and material discovery. | ChemGPT: unbiased SMILES sampling for novel molecules; MolecuGen: scaffold-guided generative modeling for improved novelty. | [887,891,979,980,981,982,983,984,985,986,987,988,989,990,991,992] |

| | Conditional Molecule Generation | LLMs, by conditioning molecular generation on desired properties or scaffolds, enable the design of compounds that meet specific criteria, thereby offering insights into structure–function relationships and streamlining the discovery of tailored molecules. | GenMol: multi-constraint text-driven fragment remasking. | [887,889,931,957,983,984,986,987,988,993,994,995,996] |

| Chemical Knowledge Narration | Chemical Knowledge QA | LLMs, by integrating extensive chemical literature and diverse databases, can accurately address complex chemical knowledge questions, thereby uncovering valuable insights and enabling more informed, accelerated research and decision-making. | ChemGPT: conditional SMILES generation for property-specific tuning; ScholarChemQA: domain-specific QA fine-tuned on scholarly chemistry data. | [863,924,997,998,999,1000,1001,1002,1003,1004,1005,1006,1007,1008] |

| | Chemical Text Mining | LLMs, by understanding and extracting structured information from unstructured chemical texts, enable efficient chemical text mining, thereby revealing hidden knowledge, facilitating data-driven research, and accelerating the discovery of relationships across literature. | ChemBERTa: BERT-based model fine-tuned for chemical text classification; SciBERT: pretrained on scientific text including chemical literature for robust retrieval. | [965,966,973,976,1009,1010,1011,1012,1013,1014] |

| | Chemical Education | LLMs, by generating intuitive explanations and answering complex queries in natural language, support chemical education by making abstract concepts more accessible, thereby enhancing student understanding and promoting more interactive, personalized learning experiences. | MetaTutor: LLM-based metacognitive tutor for chemistry learners. | [997,998,1015,1016,1017,1018,1019,1020,1021,1022] |
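Several models in the table above operate on SMILES strings, and the table describes some of them as using masked SMILES modeling. As a rough illustration of what that preprocessing involves, the sketch below splits a SMILES string into chemically meaningful tokens and randomly masks a fraction of them. It is an assumption-laden example, not the tokenizer of any model listed: the regular expression is a simplified pattern written for this illustration, and `tokenize_smiles`, `mask_tokens`, the `[MASK]` symbol, and the default masking rate are hypothetical names and values.

```python
import random
import re

# Simplified, illustrative pattern: bracket atoms, two-letter halogens,
# two-digit ring closures, single-letter atoms, ring digits, then
# bond, branch, and stereo symbols.
SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[=#\-+\\/().:@~*$]"
)


def tokenize_smiles(smiles: str) -> list[str]:
    """Split a SMILES string into tokens; fail if any character is skipped."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens


def mask_tokens(tokens: list[str], rate: float = 0.15,
                seed: int = 0) -> list[str]:
    """Replace each token with '[MASK]' independently with probability `rate`."""
    rng = random.Random(seed)
    return ["[MASK]" if rng.random() < rate else tok for tok in tokens]


aspirin = tokenize_smiles("CC(=O)Oc1ccccc1C(=O)O")
salt = tokenize_smiles("[Na+].[Cl-]")
```

Keeping `Cl`, `Br`, and bracket atoms such as `[Na+]` as single tokens is the main design point: a character-level split would break them into pieces that carry no chemical meaning. A model trained with this objective would then be asked to recover the original tokens at the masked positions.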

5.3.2. Chemical Structure Textualization

5.3.3. Chemical Characteristics Prediction

5.3.4. Chemical Structure Prediction and Tuning

5.3.5. Chemical Text Mapping

5.3.6. Property-Directed Chemical Design

5.3.7. Chemical Knowledge Narration

5.3.8. Benchmarks

| Model (source) | BACE (ROC-AUC ↑) | BBBP (ROC-AUC ↑) | HIV (ROC-AUC ↑) | Tox21 (ROC-AUC ↑) | SIDER (ROC-AUC ↑) | ESOL (RMSE ↓) | FreeSolv (RMSE ↓) | Lipo (RMSE ↓) |

|---|---|---|---|---|---|---|---|---|

| MolBERT | 0.866 | 0.762 | 0.783 | — | — | 0.531 | 0.948 | 0.561 [1] |

| ChemBERTa-2 | 0.799 | 0.728 | — | — | — | 0.889 | 1.363 | 0.798 [2] |

| BARTSmiles | 0.705 | 0.997† | 0.851 | 0.825 | 0.745 | — | — | — [3] |

| MolFormer-XL | 0.690 | 0.948 | 0.847 | — | 0.882† | — | — | 0.937† [3] |

| ImageMol | — | — | 0.814 | — | — | — | — | — [4] |