Submitted: 15 October 2025

Posted: 16 October 2025

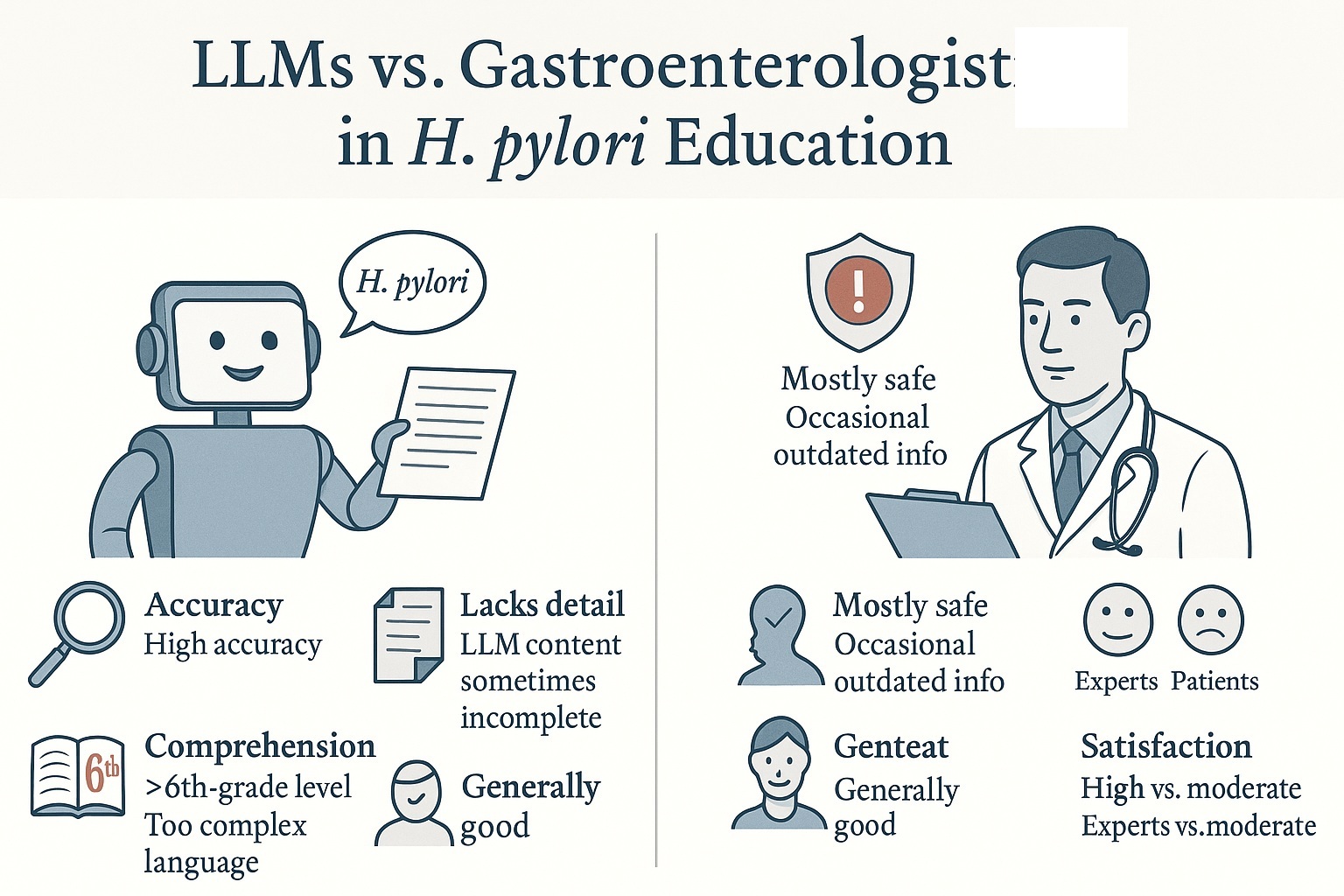

Abstract

Keywords:

1. Introduction

2. Methods

3. Results

3.1. Accuracy

3.2. Completeness

3.3. Readability

3.4. Patient Comprehension

3.5. Safety

4. Discussion

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Bray, F.; Laversanne, M.; Sung, H.; Ferlay, J.; Siegel, R.L.; Soerjomataram, I.; Jemal, A. Global cancer statistics 2022: GLOBOCAN estimates of incidence and mortality worldwide for 36 cancers in 185 countries. CA Cancer J Clin 2024, 74, 229–263.

- de Martel, C.; Georges, D.; Bray, F.; Ferlay, J.; Clifford, G.M. Global burden of cancer attributable to infections in 2018: a worldwide incidence analysis. Lancet Glob Health 2020, 8, e180–e190.

- Zha, J.; Li, Y.Y.; Qu, J.Y.; Yang, X.X.; Han, Z.X.; Zuo, X. Effects of enhanced education for patients with the Helicobacter pylori infection: A systematic review and meta-analysis. Helicobacter 2022, 27, e12880.

- Hafiz, T.A.; D'Sa, J.L.; Zamzam, S.; Visbal Dionaldo, M.L.; Aldawood, E.; Madkhali, N.; Mubaraki, M.A. The Effectiveness of an Educational Intervention on Helicobacter pylori for University Students: A Quasi-Experimental Study. J Multidiscip Healthc 2023, 16, 1979–1988.

- American Medical Association. Health literacy and patient safety: help patients understand. Available online: https://www.ama-assn.org/sites/ama-assn.org/files/corp/media-browser/public/health-literacy/ama-health-literacy-patient-safety-2007.

- Iqbal, U.; Tanweer, A.; Rahmanti, A.R.; Greenfield, D.; Lee, L.T.; Li, Y.J. Impact of large language model (ChatGPT) in healthcare: an umbrella review and evidence synthesis. J Biomed Sci 2025, 32, 45.

- Berry, P.; Dhanakshirur, R.R.; Khanna, S. Utilizing large language models for gastroenterology research: a conceptual framework. Therap Adv Gastroenterol 2025, 18, 17562848251328577.

- Hu, Y.; Lai, Y.; Liao, F.; Shu, X.; Zhu, Y.; Du, Y.Q.; Lu, N.H.; National Clinical Research Center for Digestive Diseases. Assessing Accuracy of ChatGPT on Addressing Helicobacter pylori Infection-Related Questions: A National Survey and Comparative Study. Helicobacter 2024, 29, e13116.

- Ye, Y.; Zheng, E.D.; Lan, Q.L.; Wu, L.C.; Sun, H.Y.; Xu, B.B.; Wang, Y.; Teng, M.M. Comparative evaluation of the accuracy and reliability of ChatGPT versions in providing information on Helicobacter pylori infection. Front Public Health 2025, 13, 1566982.

- Du, R.C.; Zhu, Y.C.; Xiao, Y.T.; Yang, B.N.; Lai, Y.K.; Zhou, Z.X.; Deng, H.; Shu, X.; Lu, N.H.; Zhu, Y.; et al. Assessing the Capabilities of Novel Open-Source Artificial Intelligence-DeepSeek in Helicobacter pylori-Related Queries. Helicobacter 2025, 30, e70045.

- Lai, Y.; Liao, F.; Zhao, J.; Zhu, C.; Hu, Y.; Li, Z. Exploring the capacities of ChatGPT: A comprehensive evaluation of its accuracy and repeatability in addressing helicobacter pylori-related queries. Helicobacter 2024, 29, e13078.

- Kong, Q.Z.; Ju, K.P.; Wan, M.; Liu, J.; Wu, X.Q.; Li, Y.Y.; Zuo, X.L.; Li, Y.Q. Comparative analysis of large language models in medical counseling: A focus on Helicobacter pylori infection. Helicobacter 2024, 29, e13055.

- Zeng, S.; Kong, Q.; Wu, X.; Ma, T.; Wang, L.; Xu, L.; Kou, G.; Zhang, M.; Yang, X.; Zuo, X.; et al. Artificial Intelligence-Generated Patient Education Materials for Helicobacter pylori Infection: A Comparative Analysis. Helicobacter 2024, 29, e13115.

- Gao, Z.; Ge, J.; Xu, R.; Chen, X.; Cai, Z. Potential application of ChatGPT in Helicobacter pylori disease relevant queries. Front Med (Lausanne) 2024, 11, 1489117.

- Dore, M.P.; Merola, E.; Pes, G.M. Advances and future perspectives in the pharmacological treatment of Helicobacter pylori infection: Taking advantage from artificial intelligence. Clin Res Hepatol Gastroenterol 2025, 49, 102689.

| Study | Type of Study | AI Models Analyzed |
|---|---|---|
| "Assessing Accuracy of ChatGPT on Addressing Helicobacter pylori Infection-Related Questions: A National Survey and Comparative Study" (Hu et al., 2024) | National Survey and Comparative Study | ChatGPT-3.5 and ChatGPT-4 |
| "Comparative analysis of large language models in medical counseling: A focus on Helicobacter pylori infection" (Kong et al., 2024) | Comparative Analysis | ChatGPT-4, ChatGPT-3.5, and ERNIE Bot 4.0 |
| "Exploring the capacities of ChatGPT: A comprehensive evaluation of its accuracy and repeatability in addressing helicobacter pylori-related queries" (Lai et al., 2024) | Observational Study | ChatGPT-3.5 |
| "Artificial Intelligence-Generated Patient Education Materials for Helicobacter pylori Infection: A Comparative Analysis" (Zeng et al., 2024) | Comparative Analysis | Bing Copilot, Claude 3 Opus, Gemini Pro, ChatGPT-4, and ERNIE Bot 4.0 |
| "Assessing the Capabilities of Novel Open-Source Artificial Intelligence-DeepSeek in Helicobacter pylori-Related Queries" (Du et al., 2025) | Letter to the Editor (Comparative Analysis) | DeepSeek (versions V3 and R1) and ChatGPT (versions 4o and o1) |
| "Potential application of ChatGPT in Helicobacter pylori disease relevant queries" (Gao et al., 2024) | Evaluation Study | ChatGPT-4 |
| "Comparative evaluation of the accuracy and reliability of ChatGPT versions in providing information on Helicobacter pylori infection" (Ye et al., 2025) | Comparative Evaluation Study | ChatGPT-3.5, ChatGPT-4, and ChatGPT-4o |

| LLM Model | Study | Accuracy Metric (Scale/Threshold) | Mean Score (SD) | Value/Score (%) |
|---|---|---|---|---|
| ChatGPT-3.5 | Hu et al. | 4-point Likert scale (threshold ≥3) | 3.44 (average of responses from 3 attempts) | 92% (highest percentage of answers over 3 attempts) |
| ChatGPT-3.5 | Lai et al. | 4-point Likert scale (threshold ≥3) | Overall score: 3.57 (0.13) | ~95.23% (61.9% completely correct + 33.33% correct but not complete) |
| ChatGPT-3.5 | Kong et al. | 6-point Likert scale (threshold ≥4) | English: 4.84 (1.07); Chinese: 4.76 (0.86) | 90% overall (English 91.1%, Chinese 88.9%); summation score of all LLMs |
| ChatGPT-3.5 | Ye et al. | 5-point Likert scale (threshold ≥4) | 3.94 (0.75) | Not reported |
| ChatGPT-4 | Hu et al. | 4-point Likert scale (threshold ≥3) | 3.55 (average of responses from 3 attempts) | 92% (highest percentage of answers over 3 attempts) |
| ChatGPT-4 | Kong et al. | 6-point Likert scale (threshold ≥4) | English: 4.87 (1.01); Chinese: 4.84 (1.00) | 90% overall (English 91.1%, Chinese 88.9%); summation score of all LLMs |
| ChatGPT-4 | Zeng et al. | 6-point Likert scale (threshold ≥4) | English: 4.00 (1.00); Chinese: 4.40 (0.89) | |
| ChatGPT-4 | Gao et al. | 5-point Likert scale (threshold ≥4) | Overall mean for experts: 4.58 (0.50) | |
| ChatGPT-4 | Ye et al. | 5-point Likert scale (threshold ≥4) | 4.14 (0.75) | |
| ChatGPT-4o | Du et al. | Average accuracy (objective): percentage of correct answers over total | 77.4% | |
| ChatGPT-4o | Ye et al. | 5-point Likert scale (threshold ≥4) | 4.49 (0.74) | |
| DeepSeek-V3 | Du et al. | Average accuracy (objective): percentage of correct answers over total | 90.4% | |
| Claude 3 Opus | Zeng et al. | 6-point Likert scale (threshold ≥4) | English: 4.30 (1.30); Chinese: 3.40 (0.89) | |
| DeepSeek-R1 | Du et al. | Average accuracy (objective): percentage of correct answers over total | 95.2% | |
| OpenAI o1 | Du et al. | Average accuracy (objective): percentage of correct answers over total | 87.0% | |
| Bing Copilot | Zeng et al. | 6-point Likert scale (threshold ≥4) | English: 4.40 (0.89); Chinese: 4.20 (0.84) | |
| Gemini Pro | Zeng et al. | 6-point Likert scale (threshold ≥4) | English: 5.40 (0.55); Chinese: 5.00 (0.71) | |
| ERNIE Bot 4.0 | Kong et al. | 6-point Likert scale (threshold ≥4) | English: 5.07 (0.89); Chinese: 4.42 (1.18) | 90% overall (English 91.1%, Chinese 88.9%); summation score of all LLMs |
| ERNIE Bot 4.0 | Zeng et al. | 6-point Likert scale (threshold ≥4) | English: 5.20 (0.45); Chinese: 5.20 (0.45) | |
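
Most accuracy entries in this table pair a mean Likert rating (SD) with the share of responses at or above an acceptability threshold, and the thresholds differ by scale (≥3 on 4-point, ≥4 on 5- and 6-point scales). The following is a minimal sketch of that arithmetic. The function name `summarize_ratings` and the ratings are hypothetical and are not data from any included study; the individual studies may have computed their summaries differently.

```python
from statistics import mean, stdev

def summarize_ratings(ratings, threshold):
    """Return (mean, sample SD, percent of ratings >= threshold).

    Hypothetical helper for illustration; not taken from any included study.
    """
    if len(ratings) < 2:
        raise ValueError("need at least two ratings to compute an SD")
    n_acceptable = sum(1 for r in ratings if r >= threshold)
    return mean(ratings), stdev(ratings), 100.0 * n_acceptable / len(ratings)

# Made-up ratings on a 4-point scale, acceptable if >= 3 (illustrative only).
example = [4, 4, 3, 2, 4, 3, 4, 3, 4, 1]
m, sd, pct = summarize_ratings(example, threshold=3)
print(f"mean={m:.2f}  SD={sd:.2f}  acceptable={pct:.1f}%")
```

The same logic explains the composite figure reported for Lai et al.: 61.9% of answers rated completely correct plus 33.33% rated correct but not complete gives the ~95.23% at or above the threshold.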

| LLM Model | Study | Completeness Metric/Scale | Mean Score (SD) | Value/Score (%) |
|---|---|---|---|---|
| ChatGPT-3.5 | Kong et al. | 3-point Likert scale (threshold ≥2) | Overall score: English 1.82 (0.78); Chinese 1.67 (0.77) | 45.6% overall (English 57.8%, Chinese 33.3%); composite performance score of the three LLMs evaluated in the study |
| ChatGPT-3.5 | Lai et al. | Completeness assessed via the 4-point accuracy scale (4 = comprehensive) | 3.57 (0.13) | 61.9% |
| ChatGPT-3.5 | Ye et al. | Assessed via the 5-point Likert accuracy scale (threshold ≥4) | Overall score: 3.94 | Not considered fully complete; completeness mentioned but not quantitatively evaluated |
| ChatGPT-3.5 | Hu et al. | Completeness mentioned but not quantitatively evaluated | Not reported | Not reported |
| ChatGPT-4 | Kong et al. | 3-point Likert scale (threshold ≥2) | Overall score: English 2.11 (0.68); Chinese 1.78 (0.74) | 45.6% overall (English 57.8%, Chinese 33.3%); composite performance score of the three LLMs evaluated in the study |
| ChatGPT-4 | Zeng et al. | 3-point Likert scale (threshold ≥2) | Gastroenterologists' rating: English 1.60 (0.55), Chinese 1.80 (0.45); non-expert rating: Chinese 2.44 (0.54) | Not specified |
| ChatGPT-4 | Gao et al. | 3-point Likert scale (threshold ≥2) | Overall score: 2.79 (0.41) | Not specified |
| ChatGPT-4 | Ye et al. | Assessed via the 5-point Likert accuracy scale (threshold ≥4) | Overall score: 4.14 | Considered complete; completeness mentioned but not quantitatively evaluated |
| ChatGPT-4 | Hu et al. | Completeness mentioned but not quantitatively evaluated | Not reported | Not reported |
| ChatGPT-4o | Ye et al. | Assessed via the 5-point Likert accuracy scale (threshold ≥4) | Overall score: 4.94 | Considered complete; completeness mentioned but not quantitatively evaluated |
| Claude 3 Opus | Zeng et al. | 3-point Likert scale (threshold ≥2) | Gastroenterologists' rating: English 1.80 (0.84), Chinese 1.20 (0.45); non-expert rating: Chinese 1.82 (0.72) | Not specified |
| Bing Copilot | Zeng et al. | 3-point Likert scale (threshold ≥2) | Gastroenterologists' rating: English 2.00 (0.00), Chinese 1.80 (0.45); non-expert rating: Chinese 2.20 (0.70) | Not specified |
| Gemini Pro | Zeng et al. | 3-point Likert scale (threshold ≥2) | Gastroenterologists' rating: English 2.40 (0.55), Chinese 2.20 (0.45); non-expert rating: Chinese 2.70 (0.58) | Not specified |
| ERNIE Bot 4.0 | Kong et al. | 3-point Likert scale (threshold ≥2) | Overall score: English 1.84 (0.80); Chinese 1.71 (0.87) | 45.6% overall (English 57.8%, Chinese 33.3%); composite performance score of the three LLMs evaluated in the study |
| ERNIE Bot 4.0 | Zeng et al. | 3-point Likert scale (threshold ≥2) | Gastroenterologists' rating: English 1.80 (0.45), Chinese 1.80 (0.45); non-expert rating: Chinese 2.12 (0.69) | Not specified |

| LLM Model | Study | Readability Metrics/Tools | Scores for Each Scale | Word Count/Length Metric |
|---|---|---|---|---|
| ChatGPT-3.5 | Ye et al. | Flesch Reading Ease (FRE); Flesch–Kincaid Grade Level (FKGL); word count | FRE: 20.24 (9.44); FKGL: 15.19 (1.60) | Word count: 155.5 (67.09) |
| ChatGPT-4 | Zeng et al. | Flesch Reading Ease (FRE); Flesch–Kincaid Grade Level (FKGL); Simple Measure of Gobbledygook (SMOG); word count | FRE: 75.47; FKGL: 7th grade; SMOG: 9.87 | Word count: 433 (English), 578 (Chinese) |
| ChatGPT-4 | Ye et al. | Flesch Reading Ease (FRE); Flesch–Kincaid Grade Level (FKGL); word count | FRE: 24.88 (8.04); FKGL: 14.82 (1.48) | Word count: 199.2 (75.51) |
| ChatGPT-4 | Gao et al. | Word count | | Word count: 195.94 (52.96) |
| ChatGPT-4o | Ye et al. | Flesch Reading Ease (FRE); Flesch–Kincaid Grade Level (FKGL); word count | FRE: 21.64 (10.54); FKGL: 15.01 (2.09) | Word count: 230.9 (104.5) |
| ChatGPT-4o | Du et al. | Hemingway Editor; Grammarly | Hemingway Readability Score: poor; Hemingway Very Hard Sentences: 219/299; Grammarly Readability Score: 20; Grammarly Text Score: 85% | Word length: 5.5; sentence length: 24 |
| OpenAI o1 | Du et al. | Hemingway Editor; Grammarly | Hemingway Readability Score: poor; Hemingway Very Hard Sentences: 209/282; Grammarly Readability Score: 15; Grammarly Text Score: 85% | Word length: 5.7; sentence length: 22.8 |
| DeepSeek-V3 | Du et al. | Hemingway Editor; Grammarly | Hemingway Readability Score: poor; Hemingway Very Hard Sentences: 248/309; Grammarly Readability Score: 9; Grammarly Text Score: 88% | Word length: 5.8; sentence length: 23.7 |
| Claude 3 Opus | Zeng et al. | Flesch Reading Ease (FRE); Flesch–Kincaid Grade Level (FKGL); Simple Measure of Gobbledygook (SMOG); word count | FRE: 60.87; FKGL: 8th and 9th grade; SMOG: 11.73 | Word count: 319 (English), 354 (Chinese) |
| ERNIE Bot 4.0 | Zeng et al. | Flesch Reading Ease (FRE); Flesch–Kincaid Grade Level (FKGL); Simple Measure of Gobbledygook (SMOG); word count | FRE: 71.23; FKGL: 7th grade; SMOG: 10.01 | Word count: 309 (English), 509 (Chinese) |
| Gemini Pro | Zeng et al. | Flesch Reading Ease (FRE); Flesch–Kincaid Grade Level (FKGL); Simple Measure of Gobbledygook (SMOG); word count | FRE: 72.44; FKGL: 7th grade; SMOG: 9.46 | Word count: 474 (English), 748 (Chinese) |
| Bing Copilot | Zeng et al. | Flesch Reading Ease (FRE); Flesch–Kincaid Grade Level (FKGL); Simple Measure of Gobbledygook (SMOG); word count | FRE: 55.55; FKGL: 10th to 12th grade; SMOG: 11.94 | Word count: 323 (English), 519 (Chinese) |
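
For readers unfamiliar with the two Flesch indices reported above, the sketch below applies the standard formulas: FRE = 206.835 − 1.015 × (words/sentences) − 84.6 × (syllables/words), and FKGL = 0.39 × (words/sentences) + 11.8 × (syllables/words) − 15.59. Higher FRE means easier text, while FKGL approximates a US school grade. The syllable counter is a rough vowel-group heuristic assumed here for illustration, and the function names are hypothetical; the included studies presumably used established readability tools, so values from this sketch will not match theirs exactly.

```python
import re

def count_syllables(word):
    """Rough heuristic (assumption): count vowel groups, drop a silent final 'e'."""
    word = word.lower()
    n = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and n > 1:
        n -= 1
    return max(n, 1)

def readability(text):
    """Return (Flesch Reading Ease, Flesch-Kincaid Grade Level) for English text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    fre = 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word
    fkgl = 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59
    return fre, fkgl

# Illustrative sentences only; not drawn from any model's output.
plain = "The germ lives in the gut. A test can find it."
dense = "Eradication therapy necessitates comprehensive antimicrobial susceptibility evaluation."
for label, text in (("plain", plain), ("dense", dense)):
    fre, fkgl = readability(text)
    print(f"{label}: FRE={fre:.1f}  FKGL={fkgl:.1f}")
```

Read against these formulas, the FRE values of roughly 20 to 25 and FKGL values near 15 reported by Ye et al. correspond to college-level text, whereas the FRE values of 55 to 75 reported by Zeng et al. for patient education materials indicate considerably easier reading.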

| LLM Model | Study | Comprehensibility Metric/Scale | Mean Score (SD) | Value/Score (%) |
|---|---|---|---|---|
| ChatGPT-3.5 | Kong et al. | 3-point Likert scale (threshold ≥2) | English: 2.96 (0.21); Chinese: 2.93 (0.25) | 100% of responses reached an acceptable level of comprehensibility |
| ChatGPT-3.5 | Lai et al. | Qualitative assessment of concision | Responses were "coherent and easy to understand," "in language easily understandable for patients." | Not expressed |
| ChatGPT-3.5 | Hu et al. | Qualitative assessment of concision | Not directly assessed with specific scores | Not expressed |
| ChatGPT-3.5 | Ye et al. | Comprehensibility was evaluated through measures of readability | | |
| ChatGPT-4 | Kong et al. | 3-point Likert scale (threshold ≥2) | English: 2.93 (0.33); Chinese: 2.80 (0.40) | 100% of responses reached an acceptable level of comprehensibility |
| ChatGPT-4 | Zeng et al. | 3-point Likert scale (threshold ≥2) | Gastroenterologists: English 2.60 (0.55), Chinese 3.00 (0.00); patients: Chinese 2.76 (0.43) | 100% of responses reached an acceptable level of comprehensibility |
| ChatGPT-4 | Gao et al. | 3-point Likert scale (threshold ≥2) | Overall mean for experts: 2.95 (0.21). Medical students, 2.68 (0.54), scored higher than non-medical participants, 2.16 (0.79) | |
| ChatGPT-4 | Ye et al. | Comprehensibility was evaluated through measures of readability | | |
| ChatGPT-4 | Hu et al. | Qualitative assessment of concision | Not directly assessed with specific scores | |
| ChatGPT-4o | Du et al. | Comprehensibility was evaluated through measures of readability | | |
| ChatGPT-4o | Ye et al. | Comprehensibility was evaluated through measures of readability | | |
| OpenAI o1 | Du et al. | Comprehensibility was evaluated through measures of readability | | |
| DeepSeek-V3 | Du et al. | Comprehensibility was evaluated through measures of readability | | |
| DeepSeek-R1 | Du et al. | Comprehensibility was evaluated through measures of readability | | |
| Claude 3 Opus | Zeng et al. | 3-point Likert scale (threshold ≥2) | Gastroenterologists: English 2.80 (0.45), Chinese 3.00 (0.00); patients: Chinese 2.56 (0.67) | 100% of responses reached an acceptable level of comprehensibility |
| Bing Copilot | Zeng et al. | 3-point Likert scale (threshold ≥2) | Gastroenterologists: English 2.80 (0.45), Chinese 3.00 (0.00); patients: Chinese 2.68 (0.55) | 100% of responses reached an acceptable level of comprehensibility |
| Gemini Pro | Zeng et al. | 3-point Likert scale (threshold ≥2) | Gastroenterologists: English 2.60 (0.89), Chinese 3.00 (0.00); patients: Chinese 2.86 (0.40) | 100% of responses reached an acceptable level of comprehensibility |
| ERNIE Bot 4.0 | Kong et al. | 3-point Likert scale (threshold ≥2) | English: 2.93 (0.33); Chinese: 2.83 (0.53) | 100% of responses reached an acceptable level of comprehensibility |
| ERNIE Bot 4.0 | Zeng et al. | 3-point Likert scale (threshold ≥2) | Gastroenterologists: English 3.00 (0.00), Chinese 3.00 (0.00); patients: Chinese 2.72 (0.50) | 100% of responses reached an acceptable level of comprehensibility |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).