Submitted: 07 October 2025

Posted: 08 October 2025


Abstract

Keywords:

1. Introduction

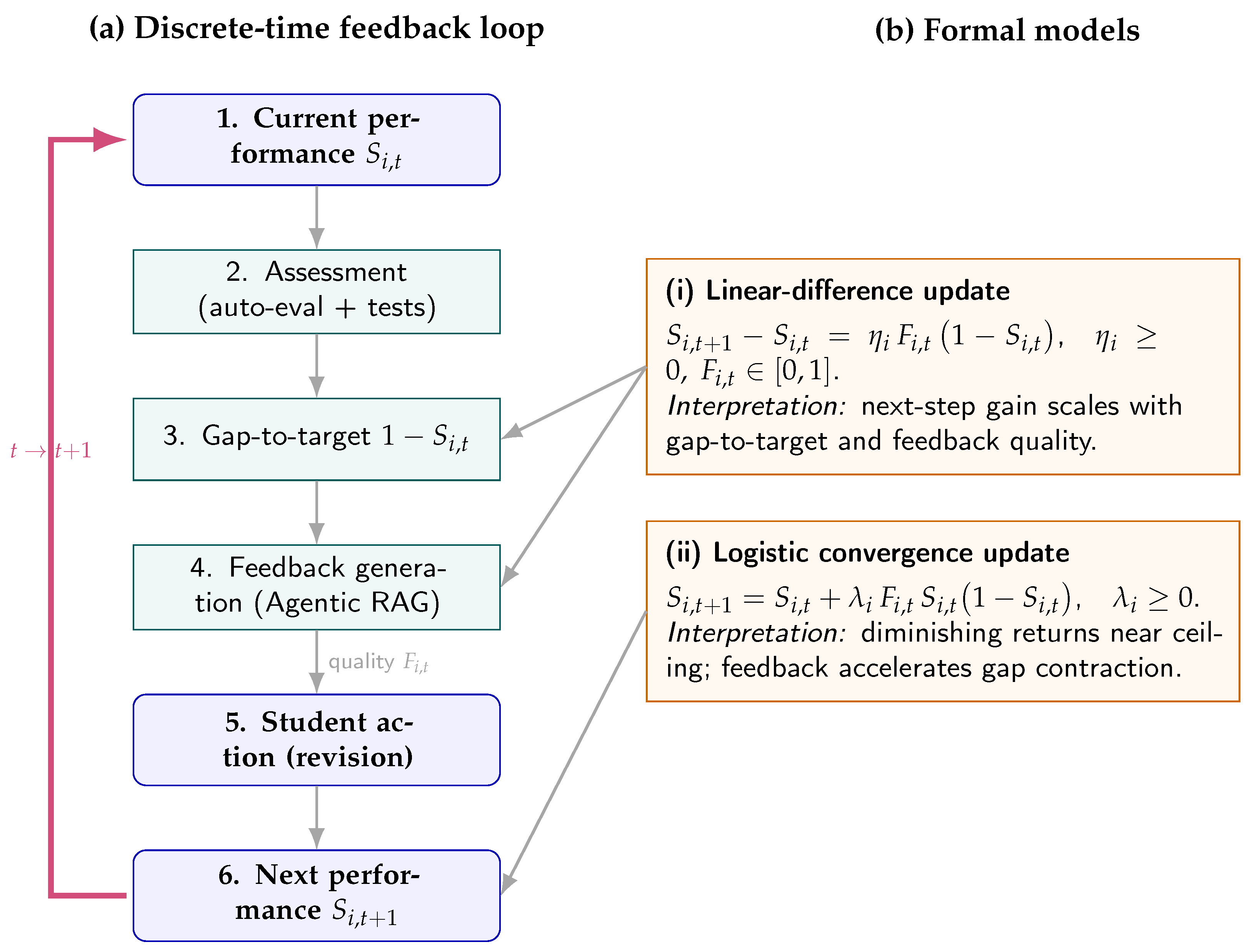

2. Theoretical Framework

2.1. Assessment and Feedback in Technical Disciplines and Digital Settings

2.2. Advanced AI for Personalized Feedback: RAG and Agentic RAG

2.3. Mathematical Modeling of Assessment–Feedback Dynamics

1. (Monotonicity & boundedness) is nondecreasing and remains in for all t.

2. (Geometric convergence) If there exists such that for all t, then

3. (Iteration complexity) To achieve with , it suffices that
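The three properties above can be checked numerically. Because the update rule itself did not survive extraction, the sketch below assumes the linear-difference model has the form S_{t+1} = S_t + η F_t (1 − S_t), with score S_t ∈ [0, 1], feedback quality F_t ∈ [0, 1] and 0 < η ≤ 1. Under that assumption the residual gap obeys 1 − S_{t+1} = (1 − η F_t)(1 − S_t), which yields monotonicity, the geometric bound and the iteration count directly. The function names are ours; η = 0.32 (the linear-difference estimate in the results table) and S_0 = 0.584 (the iteration-1 mean) are borrowed only for illustration, and the F_t values are hypothetical.

```python
import math

# Assumed linear-difference update (the exact form is not visible in the extracted text):
#   S_{t+1} = S_t + eta * F_t * (1 - S_t),  with S_t, F_t in [0, 1] and 0 < eta <= 1.


def simulate_linear(s0, eta, feedback):
    """Return the trajectory [S_0, S_1, ...] for a sequence of feedback qualities F_t."""
    path = [s0]
    for f in feedback:
        s = path[-1]
        path.append(s + eta * f * (1.0 - s))
    return path


def geometric_bound(s0, eta, f_min, t):
    """Upper bound on the residual gap 1 - S_t when F_t >= f_min at every step."""
    return (1.0 - eta * f_min) ** t * (1.0 - s0)


def iterations_needed(s0, eta, f_min, eps):
    """Smallest t for which the geometric bound guarantees 1 - S_t <= eps."""
    gap0 = 1.0 - s0
    if gap0 <= eps:
        return 0
    rate = 1.0 - eta * f_min
    if rate >= 1.0:
        raise ValueError("the bound needs eta * f_min > 0")
    if rate <= 0.0:  # eta * f_min = 1 closes the gap in a single step
        return 1
    return math.ceil(math.log(eps / gap0) / math.log(rate))


if __name__ == "__main__":
    feedback = [0.80, 0.90, 0.85, 0.80, 0.95, 0.80]  # hypothetical F_t values, all >= 0.80
    path = simulate_linear(0.584, 0.32, feedback)
    for t, s in enumerate(path):
        print(t, round(s, 3), round(1.0 - s, 3), round(geometric_bound(0.584, 0.32, 0.80, t), 3))
    print("iterations for gap <= 0.05:", iterations_needed(0.584, 0.32, 0.80, 0.05))
```

Any lower bound f_min on feedback quality works for the bound; a larger f_min gives a faster guaranteed contraction.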

1. (Local stability at the target) If , then is locally asymptotically stable. In particular, if for all t, then increases monotonically to 1.

2. (Convergence without oscillations) If , then is nondecreasing and converges to 1 without overshoot.
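The same kind of numerical check applies to the logistic view. The update rule is again not visible in the extracted text, so this sketch assumes the discrete logistic form S_{t+1} = S_t + k F_t S_t (1 − S_t). Under that assumption the residual gap contracts by the factor 1 − k F_t S_t per step, and the slope of the map at the target S* = 1 is 1 − k F_t: 0 < kF < 2 gives local asymptotic stability, while kF ≤ 1 also rules out overshoot. The helper names and the values of k and F are illustrative choices, not the authors' settings.

```python
# Assumed discrete logistic update (the exact form is not visible in the extracted text):
#   S_{t+1} = S_t + k * F_t * S_t * (1 - S_t)


def logistic_step(s, k, f):
    return s + k * f * s * (1.0 - s)


def gap_contraction(s, k, f):
    """Factor multiplying the residual gap: 1 - S_{t+1} = (1 - k*f*s) * (1 - s)."""
    return 1.0 - k * f * s


def slope_at_target(k, f):
    """Derivative of the map at S* = 1; |slope| < 1 means S* is locally asymptotically stable."""
    return 1.0 - k * f


def simulate_logistic(s0, k, feedback):
    path = [s0]
    for f in feedback:
        path.append(logistic_step(path[-1], k, f))
    return path


if __name__ == "__main__":
    # k * F = 0.855 <= 1: monotone approach to 1 without overshoot.
    smooth = simulate_logistic(0.584, 0.95, [0.9] * 10)
    # k * F = 1.8 lies in (1, 2): still locally stable, but the path overshoots 1 and oscillates.
    oscillating = simulate_logistic(0.6, 1.8, [1.0] * 10)
    print([round(s, 3) for s in smooth])
    print([round(s, 3) for s in oscillating])
```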

Lyapunov and contraction view.

2.4. Relation to Knowledge Tracing and Longitudinal Designs

2.5. Comparative-Education Perspective

3. Materials and Methods

3.1. Overview and Study Design

Participants and inclusion.

Outcomes and endpoints.

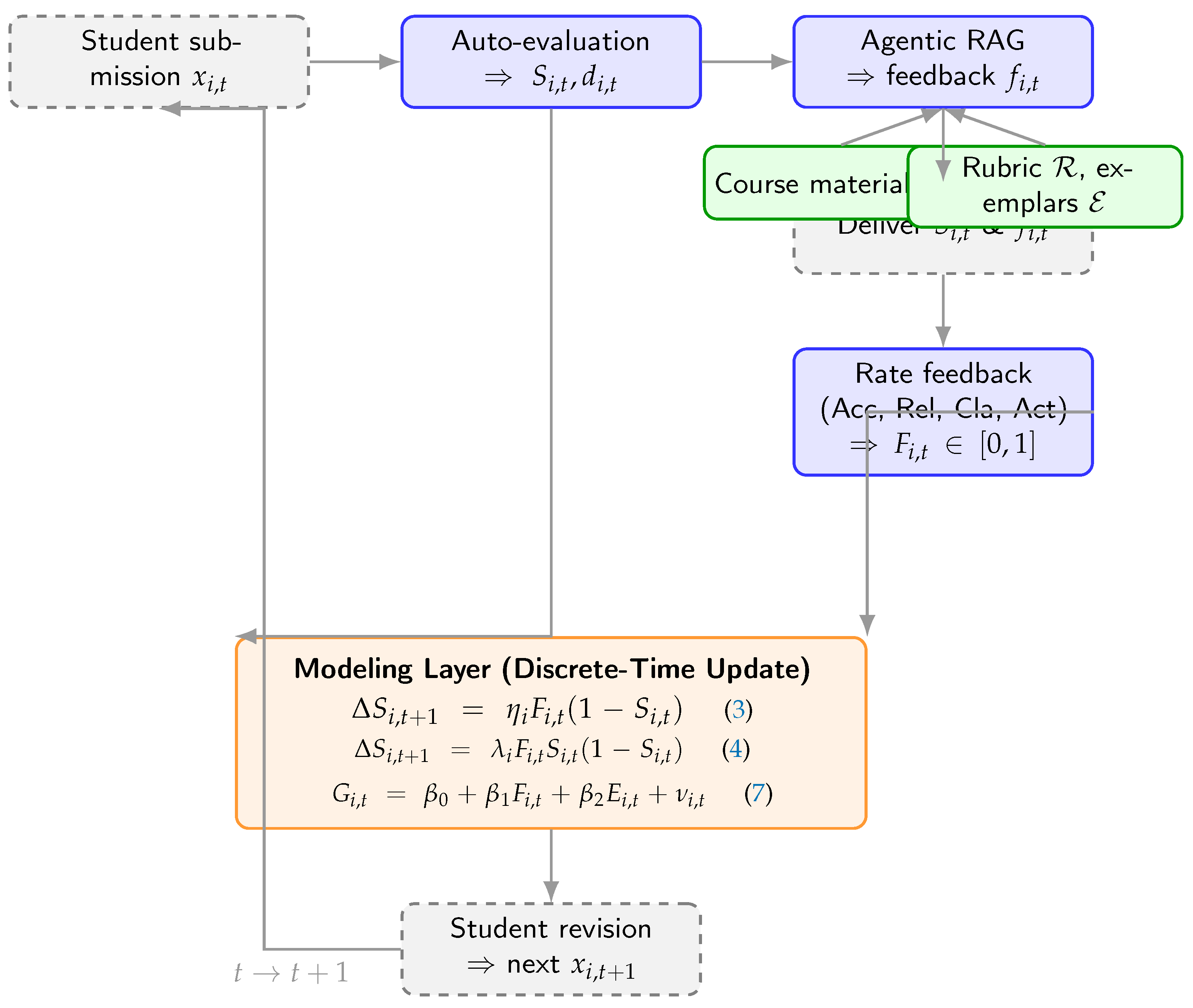

3.2. System Architecture for Feedback Generation

- Agentic RAG feedback engine. A retrieval-augmented generation pipeline with agentic capabilities (planning, tool use, self-critique) that produces course-aligned, evidence-grounded feedback tailored to each submission. Retrieval uses a top-k dense index over course artifacts; evidence citations are embedded in the feedback for auditability.

- Connector/middleware layer (MCP-like). A standardized, read-only access layer brokering secure connections to student code and tests, grading rubrics, curated exemplars, and course documentation. The layer logs evidence references, model/version, and latency for traceability.

- Auto-evaluation module. Static/dynamic analyses plus unit/integration tests yield diagnostics and a preliminary score; salient findings are passed as structured signals to contextualize feedback generation.
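The retrieval and traceability duties of these components can be illustrated with a toy, dependency-free sketch. It assumes course artifacts have already been embedded as vectors (the embedding model is not specified), ranks them by cosine similarity for top-k retrieval, appends evidence identifiers to the feedback text, and records an audit entry holding evidence references, model/version and latency. The artifact identifiers, the `AuditRecord` fields and the version string are hypothetical placeholders, not the system's actual schema.

```python
import math
import time
from dataclasses import dataclass


@dataclass
class AuditRecord:
    """Hypothetical trace entry mirroring what the connector layer is said to log."""
    evidence_ids: list
    model_version: str
    latency_ms: float


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def retrieve_top_k(query_vec, index, k):
    """Return the ids of the k indexed artifacts most similar to the query vector."""
    ranked = sorted(index, key=lambda aid: cosine(query_vec, index[aid]), reverse=True)
    return ranked[:k]


def retrieve_with_audit(query_vec, index, k, model_version):
    start = time.perf_counter()
    ids = retrieve_top_k(query_vec, index, k)
    latency_ms = (time.perf_counter() - start) * 1000.0
    return ids, AuditRecord(list(ids), model_version, latency_ms)


def attach_citations(feedback_text, evidence_ids):
    """Embed evidence references in the feedback so reviewers can audit each claim."""
    refs = ", ".join(f"[{eid}]" for eid in evidence_ids)
    return f"{feedback_text} Evidence: {refs}"


if __name__ == "__main__":
    index = {  # hypothetical artifact ids with toy 3-d embeddings
        "rubric:deadlock-avoidance": [1.0, 0.0, 0.0],
        "docs:mutex-usage": [0.9, 0.1, 0.0],
        "exemplar:bounded-queue": [0.0, 1.0, 0.0],
    }
    ids, audit = retrieve_with_audit([1.0, 0.0, 0.0], index, k=2, model_version="model-x@v1")
    print(attach_citations("Acquire locks in a fixed global order.", ids))
    print(audit)
```

A production index would use an approximate nearest-neighbour library rather than a full sort, but the ranking criterion is the same.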

3.3. Dynamic Assessment Cycle

- Submission. Students solved a syllabus-aligned concurrent-programming task.

- Auto-evaluation. The system executed the test suite and static/dynamic checks to compute the preliminary score and extract diagnostics.

- Personalized feedback (Agentic RAG). Detailed, actionable comments grounded in the submission, rubric, and retrieved evidence were generated and delivered together with the score.

- Feedback Quality Index. Each feedback instance was rated on Accuracy, Relevance, Clarity, and Actionability (5-point scale), and the mean rating was linearly normalized to form the index. A stratified subsample was double-rated to estimate inter-rater reliability (Cohen’s κ), and internal consistency across the four criteria was assessed with Cronbach’s α.

- Revision. Students incorporated the feedback to prepare the next submission. Operationally, feedback from informs the change observed at t.
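The Feedback Quality Index step reduces four 1–5 ratings to a single number. The text states only that the mean was linearly normalized; the sketch below assumes the map (mean − 1)/4, which sends a uniform rating of 1 to 0 and a uniform rating of 5 to 1. The criterion keys are our own naming.

```python
CRITERIA = ("accuracy", "relevance", "clarity", "actionability")


def feedback_quality_index(ratings):
    """Equal-weight mean of the four 1-5 ratings, rescaled linearly to [0, 1].

    The rescaling (mean - 1) / 4 is an assumption; the source states only that
    the mean was linearly normalized.
    """
    for c in CRITERIA:
        if c not in ratings:
            raise KeyError(f"missing rating for {c!r}")
        if not 1 <= ratings[c] <= 5:
            raise ValueError(f"rating for {c!r} outside 1-5: {ratings[c]}")
    mean = sum(ratings[c] for c in CRITERIA) / len(CRITERIA)
    return (mean - 1.0) / 4.0


if __name__ == "__main__":
    example = {"accuracy": 4, "relevance": 4, "clarity": 3, "actionability": 5}
    print(feedback_quality_index(example))  # mean 4.0 -> (4.0 - 1) / 4 = 0.75
```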

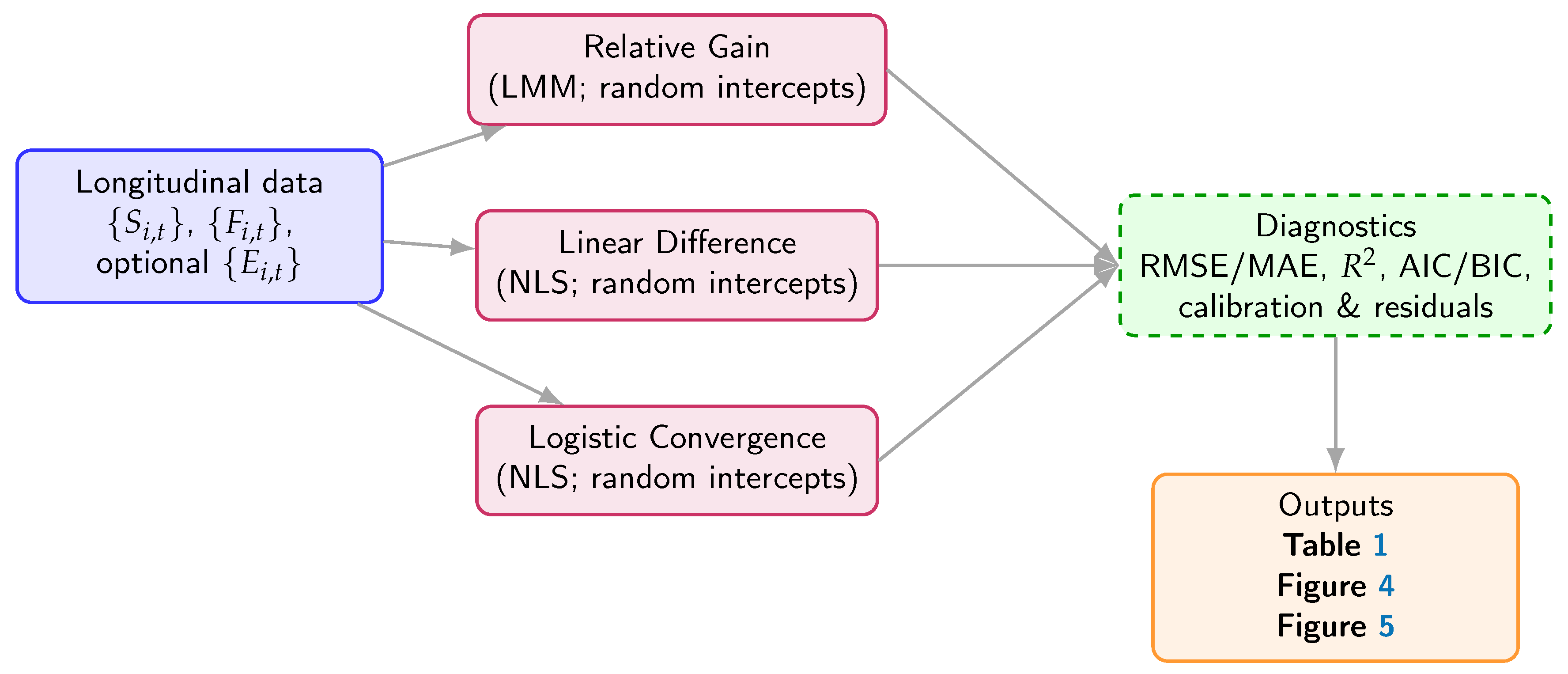

3.4. Model Specifications

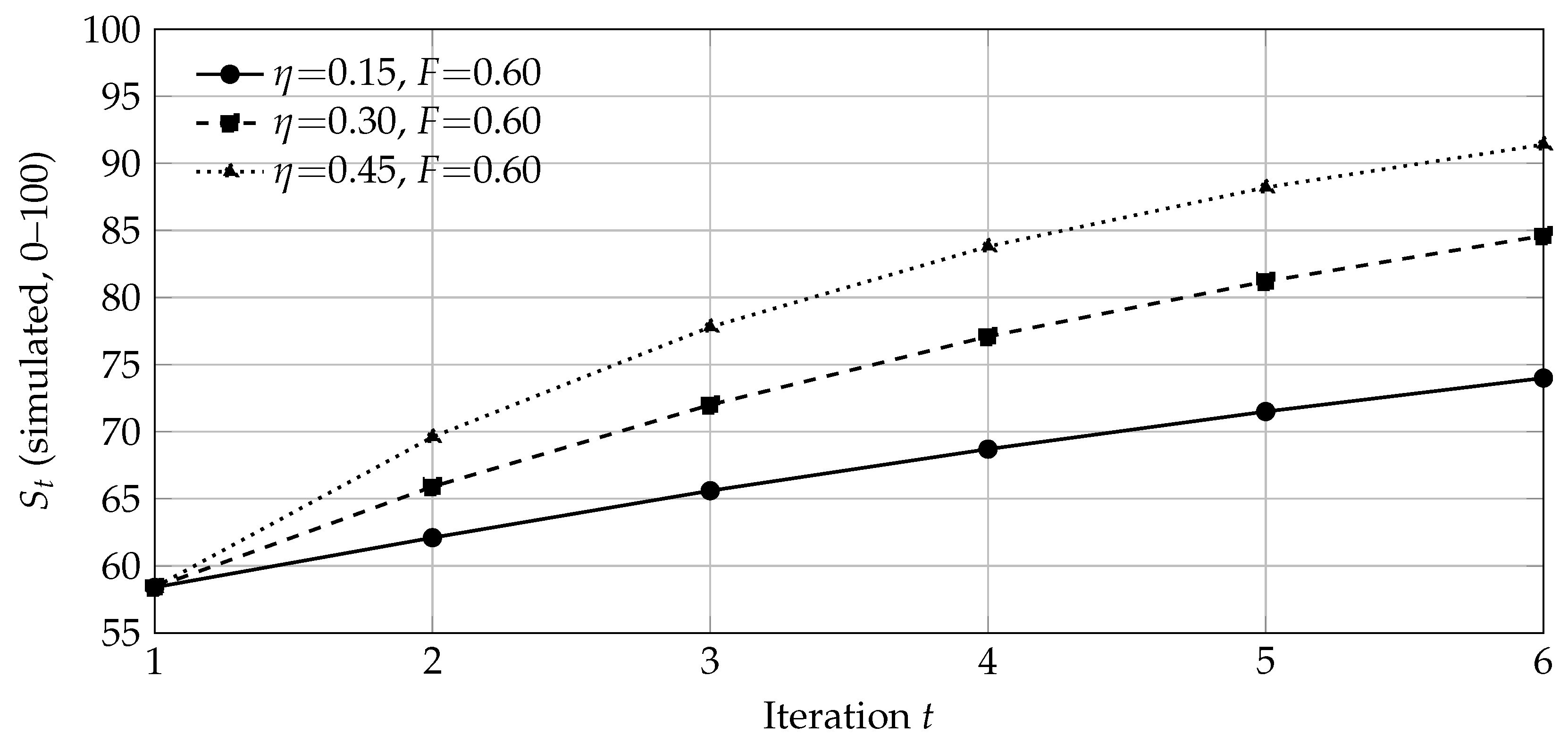

(1) Linear difference model.

(2) Logistic convergence model.

(3) Relative-gain model.

3.5. Identification Strategy, Estimation, and Diagnostics

Identification and controls.

Estimation.

Multiple testing and robustness.

Preprocessing and missing data.

3.6. Threats to Validity and Mitigations

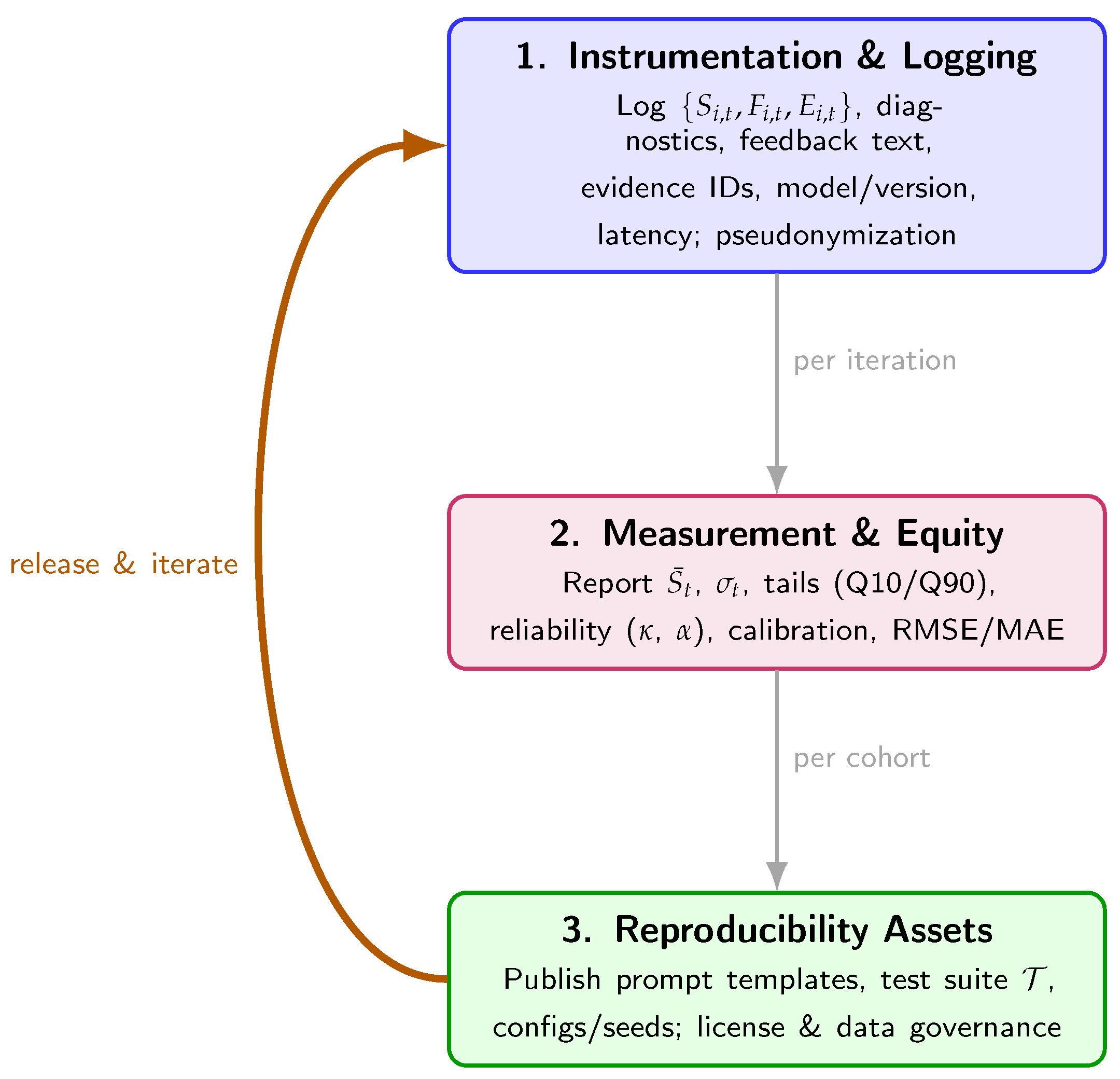

3.7. Software, Versioning, and Reproducibility

3.8. Data and Code Availability

3.9. Statement on Generative AI Use

3.10. Ethics

3.11. Algorithmic Specification and Visual Summary

Algorithm 1: Iterative Dynamic Assessment Cycle with Agentic RAG

Require: course materials, rubric, exemplars, test suite; cohort

Initialize connectors (MCP-like), audit logs, and pseudonymization

for t = 1 to 6 do ▹ discrete-time learning loop

for each student i do

Receive submission

Auto-evaluation: run the test suite plus static/dynamic checks ⇒ diagnostics; compute the score

Build context

Agentic RAG: retrieve top-k evidence; draft → self-critique → finalize feedback

Deliver feedback and score to student i

Feedback quality rating: rate {Accuracy, Relevance, Clarity, Actionability} on 1–5; normalize/aggregate ⇒ FQI

(Optional) collect effort/time

Log with pseudonymous IDs

end for

end for

Output: longitudinal dataset (scores, FQI, optional effort/time); evidence/audit logs
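Algorithm 1 can be read as a plain double loop over iterations and students. The sketch below makes that control flow executable by passing every stage in as a callable; the stage functions, the salted-hash pseudonymization and the `LogEntry` fields are hypothetical stand-ins, and retrieval is assumed to happen inside `draft`. It is meant to clarify the order of operations, not to reproduce the authors' implementation.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class LogEntry:
    pseudonym: str
    t: int
    score: float
    fqi: float


def pseudonymize(student_id, salt="replace-with-secret-salt"):
    # Hypothetical salted SHA-256 pseudonym, truncated for readability.
    return hashlib.sha256((salt + student_id).encode("utf-8")).hexdigest()[:12]


def agentic_feedback(context, draft, critique, max_rounds=2):
    """Draft, then let a critic propose revisions until it accepts (returns None)."""
    text = draft(context)
    for _ in range(max_rounds):
        revision = critique(context, text)
        if revision is None:
            break
        text = revision
    return text


def run_cycle(students, iterations, submit, auto_evaluate, draft, critique, deliver, rate):
    log = []
    for t in range(1, iterations + 1):  # discrete-time learning loop
        for sid in students:
            submission = submit(sid, t)
            score, diagnostics = auto_evaluate(submission)  # tests + static/dynamic checks
            context = {"submission": submission, "diagnostics": diagnostics}
            feedback = agentic_feedback(context, draft, critique)
            deliver(sid, feedback, score)  # feedback and score are returned together
            log.append(LogEntry(pseudonymize(sid), t, score, rate(feedback)))
    return log
```

Injecting the stages as arguments keeps the loop testable with stubs and lets each stage be versioned and logged separately.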

Algorithm 2: Computation of the FQI and Reliability Metrics (κ, α)

Require: feedback instances with rubric ratings for each of the four criteria; 20% double-rated subsample

Ensure: FQI for each instance; Cohen’s κ on the double-rated subsample; Cronbach’s α across criteria

for each feedback instance do

Handle missing ratings: if any rating is missing, impute it with the within-iteration criterion mean

for each criterion c do

Normalize the rating of criterion c

end for

Aggregate across criteria ▹ equal weights; alternative weights in Section 3.5

end for

Inter-rater agreement (κ): compute linear-weighted Cohen’s κ on the double-rated subsample

Internal consistency (α): with the four criteria, compute Cronbach’s α

Outputs: FQI for modeling; κ and α reported in Results
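The reliability steps of Algorithm 2 rest on standard formulas: linear-weighted Cohen's κ = 1 − Σ w_ij O_ij / Σ w_ij E_ij with disagreement weights w_ij = |i − j|/(K − 1), and Cronbach's α = (k/(k − 1))(1 − Σ σ²_item / σ²_total). The pure-Python sketch below implements both, together with the within-iteration mean imputation; the function names and the list-of-lists layout (one row per feedback instance, one column per criterion) are our assumptions. In practice a vetted routine such as scikit-learn's `cohen_kappa_score(..., weights="linear")` would be preferable for κ.

```python
import statistics


def impute_criterion_means(rows):
    """Fill None entries with the mean of the observed ratings for that criterion.

    `rows` holds one iteration's ratings: one list per feedback instance,
    one column per criterion.
    """
    n_crit = len(rows[0])
    means = [statistics.fmean([r[c] for r in rows if r[c] is not None]) for c in range(n_crit)]
    return [[means[c] if r[c] is None else r[c] for c in range(n_crit)] for r in rows]


def linear_weighted_kappa(rater_a, rater_b, categories=(1, 2, 3, 4, 5)):
    """Cohen's kappa with linear disagreement weights |i - j| / (K - 1)."""
    n, k = len(rater_a), len(categories)
    pos = {c: i for i, c in enumerate(categories)}
    observed = [[0.0] * k for _ in range(k)]
    for a, b in zip(rater_a, rater_b):
        observed[pos[a]][pos[b]] += 1.0 / n
    row = [sum(observed[i]) for i in range(k)]
    col = [sum(observed[i][j] for i in range(k)) for j in range(k)]
    num = den = 0.0
    for i in range(k):
        for j in range(k):
            w = abs(i - j) / (k - 1)
            num += w * observed[i][j]  # weighted observed disagreement
            den += w * row[i] * col[j]  # weighted disagreement expected by chance
    if den == 0.0:
        return float("nan")  # undefined, e.g. both raters used one identical category
    return 1.0 - num / den


def cronbach_alpha(rows):
    """Cronbach's alpha for instances (rows) x criteria (columns)."""
    n_crit = len(rows[0])
    item_vars = [statistics.variance([r[c] for r in rows]) for c in range(n_crit)]
    total_var = statistics.variance([sum(r) for r in rows])
    return n_crit / (n_crit - 1) * (1.0 - sum(item_vars) / total_var)
```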

4. Results

4.1. Model Fitting, Parameter Estimates, and Effect Sizes

- Per-step effect at mid-trajectory. At and , the linear-difference model implies an expected gain (i.e., ∼7.7 points on a 0–100 scale). Increasing F by at the same adds (≈1.0 point).

- Gap contraction in the logistic view. Using (5), the multiplicative contraction factor of the residual gap is . For and , the factor is , i.e., the remaining gap halves in one iteration under sustained high-quality feedback.

Reliability of the Feedback Quality Index (FQI).

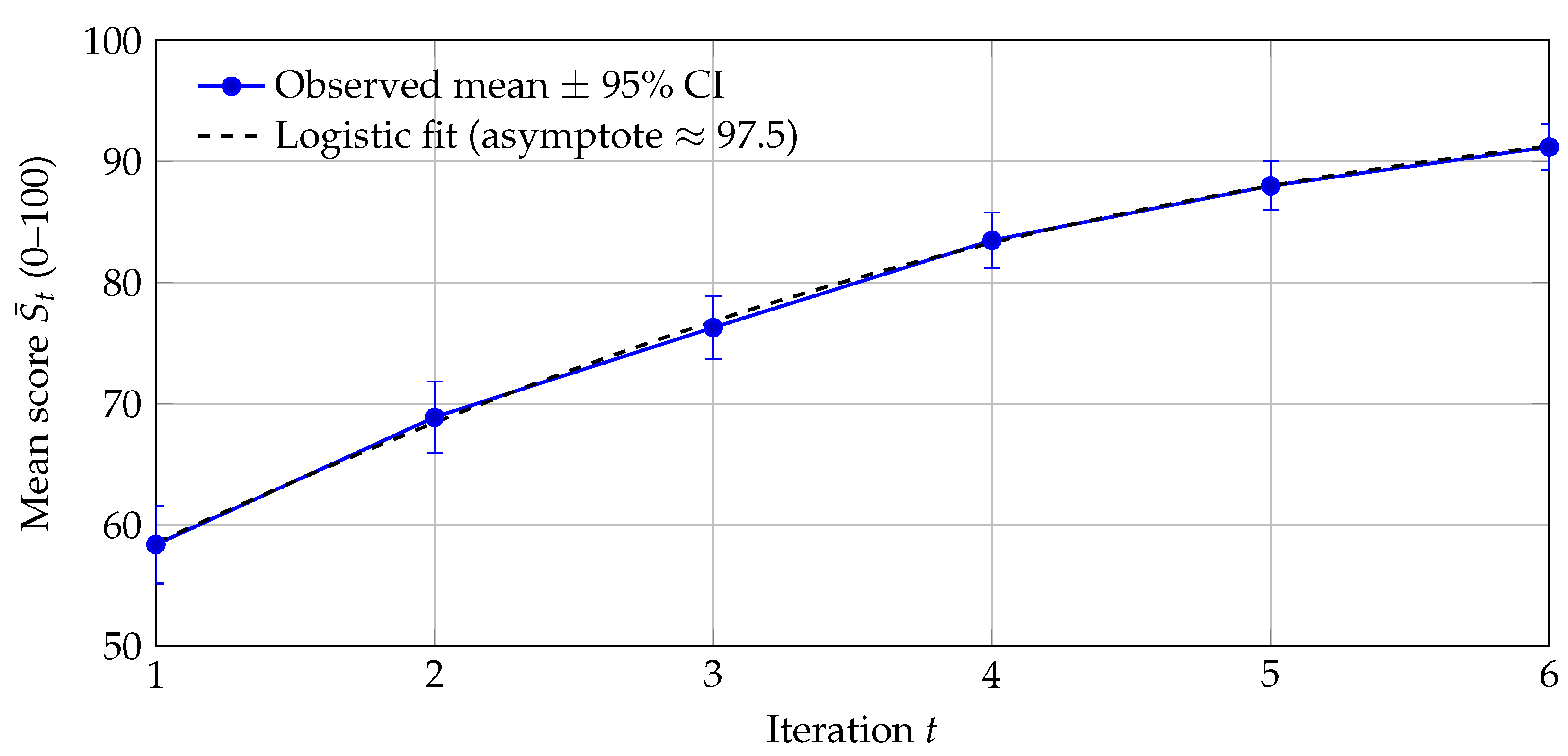

4.2. Cohort Trajectories Across Iterations

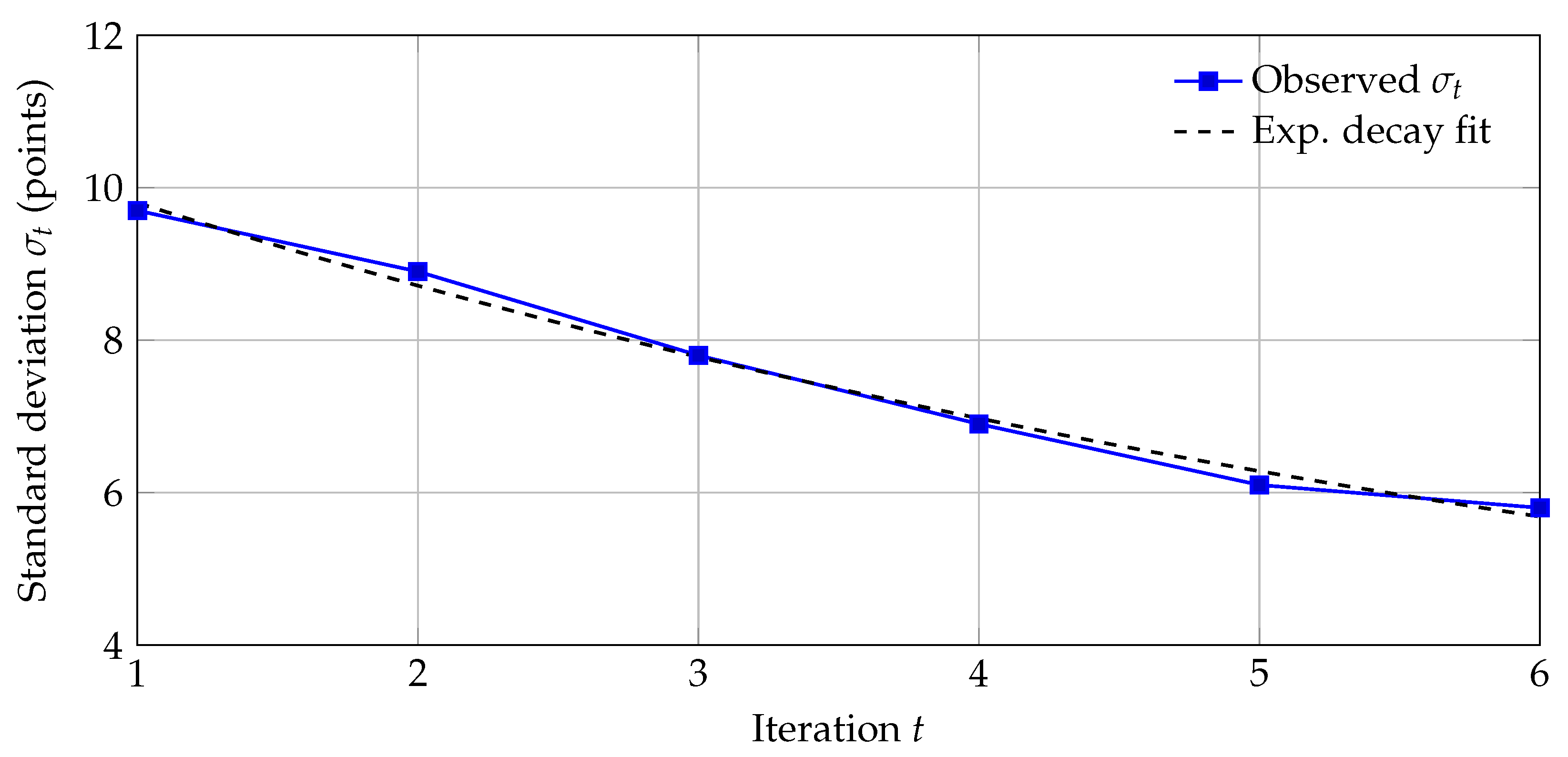

4.3. Variance Dynamics, Equity Metrics, and Group Homogeneity

- Inter-decile spread (Q90–Q10). Using , the spread drops from points at to at (), indicating tighter clustering of outcomes.

- Tail risk. The proportion below an 80-point proficiency threshold moves from at (z) to at (z), evidencing a substantive collapse of the lower tail as feedback cycles progress.
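Both equity metrics are simple functions of each iteration's score distribution on the 0–100 scale. The sketch assumes deciles taken from Python's `statistics.quantiles` with its default exclusive method (the paper's quantile definition is not stated) and counts a score as below threshold only when it is strictly under 80. The score lists in the example are hypothetical.

```python
import statistics


def interdecile_spread(scores):
    """Q90 - Q10 of one iteration's scores."""
    deciles = statistics.quantiles(scores, n=10)  # nine cut points: Q10, Q20, ..., Q90
    return deciles[-1] - deciles[0]


def share_below(scores, threshold=80.0):
    """Proportion of students strictly below the proficiency threshold."""
    return sum(1 for s in scores if s < threshold) / len(scores)


if __name__ == "__main__":
    early = [45, 50, 52, 55, 58, 60, 62, 65, 70, 75, 82]  # hypothetical scores
    late = [80, 84, 86, 88, 90, 91, 92, 93, 95, 96, 98]
    for label, scores in (("early", early), ("late", late)):
        print(label, round(interdecile_spread(scores), 1), round(share_below(scores), 2))
```

With small cohorts the choice of quantile method visibly shifts Q10 and Q90, so it is worth reporting alongside the spread.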

4.4. Individual Trajectories: Heterogeneous Responsiveness

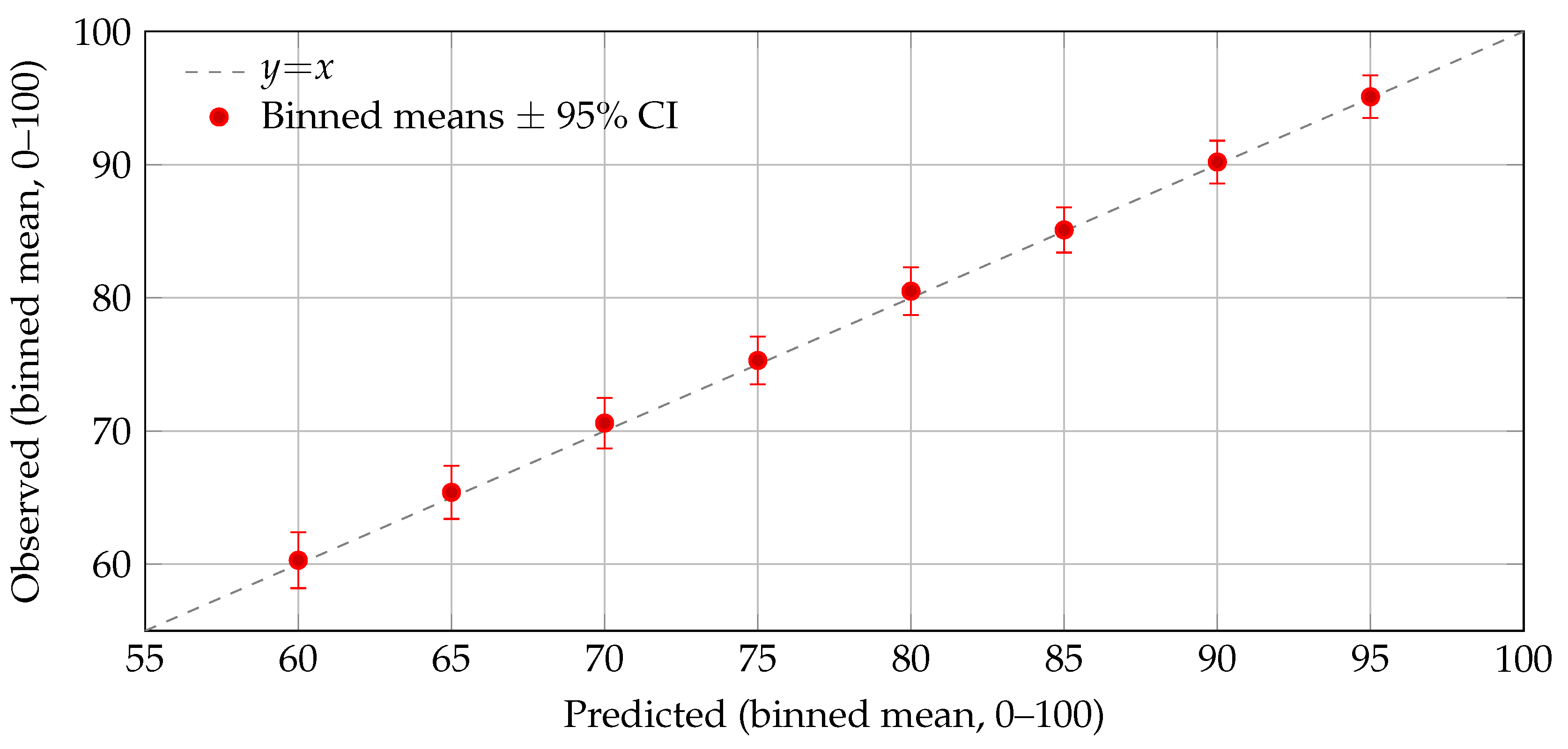

4.5. Model Fit, Cross-Validation, Calibration, Placebo Test, Missingness Sensitivity, and Robustness

Cross-validation.

Calibration by individuals (binned).

Placebo timing test (lead).

Sensitivity to missingness and influence.

Robustness.

Equity and design implications.

5. Discussion: Implications for Assessment in the AI Era

5.1. Principal Findings and Their Meaning

5.2. Algorithmic Interpretation and Links to Optimization

5.3. Relation to Prior Work and the Digital-Transformation Context

5.4. Design and Policy Implications for EdTech at Scale

5.5. Threats to Validity and Limitations

5.6. Future Work

5.7. Concluding Remark and Implementation Note

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Ogunleye, B.; Zakariyyah, K.I.; Ajao, O.; Olayinka, O.; Sharma, H. A Systematic Review of Generative AI for Teaching and Learning Practice. Education Sciences 2024, 14, 636.

- Wang, S.; Wang, F.; Zhu, Z.; Wang, J.; Tran, T.; Du, Z. Artificial intelligence in education: A systematic literature review. Expert Systems with Applications 2024, 252, 124167.

- Li, K.; Zheng, L.; Chen, X. Automated Feedback Systems in Higher Education: A Meta-Analysis. Computers & Education 2023, 194, 104676.

- Jauhiainen, J.S.; Garagorry Guerra, A. Generative AI in education: ChatGPT-4 in evaluating students’ written responses. Innovations in Education and Teaching International 2024.

- Cingillioglu, I.; Gal, U.; Prokhorov, A. AI-experiments in education: An AI-driven randomized controlled trial for higher education research. Education and Information Technologies 2024, 29, 19649–19677.

- Fan, W.; et al. A Survey on RAG Meeting LLMs: Towards Retrieval-Augmented LLMs. ACM Computing Surveys 2024.

- Gupta, S.; et al. Comprehensive Survey of Retrieval-Augmented Generation (RAG): Evolution, Current Landscape and Future Directions. arXiv 2024, arXiv:2410.12837.

- Asai, A.; Wu, Z.; Wang, Y.; Sil, A.; Hajishirzi, H. Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection. arXiv 2023, arXiv:2310.11511.

- Yao, S.; et al. ReAct: Synergizing Reasoning and Acting in Language Models. In Proceedings of the International Conference on Learning Representations (ICLR 2023), 2023.

- Keuning, H.; Jeuring, J.; Heeren, B. A Systematic Literature Review of Automated Feedback Generation for Programming Exercises. ACM Transactions on Computing Education 2019, 19.

- Jacobs, S.; Jaschke, S. Evaluating the Application of Large Language Models to Generate Feedback in Programming Education. arXiv 2024, arXiv:2403.09744.

- Nguyen, H.; Stott, N.; Allan, V. Comparing Feedback from Large Language Models and Instructors: Teaching Computer Science at Scale. In Proceedings of the Eleventh ACM Conference on Learning @ Scale (L@S ’24), New York, NY, USA, 2024.

- Koutcheme, C.; Hellas, A. Propagating Large Language Models Programming Feedback. In Proceedings of the 11th ACM Conference on Learning at Scale (L@S ’24), Atlanta, GA, USA, 2024, pp. 366–370.

- Heickal, H.; et al. Generating Feedback-Ladders for Logical Errors in Programming with LLMs. In Proceedings of the 17th International Conference on Educational Data Mining (EDM 2024) – Posters.

- Banihashem, S.K.; et al. Feedback sources in essay writing: peer-generated or AI-generated? International Journal of Educational Technology in Higher Education 2024, 21.

- Abdelrahman, G.; Wang, Q.; Nunes, B.P.; et al. Knowledge Tracing: A Survey. ACM Computing Surveys 2023, 55.

- Song, X.; et al. A Survey on Deep Learning-Based Knowledge Tracing. Knowledge-Based Systems 2022, 258, 110036.

- Yin, Y.; et al. Tracing Knowledge Instead of Patterns: Stable Knowledge Tracing with Diagnostic Transformer. In Proceedings of the ACM Web Conference 2023 (WWW ’23). ACM, 2023, pp. 855–864.

- Liu, T.; et al. Transformer-based Convolutional Forgetting Knowledge Tracking. Scientific Reports 2023, 13, 19112.

- Zhou, T.; et al. Multi-Granularity Time-based Transformer for Student Performance Prediction. arXiv 2023, arXiv:2304.05257.

- van der Kleij, F.M.; Feskens, R.C.W.; Eggen, T.J.H.M. Effects of Feedback in a Computer-Based Learning Environment on Students’ Learning Outcomes: A Meta-Analysis. Review of Educational Research 2015, 85, 475–511.

- Hattie, J.; Timperley, H.; Brown, G. Feedback in the Age of AI: Revisiting Foundational Principles. Educational Psychology Review 2023, 35, 1451–1475.

- Gao, L.; Zhang, J. Automated Feedback Generation for Programming Assignments Using Transformer-Based Models. IEEE Transactions on Education 2022, 65, 203–212.

- Chen, Y.; Huang, Y.; Xu, D. Intelligent Feedback in Programming Education: Trends and Challenges. ACM Transactions on Computing Education 2024, 24, 15–1.

- Dai, W.; Lin, J.; Jin, F.; Li, T.; Tsai, Y.; Gašević, D.; Chen, G. Assessing the Proficiency of Large Language Models in Automatic Feedback Generation: An Evaluation Study. Computers and Education: Artificial Intelligence 2024, 5, 100234.

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Küttler, H.; Lewis, M.; Yih, W.t.; Rocktäschel, T.; et al. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. In Proceedings of the Advances in Neural Information Processing Systems 33 (NeurIPS 2020), 2020.

1. Scores were normalized to with and for estimation; descriptive figures are presented on a 0–100 scale.

2. Fitted curve (0–100 scale): . Evaluations at are , closely matching the observed means.

| Model | Parameter | Estimate | SE | 95% CI | Notes |
|---|---|---|---|---|---|
| Linear difference | (mean) | 0.320 | 0.060 | [0.203, 0.437] | Mixed-effects NLS |
| Logistic convergence | (mean) | 0.950 | 0.150 | [0.656, 1.244] | |
| Relative gain | (effect of F) | 0.280 | 0.070 | [0.143, 0.417] | LMM, student RE |

| Iteration t | Mean | SD | 95% CI |
|---|---|---|---|
| 1 | 58.4 | 9.7 | [55.2, 61.6] |
| 2 | 68.9 | 8.9 | [66.0, 71.9] |
| 3 | 76.3 | 7.8 | [73.7, 78.9] |
| 4 | 83.5 | 6.9 | [81.2, 85.8] |
| 5 | 88.0 | 6.1 | [86.0, 90.0] |
| 6 | 91.2 | 5.8 | [89.3, 93.1] |

| Measure | Estimate / Result |
|---|---|
| Baseline mean ± SD (t = 1) | |
| Final mean ± SD (t = 6) | |
| Sphericity (Mauchly) | Violated |
| Greenhouse–Geisser ε | |
| RM-ANOVA (GG-corrected) | |
| Effect size (partial η²) | |
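The Greenhouse–Geisser row refers to the standard sphericity correction for repeated-measures ANOVA. A minimal sketch, assuming a complete subjects × iterations score matrix, computes ε = tr(S*)² / ((k − 1) tr(S*²)) from the double-centred sample covariance S* of the k repeated measures and scales both degrees of freedom by ε. The function names are ours, and the data in any usage would be the cohort's own scores. ε equals 1 under sphericity and cannot fall below 1/(k − 1).

```python
def sample_covariance(data):
    """k x k sample covariance of the columns of an n x k matrix (n subjects, k iterations)."""
    n, k = len(data), len(data[0])
    means = [sum(row[j] for row in data) / n for j in range(k)]
    return [
        [sum((row[a] - means[a]) * (row[b] - means[b]) for row in data) / (n - 1) for b in range(k)]
        for a in range(k)
    ]


def gg_epsilon_from_cov(cov):
    k = len(cov)
    row_means = [sum(r) / k for r in cov]  # equal to column means because cov is symmetric
    grand = sum(row_means) / k
    # Double-centring keeps only the variation captured by the k - 1 within-subject contrasts.
    centred = [[cov[i][j] - row_means[i] - row_means[j] + grand for j in range(k)] for i in range(k)]
    trace = sum(centred[i][i] for i in range(k))
    trace_sq = sum(x * x for r in centred for x in r)  # tr(S*^2) for a symmetric S*
    return trace ** 2 / ((k - 1) * trace_sq)


def gg_corrected_df(data):
    """Greenhouse-Geisser epsilon and corrected (df_effect, df_error) for a one-way RM-ANOVA."""
    n, k = len(data), len(data[0])
    eps = gg_epsilon_from_cov(sample_covariance(data))
    return eps, eps * (k - 1), eps * (k - 1) * (n - 1)
```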

| | Gain LMM | Linear NLS RMSE | Logistic NLS RMSE |
|---|---|---|---|
| Mean ± SD | 0.79 ± 0.04 | 0.055 ± 0.006 | 0.051 ± 0.005 |

| | Gain | Linear NLS MAE | Logistic NLS MAE |
|---|---|---|---|
| Mean ± SD | — | 0.042 ± 0.005 | 0.039 ± 0.004 |
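The error metrics in the fit table follow the usual definitions: RMSE is the square root of the mean squared residual and MAE is the mean absolute residual, here apparently on the normalized score scale. The sketch assumes each Mean ± SD entry summarizes one value per cross-validation fold, which the table itself does not state; the helper names are ours.

```python
import math
import statistics


def rmse(observed, predicted):
    return math.sqrt(sum((o - p) ** 2 for o, p in zip(observed, predicted)) / len(observed))


def mae(observed, predicted):
    return sum(abs(o - p) for o, p in zip(observed, predicted)) / len(observed)


def summarize_folds(folds, metric):
    """Mean and sample SD of `metric` over folds given as (observed, predicted) pairs."""
    values = [metric(obs, pred) for obs, pred in folds]
    return statistics.fmean(values), statistics.stdev(values)
```

RMSE is never smaller than MAE, and a wide gap between the two points to a few large residuals rather than uniformly small errors.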

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).