Submitted:

06 October 2025

Posted:

07 October 2025


Abstract

Keywords:

1. Introduction

- We develop an innovative IDS capable of detecting network threats with a 98.5% accuracy rate.

- We successfully utilize and interpret complex semantic features for enhanced intrusion detection.

- We comprehensively evaluate our IDS using a large-scale, real-world dataset from China Mobile, demonstrating the system’s effectiveness and practical applicability.

2. Background: NLP and Advanced Embedding Techniques

2.1. Embedding in NLP

2.2. Self-Supervised Learning in Embedding Generation

2.3. From Embedding to IDS

3. System Design

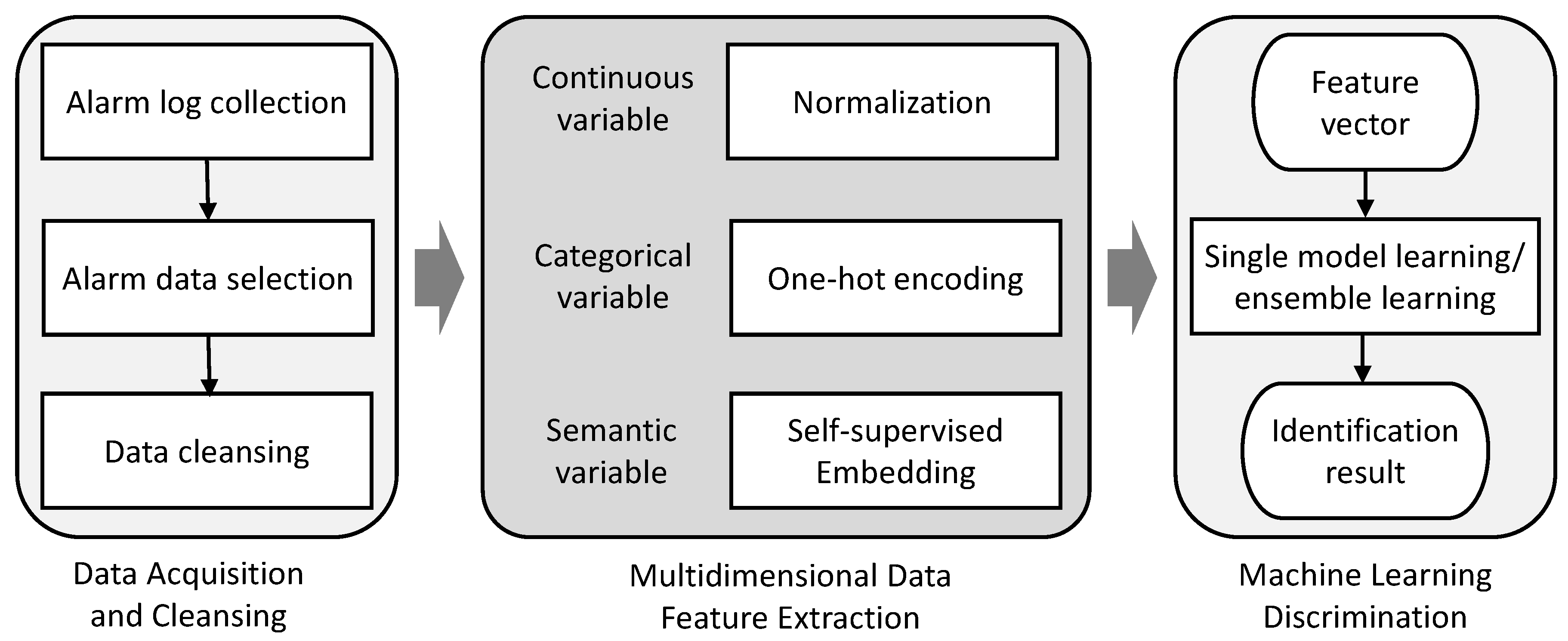

3.1. Design Overview

| Field | Value |
|---|---|
| Generation Time | 2023/3/23 11:26 |
| End Time | 2023/3/23 11:26 |
| Source IP | 124.220.174.243 |
| Source Port | 58306 |
| Destination IP | 120.199.235.18, Zhejiang, China |
| Destination Port | 37020 |
| Device Source | 192.168.2.1 |
| Occurrence Count | 1 |
| Request Message | GET / HTTP/1.1 Host: zzjdhgl.zj.chinamobile.com:37020 User-Agent: Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/34.0.1847.137 Safari/4E423F Connection: close Accept: image/gif, image/x-xbitmap, image/jpeg, image/pjpeg, image/png, */* Accept-Charset: iso-8859-1,utf-8;q=0.9,*;q=0.1 Content-Type:%[#context[’ISOP#@!STARTcom.opensymphony.xwork2ISOP#@!END.dispatcher.HttpServletResponse’].addHeader(’X-Hacker’,’Bounty Plz’)].multipart/form-data Accept-Encoding: gzip |

3.2. Feature Selection

- Event start/end time: Records the start and end times of events for temporal analysis and comparison.

- Source and destination port numbers: Provide information related to network connections, aiding the analysis and discrimination of activities on specific ports.

- Total number of event occurrences: Counts the number of times each event occurs over a period to reveal the frequency and trend of the event.

- Source IP and geospatial context: Records the source IP address of the event and derives geospatial context features from it (e.g., country, city, AS number), so that potential attack sources can be tracked and identified against geographical behavioral baselines.

- Request message: Divides the request message into various aspects, including content length, method, path, content type, user information, and main body. These details offer a comprehensive description of the request, aiding in the analysis and identification of potential threats.
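The decomposition of the request message can be sketched as follows. This is a minimal illustration, not the authors' parser: it assumes the raw message keeps its HTTP line breaks (the table above shows it flattened onto one line), and the function name `parse_request` and the returned keys are hypothetical.

```python
from typing import Dict, Union


def parse_request(raw: str) -> Dict[str, Union[str, int]]:
    """Split a raw HTTP request into the fields listed in the feature selection."""
    # Normalise line endings, then separate head from body at the first blank line.
    normalized = raw.replace("\r\n", "\n")
    head, _, body = normalized.partition("\n\n")
    lines = head.split("\n")

    # Request line has the form: METHOD SP PATH SP VERSION
    parts = lines[0].split(" ")
    method = parts[0] if parts else ""
    path = parts[1] if len(parts) > 1 else ""

    # Header names are case-insensitive, so store them in lower case.
    headers: Dict[str, str] = {}
    for line in lines[1:]:
        name, sep, value = line.partition(":")
        if sep:
            headers[name.strip().lower()] = value.strip()

    # Fall back to the measured body length when the header is absent or malformed.
    declared = headers.get("content-length", "")
    content_length = int(declared) if declared.isdigit() else len(body)

    return {
        "method": method,
        "path": path,
        "content_type": headers.get("content-type", ""),
        "content_length": content_length,
        "user_agent": headers.get("user-agent", ""),
        "body": body,
    }


# Hypothetical request, loosely modelled on the sample alarm record.
raw = (
    "GET / HTTP/1.1\r\n"
    "Host: example.com:37020\r\n"
    "User-Agent: Mozilla/5.0\r\n"
    "Content-Type: multipart/form-data\r\n"
    "\r\n"
)
fields = parse_request(raw)
```

Each returned field can then be routed to the extraction step that matches its type: numerical, categorical, or free text.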

3.3. Multidimensional Feature Extraction

- Continuous Variables: Features that take numerical values over a continuous range. In alarm event characterization, these include the event duration, total occurrence count, source port number, and request content length. Each is stored as a numerical value in the dataset.

- Categorical Variables: Features with a finite, discrete set of options, commonly expressed as labels or categories. In alarm event characterization, examples include the request type (e.g., GET/POST), content type (e.g., text/html), user agent (e.g., Mozilla), IP address, and geolocation, each of which is confined to a predetermined set of values in the dataset.

- Semantic Variables: Semantic variables refer to data entries rich in semantic content, like URL paths and request body strings. These variables are presented as specific strings within the dataset, which resist straightforward enumeration and pose substantial challenges for feature extraction and representation.
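To make the distinction between the first two variable types concrete, the sketch below encodes one continuous column and one categorical column. The encodings used here (z-score standardization and one-hot encoding) are assumptions chosen for illustration; the text above does not state which encodings the system applies, and the sample values are invented.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def standardize(values: Sequence[float]) -> List[float]:
    """Z-score a continuous column (assumed encoding, not confirmed by the paper)."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # A constant column carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def one_hot(values: Sequence[str]) -> List[List[int]]:
    """One-hot encode a categorical column over its observed vocabulary."""
    vocab = sorted(set(values))
    index = {label: i for i, label in enumerate(vocab)}
    return [[1 if index[v] == i else 0 for i in range(len(vocab))] for v in values]


# Hypothetical columns: event durations (seconds) and request methods.
durations = [0.0, 2.0, 4.0]
methods = ["GET", "POST", "GET"]

scaled = standardize(durations)
encoded = one_hot(methods)
```

Semantic variables such as URL paths do not fit either helper, because their value space cannot be enumerated in advance.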

3.3.1. Continuous Variable Feature Extraction

3.3.2. Categorical Variable Feature Extraction

3.3.3. Semantic Variable Feature Extraction

- Embedding Model Backbone. The Transformer, as conceptualized by Vaswani et al. [14] in the foundational paper "Attention is All You Need," encodes contextual information for input tokens. Input vectors $\{x_i\}_{i=1}^{|x|}$ are assembled into an initial matrix $H^0 = [x_1, \ldots, x_{|x|}]$. The transformation process through successive Transformer layers is defined by the relation $H^l = \mathrm{Transformer}_l(H^{l-1})$, $l \in [1, L]$. Here, $L$ denotes the total number of Transformer layers, with $H^L = [h_1^L, \ldots, h_{|x|}^L]$ representing the output of the final layer. Each element $h_i^L$ serves as a contextualized representation of the corresponding input $x_i$. A Transformer layer is composed of a self-attention sub-layer paired with a fully connected feed-forward network. This architecture is fortified by residual connections, as introduced by He et al. [15], and is followed by layer normalization techniques proposed by Ba et al. [16].

- Learning Embedding through Self-Supervised Learning. Employing self-supervised contrastive learning objectives within siamese and triplet network structures [19], the embedding model is fine-tuned on a dataset of 1 billion sentence pairs. The contrastive learning task challenges the model to discern the correct pairing from a randomly selected sentence set. The product of this learning process is a suite of dense, 384-dimensional vectors that embody the rich semantic details of the sentences [10]. The fine-tuned model is available on Hugging Face [20].

- Embedding Dimension Compression. The semantic content of the embeddings often resides within a lower-dimensional manifold of the 384-dimensional space. To exploit this, we apply Principal Component Analysis (PCA) for dimensionality reduction [21]. PCA retains the directions of greatest variance, compacting the high-dimensional embeddings into a more manageable form for the downstream ML classifiers while preserving the most salient information in the semantic variables.
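The compression step can be illustrated with a small, dependency-free PCA. This is a sketch under stated assumptions rather than the system's implementation: it finds components by power iteration with deflation, uses toy 3-dimensional rows in place of 384-dimensional embeddings, and the target dimension `k` is a placeholder because the text does not state the value used. In practice a library routine such as scikit-learn's `PCA` would be the more typical choice.

```python
import math
import random
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def center(rows: Matrix) -> Matrix:
    """Subtract the per-column mean from every row."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    return [[r[j] - means[j] for j in range(d)] for r in rows]


def covariance(centered: Matrix) -> Matrix:
    """Sample covariance matrix of already-centered rows."""
    n, d = len(centered), len(centered[0])
    return [[sum(r[a] * r[b] for r in centered) / (n - 1) for b in range(d)]
            for a in range(d)]


def mat_vec(m: Matrix, v: Vector) -> Vector:
    return [sum(row[j] * v[j] for j in range(len(v))) for row in m]


def top_component(cov: Matrix, iters: int = 200, seed: int = 0) -> Tuple[Vector, float]:
    """Leading eigenvector and eigenvalue of cov via power iteration."""
    rng = random.Random(seed)
    v = [rng.uniform(-1.0, 1.0) for _ in cov]
    norm = math.sqrt(sum(x * x for x in v))
    v = [x / norm for x in v]
    for _ in range(iters):
        w = mat_vec(cov, v)
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            break  # no variance left in any direction
        v = [x / norm for x in w]
    eigenvalue = sum(a * b for a, b in zip(v, mat_vec(cov, v)))
    return v, eigenvalue


def pca(rows: Matrix, k: int) -> Tuple[Matrix, Matrix]:
    """Project rows onto their top-k principal components."""
    centered = center(rows)
    cov = covariance(centered)
    d = len(cov)
    components: Matrix = []
    for _ in range(k):
        v, lam = top_component(cov)
        components.append(v)
        # Deflate: remove the variance explained by the component just found.
        cov = [[cov[a][b] - lam * v[a] * v[b] for b in range(d)] for a in range(d)]
    projected = [[sum(x * c for x, c in zip(r, comp)) for comp in components]
                 for r in centered]
    return projected, components


# Toy "embeddings" that all lie on one line, so a single component suffices.
rows = [[1.0, 2.0, 0.0], [2.0, 4.0, 0.0], [3.0, 6.0, 0.0], [4.0, 8.0, 0.0]]
projected, components = pca(rows, k=1)
```

Because the toy rows vary along a single direction, one component captures all of their variance; real embeddings would need a larger `k`, chosen for example by the fraction of variance retained.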

3.4. Machine Learning Discrimination Algorithms

3.4.1. ML Classifiers

- Support Vector Machine: SVMs operate on the principle of finding a hyperplane in an N-dimensional space that distinctly classifies the data points. The optimal hyperplane is determined by the equation $\min_{w,b} \frac{1}{2}\|w\|^2$ subject to $y_i(w^\top x_i + b) \geq 1$ for all $i$. Here, $w$ represents the weight vector perpendicular to the hyperplane, $b$ is the bias that adjusts the hyperplane’s position, $x_i$ denotes the feature vectors, $y_i$ are the corresponding class labels, and $\|w\|$ is the norm of the weight vector, indicating the inverse of the margin.

- Linear Regression Classifier: This classifier applies LR to classification problems. It predicts the target by fitting the best linear relationship between the feature vector and the target label.

- Naive Bayes Classifier: Based on Bayes’ Theorem, this classifier assumes the independence of features and calculates the probability of a label given a set of features using the formula $P(Y \mid X) = \frac{P(X \mid Y)\,P(Y)}{P(X)}$. Here, $P(Y \mid X)$ is the probability of label $Y$ given feature set $X$, $P(X \mid Y)$ is the likelihood of feature set $X$ given label $Y$, $P(Y)$ is the prior probability of label $Y$, and $P(X)$ is the prior probability of feature set $X$.

- K-Nearest Neighbors: KNN works on the principle of feature similarity, classifying a data point based on how closely it resembles the other points in the training set.

- Decision Tree: DTs employ a tree-like model of decisions. The goal is to learn decision rules inferred from data features, represented by the tree branches, leading to conclusions about the target value.

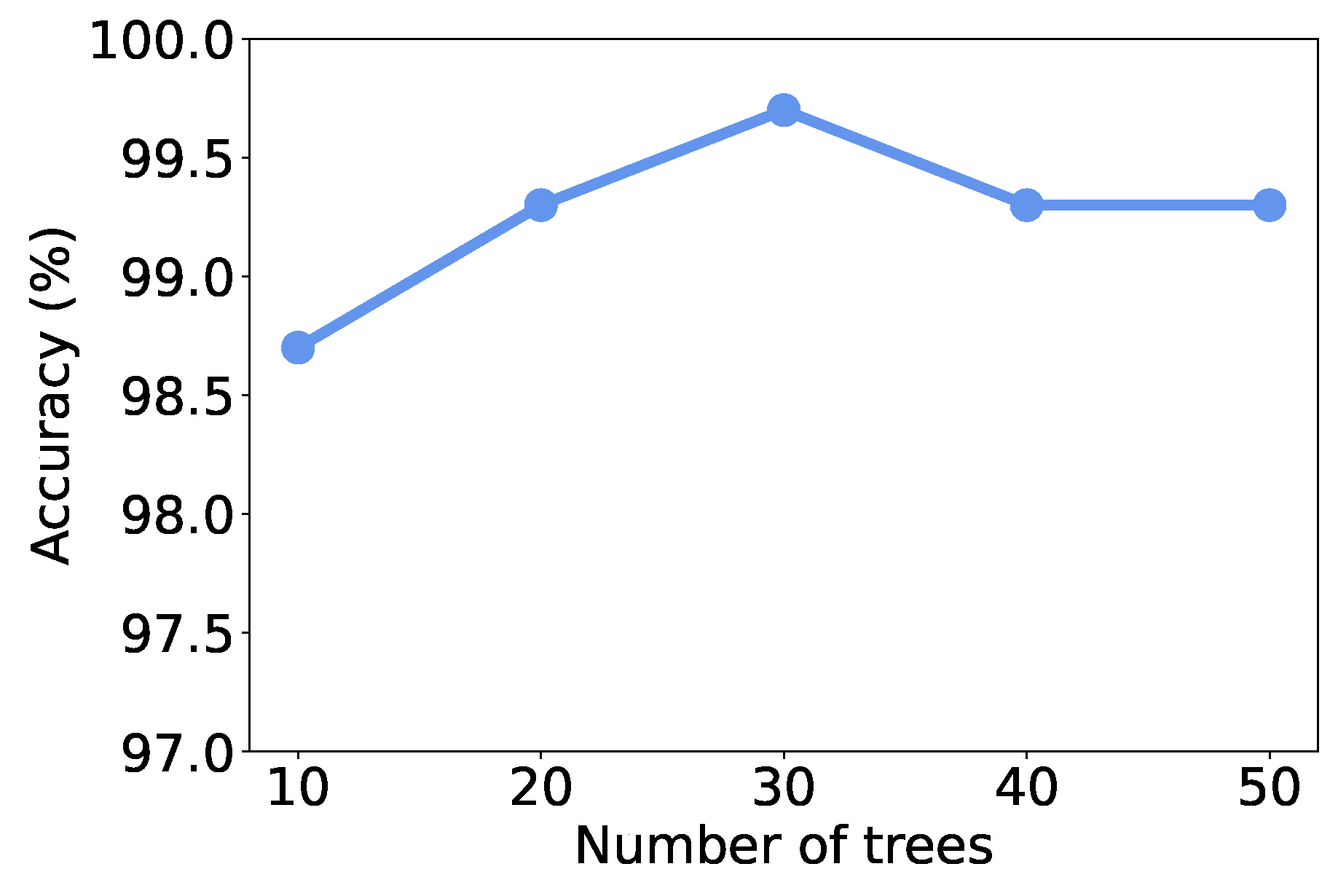

- Ensemble Classifier: Random Forest. To overcome the limitations of single-model ML algorithms, we explore the efficacy of ensemble learning algorithms. Such algorithms, particularly RF, construct a collective model from multiple DTs to mitigate bias and variance: $\hat{y}(x) = \frac{1}{N} \sum_{i=1}^{N} f_i(x)$, where $N$ is the number of trees and $f_i(x)$ is the $i$th DT’s prediction. The two sources of randomness in RFs, namely the selection of the bootstrap samples and the selection of the feature subsets for splitting, significantly reduce the variance of the model. This is achieved without substantially increasing the bias, leading to a model that is both accurate and robust against overfitting. In contrast to standard approaches, our implementation uses the probabilistic predictions of the individual classifiers and combines them through averaging rather than majority voting. This improves the accuracy of traffic discrimination, because a tree's confidence contributes to the final decision instead of only its vote.
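The difference between averaging probabilistic predictions and majority voting can be seen in a small example. The sketch below is illustrative only: the three per-tree probability vectors are invented, and the class meaning (0 = benign, 1 = attack) is an assumption made for readability.

```python
from collections import Counter
from typing import List, Sequence


def argmax(xs: Sequence[float]) -> int:
    """Index of the largest entry."""
    return max(range(len(xs)), key=xs.__getitem__)


def soft_vote(tree_probs: Sequence[Sequence[float]]) -> List[float]:
    """Average the class-probability vectors of all trees."""
    n = len(tree_probs)
    k = len(tree_probs[0])
    return [sum(p[c] for p in tree_probs) / n for c in range(k)]


def hard_vote(tree_probs: Sequence[Sequence[float]]) -> int:
    """Majority vote over each tree's most likely class."""
    votes = Counter(argmax(p) for p in tree_probs)
    return votes.most_common(1)[0][0]


# Hypothetical outputs of three trees for one sample: [P(benign), P(attack)].
tree_probs = [
    [0.55, 0.45],  # weakly benign
    [0.60, 0.40],  # weakly benign
    [0.05, 0.95],  # strongly attack
]

avg = soft_vote(tree_probs)
soft_label = argmax(avg)
hard_label = hard_vote(tree_probs)
```

Here two weakly confident trees outvote one highly confident tree under majority voting (label 0), whereas averaging lets the confident tree dominate (label 1).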

3.4.2. Data Balancing

4. Evaluation

4.1. Experimental Setup

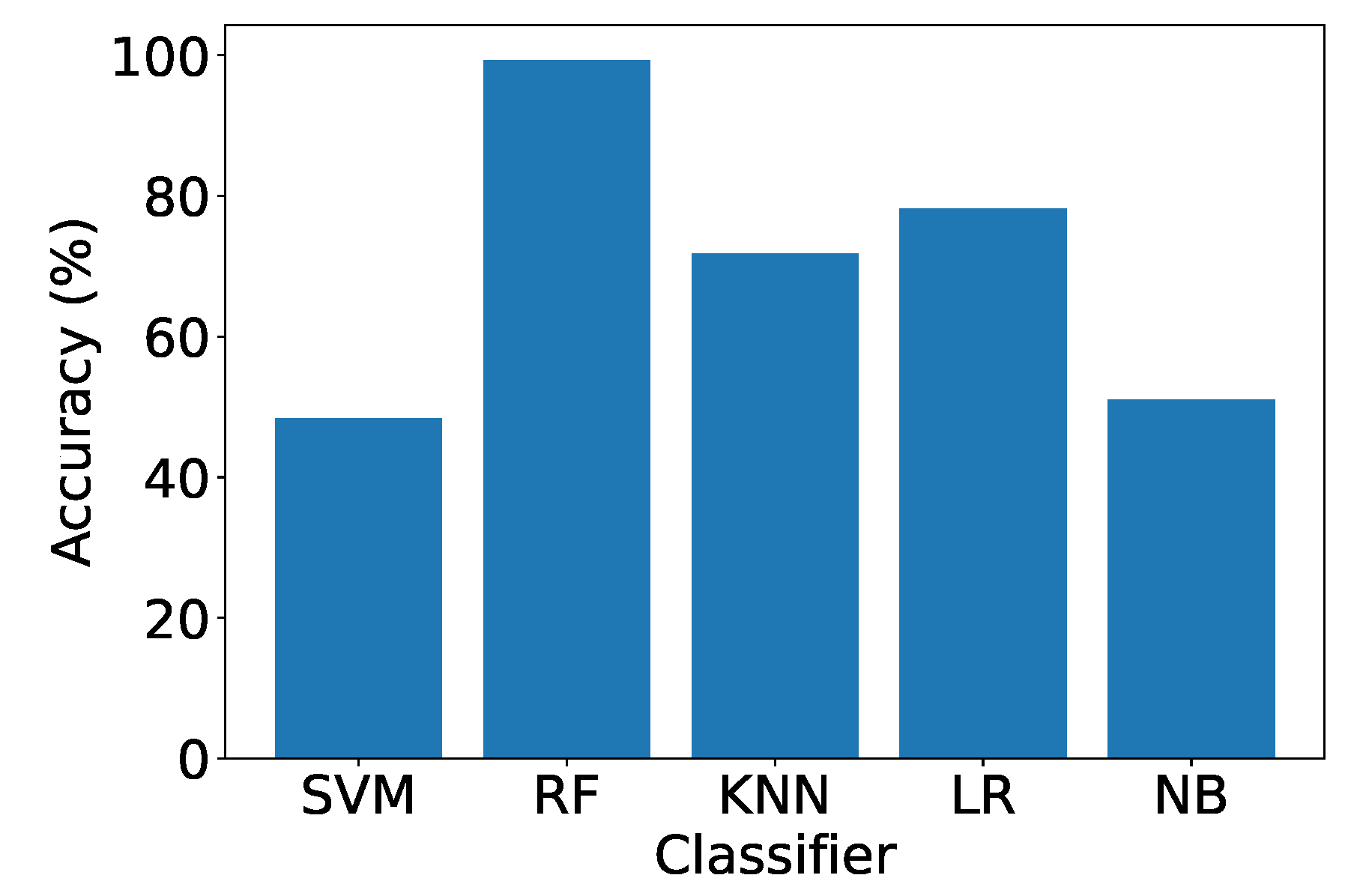

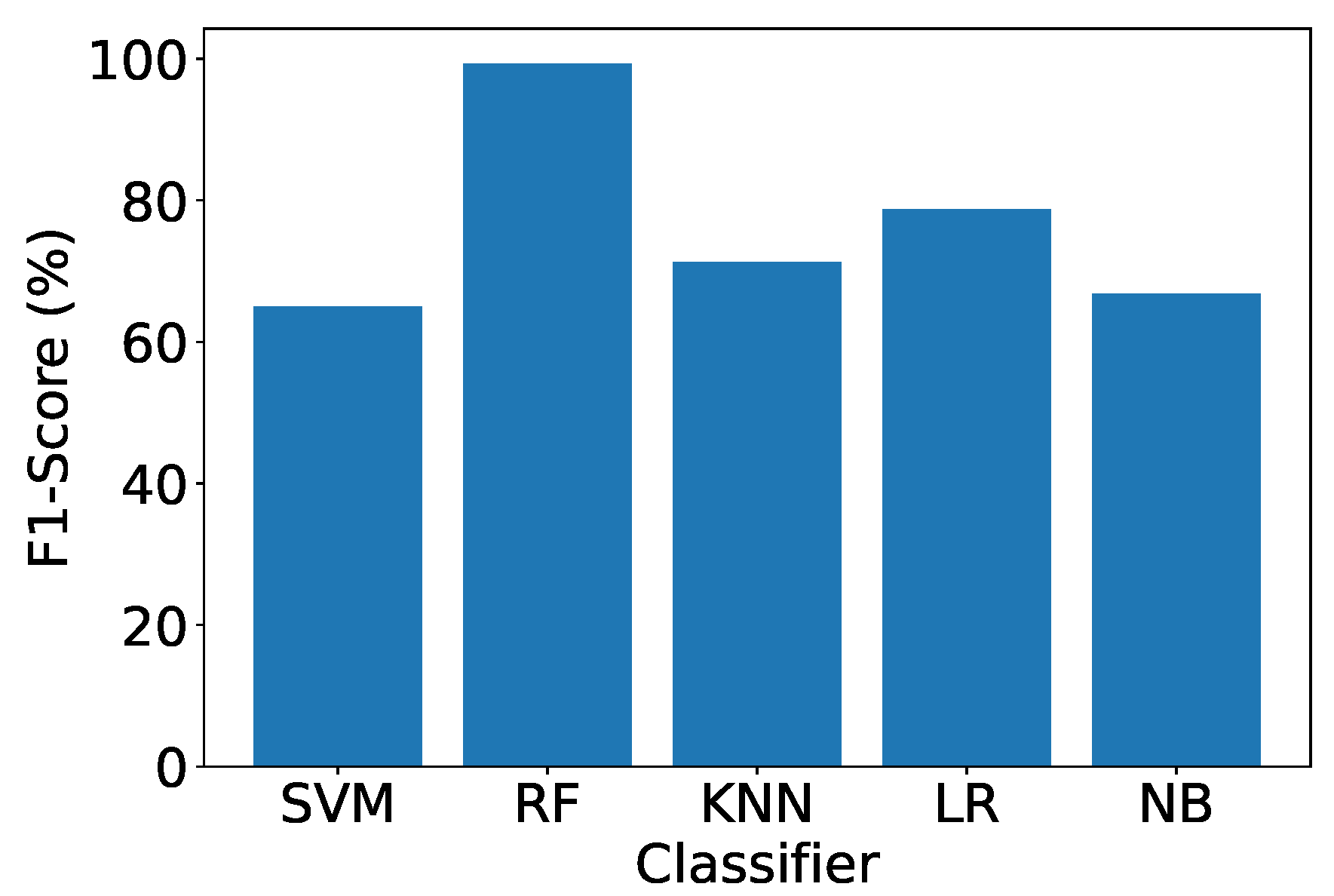

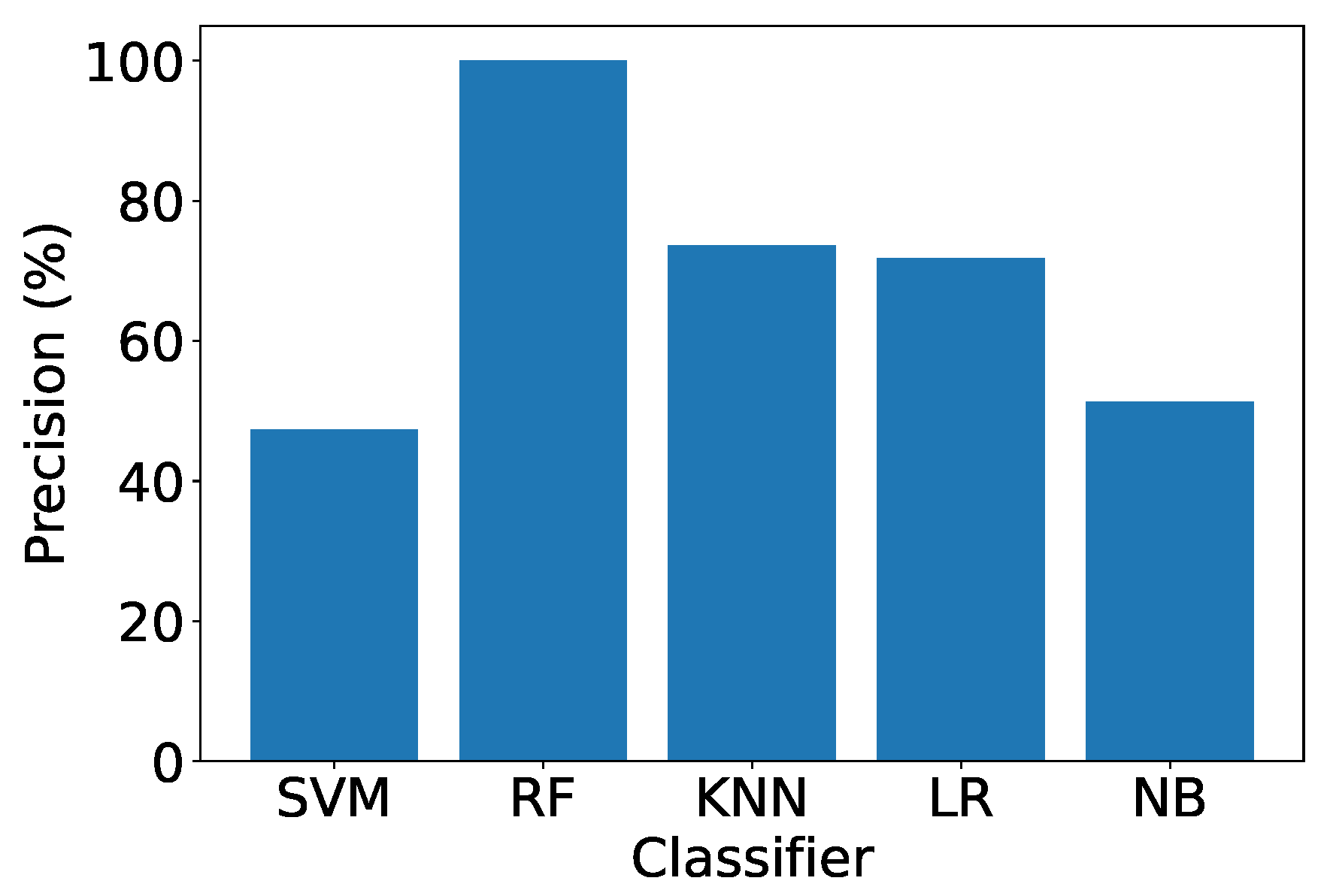

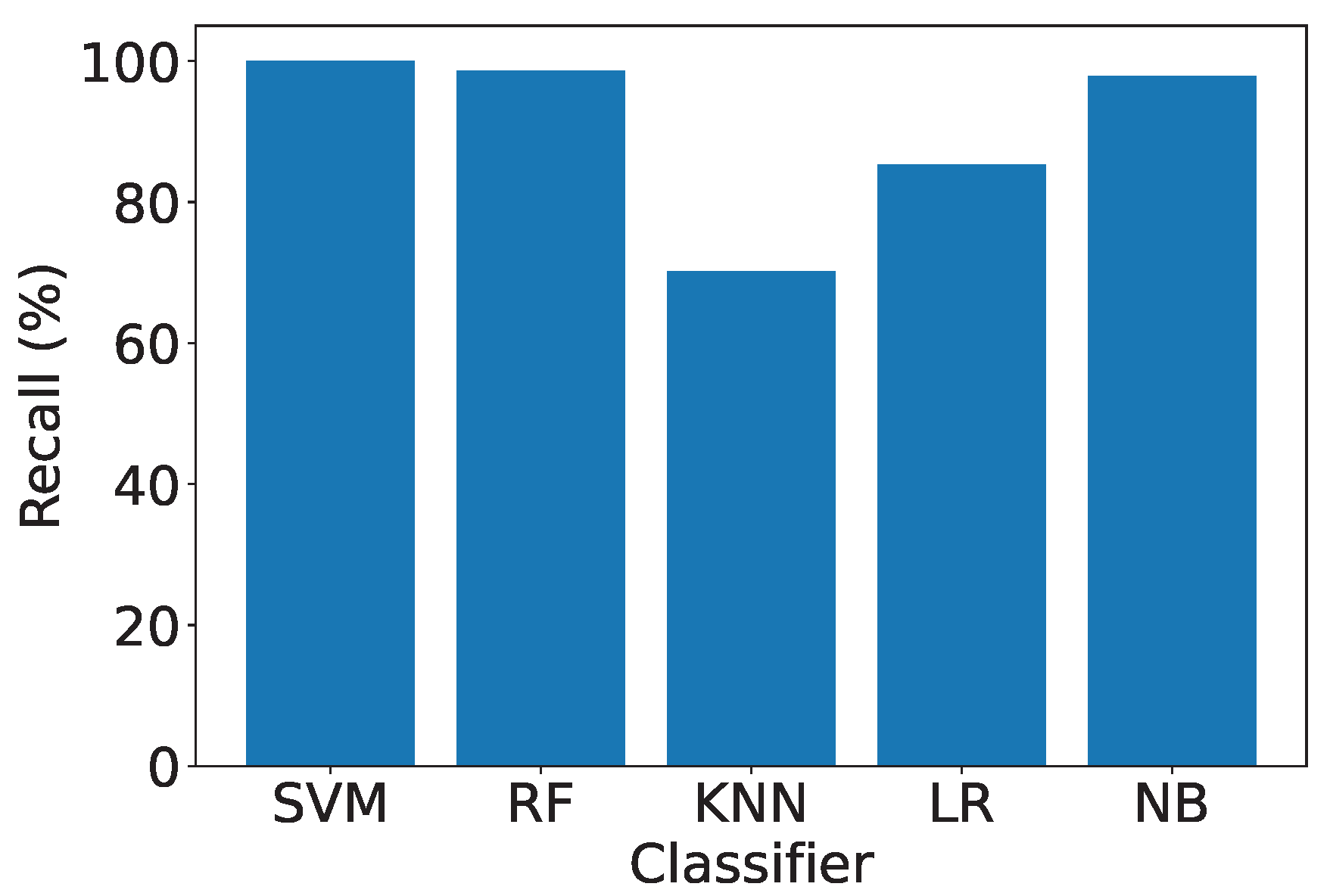

4.2. Overall Performance

- Ensemble Classifier Matching Our Feature Extraction Method. The RF classifier, an ensemble method, exhibits the best performance, aligning with the effectiveness of our feature extraction design. This emphasizes the compatibility and synergy between our feature extraction method and the ensemble classifier.

- Effectiveness of IDS with RF. With RF, our IDS achieves an accuracy exceeding 98.5%, demonstrating the efficacy of our feature extraction design and the overall system. The high accuracy and F1 Scores underscore the robustness and practical utility of our IDS in real-world deployment scenarios.
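For reference, the two metrics quoted above can be computed from a confusion matrix as in the sketch below. The labels are made-up toy data, not results from the paper, and treating class 1 as the positive (attack) class is an assumption.

```python
from typing import Sequence, Tuple


def confusion(y_true: Sequence[int], y_pred: Sequence[int],
              positive: int = 1) -> Tuple[int, int, int, int]:
    """Return (tp, fp, fn, tn) for a binary task."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != positive and p == positive)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p != positive)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t != positive and p != positive)
    return tp, fp, fn, tn


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    tp, fp, fn, tn = confusion(y_true, y_pred)
    return (tp + tn) / (tp + fp + fn + tn)


def f1_score(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    tp, fp, fn, _ = confusion(y_true, y_pred)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    # F1 is the harmonic mean of precision and recall.
    return 2 * precision * recall / (precision + recall)


# Toy labels: 1 = attack, 0 = benign (assumed convention).
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 0, 0, 1, 0, 1]

acc = accuracy(y_true, y_pred)
f1 = f1_score(y_true, y_pred)
```

Reporting F1 alongside accuracy matters when attack and benign samples are imbalanced, since accuracy alone can look high for a classifier that rarely flags attacks.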

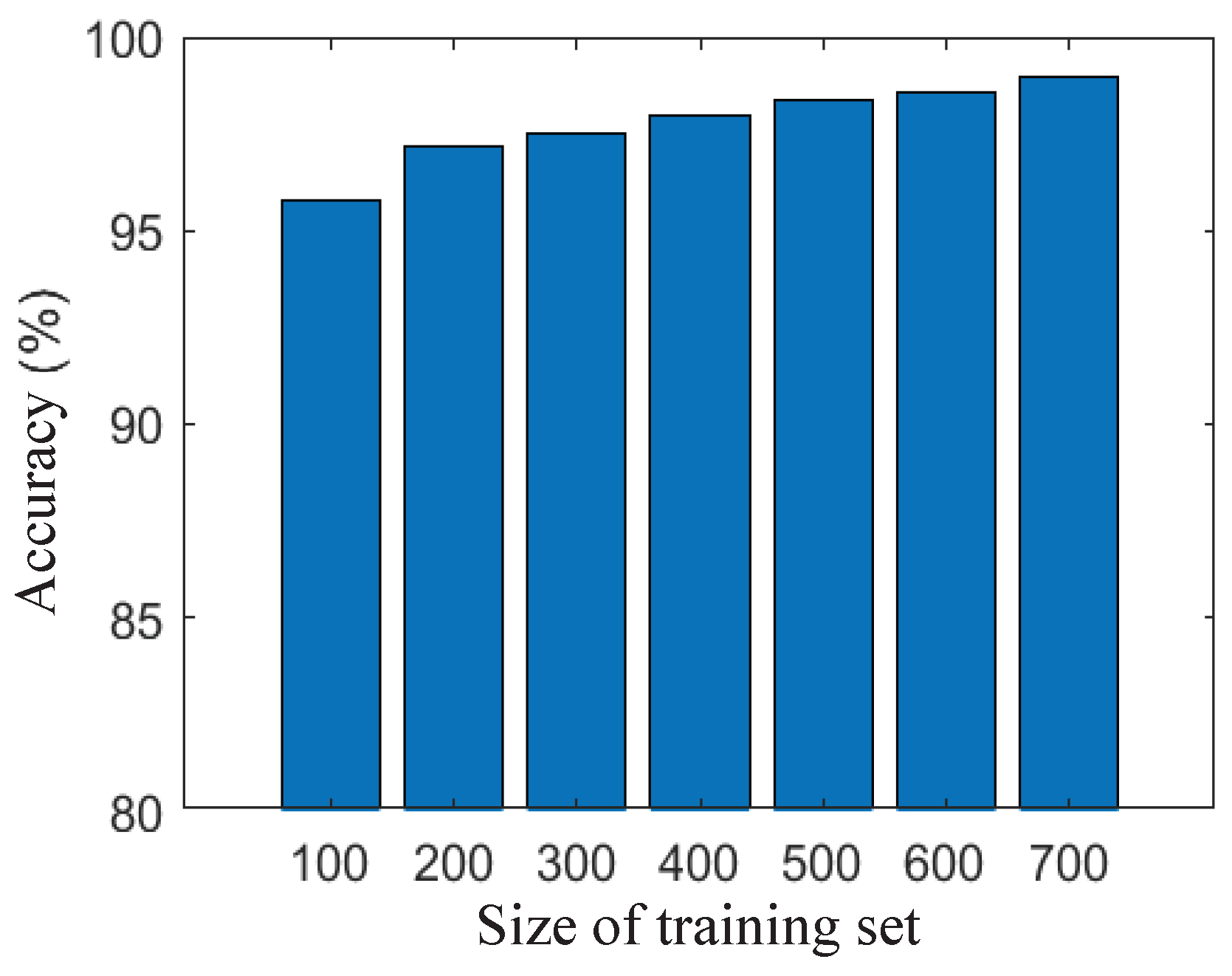

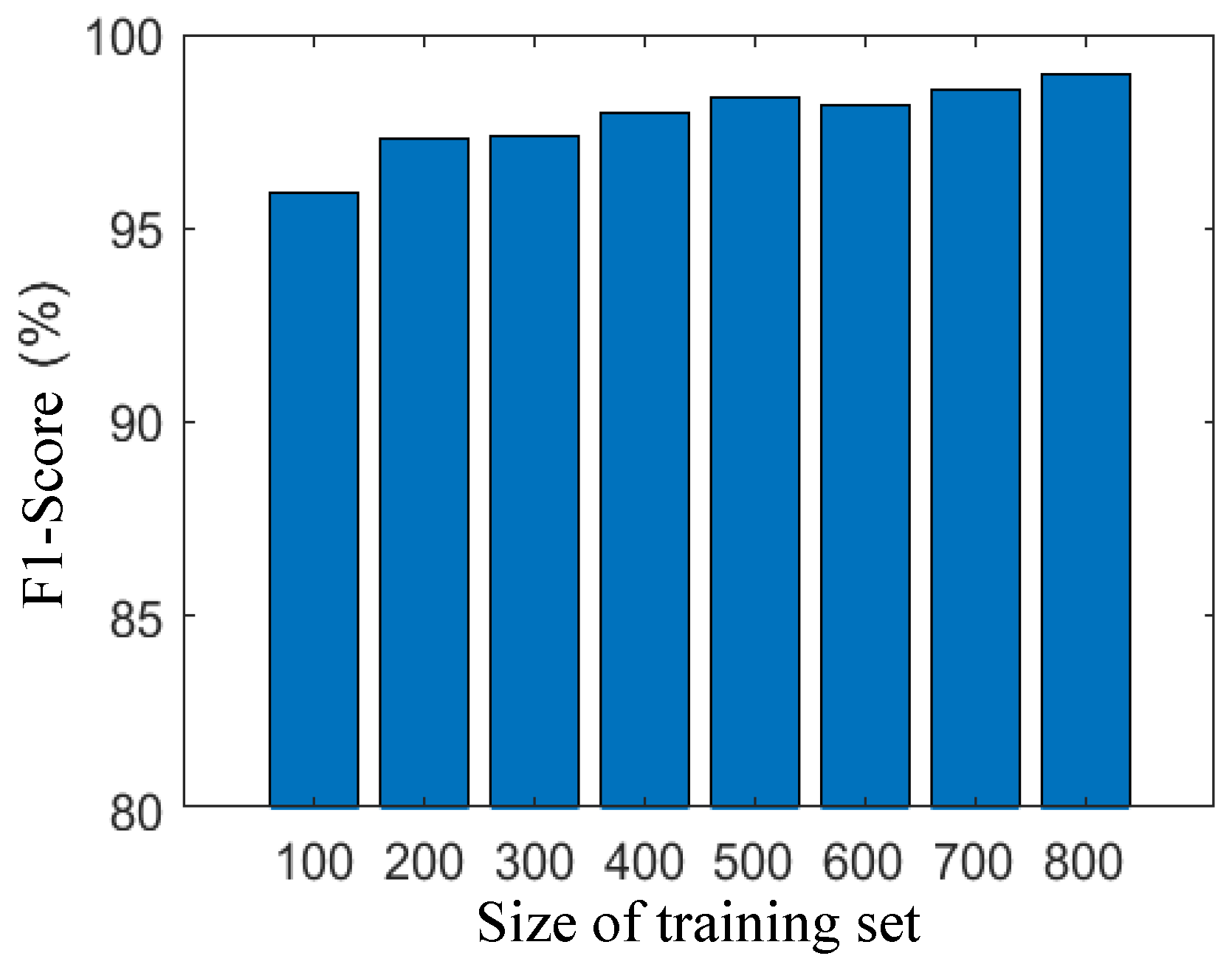

4.3. Effect of Size of Training Set

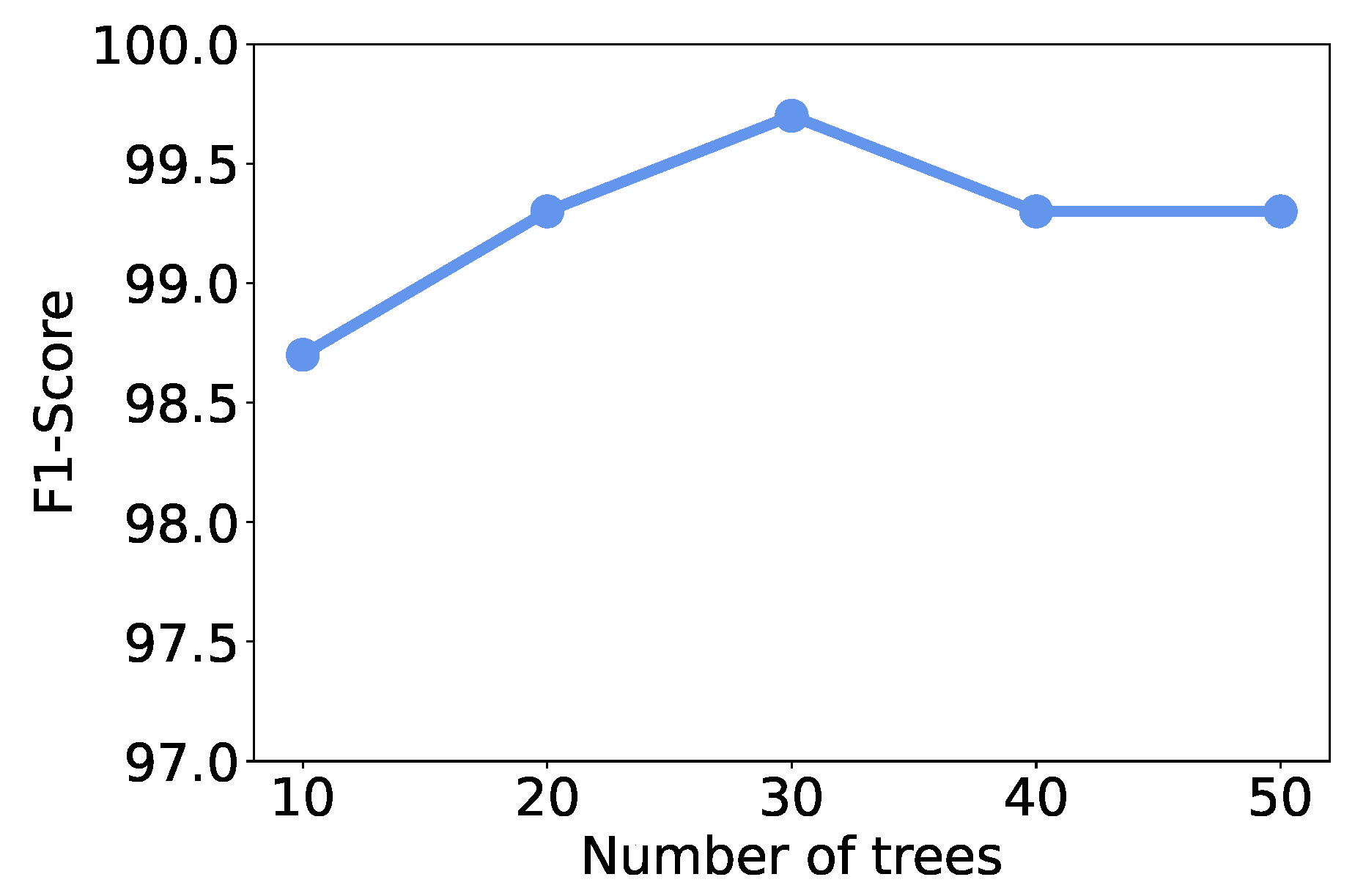

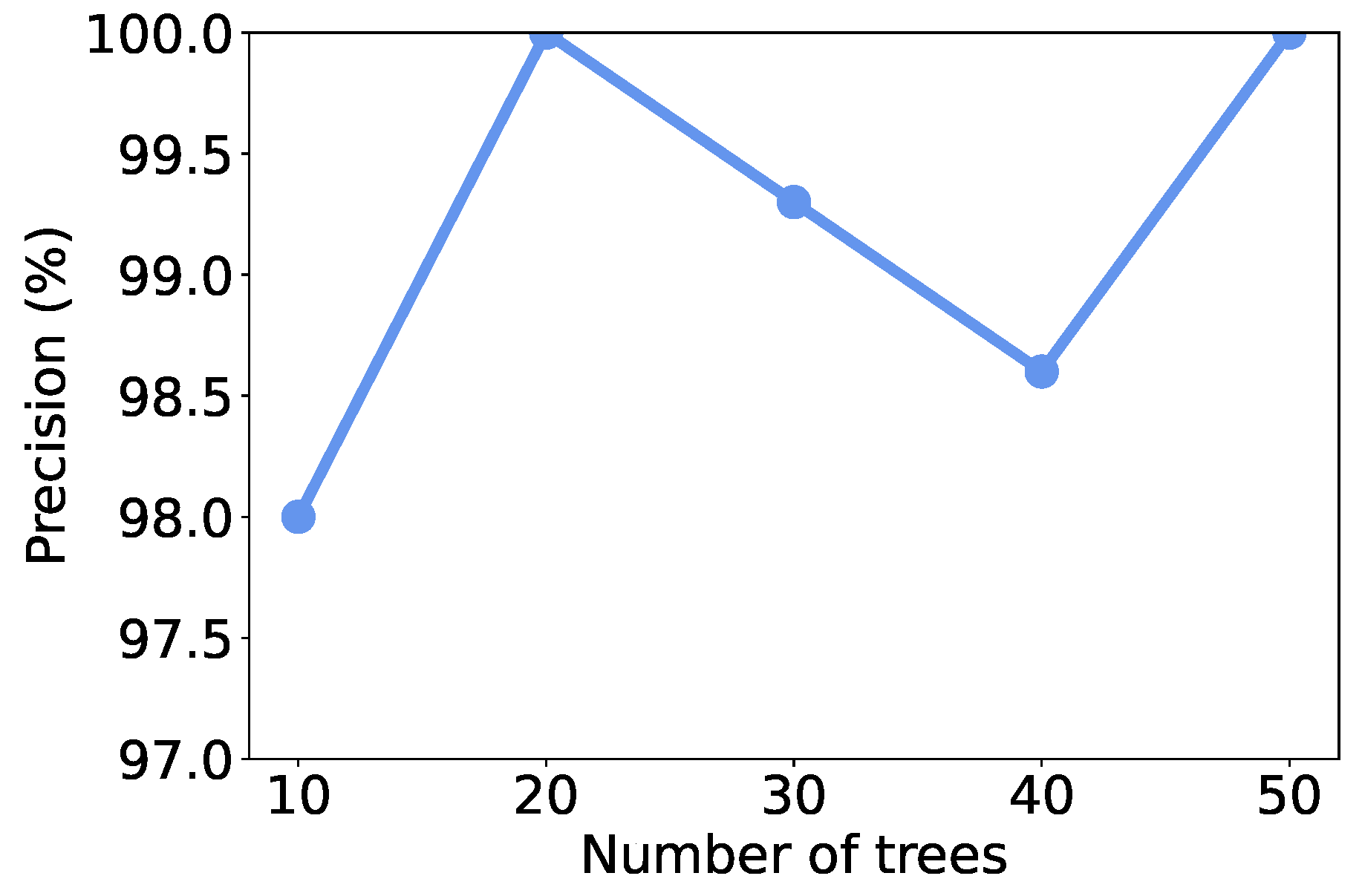

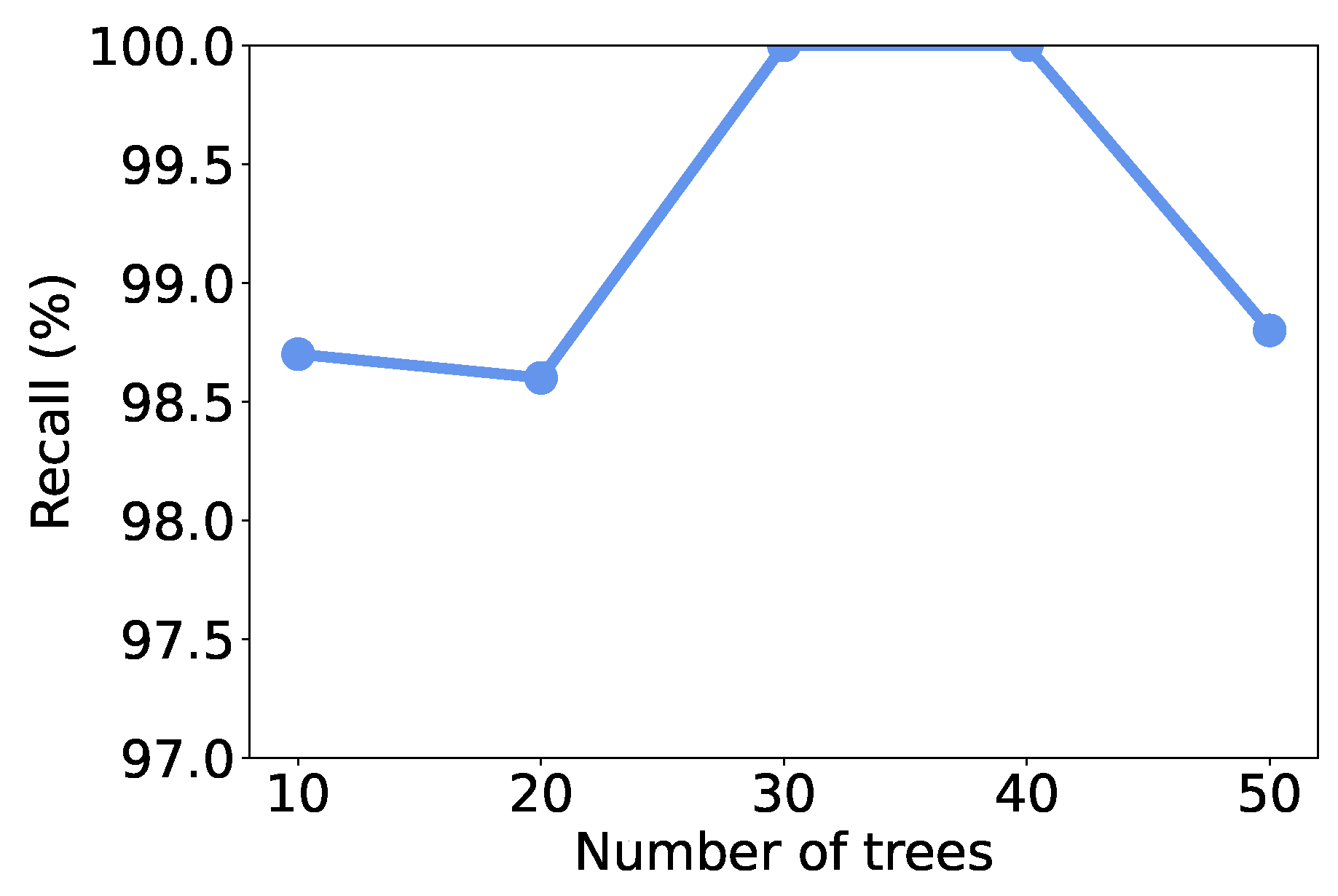

4.4. Effect of Number of Trees

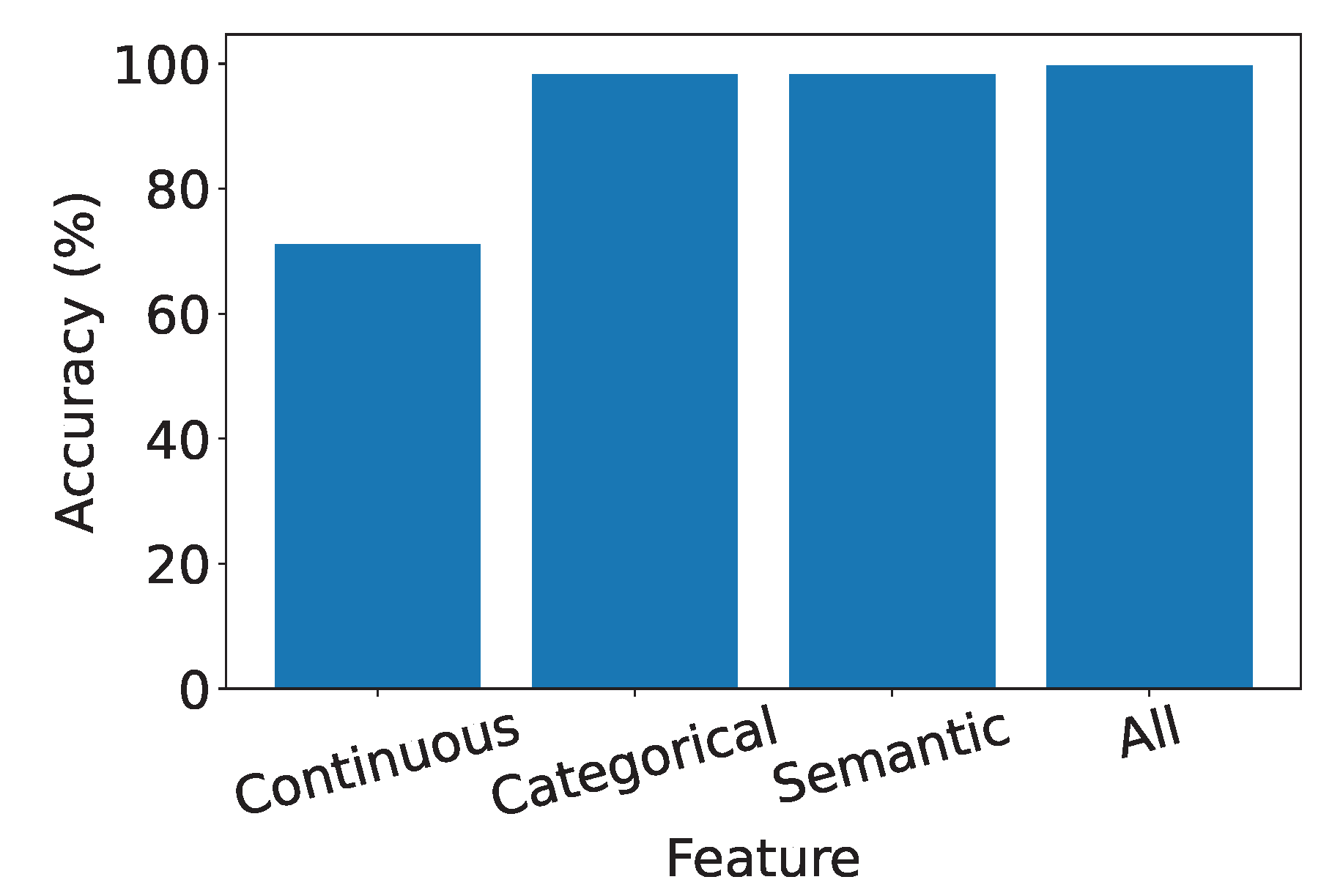

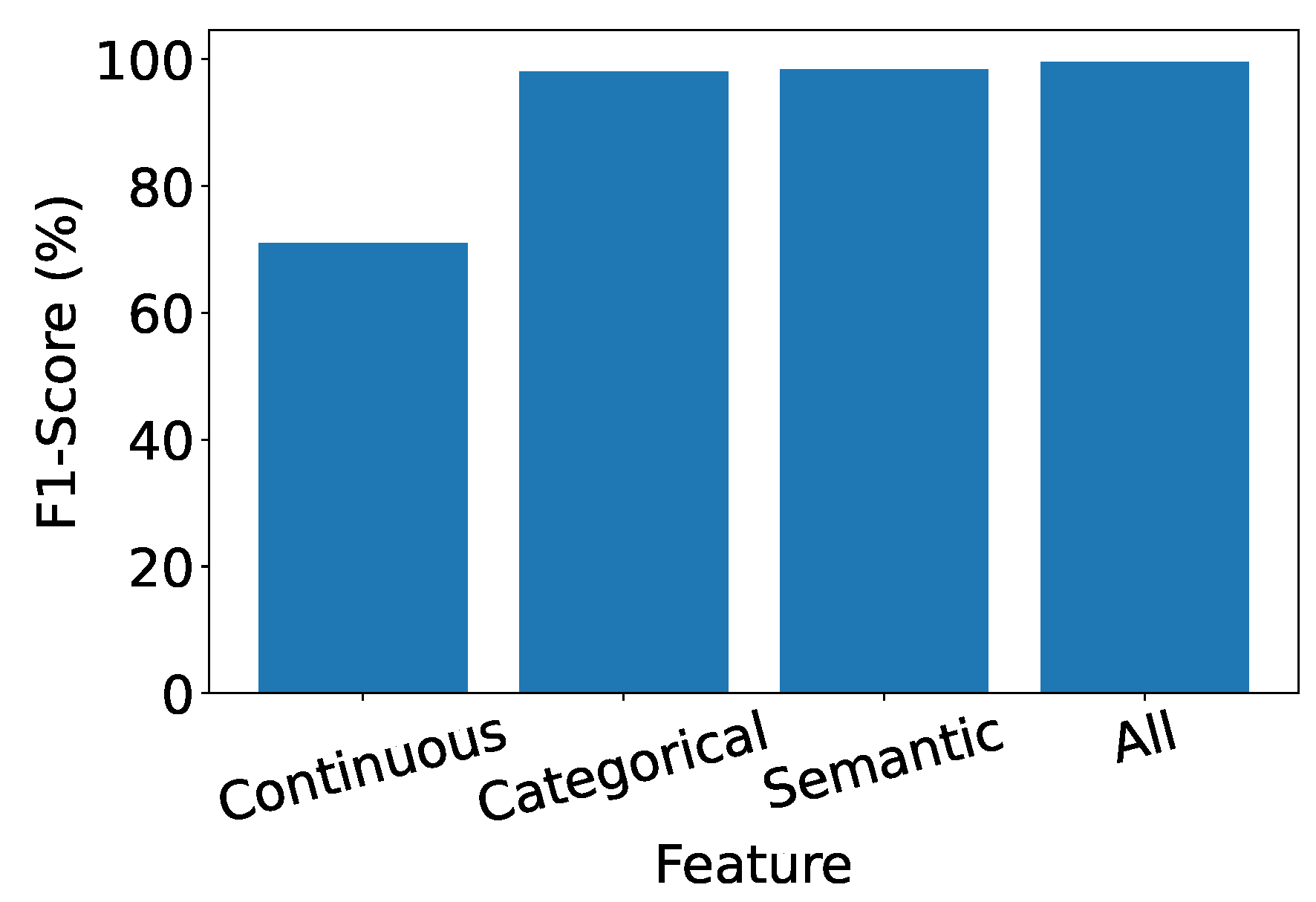

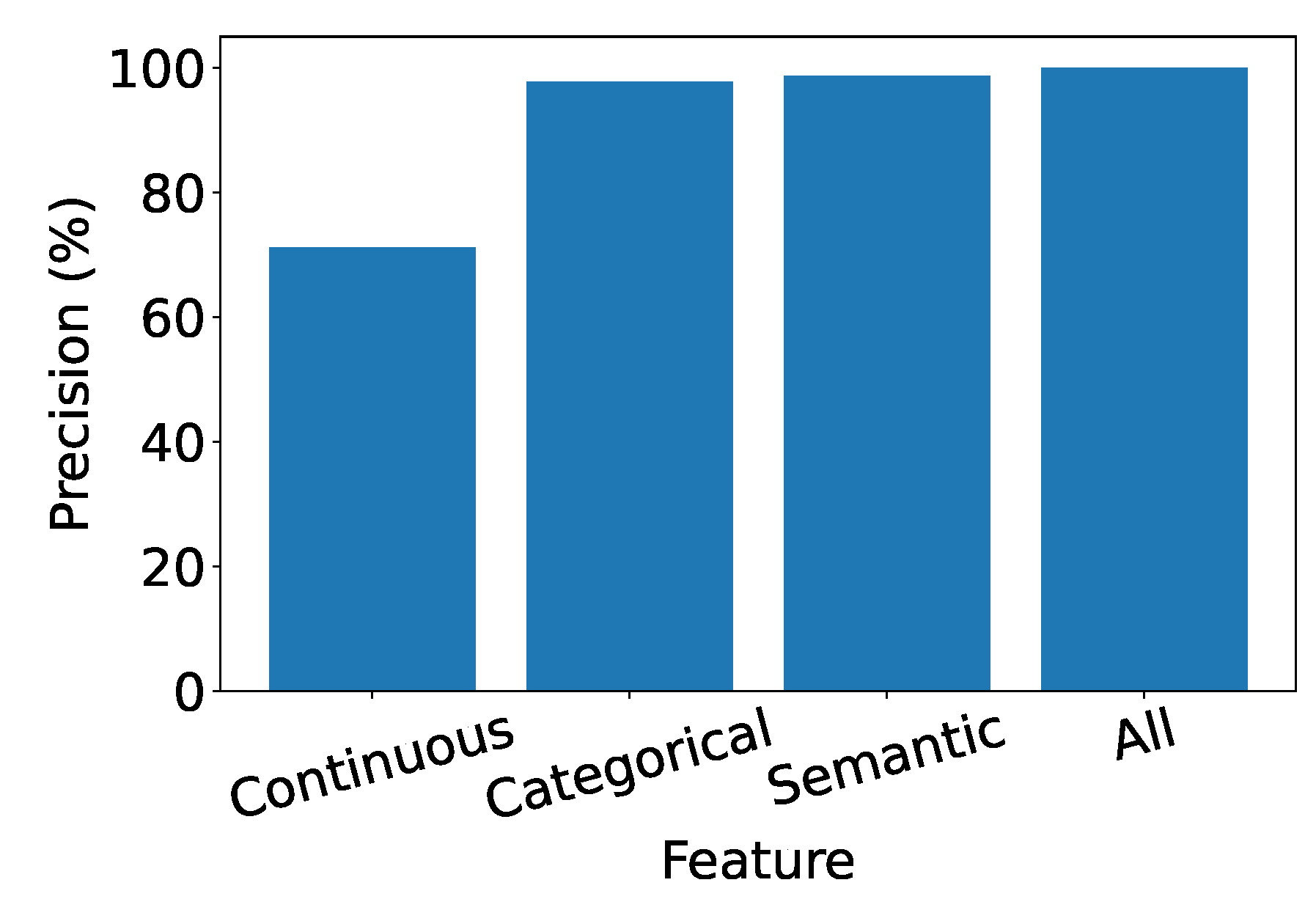

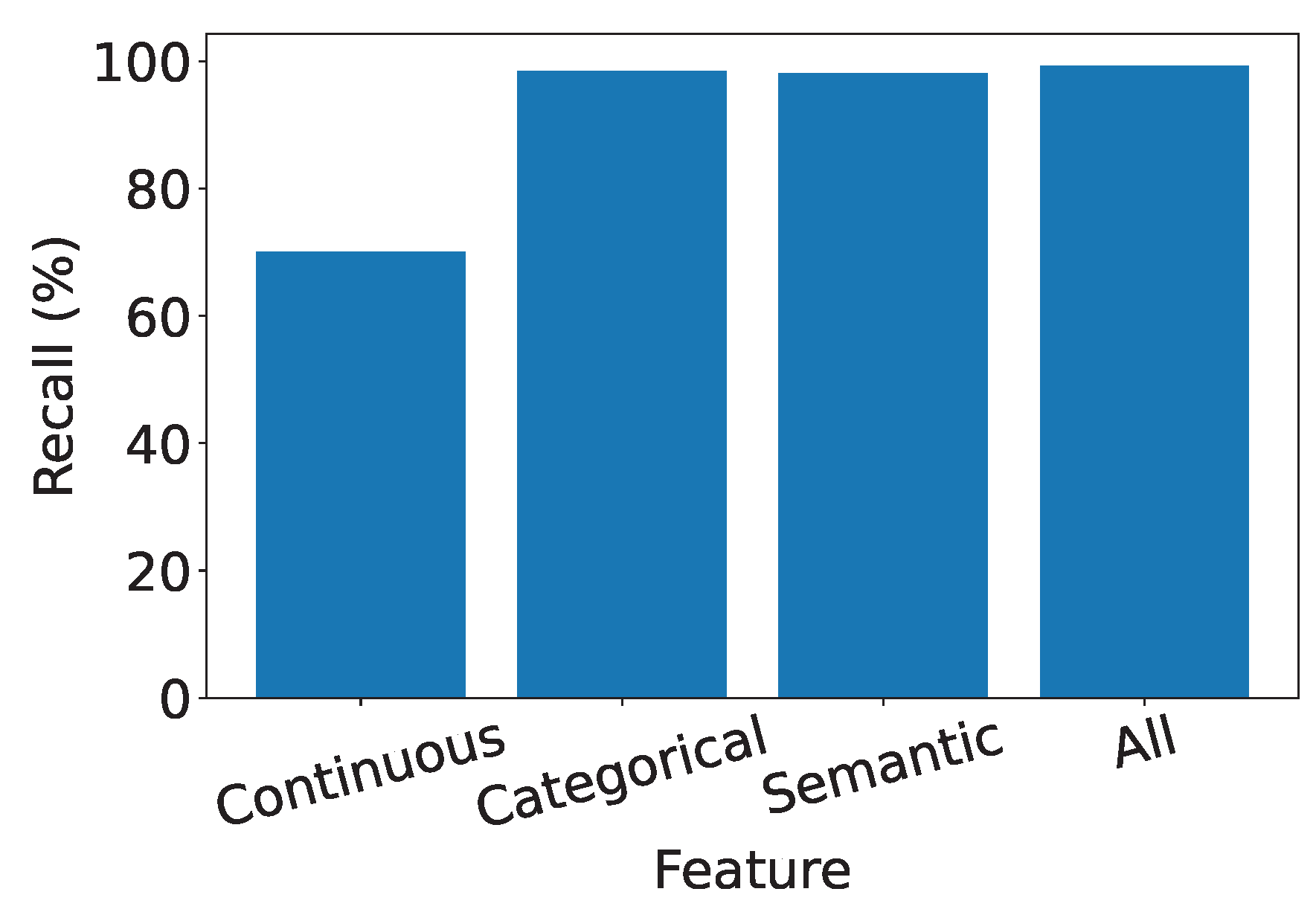

4.5. Exploring the Significance of Features in IDS

- Effectiveness of Semantic and Categorical Features.

  - Semantic Features: Achieve over 90% accuracy alone, highlighting their effectiveness in capturing meaningful information.

  - Categorical Features: Similarly demonstrate commendable performance, consistently surpassing 90%. This showcases the robustness of categorical features in characterizing network traffic.

- Effective Feature Fusion. When all types of features are employed collectively, our IDS achieves optimal performance. The seamless combination of our designed feature extraction methods for each type of feature contributes synergistically to the final result. This underscores our system’s capability to effectively utilize a diverse set of features for intrusion detection.

- Extra Robustness Through Feature Diversity. In scenarios where categorical features may lack robustness, the inclusion of robust semantic features provides additional guidance. This contributes to the overall resilience of the system, ensuring effective intrusion detection even in challenging conditions.

5. Related Work

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| IDS | Intrusion detection system |
| ML | Machine learning |
| ISP | Internet service provider |
| URL | Uniform resource locator |
| NLP | Natural language processing |
| IP | Internet protocol |
| CNN | Convolutional neural network |
| DNN | Deep neural network |
| LSTM | Long short-term memory |
| SVM | Support vector machine |
| RF | Random forest |
| NB | Naive Bayes |
| LR | Linear regression |
| KNN | K-nearest neighbors |
| DT | Decision tree |

References

- [1] Dina, A.S.; Siddique, A.B.; Manivannan, D. Effect of Balancing Data Using Synthetic Data on the Performance of Machine Learning Classifiers for Intrusion Detection in Computer Networks. IEEE Access 2022, 10, 96731–96747.

- [2] Liu, J.; Song, X.; Zhou, Y.; Peng, X.; Zhang, Y.; Liu, P.; Wu, D.; Zhu, C. Deep anomaly detection in packet payload. Neurocomputing 2022, 485, 205–218.

- [3] Naseer, S.; Saleem, Y.; Khalid, S.; Bashir, M.K.; Han, J.; Iqbal, M.M.; Han, K. Enhanced network anomaly detection based on deep neural networks. IEEE Access 2018, 6, 48231–48246.

- [4] Wang, W.; Sheng, Y.; Wang, J.; Zeng, X.; Ye, X.; Huang, Y.; Zhu, M. HAST-IDS: Learning hierarchical spatial-temporal features using deep neural networks to improve intrusion detection. IEEE Access 2017, 6, 1792–1806.

- [5] Bulajoul, W.; James, A.; Pannu, M. Network intrusion detection systems in high-speed traffic in computer networks. In Proceedings of the 2013 IEEE 10th International Conference on e-Business Engineering. IEEE, 2013, pp. 168–175.

- [6] Kim, T.Y.; Cho, S.B. Web traffic anomaly detection using C-LSTM neural networks. Expert Systems with Applications 2018, 106, 66–76.

- [7] Marín, G.; Casas, P.; Capdehourat, G. RawPower: Deep Learning based Anomaly Detection from Raw Network Traffic Measurements. In Proceedings of the ACM SIGCOMM 2018 Conference on Posters and Demos, SIGCOMM 2018. ACM, 2018, pp. 75–77.

- [8] Liu, H.; Lang, B.; Liu, M.; Yan, H. CNN and RNN based payload classification methods for attack detection. Knowledge-Based Systems 2019, 163, 332–341.

- [9] Gao, T.; Yao, X.; Chen, D. SimCSE: Simple Contrastive Learning of Sentence Embeddings. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, EMNLP 2021. Association for Computational Linguistics, 2021, pp. 6894–6910.

- [10] Reimers, N.; Gurevych, I. Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing, EMNLP-IJCNLP 2019. Association for Computational Linguistics, 2019, pp. 3980–3990.

- [11] Thakur, N.; Reimers, N.; Daxenberger, J.; Gurevych, I. Augmented SBERT: Data Augmentation Method for Improving Bi-Encoders for Pairwise Sentence Scoring Tasks. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, NAACL-HLT 2021. Association for Computational Linguistics, 2021, pp. 296–310.

- [12] Devlin, J.; Chang, M.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, NAACL-HLT 2019. Association for Computational Linguistics, 2019, pp. 4171–4186.

- [13] Mikolov, T.; Chen, K.; Corrado, G.; Dean, J. Efficient Estimation of Word Representations in Vector Space. In Proceedings of the 1st International Conference on Learning Representations, ICLR 2013, 2013.

- [14] Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention is All you Need. In Proceedings of the Advances in Neural Information Processing Systems 30: Annual Conference on Neural Information Processing Systems, NIPS 2017, 2017, pp. 5998–6008.

- [15] He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition, CVPR 2016, Las Vegas, NV, USA, June 27-30, 2016. IEEE Computer Society, 2016, pp. 770–778.

- [16] Ba, L.J.; Kiros, J.R.; Hinton, G.E. Layer Normalization. CoRR 2016, abs/1607.06450.

- [17] Wang, W.; Wei, F.; Dong, L.; Bao, H.; Yang, N.; Zhou, M. MiniLM: Deep Self-Attention Distillation for Task-Agnostic Compression of Pre-Trained Transformers. In Proceedings of the 34th International Conference on Neural Information Processing Systems, Red Hook, NY, USA, 2020; NIPS’20.

- [18] Wang, W.; Bao, H.; Huang, S.; Dong, L.; Wei, F. MiniLMv2: Multi-Head Self-Attention Relation Distillation for Compressing Pretrained Transformers. In Proceedings of the Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021, Online, 2021; pp. 2140–2151.

- [19] Schroff, F.; Kalenichenko, D.; Philbin, J. FaceNet: A unified embedding for face recognition and clustering. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, CVPR 2015. IEEE Computer Society, 2015, pp. 815–823.

- [20] Hugging Face. all-MiniLM-L12-v2, 2024. https://huggingface.co/sentence-transformers/all-MiniLM-L12-v2.

- [21] Wold, S.; Esbensen, K.; Geladi, P. Principal component analysis. Chemometrics and Intelligent Laboratory Systems 1987, 2, 37–52.

- [22] Johnson, J.M.; Khoshgoftaar, T.M. Survey on deep learning with class imbalance. Journal of Big Data 2019, 6, 1–54.

- [23] Reimers, N. Sentence Transformers, 2022. https://www.sbert.net/.

- [24] da Silva, A.S.; Wickboldt, J.A.; Granville, L.Z.; Filho, A.E.S. ATLANTIC: A framework for anomaly traffic detection, classification, and mitigation in SDN. In Proceedings of the 2016 IEEE/IFIP Network Operations and Management Symposium, NOMS 2016. IEEE, 2016, pp. 27–35.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).