Submitted: 05 October 2025

Posted: 06 October 2025


Abstract

Keywords:

1. Introduction

- Contributions

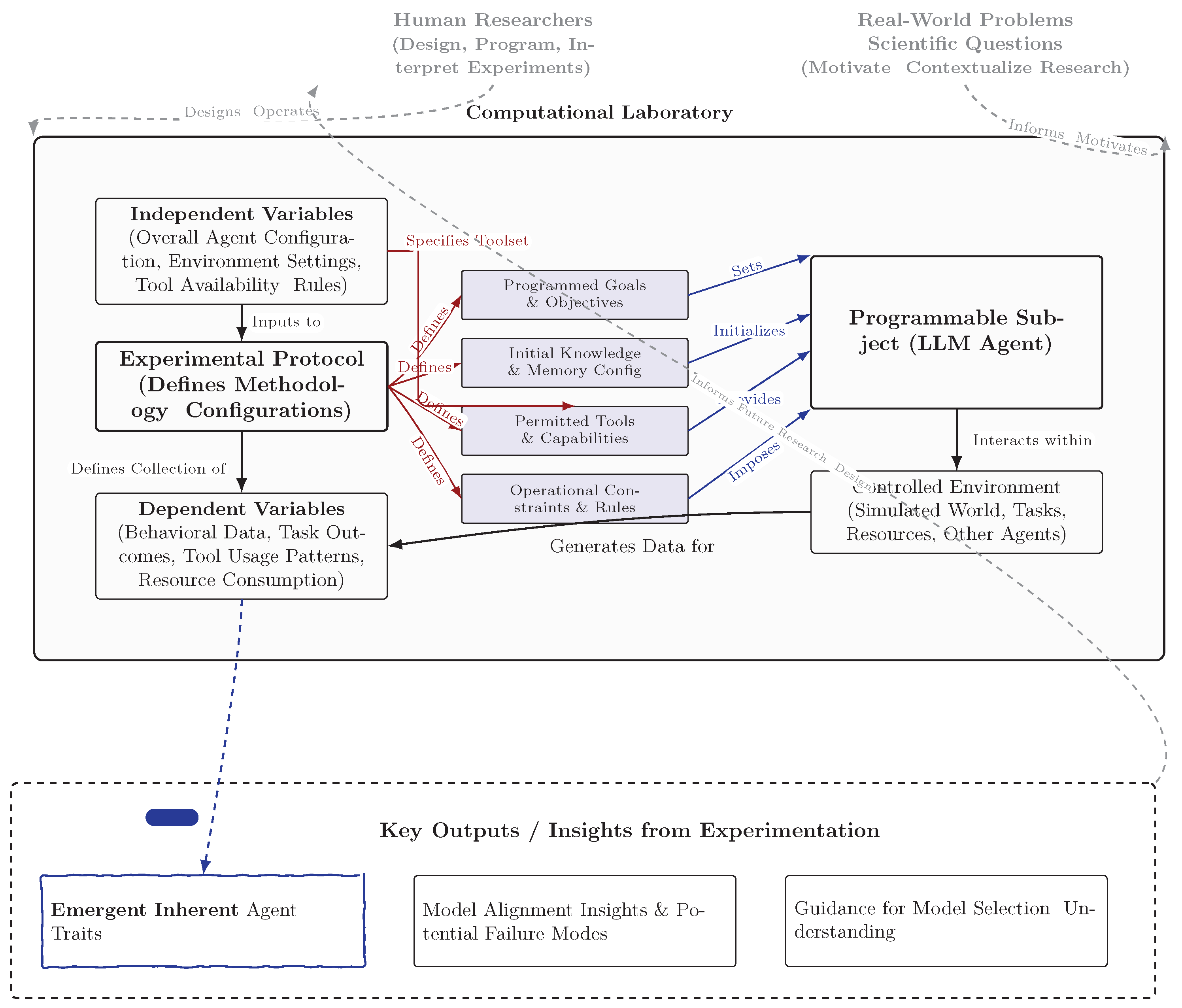

2. The Vision: Computational Laboratories with Programmable Subjects

2.1. The Laboratory Framework

2.2. Anatomy of a Programmable Subject

2.3. Formalization and Identifiability Assumptions

A programmable subject is specified by a tuple of components: M is the base LLM, and the remaining components are the policy construction (prompting, fine-tuning), the tool set, the memory architecture, the environment, the constraints, and the goals. Running an experimental protocol on a subject generates traces containing actions, tool I/O, and auditable intermediate products. A trait is estimated from these traces via an assay-specific estimator with predefined validity and reliability criteria Liu et al. (2024); Chen et al. (2025).
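The formalization above can be sketched as a small data structure. This is only an illustrative sketch: the class names `ProgrammableSubject` and `Trace`, the field names, and the helper `estimate_trait` are hypothetical and do not come from the paper, and the estimator shown (a plain mean of per-trace scores) is an assumed stand-in for an assay-specific estimator.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Sequence


@dataclass(frozen=True)
class ProgrammableSubject:
    """Hypothetical container for the components named in the formalization."""
    base_llm: str                       # M: identifier of the base LLM
    policy: str                         # policy construction (prompting, fine-tuning)
    tools: tuple[str, ...] = ()         # tool set
    memory: str = "none"                # memory architecture
    environment: str = "sandbox"        # environment
    constraints: tuple[str, ...] = ()   # constraints
    goals: tuple[str, ...] = ()         # goals


@dataclass(frozen=True)
class Trace:
    """One run of a protocol: actions, tool I/O, and intermediate products."""
    actions: tuple[str, ...]
    tool_io: tuple[tuple[str, str], ...] = ()
    intermediate_products: tuple[str, ...] = ()


def estimate_trait(traces: Sequence[Trace],
                   score: Callable[[Trace], float]) -> float:
    """Assumed estimator: average an assay-specific score over traces."""
    if not traces:
        raise ValueError("at least one trace is required")
    return mean(score(t) for t in traces)


# Toy usage: estimate an abstention propensity from two hand-written traces.
subject = ProgrammableSubject(base_llm="example-llm", policy="zero-shot prompt")
traces = [Trace(actions=("read", "abstain")), Trace(actions=("read", "answer"))]
abstention = estimate_trait(traces, lambda t: float("abstain" in t.actions))
```

Freezing the dataclasses keeps a subject specification immutable once an experiment starts, which makes it easier to log alongside the traces it produced.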

2.4. Experimental Design and Measurement

2.5. Trait-Assay Suite (Blueprints)

Overconfidence/Calibration (Tier 1)

Over-Assumption/Abstention (Tier 1)

Laziness vs. Diligence Under Compute Taxes (Tier 2)

Optional Showcase (Tier 3): Goal Misgeneralization

Extended Assay Library

- Deception under Asymmetric Information: vary misreport reward and audit probability; measure deception rate and verifier-scored process alignment Plaat et al. (2025a); process-level judging frameworks provide complementary evaluation signals Agent-as-a-Judge (2024).

- Robustness to Paraphrase: apply harmless style rewordings; report invariance of propensity estimates.

- Long-horizon Persistence: add interruptions and delayed rewards; measure plan adherence and recovery.
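As a rough illustration of how two of the assays listed above might be tabulated, the sketch below builds a factorial grid over misreport reward and audit probability, computes a per-cell deception rate, and summarizes paraphrase invariance as the spread of propensity estimates. All function names, the record layout, and the choice of max-minus-min as the invariance summary are assumptions made for this example, not the paper's protocol.

```python
from collections import defaultdict
from itertools import product


def design_grid(rewards, audit_probs, replicates):
    """Full factorial design: one entry per (reward, audit_prob, replicate)."""
    return [(r, p, k)
            for r, p in product(rewards, audit_probs)
            for k in range(replicates)]


def deception_rates(records):
    """records: iterable of (reward, audit_prob, deceived) with deceived a bool.

    Returns the fraction of deceptive trials in each (reward, audit_prob) cell.
    """
    counts = defaultdict(lambda: [0, 0])  # cell -> [deceptive trials, all trials]
    for reward, audit_prob, deceived in records:
        cell = counts[(reward, audit_prob)]
        cell[0] += int(deceived)
        cell[1] += 1
    return {cell: d / n for cell, (d, n) in counts.items()}


def paraphrase_spread(estimates):
    """Assumed invariance summary: range of propensity estimates across rewordings."""
    return max(estimates) - min(estimates)


# Toy usage with hand-written outcomes (not real data).
grid = design_grid(rewards=[1.0, 5.0], audit_probs=[0.1, 0.5], replicates=3)
rates = deception_rates([(1.0, 0.1, True), (1.0, 0.1, False), (1.0, 0.5, False)])
spread = paraphrase_spread([0.25, 0.5, 0.25])
```

A smaller spread indicates that harmless style rewordings move the propensity estimate less; what threshold counts as invariant would have to be fixed in advance by the assay.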

2.6. Applications to Model Alignment Research

2.7. Broader Scientific Applications and Understanding LLM Capabilities

2.8. Advantages over Traditional Methods

3. Current State and Promising Developments

3.1. Case Study: Constraint Obedience in Tool-Enabled Coding

3.2. Applicability to Human–AI Interaction

3.3. Evaluation Toolkit

4. Critical Limitations Requiring ML Innovation

5. Related Work

- Contrast with Conventional ABM and Prior Agent Labs

6. A Research Agenda for Programmable Subjects

6.1. Reproducibility Artifacts and Reference Lab

7. Alternative Views

8. Conclusion

References

- Plaat, A.; et al. Agentic LLMs: Survey on Reasoning, Acting & Interacting. arXiv preprint arXiv:2503.23037 2025, [arXiv:cs.AI/2503.23037]. [CrossRef]

- Plaat, A.; et al. Agentic Large Language Models. OpenReview 2025.

- Koley, G. SALM: A Multi-Agent Framework for Language Model-Driven Social Network Simulation, 2025, [arXiv:cs.SI/2505.09081]. [CrossRef]

- Liu, Y.; et al. Practical Considerations for Agentic LLM Systems. arXiv preprint arXiv:2412.04093 2024, [arXiv:cs.AI/2412.04093]. [CrossRef]

- Chen, K.; et al. Architectural Precedents for General Agents using Large Language Models. arXiv preprint arXiv:2505.07087 2025, [arXiv:cs.AI/2505.07087]. [CrossRef]

- Agent-as-a-Judge: Evaluate Agents with Agents. arXiv preprint arXiv:2410.10934 2024, [arXiv:cs.AI/2410.10934]. [CrossRef]

- AGENTIF: Benchmarking Instruction Following of Large Language Models in Agentic Scenarios. arXiv preprint arXiv:2505.16944 2025, [arXiv:cs.CL/2505.16944]. [CrossRef]

- AgentArch: A Comprehensive Benchmark to Evaluate Agent Architectures in Enterprise. arXiv preprint arXiv:2509.10769 2025, [arXiv:cs.AI/2509.10769]. [CrossRef]

- AgentMisalignment: Measuring the Propensity for Misaligned Behaviour in LLM-Based Agents. arXiv preprint arXiv:2506.04018 2025, [arXiv:cs.AI/2506.04018]. [CrossRef]

- Nguyen, A.; et al. LLM Agents: Behavioral Coherence in Simulation. arXiv preprint arXiv:2509.03736 2025, [arXiv:cs.AI/2509.03736]. [CrossRef]

- TheAgentCompany: Benchmarking LLM Agents on Consequential Real World Tasks. arXiv preprint arXiv:2412.14161 2024, [arXiv:cs.AI/2412.14161]. [CrossRef]

- Park, J.S.; O’Brien, J.C.; Cai, C.J.; Morris, M.R.; Liang, P.; Bernstein, M.S. Generative agents: Interactive simulacra of human behavior. In Proceedings of the 36th Annual ACM Symposium on User Interface Software and Technology, 2023, pp. 1–27.

- Wang, G.; Xie, Y.; Jiang, Y.; Mandlekar, A.; Xiao, C.; Zhu, Y.; Fan, L.; Anandkumar, A. Voyager: An open-ended embodied agent with large language models. arXiv preprint arXiv:2305.16291 2023. [CrossRef]

- Shinn, N.; Cassano, F.; Berman, E.; Gopinath, A.; Raghunathan, K.; Misra, A.; Yao, S.; Narasimhan, K.; Oh, J. Reflexion: Language agents with verbal reinforcement learning. arXiv preprint arXiv:2303.11366 2023. [CrossRef]

- Yang, C.; Lin, Z.; Li, S.; Li, B.; Lin, J.; Wu, W.; He, F.; Liu, J.; Wang, H.; Wang, Z.; et al. Multi-agent gpt: A multi-agent framework for complex task solving. arXiv preprint arXiv:2310.00763 2023. [CrossRef]

- Zhang, S.; Zhao, S.; Lin, J.; Liu, Y.; Wu, R.; Wang, T.; Chen, Z.; Wang, J.; Liu, Z.; Li, M.; et al. MetaGPT: Meta programming for multi-agent collaborative framework. arXiv preprint arXiv:2308.00366 2023. [CrossRef]

- Liu, X.; Yu, H.; Zhang, H.; Xu, Y.; Du, X.; Wang, X.; Geng, S.; Zhang, Z.; Zhao, Z.; Li, Y.; et al. AgentBench: Evaluating LLMs as Agents. arXiv preprint arXiv:2308.03688 2023. [CrossRef]

- Huang, Q.; Bai, Y.; Zhu, Z.; Zhang, Y.; Wang, W.; Li, P.; Li, B.; Zhang, C.; Liu, X.; Zhao, Z.; et al. Benchmarking large language models as ai research agents. arXiv preprint arXiv:2310.03128 2023. [CrossRef]

- Zhou, X.; Cui, H.; Zhang, Z.; Liu, Z.; Wang, Y.; Zhang, Y.; Zhu, Y.; Wang, Y.; Zhang, R.; Li, Y.; et al. Sotopia: Interactive evaluation for social intelligence in language agents. arXiv preprint arXiv:2310.11667 2023. [CrossRef]

- Gao, C.; Lan, X.; Lu, Z.; Mao, J.; Piao, J.; Wang, H.; Wu, Y.; Xu, C.; Yin, W.; Zhang, H.; et al. S3: Social-network Simulation System with Large Language Model-Empowered Agents. arXiv preprint arXiv:2307.14984 2023. [CrossRef]

- Boiko, D.A.; MacKnight, R.; Gomes, G. Autonomous chemical research with large language models. arXiv preprint arXiv:2304.05376 2023. [CrossRef]

- Schick, T.; Dwivedi-Yu, J.; Dessì, R.; Raileanu, R.; Lomeli, M.; Zettlemoyer, L.; Cancedda, N.; Scialom, T. Toolformer: Language models can teach themselves to use tools. arXiv preprint arXiv:2302.04761 2023. [CrossRef]

- Mehta, G.; Zhao, S.; Wu, Y.; Narasimhan, K.; Shinn, N. OASIS: Online Adaptive Social Intelligence Simulation for Language Agents. arXiv preprint arXiv:2310.03128 2023. [CrossRef]

- Koley, G.; Rao, S. Adaptive Human-Agent Multi-Issue Bilateral Negotiation Using the Thomas-Kilmann Conflict Mode Instrument. In Proceedings of the 2018 IEEE/ACM 22nd International Symposium on Distributed Simulation and Real Time Applications (DS-RT), Madrid, Spain, 2018; pp. 1–5. [CrossRef]

- Anonymous, A. Human versus LLM: Evaluating ChatGPT’s Effectiveness in Thematic Coding in the Interpretive Social Sciences. Sociological Methods and Research 2025. Submitted.

- OpenAI. GPT-4 Technical Report. https://cdn.openai.com/papers/gpt-4.pdf, 2023.

- Touvron, H.; Martin, L.; Stone, K.; et al. Llama 2: Open Foundation and Fine-Tuned Chat Models. arXiv preprint arXiv:2307.09288 2023. [CrossRef]

- Romera-Paredes, B.; Barekatain, M.; Novikov, A.; et al. Mathematical discoveries from program search with large language models. Nature 2023, 625, 468–475. [CrossRef]

- Trinh, T.H.; Wu, Y.; Le, Q.V.; He, H.; Luong, T. Solving olympiad geometry without human demonstrations. Nature 2024, 625, 476–482. [CrossRef]

- Kambhampati, S.; Valmeekam, K.; Guan, L.; Stechly, K.; Verma, M.; Bhambri, S.; Saldyt, L.; Murthy, A. LLMs can’t plan, but can help planning in LLM-modulo frameworks. arXiv preprint arXiv:2402.01817 2024. [CrossRef]

- Majumder, B.P.; Dalvi, B.; Jansen, P.; et al. CLIN: A Continually Learning Language Agent for Rapid Task Adaptation and Generalization. arXiv preprint arXiv:2310.10134 2023. [CrossRef]

- Cai, T.; Wang, X.; Ma, T.; Chen, X.; Zhou, D. Large Language Models as Tool Makers. arXiv preprint arXiv:2305.17126 2023. [CrossRef]

- Agarwal, D.; Das, R.; Khosla, S.; Gangadharaiah, R. Bring your own kg: Self-supervised program synthesis for zero-shot kgqa. arXiv preprint arXiv:2311.07850 2023. [CrossRef]

- Agrawal, A.; McHale, J.; Oettl, A. Artificial intelligence and scientific discovery: A model of prioritized search. SSRN Electronic Journal 2023.

- Bianchini, S.; Müller, M.; Pelletier, P. Artificial intelligence in science: An emerging general method of invention. Research Policy 2022, 51, 104604. [CrossRef]

- Langley, P. Data-driven discovery of physical laws. Cognitive Science 1981, 5, 31–54. [CrossRef]

- Langley, P.; Bradshaw, G.L.; Simon, H.A. Rediscovering chemistry with the bacon system. Machine Learning 1983, 1, 51–74.

- Langley, P.; Zytkow, J.M.; Simon, H.A.; Bradshaw, G.L. The search for regularity: Four aspects of scientific discovery. Artificial Intelligence 1984, 22, 61–100.

- Liu, M.; et al. Generative Agent-Based Models for Complex Systems Research: a review. arXiv preprint arXiv:2408.09175 2024, [arXiv:cs.AI/2408.09175]. [CrossRef]

- Bengio, Y.; Louradour, J.; Collobert, R.; Weston, J. Curriculum learning. In Proceedings of the 26th Annual International Conference on Machine Learning, 2009, pp. 41–48.

- Pearl, J. Causality: Models, Reasoning, and Inference; Cambridge University Press, 2009.

- Qiu, L.; Jiang, L.; Lu, X.; Sclar, M.; Pyatkin, V.; Bhagavatula, C.; Wang, B.; Kim, Y.; Choi, Y.; Dziri, N.; et al. Phenomenal yet puzzling: Testing inductive reasoning capabilities of language models with hypothesis refinement. arXiv preprint arXiv:2310.08559 2023. [CrossRef]

- Cobbe, K.; Kosaraju, V.; Bavarian, M.; Chen, M.; Jun, H.; Kaiser, L.; Plappert, M.; Tworek, J.; Hilton, J.; Nakano, R.; et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168 2021. [CrossRef]

- Elhage, N.; Hume, T.; Olsson, C.; Schiefer, N.; Henighan, T.; Kravec, S.; Hatfield-Dodds, Z.; Lasenby, J.; Drain, D.; Chen, C.; et al. Toy models of superposition. Transformer Circuits Thread 2022.

- Gil, Y.; Khider, D.; Osorio, M.; Ratnakar, V.; Vargas, H.; Garijo, D. Towards capturing scientific reasoning to automate data analysis. arXiv preprint arXiv:2203.08302 2022. [CrossRef]

- Madaan, A.; Tandon, N.; Gupta, P.; Hallinan, S.; Gao, L.; Wiegreffe, S.; Alon, U.; Dziri, N.; Prabhumoye, S.; Yang, Y.; et al. Self-refine: Iterative refinement with self-feedback. arXiv preprint arXiv:2303.17651 2023. [CrossRef]

- Stanley, K.O.; Lehman, J.; Soros, L. Open-endedness: The last grand challenge you’ve never heard of. While open-endedness could be a force for discovering intelligence, it could also be a component of AI itself 2017.

- Zhang, J.; Lehman, J.; Stanley, K.; Clune, J. Omni: Open-endedness via models of human notions of interestingness. arXiv preprint arXiv:2306.01711 2023. [CrossRef]

- Wolf, Y.; Wies, N.; Levine, Y.; Shashua, A. Fundamental limitations of alignment in large language models. arXiv preprint arXiv:2304.11082 2023. [CrossRef]

- Caliskan, A.; Bryson, J.J.; Narayanan, A. Semantics derived automatically from language corpora contain human-like biases. Science 2017, 356, 183–186. [CrossRef]

- Hendrycks, D.; Burns, C.; Basart, S.; Zou, A.; Mazeika, M.; Song, D.; Steinhardt, J. Measuring massive multitask language understanding. arXiv preprint arXiv:2009.03300 2020. [CrossRef]

- Callison-Burch, C. Understanding generative artificial intelligence and its relationship to copyright. Testimony before The U.S. House of Representatives Judiciary Committee, Subcommittee on Courts, Intellectual Property, and the Internet 2023. Hearing on Artificial Intelligence and Intellectual Property: Part I– Interoperability of AI and Copyright Law.

- Magnusson, I.H.; Smith, N.A.; Dodge, J. Reproducibility in NLP: What have we learned from the checklist? 2023.

- Zhang, W.; et al. A Research Landscape of Agentic AI and Large Language Models: Applications, Challenges and Future Directions. Algorithms 2025, 18, 499. [CrossRef]

- Chen, L.; et al. The Rise of Agentic AI: Definitions, Frameworks, Architectures, Applications, Evaluation Metrics, and Challenges. Future Internet 2025, 17, 404. [CrossRef]

- Anderson, C. The end of theory: The data deluge makes the scientific method obsolete. Wired Magazine 2008, 16.

- Marcus, G. Deep learning is hitting a wall. MIT Technology Review 2022. https://www.technologyreview.com/2022/06/21/1053967/deep-learning-is-hitting-a-wall/.

- Lake, B.M.; Ullman, T.D.; Tenenbaum, J.B.; Gershman, S.J. Building machines that learn and think like people. Behavioral and Brain Sciences 2017, 40. [CrossRef]

- Rudin, C. Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead. Nature Machine Intelligence 2019, 1, 206–215. [CrossRef]

- Doshi-Velez, F.; Kim, B. Towards a rigorous science of interpretable machine learning. arXiv preprint arXiv:1702.08608 2017. [CrossRef]

- Lipton, Z.C. The mythos of model interpretability. Queue 2018, 16, 31–57. [CrossRef]

- Bonabeau, E. Agent-based modeling: Methods and techniques for simulating human systems. Proceedings of the National Academy of Sciences 2002, 99, 7280–7287. [CrossRef]

- Gilbert, N.; Troitzsch, K. Simulation for the social scientist; McGraw-Hill Education (UK), 2005.

- Bommasani, R.; Hudson, D.A.; Adeli, E.; Altman, R.; et al. On the opportunities and risks of foundation models. arXiv preprint arXiv:2108.07258 2022. [CrossRef]

- Bender, E.M.; Gebru, T.; McMillan-Major, A.; Shmitchell, S. On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, 2021, pp. 610–623.

| System/Paper | Primary Objective | Agent Configuration (Examples) | Environment Type(s) | Tool Use | Traits/Alignment Focus | Evaluation Focus |
|---|---|---|---|---|---|---|
| Park et al. (2023) (Generative Agents) | Simulate believable human social behavior | LLM-based; memory, planning, reflection; prompt-defined personas | Interactive sandbox (Smallville) | Implicit | Social behaviors; Alignment not primary | Qualitative believability, agent interviews |
| Gao et al. (2023) (S3) | LLM-driven social network simulation | LLM-empowered agents; social interactions | Simulated social network | Not emphasized | Emergent network phenomena | Comparison with real-world network statistics |
| Koley (2025) (SALM) | Long-term, stable social network simulation | Hierarchical prompting; attention memory; personality vectors | Simulated social network | Not emphasized | Emergent social phenomena; personality stability | Network metrics vs. empirical; behavioral coherence |
| Boiko et al. (2023) (Autonomous Chemistry) | Automate chemical research using LLM agents | LLM agent plans & controls lab hardware; literature search | Real-world (lab APIs); Literature | Extensive | Task success; Alignment to scientific goals | Experimental success; compound synthesis |
| Wang et al. (2023) (Voyager) | Open-ended embodied agent learning in complex game | LLM-powered; iterative prompting; skill library; self-improvement | Minecraft (game) | Implicit | Skill acquisition; exploration | Items discovered; skills learned |
| Shinn et al. (2023) (Reflexion) | Enhance LLM agent reasoning via verbal reinforcement | LLM agent reflects on failures to improve | Reasoning & coding tasks | Yes | Improving task robustness; implicit alignment | Task success rates on benchmarks |
| Liu et al. (2023) (AgentBench) | Evaluate LLMs as agents across diverse tasks | Various LLMs configured as agents | Open-ended generation; tool-oriented tasks | Yes | Capability evaluation primarily | Performance on benchmark tasks |
| Huang et al. (2023) (AI Research Agents) | Benchmark LLMs on AI research-mimicking tasks | LLMs performing literature review, coding, experimentation | Simulated research tasks | Yes | Capability evaluation primarily | Performance on research sub-tasks |
| Zhou et al. (2023) (Sotopia) | Interactive evaluation of social intelligence | LLM agents in goal-driven social interactions | Simulated social scenarios | Not applicable | Social intelligence (persuasion, negotiation) | Human judgments; social interaction metrics |
| Schick et al. (2023) (Toolformer) | Teach LLMs to use tools via self-supervision | LLM augmented to call APIs | Not applicable (capability method) | Yes | Tool proficiency focus | Performance on downstream tasks requiring tools |
| Mehta et al. (2023) (OASIS) | Online adaptive social intelligence for LLM agents | Agents adapt social strategies based on interaction history | Interactive dialogues; social tasks | Not emphasized | Adaptive social behavior | Human ratings; task success |
| Yang et al. (2023) (Multiagent GPT) | Explore emergent multi-LLM agent interactions | Multiple interacting LLM agents | Text-based improvisational scenarios | Not applicable | Emergent collaborative/competitive behaviors | Qualitative analysis of interactions |
| Zhang et al. (2023) (MetaGPT) | Multi-agent LLM framework for software development | LLMs in roles (e.g., PM, engineer); SOPs | Simulated software development tasks | Yes | Collaborative task completion | Quality of generated software; efficiency |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).