Submitted: 02 October 2025

Posted: 04 October 2025

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Datasets for Network Intrusion Detection

2.2. Selecting Training Examples Efficiently

3. Methods and Materials

3.1. Problem Statement

- $|S| \ll |D|$, i.e., the size of the selected subset $S$ is significantly smaller than that of the full training set $D$;

- The performance of a model $f$ trained on $S$, denoted $f_S$, satisfies $P(f_S) \approx P(f_D)$, where $P$ is a task-specific performance metric (e.g., accuracy, F1-score);

- The total computational cost remains within acceptable bounds, to ensure that the method does not significantly increase training time.

3.2. Proposal: Add Only Errors Selection Strategy

- The classifier predicts labels for the current batch of samples.

- Misclassified instances are identified by comparing the predicted labels with the ground truth labels.

- Only the misclassified samples are added to the current training set.

- The classifier is then retrained on the updated dataset.

Algorithm 1 AOE: Selection strategy based on adding only errors

Require: Training data, labels, batch size, number of epochs, classifier clf

Ensure: Updated training data x, y, and trained classifier clf
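The four steps listed above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the training set is assumed to be seeded with the first batch (the text does not say how it is initialized), the function name `aoe_select` is hypothetical, and `OneNN` is a toy 1-nearest-neighbour stand-in for any classifier exposing `fit` and `predict`.

```python
class OneNN:
    """Toy 1-nearest-neighbour classifier; any object with fit/predict would do."""

    def fit(self, X, y):
        self.X, self.y = list(X), list(y)
        return self

    def predict(self, X):
        def nearest(q):
            dists = [sum((a - b) ** 2 for a, b in zip(q, p)) for p in self.X]
            return self.y[dists.index(min(dists))]
        return [nearest(q) for q in X]


def aoe_select(X, y, batch_size, n_epochs, clf):
    """Grow the training set by adding only the samples the classifier gets wrong."""
    # Assumption: the first batch seeds the training set.
    x_sel, y_sel = list(X[:batch_size]), list(y[:batch_size])
    clf.fit(x_sel, y_sel)
    for epoch in range(n_epochs):
        start = batch_size if epoch == 0 else 0  # seed batch is already included
        for i in range(start, len(X), batch_size):
            xb, yb = X[i:i + batch_size], y[i:i + batch_size]
            pred = clf.predict(xb)                      # step 1: predict the batch
            wrong = [k for k, (p, t) in enumerate(zip(pred, yb)) if p != t]  # step 2
            if not wrong:
                continue                                # no errors, nothing to add
            x_sel.extend(xb[k] for k in wrong)          # step 3: add only the errors
            y_sel.extend(yb[k] for k in wrong)
            clf.fit(x_sel, y_sel)                       # step 4: retrain
    return x_sel, y_sel, clf


# Made-up toy data: 20 points on a line, class 0 below 10 and class 1 from 10 on.
X = [(float(i),) for i in range(20)]
y = [0 if i < 10 else 1 for i in range(20)]

x1, y1, clf1 = aoe_select(X, y, batch_size=4, n_epochs=1, clf=OneNN())
x2, y2, clf2 = aoe_select(X, y, batch_size=4, n_epochs=2, clf=OneNN())
print(len(x1), len(x2))
```

On this toy data a second epoch adds the two boundary points that the first pass missed, after which the classifier labels all 20 points correctly while training on fewer than half of them.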

RSS – Random Selection Strategy

Algorithm 2 RSS: Selection based on random selection strategy

Require: Training data, labels, selection size, classifier clf

Ensure: Selected training data x, y, and trained classifier clf
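A random-selection baseline with the same inputs and outputs could look as follows. The sketch assumes uniform sampling without replacement, which the surviving text does not specify; the `seed` parameter, the function name `rss_select`, and the `MajorityClass` stand-in classifier are hypothetical and serve only to keep the example self-contained.

```python
import random
from collections import Counter


class MajorityClass:
    """Trivial stand-in classifier; any object with fit/predict would do."""

    def fit(self, X, y):
        self.label = Counter(y).most_common(1)[0][0]
        return self

    def predict(self, X):
        return [self.label for _ in X]


def rss_select(X, y, selection_size, clf, seed=0):
    """Train on a randomly drawn subset of a fixed size."""
    rng = random.Random(seed)  # hypothetical seed parameter, for reproducibility
    # Assumption: indices are drawn uniformly and without replacement.
    idx = rng.sample(range(len(X)), selection_size)
    x_sel = [X[i] for i in idx]
    y_sel = [y[i] for i in idx]
    clf.fit(x_sel, y_sel)
    return x_sel, y_sel, clf


# Made-up toy data, as an illustration only.
X = [(float(i),) for i in range(20)]
y = [0 if i < 10 else 1 for i in range(20)]
x_sel, y_sel, clf = rss_select(X, y, selection_size=6, clf=MajorityClass())
```

Setting the selection size to the number of samples that AOE retains would give a like-for-like comparison of the two strategies at equal training-set size.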

3.3. Datasets

3.3.1. KDDCUP99 Dataset

3.3.2. NSL-KDD Dataset

- No redundant records in the training subset.

- No redundant records in the validation subset.

- Selection of representative records for each class to improve evaluation accuracy.

- A reasonable dataset size that enables full-set experimentation without random sampling.

- Basic features: Attributes extracted directly from the TCP/IP connection.

- Traffic features: Attributes extracted over a time window and divided into:

  - Same host: Connections in the last 2 seconds to the same host, capturing protocol behavior, service use, and connection frequency.

  - Same service: Connections in the last 2 seconds with the same service as the current one.

- Content features: Attributes within the payload, such as failed login attempts or command execution attempts, which are indicators of potential account or system compromise.

- Labels: grouped into five general categories (see Table 1):

  - Normal: Benign network activity.

  - Denial of Service (DoS): Attacks that flood network services with requests, making them unavailable.

  - Probe: Attacks that scan for information about the network (e.g., port scans or vulnerability probes).

  - Remote to Local (R2L): Attacks where an external entity attempts to gain local access on a networked machine.

  - User to Root (U2R): Attacks that escalate privileges from a local user account to administrative (root) access.
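The grouping of raw labels into these five categories amounts to a lookup table. The following sketch encodes the assignment given in Table 1; it assumes the underscored, lower-case label spellings used in the public NSL-KDD files (e.g., `guess_passwd`), and the names `CATEGORY_TO_LABELS` and `to_category` are hypothetical helpers.

```python
# Category assignment as listed in Table 1 (label spellings are an assumption).
CATEGORY_TO_LABELS = {
    "Normal": ["normal"],
    "DoS": ["neptune", "back", "land", "pod", "smurf", "teardrop",
            "mailbomb", "apache2", "processtable", "udpstorm", "worm"],
    "Probe": ["ipsweep", "nmap", "portsweep", "satan", "mscan", "saint"],
    "R2L": ["ftp_write", "guess_passwd", "imap", "multihop", "phf", "spy",
            "warezclient", "warezmaster", "sendmail", "named",
            "snmpgetattack", "snmpguess", "xlock", "xsnoop", "httptunnel"],
    "U2R": ["buffer_overflow", "loadmodule", "perl", "rootkit", "ps",
            "sqlattack", "xterm"],
}

# Invert the table so that each raw label points to its category.
LABEL_TO_CATEGORY = {
    label: category
    for category, labels in CATEGORY_TO_LABELS.items()
    for label in labels
}


def to_category(label: str) -> str:
    """Map a raw label to one of the five categories (KeyError if unknown)."""
    return LABEL_TO_CATEGORY[label.strip().lower()]


print(to_category("smurf"), to_category("guess_passwd"))
```

Raising an error on unknown labels, rather than defaulting to a category, makes spelling mismatches between dataset versions visible early.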

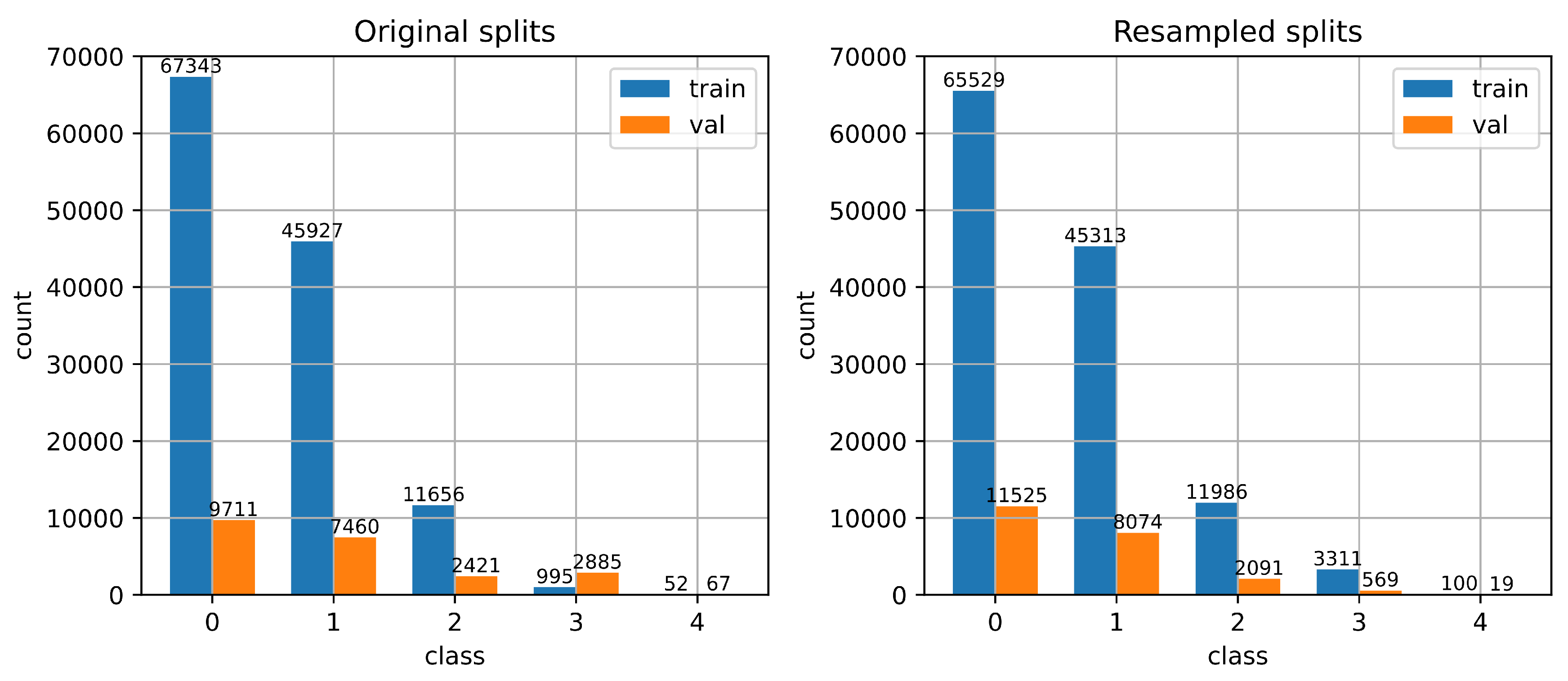

Class Imbalance.

Feature Representation.

3.3.3. Dataset Preprocessing

- One-hot encoding: Categorical features such as protocol_type, service, and flag are transformed into binary vectors. For example, protocol_type with values tcp, udp, and icmp becomes [1,0,0], [0,1,0], and [0,0,1], respectively.

- Normalization: Numerical features are normalized using Min-Max scaling to ensure all values fall within the same range, avoiding dominance of features with larger scales during model training.
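Both preprocessing steps are simple enough to write out by hand. The sketch below is illustrative only: it assumes Min-Max scaling to the range [0, 1], the function names are hypothetical, and the numeric values are made up. In practice the minimum and maximum would normally be computed on the training split and reused for validation data.

```python
def one_hot(values, categories):
    """Encode each categorical value as a binary indicator vector."""
    index = {c: i for i, c in enumerate(categories)}
    return [[1 if index[v] == j else 0 for j in range(len(categories))]
            for v in values]


def min_max_scale(column):
    """Rescale a numeric column linearly to the range [0, 1]."""
    lo, hi = min(column), max(column)
    if hi == lo:
        return [0.0 for _ in column]  # constant column: avoid division by zero
    return [(v - lo) / (hi - lo) for v in column]


# protocol_type example from the text: tcp, udp, icmp.
encoded = one_hot(["tcp", "udp", "icmp"], categories=["tcp", "udp", "icmp"])

# Made-up numeric feature values.
scaled = min_max_scale([0, 5, 10])

print(encoded, scaled)
```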

4. Experimental Results and Discussion

4.1. Dataset Splits

4.2. Experiment 1: Only One Epoch

4.2.1. Experimental Setup

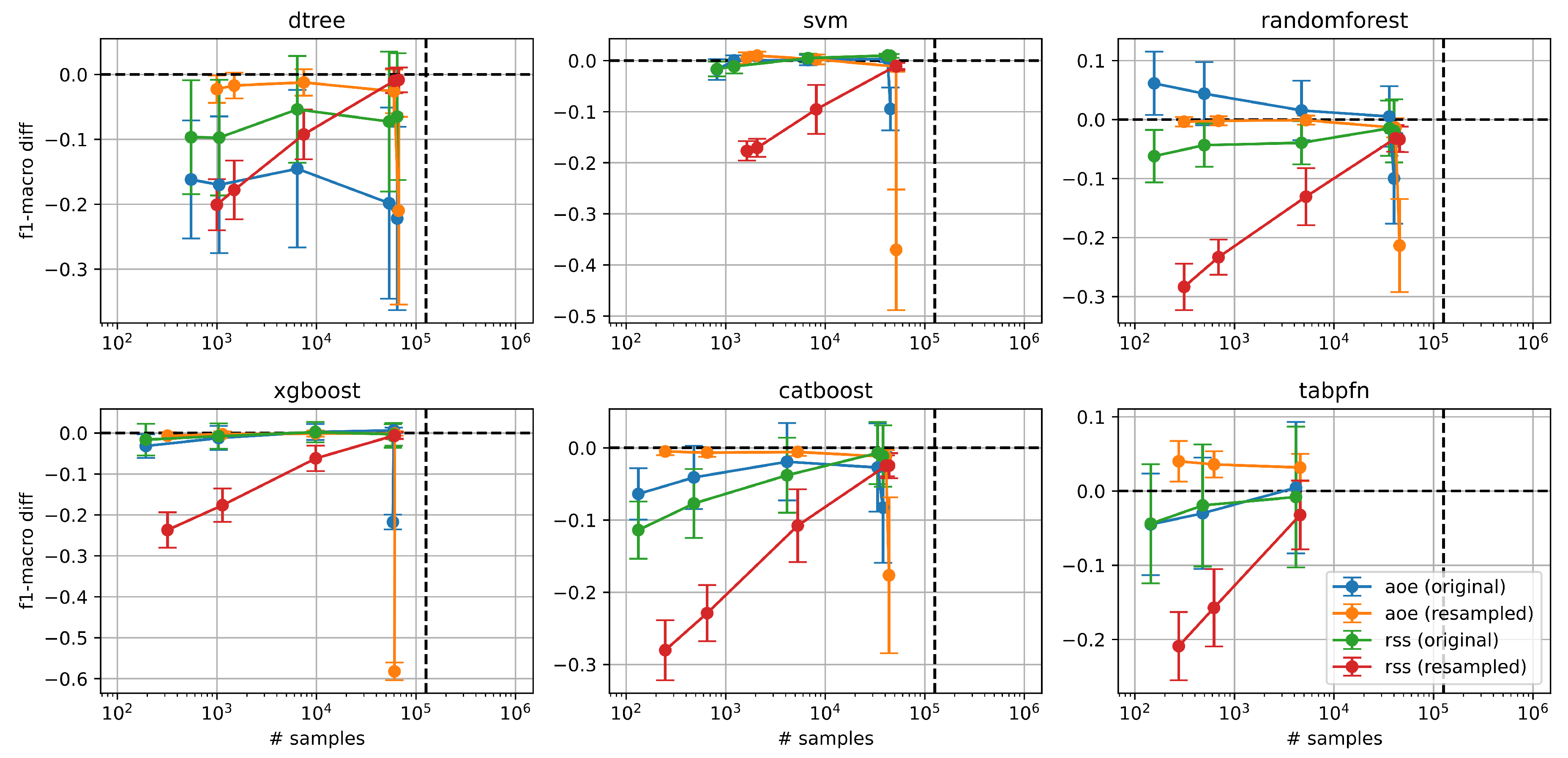

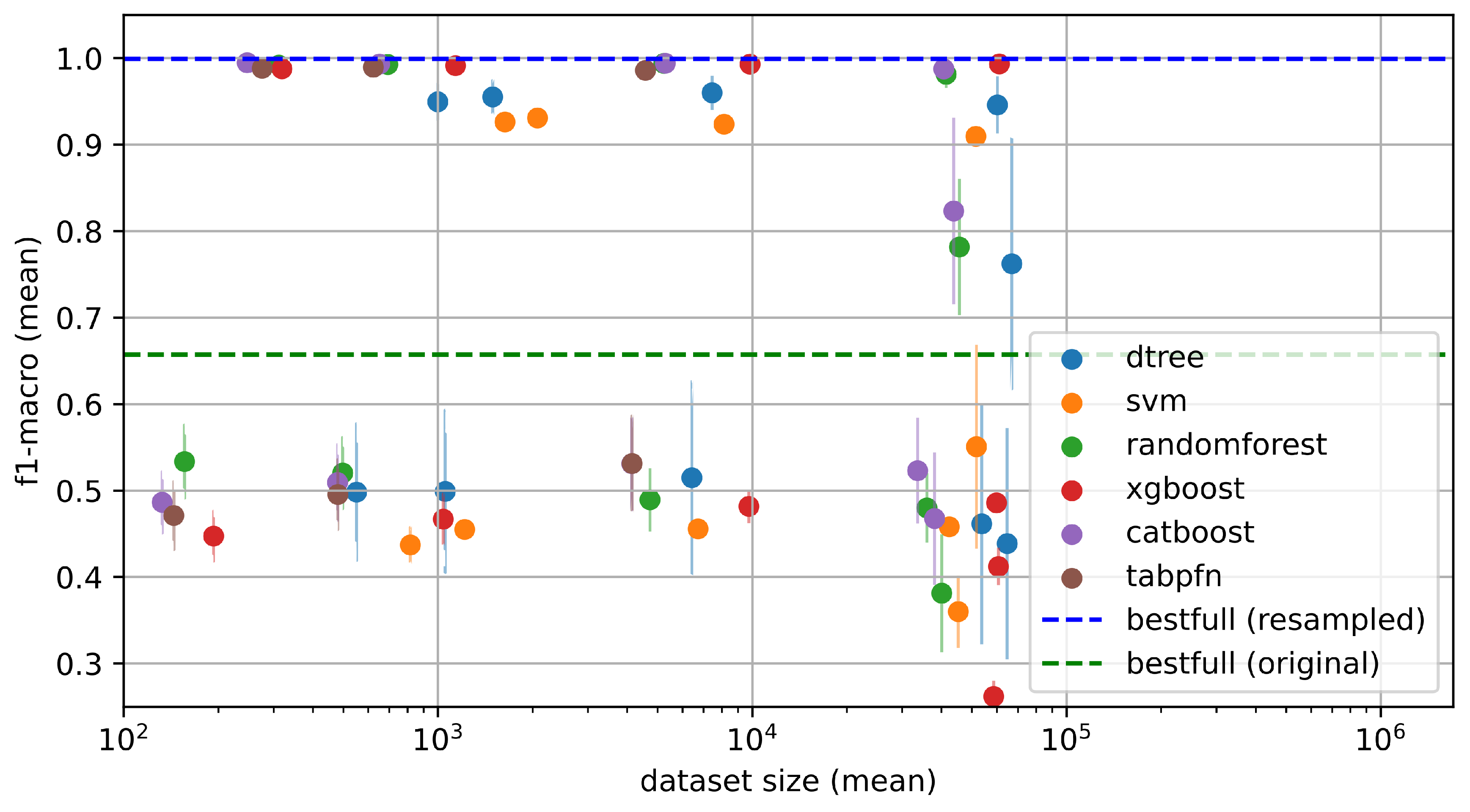

4.2.2. Performance Comparison Across Selection Strategies and Models

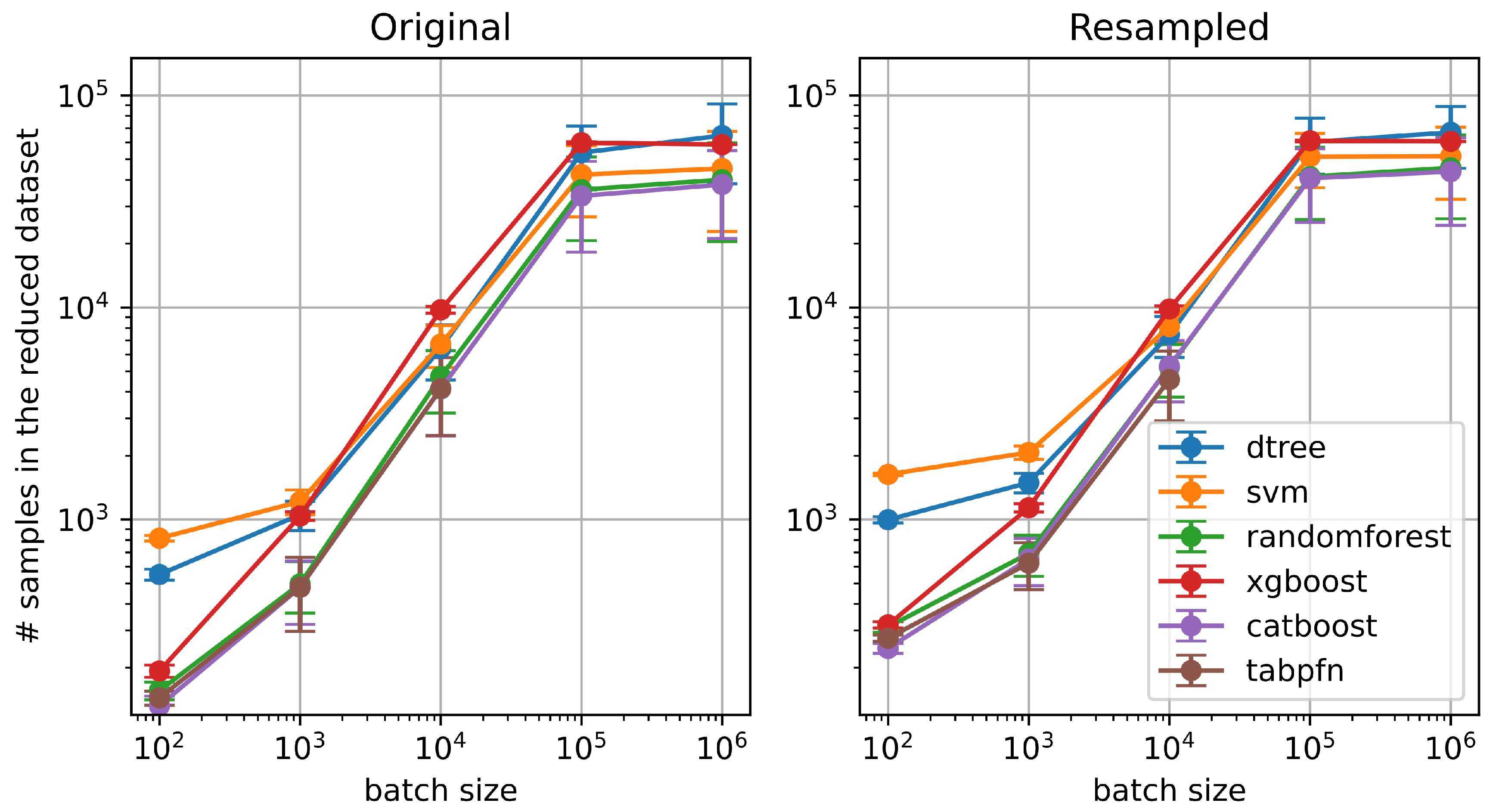

4.2.3. Impact of Batch Size on the Number of Selected Examples

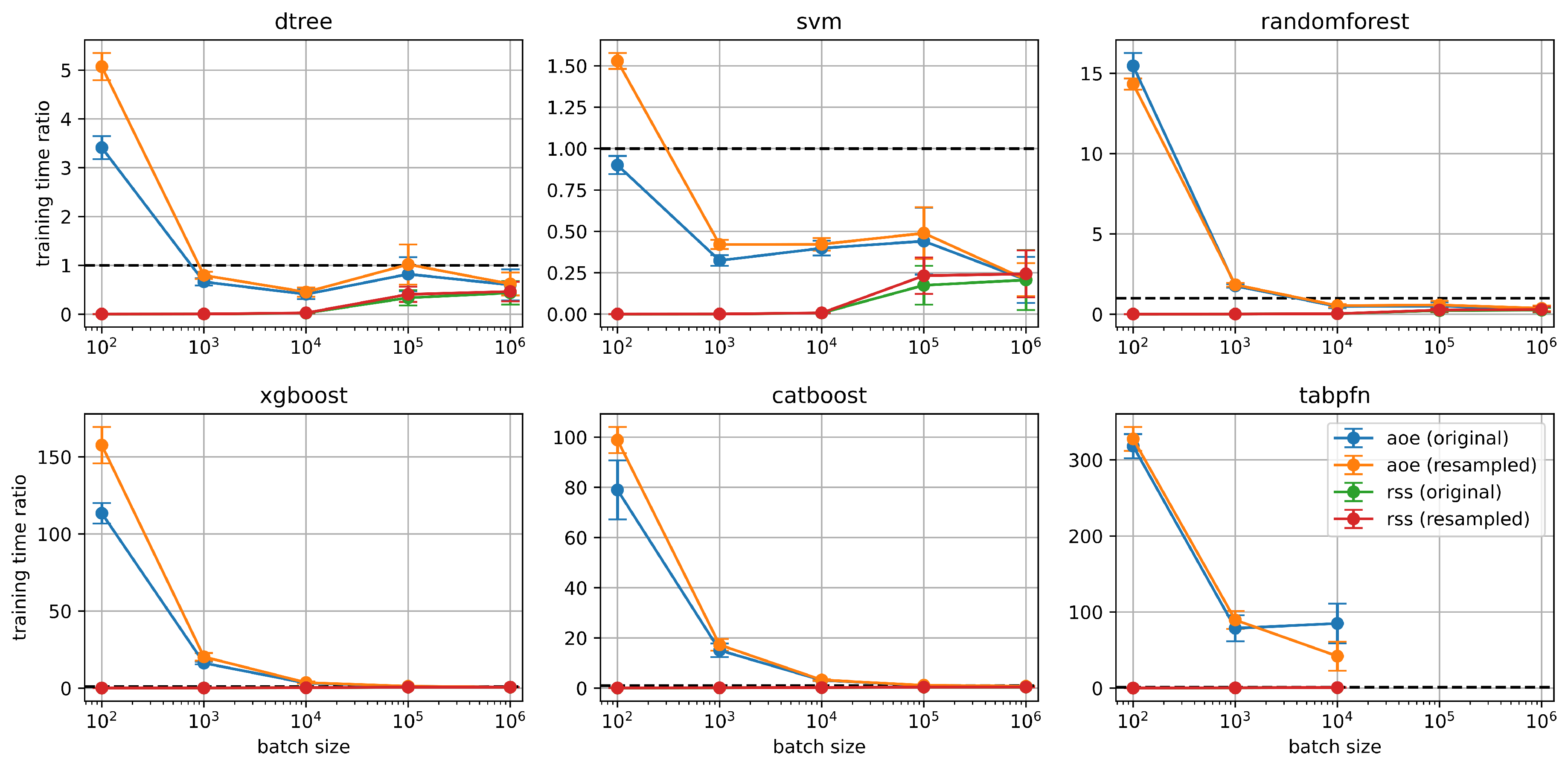

4.2.4. Training Time Comparison Between AOE and RSS

4.2.5. Absolute Performance of AOE Across Splits and Classifiers

4.3. Experiment 2: More Epochs

4.3.1. Experimental Setup

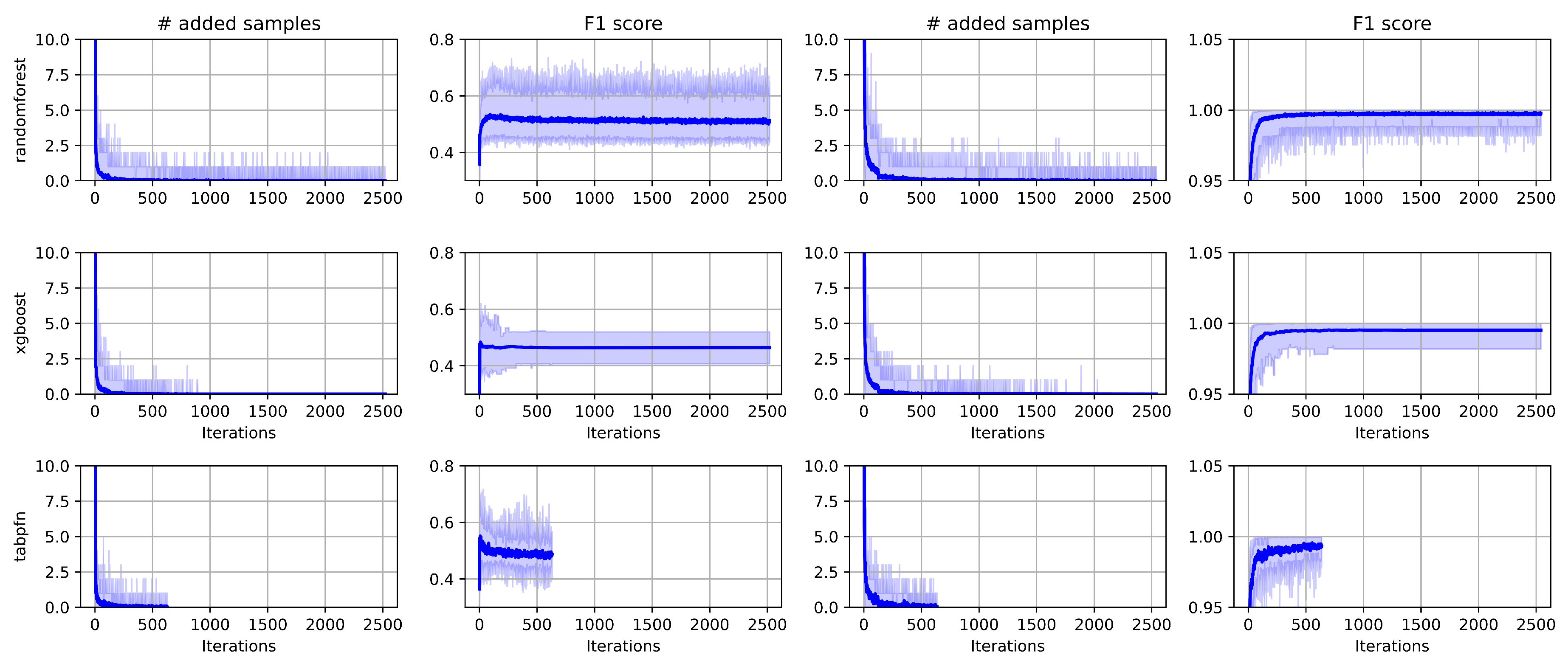

4.3.2. Iteration Dynamics of Added Samples

4.3.3. Duplicate Samples in AOE-Generated Training Sets

5. Conclusions

- One epoch may be sufficient for simpler tasks, but more epochs might be required for more complex problems.

- A batch size of 1000 often reduces the dataset by several orders of magnitude without significantly impacting performance or increasing computational cost.

- For quick training with AOE, use a Random Forest classifier, which provides good performance with minimal tuning.

- If computational cost and time are not constraints, we recommend using a smaller batch size (e.g., 100), up to five epochs, and a high-performance model such as CatBoost or TabPFN.

Author Contributions

Funding

Institutional Review Board Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| IDS | Intrusion Detection System |
| AOE | Adding Only Errors |
| RSS | Random Subset Selection |
| NSL-KDD | NSL-Knowledge Discovery and Data Mining Cup Dataset |
| KDD | Knowledge Discovery and Data Mining Cup Dataset |
| NIDS | Network Intrusion Detection System |
| DoS | Denial of Service |
| U2R | User to Root attack |
| R2L | Remote to Local attack |
| Probe | Probing attack |
| TabPFN | Tabular Prior-data Fitted Networks |

Table 1. Labels grouped into five general categories.

| Class | Labels |
|---|---|
| Normal | Normal or No Attack |
| DoS | neptune; back; land; pod; smurf; teardrop; mailbomb; apache2; processtable; udpstorm and worm |
| Probe | ipsweep; nmap; portsweep; satan; mscan and saint |
| R2L | ftp_write; guess_passwd; imap; multihop; phf; spy; warezclient; warezmaster; sendmail; named; snmpgetattack; snmpguess; xlock; xsnoop and httptunnel |
| U2R | buffer_overflow; loadmodule; perl; rootkit; ps; sqlattack and xterm |

| Dataset | Normal | DoS | Probe | R2L | U2R | Total |
|---|---|---|---|---|---|---|
| Training | 67,343 | 45,927 | 11,656 | 995 | 52 | 125,973 |
| Validation | 9,711 | 7,460 | 2,421 | 2,885 | 67 | 22,544 |

| Stats | Original splits | Resampled splits |
|---|---|---|
| Nval/Ntrain, class 0 | 0.1442 | 0.1759 |
| Nval/Ntrain, class 1 | 0.1624 | 0.1782 |
| Nval/Ntrain, class 2 | 0.2077 | 0.1745 |
| Nval/Ntrain, class 3 | 2.8995 | 0.1719 |
| Nval/Ntrain, class 4 | 1.2885 | 0.1900 |
| Xtrain (mean ± std) | 0.0660 ± 0.2401 | 0.0662 ± 0.2404 |
| Xval (mean ± std) | 0.0674 ± 0.2425 | 0.0663 ± 0.2406 |
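The ratios in the "Original splits" column follow directly from the per-class counts in the training/validation table. The short check below recomputes them; it also suggests that classes 0 to 4 correspond to Normal, DoS, Probe, R2L, and U2R in that order, which is an inference from the arithmetic rather than something stated in the table.

```python
# Per-class counts copied from the training/validation count table.
train = {"Normal": 67343, "DoS": 45927, "Probe": 11656, "R2L": 995, "U2R": 52}
val = {"Normal": 9711, "DoS": 7460, "Probe": 2421, "R2L": 2885, "U2R": 67}

# Validation-to-training ratio per class, rounded to four decimals.
ratios = {name: round(val[name] / train[name], 4) for name in train}

for name, ratio in ratios.items():
    print(f"{name}: {ratio}")
```

In the original splits, R2L and U2R have more validation than training examples (ratios above 1), whereas the resampled splits bring every class to a ratio between 0.17 and 0.19.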

| Model | Average share of duplicates (#duplicates / dataset size × 100) |
|---|---|
| Random Forest | 0% |
| XGBoost | 0.005% |
| TabPFN | 0% |
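A percentage of this kind can be computed as sketched below. The definition of a duplicate is an assumption here: every repeated occurrence of an identical row beyond its first copy counts as one duplicate. The function name `duplicate_rate` and the example rows are hypothetical.

```python
from collections import Counter


def duplicate_rate(rows):
    """Return the share of duplicated rows, in percent of the dataset size."""
    counts = Counter(tuple(r) for r in rows)
    # Assumption: each extra copy of a row beyond the first is one duplicate.
    n_duplicates = sum(c - 1 for c in counts.values())
    return 100.0 * n_duplicates / len(rows)


# Made-up example: four rows, one of which repeats an earlier row.
rate = duplicate_rate([(1, 2), (1, 2), (3, 4), (5, 6)])
print(rate)
```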

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).