Submitted: 01 October 2025

Posted: 01 October 2025

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Graph Neural Network

2.2. Graph Convolutional Network (GCN)

2.3. Long- and Short-Term Preference Modeling (LSTPM)

2.4. Graph Long-Term and Short-Term Preference (GLSP)

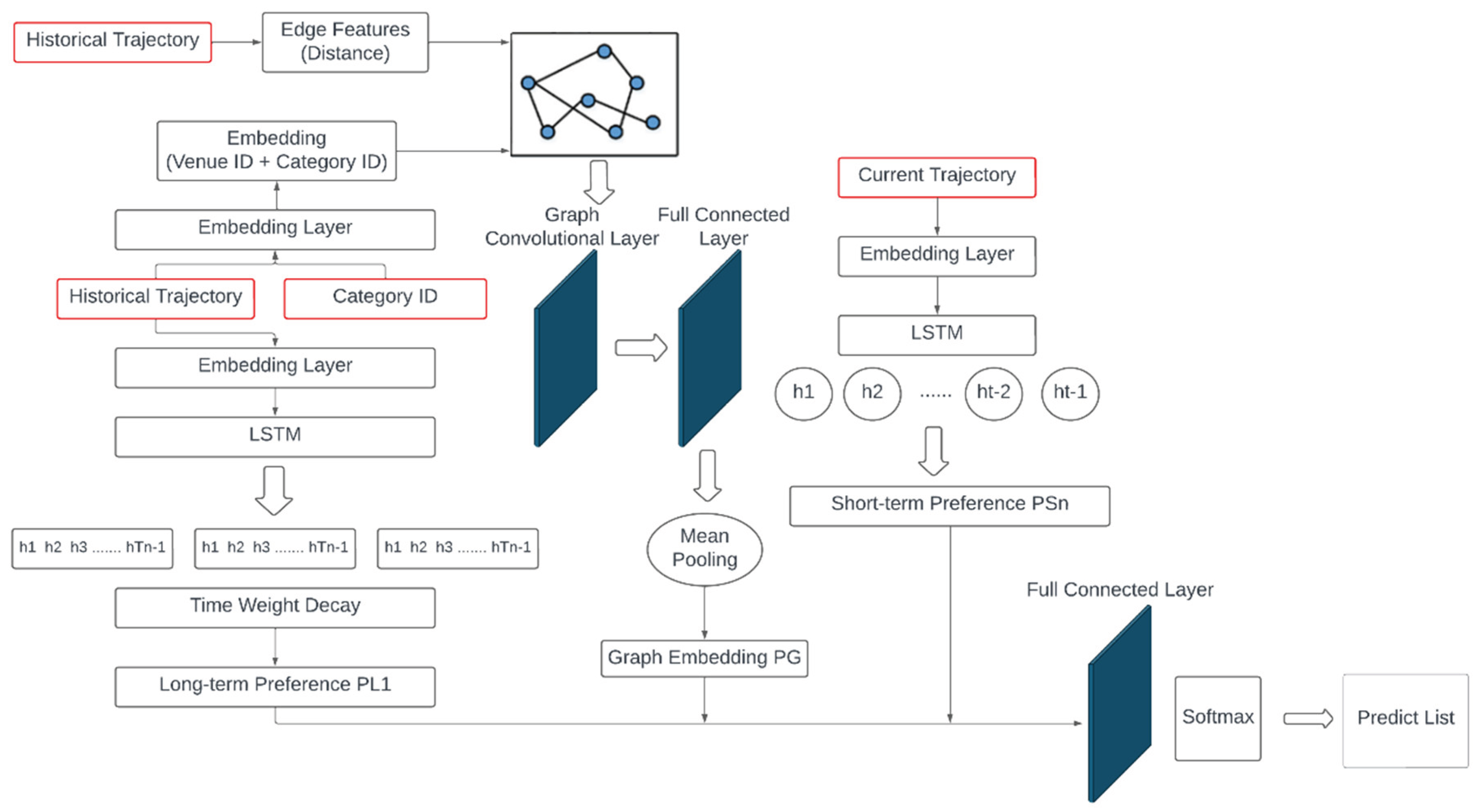

3. Our Approach

3.1. Geographic Data Processing

3.2. User Graph Embedding Vector Generation

3.3. User Preference Generation

3.4. STLGNet Model Prediction

4. Experiments

4.1. Dataset Descriptions

- Removal of infrequent locations: Locations visited by fewer than three users were excluded to eliminate sparse and less informative data.

- Daily trajectory segmentation: All check-ins made by a single user within one day were aggregated and regarded as a complete trajectory.

- Elimination of short trajectories: Trajectories consisting of fewer than two check-ins were discarded to avoid the adverse impact of excessively short sequences on model learning.

- Filtering of inactive users: Users with fewer than three distinct check-in locations in total were removed, thereby focusing on users exhibiting relatively stable behavioral patterns.
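The four filtering steps above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function name `preprocess`, the `(user_id, location_id, timestamp)` tuple layout, and the choice to apply the steps once in the listed order (counting a user's distinct locations only over trajectories that survive the earlier steps) are all assumptions made for the example.

```python
from collections import defaultdict
from datetime import datetime


def preprocess(checkins, min_users_per_loc=3, min_traj_len=2, min_locs_per_user=3):
    """Apply the four filtering steps to (user_id, location_id, datetime) tuples.

    Returns {user_id: [trajectory, ...]}, where each trajectory is the
    time-ordered list of locations visited by that user on one day.
    Hypothetical helper; thresholds follow the values stated in the text.
    """
    checkins = list(checkins)

    # Step 1: drop locations visited by fewer than `min_users_per_loc` users.
    users_per_loc = defaultdict(set)
    for user, loc, _ in checkins:
        users_per_loc[loc].add(user)
    checkins = [c for c in checkins if len(users_per_loc[c[1]]) >= min_users_per_loc]

    # Step 2: group each user's check-ins by calendar day, in time order.
    daily = defaultdict(list)
    for user, loc, ts in sorted(checkins, key=lambda c: (c[0], c[2])):
        daily[(user, ts.date())].append(loc)

    # Step 3: discard trajectories with fewer than `min_traj_len` check-ins.
    trajectories = defaultdict(list)
    for (user, _day), locs in daily.items():
        if len(locs) >= min_traj_len:
            trajectories[user].append(locs)

    # Step 4: keep users with at least `min_locs_per_user` distinct locations
    # (assumption: counted over the trajectories that survived step 3).
    return {
        user: trajs
        for user, trajs in trajectories.items()
        if len({loc for traj in trajs for loc in traj}) >= min_locs_per_user
    }
```

Because each step can invalidate the condition checked by an earlier one (for example, removing users may push a location below three visitors), a stricter pipeline might repeat the steps until nothing changes; the text does not say whether that was done.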

4.2. Evaluation Metrics

4.3. Experimental Results

5. Conclusions

References

- A. Sherstinsky, “Fundamentals of Recurrent Neural Network (RNN) and Long Short-Term Memory (LSTM) network,” Physica D: Nonlinear Phenomena, vol. 404, p. 132306, 2020.

- M. Vakalopoulou, S. Christodoulidis, N. Burgos, O. Colliot, and V. Lepetit, “Deep learning: basics and convolutional neural networks (CNN),” 2023.

- F. Scarselli, M. Gori, A. C. Tsoi, M. Hagenbuchner, and G. Monfardini, “The Graph Neural Network Model,” IEEE Transactions on Neural Networks, vol. 20, no. 1, pp. 61-80, 2009.

- J. Weston, A. Bordes, O. Yakhnenko, and N. Usunier, “Connecting Language and Knowledge Bases with Embedding Models for Relation Extraction,” Proceedings of the 2013 Conference on Empirical Methods in Natural Language Processing (EMNLP), 2013.

- A. Sassi, M. Brahimi, W. Bechkit, and A. Bachir, “Location Embedding and Deep Convolutional Neural Networks for Next Location Prediction,” IEEE 44th LCN Symposium on Emerging Topics in Networking (LCN Symposium), pp. 149-157, 2019.

- C. Zhang, X. Liu, and D. Bis, “An Analysis on the Learning Rules of the Skip-Gram Model,” 2019 International Joint Conference on Neural Networks (IJCNN), pp. 1-8, 2019.

- B. Jiang, Z. Zhang, D. Lin, J. Tang, and B. Luo, “Semi-supervised learning with graph learning-convolutional networks,” in Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pp. 11313-11320, 2019.

- Y. Yin, Y. Zhang, Z. Liu, S. Wang, R. R. Shah, and R. Zimmermann, “GPS2Vec: Pre-Trained Semantic Embeddings for Worldwide GPS Coordinates,” IEEE Transactions on Multimedia, vol. 24, pp. 890-903, 2022.

- K. Sun, T. Qian, T. Chen, Y. Liang, N. Hung, and H. Yin, “Where to Go Next: Modeling Long- and Short-Term User Preferences for Point-of-Interest Recommendation,” Proceedings of the AAAI Conference on Artificial Intelligence, vol. 34, pp. 214-221, 2020.

- J. Liu, Y. Chen, X. Huang, J. Li, and G. Min, “GNN-based long and short term preference modeling for next-location prediction,” Information Sciences, vol. 629, 2023.

- D. Yao, C. Zhang, J. Huang, and J. Bi, “SERM: A Recurrent Model for Next Location Prediction in Semantic Trajectories,” Proceedings of the 2017 ACM on Conference on Information and Knowledge Management, 2017.

- R. Ying, R. He, K. Chen, P. Eksombatchai, W. L. Hamilton, and J. Leskovec, “Graph Convolutional Neural Networks for Web-Scale Recommender Systems,” 2018.

- S. Yan, Y. Xiong, and D. Lin, “Spatial temporal graph convolutional networks for skeleton-based action recognition,” in Proceedings of the AAAI conference on artificial intelligence, vol. 32, no. 1, 2018.

- Y. Huang, Y. Weng, S. Yu, and X. Chen, “Diffusion Convolutional Recurrent Neural Network with Rank Influence Learning for Traffic Forecasting,” pp. 678-685, 2019.

- D. Yang, D. Zhang, V. Zheng, and Z. Yu, “Modeling User Activity Preference by Leveraging User Spatial Temporal Characteristics in LBSNs,” IEEE Transactions on Systems, Man, and Cybernetics: Systems, vol. 45, pp. 129-142, 2015.

| Node | | Adjacency list | Distance | |
|---|---|---|---|---|
| 0 | -1.1, 0.3, -0.1 | [0, 1], [0, 2] | 3, 2 | -0.439, 0.119, -0.040 |
| 1 | 0.6, 0.4, 0.4 | [1, 2], [1, 3] | 4, 1 | 0.34, 0.225, 0.225 |
| 2 | 0.5, 0.3, 0.5 | [0, 2], [1, 2] | 2, 1 | 0.34, 0.205, 0.34 |
| 3 | -1.0, 0.2, -0.1 | [1, 3], [3, 4], [3, 5] | 1, 4, 2 | -0.536, 0.103, -0.053 |
| 4 | -1.1, 0.3, -0.3 | [3, 4] | 4 | -0.26, 0.07, -0.07 |
| 5 | 0.5, 0.3, 0.3 | [3, 5] | 2 | 0.23, 0.14, 0.14 |

| Sample | Label | Prediction | Loss |
|---|---|---|---|
| 1 | [1,0,0] | [0.8,0.1,0.1] | |
| 2 | [0,1,0] | [0.2,0.7,0.1] | |
| 3 | [0,0,1] | [0.1,0.3,0.6] | |
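The Loss column of the table above is empty in the source. As an illustration only, the sketch below shows how per-sample losses could be computed from the Label and Prediction columns if the loss is the standard categorical cross-entropy with the natural logarithm; that choice of loss, and the names `cross_entropy` and `samples`, are assumptions and are not confirmed by the text.

```python
import math


def cross_entropy(label, prediction, eps=1e-12):
    """Categorical cross-entropy between a one-hot label and a probability vector.

    Assumption: natural logarithm; `eps` guards against log(0).
    """
    return -sum(y * math.log(max(p, eps)) for y, p in zip(label, prediction))


# Label and prediction pairs copied from the table.
samples = [
    ([1, 0, 0], [0.8, 0.1, 0.1]),
    ([0, 1, 0], [0.2, 0.7, 0.1]),
    ([0, 0, 1], [0.1, 0.3, 0.6]),
]

losses = [cross_entropy(label, pred) for label, pred in samples]
mean_loss = sum(losses) / len(losses)
```

Under this assumption the three losses are approximately 0.223, 0.357, and 0.511, so the loss grows as the probability assigned to the correct class falls from 0.8 to 0.6.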

| Dataset | # of users | # of locations | # of categories |
|---|---|---|---|
| FourSquare NYC | 1083 | 8015 | 221 |
| FourSquare TKY | 2293 | 14508 | 203 |

| Method | Evaluation Metric | K=1 | K=5 | K=10 |
|---|---|---|---|---|
| LSTPM | Recall | 0.0920 | 0.2920 | 0.3787 |
| | NDCG | 0.0920 | 0.2227 | 0.2340 |
| | MAP | 0.0920 | 0.1999 | 0.2134 |
| GLSP | Recall | 0.1737 | 0.3105 | 0.3882 |
| | NDCG | 0.1737 | 0.2470 | 0.2720 |
| | MAP | 0.1737 | 0.2258 | 0.2360 |
| STLGNet | Recall | 0.3049 | 0.5372 | 0.5852 |
| | NDCG | 0.3049 | 0.5073 | 0.5225 |
| | MAP | 0.3049 | 0.4974 | 0.5035 |

| Method | Evaluation Metric | K=1 | K=5 | K=10 |
|---|---|---|---|---|
| LSTPM | Recall | 0.0915 | 0.2691 | 0.3327 |
| | NDCG | 0.0915 | 0.1920 | 0.2162 |
| | MAP | 0.0915 | 0.1669 | 0.1815 |
| GLSP | Recall | 0.1153 | 0.2782 | 0.3514 |
| | NDCG | 0.1153 | 0.1993 | 0.2230 |
| | MAP | 0.1153 | 0.1734 | 0.1831 |
| STLGNet | Recall | 0.2302 | 0.3149 | 0.4868 |
| | NDCG | 0.2302 | 0.2751 | 0.4615 |
| | MAP | 0.2302 | 0.2619 | 0.4234 |
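The result tables report Recall, NDCG, and MAP at K = 1, 5, and 10. The sketch below shows one common way to compute these metrics for next-location prediction, assuming each test case has exactly one ground-truth location; the function names `metrics_at_k` and `evaluate` are hypothetical, and the exact definitions used in the paper may differ. Under this assumption the three metrics coincide at K = 1 and satisfy Recall ≥ NDCG ≥ MAP for larger K, which is consistent with the reported values.

```python
import math


def metrics_at_k(ranked, target, k):
    """Return (Recall@K, NDCG@K, MAP@K) for one test case.

    `ranked` is the model's list of candidate locations, best first;
    `target` is the single true next location (assumption: one per case).
    """
    top_k = ranked[:k]
    if target not in top_k:
        return 0.0, 0.0, 0.0
    rank = top_k.index(target) + 1          # 1-based position of the hit
    recall = 1.0                            # the only relevant item was found
    ndcg = 1.0 / math.log2(rank + 1)        # ideal DCG is 1 for a single target
    ap = 1.0 / rank                         # average precision with one target
    return recall, ndcg, ap


def evaluate(rankings, targets, ks=(1, 5, 10)):
    """Average the three metrics over all test cases for each K."""
    n = len(targets)
    results = {}
    for k in ks:
        totals = [0.0, 0.0, 0.0]
        for ranked, target in zip(rankings, targets):
            for i, value in enumerate(metrics_at_k(ranked, target, k)):
                totals[i] += value
        results[k] = tuple(total / n for total in totals)
    return results
```

For example, `evaluate([["a", "b", "c"], ["b", "a", "c"]], ["a", "a"])` gives a Recall, NDCG, and MAP of 0.5 each at K = 1, since only the first ranking places the target first.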

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).