Submitted: 29 September 2025

Posted: 30 September 2025

Abstract

Keywords:

1. Introduction

2. Materials and Methods

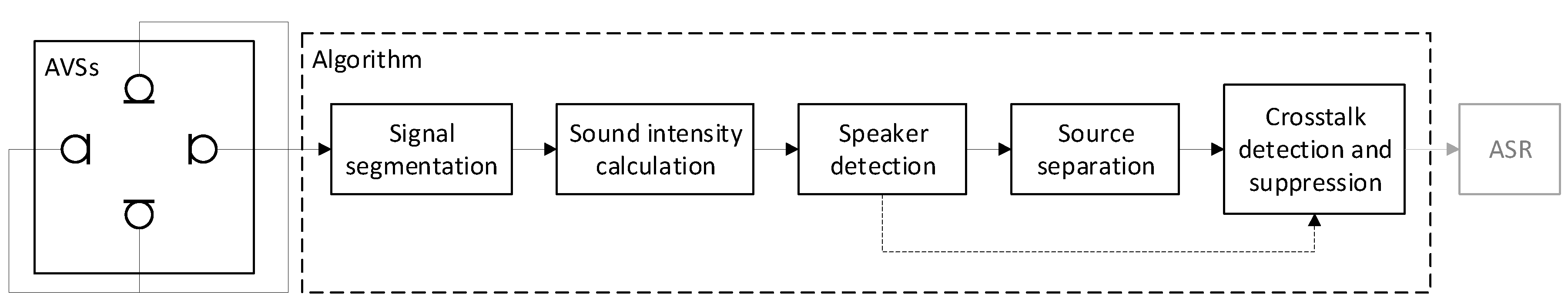

2.1. An Overview of the Crosstalk Suppression Algorithm

2.2. Acoustic Vector Sensor

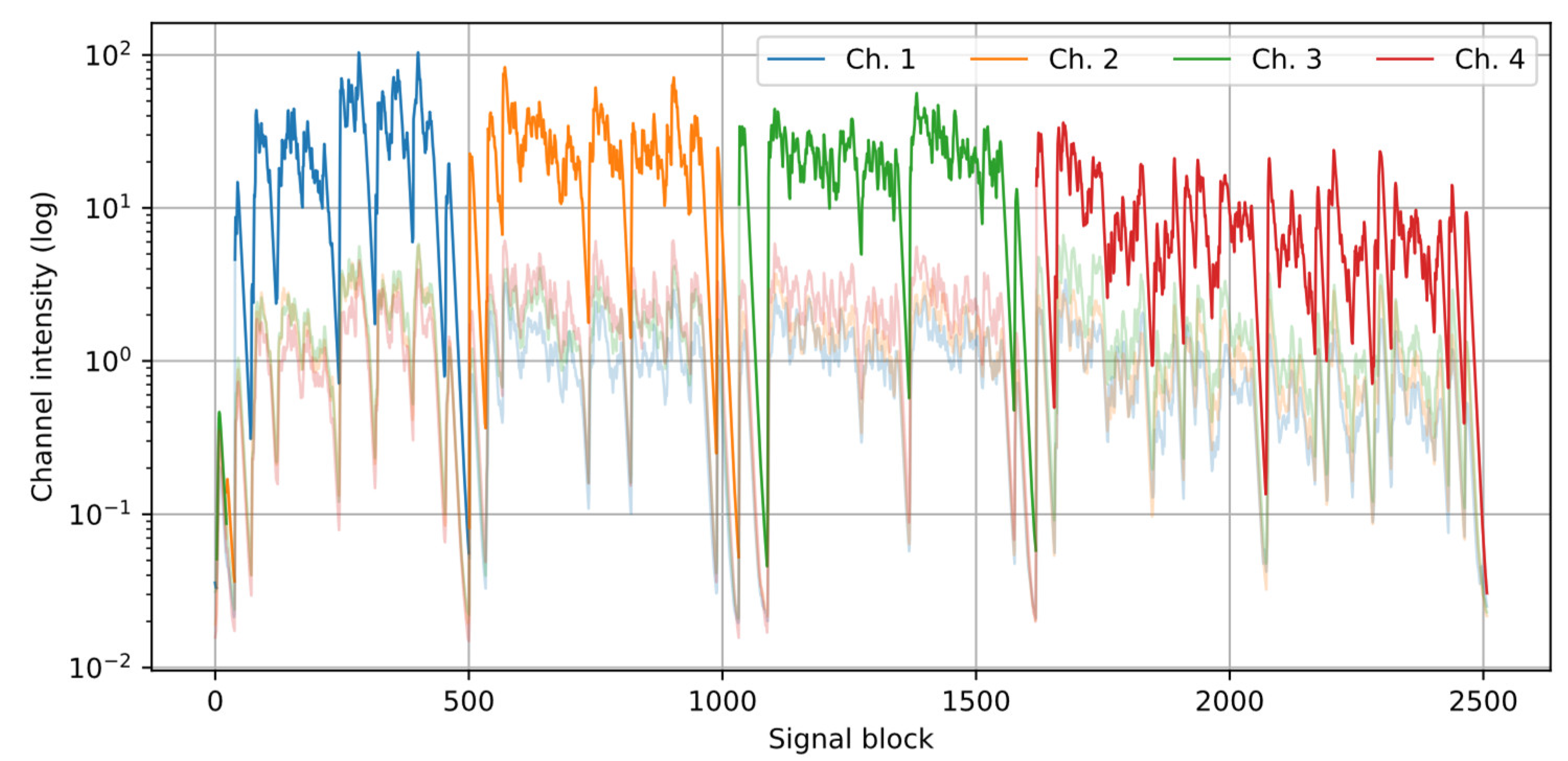

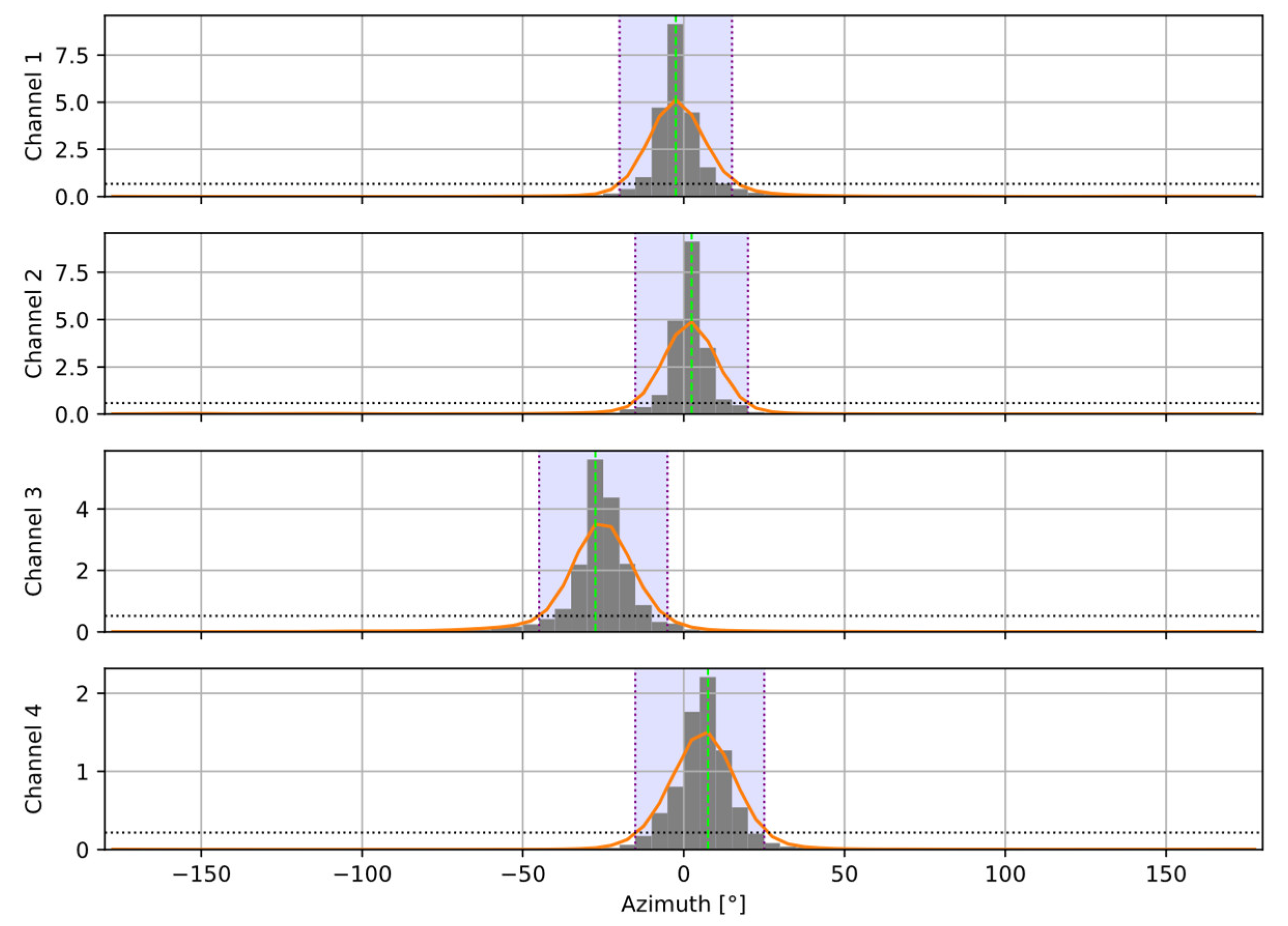

2.3. Speaker Detection

2.4. Source Separation

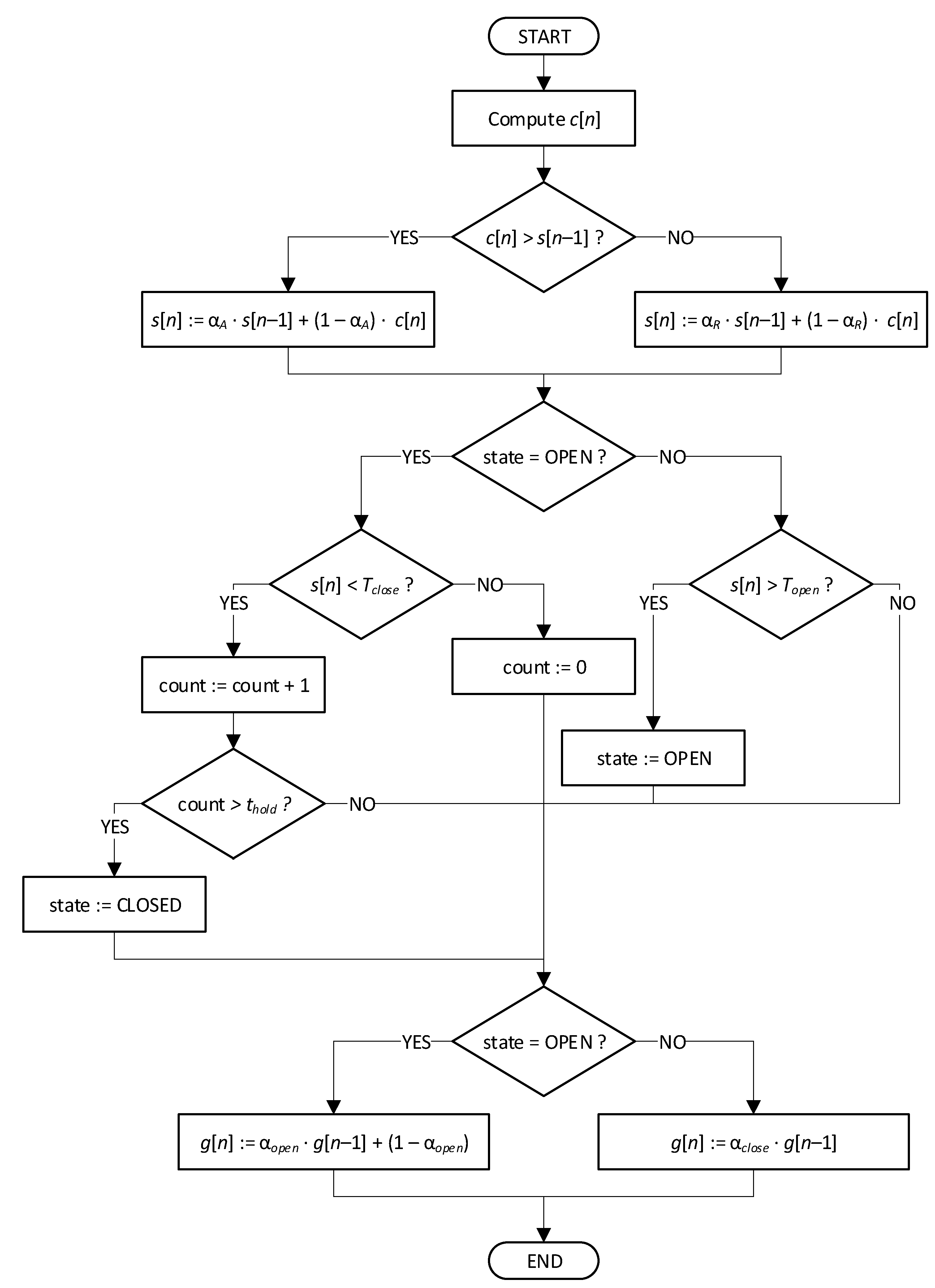

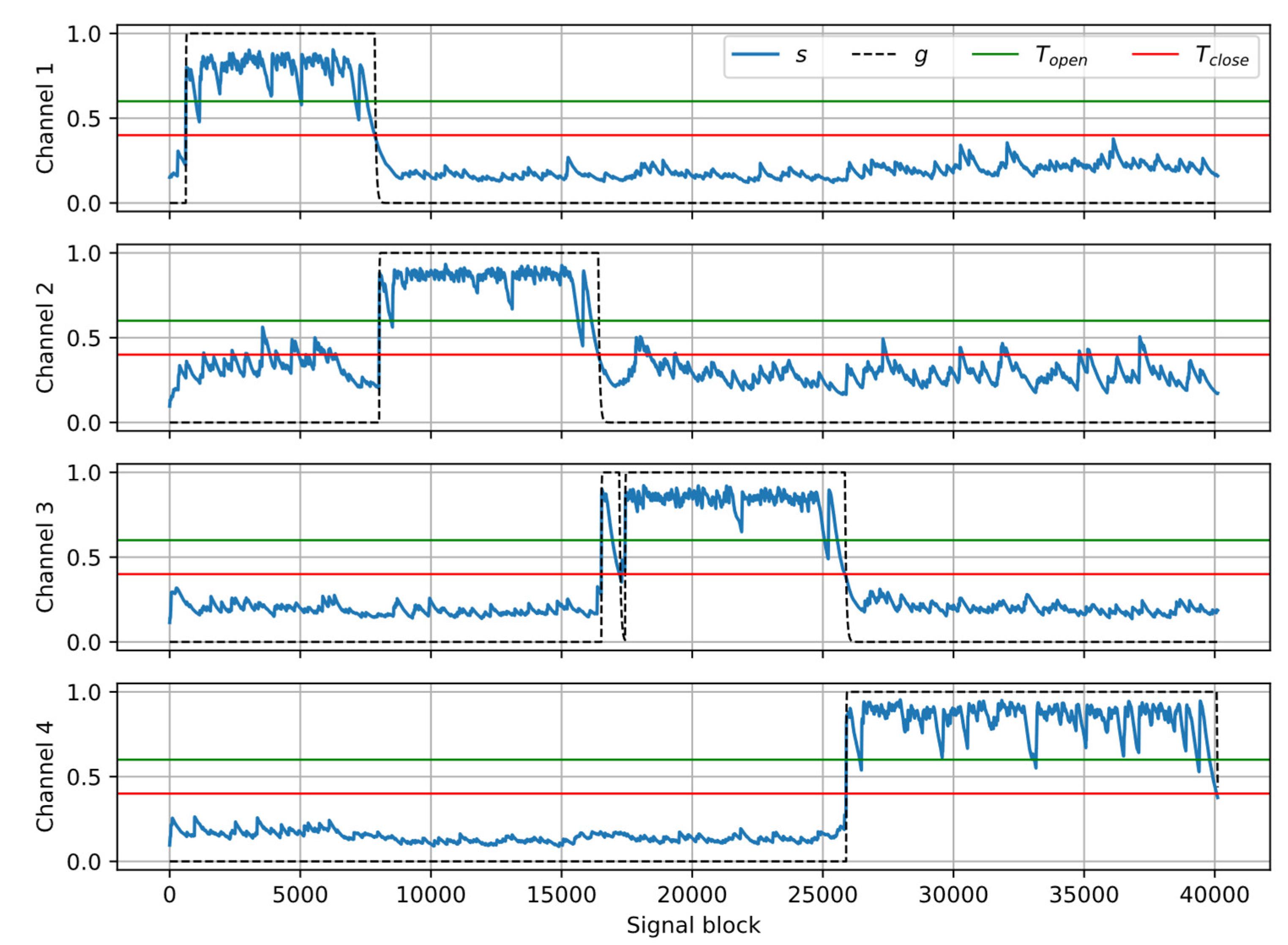

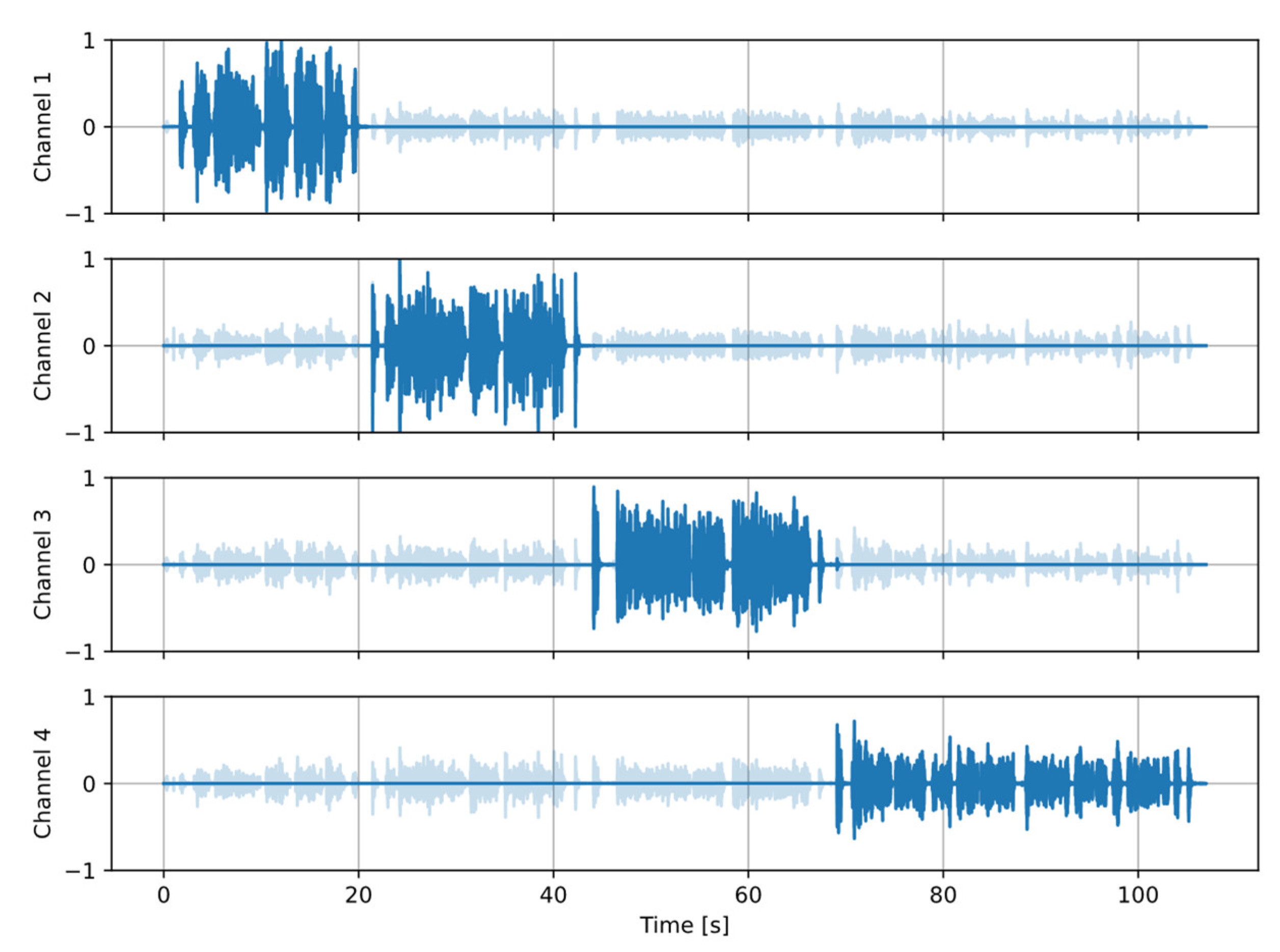

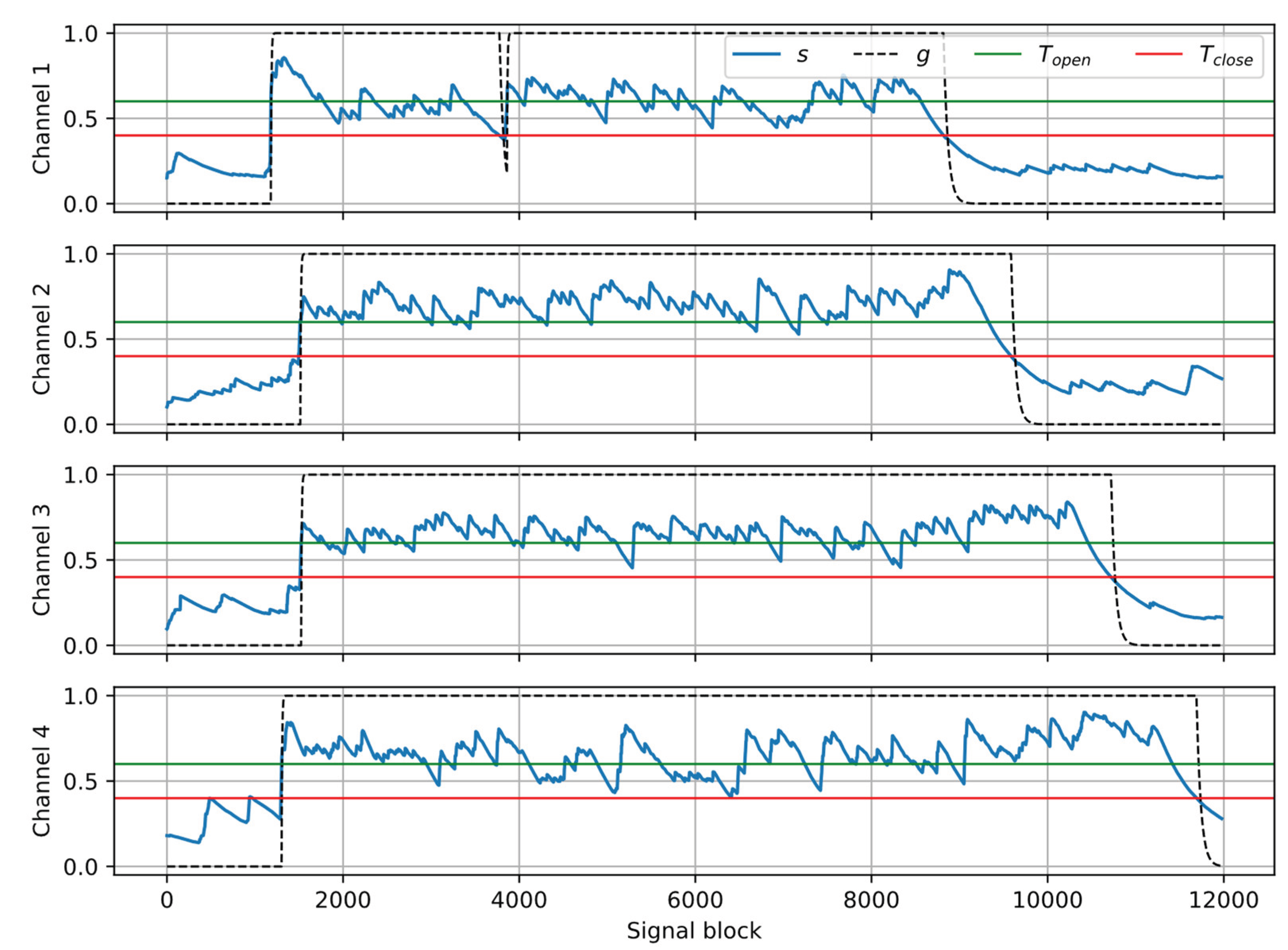

2.5. Crosstalk Detection and Suppression

3. Results

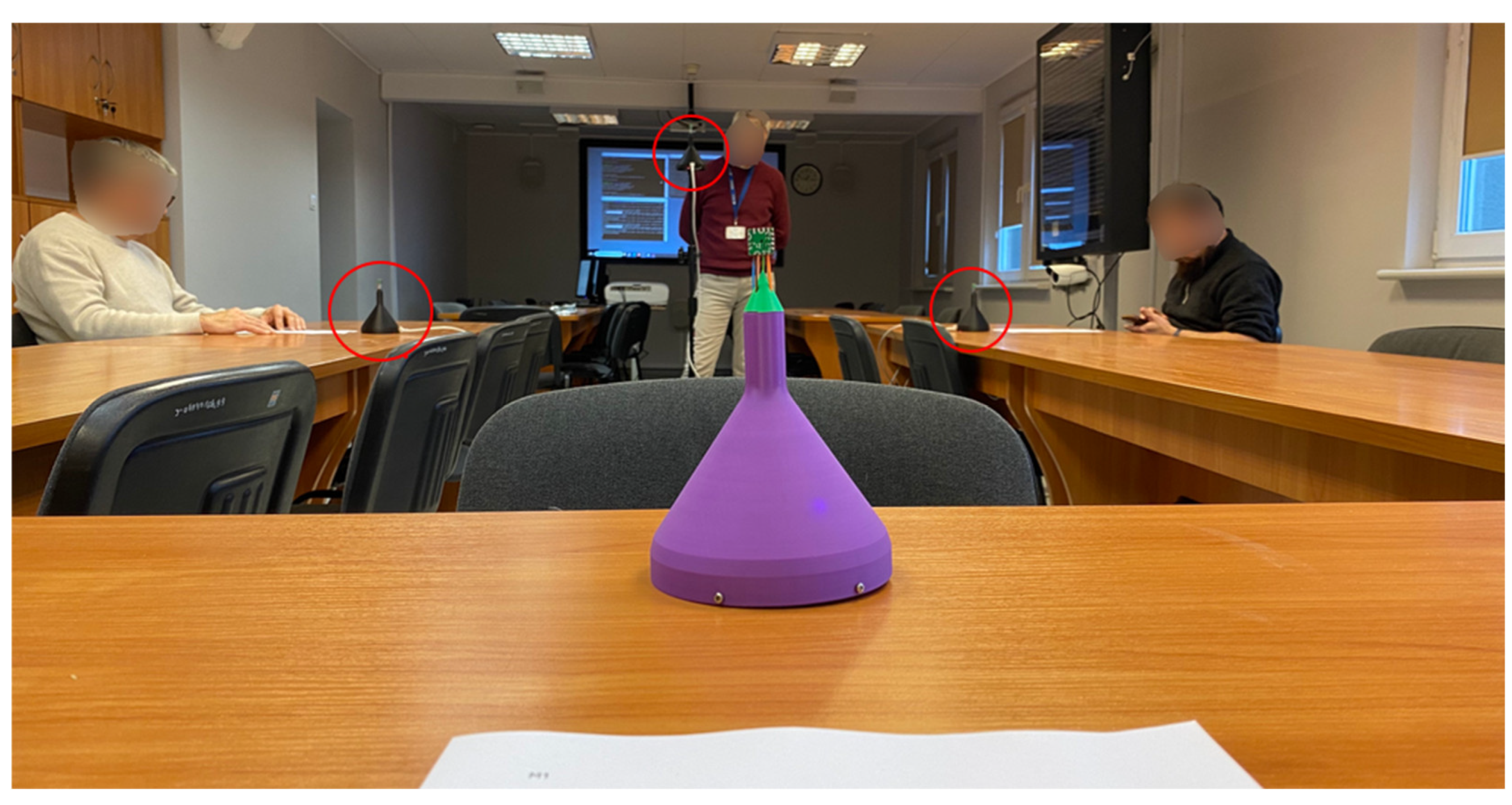

3.1. Evaluation Dataset

3.2. Evaluation Metric

3.3. Reference System

3.4. Evaluation Results

3.5. Algorithm Performance in the Presence of Overlapping Speech

4. Discussion

5. Conclusions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AI | Artificial Intelligence |
| ASR | Automatic Speech Recognition |
| AVS | Acoustic Vector Sensor |
| DNN | Deep Neural Networks |
| DOA | Direction of Arrival |
| SI-SDR | Scale Invariant Source-to-Distortion Ratio |
| SNR | Signal-to-Noise Ratio |

| Algorithm | SI-SDR (dB) | ΔSI-SDR (dB) |
|---|---|---|
| Unprocessed signals | 5.13 | – |
| Fixed width, with separation | 14.51 | 9.38 |
| Dynamic width, with separation | 12.67 | 7.54 |
| Fixed width, without separation | 21.33 | 16.20 |
| Dynamic width, without separation | 24.66 | 19.53 |
| Reference system | 25.62 | 20.49 |
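
The ΔSI-SDR column in the table above is consistent with each row's SI-SDR minus the SI-SDR of the unprocessed signals. The following is a minimal sketch of that arithmetic, together with the standard scale-invariant SDR definition. It is not the authors' evaluation code: the function names `si_sdr` and `improvement` and the dictionary `RESULTS_DB` are hypothetical, introduced here for illustration only, and the only data used are the values printed in the table.

```python
import math


def si_sdr(estimate, reference):
    """Scale-invariant SDR in dB for two equal-length sample sequences.

    Standard definition: project the estimate onto the reference, then
    compare the energy of that projection with the energy of the residual.
    (Illustrative helper, not taken from the article.)
    """
    if len(estimate) != len(reference):
        raise ValueError("signals must have the same length")
    dot = sum(e * r for e, r in zip(estimate, reference))
    ref_energy = sum(r * r for r in reference)
    alpha = dot / ref_energy  # optimal scaling of the reference
    target = [alpha * r for r in reference]
    residual = [e - t for e, t in zip(estimate, target)]
    target_energy = sum(t * t for t in target)
    residual_energy = sum(x * x for x in residual)
    return 10.0 * math.log10(target_energy / residual_energy)


# SI-SDR values in dB, copied from the table above.
BASELINE_DB = 5.13  # unprocessed signals
RESULTS_DB = {
    "Fixed width, with separation": 14.51,
    "Dynamic width, with separation": 12.67,
    "Fixed width, without separation": 21.33,
    "Dynamic width, without separation": 24.66,
    "Reference system": 25.62,
}


def improvement(si_sdr_db, baseline_db=BASELINE_DB):
    """SI-SDR improvement over the unprocessed signals, rounded to 0.01 dB."""
    return round(si_sdr_db - baseline_db, 2)


if __name__ == "__main__":
    for name, value in RESULTS_DB.items():
        print(f"{name}: {improvement(value):.2f} dB")
```

Because the reference is rescaled before the comparison, multiplying the estimate by a constant gain leaves `si_sdr` unchanged, which is why the metric suits systems whose output level is arbitrary.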

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).