Submitted: 22 September 2025

Posted: 25 September 2025


Abstract

Keywords:

1. Introduction

- We systematize SNN components: neuron models (Integrate-and-Fire (IF) / Leaky Integrate-and-Fire (LIF), Adaptive Leaky Integrate-and-Fire (ALIF), Exponential Integrate-and-Fire / Adaptive Exponential Integrate-and-Fire (EIF/AdEx), Resonate-and-Fire (RF), Hodgkin–Huxley (HH), Izhikevich, Resonate-and-Fire–Izhikevich hybrid (RF–Iz), Current-Based neuron (CUBA), Sigma–Delta (ΣΔ)); neural encodings (direct/single-value encoding, rate coding, temporal variants including Time-to-First-Spike (TTFS) and Ranked-N-of-M (R–NoM), population coding, phase-of-firing coding (PoFC), burst coding, Sigma–Delta (ΣΔ) encoding); and learning paradigms within the review scope: supervised (backpropagation-through-time (BPTT) with surrogate gradients, e.g., SLAYER, SuperSpike, EventProp), unsupervised (spike-timing-dependent plasticity (STDP) and variants), reinforcement (reward-modulated STDP (R-STDP), e-prop), hybrid supervised STDP (SSTDP), and ANN→SNN conversion. The practical pipeline used for the tutorial and benchmarks is supervised training via BPTT with surrogate gradients (aTan by default; SLAYER/SuperSpike-style updates) [16,17,18,19].

- We provide a practical tutorial (with reference to a representative neuromorphic software stack, e.g., Lava) covering model construction, encoding choices, and training/inference workflows suitable for resource-constrained deployment.

- We establish a side-by-side evaluation protocol that compares SNNs with architecturally matched ANNs on a shallow setting (MNIST) and a deeper convolutional setting (CIFAR-10 with VGG-style backbones [34]). Metrics include task accuracy/timesteps, spike activity, and power-oriented proxies to illuminate accuracy–efficiency trade-offs.

- We distill design guidelines that map application goals—accuracy targets and per-inference energy budgets—onto actionable choices of neuron model, encoding scheme, number of time steps, and supervised surrogate-gradient training (e.g., SLAYER, SuperSpike, aTan).

2. Background of Spiking Neural Networks

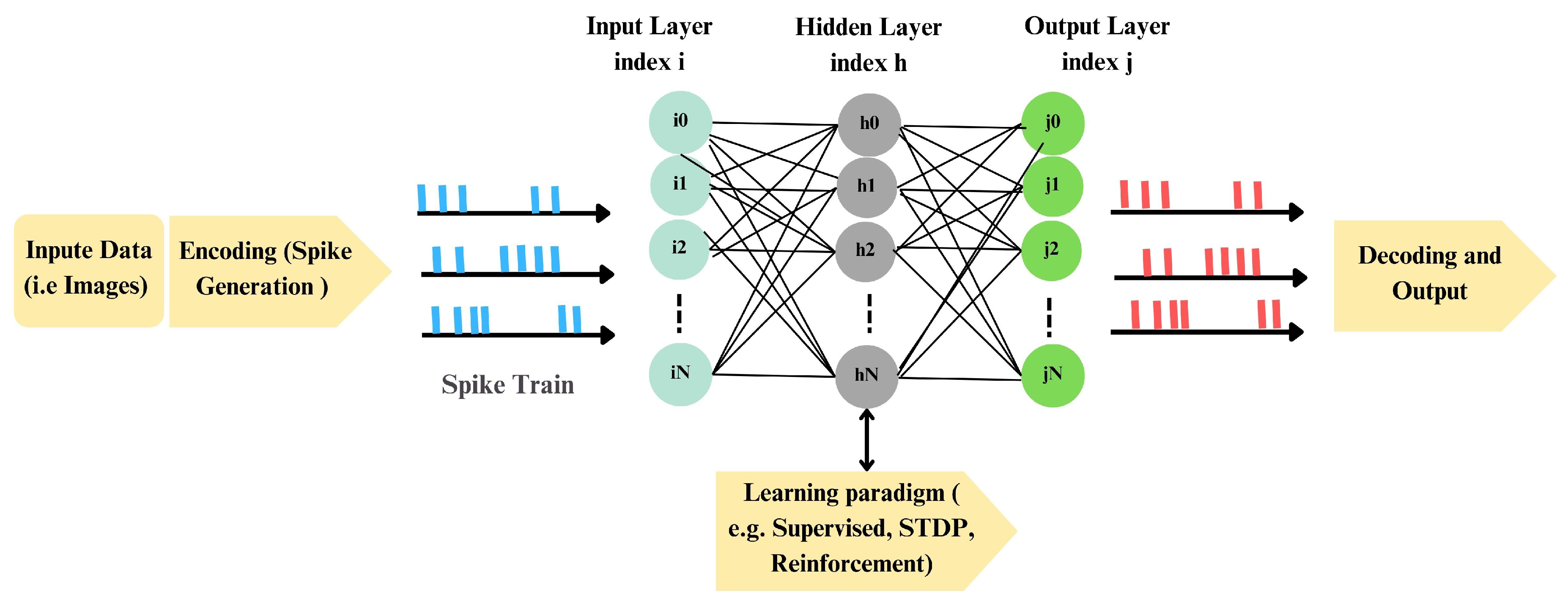

2.0.0.1. Processing pipeline:

2.0.0.2. Power efficiency: mechanisms and practice:

2.0.0.3. Advantages and challenges:

2.0.0.4. Learning and encoding:

2.0.0.5. Real-world applications:

2.1. Encoding in SNNs

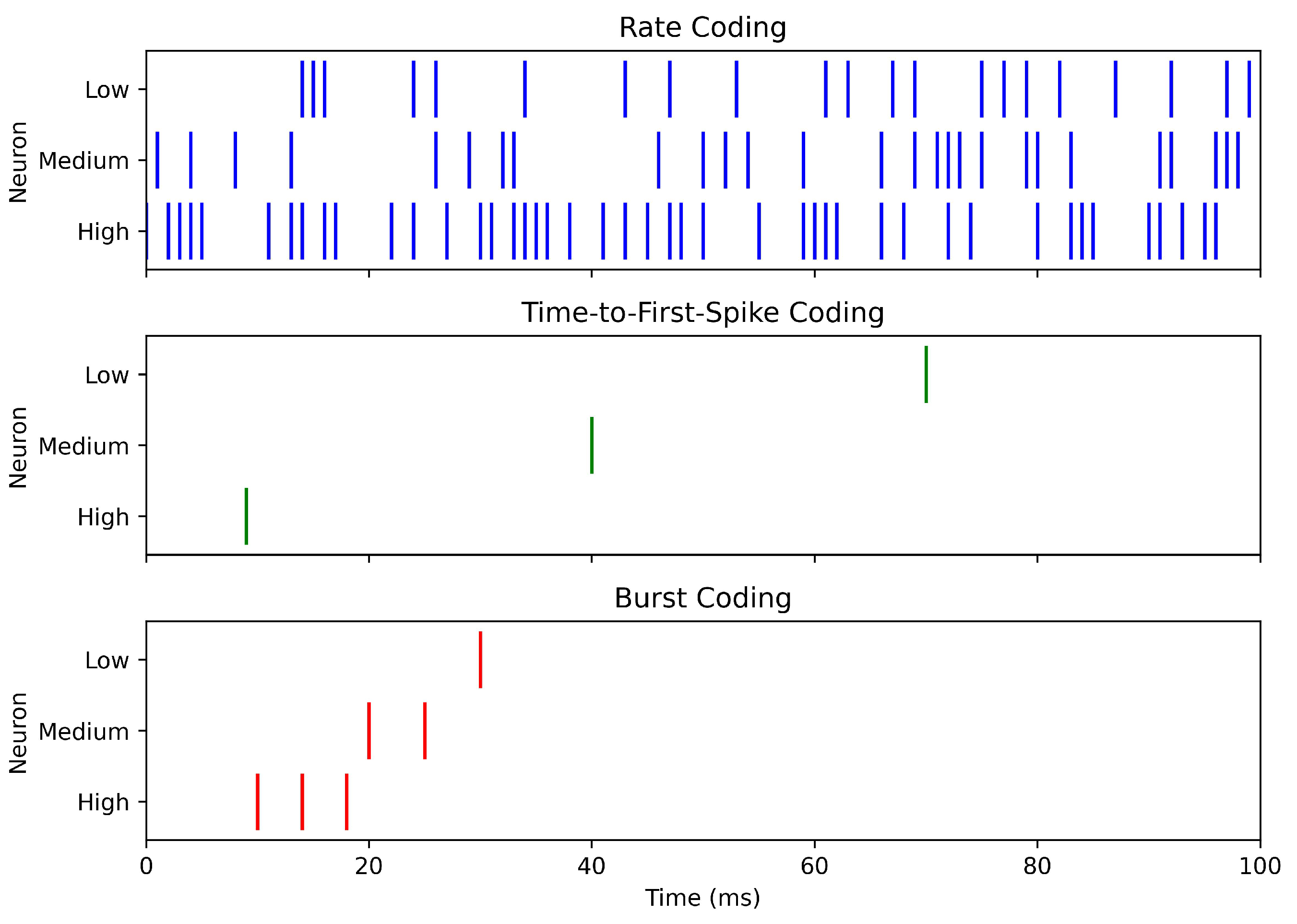

2.1.1. Rate Coding

2.1.2. Direct Input Encoding

2.1.3. Temporal Coding

- Time-to-First-Spike (TTFS): encodes stimulus strength in the latency of the first spike, $t_{\text{first}} = t_{\text{onset}} + \Delta t$, where $t_{\text{onset}}$ is the stimulus onset and $\Delta t$ the delay before firing [14]. TTFS is highly power-efficient, as minimal spiking activity can support rapid decisions, though it requires precise timing and complicates learning.

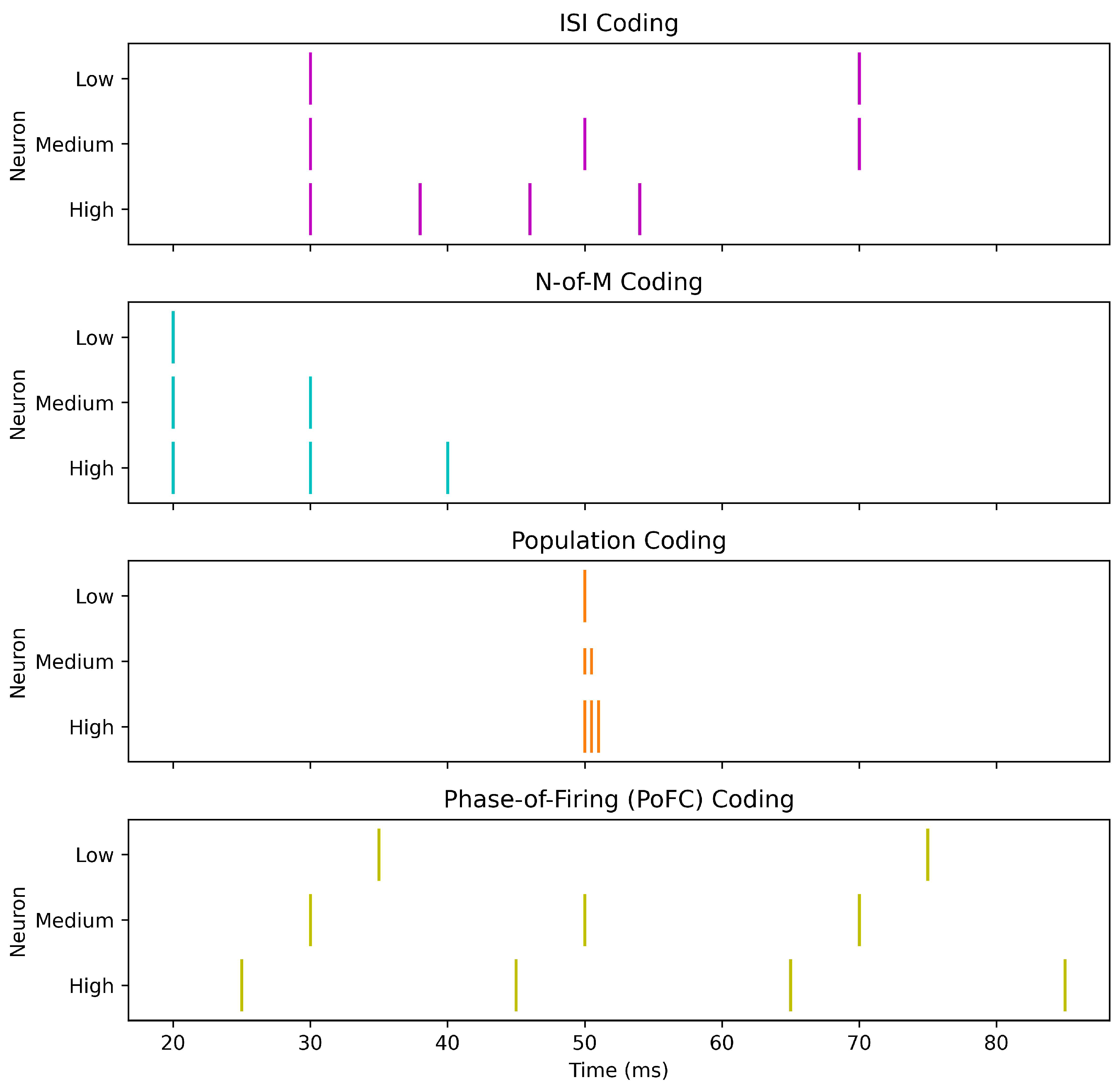

- Inter-Spike Interval (ISI): uses the time gap between consecutive spikes, $\mathrm{ISI}_i = t_{i+1} - t_i$, providing richer temporal detail at the cost of increased spiking and energy use [14].

- N-of-M (NoM) Coding: transmits only the first N of M possible spikes, enhancing hardware efficiency but discarding spike-order information [82].

- Rank Order Coding (ROC): exploits the sequence of spike arrivals according to synaptic weights, offering high discriminability but at the cost of computational intensity and sensitivity to precise timing [24].

- Ranked-N-of-M (R-NoM): integrates ROC and NoM by propagating only the first N spikes while weighting each one by a modulation factor that decreases with spike order [82].
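The temporal codes listed above can be illustrated with a minimal sketch in plain Python. The linear intensity-to-latency mapping, the convention that zero intensity emits no spike, and the helper names `ttfs_encode`, `inter_spike_intervals`, and `first_n_of_m` are assumptions made for illustration; they are not taken from the paper's implementation.

```python
def ttfs_encode(intensities, num_steps):
    """Map each intensity in [0, 1] to one spike time bin (TTFS).

    Assumed linear mapping: intensity 1.0 fires in bin 0, weaker inputs
    fire later, and zero intensity never fires (None).
    """
    times = []
    for x in intensities:
        if x <= 0.0:
            times.append(None)  # no spike for a silent input
            continue
        x = min(x, 1.0)
        times.append(round((1.0 - x) * (num_steps - 1)))
    return times


def inter_spike_intervals(spike_times):
    """Return the gaps between consecutive spike times (ISI code)."""
    ordered = sorted(spike_times)
    return [later - earlier for earlier, later in zip(ordered, ordered[1:])]


def first_n_of_m(spike_times, n):
    """Return the indices of the first n inputs to fire (N-of-M code)."""
    fired = [(t, i) for i, t in enumerate(spike_times) if t is not None]
    fired.sort()  # earliest spike first; ties broken by input index
    return [i for _, i in fired[:n]]


times = ttfs_encode([1.0, 0.0, 0.5, 0.01], num_steps=9)
print(times)                   # spike bin per input, None for no spike
print(first_n_of_m(times, 2))  # indices of the two earliest spikes
```

Brighter inputs spike earlier, so keeping only the first N spikes retains the strongest inputs while discarding the rest of the activity.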

2.1.4. Population Coding

2.1.5. Sigma–Delta (ΣΔ) Encoding

2.1.6. Burst Coding

2.1.7. PoFC Coding

2.1.8. Impact of Encoding Schemes on SNN Performance and Power Efficiency

2.1.8.1. Guidelines for Selecting an Encoding Scheme.

2.2. SNN Neuron Models

2.2.1. Spike Response Model (SRM)

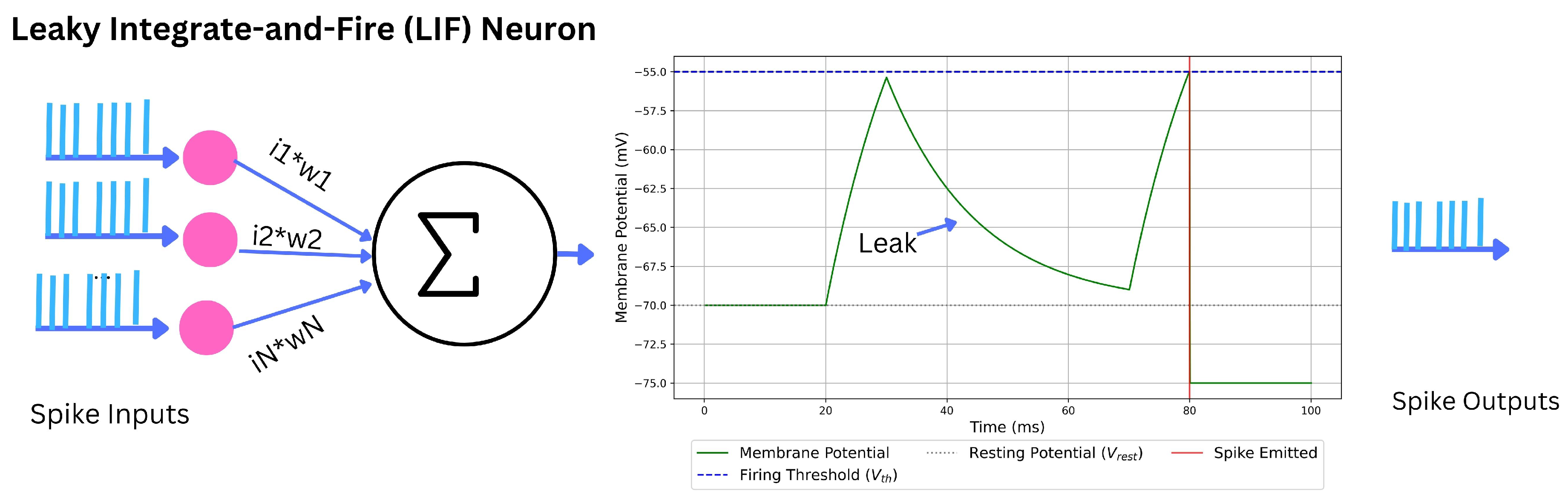

2.2.2. Integrate-and-Fire (IF) and Leaky Integrate-and-Fire (LIF)

2.2.2.1. Perfect IF (PIF).

2.2.2.2. Leaky IF (LIF).

2.2.3. Adaptive Leaky Integrate-and-Fire (ALIF)

2.2.3.1. Dynamic-threshold ALIF.

2.2.3.2. Current-based ALIF.

2.2.4. Exponential Integrate-and-Fire (EIF)

2.2.5. Adaptive Exponential Integrate-and-Fire (AdEx)

2.2.6. Resonate-and-Fire (RF)

2.2.7. Hodgkin–Huxley (HH)

2.2.8. Izhikevich

2.2.9. Resonate-and-Fire Izhikevich (RF–Iz)

2.2.10. Current-Based Neuron (CUBA)

2.2.11. Sigma–Delta (ΣΔ) Neuron

2.2.12. Trade-offs in Neuron Model Selection for SNNs

2.2.12.1. Practical guidelines.

- Energy/scale: LIF/ALIF or ΣΔ; use CUBA when speed matters more than biological realism.

- Temporal richness: ALIF, EIF/AdEx, or RF/RF–Iz for adaptation/resonance/phase coding.

- Mechanistic fidelity: HH for channel-level questions; validate reduced models against HH.

- Trainability: Prefer models with robust surrogate-gradient practice; constrain parameters to avoid stiffness/instability.

- Hardware fit: Match state and nonlinearities to fabric (fixed-point, exponentials/CORDIC, event-driven kernels); layer-wise hybrids (e.g., LIF front-ends + AdEx/ALIF deeper) often win on accuracy–efficiency.

2.3. Learning Paradigms in SNNs

2.3.1. Supervised Learning

2.3.1.1. SpikeProp

2.3.1.2. SuperSpike

2.3.1.3. SLAYER

2.3.1.4. EventProp

2.4. Unsupervised Learning in SNNs

2.4.0.5. STDP

2.4.0.6. Adaptive STDP (aSTDP)

2.4.0.7. Multiplicative STDP

2.4.0.8. Triplet STDP

2.5. Reinforcement Learning in SNNs

2.5.0.9. R-STDP

2.5.0.10. ReSuMe (Remote Supervised Method)

2.5.0.11. Eligibility Propagation (e-prop)

2.6. Hybrid Learning Paradigms

2.6.0.12. Supervised STDP (SSTDP)

2.6.0.13. ANN-to-SNN Conversion

- Training a conventional ANN using backpropagation.

- Converting neuron activations to spike rates or spike times.

- Adjusting weights, thresholds, and normalization parameters to match the target SNN framework.

- Temporal coding conversion: Encodes information in spike timing to capture temporal patterns, reducing latency and improving performance on dynamic datasets [89].
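The rate-based conversion steps above can be sketched with a single integrate-and-fire neuron whose firing rate approximates a clipped ReLU activation. This is an illustrative toy under stated assumptions (constant input current, reset by subtraction, threshold set to the largest observed ANN activation); the function name `if_firing_rate` is hypothetical and the sketch does not reproduce any specific conversion toolchain.

```python
def if_firing_rate(activation, num_steps, threshold=1.0):
    """Drive an IF neuron with a constant input and return its firing rate.

    With reset by subtraction, the rate approaches
    clip(activation / threshold, 0, 1) as num_steps grows.
    """
    v = 0.0
    spikes = 0
    for _ in range(num_steps):
        v += activation          # integrate the constant input current
        if v >= threshold:
            spikes += 1
            v -= threshold       # soft reset keeps the residual charge
    return spikes / num_steps


# Assumed data-based normalization: use the maximum ANN activation as threshold.
ann_activations = [3.0, 4.0, -1.0]
theta = max(ann_activations)
rates = [if_firing_rate(a, num_steps=8, threshold=theta) for a in ann_activations]
print(rates)  # approximately a / theta for positive a, 0 for negative a
```

Negative activations never reach threshold, which mirrors the ReLU cutoff, and the quantization error shrinks as the number of time steps increases.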

2.7. Evolution of Supervised Learning in SNNs and Broader Context

3. Materials and Methods

3.1. Experimental Design

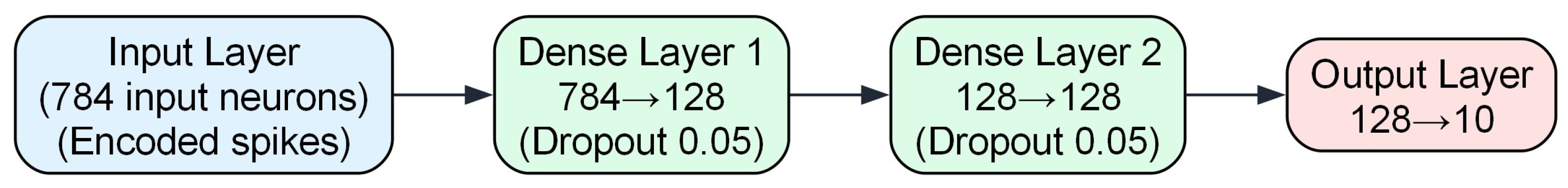

- Fully Connected Network (FCN): Two hidden fully connected layers , and a classifier; applied to MNIST; trainable parameters.

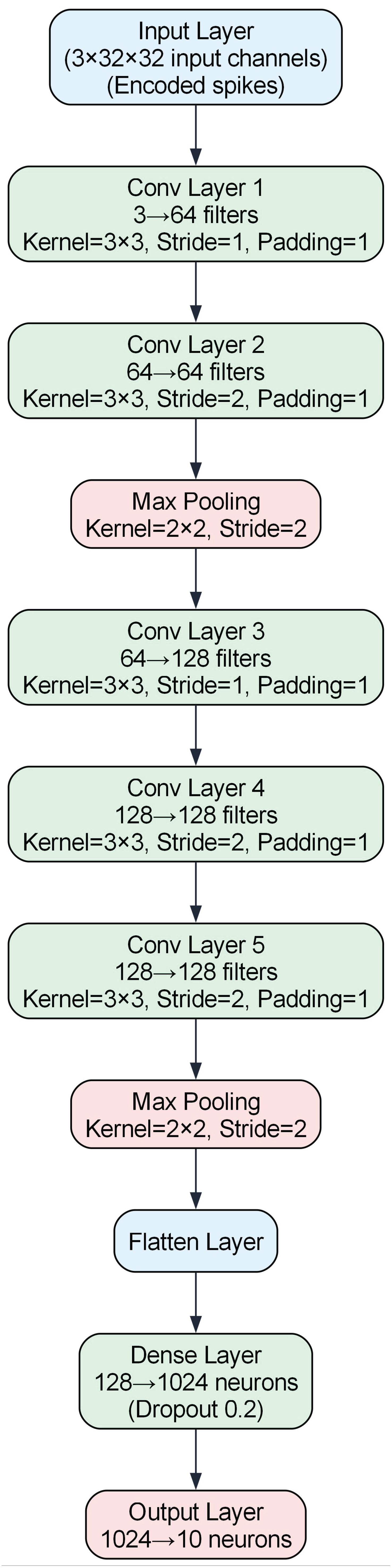

- Deep Convolutional Network (VGG7): five convolutional layers, two max-pooling layers, one hidden fully connected layer, and a classifier; applied to CIFAR-10; trainable parameters.

- Neuron Models: The neuron models include IF, LIF, ALIF, CUBA, ΣΔ, RF, RF–Iz, EIF, and AdEx.

- Encoding Schemes: The encoding schemes utilized are Direct Coding, Rate Encoding, Temporal TTFS, Sigma-Delta (ΣΔ) Encoding, Burst Coding, PoFC, and R-NoM. These schemes transform the continuous pixel values of input images into spike trains over specified time steps.

- Predictive Efficacy (Accuracy): the proportion of correctly classified instances.

- Energy Efficiency (Power Consumption): theoretical power usage during inference.

- Training models on MNIST and CIFAR-10 with the specified time steps.

- Encoding inputs based on predefined schemes.

- Measuring predictive accuracy and estimating energy consumption.

- Comparing SNN performance against equivalent ANN baselines to assess accuracy and power efficiency trade-offs.

3.2. Data Collection and Preprocessing

3.3. Implementation Frameworks and Tools

3.3.0.14. Lava

3.3.0.15. SpikingJelly

3.3.0.16. Norse

3.3.0.17. PyTorch

3.4. Neural Network Architectures

3.5. Training Configuration and Procedures

3.6. Evaluation Metrics

3.7. Algorithms

3.7.0.18. Encodings used

- Direct input: duplicate the image across T (no spike synthesis).

- Rate (Poisson): intensities mapped to rates (max 100 Hz); Bernoulli sampling per step.

- TTFS: single-spike latency monotonically mapped from intensity over T bins.

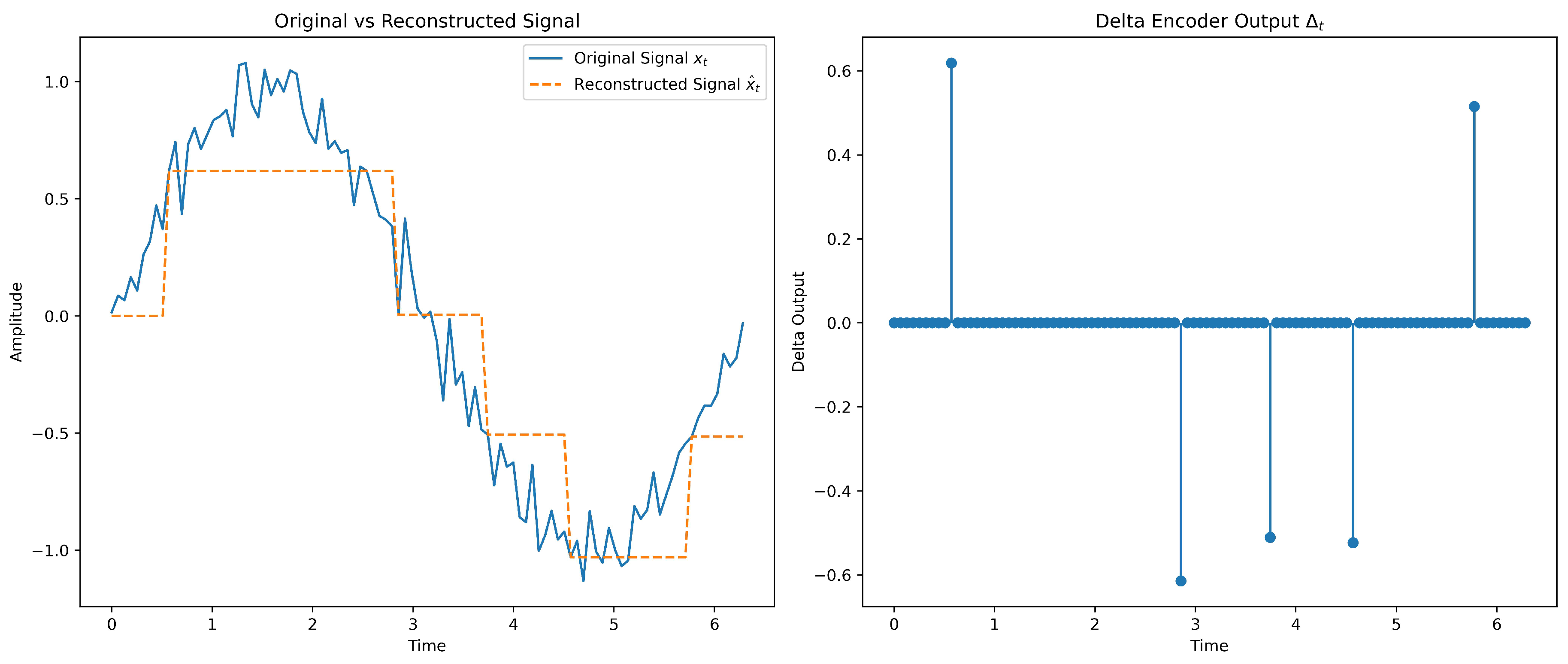

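- ΣΔ: dead-zone delta encoder with feedback; emit an event when the residual between the input and its running reconstruction exceeds the dead-zone threshold, then update the reconstruction by the emitted value.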

- R–NoM: rank-modulated top-N spiking from sorted intensities; N tuned by validation.

- PoFC: spike timing with phase derived from normalized intensity on a periodic cycle.

- Burst: intensity-dependent bursts capped at a maximum burst length (chosen by validation) within T.
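A minimal sketch of three of the encodings listed above (direct, Bernoulli rate, and a dead-zone ΣΔ encoder) follows. The per-step spike probability `max_prob`, the graded (non-binary) ΣΔ events, and all function names are assumptions for illustration; the source gives a 100 Hz rate cap but not the step duration, so the probability is left as a parameter.

```python
import random


def direct_encode(intensities, num_steps):
    """Direct input: repeat the analog values at every time step."""
    return [list(intensities) for _ in range(num_steps)]


def rate_encode(intensities, num_steps, max_prob=1.0, seed=0):
    """Bernoulli rate code: spike with probability intensity * max_prob."""
    rng = random.Random(seed)
    return [[1 if rng.random() < x * max_prob else 0 for x in intensities]
            for _ in range(num_steps)]


def sigma_delta_encode(signal, threshold):
    """Dead-zone delta encoder with feedback for one input over time.

    Emits the residual only when it reaches the threshold (assumed rule),
    then adds the emitted value to the running reconstruction.
    """
    recon = 0.0
    events = []
    for x in signal:
        residual = x - recon
        if abs(residual) >= threshold:
            events.append(residual)
            recon += residual
        else:
            events.append(0.0)  # inside the dead zone: stay silent
    return events


def sigma_delta_decode(events):
    """Reconstruct the signal as the running sum of emitted events."""
    total = 0.0
    out = []
    for e in events:
        total += e
        out.append(total)
    return out


events = sigma_delta_encode([0.5, 0.5, 0.55, 1.0], threshold=0.125)
print(events)
print(sigma_delta_decode(events))
```

The ΣΔ encoder stays silent while the input changes by less than the threshold, which is why slowly varying or static inputs produce very few events.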

3.7.0.19. Neuron models instantiated

- IF, LIF (SpikingJelly; ATan surrogate).

- ALIF (Lava; dynamic threshold/current adaptation).

- CUBA (Lava; current-based neuron).

- ΣΔ neuron (Lava; event-driven error-based spiking).

- RF and RF–Iz (Lava; resonance/phase sensitivity).

- EIF and AdEx (Norse; exponential onset and adaptive dynamics).
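As a point of reference for the simplest models in this list, the following sketch simulates a discrete-time LIF neuron in plain Python; setting the leak factor to 1 gives the IF case. The update rule, hard reset to zero, and the parameter names `beta` and `threshold` are assumptions for illustration and do not reproduce the SpikingJelly, Lava, or Norse implementations.

```python
def simulate_lif(inputs, beta=0.5, threshold=1.0):
    """Simulate a discrete-time leaky integrate-and-fire neuron.

    v[t] = beta * v[t-1] + I[t]; a spike is emitted when v reaches the
    threshold, after which v is reset to zero. beta = 1.0 gives an IF neuron.
    """
    v = 0.0
    spikes = []
    for current in inputs:
        v = beta * v + current   # leak, then integrate the input
        if v >= threshold:
            spikes.append(1)
            v = 0.0              # hard reset after a spike
        else:
            spikes.append(0)
    return spikes


print(simulate_lif([0.6] * 4, beta=0.5))  # leaky: fires less often
print(simulate_lif([0.6] * 4, beta=1.0))  # no leak (IF): fires more often
```

The leak discards part of the accumulated charge at every step, so the same input produces fewer spikes than in the IF case.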

3.7.0.20. Learning rules

- SLAYER surrogate gradient (Lava/SLAYER 2.0).

- SuperSpike surrogate gradient (Norse).

- ATan surrogate function (SpikingJelly).
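The idea behind surrogate-gradient training can be sketched with the arctangent surrogate: the forward pass uses the non-differentiable Heaviside step, while the backward pass uses the derivative of a smooth arctangent curve. The formula and the default `alpha = 2.0` below follow the commonly documented ATan form and are stated here as an assumption; the function names are illustrative.

```python
import math


def heaviside(x):
    """Forward pass: spike (1.0) when the membrane potential reaches threshold."""
    return 1.0 if x >= 0.0 else 0.0


def atan_surrogate(x, alpha=2.0):
    """Smooth stand-in for the step: (1/pi) * atan(pi/2 * alpha * x) + 1/2."""
    return math.atan(math.pi / 2.0 * alpha * x) / math.pi + 0.5


def atan_surrogate_grad(x, alpha=2.0):
    """Backward pass: derivative of atan_surrogate with respect to x."""
    return (alpha / 2.0) / (1.0 + (math.pi / 2.0 * alpha * x) ** 2)


# x is the membrane potential minus the threshold.
for x in (-1.0, 0.0, 1.0):
    print(x, heaviside(x), round(atan_surrogate_grad(x), 4))
```

The gradient peaks at the threshold and decays on both sides, so neurons close to firing receive the largest weight updates; a larger `alpha` makes the surrogate sharper.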

Algorithm 1: Training/evaluation for VGG7 on CIFAR-10 and FCN on MNIST with operation counting for energy estimation.

Data: VGG7/CIFAR-10 and FCN/MNIST, each with its own set of time steps T

Result: Trained models, accuracy/loss, per-inference energy proxy

- Define the encodings and neuron models; set lr, batch size, epochs
- foreach dataset D do
  - set T per D; load and preprocess D
  - foreach encoding e do
    - foreach neuron model n do
      - foreach number of time steps t do
        - encode data with e over t steps; define architecture with neuron n
        - initialize params; reset energy counters
        - for epoch = 1 to epochs do
          - foreach batch do
            - forward pass (aggregate over time for SNNs); compute cross-entropy loss
            - backprop; update (Adam)
            - accumulate operation counts: synaptic/MAC ops; neuron/state updates
          - end
          - evaluate accuracy/loss on validation/test set
        - end
        - save model and metrics; compute the energy proxy
      - end
    - end
  - end
- end
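The operation-counting step in Algorithm 1 can be illustrated with a simple energy proxy. The sketch below assumes that an ANN pays one multiply-accumulate (MAC) per synaptic connection, that an SNN pays one accumulate (AC) per incoming spike per time step, and that per-operation energies are the commonly quoted 45 nm figures of 4.6 pJ per MAC and 0.9 pJ per AC. These constants, the function names, and the layer sizes are assumptions for illustration and may differ from the energy model used in the paper.

```python
E_MAC_PJ = 4.6  # assumed energy per 32-bit multiply-accumulate, in picojoules
E_AC_PJ = 0.9   # assumed energy per 32-bit accumulate, in picojoules


def ann_energy_pj(macs_per_layer):
    """ANN proxy: every synaptic connection costs one MAC per inference."""
    return sum(macs_per_layer) * E_MAC_PJ


def snn_energy_pj(macs_per_layer, spike_rates, num_steps):
    """SNN proxy: a connection costs one AC only when its input spikes.

    spike_rates[i] is the average fraction of inputs to layer i that
    spike per time step (measured during inference).
    """
    total_acs = sum(m * r * num_steps
                    for m, r in zip(macs_per_layer, spike_rates))
    return total_acs * E_AC_PJ


# Hypothetical two-layer network; sizes and rates are illustrative only.
macs = [1000, 100]
print(ann_energy_pj(macs))
print(snn_energy_pj(macs, spike_rates=[0.1, 0.2], num_steps=4))
```

Under this proxy the SNN advantage shrinks as spike rates or the number of time steps grow, which is the mechanism behind the accuracy-energy trade-offs reported in the results.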

4. Results

4.1. Performance on MNIST Dataset

4.1.1. Classification Accuracy Results

4.1.1.1. Observation

- ΣΔ neurons achieving highest accuracy. ΣΔ neurons achieved the highest accuracy of 98.10% with Rate Encoding at 8 time steps. They maintained strong performance across other encoding schemes, including 98.00% with ΣΔ Encoding at both 8 and 6 time steps. This indicates their effectiveness in precise spike-based computation and their suitability for tasks requiring precise timing and efficient encoding.

- Adaptive neuron models showing competitive performance. Adaptive neuron models like ALIF and AdEx showed competitive performance, particularly with Direct Coding and Burst Coding. ALIF achieved a maximum accuracy of 97.30% with Rate Encoding at 8 time steps, while AdEx reached 97.50% with Direct Coding at 6 time steps. These models incorporate adaptation mechanisms to capture temporal dynamics, providing a favorable balance between accuracy and computational efficiency.

- Solid performance of simpler neuron models. Simpler neuron models like IF and LIF provided solid performance with Rate Encoding and ΣΔ Encoding. IF achieved a maximum accuracy of 97.70% with ΣΔ Encoding at 8 time steps, and LIF reached 97.50% with ΣΔ Encoding at 6 time steps. While their accuracies are slightly below the ANN baseline of 98.23%, these results demonstrate that simpler neuron models can still perform well with appropriate encoding schemes.

- Performance of RF neurons. The standard RF neuron exhibited lower accuracies than other models, with a maximum of 97.20% achieved with Direct Coding at 8 time steps. The RF–Iz variant performed better, achieving a maximum accuracy of 97.70% with Rate Encoding and Direct Coding at 8 time steps.

- Robustness of CUBA neurons. CUBA neurons demonstrated strong performance with a maximum accuracy of 97.66% using Rate Encoding at 8 time steps and maintained high accuracies across other encoding schemes, such as 97.60% with Direct Coding at 8 time steps. This consistency highlights their robustness across different encoding strategies.

- EIF neurons’ effectiveness. EIF neurons showed robust performance with a maximum accuracy of 97.60% using Direct Coding at 6 time steps, indicating their effectiveness in handling direct input representations. Neuron models that perform well across multiple encoding schemes, such as CUBA and EIF, demonstrate robustness and versatility, making them suitable for diverse applications where encoding strategies may vary.

- Effectiveness of specific encoding schemes. Encoding schemes like Direct Coding and ΣΔ Encoding emerged as the most effective across multiple neuron types, often resulting in the highest accuracies. Burst Coding also demonstrated strong performance, especially when paired with adaptive neuron models like ALIF and AdEx. Temporal encoding schemes (TTFS, PoFC) improved with increased time steps but generally did not outperform Direct and ΣΔ Encoding.

- Effect of time steps on accuracy. Increasing the number of time steps generally led to higher accuracies for most neuron models and encoding schemes, especially for configurations using temporal encoding schemes like TTFS and PoFC. However, models like ALIF and ΣΔ neurons maintained high accuracy even with fewer time steps, demonstrating their efficiency in capturing essential temporal dynamics and making them more suitable for practical applications due to reduced computational load and energy consumption.

- Advanced neuron models versus simpler models. Advanced neuron models like ΣΔ, ALIF, and AdEx were competitive with or better than simpler models like IF and LIF (ΣΔ reached 98.10%, versus maxima of 97.70% for IF and 97.50% for LIF), underscoring the value of mechanisms like spike-timing precision and adaptation. While some SNN configurations approached the ANN baseline accuracy, others remained slightly below it but were still competitive. This highlights the potential of SNNs to match traditional ANNs while offering additional benefits like energy efficiency and temporal processing capabilities.

- Variable performance of R-NoM encoding. Although not the primary focus, R-NoM encoding showed varying performance across neuron types, with ALIF neurons achieving a maximum of 58.00% and EIF neurons reaching 88.10%. This suggests that while R-NoM can be effective, its performance depends strongly on the neuron model used.

4.1.2. Energy Consumption

4.1.2.1. Trade-off between accuracy and power consumption

- R-NoM: minimal energy, lower accuracy. R-NoM encoding reaches the lowest energy values (e.g., for IF at 6 time steps), but these same configurations typically yield weaker accuracies (around 70–75% for IF, much lower for ALIF), indicating a clear cost/benefit trade-off.

- High-accuracy configurations are still energy-efficient. Several neuron types (e.g., ΣΔ, ALIF, CUBA) achieve accuracies of roughly 97–98%, yet their energy consumption remains well under the ANN baseline. For instance, ΣΔ neurons with Rate encoding at 8 time steps yield 98.10% accuracy (Table 6) at an energy cost below the ANN baseline (Table 7).

- AdEx neurons: best energy with Burst coding, good accuracy with Direct coding. AdEx achieves its minimal energy consumption under Burst coding (bolded entries in Table 7) but generally attains its top accuracies (around 97.4–97.5%) via Direct coding (Table 6). This is another instance in which the most energy-frugal choice does not align with the highest-accuracy choice, even within the same neuron model.

- Fewer time steps often reduce energy but may lower accuracy. Most neuron types consume less energy at 4 or 6 time steps than at 8. Yet, especially for temporal encoding schemes (e.g., TTFS, PoFC), dropping below 8 time steps can reduce accuracy by several percentage points. Models with built-in adaptation (ALIF, AdEx) or robust dynamics preserve relatively high accuracies at lower time steps, making them attractive for energy-constrained scenarios.

- Overall SNN advantage. Nearly all SNN configurations, including those reaching roughly 97–98% accuracy, still require less energy than the ANN baseline. This supports the viability of SNNs for edge devices or power-sensitive deployments, where small accuracy drops might be acceptable if power savings are substantial.

- Conclusion: balancing encoding and neuron type. While R-NoM leads in power savings, it lags in accuracy. Schemes like ΣΔ or Direct coding may offer near-ANN accuracy with only a moderate energy increase over R-NoM, while staying significantly below the ANN baseline. Ultimately, choosing a neuron model and encoding scheme requires balancing accuracy targets against power constraints, as each combination exhibits distinct performance–efficiency trade-offs.

4.2. Performance on CIFAR-10 Dataset

4.2.1. Classification Accuracy Results

4.2.1.1. Observation

- ΣΔ neurons achieving highest accuracy. The ΣΔ neurons achieved the highest SNN accuracy of 83.00% with Direct coding at 2 time steps, closely matching the ANN baseline of 83.60%. This performance at a low number of time steps indicates the effectiveness of ΣΔ neurons in capturing complex patterns with efficient temporal dynamics. Additionally, TTFS encoding with ΣΔ neurons yielded accuracies up to 72.50%, showcasing their versatility across encoding schemes.

- Solid performance of IF and LIF neurons. IF and LIF neurons showed solid performance, with maximum accuracies of 74.50% for both using Direct coding at 4 time steps. These results highlight that even simpler neuron models can perform well on complex datasets when paired with effective encoding schemes. IF and LIF improved slightly with increased time steps, suggesting temporal dynamics contribute positively to their classification capabilities.

- Moderate performance of ALIF neurons. The adaptive LIF (ALIF) model achieved a maximum accuracy of 51.00% with Rate encoding at 6 time steps. The lower accuracies compared to other neuron models suggest ALIF may require further tuning or more sophisticated encoding to fully leverage adaptation on CIFAR-10.

- Performance of CUBA neurons. CUBA neurons reached a maximum of 50.00% with TTFS at 4 time steps. While above chance, this indicates CUBA may not capture the features needed for higher accuracy on CIFAR-10 without additional optimization.

- Limited performance of RF and RF–Iz neurons. RF and its Izhikevich variant (RF–Iz) were limited, peaking at 47.00% and 45.00%, respectively, suggesting resonate-and-fire dynamics are less effective for CIFAR-10 image classification.

- Consistent performance of EIF and AdEx neurons. EIF reached 70.00% with Direct coding at 2 time steps, and AdEx achieved 70.10% with Direct coding at 6 time steps. Exponential mechanisms and adaptation appear to aid the processing of complex patterns.

- Effectiveness of Direct coding and TTFS encoding. Across neuron models, Direct coding emerged as the most effective scheme, consistently yielding higher accuracies, likely because it conveys rich information without heavy reliance on temporal dynamics. TTFS also performed well, particularly with ΣΔ neurons, indicating that precise spike timing benefits certain configurations.

- Impact of time steps. Time steps influenced performance, though less than on MNIST. Some models, such as ΣΔ, achieved high accuracies even at low time steps, highlighting their efficiency. Increasing the number of steps generally gave modest gains, indicating a trade-off between temporal resolution and computational load.

- Comparison with ANN baseline. While several SNN configurations approached the ANN baseline, many still lagged. Careful selection of neuron model and encoding is crucial for high performance on complex datasets. In particular, ΣΔ with Direct coding shows strong promise: high accuracy with few time steps, enabling potential energy and computational savings.

4.2.2. Energy Consumption on CIFAR-10 Dataset

4.2.2.1. Observation

- Overall Energy Trends. In general, SNNs consume significantly less energy than the baseline ANN across neuron types and encoding schemes. Models achieving higher classification accuracy, such as those using Direct Coding or certain temporal encodings, tend to consume more energy than simpler encodings. However, even these higher-energy SNN configurations remain below the energy reference set by the ANN. This underscores SNNs’ potential for energy savings in complex tasks such as CIFAR-10 classification.

- Influence of Time Steps. Increasing the number of time steps typically raises energy consumption. For example, the energy usage of IF neurons under Rate Encoding grows between 2 and 6 time steps. Although more time steps can improve classification accuracy in certain cases, they also raise the energy cost. Consequently, applications requiring real-time performance or low power draw may benefit from using fewer time steps, provided accuracy remains acceptable.

- Neuron Models and Encoding Schemes. While simpler neuron models (e.g., IF, LIF) generally show moderate energy consumption, their performance depends heavily on the chosen encoding. For instance, IF neurons with Rate Encoding at 2 time steps are among the lowest-energy configurations, whereas LIF neurons with certain temporal encodings increase overall energy usage but often achieve better accuracy. More advanced neuron models, such as ALIF or AdEx, sometimes yield improved accuracy but do not always minimize energy. Their adaptive dynamics can reduce spiking under certain conditions, yet these benefits vary with the specific encoding and time-step setting.

- Balancing Accuracy and Efficiency. Although some encoding schemes report lower energy consumption, they may simultaneously yield substantially lower accuracy. Hence, choosing an optimal combination of neuron model, encoding scheme, and time steps is essential for achieving an acceptable balance of performance and efficiency. Overall, the results confirm that SNNs maintain lower energy consumption compared to a conventional ANN, even when configured for higher accuracy. This balance between accuracy and energy use makes SNNs appealing for edge devices and other power-sensitive environments.

4.3. Effect of Thresholding and Encoding Schemes on Model Performance and Energy Consumption

4.3.0.2. Impact of threshold values on classification accuracy

4.3.0.3. Impact of threshold values on energy consumption

4.3.0.4. Trade-off between accuracy and energy consumption

4.3.0.5. Comparison with related studies

4.4. Comparative Analysis and Discussion

- Accuracy-critical tasks: prefer ΣΔ neurons with Direct (CIFAR-10) or Rate/ΣΔ encodings (MNIST). Expect higher energy than ultra-sparse choices, but still below ANN.

- Energy-constrained/edge settings: choose IF/LIF with Burst or R-NoM and fewer time steps; tune thresholds to meet power budgets, accepting some accuracy loss.
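The two selection rules above can be expressed as a small helper that filters measured configurations by an accuracy floor or an energy budget. The function `pick_config`, the dictionary keys, and the example entries are hypothetical; the numbers are placeholders in arbitrary units and are not results from this study.

```python
def pick_config(configs, min_accuracy=None, max_energy=None):
    """Select a configuration under an accuracy floor and/or an energy budget.

    With an accuracy floor, return the lowest-energy feasible entry;
    with only an energy budget, return the most accurate feasible entry.
    Returns None when nothing satisfies the constraints.
    """
    feasible = [
        c for c in configs
        if (min_accuracy is None or c["accuracy"] >= min_accuracy)
        and (max_energy is None or c["energy"] <= max_energy)
    ]
    if not feasible:
        return None
    if min_accuracy is not None:
        return min(feasible, key=lambda c: c["energy"])
    return max(feasible, key=lambda c: c["accuracy"])


# Placeholder measurements (arbitrary units), for illustration only.
candidates = [
    {"name": "cfg_a", "accuracy": 0.98, "energy": 5.0},
    {"name": "cfg_b", "accuracy": 0.97, "energy": 2.0},
    {"name": "cfg_c", "accuracy": 0.72, "energy": 0.5},
]
print(pick_config(candidates, min_accuracy=0.97)["name"])  # accuracy-critical
print(pick_config(candidates, max_energy=1.0)["name"])     # energy-constrained
```

In practice the candidate list would be filled from the measured accuracy and energy tables, with one entry per neuron model, encoding, and time-step setting.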

5. Conclusions and Outlook

5.0.0.6. 1) Accuracy–energy trade-off is real but tunable.

- MNIST (FCN): ΣΔ neurons with Rate/ΣΔ encodings reached up to 98.1% (vs. 98.23% ANN) while remaining energetically below the ANN proxy. ALIF/AdEx and even LIF/IF also performed strongly when paired with effective encodings.

- CIFAR-10 (VGG7): ΣΔ neurons with Direct input achieved 83.0% at 2 time steps (vs. 83.6% ANN), indicating that well-chosen neuron/encoding pairs can approach ANN performance even on a deeper, more complex task.

- Encodings that are extremely frugal (e.g., R–NoM) minimized the energy proxy but incurred the largest accuracy drops; conversely, settings that closed the accuracy gap (e.g., ΣΔ with Direct/Rate) used more energy, but still generally less than the ANN reference on our GPU-targeted model.

5.0.0.7. 2) Practical configuration rules.

- Accuracy-critical: Prefer ΣΔ neurons with Direct (CIFAR-10) or with Rate/ΣΔ (MNIST); AdEx/EIF are solid fallbacks. Keep T as low as possible once the accuracy target is met.

- Energy-constrained: Favor simpler neurons (IF/LIF) with Burst or R–NoM encoding and small T; expect some accuracy loss. Intermediate thresholds typically balance activity with correctness better than very low or very high ones.

- General tip: Tune thresholds jointly with the encoding; moderate T and carefully chosen thresholds often deliver the best accuracy-per-joule.

5.0.0.8. 3) Neuromorphic potential.

5.0.0.9. Limitations.

5.0.0.10. Future work.

5.0.0.11. Takeaway.

Author Contributions

Funding

Informed Consent Statement: Not applicable.

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Strubell, E.; Ganesh, A.; McCallum, A. Energy and Policy Considerations for Deep Learning in NLP. arXiv 2019, arXiv:1906.02243.

- Schwartz, R.; Dodge, J.; Smith, N.A.; Etzioni, O. Green AI. arXiv 2020, arXiv:2007.10792.

- Han, S.; Pool, J.; Tran, J.; Dally, W.J. Learning both weights and connections for efficient neural networks. In Proceedings of the Advances in Neural Information Processing Systems, Vol. 28. 2015.

- Shi, Y.; Nguyen, L.; Oh, S.; Liu, X.; Kuzum, D. A soft-pruning method applied during training of spiking neural networks for in-memory computing applications. Frontiers in Neuroscience 2019, 13, 405.

- Hubara, I.; Courbariaux, M.; Soudry, D.; El-Yaniv, R.; Bengio, Y. Quantized neural networks: training neural networks with low precision weights and activations. Journal of Machine Learning Research 2017, 18, 6869–6898.

- Patterson, D.A.; Gonzalez, J.; Le, Q.V.; Liang, P.; Munguia, L.M.; Rothchild, D.; So, D.R.; Texier, M.; Dean, J. Carbon emissions and large neural network training. arXiv 2021, arXiv:2104.10350.

- Paschek, S.; Förster, F.; Kipfmüller, M.; Heizmann, M. Probabilistic Estimation of Parameters for Lubrication Application with Neural Networks. Eng 2024, 5, 2428–2440.

- Nashed, S.; Moghanloo, R. Replacing Gauges with Algorithms: Predicting Bottomhole Pressure in Hydraulic Fracturing Using Advanced Machine Learning. Eng 2025, 6, 73.

- Sumon, R.I.; Ali, H.; Akter, S.; Uddin, S.M.I.; Mozumder, M.A.I.; Kim, H.C. A Deep Learning-Based Approach for Precise Emotion Recognition in Domestic Animals Using EfficientNetB5 Architecture. Eng 2025, 6, 9.

- Maass, W. Networks of spiking neurons: the third generation of neural network models. Neural Networks 1997, 10, 1659–1671.

- Adrian, E.D.; Zotterman, Y. The impulses produced by sensory nerve endings: Part 3. impulses set up by touch and pressure. The Journal of Physiology 1926, 61, 465–483.

- Rueckauer, B.; Liu, S.C. Conversion of analog to spiking neural networks using sparse temporal coding. In Proceedings of the IEEE International Symposium on Circuits and Systems (ISCAS), 2018, Vol.

- Park, S.; Kim, S.; Choe, H.; Yoon, S. Fast and Efficient Information Transmission with Burst Spikes in Deep Spiking Neural Networks. In Proceedings of the 56th Annual Design Automation Conference (DAC ’19); 2019.

- Gollisch, T.; Meister, M. Rapid neural coding in the retina with relative spike latencies. Science 2008, 319, 1108–1111.

- Yamazaki, K.; Vo-Ho, V.K.; Bulsara, D.; Le, N. Spiking neural networks and their applications: a review. Brain Sciences 2022, 12, 863.

- Neftci, E.O.; Mostafa, H.; Zenke, F. Surrogate gradient learning in spiking neural networks: Bringing the power of gradient-based optimization to spiking neural networks. IEEE Signal Processing Magazine 2019, 36, 51–63.

- Lee, J.; Delbrück, T.; Pfeiffer, M. Enabling Gradient-Based Learning in Spiking Neural Networks with Surrogate Gradients. Frontiers in Neuroscience 2020, 14, 123.

- Zhou, C.; Zhang, H.; Yu, L.; Ye, Y.; Zhou, Z.; Huang, L.; Tian, Y. Direct training high-performance deep spiking neural networks: a review of theories and methods. arXiv 2024, arXiv:2405.04289.

- Auge, D.; Hille, J.; Mueller, E.; Knoll, A. A survey of encoding techniques for signal processing in spiking neural networks. Neural Processing Letters 2021, 53, 4693–4710.

- Zhou, X.; et al. DeepSNN: A Comprehensive Survey on Deep Spiking Neural Networks. Frontiers in Neuroscience 2020, 14, 456.

- Nguyen, D.A.; Tran, X.T.; Iacopi, F. A review of algorithms and hardware implementations for spiking neural networks. Journal of Low Power Electronics and Applications 2021, 11, 23.

- Dora, S.; Kasabov, N. Spiking neural networks for computational intelligence: an overview. Big Data and Cognitive Computing 2021, 5, 67.

- Pietrzak, P.; Szczęsny, S.; Huderek, D.; Przyborowski, Ł. Overview of spiking neural network learning approaches and their computational complexities. Sensors 2023, 23, 3037.

- Thorpe, S.; Gautrais, J. Rank order coding. In Computational Neuroscience: Trends in Research, 1998; Springer US: Boston, MA, 1998; pp. 113–118.

- Paugam-Moisy, H. Spiking Neuron Networks: A Survey. Technical report, EPFL-REPORT-83371, 2006.

- Davies, M.; Wild, A.; Orchard, G.; Sandamirskaya, Y.; Guerra, G.A.F.; Joshi, P.; Risbud, S.R. Advancing neuromorphic computing with Loihi: a survey of results and outlook. Proceedings of the IEEE 2021, Vol.

- Paul, P.; Sosik, P.; Ciencialova, L. A survey on learning models of spiking neural membrane systems and spiking neural networks. arXiv 2024, arXiv:2403.18609.

- Rathi, N.; Chakraborty, I.; Kosta, A.; Sengupta, A.; Ankit, A.; Panda, P.; Roy, K. Exploring neuromorphic computing based on spiking neural networks: algorithms to hardware. ACM Computing Surveys 2023, 55, 1–49.

- Dampfhoffer, M.; Mesquida, T.; Valentian, A.; Anghel, L. Backpropagation-based learning techniques for deep spiking neural networks: a survey. IEEE Transactions on Neural Networks and Learning Systems 2023.

- Nunes, J.D.; Carvalho, M.; Carneiro, D.; Cardoso, J.S. Spiking neural networks: a survey. IEEE Access 2022, 10, 60738–60764.

- Schliebs, S.; Kasabov, N. Evolving spiking neural network—a survey. Evolving Systems 2013, 4, 87–98.

- Martinez, F.S.; Casas-Roma, J.; Subirats, L.; Parada, R. Spiking neural networks for autonomous driving: A review. Engineering Applications of Artificial Intelligence 2023.

- Wu, J.; Wang, Y.; Li, Z.; Lu, L.; Li, Q. A review of computing with spiking neural networks. Computers, Materials & Continua 2024, 78.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

- Dayan, P.; Abbott, L.F. Theoretical neuroscience: computational and mathematical modeling of neural systems; MIT Press, 2005.

- Nielsen, M.A. Neural networks and deep learning; Vol. 25, Determination Press: San Francisco, CA, USA, 2015; pp. 15–24.

- Diehl, P.U.; Cook, M. Unsupervised learning of digit recognition using spike-timing-dependent plasticity. Frontiers in Computational Neuroscience 2015, 9, 99.

- Gerstner, W.; Kistler, W.M. Spiking neuron models: single neurons, populations, plasticity; Cambridge University Press: Cambridge, U.K, 2002.

- Izhikevich, E.M. Which model to use for cortical spiking neurons? IEEE Transactions on Neural Networks 2004, 15, 1063–1070.

- Horowitz, M. Computing’s energy problem (and what we can do about it). In 2014 IEEE International Solid-State Circuits Conference (ISSCC) Digest of Technical Papers; 2014.

- Sze, V.; Chen, Y.H.; Emer, J.S.; Suleiman, A.; Zhang, Z. How to evaluate deep neural network processors: the good, the bad and the ugly. Proceedings of the IEEE 2020, Vol.

- Davies, M.; Srinivasa, N.; Lin, T.H.; Chinya, G.; Cao, Y.; Choday, S.H.; Dimou, G.; Joshi, P.; Imam, N.; Jain, S.; et al. Loihi: a neuromorphic manycore processor with on-chip learning. IEEE Micro 2018, 38, 82–99. [Google Scholar] [CrossRef]

- Akopyan, F.; Sawada, J.; Cassidy, A.; Alvarez-Icaza, R.; Arthur, J.; Merolla, P.; Imam, N.; Nakamura, Y.; Datta, P.; Nam, G.J.; et al. Truenorth: design and tool flow of a 65 mw 1 million neuron programmable neurosynaptic chip. IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems 2015, 34, 1537–1557. [Google Scholar] [CrossRef]

- Furber, S.B.; Galluppi, F.; Temple, S.; Plana, L.A. The SpiNNaker project. In Proceedings of the IEEE, 2014. [CrossRef]

- Roy, K.; Jaiswal, A.; Panda, P. Towards spike-based machine intelligence with neuromorphic computing. Nature 2019, 575, 607–617. [Google Scholar] [CrossRef] [PubMed]

- Plank, J.S.; Rizzo, C.P.; Gullett, B.; Dent, K.E.M.; Schuman, C.D. Alleviating the Communication Bottleneck in Neuromorphic Computing with Custom-Designed Spiking Neural Networks. Journal of Low Power Electronics and Applications 2025, 15, 50. [Google Scholar] [CrossRef]

- Wang, X.; Zhu, Y.; Zhou, Z.; Chen, X.; Jia, X. Memristor-Based Spiking Neuromorphic Systems Toward Brain-Inspired Perception and Computing. Nanomaterials 2025, 15, 1130. [Google Scholar] [CrossRef]

- Panda, P.; Aketi, S.A.; Roy, K. Toward scalable, efficient, and accurate deep spiking neural networks with backward residual connections, stochastic softmax, and hybridization. Frontiers in Neuroscience 2020, 14, 653. [Google Scholar] [CrossRef]

- Lemaire, Q.; Cordone, L.; Castagnetti, A.; Novac, P.E.; Courtois, J.; Miramond, B. An Analytical Estimation of Spiking Neural Networks Energy Efficiency. In Proceedings of the International Conference on Artificial Neural Networks (ICANN). Springer; 2023; pp. 585–597. [Google Scholar] [CrossRef]

- Shen, Y.; et al. Evolutionary Algorithms for Optimizing Spiking Neural Networks. Frontiers in Neuroscience 2024, 16, 1011. [Google Scholar] [CrossRef]

- Bi, G.Q.; Poo, M.M. Synaptic modifications in cultured hippocampal neurons: dependence on spike timing, synaptic strength, and postsynaptic cell type. Journal of Neuroscience 1998, 18, 10464–10472. [Google Scholar] [CrossRef] [PubMed]

- Mozafari, M.; Ganjtabesh, M.; Nowzari-Dalini, A.; Thorpe, S.J.; Masquelier, T. Bio-inspired digit recognition using reward-modulated spike-timing-dependent plasticity in deep convolutional networks. Pattern Recognition 2019, 94, 87–95. [Google Scholar] [CrossRef]

- Amir, A.; Taba, B.; Berg, D.; Melano, T.; McKinstry, J.; Nolfo, C.D.; Nayak, T.; Andreopoulos, A.; Garreau, G.; Mendoza, M.; et al. A Low Power, Fully Event-Based Gesture Recognition System. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2017. [CrossRef]

- Massa, R.; Marchisio, A.; Martina, M.; Shafique, M. An Efficient Spiking Neural Network for Recognizing Gestures with a DVS Camera on the Loihi Neuromorphic Processor. arXiv 2020, arXiv:2006.09985. [CrossRef]

- Ma, S.; Pei, J.; Zhang, W.; Wang, G.; Feng, D.; Yu, F.; et al. Neuromorphic Computing Chip with Spatiotemporal Elasticity for Multi-Intelligent-Tasking Robots. Science Robotics 2022, 7, eabk2948. [Google Scholar] [CrossRef] [PubMed]

- Stewart, K.; Orchard, G.; Shrestha, S.B.; Neftci, E. Online Few-Shot Gesture Learning on a Neuromorphic Processor. arXiv 2020, arXiv:2008.01151. [CrossRef]

- Bartolozzi, C.; Indiveri, G.; Donati, E. Embodied Neuromorphic Intelligence. Nature Communications 2022, 13, 1–14. [Google Scholar] [CrossRef]

- Leitão, D.; Cunha, R.; Lemos, J.M. Adaptive Control of Quadrotors in Uncertain Environments. Eng 2024, 5, 544–561. [Google Scholar] [CrossRef]

- Velarde-Gomez, S.; Giraldo, E. Nonlinear Control of a Permanent Magnet Synchronous Motor Based on State Space Neural Network Model Identification and State Estimation by Using a Robust Unscented Kalman Filter. Eng 2025, 6, 30. [Google Scholar] [CrossRef]

- Tan, C.; Šarlija, M.; Kasabov, N. NeuroSense: Short-Term Emotion Recognition and Understanding Based on Spiking Neural Network Modelling of Spatio-Temporal EEG Patterns. Neurocomputing 2021, 434, 137–148. [Google Scholar] [CrossRef]

- Yang, G.; Kang, Y.; Charlton, P.H.; Kyriacou, P.A.; Kim, K.K.; Li, L.; Park, C. Energy-Efficient PPG-Based Respiratory Rate Estimation Using Spiking Neural Networks. Sensors 2024, 24, 3980. [Google Scholar] [CrossRef]

- Kumar, N.; Tang, G.; Yoo, R.; Michmizos, K.P. Decoding EEG With Spiking Neural Networks on Neuromorphic Hardware. Transactions on Machine Learning Research 2022 June. [Google Scholar]

- Garcia-Palencia, O.; Fernandez, J.; Shim, V.; Kasabov, N.K.; Wang, A.; the Alzheimer’s Disease Neuroimaging Initiative. Spiking Neural Networks for Multimodal Neuroimaging: A Comprehensive Review of Current Trends and the NeuCube Brain-Inspired Architecture. Bioengineering 2025, 12, 628. [Google Scholar] [CrossRef]

- Ayasi, B.; Vázquez, I.X.; Saleh, M.; et al. Application of Spiking Neural Networks and Traditional Artificial Neural Networks for Solar Radiation Forecasting in Photovoltaic Systems in Arab Countries. Neural Computation and Applications 2025, 37, 9095–9127. [Google Scholar] [CrossRef]

- Sopeña, J.M.G.; Pakrashi, V.; Ghosh, B. A Spiking Neural Network Based Wind Power Forecasting Model for Neuromorphic Devices. Energies 2022, 15, 7256. [Google Scholar] [CrossRef]

- Thangaraj, V.K.; Nachimuthu, D.S.; Francis, V.A.R. Wind Speed Forecasting at Wind Farm Locations with a Unique Hybrid PSO-ALO Based Modified Spiking Neural Network. Energy Systems 2023, 16, 713–741. [Google Scholar] [CrossRef]

- AbouHassan, I.; Kasabov, N.; Bankar, T.; Garg, R.; Sen Bhattacharya, B. PAMeT-SNN: Predictive Associative Memory for Multiple Time Series based on Spiking Neural Networks with Case Studies in Economics and Finance, 2023, [24063975.v1]. [CrossRef]

- Joseph, G.V.; Pakrashi, V. Spiking Neural Networks for Structural Health Monitoring. Sensors 2022, 22, 9245. [Google Scholar] [CrossRef]

- Reid, D.; Hussain, A.J.; Tawfik, H. Financial Time Series Prediction Using Spiking Neural Networks. PLOS ONE 2014, 9, e103656. [Google Scholar] [CrossRef]

- Du, X.; Tong, W.; Jiang, L.; Yu, D.; Wu, Z.; Duan, Q.; Deng, S. SNN-IoT: Efficient Partitioning and Enabling of Deep Spiking Neural Networks in IoT Services. IEEE Transactions on Services Computing. [CrossRef]

- Li, H.; Tu, B.; Liu, B.; Li, J.; Plaza, A. Adaptive Feature Self-Attention in Spiking Neural Networks for Hyperspectral Classification. IEEE Transactions on Geoscience and Remote Sensing 2025, 63, 1–15. [Google Scholar] [CrossRef]

- Chunduri, R.K.; Perera, D.G. Neuromorphic Sentiment Analysis Using Spiking Neural Networks. Sensors 2023, 23, 7701. [Google Scholar] [CrossRef]

- Schuman, C.D.; Plank, J.; Bruer, G.; Anantharaj, J. Non-Traditional Input Encoding Schemes for Spiking Neuromorphic Systems. In Proceedings of the IEEE International Joint Conference on Neural Networks (IJCNN). IEEE; 2019. [Google Scholar]

- Datta, G.; Liu, Z.; Abdullah-Al Kaiser, M.; Kundu, S.; Mathai, J.; Yin, Z.; et al. In-Sensor & Neuromorphic Computing Are all You Need for Energy Efficient Computer Vision. In Proceedings of the ICASSP 2023-2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE; 2023; pp. 1–5. [Google Scholar]

- Guo, W.; Fouda, M.E.; Eltawil, A.M.; Salama, K.N. Neural coding in spiking neural networks: a comparative study for robust neuromorphic systems. Frontiers in Neuroscience 2021, 15, 638474. [Google Scholar] [CrossRef]

- Mostafa, H. Supervised learning based on temporal coding in spiking neural networks. IEEE Transactions on Neural Networks and Learning Systems 2017, 29, 1–9. [Google Scholar] [CrossRef] [PubMed]

- Sakemi, Y.; Morino, K.; Morie, T.; Aihara, K. A supervised learning algorithm for multilayer spiking neural networks based on temporal coding toward energy-efficient VLSI processor design. IEEE Transactions on Neural Networks and Learning Systems 2021, 34, 394–408. [Google Scholar] [CrossRef]

- Kim, Y.; Park, H.; Moitra, A.; Bhattacharjee, A.; Venkatesha, Y.; Panda, P. Rate Coding or Direct Coding: Which One is Better for Accurate, Robust, and Energy-Efficient Spiking Neural Networks? In Proceedings of the ICASSP 2022-2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE; 2022; pp. 71–75. [Google Scholar] [CrossRef]

- Zhou, S.; Li, X.; Chen, Y.; Chandrasekaran, S.T.; Sanyal, A. Temporal-coded deep spiking neural network with easy training and robust performance. In Proceedings of the AAAI Conference on Artificial Intelligence. [CrossRef]

- Gautrais, J.; Thorpe, S. Rate coding versus temporal order coding: a theoretical approach. Biosystems 1998, 48, 57–65. [Google Scholar] [CrossRef]

- Duarte, R.C.; Uhlmann, M.; Van Den Broek, D.V.; Fitz, H.; Petersson, K.; Morrison, A. Encoding symbolic sequences with spiking neural reservoirs. In Proceedings of the IEEE International Joint Conference on Neural Networks (IJCNN). IEEE; 2018. [Google Scholar]

- Bonilla, L.; Gautrais, J.; Thorpe, S.; Masquelier, T. Analyzing time-to-first-spike coding schemes: A theoretical approach. Frontiers in Neuroscience 2022, 16, 971937. [Google Scholar] [CrossRef]

- Averbeck, B.B.; Latham, P.E.; Pouget, A. Neural correlations, population coding and computation. Nature Reviews Neuroscience 2006, 7, 358–366. [Google Scholar] [CrossRef] [PubMed]

- Pan, Z.; Wu, J.; Zhang, M.; Li, H.; Chua, Y. Neural population coding for effective temporal classification. In Proceedings of the 2019 International Joint Conference on Neural Networks (IJCNN). IEEE; 2019; pp. 1–8. [Google Scholar]

- Cheung, K.F.; Tang, P.Y. Sigma-delta modulation neural networks. In Proceedings of the IEEE International Conference on Neural Networks. IEEE; 1993; pp. 489–493. [Google Scholar]

- Yousefzadeh, A.; Hosseini, S.; Holanda, P.; Leroux, S.; Werner, T.; Serrano-Gotarredona, T.; Simoens, P. Conversion of synchronous artificial neural network to asynchronous spiking neural network using sigma-delta quantization. In Proceedings of the 2019 IEEE International Conference on Artificial Intelligence Circuits and Systems (AICAS). IEEE; 2019; pp. 81–85. [Google Scholar]

- Nasrollahi, S.A.; Syutkin, A.; Cowan, G. Input-Layer Neuron Implementation Using Delta-Sigma Modulators. In Proceedings of the 2022 20th IEEE Interregional NEWCAS Conference (NEWCAS). IEEE; 2022; pp. 533–537. [Google Scholar]

- Nair, M.V.; Indiveri, G. An ultra-low power sigma-delta neuron circuit. In Proceedings of the 2019 IEEE International Symposium on Circuits and Systems (ISCAS). IEEE; 2019; pp. 1–5. [Google Scholar]

- Izhikevich, E.M. Simple model of spiking neurons. IEEE Transactions on Neural Networks 2003, 14, 1569–1572. [Google Scholar] [CrossRef]

- Eyherabide, H.G.; Rokem, A.; Herz, A.V.; Samengo, I. Bursts generate a non-reducible spike-pattern code. Frontiers in Neuroscience 2009, 3, 490. [Google Scholar] [CrossRef]

- O’Keefe, J.; Recce, M.L. Phase relationship between hippocampal place units and the EEG theta rhythm. Hippocampus 1993, 3, 317–330. [Google Scholar] [CrossRef] [PubMed]

- Montemurro, M.A.; Rasch, M.J.; Murayama, Y.; Logothetis, N.K.; Panzeri, S. Phase-of-firing coding of natural visual stimuli in primary visual cortex. Current Biology 2008, 18, 375–380. [Google Scholar] [CrossRef]

- Masquelier, T.; Hugues, E.; Deco, G.; Thorpe, S.J. Oscillations, Phase-of-Firing Coding, and Spike Timing-Dependent Plasticity: An Efficient Learning Scheme. Journal of Neuroscience 2009, 29, 13484–13493. [Google Scholar] [CrossRef]

- Wang, Z.; Yu, N.; Liao, Y. Activeness: A Novel Neural Coding Scheme Integrating the Spike Rate and Temporal Information in the Spiking Neural Network. Electronics 2023, 12, 3992. [Google Scholar] [CrossRef]

- Qiu, X.; Zhu, R.J.; Chou, Y.; Wang, Z.; Deng, L.J.; Li, G. Gated attention coding for training high-performance and efficient spiking neural networks. In Proceedings of the AAAI Conference on Artificial Intelligence, 2024.

- Paugam-Moisy, H.; Bohte, S.M. Computing with Spiking Neuron Networks. In Handbook of Natural Computing; Rozenberg, G.; Back, T.; Kok, J., Eds.; Springer-Verlag, 2012; pp. 335–376. [CrossRef]

- Hodgkin, A.L.; Huxley, A.F. A quantitative description of membrane current and its application to conduction and excitation in nerve. The Journal of Physiology 1952, 117, 500. [Google Scholar] [CrossRef]

- Brette, R.; Rudolph, M.; Carnevale, T.; Hines, M.; Beeman, D.; Bower, J.M.; et al. Simulation of networks of spiking neurons: a review of tools and strategies. Journal of Computational Neuroscience 2007, 23, 349–398. [Google Scholar] [CrossRef]

- Indiveri, G.; Liu, S.C. Neuromorphic VLSI circuits for spike-based computation. Proceedings of the IEEE 2011, 99, 2414–2435. [Google Scholar]

- Gerstner, W.; van Hemmen, J.L. Why spikes? Hebbian learning and retrieval of time-resolved excitation patterns. Biological Cybernetics 1993, 69, 503–515. [Google Scholar] [CrossRef] [PubMed]

- Lapicque, L. Recherches quantitatives sur l’excitation électrique des nerfs traitée comme une polarisation. Journal de Physiologie et de Pathologie Générale 1907, 9, 620–635. [Google Scholar]

- Dayan, P.; Abbott, L.F. Theoretical Neuroscience: Computational and Mathematical Modeling of Neural Systems; MIT Press, 2001.

- Burkitt, A.N. A review of the integrate-and-fire neuron model: I. Homogeneous synaptic input. Biological Cybernetics 2006, 95, 1–19. [Google Scholar] [CrossRef] [PubMed]

- Brunel, N.; Van Rossum, M.C.W. Lapicque’s 1907 paper: from frogs to integrate-and-fire. Biological Cybernetics 2007, 97, 337–339. [Google Scholar] [CrossRef]

- Benda, J.; Herz, A.V.M. A universal model for spike-frequency adaptation. Neural Computation 2003, 15, 2523–2564. [Google Scholar] [CrossRef]

- Fourcaud-Trocmé, N.; Hansel, D.; Van Vreeswijk, C.; Brunel, N. How spike generation mechanisms determine the neuronal response to fluctuating inputs. Journal of Neuroscience 2003, 23, 11628–11640. [Google Scholar] [CrossRef]

- Gerstner, W.; Brette, R. Adaptive exponential integrate-and-fire model. Scholarpedia 2009, 4, 8427. [Google Scholar] [CrossRef]

- Makhlooghpour, A.; Soleimani, H.; Ahmadi, A.; Zwolinski, M.; Saif, M. High Accuracy Implementation of Adaptive Exponential Integrated and Fire Neuron Model. In Proceedings of the 2016 IEEE International Joint Conference on Neural Networks (IJCNN). IEEE; 2016; pp. 192–197. [Google Scholar] [CrossRef]

- Haghiri, S.; Ahmadi, A. A Novel Digital Realization of AdEx Neuron Model. IEEE Transactions on Circuits and Systems II: Express Briefs 2020, 67, 1444–1451. [Google Scholar] [CrossRef]

- Ahmadi, A.; Zwolinski, M. A Modified Izhikevich Model For Circuit Implementation of Spiking Neural Networks. In Proceedings of the IEEE International Symposium on Circuits and Systems; 2010. [Google Scholar]

- Izhikevich, E.M. Resonate-and-fire neurons. Neural networks 2001, 14, 883–894. [Google Scholar] [CrossRef]

- Higuchi, S.; Takemoto, K.; Hasegawa, T.; Kanzaki, R. Balanced Resonate-and-Fire Neuron Model for Efficient Recurrent Spiking Neural Networks. Journal of Neural Engineering 2024, XX, XX–XX. https://doi.org/XX.XXXX/XXXXXX.

- Lehmann, H.M.; Hille, J.; Grassmann, C.; Issakov, V. Direct Signal Encoding with Analog Resonate-and-Fire Neurons. IEEE Access 2023, 11, 71985–71995. [Google Scholar] [CrossRef]

- Leigh, A.J.; Heidarpur, M.; Mirhassani, M. A Resource-Efficient and High-Accuracy CORDIC-Based Digital Implementation of the Hodgkin–Huxley Neuron. IEEE Transactions on Very Large Scale Integration (VLSI) Systems.

- Devi, M.; Choudhary, D.; Garg, A.R. Information Processing in Extended Hodgkin-Huxley Neuron Model. In Proceedings of the 2020 3rd International Conference on Emerging Technologies in Computer Engineering: Machine Learning and Internet of Things (ICETCE). IEEE; 2020; pp. 176–180. [Google Scholar]

- Intel Neuromorphic Computing Lab. Lava: A Software Framework for Neuromorphic Computing, 2021. [Online]. Available: http://lava-nc.org and https://github.com/lava-nc/lava.

- Zambrano, D.; Bohté, S.M. Fast and efficient asynchronous neural computation with adapting spiking neural networks. arXiv 2016, arXiv:1609.02053.

- Wu, Y.; Deng, L.; Li, G.; Zhu, J.; Shi, L. Spatio-Temporal Backpropagation for Training High-Performance Spiking Neural Networks. Frontiers in Neuroscience 2018, 12, 331. [Google Scholar] [CrossRef] [PubMed]

- Lee, J.H.; Delbruck, T.; Pfeiffer, M. Training deep spiking neural networks using backpropagation. Frontiers in Neuroscience 2016, 10, 508. [Google Scholar] [CrossRef]

- Shrestha, S.B.; Orchard, G. SLAYER: Spike Layer Error Reassignment in Time. In Proceedings of the Advances in Neural Information Processing Systems (NIPS), Vol. 31. 2018. [Google Scholar]

- Lian, S.; Shen, J.; Liu, Q.; Wang, Z.; Yan, R.; Tang, H. Learnable Surrogate Gradient for Direct Training Spiking Neural Networks. In Proceedings of the Thirty-second International Joint Conference on Artificial Intelligence (IJCAI-23), 2023. [CrossRef]

- Sjöström, J.; Gerstner, W. Spike-timing dependent plasticity. Scholarpedia 2010, 5, 1362. [Google Scholar] [CrossRef]

- Bohte, S.; Kok, J.; Poutré, J. SpikeProp: Backpropagation for Networks of Spiking Neurons. In Proceedings of the 8th European Symposium on Artificial Neural Networks, ESANN 2000, Bruges, Belgium, 26–28 April 2000. [Google Scholar]

- Zenke, F.; Ganguli, S. Superspike: Supervised learning in multilayer spiking neural networks. Neural Computation 2018, 30, 1514–1541. [Google Scholar] [CrossRef] [PubMed]

- Wunderlich, T.C.; Pehle, C. Event-based backpropagation can compute exact gradients for spiking neural networks. Scientific Reports 2021, 11, 12829. [Google Scholar] [CrossRef]

- Gautam, A.; Kohno, T. Adaptive STDP-Based On-Chip Spike Pattern Detection. Frontiers in Neuroscience 2023, 17, 1203956. [Google Scholar] [CrossRef]

- Li, S. aSTDP: a more biologically plausible learning. arXiv 2022, arXiv:2206.14137.

- Paredes-Vallès, F.; Scheper, K.Y.; Croon, G.C.D. Unsupervised Learning of a Hierarchical Spiking Neural Network for Optical Flow Estimation: From Events to Global Motion Perception. IEEE Transactions on Pattern Analysis and Machine Intelligence 2020, 42, 2051–2064. [Google Scholar] [CrossRef]

- Caporale, N.; Dan, Y. Spike Timing–Dependent Plasticity: A Hebbian Learning Rule. Annual Review of Neuroscience 2008, 31, 25–46. [Google Scholar] [CrossRef]

- Ponulak, F. ReSuMe-new supervised learning method for spiking neural networks. Technical report, Institute of Control and Information Engineering, Poznań University of Technology, 2005.

- Bellec, G.; Scherr, F.; Hajek, E.; Salaj, D.; Legenstein, R.; Maass, W. Biologically inspired alternatives to backpropagation through time for learning in recurrent neural nets. arXiv 2019, arXiv:1901.09049.

- Liu, F.; Zhao, W.; Chen, Y.; Wang, Z.; Yang, T.; Jiang, L. SSTDP: Supervised Spike Timing Dependent Plasticity for Efficient Spiking Neural Network Training. Frontiers in Neuroscience 2021, 15, 756876. [Google Scholar] [CrossRef]

- Diehl, P.U.; Neil, D.; Binas, J.; Cook, M.; Liu, S.C.; Pfeiffer, M. Fast-classifying, high-accuracy spiking deep networks through weight and threshold balancing. In Proceedings of the 2015 International Joint Conference on Neural Networks (IJCNN), 2015. [CrossRef]

- Rueckauer, B.; Lungu, I.A.; Hu, Y.; Pfeiffer, M.; Liu, S.C. Conversion of continuous-valued deep networks to efficient event-driven networks for image classification. Frontiers in Neuroscience 2017, 11, 682. [Google Scholar] [CrossRef]

- Cao, Y.; Chen, Y.; Khosla, D. Spiking deep convolutional neural networks for energy-efficient object recognition. International Journal of Computer Vision 2015, 113, 54–66. [Google Scholar] [CrossRef]

- Hunsberger, E.; Eliasmith, C. arXiv 2015, arXiv:1510.08829 [cs.NE]. [CrossRef]

- Han, B.; Srinivasan, G.; Roy, K. RMP-SNN: Residual Membrane Potential Neuron for Enabling Deeper High-Accuracy and Low-Latency Spiking Neural Network. In Proceedings of the 38th IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, Utah, June 2018. [Google Scholar]

- Bu, T.; Fang, W.; Ding, J.; Dai, P.; Yu, Z.; Huang, T. Optimal ANN-SNN Conversion for High-accuracy and Ultra-low-latency Spiking Neural Networks. In Proceedings of the 10th International Conference on Learning Representations (ICLR), Virtual Conference, April 2022. [Google Scholar]

- LeCun, Y.; Cortes, C.; Burges, C.J. The MNIST database of handwritten digits. Technical report, AT&T Labs, 1998.

- Krizhevsky, A.; Hinton, G. Learning multiple layers of features from tiny images. Technical Report UTML TR 2009, University of Toronto, 2009. [Google Scholar]

- Gaurav, R.; Tripp, B.; Narayan, A. Spiking Approximations of the MaxPooling Operation in Deep SNNs. arXiv 2022, arXiv:2205.07076. [CrossRef]

- Ponulak, F.; Kasinski, A. Introduction to spiking neural networks: information processing, learning and applications. Acta Neurobiologiae Experimentalis 2011, 71, 409–433. [Google Scholar] [CrossRef]

- Xin, J.; Embrechts, M.J. Supervised learning with spiking neural networks. In Proceedings of the IJCNN’01. International Joint Conference on Neural Networks. Proceedings (Cat. No.01CH37222), Vol. 3; 2001; pp. 1772–1777. [Google Scholar]

- Ponulak, F.; Kasiński, A. Supervised learning in spiking neural networks with ReSuMe: sequence learning, classification, and spike shifting. Neural Computation 2010, 22, 467–510. [Google Scholar] [CrossRef]

- Xu, Y.; Zeng, X.; Zhong, S. A New Supervised Learning Algorithm for Spiking Neurons. Neural Computation 2013, 25, 1472–1511. [Google Scholar] [CrossRef] [PubMed]

- Xu, Y.; Zeng, X.; Han, L.; Yang, J. A supervised multi-spike learning algorithm based on gradient descent for spiking neural networks. Neural Networks 2013, 43, 99–113. [Google Scholar] [CrossRef] [PubMed]

- Ahmed, F.Y.; Shamsuddin, S.M.; Hashim, S.Z.M. Improved spikeprop for using particle swarm optimization. Mathematical Problems in Engineering 2013, 2013, 257085. [Google Scholar] [CrossRef]

- Yu, Q.; Tang, H.; Tan, K.C.; Yu, H. A brain-inspired spiking neural network model with temporal encoding and learning. Neurocomputing 2014, 138, 3–13. [Google Scholar] [CrossRef]

- Huh, D.; Sejnowski, T.J. Gradient descent for spiking neural networks. In Proceedings of the Advances in Neural Information Processing Systems, Vol. 31. 2018. [Google Scholar]

- Datta, G.; Kundu, S.; Beerel, P.A. Training energy-efficient deep spiking neural networks with single-spike hybrid input encoding. In Proceedings of the 2021 International Joint Conference on Neural Networks (IJCNN). IEEE; 2021; pp. 1–8. [Google Scholar]

- Zheng, H.; Wu, Y.; Deng, L.; Hu, Y.; Li, G. Going deeper with directly-trained larger spiking neural networks. In Proceedings of the AAAI Conference on Artificial Intelligence, 2021, Vol. 35.

- Shi, X.; Hao, Z.; Yu, Z. networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024.

- Zhou, C.; Zhang, H.; Zhou, Z.; Yu, L.; Huang, L.; Fan, X.; et al. Qkformer: Hierarchical spiking transformer using qk attention. arXiv 2024, arXiv:2403.16552.

- Sorbaro, M.; Liu, Q.; Bortone, M.; Sheik, S. Optimizing the Energy Consumption of Spiking Neural Networks for Neuromorphic Applications. Frontiers in Neuroscience 2020, 14, 516916. [Google Scholar] [CrossRef] [PubMed]

- Sengupta, A.; Ye, Y.; Wang, R.; Liu, C.; Roy, K. Going deeper in spiking neural networks: VGG and residual architectures. Frontiers in Neuroscience 2019, 13, 95. [Google Scholar] [CrossRef] [PubMed]

- Kucik, A.S.; Meoni, G. Investigating spiking neural networks for energy-efficient on-board AI applications: a case study in land cover and land use classification. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021.

- Applied Brain Research. KerasSpiking - estimating model energy. https://www.nengo.ai/keras-spiking/examples/model-energy.html, 2024. Last accessed on 12/04/2024.

- Fang, W.; Chen, Y.; Ding, J.; Yu, Z.; Masquelier, T.; Chen, D.; Yu, Z.; Zhou, H.; Tian, Y. Spikingjelly: an open-source machine learning infrastructure platform for spike-based intelligence. Science Advances 2023, 9, eadi1480. [Google Scholar] [CrossRef] [PubMed]

- Pehle, C.G.; Pedersen, J.E. Norse—a deep learning library for spiking neural networks, 2021. [CrossRef]

- Ali, H.A.H.; Dabbous, A.; Ibrahim, A.; Valle, M. Assessment of Recurrent Spiking Neural Networks on Neuromorphic Accelerators for Naturalistic Texture Classification. In Proceedings of the 2023 18th Conference on Ph.D. Research in Microelectronics and Electronics (PRIME), June 2023. [Google Scholar]

- Herbozo Contreras, L.F.; Huang, Z.; Yu, L.; Nikpour, A.; Kavehei, O. Biological plausible algorithm for seizure detection: Toward AI-enabled electroceuticals at the edge. APL Machine Learning 2024, 2. [Google Scholar] [CrossRef]

- Takaghaj, S.M.; Sampson, J. arXiv 2024, arXiv:2407.19566 [cs.ET]. License: CC BY-NC-ND 4.0.

| Encoding Type | Main Applications | Complexity | Biological Plausibility | Advantages | Challenges |
|---|---|---|---|---|---|
| Rate Coding [38,75,78] | Image & signal processing; ANN-to-SNN conversion; resource-constrained inference | Low | High | Simple implementation; noise/adversarial robustness; hardware-friendly mapping from activations | Loses fine temporal structure; window-length/latency sensitivity; can require high spike counts that raise energy [80] |
| Direct Input Encoding [73,74,76,78,81] | Deep vision and real-time pipelines with large datasets; accuracy/latency–critical use | Moderate–High | Low | Fewer timesteps; preserves input fidelity; simplifies front end; fast inference | Not event driven; multi-bit input raises compute/energy; lower biological realism |
| Temporal Coding [14,24,38,76,82] | Rapid sensory processing; real-time decisions; fine temporal discrimination/patterns | High | High | High information per spike; low-latency responses; potentially energy efficient with sparse spiking | Sensitive to jitter/noise; complex decoding; training with precise timings is challenging |
| Population Coding [83,84] | Speech/audio; noisy environments; improving separability with simple classifiers | High | High | Noise robustness via redundancy; improved linear separability | More neurons increase energy; decoding large populations adds computational overhead |
| Sigma–Delta Encoding [74,78,85,86,87,88] | Dynamic signals (wearables, biomedical, streaming sensors); energy-aware neuromorphic platforms | Moderate–High | Moderate | Encodes changes (fewer spikes) with good fidelity; noise shaping; strong energy savings | Requires feedback-loop/circuit tuning; trade-offs among fidelity, latency, and energy |
| Burst Coding [13,75,89,90] | Biologically realistic simulations; temporally complex signals; long-activity tasks | Moderate–High | High | Rapid information transfer in spike packets; can be energy saving when bursts are well managed | Synchronizing bursts complicates decoding; scalability and parameter tuning on hardware |
| PoFC [87,91,92,93] | Spatial navigation/sensory processing with oscillations; high-fidelity temporal representation | High | High | Dense information per spike via phase; strong discriminability; potential spike-count/energy reduction | Requires precise global phase reference; sensitive to timing noise; complex decoding and STDP integration |
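
To make the rate and temporal (TTFS) rows of the table above concrete, the following minimal sketch encodes one normalized intensity in [0, 1] both ways. The function names, the Bernoulli sampling scheme, and the linear intensity-to-latency map are illustrative assumptions and are not taken from the benchmark code.

```python
import random


def rate_encode(x, num_steps, rng):
    """Bernoulli rate coding: spike at each step with probability x, x in [0, 1]."""
    return [1 if rng.random() < x else 0 for _ in range(num_steps)]


def ttfs_encode(x, num_steps):
    """Time-to-first-spike: at most one spike, and larger x fires earlier.

    Assumes a linear map from intensity to latency; x == 0 stays silent.
    """
    train = [0] * num_steps
    if x > 0:
        train[round((1.0 - x) * (num_steps - 1))] = 1
    return train


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the stochastic encoder is repeatable
    for x in (0.25, 1.0):
        rate = rate_encode(x, 9, rng)
        ttfs = ttfs_encode(x, 9)
        print(f"x={x}: rate spikes={sum(rate)}, TTFS spike index={ttfs.index(1)}")
```

The TTFS train carries at most one spike per input, whereas the expected spike count under rate coding grows with both the intensity and the number of time steps, which is the energy concern noted in the rate-coding row.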

| Neuron Model | Main Applications | Complexity | Biological Plausibility | Advantages | Challenges |
|---|---|---|---|---|---|
| SRM [38,100] | STDP studies; analytical probes of SNNs; event-driven/hardware simulation | Low | Mod. | Kernel-based, tractable; separates synaptic and refractory effects; efficient | Limited subthreshold nonlinearities; requires kernel/threshold calibration |
| IF [101,104] | Baselines; large-scale SNNs; theoretical analysis | V. Low | Low | Extremely simple; fast; closed-form insights | Unrealistic integration (no leak); no adaptation/resonance |
| LIF [38,103] | Neuromorphic inference; brain–computer interfaces; large-scale simulations | Low | Mod. | Good accuracy–efficiency balance; event-driven; well-studied statistics | No intrinsic adaptation/bursting; linear subthreshold |
| ALIF [100,105] | Temporal/sequential tasks; speech-like streams; robotics control | Mod. | Mod. | Spike-frequency adaptation; better temporal credit assignment | Extra state and parameters; tuning sensitivity |
| EIF [104,106] | Fast fluctuating inputs; spike initiation studies; neuromorphic surrogates | Mod. | High | Sharp, smooth onset; improved gain/phase vs. LIF | Parameter calibration; stiffer near threshold |
| AdEx [107] | Cortical pattern repertoire; adapting/bursting cells; efficient yet rich neurons | Mod.–High | High | Diverse firing patterns with compact model; efficient integration | More parameters; careful numerical and hardware calibration |
| RF [111] | Resonance/phase codes; frequency-selective processing; edge sensing prototypes | Mod. | High | Captures subthreshold oscillations and resonance; phase selectivity | Phenomenological spike; added second-order state; parameter tuning |
| HH [97] | Biophysical mechanism studies; channelopathies; pharmacology; single-cell fidelity | V. High | V. High | Gold-standard fidelity; reproduces ionic mechanisms and refractoriness | Computationally expensive; stiff; many parameters |
| Izhikevich [39,89] | Large-scale networks with rich firing; cortical microcircuits; plasticity studies | Mod. | High | Wide repertoire at low cost; simple 2D form with reset | Lower biophysical interpretability; heuristic fitting |
| RF–Iz [111,116] | Phase-aware resonance with lightweight reset; recurrent SNNs; neuromorphic stacks | Mod. | High | Preserves phase; efficient event rules; toolchain support (Lava) | Phenomenological; calibration of model parameters; cost still above IF/LIF |
| CUBA [98] | Large-scale SNNs; theory; fast prototyping; hardware with current-mode synapses | Low | Low | Very fast; analytically convenient; event-driven synapses independent of V | Ignores reversal/shunt; biases in high-conductance regimes vs. COBA |
| Sigma–Delta [88,116,117] | Energy-constrained streaming (audio/vision); edge sensing; Sigma–Delta–LIF layers | Mod. | Mod. | Sparse, error-driven spikes; low switching energy; good reconstruction | Feedback/threshold tuning; integration details for stability and latency |
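
The difference between the IF and LIF rows above comes down to a single leak factor. The sketch below is a minimal discrete-time version under assumed conventions: a multiplicative leak `beta`, a hard reset to zero, and a unit threshold. These names and values are hypothetical and do not reflect any particular framework's API.

```python
def simulate_lif(currents, beta=0.9, threshold=1.0):
    """Discrete-time LIF: v <- beta * v + I, then spike and reset to 0 if v >= threshold.

    Setting beta = 1.0 removes the leak and yields a plain IF neuron.
    """
    v = 0.0
    spikes = []
    for i_t in currents:
        v = beta * v + i_t  # leaky integration of the input current
        if v >= threshold:
            spikes.append(1)
            v = 0.0  # hard reset after a spike
        else:
            spikes.append(0)
    return spikes


if __name__ == "__main__":
    drive = [0.25] * 10  # constant input current
    print("IF :", simulate_lif(drive, beta=1.0))
    print("LIF:", simulate_lif(drive, beta=0.9))
```

With the same constant drive, the leak delays each spike by one step in this setting, so the LIF neuron fires less often than the IF neuron for identical input.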

| Algorithm Name | Learning Paradigm | Main Applications | Complexity | Biological Plausibility | Advantages | Challenges |
|---|---|---|---|---|---|---|
| SpikeProp [123] | Supervised | Temporal pattern recognition | Low | Low | Enables precise spike timing learning; first event-based backpropagation for SNNs | Limited to shallow networks; affected by spike non-differentiability |
| SuperSpike [124] | Supervised | Temporal pattern recognition; deep SNN training | Medium | Medium | Uses surrogate gradients; enables multilayer training | Requires careful surrogate design; added computational cost |
| SLAYER [120] | Supervised | Complex temporal data; sequence prediction | High | Medium | Addresses temporal credit assignment; handles sequences well | Computationally intensive; complex to implement |
| EventProp [125] | Supervised | Exact gradient computation; neuromorphic hardware | High | High | Computes exact gradients; efficient for event-driven processing | Complex discontinuity handling; implementation challenges |
| STDP [51,122] | Unsupervised | Pattern recognition; feature extraction | Low | High | Biologically plausible; local weight updates | Limited scalability; lower accuracy for large-scale tasks |
| aSTDP [126,127] | Unsupervised | Adaptive feature learning | Medium | High | Dynamically adapts learning parameters; robust | Parameter tuning complexity; additional computation |
| Multiplicative STDP [128] | Unsupervised | Weight updates scaled by current weight; prevents unbounded growth/decay | High | Medium | Improves biological plausibility; stabilizes learning | Requires careful parameter tuning |
| Triplet-STDP [129] | Unsupervised | Frequency-dependent learning | Medium | Medium | Captures multi-spike interactions; models frequency effects | Complex spike attribution; higher computation |
| R-STDP [52] | RL | Adaptive learning; decision making | Medium | High | Integrates reward signals; adaptive to reinforcement tasks | Requires carefully designed reward schemes |
| ReSuMe [142] | RL | Temporal precision learning | Medium | High | Combines supervised targets with reinforcement feedback | Dependent on reward design; non-gradient-based |
| e-prop [131] | RL | Temporal dependencies; complex dynamics | High | High | Tracks synaptic influence with eligibility traces | Computationally intensive; eligibility tracking complexity |
| SSTDP [132] | Hybrid | High temporal precision; visual recognition | Medium | High | Merges backpropagation and STDP; energy-efficient | Requires precise timing data; integration complexity |
| ANN-to-SNN Conversion [135,136] | Hybrid | Neuromorphic deployment | Medium | Low | Leverages pre-trained ANNs; fast deployment | Accuracy loss in conversion; parameter mapping issues |

| Layer | FCN Architecture | VGG7 Architecture |

|---|---|---|

| Input Layer | 784 input neurons | 3 channels, 32×32 pixels |

| Layer 1 | Dense, 128 neurons, | Conv, 64 filters, kernel, stride 1, padding 1 |

| Layer 2 | Dense, 128 neurons, | Conv, 64 filters, kernel, stride 2, padding 1 |

| – | Output, 10 neurons | Max Pooling, kernel, stride 2 |

| Layer 3 | — | Conv, 128 filters, kernel, stride 1, padding 1 |

| Layer 4 | — | Conv, 128 filters, kernel, stride 2, padding 1 |

| Layer 5 | — | Conv, 128 filters, kernel, stride 2, padding 1 |

| – | — | Max Pooling, kernel, stride 2 |

| Flatten Layer | — | Flatten feature maps |

| Layer 6 | — | Dense, 1024 neurons, |

| Layer 7 | Output, 10 neurons | Output, 10 neurons |

| Special Components | Weight Normalization, Weight Scaling | Weight Normalization, Weight Scaling |

| Total Parameters | ∼118,016 | ∼548,554 |

| Frameworks Used | PyTorch for ANNs; Lava, Norse, SpikingJelly for SNNs | PyTorch for ANNs; Lava, Norse, SpikingJelly for SNNs |
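The FCN parameter total reported in the table can be checked with simple arithmetic. The Python sketch below counts only the weight matrices of the 784–128–128–10 dense stack, which reproduces the reported ∼118,016. That bias terms are excluded is an assumption inferred from this arithmetic, not something the source states, and the helper name `dense_weight_count` is hypothetical.

```python
def dense_weight_count(layer_sizes, include_bias=False):
    """Count parameters of a fully connected stack given its layer widths."""
    total = 0
    for fan_in, fan_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        total += fan_in * fan_out      # weight matrix between two layers
        if include_bias:
            total += fan_out           # one bias per output neuron
    return total


# Layer widths taken from the FCN column: input, two hidden layers, output.
fcn_layers = [784, 128, 128, 10]

weights_only = dense_weight_count(fcn_layers)
with_bias = dense_weight_count(fcn_layers, include_bias=True)

print("weights only:", weights_only)
print("weights + biases:", with_bias)
```

The weights-only count is 784·128 + 128·128 + 128·10. The same check is not attempted for VGG7 here because the kernel sizes did not survive in the table.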

| Parameter | ANN (FCN/VGG7) | SNN (FCN) | SNN (VGG7) |

|---|---|---|---|

| Optimizer | Adam | Adam | Adam |

| Learning rate | 0.001 | 0.001 | 0.001 |

| Weight decay | |||

| Loss function | Cross-Entropy | CrossEntropyLoss | CrossEntropyLoss |

| Number of epochs | FCN: 100; VGG7: 150 | 100 | 150 |

| Batch size | 64 | 64 | 64 |

| Dropout rate | FCN: 5%; VGG7: 20% | 5% | 20% |

| Activation function | ReLU | — | — |

| Weight initialization | PyTorch defaults | — | — |

| Neuron parameters | — | Threshold: 1.25; Current decay: 0.25; Voltage decay: 0.03; Tau gradient: 0.03; Scale gradient: 3; Refractory decay: 1 | Threshold: 0.5; Current decay: 0.25; Voltage decay: 0.03; Tau gradient: 0.03; Scale gradient: 3; Refractory decay: 1 |
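The neuron parameters listed above (threshold, current decay, voltage decay) resemble those of a current-based (CUBA) LIF neuron. The following is a minimal sketch of how such parameters could act in a discrete-time update. It assumes floating-point state, a hard reset to zero after a spike, and decay applied as a multiplicative factor `(1 - decay)` per step. It ignores the refractory and surrogate-gradient settings, and it is not the authors' implementation. The function name `cuba_lif` is hypothetical.

```python
def cuba_lif(inputs, threshold=1.25, current_decay=0.25, voltage_decay=0.03):
    """Simulate one CUBA-LIF neuron over a sequence of input values.

    Assumed dynamics (illustrative only):
        current[t] = (1 - current_decay) * current[t-1] + input[t]
        voltage[t] = (1 - voltage_decay) * voltage[t-1] + current[t]
        spike when voltage[t] >= threshold, then voltage is reset to 0
    """
    current = 0.0
    voltage = 0.0
    spikes = []
    for x in inputs:
        current = (1.0 - current_decay) * current + x
        voltage = (1.0 - voltage_decay) * voltage + current
        if voltage >= threshold:
            spikes.append(1)
            voltage = 0.0  # hard reset (an assumption of this sketch)
        else:
            spikes.append(0)
    return spikes


# Same constant drive, with the two thresholds from the table.
drive = [0.5] * 8
spikes_fcn = cuba_lif(drive, threshold=1.25)  # SNN (FCN) setting
spikes_vgg = cuba_lif(drive, threshold=0.5)   # SNN (VGG7) setting
print(sum(spikes_fcn), sum(spikes_vgg))
```

Under these assumptions, the lower threshold produces at least as many spikes for the same input, which illustrates why the threshold directly affects spike activity.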

| Neuron type | Time steps | Rate encoding | TTFS | ΣΔ encoding | Direct coding | Burst coding | PoFC | R-NoM |

|---|---|---|---|---|---|---|---|---|

| ANN (baseline) | – | 98.23% | | | | | | |

| IF | 8 | 97.20 | 86.60 | 97.70 | 78.90 | 80.00 | 71.20 | 72.20 |

| | 6 | 97.10 | 90.00 | 97.60 | 88.10 | 91.00 | 78.50 | 74.00 |

| | 4 | 96.90 | 92.00 | 97.40 | 86.80 | 88.40 | 79.80 | 76.80 |

| LIF | 8 | 97.00 | 86.50 | 97.40 | 81.90 | 80.06 | 72.10 | 71.00 |

| | 6 | 96.90 | 90.00 | 97.50 | 90.00 | 91.10 | 77.70 | 73.00 |

| | 4 | 96.90 | 92.00 | 97.20 | 88.60 | 83.30 | 82.30 | 75.50 |

| ALIF | 8 | 97.30 | 96.40 | 96.50 | 97.10 | 97.14 | 92.70 | 58.00 |

| | 6 | 96.60 | 96.10 | 96.30 | 97.00 | 97.00 | 92.90 | 57.00 |

| | 4 | 96.40 | 96.70 | 96.30 | 97.00 | 96.90 | 93.00 | 46.00 |

| CUBA | 8 | 97.66 | 97.10 | 97.40 | 97.60 | 79.50 | 94.10 | 68.00 |

| | 6 | 97.56 | 97.00 | 97.18 | 97.50 | 79.30 | 94.10 | 66.50 |

| | 4 | 97.20 | 96.30 | 96.40 | 97.30 | 96.80 | 93.40 | 56.00 |

| ΣΔ | 8 | 98.10 | 97.90 | 98.00 | 88.00 | 88.30 | 95.00 | 86.70 |

| | 6 | 97.80 | 97.50 | 98.00 | 88.50 | 97.90 | 87.40 | 86.00 |

| | 4 | 97.90 | 97.50 | 97.70 | 98.00 | 97.90 | 87.80 | 64.00 |

| RF | 8 | 93.00 | 86.80 | 90.05 | 97.20 | 92.20 | 85.80 | 52.00 |

| | 6 | 92.60 | 88.70 | 92.00 | 79.00 | 92.00 | 84.90 | 50.00 |

| | 4 | 93.00 | 88.00 | 91.60 | 53.00 | 91.70 | 83.90 | 47.70 |

| RF-Iz | 8 | 97.70 | 47.00 | 79.60 | 97.70 | 50.00 | 94.90 | 48.00 |

| | 6 | 97.55 | 87.70 | 97.40 | 97.70 | 97.40 | 94.80 | 69.70 |

| | 4 | 97.00 | 96.40 | 96.00 | 97.20 | 96.80 | 93.00 | 74.00 |

| EIF | 8 | 96.70 | 94.60 | 96.50 | 97.50 | 96.30 | 95.20 | 88.10 |

| | 6 | 96.20 | 94.90 | 96.20 | 97.60 | 96.00 | 94.50 | 86.70 |

| | 4 | 96.55 | 95.80 | 96.60 | 97.50 | 96.40 | 95.10 | 87.70 |

| AdEx | 8 | 96.50 | 94.70 | 96.50 | 97.40 | 96.70 | 95.40 | 89.50 |

| | 6 | 96.40 | 95.00 | 96.40 | 97.50 | 96.80 | 95.30 | 89.20 |

| | 4 | 96.00 | 95.90 | 96.44 | 97.39 | 96.80 | 95.20 | 88.50 |

| Neuron type | Time steps | Rate encoding | TTFS | ΣΔ encoding | Direct coding | Burst coding | PoFC | R-NoM |

|---|---|---|---|---|---|---|---|---|

| IF | 8 | | | | | | | |

| | 6 | | | | | | | |

| | 4 | | | | | | | |

| LIF | 8 | | | | | | | |

| | 6 | | | | | | | |

| | 4 | | | | | | | |

| ALIF | 8 | | | | | | | |

| | 6 | | | | | | | |

| | 4 | | | | | | | |

| CUBA | 8 | | | | | | | |

| | 6 | | | | | | | |

| | 4 | | | | | | | |

| ΣΔ | 8 | | | | | | | |

| | 6 | | | | | | | |

| | 4 | | | | | | | |

| RF | 8 | | | | | | | |

| | 6 | | | | | | | |

| | 4 | | | | | | | |

| RF-Iz | 8 | | | | | | | |

| | 6 | | | | | | | |

| | 4 | | | | | | | |

| EIF | 8 | | | | | | | |

| | 6 | | | | | | | |

| | 4 | | | | | | | |

| AdEx | 8 | | | | | | | |

| | 6 | | | | | | | |

| | 4 | | | | | | | |

| Neuron Type | Time Steps | Rate Encoding | TTFS | ΣΔ Encoding | Direct Coding | Burst Coding | PoFC | R-NoM |

|---|---|---|---|---|---|---|---|---|

| ANN (Baseline) | – | 83.60% | | | | | | |

| IF | 2 | 57.00 | 60.00 | 62.00 | 74.00 | 60.00 | 57.00 | 29.00 |

| | 4 | 56.50 | 62.00 | 65.00 | 74.50 | 64.00 | 65.00 | 27.00 |

| | 6 | 57.00 | 62.50 | 65.00 | 74.50 | 64.50 | 68.00 | 30.00 |

| LIF | 2 | 50.00 | 61.50 | 62.50 | 74.30 | 57.60 | 59.00 | 28.00 |

| | 4 | 51.00 | 62.00 | 64.50 | 74.50 | 61.00 | 63.00 | 23.00 |

| | 6 | 50.00 | 59.50 | 65.00 | 74.00 | 61.50 | 67.00 | 21.00 |

| ALIF | 2 | 10.00 | 10.00 | 10.00 | 10.00 | 10.00 | 10.00 | 10.00 |

| | 4 | 39.00 | 34.00 | 20.00 | 46.00 | 20.00 | 27.00 | 14.00 |

| | 6 | 51.00 | 38.00 | 28.00 | 49.00 | 27.00 | 29.00 | 24.00 |

| CUBA | 2 | 10.00 | 10.00 | 10.00 | 10.00 | 10.00 | 10.00 | 10.00 |

| | 4 | 34.00 | 50.00 | 33.50 | 40.00 | 30.00 | 24.00 | 25.00 |

| | 6 | 45.00 | 43.00 | 40.00 | 50.00 | 30.00 | 31.00 | 27.00 |

| ΣΔ | 2 | 57.00 | 72.00 | 56.00 | 83.00 | 57.00 | 57.00 | 30.00 |

| | 4 | 61.00 | 72.00 | 66.00 | 78.00 | 66.00 | 62.00 | 27.00 |

| | 6 | 60.00 | 72.50 | 68.00 | 79.00 | 66.00 | 67.00 | 24.00 |

| RF | 2 | 10.00 | 10.00 | 10.00 | 10.00 | 10.00 | 10.00 | 10.00 |

| | 4 | 22.00 | 10.00 | 10.00 | 42.00 | 10.00 | 10.00 | 10.00 |

| | 6 | 37.00 | 36.00 | 32.00 | 47.00 | 39.00 | 37.00 | 31.00 |

| RF-Iz | 2 | 10.00 | 10.00 | 10.00 | 10.00 | 10.00 | 10.00 | 10.00 |

| | 4 | 10.00 | 10.00 | 10.00 | 33.00 | 10.00 | 10.00 | 10.00 |

| | 6 | 45.00 | 37.00 | 30.00 | 39.00 | 32.00 | 32.00 | 27.00 |

| EIF | 2 | 58.50 | 56.00 | 53.50 | 70.00 | 58.50 | 54.00 | 25.00 |

| | 4 | 59.50 | 64.00 | 60.00 | 69.00 | 57.00 | 57.00 | 22.50 |

| | 6 | 60.00 | 65.00 | 61.00 | 68.50 | 53.50 | 60.00 | 24.00 |

| AdEx | 2 | 59.00 | 55.50 | 60.00 | 69.50 | 58.50 | 54.00 | 27.00 |

| | 4 | 60.00 | 62.00 | 61.50 | 70.00 | 56.50 | 59.00 | 29.00 |

| | 6 | 60.50 | 63.00 | 60.50 | 70.10 | 52.00 | 61.00 | 25.50 |

| Neuron type | Time steps | Rate encoding | TTFS | ΣΔ encoding | Direct coding | Burst coding | PoFC | R-NoM |

|---|---|---|---|---|---|---|---|---|

| IF | 2 | | | | | | | |

| | 4 | | | | | | | |

| | 6 | | | | | | | |

| LIF | 2 | | | | | | | |

| | 4 | | | | | | | |

| | 6 | | | | | | | |

| ALIF | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| | 4 | | | | | | | |

| | 6 | | | | | | | |

| CUBA | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| | 4 | | | | | | | |

| | 6 | | | | | | | |

| ΣΔ | 2 | | | | | | | |

| | 4 | | | | | | | |

| | 6 | | | | | | | |

| RF | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| | 4 | 0 | 0 | 0 | 0 | | | |

| | 6 | | | | | | | |

| RF-Iz | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| | 4 | 0 | 0 | 0 | 0 | 0 | 0 | |

| | 6 | | | | | | | |

| EIF | 2 | | | | | | | |

| | 4 | | | | | | | |

| | 6 | | | | | | | |

| AdEx | 2 | | | | | | | |

| | 4 | | | | | | | |

| | 6 | | | | | | | |

| Encoding scheme | Threshold | 2 steps | 4 steps | 6 steps |

|---|---|---|---|---|

| Rate encoding | 0.1 | 58.50 | 59.50 | 60.00 |

| | 0.5 | 47.00 | 53.00 | 47.50 |

| | 0.75 | 40.00 | 41.00 | 27.00 |

| TTFS encoding | 0.1 | 56.00 | 64.00 | 65.00 |

| | 0.5 | 57.00 | 65.50 | 67.50 |

| | 0.75 | 54.00 | 65.00 | 55.00 |

| ΣΔ encoding | 0.1 | 53.50 | 60.00 | 61.00 |

| | 0.5 | 39.00 | 38.50 | 54.00 |

| | 0.75 | 51.50 | 30.00 | 51.00 |

| Direct coding | 0.1 | 70.00 | 69.00 | 68.50 |

| | 0.5 | 68.00 | 67.50 | 66.00 |

| | 0.75 | 16.00 | 17.00 | 15.00 |

| Burst coding | 0.1 | 58.50 | 57.00 | 53.50 |

| | 0.5 | 32.00 | 56.00 | 60.00 |

| | 0.75 | 51.00 | 57.00 | 55.00 |

| PoFC | 0.1 | 54.00 | 57.00 | 60.00 |

| | 0.5 | 42.50 | 53.50 | 56.00 |

| | 0.75 | 17.00 | 39.50 | 20.00 |

| R-NoM | 0.1 | 25.00 | 22.50 | 24.00 |

| | 0.5 | 28.00 | 28.00 | 29.50 |

| | 0.75 | 29.00 | 28.50 | 28.00 |

| Encoding scheme | Threshold | 2 steps | 4 steps | 6 steps |

|---|---|---|---|---|

| Rate encoding | 0.1 | | | |

| | 0.5 | | | |

| | 0.75 | | | |

| TTFS encoding | 0.1 | | | |

| | 0.5 | | | |

| | 0.75 | | | |

| ΣΔ encoding | 0.1 | | | |

| | 0.5 | | | |

| | 0.75 | | | |

| Direct coding | 0.1 | | | |

| | 0.5 | | | |

| | 0.75 | | | |

| Burst coding | 0.1 | | | |

| | 0.5 | | | |

| | 0.75 | | | |

| PoFC | 0.1 | | | |

| | 0.5 | | | |

| | 0.75 | | | |

| R-NoM | 0.1 | | | |

| | 0.5 | | | |

| | 0.75 | | | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 1996 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).