Submitted: 01 September 2025

Posted: 24 September 2025

Abstract

Keywords:

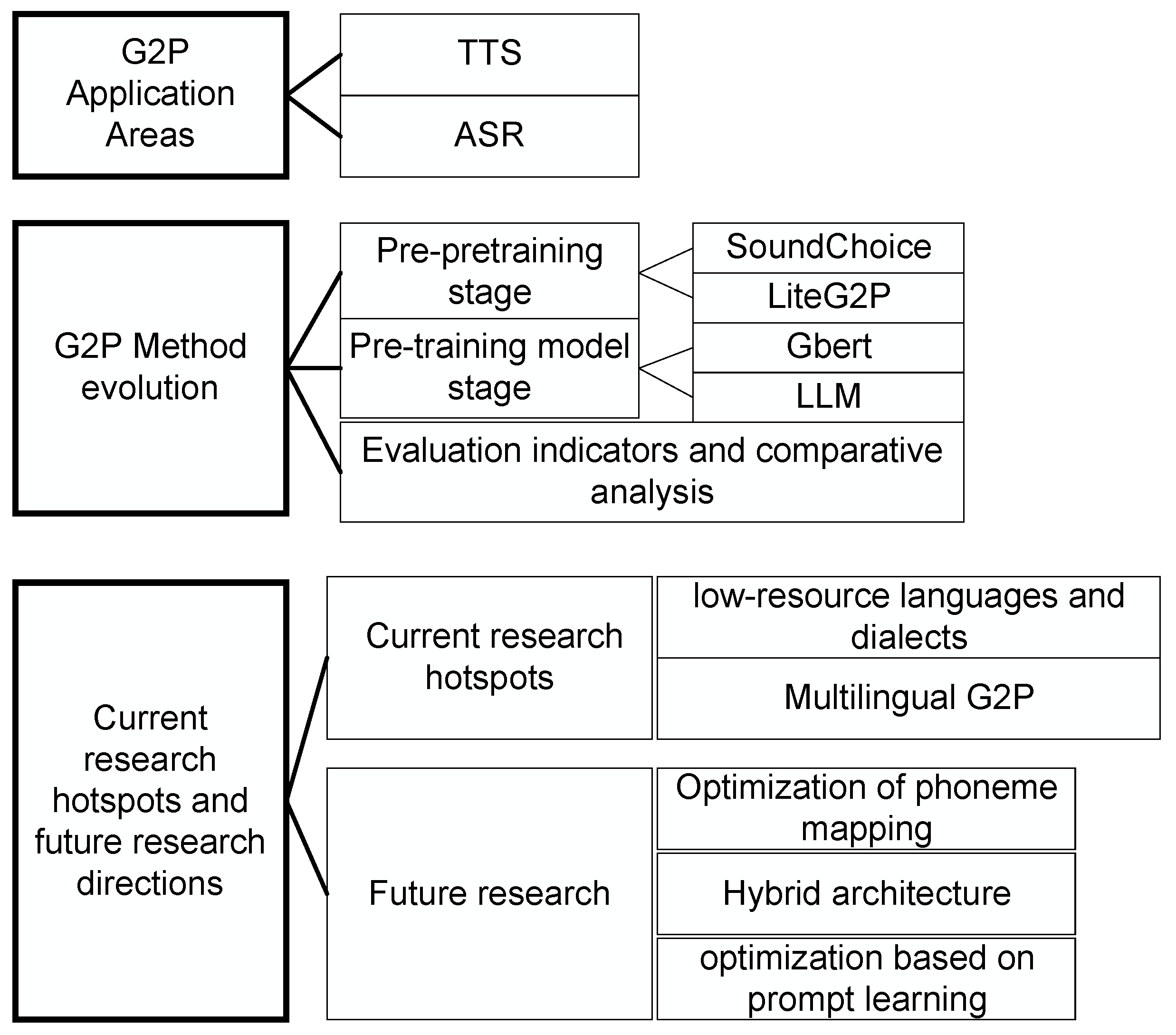

1. Introduction

2. G2P Application Areas

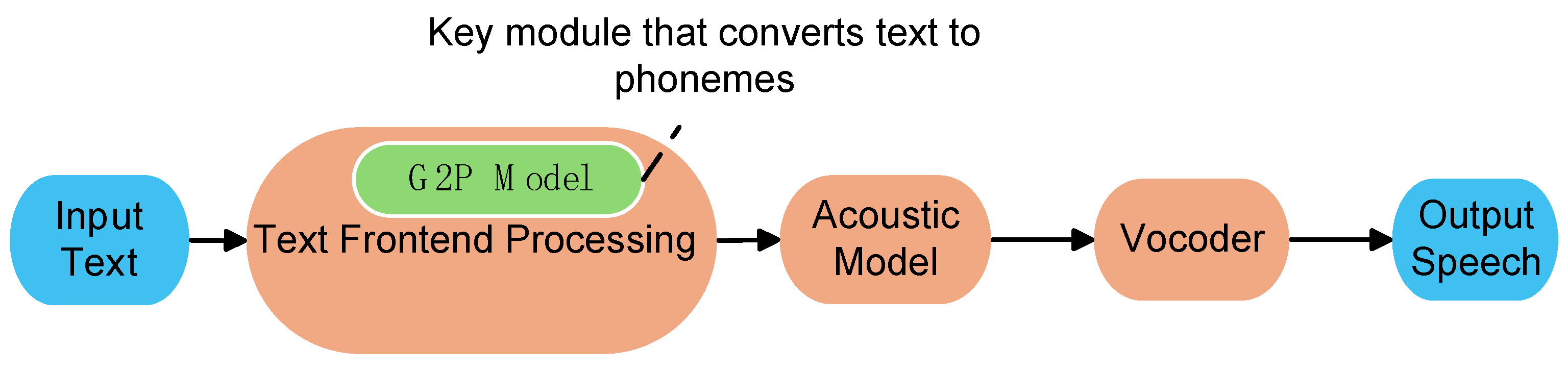

2.1. Speech Synthesis System

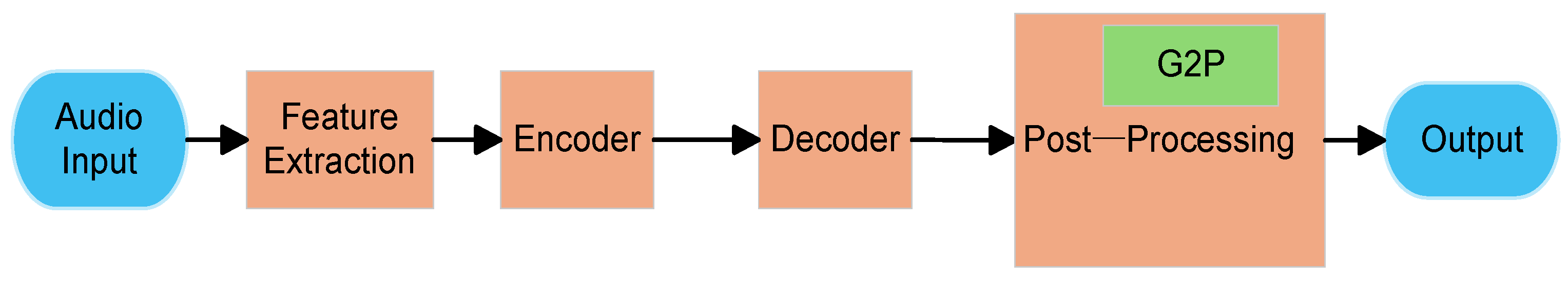

2.2. Automatic Speech Recognition

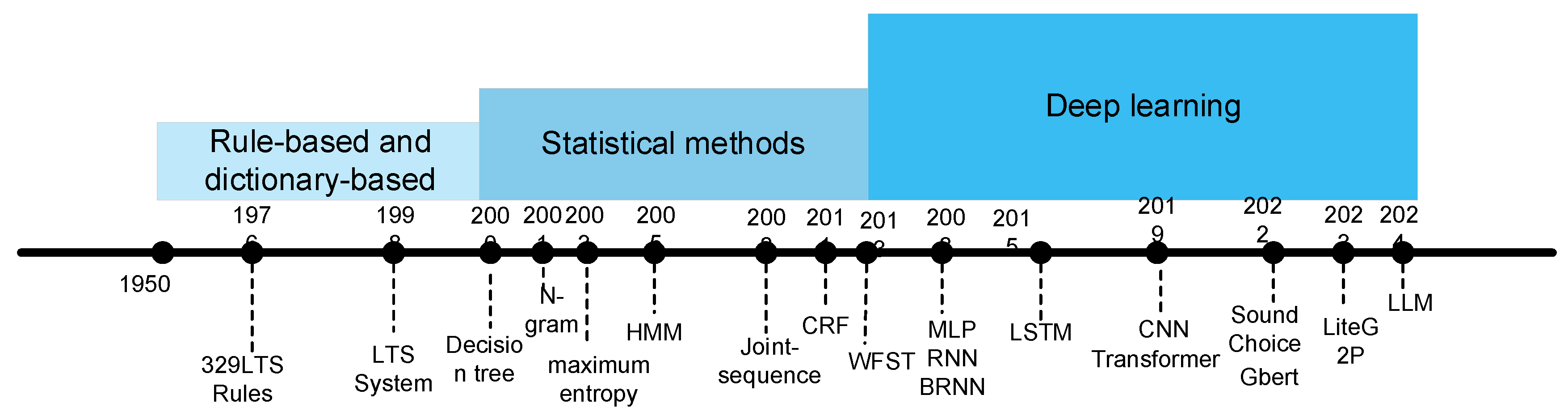

3. G2P Method Evolution

3.1. Pre-Pretraining Stage

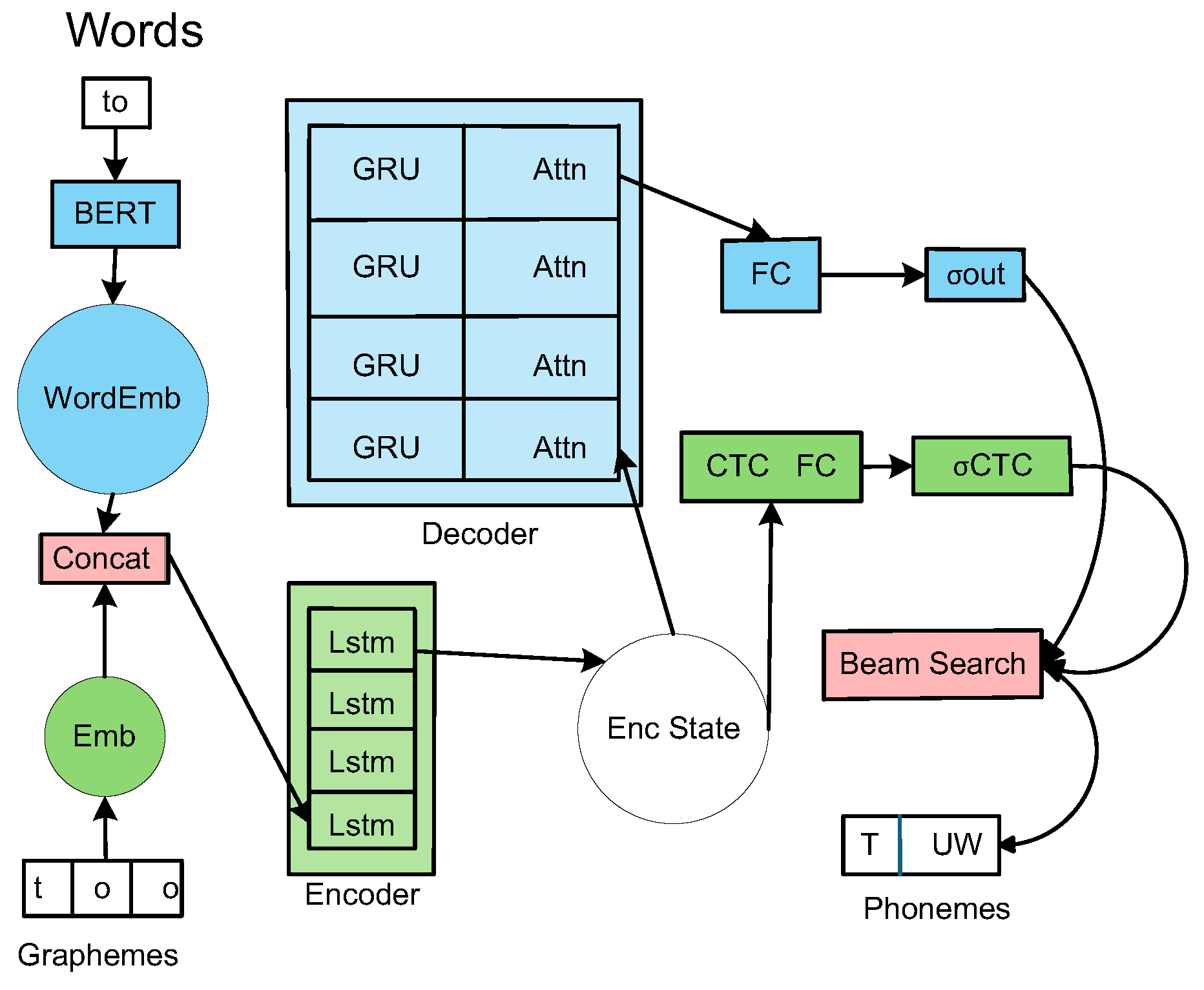

3.1.1. SoundChoice

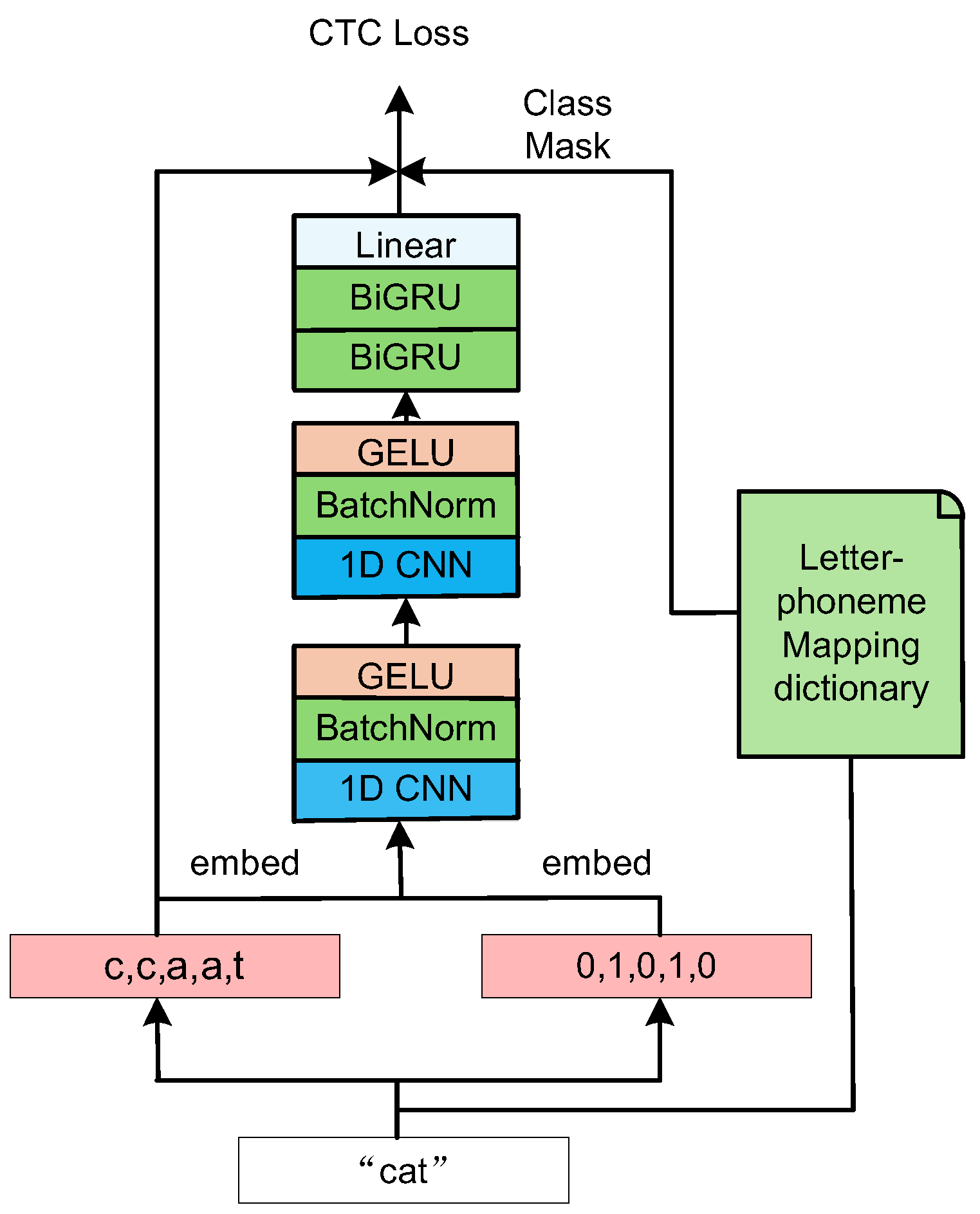

3.1.2. LiteG2P

3.2. Pre-Training Model Stage

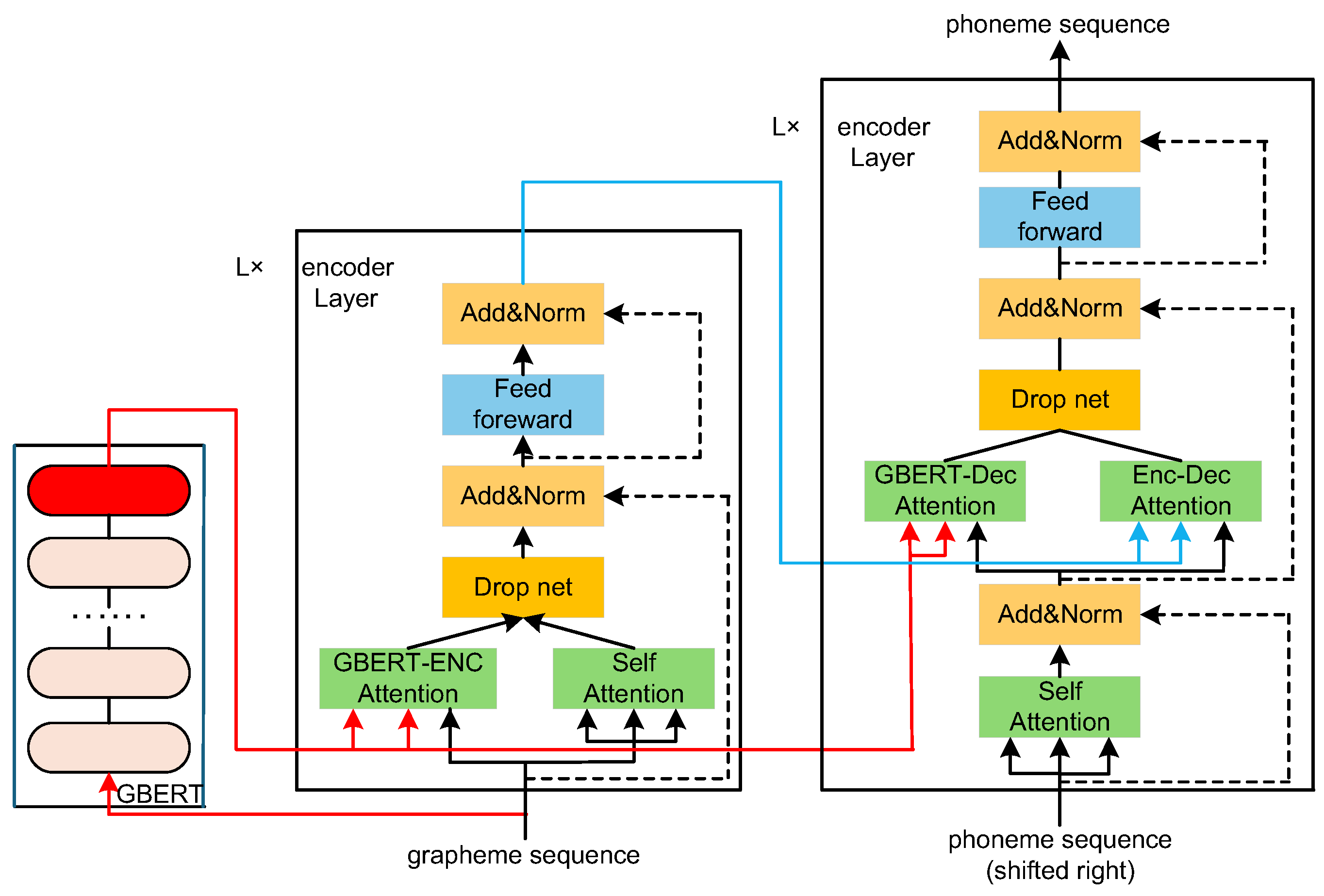

3.2.1. GBERT

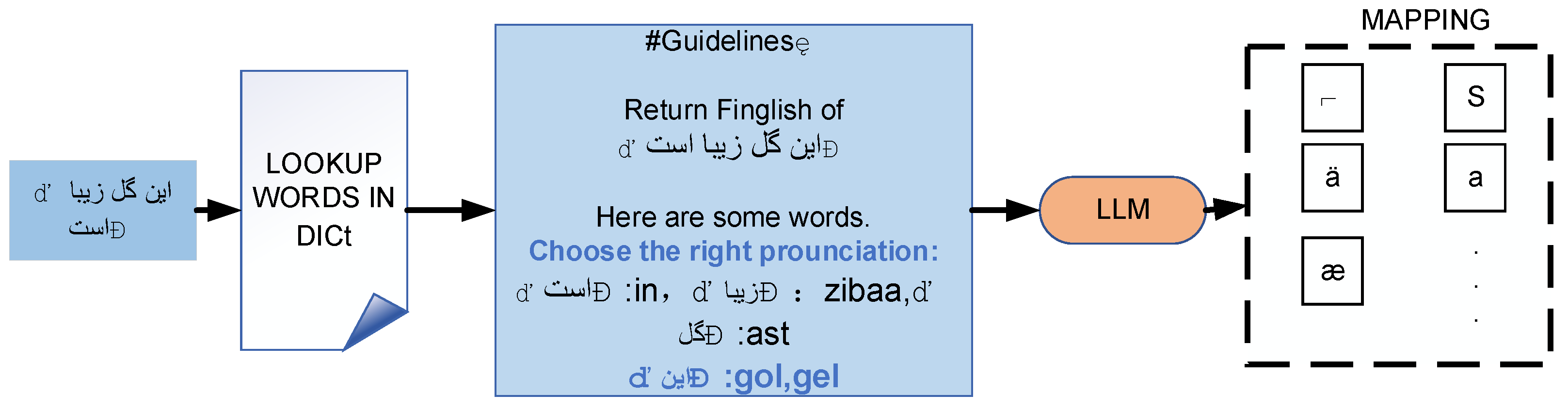

3.2.2. LLM

3.3. Evaluation Indicators and Comparative Analysis

3.3.1. Evaluation Indicators

3.3.2. Comparative Analysis

4. Current Research Hotspots and Future Research Directions

4.1. Current Research Hotspots

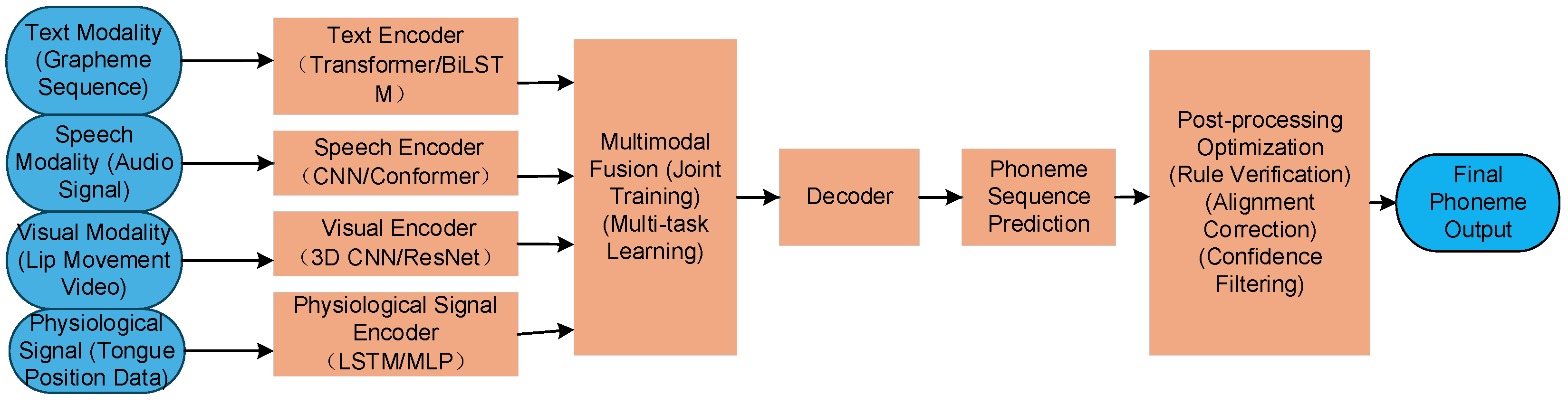

4.1.1. Challenges of Low-Resource Languages and Dialects

4.1.2. Multilingual G2P

4.2. Future Research Directions

4.2.1. Optimization of Phoneme Mapping for Low-Resource Languages

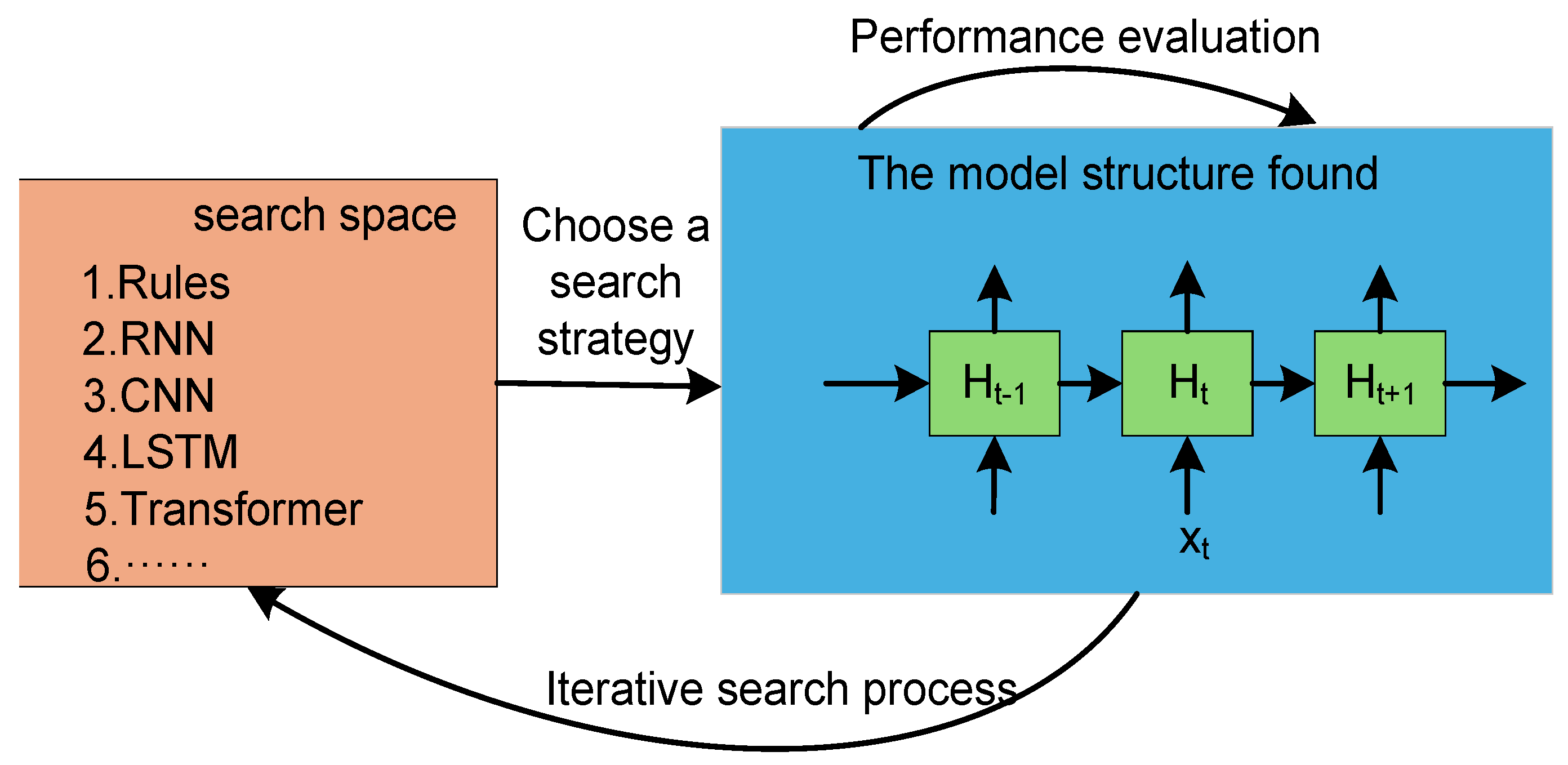

4.2.2. Hybrid Architecture Innovation

4.2.3. Mapping Optimization Based on Prompt Learning

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| G2P | Grapheme-to-Phoneme |
| TTS | Text-to-Speech |
| ASR | Automatic Speech Recognition |
| LSTM | Long Short-Term Memory |
| CNN | Convolutional Neural Network |
| BERT | Bidirectional Encoder Representations from Transformers |
| MFCC | Mel-Frequency Cepstral Coefficients |
| GMM | Gaussian Mixture Model |
| DNN | Deep Neural Network |
| HMM | Hidden Markov Model |
| CTC | Connectionist Temporal Classification |
| CRF | Conditional Random Field |
| GRU | Gated Recurrent Unit |
| LLM | Large Language Model |
| ICKR | In-context Knowledge Retrieval |
| WER | Word Error Rate |
| PER | Phoneme Error Rate |

References

- Meletis, D. The grapheme as a universal basic unit of writing. Writing Systems Research 2019, 11(1), 26–49. [CrossRef]

- Fromkin, V.; Rodman, R.; Hyams, N. Phonemes: The Phonological Units of Language. In An Introduction to Language, 11th ed.; Cengage Learning: Boston, MA, USA, 2018; pp. 230–233.

- Rao, K.; Peng, F.; Sak, H.; Beaufays, F.; Schalkwyk, J. Grapheme-to-phoneme conversion using long short-term memory recurrent neural networks. In Proceedings of the 2015 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), South Brisbane, Australia, 19–24 April 2015; IEEE: Piscataway, NJ, USA, 2015; pp. 4225–4229. [CrossRef]

- Yolchuyeva, S.; Németh, G.; Gyires-Tóth, B. Grapheme-to-phoneme conversion with convolutional neural networks. Applied Sciences 2019, 9(6), 1143–1162. [CrossRef]

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), Minneapolis, MN, USA, 2–7 June 2019; Association for Computational Linguistics: Stroudsburg, PA, USA, 2019; pp. 4171–4186.

- Sadjadi, S.O.; Greenberg, C.; Singer, E.; Mason, L.; Reynolds, D.A. The 2021 NIST speaker recognition evaluation. arXiv 2022, arXiv:2204.10242. [CrossRef]

- Hunt, A.J.; Black, A.W. Unit selection in a concatenative speech synthesis system using a large speech database. In Proceedings of the 1996 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Atlanta, GA, USA, 7–10 May 1996; IEEE: Piscataway, NJ, USA, 1996; Volume 1, pp. 373–376.

- Black, A.W.; Taylor, P. Automatically clustering similar units for unit selection in speech synthesis. In Proceedings of the 5th European Conference on Speech Communication and Technology (Eurospeech 1997), Rhodes, Greece, 22–25 September 1997; ESCA: Grenoble, France, 1997; pp. 601–604.

- Gao, Z.F. Research and Implementation of End-to-End Chinese Speech Synthesis. Master's Thesis, Beijing University of Posts and Telecommunications, Beijing, China, 2022.

- Tokuda, K.; Yoshimura, T.; Masuko, T.; Kobayashi, T.; Kitamura, T. Speech parameter generation algorithms for HMM-based speech synthesis. In Proceedings of the 2000 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Istanbul, Turkey, 5–9 June 2000; IEEE: Piscataway, NJ, USA, 2000; Volume 3, pp. 1315–1318.

- Black, A.W.; Zen, H.; Tokuda, K. Statistical parametric speech synthesis. In Proceedings of the 2007 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Honolulu, HI, USA, 15–20 April 2007; IEEE: Piscataway, NJ, USA, 2007; Volume 4, pp. 1229–1232.

- Zen, H.; Senior, A.; Schuster, M. Statistical parametric speech synthesis using deep neural networks. In Proceedings of the 2013 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Vancouver, BC, Canada, 26–31 May 2013; IEEE: Piscataway, NJ, USA, 2013; pp. 7962–7966.

- Tokuda, K.; Nankaku, Y.; Toda, T.; Zen, H.; Yamagishi, J.; Oura, K. Speech synthesis based on hidden Markov models. Proceedings of the IEEE 2013, 101(5), 1234–1252. [CrossRef]

- Zen, H.; Tokuda, K.; Black, A.W. Statistical parametric speech synthesis. Speech Communication 2009, 51(11), 1039–1064. [CrossRef]

- Griffin, D.; Lim, J. Signal estimation from modified short-time Fourier transform. IEEE Transactions on Acoustics, Speech, and Signal Processing 1984, 32(2), 236–243. [CrossRef]

- Van den Oord, A.; Dieleman, S.; Zen, H.; Simonyan, K.; Vinyals, O.; Graves, A.; Kalchbrenner, N.; Senior, A.; Kavukcuoglu, K. WaveNet: A generative model for raw audio. arXiv 2016, arXiv:1609.03499. [CrossRef]

- Wang, Y.; Skerry-Ryan, R.J.; Stanton, D.; Wu, Y.; Weiss, R.J.; Jaitly, N.; Yang, Z.; Xiao, Y.; Chen, Z.; Bengio, S.; et al. Tacotron: Towards end-to-end speech synthesis. In Proceedings of the Interspeech 2017, Stockholm, Sweden, 20–24 August 2017; ISCA: Baixas, France, 2017; pp. 4006–4010.

- Ren, Y.; Hu, C.; Tan, X.; Qin, T.; Zhao, S.; Zhao, Z.; Liu, T.Y. FastSpeech 2: Fast and high-quality end-to-end text to speech. arXiv 2020, arXiv:2006.04558.

- Prenger, R.; Valle, R.; Catanzaro, B. WaveGlow: A flow-based generative network for speech synthesis. In Proceedings of the 2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Brighton, UK, 12–17 May 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 3617–3621.

- Fox, M.A.; Aschkenasi, C.J.; Kalyanpur, A. Voice recognition is here comma like it or not period. Indian Journal of Radiology and Imaging 2013, 23(3), 191–194. [CrossRef]

- Xuan, G.; Zhang, W.; Chai, P. EM algorithms of Gaussian mixture model and hidden Markov model. In Proceedings of the 2001 International Conference on Image Processing (ICIP), Thessaloniki, Greece, 7–10 October 2001; IEEE: Piscataway, NJ, USA, 2001; Volume 2, pp. 145–148.

- Pan, J.; Liu, C.; Wang, Z.; Hu, Y.; Jiang, H. Investigation of deep neural networks (DNN) for large vocabulary continuous speech recognition: Why DNN surpasses GMMs in acoustic modeling. In Proceedings of the 2012 8th International Symposium on Chinese Spoken Language Processing (ISCSLP), Hong Kong, China, 5–8 December 2012; IEEE: Piscataway, NJ, USA, 2012; pp. 301–305.

- Chan, W.; Jaitly, N.; Le, Q.; Vinyals, O. Listen, attend and spell: A neural network for large vocabulary conversational speech recognition. In Proceedings of the 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Shanghai, China, 20–25 March 2016; IEEE: Piscataway, NJ, USA, 2016; pp. 4960–4964.

- Baevski, A.; Zhou, Y.; Mohamed, A.; Auli, M. wav2vec 2.0: A framework for self-supervised learning of speech representations. Advances in Neural Information Processing Systems 2020, 33, 12449–12460.

- Hsu, W.N.; Bolte, B.; Tsai, Y.H.H.; Lakhotia, K.; Salakhutdinov, R.; Mohamed, A. HuBERT: Self-supervised speech representation learning by masked prediction of hidden units. IEEE/ACM Transactions on Audio, Speech, and Language Processing 2021, 29, 3451–3460. [CrossRef]

- Jin, X.L. Research and Implementation of End-to-End Speech Recognition Algorithms. Master's Thesis, Lanzhou Jiaotong University, Lanzhou, China, 2023.

- Davis, S.; Mermelstein, P. Comparison of parametric representations for monosyllabic word recognition in continuously spoken sentences. IEEE Transactions on Acoustics, Speech, and Signal Processing 1980, 28(4), 357–366. [CrossRef]

- Allen, J.B.; Rabiner, L.R. A unified approach to short-time Fourier analysis and synthesis. Proceedings of the IEEE 1977, 65(11), 1558–1564. [CrossRef]

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. In Proceedings of the 31st International Conference on Neural Information Processing Systems (NIPS 2017), Long Beach, CA, USA, 4–9 December 2017; Curran Associates Inc.: Red Hook, NY, USA, 2017; pp. 6000–6010.

- Graves, A.; Fernández, S.; Gomez, F.; Schmidhuber, J. Connectionist temporal classification: Labelling unsegmented sequence data with recurrent neural networks. In Proceedings of the 23rd International Conference on Machine Learning (ICML 2006), Pittsburgh, PA, USA, 25–29 June 2006; ACM: New York, NY, USA, 2006; pp. 369–376.

- Elovitz, H.S.; Johnson, R.; McHugh, A.; Shore, J.E. Letter-to-sound rules for automatic translation of English text to phonetics. IEEE Transactions on Acoustics, Speech, and Signal Processing 1976, 24(6), 446–459. [CrossRef]

- Black, A.W.; Lenzo, K.; Page, V. Issues in building general letter to sound rules. In Proceedings of the 3rd ESCA Workshop on Speech Synthesis, Jenolan Caves, Blue Mountains, Australia, 26–29 November 1998; ISCA: Baixas, France, 1998; pp. 77–80.

- Suontausta, J.; Häkkinen, J. Decision tree based text-to-phoneme mapping for speech recognition. In Proceedings of the Interspeech 2000, Beijing, China, 16–20 October 2000; ISCA: Baixas, France, 2000; Volume 2, pp. 831–834.

- Galescu, L.; Allen, J.F. Bi-directional conversion between graphemes and phonemes using a joint n-gram model. In Proceedings of the 4th ISCA Tutorial and Research Workshop (ITRW) on Speech Synthesis, Perthshire, Scotland, UK, 29 August–1 September 2001; ISCA: Baixas, France, 2001; pp. 6–9.

- Chen, S.F. Conditional and joint models for grapheme-to-phoneme conversion. In Proceedings of the Interspeech 2003, Geneva, Switzerland, 1–4 September 2003; ISCA: Baixas, France, 2003; pp. 2033–2036.

- Taylor, P. Hidden Markov models for grapheme to phoneme conversion. In Proceedings of the Interspeech 2005, Lisbon, Portugal, 4–8 September 2005; ISCA: Baixas, France, 2005; pp. 1973–1976.

- Bisani, M.; Ney, H. Joint-sequence models for grapheme-to-phoneme conversion. Speech Communication 2008, 50(5), 434–451. [CrossRef]

- Illina, I.; Fohr, D.; Jouvet, D. Grapheme-to-phoneme conversion using conditional random fields. In Proceedings of the Interspeech 2011, Florence, Italy, 27–31 August 2011; ISCA: Baixas, France, 2011; pp. 2313–2316.

- Novak, J.R.; Minematsu, N.; Hirose, K. Failure transitions for joint n-gram models and G2P conversion. In Proceedings of the Interspeech 2013, Lyon, France, 25–29 August 2013; ISCA: Baixas, France, 2013; pp. 1821–1825.

- Bilcu, E.B. Text-to-Phoneme Mapping Using Neural Networks: A Neural Approach, 2nd ed.; Smith, A., trans.; Tampere University of Technology: Tampere, Finland, 2008; pp. 47–75.

- Ploujnikov, A.; Ravanelli, M. SoundChoice: Grapheme-to-phoneme models with semantic disambiguation. In Proceedings of the Interspeech 2022, Incheon, South Korea, 18–22 September 2022; ISCA: Baixas, France, 2022; pp. 4561–4565.

- Wang, C.; Huang, P.; Zou, Y.; Cheng, N. LiteG2P: A fast, light and high accuracy model for grapheme-to-phoneme conversion. In Proceedings of the 2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Rhodes Island, Greece, 4–10 June 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 1–5.

- Jia, Y.; Zen, H.; Shen, J.; Zhang, Y.; Wu, Y. PnG BERT: Augmented BERT on phonemes and graphemes for neural TTS. arXiv 2021, arXiv:2103.15060. [CrossRef]

- Řeháček, M.; Švec, J.; Tihelka, D. T5G2P: Using text-to-text transfer transformer for grapheme-to-phoneme conversion. In Proceedings of the Interspeech 2021, Brno, Czech Republic, 30 August–3 September 2021; ISCA: Baixas, France, 2021; pp. 6–10.

- Dong, L.; Chen, H.; Guo, Y.; Liu, S.; Xu, B. Neural grapheme-to-phoneme conversion with pre-trained grapheme models. In Proceedings of the 2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Singapore, 22–27 May 2022; IEEE: Piscataway, NJ, USA, 2022; pp. 6202–6206.

- Qharabagh, M.F.; Dehghanian, Z.; Rabiee, H.R. LLM-powered grapheme-to-phoneme conversion: Benchmark and case study. In Proceedings of the 2025 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), San Francisco, CA, USA, 6–11 April 2025; IEEE: Piscataway, NJ, USA, 2025; pp. 1–5.

- Han, D.; Zhang, Y.; Liu, H.; Li, J. Improving grapheme-to-phoneme conversion through in-context knowledge retrieval with large language models. In Proceedings of the 2024 IEEE 14th International Symposium on Chinese Spoken Language Processing (ISCSLP), Hong Kong, China, 1–4 December 2024; IEEE: Piscataway, NJ, USA, 2024; pp. 631–635.

- Yolchuyeva, S.; Németh, G.; Gyires-Tóth, B. Transformer based grapheme-to-phoneme conversion. In Proceedings of the Interspeech 2019, Graz, Austria, 15–19 September 2019; ISCA: Baixas, France, 2019; pp. 2095–2099.

- Toshniwal, S.; Livescu, K. Jointly learning to align and convert graphemes to phonemes with neural attention models. In Proceedings of the 2016 IEEE Spoken Language Technology Workshop (SLT), San Diego, CA, USA, 13–16 December 2016; IEEE: Piscataway, NJ, USA, 2016; pp. 76–82. [CrossRef]

- Yao, K.; Zweig, G. Sequence-to-sequence neural net models for grapheme-to-phoneme conversion. In Proceedings of the Interspeech 2015, Dresden, Germany, 6–10 September 2015; ISCA: Baixas, France, 2015; pp. 3330–3334.

- Mousa, A.E.D.; Schuller, B. Deep bidirectional long short-term memory recurrent neural networks for grapheme-to-phoneme conversion utilizing complex many-to-many alignments. In Proceedings of the Interspeech 2016, San Francisco, CA, USA, 8–12 September 2016; ISCA: Baixas, France, 2016; pp. 2836–2840. [CrossRef]

- Milde, B.; Schmidt, C.; Köhler, J. Multitask sequence-to-sequence models for grapheme-to-phoneme conversion. In Proceedings of the Interspeech 2017, Stockholm, Sweden, 20–24 August 2017; ISCA: Baixas, France, 2017; pp. 2536–2540. [CrossRef]

- Arik, S.Ö.; Chrzanowski, M.; Coates, A.; Diamos, G.; Gibiansky, A.; Kang, Y.; Li, X.; Miller, J.; Ng, A.; Raiman, J.; et al. Deep voice: Real-time neural text-to-speech. In Proceedings of the 34th International Conference on Machine Learning (ICML 2017), Sydney, Australia, 6–11 August 2017; PMLR: Brookline, MA, USA, 2017; Volume 70, pp. 195–204.

- Chae, M.J.; Park, K.; Bang, L.; Kim, H.K. Convolutional sequence to sequence model with non-sequential greedy decoding for grapheme-to-phoneme conversion. In Proceedings of the 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Calgary, AB, Canada, 15–20 April 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 2486–2490.

- Wang, Y.; Bao, F.; Zhang, H.; Gao, G. Joint alignment learning-attention based model for grapheme-to-phoneme conversion. In Proceedings of the 2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Toronto, ON, Canada, 6–11 June 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 7788–7792.

- Zhao, C.; Wang, J.; Qu, X.; Zhang, C.; Li, Y. R-G2P: Evaluating and enhancing robustness of grapheme to phoneme conversion by controlled noise introducing and contextual information incorporation. In Proceedings of the 2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Singapore, 22–27 May 2022; IEEE: Piscataway, NJ, USA, 2022; pp. 6197–6201.

- Novak, J.R.; Minematsu, N.; Hirose, K.; Kawahara, T. Improving WFST-based G2P conversion with alignment constraints and RNNLM N-best rescoring. In Proceedings of the Interspeech 2012, Portland, OR, USA, 9–13 September 2012; ISCA: Baixas, France, 2012; pp. 2526–2529.

- Sun, H.; Tan, X.; Gan, J.W.; Liu, H.; Qin, T.; Zhao, S.; Liu, T.Y. Token-level ensemble distillation for grapheme-to-phoneme conversion. arXiv 2019, arXiv:1904.03446.

- Yamasaki, T. Grapheme-to-phoneme conversion for Thai using neural regression models. In Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT), Seattle, WA, USA, 10–15 July 2022; Association for Computational Linguistics: Stroudsburg, PA, USA, 2022; pp. 4251–4255.

- Kim, J.; Han, C.; Nam, G.; Lee, K.; Kim, S.; Lee, J. Good neighbors are all you need for Chinese grapheme-to-phoneme conversion. In Proceedings of the 2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Rhodes Island, Greece, 4–10 June 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 1–5.

- Peters, B.; Dehdari, J.; van Genabith, J. Massively multilingual neural grapheme-to-phoneme conversion. In Proceedings of the First Workshop on Building Linguistically Generalizable NLP Systems, Copenhagen, Denmark, 7 September 2017; Association for Computational Linguistics: Stroudsburg, PA, USA, 2017; pp. 19–26.

- Mortensen, D.R.; Dalmia, S.; Littell, P. Epitran: Precision G2P for many languages. In Proceedings of the Eleventh International Conference on Language Resources and Evaluation (LREC 2018), Miyazaki, Japan, 7–12 May 2018; European Language Resources Association (ELRA): Paris, France, 2018; pp. 2710–2714.

- Sokolov, A.; Rohlin, T.; Rastrow, A. Neural machine translation for multilingual grapheme-to-phoneme conversion. arXiv 2020, arXiv:2006.14194.

- Yu, M.; Nguyen, H.D.; Sokolov, A.; Rastrow, A.; Stolcke, A. Multilingual grapheme-to-phoneme conversion with byte representation. In Proceedings of the 2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Barcelona, Spain, 4–8 May 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 8234–8238.

- Zhu, J.; Zhang, C.; Jurgens, D. ByT5 model for massively multilingual grapheme-to-phoneme conversion. arXiv 2022, arXiv:2204.03067.

- Xue, L.; Constant, N.; Roberts, A.; Kale, M.; Al-Rfou, R.; Siddhant, A.; Barua, A.; Raffel, C. mT5: A massively multilingual pre-trained text-to-text transformer. arXiv 2020, arXiv:2010.11934. [CrossRef]

- Conneau, A.; Khandelwal, K.; Goyal, N.; Chaudhary, V.; Wenzek, G.; Guzmán, F.; Grave, É.; Ott, M.; Zettlemoyer, L.; Stoyanov, V. Unsupervised cross-lingual representation learning at scale. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics (ACL 2019), Florence, Italy, 28 July–2 August 2019; Association for Computational Linguistics: Stroudsburg, PA, USA, 2019; pp. 3445–3460.

- Route, J.; Hillis, S.; Etinger, I.C.; Smith, J.; Li, W. Multimodal, multilingual grapheme-to-phoneme conversion for low-resource languages. In Proceedings of the 2nd Workshop on Deep Learning Approaches for Low-Resource NLP (DeepLo 2019), Hong Kong, China, 3 November 2019; Association for Computational Linguistics: Stroudsburg, PA, USA, 2019; pp. 192–201.

- Ribeiro, M.S.; Comini, G.; Lorenzo-Trueba, J. Improving grapheme-to-phoneme conversion by learning pronunciations from speech recordings. arXiv 2023, arXiv:2307.16643.

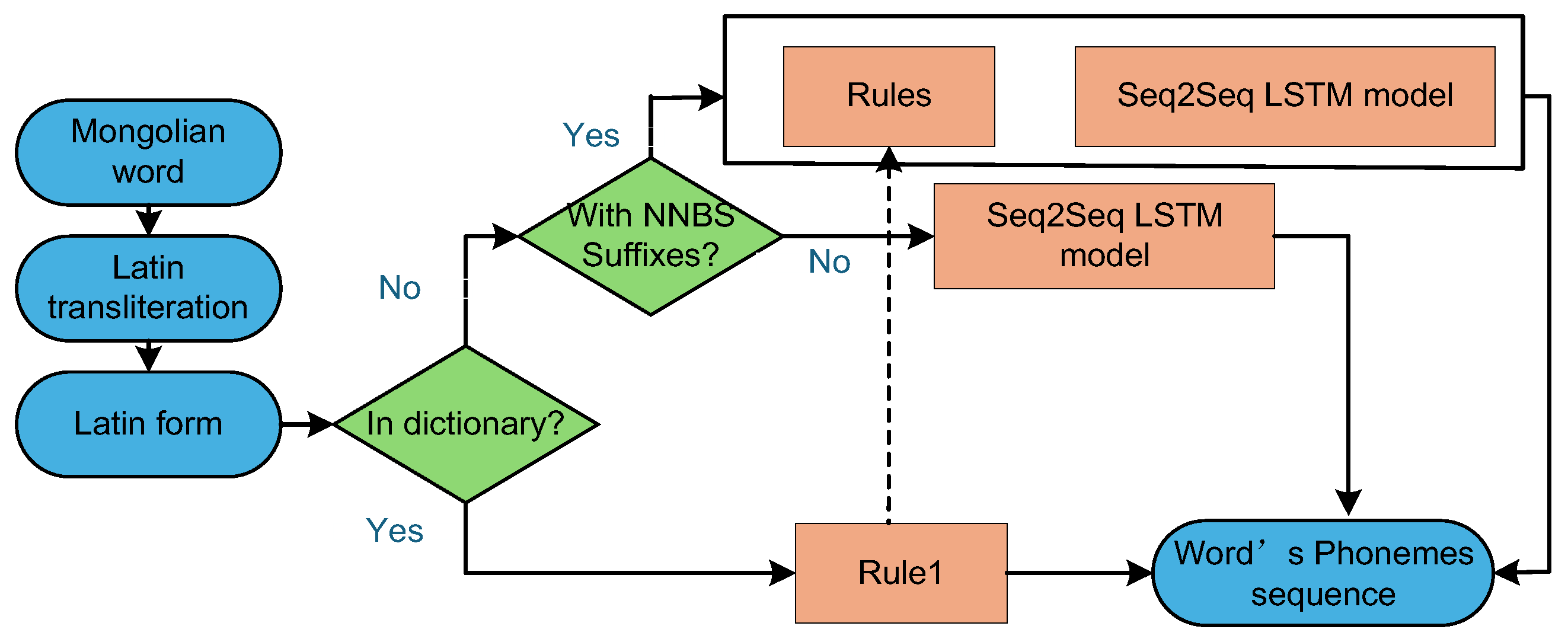

- Liu, Z.; Bao, F.; Gao, G.; Wang, Y. Mongolian grapheme to phoneme conversion by using hybrid approach. In Natural Language Processing and Chinese Computing; Zhang, M., Ng, V., Zhao, D., Li, S., Zan, H., Eds.; Lecture Notes in Computer Science, vol 11108; Springer: Cham, Switzerland, 2018; pp. 40–50.

- Bello, I.; Zoph, B.; Vasudevan, V.; Le, Q.V. Neural optimizer search with reinforcement learning. arXiv 2016, arXiv:1611.01578.

- Jin, H.; Song, Q.; Hu, X. Auto-keras: An efficient neural architecture search system. In Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining (KDD 2019), Anchorage, AK, USA, 4–8 August 2019; ACM: New York, NY, USA, 2019; pp. 1946–1956. [CrossRef]

- Li, K.; Xian, X.; Wang, J.; Zhang, Y.; Zhang, G. First-principle study on honeycomb fluorated-InTe monolayer with large Rashba spin splitting and direct bandgap. Applied Surface Science 2019, 471, 18–22. [CrossRef]

| Method | PER (%) | WER (%) |
|---|---|---|
| Joint n-gram model [38] | 7.0 | 28.5 |
| Joint n-gram model [38] | 5.9 | 24.7 |
| Joint maximum entropy (ME) n-gram model [36] | 5.88 | 24.53 |
| Failure transitions for joint n-gram models and G2P conversion [40] | 8.24 | 33.55 |
| Encoder-decoder LSTM [51] | 7.54 | 29.21 |
| Encoder-decoder LSTM (2 layers) [51] | 7.63 | 28.61 |
| Uni-directional LSTM [51] | 8.22 | 32.64 |
| Uni-directional LSTM (window size 6) [51] | 6.58 | 28.56 |
| Bi-directional LSTM [51] | 5.98 | 25.72 |
| Bi-directional LSTM (2 layers) [51] | 5.84 | 25.02 |
| Bi-directional LSTM (3 layers) [51] | 5.45 | 23.55 |
| DBLSTM-CTC 128 Units [36] | N/A | 27.9 |
| DBLSTM-CTC 512 Units [36] | N/A | 25.8 |
| LSTM with full delay [3] | 9.1 | 30.1 |
| DBLSTM-CTC 512 Units [3] | N/A | 25.8 |
| 8-gram FST [3] | N/A | 26.5 |
| DBLSTM-CTC 512 + 5-gram FST [3] | N/A | 21.4 |
| Many-to-many alignments with deep BLSTM RNNs [52] | 5.37 | 23.23 |
| Ensemble of 5 [Encoder-decoder + global attention] models [50] | 4.69 | 20.24 |
| Encoder-decoder with global attention [50] | 5.11 | 21.85 |
| Encoder-decoder + local-m attention [50] | 5.39 | 22.83 |
| Encoder-decoder + local-p attention [50] | 5.04 | 21.69 |
| Deep Bi-LSTM with many-to-many alignment [52] | 5.37 | 23.23 |
| Combination of Sequitur G2P and seq2seq-attention and multitask learning [53] | 5.76 | 24.88 |
| Encoder-Decoder GRU [54] | 5.8 | 28.7 |
| CNN with NSGD [55] | 5.58 | 24.10 |
| Transformer 3x3 [49] | 6.56 | 23.9 |
| Transformer 4x4 [49] | 5.23 | 22.1 |
| Transformer 5x5 [49] | 5.97 | 24.6 |
| Encoder-Decoder LSTM [4] | 5.68 | 28.44 |
| Encoder-Decoder LSTM with attention layer [4] | 5.23 | 28.36 |
| Encoder-Decoder Bi-LSTM [2] | 5.26 | 27.07 |
| Encoder-Decoder Bi-LSTM with attention layer [4] | 4.86 | 25.67 |
| Encoder CNN, decoder Bi-LSTM [4] | 5.17 | 26.82 |
| End-to-end CNN (with res. connections) [4] | 5.84 | 29.74 |
| Encoder CNN with res. connections, decoder Bi-LSTM [4] | 4.81 | 25.13 |
| Token-Level Ensemble Distillation with unlabeled source words [59] | 4.60 | 19.88 |
| Joint multi-gram + CRF [55] | 5.5 | 23.4 |
| MTL (512×3, λ=0.2) [56] | 5.26 | 22.96 |
| r-G2P (adv) [57] | 5.22 | 20.14 |
| LiteG2P-medium [43] | N/A | 24.3 |
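The PER and WER columns above can be illustrated with a minimal sketch. It assumes the definitions commonly used in G2P evaluation: PER is the total phoneme-level edit distance divided by the total number of reference phonemes, and WER is the share of words whose predicted pronunciation differs from the reference in any phoneme. The function names `edit_distance` and `per_and_wer`, the single-reference setup, and the toy ARPAbet-style data are illustrative assumptions, not taken from the cited works.

```python
from typing import List, Sequence, Tuple


def edit_distance(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Levenshtein distance between two phoneme sequences."""
    prev = list(range(len(hyp) + 1))  # distances from an empty reference prefix
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def per_and_wer(
    pairs: List[Tuple[Sequence[str], Sequence[str]]]
) -> Tuple[float, float]:
    """Return (PER, WER) in percent for (reference, hypothesis) pairs.

    Assumes one reference pronunciation per word and a non-empty test set.
    """
    total_phonemes = sum(len(ref) for ref, _ in pairs)
    total_edits = sum(edit_distance(ref, hyp) for ref, hyp in pairs)
    wrong_words = sum(1 for ref, hyp in pairs if list(ref) != list(hyp))
    per = 100.0 * total_edits / total_phonemes
    wer = 100.0 * wrong_words / len(pairs)
    return per, wer


if __name__ == "__main__":
    # Hypothetical toy data: one correct word, one word with a single substitution.
    toy = [
        ("K AE T".split(), "K AE T".split()),
        ("D AO G".split(), "D AA G".split()),
    ]
    per, wer = per_and_wer(toy)
    print(f"PER = {per:.2f}%, WER = {wer:.2f}%")
```

Under these definitions a single wrong phoneme makes the whole word count as an error, which is one reason the WER values in the table are several times larger than the corresponding PER values. Benchmarks that allow several reference pronunciations per word may score against the closest reference instead, which this sketch does not handle.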

| Language type | Existing problems | Method |
|---|---|---|
| Agglutinative languages (Turkish, Finnish) | Complex many-to-many mapping relationships | WFST + n-gram model |
| Polysynthetic language (Inuktitut) | Complex many-to-many mapping relationships | WFST + n-gram model |
| Low-resource languages | Data resources are scarce | Pre-trained model + transfer learning |
| Thai | Irregular mapping relationship | Neural regression model |
| Polyphonic languages (English, Chinese, Vietnamese) | Different pronunciations in different contexts | Context modeling |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).