Submitted: 10 September 2025

Posted: 23 September 2025

Abstract

Keywords:

1. Introduction

- We combine static program analysis with dynamic execution monitoring to guide seed prioritization in a hybrid fuzzing loop.

- We design an LLM-based input mutation strategy that generates syntactically valid and semantically diverse test cases based on program context.

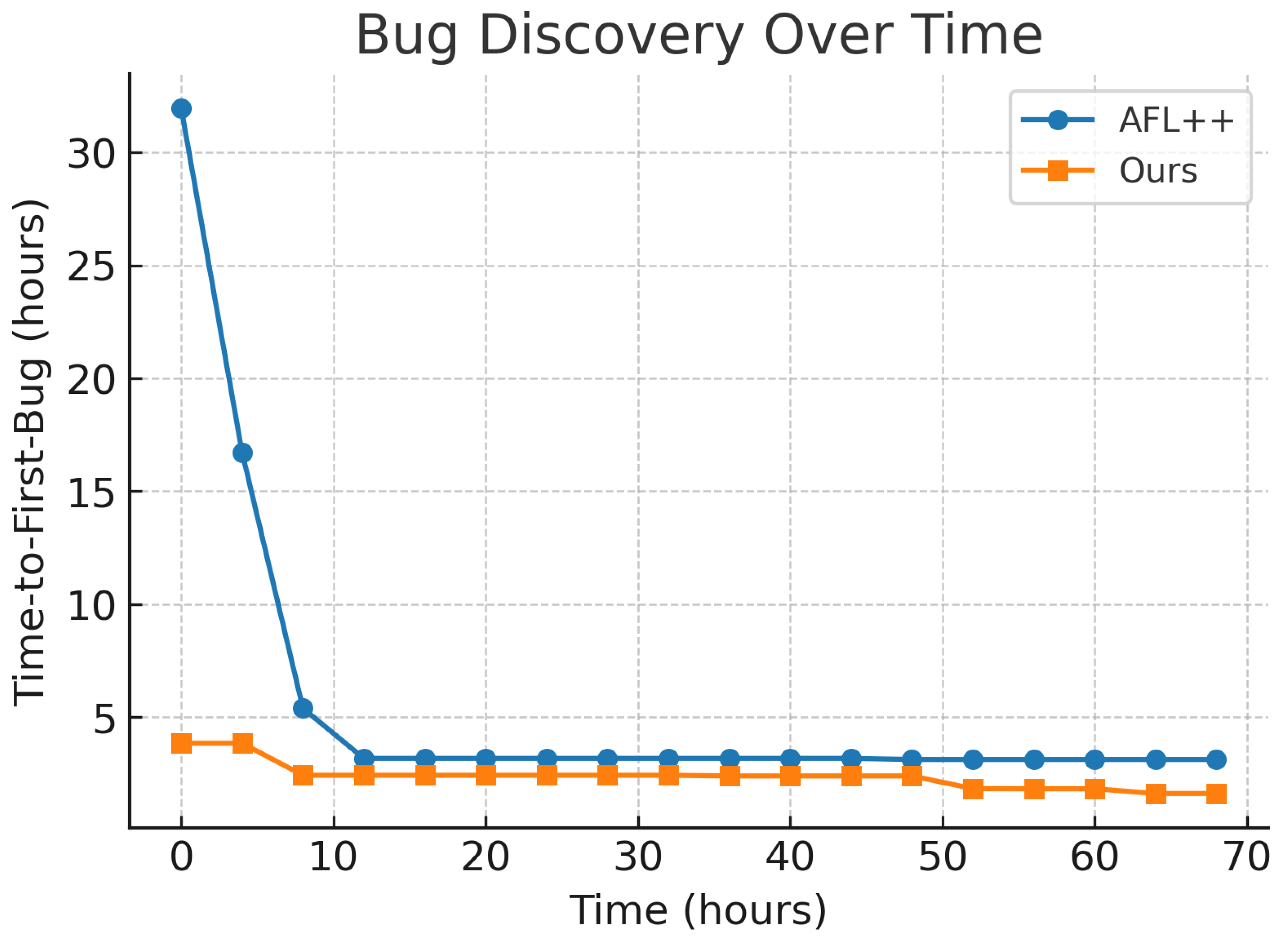

- We introduce a semantic feedback mechanism that scores execution novelty from runtime state changes, exception types, and output semantics. This lets the fuzzer prioritize inputs beyond coverage gains alone, which helps early bug discovery and semantic exploration.

2. Related Work

2.1. LLM-Assisted Fuzzing Tools

2.2. Technical Differentiation from Prior Work

- Hybrid Static–Dynamic Guidance: Unlike LLAMAFUZZ and Fuzz4All, we combine static program analysis (CFG extraction, API usage pattern detection) with dynamic execution traces to guide LLM mutation prompts. This ensures generated inputs are not only structurally valid but also tailored to unexplored program paths with specific API contexts.

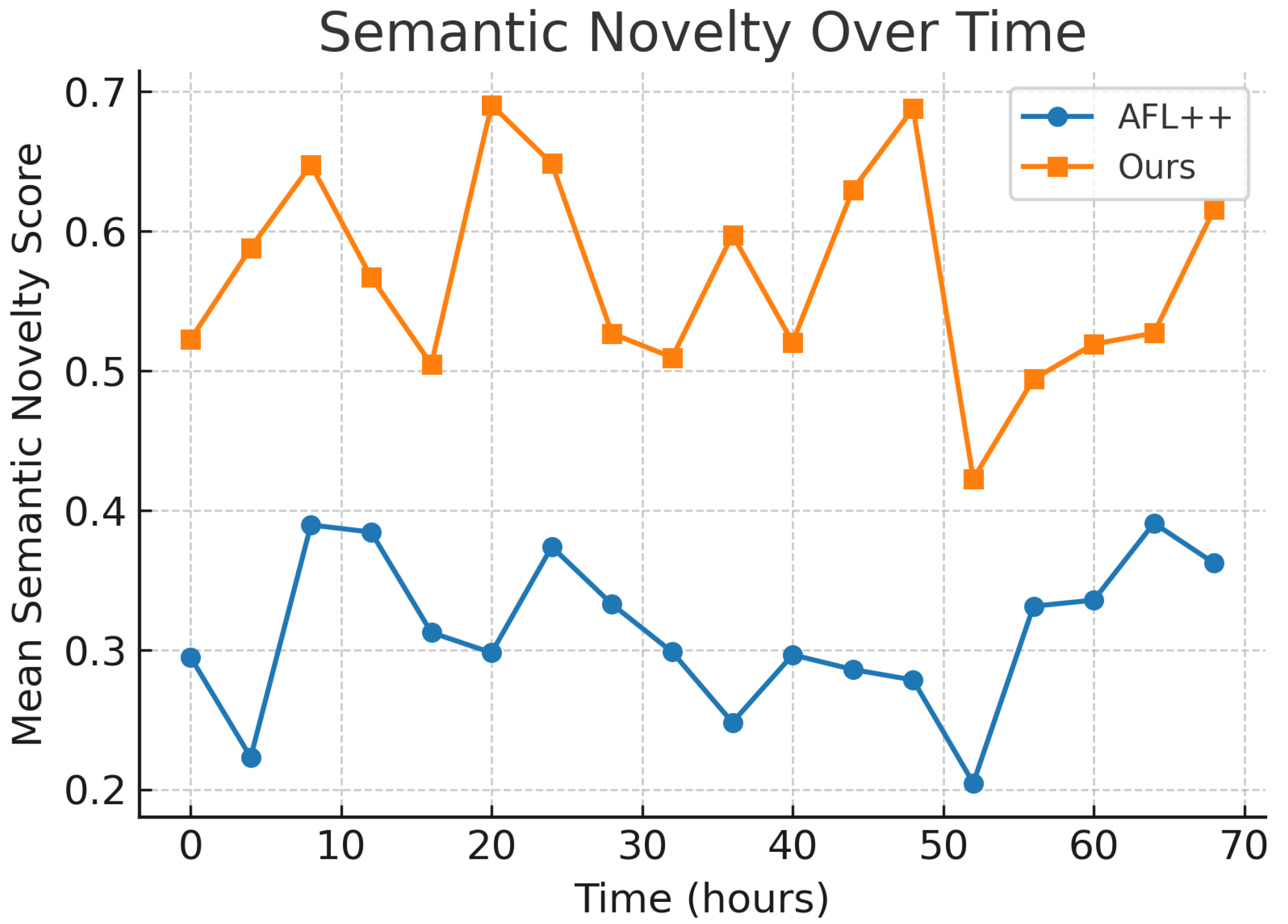

- Semantic Feedback Beyond Validity: Compared to ReFuzzer’s feedback loop, which stops at ensuring syntactic and compilation validity, our semantic feedback mechanism measures behavioral novelty via embedding-based similarity of runtime signals (e.g., output logs, exception types, memory state changes). This enables prioritization of inputs that alter program behavior in novel ways, even if no new code coverage is achieved.

2.3. Novelty Positioning

3. Methodology

3.1. Static Analysis Module

- Control Flow Graph (CFG) Extraction: For source code targets, we compile with LLVM to obtain the LLVM IR, then use llvm-cfg to extract the function-level CFG. For binaries, we use angr to perform symbolic lifting and recover basic blocks.

- API Usage Pattern Analysis: We identify security-relevant API calls (e.g., file I/O, network sockets, string parsing) using a predefined database. We then perform a backward slice from each API call to determine parameter constraints.

- Seed Annotation: We tag each initial seed with the set of functions and APIs it is likely to exercise, using static call graph traversal.
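The seed annotation step can be illustrated with a minimal sketch, assuming the call graph has already been recovered by the static analysis above. The graph contents, the `SECURITY_APIS` set, and the helper names `reachable_functions` and `annotate_seed` are hypothetical and are not taken from the paper's implementation.

```python
from collections import deque

# Hypothetical call graph (caller -> callees), as the static analysis might emit it.
CALL_GRAPH = {
    "main": ["parse_header", "read_chunk"],
    "parse_header": ["memcpy"],
    "read_chunk": ["fread", "inflate"],
    "inflate": [],
    "memcpy": [],
    "fread": [],
}

# Hypothetical excerpt of the security-relevant API database.
SECURITY_APIS = {"memcpy", "fread", "strcpy"}


def reachable_functions(call_graph, entry):
    """Breadth-first traversal: every function reachable from `entry`."""
    seen = {entry}
    queue = deque([entry])
    while queue:
        fn = queue.popleft()
        for callee in call_graph.get(fn, []):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


def annotate_seed(call_graph, entry_points, security_apis):
    """Tag a seed with the functions and APIs it is likely to exercise.

    `entry_points` are the functions the seed is assumed to reach first.
    """
    funcs = set()
    for entry in entry_points:
        funcs |= reachable_functions(call_graph, entry)
    return {
        "functions": sorted(funcs),
        "apis": sorted(funcs & security_apis),
    }
```

A static traversal like this over-approximates what a seed really executes, which is presumably why the tags are described as what the seed is "likely" to exercise.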

3.2. LLM-Guided Mutation

- Context Encoding: From dynamic execution traces, extract the function call sequence, parameter types, and observed input-output examples.

- Prompt Construction: Embed the context in a structured natural language format, specifying grammar rules, value ranges, and mutation objectives (e.g., “generate inputs that increase buffer length by 20%”).

- LLM Generation: Query the LLM at a set sampling temperature to produce k candidate inputs.

- Validation and Repair: Use a syntax schema validator to filter invalid inputs. Invalid entries are auto-repaired using a secondary LLM call or a regex-based sanitizer.
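The validation and repair step might look like the following sketch. It assumes JSON as the input format and uses Python's `json` parser as a stand-in for the syntax schema validator; the single trailing-comma rule is only one example of what a regex-based sanitizer could do, and the secondary LLM repair call is omitted. All function names are hypothetical.

```python
import json
import re

# Matches a comma that directly precedes a closing brace or bracket.
# Caveat: this also rewrites ",}" inside string values, so it is a rough heuristic.
_TRAILING_COMMA = re.compile(r",\s*([}\]])")


def is_valid(candidate: str) -> bool:
    """Stand-in validator: accept anything that parses as JSON."""
    try:
        json.loads(candidate)
        return True
    except json.JSONDecodeError:
        return False


def regex_repair(candidate: str) -> str:
    """Strip surrounding whitespace and drop trailing commas."""
    return _TRAILING_COMMA.sub(r"\1", candidate.strip())


def validate_and_repair(candidates):
    """Keep valid candidates; try one regex repair on the rest, drop failures."""
    accepted = []
    for cand in candidates:
        if is_valid(cand):
            accepted.append(cand)
            continue
        repaired = regex_repair(cand)
        if is_valid(repaired):
            accepted.append(repaired)
    return accepted
```

Trying the cheap regex repair before any second LLM call keeps the common failure cases off the slow path.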

3.3. Semantic Feedback Optimization

- Embedding Generation: Convert each runtime signal (e.g., output logs, exception types, memory state changes) into a vector embedding using CodeBERT or Sentence-BERT.

- Dimensionality Reduction: Apply incremental PCA to reduce the embedding dimensionality in real time, lowering the cost of similarity computation.

- Approximate Similarity Search: Store reduced embeddings in a FAISS index, allowing nearest-neighbor queries.
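The novelty check behind these steps can be sketched in plain Python. This is not the paper's pipeline: an exact brute-force cosine search stands in for the FAISS index, the embedding and PCA steps are assumed to have already produced the input vectors, and defining novelty as one minus the highest cosine similarity to any stored vector is an assumption. The class name `NoveltyIndex` is hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class NoveltyIndex:
    """Brute-force stand-in for an approximate nearest-neighbor index."""

    def __init__(self, threshold):
        self.threshold = threshold  # assumed novelty cut-off
        self.vectors = []           # embeddings of executions kept so far

    def novelty(self, vec):
        """1 - max cosine similarity to stored vectors; 1.0 for an empty index."""
        if not self.vectors:
            return 1.0
        return 1.0 - max(cosine_similarity(vec, v) for v in self.vectors)

    def observe(self, vec):
        """Score `vec`; store it only when it is novel enough."""
        score = self.novelty(vec)
        is_novel = score >= self.threshold
        if is_novel:
            self.vectors.append(list(vec))
        return score, is_novel
```

The brute-force search costs time linear in the number of stored vectors per query, which is the cost the dimensionality reduction and the approximate index are meant to cut.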

3.4. Implementation Details and Parameter Settings

Prompt Construction Template.

- Execution Context: Function call chain, argument types, observed parameter ranges.

- Objective Instruction: Mutation goal (e.g., “increase string length by 20%”, “introduce uncommon delimiter”).

- Grammar and Syntax Rules: Format constraints (e.g., JSON schema, packet field definitions).
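The three template components listed above could be assembled as in the sketch below. The section titles follow the list; the function name `build_prompt`, its parameters, and the exact text layout are hypothetical, since the original template wording is not reproduced here.

```python
def build_prompt(call_chain, arg_types, param_ranges, objective, grammar_rules):
    """Assemble the three template sections into one prompt string.

    param_ranges maps a parameter name to an observed (low, high) pair.
    """
    context = [
        "Call chain: " + " -> ".join(call_chain),
        "Argument types: " + ", ".join(arg_types),
        "Observed ranges: " + "; ".join(
            f"{name} in [{low}, {high}]"
            for name, (low, high) in sorted(param_ranges.items())
        ),
    ]
    sections = [
        ("Execution Context", context),
        ("Objective Instruction", [objective]),
        ("Grammar and Syntax Rules", list(grammar_rules)),
    ]
    blocks = []
    for title, body in sections:
        # One titled block per section, each entry rendered as a bullet.
        blocks.append(title + ":\n" + "\n".join("- " + line for line in body))
    return "\n\n".join(blocks)


# Illustrative call; the values are made up for the example.
example_prompt = build_prompt(
    call_chain=["main", "png_read_info"],
    arg_types=["png_structp", "png_infop"],
    param_ranges={"width": (1, 4096)},
    objective="increase string length by 20%",
    grammar_rules=["Output must be valid JSON"],
)
```

Keeping the sections in a fixed order gives every query the same structure, which is what makes a prompt prefix cacheable.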

Incremental PCA Parameter Choice.

Semantic Novelty Threshold.

API Usage Database Construction.

- Standard Libraries: POSIX libc, OpenSSL, libpcap, zlib.

- Common Third-Party Libraries: Protocol parsers, image codecs, database engines.

- Security-Relevant APIs: Functions identified from CVE reports (e.g., string manipulation, memory allocation).

3.5. Full Execution Loop

- Select a seed from the pool based on static-dynamic priority.

- Apply LLM-guided mutation to produce candidates.

- Execute candidates with instrumentation.

- Compute coverage and semantic scores; update seed pool.

- Repeat until time budget expires.
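The loop above can be written as a short skeleton. This is a sketch under stated assumptions: seed selection, mutation, execution, and the combined coverage and semantic check are passed in as callables with hypothetical names, and the pool update is reduced to appending interesting candidates.

```python
import time


def fuzz_loop(seed_pool, pick_seed, mutate, execute, is_interesting,
              time_budget_s, clock=time.monotonic):
    """Run select -> mutate -> execute -> score until the budget expires.

    pick_seed(pool)        -> one seed (static-dynamic priority lives here)
    mutate(seed)           -> iterable of candidates (LLM-guided in the paper)
    execute(candidate)     -> execution result from the instrumented target
    is_interesting(result) -> True if coverage or semantic score justifies keeping it
    Returns the number of executions performed.
    """
    deadline = clock() + time_budget_s
    executions = 0
    while seed_pool and clock() < deadline:
        seed = pick_seed(seed_pool)
        for candidate in mutate(seed):
            result = execute(candidate)
            executions += 1
            if is_interesting(result):
                seed_pool.append(candidate)
    return executions
```

The budget is only checked between seeds, so a slow mutation batch can overrun the deadline slightly; a stricter variant would also check inside the inner loop.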

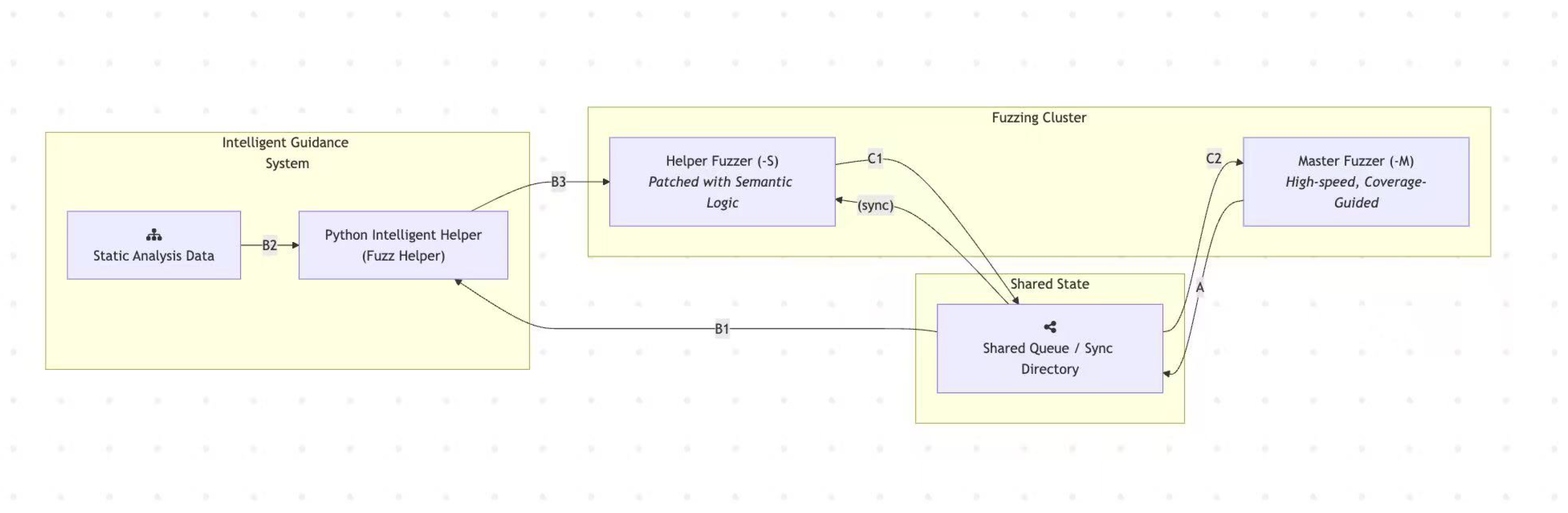

3.6. Detailed Workflow of Components

- A. Master Fuzzer (-M). The Master Fuzzer performs high-speed, coverage-guided fuzzing. It continuously adds new seeds that increase code coverage to the Shared Queue, serving as the backbone for throughput-oriented exploration.

- B1. Python Helper – Seed Selection. The Python Helper selects an inspirational seed from the Shared Queue as the basis for semantic-aware mutation.

- B2. Context Construction. The Helper reads static analysis data to build a rich program context. This information is encoded into structured prompts that guide the LLM.

- B3. LLM-Guided Input Generation. Leveraging the constructed context, the Helper queries the LLM to generate a new candidate input. This input, together with its semantic score, is passed to the Helper Fuzzer (-S) for evaluation.

- C1. Helper Fuzzer (-S). The Helper Fuzzer executes the LLM-generated input using its patched semantic feedback logic. If the execution is deemed semantically novel, the new seed is added to the Shared Queue.

- C2. Feedback to Master Fuzzer. The Master Fuzzer periodically synchronizes with the Shared Queue. New high-quality seeds contributed by the Helper Fuzzer are then amplified through large-scale, in-depth mutation campaigns.

4. Results and Analysis

4.1. Experimental Setup

- libpng v1.6.39 — image parsing library (memory safety vulnerabilities).

- tcpdump v4.99.3 — packet capture utility (protocol parsing vulnerabilities).

- sqlite v3.43.1 — embedded database engine (logic and query processing bugs).

- CPU: AMD EPYC 7543P (32 cores, 2.8 GHz)

- RAM: 128 GB DDR4

- Graphics Card: RTX 3090 (24 GB)

- Storage: NVMe SSD (2 TB)

- OS: Ubuntu 22.04 LTS, Linux kernel 5.15

4.2. Semantic Novelty Scoring Definition

4.3. Additional Time Window Results

4.4. Time Window Sensitivity

| Approach | 24h | 48h | 72h |
|---|---|---|---|
| AFL++ | 3 | 4 | 5 |
| Ours | 4 | 5 | 7 |

4.5. Novelty Threshold Sensitivity

4.6. LLM Model Dependency Analysis

4.7. Failure Case Analysis (Quantitative)

- Coverage improvement over AFL++:

- Novelty score mean difference:

- Unique bugs: 0 (both methods)

4.8. Statistical Significance

4.9. Bug Type Analysis

- libpng: heap buffer overflow (CVE-2023-29488), integer underflow.

- tcpdump: out-of-bounds read in protocol dissector, format string bug.

- sqlite: incorrect query plan generation (logic error), uninitialized memory read.

4.10. Failure Case Analysis

- Static analysis providing less actionable control-flow/API hints in computation-centric programs.

- Semantic novelty metric being less discriminative when runtime signals lack structured output or exception diversity.

5. Discussion

5.1. Impact of Semantic Feedback

5.2. Advantages Over Existing LLM-Assisted Fuzzers

5.3. Limitations

- LLM Quality and Cost: Mutation quality depends on the capability of the LLM. Commercial APIs (e.g., GPT-4) incur recurring costs and potential rate limits.

- Overhead: Although caching mitigates latency, mutation throughput is still lower than purely in-memory mutators.

- Domain Generalization: While our method performed well on parsing- and protocol-heavy software, performance on purely computational workloads remains to be validated.

- Bug Yield: Our bug yield is competitive with, but not always superior to, coverage-focused baselines such as LLAMAFUZZ, potentially because of the overhead of semantic embedding computation.

6. Conclusion and Future Work

6.1. Future Work

- Adaptive Prompt Optimization: Incorporating reinforcement learning to evolve prompts based on past mutation success rates.

- Distributed Deployment: Scaling the framework to large compute clusters with parallelized LLM queries and semantic scoring.

- Multi-Agent Collaboration: Using multiple specialized LLM agents for different input formats or protocol states.

- Lightweight On-Device Models: Reducing cost and latency by employing fine-tuned local LLMs for common mutation tasks.

- Extended Feedback Signals: Integrating taint analysis and symbolic execution into the semantic scoring process for deeper path targeting.

References

- Zhang, X.; Chen, M.; Li, W.; Huang, Z.; Wang, K. LLAMAFUZZ: Large Language Model Enhanced Greybox Fuzzing. arXiv 2024, arXiv:2406.07714.

- Shen, Z.; Zhou, Y.; Wang, Y.; Wang, H.; Zhang, W.; Li, B.; Zhang, D.S.; Zhao, B. Fuzz4All: Universal Fuzzing with Large Language Models. arXiv 2023, arXiv:2308.04748.

- Liu, Y.; Jiang, H.; Zhang, Y.; Wang, Z.; Chen, J. FuzzCoder: Byte-level Fuzzing Test via Large Language Model. arXiv 2024, arXiv:2409.01944.

- Li, C.; Wang, T.; Yang, H.; Zhao, L.; Zhang, H. ReFuzzer: Feedback-Driven Approach to Enhance Validity of LLM-Generated Test Programs. arXiv 2025, arXiv:2508.03603.

- Wang, C.; Quach, H.T. Exploring the effect of sequence smoothness on machine learning accuracy. In Proceedings of the International Conference On Innovative Computing And Communication. Springer Nature Singapore; 2024; pp. 475–494.

- Li, C.; Zheng, H.; Sun, Y.; Wang, C.; Yu, L.; Chang, C.; Tian, X.; Liu, B. Enhancing multi-hop knowledge graph reasoning through reward shaping techniques. In Proceedings of the 2024 4th International Conference on Machine Learning and Intelligent Systems Engineering (MLISE). IEEE; 2024; pp. 1–5.

- Wang, C.; Sui, M.; Sun, D.; Zhang, Z.; Zhou, Y. Theoretical analysis of meta reinforcement learning: Generalization bounds and convergence guarantees. In Proceedings of the International Conference on Modeling, Natural Language Processing and Machine Learning; 2024; pp. 153–159.

- Wang, C.; Yang, Y.; Li, R.; Sun, D.; Cai, R.; Zhang, Y.; Fu, C. Adapting llms for efficient context processing through soft prompt compression. In Proceedings of the International Conference on Modeling, Natural Language Processing and Machine Learning; 2024; pp. 91–97.

- Wu, T.; Wang, Y.; Quach, N. Advancements in natural language processing: Exploring transformer-based architectures for text understanding. In Proceedings of the 2025 5th International Conference on Artificial Intelligence and Industrial Technology Applications (AIITA). IEEE; 2025; pp. 1384–1388.

- Sang, Y. Robustness of Fine-Tuned LLMs under Noisy Retrieval Inputs 2025.

- Sang, Y. Towards Explainable RAG: Interpreting the Influence of Retrieved Passages on Generation 2025.

- Gao, Z. Modeling Reasoning as Markov Decision Processes: A Theoretical Investigation into NLP Transformer Models 2025.

- Gao, Z. Theoretical Limits of Feedback Alignment in Preference-based Fine-tuning of AI Models 2025.

- Liu, X.; Wang, Y.; Chen, J. HGFuzzer: Directed Greybox Fuzzing via Large Language Model. arXiv 2025, arXiv:2505.03425.

- Zhao, L.; Yang, H.; Zhang, H. ELFuzz: Efficient Input Generation via LLM-driven Synthesis Over Fuzzer Space. arXiv 2025, arXiv:2506.10323.

- Zhang, Z. Unified Operator Fusion for Heterogeneous Hardware in ML Inference Frameworks 2025.

- Quach, N.; Wang, Q.; Gao, Z.; Sun, Q.; Guan, B.; Floyd, L. Reinforcement Learning Approach for Integrating Compressed Contexts into Knowledge Graphs. In Proceedings of the 2024 5th International Conference on Computer Vision, Image and Deep Learning (CVIDL); 2024; pp. 862–866.

- Gao, Z. Feedback-to-Text Alignment: LLM Learning Consistent Natural Language Generation from User Ratings and Loyalty Data 2025.

- Liu, M.; Sui, M.; Nian, Y.; Wang, C.; Zhou, Z. Ca-bert: Leveraging context awareness for enhanced multi-turn chat interaction. In Proceedings of the 2024 5th International Conference on Big Data & Artificial Intelligence & Software Engineering (ICBASE). IEEE; 2024; pp. 388–392.

- Huang, Z.; Liu, Y.; Zhang, Y.; Wang, Z. ChatFuMe: LLM-Assisted Model-Based Fuzzing of Protocol Implementations. arXiv 2025, arXiv:2508.01750.

- Shi, W.; Zhang, Y.; Xing, X.; Xu, J. Harnessing large language models for seed generation in greybox fuzzing. arXiv 2024, arXiv:2411.18143.

- Hu, X.; Chen, P.Y.; Ho, T.Y. Radar: Robust ai-text detection via adversarial learning. Advances in Neural Information Processing Systems 2023, 36, 15077–15095.

- Ma, X.; Chen, P.Y.; He, Y.; Zhang, Y. FuzzGPT: Large Language Models are Edge-Case Fuzzers for Deep Learning Libraries. arXiv 2023, arXiv:2304.02014.

- Feng, X.; Wang, H.; Zhang, W.; Zhao, B. WhiteFox: White-Box Compiler Fuzzing Empowered by Large Language Models. arXiv 2023, arXiv:2310.15991.

- Li, M.; Zhou, Y.; Zhang, D.S. CHEMFUZZ: Large Language Models-assisted Fuzzing for Quantum Chemistry Software. arXiv 2023, arXiv:2308.04748.

| Approach | 24h | 48h | 72h |
|---|---|---|---|
| AFL++ | 4 | 6 | 7 |
| Ours | 5 | 8 | 9 |

| Approach | 24h | 48h | 72h |
|---|---|---|---|
| AFL++ | 2 | 3 | 4 |
| Ours | 4 | 6 | 6 |

| Threshold | 0.15 | 0.20 | 0.25 | 0.30 | 0.35 |
|---|---|---|---|---|---|
| Unique Bugs Found | 5 | 8 | 9 | 7 | 5 |

| Model | Valid Input Rate | Unique Bugs | Mean TTFB (h) |
|---|---|---|---|
| GPT-4 | 94% | 9 | 4.2 |
| LLaMA-3-70B | 87% | 11 | 6.1 |

| Approach | libpng | tcpdump | sqlite |
|---|---|---|---|
| AFL++ | 5 | 7 | 4 |
| Ours | 7 | 9 | 6 |
| LLAMAFUZZ | 8 | 10 | 7 |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).