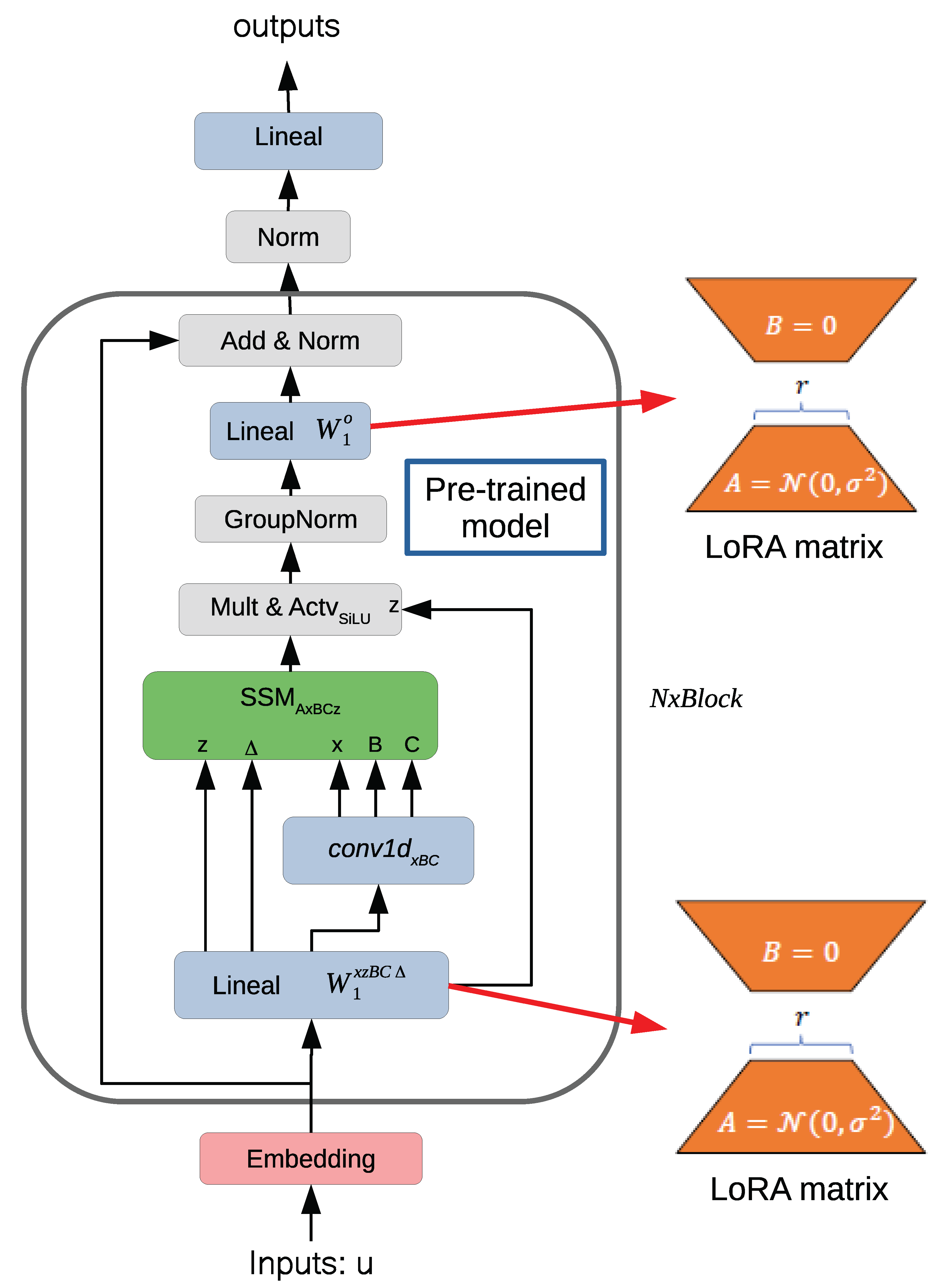

This work presents the first application of LoRA, a Parameter-Efficient Fine-Tuning technique, within the Codestral Mamba framework, evaluating how effectively the model generates accurate and contextually relevant code from natural language descriptions. Performance was assessed on two benchmark datasets, CONCODE/CodeXGLUE and TestCase2Code, with a focus on how much LoRA integration improves code generation quality.

4.2.2. Model Performance on the TestCase2Code Dataset

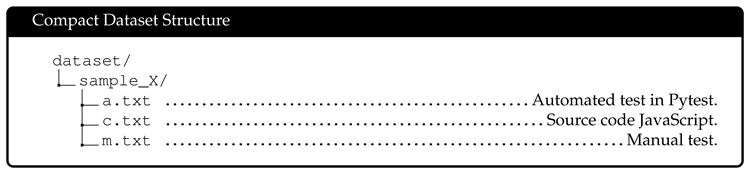

The proprietary TestCase2Code dataset, built from real project data, provided a distinct benchmark for evaluating the model’s ability to generate functional test cases. As detailed in Table 5, the baseline Codestral Mamba model scored low on every reported metric: n-gram match, weighted n-gram match (w-ngram), Syntax Match (SM), Dataflow Match (DM), and CodeBLEU.

After fine-tuning with LoRA, the Codestral Mamba model improved on every evaluated metric: the n-gram score rose from 4.82% to 56.2%, the w-ngram score from 11.8% to 67.3%, SM from 39.5% to 91.0%, DM from 51.4% to 84.3%, and CodeBLEU from 26.9% to 74.7%. Because SM and DM compare the abstract syntax trees and data-flow structure of the generated code against the reference, these gains indicate that LoRA produces code that matches the references not only lexically but also syntactically and semantically.
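CodeBLEU is commonly defined as a weighted sum of its four components, CodeBLEU = α·BLEU + β·BLEU_weight + γ·Match_ast + δ·Match_df, with α = β = γ = δ = 0.25 as the usual default. The section does not state which weights were used; the sketch below assumes the equal default weights and shows that the reported component scores reproduce the reported CodeBLEU values under that assumption.

```python
def codebleu(ngram: float, weighted_ngram: float, syntax_match: float,
             dataflow_match: float,
             weights=(0.25, 0.25, 0.25, 0.25)) -> float:
    """Weighted sum of the four CodeBLEU components (all given in percent).

    Equal weights of 0.25 are an assumption (the common default), not a
    value stated in the text.
    """
    components = (ngram, weighted_ngram, syntax_match, dataflow_match)
    return sum(w * c for w, c in zip(weights, components))

# Component scores reported for the TestCase2Code dataset (percent).
baseline = codebleu(4.82, 11.8, 39.5, 51.4)
lora = codebleu(56.2, 67.3, 91.0, 84.3)

print(f"baseline CodeBLEU ~ {baseline:.1f}%")  # -> 26.9%
print(f"LoRA CodeBLEU     ~ {lora:.1f}%")      # -> 74.7%
```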

Fine-tuning with LoRA was also fast: 200 epochs completed in 20 minutes. This efficiency makes rapid experimentation and iterative fine-tuning practical and substantially shortens the time needed to reach the best-performing configuration.
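Part of this speed follows from how LoRA works: the pretrained weight matrix W (d × k) stays frozen and only two low-rank factors, B (d × r) and A (r × k), are trained, so the trainable parameters per adapted matrix drop from d·k to r·(d + k). The sketch below illustrates the arithmetic; the layer width d = k = 4096 and rank r = 8 are hypothetical, since the section reports neither the adapted layer sizes nor the LoRA rank. It also converts the reported wall-clock figure into time per epoch.

```python
def full_params(d: int, k: int) -> int:
    """Trainable parameters when fully fine-tuning a d x k weight matrix."""
    return d * k

def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for the LoRA factors B (d x r) and A (r x k)."""
    return r * (d + k)

# Hypothetical sizes: not reported for the Codestral Mamba setup in the text.
d, k, r = 4096, 4096, 8
full = full_params(d, k)
lora = lora_params(d, k, r)
print(f"full: {full:,}  LoRA: {lora:,}  ratio: {lora / full:.2%}")
# -> full: 16,777,216  LoRA: 65,536  ratio: 0.39%

# Reported wall-clock: 200 epochs in 20 minutes.
seconds_per_epoch = 20 * 60 / 200
print(f"~{seconds_per_epoch:.0f} s per epoch")  # -> ~6 s per epoch
```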

4.2.3. Interpretation of Results

The results from both datasets underscore the significant impact of integrating LoRA into the Codestral Mamba model. The fine-tuned model demonstrated competitive performance relative to state-of-the-art models, highlighting its potential for real-world applications in automated test case generation and code development. The computational efficiency of the fine-tuning process further enhances the model’s suitability for tasks requiring quick deployment and continuous optimization.

The integration of LoRA with the Codestral Mamba model represents a substantial advancement in the field of automated code generation. The model’s ability to generate accurate, contextually relevant code, combined with its computational efficiency, positions it as a valuable tool for advancing software testing and development workflows.

4.2.4. Practical Performance Evaluation

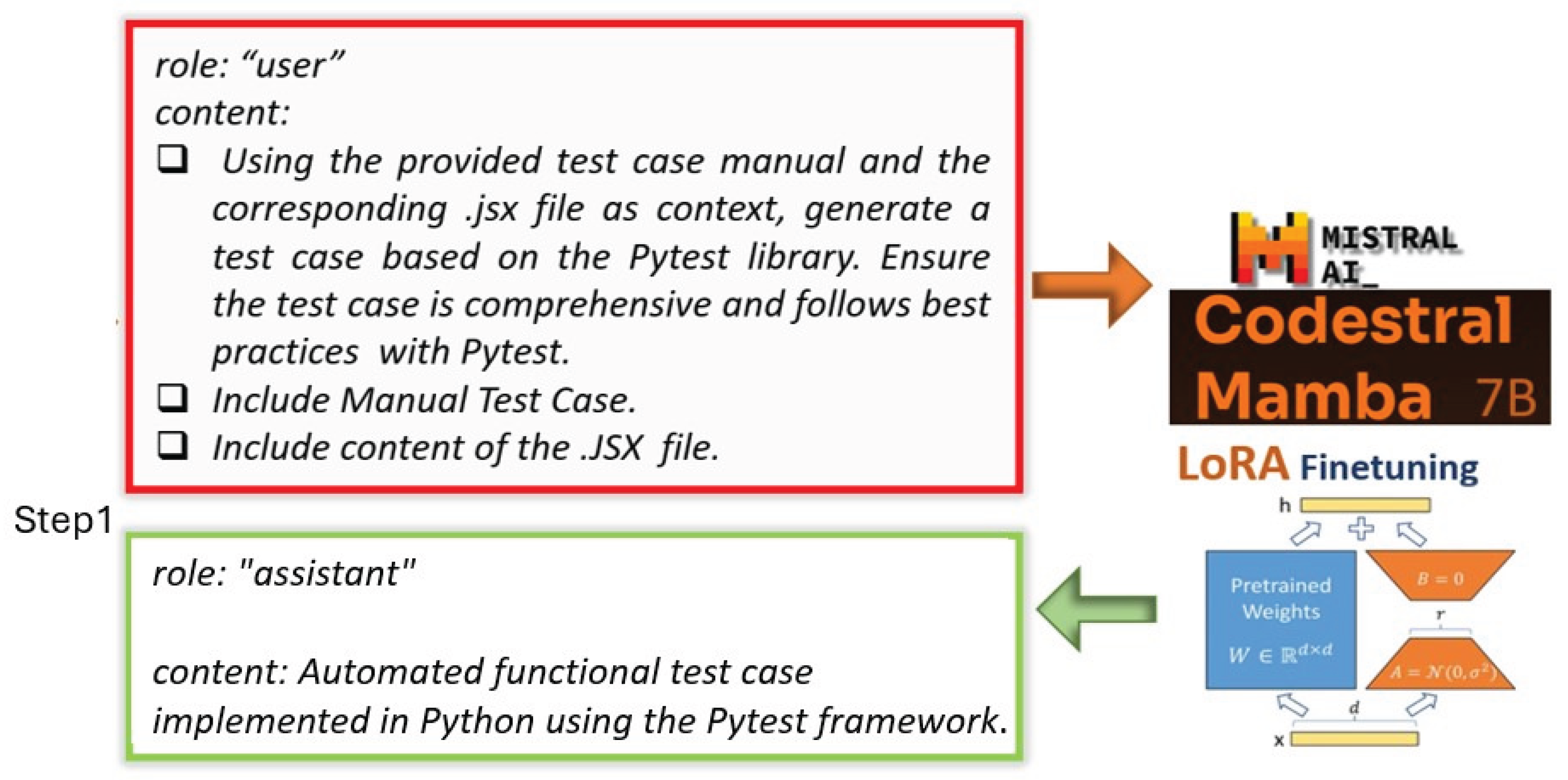

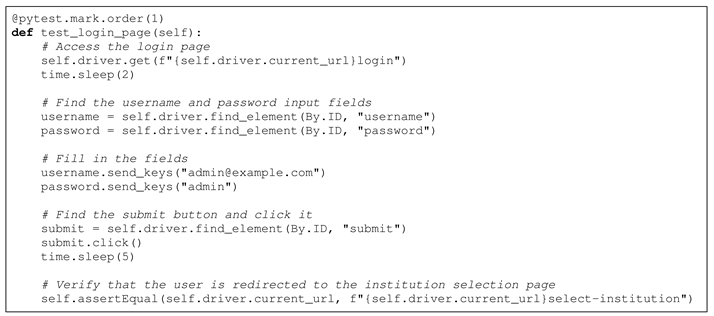

This section presents the practical evaluation of the trained Codestral Mamba model through the Codestral Mamba_QA AI Chatbot, a web-based service built on the fine-tuned model. Rather than relying solely on quantitative metrics, the evaluation used expert inspection and visual analysis of the model’s outputs. As shown in Figure 2, the process followed a prompt–response paradigm: the system input (user role) supplied task-specific instructions combining a manual test case description with the corresponding .jsx file, and the fine-tuned model, guided by the system prompt, returned the system response (assistant role), namely automated Pytest test case code.
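The prompt–response paradigm maps naturally onto the chat-message format used by many instruction-tuned models. The sketch below assembles one request from a manual test case and a .jsx snippet; the function name `build_messages`, the system-prompt wording, and the section labels inside the user turn are hypothetical, since the section does not reproduce the actual prompt used by Codestral Mamba_QA.

```python
from typing import Dict, List

# Placeholder wording (hypothetical): the real system prompt is not given in the text.
SYSTEM_PROMPT = (
    "You are a QA engineer. Convert the manual test case into an automated "
    "Pytest test that uses locators from the provided .jsx component."
)

def build_messages(manual_test_case: str, jsx_source: str) -> List[Dict[str, str]]:
    """Assemble a chat-style request: system prompt plus one user turn.

    The model's reply would be appended afterwards with role "assistant".
    """
    user_content = (
        "Manual test case:\n" + manual_test_case.strip()
        + "\n\nComponent source (.jsx):\n" + jsx_source.strip()
    )
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_content},
    ]

messages = build_messages(
    "1. Open the login page\n2. Enter valid credentials\n3. Click 'Log in'",
    '<button id="login-btn">Log in</button>',
)
for m in messages:
    print(m["role"], "->", m["content"][:40].replace("\n", " "))
```

Keeping the manual steps and the component source in one user turn gives the model both the intended behavior and the concrete element identifiers it can turn into locators.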

The model was fine-tuned on the proprietary TestCase2Code dataset, with evaluation cases strictly excluded from the training set to ensure a fair assessment. Testing used manual cases from the TestCase2Code database, newly designed cases, and general instruction-based prompts. The analysis focused on whether the model generates coherent, syntactically valid, and structurally sound test case code. Experiments varied the LoRA scaling factor, temperature, prompt design, and user input to gauge the model’s practical utility, adaptability, and robustness in automated test case generation.

The evaluation considered the following elements:

System Prompt: Predefined instructions steering the model’s behavior to align outputs with best practices in Pytest-based test case generation.

Temperature: A parameter controlling response variability; lower values promote determinism and structure, while higher values introduce diversity.

LoRA Scaling Factor: A multiplier on the LoRA weight update that controls how strongly the fine-tuned adaptation influences the model; a value of 0 disables the adapter, and higher values increase domain-specific adaptation.

System Input: The user-provided instruction or test case description that serves as the basis for generating a response.

System Response: The output generated by the fine-tuned model, typically comprising structured Pytest code and, in some cases, explanatory details of the test logic.
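One common way to expose a LoRA scaling factor s is to multiply the low-rank update before adding it to the frozen weight: W_eff = W + s·(B·A). Under this formulation s = 0 reproduces the base model exactly, which is consistent with the baseline configuration described next. The section does not specify how the factor is applied internally (some libraries instead fold a fixed α/r ratio into the update), so treat the sketch below as an assumption; the 2 × 2 matrices are toy values.

```python
from typing import List

Matrix = List[List[float]]

def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product (a: n x m, b: m x p)."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def lora_weight(w: Matrix, b: Matrix, a: Matrix, scale: float) -> Matrix:
    """Effective weight W + scale * (B @ A); W stays frozen, only B and A are trained."""
    delta = matmul(b, a)
    return [[w[i][j] + scale * delta[i][j] for j in range(len(w[0]))]
            for i in range(len(w))]

# Toy frozen weight with a rank-1 adapter (B: 2x1, A: 1x2); values are illustrative.
W = [[1.0, 0.0], [0.0, 1.0]]
B = [[0.5], [1.0]]
A = [[2.0, -1.0]]

for s in (0.0, 1.0, 2.0):
    print(s, lora_weight(W, B, A, s))
# -> 0.0 [[1.0, 0.0], [0.0, 1.0]]   (adapter disabled: base weight)
# -> 1.0 [[2.0, -0.5], [2.0, 0.0]]
# -> 2.0 [[3.0, -1.0], [4.0, -1.0]]
```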

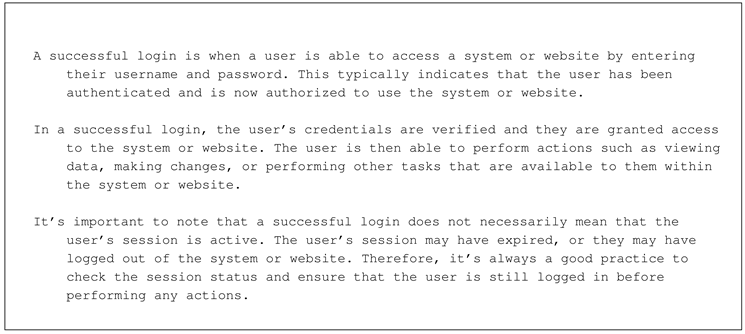

In the baseline scenario, the Codestral Mamba model was evaluated with a LoRA scaling factor of 0, which leaves the base model unchanged, and a temperature of 0. Under these settings, the model was prompted with the phrase "successful login." Rather than producing an executable script, it returned a general textual explanation covering authentication principles, access validation, and session handling. This behavior reflects the model’s default interpretative mode, which favors descriptive output over structured automation in the absence of fine-tuning. The full output is shown in Table 6.

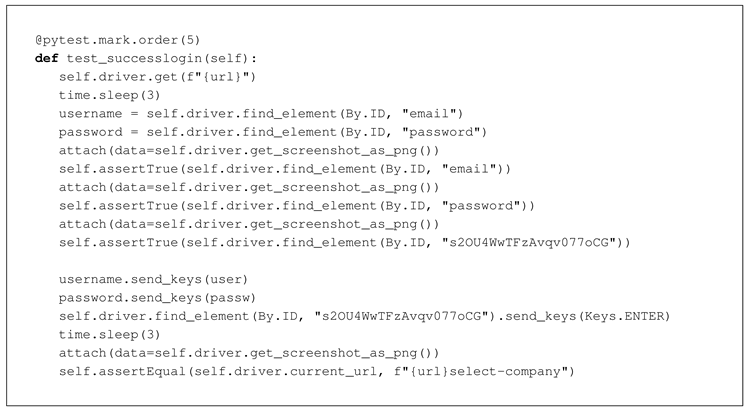

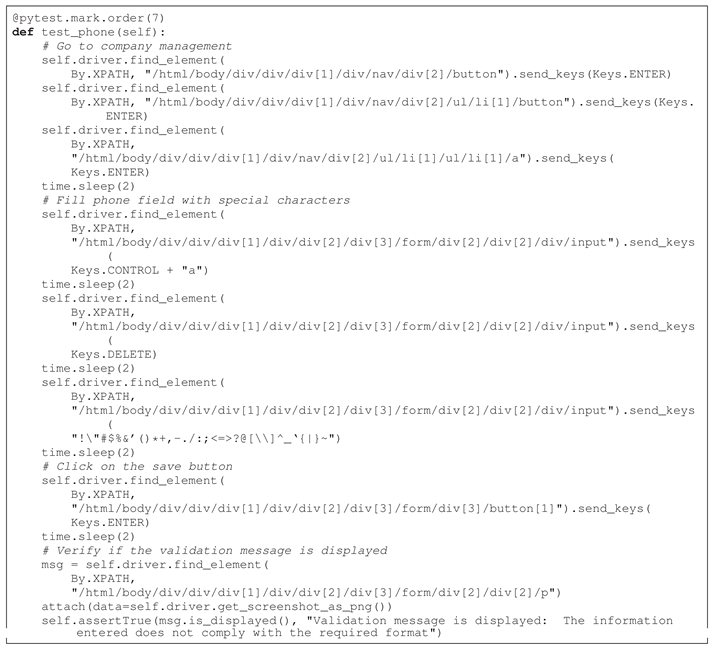

To assess the impact of fine-tuning, the same request, rephrased more explicitly as “successful login test case,” was processed with a LoRA scaling factor of 2 and a temperature of 0. This time, the model generated both a human-readable, step-by-step manual test case and a functional Pytest script. The output shows how LoRA fine-tuning enables the model to generalize test patterns and produce executable test logic from implicit requirements. The complete output, with both the manual and the automated representations, is given in Table 7.

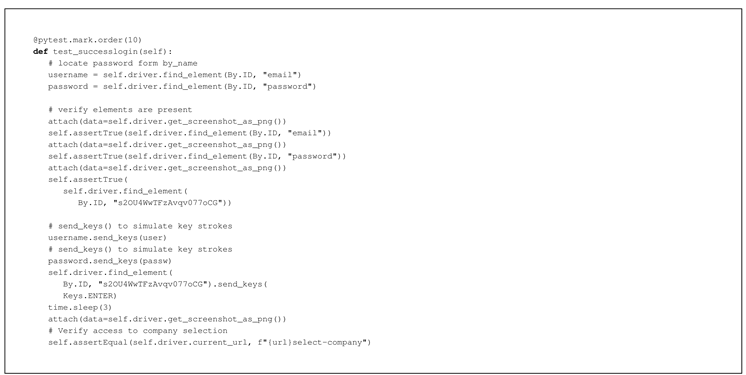

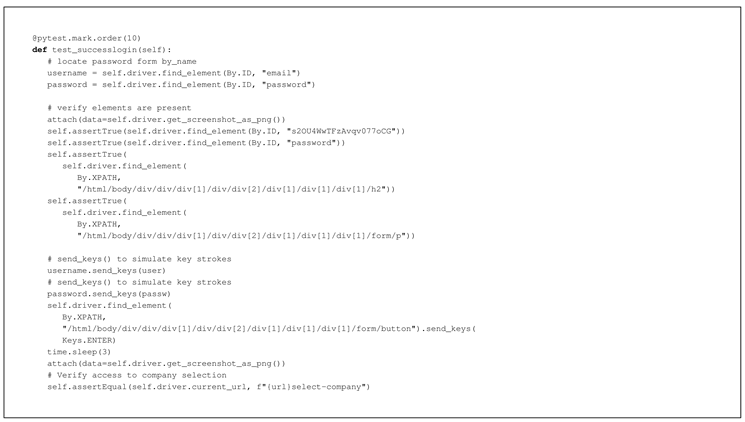

Adjusting the LoRA scaling factor and temperature markedly changed the depth and quality of the output. With a scaling factor of 3.0 and a moderate temperature of 0.5, the model produced more nuanced automated test cases that included business-logic assumptions and comprehensive locator usage without being explicitly instructed to do so. Table 8 presents two variants of this output, illustrating the model’s flexibility and stronger contextual reasoning when both sampling diversity and fine-tuning influence are increased.
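Temperature acts on the model’s next-token scores: the logits are divided by T before the softmax, so low values concentrate probability on the top token and higher values flatten the distribution. Many inference stacks treat T = 0 as greedy (argmax) decoding, which is why the T = 0 runs above are deterministic, while T = 0.5 lets repeated runs diverge into variants such as those in Table 8. The sketch below uses toy logits and hypothetical helper names; it is not the chatbot’s decoding code.

```python
import math
import random
from typing import List, Optional

def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Softmax of logits / temperature (temperature must be > 0)."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(logits: List[float], temperature: float,
                 rng: Optional[random.Random] = None) -> int:
    """Return a token index; temperature 0 is treated as greedy (argmax) decoding."""
    if temperature == 0:
        return max(range(len(logits)), key=lambda i: logits[i])
    probs = softmax_with_temperature(logits, temperature)
    rng = rng or random.Random()
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]

logits = [2.0, 1.5, 0.2]  # toy scores for three candidate tokens
print(sample_token(logits, 0))                # always 0 (greedy)
print(softmax_with_temperature(logits, 0.5))  # sharper than at T = 1.0
print(softmax_with_temperature(logits, 1.0))
```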

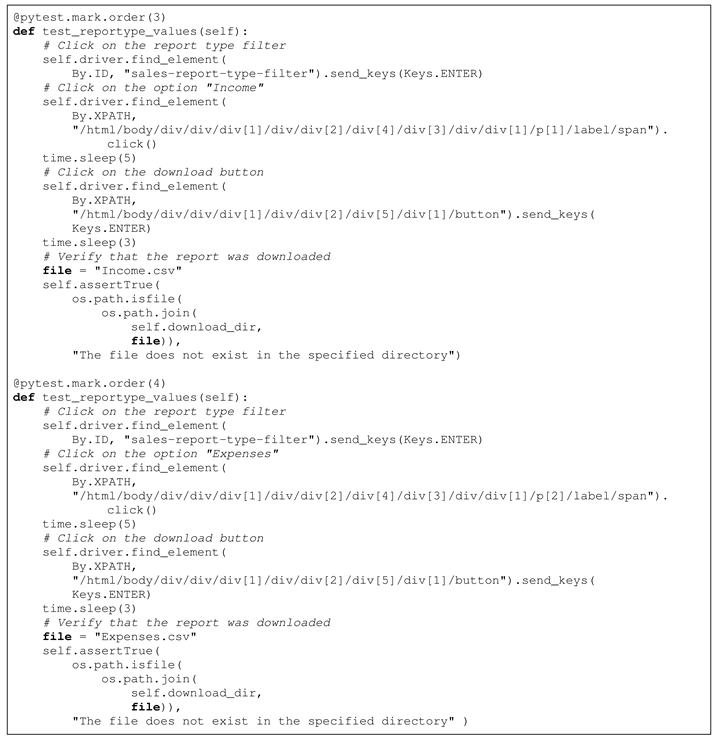

The experiment further evaluated whether the model could generate test cases that increase test coverage. With a LoRA scaling factor of 3 and a temperature of 0, the model was prompted to generate additional test cases complementing a base scenario. The generated cases expanded coverage by adding new functional paths and validations, such as alternative input options and download verification. These outputs, summarized in Table 9, highlight the model’s ability to reason about coverage expansion in the context of previously seen data.

The model’s ability to generalize test generation across projects was tested by providing it with manual test steps and JSX file context from a different project, ALICE4u⁷. Using a LoRA scaling factor of 1.0 and a temperature of 0, the model adapted and generated a Pytest case tailored to this unfamiliar environment. This result, shown in Table 10, provides evidence of the model’s potential for transfer learning and supports its use in continuous testing pipelines spanning multiple codebases.

Finally, to assess the model’s role in defect prevention, a prompt describing a known bug scenario (specifically, missing input validation) was submitted. With a LoRA scaling factor of 2 and a temperature of 0, the model autonomously produced an automated test that enforced input constraints and validated error messages. As documented in Table 11, this output underscores the utility of fine-tuned language models in guarding against regressions and supporting test-driven development practices.
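To illustrate the shape of such a regression test, the sketch below checks input constraints and exact error messages in plain-assert style, which pytest discovers automatically through the `test_` prefix. It is not the test reported in Table 11: the function `validate_username`, the 20-character limit, and the message texts are hypothetical stand-ins for the application under test, whereas the generated tests drive the real UI through locators.

```python
from typing import Optional

MAX_LEN = 20  # hypothetical constraint for illustration

def validate_username(value: str) -> Optional[str]:
    """Hypothetical stand-in for the form under test: returns an error message or None."""
    if not value.strip():
        return "Username is required."
    if len(value) > MAX_LEN:
        return f"Username must be at most {MAX_LEN} characters."
    if not value.isalnum():
        return "Username may contain only letters and digits."
    return None

def test_valid_username_is_accepted():
    assert validate_username("alice42") is None

def test_empty_username_is_rejected_with_message():
    assert validate_username("   ") == "Username is required."

def test_overlong_username_is_rejected_with_message():
    assert validate_username("a" * (MAX_LEN + 1)) == (
        f"Username must be at most {MAX_LEN} characters."
    )

def test_special_characters_are_rejected_with_message():
    assert validate_username("bob<script>") == (
        "Username may contain only letters and digits."
    )
```

Asserting on the exact message, not merely on rejection, is what lets such a test catch a regression in which validation silently disappears or the user-facing feedback changes.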