Submitted: 12 September 2025

Posted: 15 September 2025

Abstract

Keywords:

1. Introduction

2. Related work

2.1. Based on Deep Learning

2.2. Based on Generative Network

3. Method

3.1. Problem Formulation

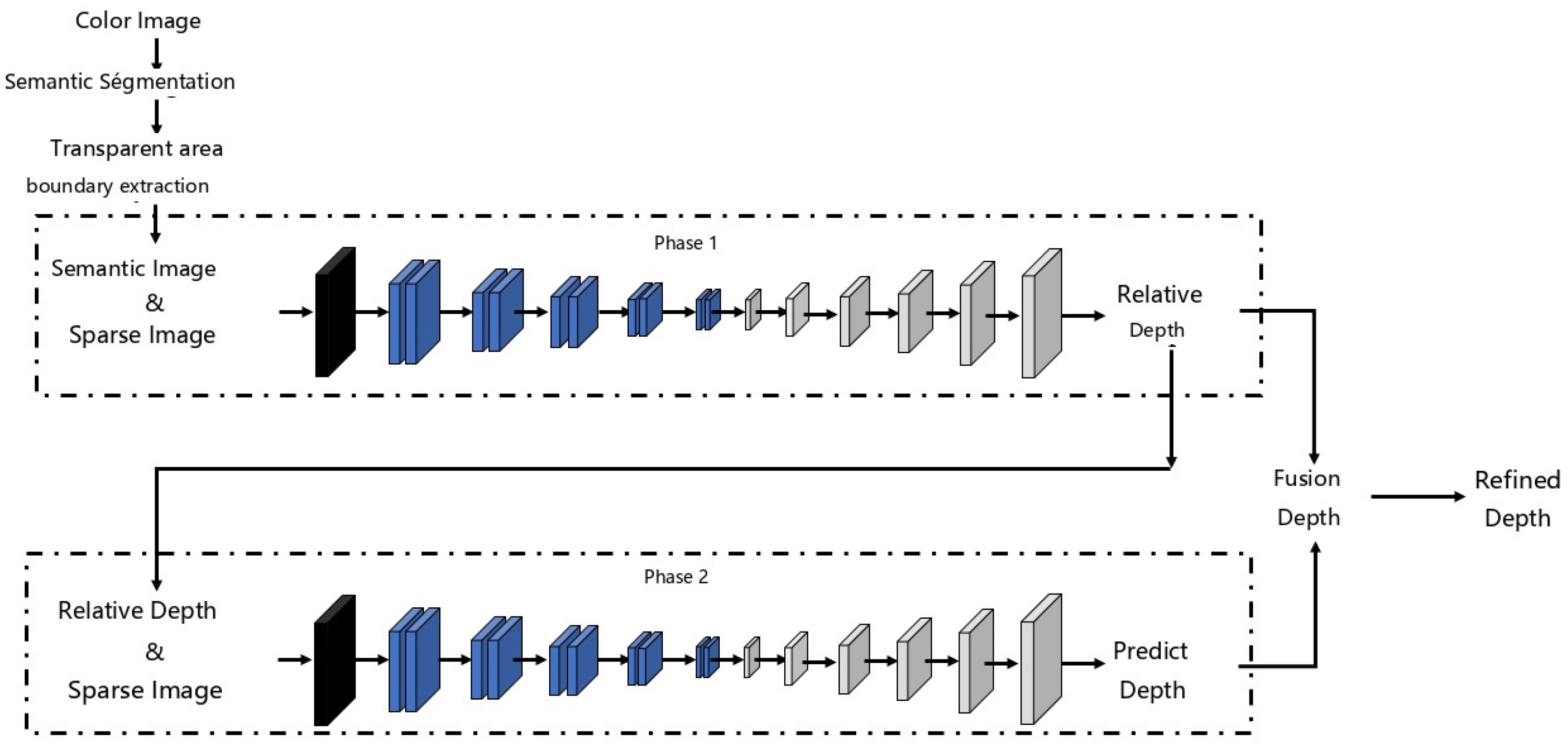

3.2. Network Architecture

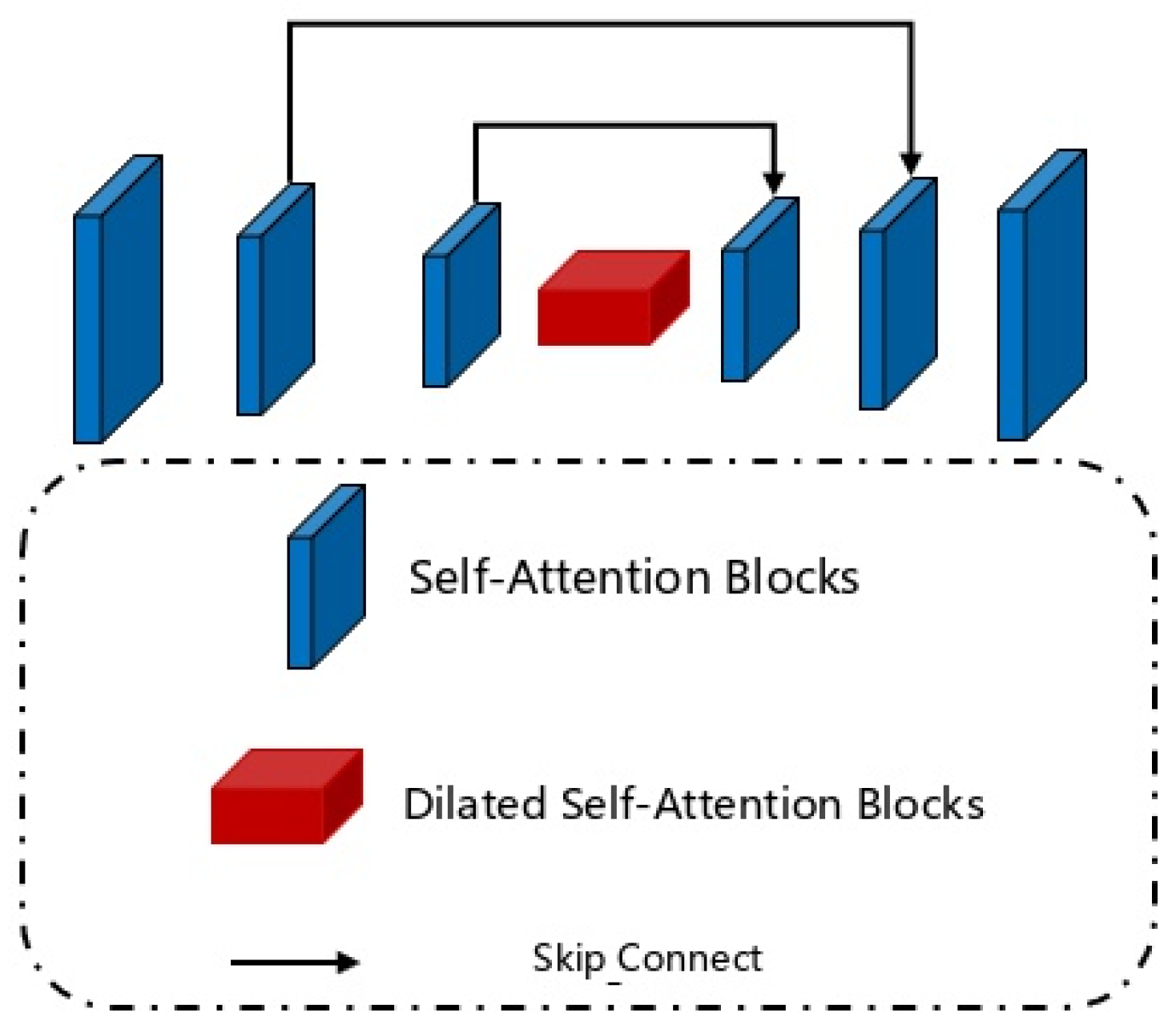

3.2.1. Semantic Information Guidance Phase Based on Self-Attention Mechanism

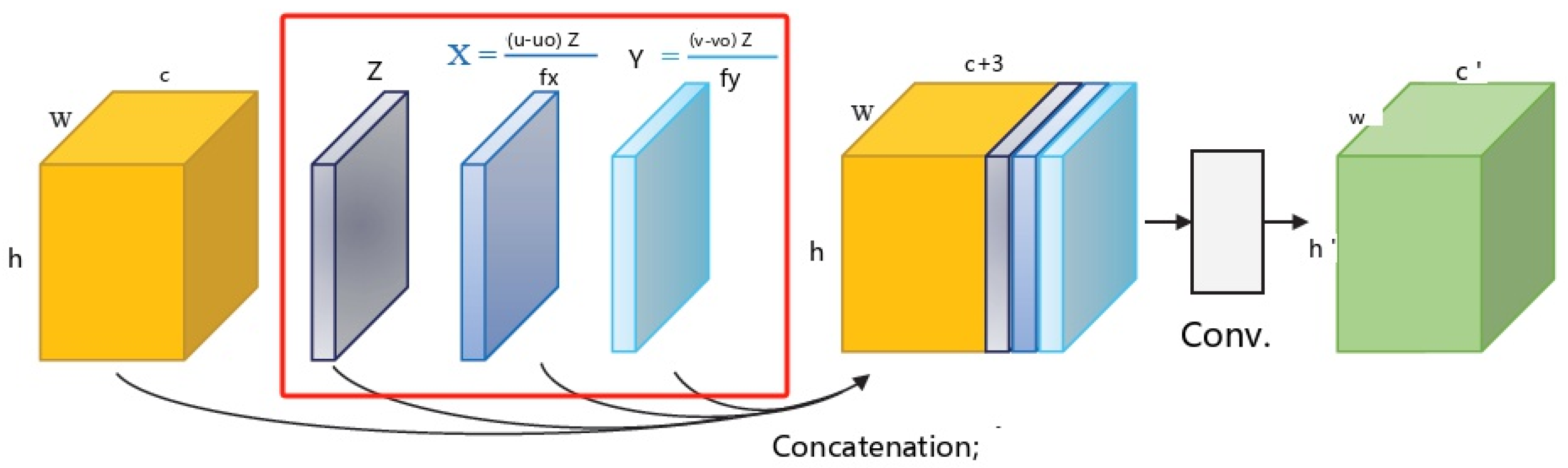

3.2.2. Depth Guidance Phase Based on Geometric Convolution

3.2.3. Fine-Grained Depth Recovery Based on Global Consistency

3.3. Loss Function

4. Experiment

4.1. Dataset

4.2. Evaluation Metrics

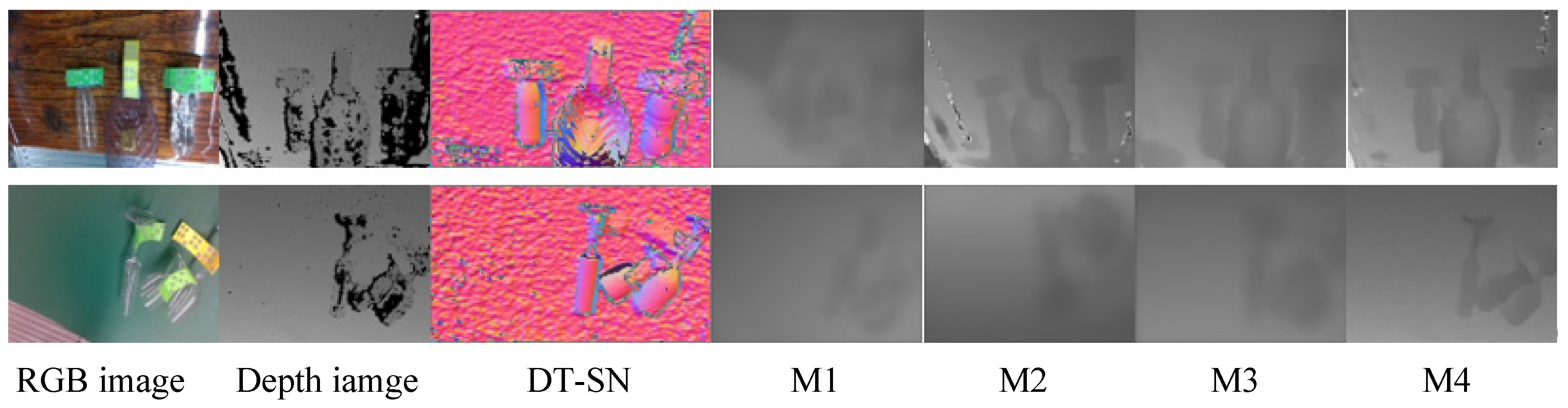

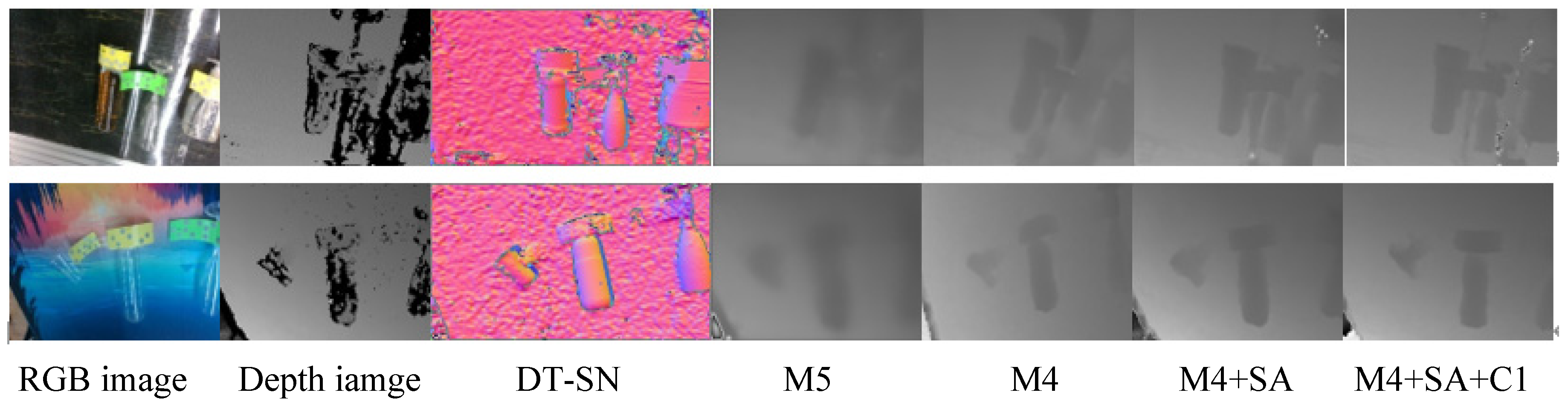
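As a point of reference for the metric names used in the result tables (RMSE, MAE, REL, and the 1.05 / 1.10 / 1.25 thresholds), the following is a minimal sketch of how these quantities are commonly computed in transparent-object depth completion benchmarks. It is not taken from this paper: the function name `depth_metrics`, the flat-list input format, and the rule that pixels with non-positive ground-truth depth are skipped are all assumptions made for illustration.

```python
import math


def depth_metrics(pred, gt, thresholds=(1.05, 1.10, 1.25)):
    """Return RMSE, MAE, REL and threshold accuracies (in percent).

    pred, gt: flat sequences of depth values in the same unit.
    Assumption of this sketch: pixels whose ground-truth depth is
    not positive are treated as invalid and ignored.
    """
    pairs = [(p, g) for p, g in zip(pred, gt) if g > 0]
    if not pairs:
        raise ValueError("no valid ground-truth pixels")
    n = len(pairs)

    # Root mean squared error and mean absolute error.
    rmse = math.sqrt(sum((p - g) ** 2 for p, g in pairs) / n)
    mae = sum(abs(p - g) for p, g in pairs) / n

    # Mean absolute relative error, normalised by ground-truth depth.
    rel = sum(abs(p - g) / g for p, g in pairs) / n

    # Threshold accuracy: share of pixels with max(p/g, g/p) < delta.
    # A non-positive prediction can never satisfy any threshold.
    ratios = [max(p / g, g / p) if p > 0 else math.inf for p, g in pairs]
    delta = {t: 100.0 * sum(r < t for r in ratios) / n for t in thresholds}

    return {"rmse": rmse, "mae": mae, "rel": rel, "delta": delta}


if __name__ == "__main__":
    # The last pixel has no ground truth and is skipped.
    print(depth_metrics([1.0, 2.0, 0.5, 3.0], [1.0, 2.16, 0.5, 0.0]))
```

In practice these metrics are usually evaluated only inside the transparent-object mask; a caller could apply such a mask by filtering `pred` and `gt` before passing them in.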

4.3. Ablation Experiment

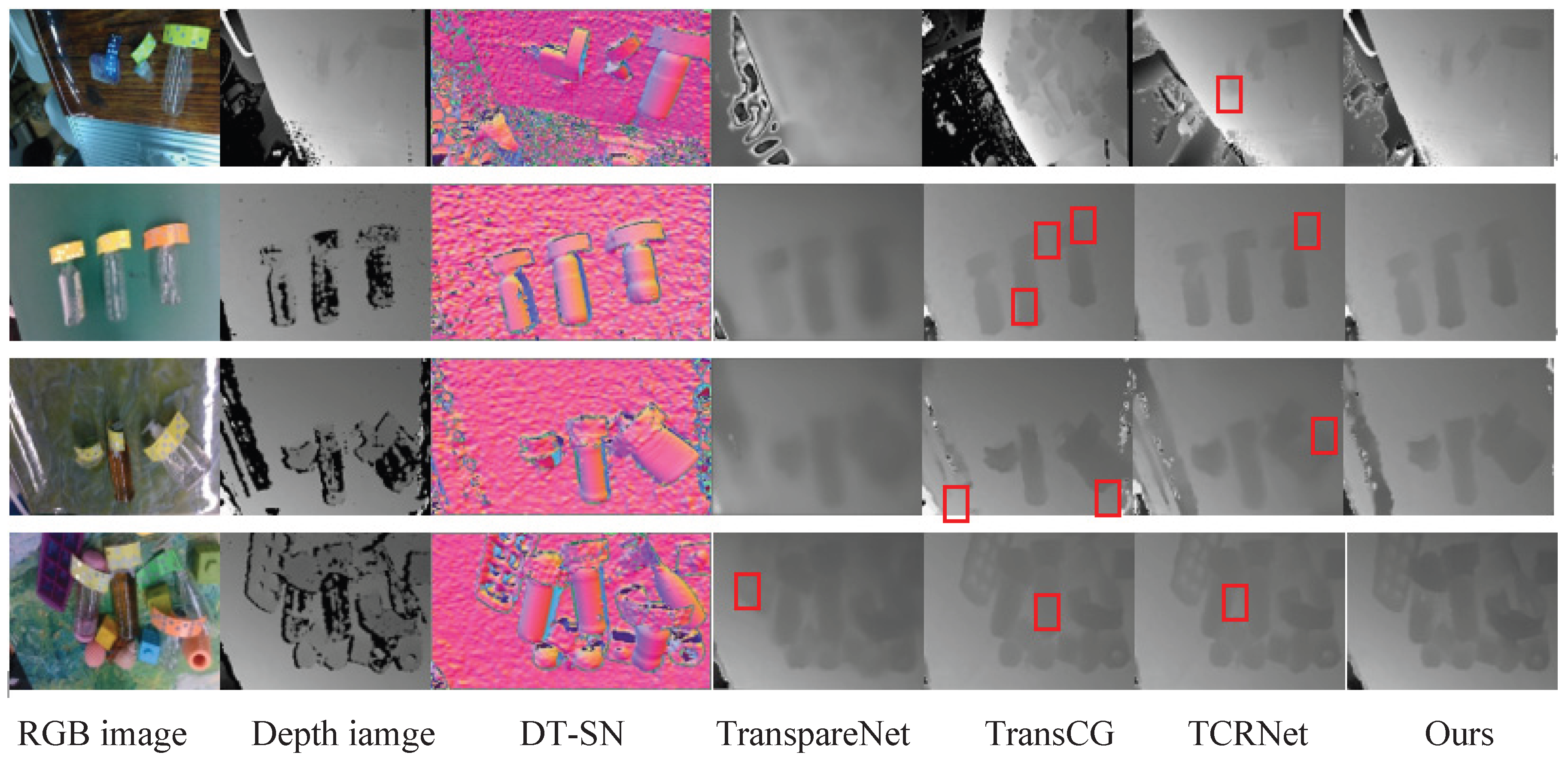

4.4. Comparison with SOTA

5. Conclusions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Shi J, Yong A, Jin Y, et al. Asgrasp: Generalizable transparent object reconstruction and 6-dof grasp detection from rgb-d active stereo camera[C]//2024 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2024: 5441-5447.

- Jing X, Qian K, Vincze M. CAGT: Sim-to-Real Depth Completion with Interactive Embedding Aggregation and Geometry Awareness for Transparent Objects[J]. IEEE Transactions on Circuits and Systems for Video Technology, 2025.

- Ummadisingu A, Choi J, Yamane K, et al. Said-nerf: Segmentation-aided nerf for depth completion of transparent objects[C]//2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2024: 7535-7542.

- Jin Y, Liao L, Zhang B. Depth Completion of Transparent Objects Based on Feature Fusion[C]//2024 4th International Conference on Artificial Intelligence, Virtual Reality and Visualization. IEEE, 2024: 95-98.

- Meng X, Wen J, Li Y, et al. DFNet-Trans: An end-to-end multibranching network for depth estimation for transparent objects[J]. Computer Vision and Image Understanding, 2024, 240: 103914.

- Liu B, Li H, Wang Z, et al. Transparent Depth Completion Using Segmentation Features[J]. ACM Transactions on Multimedia Computing, Communications and Applications, 2024, 20(12): 1-19.

- Zhai D H, Yu S, Wang W, et al. Tcrnet: Transparent object depth completion with cascade refinements[J]. IEEE Transactions on Automation Science and Engineering, 2024.

- Gao J J, Zong Z, Yang Q, et al. An Enhanced UNet-based Framework for Robust Depth Completion of Transparent Objects from Single RGB-D Images[C]//2024 7th International Conference on Computer Information Science and Application Technology (CISAT). IEEE, 2024: 458-462.

- Li J, Wen S, Lu D, et al. Voxel and deep learning-based depth complementation for transparent objects[J]. Pattern Recognition Letters, 2025.

- Jing X, Qian K, Vincze M. CAGT: Sim-to-Real Depth Completion with Interactive Embedding Aggregation and Geometry Awareness for Transparent Objects[J]. IEEE Transactions on Circuits and Systems for Video Technology, 2025.

- Pathak D, Krahenbuhl P, Donahue J, et al. Context encoders: Feature learning by inpainting[C]//Proceedings of the IEEE conference on computer vision and pattern recognition. 2016: 2536-2544.

- Yu J, Lin Z, Yang J, et al. Generative image inpainting with contextual attention[C]//Proceedings of the IEEE conference on computer vision and pattern recognition. 2018: 5505-5514.

- Li S, Yu H, Ding W, et al. Visual–tactile fusion for transparent object grasping in complex backgrounds[J]. IEEE Transactions on Robotics, 2023, 39(5): 3838-3856.

- Fang H, Fang H S, Xu S, et al. Transcg: A large-scale real-world dataset for transparent object depth completion and a grasping baseline[J]. IEEE Robotics and Automation Letters, 2022, 7(3): 7383-7390.

- Xiao J, Wu Y, Chen Y, et al. LSTFE-Net: Long short-term feature enhancement network for video small object detection[C]//2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). 2023: 14613-14622.

- Xiao J, Guo H, Zhou J, et al. Tiny object detection with context enhancement and feature purification[J]. Expert Systems with Applications, 2023, 211: 118665.

- Sajjan S, Moore M, Pan M, et al. Clear grasp: 3d shape estimation of transparent objects for manipulation[C]//2020 IEEE international conference on robotics and automation (ICRA). IEEE, 2020: 3634-3642.

- Zhu L, Mousavian A, Xiang Y, et al. RGB-D local implicit function for depth completion of transparent objects[C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2021: 4649-4658.

- Xu H, Wang Y R, Eppel S, et al. Seeing glass: Joint point-cloud and depth completion for transparent objects[C]//Proceedings of the Conference on Robot Learning. 2022: 827-838.

- Chen K, Wang S, Xia B, et al. Tode-trans: Transparent object depth estimation with transformer[C]//2023 IEEE international conference on robotics and automation (ICRA). IEEE, 2023: 4880-4886.

| Models | SG-Input (sparse depth) | DD-Input (relative depth) | Guidance Map | Self-attention | Refined | RMSE↓ | MAE↓ | REL↓ |
|---|---|---|---|---|---|---|---|---|
| M1 | | | | | | 0.0302 | 0.0176 | 0.0731 |
| M2 | √ | | | | | 0.0299 | 0.0172 | 0.0532 |
| M3 | | √ | | | | 0.0295 | 0.0157 | 0.0517 |
| M4 | √ | √ | | | | 0.0291 | 0.0153 | 0.0515 |
| M5 | √ | √ | √ | | | 0.0291 | 0.0155 | 0.0619 |
| M4+SA | √ | √ | | √ | | 0.0190 | 0.0113 | 0.0254 |
| M4+SA+C1 | √ | √ | | √ | √ | 0.0138 | 0.0107 | 0.0155 |

| Model | RMSE↓ | Mean↓ | SSIM↑ | δ<1.05↑ | δ<1.10↑ | δ<1.25↑ |
|---|---|---|---|---|---|---|
| SA | 0.1095 | 0.400 | 0.673 | 79.13 | 93.60 | 98.57 |
| SA+SSIM | 0.1096 | 0.407 | 0.776 | 88.47 | 92.45 | 95.49 |
| SA+SSIM+GC | 0.0138 | 0.392 | 0.799 | 90.41 | 97.11 | 99.72 |

| Model | RMSE↓ | MAE↓ | REL↓ | δ<1.05↑ | δ<1.10↑ | δ<1.25↑ |
|---|---|---|---|---|---|---|
| ClearGrasp [17] | 0.0540 | 0.0370 | 0.0831 | 50.48 | 68.68 | 95.28 |
| LIDF-Refine [18] | 0.0393 | 0.0150 | 0.0340 | 78.22 | 94.26 | 99.80 |
| TranspareNet [19] | 0.0361 | 0.0134 | 0.0231 | 88.45 | 96.28 | 99.42 |
| TransCG [14] | 0.0182 | 0.0123 | 0.0270 | 83.76 | 95.67 | 99.71 |
| TODE-Trans [20] | 0.0271 | 0.0216 | 0.0487 | 64.24 | 86.98 | 99.51 |
| TCRNet [7] | 0.0170 | 0.0109 | 0.0200 | 88.96 | 96.94 | 99.87 |
| Ours | 0.0138 | 0.0107 | 0.0155 | 90.41 | 97.11 | 99.72 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).