Submitted: 03 September 2025

Posted: 04 September 2025


Abstract

Keywords:

1. Introduction

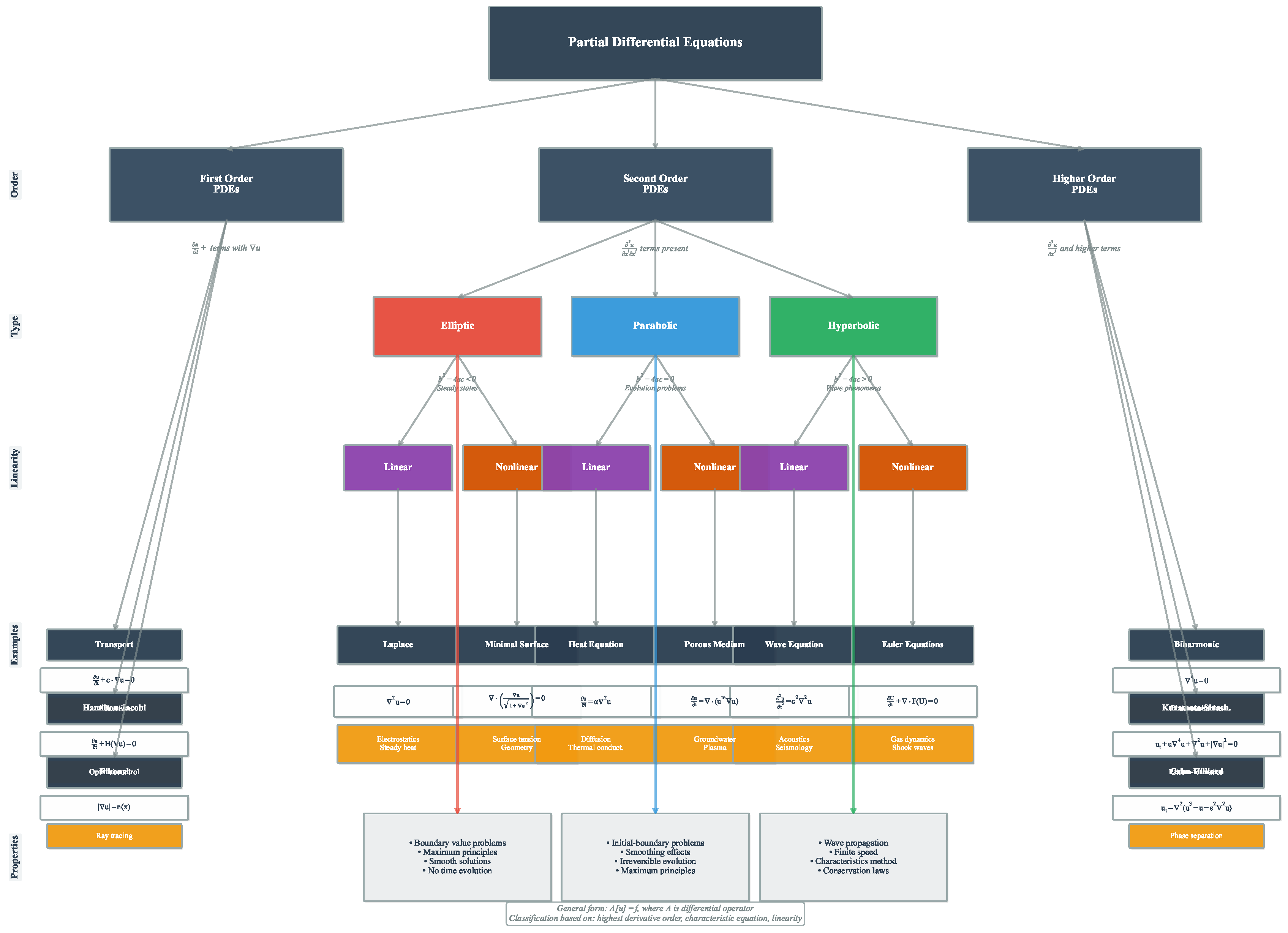

2. Partial Differential Equations

2.1. Definition and Mathematical Formulation

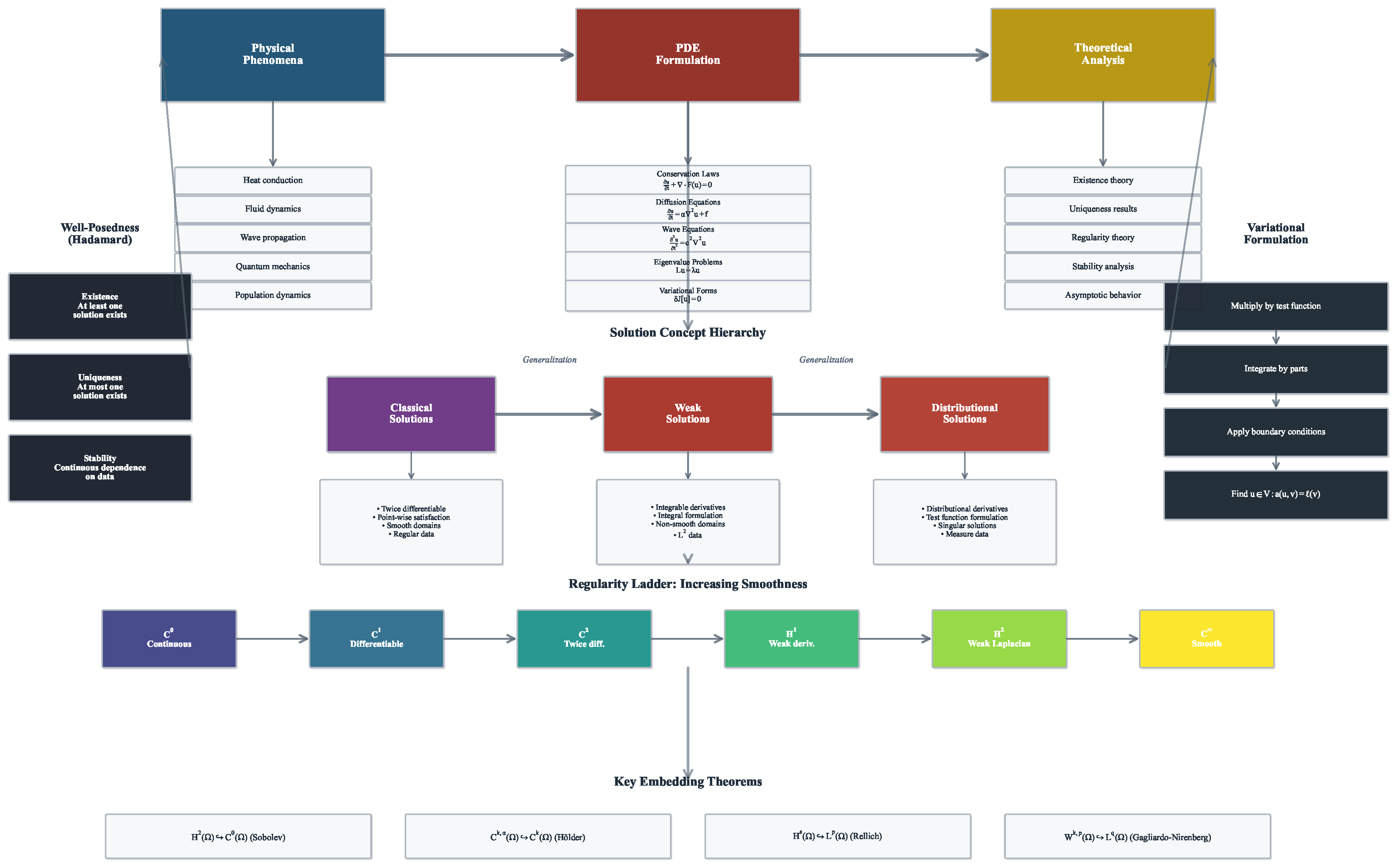

2.2. Theoretical Foundations

2.2.1. Existence and Uniqueness Theory

2.2.2. Regularity Theory

2.2.3. Qualitative Properties

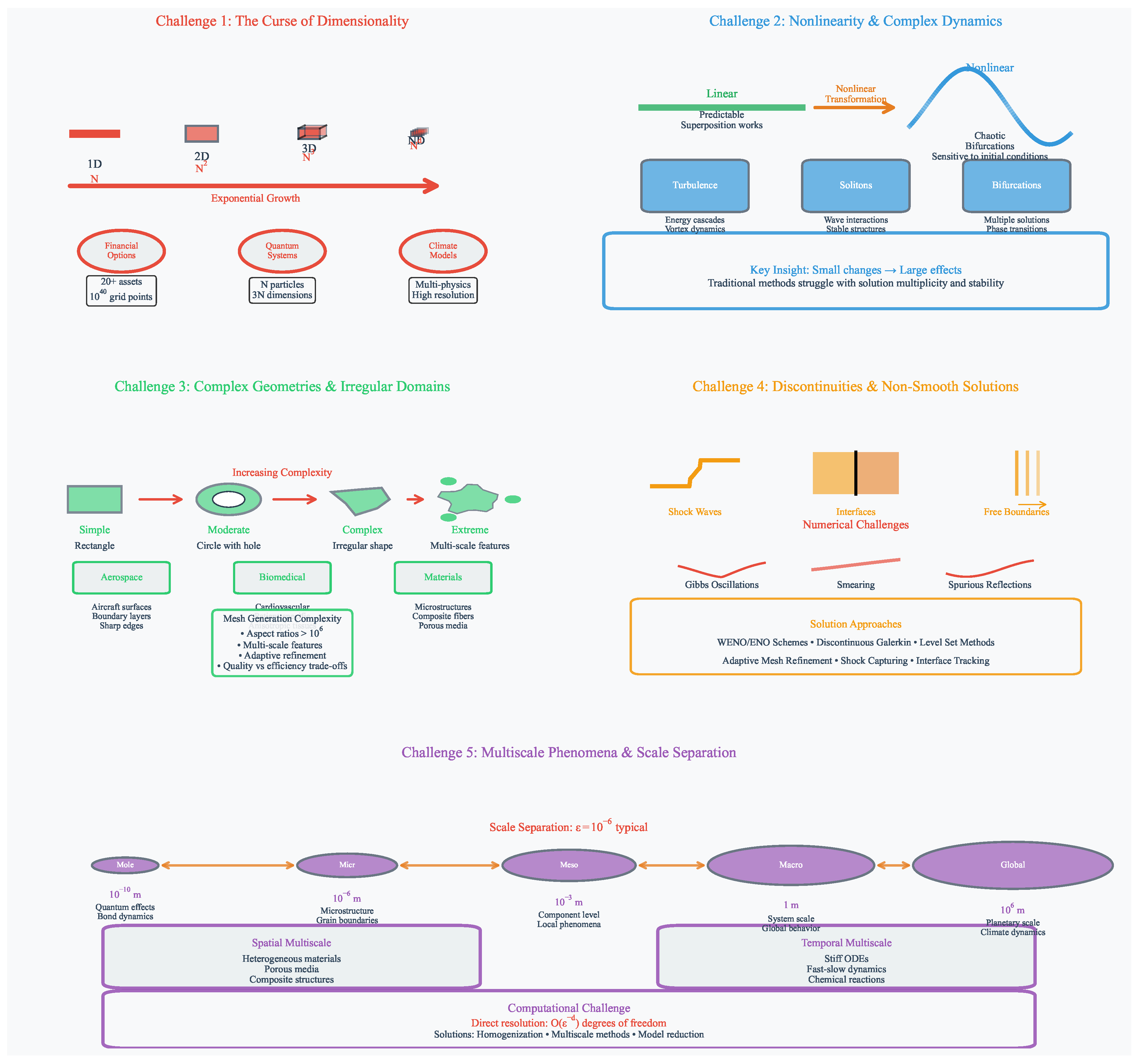

2.3. Fundamental Challenges in PDE Solution

2.3.1. High Dimensionality and the Curse of Dimensionality

2.3.2. Nonlinearity and Complex Solution Behavior

2.3.3. Complex Geometries and Irregular Domains

2.3.4. Discontinuities and Non-Smooth Solutions

2.3.5. Multiscale Phenomena

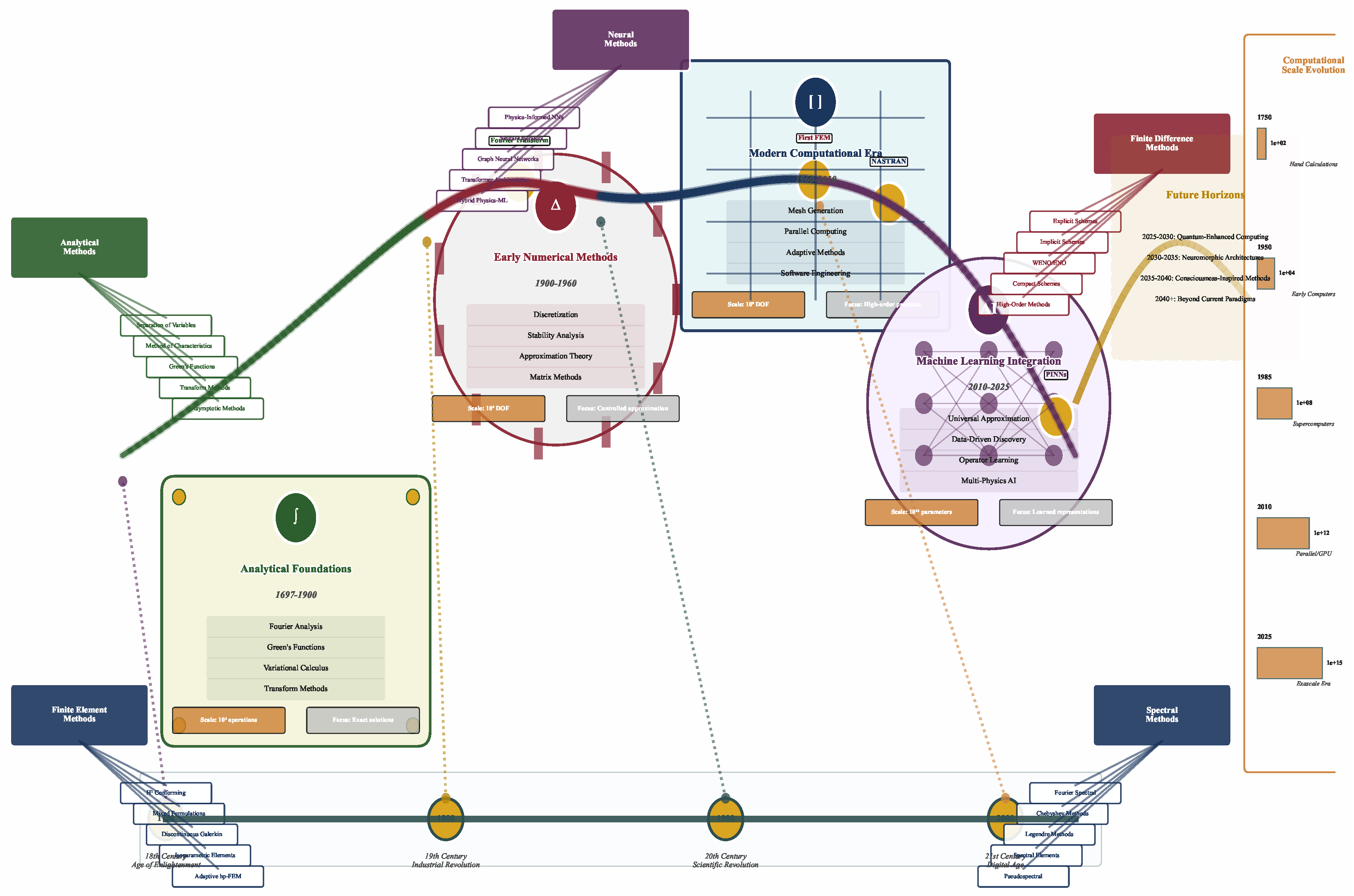

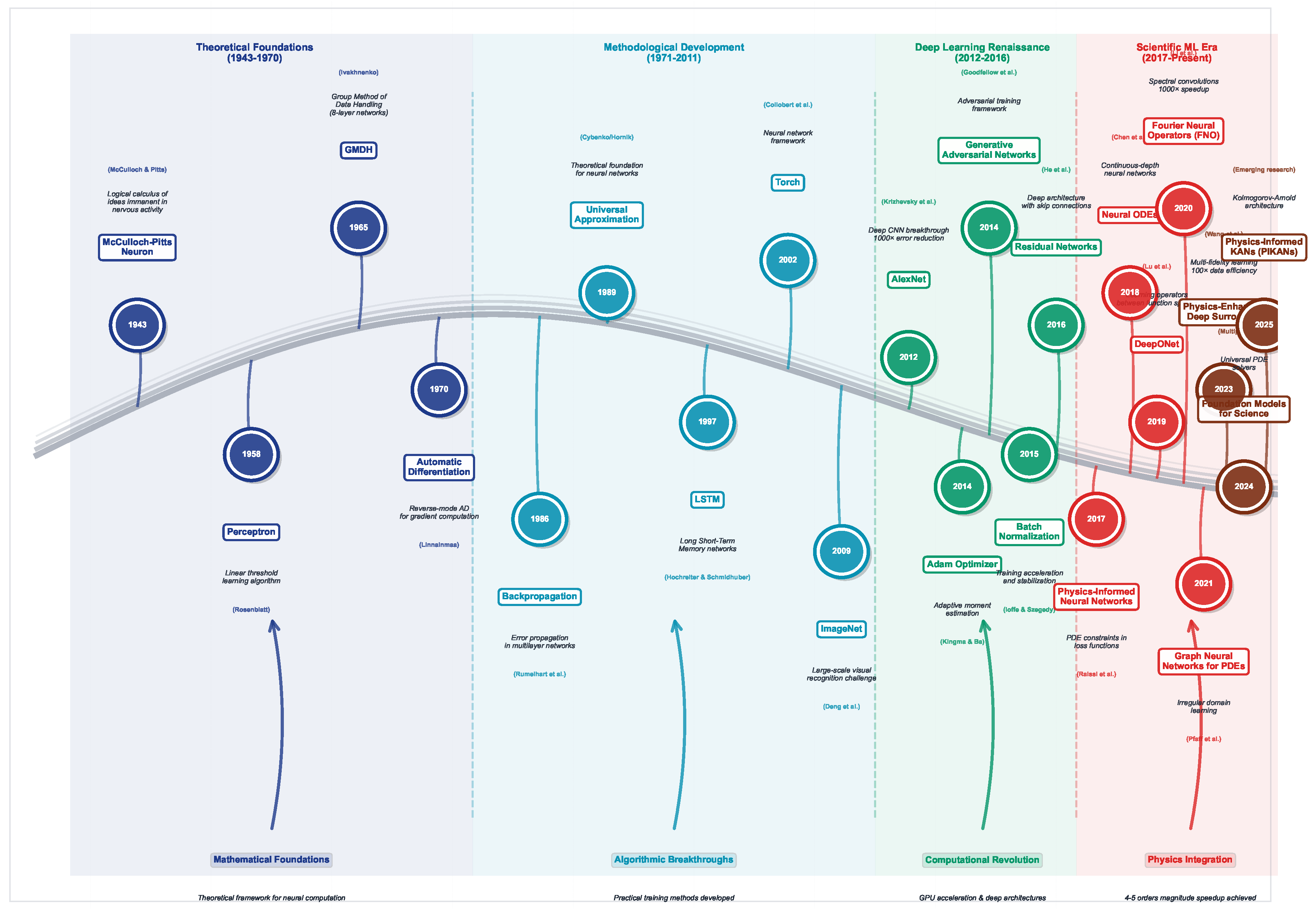

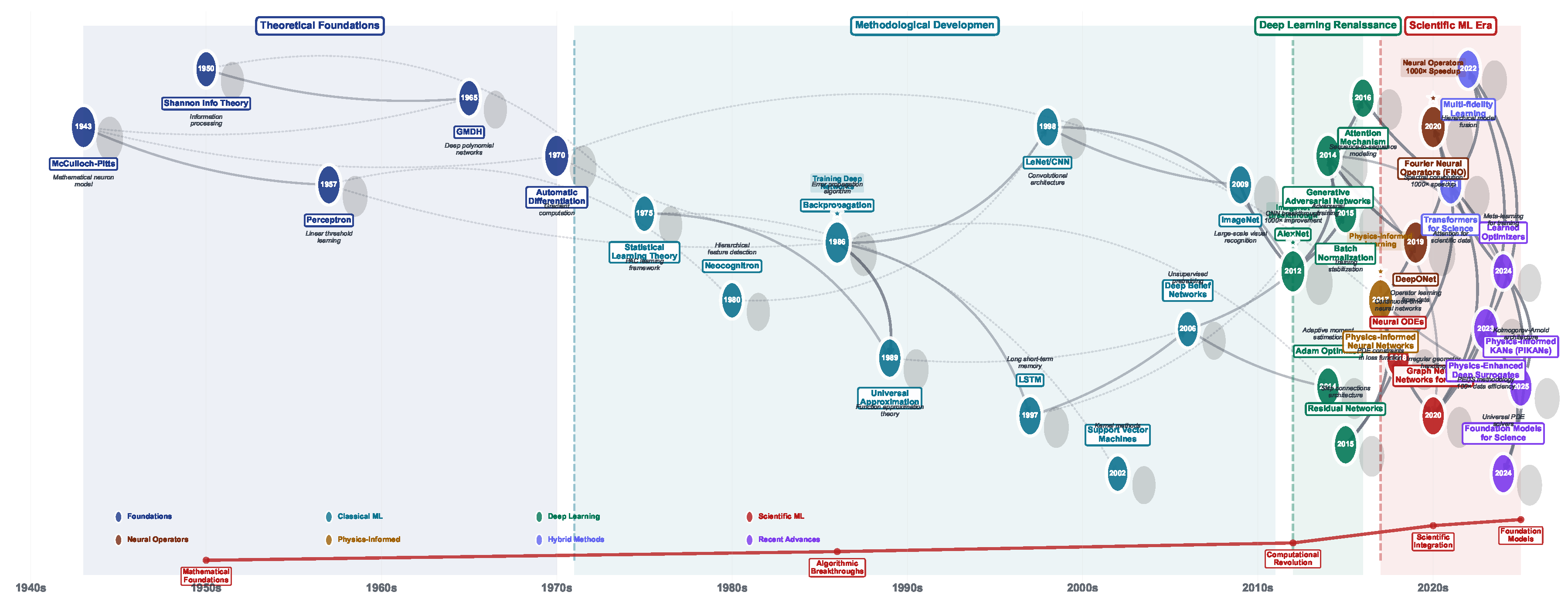

3. Historical Evolution of Solution Methods

3.1. Analytical Solutions and Classical Methods

3.2. Classical Numerical Methods

3.3. Modern Computational Developments

3.4. Emergence of Machine Learning Approaches

4. Advancement of Computational Learning Paradigms

4.1. Foundational Era (1943–1970): Theoretical Underpinnings

4.2. Methodological Development (1971–2011): Algorithm Maturation

4.3. Deep Learning Renaissance (2012–2016): Computational Breakthroughs

4.4. Scientific Machine Learning Era (2017–Present): Domain-Specific Innovation

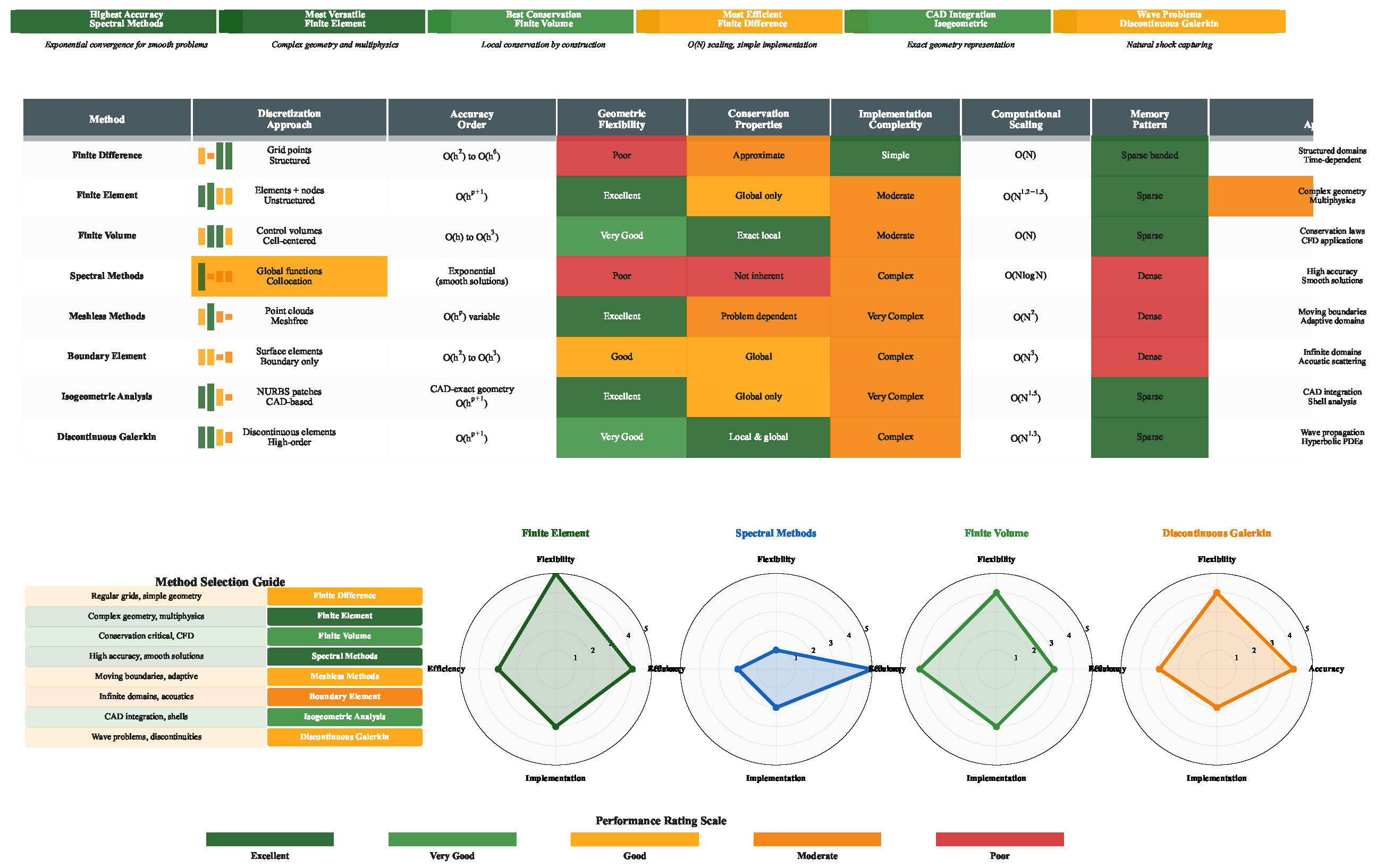

5. Traditional Methods for Solving PDEs

5.1. Finite Difference Methods

5.1.1. Methodology and Mathematical Foundation

5.1.2. Advanced Finite Difference Schemes

5.1.3. Strengths and Advantages

5.1.4. Limitations and Challenges

5.2. Finite Element Methods

5.2.1. Methodology and Variational Foundation

5.2.2. Higher-Order and Adaptive Methods

5.2.3. Strengths and Comparative Advantages

5.2.4. Limitations and Implementation Challenges

5.3. Finite Volume and Conservative Methods

5.3.1. Methodology and Conservation Principles

5.3.2. Cell-Centered and Vertex-Centered Approaches

5.3.3. Strengths and Conservative Properties

5.3.4. Limitations and Implementation Challenges

5.4. Spectral and High-Order Methods

5.4.1. Mathematical Foundation and Implementation

5.4.2. Spectral Element and Advanced Methods

5.4.3. Strengths and Superior Accuracy

5.4.4. Limitations and Challenges

5.5. Advanced Computational Strategies

5.5.1. Adaptive Mesh Refinement

5.5.2. Multigrid Methods

- Pre-smooth:

- Restrict residual:

- Solve coarse problem:

- Interpolate and correct:

- Post-smooth:
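The five steps listed above make up one multigrid V-cycle. As a minimal sketch, and not the formulation used in this paper, the cycle can be written for the 1D Poisson problem $-u'' = f$ on $(0,1)$ with Dirichlet boundary values. The model problem, the weighted Jacobi smoother, full-weighting restriction, linear interpolation, and all function names (`smooth`, `restrict`, `prolong`, `v_cycle`) are assumptions chosen for illustration.

```python
import numpy as np

def residual(u, f, h):
    """Residual r = f - A u for A u = -u'' with the 3-point stencil."""
    r = np.zeros_like(u)
    r[1:-1] = f[1:-1] - (2.0 * u[1:-1] - u[:-2] - u[2:]) / h**2
    return r

def smooth(u, f, h, sweeps=2, omega=2.0 / 3.0):
    """Weighted Jacobi sweeps; boundary values are left untouched."""
    for _ in range(sweeps):
        u_new = u.copy()
        u_new[1:-1] = (1.0 - omega) * u[1:-1] + omega * 0.5 * (
            u[:-2] + u[2:] + h**2 * f[1:-1]
        )
        u = u_new
    return u

def restrict(r):
    """Full-weighting restriction onto every second grid point."""
    rc = np.zeros((r.size - 1) // 2 + 1)
    rc[1:-1] = 0.25 * r[1:-3:2] + 0.5 * r[2:-2:2] + 0.25 * r[3:-1:2]
    return rc

def prolong(ec):
    """Linear interpolation from the coarse grid to the fine grid."""
    e = np.zeros(2 * (ec.size - 1) + 1)
    e[::2] = ec
    e[1::2] = 0.5 * (ec[:-1] + ec[1:])
    return e

def v_cycle(u, f, h):
    """One V-cycle; the grid must have 2**k + 1 points including boundaries."""
    if u.size == 3:
        # Coarsest grid: a single interior unknown, solved exactly.
        u = u.copy()
        u[1] = 0.5 * (h**2 * f[1] + u[0] + u[2])
        return u
    u = smooth(u, f, h)                           # pre-smooth
    rc = restrict(residual(u, f, h))              # restrict residual
    ec = v_cycle(np.zeros_like(rc), rc, 2.0 * h)  # solve coarse problem
    u = u + prolong(ec)                           # interpolate and correct
    return smooth(u, f, h)                        # post-smooth

# Usage: -u'' = pi^2 sin(pi x), whose exact solution is sin(pi x).
n = 64
x = np.linspace(0.0, 1.0, n + 1)
h = 1.0 / n
f = np.pi**2 * np.sin(np.pi * x)
u = np.zeros_like(x)
for _ in range(10):
    u = v_cycle(u, f, h)
max_error = np.max(np.abs(u - np.sin(np.pi * x)))
```

In this sketch the coarse problem is handled by recursion down to a grid with one interior point, and the coarse operator is obtained by rediscretizing with spacing `2 * h`. After a few cycles, `max_error` should be dominated by the second-order discretization error rather than by the iteration error.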

5.5.3. Strengths of Advanced Strategies

5.5.4. Implementation Complexities

5.6. Meshless Methods

5.6.1. Fundamental Principles

5.6.2. Specific Meshless Approaches

5.6.3. Advantages of Meshless Approaches

5.6.4. Computational and Theoretical Challenges

5.7. Specialized Classical Methods

5.7.1. Boundary Element Method (BEM)

5.7.2. Isogeometric Analysis (IGA)

5.7.3. Extended Finite Element Method (XFEM)

5.8. Summary and Outlook

6. Critical Evaluation of Classical PDE Solvers

6.1. Computational Complexity Analysis: Beyond Asymptotic Bounds

6.2. Mesh Adaptivity: Intelligence in Computational Resource Allocation

6.3. Uncertainty Quantification: From Afterthought to Integral Design

6.4. Nonlinearity Handling: The Persistent Challenge

6.5. Theoretical Foundations: Rigor to Meet Reality

6.6. Implementation Complexity: The Hidden Cost of Sophistication

6.7. Memory Efficiency and Architectural Considerations

6.8. Performance Metrics Beyond Convergence Rates

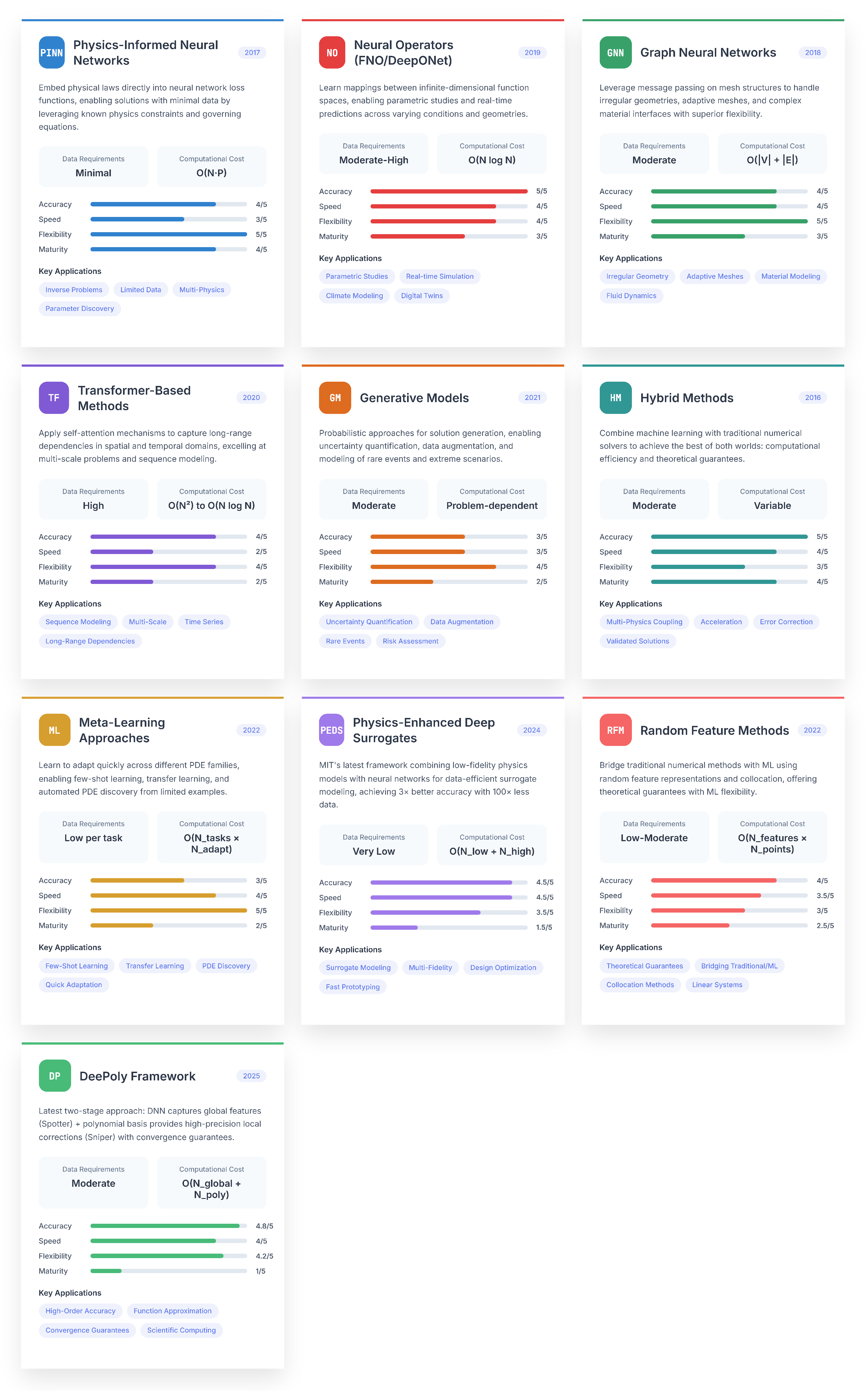

7. Machine Learning-Based PDE Solvers

7.1. Physics-Informed Neural Networks (PINNs)

7.1.1. Core PINN Framework and Variants

Physics-Informed Neural Networks (PINNs)

Variational PINNs (VPINNs)

Conservative PINNs (CPINNs)

Deep Ritz Method

Weak Adversarial Networks (WANs)

Extended PINNs (XPINNs)

Multi-fidelity PINNs

Adaptive PINNs

7.1.2. Strengths and Advantages of PINNs

7.1.3. Limitations and Challenges of PINNs

7.2. Neural Operator Methods

7.2.1. Principal Neural Operator Architectures

Fourier Neural Operator (FNO)

Deep Operator Network (DeepONet)

Graph Neural Operator (GNO)

Multipole Graph Networks (MGN)

Neural Integral Operators

Wavelet Neural Operator

Transformer Neural Operator

Latent Space Model Neural Operators

7.2.2. Strengths and Advantages of Neural Operators

7.2.3. Limitations and Challenges of Neural Operators

7.3. Graph Neural Network Approaches

7.3.1. Key GNN Architectures for PDEs

MeshGraphNets

Neural Mesh Refinement

Multiscale GNNs

Physics-Informed GNNs

Geometric Deep Learning for PDEs

Simplicial Neural Networks

7.3.2. Strengths and Advantages of GNN Approaches

7.3.3. Limitations and Challenges of GNN Approaches

7.4. Transformer and Attention-Based Methods

7.4.1. Transformer Variants for PDEs

Galerkin Transformer

Factorized FNO

U-FNO

Operator Transformer

PDEformer

7.4.2. Strengths and Advantages of Transformer Methods

7.4.3. Limitations and Challenges of Transformer Methods

7.5. Generative and Probabilistic Models

7.5.1. Probabilistic PDE Solving Approaches

Score-based PDE Solvers

Variational Autoencoders (VAEs) for PDEs

Normalizing Flows for PDEs

Neural Stochastic PDEs

Bayesian Neural Networks for PDEs

7.5.2. Strengths and Advantages of Generative Models

7.5.3. Limitations and Challenges of Generative Models

7.6. Hybrid and Multi-Physics Methods

7.6.1. Integration Strategies and Architectures

Neural-FEM Coupling

Multiscale Neural Networks

Neural Homogenization

Multi-fidelity Networks

Physics-Guided Networks

7.6.2. Strengths and Advantages of Hybrid Methods

7.6.3. Limitations and Challenges of Hybrid Methods

7.7. Meta-Learning and Few-Shot Methods

7.7.1. Meta-Learning Strategies for PDEs

Model-Agnostic Meta-Learning (MAML) for PDEs

Prototypical Networks for PDEs

Neural Processes for PDEs

Hypernetworks for PDEs

7.7.2. Advantages of Meta-Learning

7.7.3. Challenges of Meta-Learning

7.8. Physics-Enhanced Deep Surrogates (PEDS)

7.8.1. Core PEDS Framework and Mathematical Formulation

Fundamental Architecture

Multi-fidelity Integration Strategy

Active Learning Integration

7.8.2. Strengths and Advantages of PEDS

7.8.3. Limitations and Challenges of PEDS

7.9. Random Feature Methods (RFM)

7.9.1. Mathematical Foundation and Core Algorithm

Random Feature Representation

Collocation-Based Training

Multi-scale Enhancement

Adaptive Weight Rescaling

Linear System Solution

7.9.2. Strengths and Advantages of RFM

7.9.3. Limitations and Challenges of RFM

7.10. DeePoly Framework

7.10.1. Two-Stage Architecture and Mathematical Framework

Stage 1: Spotter Network for Global Feature Extraction

Stage 2: Sniper Polynomial Refinement

Linear Optimization in Combined Space

Adaptive Basis Construction

Time-Dependent Extensions

7.10.2. Strengths and Advantages of DeePoly

7.10.3. Limitations and Challenges of DeePoly

7.11. Specialized Architectures

7.11.1. Novel Architectural Approaches

Convolutional Neural Operators

Recurrent Neural PDEs

Capsule Networks for PDEs

Neural ODEs for PDEs

Quantum Neural Networks for PDEs

7.11.2. Strengths and Advantages of Specialized Architectures

7.11.3. Limitations and Challenges of Specialized Architectures

8. Critical Analysis of Machine Learning-Based PDE Solvers

8.1. Architectural Foundations and Computational Complexity

8.2. Accuracy Landscape: From Machine Precision to Approximations

8.3. Multiscale Capability: The Persistent Challenge

8.4. Nonlinearity Handling: Beyond Linearization

8.5. Uncertainty Quantification: The Achilles’ Heel

8.6. Inference Speed: The Compelling Advantage

8.7. Implementation Complexity: The Hidden Cost

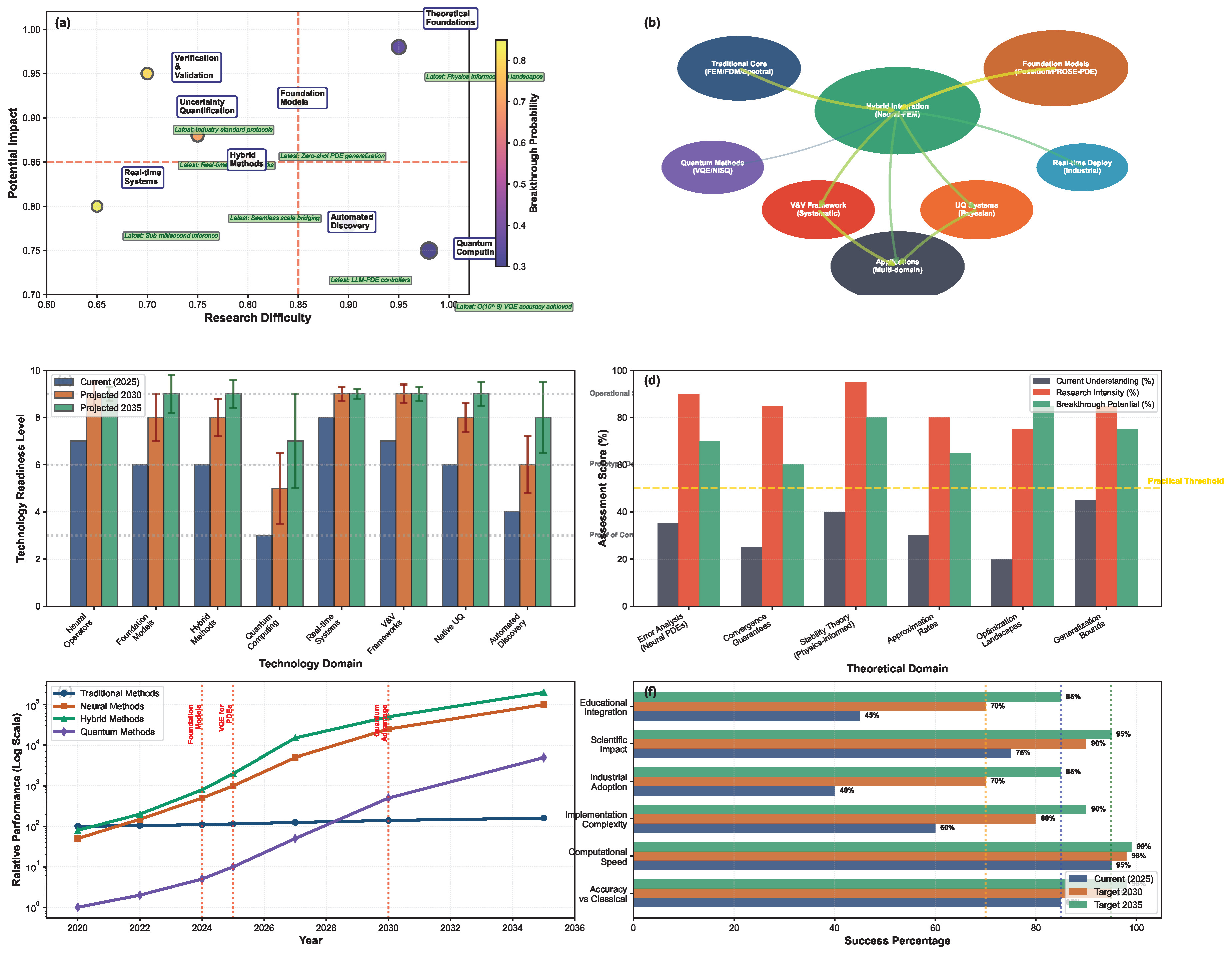

9. Synthesis and Comparative Framework

9.1. Performance Envelopes and Optimal Domains

9.2. The Accuracy-Efficiency-Robustness Trilemma

9.3. Hybrid Paradigms: The Path to Synthesis

9.4. Theoretical Foundations and Open Questions

10. Future Perspectives

10.1. Toward Foundation Models for PDEs

10.2. Quantum Computing and Neuromorphic Architectures

10.3. Automated Scientific Exploration and Inverse Design

10.4. Extreme-Scale Computing and Algorithmic Resilience

Conclusion

Acknowledgments

References

- Vallis, G.K. Atmospheric and oceanic fluid dynamics; Cambridge University Press, 2017.

- Majda, A. Introduction to PDEs and Waves for the Atmosphere and Ocean; Vol. 9, American Mathematical Soc., 2003.

- Cazorla, C.; Boronat, J. Simulation and understanding of atomic and molecular quantum crystals. Reviews of Modern Physics 2017, 89, 035003. [CrossRef]

- Powell, J.L.; Crasemann, B. Quantum mechanics; Courier Dover Publications, 2015.

- Lemarié-Rieusset, P.G. The Navier-Stokes problem in the 21st century; Chapman and Hall/CRC, 2023.

- Tsai, T.P. Lectures on Navier-Stokes equations; Vol. 192, American Mathematical Soc., 2018.

- Huray, P.G. Maxwell’s equations; John Wiley & Sons, 2009.

- Fitzpatrick, R. Maxwell’s Equations and the Principles of Electromagnetism; Jones & Bartlett Publishers, 2008.

- Kondo, S.; Miura, T. Reaction-diffusion model as a framework for understanding biological pattern formation. science 2010, 329, 1616–1620. [CrossRef]

- Golbabai, A.; Nikan, O.; Nikazad, T. Numerical analysis of time fractional Black–Scholes European option pricing model arising in financial market. Computational and Applied Mathematics 2019, 38, 1–24.

- Rude, U.; Willcox, K.; McInnes, L.C.; Sterck, H.D. Research and education in computational science and engineering. Siam Review 2018, 60, 707–754. [CrossRef]

- Alber, M.; Buganza Tepole, A.; Cannon, W.R.; De, S.; Dura-Bernal, S.; Garikipati, K.; Karniadakis, G.; Lytton, W.W.; Perdikaris, P.; Petzold, L.; et al. Integrating machine learning and multiscale modeling—perspectives, challenges, and opportunities in the biological, biomedical, and behavioral sciences. NPJ digital medicine 2019, 2, 115.

- Downey, A. Think complexity: complexity science and computational modeling; " O’Reilly Media, Inc.", 2018.

- Faroughi, S.A.; Pawar, N.; Fernandes, C.; Raissi, M.; Das, S.; Kalantari, N.K.; Mahjour, S.K. Physics-guided, physics-informed, and physics-encoded neural networks in scientific computing. arXiv preprint arXiv:2211.07377 2022.

- Cai, S.; Mao, Z.; Wang, Z.; Yin, M.; Karniadakis, G.E. Physics-informed neural networks (PINNs) for fluid mechanics: A review. Acta Mechanica Sinica 2021, 37, 1727–1738.

- Zhao, C.; Zhang, F.; Lou, W.; Wang, X.; Yang, J. A comprehensive review of advances in physics-informed neural networks and their applications in complex fluid dynamics. Physics of Fluids 2024, 36. [CrossRef]

- Strauss, W.A. Partial differential equations: An introduction; John Wiley & Sons, 2007.

- Olver, P.J.; et al. Introduction to partial differential equations; Vol. 1, Springer, 2014.

- Dupaigne, L. Stable solutions of elliptic partial differential equations; CRC press, 2011.

- Ponce, A.C. Elliptic PDEs, measures and capacities; 2016.

- Nochetto, R.H.; Otárola, E.; Salgado, A.J. A PDE approach to space-time fractional parabolic problems. SIAM Journal on Numerical Analysis 2016, 54, 848–873.

- Perthame, B.; Perthame, B. Parabolic equations in biology; Springer, 2015.

- El-Farra, N.H.; Armaou, A.; Christofides, P.D. Analysis and control of parabolic PDE systems with input constraints. Automatica 2003, 39, 715–725.

- Munteanu, I. Boundary stabilization of parabolic equations; Springer, 2019.

- Nobile, F.; Tempone, R. Analysis and implementation issues for the numerical approximation of parabolic equations with random coefficients. International journal for numerical methods in engineering 2009, 80, 979–1006. [CrossRef]

- Godlewski, E.; Raviart, P.A. Numerical approximation of hyperbolic systems of conservation laws; Vol. 118, Springer Science & Business Media, 2013.

- LeFloch, P.G. Hyperbolic Systems of Conservation Laws: The theory of classical and nonclassical shock waves; Springer Science & Business Media, 2002.

- LeVeque, R.J. Finite volume methods for hyperbolic problems; Vol. 31, Cambridge university press, 2002.

- Drachev, V.P.; Podolskiy, V.A.; Kildishev, A.V. Hyperbolic metamaterials: new physics behind a classical problem. Optics express 2013, 21, 15048–15064.

- Schneider, G.; Uecker, H. Nonlinear PDEs; Vol. 182, American Mathematical Soc., 2017.

- Logan, J.D. An introduction to nonlinear partial differential equations; John Wiley & Sons, 2008.

- Carinena, J.F.; Grabowski, J.; Marmo, G. Superposition rules, Lie theorem, and partial differential equations. Reports on Mathematical Physics 2007, 60, 237–258. [CrossRef]

- Anderson, R.; Harnad, J.; Winternitz, P. Systems of ordinary differential equations with nonlinear superposition principles. Physica D: Nonlinear Phenomena 1982, 4, 164–182.

- Saad, Y. Iterative methods for sparse linear systems; SIAM, 2003.

- Kabanikhin, S.I. Definitions and examples of inverse and ill-posed problems 2008.

- Okereke, M.; Keates, S.; Okereke, M.; Keates, S. Boundary conditions. Finite Element Applications: A Practical Guide to the FEM Process 2018, pp. 243–297.

- Graham, I.G.; Lechner, P.O.; Scheichl, R. Domain decomposition for multiscale PDEs. Numerische Mathematik 2007, 106, 589–626. [CrossRef]

- Tanaka, M.; Sladek, V.; Sladek, J. Regularization techniques applied to boundary element methods 1994.

- Christofides, P.D.; Chow, J. Nonlinear and robust control of PDE systems: Methods and applications to transport-reaction processes. Appl. Mech. Rev. 2002, 55, B29–B30. [CrossRef]

- Klainerman, S. PDE as a unified subject. In Visions in Mathematics: GAFA 2000 Special Volume, Part I; Springer, 2010; pp. 279–315.

- Öffner, P. Approximation and stability properties of numerical methods for hyperbolic conservation laws; Springer Nature, 2023.

- Mazumder, S. Numerical methods for partial differential equations: finite difference and finite volume methods; Academic Press, 2015.

- Karniadakis, G.E.; Kevrekidis, I.G.; Lu, L.; Perdikaris, P.; Wang, S.; Yang, L. Physics-informed machine learning. Nature Reviews Physics 2021, 3, 422–440.

- Markidis, S. The old and the new: Can physics-informed deep-learning replace traditional linear solvers? Frontiers in big Data 2021, 4, 669097.

- Huang, S.; Feng, W.; Tang, C.; He, Z.; Yu, C.; Lv, J. Partial differential equations meet deep neural networks: A survey. IEEE Transactions on Neural Networks and Learning Systems 2025.

- Lawal, Z.K.; Yassin, H.; Lai, D.T.C.; Che Idris, A. Physics-informed neural network (PINN) evolution and beyond: A systematic literature review and bibliometric analysis. Big Data and Cognitive Computing 2022, 6, 140. [CrossRef]

- Toscano, J.D.; Oommen, V.; Varghese, A.J.; Zou, Z.; Ahmadi Daryakenari, N.; Wu, C.; Karniadakis, G.E. From pinns to pikans: Recent advances in physics-informed machine learning. Machine Learning for Computational Science and Engineering 2025, 1, 1–43. [CrossRef]

- Gonon, L.; Jentzen, A.; Kuckuck, B.; Liang, S.; Riekert, A.; von Wurstemberger, P. An Overview on Machine Learning Methods for Partial Differential Equations: from Physics Informed Neural Networks to Deep Operator Learning. arXiv preprint arXiv:2408.13222 2024.

- Kovachki, N.; Li, Z.; Liu, B.; Azizzadenesheli, K.; Bhattacharya, K.; Stuart, A.; Anandkumar, A. Neural operator: Learning maps between function spaces with applications to pdes. Journal of Machine Learning Research 2023, 24, 1–97.

- Willard, J.; Jia, X.; Xu, S.; Steinbach, M.; Kumar, V. Integrating physics-based modeling with machine learning: A survey. arXiv preprint arXiv:2003.04919 2020, 1, 1–34.

- Muther, T.; Dahaghi, A.K.; Syed, F.I.; Van Pham, V. Physical laws meet machine intelligence: current developments and future directions. Artificial Intelligence Review 2023, 56, 6947–7013.

- Hansen, D.; Maddix, D.C.; Alizadeh, S.; Gupta, G.; Mahoney, M.W. Learning physical models that can respect conservation laws. In Proceedings of the International Conference on Machine Learning. PMLR, 2023, pp. 12469–12510.

- Azevedo, B.F.; Rocha, A.M.A.; Pereira, A.I. Hybrid approaches to optimization and machine learning methods: a systematic literature review. Machine Learning 2024, 113, 4055–4097.

- von Rueden, L.; Mayer, S.; Sifa, R.; Bauckhage, C.; Garcke, J. Combining machine learning and simulation to a hybrid modelling approach: Current and future directions. In Proceedings of the Advances in Intelligent Data Analysis XVIII: 18th International Symposium on Intelligent Data Analysis, IDA 2020, Konstanz, Germany, April 27–29, 2020, Proceedings 18. Springer, 2020, pp. 548–560.

- Rai, R.; Sahu, C.K. Driven by data or derived through physics? a review of hybrid physics guided machine learning techniques with cyber-physical system (cps) focus. IEEe Access 2020, 8, 71050–71073. [CrossRef]

- Mattheij, R.; Molenaar, J. Ordinary differential equations in theory and practice; SIAM, 2002.

- Ashyralyev, A.; Sobolevskii, P.E. Well-posedness of parabolic difference equations; Vol. 69, Birkhäuser, 2012.

- Fernández-Real, X.; Ros-Oton, X. Regularity theory for elliptic PDE; 2022.

- Kozono, H.; Yanagisawa, T. Generalized Lax-Milgram theorem in Banach spaces and its application to the elliptic system of boundary value problems. manuscripta mathematica 2013, 141, 637–662.

- Schneider, C. Theory and Background Material for PDEs. Beyond Sobolev and Besov: Regularity of Solutions of PDEs and Their Traces in Function Spaces 2021, pp. 115–143.

- Mugnolo, D. Semigroup methods for evolution equations on networks; Vol. 20, Springer, 2014.

- Pavel, N.H. Nonlinear evolution operators and semigroups: Applications to partial differential equations; Vol. 1260, Springer, 2006.

- Xiao, T.J.; Liang, J. The Cauchy problem for higher order abstract differential equations; Springer, 2013.

- Pata, V.; et al. Fixed point theorems and applications; Vol. 116, Springer, 2019.

- Fabian, M.; Habala, P.; Hájek, P.; Montesinos, V.; Zizler, V. Banach space theory: The basis for linear and nonlinear analysis; Springer Science & Business Media, 2011.

- Sarra, S.A. The method of characteristics with applications to conservation laws. Journal of Online mathematics and its Applications 2003, 3, 1–16.

- Showalter, R.E. Monotone operators in Banach space and nonlinear partial differential equations; Vol. 49, American Mathematical Soc., 2013.

- Antontsev, S.N.; Díaz, J.I.; Shmarev, S.; Kassab, A. Energy Methods for Free Boundary Problems: Applications to Nonlinear PDEs and Fluid Mechanics. Progress in Nonlinear Differential Equations and Their Applications, Vol 48. Appl. Mech. Rev. 2002, 55, B74–B75.

- Wang, X.J.; et al. Schauder estimates for elliptic and parabolic equations. Chinese Annals of Mathematics-Series B 2006, 27, 637. [CrossRef]

- Brezis, H.; Brézis, H. Functional analysis, Sobolev spaces and partial differential equations; Vol. 2, Springer, 2011.

- Sell, G.R.; You, Y. Dynamics of evolutionary equations; Vol. 143, Springer Science & Business Media, 2013.

- Kresin, G.; Maz_i_a_, V. Maximum principles and sharp constants for solutions of elliptic and parabolic systems; Number 183, American Mathematical Soc., 2012.

- Pucci, P.; Serrin, J. The strong maximum principle revisited. Journal of Differential Equations 2004, 196, 1–66. [CrossRef]

- Han, J.; Jentzen, A.; E, W. Solving high-dimensional partial differential equations using deep learning. Proceedings of the National Academy of Sciences 2018, 115, 8505–8510.

- Schwab, C.; Gittelson, C.J. Sparse tensor discretizations of high-dimensional parametric and stochastic PDEs. Acta Numerica 2011, 20, 291–467.

- Continentino, M. Quantum scaling in many-body systems; Cambridge University Press, 2017.

- Frey, R.; Polte, U. Nonlinear Black–Scholes equations in finance: Associated control problems and properties of solutions. SIAM Journal on Control and Optimization 2011, 49, 185–204.

- Berezin, F.A.; Shubin, M. The Schrödinger Equation; Vol. 66, Springer Science & Business Media, 2012.

- Chen, Y.; Khoo, Y. Combining Monte Carlo and Tensor-network Methods for Partial Differential Equations via Sketching. arXiv preprint arXiv:2305.17884 2023.

- Hu, Z.; Shukla, K.; Karniadakis, G.E.; Kawaguchi, K. Tackling the curse of dimensionality with physics-informed neural networks. Neural Networks 2024, 176, 106369.

- Hunt, J.C.R.; Vassilicos, J. Kolmogorov’s contributions to the physical and geometrical understanding of small-scale turbulence and recent developments. Proceedings of the Royal Society of London. Series A: Mathematical and Physical Sciences 1991, 434, 183–210.

- Klewicki, J.C. Reynolds number dependence, scaling, and dynamics of turbulent boundary layers 2010.

- Sulem, C.; Sulem, P.L. The nonlinear Schrödinger equation: self-focusing and wave collapse; Vol. 139, Springer Science & Business Media, 2007.

- Lim, Y.; Le Lann, J.; Joulia, X. Accuracy, temporal performance and stability comparisons of discretization methods for the numerical solution of Partial Differential Equations (PDEs) in the presence of steep moving fronts. Computers & Chemical Engineering 2001, 25, 1483–1492.

- Babuska, I.; Flaherty, J.E.; Henshaw, W.D.; Hopcroft, J.E.; Oliger, J.E.; Tezduyar, T. Modeling, mesh generation, and adaptive numerical methods for partial differential equations; Vol. 75, Springer Science & Business Media, 2012.

- Hairer, E.; Hochbruck, M.; Iserles, A.; Lubich, C. Geometric numerical integration. Oberwolfach Reports 2006, 3, 805–882.

- Formaggia, L.; Saleri, F.; Veneziani, A. Solving numerical PDEs: problems, applications, exercises; Springer Science & Business Media, 2012.

- Martins, J.R. Aerodynamic design optimization: Challenges and perspectives. Computers & Fluids 2022, 239, 105391.

- Sóbester, A.; Forrester, A.I. Aircraft aerodynamic design: geometry and optimization; John Wiley & Sons, 2014.

- Sikkandar, M.Y.; Sudharsan, N.M.; Begum, S.S.; Ng, E. Computational fluid dynamics: A technique to solve complex biomedical engineering problems-A review. WSEAS Transactions on Biology and Biomedicine 2019, 16, 121–137.

- Kainz, W.; Neufeld, E.; Bolch, W.E.; Graff, C.G.; Kim, C.H.; Kuster, N.; Lloyd, B.; Morrison, T.; Segars, P.; Yeom, Y.S.; et al. Advances in computational human phantoms and their applications in biomedical engineering—a topical review. IEEE transactions on radiation and plasma medical sciences 2018, 3, 1–23.

- Wittek, A.; Grosland, N.M.; Joldes, G.R.; Magnotta, V.; Miller, K. From finite element meshes to clouds of points: a review of methods for generation of computational biomechanics models for patient-specific applications. Annals of biomedical engineering 2016, 44, 3–15.

- Jeffrey, M.R.; et al. Modeling with nonsmooth dynamics; Springer, 2020.

- Keyes, D.E.; McInnes, L.C.; Woodward, C.; Gropp, W.; Myra, E.; Pernice, M.; Bell, J.; Brown, J.; Clo, A.; Connors, J.; et al. Multiphysics simulations: Challenges and opportunities. The International Journal of High Performance Computing Applications 2013, 27, 4–83.

- Abbasi, J.; Jagtap, A.D.; Moseley, B.; Hiorth, A.; Andersen, P.. Challenges and advancements in modeling shock fronts with physics-informed neural networks: A review and benchmarking study. arXiv preprint arXiv:2503.17379 2025. [CrossRef]

- Serre, D. Systems of Conservation Laws 1: Hyperbolicity, entropies, shock waves; Cambridge University Press, 1999.

- Christodoulou, D. The Euler equations of compressible fluid flow. Bulletin of the American Mathematical Society 2007, 44, 581–602. [CrossRef]

- Gottlieb, D.; Shu, C.W. On the Gibbs phenomenon and its resolution. SIAM review 1997, 39, 644–668.

- Shu, C.W. Essentially non-oscillatory and weighted essentially non-oscillatory schemes. Acta Numerica 2020, 29, 701–762. [CrossRef]

- Li, Z.; Ito, K. The immersed interface method: numerical solutions of PDEs involving interfaces and irregular domains; SIAM, 2006.

- De Borst, R. Challenges in computational materials science: Multiple scales, multi-physics and evolving discontinuities. Computational Materials Science 2008, 43, 1–15.

- Gupta, S.C. The classical Stefan problem: basic concepts, modelling and analysis with quasi-analytical solutions and methods; Vol. 45, Elsevier, 2017.

- Weinan, E. Principles of multiscale modeling; Cambridge University Press, 2011.

- Peng, G.C.; Alber, M.; Buganza Tepole, A.; Cannon, W.R.; De, S.; Dura-Bernal, S.; Garikipati, K.; Karniadakis, G.; Lytton, W.W.; Perdikaris, P.; et al. Multiscale modeling meets machine learning: What can we learn? Archives of Computational Methods in Engineering 2021, 28, 1017–1037. [CrossRef]

- Vanden-Eijnden, E. Heterogeneous multiscale methods: a review. Communications in Computational Physics2 (3) 2007, pp. 367–450.

- Huang, J.; Cao, L.; Yang, C. A multiscale algorithm for radiative heat transfer equation with rapidly oscillating coefficients. Applied Mathematics and Computation 2015, 266, 149–168.

- Pavliotis, G.A.; Stuart, A. Multiscale methods: averaging and homogenization; Vol. 53, Springer Science & Business Media, 2008.

- Kuehn, C.; et al. Multiple time scale dynamics; Vol. 191, Springer, 2015.

- Archibald, T.; Fraser, C.; Grattan-Guinness, I. The history of differential equations, 1670–1950. Oberwolfach reports 2005, 1, 2729–2794.

- Narasimhan, T.N. Fourier’s heat conduction equation: History, influence, and connections. Reviews of Geophysics 1999, 37, 151–172. [CrossRef]

- Garabedian, P.R. Partial differential equations; Vol. 325, American Mathematical Society, 2023.

- Duffy, D.G. Green’s functions with applications; Chapman and Hall/CRC, 2015.

- Brigham, E.O. The fast Fourier transform and its applications; Prentice-Hall, Inc., 1988.

- Bender, C.M.; Orszag, S.A. Advanced mathematical methods for scientists and engineers I: Asymptotic methods and perturbation theory; Springer Science & Business Media, 2013.

- Maslov, V.P. The Complex WKB Method for Nonlinear Equations I: Linear Theory; Vol. 16, Birkhäuser, 2012.

- Verhulst, F. Methods and applications of singular perturbations: boundary layers and multiple timescale dynamics; Vol. 50, Springer Science & Business Media, 2006.

- Van den Ende, J.; Kemp, R. Technological transformations in history: how the computer regime grew out of existing computing regimes. Research policy 1999, 28, 833–851.

- Benzi, M. Key moments in the history of numerical analysis. In Proceedings of the SIAM Applied Linear Algebra Conference, 2009, Vol. 5, p. 34.

- Thomas, J.W. Numerical partial differential equations: finite difference methods; Vol. 22, Springer Science & Business Media, 2013.

- Warming, R.F.; Hyett, B.J. The modified equation approach to the stability and accuracy analysis of finite-difference methods. Journal of computational physics 1974, 14, 159–179.

- Fenner, R.T. Finite element methods for engineers; World Scientific Publishing Company, 2013.

- Thomée, V. Galerkin finite element methods for parabolic problems; Vol. 25, Springer Science & Business Media, 2007.

- Grätsch, T.; Bathe, K.J. A posteriori error estimation techniques in practical finite element analysis. Computers & structures 2005, 83, 235–265.

- Guo, B. Spectral methods and their applications; World Scientific, 1998.

- Boyd, J.P. Chebyshev and Fourier spectral methods; Courier Corporation, 2001.

- Guinot, V. Godunov-type schemes: an introduction for engineers; Elsevier, 2003.

- Fulton, S.R.; Ciesielski, P.E.; Schubert, W.H. Multigrid methods for elliptic problems: A review. Monthly Weather Review 1986, 114, 943–959.

- Brandt, A.; Dinar, N. Multigrid solutions to elliptic flow problems. In Numerical methods for partial differential equations; Elsevier, 1979; pp. 53–147.

- Sarris, C.D. Adaptive mesh refinement in time-domain numerical electromagnetics; Morgan & Claypool Publishers, 2006.

- Venditti, D.A.; Darmofal, D.L. Grid adaptation for functional outputs: application to two-dimensional inviscid flows. Journal of Computational Physics 2002, 176, 40–69.

- Zhang, W.; Myers, A.; Gott, K.; Almgren, A.; Bell, J. AMReX: Block-structured adaptive mesh refinement for multiphysics applications. The International Journal of High Performance Computing Applications 2021, 35, 508–526. [CrossRef]

- Mathew, T.P. Domain decomposition methods for the numerical solution of partial differential equations; Springer, 2008.

- Lions, P.L.; et al. On the Schwarz alternating method. I. In Proceedings of the First international symposium on domain decomposition methods for partial differential equations. Paris, France, 1988, Vol. 1, p. 42.

- Pechstein, C. Finite and boundary element tearing and interconnecting solvers for multiscale problems; Vol. 90, Springer Science & Business Media, 2012.

- Badia, S.; Nguyen, H. Balancing domain decomposition by constraints and perturbation. SIAM Journal on Numerical Analysis 2016, 54, 3436–3464.

- Shu, C.W. Discontinuous Galerkin methods: general approach and stability. Numerical solutions of partial differential equations 2009, 201, 1–44.

- Cockburn, B.; Karniadakis, G.E.; Shu, C.W. Discontinuous Galerkin methods: theory, computation and applications; Vol. 11, Springer Science & Business Media, 2012.

- Li, Z.; Kovachki, N.; Azizzadenesheli, K.; Liu, B.; Bhattacharya, K.; Stuart, A.; Anandkumar, A. Fourier neural operator for parametric partial differential equations. arXiv preprint arXiv:2010.08895 2020.

- Li, Z.; Huang, D.Z.; Liu, B.; Anandkumar, A. Fourier neural operator with learned deformations for pdes on general geometries. Journal of Machine Learning Research 2023, 24, 1–26.

- McCulloch, W.S.; Pitts, W. A logical calculus of the ideas immanent in nervous activity. The bulletin of mathematical biophysics 1943, 5, 115–133. [CrossRef]

- Rosenblatt, F. The perceptron: a probabilistic model for information storage and organization in the brain. Psychological review 1958, 65, 386.

- Hebb, D.O. The organization of behavior: A neuropsychological theory; Psychology press, 2005.

- Ivakhnenko, A.G. Polynomial theory of complex systems. IEEE transactions on Systems, Man, and Cybernetics 2007, pp. 364–378.

- Linnainmaa, S. The representation of the cumulative rounding error of an algorithm as a Taylor expansion of the local rounding errors. PhD thesis, Master’s Thesis (in Finnish), Univ. Helsinki, 1970.

- Rumelhart, D.E.; Hinton, G.E.; Williams, R.J. Learning representations by back-propagating errors. nature 1986, 323, 533–536.

- Cybenko, G. Approximation by superpositions of a sigmoidal function. Mathematics of control, signals and systems 1989, 2, 303–314.

- Hornik, K.; Stinchcombe, M.; White, H. Multilayer feedforward networks are universal approximators. Neural networks 1989, 2, 359–366.

- Hochreiter, S.; Schmidhuber, J. Long short-term memory. Neural computation 1997, 9, 1735–1780.

- LeCun, Y.; Boser, B.; Denker, J.S.; Henderson, D.; Howard, R.E.; Hubbard, W.; Jackel, L.D. Backpropagation applied to handwritten zip code recognition. Neural computation 1989, 1, 541–551.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. Imagenet classification with deep convolutional neural networks. Advances in neural information processing systems 2012, 25.

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980 2014.

- Srivastava, N.; Hinton, G.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Dropout: a simple way to prevent neural networks from overfitting. The journal of machine learning research 2014, 15, 1929–1958.

- Ioffe, S.; Szegedy, C. Batch normalization: Accelerating deep network training by reducing internal covariate shift. In Proceedings of the International conference on machine learning. pmlr, 2015, pp. 448–456.

- Raissi, M.; Perdikaris, P.; Karniadakis, G.E. Physics-informed neural networks: A deep learning framework for solving forward and inverse problems involving nonlinear partial differential equations. Journal of Computational physics 2019, 378, 686–707. [CrossRef]

- Lu, L.; Jin, P.; Pang, G.; Zhang, Z.; Karniadakis, G.E. Learning nonlinear operators via DeepONet based on the universal approximation theorem of operators. Nature machine intelligence 2021, 3, 218–229.

- Pfaff, T.; Fortunato, M.; Sanchez-Gonzalez, A.; Battaglia, P. Learning mesh-based simulation with graph networks. In Proceedings of the International conference on learning representations, 2020.

- Pestourie, R.; Mroueh, Y.; Rackauckas, C.; Das, P.; Johnson, S.G. Physics-enhanced deep surrogates for partial differential equations. Nature Machine Intelligence 2023, 5, 1458–1465.

- Finn, C.; Abbeel, P.; Levine, S. Model-agnostic meta-learning for fast adaptation of deep networks. In Proceedings of the International conference on machine learning. PMLR, 2017, pp. 1126–1135.

- Rigas, S.; Papachristou, M.; Papadopoulos, T.; Anagnostopoulos, F.; Alexandridis, G. Adaptive training of grid-dependent physics-informed kolmogorov-arnold networks. IEEE Access 2024.

- Jacob, B.; Howard, A.A.; Stinis, P. Spikans: Separable physics-informed kolmogorov-arnold networks. arXiv preprint arXiv:2411.06286 2024.

- Lele, S.K. Compact finite difference schemes with spectral-like resolution. Journal of computational physics 1992, 103, 16–42.

- Don, W.S.; Borges, R. Accuracy of the weighted essentially non-oscillatory conservative finite difference schemes. Journal of Computational Physics 2013, 250, 347–372.

- Zienkiewicz, O.C.; Taylor, R.L.; Zhu, J.Z. The finite element method: its basis and fundamentals; Elsevier, 2005.

- Morin, P.; Nochetto, R.H.; Siebert, K.G. Convergence of adaptive finite element methods. SIAM review 2002, 44, 631–658.

- Bangerth, W.; Rannacher, R. Adaptive finite element methods for differential equations; Springer Science & Business Media, 2003.

- Zeng, W.; Liu, G. Smoothed finite element methods (S-FEM): an overview and recent developments. Archives of Computational Methods in Engineering 2018, 25, 397–435.

- Barth, T.; Herbin, R.; Ohlberger, M. Finite volume methods: foundation and analysis. Encyclopedia of computational mechanics second edition 2018, pp. 1–60.

- Feng, X. Cell-centered finite volume methods. Magnetohydrodynamic Modeling of the Solar Corona and Heliosphere 2020, pp. 125–337.

- Erath, C.; Praetorius, D. Adaptive vertex-centered finite volume methods with convergence rates. SIAM Journal on Numerical Analysis 2016, 54, 2228–2255.

- Elliott, D.F.; Rao, K.R. Fast transforms algorithms, analyses, applications; Elsevier, 1983.

- Van de Vosse, F.; Minev, P. Spectral element methods: theory and applications 1996.

- Kopriva, D.A. Algorithms for Non-Periodic Functions. In Implementing Spectral Methods for Partial Differential Equations: Algorithms for Scientists and Engineers; Springer, 2009; pp. 59–87.

- Širca, S.; Horvat, M. Spectral Methods for PDE. Computational Methods for Physicists: Compendium for Students 2012, pp. 575–620.

- Dubey, A.; Almgren, A.; Bell, J.; Berzins, M.; Brandt, S.; Bryan, G.; Colella, P.; Graves, D.; Lijewski, M.; Löffler, F.; et al. A survey of high level frameworks in block-structured adaptive mesh refinement packages. Journal of Parallel and Distributed Computing 2014, 74, 3217–3227.

- Vanek, P.; Mandel, J.; Brezina, M. Algebraic multigrid on unstructured meshes. UCD/CCM Report 1994, 34, 123–146.

- Mavriplis, D.J. Multigrid techniques for unstructured meshes. Technical report, 1995.

- Duarte, C.A.; Oden, J.T. A review of some meshless methods to solve partial differential equations; Texas Institute for Computational and Applied Mathematics Austin, TX, 1995.

- Li, H.; Mulay, S.S. Meshless methods and their numerical properties; CRC press, 2013.

- Alves, C.J. On the choice of source points in the method of fundamental solutions. Engineering analysis with boundary elements 2009, 33, 1348–1361.

- Fornberg, B.; Flyer, N. Solving PDEs with radial basis functions. Acta Numerica 2015, 24, 215–258.

- Lind, S.J.; Rogers, B.D.; Stansby, P.K. Review of smoothed particle hydrodynamics: towards converged Lagrangian flow modelling. Proceedings of the royal society A 2020, 476, 20190801.

- Katsikadelis, J.T. The boundary element method for engineers and scientists: theory and applications; Academic Press, 2016.

- Agrawal, V.; Gautam, S.S. IGA: a simplified introduction and implementation details for finite element users. Journal of The Institution of Engineers (India): Series C 2019, 100, 561–585.

- Cervera, M.; Barbat, G.; Chiumenti, M.; Wu, J.Y. A comparative review of XFEM, mixed FEM and phase-field models for quasi-brittle cracking. Archives of Computational Methods in Engineering 2022, 29, 1009–1083.

- Cuomo, S.; Di Cola, V.S.; Giampaolo, F.; Rozza, G.; Raissi, M.; Piccialli, F. Scientific machine learning through physics–informed neural networks: Where we are and what’s next. Journal of Scientific Computing 2022, 92, 88.

- Kharazmi, E.; Zhang, Z.; Karniadakis, G.E. Variational physics-informed neural networks for solving partial differential equations. arXiv preprint arXiv:1912.00873 2019.

- Rojas, S.; Maczuga, P.; Muñoz-Matute, J.; Pardo, D.; Paszyński, M. Robust variational physics-informed neural networks. Computer Methods in Applied Mechanics and Engineering 2024, 425, 116904.

- Jagtap, A.D.; Kharazmi, E.; Karniadakis, G.E. Conservative physics-informed neural networks on discrete domains for conservation laws: Applications to forward and inverse problems. Computer Methods in Applied Mechanics and Engineering 2020, 365, 113028.

- Ji, X.; Jiao, Y.; Lu, X.; Song, P.; Wang, F. Deep ritz method for elliptical multiple eigenvalue problems. Journal of Scientific Computing 2024, 98, 48.

- Xu, X.; Huang, Z. Refined generalization analysis of the Deep Ritz Method and Physics-Informed Neural Networks. arXiv preprint arXiv:2401.12526 2024.

- Zang, Y.; Bao, G.; Ye, X.; Zhou, H. Weak adversarial networks for high-dimensional partial differential equations. Journal of Computational Physics 2020, 411, 109409.

- Oliva, P.V.; Wu, Y.; He, C.; Ni, H. Towards fast weak adversarial training to solve high dimensional parabolic partial differential equations using XNODE-WAN. Journal of Computational Physics 2022, 463, 111233.

- Jagtap, A.D.; Karniadakis, G.E. Extended physics-informed neural networks (XPINNs): A generalized space-time domain decomposition based deep learning framework for nonlinear partial differential equations. Communications in Computational Physics 2020, 28.

- Hu, Z.; Jagtap, A.D.; Karniadakis, G.E.; Kawaguchi, K. When do extended physics-informed neural networks (XPINNs) improve generalization? arXiv preprint arXiv:2109.09444 2021.

- Meng, X.; Karniadakis, G.E. A composite neural network that learns from multi-fidelity data: Application to function approximation and inverse PDE problems. Journal of Computational Physics 2020, 401, 109020.

- Taghizadeh, M.; Nabian, M.A.; Alemazkoor, N. Multi-fidelity physics-informed generative adversarial network for solving partial differential equations. Journal of Computing and Information Science in Engineering 2024, 24, 111003.

- Wu, C.; Zhu, M.; Tan, Q.; Kartha, Y.; Lu, L. A comprehensive study of non-adaptive and residual-based adaptive sampling for physics-informed neural networks. Computer Methods in Applied Mechanics and Engineering 2023, 403, 115671.

- Torres, E.; Schiefer, J.; Niepert, M. Adaptive Physics-informed Neural Networks: A Survey. arXiv preprint arXiv:2503.18181 2025.

- Herde, M.; Raonic, B.; Rohner, T.; Käppeli, R.; Molinaro, R.; de Bézenac, E.; Mishra, S. Poseidon: Efficient foundation models for pdes. Advances in Neural Information Processing Systems 2024, 37, 72525–72624.

- Sun, J.; Liu, Y.; Zhang, Z.; Schaeffer, H. Towards a foundation model for partial differential equations: Multioperator learning and extrapolation. Physical Review E 2025, 111, 035304.

- Shi, Y.; Wei, P.; Feng, K.; Feng, D.C.; Beer, M. A survey on machine learning approaches for uncertainty quantification of engineering systems. Machine Learning for Computational Science and Engineering 2025, 1, 11.

- Bommasani, R. On the opportunities and risks of foundation models. arXiv preprint arXiv:2108.07258 2021.

- Rahaman, N.; Baratin, A.; Arpit, D.; Draxler, F.; Lin, M.; Hamprecht, F.; Bengio, Y.; Courville, A. On the spectral bias of neural networks. In Proceedings of the International conference on machine learning. PMLR, 2019, pp. 5301–5310.

- Xu, Z.Q.J.; Zhang, Y.; Luo, T. Overview frequency principle/spectral bias in deep learning. Communications on Applied Mathematics and Computation 2024, pp. 1–38.

- Ghojogh, B.; Ghodsi, A.; Karray, F.; Crowley, M. Reproducing Kernel Hilbert Space, Mercer’s Theorem, Eigenfunctions, Nyström Method, and Use of Kernels in Machine Learning: Tutorial and Survey. arXiv preprint arXiv:2106.08443 2021.

- Özgüler, A.B. Performance Evaluation of Variational Quantum Eigensolver and Quantum Dynamics Algorithms on the Advection-Diffusion Equation. arXiv preprint arXiv:2503.24045 2025.

- Tilly, J.; Chen, H.; Cao, S.; Picozzi, D.; Setia, K.; Li, Y.; Grant, E.; Wossnig, L.; Rungger, I.; Booth, G.H.; et al. The variational quantum eigensolver: a review of methods and best practices. Physics Reports 2022, 986, 1–128.

- Alipanah, H.; Zhang, F.; Yao, Y.; Thompson, R.; Nguyen, N.; Liu, J.; Givi, P.; McDermott, B.J.; Mendoza-Arenas, J.J. Quantum dynamics simulation of the advection-diffusion equation. arXiv preprint arXiv:2503.13729 2025.

- Marković, D.; Mizrahi, A.; Querlioz, D.; Grollier, J. Physics for neuromorphic computing. Nature Reviews Physics 2020, 2, 499–510.

- Kudithipudi, D.; Schuman, C.; Vineyard, C.M.; Pandit, T.; Merkel, C.; Kubendran, R.; Aimone, J.B.; Orchard, G.; Mayr, C.; Benosman, R.; et al. Neuromorphic computing at scale. Nature 2025, 637, 801–812.

- Tavanaei, A.; Ghodrati, M.; Kheradpisheh, S.R.; Masquelier, T.; Maida, A. Deep learning in spiking neural networks. Neural networks 2019, 111, 47–63.

- Verma, N.; Jia, H.; Valavi, H.; Tang, Y.; Ozatay, M.; Chen, L.Y.; Zhang, B.; Deaville, P. In-memory computing: Advances and prospects. IEEE solid-state circuits magazine 2019, 11, 43–55.

- Haensch, W.; Gokmen, T.; Puri, R. The next generation of deep learning hardware: Analog computing. Proceedings of the IEEE 2018, 107, 108–122.

- Soroco, M.; Song, J.; Xia, M.; Emond, K.; Sun, W.; Chen, W. PDE-Controller: LLMs for Autoformalization and Reasoning of PDEs. arXiv preprint arXiv:2502.00963 2025.

- Weng, K.; Du, L.; Li, S.; Lu, W.; Sun, H.; Liu, H.; Zhang, T. Autoformalization in the Era of Large Language Models: A Survey. arXiv preprint arXiv:2505.23486 2025.

- Anzt, H.; Boman, E.; Falgout, R.; Ghysels, P.; Heroux, M.; Li, X.; Curfman McInnes, L.; Tran Mills, R.; Rajamanickam, S.; Rupp, K.; et al. Preparing sparse solvers for exascale computing. Philosophical Transactions of the Royal Society A 2020, 378, 20190053.

- Shalf, J.; Dosanjh, S.; Morrison, J. Exascale computing technology challenges. In Proceedings of the International Conference on High Performance Computing for Computational Science. Springer, 2010, pp. 1–25.

- Al-hayanni, M.A.N.; Xia, F.; Rafiev, A.; Romanovsky, A.; Shafik, R.; Yakovlev, A. Amdahl’s law in the context of heterogeneous many-core systems–a survey. IET Computers & Digital Techniques 2020, 14, 133–148.

- Ivanov, D.; Chezhegov, A.; Kiselev, M.; Grunin, A.; Larionov, D. Neuromorphic artificial intelligence systems. Frontiers in Neuroscience 2022, 16, 959626.

- Muralidhar, R.; Borovica-Gajic, R.; Buyya, R. Energy efficient computing systems: Architectures, abstractions and modeling to techniques and standards. ACM Computing Surveys (CSUR) 2022, 54, 1–37.

- Heldens, S.; Hijma, P.; Werkhoven, B.V.; Maassen, J.; Belloum, A.S.; Van Nieuwpoort, R.V. The landscape of exascale research: A data-driven literature analysis. ACM Computing Surveys (CSUR) 2020, 53, 1–43.

| Method | Complexity | Mesh Adapt. | UQ | Nonlinearity Handling | Theory Bounds | Impl. Diff. | Error | Implementation Notes |
|---|---|---|---|---|---|---|---|---|
| Classical Finite Difference Methods | | | | | | | | |
| Finite Difference (FDM) | to | None | No | Explicit/Implicit | Partial | Low | to | Simple stencil operations; CFL stability conditions for explicit schemes |
| Compact FD Schemes | | None | No | Implicit | Strong | Medium | to | Higher-order accuracy; tridiagonal systems; Padé approximations |
| Weighted Essentially Non-Osc. | | None | No | Conservative | Strong | High | to | High-order shock capturing; nonlinear weights; TVD property |
| Classical Finite Element Methods | | | | | | | | |
| Standard FEM (h-version) | to | h-refine | Limited | Newton-Raphson | Strong | Medium | to | Variational formulation; sparse matrix assembly; a priori error estimates |
| p-Finite Element | to | p-refine | Limited | Newton-Raphson | Strong | High | to | High-order polynomials; hierarchical basis; exponential convergence |
| hp-Finite Element | to | hp-adapt | Limited | Newton-Raphson | Strong | Very High | to | Optimal convergence rates; automatic hp-adaptivity algorithms |
| Mixed Finite Element | to | h-adapt | No | Saddle point | Strong | High | to | inf-sup stability; Brezzi conditions; simultaneous approximation |
| Discontinuous Galerkin | to | hp-adapt | No | Explicit/Implicit | Strong | High | to | Local conservation; numerical fluxes; upwinding for hyperbolic PDEs |
| Spectral and High-Order Methods | | | | | | | | |
| Global Spectral Method | | None | No | Pseudo-spectral | Strong | Medium | to | FFT-based transforms; exponential accuracy for smooth solutions |
| Spectral Element Method | | p-adapt | No | Newton-Raphson | Strong | High | to | Gauss-Lobatto-Legendre points; tensorized basis functions |
| Chebyshev Spectral | | None | No | Collocation | Strong | Medium | to | Chebyshev polynomials; Clenshaw-Curtis quadrature |
| Finite Volume and Conservative Methods | | | | | | | | |
| Finite Volume Method | to | r-adapt | No | Godunov/MUSCL | Partial | Medium | to | Conservation laws; Riemann solvers; flux limiters for monotonicity |
| Cell-Centered FV | | AMR | No | Conservative | Partial | Medium | to | Dual mesh approach; reconstruction procedures; slope limiters |
| Vertex-Centered FV | | Unstructured | No | Conservative | Partial | High | to | Median dual cells; edge-based data structures |
| Advanced Grid-Based Methods | | | | | | | | |
| Adaptive Mesh Refinement | to | Dynamic | No | Explicit/Implicit | Partial | High | to | Hierarchical grid structures; error estimation; load balancing |
| Multigrid Method | | Limited | No | V/W-cycles | Strong | High | to | Prolongation/restriction operators; coarse grid correction |
| Algebraic Multigrid | | Graph-based | No | Nonlinear | Strong | Very High | to | Strength of connection; coarsening algorithms; smoothing |
| Block-Structured AMR | | Structured | No | Conservative | Partial | Very High | to | Berger-Colella framework; refluxing; subcycling in time |
| Meshless Methods | | | | | | | | |
| Method of Fundamental Sol. | | None | No | Direct solve | Strong | Medium | to | Fundamental solutions; boundary collocation; no mesh required |
| Radial Basis Functions | | None | No | Global interp. | Weak | High | to | Shape parameter selection; ill-conditioning issues |
| Meshless Local Petrov-Gal. | | Nodal | No | Moving LS | Partial | High | to | Local weak forms; weight functions; integration difficulties |
| Smooth Particle Hydrodynamics | | Lagrangian | No | Explicit | Weak | Medium | to | Kernel approximation; artificial viscosity; particle inconsistency |
| Specialized Classical Methods | | | | | | | | |
| Boundary Element Method | to | Surface | No | Integral eq. | Strong | High | to | Green’s functions; singular integrals; infinite domains |
| Fast Multipole BEM | | Surface | No | Hierarchical | Strong | Very High | to | Tree algorithms; multipole expansions; translation operators |
| Isogeometric Analysis | | k-refinement | No | Newton-Raphson | Strong | High | to | NURBS basis functions; exact geometry; higher continuity |
| eXtended FEM (XFEM) | | Enrichment | No | Level sets | Partial | Very High | to | Partition of unity; discontinuities without remeshing |
| Multiscale Methods | | | | | | | | |
| Multiscale FEM (MsFEM) | | Coarse | No | Implicit | Strong | High | to | Offline basis construction; scale separation; periodic microstructure |
| Generalized MsFEM | | Coarse | No | Implicit | Strong | Very High | to | Spectral basis functions; local eigenvalue problems; oversampling |
| Heterogeneous Multiscale | | Macro | Limited | Constrained | Strong | Very High | to | Macro-micro coupling; constrained problems; missing data |
| Localized Orthogonal Dec. | | Local | No | Implicit | Strong | Very High | to | Exponential decay; corrector problems; quasi-local operators |
| Variational Multiscale | | Coarse | No | Stabilized | Strong | High | to | Fine-scale modeling; residual-based stabilization; bubble functions |
| Equation-Free Methods | | Microscopic | No | Projective | Emerging | Very High | to | Coarse projective integration; gap-tooth schemes; patch dynamics |
| Two-Scale FEM | | Hierarchical | No | Computational | Strong | Very High | to | Representative volume elements; computational homogenization |
| Reduced Basis Method | | Parameter | Yes | Affine decomp. | Strong | High | to | Greedy selection; a posteriori error bounds; parametric problems |
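
The FDM entry in the table above notes that explicit schemes are subject to CFL stability conditions. As a minimal sketch (not taken from this survey), the pure-Python example below applies a first-order upwind scheme to the linear advection equation $u_t + a u_x = 0$ with $a > 0$ on a periodic grid. The names `upwind_advect` and `max_abs`, the grid size, and the two Courant numbers are illustrative assumptions. For this particular scheme the update is a convex combination of neighboring values when the Courant number $a\,\Delta t/\Delta x$ lies in $[0, 1]$, so the discrete maximum cannot grow; above 1 the amplitude grows without bound.

```python
import math

def upwind_advect(u0, cfl, steps):
    """Advance u_t + a u_x = 0 (a > 0) with first-order upwind differences.

    cfl = a * dt / dx is the Courant number. The grid is periodic:
    u[i - 1] with i = 0 wraps around to the last grid point.
    """
    u = list(u0)
    for _ in range(steps):
        u = [u[i] - cfl * (u[i] - u[i - 1]) for i in range(len(u))]
    return u

def max_abs(u):
    """Discrete maximum norm of a grid function."""
    return max(abs(v) for v in u)

n = 50  # number of grid points (illustrative choice)
u0 = [math.sin(2 * math.pi * i / n) for i in range(n)]

stable = upwind_advect(u0, cfl=0.8, steps=200)    # satisfies the CFL condition
unstable = upwind_advect(u0, cfl=1.5, steps=200)  # violates the CFL condition

print("max |u|, CFL 0.8:", max_abs(stable))
print("max |u|, CFL 1.5:", max_abs(unstable))
```

The stable run stays bounded by the initial amplitude (and is visibly damped, reflecting the numerical diffusion of first-order upwinding), while the run with Courant number 1.5 amplifies both the resolved wave and round-off noise.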

| Method Family | Key Principle | Typical Applications | Computational Scaling | Data Requirements |
|---|---|---|---|---|
| Physics-Informed NNs | Embed PDE in loss function | General PDEs, inverse problems | | Minimal |
| Neural Operators | Learn function-to-function mappings | Parametric PDEs, multi-query | | Moderate to High |
| Graph Neural Networks | Message passing on meshes | Irregular geometries, adaptive | | Moderate |
| Transformer-Based | Attention mechanisms | Long-range dependencies | to | High |
| Generative Models | Probabilistic solutions | Uncertainty quantification | Problem-dependent | Moderate |
| Hybrid Methods | Combine ML with traditional | Multi-physics, multi-scale | Varies | Moderate |
| Meta-Learning | Rapid adaptation | Few-shot problems, families | | Low per task |
| Physics Enhanced Deep Surrogates | Low-fidelity + neural correction | Complex physical systems | | ∼10× less |
| Random Feature Methods | Random feature functions | Bridge traditional/ML | | Low to Moderate |
| DeePoly Framework | Two-stage DNN+polynomial | High-order accuracy | | Moderate |
| Specialized Architectures | Domain-specific designs | Specific PDE classes | Architecture-dependent | Varies |

| Method | Architecture Type | Training Complex. | UQ | Multiscale Capability | Nonlinearity Handling | Inference Speed | Accuracy Range | Implementation Notes & Key Features |
|---|---|---|---|---|---|---|---|---|
| Physics-Informed Neural Networks (PINNs) Family | | | | | | | | |
| Physics-Informed NNs | Residual-based | | Limited | Moderate | Sensitive | Fast | to | Automatic differentiation for PDE residuals; weak BC enforcement; spectral bias issues |
| Variational PINNs | Weak formulation | | Moderate | Improved | Better | Fast | to | Galerkin projection; better conditioning than PINNs; requires integration |
| Conservative PINNs | Energy-preserving | | No | Moderate | Physical | Fast | to | Hamiltonian structure preservation; symplectic integration principles |
| Deep Ritz Method | Variational approach | | No | Limited | Smooth | Fast | to | Energy minimization; requires known energy functional; high-dimensional problems |
| Weak Adversarial Networks | Min-max formulation | | No | Moderate | Good | Medium | to | Adversarial training; dual formulation; improved boundary handling |
| Extended PINNs (XPINNs) | Domain decomposition | | No | Good | Moderate | Medium | to | Subdomain coupling; interface conditions; parallel training capability |
| Multi-fidelity PINNs | Hierarchical data | | Yes | Good | Moderate | Fast | to | Multiple data fidelities; transfer learning; uncertainty propagation |
| Adaptive PINNs | Residual-based adapt. | | Limited | Good | Moderate | Medium | to | Residual-based point refinement; gradient-enhanced sampling |
| Neural Operator Methods | | | | | | | | |
| Fourier Neural Operator | Fourier transform | | Ensemble | Limited | Periodic | Very Fast | to | FFT-based convolutions; resolution invariance; periodic boundary assumption |
| DeepONet | Branch-trunk arch. | | Dropout | Moderate | Smooth | Fast | to | Universal operator approximation; separable architecture; sensor placement critical |
| Graph Neural Operator | Graph convolution | | Limited | Good | Moderate | Fast | to | Irregular geometries; message passing; edge feature learning |
| Multipole Graph Networks | Hierarchical graphs | | No | Very Good | Good | Fast | to | Fast multipole method inspiration; multiscale graph pooling |
| Neural Integral Operator | Integral kernels | | Limited | Moderate | Good | Medium | to | Learned Green’s functions; non-local operators; memory intensive |
| Wavelet Neural Operator | Wavelet basis | | No | Very Good | Good | Fast | to | Multiscale wavelet decomposition; adaptive resolution; boundary wavelets |
| Transformer Neural Op. | Self-attention | | Attention | Good | Good | Medium | to | Global receptive field; positional encoding for coordinates |
| LSM-based Neural Op. | Least squares | | Variance | Moderate | Linear | Fast | to | Moving least squares kernels; local approximation; meshfree approach |
| Graph Neural Network Approaches | | | | | | | | |
| MeshGraphNets | Message passing | | Limited | Good | Good | Fast | to | Mesh-based GNNs; temporal evolution; learned physics dynamics |
| Neural Mesh Refinement | Adaptive graphs | | No | Very Good | Moderate | Medium | to | Dynamic mesh adaptation; error-driven refinement; graph coarsening |
| Multiscale GNNs | Hierarchical pooling | | Limited | Very Good | Good | Fast | to | Graph U-Net architecture; multiscale feature extraction |
| Physics-Informed GNNs | Residual constraints | | No | Good | Good | Fast | to | PDE residuals as graph losses; physics-constrained message passing |
| Geometric Deep Learning | Equivariant layers | | Limited | Moderate | Good | Fast | to | SE(3) equivariance; geometric priors; irreducible representations |
| Simplicial Neural Nets | Simplicial complexes | | No | Good | Moderate | Medium | to | Higher-order interactions; topological features; persistent homology |
| Transformer and Attention-Based Methods | | | | | | | | |
| Galerkin Transformer | Spectral attention | | Limited | Good | Good | Medium | to | Fourier attention mechanism; spectral bias mitigation |
| Factorized FNO | Low-rank approx. | | No | Moderate | Good | Fast | to | Tucker decomposition; reduced complexity; maintains accuracy |
| U-FNO | U-Net + FNO | | Limited | Very Good | Good | Fast | to | Encoder-decoder structure; multiscale processing; skip connections |
| Operator Transformer | Cross-attention | | Attention | Good | Good | Medium | to | Input-output cross-attention; flexible geometries |
| PDEformer | Autoregressive | | Limited | Moderate | Sequential | Medium | to | Time-stepping transformer; causal attention masks |
| Generative and Probabilistic Models | | | | | | | | |
| Score-based PDE Solvers | Diffusion models | | Native | Limited | Stochastic | Slow | to | Denoising score matching; probabilistic solutions; sampling-based inference |
| Variational Autoencoders | Latent space | | Native | Good | Moderate | Fast | to | Dimensionality reduction; probabilistic encoding; KL regularization |
| Normalizing Flows | Invertible transforms | | Native | Limited | Good | Medium | to | Exact likelihood computation; invertible neural networks |
| Neural Stochastic PDEs | Stochastic processes | | Native | Good | Stochastic | Medium | to | SDE neural networks; path sampling; Wiener process modeling |
| Bayesian Neural Nets | Posterior sampling | | Native | Moderate | Good | Slow | to | Weight uncertainty; Monte Carlo dropout; variational inference |
| Hybrid and Multi-Physics Methods | | | | | | | | |
| Neural-FEM Coupling | Hybrid discretization | | Limited | Very Good | Good | Medium | to | FEM backbone with neural corrections; domain decomposition |
| Multiscale Neural Nets | Scale separation | | Limited | Very Good | Good | Fast | to | Explicit scale separation; homogenization-inspired; scale bridging |
| Neural Homogenization | Effective properties | | Limited | Very Good | Moderate | Fast | to | Representative volume elements; effective medium theory |
| Multi-fidelity Networks | Data fusion | | Good | Good | Moderate | Fast | to | Information fusion; transfer learning; cost-accuracy tradeoffs |
| Physics-Guided Networks | Domain knowledge | | Limited | Good | Physics | Fast | to | Hard constraints; conservation laws; invariance properties |
| Specialized Architectures | | | | | | | | |
| Convolutional Neural Op. | CNN-based | | Limited | Limited | Good | Very Fast | to | Translation equivariance; local receptive fields; parameter sharing |
| Recurrent Neural PDE | Sequential processing | | Limited | Moderate | Temporal | Medium | to | Time-dependent PDEs; memory mechanisms; gradient issues |
| Capsule Networks | Hierarchical features | | No | Good | Moderate | Medium | to | Part-whole relationships; routing algorithms; viewpoint invariance |
| Neural ODEs for PDEs | Continuous dynamics | | Limited | Good | Continuous | Slow | to | Continuous-time modeling; adaptive stepping; memory efficient |
| Quantum Neural Networks | Quantum computing | | Quantum | Limited | Quantum | Slow | to | Quantum advantage potential; NISQ limitations; exponential speedup theory |
| Meta-Learning and Few-Shot Methods | | | | | | | | |
| MAML for PDEs | Gradient-based | | Limited | Moderate | Transfer | Fast | to | Model-agnostic meta-learning; few-shot adaptation; gradient-based |
| Prototypical Networks | Prototype matching | | Limited | Limited | Metric | Fast | to | Metric learning; prototype computation; episodic training |
| Neural Process for PDEs | Stochastic processes | | Native | Good | Contextual | Fast | to | Context-target paradigm; uncertainty quantification; function-space priors |
| Hypernetworks | Parameter generation | | Limited | Good | Adaptive | Fast | to | Weight generation; task conditioning; parameter sharing |

Legend: N = problem size, E = graph edges, T = time steps, L = layers, d = dimension, k = polynomial degree, r = rank, h = hidden size, P = parameters.

Training Complexity: Typical computational cost for training phase; varies significantly with problem size and architecture.

UQ Capability: Native = built-in uncertainty quantification, Ensemble = multiple model averaging, Limited = requires extensions.

Inference Speed: Very Fast = ms, Fast = ms, Medium = 100ms-1s, Slow = s for typical problems.

Accuracy Range: Typical relative error on standard benchmarks; highly problem-dependent.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).