Submitted: 01 September 2025

Posted: 03 September 2025


Abstract

Keywords:

1. Introduction

- Introduction of the CRIE module: This module streamlines feature extraction and information integration, improving the model's ability to learn image feature representations.

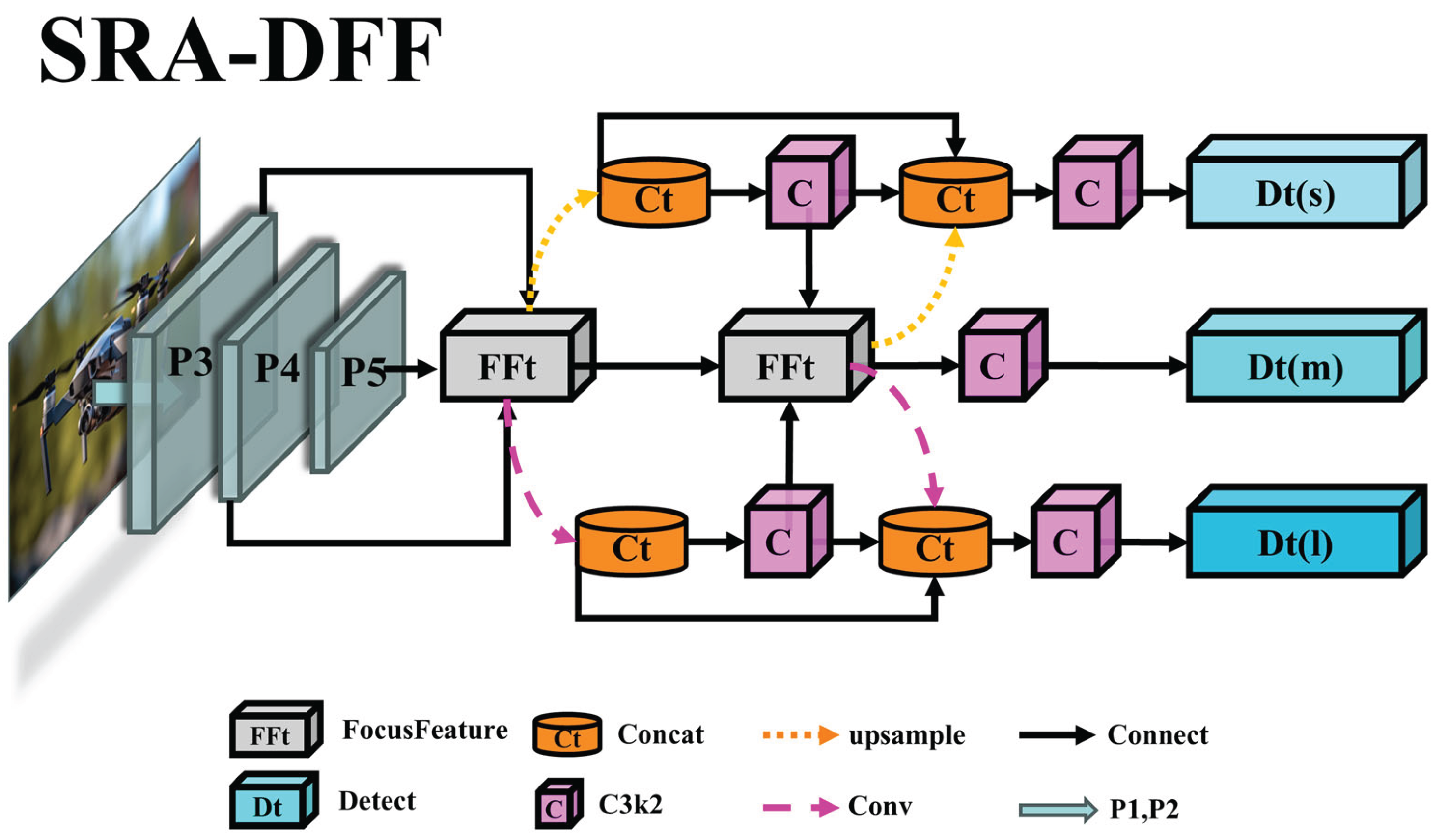

- Design of the SRA-DFF network: This architecture improves multi-level feature extraction, strengthens the semantic correlation between feature levels, and enhances cross-scale feature integration.

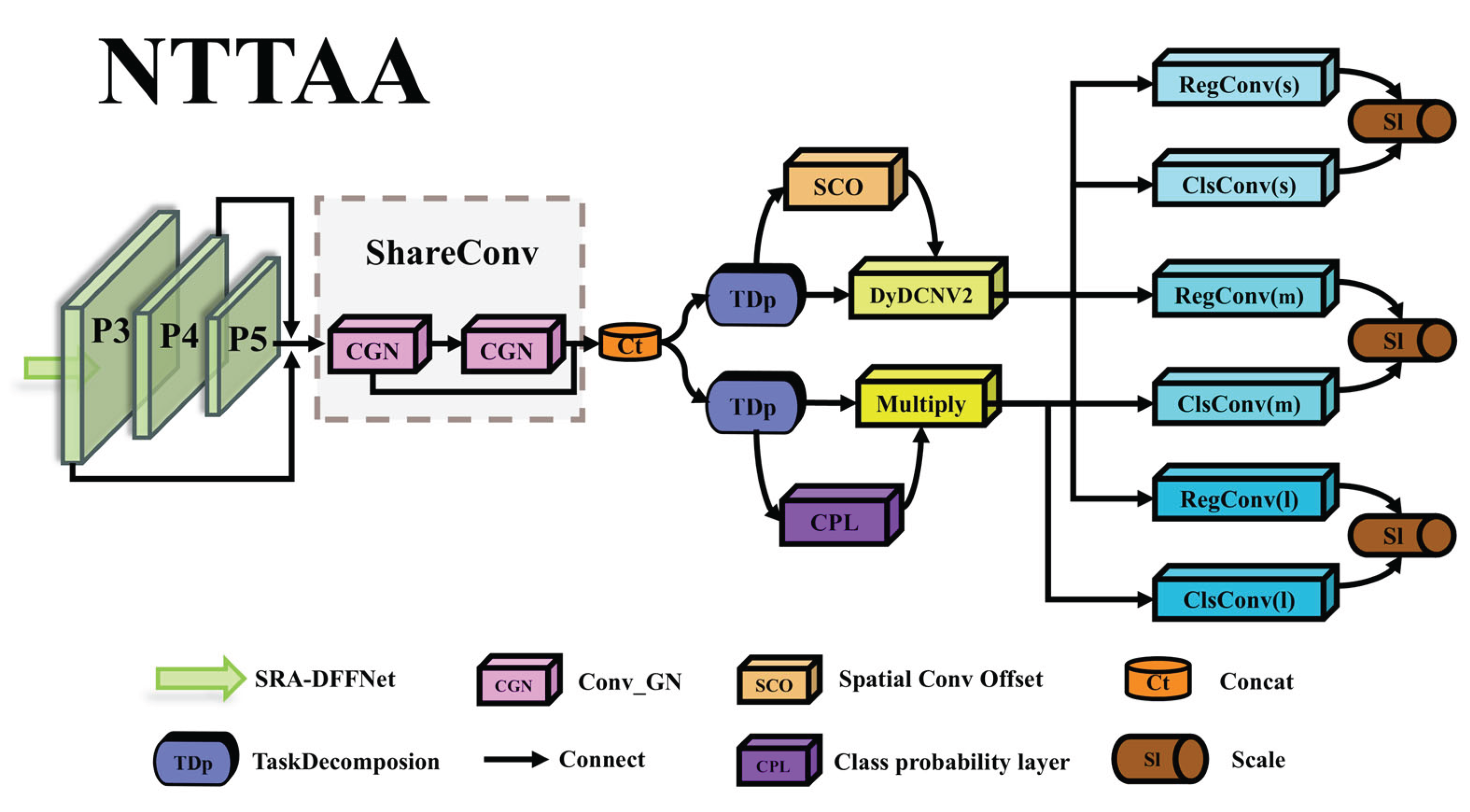

- Construction of the NTTAA detection head: This detection head addresses the spatial misalignment between the classification and localization tasks by introducing a bidirectional parallel task-alignment path that strengthens feature interaction between the two tasks.

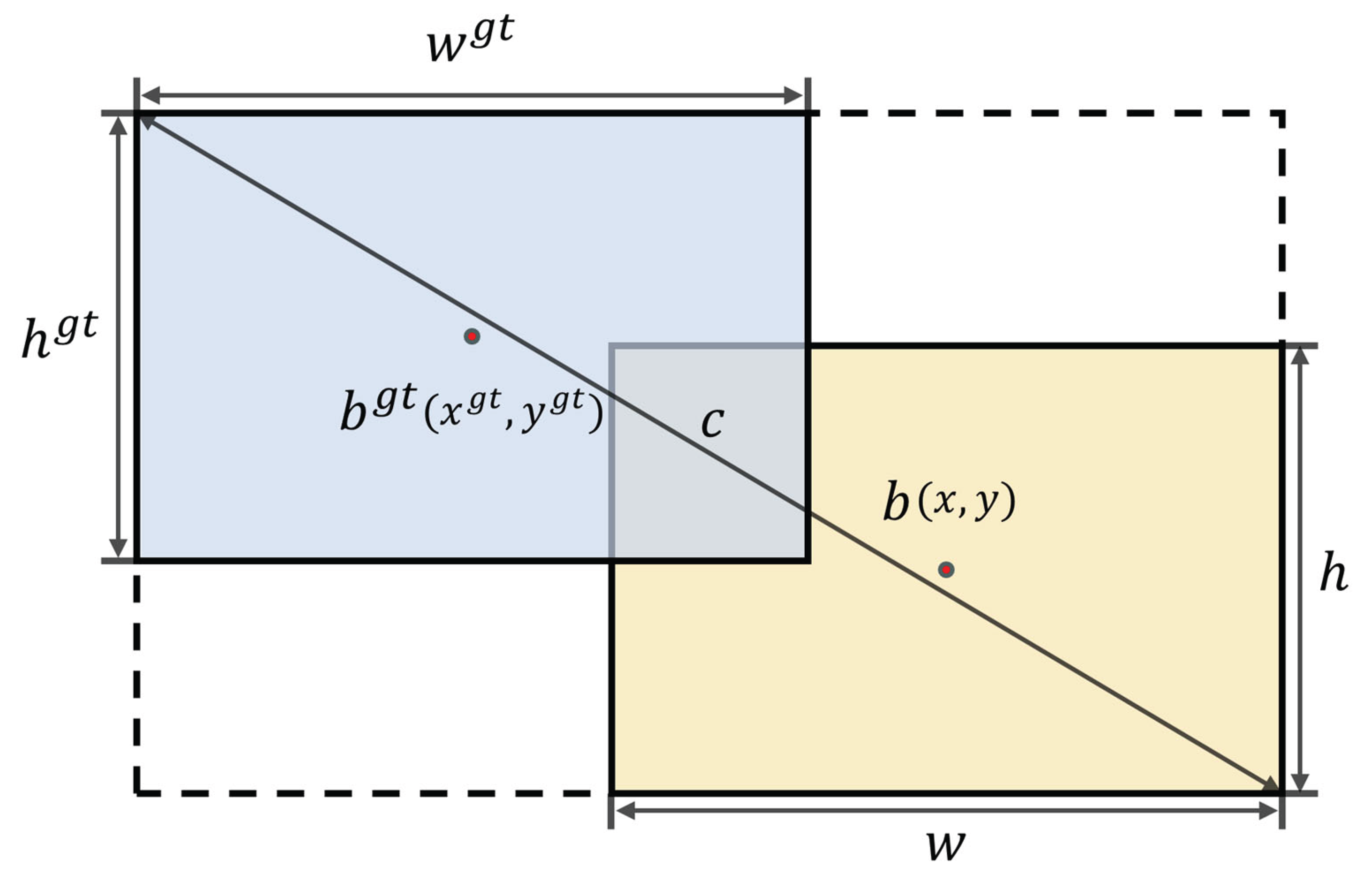

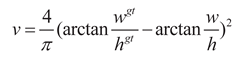

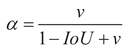

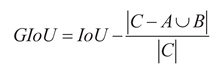

- Adoption of the GIoU loss function: In the unmanned aerial vehicle (UAV) target detection task, the CIoU loss function yields limited accuracy gains while wasting computing resources, so a GIoU loss function, which is well suited to regularly shaped small targets, is adopted instead.
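To make the GIoU term concrete, the following is a minimal sketch of the standard Generalized IoU definition for two axis-aligned boxes, with the loss taken as 1 − GIoU. The function names and the `(x1, y1, x2, y2)` box layout are illustrative assumptions, not the authors' implementation.

```python
def giou(box_a, box_b):
    """Generalized IoU of two boxes given as (x1, y1, x2, y2).

    Assumes both boxes have positive area (x2 > x1 and y2 > y1).
    """
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b

    # Intersection area (zero if the boxes do not overlap).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h

    # Union area.
    area_a = (ax2 - ax1) * (ay2 - ay1)
    area_b = (bx2 - bx1) * (by2 - by1)
    union = area_a + area_b - inter
    iou = inter / union

    # Smallest axis-aligned box enclosing both inputs.
    enclose = (max(ax2, bx2) - min(ax1, bx1)) * (max(ay2, by2) - min(ay1, by1))

    # GIoU subtracts the fraction of the enclosing box not covered by the union.
    return iou - (enclose - union) / enclose


def giou_loss(box_a, box_b):
    """GIoU loss, in the range [0, 2)."""
    return 1.0 - giou(box_a, box_b)


if __name__ == "__main__":
    print(giou_loss((0, 0, 2, 2), (1, 1, 3, 3)))
```

Unlike plain IoU, the value stays informative for non-overlapping boxes, because the enclosing-box penalty still changes as the boxes move apart.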

2. Materials and Methods

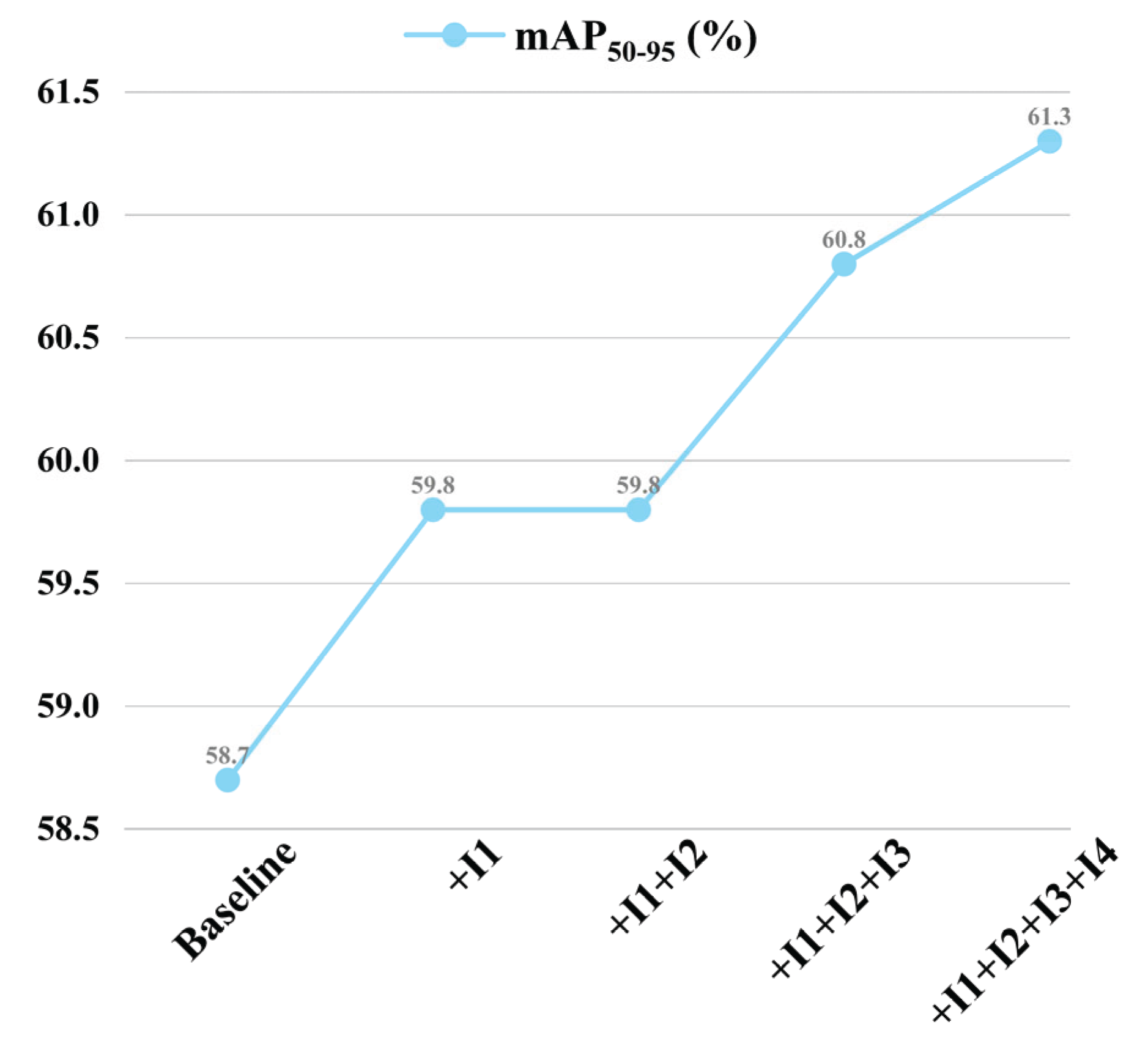

2.1. YOLO11

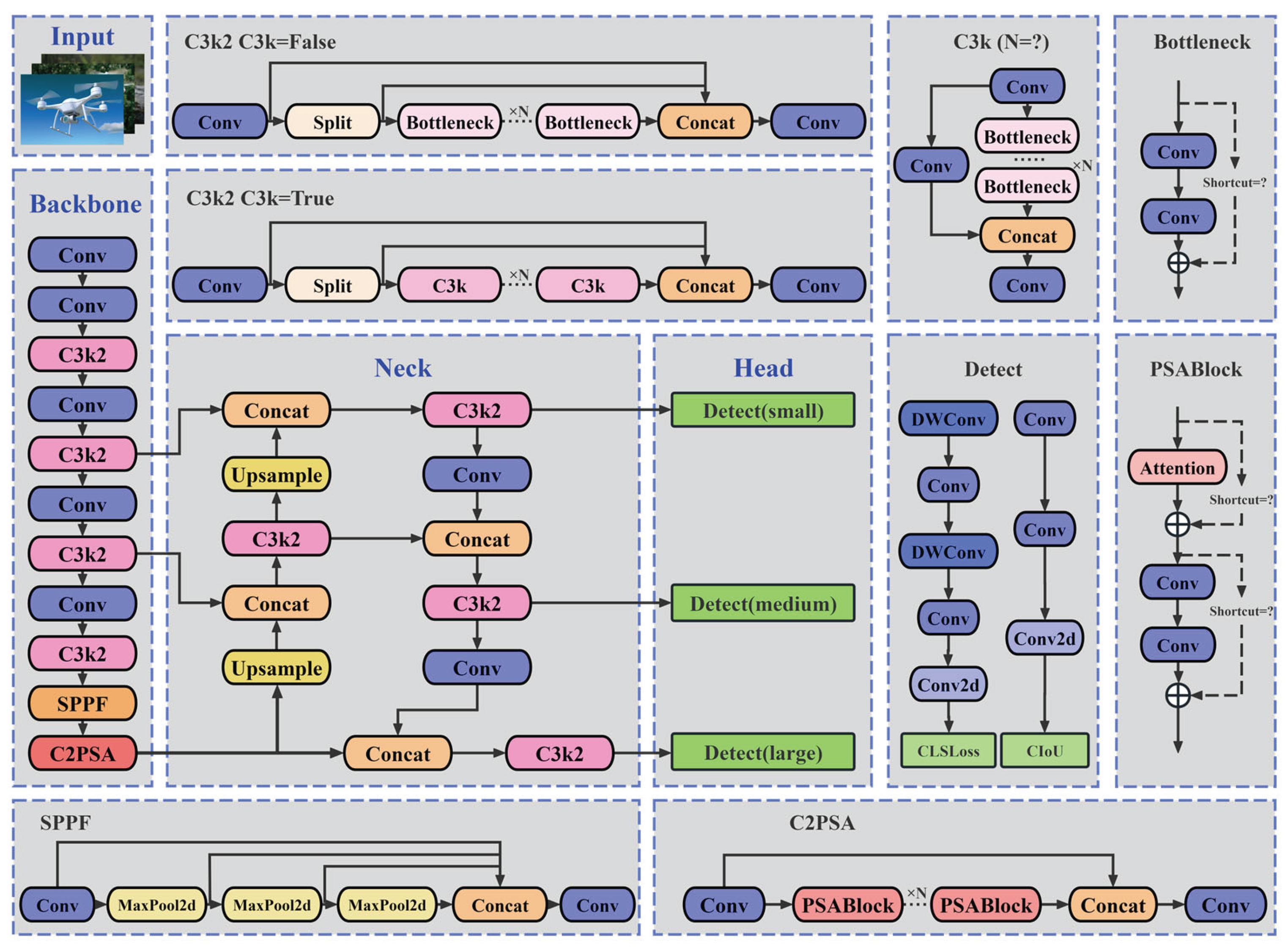

2.2. YOLO11n-SMSH

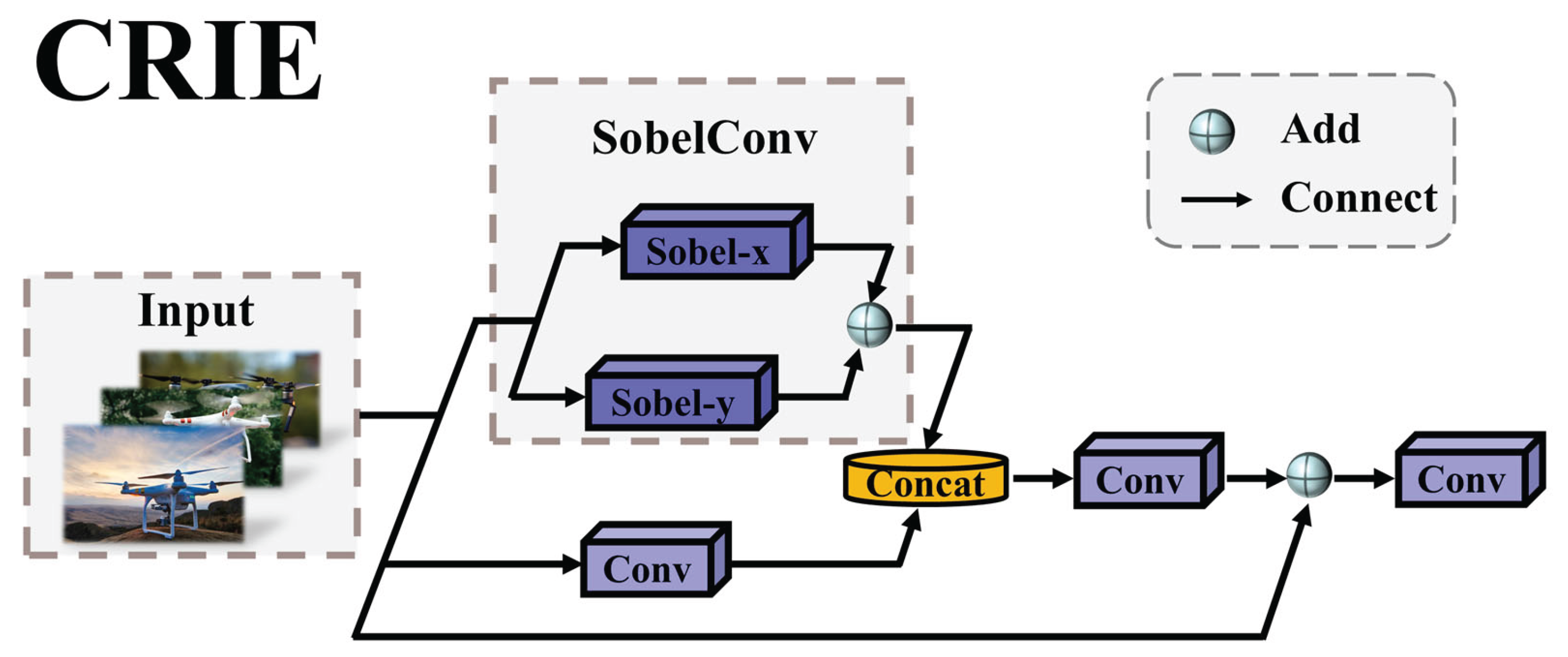

2.2.1. CRIE Module

- Deep extraction of edge features: Convolutional neural networks (CNNs) excel at extracting spatial features from images but are limited in capturing edge information. To address this, the CRIE module introduces a SobelConv branch. This branch is built on the Sobel operator [21] and extracts image edge features by identifying abrupt changes in pixel intensity.

- Efficient retention of spatial features: In target detection, edge information is crucial for precise boundary localization, while spatial information underpins the understanding of target structure. The Conv branch relies on local receptive fields and translation invariance: its convolution kernels capture texture details, corner distributions, and similar cues, and pass the relative positional relationships within the target on layer by layer, retaining the original spatial structure features.

- Fusion of dual-path features: The CRIE module concatenates (Concat) the edge features extracted by the SobelConv branch with the spatial structure features extracted by the Conv branch, producing a representation that combines boundary localization with spatial integrity. This improves the robustness and adaptability of target detection in complex scenarios and supports the subsequent detection stages.
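The dual-branch idea can be illustrated with a small, dependency-free sketch. It assumes fixed 3×3 Sobel kernels for the edge branch and uses a 3×3 box filter as a stand-in for the learned Conv branch; the function names, the absence of padding, and the box filter are all assumptions made for illustration, not the CRIE module itself.

```python
import math

# Standard 3x3 Sobel kernels for horizontal and vertical intensity changes.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]
# Stand-in for a learned convolution kernel (assumption): a simple box filter.
BOX = [[1.0 / 9.0] * 3 for _ in range(3)]


def correlate3x3(img, kernel):
    """3x3 cross-correlation without padding over a 2D list of numbers."""
    h, w = len(img), len(img[0])
    return [
        [
            sum(kernel[a][b] * img[i + a][j + b] for a in range(3) for b in range(3))
            for j in range(w - 2)
        ]
        for i in range(h - 2)
    ]


def sobel_branch(img):
    """Edge branch: gradient magnitude from the two Sobel responses."""
    gx = correlate3x3(img, SOBEL_X)
    gy = correlate3x3(img, SOBEL_Y)
    return [[math.hypot(x, y) for x, y in zip(rx, ry)] for rx, ry in zip(gx, gy)]


def conv_branch(img):
    """Spatial branch: placeholder for a learned convolution."""
    return correlate3x3(img, BOX)


def dual_branch_concat(img):
    """Channel-wise concatenation of the two branch outputs."""
    return [sobel_branch(img), conv_branch(img)]


if __name__ == "__main__":
    # A vertical step edge: dark on the left, bright on the right.
    image = [[0, 0, 0, 1, 1, 1] for _ in range(5)]
    edges, smooth = dual_branch_concat(image)
    print(edges[0])
```

On the step-edge image, the edge channel responds only at the columns where the intensity jumps, while the smoothed channel keeps the overall layout of the bright and dark regions.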

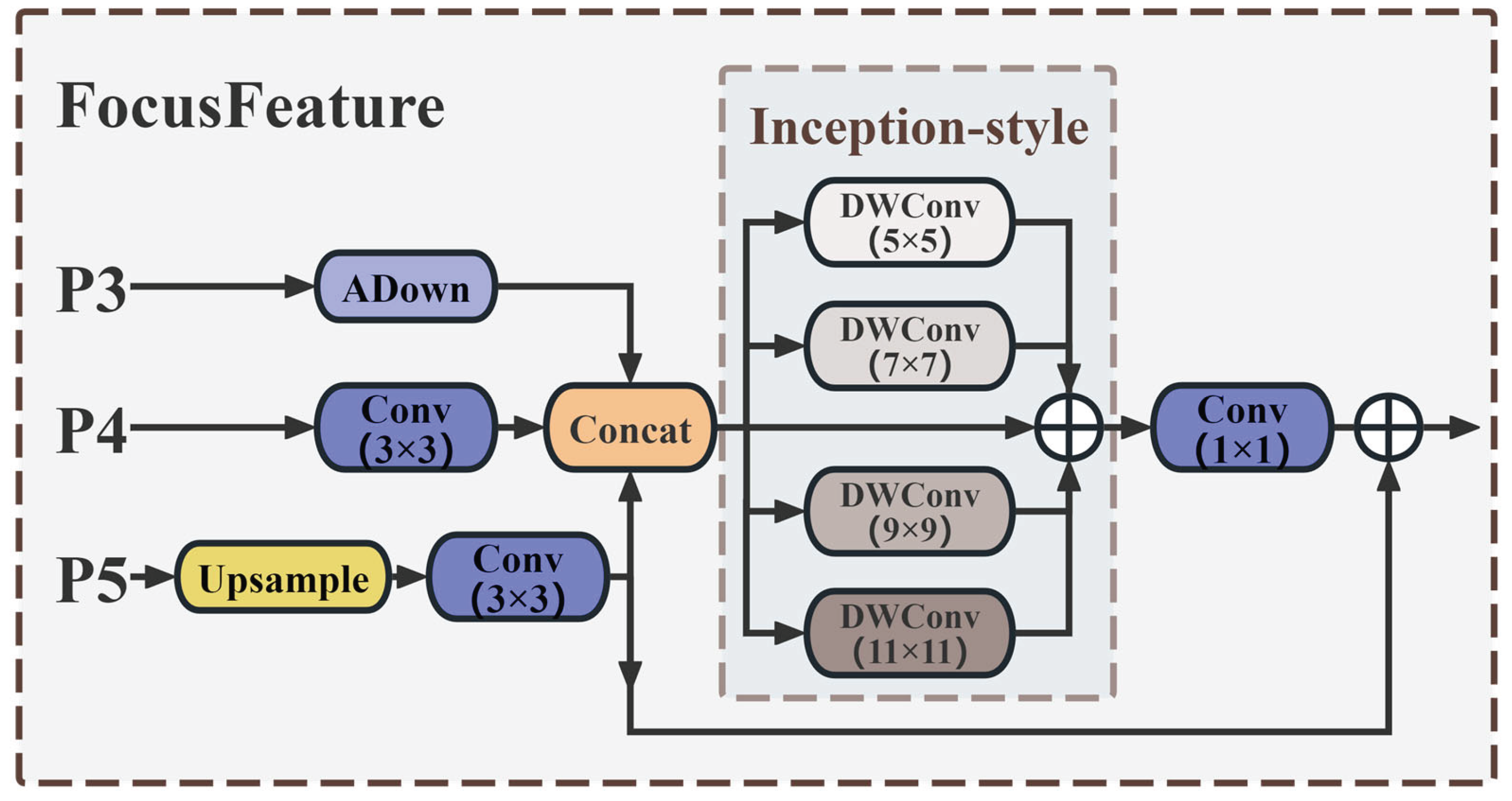

2.2.2. SRA-DFF Network

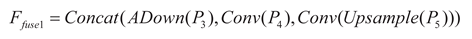

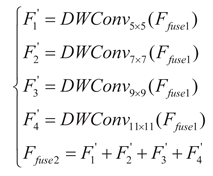

- Feature Integration Path

- FFt Module

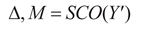

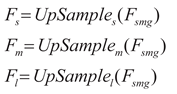

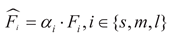

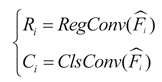

2.2.3. NTTAA Detection Head

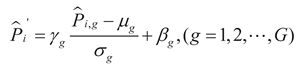

In the group normalization formula, the two statistics represent the mean and standard deviation of the features in group g, respectively, and G represents the number of groups along the channel dimension.

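The per-group statistics described here can be sketched as follows. This is a minimal illustration of standard Group Normalization without the learnable scale and shift; the flat-list feature layout, the function name, and the `eps` value are assumptions.

```python
import math


def group_norm(x, num_groups, eps=1e-5):
    """Normalize C channels in G groups.

    x is a list of C channels, each a flat list of spatial values.
    Every group of C // G channels shares one mean and one standard deviation.
    """
    channels = len(x)
    if channels % num_groups != 0:
        raise ValueError("number of channels must be divisible by num_groups")
    per_group = channels // num_groups

    out = []
    for g in range(num_groups):
        group = x[g * per_group:(g + 1) * per_group]
        values = [v for ch in group for v in ch]
        mean = sum(values) / len(values)
        var = sum((v - mean) ** 2 for v in values) / len(values)
        std = math.sqrt(var + eps)  # eps guards against division by zero
        for ch in group:
            out.append([(v - mean) / std for v in ch])
    return out


if __name__ == "__main__":
    features = [[1.0, 2.0], [3.0, 4.0], [10.0, 20.0], [30.0, 40.0]]
    print(group_norm(features, num_groups=2))
```

Because the statistics are computed per sample and per group, the result does not depend on the batch size, which is the usual motivation for using group normalization with small batches.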

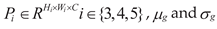

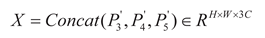

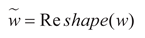

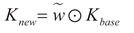

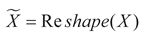

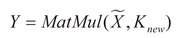

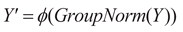

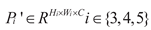

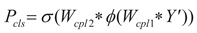

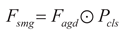

Here, X represents the concatenated feature; Wconv1 and Wconv2 represent the weights of the two convolutional layers in TDp; the other two symbols in the formula represent, respectively, the adaptively adjusted weight vector and the concatenated feature; Kbase represents the initial convolution kernel of the built-in Conv_GN1 module in TDp; and σ(·) and ϕ(·) represent the Sigmoid function and the ReLU function, respectively. The classification and localization tasks can thus each obtain a feature representation Y′ with its own characteristics, thereby achieving task decoupling.

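Since the formula itself is only available as a figure, the sketch below shows one plausible reading of the symbols defined above, following the layer-attention form of TOOD: a weight vector is computed from the pooled feature through two layers with ReLU and Sigmoid, and it rescales the base kernel before the kernel is applied to X. The pooling step, the 1×1 kernel shape, and all function names are assumptions rather than the authors' exact formulation.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def relu(v):
    return max(0.0, v)


def matvec(weights, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]


def task_decompose(x, w_conv1, w_conv2, k_base):
    """Assumed TOOD-style task decomposition.

    x       : C channels, each a flat list of spatial values
    w_conv1 : hidden x C matrix (first layer, followed by ReLU)
    w_conv2 : C x hidden matrix (second layer, followed by Sigmoid)
    k_base  : out x C matrix acting as a 1x1 convolution kernel
    """
    pooled = [sum(ch) / len(ch) for ch in x]             # global average pooling
    hidden = [relu(v) for v in matvec(w_conv1, pooled)]  # phi(Wconv1 * pooled)
    weights = [sigmoid(v) for v in matvec(w_conv2, hidden)]  # sigma(Wconv2 * ...)

    # Rescale each input channel of the base kernel by its attention weight.
    k_dyn = [[k * w for k, w in zip(row, weights)] for row in k_base]

    # Apply the adjusted 1x1 kernel at every spatial position.
    positions = len(x[0])
    y = [
        [sum(row[c] * x[c][p] for c in range(len(x))) for p in range(positions)]
        for row in k_dyn
    ]
    return y, weights


if __name__ == "__main__":
    x = [[1.0, 2.0], [3.0, 4.0]]
    y, w = task_decompose(x, [[0.0, 0.0]], [[0.0], [0.0]], [[1.0, 1.0]])
    print(y, w)
```

Running the same function twice with separate weights, once for classification and once for localization, would give each task its own Y′ from the shared feature X.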

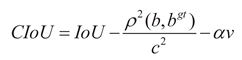

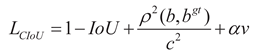

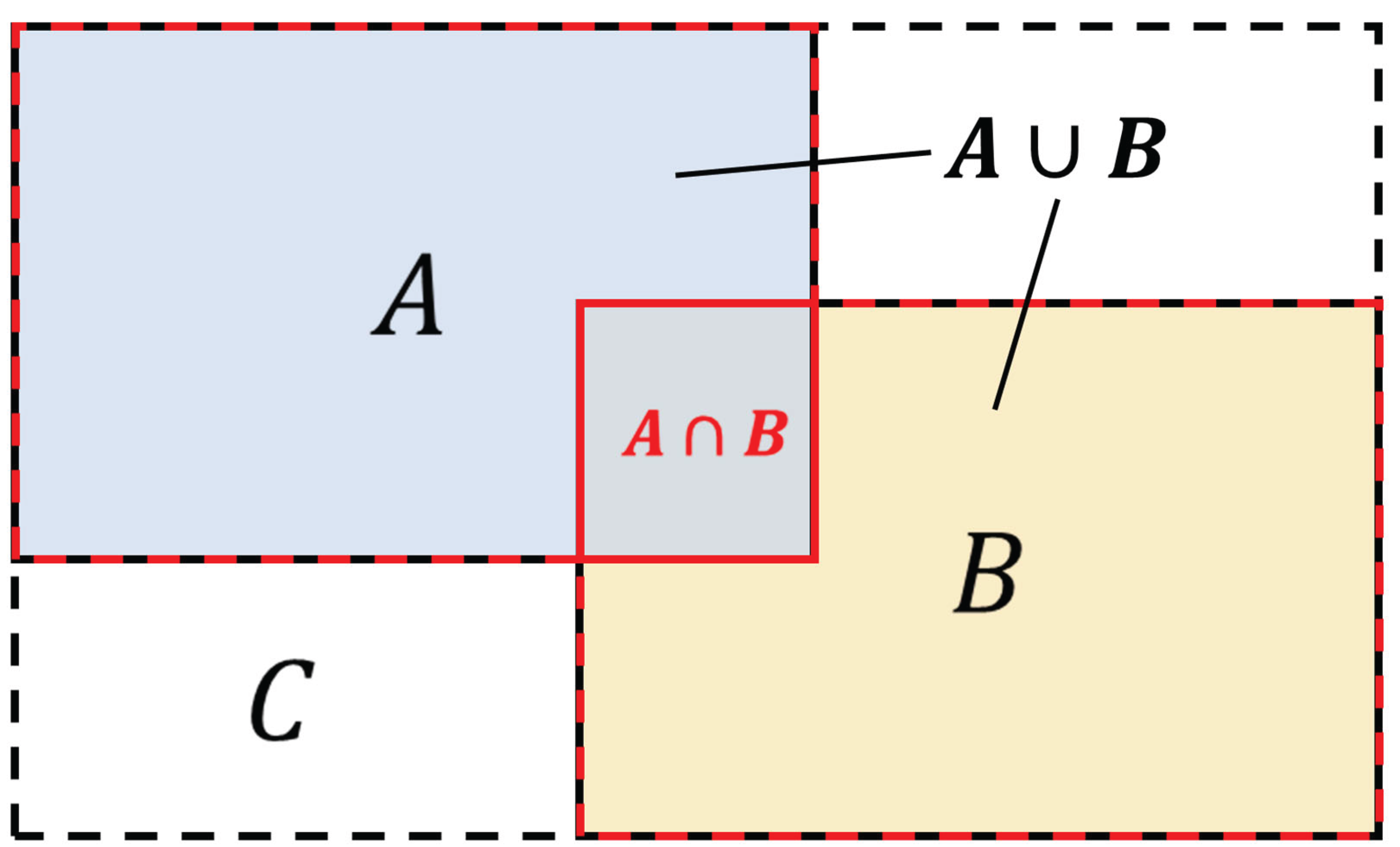

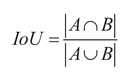

2.2.4. GIoU Loss Function

2.3. Chapter Summary

3. Results

3.1. Experimental Environment and Parameter Configuration

| Parameters | Setup |
|---|---|
| Optimizer | SGD |
| Batch_Size | 16 |
| Epochs | 200 |
| Image_Size | 640 |
| Learning rate | 0.01 |
| Amp | True |
| Workers | 2 |
| Momentum | 0.937 |
| Weight-Decay | 0.0005 |
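For readers who want to reproduce this setup, the table can be written as training arguments. The sketch below assumes the Ultralytics training interface and maps each row to the corresponding argument name (for example, "Learning rate" to `lr0`); the mapping and the dataset file name in the comment are assumptions, since the paper does not list the exact command.

```python
# Table values expressed with Ultralytics-style argument names (assumed mapping).
TRAIN_ARGS = {
    "optimizer": "SGD",
    "batch": 16,
    "epochs": 200,
    "imgsz": 640,
    "lr0": 0.01,            # initial learning rate
    "amp": True,            # automatic mixed precision
    "workers": 2,           # data-loading worker processes
    "momentum": 0.937,
    "weight_decay": 0.0005,
}


def to_cli(args):
    """Render the arguments in the `key=value` form used by the `yolo` CLI."""
    return "yolo detect train " + " ".join(f"{k}={v}" for k, v in args.items())


if __name__ == "__main__":
    print(to_cli(TRAIN_ARGS))
    # With the ultralytics package installed, the same settings could be passed as:
    #   from ultralytics import YOLO
    #   YOLO("yolo11n.yaml").train(data="your_dataset.yaml", **TRAIN_ARGS)
    # "your_dataset.yaml" is a hypothetical placeholder.
```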

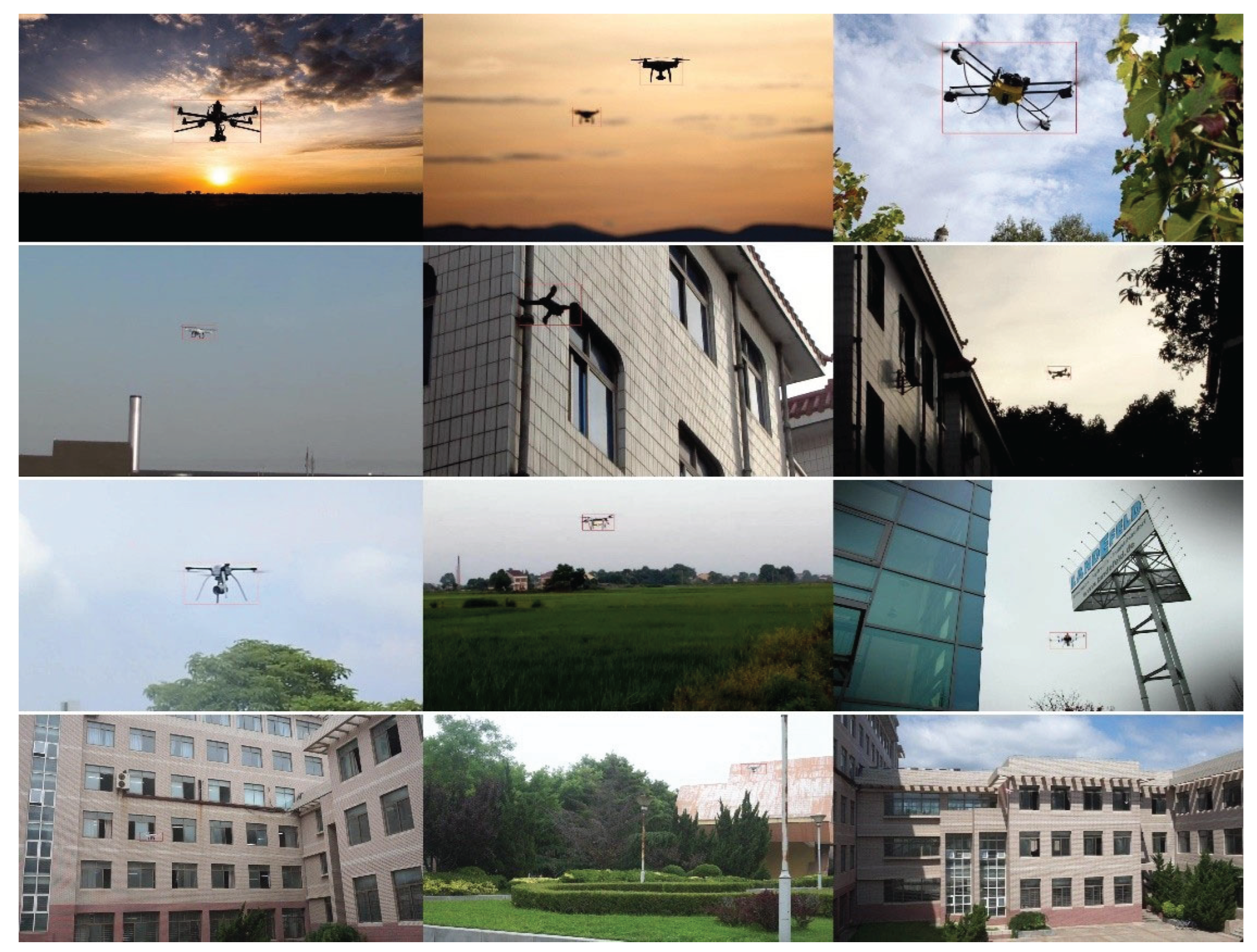

3.2. Dataset

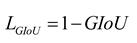

3.3. Evaluation Metrics

3.4. Comparison and Ablation Experiments

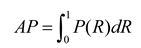

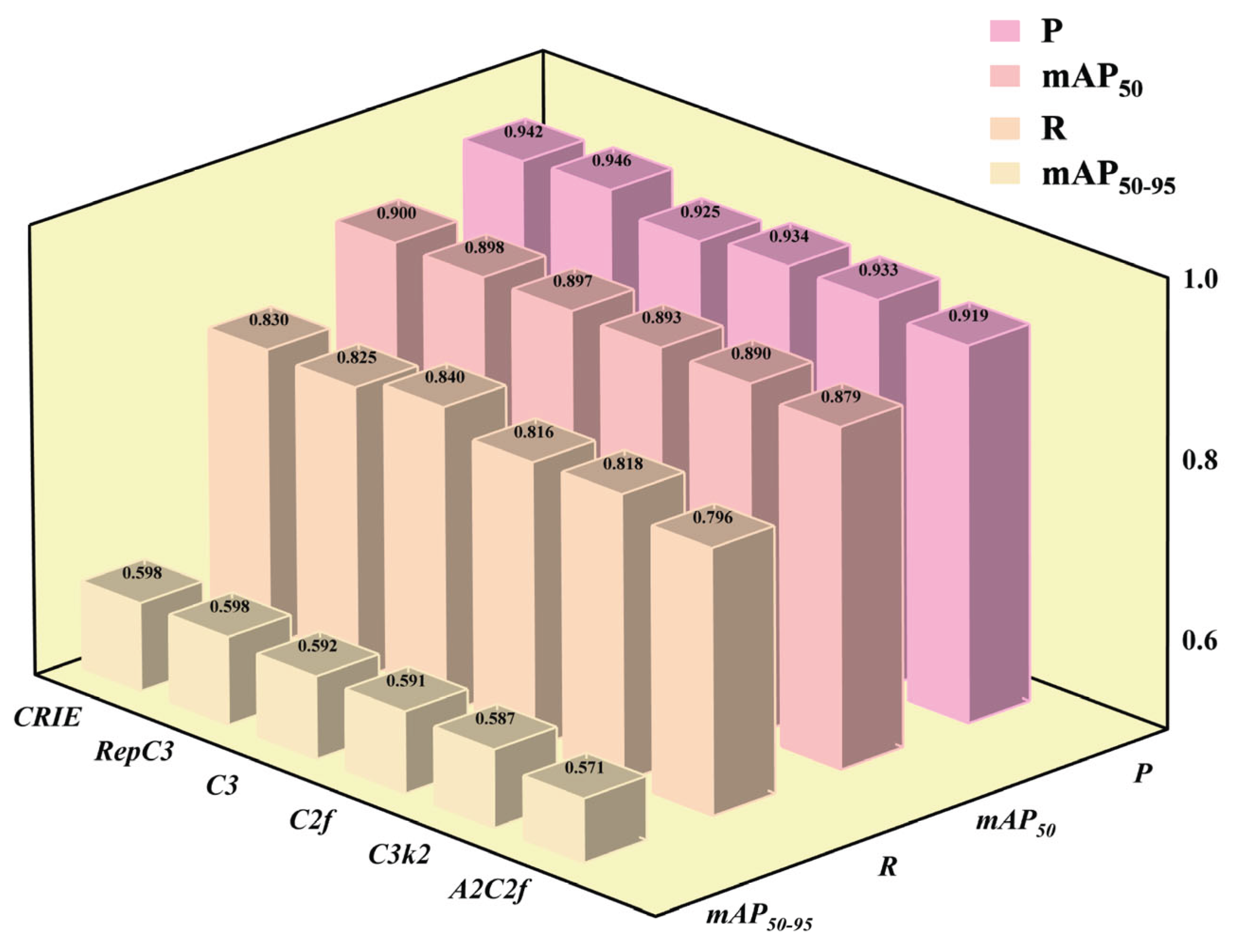

3.4.1. Comparison Effect of Different Block Modules

| Block | P | R | mAP50 | mAP50-95 | GFLOPs | Params/10^6 |
|---|---|---|---|---|---|---|
| CRIE | 0.942 | 0.83 | 0.9 | 0.598 | 6.4 | 2.57 |
| RepC3 | 0.946 | 0.825 | 0.898 | 0.598 | 6.9 | 2.98 |
| C3 | 0.925 | 0.84 | 0.897 | 0.592 | 5.5 | 2.42 |
| C2f | 0.934 | 0.816 | 0.893 | 0.591 | 6.1 | 2.63 |
| C3k2 | 0.933 | 0.818 | 0.89 | 0.587 | 6.3 | 2.58 |
| A2C2f | 0.919 | 0.796 | 0.879 | 0.571 | 6.4 | 2.97 |

3.4.2. Comparison Effect of CRIE at Different Replacement Positions

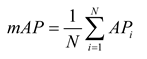

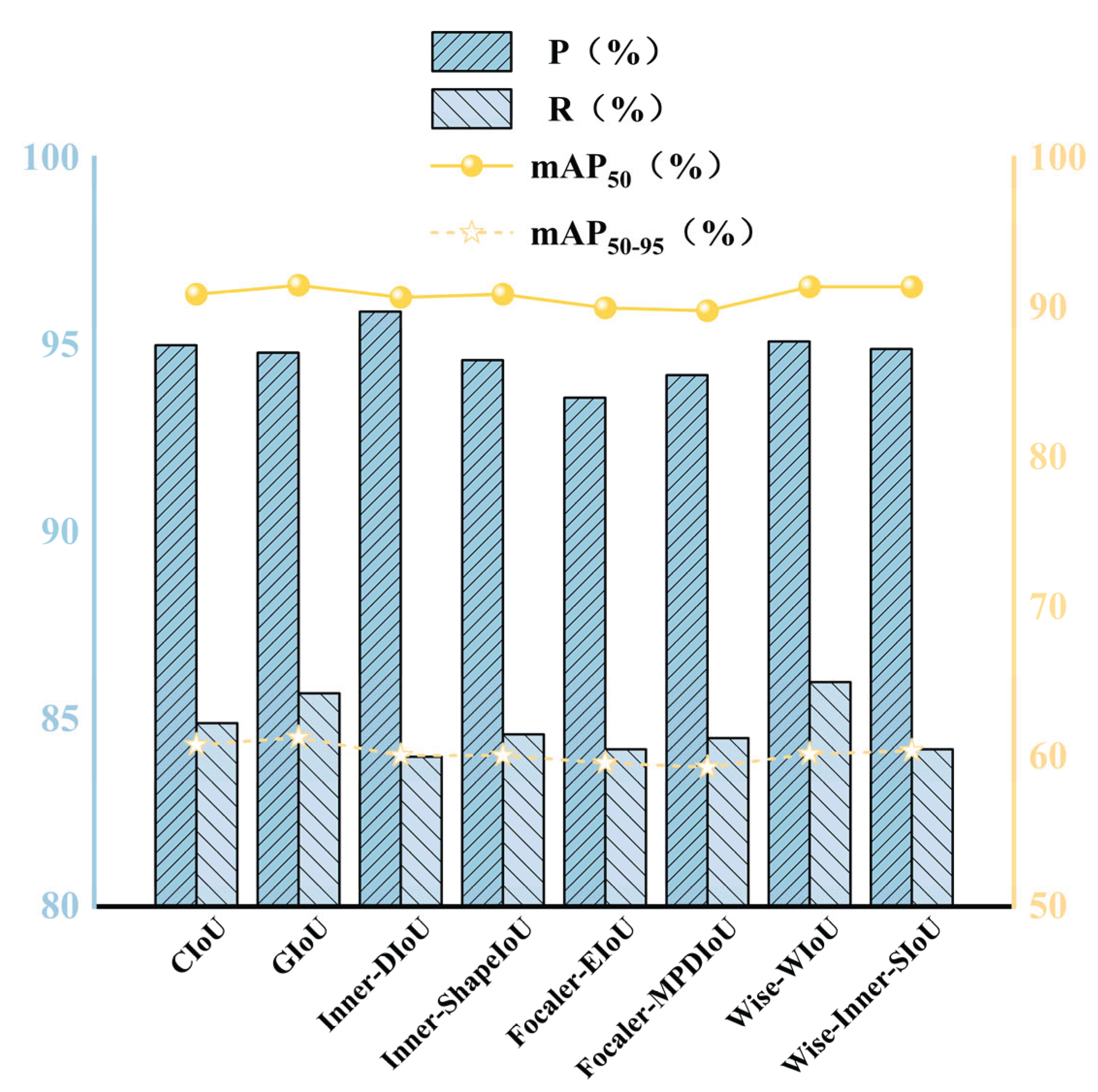

3.4.3. Comparison Effect of Different Loss Functions

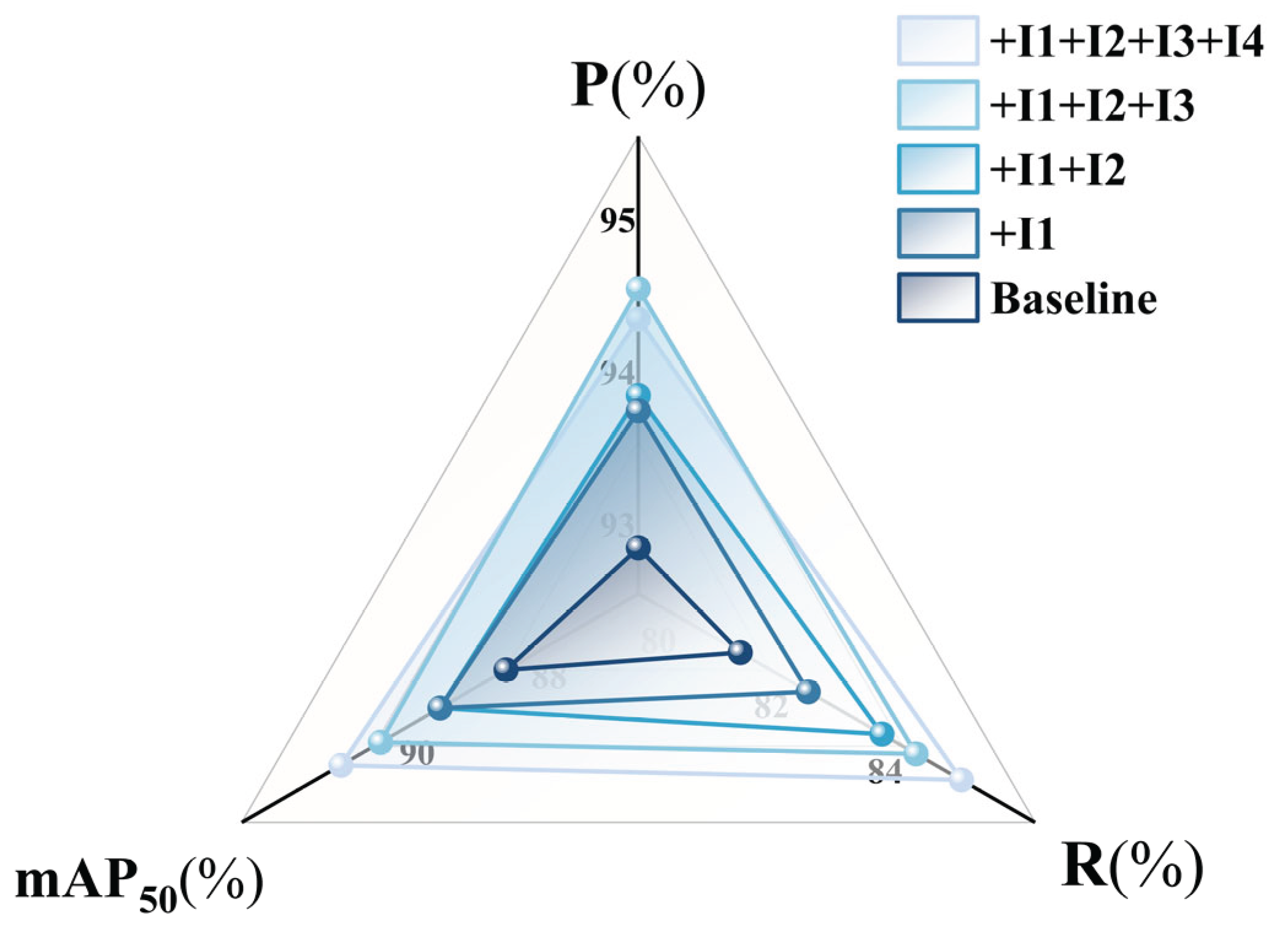

3.4.4. Ablation Experiment

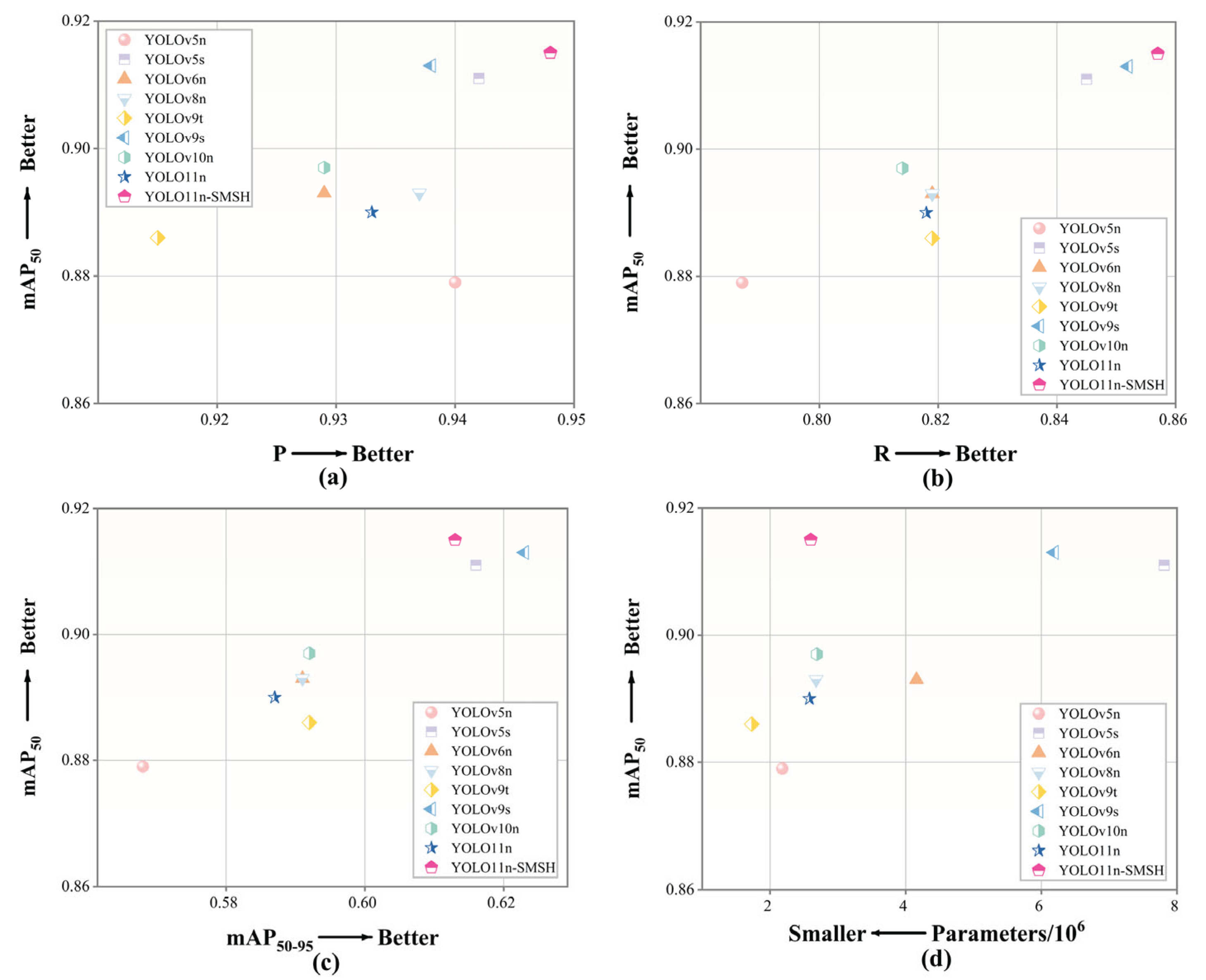

3.4.5. Comparison of Classic Models in the YOLO Series

3.4.6. Model Generalization Verification

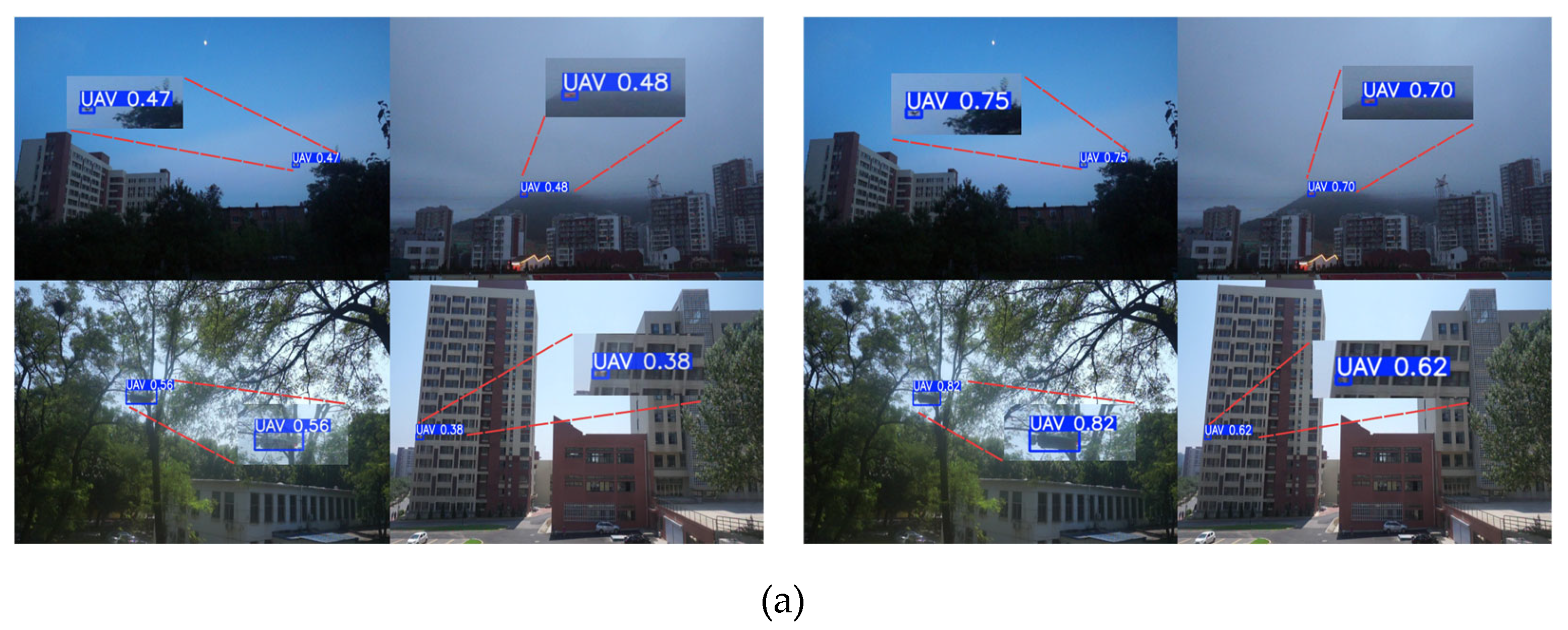

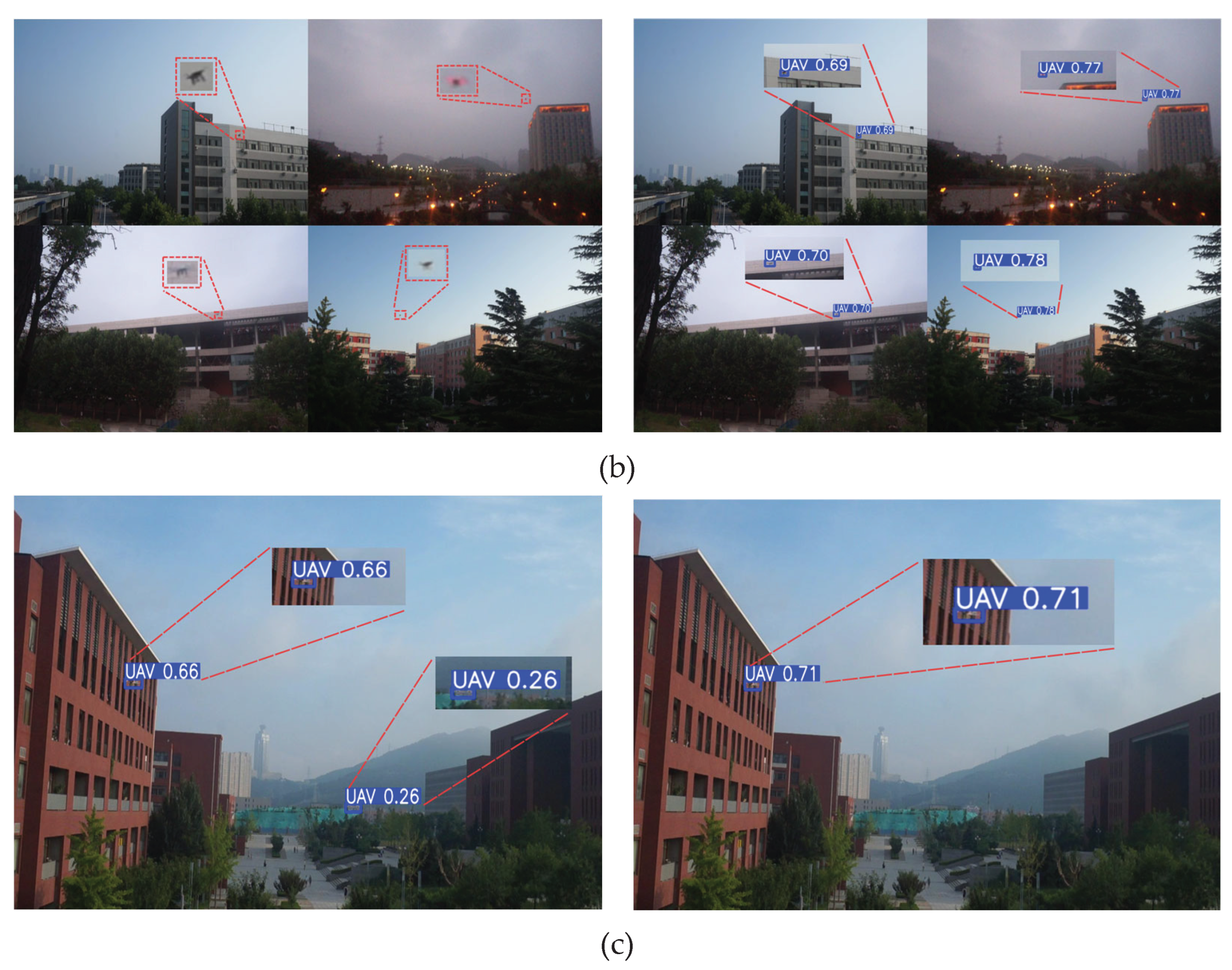

3.5. Visualization of Detection Results

4. Discussion

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Ma Wen, Chi Gan Xiaoxuan. Research on the Development of Anti-UAV Technology [J]. Aviation Weaponry, 2020, 27(06): 19-24.

2. Mei Tao. Research on the Optical Real-time Precise Detection and Tracking System for Urban Low-altitude Anti-UAV [D]. Central South University, 2023.

3. Sun Yibo. Research on Anti-UAV Detection and Tracking Algorithm Based on YOLOv8 [D]. Xidian University, 2024.

4. Analysis of the Application of Anti-UAV Detection and Countermeasures in Radio Spectrum Technology [J]. China Security, 2024, (03): 33-38.

5. He J, Liu M, Yu C. UAV reaction detection based on multi-scale feature fusion [C]// 2022 International Conference on Image Processing, Computer Vision and Machine Learning (ICICML). IEEE, 2022: 640-643.

6. Tan Liang, Zhao Liangjun, Zheng Liping, et al. Research on Anti-UAV Target Detection Algorithm Based on YOLOv5s-AntiUAV [J]. Optoelectronics and Control, 2024, 31(05): 40-45 + 107.

7. Wang X, Zhou C, Xie J, et al. Drone detection with visual transformer [C]// International Conference on Autonomous Unmanned Systems. Springer, 2021: 2689-2699.

8. AlDosari K, Osman A I, Elharrouss O, et al. Drone-type-Set: A benchmark for detecting and tracking drone types using drone models [J]. arXiv preprint arXiv:2405.10398, 2024.

9. Yasmine G, Maha G, Hicham M. Anti-drone systems: An attention based improved YOLOv7 model for a real-time detection and identification of multi-airborne target [J]. Intelligent Systems with Applications, 2023, 20: 200296.

10. Jiao Lihaohao, Cheng Huanxin. YOLO-DAP: An Improved Anti-UAV Target Detection Algorithm Based on YOLOv8 [J]. Opto-Electronic Engineering and Control, 2025, 32(06): 38-43 + 55.

11. Jocher, G.; Chaurasia, A.; Qiu, J. YOLO by Ultralytics. 2023. Available online: https://github.com/ultralytics/ultralytics/blob/main/CITATION.cff (accessed on 30 June 2023).

12. J. Zhao, J. Zhang, D. Li and D. Wang, "Vision-Based Anti-UAV Detection and Tracking," in IEEE Transactions on Intelligent Transportation Systems, vol. 23, no. 12, pp. 25323-25334, Dec. 2022.

13. Y. Zheng, Z. Chen, D. Lv, Z. Li, Z. Lan and S. Zhao, "Air-to-Air Visual Detection of Micro-UAVs: An Experimental Evaluation of Deep Learning," in IEEE Robotics and Automation Letters, vol. 6, no. 2, pp. 1020-1027, Apr. 2021.

14. Wu Ge, Zhu Yufan, Jia Zhening. An Improved PCB Surface Defect Detection Method Based on YOLO11 [J/OL]. Electronic Measurement Technology, 1-11 [2025-06-06].

15. Wang, A.; Chen, H.; Liu, L.; Chen, K.; Lin, Z.; Han, J.; Ding, G. YOLOv10: Real-Time End-to-End Object Detection. arXiv 2024, arXiv:2405.14458.

16. Jocher, G.; Stoken, A.; Borovec, J.; Chaurasia, A.; Changyu, L.; Hogan, A.; Hajek, J.; Diaconu, L.; Kwon, Y.; Defretin, Y. ultralytics/yolov5: v5.0 - YOLOv5-P6 1280 models, AWS, Supervise.ly and YouTube integrations. Zenodo, 2021. Available online: https://github.com/ultralytics/yolov5 (accessed on 18 May 2020).

17. Zhao, H. et al. (2018). PSANet: Point-wise Spatial Attention Network for Scene Parsing. In: Ferrari, V., Hebert, M., Sminchisescu, C., Weiss, Y. (eds) Computer Vision – ECCV 2018. ECCV 2018. Lecture Notes in Computer Science, vol 11213. Springer, Cham.

18. Chollet, F., "Xception: Deep Learning with Depthwise Separable Convolutions", arXiv e-prints, Art. no. arXiv:1610.02357, 2016.

19. Lin T Y, Maire M, Belongie S, et al. Microsoft COCO: Common objects in context [C]// 13th European Conference on Computer Vision, 2014: 740-755.

20. Zhao, Yian, Lv, et al. DETRs Beat YOLOs on Real-time Object Detection [J]. arXiv, 2023.

21. Vincent O R, Folorunso O. A descriptive algorithm for Sobel image edge detection [C]// Proceedings of the Informing Science & IT Education Conference. Macon: Informing Science Institute, 2009: 97-107.

22. Tan, M., Pang, R., and Le, Q. V., "EfficientDet: Scalable and Efficient Object Detection", arXiv e-prints, Art. no. arXiv:1911.09070, 2019.

23. Cai, X., Lai, Q., Wang, Y., Wang, W., Sun, Z., and Yao, Y., "Poly Kernel Inception Network for Remote Sensing Detection", arXiv e-prints, Art. no. arXiv:2403.06258, 2024.

24. Wang, C.-Y., Yeh, I.-H., and Liao, H.-Y. M., "YOLOv9: Learning What You Want to Learn Using Programmable Gradient Information", arXiv e-prints, Art. no. arXiv:2402.13616, 2024.

25. He, K., Zhang, X., Ren, S., and Sun, J., "Deep Residual Learning for Image Recognition", in 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2016, Art. no. 1.

26. Liu S, Huang D, Wang Y. Learning spatial fusion for single-shot object detection [J]. arXiv:1911.09516, 2019.

27. Zhang Shuai, Wang Baotao, Tu Jiayi, et al. SCE-YOLO: An Improved Lightweight UAV Vision Detection Algorithm [J/OL]. Computer Engineering and Applications, 1-14 [2025-06-24].

28. Feng, C., Zhong, Y., Gao, Y., Scott, M. R., and Huang, W., "TOOD: Task-aligned One-stage Object Detection", arXiv e-prints, Art. no. arXiv:2108.07755, 2021.

29. Wu, Y. and He, K., "Group Normalization", arXiv e-prints, Art. no. arXiv:1803.08494, 2018.

30. Zheng Z H, Wang P, Liu W, et al. Distance-IoU loss: Faster and Better Learning for Bounding Box Regression [C]// Proceedings of the AAAI Conference on Artificial Intelligence. New York: AAAI, 2020: 12993-13000.

31. Rezatofighi H, Hamid T, Nathan G, et al. Generalized Intersection over Union: A Metric and a Loss for Bounding Box Regression [C]// Proceedings of the IEEE International Conference on Computer Vision. Long Beach: IEEE, 2019: 658-666.

32. Fang Zhengbo, Gao Xiangyang, Zhang Xieshi, et al. Underwater Object Detection Model Based on Improved YOLO11 [J/OL]. Electronic Measurement Technology, 1-10 [2025-05-15].

33. Xia Shufang, Yin Haonan, Qu Zhong. ETF-YOLO11n: Multi-scale Feature Fusion Object Detection Method for Traffic Images [J/OL]. Computer Science, 1-10 [2025-05-15].

34. Zhang Zemin, Meng Xiangyin, Wang Zizhou, et al. YOLO11-LG: Glassware Detection Combining Boundary Enhancement Method [J]. Computer Engineering and Applications, 2025.

35. Li Bin, Li Shenglin. Improved YOLOv11n Algorithm for Small Object Detection in Unmanned Aerial Vehicles [J]. Computer Engineering and Applications, 2025, 61.

36. Tian, Y., Ye, Q., and Doermann, D., "YOLOv12: Attention-Centric Real-Time Object Detectors", arXiv e-prints, Art. no. arXiv:2502.12524, 2025.

37. Zhao, Y., "DETRs Beat YOLOs on Real-time Object Detection", arXiv e-prints, Art. no. arXiv:2304.08069, 2023.

38. Zhang, H., Xu, C., and Zhang, S., "Inner-IoU: More Effective Intersection over Union Loss with Auxiliary Bounding Box", arXiv e-prints, Art. no. arXiv:2311.02877, 2023.

39. Zhang, H. and Zhang, S., "Shape-IoU: More Accurate Metric considering Bounding Box Shape and Scale", arXiv e-prints, Art. no. arXiv:2312.17663, 2023.

40. Zhang, H. and Zhang, S., "Focaler-IoU: More Focused Intersection over Union Loss", arXiv e-prints, Art. no. arXiv:2401.10525, 2024.

41. Ma, S. and Xu, Y., "MPDIoU: A Loss for Efficient and Accurate Bounding Box Regression", arXiv e-prints, Art. no. arXiv:2307.07662, 2023.

42. Tong, Z., Chen, Y., Xu, Z., and Yu, R., "Wise-IoU: Bounding Box Regression Loss with Dynamic Focusing Mechanism", arXiv e-prints, Art. no. arXiv:2301.10051, 2023.

43. Gevorgyan, Z., "SIoU Loss: More Powerful Learning for Bounding Box Regression", arXiv e-prints, Art. no. arXiv:2205.12740, 2022.

44. Zhang, Y., "Deeper Insights into Weight Sharing in Neural Architecture Search", arXiv e-prints, Art. no. arXiv:2001.01431, 2020.

(a)

| Model | Figure | A | B | C | D | P | R | mAP50 | mAP50-95 |
|---|---|---|---|---|---|---|---|---|---|
| YOLO11n | 1 |  |  |  |  | 0.946 | 0.829 | 0.905 | 0.612 |
| YOLO11n | 2 |  |  |  |  | 0.942 | 0.827 | 0.901 | 0.601 |
| YOLO11n | 3 |  |  |  |  | 0.942 | 0.83 | 0.9 | 0.598 |
| YOLO11n | 4 |  |  |  |  | 0.948 | 0.812 | 0.898 | 0.598 |
| YOLO11n | 5 |  |  |  |  | 0.924 | 0.84 | 0.899 | 0.595 |
| YOLO11n | 6 |  |  |  |  | 0.945 | 0.809 | 0.894 | 0.596 |
| YOLO11n | 7 |  |  |  |  | 0.939 | 0.821 | 0.897 | 0.595 |
| YOLO11n | 8 |  |  |  |  | 0.92 | 0.829 | 0.892 | 0.595 |
| YOLO11n | 9 |  |  |  |  | 0.931 | 0.819 | 0.896 | 0.594 |
| YOLO11n | 10 |  |  |  |  | 0.931 | 0.825 | 0.894 | 0.593 |
| YOLO11n | 11 |  |  |  |  | 0.925 | 0.821 | 0.895 | 0.592 |
| YOLO11n | 12 |  |  |  |  | 0.911 | 0.838 | 0.895 | 0.589 |
| YOLO11n | 13 |  |  |  |  | 0.934 | 0.83 | 0.893 | 0.585 |
| YOLO11n | 14 |  |  |  |  | 0.93 | 0.813 | 0.887 | 0.584 |
| YOLO11n | 15 |  |  |  |  | 0.91 | 0.829 | 0.891 | 0.581 |

(b)

| Model | Figure | A | B | C | D | P | R | mAP50 | mAP50-95 |
|---|---|---|---|---|---|---|---|---|---|
| YOLO11n+SRA-DFF | 1 |  |  |  |  | 0.935 | 0.844 | 0.91 | 0.598 |
| YOLO11n+SRA-DFF | 2 |  |  |  |  | 0.943 | 0.843 | 0.9 | 0.598 |
| YOLO11n+SRA-DFF | 3 |  |  |  |  | 0.94 | 0.833 | 0.903 | 0.596 |
| YOLO11n+SRA-DFF | 4 |  |  |  |  | 0.949 | 0.814 | 0.898 | 0.597 |
| YOLO11n+SRA-DFF | 5 |  |  |  |  | 0.924 | 0.851 | 0.906 | 0.595 |
| YOLO11n+SRA-DFF | 6 |  |  |  |  | 0.936 | 0.829 | 0.901 | 0.596 |
| YOLO11n+SRA-DFF | 7 |  |  |  |  | 0.949 | 0.821 | 0.902 | 0.595 |
| YOLO11n+SRA-DFF | 8 |  |  |  |  | 0.931 | 0.834 | 0.897 | 0.595 |
| YOLO11n+SRA-DFF | 9 |  |  |  |  | 0.943 | 0.831 | 0.9 | 0.594 |
| YOLO11n+SRA-DFF | 10 |  |  |  |  | 0.944 | 0.829 | 0.903 | 0.592 |
| YOLO11n+SRA-DFF | 11 |  |  |  |  | 0.923 | 0.85 | 0.903 | 0.59 |
| YOLO11n+SRA-DFF | 12 |  |  |  |  | 0.934 | 0.838 | 0.902 | 0.589 |
| YOLO11n+SRA-DFF | 13 |  |  |  |  | 0.934 | 0.834 | 0.901 | 0.589 |
| YOLO11n+SRA-DFF | 14 |  |  |  |  | 0.93 | 0.829 | 0.898 | 0.589 |
| YOLO11n+SRA-DFF | 15 |  |  |  |  | 0.947 | 0.825 | 0.897 | 0.587 |

| Loss | P | R | mAP50 | mAP50-95 |
|---|---|---|---|---|
| CIoU | 0.950 | 0.849 | 0.909 | 0.608 |
| GIoU | 0.948 | 0.857 | 0.915 | 0.613 |
| Inner-DIoU | 0.959 | 0.84 | 0.907 | 0.601 |
| Inner-ShapeIoU | 0.946 | 0.846 | 0.909 | 0.601 |
| Focaler-EIoU | 0.936 | 0.842 | 0.9 | 0.596 |
| Focaler-MPDIoU | 0.942 | 0.845 | 0.898 | 0.593 |
| Wise-WIoU | 0.951 | 0.86 | 0.914 | 0.602 |
| Wise-Inner-SIoU | 0.949 | 0.842 | 0.914 | 0.604 |

| Model | P | R | mAP50 | mAP50-95 | GFLOPs | Params/10^6 |
|---|---|---|---|---|---|---|
| Baseline | 0.933 | 0.818 | 0.89 | 0.587 | 6.3 | 2.58 |
| +I1 | 0.942 | 0.83 | 0.9 | 0.598 | 6.4 | 2.57 |
| +I1+I2 | 0.943 | 0.843 | 0.9 | 0.598 | 7.7 | 2.72 |
| +I1+I2+I3 | 0.95 | 0.849 | 0.909 | 0.608 | 9.4 | 2.6 |
| +I1+I2+I3+I4 | 0.948 | 0.857 | 0.915 | 0.613 | 9.4 | 2.6 |

| Model | P | R | mAP50 | mAP50-95 | GFLOPs | Params/10^6 |
|---|---|---|---|---|---|---|
| YOLOv5n | 0.94 | 0.787 | 0.879 | 0.568 | 5.8 | 2.18 |
| YOLOv5s | 0.942 | 0.845 | 0.911 | 0.616 | 18.7 | 7.81 |
| YOLOv6n | 0.929 | 0.819 | 0.893 | 0.591 | 11.5 | 4.16 |
| YOLOv8n | 0.937 | 0.819 | 0.893 | 0.591 | 6.8 | 2.68 |
| YOLOv9t | 0.915 | 0.819 | 0.886 | 0.592 | 6.4 | 1.73 |
| YOLOv9s | 0.938 | 0.852 | 0.913 | 0.623 | 22.1 | 6.19 |
| YOLOv10n | 0.929 | 0.814 | 0.897 | 0.592 | 8.2 | 2.69 |
| YOLO11n | 0.933 | 0.818 | 0.89 | 0.587 | 6.3 | 2.58 |
| YOLO11n-SMSH | 0.948 | 0.857 | 0.915 | 0.613 | 9.4 | 2.6 |
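The gap between the baseline and the proposed model can be read off the table directly; the short sketch below only performs that subtraction on the YOLO11n and YOLO11n-SMSH rows. The dictionary keys and function name are illustrative.

```python
# Rows copied from the comparison table above.
BASELINE = {"P": 0.933, "R": 0.818, "mAP50": 0.89, "mAP50-95": 0.587,
            "GFLOPs": 6.3, "Params/10^6": 2.58}
PROPOSED = {"P": 0.948, "R": 0.857, "mAP50": 0.915, "mAP50-95": 0.613,
            "GFLOPs": 9.4, "Params/10^6": 2.6}


def deltas(base, new, digits=3):
    """Absolute change of every metric, rounded to avoid float noise."""
    return {key: round(new[key] - base[key], digits) for key in base}


if __name__ == "__main__":
    for metric, change in deltas(BASELINE, PROPOSED).items():
        print(f"{metric}: {change:+}")
```

In these rows, recall and mAP50 rise by 0.039 and 0.025 respectively, while the computational cost grows by 3.1 GFLOPs and the parameter count stays almost unchanged.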

| Block | P | R | mAP50 | mAP50-95 |

|---|---|---|---|---|

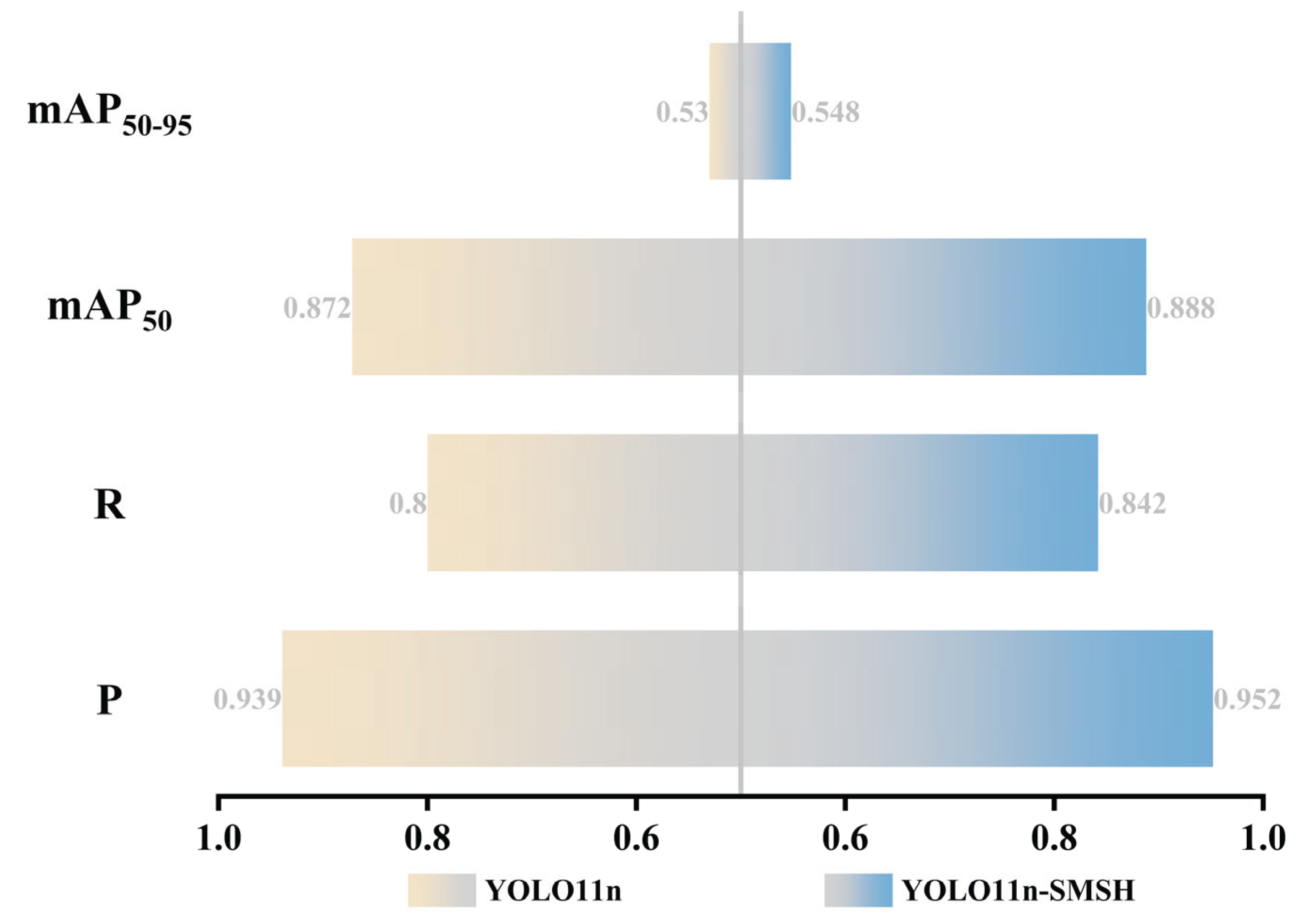

| YOLO11n(baseline) | 0.939 | 0.8 | 0.872 | 0.53 |

| YOLO11n-SMSH(ours) | 0.952 | 0.842 | 0.888 | 0.548 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).