1. Introduction

In the statistical literature, a data set consisting of the occurrence times of successive events can generally be modeled by a counting process (CP). To determine a suitable stochastic CP model, one must first test whether the data set has a trend. Pekalp and Aydogdu [32] compared monotonic trend tests for some counting processes. If the successive interarrival times are independent and identically distributed (iid), that is, if there is no trend, the data set may be modeled by a renewal process (RP). In real-life examples, however, the successive interarrival times often contain a monotone trend because of the aging effect and accumulated wear [8]. In this case, the trend can be modeled by a nonhomogeneous Poisson process (NHPP) [2, 3, 4, 5, 6, 7, 8, 9, 10]. A data set with a monotone trend can also be analyzed by a geometric process (GP), which is one of the most widely used models for monotone trends. Lam [17, 18] first introduced the GP. The process has since been used as a model in many areas of reliability; for details, see [36]. Lam [22] presented the GP theory and its applications. Real data sets with a monotone trend are modeled by a GP in [39].

Although the GP is the most commonly used model, it has two limitations that can cause difficulties in applications. First, the GP is not suitable for non-monotone interarrival times whose distributions have varying shape parameters. Second, the GP only allows logarithmic growth or explosive growth [7]. The GP may therefore be unsuitable in these cases.

To overcome these difficulties, several stochastic models were developed by Wu and Scarf [36], Wu [37], and Wu and Wang [38]. One of the most important of these models is the doubly geometric process (DGP).

Wu [37] compares the DGP with the GP and demonstrates the advantages of the DGP. The definitions of the CP, RP, GP, and DGP are as follows.

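Definition 1.1. Let $N(t)$ be the number of events that occurred in the interval $(0, t]$. Then $\{N(t), t \ge 0\}$ is called a CP, where $S_k$ is the occurrence time of the $k$th event and the interarrival time $X_k = S_k - S_{k-1}$ is the time between the $(k-1)$th and the $k$th event, $k = 1, 2, \ldots$, with $S_0 = 0$.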

Definition 1.2. If $X_1, X_2, \ldots$ are iid random variables with cumulative distribution function (cdf) $F$, the CP $\{N(t), t \ge 0\}$ corresponds to an RP.

Definition 1.3. Let $\{N(t), t \ge 0\}$ be a CP and let $\{X_k, k = 1, 2, \ldots\}$ be the set of its interarrival times. If there exists a positive constant $a$, called the ratio parameter, such that $a^{k-1} X_k$, $k = 1, 2, \ldots$, are iid and form an RP with common cdf $F$, where $F$ is the cdf of the first interarrival time $X_1$, then the CP corresponds to a GP.

The cdf of $X_k$ is $F_k(x) = F(a^{k-1} x)$, $k = 1, 2, \ldots$, so the cdf of $X_1$ uniquely determines the cdf of $X_k$. Now let $E(X_1) = \mu$ and $Var(X_1) = \sigma^2$. Then the expected value and the variance of the random variable $X_k$ are given as

$$E(X_k) = \frac{\mu}{a^{k-1}}, \qquad Var(X_k) = \frac{\sigma^2}{a^{2(k-1)}}, \qquad k = 1, 2, \ldots.$$

Monotonicity plays an important role in the theory of stochastic processes. If $a < 1$ ($a > 1$), then $\{X_k, k = 1, 2, \ldots\}$ is stochastically increasing (decreasing), and if $a = 1$, the GP corresponds to the RP.
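For a GP, the interarrival times satisfy $X_k = Y_k / a^{k-1}$ with $Y_k$ iid from $F$, so $E(X_k) = \mu / a^{k-1}$. The following Python sketch illustrates this numerically. It is a minimal illustration, not part of the original study: it assumes NumPy, a gamma-distributed first interarrival time, and hypothetical function names (`simulate_gp`, `gp_mean`) and parameter values chosen only for demonstration.

```python
import numpy as np

def simulate_gp(n, a, shape, scale, rng):
    """Draw X_1..X_n of a GP whose a^(k-1) * X_k are iid gamma(shape, scale).

    The gamma choice and all parameter names are illustrative assumptions.
    """
    k = np.arange(1, n + 1)
    y = rng.gamma(shape, scale, size=n)   # iid renewal variables Y_k ~ F
    return y / a ** (k - 1)               # X_k = Y_k / a^(k-1)

def gp_mean(k, a, mu):
    """Theoretical mean E(X_k) = mu / a^(k-1)."""
    return mu / a ** (k - 1)

rng = np.random.default_rng(0)
a, shape, scale = 0.9, 2.0, 1.5           # a < 1: stochastically increasing
paths = np.array([simulate_gp(5, a, shape, scale, rng) for _ in range(20000)])

# Simulated means of X_1..X_5 next to the theoretical values (mu = shape * scale)
print(paths.mean(axis=0))
print(gp_mean(np.arange(1, 6), a, shape * scale))
```

With $a < 1$ the simulated means grow with $k$, in line with the stochastically increasing case, and setting `a = 1.0` returns the underlying renewal sample unchanged.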

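Definition 1.4. Let $\{N(t), t \ge 0\}$ be a CP and let $\{X_k, k = 1, 2, \ldots\}$ be the set of interarrival times of this process. If there exists a positive constant $a$, called the ratio parameter, such that $a^{k-1} X_k^{h(k)}$, $k = 1, 2, \ldots$, are iid and form an RP with common cdf $F$, where $F$ is the cdf of the first interarrival time $X_1$, then the process $\{N(t), t \ge 0\}$ is called a DGP, where $h(k)$ is a positive function of $k$ with $h(1) = 1$ and $h(k) > 0$ for $k = 1, 2, \ldots$.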

Wu [37] considered different forms of $h(k)$, in which $b$ is a real number and $\log$ denotes the logarithm with base 10. He showed that the DGP with $h(k) = (1 + \log k)^b$ is better than the others for the ten real data sets in Lam [23]. Accordingly, $h(k) = (1 + \log k)^b$ is used in this study.

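Now assume that $X_1$ is a continuous random variable with pdf $f$. Hence the pdf of $X_k$ is

$$f_k(x) = a^{k-1} h(k)\, x^{h(k)-1} f\left(a^{k-1} x^{h(k)}\right), \quad x > 0.$$

Moreover, the expected value and the variance of $X_k$ are

$$E(X_k) = a^{-\frac{k-1}{h(k)}} \int_0^\infty y^{\frac{1}{h(k)}} f(y)\, dy,$$

$$Var(X_k) = a^{-\frac{2(k-1)}{h(k)}} \left[ \int_0^\infty y^{\frac{2}{h(k)}} f(y)\, dy - \left( \int_0^\infty y^{\frac{1}{h(k)}} f(y)\, dy \right)^2 \right],$$

provided the above integrals are finite, where $k = 1, 2, \ldots$.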
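Definition 1.4 implies $X_k = (Y_k / a^{k-1})^{1/h(k)}$ with $Y_k$ iid from $F$, which gives a direct way to simulate a DGP. The Python sketch below is illustrative only and rests on stated assumptions: it takes $h(k) = (1 + \log_{10} k)^b$, a gamma distribution with shape `shape` and scale `scale` for $X_1$, and uses hypothetical function names and parameter values that are not taken from this paper. For the gamma case it uses the standard moment $E(Y^r) = \text{scale}^r \, \Gamma(\text{shape} + r) / \Gamma(\text{shape})$.

```python
import math
import numpy as np

def h(k, b):
    """Assumed form h(k) = (1 + log10(k))^b, so that h(1) = 1."""
    return (1.0 + np.log10(k)) ** b

def simulate_dgp(n, a, b, shape, scale, rng):
    """Draw X_1..X_n of a DGP: a^(k-1) * X_k^h(k) are iid gamma(shape, scale)."""
    k = np.arange(1, n + 1)
    y = rng.gamma(shape, scale, size=n)            # iid Y_k ~ F
    return (y / a ** (k - 1)) ** (1.0 / h(k, b))   # X_k = (Y_k / a^(k-1))^(1/h(k))

def dgp_mean(k, a, b, shape, scale):
    """E(X_k) = a^(-(k-1)/h(k)) * E(Y^(1/h(k))) for gamma-distributed Y (scalar k)."""
    r = 1.0 / h(k, b)
    moment = scale ** r * math.gamma(shape + r) / math.gamma(shape)
    return a ** (-(k - 1) * r) * moment

rng = np.random.default_rng(0)
a, b, shape, scale = 0.95, 0.5, 2.0, 1.5           # illustrative values only
paths = np.array([simulate_dgp(5, a, b, shape, scale, rng) for _ in range(20000)])

# Simulated means of X_1..X_5 next to the theoretical values
print(paths.mean(axis=0))
print([dgp_mean(k, a, b, shape, scale) for k in range(1, 6)])
```

Setting `b = 0` gives $h(k) = 1$ for all $k$, so the sketch reduces to a GP, which is a convenient sanity check.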

Wu [37] gives the monotonicity properties of the DGP as follows.

i) If and , or if and , increases stochastically.

ii) If and or if and , decreases stochastically.

The parameter estimation problem arises naturally in the DGP. It involves the parameters of the process together with the parameters that determine the mean and the variance of the first interarrival time $X_1$. Parameter estimation is therefore as important an issue for the DGP as it is for the GP. Some of the studies on the GP are as follows.

Lam and Chan [20], Chan et al. [8], Aydoğdu et al. [3], and Kara et al. [13] used the lognormal, gamma, Weibull, and inverse Gaussian distributions, respectively, for the first interarrival time to estimate the parameters of a GP. Kara et al. [16] considered the parameter estimation problem for the gamma geometric process.

Pekalp and Aydogdu [30] considered the power series expansions for the probability distribution, mean value, and variance function of a geometric process with gamma interarrival times. Pekalp and Aydogdu [33] considered the parameter estimation problem for the mean value and variance functions in a GP.

Aydogdu and Altındag [5] computed the mean value and variance functions in a geometric process. Altındag [1] evaluated multiple process data in a geometric process with exponential failures.

Yılmaz et al. [40] used Bayesian inference for a GP with the Lindley distribution.

Pekalp and Aydogdu [26] studied the integral equation for the second moment function in a GP. Pekalp and Aydogdu [27] and Pekalp et al. [28] obtained the asymptotic solution of the integral equation for the second moment function of a GP by discriminating the lifetime distributions of the ten real data sets used in [21].

Pekalp et al. [29, 31, 34] estimated the parameters of a DGP by using the maximum likelihood (ML) method under the exponential, Weibull, and lognormal distribution assumptions, respectively, for the first interarrival time $X_1$. Eroglu Inan [11] considered the parameter estimation problem for a DGP under the assumption that the first interarrival time has an inverse Gaussian distribution.

Although there are some studies on the GP with the gamma distribution, which is an important and widely used model in reliability theory, to the best of our knowledge no such study has yet been carried out for the DGP, which overcomes the limitations of the GP model. The parametric statistical inference problem for the DGP under the gamma distribution assumption therefore remains to be studied.

The nonparametric problem was studied for the GP and $\alpha$-series processes by several authors [4, 15, 19, 35, 37]. Similarly, Jasim and Al-Qazaz [12] proposed inference methods for the DGP.

In this study, the parameter estimation problem for a DGP is considered under the assumption that the first inter-arrival time

has a gamma distribution. This paper is organized as follows: In

Section 2, the gamma distribution and its probabilistic properties are reminded. In

Section 3, the log-likelihood function, first derivatives of this function, and the asymptotic joint distribution of the estimators are obtained. In

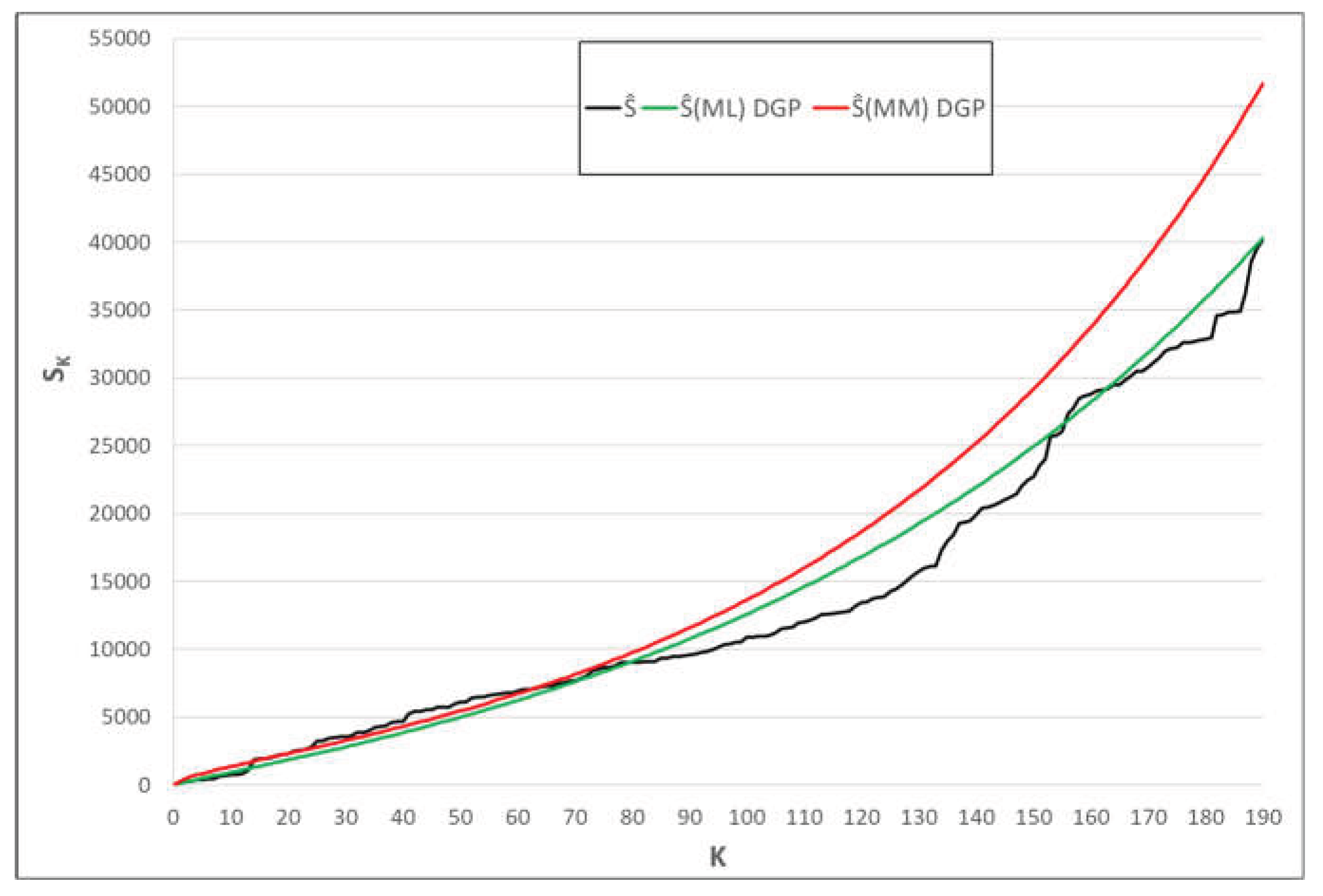

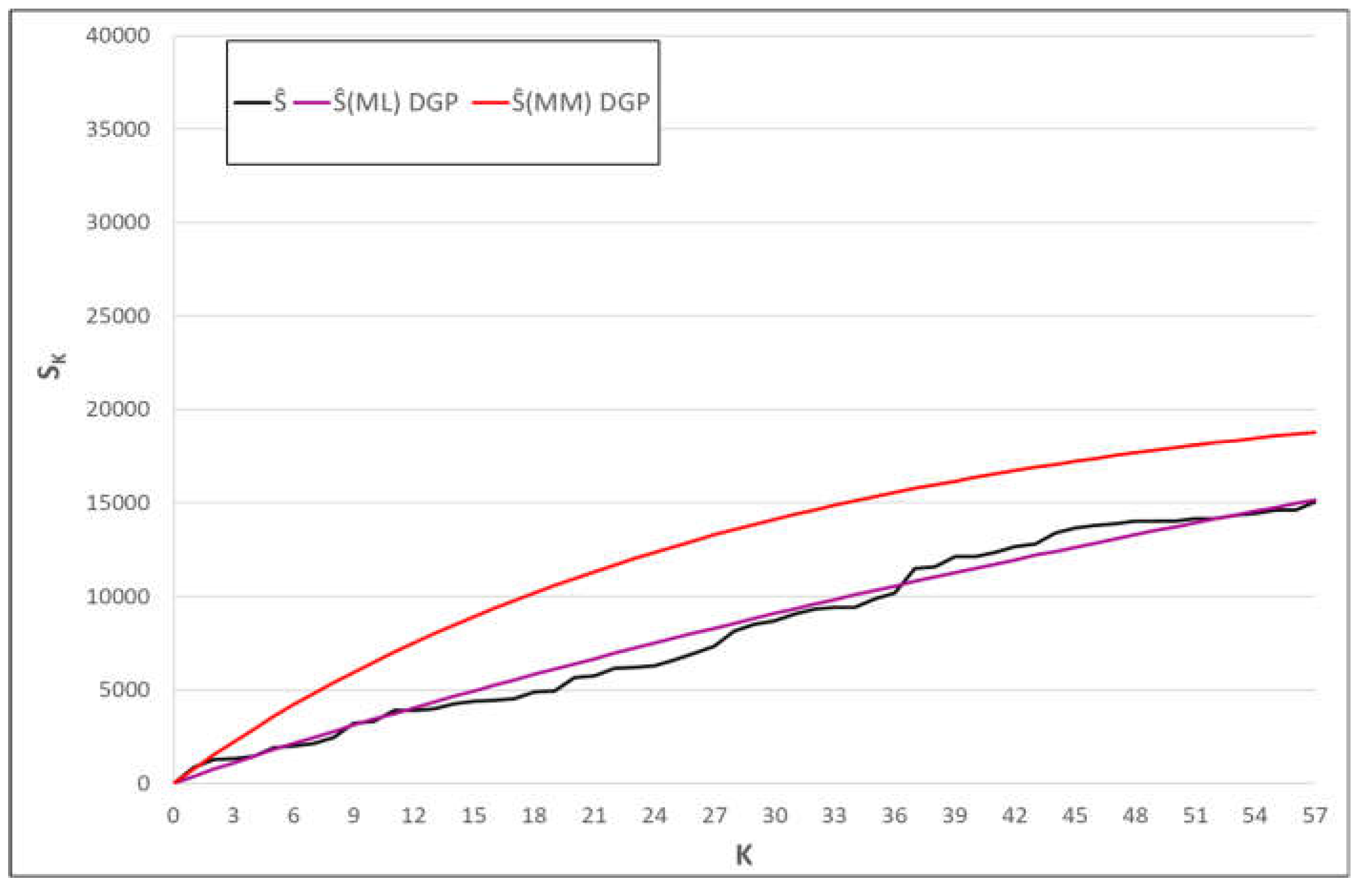

Section 4, an extensive simulation study is performed, and the simulated mean, bias and mean square error (MSE) values are calculated, and the small sample performances of the estimators are evaluated for various values of parameters. In

Section 5, the importance of the modelling with DGP is presented by the two illustrative examples. Finally, in

Section 6, the results are discussed.