5. Discussion

Considering both predictive effectiveness and computational efficiency, this study compares four machine learning algorithms, Decision Tree, Random Forest, K-Nearest Neighbor (KNN), and Support Vector Machine (SVM), on the task of Kanji character classification. The evaluation uses classification metrics (accuracy, precision, recall, and F1-score) together with regression metrics (R², MSE, MAE, and RMSE), which are summarized in Table 9. The comparison reveals substantial performance differences among the algorithms, which are influenced by model complexity, generalization capacity, and the intrinsic characteristics of the Kanji data used.
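The two groups of metrics can be computed from the same vectors of true and predicted labels. The following minimal pure-Python sketch is not the study's evaluation code. Two things are assumptions because this section does not specify them: that precision, recall, and F1 are macro-averaged over classes, and that classes are encoded as integer indices for R², MSE, MAE, and RMSE. Regression-style metrics computed on class indices depend on the arbitrary numbering of the classes, so they are best read alongside the classification metrics.

```python
import math


def classification_metrics(y_true, y_pred):
    """Accuracy plus macro-averaged precision, recall, and F1-score (macro averaging assumed)."""
    pairs = list(zip(y_true, y_pred))
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    labels = sorted(set(y_true) | set(y_pred))
    precisions, recalls, f1s = [], [], []
    for c in labels:
        tp = sum(t == c and p == c for t, p in pairs)  # correctly predicted as c
        fp = sum(t != c and p == c for t, p in pairs)  # wrongly predicted as c
        fn = sum(t == c and p != c for t, p in pairs)  # c predicted as something else
        prec = tp / (tp + fp) if tp + fp else 0.0
        rec = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * prec * rec / (prec + rec) if prec + rec else 0.0
        precisions.append(prec)
        recalls.append(rec)
        f1s.append(f1)
    k = len(labels)
    return {
        "accuracy": accuracy,
        "precision": sum(precisions) / k,
        "recall": sum(recalls) / k,
        "f1": sum(f1s) / k,
    }


def regression_style_metrics(y_true, y_pred):
    """R², MSE, MAE, and RMSE on integer-encoded class labels (assumed encoding)."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mse = sum(e * e for e in errors) / n
    mae = sum(abs(e) for e in errors) / n
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    r2 = 1.0 - (mse * n) / ss_tot if ss_tot else float("nan")
    return {"r2": r2, "mse": mse, "mae": mae, "rmse": math.sqrt(mse)}


# Toy labels for illustration only, not data from the study.
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]
print(classification_metrics(y_true, y_pred))
print(regression_style_metrics(y_true, y_pred))
```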

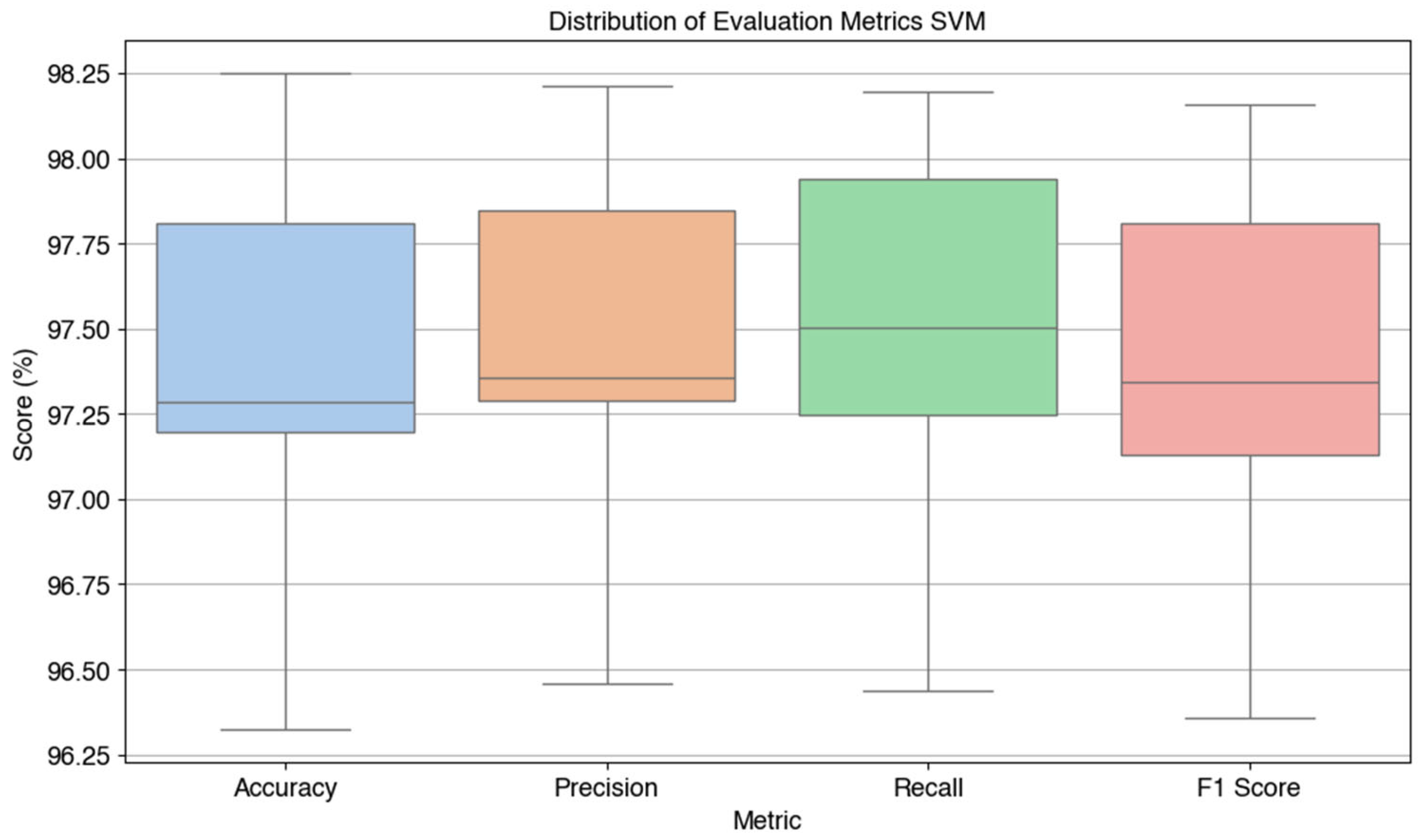

The evaluation covered both the performance metrics and computational efficiency. As Table 9 shows, SVM provides the best overall performance, with an accuracy of 97.43%, precision of 97.46%, recall of 97.50%, and F1-score of 97.40%. It also has the highest R² (94.80%) and the lowest MSE, MAE, and RMSE, indicating that its predicted labels are very close to the actual labels in the test data.

However, SVM's advantage in accuracy and precision comes at the cost of a relatively long training time and a large model (29.78 seconds and 110,332 KB). In contrast, KNN trains fastest (0.13 seconds), but its model is the largest (174,928 KB), because the algorithm stores the entire training set and uses it for nearest-neighbor prediction. Random Forest strikes a balance, combining high accuracy (95.01%) with a smaller model than KNN and SVM. Decision Tree shows the lowest performance of the four algorithms in both accuracy (68.23%) and F1-score (67.68%), despite its small model size and fairly short training time.
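The KNN pattern of a near-instant fit paired with a large stored model follows from the fact that a nearest-neighbor classifier's training step only memorizes the data. The following sketch illustrates this with a hypothetical minimal 1-nearest-neighbor class. It measures fit time with `time.perf_counter` and model size as the length of the pickled object. This section does not state how the study measured model size, so the serialized size used here is an assumption.

```python
import pickle
import time


class NearestNeighborClassifier:
    """Hypothetical minimal 1-NN classifier: fit() only stores the training set."""

    def fit(self, X, y):
        self.X = list(X)  # the whole training set becomes part of the model
        self.y = list(y)
        return self

    def predict(self, X):
        out = []
        for x in X:
            # Squared Euclidean distance to every stored training sample.
            dists = [sum((a - b) ** 2 for a, b in zip(x, xt)) for xt in self.X]
            out.append(self.y[dists.index(min(dists))])
        return out


def fit_time_and_size_kb(model, X, y):
    """Return (training time in seconds, pickled model size in KB) (assumed size measure)."""
    start = time.perf_counter()
    model.fit(X, y)
    elapsed = time.perf_counter() - start
    size_kb = len(pickle.dumps(model)) / 1024
    return elapsed, size_kb


# Toy data: the stored model grows with the number of training samples.
for n in (10, 1000):
    X = [[float(i), float(i)] for i in range(n)]
    y = [i % 2 for i in range(n)]
    print(n, fit_time_and_size_kb(NearestNeighborClassifier(), X, y))
```

Because nothing is compressed or summarized during fitting, the model size scales linearly with the training set. This is the behavior the text attributes to KNN.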

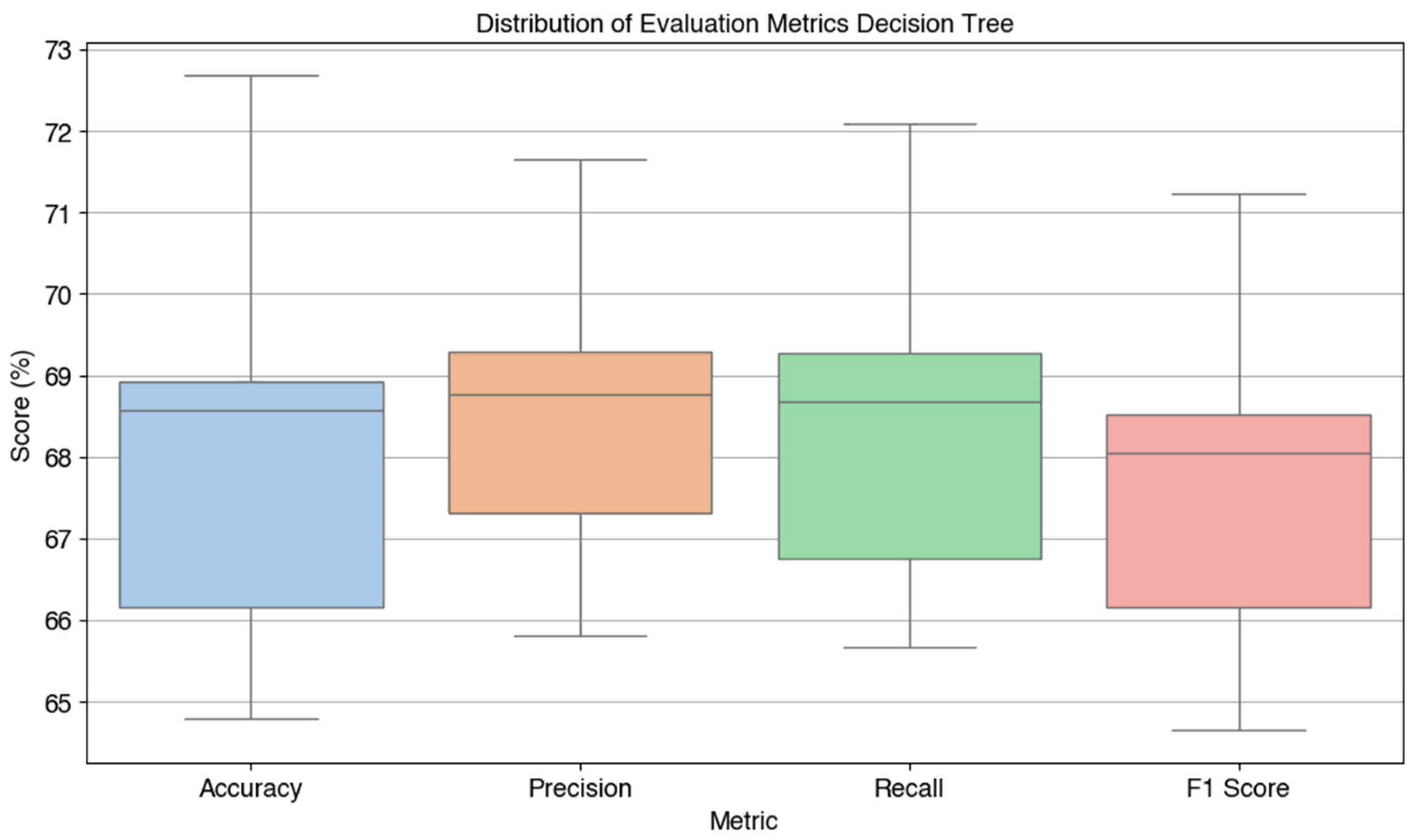

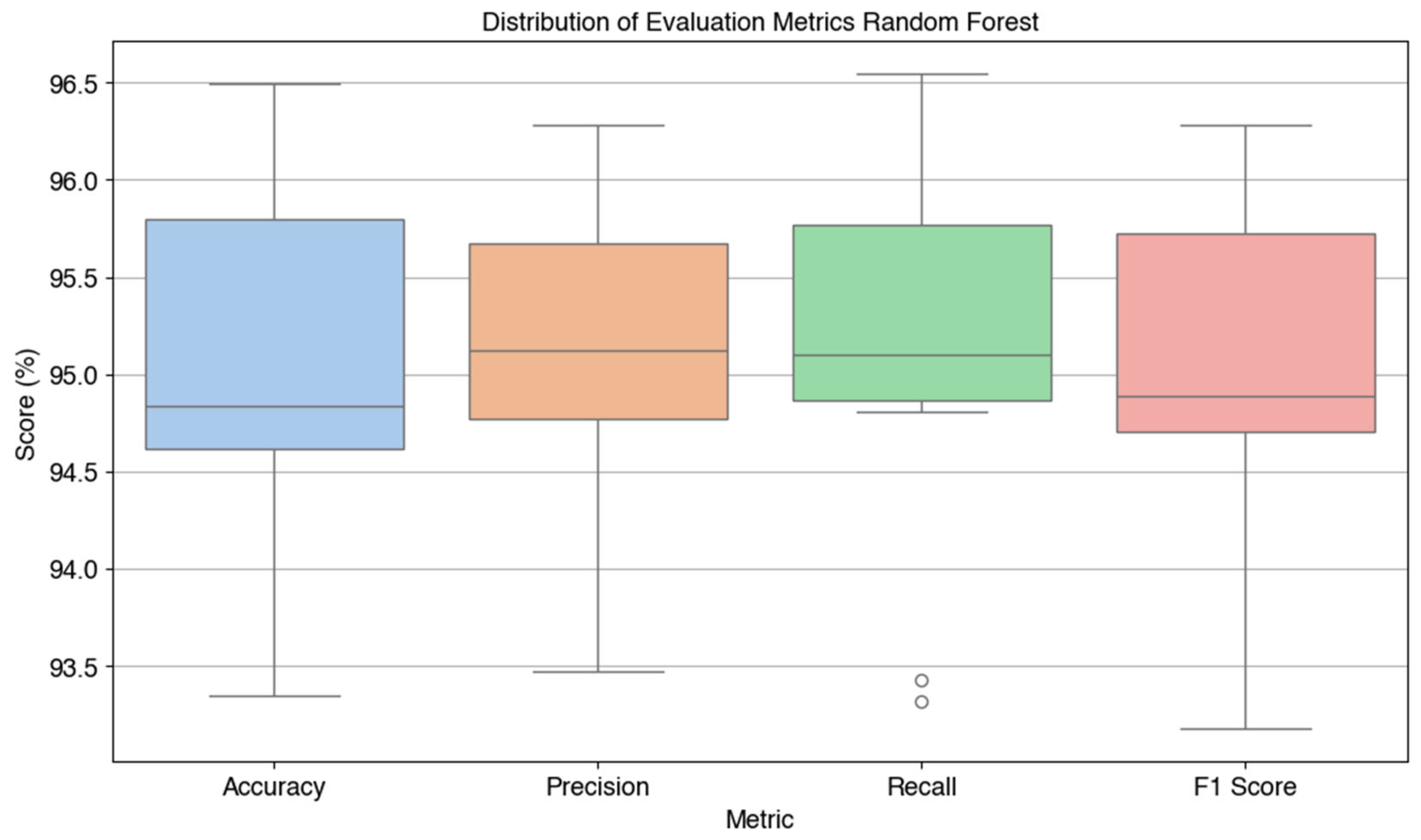

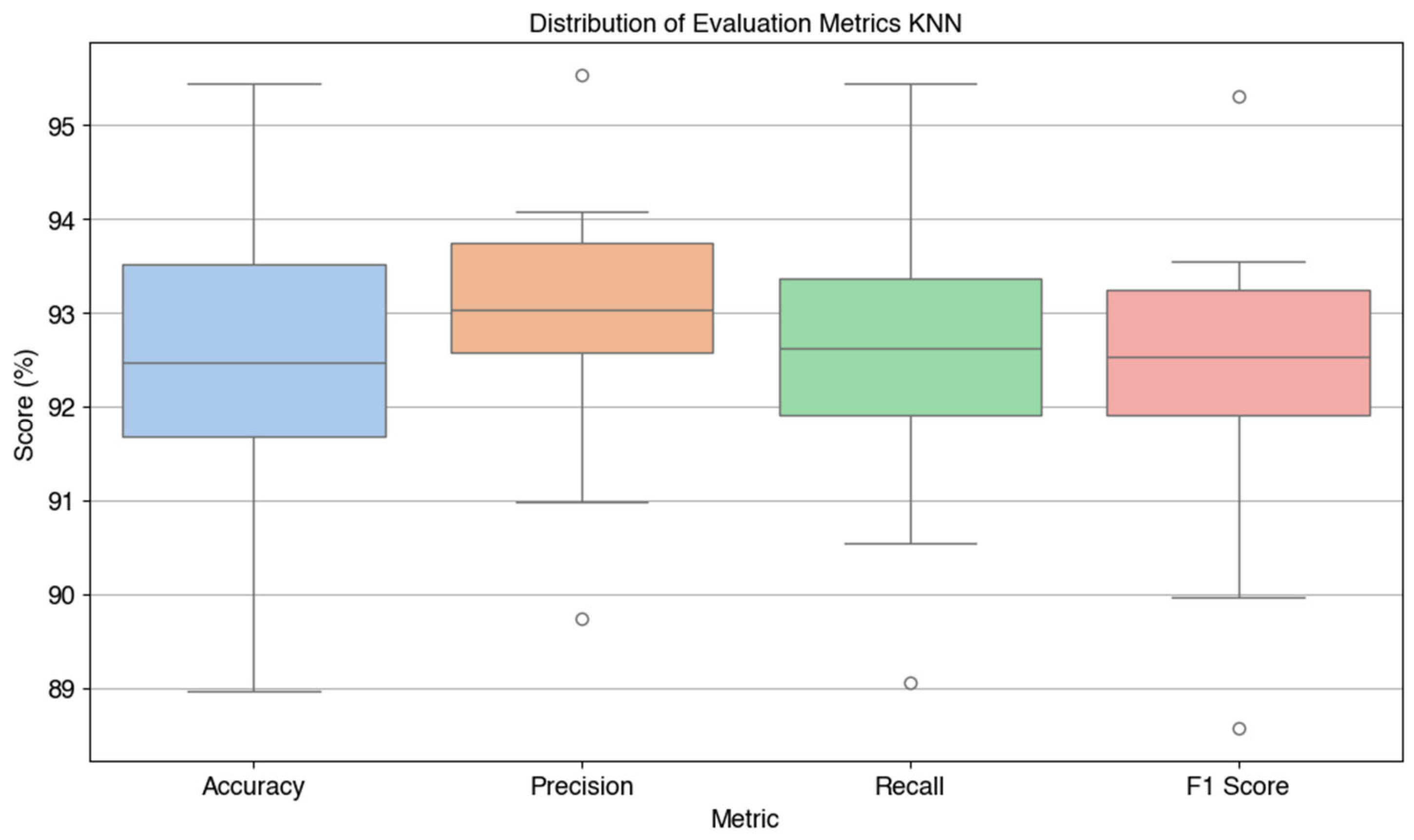

Furthermore, the per-fold evaluation results presented in Table 11 reinforce the superiority of SVM. This model performs consistently well on all folds, with accuracy ranging from 96.32% to 98.25%, indicating excellent stability with respect to variations in data partitioning. In contrast, Decision Tree fluctuates considerably between folds, with its lowest accuracy on the 7th fold (64.80%) and its highest on the 10th fold (72.68%), indicating that this model is more sensitive to differences in the training data distribution.
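Per-fold results come from k-fold cross-validation, where each sample is used for testing exactly once. The following minimal sketch generates plain (non-stratified) k-fold splits and summarizes the spread of the resulting fold scores. The function names, the seed, and the absence of stratification are assumptions, since this section does not describe how the folds were built.

```python
import random
import statistics


def k_fold_indices(n_samples, k=10, seed=0):
    """Yield (train, test) index lists for plain k-fold cross-validation (no stratification)."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)  # shuffle once, then deal indices into k folds
    folds = [idx[i::k] for i in range(k)]  # fold sizes differ by at most one
    for i in range(k):
        test = folds[i]
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test


def fold_stability(fold_scores):
    """Summarize how much a model's score varies across folds."""
    return {
        "min": min(fold_scores),
        "max": max(fold_scores),
        "range": max(fold_scores) - min(fold_scores),
        "mean": statistics.mean(fold_scores),
        "stdev": statistics.stdev(fold_scores),
    }
```

Applied to the extreme fold accuracies reported above, the range is 98.25 − 96.32 = 1.93 percentage points for SVM and 72.68 − 64.80 = 7.88 points for Decision Tree. This quantifies the difference in stability between the two models.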

The paired t-test results presented in Table 10 show that only the Decision Tree algorithm has a statistically significant difference between its accuracy and F1-score values (p = 0.0076). This indicates an imbalance in this model between the number of correct predictions and the quality of classification in minority classes, which can affect the consistency of its performance. In contrast, for Random Forest, KNN, and SVM, no significant difference was found between accuracy and F1-score. These metrics therefore agree with each other, and these models maintain a balanced classification across classes.
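A paired t-test compares two matched sets of scores, here one accuracy value and one F1-score value per fold. The following minimal sketch computes the statistic itself; the function name is hypothetical. It returns only the t statistic and the degrees of freedom. The two-sided p-value additionally requires the Student t distribution, which `scipy.stats.ttest_rel(a, b)` provides together with the statistic.

```python
import math
import statistics


def paired_t_statistic(a, b):
    """Paired t statistic for matched samples a and b (e.g. per-fold accuracy vs. per-fold F1).

    d_i = a_i - b_i;  t = mean(d) / (stdev(d) / sqrt(n));  degrees of freedom = n - 1.
    """
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    d = [x - y for x, y in zip(a, b)]
    sd = statistics.stdev(d)  # sample standard deviation (n - 1 denominator)
    if sd == 0:
        raise ValueError("all differences are identical; t is undefined")
    t = statistics.mean(d) / (sd / math.sqrt(len(d)))
    return t, len(d) - 1
```

Swapping the arguments flips the sign of t but not its magnitude, so a significance decision does not depend on the order. The sign only indicates which sample is larger on average.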

In addition, the t-tests between algorithms (Table 12) show that all pairwise comparisons yield statistically significant differences (p < 0.05). In particular, every comparison between Decision Tree and another algorithm produces a negative t-statistic with a very small p-value, confirming that Decision Tree performs significantly worse than the other algorithms. Comparisons among the remaining algorithms, such as Random Forest vs. SVM or KNN vs. SVM, also show that, although all of these models perform well, the differences between them are significant and cannot be ignored.
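The meaning of a negative t-statistic follows from its definition. Let A be the first algorithm in a comparison and B the second, and let there be k paired per-fold scores (for example, accuracies). The paired statistic is then as follows. That Table 12 lists Decision Tree as A in its comparisons is an assumption inferred from the negative values; this section does not state the order.

```latex
\begin{aligned}
d_i &= S^{(A)}_i - S^{(B)}_i, \qquad i = 1, \dots, k \\
\bar{d} &= \frac{1}{k}\sum_{i=1}^{k} d_i, \qquad
s_d = \sqrt{\frac{1}{k-1}\sum_{i=1}^{k}\left(d_i - \bar{d}\right)^2} \\
t &= \frac{\bar{d}}{s_d / \sqrt{k}}, \qquad \mathrm{df} = k - 1
\end{aligned}
```

Because s_d > 0, t has the same sign as the mean difference. A negative t therefore means that A scores lower than B on average across folds, and the small p-value indicates that this gap is unlikely to be due to fold-to-fold variation alone.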

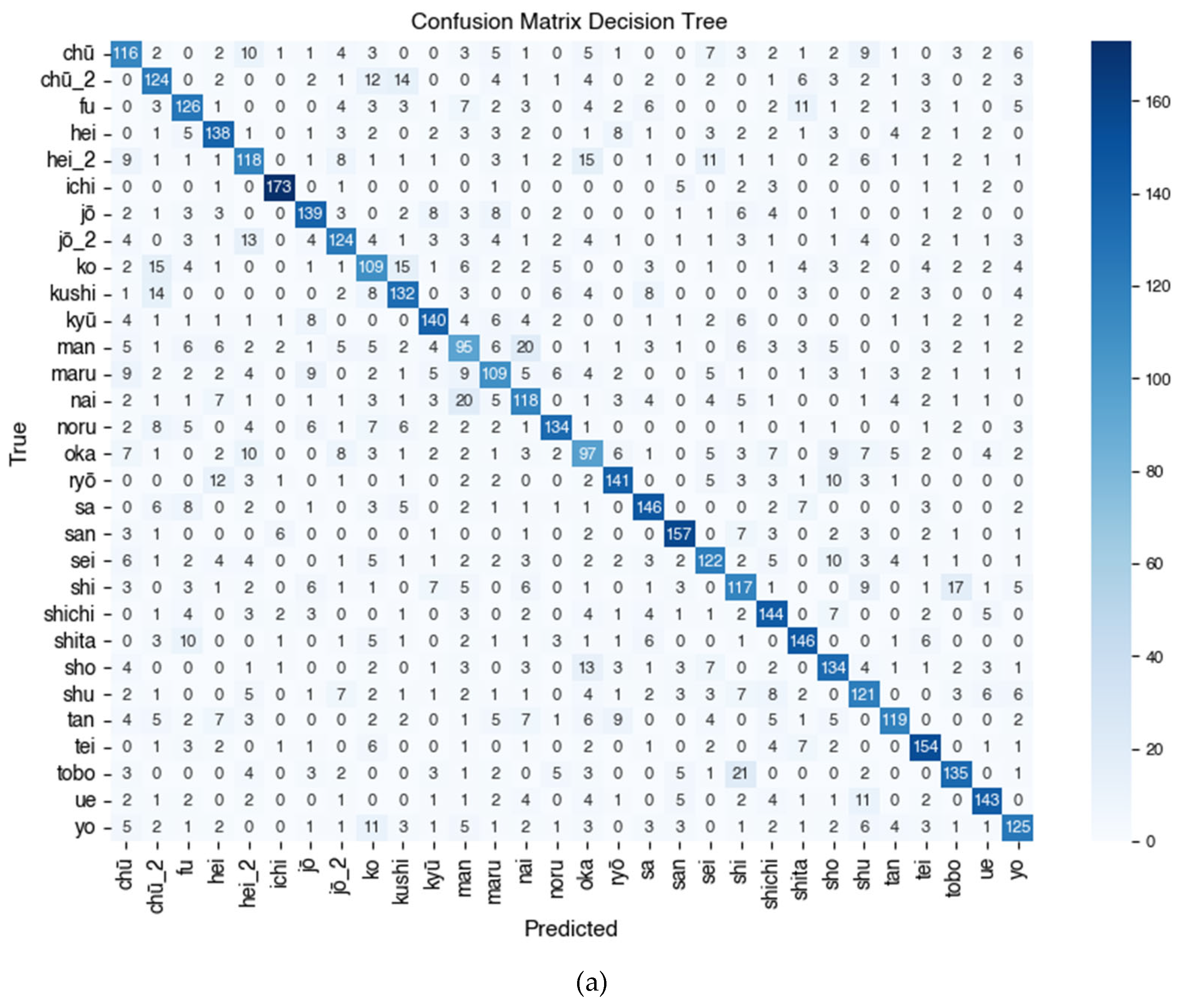

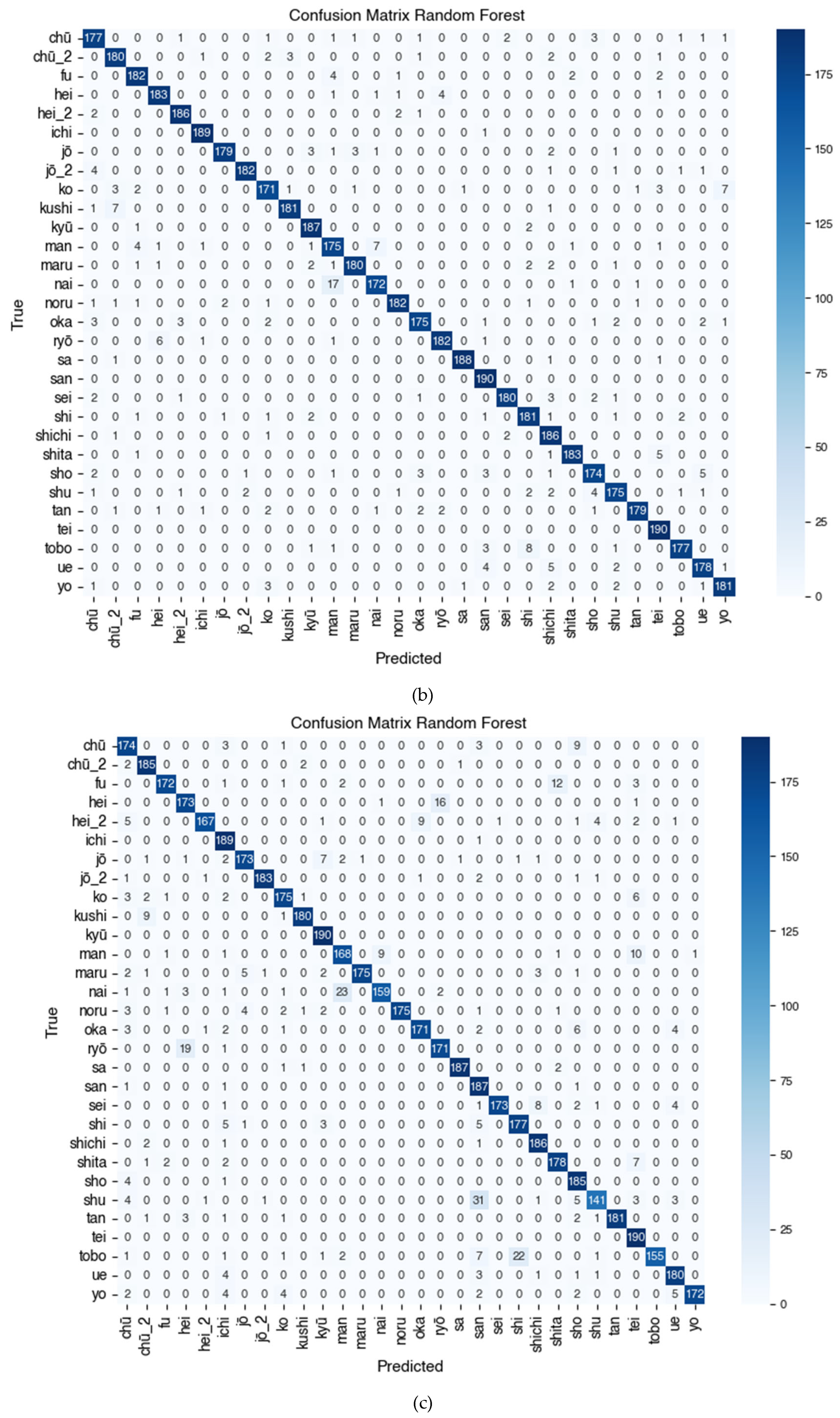

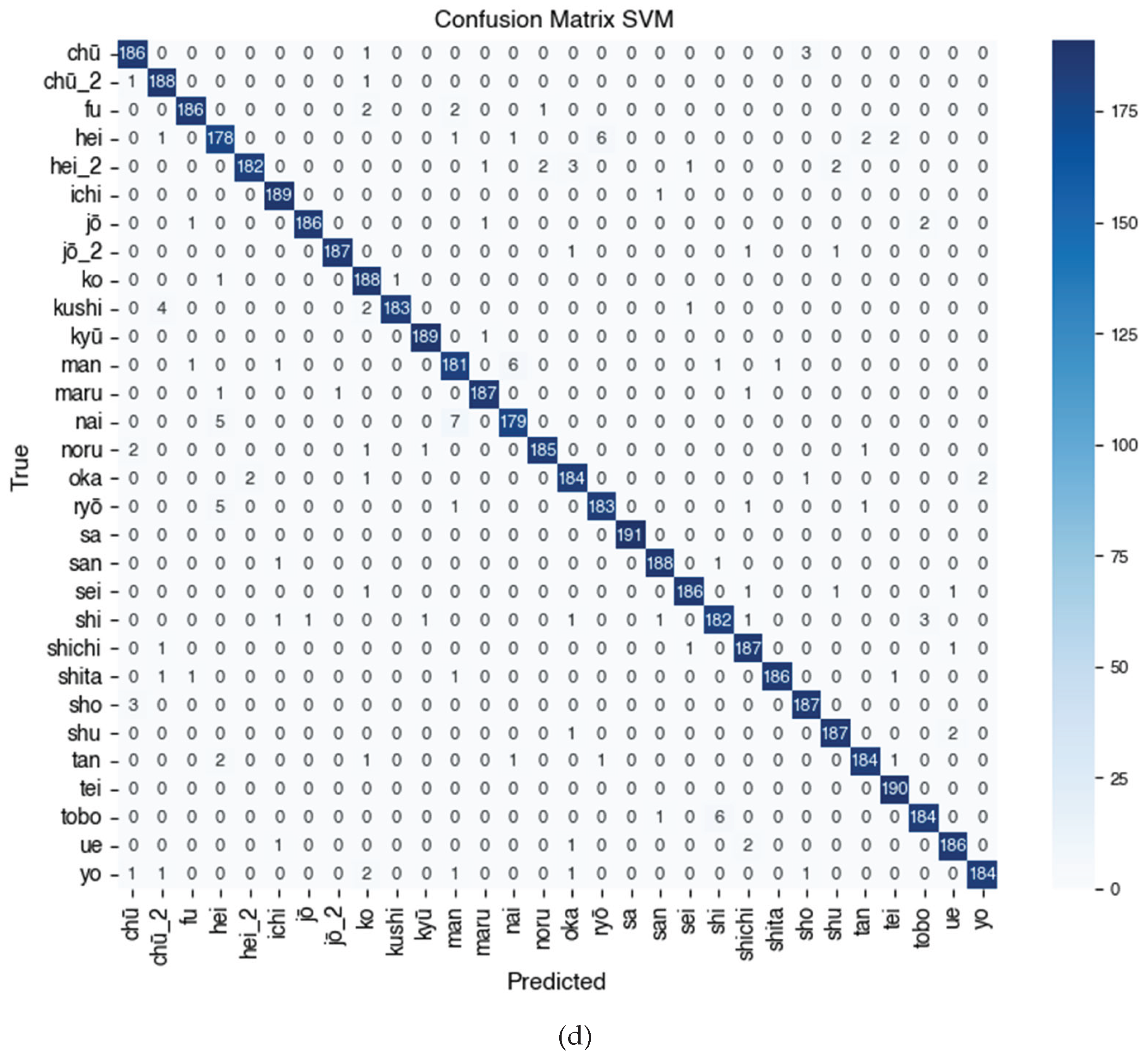

The combined confusion matrix visualization presented in Figure 7 illustrates the performance of each algorithm on the 30 Kanji character classes. SVM shows the most even distribution of predictions and the fewest inter-class errors, while Decision Tree shows more misclassifications (false positives and false negatives) in certain classes.

Figure 7 shows the confusion matrices of the Kanji (漢字) classification results for the four tested algorithms: (a) Decision Tree, (b) Random Forest, (c) K-Nearest Neighbor (KNN), and (d) Support Vector Machine (SVM). Based on the visualization and the quantitative evaluation, SVM shows the strongest and most stable performance in recognizing Kanji characters. Its precision and recall approach 1.00 for most classes, such as sa (佐), tei (亭), shita (下), jō (上), jō_2 (場), and ichi (一), reflecting almost perfect classification. KNN also performs well on classes such as jō_2 (場), kyū (九), kushi (串), maru (丸), and tan (丹), with F1-scores predominantly above 0.95, but its performance declines on classes such as san (三), shu (主), and nai (内) because of higher misclassification rates. Random Forest performs consistently well, especially on characters such as ichi (一), hei_2 (兵), kyū (九), tei (亭), and shita (下), and declines only on the classes man (万) and shichi (七). Decision Tree shows greater variability between classes. It performs best on characters such as ichi (一), san (三), tei (亭), and ryō (良), but has difficulty with characters such as chū (中), ko (子), maru (丸), man (万), and oka (岡), whose F1-scores fall in the range of 0.50–0.60. This indicates that Decision Tree is less reliable in distinguishing Kanji characters that have similar shapes or uneven distributions. Overall, the confusion matrix analysis in Figure 7 confirms that SVM is the most accurate algorithm for multiclass classification of Kanji characters (漢字), followed by Random Forest and KNN. Decision Tree shows the lowest performance and is inconsistent across classes.
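The per-class precision, recall, and F1-scores discussed above can be read directly off a confusion matrix. The diagonal gives true positives, the rest of a column gives false positives, and the rest of a row gives false negatives. The following minimal sketch assumes the common convention that rows are true classes and columns are predicted classes; the function name and the toy counts in the example are hypothetical.

```python
def per_class_scores(cm, labels):
    """Per-class precision, recall, and F1 from a square confusion matrix.

    Assumed convention: cm[i][j] counts samples whose true class is i and whose
    predicted class is j (rows = true, columns = predicted).
    """
    n = len(cm)
    scores = {}
    for c in range(n):
        tp = cm[c][c]
        fp = sum(cm[r][c] for r in range(n)) - tp  # predicted as c but belonging elsewhere
        fn = sum(cm[c]) - tp  # belonging to c but predicted elsewhere
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        scores[labels[c]] = {"precision": precision, "recall": recall, "f1": f1}
    return scores


# Toy 3-class matrix for illustration only (not counts from Figure 7).
cm = [[8, 2, 0],
      [1, 9, 0],
      [0, 0, 10]]
print(per_class_scores(cm, ["ichi", "san", "man"]))
```

Off-diagonal mass concentrated in one row or column shows up as low recall or low precision for that single class. This is how class-specific weaknesses like those of Decision Tree appear in the scores.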

Overall, the results of this study indicate that SVM is the best choice of algorithm for Kanji character classification on the dataset used, given its consistency, high accuracy, and stability across folds. However, computational resources remain an important consideration, especially in real-world deployments, so the choice of algorithm should be matched to the requirements of the system.

As a form of external validation of the research results, a comparison was made with a number of previous studies that also focused on the classification of Kanji characters and Japanese characters in general.

Table 13 summarizes the evaluation results of several previous studies, including the methods used and the reported evaluation values such as accuracy, precision, recall, and F1-score. The main purpose of this comparison is to place the performance of the SVM model used in this study in a broader context, as well as to identify its strengths and limitations compared to other approaches that have been applied to similar problems.

Compared with the methods reported in previous literature, the results of this study place SVM as a superior approach in terms of accuracy, precision, recall, and overall F1-score. The model used here outperforms other models such as CNN-SVM [11], a CNN ensemble [12], and various combinations of classical algorithms such as KNN and Random Forest used in [10]. Meanwhile, synthetic-data-based methods such as Y-AE [14] show potential for improving F1-score, although no clear accuracy figure is reported for them.

These findings support the hypothesis that SVM, with appropriate parameters and features (in this case HOG features), is highly effective for Kanji character classification, especially when the available data is sufficiently representative. Although deep learning methods such as CNNs have great potential, the advantages of SVM lie in its stable performance and its computational efficiency relative to deep learning models, which make it highly relevant for resource-constrained systems.
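HOG describes an image by histograms of local gradient orientations, which suits stroke-based characters such as Kanji. The following NumPy sketch is a deliberately simplified version for illustration. It uses unsigned orientations (0 to 180 degrees) with hard assignment to bins, weights each bin by gradient magnitude, and applies a single global L2 normalization. It omits the overlapping-block normalization of the full descriptor, which `skimage.feature.hog` implements. The cell size of 8 pixels and the 9 bins are assumptions, as this section does not give the study's HOG configuration.

```python
import numpy as np


def simple_hog(image, cell_size=8, n_bins=9):
    """Simplified HOG: magnitude-weighted orientation histograms per cell, globally L2-normalized.

    Hypothetical parameters; no block normalization and no interpolation between bins.
    Pixels beyond the last full cell are ignored.
    """
    img = np.asarray(image, dtype=float)
    gx = np.zeros_like(img)
    gy = np.zeros_like(img)
    gx[:, 1:-1] = img[:, 2:] - img[:, :-2]  # centered difference [-1, 0, 1] along x
    gy[1:-1, :] = img[2:, :] - img[:-2, :]  # centered difference along y
    magnitude = np.hypot(gx, gy)
    orientation = np.rad2deg(np.arctan2(gy, gx)) % 180.0  # unsigned orientation in [0, 180)
    bin_width = 180.0 / n_bins
    # Clip guards against floating-point values that round up to exactly 180.
    bins = np.minimum((orientation // bin_width).astype(int), n_bins - 1)

    n_cy = img.shape[0] // cell_size
    n_cx = img.shape[1] // cell_size
    hist = np.zeros((n_cy, n_cx, n_bins))
    for cy in range(n_cy):
        for cx in range(n_cx):
            ys = slice(cy * cell_size, (cy + 1) * cell_size)
            xs = slice(cx * cell_size, (cx + 1) * cell_size)
            hist[cy, cx] = np.bincount(
                bins[ys, xs].ravel(), weights=magnitude[ys, xs].ravel(), minlength=n_bins
            )
    feature = hist.ravel()
    norm = np.linalg.norm(feature)
    return feature / norm if norm > 0 else feature
```

For a 16×16 image containing a single vertical edge, every cell's energy falls into the 0-degree bin. This is the kind of stroke-direction signal a classifier can exploit.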

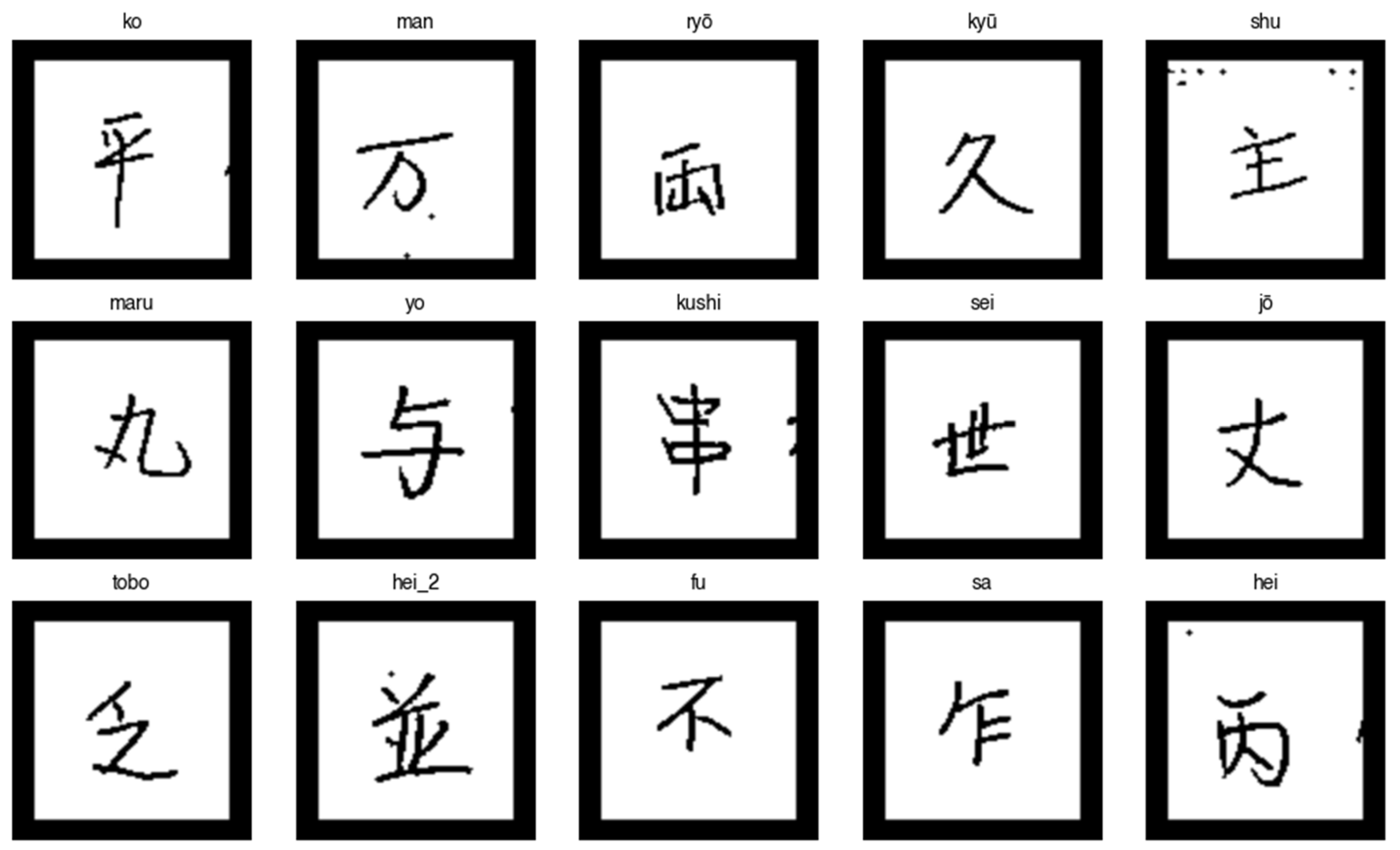

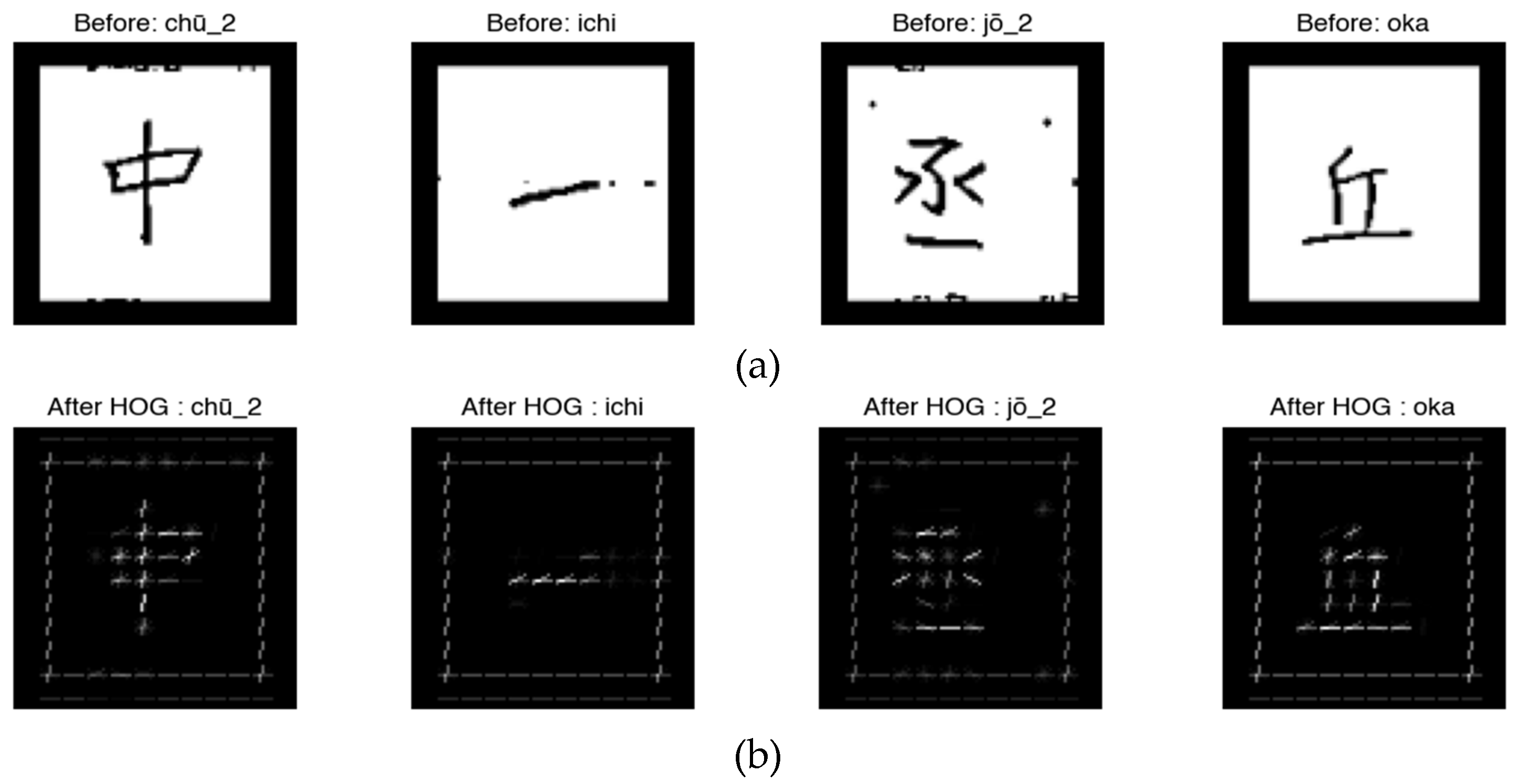

These results indicate that the SVM method in this study is a practical and superior approach for Kanji character classification based on image features.

This study provides a comparative analysis of supervised machine learning algorithms, namely Decision Tree, Random Forest, K-Nearest Neighbors, and Support Vector Machine, using Histogram of Oriented Gradients (HOG) features to classify 30 randomly selected Kanji characters from the ETL9G dataset. Unlike previous studies that mainly use deep learning approaches, this study explores lightweight and interpretable models that are suitable for environments with limited computing resources. Experimental results show that SVM with a linear kernel achieves the highest accuracy and that HOG features effectively represent the complex visual structure of the characters. This study offers a useful empirical benchmark for future research in logographic character recognition.
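A linear-kernel SVM classifies with one weight vector per class and picks the class with the largest score. The following sketch trains a one-vs-rest linear SVM with Pegasos-style stochastic subgradient descent on the L2-regularized hinge loss. This training method is swapped in for illustration only. This section does not state the study's solver, and library implementations such as scikit-learn's `SVC(kernel="linear")` or `LinearSVC` use different solvers. The function names and the defaults for λ (`lam`), `epochs`, and `seed` are hypothetical. The bias is handled by appending a constant feature, so it is regularized too, which is a simplification.

```python
import numpy as np


def train_linear_svm_ovr(X, y, lam=0.01, epochs=50, seed=0):
    """One-vs-rest linear SVM via Pegasos-style subgradient descent (illustrative sketch).

    Each binary problem minimizes lam/2 * ||w||^2 + mean(max(0, 1 - t_i * w.x_i)).
    A constant 1 is appended to every sample, so the bias is part of w (and regularized).
    """
    X = np.hstack([np.asarray(X, dtype=float), np.ones((len(X), 1))])
    y = np.asarray(y)
    classes = np.unique(y)
    rng = np.random.default_rng(seed)
    W = np.zeros((len(classes), X.shape[1]))
    for k, c in enumerate(classes):
        target = np.where(y == c, 1.0, -1.0)  # current class vs. the rest
        w = np.zeros(X.shape[1])
        step = 0
        for _ in range(epochs):
            for i in rng.permutation(len(X)):
                step += 1
                eta = 1.0 / (lam * step)  # Pegasos learning-rate schedule
                margin = target[i] * (w @ X[i])  # margin under the current w
                w *= 1.0 - eta * lam  # gradient step on the regularizer
                if margin < 1.0:  # hinge loss active: subgradient step
                    w += eta * target[i] * X[i]
        W[k] = w
    return classes, W


def predict_linear_svm_ovr(classes, W, X):
    """Assign each sample to the class whose linear score w.x is largest."""
    X = np.hstack([np.asarray(X, dtype=float), np.ones((len(X), 1))])
    return classes[np.argmax(X @ W.T, axis=1)]


# Toy 2-D clusters for illustration only; in the study's setting, rows would be HOG vectors.
X = [[0, 10], [0.5, 9.5], [-0.5, 9.5],
     [-8.5, -5], [-9, -4.5], [-8, -5.5],
     [8.5, -5], [9, -4.5], [8, -5.5]]
y = ["a"] * 3 + ["b"] * 3 + ["c"] * 3
classes, W = train_linear_svm_ovr(X, y)
print(predict_linear_svm_ovr(classes, W, [[0, 8], [-7, -4], [7, -4]]))
```

Because prediction is a single matrix product followed by an argmax, a trained linear model is cheap to evaluate. Its size depends on the number of classes and features rather than on the number of training samples.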

For further research, hybrid approaches are promising directions to explore. These include integrating SVM with deep-learning-based feature extraction and applying data augmentation based on generative models such as Y-AE. In addition, applying the model to larger and more complex datasets will be both a challenge and an opportunity to assess how well SVM generalizes to broad Kanji character recognition.